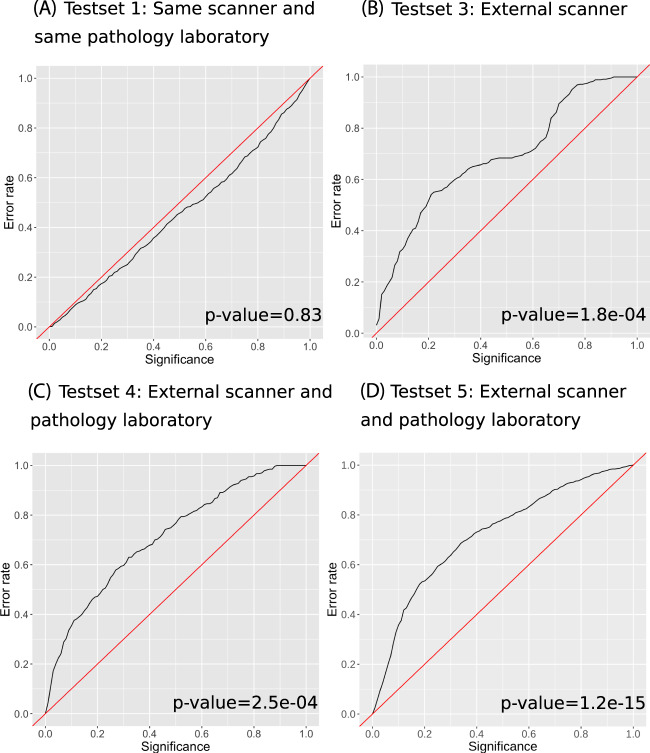

Fig. 2. Calibration plot of the observed prediction error (i.e., the fraction of true labels not included in the prediction region) on the y-axis against the pre-specified significance level ε (i.e., the tolerated error rate) on the x-axis.

The conformal predictor is valid if the observed error rate does not exceed ε at any significance level, i.e., the observed error rate should lie on or close to the diagonal line, which marks the tolerated error rate. The main advantage of conformal predictors is that they provide valid predictions when new examples are independent of, and identically distributed to, the training examples. The graphs show results for Test sets 1, 3, 4, and 5, respectively. Panel A shows prediction regions on Test set 1, an independent test set consisting of 794 biopsies from 123 men from the STHLM3 study, all processed in the same laboratory and scanned on the same scanners as the training data. Panel B shows prediction regions on Test set 3 (external scanner), a set of 449 slides, held out from training, that were scanned on a different scanner than the training data. To evaluate Test set 3 (external scanner), we excluded images scanned on Hamamatsu from training, leaving 2152 Aperio images for training. The prediction regions were not valid when evaluated on the new scanner (Hamamatsu), as the prediction error was larger than the tolerated error for all significance levels. Panel C shows prediction regions on Test set 4, a set of 330 slides from an external clinical workflow; these slides were processed in a different laboratory and scanned on a different scanner than the training data. Panel D shows prediction regions on Test set 5, an external test set of 1220 slides from Stavanger University Hospital representing an external clinical workflow; these slides were processed in a different laboratory and scanned on a different scanner than the training data, and were used as prospective validation of the conformal predictor. We used the Kolmogorov–Smirnov test of equality of the distribution of the predictions in the calibration set and each test dataset to test the validity of the prediction regions. The null hypothesis was that the samples were drawn from the same distribution. A two-sided p-value of less than 5% was considered statistically significant.
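
The validity criterion plotted in each panel can be illustrated with a minimal Python sketch. It is not the code used in the study: the function names, the p-values, and the chosen significance levels are hypothetical, and it assumes the common convention that a prediction counts as an error when the conformal p-value of the true label is at most ε, so that the true label is left out of the prediction region.

```python
def observed_error_rate(true_label_p_values, epsilon):
    """Fraction of examples whose true label falls outside the prediction region.

    Assumption: a label is included in the region when its p-value exceeds epsilon.
    """
    errors = sum(1 for p in true_label_p_values if p <= epsilon)
    return errors / len(true_label_p_values)


def calibration_curve(true_label_p_values, levels):
    """Pairs of (significance level, observed error rate), as in a calibration plot."""
    return [(eps, observed_error_rate(true_label_p_values, eps)) for eps in levels]


def is_valid(curve):
    """True if the observed error does not exceed epsilon at any evaluated level."""
    return all(err <= eps for eps, err in curve)


# Hypothetical p-values of the true labels (illustration only, not study data).
# Evenly spread p-values mimic a test set exchangeable with the calibration set.
in_distribution = [(2 * k - 1) / 40 for k in range(1, 21)]
# Halving every p-value mimics a distribution shift such as a new scanner.
shifted = [p / 2 for p in in_distribution]

levels = [0.05, 0.1, 0.2, 0.5]
print(is_valid(calibration_curve(in_distribution, levels)))
print(is_valid(calibration_curve(shifted, levels)))
```

In this toy setting the evenly spread p-values give a curve that stays on the diagonal, while the shifted p-values push the observed error above ε, which is the pattern described for Panel B.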