Abstract

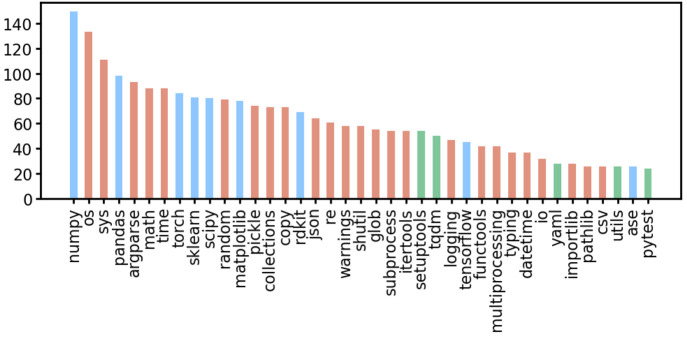

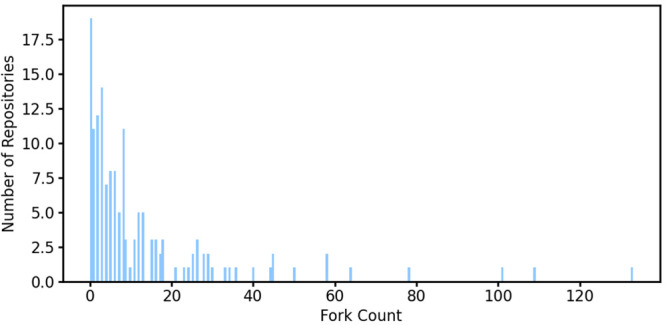

The field of computational chemistry has seen a significant increase in the integration of machine learning concepts and algorithms. In this Perspective, we surveyed 179 open-source software projects, with corresponding peer-reviewed papers published within the last 5 years, to better understand the topics within the field being investigated by machine learning approaches. For each project, we provide a short description, the link to the code, the accompanying license type, and whether the training data and resulting models are made publicly available. Based on those deposited in GitHub repositories, the most frequently employed Python libraries are identified. We hope that this survey will serve as a resource for learning about machine learning, or specific architectures thereof, by identifying accessible codes with accompanying papers on a topic basis. To this end, we also include computational chemistry open-source software for generating training data and fundamental Python libraries for machine learning. Based on our observations and considering the three pillars of collaborative machine learning work, namely open data, open source (code), and open models, we provide some suggestions to the community.

1. Introduction

Creating models and performing simulations are cornerstones of science. Prior to computers, models were created on paper (e.g., mathematics, diagrams) or physically constructed from material. Modern modeling is done on computers (in silico), allowing one to easily adjust parameters and quickly observe the resulting effects. Today, a plethora of simulation and modeling codes exist, which can be either open or closed to the public. While closed-source software is created by companies for economic reasons, open-source software (OSS) has played an important role in scientific discovery. The OSS philosophy promotes the distribution of code (i.e., tools) and subsequently the natural and computer science knowledge that is embedded within the code. OSS encourages researchers to read the code critically, to understand its mathematical formulations, parameters, and assumptions and the workflow’s logic, and to modify it as desired. Free and open-source software (FOSS), a subcategory of OSS, also demands licensing models that provide a legal framework for the free distribution, use, and development of the code, although commercial usage might still be restricted.

The field of machine learning (ML) has clearly grown, as can be seen by the increased number of research articles published that include it and through the interest shown by the general public. Paraphrasing Sonnenburg et al., OSS benefits the ML field by enabling better reproducibility of scientific results and quicker detection of errors as well as faster, innovative combinations of scientific ideas and their subsequent applications to diverse disciplines.1 To this list we add the benefit of being able to more easily validate the assumptions and approximations made during model building. The very goal and act of making ML algorithms and their trained models open-source has the following three benefits to the field: (1) standardizing interfaces (e.g., adopting specific frameworks), (2) enabling experimentation (e.g., guiding project choices and obtaining alternative perspectives), and (3) community creation (e.g., developer–user interactions and improved educational material).2

The field of computational chemistry has benefited significantly from the advancement of OSS and ML. The development of and access to software tools has enabled nonexperts to apply ML in their chemical and biological research and also enabled ML experts to solve problems in the chemical and biological domains. A 2016 review article by Pirhadi et al. informs readers about available tools by covering open-source computational chemistry software, including some ML approaches.3 The authors also maintain an accompanying GitHub repository software list that includes annotations on open-source molecular modeling software (https://opensourcemolecularmodeling.github.io). The interest in ML by computational chemists is natural given the physics, statistical, algorithmic, and data-based ideas that are already present within the field. At its core, ML is built upon statistics and complex black-box modeling techniques that are implemented, distributed, and made accessible through thoughtful programming. The Python programming language is particularly suited for natural scientists since it is easy to generate readable procedural and object-oriented code that allows for quick and creative exploration of ideas. Python is also the most popular language in the ML community.

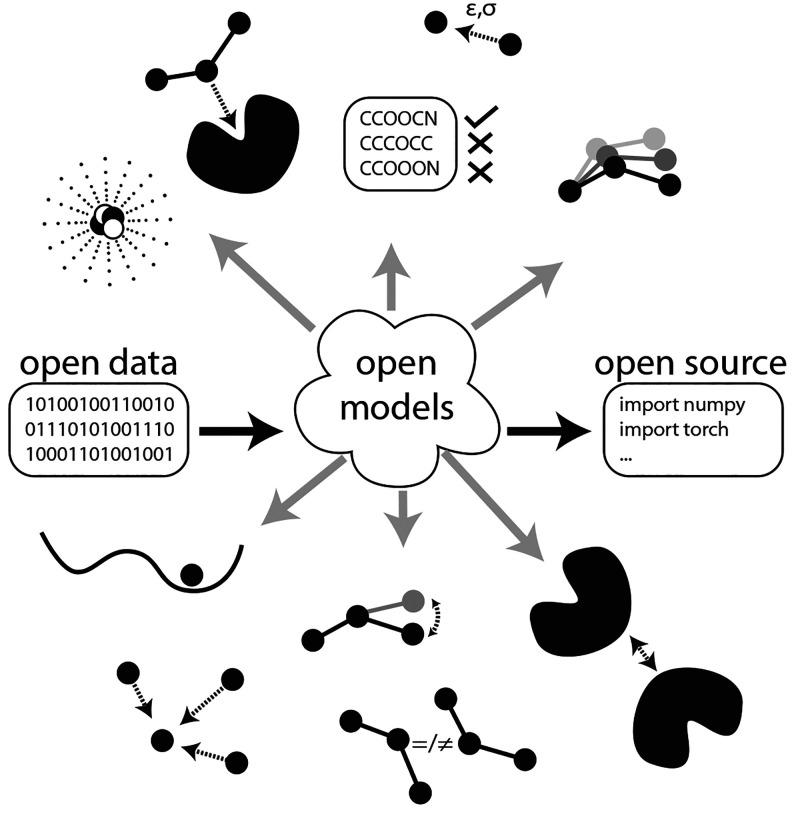

This Perspective’s goal is to provide an introduction to open-source Python-based (with a few exceptions) ML tools that are available for computational chemistry researchers who want to start exploring the technology, either in direct usage (i.e., application) of the learned models or in reading the code for purposes of self-education or experimentation (i.e., modification). To this end, four software groups will be covered, starting with general software development tools. Following this, standard scientific Python libraries for data manipulation and visualization are discussed. As the third group, libraries that form the foundation of ML software will be presented. Finally, selected computational-chemistry-specific tools released in the last 5 years will be discussed. Although the development and application of ML code often receive the most attention, the importance of generating and understanding the data on which ML is trained should not be underestimated. Consequently, we will also include notable computational chemistry software for generating data that is freely distributed under a license and whose source code is available. Our choice of application areas (e.g., predicting energies, chemical reactions, and partial atomic charges) is motivated by the ML software that is highly cited (e.g., AlphaFold), recently released research (e.g., via ASAP articles), cited literature within those publications, subsequent literature found from citation searches, and our research interests. A graphical illustration concerning the concept of the openness of machine learning computational chemistry tools is shown in Figure 1. The three building blocks for openness in ML research are considered to be (1) the availability of datasets (open data), (2) the availability of code (open source), and (3) the availability of trained ML models (open models).

Figure 1. An illustration of how open data, open models, and open source codes are used in the different subdomains, as described herein, of computational chemistry.

In addition to providing a listing of computational-chemistry-focused ML repositories, we also note whether the training data and the resulting model and/or its parameters (i.e., weights) are published. Although this community might often be more interested in the resulting observable predictions, for reproducibility it is very important to ensure that a published model reported in a paper produces the same output as that provided in a repository. In the ML community, it has become standard practice to release training data and model parameters alongside the publication, mostly arising from the model’s size and the amount of training required (i.e., a resource and time issue). In this context, the model parameters refer to the weights that determine its output and should not be confused with the model’s training hyperparameters (e.g., the learning rate). For smaller models that are easily trained on a workstation, the publication of the hyperparameters is sufficient for reproducibility. Nevertheless, publishing the model’s parameters can increase the publication’s robustness against unnoticed changes in the libraries used by the model, outlier random seeds that increase the model’s performance, or mistakes in handling code publication, all of which might lead to differences in the model’s training during experimentation and publication.
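
The distinction between hyperparameters, learned parameters, and the random seed can be illustrated with a minimal sketch. The "training" routine, the hyperparameter names, and the idea of publishing a checksum of the weights are all illustrative assumptions, not a procedure taken from any of the surveyed projects.

```python
import hashlib
import json
import random
import struct


def train_toy_model(hyperparameters):
    """Stand-in for a training run; returns the learned parameters (weights)."""
    rng = random.Random(hyperparameters["seed"])  # seeded weight initialization
    weights = [rng.gauss(0.0, 1.0) for _ in range(hyperparameters["n_weights"])]
    for _ in range(hyperparameters["n_steps"]):
        # Placeholder update rule (simple weight decay), not a real optimizer.
        weights = [w * (1.0 - hyperparameters["learning_rate"]) for w in weights]
    return weights


def fingerprint(weights):
    """SHA-256 digest of the weights packed as little-endian 64-bit floats."""
    payload = struct.pack(f"<{len(weights)}d", *weights)
    return hashlib.sha256(payload).hexdigest()


# Hyperparameters are chosen before training; the weights are its result.
hyperparameters = {"seed": 7, "n_weights": 4, "n_steps": 10, "learning_rate": 0.1}
weights = train_toy_model(hyperparameters)

# A record like this could accompany the published weights file.
record = json.dumps(
    {"hyperparameters": hyperparameters, "weights_sha256": fingerprint(weights)},
    indent=2,
)

# Rerunning with identical hyperparameters (including the seed) gives the same digest.
rerun_matches = fingerprint(train_toy_model(hyperparameters)) == fingerprint(weights)
```

In this toy setting, a reader who retrains with the published hyperparameters can compare digests to check that the resulting weights match the deposited ones. For real models, library versions and hardware can also influence the result, which is one reason why depositing the weights themselves remains valuable.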

As a final comment, we ask the reader and the researchers whose work we included or did not include to be forgiving in their critique of this Perspective. Given the large scope that ML and computational chemistry cover and the speed at which the combined domains are growing, our interpretation and categorization may sometimes be incomplete or imprecisely described. We have limited our focus to OSS that has been released alongside a peer-reviewed paper (i.e., work originating from a preprint manuscript was not included), thereby helping to ensure a higher academic standard of the work. Because this Perspective offers only limited in-depth coverage, the reader is referred to reviews written within the past 3 years that cover the use of ML in specific computational chemistry subdomains for additional information.4−52 Given the numerous review articles and vastness of the topic, we believe that the information herein can serve as an introductory point into the application of ML in computational chemistry.

2. Software and Libraries

2.1. Software Development Tools

The first set of tools to discuss are those forming the software environment that helps one install and organize Python libraries as well as to encode, train, test, and implement an ML workflow. The Conda53 software enables the installation and management of Python and its libraries. Apart from easing library installations, Anaconda54 and Miniconda55 enable the creation of independent environments that isolate projects from one another and from the operating system’s default installed libraries. At an advanced level, this can enable users to test different versions of their code’s imported libraries.

The use of an online code repository, hosted by a service like GitHub56 or GitLab,57 is strongly recommended. Git is a code version control tool that has overtaken older tools such as Apache Subversion (SVN). A git repository (a) serves as a backup to one’s work through a version control system, allowing users to have local and remote instances of the deposited code, (b) enables multiple people to work on the same code (i.e., project) and merge their work back to the centralized source, and (c) enables the creation of branches that allow safe experimentation and implementation that do not immediately affect the main branch. To differentiate the two aforementioned code repository systems, GitHub serves as a public platform for repositories, while GitLab is an entirely independent and self-contained ecosystem that can also be installed on self-managed local servers. A significant number of codes covered in this Perspective are hosted on GitHub.

While computer scientists often use integrated development environment (IDE) software to develop their code (e.g., PyCharm,58 Spyder,59 or Visual Studio Code60), a natural scientist might favor coding in Jupyter notebooks61,62 locally or online using a JupyterHub (private or public server) or Google’s Colaboratory.63 All of these latter tools allow one to mix code (i.e., code cells) with regular textual writing (i.e., markdown cells), thus serving very much like a traditional science lab notebook. Consequently, researchers can transcribe their thoughts while encoding their workflow within a single notebook, which can easily be shared with others (e.g., validation during a manuscript’s peer review; enable reproducibility and transparency). One advantage of the Colaboratory is its free access to reasonably powered GPU computing for executing code and training ML models. A disadvantage of such a third-party hosting solution can be data security and privacy when running code and uploading data. Alternatively, JupyterHub can be used, which enables self-managed collaborative work on multiple shared Jupyter notebooks using centrally organized computational resources and easy sharing of demonstrations with the community. A possible drawback for some researchers is that JupyterHub might require a server and IT expert to install and maintain.

2.2. Standard and Scientific Python Libraries

The Python programming language offers a basic set of common tools in its standard library (https://docs.python.org/3/library), which includes reading and writing using standard data formats, performing numerical and mathematical operations, interacting with the file and operating system, handling data, testing code using unit tests, handling exceptions, and many more things. In addition to the standard library, many libraries have been developed that support more advanced operations and algorithms. Several libraries are considered to be fundamental for programmers who are interested in natural science and machine learning. In the following, we introduce the libraries that are widely used and adapted by researchers within our field. They are maintained by active communities of OSS developers and can be relied upon due to their widespread use.

For mathematical modeling, data analysis, and manipulation, the following libraries are often used: NumPy, Pandas, SciPy, Matplotlib, Seaborn, and SymPy (Table 1). NumPy64 was designed to perform fast numerical calculations on arrays and matrices, which it achieves by interfacing with code that was written in the more low-level C and Fortran programming languages. NumPy calculations are vectorized (i.e., operations are applied to whole arrays in compiled code rather than in Python loops), which makes them fast. Consequently, many other libraries, such as those mentioned above, use NumPy for their calculations. The Pandas library enables data to be easily imported and exported (e.g., from and to CSV-formatted files) and manipulated (e.g., filtered, sorted).65,66 At a superficial level, Pandas can be considered as Python’s version of a spreadsheet. The focus of the SciPy library is scientific computing, and it includes algorithms for extrapolation, fast Fourier transforms, interpolation, linear algebra, numerical integration, optimization, polynomial fitting, statistics, and signal processing.67 Matplotlib68 and Seaborn69 are libraries for visualizing data through plots. Seaborn is built on top of Matplotlib and simplifies the coding for high-quality, complex visualizations. SymPy is a symbolic mathematics library that includes the ability to compute derivatives, integrals, and the limits of equations, with submodules that focus on matrices, polynomials, series, and quantum mechanics.70 While not a complete list, these libraries are very helpful in ML projects for reading, manipulating, and visualizing data.

Table 1. OSS Available for Scientific Computation.

| software | link | license |
|---|---|---|
| NumPy64 | https://github.com/numpy/numpy | BSD-3-Clause |
| Pandas65,66 | https://github.com/pandas-dev/pandas | BSD-3-Clause |
| SciPy67 | https://github.com/scipy/scipy | BSD-3-Clause |
| Matplotlib68 | https://github.com/matplotlib/matplotlib | BSD-compatible |
| Seaborn69 | https://github.com/mwaskom/seaborn | BSD-3-Clause |
| SymPy70 | https://github.com/sympy/sympy | BSD |
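
As a small illustration of NumPy's array-based style, the following sketch computes all interatomic distances of a water molecule in one broadcasted expression instead of nested Python loops. The coordinates are approximate values chosen for illustration only.

```python
import numpy as np

# Approximate Cartesian coordinates (in angstroms) of O, H, H; illustrative values.
coords = np.array(
    [
        [0.0000, 0.0000, 0.0000],   # O
        [0.9572, 0.0000, 0.0000],   # H
        [-0.2400, 0.9266, 0.0000],  # H
    ]
)

# Broadcasting: (3, 1, 3) - (1, 3, 3) -> (3, 3, 3) array of displacement vectors.
displacements = coords[:, None, :] - coords[None, :, :]

# Euclidean norm along the last axis gives the (3, 3) distance matrix.
distances = np.sqrt((displacements**2).sum(axis=-1))
```

The resulting matrix is symmetric with zeros on the diagonal, and `distances[0, 1]` and `distances[0, 2]` hold the two O–H bond lengths.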

2.3. Machine Learning

ML has become a vast field that can be hard to traverse, even for seasoned scientists from the field itself. Development is going at a lightning pace, and sometimes the field even outpaces the scientific review process and suffers from catastrophic forgetting and quality reduction. The lesson we can learn from the fast development of generative, large language, and text-to-image ML models is that fast development results not only from the amount of scientific activity but also from the active exchange of code, data, and, foremost, trained models. Although large companies with vast computing power are nowadays often the first to increase a model’s size, they do not always share the model’s parameters, partially closing models off for scientific evaluation. These so-called foundation models, which can be used to derive more specifically trained models a posteriori, often end up as closed models whose use requires a fee. Interestingly, a portion of the ML community seems to immediately move to open models as soon as they match or outperform the foundation models. These open models are more accessible for scientific evaluation and use and can be more computationally efficient due to the limited resources available outside of large companies.

Increasing the computational efficiency when training ML models enables more groups to do research. It is therefore important to cover classical ML (i.e., “shallow learning”) algorithms that can be more appropriate when dealing with datasets that have certain characteristics (e.g., small number of data points). These models are often much smaller, require less training data, and are trained quicker. Inference, using models for prediction, also tends to require much less computational effort. Some problems might require more rigorous statistics or explainability, for which statistical learning methods such as Bayesian learning are more appropriate. If a lot of training data is available, deep learning methods can be used to handle data with an unknown structure. Finally, graph neural networks (GNNs) are of particular use for tasks like molecular modeling due to their inherent graphlike structure that mimics a molecule’s structural formula representation.19,48 Addressing the computational costs of GNNs is a GNN coarsening framework based on functional groups nicknamed FunQG (https://github.com/hhaji/funqg; MIT).

A large amount of ML code has appeared over the last two decades. Herein we focus on the larger Python-focused libraries that are widely used, open-source, and actively maintained.

2.3.1. Classical Frameworks

At a simplistic level, shallow learning can be thought of as having only one or two learning layers in the ML model. Oftentimes, the input data are preprocessed by humans to extract certain known derived data features that help the model learn more easily, reducing the complexity of the models. Table 2 lists the most widely used frameworks.

Table 2. OSS for Classical Machine Learning.

| software | link | license |
|---|---|---|
| scikit-learn71 | https://scikit-learn.org | BSD-3-Clause |
| scikit-cuda72 | https://github.com/lebedov/scikit-cuda | custom |
| ffnet73 | https://ffnet.sourceforge.net | LGPL-3.0 |
| XGBoost74 | https://github.com/dmlc/xgboost | Apache-2.0 |
| LightGBM75 | https://github.com/microsoft/LightGBM | MIT |

Scikit-learn contains a large number of basic shallow learning algorithms for supervised learning (i.e., learning targets from source–target data) and unsupervised learning (i.e., finding structure in unlabeled data).71 The latter include the two major categories of the classical unsupervised ML paradigm: clustering (i.e., grouping data based on similarities) and manifold learning (i.e., resolving a low-dimensional substructure in high-dimensional data). The library provides assistive tools for model selection, inspection, and evaluation as well as data handling and visualization. Scikit-CUDA enables users to easily access GPU-accelerated linear algebra operations.72 The small ffnet package allows the training of shallow feed-forward neural networks (NNs) in Python.73 XGBoost is an optimized, distributed gradient-boosting library.74 LightGBM is a gradient-boosting framework that uses tree-based learning algorithms.75
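
The two learning paradigms can be contrasted with a brief scikit-learn sketch. The synthetic data, the choice of a random forest regressor and k-means clustering, and all settings are assumptions made for illustration; they are not recommendations for any particular chemical dataset.

```python
import numpy as np
from sklearn.cluster import KMeans
from sklearn.ensemble import RandomForestRegressor
from sklearn.model_selection import train_test_split

rng = np.random.default_rng(0)

# Supervised learning: fit a model to (feature, target) pairs.
X = rng.uniform(-1.0, 1.0, size=(200, 3))   # three synthetic descriptors
y = X[:, 0] ** 2 + 0.5 * X[:, 1]            # synthetic target property
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=0
)
model = RandomForestRegressor(n_estimators=100, random_state=0)
model.fit(X_train, y_train)
r2 = model.score(X_test, y_test)            # coefficient of determination on held-out data

# Unsupervised learning: group unlabeled points by similarity.
blobs = np.vstack(
    [
        rng.normal(0.0, 0.3, size=(50, 2)),  # first synthetic cluster
        rng.normal(5.0, 0.3, size=(50, 2)),  # second synthetic cluster
    ]
)
labels = KMeans(n_clusters=2, n_init=10, random_state=0).fit_predict(blobs)
```

Here the regressor needs the targets `y` to learn, whereas the clustering step receives only the coordinates in `blobs` and assigns each point to one of two groups.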

2.3.2. Statistical Frameworks

As an alternative to classical ML approaches, statistical learning provides more rigorous models that are mostly based on Gaussian process modeling and regression, collectively called Kriging. This category of ML uses the assumption that a function that we would like to predict, based on input data, is sampled from a normal distribution of functions (just like an input data point is sampled from a normal distribution of points). The stochastic process that produces this distribution is called a Gaussian process. The modeling technique interpolates between data points and follows an underlying assumption that the function is smooth. Other underlying assumptions can be added as well, such as periodicity. We list the most widely used frameworks in Table 3. Representative libraries are GPy,76 GPflow,77 and GPyTorch,78 which provide a large array of models and training paradigms. The latter two support fast GPU computations.

Table 3. OSS Available for Statistical ML.

| software | link | license |
|---|---|---|
| GPy76 | http://github.com/SheffieldML/GPy | BSD-3-Clause |
| GPflow77 | https://github.com/GPflow/GPflow | Apache-2.0 |
| GPyTorch78 | https://github.com/cornellius-gp/gpytorch | MIT |
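
To make the idea of Gaussian process regression concrete, the following from-scratch NumPy sketch computes the posterior mean and standard deviation for a one-dimensional function. It does not use the API of GPy, GPflow, or GPyTorch; the squared-exponential kernel, the fixed length scale, and the small noise term are assumptions chosen for illustration.

```python
import numpy as np


def rbf_kernel(a, b, length_scale=1.0, variance=1.0):
    """Squared-exponential kernel; encodes the assumption that the function is smooth."""
    sq_dist = (a[:, None] - b[None, :]) ** 2
    return variance * np.exp(-0.5 * sq_dist / length_scale**2)


def gp_posterior(x_train, y_train, x_test, noise=1e-6, length_scale=1.0):
    """Posterior mean and standard deviation of a zero-mean Gaussian process."""
    K = rbf_kernel(x_train, x_train, length_scale) + noise * np.eye(len(x_train))
    K_s = rbf_kernel(x_train, x_test, length_scale)
    K_ss = rbf_kernel(x_test, x_test, length_scale)
    L = np.linalg.cholesky(K)                       # K = L @ L.T
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y_train))
    mean = K_s.T @ alpha
    v = np.linalg.solve(L, K_s)
    cov = K_ss - v.T @ v
    std = np.sqrt(np.clip(np.diag(cov), 0.0, None))  # guard against tiny negatives
    return mean, std


x_train = np.array([-2.0, -1.0, 0.0, 1.0, 2.0])
y_train = np.sin(x_train)
x_test = np.array([-1.0, 0.5, 4.0])  # a training point, an interpolation, an extrapolation
mean, std = gp_posterior(x_train, y_train, x_test)
```

The predicted uncertainty is close to zero at the training point, small between training points, and large at `x = 4.0`, far from the data. This built-in uncertainty estimate is one reason why such models are attractive when rigorous statistics are required.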

2.3.3. Deep Frameworks

Increasing the depth of the model (i.e., the number of layers) opens up the realm of deep learning. These models learn what features are important by themselves based solely on raw data. This is conceptually different from classical ML that uses manually defined features. Deep learning can discover structure and features in much larger datasets, and its concept has had an incredible impact on the usability of ML. The most widely used deep learning frameworks are listed in Table 4. PyTorch79 is a heavily used tensor (i.e., conceptually, a high-dimensional matrix) library for ML, allowing programmers to build vast models and make use of GPUs. Previously, the de facto deep learning framework library was TensorFlow, which is still widely used.80 Together, PyTorch and TensorFlow form the basis of most modern ML codes. Keras81 provides a user-friendly interface to TensorFlow. The PyTorch-based ξ-torch library provides differentiable functions and optimization algorithms for use in deep learning.82 An alternative framework to PyTorch and TensorFlow is Aesara, which grew from Theano,83 but its current use has been limited. Extending beyond Python, Apache MXNet supports deep learning with a wide range of programming languages (e.g., C++, R, Python).84 OpenNMT85 provides an ecosystem for neural machine translation and sequence learning, which can be applied to string-based representation (e.g., SMILES, InChI). It offers model architectures and training procedures for tasks such as string generation and translation. OpenNMT was created for natural language processing and is thus usable with string-based molecular representations.86

Table 4. OSS Available for Deep Learning.

| software | link | license |
|---|---|---|
| PyTorch79 | https://pytorch.org | BSD-style |
| TensorFlow80 | https://www.tensorflow.org | Apache-2.0 |
| Theano/Aesara83 | https://github.com/aesara-devs/aesara | custom |
| Keras81 | https://keras.io | MIT |
| ξ-torch82 | https://github.com/xitorch/xitorch | MIT |
| MXNet84 | https://github.com/apache/incubator-mxnet | Apache-2.0 |
| OpenNMT85 | https://opennmt.net | MIT |
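
Sequence models such as those offered by OpenNMT operate on tokens rather than raw characters. The sketch below shows one possible way to split a SMILES string into tokens and map them to integer indices. The regular expression is a simplified assumption that covers common SMILES syntax only, and it is not the tokenizer used by OpenNMT or by any specific publication.

```python
import re

SMILES_TOKEN = re.compile(
    r"\[[^\]]+\]"            # bracket atoms such as [NH3+] or [C@@H]
    r"|Br|Cl"                # two-letter elements of the organic subset
    r"|%\d{2}"               # two-digit ring-closure labels
    r"|[A-Za-z]"             # single-letter atoms (aromatic atoms are lowercase)
    r"|\d"                   # single-digit ring-closure labels
    r"|[=#$:\-+()/\\.@~*]"   # bonds, branches, and other symbols
)


def tokenize(smiles):
    """Split a SMILES string into tokens; fail loudly if characters are skipped."""
    tokens = SMILES_TOKEN.findall(smiles)
    if "".join(tokens) != smiles:
        raise ValueError(f"could not tokenize {smiles!r}")
    return tokens


tokens = tokenize("CC(=O)Cl")  # acetyl chloride
vocabulary = {token: index for index, token in enumerate(sorted(set(tokens)))}
encoded = [vocabulary[token] for token in tokens]
```

Treating `Cl` as a single token, rather than as `C` followed by `l`, keeps the chemical meaning intact before the sequence is passed to a model.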

2.3.4. Graph Neural Network Frameworks

A particular type of deep learning model that is specifically useful for computational chemistry is the GNN,19,48 whose most popular frameworks are listed in Table 5. The graph representation conceptually resembles a molecule’s structural formula. Consequently, GNNs are frequently used in ML models that focus on molecule-dependent features (e.g., elemental symbols and bond distances). Examples of libraries for building GNNs are the PyTorch-based PyG library87 and the TensorFlow-based graph_nets library88 (currently not peer-reviewed). The Deep Graph Library89 enables the integration of GNNs into the PyTorch, TensorFlow, and Apache MXNet frameworks.

Table 5. OSS Available for GNNs.

| software | link | license |
|---|---|---|
| PyG87 | https://github.com/pyg-team/pytorch_geometric | MIT |
| graph_nets88 | https://github.com/deepmind/graph_nets | Apache-2.0 |
| Deep Graph Library89 | https://www.dgl.ai | Apache-2.0 |
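
The correspondence between a structural formula and a graph can be sketched in a few lines of NumPy. The example encodes the heavy atoms of ethanol as nodes and its bonds as edges, then performs a single untrained neighbor-aggregation step. This is a conceptual illustration only; the GNN layers in PyG, graph_nets, or the Deep Graph Library additionally apply learned weight matrices and nonlinearities.

```python
import numpy as np

# Heavy atoms of ethanol as a graph: C0 - C1 - O2
atoms = ["C", "C", "O"]
bonds = [(0, 1), (1, 2)]

# Node features: one-hot encoding of the element type.
elements = ["C", "O"]
features = np.array([[1.0 if atom == e else 0.0 for e in elements] for atom in atoms])

# Symmetric adjacency matrix built from the bond list.
n_atoms = len(atoms)
adjacency = np.zeros((n_atoms, n_atoms))
for i, j in bonds:
    adjacency[i, j] = adjacency[j, i] = 1.0


def message_passing_step(adjacency, node_features):
    """Sum each atom's own features with those of its bonded neighbors."""
    return (adjacency + np.eye(len(adjacency))) @ node_features


updated = message_passing_step(adjacency, features)
graph_embedding = updated.sum(axis=0)  # permutation-invariant readout for the molecule
```

After one step, the central carbon carries information about both of its neighbors (`[2., 1.]`), whereas the terminal carbon only sees carbon atoms (`[2., 0.]`). Stacking several such steps lets information travel further along the bond network.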

2.4. Automating Machine Learning

ML models must be designed and configured, which is one of the central tasks for ML users. The field of automated machine learning (AutoML) tries to alleviate this task by providing systematic approaches to find the right data features, architecture, and hyperparameters of the model, such as the learning rate, activation function, optimization strategy, and loss function.

2.4.1. Feature Engineering

An important task in classical ML, as opposed to deep learning, is feature engineering, which includes the selection and extraction of high-level features based on the raw data. In deep learning, these features are learned, but oftentimes expert knowledge is used to enable shallow learning, which is much more appropriate when datasets are small. Specific tools and libraries have been developed to assist with selecting, extracting, or calculating the right features from (raw) data. We list the most widely used libraries in Table 6. Featuretools90 is a general library for this task, whereas Feature-engine91 was specifically built for scikit-learn. tsfresh extracts features for time series.92

Table 6. Libraries Used for Automated Feature Extraction.

| software | link | license |
|---|---|---|
| Featuretools90 | https://github.com/alteryx/featuretools | BSD-3-Clause |
| Feature-engine91 | https://github.com/feature-engine/feature_engine | BSD-3-Clause |
| tsfresh92 | https://github.com/blue-yonder/tsfresh | MIT |
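
As a hand-written illustration of feature engineering, the sketch below derives a few simple descriptors from a molecular formula. The function name, the chosen descriptors, and the restriction to four elements are assumptions for this example; the libraries in Table 6 automate comparable steps for tabular and time-series data.

```python
import re

# Standard atomic masses (rounded) for the elements handled by this sketch.
ATOMIC_MASS = {"H": 1.008, "C": 12.011, "N": 14.007, "O": 15.999}


def formula_features(formula):
    """Turn a simple molecular formula such as 'C2H6O' into numeric features."""
    counts = {element: 0 for element in ATOMIC_MASS}
    for element, number in re.findall(r"([A-Z][a-z]?)(\d*)", formula):
        if element not in counts:
            raise ValueError(f"element {element!r} is not supported in this sketch")
        counts[element] += int(number) if number else 1
    mass = sum(ATOMIC_MASS[element] * n for element, n in counts.items())
    n_heavy = sum(n for element, n in counts.items() if element != "H")
    features = {f"n_{element}": n for element, n in counts.items()}
    features["mass"] = mass
    features["n_heavy"] = n_heavy
    return features


ethanol = formula_features("C2H6O")
```

The raw string is thereby converted into a fixed-length set of numbers (element counts, molecular mass, and heavy-atom count) that a shallow model can consume directly.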

2.4.2. Model Selection

Finding a good model architecture (e.g., the number of layers and dimensions) is part of the neural architecture search and the model selection process. The most widely used libraries are provided in Table 7. scikit-learn71 has built-in methods to assist in model selection. Libra is a general library for model selection that supports Keras, TensorFlow, PyTorch, and scikit-learn. PyCaret93 is similar to Libra and supports scikit-learn, XGBoost, LightGBM, Optuna, Hyperopt, and others. Finally, Yellowbrick94 provides visual analysis and diagnostic tools.

Table 7. Libraries Used for Model Selection.

| software | link | license |
|---|---|---|
| scikit-learn71 | https://scikit-learn.org | BSD-3-Clause |
| Yellowbrick94 | https://github.com/DistrictDataLabs/yellowbrick | Apache-2.0 |
| Libra | https://github.com/Palashio/libra | MIT |
| PyCaret93 | https://github.com/pycaret/pycaret | MIT |
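
The principle behind model selection can be shown with k-fold cross-validation written directly in NumPy. In this sketch the candidate "architectures" are simply polynomials of different degree fitted to synthetic noisy data; the data, the fold count, and the helper name `kfold_mse` are illustrative assumptions, and the libraries in Table 7 provide far more complete tooling.

```python
import numpy as np


def kfold_mse(x, y, degree, n_folds=5, seed=0):
    """Mean squared validation error of a polynomial fit, averaged over k folds."""
    rng = np.random.default_rng(seed)
    folds = np.array_split(rng.permutation(len(x)), n_folds)
    errors = []
    for k in range(n_folds):
        test_idx = folds[k]
        train_idx = np.concatenate([folds[j] for j in range(n_folds) if j != k])
        coeffs = np.polyfit(x[train_idx], y[train_idx], degree)  # fit on training folds
        pred = np.polyval(coeffs, x[test_idx])                   # predict held-out fold
        errors.append(np.mean((pred - y[test_idx]) ** 2))
    return float(np.mean(errors))


# Synthetic data: a quadratic relationship with a small amount of noise.
rng = np.random.default_rng(42)
x = np.linspace(-3.0, 3.0, 60)
y = x**2 + rng.normal(0.0, 0.1, size=x.shape)

candidates = [1, 2, 3, 4, 5]
cv_errors = {degree: kfold_mse(x, y, degree) for degree in candidates}
best_degree = min(cv_errors, key=cv_errors.get)
```

A linear model (degree 1) underfits and shows a much larger validation error than the quadratic one, whereas higher degrees offer little or no further improvement. Comparing candidates on held-out folds, rather than on the training data, is what guards against selecting an overly flexible model.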

2.4.3. Hyperparameter Optimization

Hyperparameters are, in contrast to parameters or weights, not learned by the model but have to be preconfigured. The values of these hyperparameters directly affect the model’s resulting accuracy and should always be reported for reproducibility. Hyperparameter optimization can be done using a variety of libraries (Table 8), including Hyperopt,95 scikit-optimize,96 and Optuna.97 Auto-sklearn98 provides automatic hyperparameter tuning tools and includes visualization. An overview of hyperparameter optimization and additional tools for use with Python can be found in the review article by Bischl et al.99 (particularly sections 2, 6.5.4, and 6.5.5) and the references therein. Syne Tune100 supports many optimization methods and offers distributed computing, multifidelity methods, transfer learning, and multiobjective optimization that can simultaneously optimize not only model accuracy but also latency. Using efficient statistical models, GPyOpt101 and SMAC102 provide hyperparameter optimization using Bayesian optimization via Gaussian process models, with the aim of reducing the number of evaluations needed.

Table 8. Libraries Used for Hyperparameterization.

| software | link | license |
|---|---|---|
| Hyperopt95 | https://github.com/hyperopt/hyperopt | custom |
| scikit-optimize96 | https://scikit-optimize.github.io | BSD-3-Clause |
| Optuna97 | https://optuna.org | MIT |
| auto-sklearn98 | https://github.com/automl/auto-sklearn | BSD-3-Clause |
| Tune and Syne Tune100 | https://github.com/awslabs/syne-tune | Apache-2.0 |
| GPyOpt101 | http://sheffieldml.github.io/GPyOpt | BSD-3-Clause |
| SMAC102 | https://github.com/automl/SMAC3 | BSD-3-Clause |
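
The simplest hyperparameter optimization strategy, random search, can be written with the Python standard library alone. In the sketch below the weight `w` is the learned parameter and the learning rate is the hyperparameter being tuned; the toy data, the search range, and the number of trials are assumptions for illustration. For brevity the loss is evaluated on the same toy data, whereas in practice a held-out validation set would be used. The libraries in Table 8 replace this blind sampling with more efficient strategies.

```python
import random

X = [1.0, 2.0, 3.0, 4.0]
Y = [2.0 * x for x in X]  # toy data generated by y = 2x


def mse(w):
    """Mean squared error of the one-parameter model y = w * x."""
    return sum((w * x - y) ** 2 for x, y in zip(X, Y)) / len(X)


def train(learning_rate, n_steps=20):
    """Fit w by gradient descent; w is learned, whereas learning_rate is preset."""
    w = 0.0
    for _ in range(n_steps):
        grad = sum(2.0 * x * (w * x - y) for x, y in zip(X, Y)) / len(X)
        w -= learning_rate * grad
    return w


def random_search(n_trials=30, seed=0):
    """Sample learning rates log-uniformly from [1e-4, 1] and keep the best one."""
    rng = random.Random(seed)
    trials = []
    for _ in range(n_trials):
        learning_rate = 10.0 ** rng.uniform(-4.0, 0.0)
        loss = mse(train(learning_rate))
        trials.append({"learning_rate": learning_rate, "loss": loss})
    best = min(trials, key=lambda trial: trial["loss"])
    return best, trials


best, trials = random_search()
```

Learning rates that are too small leave the model undertrained after 20 steps, and rates that are too large make the optimization diverge, so only an intermediate range yields a low loss. Reporting the selected value, together with the search range and seed, supports the reproducibility discussed above.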

2.4.4. AutoML for Classical Machine Learning

Fully automating entire ML processes allows users to quickly try and roll out models for specific tasks with less need for in-depth knowledge about ML. However, one should be warned that the more control over the learning process is given to automated frameworks, the more vigilant one should be when analyzing the results. We mention two well-known example libraries that perform AutoML (Table 9). TPOT103 uses genetic programming to create ML pipelines with scikit-learn. AutoGOAL104 uses a framework for program synthesis for AutoML.

Table 9. Libraries Used for AutoML of Classical Machine Learning.

| software | link | license |
|---|---|---|
| TPOT103 | https://github.com/EpistasisLab/tpot | LGPL-3.0 |
| AutoGOAL104 | https://github.com/autogoal/autogoal | MIT |

2.4.5. AutoML for Deep Learning

Although in deep learning we generally do not perform feature engineering, model selection and hyperparameter optimization can still take significant time, effort, and resources. AutoML for deep learning is an active field, whose most widely used libraries are listed in Table 10. Auto-PyTorch105 and AutoKeras106 are two well-known examples that support PyTorch and Keras/TensorFlow models, respectively.

Table 10. Libraries Used for AutoML in Deep Learning.

| software | link | license |
|---|---|---|
| Auto-PyTorch105 | https://github.com/automl/Auto-PyTorch | Apache-2.0 |
| AutoKeras106 | https://github.com/keras-team/autokeras | Apache-2.0 |

3. Computational Chemistry Tools

The usage of ML to improve the primary methodological tools in computational chemistry is uniquely important since they are widely used in researching different problems. In the following, we will focus on the first-principles methods of quantum mechanics (QM) and density functional theory (DFT) and the Newtonian-based methods of molecular mechanics (MM) and molecular dynamics (MD).

3.1. Quantum Mechanics and Density Functional Theory

The fields of QM, DFT, and ML generally overlap in different ways.8,9,14,15,25,34 The largest overlap is the use of QM/DFT calculations to create reliable data for the training and validation of a model. The experimental-level accuracy that can be obtained by QM is offset by the high resource cost of the calculations. However, once these datasets are generated, they provide an exciting opportunity for training significantly faster ML models that compute a variety of wave-function-based observables (e.g., orbital energies, polarizability).8,107−110 Due to their speed, these QM-/DFT-trained ML algorithms are enabling researchers to perform MD simulations in time domains that are generally prohibitive for QM/DFT methods.111

Concerning OSS, there are several tools for performing QM/DFT calculations (Table 11). Psi4 is a sophisticated OSS that is built upon C++ and Python.112,113 Another well-known tool is NorthWest Chemistry (NWChem), which was written in Fortran and C and thus does not natively interface with Python.114,115 OpenMolcas is a modularly developed Fortran code with a Python input parser for control.116,117 Quantum ESPRESSO is a DFT-based software that focuses on material modeling and includes some Python integration in its recent version.118,119 Abacus120 and BigDFT121,122 were designed to perform DFT calculations on very large systems. A software tool that is strongly coupled with Python is the Python-based Simulations of Chemistry Framework (PySCF) library.123,124 Semiempirical software tools for modeling very large systems include MOPAC,125 DivCon,126 and DFTB+.127 Finally, additional electronic-structure-based software that is available for performing specialized modeling (e.g., MD simulations, condensed phase) includes ABINIT128 and SIESTA.129

Table 11. OSS Available for Computing QM and DFT Target Data for Training and Validation.

| software | link | license |
|---|---|---|
| Abacus120 | https://github.com/deepmodeling/abacus-develop | LGPL-3.0 |
| ABINIT128 | https://www.abinit.org | GPL-3.0 |
| BigDFT121,122 | https://bigdft.org | multiple |
| DivCon126 | http://www.merzgroup.org/divcon.html | unavailable |
| DFTB+127 | https://dftbplus.org | multiple |
| MOPAC2016125 | http://openmopac.net | unavailable |
| NWChem114,115 | https://www.nwchem-sw.org | Educational Community License-2.0 |
| OpenMolcas116,117 | https://gitlab.com/Molcas/OpenMolcas | LGPL-2.0 |
| Psi4112,113 | https://psicode.org | LGPL-3.0 and GPL-3.0 |
| PySCF123,124 | https://github.com/PySCF/PySCF | Apache-2.0 |
| Quantum ESPRESSO118,119 | https://www.quantum-espresso.org | GPL |
| SIESTA129 | https://siesta-project.org/siesta | GPL-3.0 |

Another overlap between the fields is the use of ML to optimize DFT calculations themselves130 (Table 12). For example, ML can help to optimize or replace the parameters that are embedded within DFT. With the goal of improving DFT functionals, several OSS tools have been developed, including PROPerty Prophet (PROPhet; written in C++),131 D3-GP,132 Deep Kohn–Sham (DeepKS),133,134 NeuralXC,135 NNFunctional,136,137 Differentiable Quantum Chemistry (DQC),138 JAX-DFT,139 Compressed scale-Invariant DEnsity Representation (Cider),140 Fourth-order Expansion of the X Hole,141 Symbolic Functional Evolutionary Search (SyFES),142 and CF22D (written in Fortran).143 The D3-GP workflow implements Gaussian process regression and batchwise-variance-based (as opposed to sequential-variance-based) sampling to improve D3-type dispersion corrections in DFT calculations.132 SyFES is unique in that it generates DFT functionals in a symbolic form, which makes them easier to interpret. JAX-DFT incorporates prior knowledge into the training of exchange functionals,139 and its GitHub repository links to a Google Colaboratory notebook for demonstration.

Table 12. Computational-Chemistry-Focused ML Tools for Improving DFT Functionals.

| software | link | license | public data | public model |

|---|---|---|---|---|

| CF22D143 | https://doi.org/10.5281/zenodo.7306137 | CC-BY-4.0 | Y | N |

| Cider140 | https://github.com/mir-group/CiderPress | MIT | Y | N |

| D3-GP132 | https://zenodo.org/record/7785794 | unavailable | Y | N |

| DeepKS133,134 | https://github.com/deepmodeling/deepks-kit | LGPL-3.0 | Y | N |

| DQC138 | https://github.com/diffqc/dqc | Apache-2.0 | Y | N |

| Fourth-order Expansion of the X Hole141 | https://gitlab.com/electronic-structure-udem/fourth-order-expansion-of-the-x-hole | unavailable | Y | Y |

| JAX-DFT139 | https://github.com/google-research/google-research/tree/master/jax_dft | Apache-2.0 | Y | Y |

| NeuralXC135 | https://github.com/semodi/neuralxc | BSD-3-Clause | Y | Y |

| NNFunctional136 | https://github.com/ml-electron-project/NNfunctional | MIT | Y | Y |

| PROPhet131 | https://github.com/biklooost/PROPhet | GPL-3.0 | N | N |

| SyFES142 | https://doi.org/10.5281/zenodo.6767222 | CC-BY-4.0 | Y | N |

3.2. Molecular Mechanics Force Fields

Critical to all MM-based methodologies are their employed force fields, whose state-of-the-art parameter optimization is being pursued by using ML. MM potentials are explicitly defined by Newtonian equations, which are generally divided into bonded (e.g., bonds, angles, torsion) and nonbonded (e.g., partial atomic charges and Lennard-Jones) components. Of these, the nonbonded parameters are often the isolated targets of the ML algorithms. Alternatively, the functional form of the force field equation can be replaced entirely by ML that models the potential energy surface (see section 4.3), still enabling MD simulations to be performed.
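As a rough illustration of the bonded/nonbonded split described above, the following minimal Python sketch evaluates a harmonic bond term together with Lennard-Jones and Coulomb pair terms. The functional forms are the common textbook ones. The function names, the 1/2 prefactor convention for the bond term, the Coulomb constant, and all numerical parameters are illustrative assumptions and are not taken from any force field cited here.

```python
import math

# Coulomb constant in kcal*Angstrom/(mol*e^2); a commonly used value, assumed here.
COULOMB_CONSTANT = 332.0637


def bond_energy(r, r0, k):
    """Bonded term: harmonic bond stretch, 0.5 * k * (r - r0)^2."""
    return 0.5 * k * (r - r0) ** 2


def lennard_jones_energy(r, epsilon, sigma):
    """Nonbonded term: 12-6 Lennard-Jones interaction."""
    sr6 = (sigma / r) ** 6
    return 4.0 * epsilon * (sr6 ** 2 - sr6)


def coulomb_energy(r, q_i, q_j):
    """Nonbonded term: Coulomb interaction between two partial atomic charges."""
    return COULOMB_CONSTANT * q_i * q_j / r


def pair_energy(pos_i, pos_j, q_i, q_j, epsilon, sigma):
    """Total nonbonded energy (Lennard-Jones + Coulomb) of one atom pair."""
    r = math.dist(pos_i, pos_j)
    return lennard_jones_energy(r, epsilon, sigma) + coulomb_energy(r, q_i, q_j)


# Hypothetical parameters, chosen only to exercise the functions.
e_bond = bond_energy(r=1.10, r0=1.09, k=680.0)
e_pair = pair_energy((0.0, 0.0, 0.0), (3.5, 0.0, 0.0), 0.4, -0.4, 0.15, 3.4)
print(f"bond: {e_bond:.4f} kcal/mol, nonbonded pair: {e_pair:.4f} kcal/mol")
```

In this picture, an ML parametrization tool would supply values such as `k`, `r0`, `epsilon`, `sigma`, and the charges, while the functional form itself stays fixed.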

Table 13 provides representative ML algorithms for determining nonbonded and bonded parameters. GA4AMOEBA (written in Fortran) uses a genetic algorithm and MP2 target data to parametrize polarizable force fields, specifically the electrostatic and van der Waals parameters, for use with AMOEBA.144 The force field precursors for metal–organic frameworks (FFP4MOF) tool was developed for use in materials research and is able to predict nonbonded parameters for metal-containing systems.145 Molecule-specific (i.e., ammonium perchlorate, pentafluoroethane, difluoromethane) examples of optimizing Lennard-Jones parameters through multiobjective surrogate-assisted Gaussian process regression and support vector machine workflows can be found in ref (146) and its two cited GitHub repositories. Similarly, PREMSO uses a presampling-enhanced, surrogate-assisted global evolutionary optimization strategy that allows the use of features at different scales (e.g., single-molecule and bulk-phase observables).147 Thürlemann et al. recently developed a GNN that predicts nonbonded parameters from QM target data and also performs atom typing.148 The impressive Extensible Surrogate Potential Optimized by Message-passing Algorithms (espaloma), from the Chodera lab, uses a GNN to perceive chemical environments and then predict bonded and nonbonded parameters.149

Table 13. Computational-Chemistry-Focused ML Tools for Force Field Parameter Determination.

| software | link | license | public data | public model |

|---|---|---|---|---|

| espaloma149 | https://github.com/choderalab/espaloma | MIT | Y | Y |

| GA4AMOEBA144 | https://github.com/AmYingLi/GA4AMOEBA | unavailable | Y | N |

| GNN Parametrized Forcefields148 | https://github.com/rinikerlab/GNNParametrizedFF | MIT | Y | Y |

| FFP4MOF145 | https://github.com/korolewadim/ffp4mof | MIT | Y | Y |

| PREMSO147 | https://github.com/maxm89/PREMSO-2022 | GPL-3.0 | Y | N |

Several research groups have developed ML approaches specifically for predicting partial atomic charges (PACs) (Table 14). Focusing on small molecules, the Atom-Path-Descriptor (APD) uses a new type of atomic descriptor for training random forest and extreme gradient boosting models that predict PACs.150 To predict Quantum Theory of Atoms in Molecules PACs, NNAIMQ (an NN model) was created.151 The SuperAtomicCharge model, a feed-forward NN, was written to predict QM-derived RESP, DDEC4, and DDEC78 PACs.152 For metal-containing systems, mpn_charges153 and PAC in Metal–Organic Frameworks (PACMOF)154 were developed using a message-passing NN and a random-forest approach, respectively. The PhysNet algorithm predicts both dipole moments and PACs for larger systems such as peptides, as well as their energies and forces.155 To generate PACs for even larger systems (e.g., proteins), the Electron-Passing NN (epnn) was created.156 The drude_electrostatic_dnn algorithm was developed to generate PACs for the Drude polarizable force field.157 Extending the PAC concept, ESP-DNN158 (GNN) predicts the electrostatic potential surface and was trained on B3LYP/6-311G**//B3LYP/6-31G* data. Thürlemann et al. developed an equivariant GNN approach that predicts multipoles (e.g., dipole and quadrupole); the work also includes a database of electrostatic potentials and multipoles.159
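The link between PACs and a molecular dipole moment mentioned above can be made concrete with a short sketch. It assumes a simple point-charge model in which the dipole is the charge-weighted sum of atomic positions. The function names and the two-atom test system are hypothetical, and none of the tools in Table 14 is claimed to compute dipoles in exactly this way.

```python
import math

# Conversion factor: 1 e*Angstrom expressed in debye.
E_ANGSTROM_TO_DEBYE = 4.80320


def total_charge(charges):
    """Sum of partial atomic charges; should match the molecule's net charge."""
    return sum(charges)


def dipole_moment(charges, positions):
    """Dipole vector (e*Angstrom) of point charges: sum over atoms of q_i * r_i."""
    return tuple(
        sum(q * pos[axis] for q, pos in zip(charges, positions))
        for axis in range(3)
    )


def dipole_magnitude_debye(charges, positions):
    """Magnitude of the point-charge dipole, converted to debye."""
    mu = dipole_moment(charges, positions)
    return math.sqrt(sum(c * c for c in mu)) * E_ANGSTROM_TO_DEBYE


# Hypothetical neutral diatomic: +0.5 e and -0.5 e separated by 1 Angstrom.
charges = [0.5, -0.5]
positions = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
print(total_charge(charges), dipole_magnitude_debye(charges, positions))
```

Note that the point-charge dipole is independent of the coordinate origin only when the total charge is zero, which is one reason predicted PACs are often checked against the molecule's net charge.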

Table 14. Computational-Chemistry-Focused ML Tools for Partial Atomic Charge Determination.

| software | link | license | public data | public model |

|---|---|---|---|---|

| APD150 | https://github.com/jkwang93/Atom-Path-Descriptor-based-machine-learning | unavailable | Y | N |

| DeepFMPO160 | https://github.com/giovanni-bolcato/deepFMPOv3D | MIT | Y | N |

| drude_electrostatic_dnn157 | https://github.com/mackerell-lab/drude_electrostatic_dnn | BSD-2-Clause | Y | Y |

| epnn156 | https://github.com/derekmetcalf/epnn | unavailable | Y | Y |

| EquivariantMultipoleGNN159 | https://github.com/rinikerlab/EquivariantMultipoleGNN | MIT | Y | Y |

| ESP-DNN158 | https://github.com/AstexUK/ESP_DNN | Apache-2.0 | Y | Y |

| mpn_charges153 | https://github.com/SimonEnsemble/mpn_charges | unavailable | Y | Y |

| NNAIMQ151 | https://github.com/m-gallegos/NNAIMQ | CC-BY-NC-SA-4.0 | Y | Y |

| PACMOF154 | https://github.com/snurr-group/pacmof | BSD-3-Clause New or Revised | Y | N |

| PhysNet155 | https://github.com/MMunibas/PhysNet | MIT | Y | N |

| SuperAtomicCharge152 | https://github.com/zjujdj/SuperAtomicCharge | Apache-2.0 | Y | Y |

3.3. Molecular Dynamics

Classical-physics-based MD simulations can also be used to generate data for use in ML algorithms. OSS available for performing MD simulations includes AmberTools,161 CP2K,162 GROMACS,163 LAMMPS,164 OpenMM,165 and ORAC166 (Table 15). The TorchMD software was created as a framework for MD simulations that can implement a mix of classical and ML potentials.167 PLUMED is another framework for performing and analyzing MD simulations, which interfaces with 10 MD software codes.168

Table 15. OSS Available for Computing Molecular Mechanics and Molecular Dynamics Target Data for Training and Validation.

| software | link | license |

|---|---|---|

| AmberTools161 | https://ambermd.org/AmberTools.php | GPL-3.0 (mostly) |

| CP2K162 | https://www.cp2k.org | GPL-2.0 |

| GROMACS163 | https://www.gromacs.org | LGPL-2.1 |

| LAMMPS164 | https://www.lammps.org | GPL-2.0 |

| OpenMM165 | https://openmm.org | MIT and LGPL |

| ORAC166 | http://www1.chim.unifi.it/orac | GPL |

| TorchMD167 | https://github.com/torchmd | MIT |

| PLUMED168 | https://www.plumed.org | LGPL-3.0 |

To enhance sampling of MD simulations5,17,18 (Table 16), several groups have developed ML approaches such as the Variational Dynamical Encoder (VDE)169 and Reweighted Autoencoded Variational Bayes for Enhanced Sampling (RAVE).170 As their names suggest, these algorithms focus on how encoders and decoders may be used to generate synthetic data based on known data. Learn the Effective Dynamics (LED) offers a unique approach that uses ML in conjunction with coarse-graining and atomistic simulations.171 The mapping between the coarse- and fine-grained system is achieved by using an autoencoder, while a recurrent NN advances the latent space dynamics. In a different approach, Deep Generative Markov State Model (DeepGenMSM) predicts new possible configurations for a molecular system.172 GLOW is an algorithmic workflow that uses Gaussian accelerated MD to generate structural maps, which are then passed to a convolutional NN to identify reaction coordinates of biomolecules.173 To enhance sampling of rare events, Bonati et al. developed the Deep Time-lagged Independent Component Analysis (Deep-TICA).174 In addition to their usefulness for characterizing a molecular system, collective variables are used to enhance sampling in MD simulations. Identifying collective variables associated with slow or hard-to-model modes in an MD simulation is the focus of Molecular Enhanced Sampling with Autoencoders (MESA),175 FABULOUS (genetic algorithms and NN),176 COVAEM,177 and DeepCV (deep autoencoder NN).178,179 To bypass the use of MD simulations for sampling, Atomistic Adversarial Attacks can generate molecular conformations and nonbonded configurations, which is achieved by combining uncertainty quantification, automatic differentiation, adversarial attacks, and active learning.180
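To give a feel for what "slow modes" means in the collective-variable discussion above, the sketch below ranks candidate features by their autocorrelation at a fixed lag time. This is a deliberately simple stand-in: time-lagged independent component analysis generalizes this quantity to linear combinations of features, and the listed tools go further by learning nonlinear functions. The function names and the synthetic two-feature "trajectory" are hypothetical.

```python
import math


def lagged_autocorrelation(series, lag):
    """Normalized autocorrelation of one feature at a given lag (in frames)."""
    n_pairs = len(series) - lag
    mean = sum(series) / len(series)
    variance = sum((x - mean) ** 2 for x in series) / len(series)
    covariance = sum(
        (series[t] - mean) * (series[t + lag] - mean) for t in range(n_pairs)
    ) / n_pairs
    return covariance / variance


def rank_features_by_slowness(features, lag):
    """Return feature names sorted from slowest to fastest decorrelation."""
    scores = {name: lagged_autocorrelation(x, lag) for name, x in features.items()}
    return sorted(scores, key=scores.get, reverse=True)


# Synthetic data: one slowly varying and one rapidly varying feature.
n_frames = 1000
features = {
    "slow_torsion": [math.sin(2 * math.pi * t / 200) for t in range(n_frames)],
    "fast_vibration": [math.sin(2 * math.pi * t / 7) for t in range(n_frames)],
}
print(rank_features_by_slowness(features, lag=5))
```

A feature that stays correlated with itself over long lag times is a natural candidate for biasing in enhanced sampling, because unbiased MD explores it slowly.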

Table 16. Computational-Chemistry-Focused ML Tools for Enhancing MD Simulations.

| software | link | license | public data | public model |

|---|---|---|---|---|

| Atomistic Adversarial Attacks180 | https://github.com/learningmatter-mit/Atomistic-Adversarial-Attacks | MIT | Y | Y |

| COVAEM177 | https://github.com/ai-atoms/covaem | MIT | N | N |

| DeepCV179 | https://lubergroup.pages.uzh.ch/deepcv and https://gitlab.uzh.ch/lubergroup/deepcv | MIT | Y | Y |

| DeepGenMSM172 | https://github.com/markovmodel/deep_gen_msm | unavailable | Y | N |

| Deep-TICA174 | https://github.com/luigibonati/deep-learning-slow-modes | unavailable | Y | N |

| FABULOUS176 | https://github.com/Ensing-Laboratory/FABULOUS | LGPL-3.0 | Y | N |

| GLOW173 | http://miaolab.org/GLOW | MIT | N | N |

| LED171 | https://github.com/cselab/LED | unavailable | N | N |

| MESA175 | https://github.com/weiHelloWorld/accelerated_sampling_with_autoencoder | MIT | Y | N |

| RAVE170 | https://github.com/tiwarylab/RAVE | MIT | Y | N |

| VDE169 | https://github.com/msmbuilder/vde | MIT | Y | N |

Analysis of existing MD-generated data is an additional area where ML meets computational chemistry24,44 (Table 17), primarily involving data dimensionality reduction. A general and popular OSS Python library for analysis is MDAnalysis (https://www.mdanalysis.org; GPL-2),181,182 which is used in various ML projects (e.g., MESA,175 EncoderMap,183,184 MDMachineLearning,185 RTMScore186). EncoderMap combines autoencoders with multidimensional scaling for dimensionality reduction and can generate structures in the reduced space, for example, to visually examine a protein’s conformational changes along a pathway.183,184 Other dimensionality reduction approaches for identifying metastable states are the Gaussian mixture variational autoencoder (GMVAE)187 and the stateinterpreter.188 DiffNets uses the autoencoder’s dimensionality reduction idea to identify the structural features that are predictive of biochemical differences between protein variants using MD simulations of those variants.189 Additional approaches that use existing MD trajectories to train models for predicting protein conformations (e.g., metastable states) are MDMachineLearning185 and Molearn (convolutional NN).190 The State Predictive Information Bottleneck (SPIB) algorithm learns the reaction coordinate within MD trajectories.191 A unique pixel-based approach was developed in the Interpretable Convolutional Neural Network-based deep learning framework for MD (ICNNMD) algorithm.192 ICNNMD represents protein conformations obtained from MD simulations as pixel maps that are subsequently used to perform feature extraction and then classification. Finally, it should be noted that shallow learning concepts (e.g., clustering, principal component analysis) are incorporated into the Markov State Model Python package MSMBuilder (http://msmbuilder.org; LGPL-2.1) for statistical-based predictive modeling that uses MD input data.193
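The Markov State Model idea mentioned at the end of the paragraph above can be sketched in a few lines. The example assumes the MD frames have already been assigned to discrete states (e.g., by clustering) and estimates a transition matrix by counting transitions at a chosen lag and normalizing each row. The function `transition_matrix` is a hypothetical helper written for this illustration and is not MSMBuilder's API. It is the plain, non-reversible maximum-likelihood estimate.

```python
def transition_matrix(dtraj, n_states, lag=1):
    """Estimate a row-stochastic transition matrix from a discrete trajectory.

    dtraj    : list of integer state labels, one per MD frame
    n_states : total number of discrete states
    lag      : lag time in frames (must be >= 1)
    """
    if lag < 1:
        raise ValueError("lag must be at least 1 frame")
    counts = [[0] * n_states for _ in range(n_states)]
    # Sliding-window counting of transitions i -> j separated by `lag` frames.
    for i, j in zip(dtraj[:-lag], dtraj[lag:]):
        counts[i][j] += 1
    matrix = []
    for row in counts:
        total = sum(row)
        # States that were never left get an all-zero row in this simple sketch.
        matrix.append([c / total if total else 0.0 for c in row])
    return matrix


# Hypothetical two-state trajectory (e.g., "folded" = 0, "unfolded" = 1).
dtraj = [0, 0, 1, 1, 0, 0, 1, 1]
for row in transition_matrix(dtraj, n_states=2, lag=1):
    print(row)
```

Each row gives the estimated probability of moving from one state to every other state after one lag time. Production packages typically add reversibility constraints and error estimates on top of this counting step.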

Table 17. Computational-Chemistry-Focused ML Tools for Analyzing MD Simulations.

| software | link | license | public data | public model |

|---|---|---|---|---|

| DiffNets189 | https://github.com/bowman-lab/diffnets | LGPL-3.0 | Y | N |

| EncoderMap183,184 | https://github.com/AG-Peter/EncoderMap | LGPL-3.0 | Y | N |

| GMVAE187 | https://github.com/yabozkurt/gmvae | unavailable | Y | Y |

| ICNNMD192 | https://github.com/Jane-Liu97/ICNNMD | unavailable | Y | N |

| MDMachineLearning185 | https://github.com/Imay-King/MDMachineLearning | MIT | Y | N |

| Molearn190 | https://github.com/Degiacomi-Lab/molearn | GPL-3.0 | Y | N |

| SPIB191 | https://github.com/tiwarylab/State-Predictive-Information-Bottleneck | MIT | N | N |

| Stateinterpreter188 | https://github.com/luigibonati/md-stateinterpreter | MIT | Y | N |

3.4. Docking, Protein–Ligand Interactions, and Virtual Screening

The role that ML has within the docking community was reviewed in refs (16), (30), and (43). OSS for performing small molecule docking to proteins includes AutoDock Vina,194 MOLS 2.0,195 rDock,196 SEED,197 and Smina198 (Table 18). GNINA, a recently released tool, docks molecules using an ensemble of trained convolutional NNs as a scoring function.199,200 To facilitate the integration of ML with docking, the DOCKSTRING package (https://dockstring.github.io; Apache 2.0) was developed, which enables learned models to be easily benchmarked.201 This package contains Python wrappers, a large dataset of scores and poses, and benchmarking tasks and employs AutoDock Vina as its docking engine. The CABSdock software focuses on the flexible docking of peptides to proteins.202 In addition to peptide–protein docking, the LightDock software can also perform DNA–protein and protein–protein docking.203,204 Very recently, the GNN EDM-Dock was developed; it generates protein–ligand poses from distance matrices and implicitly incorporates protein flexibility through coarse-graining of the protein.205 Researchers interested in computer-aided drug design11,27,37,51 should also be aware of the Open Drug Discovery Toolkit (https://github.com/oddt/oddt; BSD-3),206 a Python code that implements the ML scoring functions NNscore207 and RFscore197 (both of which were developed in the early 2010s).

Table 18. OSS Available for Docking Calculations.

| software | link | license |

|---|---|---|

| AutoDock Vina194 | https://vina.scripps.edu | Apache 2.0 |

| CABSdock202 | https://bitbucket.org/lcbio/cabsdock/src/master | MIT |

| LightDock203,204 | https://lightdock.org and https://github.com/lightdock/lightdock | GPL-3.0 |

| MOLS 2.0195 | https://sourceforge.net/projects/mols2-0 | LGPL-2.1 |

| Open Drug Discovery Toolkit206 | https://github.com/oddt/oddt | BSD-3-Clause |

| rDock196 | http://rdock.sourceforge.net | LGPL-3.0 |

| SEED197 | https://gitlab.com/CaflischLab/SEED | GPL-3.0 |

| Smina198 | https://sourceforge.net/projects/smina | GPL-2.0 |

| GNINA200 | https://github.com/gnina/gnina | Apache-2.0 and GPL-2.0 |

Concerning scoring functions, significant ML research has focused on improving them for use in docking software and for virtual screening (Table 19). A recent assessment indicated that learned scoring functions can improve predictions, but diligence must be maintained since their performance depends upon the training dataset used (e.g., the degree of protein sequence similarity).208 Such functions include RF-Score-VS,209 Δvina eXtreme Gradient Boosting (XGB),210 AEScore (Δ-learning),211 ET-Score (extremely randomized trees),212 ΔLinF9 XGB,213 PharmRF (random forests),214 RTMScore,186 XLPFE,215 DeepRMSD+Vina (multilayer perceptron),216 and GB-Score (Gradient Boosting Trees).217 For docking ligands to RNA, the AnnapuRNA scoring function (a k-nearest neighbors and feed-forward NN scoring function) was developed, which uses coarse-grained representations in the modeling.218 Based on KDEEP’s three-dimensional (3D) convolutional NN,219 DeepBSP is an algorithm that predicts the most likely native complex structure from an ensemble of poses generated by docking software.220
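Several of the scoring functions above follow a Δ-learning pattern, in which a model learns a correction to an existing baseline score rather than the full score. The sketch below illustrates only that pattern. It swaps in a one-variable least-squares line for the NN or gradient-boosting models used by the cited tools, and the function names, the single descriptor, and all numbers are hypothetical.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def fit_delta_model(baseline_scores, descriptors, reference_values):
    """Learn the residual (reference - baseline) as a function of one descriptor."""
    residuals = [ref - base for ref, base in zip(reference_values, baseline_scores)]
    return fit_line(descriptors, residuals)


def corrected_score(baseline_score, descriptor, model):
    """Delta-learning prediction: baseline score plus the learned correction."""
    slope, intercept = model
    return baseline_score + slope * descriptor + intercept


# Hypothetical training data: docking scores, one descriptor, measured affinities.
baseline = [-5.0, -6.0, -7.0, -8.0]
descriptor = [0.0, 1.0, 2.0, 3.0]
reference = [-4.0, -3.0, -2.0, -1.0]

model = fit_delta_model(baseline, descriptor, reference)
print(corrected_score(-9.0, 4.0, model))
```

Because the model only has to capture what the baseline gets wrong, the learned term can stay comparatively simple, and the physics encoded in the baseline scoring function is retained.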

Table 19. Machine-Learned Scoring Functions and Tools for Docking and Virtual Screening.

| software | link | license | public data | public model |

|---|---|---|---|---|

| AEScore211 | https://github.com/bigginlab/aescore | BSD-3-Clause | Y | Y |

| AnnapuRNA218 | https://github.com/filipspl/AnnapuRNA | GPL-3.0 | Y | Y |

| DeepBSP220 | https://github.com/BaoJingxiao/DeepBSP | GPL-3.0 | Y | Y |

| DeepRMSD+Vina216 | https://github.com/zchwang/DeepRMSD-Vina_Optimization | unavailable | Y | Y |

| ΔLinF9XGB213 | https://github.com/cyangNYU/delta_LinF9_XGB | GPL-3.0 | Y | Y |

| ΔvinaXGB210 | https://github.com/jenniening/deltaVinaXGB | GPL-3.0 | Y | Y |

| EDM-Dock205 | https://github.com/MatthewMasters/EDM-Dock | MIT | N | Y |

| ET-Score212 | https://github.com/miladrayka/ET_Score | GPL-3.0 | Y | Y |

| GB-Score217 | https://github.com/miladrayka/GB_Score | AGPL-3.0 | Y | Y |

| NNforDocking221 | https://github.com/mksmd/NNforDocking | MIT | Y | N |

| PharmRF214 | https://github.com/Prasanth-Kumar87/PharmRF | unavailable | Y | Y |

| RF-Score-VS209 | https://github.com/oddt/rfscorevs_binary | BSD-3-Clause | Y | N |

| RTMScore186 | https://github.com/sc8668/RTMScore | MIT | Y | Y |

| XLPFE215 | https://github.com/LinaDongXMU/XLPFE | unavailable | Y | N |

Similar to the goals of docking, several groups have created algorithms for predicting protein–ligand interactions (see Table 20 and the review in ref (20)). DeepDTA,222 DeepConv-DTI,223 DeepCDA,224 and DeepScreen225 make use of a convolutional NN. Using two stacked graph convolution modules (for learning intramolecular and intermolecular interactions, respectively), InteractionGraphNet predicts protein–ligand interactions and binding affinities.226 The ML-ensemble-docking algorithm explored whether ML could aggregate docking scores for better binding predictions.227 GNN_DTI228 and DeepNC229 predict ligand–receptor interactions using GNNs. SSnet is built upon a deep learning framework that uses a protein’s secondary structure to predict how a ligand might bind.230 STAMP-DPI uses a protein’s sequence to generate a predicted contact map.231 The NNforDocking code uses an NN to predict possible binding pockets, followed by AutoDock Vina to obtain a possible protein–ligand configuration.221 Of the algorithms listed above, GNN_DTI uses 3D structural information through an adjacency network and a distance-aware graph attention mechanism.228 Protein–Ligand Interaction Fingerprints (ProLIF) is a Python analysis tool for analyzing molecular dynamics trajectories, docking simulations, and experimental structures.232 LUNA is another interaction analysis Python tool that implements Extended Interaction FingerPrint (EIFP), Functional Interaction FingerPrint (FIFP), and Hybrid Interaction FingerPrint (HIFP) for both protein–ligand and protein–protein complexes, outputting a PyMOL session for visualization.233
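The interaction-fingerprint idea behind tools such as ProLIF and LUNA can be illustrated with a heavily simplified sketch. It encodes, for each residue, a single bit indicating whether any ligand atom lies within a distance cutoff, and then compares two poses by Tanimoto similarity. Real interaction fingerprints distinguish interaction types (hydrogen bonds, hydrophobic contacts, and so on). The function names, the 4.0 Å cutoff, and the toy coordinates are assumptions made for illustration and do not reflect either tool's API.

```python
import math


def contact_fingerprint(ligand_atoms, residues, cutoff=4.0):
    """One bit per residue: 1 if any ligand atom is within `cutoff` Angstrom.

    residues : dict mapping a residue label to a list of (x, y, z) atom positions
    """
    bits = []
    for name in sorted(residues):
        in_contact = any(
            math.dist(lig, res) <= cutoff
            for lig in ligand_atoms
            for res in residues[name]
        )
        bits.append(1 if in_contact else 0)
    return bits


def tanimoto(fp_a, fp_b):
    """Tanimoto similarity between two equal-length bit vectors."""
    both = sum(1 for a, b in zip(fp_a, fp_b) if a and b)
    either = sum(1 for a, b in zip(fp_a, fp_b) if a or b)
    return both / either if either else 1.0


# Hypothetical pocket with three residues and two ligand poses.
pocket = {
    "ALA1": [(0.0, 0.0, 0.0)],
    "GLY2": [(10.0, 0.0, 0.0)],
    "SER3": [(0.0, 3.0, 0.0)],
}
pose_a = contact_fingerprint([(1.0, 0.0, 0.0)], pocket)
pose_b = contact_fingerprint([(8.0, 0.0, 0.0)], pocket)
print(pose_a, pose_b, tanimoto(pose_a, pose_b))
```

Comparing fingerprints in this way lets one ask whether a docked pose or an MD frame reproduces the contacts seen in a reference structure, without superimposing coordinates.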

Table 20. ML Tools for Protein–Ligand Interactions.

| software | link | license | public data | public model |

|---|---|---|---|---|

| DeepCDA224 | https://github.com/LBBSoft/DeepCDA | unavailable | Y | N |

| DeepConv-DTI223 | https://github.com/GIST-CSBL/DeepConv-DTI | GPL-3.0 | Y | N |

| DeepDTA222 | https://github.com/hkmztrk/DeepDTA | unavailable | Y | N |

| DeepNC229 | https://github.com/thntran/DeepNC | unavailable | Y | N |

| DeepScreen225 | https://github.com/cansyl/DEEPscreen | GPL-3.0 | Y | N |

| GNN_DTI228 | https://github.com/jaechanglim/GNN_DTI | unavailable | Y | N |

| InteractionGraphNet226 | https://github.com/zjujdj/InteractionGraphNet/tree/master | unavailable | Y | Y |

| SSnet230 | https://github.com/ekraka/SSnet | MIT | Y | Y |

| STAMP-DPI231 | https://github.com/biomed-AI/STAMP-DPI | GPL-3.0 | Y | Y |

Several recent reviews have been published on virtual screening and ML—for example, see refs (23), (28), and (30). In addition to the scoring functions mentioned above, other goals have been pursued. GATNN is a molecular-graph-focused NN tool that enables scaffold hopping during its virtual screening.234 A fully automated tool for performing virtual screening is PyRMD, which uses a random matrix discriminant to screen and identify potential active ligands based on trained biological activity data.235 This algorithm was designed to be usable by both coding experts and nonexperts. RealVS uses transfer learning and graph attention networks to improve predictions and enable a level of model interpretability.236 An interesting deep-learning-based tool for use in virtual screening is DeepCoy, a GNN that enables researchers to generate property-matched decoy molecules based on a known active ligand input.237 The TocoDecoy tool also creates decoys but uses a conditional recurrent NN.238

4. Selected Research-Focused Topics

4.1. Protein Binding Site Prediction

Very closely related to the ideas just covered but often categorized separately is the goal of predicting small-molecule binding pockets on proteins, whose representative ML algorithms are given in Table 21. One such approach, P2Rank, uses a random forest approach and was developed using Apache’s Groovy and Java programming languages.239,240 The kalasanty algorithm uses image segmentation via a 3D U-net convolutional NN to predict binding sites.241 DeepSurf is a 3D convolutional residual NN that uses a protein surface representation of local 3D voxelized grids to identify binding pockets.242 PUResNet employs a U-net variant residual NN that predicts binding sites based on structure similarity.243 The DeepPocket algorithm employs Fpocket (https://github.com/Discngine/fpocket; MIT)244 and a 3D convolutional NN to identify sites, ranking them using a classification model and mapping the binding sites’ shapes using a segmentation U-net-like model.245 Also using a U-net architecture in a 3D NN, the InDeep algorithm focuses on predicting binding pockets in and near the protein–protein interaction (PPI) interface.246 Using a primary sequence as input, BiRDS is a residual NN that predicts a protein’s amino acids that are most likely to form a binding region.247 PointSite uses an atom-level point cloud segmentation approach in a submanifold sparse convolution NN.248 Mentioned in section 3.4, NNforDocking uses an NN to make a binding pocket prediction as part of its workflow.221

Table 21. ML Tools for Predicting Possible Binding Pockets in Proteins.

| software | link | license | public data | public model |

|---|---|---|---|---|

| BiRDS247 | https://github.com/devalab/BiRDS | unavailable | Y | Y |

| DeepPocket245 | https://github.com/devalab/DeepPocket | MIT | Y | Y |

| DeepSurf242 | https://github.com/stemylonas/DeepSurf | AGPL-3.0 | Y | Y |

| InDeep246 | https://gitlab.pasteur.fr/InDeep/InDeep | unavailable | Y | Y |

| kalasanty241 | https://gitlab.com/cheminfIBB/kalasanty | BSD-3-Clause | Y | Y |

| P2Rank239,240 | https://github.com/rdk/p2rank | MIT | Y | N |

| PointSite248 | https://github.com/PointSite/PointSite | MIT | Y | Y |

| PUResNet243 | https://github.com/jivankandel/PUResNet | unavailable | Y | Y |

4.2. Protein–Protein Interactions and Protein Folding

The prediction of PPIs has seen a lot of ML activity, as reviewed in refs (42), (46), (50), and (249) and given in Table 22. PPI prediction can occur at different resolution levels, for example, sequence-based versus structure-based ML approaches.42 Depending on the focus, the output can range from identification of the primary sequence components (i.e., the interacting amino acids) to 3D “docked” structures. ML-based PPI prediction algorithms include pipgcn,250 masif (convolutional NN),251 GraphPPIS (graph NN),252 Struct2Graph (graph attention network),253 and DeepHomo2 (web server and downloadable package).254 To identify a protein’s possible interfacial binding region, Fout et al. developed pipgcn using graph NN, where each amino acid residue is described by a node.250 Masif employs the unique approach of computing a protein–surface fingerprint, which is then used to predict PPIs (it can also predict protein–ligand interactions). Concerning ML scoring functions for protein–protein docking, DOcking decoy selection with Voxel-based deep neural nEtwork (DOVE) scans PPIs with a 3D voxel, resulting in their ranking.255 iScore provides a scoring function built using a support vector machine and random-walk graph kernels approach.256,257 DeepRank258 and DeepRank-GNN259 were built using a 3D convolutional NN and GNN, respectively. TopNetTree is a novel algorithm that predicts the binding affinity changes for a PPI upon an amino acid mutation.260

Table 22. ML Tools for Exploring Protein–Protein Interactions and Protein Folding.

Data and model are no longer available through the provided link.

Protein structure prediction (i.e., protein folding) is another related topic that is making significant advances due to ML.21,49 MELD uses Bayesian inference to predict protein structure using a limited amount of experimental information and physics-based modeling.261 DeepECA is an end-to-end convolutional NN that predicts a protein’s intramolecular contacts and its secondary structure.262 AlphaFold263 and RoseTTAFold264 are well-known, with well-maintained GitHub repositories. A 2022 paper by Bryant et al. describes how AlphaFold2 can be used to predict heterodimeric protein complexes.265 DMPfold2 is a third approach, which uses a multiple sequence alignment as input to generate a folded structure prediction in an ultrafast time frame.266 While not directly involving ML code, ColabFold (https://github.com/sokrypton/ColabFold; MIT) is OSS that couples MMseqs2,267,268 a many-against-many sequence searching and clustering algorithm, with AlphaFold2/RoseTTAFold and can be run in Google’s Colaboratory.269

In a closely related topic, DLPacker’s goal is to predict amino acid side-chain conformations using a 3D convolutional U-net architecture.270 One possible application of the Int2Cart algorithm is structure validation (e.g., of a prediction made using the above methods). Int2Cart uses a gated recurrent NN that detects internal coordinate correlation and refines bond distances and bending angles for a given set of torsion rotations.271

4.3. Energies and Forces

In many situations, understanding an experimental observation is significantly aided by elucidating a portion of the system’s potential energy (PE) surface, the corresponding forces, and its free energies. Consequently, much research is devoted to modeling PE using physics-based approaches (i.e., QM and MM) and now data-driven ML8,12,22,25,29,31,33,38,39 (Table 23). The resulting learned potentials and force fields can be used to predict conformational energies or perform MD simulations. Isayev and co-workers developed Atomic Simulation Environment–Accurate NeurAl networK engINe for Molecular Energies (ASE-ANI),272−274 TorchANI,275 and AIMNet276 as approaches for realizing universal ML interatomic potentials for neutral organic molecules.39 Additional algorithms for modeling PE surfaces include DeePMD-kit,277,278 PES-Learn,279 SchNetPack,280−282 Symmetric Gradient Domain Machine Learning (sGDML),283,284 ml-dft,285 Fast Learning of Atomistic Rare Events (FLARE),286 Module for Ab Initio Structure Evolution (MAISE) (written in C but has a Python wrapper available called MAISE-NET),287 KIM-based learning-integrated fitting framework (KLIFF),288 and AisNet.289 SchNetPack predicts not only PE but also other observables such as atomic forces, formation energies, and dipole moments.282 Building on SchNet281 and using a trainable encoding module, AisNet can predict the energy and forces for molecules and crystalline materials (e.g., crystalline ceramics and multicomponent alloys).289 Also building on SchNet,281 the g4mp2-atomization-energy algorithm was developed for predicting atomization energies.290
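The relationship between a learned PE surface and the forces needed for MD can be sketched as follows. Forces are the negative gradient of the energy with respect to atomic positions. ML potentials usually obtain this gradient by automatic differentiation, whereas the sketch below uses central finite differences on a toy harmonic energy function that stands in for a trained model. The function names, the toy energy, and its parameters are hypothetical.

```python
import math


def numerical_forces(energy_fn, coords, h=1e-5):
    """Forces as the negative gradient of energy_fn, via central differences.

    energy_fn : callable taking a list of [x, y, z] positions, returning a float
    coords    : list of (x, y, z) positions
    """
    forces = []
    for i in range(len(coords)):
        f_atom = []
        for axis in range(3):
            plus = [list(p) for p in coords]
            minus = [list(p) for p in coords]
            plus[i][axis] += h
            minus[i][axis] -= h
            gradient = (energy_fn(plus) - energy_fn(minus)) / (2.0 * h)
            f_atom.append(-gradient)
        forces.append(tuple(f_atom))
    return forces


def toy_energy(coords, k=100.0, r0=1.0):
    """Stand-in for a learned potential: harmonic energy of a diatomic."""
    r = math.dist(coords[0], coords[1])
    return 0.5 * k * (r - r0) ** 2


# Stretched diatomic: the forces should pull the two atoms back together.
coords = [(0.0, 0.0, 0.0), (1.2, 0.0, 0.0)]
for force in numerical_forces(toy_energy, coords):
    print(force)
```

Any energy model with the same call signature could be passed as `energy_fn`, although finite differences need two energy evaluations per coordinate and are therefore mainly useful for checking analytic or automatically differentiated forces.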

Table 23. ML Tools for Predicting Molecular Energies, Solvation Energies, and Binding Affinities.

The TorsionNet algorithm enables the prediction of PE curves as a function of torsion angle rotation and was trained using active learning.291 However, a license for the OpenEye Toolkit is needed for its full implementation. The component-based machine-learned intermolecular force field (CLIFF) algorithm can predict intermolecular PEs by combining physics-based potential forms with ML-based parametrization.292 BAND-NN predicts a molecule’s energy and enables geometry optimization by dividing the energy into bond, angle, dihedral, and nonbonded terms within the NN.293 With a different focus, the D3-GP workflow implements Gaussian process regression and batchwise-variance-based (as opposed to sequential-variance-based) sampling to improve D3-type dispersion corrections in DFT calculations.132 In the domain of materials science, fast_reorg_energy_prediction makes use of the ChiRo ML model to predict the reorganization energy.294

Several groups have worked on using ML to predict binding affinities. Ligand–receptor binding affinities can be computed using Pafnucy,295 DeepAffinity,296 GLXE,297 OctSurf,298 OnionNet-2,299,300 and Hybrid Attention-Based Convolutional Neural Network (HAC-Net).301 Pafnucy’s convolutional NN makes use of a 3D grid input representation with 1 Å resolution for affinity prediction. DeepAffinity was trained to predict binding affinities based on IC50, Ki and Kd.296 The GLXE297 algorithm combines MM/GBSA with shallow and deep ML approaches to predict binding free energies. The OctSurf algorithm computes the 3D surface areas of the protein pocket and ligand to predict a resulting binding affinity.298 The two-dimensional convolutional NN OnionNet-2 generates rotation-free pairwise contacts between protein and ligand atoms to predict binding free energies.299,300 HAC-Net is one of the newest algorithms, which combines the concept of attention with a 3D convolutional NN to compute protein–ligand binding affinity.301
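The 3D grid input representation mentioned above for Pafnucy can be illustrated with a minimal voxelization sketch. It maps atom positions onto a cubic grid with 1 Å resolution centered on a chosen point and counts the atoms per voxel. The 20 Å box size, the function name, and the use of plain occupancy counts are assumptions made for illustration. Practical models usually store several feature channels per voxel rather than a single count.

```python
import math


def voxelize(atoms, center, box_size=20.0, resolution=1.0):
    """Map atom positions onto a cubic grid centered on `center`.

    Returns (n, grid), where n is the number of voxels per edge and grid is a
    sparse dict mapping (i, j, k) voxel indices to the number of atoms inside.
    Atoms outside the box are ignored.
    """
    n = int(round(box_size / resolution)) + 1
    half = box_size / 2.0
    grid = {}
    for atom in atoms:
        # Shift into box coordinates and round to the nearest grid point.
        index = tuple(
            math.floor((x - x0 + half) / resolution + 0.5)
            for x, x0 in zip(atom, center)
        )
        if all(0 <= i < n for i in index):
            grid[index] = grid.get(index, 0) + 1
    return n, grid


# Hypothetical atoms around a pocket centered at the origin.
atoms = [(0.0, 0.0, 0.0), (0.2, 0.0, 0.0), (9.9, 0.0, 0.0), (30.0, 0.0, 0.0)]
n, grid = voxelize(atoms, center=(0.0, 0.0, 0.0))
print(n, grid)
```

A dense array of shape (n, n, n, channels) built from such indices is the kind of input a 3D convolutional NN consumes. Because the grid depends on how the complex is oriented, grid-based models often rely on rotational data augmentation or on rotation-free features.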

DeepMoleNet is an atomwise NN that predicts a molecule’s internal energy, thermodynamic energies at 298.15 K, HOMO and LUMO energies, and zero-point vibrational energy as well as dipole moment, polarizability, electronic spatial extent, and heat capacity.302 Its model and training data are not directly released but can be requested if used noncommercially. Finally, ML is used to predict solvation free energies, as represented by the following algorithms: Hybrid FEP/ML,303 MLSolvA,304 A3D-PNAConv-FT,305 chemprop_solvation,306 and MolSolv.307

4.4. Molecule Generation

ML has also been used to generate suggestions for new molecules4,13,40 (Table 24). These hypothetical molecules represent new ideas that synthetic chemists can pursue toward various ends (e.g., drug design). Variational autoencoders provide one path for generating new ideas, as implemented in LigDream308 and the Molecular Graph Conditional Variational Autoencoder (MGCVAE).309 LigDream creates structures based on a seed molecule’s volume by coupling a shape autoencoder to convolutional and recurrent NNs.308 MGCVAE includes the use of a GNN to propose molecules that have specific properties (e.g., log P).309 DeepGraphMolGen performs a multiobjective optimization using reinforcement learning based on a graph convolution policy approach for generating molecules with desired properties.310 A unique concept for 3D molecule generation is to include a representation of the receptor’s topology in the model training, as realized by the LiGAN algorithm.311 Boström and co-workers developed Deep Fragment-based Multi-Parameter Optimization (DeepFMPO),160 which generates fragments (i.e., residues) that can be combined to form a new molecule. Extending this idea to biopolymers, Graph-Based Protein Design, an autoregressive language model, was created to generate an amino acid sequence that should fold into a desired 3D structure.312 MOLEcule Generation Using reinforcement Learning with Alternating Reward (MoleGuLAR) is an algorithm that proposes molecules for targeting specific protein binding sites using reinforcement learning.313 Using an ML transformer model that was trained on pairs of similar bioactive molecules, the transform-molecules algorithm can generate new molecules that ideally would have higher potency against a specific protein target.314 Kao et al. developed QuMolGAN and related models to explore the use of quantum generative adversarial networks to create new molecules.315

Table 24. ML Tools for Designing New Molecules.

| software | link | license | public data | public model |
|---|---|---|---|---|
| DeepFMPO160 | https://github.com/giovanni-bolcato/deepFMPOv3D | MIT | Y | N |
| DeepGraphMolGen310 | https://github.com/dbkgroup/prop_gen | unavailable | Y | N |
| DeLinker316 | https://github.com/oxpig/DeLinker | BSD-3-Clause | Y | Y |
| DEVELOP317 | https://github.com/oxpig/DEVELOP | BSD-3-Clause | Y | Y |
| DRLinker319 | https://github.com/biomed-AI/DRlinker | unavailable | Y | Y |
| Graph-Based Protein Design312 | https://github.com/jingraham/neurips19-graph-protein-design | MIT | Y | N |
| LigDream308 | https://github.com/compsciencelab/ligdream | AGPL-3.0 | Y | Y |
| LiGAN311 | https://github.com/mattragoza/liGAN | GPL-2.0 | Y | Y |
| MGCVAE309 | https://github.com/mhlee216/MGCVAE | unavailable | Y | N |
| MoleGuLAR313 | https://github.com/devalab/MoleGuLAR | unavailable | Y | Y |
| QuMolGAN315 | https://github.com/pykao/QuantumMolGAN-PyTorch | MIT | Y | N |
| SyntaLinker318 | https://github.com/YuYaoYang2333/SyntaLinker | MIT | Y | N |
| transform-molecules314 | https://github.com/pfizer-opensource/transform-molecules | Apache-2.0 | Y | N |

With a slightly different end goal, several algorithms approach the creation of new molecules by designing chemical linkers to combine fragments. The graph-based DeLinker NN generates possible linkers for connecting two residues.316 Combining DeLinker with a 3D pharmacophore concept, DEep Vision-Enhanced Lead OPtimisation (DEVELOP) was developed to generate potentially more impactful molecular suggestions.317 SyntaLinker learns and uses the “rules” for linking fragments via syntactic patterns embedded in SMILES strings.318 Using reinforcement learning, DRLinker designs linkers that join two desired residues together, whose resulting molecules are tailored towards specific attributes.319

4.5. Chemical Reactions and Synthesis

Synthetic chemists now have the opportunity to use ML to predict the products from chemical reactions and design synthetic routes to obtain a desired outcome32,47,52 (Table 25). For predicting products, Molecular Transformer uses SMILES strings as input reactants to an autoregressive encoder–decoder.320 Based on a GNN active learning architecture, DeepReac+ was developed to predict reaction products and to help optimize experimental conditions for organic reactions.321 Also using SMILES strings as input and a k-nearest neighbor classifier, the differential reaction fingerprint (DRFP) algorithm was developed for reaction classification and yield prediction.322 The competing-reactions algorithm focuses on predicting the energetic barrier height of possible chemical pathways based on reactants.323 The OpenNMT-py algorithm uses multitask transfer learning to predict the stereoisomeric products of enzyme-catalyzed reactions using a SMILES input.86 Employing a graph-to-graph transformer architecture, G2GT is a retrosynthesis predictor.324 As a pipeline tool, AiZynthTrain can be used to train new synthesis prediction models that are usable by the AiZynthFinder OSS (https://github.com/MolecularAI/aizynthfinder; MIT).325,326
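As a generic illustration of how a k-nearest neighbor classifier assigns a class to a reaction fingerprint, consider the sketch below. It does not reproduce DRFP: the fingerprints are made-up bit tuples, the class labels are placeholders, and the Hamming distance and the value k = 3 are assumptions chosen for simplicity.

```python
from collections import Counter

def hamming(a, b):
    """Number of positions at which two equal-length bit tuples differ."""
    return sum(x != y for x, y in zip(a, b))

def knn_predict(query, training_set, k=3):
    """Majority vote over the k training fingerprints closest to `query`.

    training_set: list of (fingerprint, label) pairs (hypothetical data).
    """
    neighbors = sorted(training_set, key=lambda item: hamming(query, item[0]))[:k]
    votes = Counter(label for _, label in neighbors)
    return votes.most_common(1)[0][0]

# Made-up fingerprints and placeholder class labels, for illustration only.
training = [
    ((1, 1, 0, 0, 0, 0), "class_A"),
    ((1, 1, 1, 0, 0, 0), "class_A"),
    ((0, 0, 0, 1, 1, 0), "class_B"),
    ((0, 0, 0, 1, 1, 1), "class_B"),
    ((0, 0, 1, 1, 0, 0), "class_B"),
]
predicted = knn_predict((1, 1, 0, 0, 0, 1), training)
```

The classifier has no trainable parameters, so its quality depends entirely on how well the fingerprint separates reaction classes.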

Table 25. ML Tools for Predicting the Products of Reactants and Optimizing Synthesis.

| software | link | license | public data | public model |
|---|---|---|---|---|
| AiZynthTrain326 | https://github.com/MolecularAI/aizynthtrain | Apache-2.0 | N | N |
| competing-reactions323 | https://github.com/ferchault/competing-reactions | unavailable | Y | N |
| DeepReac+321 | https://github.com/bm2-lab/DeepReac | Apache-2.0 | Y | Y |
| DRFP322 | https://github.com/reymond-group/drfp | MIT | Y | Y |
| G2GT324 | https://github.com/Anonnoname/G2GT_2 | unavailable | Y | N |
| Molecular Transformer320 | https://github.com/pschwllr/MolecularTransformer | MIT | Y | Y |
| OpenNMT-py86 | https://github.com/reymond-group/OpenNMT-py | MIT | Y | N |
| ReTReK327 | https://github.com/clinfo/ReTReK | MIT | N | N |

4.6. Conformation Generation

Elucidating and understanding 3D structures and the conformational space of molecules is an important goal in many research fields (e.g., spectroscopy and drug design), whose difficulty increases with a molecule’s number of rotatable bonds. The ML community has generated several algorithms that help predict the structures of conformations (Table 26). The DL4Chem-geometry algorithm uses a conditional variational graph autoencoder to predict conformations by learning the underlying PE surface, which utilizes Cartesian coordinates as part of the input data.328 GraphDG predicts conformations by combining a conditional variational autoencoder with a Euclidean distance geometry algorithm, resulting in an approach that is invariant to rotation and translation.329 Keeping the invariance goal in mind, ConfVAE was developed using bilevel programming to provide an end-to-end generation approach.330 The same group also developed ConfGF, which adds the idea of gradient fields (analogous to force fields) and Langevin dynamics to their ML workflow.331 Auto3D addresses the challenge of sampling configurational stereoisomers when using a SMILES string for generating 3D conformers; it is trained on the authors’ atomistic neural network potentials (i.e., AIMNet, ANI-2x, ANI-2xt) and is able to identify the lowest-energy conformer.332 The MolTaut algorithm generates possible tautomer geometries, performs subsequent optimization using the ANI-2x ML model, and ranks the results based on energies (i.e., internal and solvation).307
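The invariance to rotation and translation mentioned for distance-based approaches can be checked numerically. The sketch below does not implement GraphDG or any other tool listed here; it only demonstrates, for made-up coordinates, that the matrix of pairwise interatomic distances is unchanged when a structure is rotated and shifted. The helper names are hypothetical.

```python
import math

def distance_matrix(coords):
    """Pairwise Euclidean distances between all points."""
    return [[math.dist(a, b) for b in coords] for a in coords]

def rotate_z(coords, angle):
    """Rotate all points about the z axis by `angle` (radians)."""
    c, s = math.cos(angle), math.sin(angle)
    return [(c * x - s * y, s * x + c * y, z) for x, y, z in coords]

def translate(coords, shift):
    """Shift all points by the vector `shift`."""
    return [tuple(p + d for p, d in zip(point, shift)) for point in coords]

# Made-up coordinates (angstroms) for a three-atom fragment.
original = [(0.0, 0.0, 0.0), (0.96, 0.0, 0.0), (-0.24, 0.93, 0.0)]
moved = translate(rotate_z(original, 0.7), (1.0, -2.0, 3.5))

max_difference = max(
    abs(d0 - d1)
    for row0, row1 in zip(distance_matrix(original), distance_matrix(moved))
    for d0, d1 in zip(row0, row1)
)
```

Here `max_difference` is zero up to floating-point rounding, whereas the Cartesian coordinates themselves clearly differ between `original` and `moved`.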

Table 26. ML Tools for Exploring the Conformational Space of Molecules.

| software | link | license | public data | public model |
|---|---|---|---|---|
| Auto3D332 | https://github.com/isayevlab/Auto3D_pkg | MIT | Y | Y |
| ConfGF331 | https://github.com/DeepGraphLearning/ConfGF | MIT | Y | N |
| ConfVAE330 | https://github.com/MinkaiXu/CGCF-ConfGen | unavailable | Y | Y |
| DL4Chem-geometry328 | https://github.com/nyu-dl/dl4chem-geometry | BSD-3-Clause | Y | Y |
| GraphDG329 | https://github.com/gncs/graphdg | MIT | Y | N |
| MolTaut307 | https://github.com/xundrug/moltaut | GPL-2.0 | Y | Y |

4.7. Spectral Data

A long-standing goal of computational chemistry is the modeling of spectral data, with the recent ML contributions given in Table 27. As one of its goals, SchNarc can predict the UV spectrum by modeling a molecule’s transition dipole moments and excited-state energies using a continuous-filter convolutional NN.333 ML_UVvisModels is an algorithm that extends SchNet,290 SolTranNet,334,335 Chemprop-IR (https://github.com/gfm-collab/chemprop-IR; MIT), and a model developed by Ghosh et al.336 to predict UV–vis spectra.337 The prediction of electronic spectra was explored in the GMM-NEA algorithm, which uses probabilistic machine learning.338 An intriguing application reported in the GMM-NEA paper was the identification of anomalous QM calculations that could lead to incorrect spectra predictions.

Table 27. ML Tools for Predicting Spectra.

| software | link | license | public data | public model |
|---|---|---|---|---|
| CANDIY-spectrum339 | https://github.com/chopralab/candiy_spectrum | unavailable | Y | N |
| FTIRMachineLearning340 | https://github.com/Ohio-State-Allen-Lab/FTIRMachineLearning | Apache-2.0 | Y | N |
| GMM-NEA338 | https://github.com/lucerlab/GMM-NEA | LGPL-2.1 | Y | N |
| MLforvibspectroscopy341 | https://github.com/elizabeththrall/MLforPChem/tree/main/MLforvibspectroscopy | CC-BY-SA-4.0 | Y | N |
| ML_UVvisModels337 | https://github.com/PNNL-CompBio/ML_UVvisModels | BSD-2-Clause | Y | Y |
| SchNarc333 | https://github.com/schnarc/schnarc | MIT | Y | N |

Not strictly coming from the computational chemistry community, but aligned with the goal of assisting experimentalists, is the use of ML to help assign functional groups to recorded spectra. For Fourier transform infrared (FTIR) spectroscopy and mass spectrometry, CANDIY-spectrum was developed, which uses a multilayer perceptron NN.339 Focusing solely on FTIR spectra, 15 functional group identification models were created using the FTIRMachineLearning algorithms.340 With a focus on educating bachelor students (and thus a valuable resource for learning), the MLforvibspectroscopy repository contains Jupyter notebooks that demonstrate how one can build a model for identifying functional groups from vibrational frequencies.341

4.8. pKa

For predicting a molecule’s pKa using ML (Table 28), OPERA,342,343 Machine-learning-meets-pKa,344 MolGpKa,345 pKAI,346 pkasolver,347 and DeepKa348 approaches are available as OSS. The pKa models of OPERA consist of a support vector machine, an extreme gradient boosting model, and a four-layer fully connected NN. The Machine-learning-meets-pKa model predicts macroscopic pKa values for monoprotic molecules. MolGpKa and pkasolver use a convolutional GNN to make pKa predictions. The pKAI346 model was developed to predict the pKa values of amino acids within a protein. It was trained on the change in pKa values (i.e., ΔpKa) computed when a water-immersed amino acid residue was placed in the protein environment relative to that of its neutral form. To predict the pKa of proteins, one can use DeepKa, which was recently trained and validated using 23817 and 2735 data values, respectively.348,349

Table 28. ML Tools for Predicting pKa.

| software | link | license | public data | public model |
|---|---|---|---|---|
| DeepKa348,349 | https://gitlab.com/yandonghuang/deepka | GPL-3.0 | Y | N |
| Machine-learning-meets-pKa344 | https://github.com/czodrowskilab/Machine-learning-meets-pKa | MIT | Y | N |
| MolGpKa345 | https://github.com/xundrug/molgpka | MIT | Y | Y |
| OPERA343 | https://github.com/NIEHS/OPERA | MIT | Y | Y |
| pKAI346 | https://github.com/bayer-science-for-a-better-life/pKAI | MIT | Y | Y |
| pkasolver347 | https://github.com/mayrf/pkasolver | MIT | Y | Y |

5. A Foray into Additional Topics

In addition to the OSS described above, several additional projects warrant mention (Table 29) but are not easily classified into the above categories. Of particular note is DeepChem, a Python library that was developed to simplify the creation of machine and deep learning models for use in life sciences.350 DeepChem’s deep learning algorithms can be used with Keras, TensorFlow, PyTorch, and Jax frameworks and can include shallow learning libraries like sklearn.

Table 29. ML Tools for Exploring Miscellaneous Topics.

Table footnote categories: general package; molecular fingerprints; molecular similarity; ionization energy; partition coefficient; multiple and diverse observables; metal–organic framework stability; aqueous solubility; ADMET; various interesting and more isolated concepts.

Molecular Fingerprints

There are several existing OSS for generating molecular fingerprints (i.e., representations) that can be used in ML,351,352 most notably OpenBabel353 and RDKit.354 While these tools are not ML algorithms, they are frequently used in ML projects. An ML tool for generating fingerprints is PretrainModels, a self-supervised learning algorithm that uses a bidirectional encoder transformer for reading input SMILES strings.355

Molecular Similarity

Computing molecular similarity is a topic that often arises in pharmaceutical research. As part of a virtual screening project, VS-SVM (implemented in MATLAB) uses support vector machines to predict pairwise similarity.356 Published in 2022, MLKRR is a similarity-based model built using the QM9 dataset and a metric learning approach for kernel ridge regression, and it was used to predict atomization energies.357
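For readers new to the topic, a common way to quantify the similarity of two molecular fingerprints is the Tanimoto (Jaccard) coefficient. The sketch below is a generic illustration and is not the method used by VS-SVM or MLKRR; the fingerprints are represented as made-up sets of "on" bit positions, and returning 0.0 for two empty fingerprints is a convention chosen here.

```python
def tanimoto(bits_a, bits_b):
    """Tanimoto coefficient between two fingerprints given as sets of on-bits.

    Defined as |A intersect B| / |A union B|, ranging from 0 (no shared bits)
    to 1 (identical bit sets).
    """
    a, b = set(bits_a), set(bits_b)
    union = len(a | b)
    if union == 0:
        return 0.0  # convention chosen here for two empty fingerprints
    return len(a & b) / union

# Made-up fingerprints: positions of the bits that are set.
fingerprint_1 = {1, 2, 3, 4}
fingerprint_2 = {3, 4, 5, 6}
similarity = tanimoto(fingerprint_1, fingerprint_2)  # 2 shared bits out of 6 in total
```

In this example two of the six distinct bits are shared, so the similarity is one third.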

ADMET