Abstract

Across research disciplines, cluster randomized trials (CRTs) are commonly implemented to evaluate interventions delivered to groups of participants, such as communities and clinics. Despite advances in the design and analysis of CRTs, several challenges remain. First, there are many possible ways to specify the causal effect of interest (eg, at the individual-level or at the cluster-level). Second, the theoretical and practical performance of common methods for CRT analysis remains poorly understood. Here, we present a general framework to formally define an array of causal effects in terms of summary measures of counterfactual outcomes. Next, we provide a comprehensive overview of CRT estimators, including the t-test, generalized estimating equations (GEE), augmented-GEE, and targeted maximum likelihood estimation (TMLE). Using finite sample simulations, we illustrate the practical performance of these estimators for different causal effects and when, as commonly occurs, there are limited numbers of clusters of different sizes. Finally, our application to data from the Preterm Birth Initiative (PTBi) study demonstrates the real-world impact of varying cluster sizes and targeting effects at the cluster-level or at the individual-level. Specifically, the relative effect of the PTBi intervention was 0.81 at the cluster-level, corresponding to a 19% reduction in outcome incidence, and was 0.66 at the individual-level, corresponding to a 34% reduction in outcome risk. Given its flexibility to estimate a variety of user-specified effects and ability to adaptively adjust for covariates for precision gains while maintaining Type-I error control, we conclude that TMLE is a promising tool for CRT analysis.

Keywords: cluster randomized trials, clustered data, data-adaptive adjustment, group randomized trials, hierarchical data, targeted maximum likelihood estimation

1 ∣. INTRODUCTION

Cluster randomized trials (CRTs) provide an opportunity to assess the population-level effects of interventions that are randomized to groups of individuals, such as communities, clinics, or schools. These groups are commonly called clusters. The choice to randomize clusters, instead of individuals, is often driven by the type of intervention as well as practical considerations.1 For example, interventions to improve medical practices are often randomized at the hospital or clinic-level to reduce logistical burden and to minimize potential contamination between arms if individual patients were instead randomized. The design and conduct of CRTs have improved considerably,2-5 and results from CRTs have been widely published in public health, education, policy, and economics literature.6 However, a recent review found that only 50% of CRTs were analyzed with appropriate methods.7

Due to the hierarchical nature of the data and the correlation of participant outcomes within clusters, the analysis of CRTs is fundamentally more complicated than for individually randomized trials.1,7 To start, there are many ways to define the causal effect of interest in CRTs. We may, for example, be interested in the effect for the sample enrolled in the CRT or for a wider target population. Furthermore, we may be interested in the effect at the individual-level or cluster-level. As detailed below, the individual- and cluster-level effects can diverge markedly under “informative cluster size,”8,9 occurring when cluster size modifies the intervention effect. Finally, various summary measures of arm-specific outcomes (eg, weighted means) and their contrasts (eg, difference or ratio) may be of interest. Altogether, the causal effect should be determined by the study’s goal and primary research question.10-13 This is in line with the International Council for Harmonization (ICH)-E9(R1) guidance for trial protocols to explicitly state the “estimand,” including the target population, comparison conditions, endpoint, and summary measure.14 Adhering to this guidance ensures the statistical estimator follows from the target estimand, which follows from the trial’s objective. Neglecting this guidance risks letting the statistical approach determine the effect estimated and thus determine which research question is answered.

For the analysis of CRTs, statistical estimation and inference must respect that the cluster is the independent unit. Ignoring the dependence of observations within a cluster can lead to power calculations based on the wrong sample size and underestimates of the SE. Analyses ignoring clustering may also inappropriately attribute the impact of a cluster-level covariate to the intervention and, together with variance underestimation, result in inflated Type-I error rates. Many analytic approaches are available to account for the dependence within a cluster; examples include conducting a cluster-level analysis or applying a correction factor.1-4,7 Once we have committed to an analytic approach that aligns with our research question and addresses clustering, the adjustment of baseline covariates is often considered to improve precision and, thereby, statistical power. In an individually randomized trial setting, Benkeser et al recently demonstrated an 18% savings in sample size that could be achieved by including baseline covariates in the analysis.15 Likewise, using real data from a CRT, Balzer et al recently showed the adjusted analysis was five times more efficient than an unadjusted analysis for the same parameter.16 While our focus is on the potential of covariate adjustment to improve precision, we note that in other settings covariate adjustment may be essential to reducing bias due to missingness, selection, or restricted randomization.16-25

Fortunately, many methods are available to incorporate covariates for improved efficiency when estimating intervention effects in CRTs. Examples include well-established methods, such as generalized estimating equations (GEE) and covariate-adjusted residuals estimator (CARE), as well as more recent developments, such as targeted maximum likelihood estimation (TMLE) and Augmented-GEE (Aug-GEE).1,11,26-30 While these methods differ in their exact implementation, each aims to improve the CRT’s statistical power by controlling for individual- or cluster-level covariates when fitting the “outcome regression”: the conditional expectation of the outcome given the randomized intervention and the adjustment variables. As detailed below, these algorithms naturally estimate distinct causal effects, again highlighting the importance of pre-specifying the target estimand and then choosing the optimal estimator of that effect. Previous literature has used simulation studies to compare the attained power and Type-I error rate of various approaches to CRT analysis (eg, References 31 and 32). However, to the best of our knowledge, these comparisons have largely excluded the more recent approaches of Aug-GEE and TMLE.

This paper aims to provide a general framework for defining causal effects in CRTs and to assess the comparative performance of analytic methods for estimation of those effects. Building on prior work in CRT analysis (eg, References 1,5,21,24,25,32-34), our key contributions are as follows. First, we demonstrate the utility of a nonparametric structural causal model, accounting for clustering, to derive counterfactuals and define a variety of causal effects of potential interest. Using this causal model framework, we examine the impact of varying cluster sizes when defining the target causal effect and discuss identification of each causal effect as a function of the observed data distribution (ie, a statistical estimand). Next, we review recently developed CRT estimators along with well-established ones, emphasizing their natural target of inference. Specifically, we describe each algorithm’s ability to estimate marginal effects on the additive or relative scale and at the individual- or cluster-level, while also adjusting for covariates to improve precision. To the best of our knowledge, this is the first paper to describe several implementations of TMLE for the analysis of CRTs; these TMLEs allow for estimation of individual-level effects with cluster-level data and, conversely, for estimation of cluster-level effects with individual-level data. Also to the best of our knowledge, this is the first paper using finite sample simulations to provide a head-to-head comparison of these TMLEs, overall and under informative cluster size. Importantly, this work is motivated by a real data application with 20 clusters of widely variable size.

For an alternative and complementary presentation using potential outcomes and a “design-based” perspective, we refer the reader to Su and Ding,32 who focus on effects defined for the sample and on the absolute scale (ie, difference in mean outcomes) as well as estimation using weighted least squares regression. Here, we define and estimate effects on both the absolute and relative scale and for both the sample population and the larger target population. Additionally, our work is applicable regardless of the outcome-type (ie, binary, count, or continuous) and to trials with more complex designs (eg, pair-matched). While prior work has focused on asymptotic theory and provided simulation results with a fairly large number of clusters, here, we focus on the practical application of these estimators and evaluate their performance in simulations with limited numbers of clusters.

As a motivating example, we consider the Preterm Birth Initiative (PTBi) study, a maternal-infant CRT which took place in 20 health facilities across Kenya and Uganda (ClinicalTrials.gov: NCT03112018). The trial assessed whether a facility-based intervention, designed to improve the uptake of evidence-based practices, was effective in reducing 28-day mortality among preterm infants. An important feature of PTBi was the widely varying cluster sizes; specifically, the median number of mother-infant dyads within a facility was 236 and ranged from 29 to 447. Following PTBi, we focus on a setting where individual participants are grouped into clusters (eg, patients in a health facility). However, our discussion and results are equally applicable to other hierarchical data structures that may be observed in a CRT. Examples include households within a community, classrooms within a school, and hospitals within a district.

The remainder of the paper is organized as follows. In Section 2, we use a nonparametric structural equation model to provide a broad approach to formally defining causal effects in CRTs. We discuss how the primary endpoint may be defined at the individual-level or at the cluster-level, often as an aggregate of individual-level outcomes. We highlight how these endpoints can differ in terms of interpretation and magnitude, especially under the setting of informative cluster size. We additionally address the distinction between effects defined for the study sample vs a target population. In Section 3, we discuss several CRT estimation methods. For each method, we describe its target of inference and its use of baseline covariates to improve statistical precision. In Section 4, we provide two simulation studies to evaluate the finite sample performance of common CRT estimators and to demonstrate the distinction between different causal effects. In Section 5, we apply these methods to estimate intervention effects for the PTBi study and highlight the real-world impact of varying cluster sizes. We conclude in Section 6 with a brief discussion.

2 ∣. DEFINING CAUSAL EFFECTS IN CRTS

We begin by formalizing the notation that will be used throughout. We index the cluster, such as a community or hospital, by . The study clusters could be randomly sampled from a larger target population of clusters or, due to practical constraints, selected for convenience from a set of candidate clusters. In either case, there is a real or theoretical target population of clusters to which we may want to make inferences. Below, we explicitly discuss the definition and estimation of the population versus sample effects. In all settings, the selection of clusters should be reflected in the CONSORT diagram. Each cluster is comprised of a finite set of individuals (ie, participants), which are indexed . The cluster size, denoted , could be constant across clusters, but more often varies by cluster. The study participants could be randomly sampled within a cluster or be a census of all persons in a cluster. Discussion of more complex settings with systematic sampling or other forces of selection bias is important but beyond the scope of this paper.16,20-23 Here, the study participants are representative of or comprise the cluster. Thus, for a given cluster, is fixed and finite.

In each cluster, denotes the set of baseline covariates for the study participants. Additional baseline covariates related to the cluster are denoted ; these could be summaries of individual-level characteristics or may have no individual-level counterpart.28 For ease of notation, we include the cluster size , a random variable, in . These cluster-level and individual-level covariates are assumed not to be impacted by the intervention, which is randomized to clusters in a CRT. In the case of a pair-matched trial, which may increase study power,1,33,35 randomization occurs within pairs of clusters matched on characteristics expected to be predictive of the primary endpoint. Throughout, we will use as an indicator that cluster was randomized to the intervention. The outcome of interest is , which for simplicity we assume is measured for all participants in cluster . Extensions to handling missing data, time-dependent covariates, censoring, and selection bias are important, but beyond the scope of this paper.16-18,20-23

2.1 ∣. Hierarchical structural causal model

We now use Pearl’s nonparametric structural equation model36 to represent the hierarchical data generating process of a CRT. As detailed in Balzer et al,28 the following model encodes independence between clusters, but makes no restrictions on the dependence of participants within a cluster.

| (1) |

Here, () denote the set of functions that determine the values of the measured random variables: the cluster-level covariates , the individual-level covariate matrix , the cluster-level intervention assignment , and the vector of individual-level outcomes . These functions also account for unmeasured factors (). By design in a CRT, the unmeasured factors determining the intervention assignment are independent of others. These functions are also nonparametric with the exception of , the function to generate the cluster-level intervention. For example, in a two-armed trial with equal allocation probability, we have with . In contrast, the function to generate the outcome vector is left unspecified and can take any form of the “parent” variables () and unmeasured factors . Importantly, the cluster size , included in , can influence the outcome vector in complex ways. For example, very large and very small clusters may have poorer outcomes. Additionally, the intervention effect may be attenuated or, alternatively, enhanced in the largest and smallest clusters. Beyond the cluster-level covariates and intervention (), there are additional unmeasured and measured factors influencing and inducing dependence in the outcomes in a cluster. For example, the joint error term induces correlation among participants’ outcomes within a cluster. Additionally, within cluster , an individual’s outcome may depend on the covariates of others in the same cluster . In other settings, the dependence between participants in a cluster might be more restricted; for further details, we refer the reader to Balzer et al.28
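To make the hierarchical data generating process concrete, the following minimal Python sketch draws clusters from one hypothetical process that is compatible with the description above. All variable names, distributions, and coefficients (the size range, the urban indicator, the logistic outcome model, and the size-dependent treatment effect) are illustrative assumptions and are not taken from the PTBi study or from the simulations reported later.

```python
import math
import random

def simulate_cluster(rng, p_treat=0.5):
    """Draw one cluster from a simple, hypothetical hierarchical process."""
    # Cluster-level covariates (assumed): cluster size and an urban indicator.
    size = rng.randint(30, 450)
    urban = 1 if rng.random() < 0.5 else 0
    # Individual-level covariate for each participant (assumed standard normal).
    w = [rng.gauss(0.0, 1.0) for _ in range(size)]
    # Treatment is randomized at the cluster level, independently of covariates.
    a = 1 if rng.random() < p_treat else 0
    # A shared unmeasured cluster factor induces within-cluster correlation.
    u = rng.gauss(0.0, 0.5)
    # Cluster size modifies the treatment effect (informative cluster size).
    effect = -0.8 if size > 240 else -0.2
    y = []
    for w_j in w:
        logit = -1.5 + 0.3 * w_j + 0.4 * urban + effect * a + u
        p = 1.0 / (1.0 + math.exp(-logit))
        y.append(1 if rng.random() < p else 0)
    return {"size": size, "urban": urban, "W": w, "A": a, "Y": y}

rng = random.Random(2023)
trial = [simulate_cluster(rng) for _ in range(20)]
```

Each element of `trial` holds the cluster-level covariates, the individual-level covariates, the cluster-level treatment, and the vector of individual-level outcomes for one cluster. Clusters are drawn independently of one another, while outcomes within a cluster share the unmeasured factor `u`.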

We now consider the data generating process for the PTBi study, where the cluster corresponds to a health facility and the “individual” to a mother-infant dyad. For cluster , we measure facility-level baseline characteristics , including the average monthly delivery volume, facility preparedness assessment score, staff to delivery ratio, and community-type (ie, urban vs rural). The facility is then randomly assigned to intervention () or control (). When a mother delivers her infant, the covariates for the mother-infant dyad are collected. These include the mother’s characteristics, such as age, parity, and receiving a cesarean section (C-section), and the infant’s characteristics, such as sex, weight, length, and arm circumference. Again, we assume these covariates are not impacted by the intervention , even though they are measured after randomization. Finally, the infant’s vital status is recorded; is an indicator of infant death within 28 days. Over the course of study follow-up, we observe many such deliveries, but for the primary population of interest, we restrict to pre-term births, defined as born before 37 weeks of gestation. This process is repeated for the sample size of facilities.

2.2 ∣. Counterfactuals and target causal effects

We generate counterfactual outcomes by replacing the structural equation in causal model (Equation 1) with our desired intervention.36 Let be the counterfactual outcome for individual in cluster if, possibly contrary-to-fact, their cluster received treatment-level . In the PTBi study, represents the 28-day vital status for infant in facility if that facility had been randomized to the intervention arm (), while represents the 28-day vital status for infant in facility if that facility had been randomized to the control arm ().

We can also define counterfactual outcomes at the cluster-level by taking aggregates of the individual-level ones. Many such summary measures are possible. In line with common practice,1,28,32-34,37,38 we focus on weighted sums of the participants from cluster :

| (2) |

Often, this weight is selected to be the inverse of the cluster size and, thus, constant across participants in a cluster: for . With this choice, is the average counterfactual outcome for the participants in cluster . For a binary individual-level outcome, using the inverse cluster size yields a cluster-level outcome corresponding to a proportion or probability. In PTBi, for example, is the counterfactual cumulative incidence of death by 28-days if, possibly contrary-to-fact, facility received treatment-level . Of course, other weighting schemes can be used to summarize the individual-level counterfactual outcomes to the cluster-level.
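Written out, with notation assumed here purely for illustration ($Y_{ij}(a)$ for the counterfactual outcome of participant $j$ in cluster $i$, $\alpha_{ij}$ for the user-specified weight, and $N_i$ for the cluster size), the weighted sum and its inverse-cluster-size special case would read roughly as follows:

```latex
Y_i^c(a) = \sum_{j=1}^{N_i} \alpha_{ij}\, Y_{ij}(a),
\qquad
\alpha_{ij} = \frac{1}{N_i}
\;\Longrightarrow\;
Y_i^c(a) = \frac{1}{N_i} \sum_{j=1}^{N_i} Y_{ij}(a).
```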

By applying a summary measure to the distribution of counterfactual outcomes, we define the causal effect corresponding to our research query. A wide variety of causal parameters can be expressed as the empirical mean over the sample:34,37-41

| (3) |

where again is the user-specified weight. Throughout, superscript denotes a summary of cluster-level outcomes, and superscript denotes a sample parameter. As the number of clusters grows (), the sample parameter converges to the expectation over the target population of clusters:

| (4) |

In words, is the expected cluster-level counterfactual outcome if all clusters in the population had been assigned to treatment-level , whereas in Equation (3) is the average cluster-level counterfactual outcome for the clusters in the CRT. For the PTBi study and weights , represents the expected incidence of 28-day mortality among preterm infants if all health facilities in the target population had been assigned to treatment arm , while represents the average incidence if the health facilities included in the study had been assigned to treatment arm .

By taking contrasts of these treatment-specific causal parameters, we can define causal effects on any scale of interest. For example, we may be interested in the relative effect for the clusters included in the study: . Alternatively, we may be interested in their difference at the population-level (a.k.a., the average treatment effect): . For simplicity we refer to contrasts defined using Equation (3) or (4) as “cluster-level effects.”

Letting be the total number of participants in the CRT, we can also use Equation (3) to define causal effects at the individual-level by setting :

| (5) |

As sample size grows (), this converges to the expectation over the target population of clusters, each containing a finite number of participants:

| (6) |

In words, is the expected individual-level outcome if all clusters in the target population received treatment-level , whereas in Equation (5) is the average individual-level outcome for the participants in the CRT. In the PTBi study, represents the counterfactual risk of mortality for a preterm infant if all health facilities in the target population received treatment-level , while represents the counterfactual proportion of preterm infants who would die if the health facilities included in the study had been assigned to treatment arm . As before, we can take the difference or ratio of these treatment-specific parameters to define causal effects. For simplicity we refer to contrasts defined using Equation (5) or (6) as “individual-level effects.”

In summary, we can define a wide variety of causal parameters by considering alternative summary measures of the individual-level or cluster-level counterfactual outcomes. Further generalizations are available in Appendix A of the Data S1. When the cluster size varies (ie, ), causal parameters giving equal weight to clusters (eg, Equation 3) will generally differ from causal parameters giving equal weight to participants (eg, Equation 5). As a toy example, suppose we have clusters of varying sizes, specifically for and . Further suppose the counterfactual probability of the individual-level outcome depends on cluster size, such that the total number of outcomes () is 2 for clusters and 7500 for cluster . Then using the inverse cluster size as weight (), the cluster-level sample parameter, given in Equation (3), would be = (2/10 + 2/10 + 2/10 + 2/10 + 7500/10 000) ÷ 5 = 0.31. In contrast, the individual-level sample parameter, given in Equation (5), would be = (2 + 2 + 2 + 2 + 7500) ÷ (10 + 10 + 10 + 10 + 10 000) = 0.748. While this is an extreme example, it illustrates the potential divergence of the treatment-specific means when cluster size varies.
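The arithmetic of the toy example can be checked with a few lines of Python. The variable names are illustrative; the cluster sizes and outcome counts are those stated above (four clusters of size 10 with 2 outcomes each, and one cluster of size 10 000 with 7500 outcomes).

```python
sizes = [10, 10, 10, 10, 10_000]
events = [2, 2, 2, 2, 7_500]

# Cluster-level parameter: average the within-cluster proportions,
# so every cluster receives equal weight.
cluster_means = [e / n for e, n in zip(events, sizes)]
cluster_level = sum(cluster_means) / len(cluster_means)

# Individual-level parameter: pool all participants,
# so every participant receives equal weight.
individual_level = sum(events) / sum(sizes)

print(round(cluster_level, 2))     # 0.31
print(round(individual_level, 3))  # 0.748
```

The large cluster contributes one fifth of the cluster-level parameter but nearly all of the individual-level parameter, which is why the two values differ so sharply.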

Additionally, since causal effects are defined through contrasts of these treatment-specific means, effects defined at the cluster-level or individual-level can also be meaningfully different when cluster size varies. The potential divergence in these causal effects is exacerbated under informative cluster size, occurring when the intervention effect is modified by cluster size.8,9 This scenario is explored in detail in the second simulation study and real data example, below. Nonetheless, it is worth emphasizing that even if the cluster size is constant (ie, ), the cluster-level and individual-level effects have subtly different interpretations, as discussed previously. In observational studies, failing to recognize that effects defined at the aggregate-level (ie, at the cluster-level) may differ from effects defined at the individual-level is known as the “ecological fallacy.”42
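As a small illustration of informative cluster size, the sketch below contrasts the cluster-level and individual-level relative effects for a hypothetical set of five clusters in which only the largest cluster benefits from the intervention. The sizes and counterfactual risks are invented for illustration, and `relative_effects` is a helper defined here, not a function from any package.

```python
def relative_effects(sizes, risk_treated, risk_control):
    """Return (cluster-level, individual-level) relative effects from
    per-cluster counterfactual risks under treatment and control."""
    n_clusters = len(sizes)
    total = sum(sizes)
    # Equal weight per cluster.
    cluster_rr = (sum(risk_treated) / n_clusters) / (sum(risk_control) / n_clusters)
    # Equal weight per participant (clusters weighted by their size).
    ind_treated = sum(s * r for s, r in zip(sizes, risk_treated)) / total
    ind_control = sum(s * r for s, r in zip(sizes, risk_control)) / total
    return cluster_rr, ind_treated / ind_control

sizes = [10, 10, 10, 10, 1000]
risk_control = [0.2, 0.2, 0.2, 0.2, 0.2]
risk_treated = [0.2, 0.2, 0.2, 0.2, 0.1]  # effect only in the largest cluster

cluster_rr, individual_rr = relative_effects(sizes, risk_treated, risk_control)
```

Under these assumed values the cluster-level relative effect is 0.90, whereas the individual-level relative effect is about 0.52, because the individual-level contrast is dominated by the one large cluster where the intervention works.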

For completeness in the above, we defined both sample-specific and population-level measures. For ease of comparison of analytic methods, we focus on the population-level effects (Equations 4 and 6) for the remainder of the paper and refer the reader to References 37-41,43 for further discussion on the trade-offs between targeting population vs sample effects. In brief, the sample effect might be more appealing when clusters are selected for convenience and can be estimated more precisely than the population effect.

2.3 ∣. Observed data and identification of causal effects

For a given cluster, the observed data are the set of measured cluster-level covariates, the matrix of individual-level covariates, the randomized treatment, and the vector of individual-level outcomes:

We assume the observed data are generated by sampling times from a data generating process compatible with the above causal model (Equation 1). This provides a link between the causal model and the statistical model, which is the set of possible distributions of the observed data.11 The causal model encodes that the cluster-level treatment is randomized, but does not otherwise place restrictions on the joint distribution of observed data, denoted . Importantly, there are not any parametric restrictions on how the outcome vector is generated; instead, it may be any function of the covariates (), the intervention , and unmeasured factors . Altogether, our statistical model is semi-parametric.

As with the counterfactual outcome vector , we can summarize the observed outcome vector in a variety of ways. We again consider weighted sums of the individual-level outcomes within a cluster:

| (7) |

where matches the definition of the cluster-level counterfactual outcome in Equation 2. For the remainder of the paper, we focus on the cluster-level outcome defined as the empirical mean within each cluster, corresponding to weights for . However, as previously discussed, we can consider alternative weighting schemes depending on our research question.

To identify the expected counterfactual outcome as a function of the observed data distribution and define our target statistical estimand, we require the following two assumptions. Both are satisfied by design in a CRT. First, there must be no unmeasured confounding, such that . Second, there must be a positive probability of receiving each treatment-level: . Given these conditions are met in a CRT, we can express the cluster-level causal parameter as the expected cluster-level outcome under the treatment-level of interest , where subscript 0 is used to denote the observed data distribution ; a proof is provided in Reference 28. Likewise, the individual-level causal parameter equals the expected individual-level outcome under treatment-level of interest: , recognizing the slight abuse of notation because the observed data are at the cluster-level.

We can gain efficiency in CRTs by adjusting for baseline covariates (eg, References 1,7,29, and 44). Specifically, the treatment-specific expectation of the cluster-level outcome can be expressed as the conditional expectation of the cluster-level outcome given the treatment-level of interest and the baseline covariates (), averaged over the covariate distribution: . In practice, we cannot directly adjust for the entire matrix of individual-level covariates during estimation. However, we can still improve precision by including lower-dimensional summary measures of in the adjustment set or by aggregating the individual-level conditional mean outcome to the cluster-level (details in Appendix B of the Data S1).28 For simplicity, we use to denote either approach to including individual-level covariates in our cluster-level statistical estimand, defined as

| (8) |

As previously discussed, under the randomization and positivity assumptions, both holding by design, this equals the treatment-specific mean of the cluster-level counterfactual outcomes .
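The adjusted estimand averages an arm-specific conditional mean over the covariate distribution of all clusters. A minimal sketch of that idea, assuming a single binary cluster-level covariate so that the conditional mean can be estimated by simple stratum averages, is given below. The data, the dictionary keys (`E`, `A`, `Yc`), and the helper `adjusted_mean` are hypothetical; this is a nonparametric standardization shown for intuition only, not the TMLE discussed later.

```python
from collections import defaultdict

def adjusted_mean(clusters, a):
    """Average the arm-a stratum means of the cluster-level outcome over
    the covariate distribution of all clusters in the trial."""
    by_stratum = defaultdict(list)
    for c in clusters:
        if c["A"] == a:
            by_stratum[c["E"]].append(c["Yc"])
    total = 0.0
    for c in clusters:
        observed = by_stratum.get(c["E"])
        if not observed:
            raise ValueError("no clusters in arm %r with covariate %r" % (a, c["E"]))
        # Predicted outcome for this cluster had it been assigned to arm a.
        total += sum(observed) / len(observed)
    return total / len(clusters)

# Hypothetical trial: E is a binary cluster covariate, Yc a cluster-level proportion.
clusters = [
    {"E": 0, "A": 1, "Yc": 0.10}, {"E": 0, "A": 1, "Yc": 0.12},
    {"E": 0, "A": 0, "Yc": 0.20}, {"E": 0, "A": 0, "Yc": 0.22},
    {"E": 1, "A": 1, "Yc": 0.30}, {"E": 1, "A": 0, "Yc": 0.40},
    {"E": 1, "A": 0, "Yc": 0.44}, {"E": 1, "A": 0, "Yc": 0.42},
]
adjusted_rr = adjusted_mean(clusters, 1) / adjusted_mean(clusters, 0)
```

In this invented example the covariate happens to be imbalanced across arms, so the adjusted ratio (about 0.65) differs from the ratio of raw arm means (about 0.52). In a real CRT, randomization balances covariates in expectation and adjustment is used for precision rather than bias control.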

Likewise, our statistical estimand for the treatment-specific mean of the individual-level counterfactual outcomes is

| (9) |

again acknowledging the slight abuse of notation, because subscript 0 denotes the distribution of the cluster-level data . Our individual-level estimand is the conditional expectation of the individual-level outcome given the treatment-level of interest a, the cluster-level covariates and the individual-specific covariates , averaged over the covariate distribution. For both estimands (Equations 8 and 9), we emphasize that covariate adjustment is being used for efficiency gains only and not to control for confounding or other sources of bias, such as missing outcomes or selection.16-18,20-23

As before, we take contrasts of the cluster-level or individual-level estimands corresponding to our causal effect of interest. To give context for the methods comparison, we will focus on the relative scale for the remainder of the paper; however, our discussion is equally applicable to other scales (eg, additive or odds ratio). Specifically, the relative effect is identified at the cluster-level as

| (10) |

and at the individual-level as

| (11) |

As detailed below, most analytic methods naturally only estimate one of the above statistical estimands, whereas few have the flexibility to estimate both. While we focused on identification of population-level effects, the extensions to sample effects are fairly straightforward, as discussed in Reference 41.

3 ∣. STATISTICAL ESTIMATION AND INFERENCE

In this section, we compare the methods commonly used to analyze CRTs. We describe their target of inference and their ability to adjust for baseline covariates to improve precision and thereby improve statistical power. We broadly consider two classes of estimation methods: approaches using only cluster-level data and approaches using both individual- and cluster-level data. The former immediately aggregate the data to the cluster-level and can only adjust for cluster-level covariates, while the latter allow for adjustment of individual-level covariates, an appealing option, as these pair naturally with individual-level outcomes. Examples of cluster-level approaches include the t-test and cluster-level TMLE. Cluster-level approaches naturally target cluster-level effects (eg, Equation 10), but with the appropriate choice of weights can also target individual-level effects (eg, Equation 11). Examples of approaches using individual-level data include GEE, CARE, and Hierarchical TMLE.1,26,28 These individual-level approaches often estimate different causal effects, as detailed below. To the best of our understanding, of the algorithms discussed here, Hierarchical TMLE is the only individual-level approach that can estimate effects defined at the cluster-level (eg, Equation 10) or at the individual-level (eg, Equation 11). It should be possible to modify G-computation and inverse probability weighting to target both cluster-level and individual-level effects, but these extensions are beyond the scope of this paper. It is not well understood how to incorporate weights for GEE.45

We now define the notation used throughout this section. Recall the cluster-level outcome is defined as a weighted sum of individual-level outcomes, as in Equation (7). We denote the conditional expectation of the cluster-level outcome given the cluster-level intervention and covariates () as

| (12) |

Likewise, we denote the conditional expectation of the individual-level outcome given the cluster-level intervention , the cluster-level covariates , and that individual’s covariates as

| (13) |

Throughout, we refer to Equation (12) and to (13) as the cluster-level and individual-level outcome regressions, respectively. The unadjusted expectations of the cluster-level and individual-level outcomes within treatment arm are defined as and , respectively. We denote the cluster-level propensity score as

| (14) |

and the individual-level propensity score as

| (15) |

We define unadjusted probabilities and , analogously.

3.1 ∣. Analytic approaches using cluster-level data

Cluster-level approaches obtain point estimates and inference after the individual-level data have been aggregated to the cluster-level.1,7 Most commonly, this aggregation is done by taking the empirical mean within each cluster. However, as previously detailed in Section 2.2, we can consider several ways to summarize the individual-level data to the cluster-level (ie, different weighting schemes).

3.1.1 ∣. Unadjusted effect estimator

Once the data are aggregated to the cluster-level, a common approach for estimation and inference is based on contrasts of the treatment-specific average outcomes:

| (16) |

where denotes the unadjusted estimate of the cluster-level propensity score (ie, the proportion of clusters in the trial receiving treatment-level ). For simplicity for the remainder of the manuscript, we assume the trial has equal allocation of arms, such that ; however, our results should generalize to trials with more than two arms and to imbalanced trials. Then, if we let denote the cluster-level outcome for observation in treatment arm , the treatment-specific mean simplifies to . In PTBi, represents the average incidence of 28-day infant mortality among facilities that received treatment-level . We obtain a point estimate of the cluster-level effect by contrasting and on the scale of interest and obtain statistical inference using the t-distribution. Suppose, for example, we were interested in the cluster-level average treatment effect ; then our point estimate would be and we would test the null hypothesis using a Student’s t-test. Statistical power may be improved by considering alternative weighting schemes when summarizing individual-level outcomes to the cluster-level;1 however, as previously discussed, different weights imply different target effects.
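A minimal sketch of the unadjusted cluster-level analysis on the additive scale follows. It assumes the data have already been aggregated to one outcome per cluster, uses invented outcome values, and computes the pooled-variance Student's t-statistic by hand with the standard library; the helper name `unadjusted_t` is an assumption of this sketch.

```python
import math
import statistics

def unadjusted_t(treated, control):
    """Difference in arm-specific means of cluster-level outcomes with a
    pooled-variance Student's t-statistic and its degrees of freedom."""
    n1, n0 = len(treated), len(control)
    diff = statistics.mean(treated) - statistics.mean(control)
    # Pooled sample variance across the two arms.
    pooled = ((n1 - 1) * statistics.variance(treated)
              + (n0 - 1) * statistics.variance(control)) / (n1 + n0 - 2)
    se = math.sqrt(pooled * (1.0 / n1 + 1.0 / n0))
    return diff, se, diff / se, n1 + n0 - 2

# Hypothetical cluster-level proportions, four clusters per arm.
treated = [0.10, 0.12, 0.08, 0.14]
control = [0.20, 0.18, 0.22, 0.16]
diff, se, t_stat, df = unadjusted_t(treated, control)
```

The statistic `t_stat` would then be compared with a t-distribution on `df` degrees of freedom. Because the unit of analysis is the cluster, the degrees of freedom depend on the number of clusters and not on the number of participants.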

For the relative effect, applying the logarithmic transformation is sometimes recommended when the cluster-level summaries are skewed, which may be more common for rate-type outcomes.1 However, depending on how this transformation is implemented, estimation and inference may be for the ratio of the geometric means, as opposed to the ratio of the arithmetic means. (Recall that for $n$ observations $X_1, \ldots, X_n$ of some variable $X$, the geometric mean is $\left(\prod_{i=1}^{n} X_i\right)^{1/n}$, whereas the arithmetic mean is $\frac{1}{n}\sum_{i=1}^{n} X_i$.) Specifically, suppose we first take the log of the cluster-level outcomes and then take the average within each arm:

| (17) |

where again denotes the cluster-level outcome for cluster in treatment arm . Applying a t-test to the difference in these treatment-specific means (and then exponentiating) targets the ratio of the geometric means. Continuing our toy example from Section 2.2, the arithmetic mean of the cluster-level outcomes in the control was , while the geometric mean of the cluster-level control outcomes would be 0.26. To avoid changing the target of inference, we can instead apply the Delta Method to obtain point estimates and inference for the ratio of arithmetic means, as in Equation (10). We refer the reader to Reference 46 for more details.
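To make the change of target concrete, the short Python sketch below compares the ratio of arithmetic means with the quantity obtained by averaging log-transformed cluster-level outcomes within each arm, differencing, and exponentiating. The outcome values and function names are hypothetical, and the sketch assumes strictly positive cluster-level outcomes (the logarithm is undefined at zero).

```python
import math
from statistics import mean

def ratio_of_arithmetic_means(y1, y0):
    """Ratio of arm-specific arithmetic means of cluster-level outcomes."""
    return mean(y1) / mean(y0)

def ratio_of_geometric_means(y1, y0):
    """Difference of arm-specific mean logs, exponentiated.
    This equals the ratio of the arm-specific geometric means."""
    log_diff = mean([math.log(y) for y in y1]) - mean([math.log(y) for y in y0])
    return math.exp(log_diff)

# Hypothetical, skewed cluster-level outcomes in each arm.
y_treat = [0.05, 0.10, 0.40]
y_ctrl = [0.10, 0.20, 0.60]

ram = ratio_of_arithmetic_means(y_treat, y_ctrl)
rgm = ratio_of_geometric_means(y_treat, y_ctrl)
print(round(ram, 3), round(rgm, 3))
```

In this toy example the two ratios differ (roughly 0.61 vs 0.55), even though they are computed from the same cluster-level outcomes.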

3.1.2 ∣. Cluster-level TMLE with adaptive prespecification

As previously discussed, statistical power is often improved by adjusting for baseline covariates that are predictive of the outcome. Once the data have been aggregated to the cluster-level, we can proceed with estimation and inference for the cluster-level estimand , using methods for i.i.d. data. Examples of common algorithms for this estimand include parametric G-computation, inverse probability of treatment weighting (IPTW) estimators, and TMLE.11 Due to treatment randomization, these algorithms will be consistent, even under misspecification of the outcome regression.47 Given that G-computation and IPTW are well-established approaches, we focus on TMLE, which is a general class of double robust, semiparametric efficient, plug-in estimators.11 Here, we briefly review the steps of a cluster-level TMLE and then present a solution for optimal selection of the adjustment covariates in trials with limited numbers of clusters. We conclude with a discussion of how to apply weights to the cluster-level TMLE to estimate an individual-level estimand (eg, ). In the next section, we discuss an alternative implementation of TMLE that can harness both individual-level and cluster-level covariates to increase precision, while maintaining Type-I error control.

To implement a cluster-level TMLE of the cluster-level effect, we first obtain an initial estimator of the expected cluster-level outcome . Next, we update this initial estimator using information contained in the estimated propensity score . Specifically, we define the “clever covariate” as the inverse of the estimated propensity score for cluster :

Then on the logit-scale, we regress the cluster-level outcome on the covariates and with the initial estimator as the offset. This provides the following targeted estimator, while simultaneously solving the efficient score equation:

| (18) |

where and denote the estimated coefficients for and , respectively. Finally, we obtain a point estimate of the treatment-specific mean by averaging the targeted predictions of the cluster-level outcomes across the clusters:

To evaluate the intervention effect, we contrast our estimates and on the scale of interest and apply the Delta Method for inference. The variance of asymptotically linear estimators, such as the TMLE, may be estimated using the estimator’s influence function.11 These types of estimators enjoy properties that follow from the Central Limit Theorem, allowing us to construct 95% Wald-type confidence intervals. As a finite sample approximation to the normal distribution, we recommend using the t-distribution with degrees of freedom. For the treatment-specific mean , for example, the influence function and asymptotic variance for the cluster-level TMLE are well-approximated as and , respectively.
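The targeting step described above can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not the authors' implementation: the cluster-level outcome is assumed to lie in [0, 1], the initial predictions and propensity scores are supplied by the user, and all function names are hypothetical. Because the two clever covariates are never both nonzero for the same cluster, the logistic fluctuation separates into one single-parameter fit per arm, which is solved here by Newton's method.

```python
import math

def logit(p):
    return math.log(p / (1 - p))

def expit(x):
    return 1 / (1 + math.exp(-x))

def fluctuation(y, h, offset, tol=1e-10, max_iter=100):
    """Solve sum_i h_i * (y_i - expit(offset_i + eps * h_i)) = 0 for eps,
    ie, a no-intercept logistic regression of y on h with an offset."""
    eps = 0.0
    for _ in range(max_iter):
        p = [expit(o + eps * hi) for o, hi in zip(offset, h)]
        score = sum(hi * (yi - pi) for hi, yi, pi in zip(h, y, p))
        info = sum(hi * hi * pi * (1 - pi) for hi, pi in zip(h, p))
        step = score / info
        eps += step
        if abs(step) < tol:
            break
    return eps

def cluster_tmle_mean(y, a, q_init, g, arm):
    """Targeted estimate of the treatment-specific mean for `arm`.

    y: cluster-level outcomes in [0, 1]; a: observed arms (0/1);
    q_init[i]: initial prediction in (0, 1) for cluster i under `arm`;
    g[i]: probability that cluster i receives `arm`.
    """
    idx = [i for i, ai in enumerate(a) if ai == arm]
    eps = fluctuation([y[i] for i in idx],
                      [1 / g[i] for i in idx],          # clever covariate
                      [logit(q_init[i]) for i in idx])  # offset
    # Update predictions for ALL clusters, then average across clusters.
    targeted = [expit(logit(q) + eps / gi) for q, gi in zip(q_init, g)]
    return sum(targeted) / len(targeted)

# Toy check: intercept-only initial estimator and known propensity score 0.5.
y = [0.1, 0.2, 0.3, 0.4, 0.2, 0.6]
a = [1, 1, 1, 0, 0, 0]
psi1 = cluster_tmle_mean(y, a, q_init=[0.3] * 6, g=[0.5] * 6, arm=1)
psi0 = cluster_tmle_mean(y, a, q_init=[0.3] * 6, g=[0.5] * 6, arm=0)
print(psi1, psi0, psi1 / psi0)
```

With a constant initial estimator and a known, constant propensity score, the targeted estimates reduce to the arm-specific sample means, which is one way to see the unadjusted estimator as a special case of the TMLE.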

In a CRT, the propensity score is known and does not need to be estimated. However, further gains in efficiency can be achieved through estimation of the propensity score.44,47 If both the cluster-level outcome regression and cluster-level propensity score are consistently estimated, the TMLE will be an asymptotically efficient estimator. However, consistent estimation of the outcome regression is nearly impossible when using an a priori-specified regression model.

To improve precision while preserving Type-I error control, we previously proposed “Adaptive Prespecification,” a supervised learning approach using sample-splitting to choose the adjustment set that maximizes efficiency.29 In brief, we prespecify a set of candidate generalized linear models for the cluster-level outcome regression and propensity score . To avoid forced adjustment at the detriment of precision, the unadjusted estimator should always be included as a candidate. We also prespecify a cross-validation scheme; for small trials (eg, ), we recommend leave-one-cluster-out. To measure performance, we prespecify the squared influence function as our loss function. Then we choose the candidate estimator of that minimizes the cross-validated variance estimate using the influence function based on the known propensity score (ie, ). We then select the candidate estimator of propensity score that further minimizes the cross-validated variance estimate using the influence function when combined with the previously selected estimator . Together, the selected estimators and form the “optimal” TMLE according to the principle of empirical efficiency maximization.44
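The first selection step of Adaptive Prespecification can be sketched as follows. This Python illustration is deliberately simplified and rests on several assumptions that are not taken from the text: it targets the additive effect, uses arm-specific linear working models rather than generalized linear models, considers only two candidates, and keeps the propensity score fixed at its known value of 0.5. The data, function names, and candidate labels are hypothetical.

```python
from statistics import mean

def fit_unadjusted(train):
    """Candidate 1: arm-specific means (no covariate adjustment)."""
    m = {arm: mean([y for w, a, y in train if a == arm]) for arm in (0, 1)}
    return lambda w, arm: m[arm]

def fit_adjusted(train):
    """Candidate 2: arm-specific simple linear regressions of Y on W."""
    coef = {}
    for arm in (0, 1):
        ws = [w for w, a, y in train if a == arm]
        ys = [y for w, a, y in train if a == arm]
        wbar, ybar = mean(ws), mean(ys)
        slope = (sum((w - wbar) * (y - ybar) for w, y in zip(ws, ys))
                 / sum((w - wbar) ** 2 for w in ws))
        coef[arm] = (ybar - slope * wbar, slope)
    return lambda w, arm: coef[arm][0] + coef[arm][1] * w

def cv_variance(data, fit, g=0.5):
    """Leave-one-cluster-out average of the squared influence function
    for the additive effect, with known propensity score g."""
    losses = []
    for k, (w, a, y) in enumerate(data):
        train = data[:k] + data[k + 1:]
        q = fit(train)
        psi = mean([q(wt, 1) - q(wt, 0) for wt, _, _ in train])
        h = a / g - (1 - a) / (1 - g)
        ic = h * (y - q(w, a)) + q(w, 1) - q(w, 0) - psi
        losses.append(ic ** 2)
    return mean(losses)

def select_candidate(data, candidates):
    """Return the candidate with the smallest cross-validated variance."""
    risks = {name: cv_variance(data, fit) for name, fit in candidates.items()}
    return min(risks, key=risks.get), risks

# Hypothetical trial of 10 clusters: (covariate W, arm A, outcome Y),
# where W is strongly predictive of Y.
data = [(float(i), i % 2,
         0.1 + 0.05 * i - 0.05 * (i % 2) + 0.005 * (-1) ** (i // 2))
        for i in range(10)]
chosen, risks = select_candidate(
    data, {"unadjusted": fit_unadjusted, "adjusted": fit_adjusted})
print(chosen, risks)
```

Including the unadjusted candidate means the procedure can fall back to no adjustment when covariates are not predictive. A second pass of the same form would then compare candidate propensity score estimators given the selected outcome regression.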

Application of TMLE with Adaptive Prespecification to real data from the SEARCH CRT resulted in notable precision gains.16,48 As compared to the unadjusted estimator, TMLE was 4.6 times more efficient for the effect on HIV incidence, 2.6 times more efficient for the effect on the incidence of tuberculosis, and 1.8 times more efficient for the effect on hypertension control. Given that this cluster-level TMLE adjusted for at most two cluster-level variables, these gains in efficiency may seem surprising. However, we believe that they demonstrate the power of using Adaptive Prespecification to flexibly select the optimal adjustment strategy to maximize empirical efficiency. These gains are also seen in a recent extension of Adaptive Prespecification for flexible adjustment of many covariates in trials with a larger number of randomized units.49

3.1.3 ∣. Targeting an individual-level effect with an estimator using cluster-level data

With or without Adaptive Prespecification, the cluster-level TMLE naturally estimates cluster-level parameters (eg, Equation (10)) corresponding to contrasts of cluster-level counterfactuals, such as . However, the cluster-level TMLE can also estimate individual-level parameters (eg, Equation 11) corresponding to contrasts of individual-level counterfactuals, such as . To do so, we include weights in each step of the cluster-level analysis (derivation and R code in the Data S1). This approach may be relevant when data are only available at the cluster-level, but interest is in an individual-level effect. Finally, we note that the unadjusted effect estimator can be considered a special case of the TMLE where the adjustment set is empty: . Therefore, the unadjusted effect estimator based on cluster-level data can also estimate an individual-level effect if the appropriate weights are applied.

3.2 ∣. Analytic approaches using individual-level data

We now discuss how to leverage individual-level covariates when estimating effects in CRTs. This is done by aggregating to the cluster-level after estimating the expected individual-level outcome or by implementing a fully individual-level approach. In all cases, clustering must be accounted for during variance estimation. Estimators using individual-level data vary in their flexibility to estimate both cluster-level effects (eg, Equation 10) and individual-level effects (eg, Equation 11).

3.2.1 ∣. Hierarchical TMLE

In Section 3.1, we discussed a cluster-level TMLE for estimating effects in CRTs based on aggregating the data to the cluster-level. An alternative and equally valid approach for CRT analysis is to ignore clustering when obtaining a point estimate and then account for clustering during variance estimation and statistical inference.50,51 Such an approach naturally estimates an individual-level parameter, such as . We now present an individual-level TMLE using this alternative approach and refer to it as “Hierarchical TMLE” to emphasize its distinction from the standard TMLE for an individually randomized trial with i.i.d. participants. In Appendix B, we also present a “Hybrid TMLE” that obtains an initial estimate of the cluster-level outcome regression based on aggregates of the individual-level outcome regression (ie, ) and then proceeds with estimation and inference as outlined in Section 3.1.2. Both approaches leverage the natural pairing of individual-level outcomes with individual-level covariates, extend to a pair-matched design,28 and can be combined with weights to estimate effects at the cluster-level or individual-level.

To implement Hierarchical TMLE for the individual-level effect, we pool participant-level data across clusters to obtain estimators of the individual-level outcome regression and the individual-level propensity score . The initial outcome regression estimator is then updated based on the estimated propensity score . As before, we calculate the “clever covariate,” but now at the individual-level:

for . Then on the logit-scale, we regress the individual-level outcome on the individual-level covariates and with the initial individual-level estimator as the offset. This provides the following updated estimator of the expectation of the individual-level outcome, while simultaneously solving the efficient score equation:

| (19) |

where and now denote the estimated coefficients for and . Then we obtain a point estimate of the treatment-specific mean by averaging these targeted predictions:

where, again, denotes the total number of participants across all clusters. Thus, this implementation of Hierarchical TMLE to estimate an individual-level parameter is nearly identical to standard TMLE for i.i.d. data. Key distinctions are in sample-splitting (if used) and variance estimation, both of which must respect the cluster as the independent unit in CRTs. Specifically, to estimate the variance of Hierarchical TMLE for , we aggregate an individual-level influence function to the cluster-level and then take the sample variance of the estimated cluster-level influence function, scaled by the number of independent units . For example, the influence function for this TMLE of is well-approximated by where . (See Schnitzer et al for a proof.51) Altogether, this implementation of Hierarchical TMLE naturally estimates individual-level effects (eg, Equation 11) and is analogous to using an independent working correlation matrix with the robust variance estimator in GEE,45 described below.

Unlike GEE, however, Hierarchical TMLE can also easily estimate cluster-level effects (eg, Equation 10).28 To do so, we incorporate the weights throughout the analysis to obtain cluster-level point estimates and inference. Specifically, when obtaining a point estimate, we aggregate the targeted predictions within clusters before averaging across clusters: . Likewise, we estimate a cluster-level influence function for this TMLE of as where . Further details are available in Balzer et al28 and R code is provided in the Data S1.

For either the cluster-level or individual-level effect, the variance of Hierarchical TMLE is well-approximated by the variance of the cluster-level influence function, scaled by the number of independent units . As before, the Delta Method is applied to obtain point estimates and inference for the intervention effect on any scale of interest. Again, we recommend the t-distribution with degrees of freedom for confidence interval construction and testing the null hypothesis. Hierarchical TMLE will be an asymptotically efficient estimator if the outcome regression and propensity score are consistently estimated at reasonable rates. In practice, we again recommend using Adaptive Prespecification to select the optimal adjustment strategy to maximize efficiency.
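The cluster-robust variance calculation can be illustrated with a short Python sketch (not the authors' R code from the Data S1). It assumes that individual-level influence function values have already been computed. The function names, the toy values, and the aggregation weights are illustrative assumptions rather than formulas quoted from the text: with J clusters, N participants in total, and N_j participants in cluster j, the sketch uses weights J/N for an individual-level target and 1/N_j for a cluster-level target.

```python
from collections import defaultdict
from statistics import variance

def cluster_level_ic(ic_values, cluster_ids, target="individual"):
    """Aggregate individual-level influence function values to clusters."""
    sums, sizes = defaultdict(float), defaultdict(int)
    for d, j in zip(ic_values, cluster_ids):
        sums[j] += d
        sizes[j] += 1
    n_clusters, n_total = len(sums), len(ic_values)
    if target == "individual":   # equal weight to every participant
        return [n_clusters / n_total * sums[j] for j in sums]
    if target == "cluster":      # equal weight to every cluster
        return [sums[j] / sizes[j] for j in sums]
    raise ValueError("target must be 'individual' or 'cluster'")

def variance_estimate(ic_values, cluster_ids, target="individual"):
    """Sample variance of the cluster-level influence function,
    scaled by the number of independent units (clusters)."""
    ic_c = cluster_level_ic(ic_values, cluster_ids, target)
    return variance(ic_c) / len(ic_c)

# Hypothetical influence function values for 6 participants in 3 clusters.
ic = [0.2, -0.1, 0.3, -0.4, 0.1, -0.1]
ids = ["A", "A", "A", "B", "C", "C"]
var_ind = variance_estimate(ic, ids, target="individual")
var_clu = variance_estimate(ic, ids, target="cluster")
print(var_ind, var_clu)
```

The square root of either value gives a standard error that treats the cluster, not the participant, as the independent unit.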

3.2.2 ∣. Covariate-adjusted residuals estimator

The covariate-adjusted residuals estimator (CARE) was first proposed in Gail et al27 and later popularized by Hayes and Moulton.1 CARE is implemented by pooling individual-level data across clusters and then regressing the individual-level outcome on the individual-level and cluster-level covariates of interest (), but not the cluster-level intervention . Then the predictions from this regression are aggregated to the cluster-level. Finally, a t-test comparing the mean residuals (ie, the discrepancies between observed and predicted outcomes) by arms is performed, since the average residuals should be the same between arms under the null hypothesis. CARE naturally targets cluster-level effects, as in Equation (10), and it is not immediately obvious how to incorporate weights to target individual-level effects, as in Equation (11).

As a concrete example, suppose our goal is to estimate the cluster-level effect on the relative scale and the individual-level outcome is binary. To implement CARE in this setting, we first fit an individual-level logistic regression, such as

| (20) |

where and denote the magnitude by which the log odds of the outcome for the ith individual in the jth cluster is affected (linearly) by the cluster-level covariates and individual-level covariates , respectively. From this regression we obtain the expected number of events in the jth cluster as and compare it with the observed number of events through ratio-residuals:

Hayes and Moulton1 note that these ratio-residuals are often right-skewed and recommend a logarithmic transformation. Specifically, they recommend applying a t-test to obtain point estimates and inference for the difference in the treatment-specific averages of the log-transformed residuals:

| (21) |

where denotes the ratio-residual for cluster in arm . As detailed in Section 3.1.1, after exponentiation, we recover estimates and inference for the ratio of the geometric means and thereby a different causal effect than the standard risk ratio, given in Equation (10). A straightforward extension to pair-matched design is illustrated in Reference 1.
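The final CARE steps can be sketched in Python as follows. The sketch assumes that the expected number of events per cluster has already been obtained from an individual-level regression that excludes the treatment; that regression is not fitted here. The function name and the observed and expected counts are hypothetical, and every cluster is assumed to have at least one observed event so that the logarithm is defined.

```python
import math
from statistics import mean, variance

def care_log_ratio(observed, expected, arms):
    """t statistic on log ratio-residuals; exponentiating the difference in
    arm-specific means gives a ratio of geometric means."""
    log_r = [math.log(o / e) for o, e in zip(observed, expected)]
    r1 = [r for r, a in zip(log_r, arms) if a == 1]
    r0 = [r for r, a in zip(log_r, arms) if a == 0]
    diff = mean(r1) - mean(r0)
    df = len(r1) + len(r0) - 2
    pooled = ((len(r1) - 1) * variance(r1) + (len(r0) - 1) * variance(r0)) / df
    se = math.sqrt(pooled * (1 / len(r1) + 1 / len(r0)))
    return {"ratio": math.exp(diff), "t": diff / se, "df": df}

# Hypothetical observed and model-expected event counts for 6 clusters.
observed = [8, 12, 9, 20, 15, 24]
expected = [16, 16, 18, 20, 20, 24]
arms = [1, 1, 1, 0, 0, 0]
care = care_log_ratio(observed, expected, arms)
print(care)
```

Here the exponentiated estimate is the ratio of the geometric means of the ratio-residuals in the two arms, which is why the target differs from the ratio of arithmetic means.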

3.2.3 ∣. Generalized estimating equations

We now consider a class of estimating equations, sometimes referred to as “population-average models,” for estimating effects in CRTs.50 In GEE, estimation and inference are conducted at the individual-level and a working correlation matrix is used to account for the dependence of outcomes within clusters. GEE naturally targets individual-level effects, as in Equation (11). As with CARE, it is unclear whether weights can be incorporated to instead target cluster-level effects, as in Equation (10). Indeed, recent work by Wang et al45 suggests that when targeting the cluster-level effect, inappropriate use of weights in GEE can result in meaningful bias.

In GEE, the expected individual-level outcome is modeled as a function of the treatment and possibly covariates of interest.50,52 Specifically, consider the following “marginal model” for the expected individual-level outcome :

| (22) |

where denotes the inverse-link function. Commonly, the identity link is used for continuous outcomes, the log-link for count outcomes, and the logit-link for binary outcomes. Effect estimation in GEE is usually done by obtaining a point estimate and inference for the treatment coefficient . Then at the individual-level, represents the additive causal effect for the identity link; represents the relative effect for the log-link, and represents the odds ratio effect for the logit-link. In other words, the link function often determines the scale on which the effect is estimated.

As with other CRT approaches, GEE may improve efficiency by adjusting for covariates. Consider, for example, the following “conditional model” for the expected individual-level outcome :

| (23) |

where again denotes the inverse-link function. Except for linear and log-linear models without interaction terms, the interpretation of is generally not the same as in the marginal model.52 For the logistic link function, for example, in Equation (23) would yield the conditional log-odds ratio, instead of the marginal log-odds ratio. However, a recent modification to GEE, presented in the next subsection, allows for estimation of marginal effects, while adjusting for individual-level or cluster-level covariates.

For either a marginal or conditional specification, the GEE estimator solves the following equation:

where is the vector containing individual-level outcome regressions for cluster , is the gradient matrix, and is the working correlation matrix used to account for dependence of individuals within a cluster .50 GEE yields a consistent point estimate of under the marginal model, even if the correlation matrix has been misspecified. It is worth noting, however, that the interpretation of the estimated effect changes subtly when using alternative correlation matrices.52 Additionally, under misspecification of the correlation matrix, the usual SEs are not valid, and the sandwich variance estimator must be used.50 In general, unless the number of clusters is relatively large and the number of participants within each cluster is relatively small, the sandwich-based SEs can underestimate the true variance of and yield confidence intervals with coverage probability below the desired nominal level. Fortunately, several corrections to variance estimation exist.7,53,54 Finally, for a CRT where the intervention is randomized within matched pairs of clusters, a pair-matched analysis can be conducted by specifying a fixed effect for the pair, while maintaining the correlation structure at the cluster-level. However, pair-matched analyses are generally discouraged for studies with fewer than 40 clusters.1,7,53
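For intuition, consider the simplest special case: a marginal model with the identity link, a binary treatment as the only predictor, and an independence working correlation matrix. Under these assumptions the estimating equation reduces to ordinary least squares, so the treatment coefficient is the difference in pooled arm-specific means, and the uncorrected sandwich variance of that coefficient reduces to a sum over clusters of squared residual totals divided by the squared arm sample size. The Python sketch below implements only this special case, with hypothetical data and function names; it applies no finite sample correction and is not a substitute for a GEE package.

```python
from statistics import mean

def gee_independence_identity(clusters):
    """clusters: list of (arm, outcomes) pairs, one pair per cluster.

    Returns the treatment coefficient and its cluster-robust (sandwich)
    variance for the model E[Y] = b0 + b1 * A under working independence."""
    pooled = {arm: [y for a, ys in clusters if a == arm for y in ys]
              for arm in (0, 1)}
    mu = {arm: mean(pooled[arm]) for arm in (0, 1)}
    beta1 = mu[1] - mu[0]
    var = 0.0
    for arm, ys in clusters:
        resid_sum = sum(y - mu[arm] for y in ys)   # residual total in cluster
        var += (resid_sum / len(pooled[arm])) ** 2
    return beta1, var

# Hypothetical binary outcomes in 4 clusters of unequal size.
clusters = [
    (1, [1, 0, 0, 0]),
    (1, [0, 0]),
    (0, [1, 1, 0]),
    (0, [0, 1, 0, 0, 1]),
]
beta1, var_beta1 = gee_independence_identity(clusters)
print(beta1, var_beta1 ** 0.5)
```

Because every participant contributes equally to the pooled means, larger clusters carry more weight, which is consistent with GEE naturally targeting an individual-level effect.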

3.2.4 ∣. Augmented-GEE

As discussed in the previous subsection, the treatment coefficient resulting from GEE does not always correspond to the marginal effect. Recently, a modification to GEE was proposed to ensure can be interpreted as a marginal effect, while simultaneously adjusting for baseline covariates to improve efficiency.30 This approach is referred to as Augmented-GEE (Aug-GEE) and naturally targets individual-level effects, as in Equation (11). As with standard GEE, it is unclear if weights can be incorporated in Aug-GEE to instead target cluster-level effects, as in Equation (10). Additionally, as with standard GEE, the link function determines the scale on which the effect is estimated in Aug-GEE (ie, additive for the identity link, relative for the log-link, and odds ratio for the logit-link).

As commonly implemented, Aug-GEE modifies GEE by including an additional “augmentation” term, which incorporates the conditional expectation of the individual-level outcome .

The general form of the Aug-GEE for a binary treatment is given by

| (24) |

with augmentation term

For cluster denotes the vector of marginal regressions (as in Equation 22) and denotes the vector of conditional regressions (as in Equation 23). The cluster-level propensity score, defined in Equation (14), is treated as known (ie, ). By solving Equation (24), we can obtain point estimates and inference for the marginal effects. As with the other estimators considered, if the conditional outcome regression is misspecified, the resulting estimator is asymptotically normal and consistent; however, it is not efficient.30 Indeed, the efficiency of Aug-GEE heavily depends on the matrix . Stephens et al55 show how to further improve Aug-GEE by deriving the semi-parametric locally efficient estimator, but ultimately conclude that the high-dimensional inverse covariance matrix, required in their approach, presents a substantial barrier to any practical gains.

4 ∣. SIMULATION STUDIES

To examine the finite sample properties of the previously discussed CRT estimators and to demonstrate the practical impact of targeting different causal effects, we conducted two simulation studies. Full R code is provided in the Data S1.

4.1 ∣. Simulation I

We simulated a simplified data generating process reflecting the hierarchical data structure of the PTBi study, which randomized clusters, corresponding to health facilities. For each cluster , we generated cluster-level covariates Norm(2, 1) and Norm(0, 1) and cluster size Norm(150, 80) subject to a minimum of 30 participants. For each cluster , we also simulated random variables Unif(−0.2, 1.5) and Unif(−0.5, 0.5) to act as an unmeasured source of dependence within each cluster. Then for participant in each cluster , we generated four individual-level covariates: ; ; , and .

To reflect the PTBi study design, we paired clusters on using the nonbipartite matching algorithm.56 Within the pair, one cluster was randomized to the intervention arm () and the other to the control arm (). Lastly, we generated the individual-level outcomes as a function of the intervention , the cluster-level and individual-level covariates, and unmeasured factor :

| (25) |

To assess Type-I error control, we generated the outcomes after setting the terms involving the treatment to 0. For a population of 2500 clusters, we also generated counterfactual outcomes under the intervention and under the control by setting and , respectively. These counterfactuals were used to calculate the true values of the causal parameters, defined at both the cluster-level and individual-level. Specifically, we calculated the cluster-level relative effect (Equation 10), the individual-level relative effect (Equation 11), as well as the geometric incidence ratio (ie, the ratio of the geometric means of the cluster-level outcomes).

For estimation of the cluster-level relative effect, we implemented Hierarchical TMLE using Adaptive Prespecification to select from {} and the cluster-level TMLE with Adaptive Prespecification to select the optimal adjustment set from {}, where were the empirical means of their individual-level counterparts. For comparison, we also implemented a cluster-level TMLE not adjusting for any covariates, hereafter called the “unadjusted estimator.” Inference for the unadjusted estimator and the TMLEs was based on their influence functions.

CARE, GEE, and Aug-GEE relied on a fixed specification of the individual-level outcome regression with main terms adjustment for both cluster-level and individual-level covariates: {}. For CARE, we used the logit-link to obtain outcome predictions in the absence of the treatment, then applied the log-transformation to the ratio residuals, as recommended by Hayes and Moulton,1 and finally obtained inference with a t-test. For comparison, we also implemented a standard Student’s t-test after log-transforming the cluster-level outcomes. In GEE and Aug-GEE, we used the log-link function and Fay and Graubard’s finite sample variance correction,54 as implemented in the geesmv and CRTgeeDR packages, respectively.57,58 All algorithms ignored the matched pairs used for intervention randomization.

4.2 ∣. Results of simulation I

In these simulations, the cluster-level incidence ratio (Equation 10), targeted by the unadjusted estimator and the TMLEs, was identical to the individual-level risk ratio (Equation 11), targeted by GEE and Aug-GEE: 0.83. The ratio of geometric means, targeted by the t-test and CARE, was 0.81, indicating a slightly larger effect. In other words, the simulated intervention resulted in a relative reduction of the mean cluster-level outcomes of 17% on the arithmetic scale and 19% on the geometric scale. As demonstrated in the second simulation study, there can be substantial divergence between the causal parameters, and it is essential to prespecify a target effect corresponding to the research query.

While the CRT estimators targeted different effects, it is still valid to compare their attained power, defined as the proportion of times the false null hypothesis was rejected at the 5% significance level, and confidence interval coverage, defined as the proportion of times the 95% confidence intervals contained the true value of the target effect. Additionally, we examined Type-I error, defined as the proportion of times the true null hypothesis was rejected at the 5% significance level. These metrics are shown in Table 1 for each estimator (for its corresponding target estimand) across 500 iterations of the data generating process, each with clusters.
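These performance metrics are simple proportions over simulated trials. As a minimal sketch, assuming each simulated trial has been summarized by a confidence interval and a p-value (the dictionary keys, function name, and toy replicates below are hypothetical), they could be computed as:

```python
def summarize(replicates, truth, alpha=0.05):
    """Coverage and rejection rate across simulated trials.

    The rejection rate is the attained power when the null is false and
    the Type-I error rate when the null is true."""
    n = len(replicates)
    coverage = sum(r["lo"] <= truth <= r["hi"] for r in replicates) / n
    rejection_rate = sum(r["p"] < alpha for r in replicates) / n
    return {"coverage": coverage, "rejection_rate": rejection_rate}

# Four hypothetical replicates for a relative effect with true value 0.83.
reps = [
    {"lo": 0.70, "hi": 0.95, "p": 0.01},
    {"lo": 0.75, "hi": 1.05, "p": 0.20},
    {"lo": 0.60, "hi": 0.80, "p": 0.001},
    {"lo": 0.78, "hi": 0.99, "p": 0.04},
]
metrics = summarize(reps, truth=0.83)
print(metrics)
```

Coverage must be evaluated against the true value of each estimator's own target effect, since the estimators compared here do not all target the same parameter.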

TABLE 1.

Performance of common cluster randomized trial estimators when there is an effect and under the null across 500 iterations of Simulation I.

| | When there is an effect | | | | | | Under the null | | | | | |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| | pt | bias | σ | σ̂ | covg | power | pt | bias | σ | σ̂ | covg | Type-I |
| Unadj | 0.84 | 0.01 | 0.17 | 0.17 | 0.97 | 0.18 | 1.01 | 0.01 | 0.16 | 0.16 | 0.96 | 0.04 |
| C-TMLE | 0.83 | 0.00 | 0.04 | 0.05 | 0.98 | 0.99 | 1.00 | 0.00 | 0.03 | 0.03 | 0.98 | 0.02 |
| H-TMLE | 0.83 | 0.00 | 0.04 | 0.05 | 0.98 | 0.99 | 1.00 | 0.00 | 0.03 | 0.04 | 0.98 | 0.02 |
| t-test | 0.83 | 0.02 | 0.22 | 0.22 | 0.96 | 0.14 | 1.02 | 0.02 | 0.21 | 0.21 | 0.96 | 0.04 |
| CARE | 0.83 | 0.02 | 0.05 | 0.05 | 0.86 | 0.93 | 1.00 | 0.00 | 0.03 | 0.03 | 0.96 | 0.04 |
| GEE | 0.82 | −0.01 | 0.08 | 0.07 | 0.89 | 0.75 | 1.00 | 0.00 | 0.08 | 0.07 | 0.92 | 0.08 |
| A-GEE | 0.83 | −0.00 | 0.09 | 0.06 | 0.84 | 0.77 | 1.00 | 0.00 | 0.09 | 0.06 | 0.84 | 0.16 |

Abbreviations: “A-GEE”, Augmented-GEE; “bias”, the average difference between the point estimate and the target effect; “covg”, the proportion of times the 95% confidence interval contained the true effect; “C-TMLE” and “H-TMLE”, the cluster-level TMLE and Hierarchical TMLE, respectively, both implemented with Adaptive Prespecification; “pt”, the average point estimate; “power”, the proportion of times the false null hypothesis was rejected; σ, the SD of the point estimates on the log-scale; σ̂, the average SE estimate on the log-scale; “Type-I”, the proportion of times the true null hypothesis was rejected; “Unadj”, the unadjusted estimator, implemented as a cluster-level targeted maximum likelihood estimation (TMLE) for the effect of interest and without covariate adjustment.

As shown in Table 1, the unadjusted estimator of the cluster-level relative effect achieved low statistical power (18%) with slightly conservative confidence interval coverage (97%) when there was an effect and controlled Type-I error (4%) under the null. In this simulation, the cluster-level TMLE and Hierarchical TMLE performed similarly and provided substantial efficiency gains over the unadjusted estimator of the same effect. Specifically, the TMLEs achieved a statistical power of 99% with conservative confidence interval coverage (≥ 95%) and strict Type-I error control (<5%) under the null. Thus, by adaptively adjusting for at most two covariates (one in the outcome regression and one in the propensity score), the TMLEs improved statistical power over the unadjusted estimator by 81 percentage points (99% vs 18%). In line with previous works,15,16,29 this reiterates the importance of a data-driven approach to covariate adjustment that is tailored to maximizing efficiency. Notably, the TMLEs differed in their selection of adjustment variables. For estimation of the outcome regression, the cluster-level TMLE selected in 54% of the simulated trials and in the other 46%. In contrast, the Hierarchical TMLE selected in 82% of iterations and in 18% of iterations. The different selections illustrate how the relationship between the cluster-level outcome and cluster-level covariates can be distinct from the relationship between the individual-level outcome and individual-level covariates, which in turn affects the optimal adjustment strategy. Importantly, both approaches avoided adjustment for covariates that were not predictive of the outcome (ie, {} at the cluster-level and {} at the individual-level). For these estimators, additional simulation results, including settings with fewer clusters (), with smaller clusters (mean cluster size of 20), and targeting the individual-level effect, are given in the Data S1. These simulations again demonstrate substantial gains in statistical power from using TMLE, while tightly preserving confidence interval coverage and Type-I error control under the null.

The other estimators, relying on fixed specifications of their outcome regressions, did improve power over their unadjusted counterparts, but not to the same extent as the TMLEs. Specifically, for estimation of the geometric incidence ratio, using CARE to adjust for both cluster-level and individual-level covariates improved statistical power to 93%, vastly surpassing the power of the t-test (14%). However, CARE failed to maintain nominal confidence interval coverage (86%). For the individual-level risk ratio, equal to the cluster-level incidence ratio in these simulations, GEE offered power improvements over the unadjusted approach (75% vs 18%, respectively). However, GEE exhibited less than nominal confidence interval coverage (89%) and an inflated Type-I error rate (8%) under the null. Aug-GEE, also targeting the individual-level risk ratio, slightly improved power over standard GEE (77% vs 75%), but it exhibited worse confidence interval coverage (84%) and double the Type-I error rate (16% vs 8%).

4.3 ∣. Simulation II

In many CRTs, participant outcomes are influenced by the cluster size. Suppose, for example, that the smallest health facilities have the fewest resources to the detriment of their patients’ health, and the largest health facilities are overburdened, also to the detriment of their patients’ health. In this setting, wide variation in cluster size can result in a divergence between cluster-level and individual-level effects. Intuitively, cluster-level parameters (eg, Equations 3 and 4) give equal weight to each cluster, regardless of its size, while individual-level parameters (eg, Equations 5 and 6) give equal weight to all trial participants. The distinction between the parameters is exacerbated when cluster size interacts with the treatment and is said to be “informative.”8,9 Therefore, in the second simulation study, we considered a more complex data generating process to highlight the distinction between the cluster-level effects and individual-level effects.
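The divergence between the two types of effects can be seen in a toy calculation. The Python sketch below takes hypothetical cluster sizes and cluster-specific counterfactual risks under treatment and control (all values and names are illustrative) and computes the relative effect giving equal weight to clusters and the relative effect giving equal weight to participants.

```python
def relative_effects(sizes, risk_treat, risk_ctrl):
    """Return (cluster-level, individual-level) relative effects."""
    j, n = len(sizes), sum(sizes)
    # Equal weight to each cluster, regardless of size.
    cluster = (sum(risk_treat) / j) / (sum(risk_ctrl) / j)
    # Equal weight to each participant: weight clusters by their size.
    indiv = (sum(s * r for s, r in zip(sizes, risk_treat)) / n) / (
        sum(s * r for s, r in zip(sizes, risk_ctrl)) / n)
    return cluster, indiv

# Informative cluster size: the treatment works better in the large cluster.
rr_cluster, rr_indiv = relative_effects(
    sizes=[50, 500], risk_treat=[0.18, 0.10], risk_ctrl=[0.20, 0.20])
print(rr_cluster, rr_indiv)

# With equal cluster sizes the two effects coincide.
rr_c_eq, rr_i_eq = relative_effects(
    sizes=[100, 100], risk_treat=[0.18, 0.10], risk_ctrl=[0.20, 0.20])
```

In the first call the cluster-level relative effect is 0.70 while the individual-level relative effect is about 0.54, because the larger cluster, where the treatment effect is stronger, dominates the participant-weighted average.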

We again focused on a setting with clusters, reflecting the PTBi study. For each cluster , we generated cluster-level covariates Norm(0, 1), Norm(0, 1), and the cluster size Norm(400,250), again truncated at a minimum of 30 participants. For each participant in cluster , we generated three individual-level covariates , , and with cluster-specific means , , and .

As before, clusters were pair-matched on , and within each pair, one cluster was randomized to the intervention arm () and the other to the control arm (). Lastly, we simulated the individual-level outcomes as a function of the treatment, the cluster-level and individual-level covariates, and unmeasured factor :

where denotes the scaled cluster size. Unlike Simulation I, the probability of the individual-level outcome was a function of cluster size . Specifically, the outcome risk was lower for participants in larger clusters, especially large clusters in the intervention arm. As before, we generated counterfactual outcomes under the intervention and under the control by setting and , respectively. Then for a population of 1000 clusters, we calculated the relative effect at the cluster-level (Equation 10) and at the individual-level (Equation 11).

For this simulation, we focused on the performance of the cluster-level TMLE and Hierarchical TMLE, given their flexibility to estimate a variety of effects and their ability to incorporate baseline covariates to improve precision and statistical power, while maintaining Type-I error control. As previously discussed, through the application of weights, the cluster-level TMLE can estimate individual-level effects. Likewise, Hierarchical TMLE, an estimation approach based on individual-level data, can estimate cluster-level effects. It is possible that the other CRT estimators have this flexibility, but the needed extensions remain to be fully studied.45

In this simulation, each TMLE used Adaptive Prespecification to choose at most two covariates for adjustment if their inclusion improved efficiency as compared to an unadjusted effect estimator. The candidate adjustment set for the cluster-level TMLE included {}, while the candidate adjustment set for the Hierarchical TMLE included {}. For comparison, we also considered a cluster-level TMLE with fixed adjustment for in the cluster-level outcome regression and a Hierarchical TMLE with fixed adjustment for in the individual-level outcome regression. Inference was based on an estimate of the influence function. We again focused on the analysis breaking the matches used for randomization.
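As a rough illustration of data-adaptive covariate selection, the sketch below picks, from a prespecified candidate set, the working model with the smallest cross-validated variance estimate. It is a simplified stand-in and not the implementation used in this paper. It assumes the selection criterion is the cross-validated squared influence function, targets the difference in means rather than a relative effect, uses linear working models with leave-one-out cross-validation, assumes a known randomization probability of 0.5, and selects at most one covariate. The function names and toy data are hypothetical.

```python
import numpy as np

def loo_cv_variance(Y, A, W=None):
    """Leave-one-out mean of squared influence-function values for the
    difference in means, assuming P(A = 1) = 0.5 by design."""
    n = len(Y)
    cols = [np.ones(n), A] if W is None else [np.ones(n), A, W]
    X = np.column_stack(cols)
    sq = np.empty(n)
    for i in range(n):
        train = np.arange(n) != i
        beta, *_ = np.linalg.lstsq(X[train], Y[train], rcond=None)
        x1, x0 = X[i].copy(), X[i].copy()
        x1[1], x0[1] = 1.0, 0.0
        # Effect estimate from the training clusters; with no interaction in
        # the working model this is the treatment coefficient.
        psi = beta[1]
        weight = A[i] / 0.5 - (1 - A[i]) / 0.5
        d = weight * (Y[i] - X[i] @ beta) + (x1 @ beta - x0 @ beta) - psi
        sq[i] = d ** 2
    return float(sq.mean())

def select_adjustment(Y, A, candidates):
    """Return the name of the candidate with the smallest cross-validated
    variance estimate, together with all estimates."""
    risks = {"unadjusted": loo_cv_variance(Y, A)}
    for name, W in candidates.items():
        risks[name] = loo_cv_variance(Y, A, W)
    return min(risks, key=risks.get), risks

# Toy cluster-level data: 20 clusters, W1 is strongly prognostic, W2 is noise.
rng = np.random.default_rng(1)
n = 20
A = np.tile([0.0, 1.0], n // 2)
W1, W2 = rng.normal(size=n), rng.normal(size=n)
Y = 0.5 - 0.1 * A + 0.3 * W1 + rng.normal(scale=0.01, size=n)

choice, risks = select_adjustment(Y, A, {"W1": W1, "W2": W2})
print("selected:", choice)
```

Keeping "unadjusted" in the candidate set means a covariate is selected only when it is estimated to reduce variance, which mirrors the rule described above.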

4.4 ∣. Results of simulation II

In this simulation, the true value of the cluster-level relative effect (Equation 10) was 0.78, substantially closer to the null than the true value of the individual-level relative effect (Equation 11) of 0.69. In other words, the intervention resulted in a 22% relative reduction in the cluster-level incidence of the outcome and a 31% relative reduction in the individual-level risk of the outcome. Of course, this is just one simulation study, and in practice, there is no guarantee that the cluster-level effect will be smaller in magnitude than, or even different from, the individual-level effect.

For this simulation study, Table 2 shows the performance of the cluster-level TMLE and Hierarchical TMLE with fixed and adaptive adjustment. Given the differing magnitude of the effects, it is unsurprising that estimators of the individual-level effect, shown on the right, achieved notably higher power than estimators of the cluster-level effect, shown on the left. Specifically, estimators of the cluster-level effect achieved a maximum power of 44%, while estimators of the individual-level effect achieved a maximum power of 68%.

TABLE 2.

Performance of targeted maximum likelihood estimations (TMLEs) for the cluster-level and individual-level relative effects across 500 iterations in Simulation II.

| | Cluster-level effect | | | | | | Individual-level effect | | | | | |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Estimator | pt | bias | SD | SE | covg | power | pt | bias | SD | SE | covg | power |
| C-TMLE | 0.78 | 0.00 | 0.13 | 0.14 | 0.97 | 0.40 | 0.71 | 0.02 | 0.15 | 0.15 | 0.95 | 0.59 |
| C-TMLE-AP | 0.79 | 0.01 | 0.12 | 0.12 | 0.96 | 0.44 | 0.71 | 0.02 | 0.14 | 0.14 | 0.94 | 0.68 |
| H-TMLE | 0.78 | 0.00 | 0.12 | 0.14 | 0.98 | 0.40 | 0.71 | 0.02 | 0.15 | 0.16 | 0.96 | 0.60 |
| H-TMLE-AP | 0.79 | 0.01 | 0.11 | 0.12 | 0.97 | 0.43 | 0.72 | 0.03 | 0.13 | 0.14 | 0.95 | 0.65 |

Abbreviations: “bias”, the average difference between the point estimate and the target effect; “covg”, the proportion of times the 95% confidence interval contained the true effect; “C-TMLE” and “H-TMLE”, the cluster-level TMLE and Hierarchical TMLE, respectively. Both were implemented with fixed or adaptive adjustment via Adaptive Prespecification (“-AP”); “power”, the proportion of times the false null hypothesis was rejected; “pt”, the average point estimate; “SD”, the SD of the point estimates on the log-scale; “SE”, the average SE estimate on the log-scale.
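The performance metrics defined above can be computed from the per-iteration results along the following lines. This is a minimal sketch, not the code behind Table 2. It assumes Wald-type confidence intervals on the log scale with a normal quantile of 1.96 (the paper's exact reference distribution is not stated in this section), and the function and argument names are illustrative.

```python
import numpy as np

def summarize_performance(estimates, se_log, truth, null=1.0, z=1.96):
    """Summarize simulation results for a relative effect.

    estimates: point estimates on the ratio scale, one per iteration
    se_log:    standard error estimates on the log scale, one per iteration
    truth:     true value of the target effect on the ratio scale
    """
    est = np.asarray(estimates, dtype=float)
    se = np.asarray(se_log, dtype=float)
    log_est = np.log(est)
    lower = np.exp(log_est - z * se)   # confidence limits back-transformed
    upper = np.exp(log_est + z * se)   # to the ratio scale
    return {
        "pt": float(est.mean()),                     # average point estimate
        "bias": float((est - truth).mean()),         # average (estimate - truth)
        "sd_log": float(log_est.std(ddof=1)),        # SD of estimates, log scale
        "se_log": float(se.mean()),                  # average SE estimate, log scale
        "covg": float(np.mean((lower <= truth) & (truth <= upper))),
        "power": float(np.mean((upper < null) | (lower > null))),
    }

# Toy input: three hypothetical iterations with a true relative effect of 0.8.
metrics = summarize_performance([0.5, 1.0, 0.8], [0.1, 0.1, 0.5], truth=0.8)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```

Comparing `sd_log` with `se_log` shows whether the variance estimator is roughly calibrated: an average SE estimate above the empirical SD suggests conservative intervals.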

Focusing on estimators of the cluster-level effect (Table 2, Left), all approaches resulted in low bias, similar variability of the point estimates, and good confidence interval coverage (≥96%). For a given adjustment strategy (fixed or adaptive), there was little practical difference in performance between analyses using cluster-level or individual-level data. However, as expected by theory,29 the TMLEs using Adaptive Prespecification achieved higher power (≈44%) than the TMLEs relying on fixed specification of the outcome regression (40%). For the individual-level effect (Table 2, Right), the gains in power with adaptive adjustment were more substantial. Specifically, TMLEs with fixed adjustment achieved a maximum power of 60%, while the TMLEs using Adaptive Prespecification achieved a maximum power of 68%. While the approaches were slightly biased toward the null, all had good confidence interval coverage (94%-96%). Again, we saw little practical difference between approaches relying on cluster-level data and those utilizing individual-level data. As shown in Data S1, all approaches also maintained strong Type-I error control under the null.

Altogether the results of this simulation demonstrate the ability of TMLE to estimate both cluster-level and individual-level effects, while adaptively adjusting for baseline covariates to maximize efficiency. These results also highlight the critical importance of prespecifying the primary effect measure and using a CRT estimator of that effect. Notable bias and misleading inference may arise when the statistical estimation approach is mismatched with the desired causal effect. In all settings, the research question should drive the specification of the target effect and thereby the statistical estimation approach.10,12-14

5 ∣. REAL DATA APPLICATION: THE PTBI STUDY IN KENYA AND UGANDA

In East Africa, preterm birth remains a leading risk factor for perinatal mortality, defined as stillbirth and first-week deaths.59 Evidence-based practices, such as use of antenatal corticosteroids and skin-to-skin contact, are not routinely used and have the potential to improve outcomes for preterm infants during the critical intrapartum and immediate newborn periods. The PTBi study was a CRT designed to improve the quality of care for mothers and preterm infants at the time of birth through a health facility intervention (ClinicalTrials.gov: NCT03112018).59 The primary endpoint was intrapartum stillbirth and 28-day mortality among preterm infants delivered from October 2016 to May 2018 in Western Kenya and Eastern Uganda.

In more detail, 20 health facilities, including large hospitals and smaller health centers, were selected for participation in the study. The facilities ranged in size, staff-to-patient ratio, and capacity to perform cesarean section (C-section), among others. Prior to randomization, facilities were pair-matched on country, delivery volume, staff-to-patient ratio, as well as rates of stillbirths, low-birth weight infants, and pre-discharge neonatal mortality.60 Within matched pairs, they were then randomized to either the intervention or control arm.59 Facilities in the control arm received (1) strengthening of routine data collection and (2) introduction of the WHO Safe Childbirth Checklist.61 The facilities in the intervention arm received the components included in the control arm in addition to (1) PRONTO™ Simulation training,62 and (2) quality improvement collaboratives aimed to reinforce and optimize use of evidence-based practices. All study components consisted of known interventions and strategies aiming to improve quality of care, teamwork, communication, and data use.59

The results of the PTBi Study have been previously published.60 Here, we focus on the real-world impact of highly variable cluster sizes when defining and estimating causal effects in CRTs. Specifically, during the study period, an unforeseen political strike led to a lack of medical providers at certain facilities, thereby decreasing volume at some facilities while increasing volume at others. The number of preterm births for a given facility ranged between 40 and 366 in the intervention arm and between 29 and 447 in the control arm. Within-pair differences in cluster size were also pronounced, ranging between 9 and 211.

To study the impact of high variability in cluster size, we return to the consequences of specifying the effect in terms of cluster-level outcomes (Equation 10) vs individual-level outcomes (Equation 11). In PTBi, the individual-level outcome was an indicator of preterm infant mortality by 28-day follow-up. Infants dying before discharge (stillbirth and predischarge mortality) were also included in the study; for these infants, the outcome indicator was equal to 1. For each facility, the cluster-level endpoint was the incidence of fresh stillbirth and 28-day all-cause mortality among preterm births, calculated as the empirical mean of the individual-level outcomes within that facility.

For both the cluster-level and individual-level effects, we compared estimates and inference from the TMLEs using cluster-level or individual-level data. The cluster-level TMLE used Adaptive Prespecification to select between no adjustment and adjustment for the proportion of mothers receiving a C-section, while the Hierarchical TMLE used Adaptive Prespecification to select between no adjustment and adjustment for an individual-level indicator of receiving a C-section. For comparison, we also implemented each TMLE without adjustment and each approach breaking or preserving the matched pairs used for randomization. (See Balzer et al.35 for details on how matched analyses impact estimation and inference with TMLE.) Since C-section status was missing for 47 participants, all analyses were restricted to the 2891 mother-infant dyads with complete data to improve comparability of the methods in this demonstration paper.

5.1 ∣. PTBi results

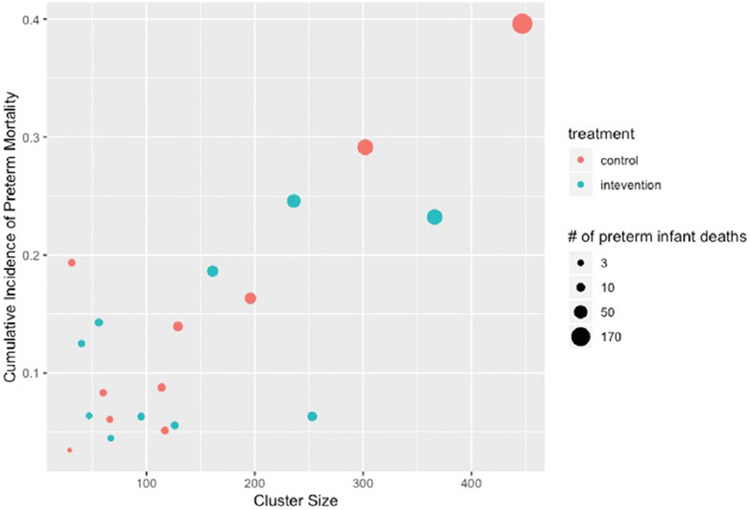

As shown in Table 3, estimates and inference for the effect of the PTBi intervention varied substantially by the target of inference. At the cluster-level, the average incidence of 28-day mortality among preterm infants was 12% among facilities randomized to the intervention and 15% among facilities randomized to the control. Therefore, the unadjusted estimator of the cluster-level relative effect (Equation 10) was 0.81, corresponding to a 19% reduction in the incidence of mortality at the facility-level. The results for the individual-level effect were markedly different, reflecting how outcome risk varied by cluster size. As shown in Figure 1, larger hospitals tended to have poorer outcomes (Pearson’s correlation r = 0.77), especially in the control arm (r = 0.88). At the individual-level, the overall proportion of preterm infants who died within 28 days was 15% in the intervention arm and 23% in the control arm. Therefore, the unadjusted estimator of the individual-level relative effect (Equation 11) was 0.66, corresponding to a 34% reduction in the mortality risk. As predicted by theory,1,33,35 for a given statistical estimand, the analysis preserving the matched pairs used for randomization was notably more precise than the analysis breaking the matches. Specifically, when keeping vs breaking the matches, the unadjusted estimator was five times more efficient for the cluster-level effect and three times more efficient for the individual-level effect. Throughout, efficiency is defined as the variance of the unadjusted effect estimator when breaking the matches divided by the variance of another approach.16
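The two summary quantities used in this paragraph, the percent reduction implied by a relative effect and the relative efficiency of one analysis vs a reference analysis, reduce to simple arithmetic. The sketch below uses the reported ratios of 0.81 and 0.66. The variance values are hypothetical placeholders chosen only to illustrate the definition, and the function names are illustrative.

```python
def percent_reduction(ratio):
    """Relative reduction (in percent) implied by a relative effect below 1."""
    return 100.0 * (1.0 - ratio)

def relative_efficiency(var_reference, var_other):
    """Variance of the reference analysis (here, the unadjusted estimator
    breaking the matches) divided by the variance of another approach."""
    return var_reference / var_other

# Reported relative effects from the PTBi application.
print(f"cluster-level:    {percent_reduction(0.81):.0f}% reduction")
print(f"individual-level: {percent_reduction(0.66):.0f}% reduction")

# Hypothetical variance estimates on the log scale (not from the paper).
var_breaking, var_preserving = 0.10, 0.02
eff = relative_efficiency(var_breaking, var_preserving)
print(f"relative efficiency: {eff:.1f}")
```

A relative efficiency above 1 means the other approach has a smaller variance than the reference analysis; the corresponding ratio of standard errors is the square root of this value.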

TABLE 3.

Estimating cluster-level and individual-level relative effects in the Preterm Birth Initiative (PTBi) study.

| | For the cluster-level effect | | | | | For the individual-level effect | | | | |
|---|---|---|---|---|---|---|---|---|---|---|
| Estimator | Intervention | Control | Ratio (95% CI) | Eff. | Adj. | Intervention | Control | Ratio (95% CI) | Eff. | Adj. |
| Breaking the matches | | | | | | | | | | |
| Unadj. | 12% | 15% | 0.81 (0.43-1.55) | 1 | — | 15% | 23% | 0.66 (0.33-1.31) | 1 | — |
| C-TMLE | 13% | 14% | 0.88 (0.59-1.34) | 2 | C-sect | 17% | 21% | 0.84 (0.53-1.34) | 2 | C-sect |
| H-TMLE | 12% | 15% | 0.84 (0.50-1.41) | 2 | C-sect | 16% | 22% | 0.70 (0.38-1.27) | 1 | C-sect |
| Preserving the matches | | | | | | | | | | |
| Unadj. | 12% | 15% | 0.81 (0.59-1.11) | 5 | — | 15% | 23% | 0.66 (0.44-0.99) | 3 | — |
| C-TMLE | 12% | 15% | 0.81 (0.59-1.11) | 5 | ∅ | 15% | 23% | 0.65 (0.43-0.98) | 3 | ∅ |
| H-TMLE | 12% | 15% | 0.82 (0.60-1.11) | 5 | ∅ | 16% | 22% | 0.70 (0.46-1.06) | 3 | C-sect |

Abbreviations: “C-TMLE” and “H-TMLE”, the cluster-level TMLE and Hierarchical TMLE, respectively; both were implemented with Adaptive Prespecification to adjust for C-section (“C-sect”) or nothing (“∅”); “Eff.”, the relative efficiency: the variance estimate for the unadjusted effect estimator breaking the matches used for randomization, divided by the variance estimate of another approach (eg, Hierarchical-TMLE with Adaptive Prespecification, keeping the matches used for randomization); “Intervention” and “Control”, the estimated outcome incidence (cluster-level) or risk (individual-level) in each arm; “Unadj.”, the unadjusted effect estimator.

FIGURE 1.

Scatter plot of cluster size by the cumulative incidence of preterm mortality and trial arm in Preterm Birth Initiative.