Abstract

I present PHLASH, a new Bayesian method for inferring population history from whole genome sequence data. PHLASH is population history learning by averaging sampled histories: it works by drawing random, low-dimensional projections of the coalescent intensity function from the posterior distribution of a PSMC-like model, and averaging them together to form an accurate and adaptive size history estimator. On simulated data, PHLASH tends to be faster and have lower error than several competing methods including SMC++, MSMC2, and FITCOAL. Moreover, it provides a full posterior distribution over population size history, leading to automatic uncertainty quantification of the point estimates, as well as to new Bayesian testing procedures for detecting population structure and ancient bottlenecks. On the technical side, the key advance is a novel algorithm for computing the score function (gradient of the log-likelihood) of a coalescent hidden Markov model: when there are $M$ hidden states, the algorithm requires $O(M^2)$ time and $O(1)$ memory per decoded position, the same cost as evaluating the log-likelihood itself using the naïve forward algorithm. This algorithm is combined with a hand-tuned implementation that fully leverages the power of modern GPU hardware, and the entire method has been released as an easy-to-use Python software package.

1. Introduction

Many natural populations experience significant changes in abundance over the course of their existence. Our own species, for example, has increased over a thousand-fold since the advent of agriculture 12,000 years ago, with various human subpopulations having expanded or contracted due to migration, disease, climate change, interbreeding, and other factors (Cavalli-Sforza, 2000; Diamond, 2005). In general, while some change in population size may be due to random chance, measurable growth and decline over evolutionary timescales is frequently the result of interesting biological, cultural, or ecological phenomena. By developing tools to estimate population history from genetic data, a pursuit known as demographic inference, we may hope to learn more about the past, and possible future, of our biome.

That said, estimating population history can be lamentably difficult. Signals of this history are only faintly manifested as patterns of allele sharing across sampled individuals. These patterns can be further obscured by natural phenomena such as meiotic recombination or natural selection, or by bioinformatic error. Complex mathematical models are needed to relate the data to a hypothesized size history. Solving these models is computationally expensive, particularly when a large number of samples is analyzed. Moreover, because of a somewhat diffuse relationship between the model and the data, there can be many evolutionary histories that explain a given collection of observations equally well.

Nevertheless, given the potential to enhance our understanding of evolution, significant effort has been invested in developing accurate and user-friendly methods for inferring population size history. An early and well-known example is the pairwise sequentially Markovian coalescent (psmc; Li and Durbin, 2011), which infers historical effective population size using data from a single diploid individual. The basic idea of psmc, outlined in more detail below, is to relate variation in the local time to most recent common ancestor (TMRCA) between a pair of homologous chromosomes to historical fluctuations in population size through a hidden Markov model (HMM). psmc continues to be popular, because it is fast, fairly robust, and does not require phased genotypes, which can be difficult to obtain when working with non-human data (Spence et al., 2018; Mather, Traves, and Ho, 2020). However, it is not without limitations. As originally formulated, the method can only analyze data from a single sample, and it assumes a fairly simplistic evolutionary model in which size history changes at only a small number of pre-determined locations. The latter property leads to obvious visual bias in the resulting estimates, which have a “stair-step” appearance, and has other, less obvious consequences for inference as well (Parag and Pybus, 2019; Ki and Terhorst, 2023).

A number of successor methods have been proposed which remove some of these limitations (Sheehan, Harris, and Yun S Song, 2013; Schiffels and Durbin, 2014; Terhorst, J. A. Kamm, and Yun S Song, 2017; Steinrücken et al., 2019; Schiffels and K. Wang, 2020). Building on the basic psmc model, they are able to analyze larger sample sizes and/or more realistic demographic models involving e.g. population structure and admixture. Several Bayesian variants of psmc have also been proposed (Palacios, Wakeley, and Ramachandran, 2015; Henderson et al., 2021; Ki and Terhorst, 2023), though they are not as widely used, owing perhaps to the inherent computational difficulty of Bayesian inference in this setting. In a related vein, a large class of methods infers demography using the site frequency spectrum (SFS), a highly compressed summary statistic formed from genotype data (Gutenkunst et al., 2009; Excoffier and Foll, 2011; Excoffier, Dupanloup, et al., 2013; Bhaskar, Y. X. R. Wang, and Y. S. Song, 2015; Jouganous et al., 2017; J. A. Kamm, Terhorst, and Yun S Song, 2017; J. A. Kamm, Terhorst, Durbin, et al., 2020; Excoffier, Marchi, et al., 2021; Hu et al., 2023). These methods are fast, in some cases capable of analyzing tens of thousands of samples, but they completely ignore linkage disequilibrium, which contains rich information about population history.

In this paper I present phlash, a new Bayesian method for inferring size history from recombining sequence data. phlash aims to combine the advantages of many of the methods mentioned above into a single, general-purpose inference procedure that is simultaneously fast, accurate, able to analyze many samples (thousands), invariant to phasing, capable of utilizing both linkage and frequency spectrum information, and able to return a full posterior distribution over the inferred size history function. Aesthetically, phlash estimates have an appealing, non-parametric quality which enables them to adapt to variability in the underlying size history without user intervention or fine-tuning. The key advance that propels these innovations is a novel technique for efficiently differentiating the psmc likelihood function, which enables the method to rapidly navigate to areas of high posterior density. Combined with an extremely efficient, GPU-based software implementation, the end result is a method for performing full Bayesian inference of population size history, at speeds that match (or exceed) many of the optimized methods mentioned above.

2. Methods

In this section I formally define the inference problem, the statistical model, and the estimation procedure.

The goal of this paper is to estimate the historical effective population size of a panmictic population using genetic variation data. Mathematically, the estimand is a positive function of time whose value at $t$ is the effective population size $t$ generations ago. (Except where otherwise noted, the variable $t$ and the word “time” always refer to “generations before the present”.) phlash uses a coalescent hidden Markov model to infer this size history function from recombining sequence data, an approach pioneered by Li and Durbin (2011). The basic idea is as follows: given the size history function, the recombination and mutation rates, and diploid genotype data encoding whether two homologous chromosomes are the same or different at each locus, psmc models the genotype data as generated according to the latent variable model

| (1) |

| (2) |

| (3) |

Here, the latent variable represents the unobservable time to most recent common ancestor (TMRCA) at each genomic position, and the model specifies both its marginal distribution under the size history function and the conditional distribution of the TMRCA at a neighboring position given knowledge of the current TMRCA. (The precise form of this conditional distribution is not essential here, but see Hobolth and Jensen, 2014, for a detailed investigation.) Note this assumes that the sequence of TMRCAs along the genome is Markov. psmc approximates the above generative model by discretizing the time axis, for some partition

| (4) |

of the real line, and adjusting the transition and emission functions accordingly. A crucial assumption of psmc is that the size history function also only changes discretely, at the breakpoints of the partition (4):

| (5) |

Given these approximations, inference machinery for hidden Markov models can be used to iteratively increase the likelihood of the observed data using the E-M algorithm. The learned parameters are the recombination rate and the levels of the size history function in (5). Hence, the choice of discretization (4) plays a strong determining role in the overall shape of the estimated size history function, and it is not obvious how these points should be chosen (Terhorst, J. A. Kamm, and Yun S Song, 2017; Ki and Terhorst, 2023). Because of this, in most existing methods, they are selected according to an automated heuristic, with unclear consequences for inference.

Related methods are variations on this approach. msmc (Schiffels and Durbin, 2014), for example, considers time to first coalescence as the latent state, allowing the analysis of larger sample sizes. msmc2 (Schiffels and K. Wang, 2020) uses a composite psmc likelihood taken over all pairs of haplotypes. smc++ augments the observed genotype vector with frequency spectrum information, leading to a more complex emission distribution than (3), and optionally uses a spline basis for the size history function. At a high level, though, each of these methods works by discretizing the time axis, as in (4), assuming some parametric representation for the size history function, as in (5), and then optimizing to find a point estimate.

2.1. Bayesian model

phlash is a simple Bayesian extension of the above model: instead of optimizing to find a single point estimate, it places a prior on the space of size history functions, and then samples from the posterior distribution.

The prior is defined as follows. signifies “piecewise-constant with pieces”: let be the “ordered tuple space consisting of ordered positive sequences of elements,

and define . The product space is in bijection with positive, piecewise-constant functions defined on the half-line via the mapping

where denotes the indicator function of the set , and by convention I set , . In words, each element defines a piecewise-constant function with breaks at , , and corresponding levels .
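As a minimal illustration of this mapping, the sketch below turns a set of breakpoints and levels into a callable piecewise-constant function. The function name `piecewise_constant`, the half-open interval convention, and the example values are assumptions made for illustration; they are not taken from the phlash package. The first interval is assumed to start at time 0 and the last to extend to infinity.

```python
from bisect import bisect_right

def piecewise_constant(breaks, levels):
    """Return f(t) equal to levels[k] on the k-th interval.

    `breaks` holds the interior change points (positive, strictly increasing).
    The first interval is assumed to start at 0 and the last to extend to
    infinity, so len(levels) must equal len(breaks) + 1.
    """
    breaks = [float(b) for b in breaks]
    levels = [float(v) for v in levels]
    if len(levels) != len(breaks) + 1:
        raise ValueError("need exactly one more level than interior breakpoints")
    if any(b <= 0 for b in breaks) or any(a >= b for a, b in zip(breaks, breaks[1:])):
        raise ValueError("breakpoints must be positive and strictly increasing")
    if any(v <= 0 for v in levels):
        raise ValueError("levels must be positive")

    def f(t):
        # bisect_right assigns t == breaks[k] to the interval starting at breaks[k].
        return levels[bisect_right(breaks, t)]

    return f

# Hypothetical three-piece history: 10,000 until 100 generations ago,
# then 2,000 until 1,000 generations ago, then 50,000.
size_history = piecewise_constant([100.0, 1000.0], [1e4, 2e3, 5e4])
values = [size_history(t) for t in (0.0, 99.9, 100.0, 500.0, 1000.0, 1e6)]
```

Each pair of breakpoint and level vectors corresponds to exactly one such step function, which is the content of the bijection described above.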

Now I define a prior over this space. For all of the examples in this paper, I fixed and used the following prior:

| (6) |

| (7) |

| (8) |

where time is measured in the coalescent scaling, and then set the remaining such that the ratio is constant. The choice is motivated by hardware architecture considerations discussed below.

The key feature of this prior is that the time discretization is random. This means that when a large number of samples from the posterior are averaged together, their individual biases cancel out, resulting in smooth estimates whose shape adapts to the true underlying size history function. Just as important, the user is relieved of the burden of needing to choose the discretization by hand.
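The smoothing effect of averaging over random discretizations can be seen in a toy experiment. The sketch below is not the phlash sampler: instead of drawing from a posterior, it approximates a known smooth curve by step functions whose breakpoints are drawn at random, and then averages them. The curve `truth`, the log-uniform breakpoint distribution, and the choice of 8 pieces are all assumptions made for illustration.

```python
import math
import random
from bisect import bisect_right

random.seed(0)
# Evaluation grid: 400 log-spaced times between 10 and 1e5 generations.
grid = [10 ** (1 + 4 * i / 399) for i in range(400)]
# A smooth toy "size history" to be approximated.
truth = [1e4 * (1 + 0.5 * math.sin(2 * math.log10(t))) for t in grid]

def random_step_approximation(num_pieces=8):
    """Step-function fit of `truth` with random log-uniform breakpoints."""
    breaks = sorted(10 ** random.uniform(1, 5) for _ in range(num_pieces - 1))
    piece = [bisect_right(breaks, t) for t in grid]
    sums, counts = [0.0] * num_pieces, [0] * num_pieces
    for k, v in zip(piece, truth):
        sums[k] += v
        counts[k] += 1
    # Each level is the mean of the true curve over its piece.
    return [sums[k] / counts[k] for k in piece]

samples = [random_step_approximation() for _ in range(500)]
average = [sum(col) / len(samples) for col in zip(*samples)]

def max_jump(curve):
    """Largest change between adjacent grid points."""
    return max(abs(b - a) for a, b in zip(curve, curve[1:]))
```

Any single draw has a stair-step shape with a few large jumps, whereas the average of many draws changes only gradually between neighboring grid points, because each draw places its jumps in different locations.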

2.2. Augmenting the model with frequency spectrum information

The psmc model defined above assumes we have a single diploid sample. However, in many modern applications, multiple samples from a population are available, say $n$ of them, where $n$ denotes the number of sampled diploids. Additional information from the joint samples can improve estimation accuracy, particularly in the recent past (Schiffels and Durbin, 2014; Terhorst, J. A. Kamm, and Yun S Song, 2017). To enable phlash to analyze larger samples, I define the modified likelihood function

| (9) |

where each psmc term is evaluated on one row of the genotype matrix. The first term in the product is the likelihood of the site frequency spectrum (SFS) obtained from the genotype matrix under a “Poisson random field” model (S. A. Sawyer and Hartl, 1992), and can be efficiently computed even for very large samples (Bhaskar, Y. X. R. Wang, and Y. S. Song, 2015). The second term is the product of the psmc likelihood taken over all diploid samples.

Note that the form of (9) is the same as would be obtained if the site frequency spectrum and the rows of the genotype matrix were all pairwise independent, which is not true due to shared ancestry. Thus (9) is a “composite likelihood”. In general, composite likelihood methods give unbiased point estimates, but overly optimistic confidence intervals if dependence between the component likelihoods is not taken into account (Varin, Reid, and Firth, 2011). The extent to which this affects the dispersion of the posterior distribution returned by phlash is explored in Section 3.4.
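On the log scale, the composite likelihood is simply a sum of component log-likelihoods. The sketch below shows that structure under stated assumptions: the SFS term treats each frequency-spectrum entry as an independent Poisson count, which is the Poisson random field assumption, and the per-sample psmc log-likelihoods are taken as given numbers. The function names and the way the expected spectrum is supplied are hypothetical; in the actual model the expected spectrum would be computed from the size history.

```python
import math

def prf_sfs_loglik(observed_sfs, expected_sfs):
    """Poisson random field log-likelihood of an observed SFS.

    Each entry is modeled as an independent Poisson count whose mean is the
    corresponding entry of `expected_sfs`.
    """
    total = 0.0
    for x, lam in zip(observed_sfs, expected_sfs):
        total += x * math.log(lam) - lam - math.lgamma(x + 1.0)
    return total

def composite_loglik(observed_sfs, expected_sfs, per_sample_logliks):
    """SFS term plus one psmc-style log-likelihood per diploid sample.

    Summing the terms treats the components as if they were independent,
    which is what makes the result a composite (not exact) log-likelihood.
    """
    return prf_sfs_loglik(observed_sfs, expected_sfs) + sum(per_sample_logliks)

# Hypothetical inputs: a 3-entry spectrum and two per-sample log-likelihoods.
example = composite_loglik([12, 5, 2], [10.0, 5.0, 3.0], [-1500.2, -1498.7])
```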

2.3. Efficient computation of the score function

As with many Bayesian procedures, the principal challenge of the model described above is how to sample from the posterior. State-of-the-art sampling methods like Hamiltonian Monte Carlo (HMC; Neal et al., 2011; Hoffman and Gelman, 2014), stochastic Langevin dynamics (Welling and Teh, 2011), or variational inference (VI; Hoffman, Blei, et al., 2013; Blei, Kucukelbir, and McAuliffe, 2017) all require access to the so-called score function, the gradient of the log-posterior density with respect to the model parameters, in order to guide the sampler towards regions of high posterior density. By Bayes’ rule, the score function equals the gradient of the log-likelihood plus the gradient of the log-prior, so in view of (9) and the fact that computing the SFS term is essentially just a matrix-vector multiply (Polanski and Kimmel, 2003), the main obstacle to sampling is being able to efficiently calculate the gradient of the psmc log-likelihood.

2.3.1. Novel algorithm

The primary technical achievement of this paper is a substantially faster method for computing the score function of the psmc model. First, I summarize the existing state of the art. Using the standard forward algorithm (e.g., Bishop, 2006), as in psmc, the log-likelihood of a hidden Markov model with $M$ hidden states can be computed in $O(LM^2)$ time, using storage that does not grow with $L$, when there are $L$ sequential observations. Thus, each likelihood evaluation requires a complete pass over the data, and the algorithm must proceed serially (cannot be parallelized) because of the sequential dependency structure of the hidden Markov model. By exploiting problem-specific structure, Harris et al. (2014) improved the asymptotic running time of the psmc forward algorithm to $O(LM)$, with the same storage requirement. Palamara et al. (2018) later extended this technique to give forward and backward algorithms for the more accurate model.
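For reference, the standard forward algorithm can be sketched in a few lines. This is the textbook version for a generic discrete HMM, not the accelerated psmc-specific variants of Harris et al. or Palamara et al.; the argument names are chosen for illustration.

```python
import math

def forward_loglik(init, trans, emit, obs):
    """Log-likelihood of `obs` under a discrete HMM (scaled forward algorithm).

    init[i]     : P(X_1 = i)
    trans[i][j] : P(X_{t+1} = j | X_t = i)
    emit[i][y]  : P(Y_t = y | X_t = i)

    Only the current length-M forward vector is kept, so working memory does
    not grow with len(obs); each position costs O(M^2) operations.
    """
    M = len(init)
    alpha = [init[i] * emit[i][obs[0]] for i in range(M)]
    loglik = 0.0
    for y in obs[1:]:
        c = sum(alpha)                      # rescale to avoid underflow
        loglik += math.log(c)
        alpha = [a / c for a in alpha]
        # Propagate through the transition matrix, then weight by the emission.
        alpha = [emit[j][y] * sum(alpha[i] * trans[i][j] for i in range(M))
                 for j in range(M)]
    return loglik + math.log(sum(alpha))

# Small hypothetical two-state example.
value = forward_loglik([1.0, 0.0],
                       [[0.7, 0.3], [0.4, 0.6]],
                       [[0.9, 0.1], [0.2, 0.8]],
                       [0, 1])
```

The loop over observations is inherently sequential, because each forward vector depends on the previous one; this is the serial dependency referred to above.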

To numerically differentiate the log-likelihood, one obvious approach is to apply automatic differentiation to the linear-time forward algorithm of Palamara et al. This is very easy to implement using differentiable programming languages designed for neural networks (Martín Abadi et al., 2015; Bradbury et al., 2018). However, from a performance perspective, it is suboptimal. Using reverse-mode automatic differentiation, the algorithm has to store all intermediate computation values, so its storage requirement grows with the sequence length. This results in very high memory overhead; in particular it renders the resulting procedure unsuitable for running on a graphics processing unit (GPU), which is currently the main growth area in high performance computing. Alternatively, one can employ forward-mode automatic differentiation, which works by tracking a set of “dual numbers” alongside the primal computation. However, this has high computational overhead, since every floating-point operation results in additional dual operations, and it is not as optimized, since neural networks rely almost exclusively on back-propagation for training. In my initial experiments, both approaches required 10–30 seconds per gradient evaluation (Figure 4a), too slow to be useful in practice.

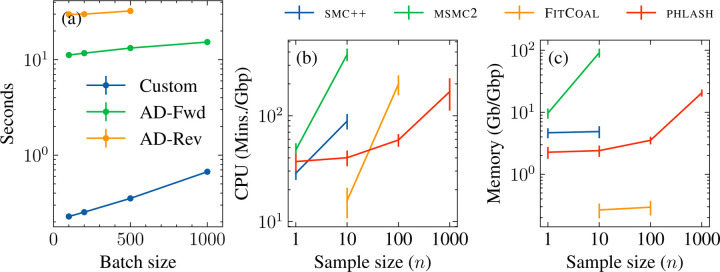

Figure 4:

Benchmarking results. (a) Time needed to evaluate score function as a function of batch size. The sequence length was . Custom algorithm is compared to forward- and reverse-mode automatic differentiation of the accelerated/linear-time forward algorithm using Jax (Bradbury et al., 2018). For , reverse-mode automatic differentiation exhausted the available GPU memory and was unable to be evaluated. (b) Mean CPU time and (c) peak memory usage for the various methods, with standard error bars.

To achieve greater performance, I developed a novel score function algorithm which, for $L$ observations and $M$ hidden states, has $O(LM^2)$ time complexity and a storage requirement that does not grow with $L$. In other words, the algorithm gives gradients “for free” in the same amount of time that it takes the naïve forward algorithm to evaluate the likelihood of an HMM, and its low memory requirement renders it suitable for running massively in parallel on a GPU. Numerical experiments (see Figure 4a) show that it is around 30–90× faster than automatic differentiation. In one sentence, the algorithm works by exploiting a classical identity due to R.A. Fisher for computing the score function of a latent variable model, and accelerating the computations by harnessing problem-specific structure in a manner similar to Palamara et al. (2018). The precise technical explanation requires much additional notation, and is not necessary to understand the rest of the paper, so it is deferred to Appendix A.
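Fisher's identity states that the score of the observed-data likelihood equals the posterior expectation of the complete-data score. The sketch below checks this on a toy HMM and is not the algorithm of this paper: it uses an ordinary forward-backward pass, which stores every forward vector and therefore does not have the constant-memory property described above. The Bernoulli emission model with logit parameters `theta` is an assumption chosen to keep the complete-data gradient simple.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def emission(theta, y):
    """P(Y = y | X = k) for every state k; success probability sigmoid(theta[k])."""
    return [sigmoid(th) if y == 1 else 1.0 - sigmoid(th) for th in theta]

def forward_backward(init, trans, theta, obs):
    """Return (log-likelihood, gammas) with gammas[t][k] = P(X_t = k | obs)."""
    M, L = len(init), len(obs)
    alphas, scales, prev = [], [], None
    for t, y in enumerate(obs):
        e = emission(theta, y)
        if t == 0:
            a = [init[k] * e[k] for k in range(M)]
        else:
            a = [e[j] * sum(prev[i] * trans[i][j] for i in range(M)) for j in range(M)]
        c = sum(a)
        prev = [v / c for v in a]            # filtering distribution at position t
        alphas.append(prev)
        scales.append(c)
    beta = [1.0] * M
    gammas = [None] * L
    for t in range(L - 1, -1, -1):
        g = [alphas[t][k] * beta[k] for k in range(M)]
        s = sum(g)
        gammas[t] = [v / s for v in g]
        if t > 0:
            e = emission(theta, obs[t])
            beta = [sum(trans[i][j] * e[j] * beta[j] for j in range(M)) / scales[t]
                    for i in range(M)]
    return sum(math.log(c) for c in scales), gammas

def score_fisher(init, trans, theta, obs):
    """Fisher's identity: grad log p(y) = E[grad log p(X, y) | y]."""
    _, gammas = forward_backward(init, trans, theta, obs)
    p = [sigmoid(th) for th in theta]
    # The complete-data gradient w.r.t. theta[k] is sum_t 1[X_t = k] * (y_t - p[k]).
    return [sum(g[k] * (y - p[k]) for g, y in zip(gammas, obs))
            for k in range(len(theta))]

init = [0.6, 0.4]
trans = [[0.9, 0.1], [0.2, 0.8]]
theta = [-1.0, 0.5]
obs = [0, 1, 1, 0, 1, 0, 0, 1]
score = score_fisher(init, trans, theta, obs)
```

The resulting gradient can be compared against finite differences of the log-likelihood returned by `forward_backward`, which is a convenient way to validate any score implementation.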

2.3.2. Parallel evaluation

The preceding subsection shows how it is possible to evaluate the psmc score function in a memory-limited environment, such as on a GPU. However, each evaluation requires a complete pass over the chromosome, which is expensive when there are many chromosomes and the genome length is long. To achieve greater speed, I employ a further approximation which breaks up long stretches of the chromosome into smaller “chunks” that are effectively independent. The idea is to exploit the fact that a hidden Markov model forgets its initial state quickly in the sense that, for sufficiently large ,

| (10) |

where and are as in (1)-(3). In other words, the predictive distribution is effectively unchanged if, instead of conditioning on the entire observation sequence up to position , we instead condition only up to a fixed window before it. This is a result of the fact that the hidden Markov model forgets its initial state exponentially quickly, so that is approximately independent of conditional on (Le Gland and Mevel, 2000).

The approximation immediately leads to a parallelizable stochastic gradient algorithm for ascending the psmc likelihood surface: selecting a “window size” such that (assume for simplicity evenly divides ),

Then observe that a simple recursion, displayed in Figure 1, can be used to compute each of the “within-block” inner summations in the last line in parallel. The same idea also works for computing the score function due to the connection to the standard forward algorithm described in Appendix B. This leads to a natural stochastic gradient procedure where, instead of computing the gradient across all blocks of a chromosome, we randomly sample a small number of blocks, compute the score, and then return an unbiased estimator of the gradient.

Figure 1:

Parallel algorithm for evaluating the likelihood of an HMM. The exact algorithm evaluates the likelihood sequentially across each observation (grey blocks in figure). The parallel algorithm breaks the observation sequence up into a set of overlapping blocks which can be evaluated in parallel. Blue blocks are used to “warm up” the filtering distribution, and red blocks contribute to the likelihood calculation.

The accuracy of this approximation depends on selecting the number of blocks and, particularly, the “overlap” parameter which controls how many observations are used to approximate the target filtering distribution in (10). Although some exact bounds on the rate of forgetting are known (Le Gland and Mevel, 2000) which could be used to choose , they depend on knowing the underlying model which is the quantity we are trying to infer in the first place. After some experimentation (see Section 3.3) I settled on as providing a numerically accurate approximation to the log-likelihood across the range of simulated models considered in this paper.
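The block decomposition with warm-up can be sketched for a generic discrete HMM as follows. This is a serial toy version under assumed names (`step`, `chunked_loglik`, `block`, `overlap`): every block restarts from the initial distribution, discards the log-likelihood contributions of its warm-up observations, and keeps only those of its own observations. Because the per-block terms share no state, they could be evaluated in parallel; here they are simply looped over.

```python
import math
import random

def step(pred, trans, emit, y):
    """Condition the predictive distribution `pred` on y, then move one position.

    Returns (predictive distribution for the next position,
             log P(y | observations already conditioned on)).
    """
    M = len(pred)
    joint = [pred[k] * emit[k][y] for k in range(M)]
    c = sum(joint)
    filt = [v / c for v in joint]
    nxt = [sum(filt[i] * trans[i][j] for i in range(M)) for j in range(M)]
    return nxt, math.log(c)

def exact_loglik(init, trans, emit, obs):
    pred, total = init, 0.0
    for y in obs:
        pred, ll = step(pred, trans, emit, y)
        total += ll
    return total

def chunked_loglik(init, trans, emit, obs, block, overlap):
    """Approximate log-likelihood as a sum of independent per-block terms."""
    total = 0.0
    for start in range(0, len(obs), block):
        pred = init
        for y in obs[max(0, start - overlap):start]:   # warm-up, contribution discarded
            pred, _ = step(pred, trans, emit, y)
        for y in obs[start:start + block]:             # counted toward the likelihood
            pred, ll = step(pred, trans, emit, y)
            total += ll
    return total

random.seed(1)
init = [0.5, 0.5]
trans = [[0.9, 0.1], [0.1, 0.9]]
emit = [[0.8, 0.2], [0.3, 0.7]]
obs = [random.randint(0, 1) for _ in range(2000)]
exact = exact_loglik(init, trans, emit, obs)
approx = chunked_loglik(init, trans, emit, obs, block=200, overlap=50)
```

In this toy model the chain mixes quickly, so a warm-up of 50 observations already reproduces the exact log-likelihood closely; how much overlap is needed in general depends on the forgetting rate of the model, as discussed above.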

2.4. Fitting procedure

With the fast score function estimator in hand, a variety of techniques are available for sampling from the posterior. A particular feature of the phlash model is that the posterior is likely to be highly multimodal: there are many low-dimensional time discretizations that can explain the data equally well. Several of the procedures mentioned above, VI in particular, are known to have difficulty fitting multimodal posterior distributions (Rezende and Mohamed, 2015). After some experimentation, I found that Stein variational gradient descent (SVGD; Liu and D. Wang, 2016) resulted in the best combination of accuracy and speed. SVGD is an optimization-based particle method which is well-suited to running on GPUs, since the particle updates can be performed in parallel. All the experiments in this paper were performed using 500 particles. Smoother approximations to the posterior can be obtained by increasing the particle count, at the expense of greater running time.
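To make the particle update concrete, here is a minimal sketch of SVGD for scalar particles with a fixed-bandwidth RBF kernel. It is a toy, not the phlash implementation: the target is a one-dimensional Gaussian with mean 2 and unit variance, and the step size, bandwidth, and particle count are arbitrary choices made for illustration.

```python
import math
import random

def svgd_step(particles, grad_log_p, step_size=0.1, bandwidth=1.0):
    """One SVGD update (Liu and Wang, 2016) for scalar particles, RBF kernel."""
    n = len(particles)
    grads = [grad_log_p(x) for x in particles]
    updated = []
    for xi in particles:
        phi = 0.0
        for xj, gj in zip(particles, grads):
            k = math.exp(-((xj - xi) ** 2) / (2.0 * bandwidth ** 2))
            # First term pulls xi toward high density; the second is the
            # gradient of the kernel w.r.t. xj, which pushes particles apart.
            phi += k * gj + k * (xi - xj) / bandwidth ** 2
        updated.append(xi + step_size * phi / n)
    return updated

random.seed(0)
particles = [random.gauss(-3.0, 0.5) for _ in range(20)]
for _ in range(1000):
    # Score of the toy target N(2, 1).
    particles = svgd_step(particles, lambda x: -(x - 2.0))

mean = sum(particles) / len(particles)
variance = sum((x - mean) ** 2 for x in particles) / len(particles)
```

Each particle's update depends on the others only through the kernel sums, so all updates within an iteration can be computed simultaneously, which is what makes the method a good fit for GPUs.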

To prevent overfitting, phlash supports the ability to terminate early based on a measure of out-of-sample predictive accuracy. If supplied with an independent dataset (i.e., a held-out autosome), it will terminate if the expected log-predictive density has not increased for the past 100 iterations. All of the examples in this paper utilized this fitting procedure. For simulated data (Section 3.1), the first chromosome (in lexicographic order) was held out for each simulated dataset. For the real human data analyses (Section 4), the p arm of chromosome 1 was held out.
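The stopping rule can be expressed as a small predicate over the history of held-out scores. The sketch below is one possible reading of the rule, in which "has not increased" means that no new best value was recorded during the last `patience` iterations; the function name and this interpretation are assumptions, not the package's API.

```python
def should_stop(elpd_history, patience=100):
    """True if the held-out score set no new best in the last `patience` iterations.

    `elpd_history` lists the expected log-predictive density on the held-out
    data after each iteration, oldest first.
    """
    if len(elpd_history) <= patience:
        return False                      # not enough history to decide yet
    best_before = max(elpd_history[:-patience])
    return max(elpd_history[-patience:]) <= best_before

# A score that improves for 50 iterations and then stays flat for 100.
history = [float(i) for i in range(50)] + [49.0] * 100
stop_now = should_stop(history)
```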

3. Results

To orient the reader, a typical output from phlash is shown in Figure 2. For most applications, the main quantities of interest will be the posterior median of the sampled size histories, shown in dark blue in the figure, as well as the corresponding credible interval, shown in light blue. In my testing I found the posterior median to have good accuracy as a point estimator of the underlying size history function; this will be systematically examined in the next section. The credible interval provides a (point-wise) estimate of uncertainty for the point estimate: it grows wider when the data are more ambiguous about effective population size at a given time point, such as in periods where there are not a lot of coalescent events remaining to estimate the size history. Finally, the credible bands delineate the smallest region such that 95% of the sampled size history functions are completely contained within the bands; they are necessarily larger than the point-wise credible intervals. The credible bands are calculated by solving an optimization problem as described in Appendix B.
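The point-wise summaries can be computed directly from sampled histories evaluated on a common time grid. The sketch below covers only the posterior median and the central point-wise interval; the simultaneous credible bands require the separate optimization mentioned above and are not attempted here. The function names and the linear-interpolation quantile rule are assumptions made for illustration.

```python
def quantile(sorted_values, q):
    """Linear-interpolation quantile of an ascending list, for 0 <= q <= 1."""
    pos = q * (len(sorted_values) - 1)
    lo = int(pos)
    hi = min(lo + 1, len(sorted_values) - 1)
    return sorted_values[lo] + (pos - lo) * (sorted_values[hi] - sorted_values[lo])

def pointwise_summary(sampled_histories, level=0.95):
    """Median and central credible interval at every grid time.

    sampled_histories[s][g] is the value of posterior sample s at grid time g.
    """
    tail = (1.0 - level) / 2.0
    median, lower, upper = [], [], []
    for column in zip(*sampled_histories):
        col = sorted(column)
        median.append(quantile(col, 0.5))
        lower.append(quantile(col, tail))
        upper.append(quantile(col, 1.0 - tail))
    return median, lower, upper

# Hypothetical input: 101 samples on a two-point grid.
samples = [[float(s), 10.0] for s in range(101)]
median, lower, upper = pointwise_summary(samples)
```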

Figure 2:

Example output of the algorithm for the “sawtooth” demography (Schiffels and Durbin, 2014; Adrion et al., 2020). diploid samples were simulated according to the size history shown in black. The posterior distribution returned by PHLASH is summarized by its median (dark blue line), central 95% point-wise credible interval (shaded blue region), and 95% simultaneous credible band (dashed green line).

3.1. Accuracy compared to existing methods

I first studied how well phlash performs on simulated data where the ground truth is known. I compared phlash to three existing methods: smc++, msmc2, and FitCoal. smc++ (Terhorst, J. A. Kamm, and Yun S Song, 2017) is a generalization of psmc which also incorporates frequency spectrum information, by modeling the expected site frequency spectrum conditional on knowing the TMRCA of a pair of distinguished lineages. msmc2 (Schiffels and K. Wang, 2020) optimizes a composite objective where the psmc likelihood is evaluated over all pairs of haplotypes. Finally, FitCoal (Hu et al., 2023) is a newer method which uses the site frequency spectrum to estimate size history. These three were chosen as lying at the intersection of methods that a) are relatively recent, b) have an easy-to-use software implementation, and c) can run on non-human data (i.e., do not require phased data, or detailed genetic maps).

I compared the methods across a panel of 12 different demographic models from the stdpopsim catalog (Adrion et al., 2020). Each model is the result of a previously published study. A particular goal of phlash is for it to be generally applicable across a wide range of biological systems. To that end, a total of eight different species are represented in the benchmark suite: A. gambiae, A. thaliana, B. taurus, D. melanogaster, H. sapiens, P. troglodytes, P. anubis, and P. abelii. Additional details of each model are shown in Table D.1. Together, these constitute a fairly diverse range of demographic models, biological parameters (i.e., mutation and recombination rates), and genome sizes.

To perform the benchmarks, I simulated whole-genome data under each of the models for diploid sample sizes $n \in \{1, 10, 100, 1000\}$. For reasons of budget and carbon footprint, only three replicates were performed for each model, resulting in a total of $12 \times 4 \times 3 = 144$ different simulation runs. For some species in the panel, such as A. gambiae, the population-scaled recombination rate is so high that the default simulation engine for stdpopsim (msprime, Baumdicker et al., 2022) did not terminate after 24h. Thus, for uniformity, all simulations were performed using the coalescent simulator scrm (Staab et al., 2015).

I ran each inference method on each of the simulated datasets. All methods were limited to 24h of wall time and 256 GB of RAM. This meant that it was only possible to run some of the methods for certain sample sizes: smc++ could only analyze $n \in \{1, 10\}$ in the allotted amount of time; msmc2 could only analyze $n \in \{1, 10\}$ in the allotted amount of memory; and FitCoal could only analyze $n \in \{10, 100\}$ in the allotted amount of time (it crashed with an error for $n = 1$). The command lines used for simulation and estimation are listed in Appendix C, and summarized plots of every model fit are provided in Figures D.1–D.4.

I considered three accuracy metrics. The first, root mean-squared (or $L^2$) error, has previously been used to compare the accuracy of demographic inference methods (e.g., Robinson et al., 2014; Sellinger, Abu-Awad, and Tellier, 2021). It is defined as

where is the true historical effective population size that was used to simulate data, and is an arbitrarily-chosen time cutoff (I chose generations). Overall results are shown in Table 1. No method uniformly dominates, but overall, phlash tended to be the most accurate most often, and is at least competitive with the more accurate method when it is not: of the 4 × 12 = 48 scenarios considered, phlash was the most accurate 30/48 = 62.5% of the time, compared with 7/48 for FitCoal, 6/48 for smc++, and 5/48 for msmc2. For the n = 1 scenarios where only a single diploid genome is available, the difference in performance between phlash and smc++/msmc2 tended to be small, and sometimes in favor of the latter methods. I attribute this to the non-parametric nature of the phlash estimator, which does not benefit from as much prior regularization and generally needs more data to perform well. Also, as can be seen for the Constant model, FitCoal is extremely accurate when the true underlying model is a member of its assumed model class, i.e. containing a small number of epochs of constant or exponential growth. However, it is not clear that this is a reasonable assumption for natural populations; indeed the real data results presented below seem to suggest otherwise.

Table 1:

Root mean-squared error for simulated data. All results have been divided by $10^8$ for clarity. Each entry is averaged over three simulation replicates. Bold marks the entry with the lowest mean in each row. Entries with * are significantly lower than all other entries in the same row using a Bonferroni-corrected t-test with FWER = 0.05.

| Model | n | SMC++ | MSMC2 | PHLASH | FITCOAL |
|---|---|---|---|---|---|
| Africa_1T12 | 1 | 0.0232 | 0.0352 | 0.0184* | — |
| | 10 | 0.0242 | 0.622 | 0.0209 | 0.0238 |
| | 100 | — | — | 0.0166* | 0.0238 |
| | 1000 | — | — | 0.0134 | — |
| African3Epoch_1H18 | 1 | 0.2 | 0.179 | 1.28 | — |
| | 10 | 0.258 | 0.235 | 0.198 | 0.237 |
| | 100 | — | — | 0.148 | 0.0767* |
| | 1000 | — | — | 0.147 | — |
| African3Epoch_1S16 | 1 | 1.8 | 3.64 | 2.5 | — |
| | 10 | 1.52 | 4.21 | 0.635 | 0.273* |
| | 100 | — | — | 0.548 | 0.232* |
| | 1000 | — | — | 0.68 | — |
| AmericanAdmixture_4B11 | 1 | 0.0134 | 0.988 | 4.66 | — |
| | 10 | 0.0188 | 0.0569 | 0.126 | 0.0222 |
| | 100 | — | — | 0.0723 | 0.0283* |
| | 1000 | — | — | 0.0487 | — |
| BonoboGhost_4K19 | 1 | 0.374 | 0.289* | 0.324 | — |
| | 10 | 0.375 | 0.289* | 0.357 | 0.358 |
| | 100 | — | — | 0.378 | 0.358 |
| | 1000 | — | — | 0.392 | — |
| Constant | 1 | 0.00579 | 0.0428 | 0.0102 | — |
| | 10 | 0.00597 | 0.0145 | 0.00692 | 0.00009* |
| | 100 | — | — | 0.00732 | 0.000123* |
| | 1000 | — | — | 0.00359 | — |
| GabonAg1000G_1A17 | 1 | 6.57 | 2.77 | 3.88 | — |
| | 10 | 5.3 | 9.29 | 0.836 | 0.955 |
| | 100 | — | — | 0.847* | 0.977 |
| | 1000 | — | — | 0.74 | — |
| HolsteinFriesian_1M13 | 1 | 0.055 | 0.086 | 0.0503 | — |
| | 10 | 0.0563 | 0.0553 | 0.0438 | 0.0715 |
| | 100 | — | — | 0.0564* | 0.117 |
| | 1000 | — | — | 0.0617 | — |
| SinglePopSMCpp_1W22 | 1 | 0.208 | 0.547 | 0.123 | — |
| | 10 | 0.198 | 0.121 | 0.108* | 0.167 |
| | 100 | — | — | 0.109* | 0.161 |
| | 1000 | — | — | 0.11 | — |
| SouthMiddleAtlas_1D17 | 1 | 0.241 | 0.152 | 0.157 | — |
| | 10 | 0.237 | 0.164 | 0.163 | 0.555 |
| | 100 | — | — | 0.127* | 0.388 |
| | 1000 | — | — | 0.174 | — |
| TwoSpecies_2L11 | 1 | 0.0122 | 0.044 | 0.0154 | — |
| | 10 | 0.0114 | 0.0184 | 0.0112 | 0.154 |
| | 100 | — | — | 0.0176* | 0.0862 |
| | 1000 | — | — | 0.0128 | — |
| Zigzag_1S14 | 1 | 0.0265 | 0.0642 | 0.157 | — |
| | 10 | 0.0337 | 0.449 | 0.0118 | 0.0235 |
| | 100 | — | — | 0.0132* | 0.0324 |
| | 1000 | — | — | 0.014 | — |

Although RMSE is a natural error measure, it does not necessarily paint a complete picture, since portions of the size history function may be effectively inestimable by any method due to the coalescent sampling process. This can happen, for example, when estimating very recent population history in an exponentially growing population, or very ancient history in a population that has undergone a bottleneck. In both cases, lack of coalescent events renders these time periods “invisible” to coalescent-based demographic inference methods (Terhorst and Yun S Song, 2015; Baharian and Gravel, 2018). In these time periods, error represents algorithm bias only, since there is minimal signal to guide the estimator. An example of this phenomenon is shown in Figure 3: in panel (a), phlash has very large error compared to smc++ and msmc2, because the latter two methods assume that the size history is flat until $10^4$–$10^5$ generations ago, which happens to be true for this particular demographic model. However, since this model has a large effective population size until $10^5$ generations ago, the probability of coalescence during this period is low (Figure 3b).

Figure 3:

Root mean-squared versus total variation error for the African3Epoch_S16 demography when n = 1. phlash has large mean-squared error relative to msmc2 and smc++ (a), but it mainly occurs at very recent times, when there is low density of coalescence. Total variation error (b–c) accounts for this by measuring the distance between the true and inferred density functions.

Since, ultimately, size history inference is a density estimation problem, a natural alternative measure is the total variation distance between inferred and true demographic models. This is defined as

| (11) |

where is the cumulative hazard rate of coalescence up to time , is the estimate, and is the truth. An example of the integrands is shown in Figure 3(c); phlash in fact has lowest TV error for this example, despite possessing the largest mean-squared error.
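As a numerical illustration, the sketch below computes a total variation distance between two pairwise coalescence-time densities, each written as a hazard rate multiplied by the exponential of minus its cumulative hazard. The exact form and normalization of (11) are not reproduced here, so the factor of one half, the trapezoid-rule integration, the truncation at `t_max`, and the function names are all assumptions; time is taken to be in units where the hazards are of order one.

```python
import math

def coalescent_density(hazard, grid):
    """Density hazard(t) * exp(-H(t)) on `grid`, with H the cumulative hazard."""
    dens, cum = [], 0.0
    for i, t in enumerate(grid):
        if i > 0:
            # Trapezoid-rule update of the cumulative hazard.
            cum += 0.5 * (hazard(grid[i - 1]) + hazard(t)) * (t - grid[i - 1])
        dens.append(hazard(t) * math.exp(-cum))
    return dens

def total_variation(hazard_a, hazard_b, t_max=40.0, num=40001):
    """Half the integral of |f_a - f_b| over [0, t_max], by the trapezoid rule."""
    grid = [t_max * i / (num - 1) for i in range(num)]
    fa = coalescent_density(hazard_a, grid)
    fb = coalescent_density(hazard_b, grid)
    diff = [abs(a - b) for a, b in zip(fa, fb)]
    integral = sum(0.5 * (diff[i - 1] + diff[i]) * (grid[i] - grid[i - 1])
                   for i in range(1, num))
    return 0.5 * integral

# Two constant-size histories whose coalescence hazards differ by a factor of two.
tv = total_variation(lambda t: 1.0, lambda t: 2.0)
```

For these two constant hazards the coalescence times are exponential with rates 1 and 2, and the distance can be checked by hand: the densities cross at $t = \ln 2$, giving a total variation distance of $1/4$.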

Finally, I considered an additional error metric derived from the fact that the coalescent intensity function actually indexes an entire family of distributions, since it also gives the time to first coalescence in a sample of size , with hazard rate of coalescence . Define the “scaled” total variation distance

and then

| (12) |

which is the total variation distance between the estimate and the truth, averaged over their implied first-coalescence distributions, in diploid samples of size . Note that $\mathrm{TV}_1 = \mathrm{TV}$ as defined in equation (11). The idea of $\mathrm{TV}_n$ is to measure how increasing the sample size improves estimation accuracy in the recent past. In the results below I chose , where is the simulated sample size, though this choice is somewhat arbitrary.
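The two metrics can be illustrated numerically. The following is a minimal sketch, not the paper's implementation: it assumes that total variation is half the $L^1$ distance between coalescence-time densities of the form $c\,\eta(t)\exp(-c\int_0^t \eta)$, where $c = \binom{k}{2}$ is the hazard multiplier for the first coalescence among $k$ lineages, and it averages over a user-supplied set of $k$ values because the exact averaging set of equation (12) is not reproduced here. The function names and the time grid are hypothetical.

```python
import math
import numpy as np

def coalescence_density(eta, t, k=2):
    """Density of the first coalescence time among k lineages on the grid t.

    Assumes eta[i] is the pairwise coalescent intensity at time t[i].
    """
    hazard = math.comb(k, 2) * np.asarray(eta, dtype=float)
    # Cumulative hazard by the trapezoid rule, starting from zero at t[0].
    steps = 0.5 * (hazard[1:] + hazard[:-1]) * np.diff(t)
    cum_hazard = np.concatenate([[0.0], np.cumsum(steps)])
    return hazard * np.exp(-cum_hazard)

def tv_distance(eta_hat, eta_true, t, k=2):
    """Half the L1 distance between the two implied densities (assumed form)."""
    diff = np.abs(coalescence_density(eta_hat, t, k)
                  - coalescence_density(eta_true, t, k))
    return float(0.5 * np.sum(0.5 * (diff[1:] + diff[:-1]) * np.diff(t)))

def averaged_tv(eta_hat, eta_true, t, ks):
    """Average of the scaled distances over a chosen set of lineage counts."""
    return float(np.mean([tv_distance(eta_hat, eta_true, t, k) for k in ks]))

# Toy check: constant intensities 1 and 2 give exponential densities,
# for which the total variation distance is exactly 1/4.
t = np.linspace(0.0, 40.0, 40001)
eta_true = np.ones_like(t)
eta_hat = 2.0 * np.ones_like(t)
tv = tv_distance(eta_hat, eta_true, t)
```

Because the distance is computed between densities rather than between size histories, a large discrepancy in a period with almost no coalescence mass contributes very little, which is the behavior described for Figure 3.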

Results for $\mathrm{TV}$ and $\mathrm{TV}_n$ are shown in Tables 2 and 3. When considering $\mathrm{TV}$ error, phlash was most accurate two-thirds of the time (32 of 48 scenarios), and 75% of the time (36 of 48) when considering $\mathrm{TV}_n$. A few other aspects are worth noting. It can be seen that error does not always decrease with increasing sample size $n$, though increasing $n$ does tend to shrink the confidence intervals. Some possible causes and solutions are discussed in Section 5. The same was observed for $\mathrm{TV}_n$, though we should not necessarily expect it to decrease with $n$ since, by (12), it places greater emphasis on accuracy in the very recent past as $n$ grows. Finally, it must be noted that many of the models in the stdpopsim catalog were in fact estimated using a psmc-type model, so in that sense the catalog is enriched for members of the simplified model spaces considered by these programs. Hence there is liable to be a small, intangible bias against nonparametric methods in these benchmarks.

Table 2:

Total-variation error for simulated data. Formatting is the same as in Table 1.

| Model | n | SMC++ | MSMC2 | PHLASH | FITCOAL |
|---|---|---|---|---|---|
| Africa_1T12 | 1 | 0.0887 | 0.052 | 0.0408* | — |
| | 10 | 0.107 | 0.0476 | 0.0375* | 0.066 |
| | 100 | — | — | 0.0369* | 0.0661 |
| | 1000 | — | — | 0.0257 | — |
| African3Epoch_1H18 | 1 | 0.113 | 0.0874 | 0.0832 | — |
| | 10 | 0.139 | 0.0578* | 0.065 | 0.102 |
| | 100 | — | — | 0.0514 | 0.0306 |
| | 1000 | — | — | 0.0448 | — |
| African3Epoch_1S16 | 1 | 0.548 | 0.21 | 0.0886* | — |
| | 10 | 0.563 | 0.199 | 0.0545 | 0.0132* |
| | 100 | — | — | 0.0452 | 0.0106* |
| | 1000 | — | — | 0.063 | — |
| AmericanAdmixture_4B11 | 1 | 0.121 | 0.0743 | 0.0747 | — |
| | 10 | 0.165 | 0.0649* | 0.118 | 0.188 |
| | 100 | — | — | 0.151* | 0.196 |
| | 1000 | — | — | 0.153 | — |
| BonoboGhost_4K19 | 1 | 0.307 | 0.159 | 0.152 | — |
| | 10 | 0.279 | 0.155 | 0.111* | 0.629 |
| | 100 | — | — | 0.139* | 0.239 |
| | 1000 | — | — | 0.16 | — |
| Constant | 1 | 0.0533 | 0.0245 | 0.0232 | — |
| | 10 | 0.0549 | 0.0218 | 0.0179 | 0.000319* |
| | 100 | — | — | 0.0159 | 0.000454* |
| | 1000 | — | — | 0.0122 | — |
| GabonAg1000G_1A17 | 1 | 0.527 | 0.157 | 0.0423* | — |
| | 10 | 0.524 | 0.156 | 0.0267 | 0.00424 |
| | 100 | — | — | 0.0326 | 0.00452* |
| | 1000 | — | — | 0.0277 | — |
| HolsteinFriesian_1M13 | 1 | 0.15 | 0.125 | 0.0922* | — |
| | 10 | 0.185 | 0.096 | 0.0977 | 0.39 |
| | 100 | — | — | 0.0883* | 0.444 |
| | 1000 | — | — | 0.0867 | — |
| SinglePopSMCpp_1W22 | 1 | 0.245 | 0.0953 | 0.0807 | — |
| | 10 | 0.249 | 0.0935 | 0.0801 | 0.113 |
| | 100 | — | — | 0.0798 | 0.0961 |
| | 1000 | — | — | 0.0803 | — |
| SouthMiddleAtlas_1D17 | 1 | 0.0572 | 0.0491 | 0.0487 | — |
| | 10 | 0.0621 | 0.0425 | 0.0504 | 0.108 |
| | 100 | — | — | 0.0365* | 0.0816 |
| | 1000 | — | — | 0.0423 | — |
| TwoSpecies_2L11 | 1 | 0.0267 | 0.0147* | 0.0314 | — |
| | 10 | 0.0249 | 0.0132 | 0.0342 | 0.172 |
| | 100 | — | — | 0.0296* | 0.138 |
| | 1000 | — | — | 0.0224 | — |
| Zigzag_1S14 | 1 | 0.142 | 0.0666* | 0.0899 | — |
| | 10 | 0.136 | 0.0667* | 0.0862 | 0.103 |
| | 100 | — | — | 0.0688* | 0.143 |
| | 1000 | — | — | 0.0695 | — |

Table 3:

$\mathrm{TV}_n$ error for simulated data. See the main text for a description of this metric. Formatting is the same as in Table 1.

| Model | n | SMC++ | MSMC2 | PHLASH | FITCOAL |
|---|---|---|---|---|---|
| Africa_1T12 | 1 | 0.0887 | 0.052 | 0.0408* | — |
| | 10 | 0.245 | 0.0965* | 0.109 | 0.44 |
| | 100 | — | — | 0.268* | 0.856 |
| | 1000 | — | — | 0.138 | — |
| African3Epoch_1H18 | 1 | 0.113 | 0.0874 | 0.0832 | — |
| | 10 | 0.264 | 0.228 | 0.216 | 0.214 |
| | 100 | — | — | 0.0572 | 0.0836 |
| | 1000 | — | — | 0.0291 | — |
| African3Epoch_1S16 | 1 | 0.548 | 0.21 | 0.0886* | — |
| | 10 | 0.124 | 0.229 | 0.0849* | 0.22 |
| | 100 | — | — | 0.042* | 0.429 |
| | 1000 | — | — | 0.0271 | — |
| AmericanAdmixture_4B11 | 1 | 0.121 | 0.0743 | 0.0747 | — |
| | 10 | 0.318 | 0.145* | 0.542 | 0.468 |
| | 100 | — | — | 0.156* | 0.631 |
| | 1000 | — | — | 0.045 | — |
| BonoboGhost_4K19 | 1 | 0.307 | 0.159 | 0.152 | — |
| | 10 | 0.136 | 0.124 | 0.0715* | 0.193 |
| | 100 | — | — | 0.0292* | 0.441 |
| | 1000 | — | — | 0.0196 | — |
| Constant | 1 | 0.0533 | 0.0245 | 0.0232 | — |
| | 10 | 0.0792 | 0.0302 | 0.0289 | 0.000319* |
| | 100 | — | — | 0.0137 | 0.000454* |
| | 1000 | — | — | 0.0257 | — |
| GabonAg1000G_1A17 | 1 | 0.527 | 0.157 | 0.0423* | — |
| | 10 | 0.36 | 0.405 | 0.171 | 0.167* |
| | 100 | — | — | 0.309* | 0.363 |
| | 1000 | — | — | 0.457 | — |
| HolsteinFriesian_1M13 | 1 | 0.15 | 0.125 | 0.0922* | — |
| | 10 | 0.453 | 0.643 | 0.514 | 0.89 |
| | 100 | — | — | 0.497* | 0.978 |
| | 1000 | — | — | 0.507 | — |
| SinglePopSMCpp_1W22 | 1 | 0.245 | 0.0953 | 0.0807 | — |
| | 10 | 0.207 | 0.152 | 0.0843* | 0.185 |
| | 100 | — | — | 0.204* | 0.422 |
| | 1000 | — | — | 0.0115 | — |
| SouthMiddleAtlas_1D17 | 1 | 0.0572 | 0.0491 | 0.0487 | — |
| | 10 | 0.0911 | 0.139 | 0.118 | 0.243 |
| | 100 | — | — | 0.0261* | 0.315 |
| | 1000 | — | — | 0.0183 | — |
| TwoSpecies_2L11 | 1 | 0.0267 | 0.0147* | 0.0314 | — |
| | 10 | 0.0616 | 0.0307 | 0.0948 | 0.415 |
| | 100 | — | — | 0.0487* | 0.377 |
| | 1000 | — | — | 0.104 | — |
| Zigzag_1S14 | 1 | 0.142 | 0.0666* | 0.0899 | — |
| | 10 | 0.278 | 0.22 | 0.175* | 0.393 |
| | 100 | — | — | 0.105* | 0.674 |
| | 1000 | — | — | 0.00889 | — |

3.2. Running time and memory consumption

Next, I examined the computational resources required by each method when analyzing the simulated datasets mentioned above. The peak amount of memory used, as well as total CPU time, were recorded for each simulation run. Because the datasets are simulated from organisms with differing genome lengths, I normalized both of these measures by genome length (measured in gigabase-pairs) to make them comparable across runs, and then averaged the data from all runs together for each method and sample size.
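The normalization described above amounts to a per-gigabase rescaling followed by a grouped mean. A minimal sketch follows; the record layout and all numbers are made up for illustration and do not come from the benchmarks.

```python
from collections import defaultdict

# Hypothetical run records: (method, sample size n, genome length in bp,
# CPU seconds, peak memory in bytes). Values are illustrative only.
runs = [
    ("phlash", 1, 3.0e9, 3600.0, 6.0e9),
    ("phlash", 1, 1.0e9, 1500.0, 2.5e9),
    ("msmc2", 1, 3.0e9, 5400.0, 30.0e9),
]

def summarize(runs):
    """Average CPU minutes and memory (GB), both per gigabase, by (method, n)."""
    groups = defaultdict(list)
    for method, n, genome_bp, cpu_seconds, mem_bytes in runs:
        gbp = genome_bp / 1e9
        groups[(method, n)].append((cpu_seconds / 60.0 / gbp, mem_bytes / 1e9 / gbp))
    return {
        key: (
            sum(cpu for cpu, _ in values) / len(values),
            sum(mem for _, mem in values) / len(values),
        )
        for key, values in groups.items()
    }

summary = summarize(runs)
```

Normalizing before averaging keeps species with long genomes from dominating the per-method means.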

The results are shown in Figure 4(b)–(c). For analyzing a single diploid sample, $n=1$, all methods required a similar amount of CPU time, around 20–30 minutes per gigabase; msmc2 required the most memory, and phlash required the least. For $n=10$, the only sample size where it was possible to run all four methods, FitCoal was the most efficient in terms of time and memory usage, which was expected since it only analyzes the frequency spectrum. Of the HMM-based approaches, phlash required significantly less CPU time and memory than smc++ and, especially, msmc2. Increasing the sample size to $n=100$ caused the running time of FitCoal to increase roughly tenfold while its memory consumption remained low, whereas for phlash, CPU and memory demands increased only moderately. Finally, for $n=1000$, no method except phlash was able to run given the allotted computational resources, and analyzing one thousand diploid samples using phlash required roughly the same amount of memory, and less CPU time, than analyzing ten samples using msmc2.

3.3. Accuracy of the parallel approximation

As mentioned above, the key feature that enables phlash to analyze large datasets quickly is that it runs in parallel on many GPU cores. Although it is somewhat implied by the simulation results of the preceding section, I directly verified that the parallel approximation is accurate and does not introduce too much numerical error into the computations. Specifically, for each of the simulation runs, I compared the results of evaluating the log-likelihood using a) the exact (i.e., sequential) forward algorithm and b) the parallel algorithm, for different settings of the overlap parameter (cf. Section 2.3.2). Smaller values of the overlap parameter lead to greater computational gains, since there is less sequence overlap, but more approximation error, since the Markov chain does not have as much time to forget its initial distribution.

Results are shown in Figure 5(a). When the overlap is zero, the method simply breaks the chromosome into non-overlapping chunks and analyzes them in parallel; as expected, relative error is highest at this setting. Increasing the overlap length from up to successively diminished the numerical error. At the relative error is about $10^{-8}$, roughly the smallest difference that can be represented using single-precision floating point. Since the GPU implementation also runs in single precision, I chose for all the results reported in the paper. Finally, I note the presence of a few outliers in Figure 5(a), which correspond to organisms and demographic models where the approximation error diminishes less rapidly than average. Generally this occurs in inbred populations, where long runs of homozygosity appear to lessen the rate of forgetting in the coalescent Markov chain. Indeed, the model with the highest relative error was HolsteinFriesian_1M13, which features a very recent population crash. For species with similar characteristics, users may want to consider increasing the overlap parameter.
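The trade-off between overlap and approximation error can be reproduced on a toy hidden Markov model. The sketch below is an illustration under stated assumptions, not the phlash implementation: the three-state chain, the emission matrix, the chunk length, and the rule of warming up each chunk from the stationary distribution on the preceding `overlap` positions are all hypothetical choices. The chunks are evaluated in a plain loop here, but each one depends only on its own window of data and could be processed independently.

```python
import numpy as np

def forward_loglik(A, B, pi, obs, start, stop, warmup=0):
    """Log-likelihood contribution of obs[start:stop].

    The filter is initialized from pi at position max(0, start - warmup) and
    run forward; contributions from the warm-up positions are discarded.
    """
    begin = max(0, start - warmup)
    pred = pi                      # predictive distribution at position `begin`
    total = 0.0
    for pos in range(begin, stop):
        joint = pred * B[:, obs[pos]]
        norm = joint.sum()
        if pos >= start:
            total += np.log(norm)
        pred = (joint / norm) @ A  # propagate the filter one step
    return total

def chunked_loglik(A, B, pi, obs, chunk, overlap):
    """Sum of per-chunk contributions; each chunk is computed independently."""
    return sum(
        forward_loglik(A, B, pi, obs, s, min(s + chunk, len(obs)), overlap)
        for s in range(0, len(obs), chunk)
    )

rng = np.random.default_rng(0)
M, L = 3, 2000
A = 0.5 * np.eye(M) + (0.5 / M) * np.ones((M, M))   # symmetric, so pi is stationary
B = np.array([[0.9, 0.1], [0.5, 0.5], [0.1, 0.9]])  # B[state, symbol]
pi = np.full(M, 1.0 / M)

# Simulate a hidden path and observations from the toy model.
states = [rng.choice(M, p=pi)]
for _ in range(L - 1):
    states.append(rng.choice(M, p=A[states[-1]]))
obs = np.array([rng.choice(2, p=B[s]) for s in states])

exact = forward_loglik(A, B, pi, obs, 0, L)
errors = {
    k: abs(chunked_loglik(A, B, pi, obs, 200, k) - exact) / abs(exact)
    for k in (0, 10, 100)
}
```

With zero overlap every chunk restarts from the stationary distribution, so the error is largest; with a long enough warm-up the filter has forgotten its initialization and the chunked sum agrees with the sequential result to within floating-point error.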

Figure 5:

Accuracy of likelihood approximations. Panel (a) is a box-whisker plot of the relative error, defined as , for various settings of the overlap parameter , across all simulations. Panels (b)–(e) compare the results of model fitting using the composite likelihood approximation versus independent likelihoods (see text for description).

3.4. Calibration of the composite likelihood posterior

Finally, I studied the extent to which the composite likelihood approximation used in phlash affects the resulting posterior distribution. In the frequentist setting, it is well-known how to adjust the Fisher information matrix in order to obtain asymptotically valid confidence intervals for the maximum composite likelihood estimator. However, the theory of Bayesian inference using composite likelihood is still in its early stages, and it is not as obvious how to adjust the posterior distribution of a composite Bayesian model in order to ensure proper calibration (Pauli, Racugno, and Ventura, 2011; Ribatet, Cooley, and Davison, 2012).

To assess the extent to which the credible intervals in phlash are deflated, I compared the estimation results on several real human data examples under two scenarios. The first scenario, “composite”, is the default setting for phlash and utilizes both frequency spectrum and linkage information as described in Section 2.2. For the second scenario, “exact”, I separated the data into two independent and roughly equally-sized subsets consisting of chromosomes 1–8 and 9–22. On the first eight chromosomes, I extracted data from a single diploid member of the population, and for the remaining ones, I estimated a site frequency spectrum at approximately unlinked sites by considering only 100bp segments at regular intervals of 25kbp. For model fitting, the psmc and SFS likelihoods were evaluated separately on these respective sources of information, leading to a model where the two likelihood terms are approximately independent of each other, and the constituent entries of the SFS are also approximately independent.
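As an illustration of the thinning step, the sketch below generates 100bp windows at 25kbp spacing for one chromosome. The function name, the half-open coordinate convention, and the choice to start at position zero are assumptions made for the example.

```python
def thinned_windows(chrom_length, window=100, spacing=25_000):
    """Half-open [start, end) windows of `window` bp, one every `spacing` bp."""
    return [
        (start, start + window)
        for start in range(0, chrom_length - window + 1, spacing)
    ]

# A hypothetical 1 Mbp contig yields 40 windows covering 0.4% of its sites.
windows = thinned_windows(1_000_000)
fraction_kept = sum(end - start for start, end in windows) / 1_000_000
```

Keeping only a small fraction of widely spaced sites is what makes the entries of the resulting frequency spectrum approximately independent, at the cost of discarding most of the data on those chromosomes.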

The results of these analyses are shown in Figure 5(b)–(e). There is fairly good agreement between the two scenarios, particularly in the ancient past, a fact which will be revisited in Section 4.4. The “composite” scenario tends to produce narrower credible intervals in the very recent past, though the differences do not appear extreme. In general, phlash estimates using the default model seem to be relatively well calibrated, and users who desire stricter calibration can employ the downsampling scheme outlined above.

3.5. Inferring recombination rates

phlash returns a full posterior distribution over recombination rate. Although it is not the main focus, I examined the accuracy of this posterior distribution for each of the simulated datasets described above. Results are shown in Figure 6. For reasons that are currently unclear to me, there is a slight downward bias across all models. However, in general, the estimates are fairly accurate, with a posterior median that is within 5–15% of the ground truth. Increasing the sample size sharpens the posterior distribution to an extent, with the greatest jump occurring at . Accuracy was worse for models 6 and, especially, 10; again I do not currently understand the cause, though it does not appear to adversely impact estimation accuracy (cf. Table 1 and Figures D.1 and D.4). Finally, I note that phlash currently assumes a single, uniform recombination rate across all contigs, whereas the rate is chromosome-specific in the stdpopsim catalog. Allowing the rate to vary by chromosome could be an avenue for future work.

Figure 6:

Posterior distribution of the recombination rate for each of the models shown in Table D.1.

4. Applications

Having verified that phlash performed well on simulated examples, I turned to analyzing real data. The analyses in this section are based on a recently published unified genomes dataset generated by Wohns et al. (2022). The dataset contains 3,601 modern samples, as well as several ancient samples, obtained from a variety of sources (Meyer et al., 2012; Prüfer et al., 2014; The 1000 Genomes Project Consortium, 2015; Mallick et al., 2016; Bergström, McCarthy, et al., 2020; Mafessoni et al., 2020). The samples are organized into 214 different subpopulations; however, there is some duplication amongst them. For example, HGDP, SGDP, and 1000 Genomes all contain samples from the Yoruba population. After merging samples from duplicated populations, 159 populations remained; all of the descriptors below refer to the data after merging. Except where noted, all of the estimates assume a human mutation rate of $1.29\times10^{-8}$ per base pair per generation and a generation time of 29 years.

4.1. Inferring demographic history

The main use of phlash is to infer population history. Figure 7(a) shows the results of running phlash on all 159 populations in the dataset: each black line is the posterior median for one of the populations. For clarity, I also grouped the populations into six geographical super-populations, plus one for the ancient samples, and ran phlash on the combined data (Figure 7b). The estimates recover some of the known motifs of human evolution, including shared ancestry in the ancient past, a deep divergence between the African and non-African lineages, recent rapid expansion, divergence of the Oceania super-population from a Eurasian ancestral population (Wollstein et al., 2010), and the gradual extinction of all archaic populations.

Figure 7:

Results of running phlash on 3,609 genomes. Panel (a): estimates for all populations. Vertical shaded region is 813–930kya, see Section 4.4. (b) Estimates after grouping by super-population. (c-d): Estimates and 95% credible intervals for three (c) modern and (d) archaic subpopulations.

phlash outputs more than just point estimates: Figure 7(c) visualizes the complete posterior distribution for the Han, Yoruba, and Papuan subpopulations, as does Figure 7(d) for several archaic genomes. The width of the credible bands can address more nuanced questions about how the populations evolved: they show, for example, increased uncertainty in the ancient as well as very recent past. Another interesting feature concerns population divergence. Panel (a) shows the African/non-African divergence occurring approximately 6,500 generations ago, which is earlier than most published estimates (e.g., López, Van Dorp, and Hellenthal, 2015). However, panel (b) reminds us that the estimates are noisy; a reasonable lower bound on the divergence time could be when the Yoruba and Han/Papuan credible bands diverge, which results in a more plausible estimate of ~87kya (~3k generations ago, assuming a generation time of 29 years).

4.2. Detecting admixture and population structure

The preceding observation suggests a non-parametric technique for detecting population structure in pairs of populations: look for the earliest time when the effective population sizes are significantly different from each other. Formally, given two size history functions and , a plot of the “ratio estimator” over time should roughly reveal when the two populations diverged, assuming of course that they experienced distinct changes in effective population size after the divergence. This estimator bears some resemblance to the so-called “cross-coalescent rate” (CCR) estimator, defined by Schiffels and Durbin (2014) as the ratio , where denotes the instantaneous rate of coalescence between a haplotype sampled from population 1 and one sampled from population 2. CCR curves have been proposed as a non-parametric method of detecting population divergence and admixture (Spence et al., 2018; Schiffels and K. Wang, 2020). However, there is one important difference: estimating requires accurately phased data, which may be difficult to obtain in practice, whereas the ratio estimator does not.
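A minimal sketch of how such a ratio curve could be summarized from posterior output is given below. It assumes that the ratio estimator is the pointwise ratio of the two size histories, that posterior draws for the two populations are independent and stored as arrays of shape (draws, time points), and that divergence is read off as the most ancient time at which the credible band excludes 1. The function names and the synthetic data are hypothetical.

```python
import numpy as np

def ratio_band(samples_1, samples_2, level=0.95):
    """Pointwise credible band and median of the ratio of two size histories."""
    ratio = samples_1 / samples_2
    lo, med, hi = np.quantile(
        ratio, [(1 - level) / 2, 0.5, (1 + level) / 2], axis=0
    )
    return lo, med, hi

def most_ancient_difference(times, lo, hi):
    """Oldest grid time at which the band excludes 1, or None if it never does."""
    differs = (lo > 1.0) | (hi < 1.0)
    return float(times[differs].max()) if differs.any() else None

# Synthetic example: identical histories before 3,000 generations ago, and a
# five-fold smaller second population more recently than that.
rng = np.random.default_rng(1)
times = np.logspace(1, 5, 50)
truth_1 = np.full(times.shape, 1e4)
truth_2 = np.where(times < 3000, 2e3, 1e4)
samples_1 = truth_1 * np.exp(0.05 * rng.standard_normal((500, times.size)))
samples_2 = truth_2 * np.exp(0.05 * rng.standard_normal((500, times.size)))

lo, med, hi = ratio_band(samples_1, samples_2)
t_div = most_ancient_difference(times, lo, hi)
```

As the text notes, this only dates a split when the two populations experienced different size changes afterwards; two diverged populations with identical size histories would be invisible to it.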

To explore this idea further, I simulated data for the Yoruba, Han, and European populations according to a published out-of-Africa model (Gutenkunst et al., 2009), and computed the CCR and ratio curves. Then, I estimated the same sets of curves using real data for these three populations. Results are shown in Figure 8. (Although CCR is usually constrained to lie in $[0, 1]$ by jointly estimating the within- and cross-population coalescence rates, phlash currently lacks this capability, so the CCR curves shown are based on independent estimates and can exceed 1.) In this model, the basal CEU/CHB population diverges from YRI around 6,500 generations ago, with residual gene flow until the present. The shaded region in panels (a) and (b) highlights the period between the YRI/basal divergence and the divergence of CEU and CHB. In both the real and simulated data, the ratio estimator has a pronounced spike around this time, which simply reflects the fact that the divergent population experienced a population crash immediately after the split. The CCR also shows the expected transition between 0 and 1 around this time, although it is perhaps a bit harder to discern. Overall, both estimators can be useful in practice. When a large or ancient split has occurred, the ratio estimator can be used to date it with decent accuracy, while the CCR curve will be more sensitive when the data can be confidently phased.

Figure 8:

Posterior inference of population structure. (a) Posterior distribution (median + 95% credible interval) of cross-coalescent rate between the Yoruba (YRI) and Han (CHB) populations. (b) Posterior distribution of non-parametric ratio estimator. (c) Three-population out-of-Africa model used to generate simulated data.

4.3. Analyzing ancient DNA

phlash can be used on ancient samples without modification. In this case, the time units should be interpreted as “generations before the lifetime of the sample” instead of “generations before present”. Users should keep in mind that ancient DNA variant calls tend to have a higher error rate, which has the potential to corrupt size history inferences. For example, when I first ran phlash on the Neanderthal and Denisovan genomes in the Wohns et al. data, it estimated a sharp, counterintuitive increase in effective population size across all ancient populations roughly 100 generations before sample lifetime (Figure D.5). I then performed some exploratory analysis of the Wohns et al. call set, and found a roughly four-fold enrichment for C→T (or complementary G→A) mutations compared to other mutation types in the ancient samples (Figure 9a). This motif is known to arise in ancient DNA through a chemical process known as deamination (Dabney, Meyer, and Pääbo, 2013). Hence, the introduction of spurious variants can cause phlash to inflate estimates of effective population size in the recent past.

Figure 9:

Analyzing ancient DNA using phlash. a) Histogram of the different mutation types in the ancient samples. Enrichment for mutations are likely the result of deamination, as are mutations after accounting for uncertainty in the ancestral allele. mutations are likely the result of deaminated bases on the complementary strand. b) Size history estimates for the Vindija Neanderthal before and after filtering out probable deaminated bases.

To correct for this effect, I re-ran phlash on a filtered version of the Wohns et al. call set which excluded all C→T and G→A variants that were private to the ancient populations. Filtered and unfiltered results are shown for the Vindija population in Figure 9b and for all ancient populations in Figure D.5. Filtering mostly removes the sudden, recent increase in effective population size, although evidence of it still persists to an extent in the credible intervals. It is possible that this simplistic filter does not detect other types of errors that can arise in aDNA. Users who want to study ancient samples using phlash, or other demographic inference tools, will want to pay careful attention to issues of data quality and filtering (see Orlando et al., 2021 for a review of best practices).
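A filter of this kind can be sketched in a few lines. The example assumes that the deamination signature consists of C→T substitutions and their G→A complements, and it uses a hypothetical record layout (a dict with ancestral allele, derived allele, and the set of populations carrying the derived allele); it is not the code used for the analysis.

```python
# Substitution types treated as probable deamination damage (assumption:
# C>T and its reverse-strand complement G>A).
DEAMINATION_TYPES = {("C", "T"), ("G", "A")}

def drop_probable_deamination(variants, ancient_pops):
    """Remove damage-type variants whose carriers are all ancient populations."""
    ancient = set(ancient_pops)
    kept = []
    for variant in variants:
        damage_type = (variant["ancestral"], variant["derived"]) in DEAMINATION_TYPES
        private_to_ancient = set(variant["carriers"]) <= ancient
        if damage_type and private_to_ancient:
            continue
        kept.append(variant)
    return kept

# Hypothetical records; population labels are placeholders.
variants = [
    {"pos": 101, "ancestral": "C", "derived": "T", "carriers": {"Vindija"}},
    {"pos": 202, "ancestral": "C", "derived": "T", "carriers": {"Vindija", "Yoruba"}},
    {"pos": 303, "ancestral": "A", "derived": "C", "carriers": {"Vindija"}},
    {"pos": 404, "ancestral": "G", "derived": "A", "carriers": {"Denisovan"}},
]
filtered = drop_probable_deamination(variants, ancient_pops={"Vindija", "Denisovan"})
```

Restricting the filter to variants private to ancient samples retains damage-type substitutions that are corroborated by modern genomes, which are less likely to be artifacts.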

4.4. Detecting a population bottleneck

Finally, I studied phlash’s ability to infer a sharp bottleneck in human data. Hu et al. (2023) have recently claimed that the population ancestral to all modern humans experienced an extreme bottleneck during the period 930–813kya, with the effective population size reduced to roughly 1,280 breeding individuals, or ~1.3% of the ancestral size. The findings are based on fitting a novel demographic inference procedure, FitCoal, to frequency spectrum data from various African and non-African subpopulations in the 1000 Genomes and HGDP-CEPH panels. Curiously, although their method detected a bottleneck in African populations, it failed to do so in any of the 40 non-African populations surveyed, despite all extant populations having descended from a common basal population during the period in question (Bergström, Stringer, et al., 2021). Hu et al. attribute this discrepancy to the fact that the out-of-Africa dispersal hinders the chance of discovering an ancient severe bottleneck, and suggest that in suitably corrected data, it is detectable in all modern populations. Finally, they assert that psmc, smc++, and relate (Speidel et al., 2019) all underestimated the severity of the ancient bottleneck.

I investigated whether phlash, which makes fewer parametric modeling assumptions and provides uncertainty quantification, could shed additional light on the possibility of a human near-extinction event. First, I separately fit size history estimates to all of the distinct subpopulations in the Wohns et al. dataset, for a total of 159 different estimates. Posterior medians for each subpopulation are plotted in Figure 7(a), with the putative bottleneck interval shaded in gray (assuming a generation time of 24 years, as in Hu et al.). A posteriori, the data show little sign of a bottleneck, with all populations tightly clustered around during the suggested interval. However, these estimates merely reflect the central tendency of each posterior. Could stronger evidence emerge by examining the full distribution? I next considered the distribution of the functional

| (13) |

evaluated over all populations. Here, is a cutoff time designed to focus the estimator on ancient times (i.e., before the OOA bottleneck). I conservatively chose generations, i.e. 500kya, which should give the estimator good power to detect a bottleneck in the distant past. A histogram of the posterior median of this functional is plotted in Figure 10(a). Although there is somewhat greater dispersion, there is still little evidence that dipped below during the period in question.

Figure 10:

Searching for signs of an ancient bottleneck in real and simulated human data. (a) Histogram of posterior median of for each of the 159 populations considered. (b-c) The estimated size history of the Yoruba/Han population (black line, estimated from real data) was modified to incorporate a bottleneck. Orange, green, and blue correspond to bottleneck strength respectively. Grey shaded region is years before present, assuming a generation time of 24 years. Dashed lines represent , the population size during the bottleneck, and solid lines represent the corresponding phlash estimates. (d) Mirror plot of posterior density of test statistic for Han and Yoruba. Shaded density curves are its distribution in simulated data; bar plots are a histogram of its distribution in real data.

One potential explanation of these results is that phlash is biased, a possibility already suggested by Hu et al. with regard to the methods mentioned above. I view this as unlikely in light of the simulation results presented above; Figures D.1–D.4 show that phlash is generally quite accurate in the distant past, across a wide range of systems. Nevertheless, to probe further, I then followed Hu et al.’s methodology by simulating data under a pre-estimated model, introducing an artificial bottleneck, and then assessing accuracy. I performed this experiment for two populations, Han and Yoruba, that did and did not experience the OOA bottleneck, respectively. The number of diploid genomes for each population was and .

Using the fitted size histories for these two populations say, and , I introduced bottlenecks of strength , such that the perturbed size history was

for . Results are shown in Figure 10(b)–(c): the black line is the baseline estimate, , and the colored lines are the result of simulating from and then estimating to obtain . Ten replicates were performed for each setting of and , for a total of 60. The bottleneck interval is depicted in shaded gray. The scenario (orange lines) reiterates that phlash has good power to estimate the true underlying size history function. When (green lines), the phlash estimates dip noticeably, though some upward bias is apparent when comparing and (the true level of is shown as a dashed green line). Finally, when (blue lines), the phlash estimates closely track the true size history function, but fail to increase after the bottleneck interval, presumably because the severe bottleneck has forced all lineages to coalesce.
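The perturbation can be sketched as follows. Since the exact formula is not reproduced in this text, the example assumes that a bottleneck of strength α multiplies the fitted size history by α inside the putative interval (813–930kya, converted with a 24-year generation time) and leaves it unchanged elsewhere; the function name and the α values shown are hypothetical.

```python
import numpy as np

def add_bottleneck(times, sizes, alpha,
                   start_years=813_000, end_years=930_000, generation_time=24):
    """Scale `sizes` by `alpha` for times (in generations) inside the interval."""
    t_start = start_years / generation_time   # 33,875 generations
    t_end = end_years / generation_time       # 38,750 generations
    inside = (times >= t_start) & (times <= t_end)
    return np.where(inside, alpha * sizes, sizes)

# Toy baseline: constant size 10,000 on a coarse grid of generations.
times = np.array([1e3, 3.5e4, 5e4])
baseline = np.full(times.shape, 1e4)
perturbed = {alpha: add_bottleneck(times, baseline, alpha) for alpha in (1.0, 0.1, 0.01)}
```

Setting α = 1 returns the baseline unchanged, which gives a matched null scenario against which the bottlenecked simulations can be compared.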

Focusing on the case, which corresponds to the result claimed by Hu et al., we can conclude the following: even though phlash does not perfectly recapitulate the size history for the period , there is a substantial difference in the estimated size history functions between the and cases. In fact, as shown in Figure 10(d), the posterior distributions of the test statistic (13) are effectively disjoint between the two scenarios. From a decision-theoretic standpoint, this implies nearly perfect statistical power to distinguish the null hypothesis from the alternative (e.g., Berger, 2013). In other words, if were true, it would be readily discernible in data, irrespective of any estimation bias, because the test statistic would lie uniquely in the support of one of two disjoint distributions, and conversely for . In fact, this is precisely what I observed: the empirical distribution of the test statistic (Figure 10(d), grey bars) closely matches the scenario for both populations, and barely overlaps with the distribution at all. Thus, at least in the limited set of analyses I have performed here, I find little support for the strong ancient bottleneck hypothesis.

5. Conclusion

In this paper I have presented phlash, a new method for estimating historical effective population size. Using an extensive battery of simulation tests, I showed that phlash tends to be more accurate and efficient than several state-of-the-art methods. Moreover, the posterior distribution returned by phlash has a number of other uses, including uncertainty quantification, detection of population structure, and testing for ancient population bottlenecks. phlash is implemented as an efficient, open source Python package (see Data availability) and features a user-friendly API: almost all of the analyses presented here require but a few lines of code, and took under 60 minutes per population to run. One important caveat is that the implementation currently requires an Nvidia GPU. I hope to extend it to work with AMD (and potentially other) accelerators in the future.

A few other possible extensions and avenues for improvement come to mind. Here I chose a simple prior model (equations 6–8) for the size history, which fixes the time discretization to a logarithmically-spaced grid with random endpoints. A more flexible model would permit the time discretization to be completely arbitrary, which could allow for greater adaptivity. Indeed this was my initial approach, but I found that the particle-based sampling algorithm had difficulty converging, and so discarded it in favor of the simpler model with fewer parameters. Also, as noted in Section 3.1, phlash’s estimation error sometimes increased with larger sample sizes. I believe that this is because the composite likelihood (9) places equal weight on the “PSMC” and “SFS” components, which leads to the SFS component dominating for large $n$, combined with greater variance in the low-frequency SFS entries for large sample sizes. A smarter scheme might be to adaptively weight the two terms, based perhaps on some measure of out-of-sample error, and to employ a binning procedure in the SFS component of the likelihood, as in Bhaskar, Y. X. R. Wang, and Y. S. Song (2015). Finally, phlash currently assumes a single global recombination rate parameter across all analyzed contigs. It could be generalized to learn contig-specific rates or, with considerably more effort, to utilize a fixed (non-learnable) position-specific rate map. Here I do note, however, that estimation quality seems to depend only very weakly on accurate knowledge of the recombination rate, as previously remarked by other authors (Schiffels and Durbin, 2014).

As for extensions, the main technical contribution of the paper, which is a fast way to evaluate the gradient of the psmc log-likelihood function, seems generally useful for population genetic inference. Indeed, the so-called “inverse instantaneous coalescent rate” function, denoted in this paper, has been utilized in a number of previous studies to estimate more complex models than the simple panmictic one considered here (Rodríguez et al., 2018; Arredondo et al., 2021; Boitard et al., 2022; Mazet and Noûs, 2023). Extending these methods to take advantage of a differentiable likelihood function is, technically at least, straightforward. Similarly, certain methods which take as input pre-called identity-by-descent (IBD) tracts (e.g., Al-Asadi et al., 2019) can be re-cast as probabilistic models which depend on an underlying psmc likelihood, and could be generalized to obtain procedures which integrate over all possible IBD scenarios, instead of fixing one of them a priori.

Acknowledgements

I am grateful to Dat Do for providing helpful comments on a draft of the manuscript. This research was supported by NSF grant DMS-2052653 and the National Institute of General Medical Sciences of the NIH under award number R35GM151145. The content is solely the responsibility of the author and does not necessarily represent the official views of the NIH.

A. Fast computation of the score function

This section describes a fast algorithm for computing the score function of the psmc model. The algorithm combines several existing results from the literature. The first of these is Fisher’s identity (Cappé and Moulines, 2005), which is a general expression for the score function of a latent variable model. To state the identity I recall some notation from their paper. Let be a parameter, denote the transition function between states and in a hidden Markov model, and denote the probability of the -th observation when the hidden state at position is . Define , where is a prior on the initial state of the hidden Markov chain, and for . Fisher’s identity applied to the hidden Markov model is then

| (14) |

where the expectation is with respect to the latent states given the observed data . This identity gives the intuitive result that the score function decomposes as a sum of expectations over the pairwise posterior distributions . It is well known that these posterior distributions can be computed recursively using the forward-backward algorithm; indeed, this is essentially the “E”-step of the EM algorithm used for parameter estimation in HMMs (Bishop, 2006). However, this requires storing the results of the forward pass, which costs memory that, as noted above, is prohibitive in the GPU setting.
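For reference, Fisher's identity for a generic hidden Markov model can be written out as below. The notation is assumed for illustration only (hidden states $X_{1:L}$, observations $y_{1:L}$, transition function $q_\theta$, emission probability $g_\theta$, initial distribution $\nu$) and may differ from the symbols used in (14).

```latex
\begin{aligned}
\nabla_\theta \log p_\theta(y_{1:L})
  &= \mathbb{E}_\theta\!\left[ \nabla_\theta \log p_\theta(X_{1:L}, y_{1:L}) \,\middle|\, y_{1:L} \right] \\
  &= \sum_{x} \mathbb{P}_\theta(X_1 = x \mid y_{1:L})\,
       \nabla_\theta \log\!\left[ \nu(x)\, g_\theta(x, y_1) \right] \\
  &\quad + \sum_{k=2}^{L} \sum_{x, x'}
       \mathbb{P}_\theta(X_{k-1} = x,\, X_k = x' \mid y_{1:L})\,
       \nabla_\theta \log\!\left[ q_\theta(x, x')\, g_\theta(x', y_k) \right]
\end{aligned}
```

The second line follows because the complete-data log-likelihood of a hidden Markov model is a sum of one initial term and one term per transition, so only the pairwise posteriors are needed.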

In fact, there is a less well-known variant of the Baum-Welch algorithm which has lower memory but higher computational cost. Jensen (2009) shows how the so-called “linear memory” Baum-Welch algorithm can recursively compute posterior expectations of the form

| (15) |

where is an arbitrary functional, using only a single pass over the data. Observe that (14) has the form (15) for . Therefore, the linear memory Baum-Welch algorithm can also be used to compute the score function. A single run of the algorithm takes time. Hence, to run the standard Baum-Welch algorithm using the linear-memory variant, we would have to compute (15) for for all , at an overall cost of operations, explaining why the algorithm is not widely used in practice.

A.1. A constant memory quadratic-time algorithm

In this section I show how the linear memory Baum-Welch algorithm can be combined with fast matrix-vector multiplication algorithms that have been developed for coalescent hidden Markov models to yield a new algorithm that is simultaneously time- and memory-efficient. The central recursive quantity analyzed by Jensen (2009) is

which is (proportional to) the posterior expectation of evaluated over all hidden state paths ending in . Jensen shows that satisfies the recursion

| (16) |

where is the emission probability of the symbol observed at position conditional on the latent state being , and is the filtering density.

To advance the algorithm one step we have to compute for all . Because of the summation in (16), computing each entry costs FLOPS, for a total cost of . Note also that once is computed, the algorithm no longer makes use of for . Hence the memory requirement is only per computed ; in particular, it does not depend on the sequence length .
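The single-pass idea described above can be illustrated with a minimal pure-Python sketch. It is not the phlash implementation: the function names, the toy model, and the unnormalized form of the recursion are assumptions made for illustration, and a practical version would rescale at each step to avoid underflow. The sketch computes the posterior expectation of a sum of per-transition terms while storing only two vectors of length equal to the number of hidden states, then checks the result against brute-force enumeration of all hidden paths.

```python
from itertools import product


def single_pass_expectation(pi, A, B, y, s):
    """Posterior expectation of sum_k s(x_{k-1}, x_k) given y, in one forward pass.

    pi[i]   : initial state probabilities
    A[i][j] : transition probability from state i to state j
    B[j][o] : probability of emitting symbol o in state j
    s(i, j) : per-transition term of the additive functional
    Only the current alpha and T vectors (one entry per state) are stored.
    """
    M = len(pi)
    alpha = [pi[j] * B[j][y[0]] for j in range(M)]  # unnormalized filter
    T = [0.0] * M  # T[j]: sum over paths ending in j of p(path, y) * functional
    for obs in y[1:]:
        new_alpha = [0.0] * M
        new_T = [0.0] * M
        for j in range(M):
            a_sum = 0.0
            t_sum = 0.0
            for i in range(M):  # one sum over states per entry
                a_sum += alpha[i] * A[i][j]
                t_sum += A[i][j] * (T[i] + alpha[i] * s(i, j))
            new_alpha[j] = B[j][obs] * a_sum
            new_T[j] = B[j][obs] * t_sum
        alpha, T = new_alpha, new_T  # previous step is no longer needed
    return sum(T) / sum(alpha)


def brute_force_expectation(pi, A, B, y, s):
    """Same quantity by enumerating every hidden path (exponential cost)."""
    M, n = len(pi), len(y)
    num = den = 0.0
    for path in product(range(M), repeat=n):
        p = pi[path[0]] * B[path[0]][y[0]]
        f = 0.0
        for k in range(1, n):
            p *= A[path[k - 1]][path[k]] * B[path[k]][y[k]]
            f += s(path[k - 1], path[k])
        num += p * f
        den += p
    return num / den


def count_self_transitions(i, j):
    """Example functional: 1 for each position where the state does not change."""
    return 1.0 if i == j else 0.0


# Toy model with three hidden states and two observable symbols.
pi = [0.5, 0.3, 0.2]
A = [[0.8, 0.1, 0.1], [0.2, 0.7, 0.1], [0.3, 0.3, 0.4]]
B = [[0.9, 0.1], [0.5, 0.5], [0.2, 0.8]]
y = [0, 1, 1, 0, 1]

fast = single_pass_expectation(pi, A, B, y, count_self_transitions)
slow = brute_force_expectation(pi, A, B, y, count_self_transitions)
```

The inner loop over `i` is the summation whose cost the text counts per entry; replacing it with a structured matrix-vector product is what the remainder of this section is about.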

To arrive at a faster algorithm I first observe that (16) can be written more compactly in matrix notation as

| (17) |

where denotes Hadamard (entry-wise) product. Now, the term is a standard matrix-vector multiply costing . However, in the specific case where is the transition matrix of a PSMC-like hidden Markov model, Palamara et al. (2018) show that matrix-vector products of the form can be computed in time. (Note that, although v is a probability vector in their application, the result holds for an arbitrary .) Hence, the cost of computing the first term in (17) can immediately be reduced to .

The second term, , does not necessarily have the structure needed for the linear-time matrix-vector multiply algorithm to work. However, by reparameterizing the transition matrix of the SMC coalescent HMM in a favorable way, I can ensure that it can be computed in time linear in . The next section describes how to do that.
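To make the linear-time product concrete, here is a minimal sketch for one assumed matrix structure: a free main diagonal, entries below the diagonal that are constant down each column, and entries above the diagonal that decay geometrically along each row with a shared sequence of column ratios. This structure and the names `d`, `low`, `sup`, and `r` are assumptions for illustration; the exact form used by Palamara et al. (2018) and in phlash may differ. The product with the transposed matrix can be handled analogously.

```python
def structured_matvec(d, low, sup, r, v):
    """Compute A @ v in linear time for a matrix A with the assumed structure

        A[i][i] = d[i]
        A[i][j] = low[j]                             for i > j
        A[i][j] = sup[i] * r[i+1] * ... * r[j-1]     for i < j

    so that low[j] is constant down column j below the diagonal, sup[i] is
    the first superdiagonal, and r[j] is the ratio of column j+1 to column j
    in every row above the diagonal (r[0] is unused).
    """
    M = len(d)
    out = [0.0] * M
    # Below-diagonal part: running prefix sum of low[j] * v[j].
    prefix = 0.0
    for i in range(M):
        out[i] = d[i] * v[i] + prefix
        prefix += low[i] * v[i]
    # Above-diagonal part: t_i = v[i+1] + r[i+1] * t_{i+1}, built backwards.
    t = 0.0
    for i in range(M - 2, -1, -1):
        t = v[i + 1] + r[i + 1] * t
        out[i] += sup[i] * t
    return out


def dense_matrix(d, low, sup, r):
    """Materialize the same matrix explicitly, for checking only."""
    M = len(d)
    A = [[0.0] * M for _ in range(M)]
    for i in range(M):
        A[i][i] = d[i]
        for j in range(i):
            A[i][j] = low[j]
        ratio = 1.0
        for j in range(i + 1, M):
            A[i][j] = sup[i] * ratio
            ratio *= r[j]
    return A


d = [0.5, 0.4, 0.6, 0.3, 0.7]
low = [0.1, 0.2, 0.05, 0.15, 0.0]
sup = [0.2, 0.1, 0.3, 0.25]
r = [1.0, 0.5, 0.8, 0.9, 0.7]
v = [1.0, -2.0, 0.5, 3.0, 4.0]  # arbitrary vector, not a probability vector

fast = structured_matvec(d, low, sup, r, v)
A = dense_matrix(d, low, sup, r)
slow = [sum(A[i][j] * v[j] for j in range(len(v))) for i in range(len(v))]
```

Nothing in the two recursions requires `v` to be nonnegative or to sum to one, which is why the same routine applies to arbitrary vectors.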

A.2. Structure of the SMC’ transition matrix

Palamara et al. (2018) have shown that the transition matrix for the SMC, SMC’, and conditional Simonsen-Churchill (CSC) models have the following structure:

(Here is a discretized version of the corresponding transition function defined in equation (2).) In the above display, , are the first sub- and super-diagonals, respectively, is the main diagonal, and is the ratio of successive columns in the first row.

I first reparametrize this matrix in a different coordinate system which will make computation of the score function easier:

| (18) |

where I defined and . Now let denote the entry-wise logarithm of in the above parameterization. We then have

| (19) |

Now let denote the log-likelihood under the PSMC, PSMC’, or PSMC-CSC models. Consider how to compute where are the diagonal entries of the transition matrix . Applying Fisher’s identity (14), we have

which is (15) with . Hence, one can apply the algorithm of the preceding section, where I note that, in this case,

where denote the standard coordinate vectors. Note that this computation takes time. Overall, computing therefore requires time and memory, and hence can be evaluated in time and memory.

The score function with respect to the other entries of can be computed in a similar, if slightly more involved, manner. I show how to compute and omit the similar derivations of the remaining parameters. Using (19), we have

So contains the lower diagonal entries of the -th column of , and is zero everywhere else. But by (18), the lower diagonal entries are constant, so that

which can again be computed in time using recursion.

A.3. Technical considerations

One important caveat of the algorithm is that, for technical reasons related to the Nvidia CUDA architecture, performance is currently optimized when the number of hidden states is 16, or a small multiple thereof. This occurs for two reasons: a) the size of a half-warp on all existing CUDA GPUs is 16 threads, and b) the memory requirement of the algorithm is roughly bytes. With 16 hidden states, the state of the entire algorithm can be stored in on-chip shared memory, which is hundreds of times faster to access than global/hierarchical block memory.

Although using so few hidden states results in a somewhat coarse approximation, the experimental results in the main text show that this choice does not overly bias the estimates. Furthermore, it should be possible to increase the number of hidden states with future generations of hardware.

B. Algorithm for computing credible bands

Given a posterior sample from , let

denote the set of all bracketing functions that contain at least a fraction of the sample. The credible band is the function pair such that, for any , there exists a such that or ; in other words, the tightest possible band in .
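The membership condition for this set can be illustrated with a short sketch. It assumes each sampled function has already been evaluated on a common time grid; the function name and the toy data are hypothetical.

```python
def band_coverage(lower, upper, samples):
    """Fraction of sampled functions lying entirely inside [lower, upper].

    lower, upper : band values on a common time grid
    samples      : sampled functions, each evaluated on the same grid
    """
    inside = 0
    for f in samples:
        if all(lo <= x <= hi for lo, x, hi in zip(lower, f, upper)):
            inside += 1
    return inside / len(samples)


samples = [
    [1.0, 2.0, 3.0],
    [1.5, 2.5, 2.0],
    [0.5, 1.0, 4.0],
    [9.0, 2.0, 3.0],  # leaves the band at the first grid point
]
lower = [0.5, 1.0, 2.0]
upper = [1.5, 2.5, 4.0]
coverage = band_coverage(lower, upper, samples)
```

A candidate band belongs to the set when its coverage is at least the required fraction. Note that a function counts as covered only if it stays inside the band at every grid point, which is what makes the band simultaneous rather than pointwise.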

The credible band can be determined by solving a mixed-integer linear programming problem. Let be a grid of timepoints such that is constant on for all and ; this is always possible for large enough since each is piecewise-constant. Then define the decision variables

: upper bound on the credible band at .

: lower bound on the credible band at .

: binary indicator that equals 1 iff .

The objective function is

subject to the constraints:

, for all and ,

, for all and ,

, for all ,

, , for all .

where is a sufficiently large constant such that the constraint is ignored if (the so-called “big-M” method).
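For orientation, one common way to encode a problem of this kind with the big-M device is sketched below. All symbols are assumptions for illustration ($f_i$ for the $i$-th sampled function, $t_j$ for the grid points, $N$ for the sample size, $\alpha$ for the excluded fraction), as is the choice of total band width as the objective; the exact formulation used in phlash may differ.

```latex
\begin{aligned}
\min_{u,\,l,\,z} \quad & \sum_{j} (u_j - l_j) \\
\text{subject to} \quad
  & u_j \ge f_i(t_j) - M\,(1 - z_i) && \text{for all } i, j, \\
  & l_j \le f_i(t_j) + M\,(1 - z_i) && \text{for all } i, j, \\
  & \sum_{i=1}^{N} z_i \ge (1 - \alpha)\, N, \\
  & z_i \in \{0, 1\} && \text{for all } i.
\end{aligned}
```

When $z_i = 1$ the first two constraints force the band to contain $f_i$ at every grid point; when $z_i = 0$ the term $M(1 - z_i)$ makes both inequalities hold trivially, so that sample is free to fall outside the band.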

Exactly solving the optimization problem amounts to taking the union of all time points in all of the models , which are almost surely distinct, so that using the program defaults. This results in a very large optimization problem which takes many days to solve. For this reason, the implementation in phlash defaults to approximating each at a sparse grid of points. Specifically, it finds and such that the posterior sample is constant outside of and then approximates each function at a geometrically spaced grid of 100 points ,

The optimization problem is then solved with in place of .
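The grid approximation can be sketched in a few lines. The helper names and the example size history are hypothetical, and the sketch only illustrates geometric spacing and evaluation of a piecewise-constant function; it is not taken from the phlash source.

```python
from bisect import bisect_right


def geometric_grid(t_min, t_max, n=100):
    """n points from t_min to t_max with a constant ratio between neighbors."""
    ratio = (t_max / t_min) ** (1.0 / (n - 1))
    return [t_min * ratio**j for j in range(n)]


def eval_piecewise_constant(breaks, values, t):
    """Evaluate a right-continuous step function at time t.

    breaks : increasing change points, with breaks[0] == 0
    values : values[k] holds on [breaks[k], breaks[k+1]);
             the last value extends to infinity
    """
    return values[bisect_right(breaks, t) - 1]


# Hypothetical size history that changes at 100 and 5000 generations.
breaks = [0.0, 100.0, 5000.0]
values = [1e4, 2e3, 5e4]

# The grid endpoints are chosen so the function is constant outside them.
grid = geometric_grid(10.0, 1e5)
on_grid = [eval_piecewise_constant(breaks, values, t) for t in grid]
```

Geometric spacing places more points at recent times, where size histories typically change on shorter time scales, while still covering several orders of magnitude with a fixed number of points.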

C. Command lines

The command line used to run scrm was:

scrm {sample size} 1 {demographic model} -t {theta} -r {rho} {L} \
    -transpose-segsites -SC abs -p 14 -oSFS -seed {seed}

where {sample size}, {theta}, etc. denote various simulation- and species-specific parameters. The demographic model parameters were obtained by exporting each stdpopsim model into a sequence of ms demography commands using demes (Gower et al., 2022).

The command line used to run smc++ was:

smc++ estimate --cores 4 --knots 24 -o {outdir} {mutation_rate} {input_files}

The command line used to run msmc2 was:

msmc2_Linux -o {outdir}/output -I {pairs} -t4 {hets}

where pairs was set equal to the sequence . This setting ensured that msmc2 only had access to unphased data, and also reduced memory consumption.

The command line used to run FitCoal was:

java -cp {jar} FitCoal.calculate.SinglePopDecoder \
    -table {tables} \
    -input {afs} -output {output_base} \
    -generationTime 1 \
    -mutationRate {mutation_rate_per_kb} \
    -omitEndSFS {trunc} \
    -randSeed {seed} \
    -genomeLength {genome_length_kbp}

The trunc parameter in the preceding display was obtained by first running

java -cp FitCoal.jar Fitcoal.TruncateSFS

as suggested in the FitCoal README.

D. Additional Figures and Tables

Table D.1:

Description of the simulation models used to benchmark each method. Each model is taken from the stdpopsim catalog (Adrion et al., 2020). r is the recombination rate, computed as a weighted average over the chromosome-specific rates. L is the total genome length in gigabase-pairs; simulations were restricted to recombining autosomes.

| # | Species | Model | Population | Description | μ/bp/gen | r/bp/gen | L (Gbp) |
|---|---|---|---|---|---|---|---|
| 1 | Anopheles gambiae | GabonAg1000G_1A17 | GAS | Stairwayplot estimates of N(t) for Gabon sample | 3.5 × 10⁻⁹ | 1.38 × 10⁻⁸ | 0.206 |
| 2 | A. thaliana | African3Epoch_1H18 | SouthMiddleAtlas | South Middle Atlas African three epoch model | 7 × 10⁻⁹ | 8.06 × 10⁻¹⁰ | 0.119 |
| 3 | A. thaliana | SouthMiddleAtlas_1D17 | SouthMiddleAtlas | South Middle Atlas piece-wise constant size | 7 × 10⁻⁹ | 8.06 × 10⁻¹⁰ | 0.119 |
| 4 | Cattle | HolsteinFriesian_1M13 | Holstein_Friesian | Piecewise size changes in Holstein-Friesian cattle | 1.2 × 10⁻⁸ | 9.26 × 10⁻⁹ | 2.49 |
| 5 | D. melanogaster | African3Epoch_1S16 | AFR | Three epoch African population | 5.49 × 10⁻⁹ | 2.01 × 10⁻⁸ | 0.109 |
| 6 | Human | Africa_1T12 | AFR | African population | 1.29 × 10⁻⁸ | 1.28 × 10⁻⁸ | 2.88 |
| 7 | Human | AmericanAdmixture_4B11 | ADMIX | American admixture | 1.29 × 10⁻⁸ | 1.28 × 10⁻⁸ | 2.88 |
| 8 | Human | Constant | pop_0 | Constant population size | 1.29 × 10⁻⁸ | 1.28 × 10⁻⁸ | 2.88 |
| 9 | Human | Zigzag_1S14 | generic | Periodic growth and decline | 1.29 × 10⁻⁸ | 1.28 × 10⁻⁸ | 2.88 |
| 10 | Chimpanzee | BonoboGhost_4K19 | bonobo | Ghost admixture into bonobos | 1.6 × 10⁻⁸ | 1.2 × 10⁻⁸ | 2.79 |
| 11 | Olive baboon | SinglePopSMCpp_1W22 | PAnubis_SNPRC | SMC++ estimates of N(t) for Papio Anubis individuals | 5.7 × 10⁻⁹ | 1.12 × 10⁻⁸ | 2.59 |
| 12 | Sumatran orangutan | TwoSpecies_2L11 | Bornean | Two population orangutan model | 1.5 × 10⁻⁸ | 6.4 × 10⁻⁹ | 2.67 |

Figure D.1:

Simulation results for models 1–3. For phlash, shaded regions indicate 95% credible intervals. For all other methods, the solid (dotted) lines represent the median (min/max) across all simulation replicates.

Figure D.2:

Simulation results for models 4–6. See Figure D.1 for additional information.

Figure D.3:

Simulation results for models 7–9. See Figure D.1 for additional information.

Figure D.4:

Simulation results for models 10–12. See Figure D.1 for additional information.

Figure D.5:

Size history estimates for archaic humans. Solid lines are posterior medians, and the blue shaded area shows the 95% credible intervals for the filtered data. “Unfiltered” refers to the raw genotypes extracted from the Wohns et al. (2022) dataset, while “filtered” refers to the estimates obtained after running the filtering procedure described in the main text.

Data availability

phlash is available as a Python package from https://github.com/jthlab/phlash. Code to reproduce the experiments is available at https://github.com/jthlab/phlash_paper. Tree sequences inferred by Wohns et al. (2022) containing the 1000 Genomes, HGDP, SGDP, and ancient genomes are available at https://zenodo.org/records/5512994.

References

- Adrion Jeffrey R et al. (2020). “A community-maintained standard library of population genetic models”. In: eLife 9, e54967.

- Arredondo Armando et al. (2021). “Inferring number of populations and changes in connectivity under the n-island model”. In: Heredity 126.6, pp. 896–912.

- Al-Asadi Hussein et al. (2019). “Estimating recent migration and population-size surfaces”. In: PLoS Genetics 15.1, e1007908.

- Baharian Soheil and Gravel Simon (2018). “On the decidability of population size histories from finite allele frequency spectra”. In: Theoretical Population Biology 120, pp. 42–51.

- Baumdicker Franz et al. (2022). “Efficient ancestry and mutation simulation with msprime 1.0”. In: Genetics 220.3, iyab229.

- Berger James O (2013). Statistical decision theory and Bayesian analysis. Springer Science & Business Media.

- Bergström Anders, McCarthy Shane A, et al. (2020). “Insights into human genetic variation and population history from 929 diverse genomes”. In: Science 367.6484, eaay5012.