Abstract

Background

The global health system remains determined to leverage every workable opportunity, including artificial intelligence (AI), to provide care that is consistent with patients’ needs. Unfortunately, while AI models generally return high accuracy within the trials in which they are trained, their ability to predict and recommend the best course of care for prospective patients is left to chance.

Purpose

This review maps evidence published from January 1, 2010 to December 31, 2023, on the perceived threats that the use of AI tools in healthcare poses to patients’ rights and safety.

Methods

We followed the guidelines of Tricco et al. to conduct a comprehensive search of current literature from Nature, PubMed, Scopus, ScienceDirect, Dimensions AI, Web of Science, EBSCOhost, ProQuest, JSTOR, Semantic Scholar, Taylor & Francis, Emerald, World Health Organisation, and Google Scholar. In all, 80 peer-reviewed articles qualified and were included in this study.

Results

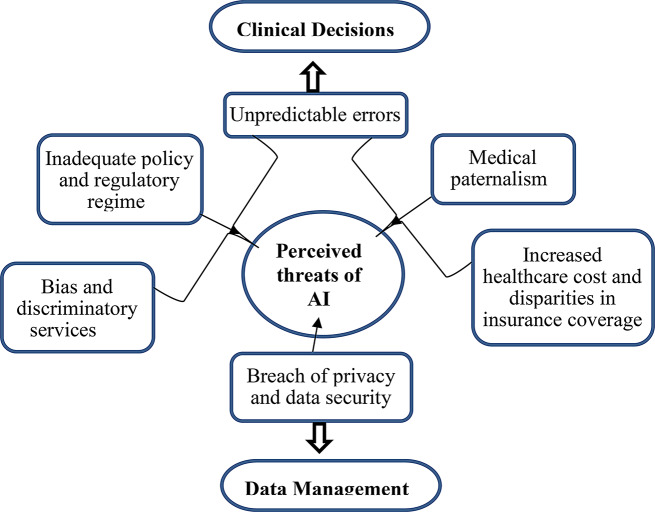

We report that there is a real chance of unpredictable errors, together with an inadequate policy and regulatory regime, in the use of AI technologies in healthcare. Moreover, medical paternalism, increased healthcare cost and disparities in insurance coverage, data security and privacy concerns, and biased and discriminatory services are imminent in the use of AI tools in healthcare.

Conclusions

Our findings have some critical implications for achieving the Sustainable Development Goals (SDGs) 3.8, 11.7, and 16. We recommend that national governments lead the roll-out of AI tools in their healthcare systems. Other key actors in the healthcare industry should also contribute to developing policies on the use of AI in healthcare systems.

Keywords: Artificial intelligence, Computational intelligence, Computer vision systems, Confidentiality, Knowledge representation, Privacy of patient data, Privileged communications

| Text box 1. Contributions to the literature |

|---|

| There is an inadequate account of the extent and type of evidence on how: |

| • Artificial intelligence (AI) tools could commit errors resulting in negative health outcomes for patients |

| • The lack of clear policy and regulatory regimes for the application of AI tools in patient care threatens the rights, privacy, and autonomy of patients |

| • AI tools may dim the active participation of patients in the care process |

| • AI tools could escalate the overhead cost of providing and receiving essential healthcare |

| • Faulty and manipulated data, and inadequate machine learning could result in bias and discriminatory services |

Introduction

The global health system is facing unprecedented pressures due to changing demographics, emerging diseases, administrative demands, a dwindling workforce and large-scale workforce migration, increasing mortality and morbidity, and changing demands and expectations in information technology [1, 2]. Meanwhile, the needs and expectations of patients are increasing and becoming ever more complicated [1, 3]. The global health system is thus forced to leverage every opportunity, including the use of artificial intelligence (AI), to provide care that is consistent with patients’ needs and values [4, 5]. As expected, AI has become an obvious and central theme in the global narrative due to its enormous potential positive impacts on the healthcare system. AI, in this context, should be construed as the capability of computers to perform tasks similar to those performed by human professionals, even in healthcare [6, 7]. This includes the ability to reason, discover and extrapolate meanings, or learn from previous experiences to achieve healthcare goals artificially [4].

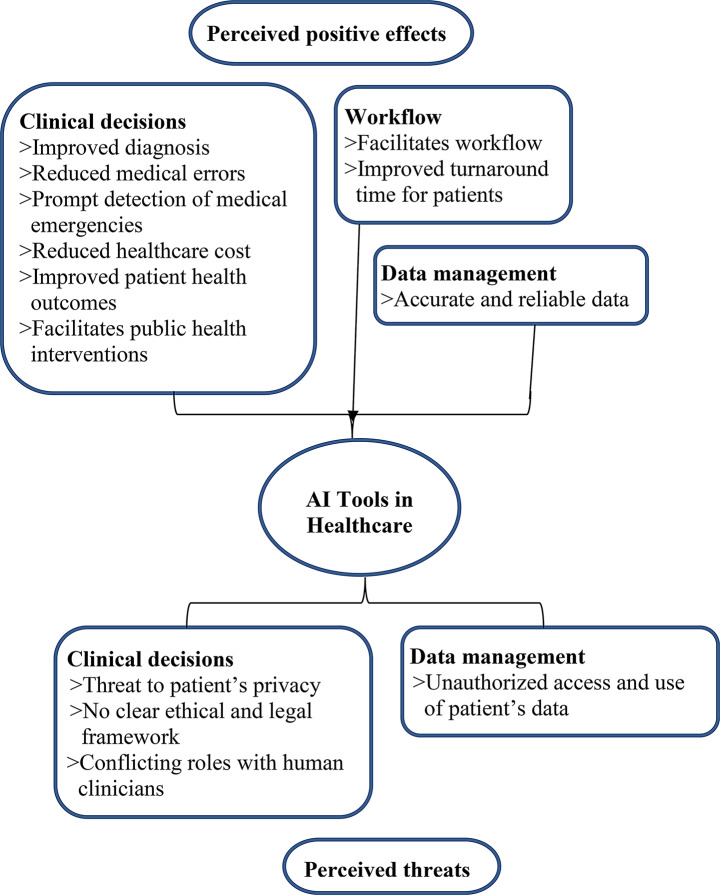

The term AI, credited to John McCarthy since 1956, is broad, and there seems to be no consensus yet on what truly constitutes AI [8, 9]. AI is not a single type of technology, but many different types of computerised systems (hardware and software) that require large datasets to realise their full potential [10, 11]. AI tools are transforming the state of healthcare globally, giving hope to patients with conditions that appear to defy traditional treatment techniques [1–3]. In clinical decision-making, for instance, AI tools have improved diagnosis, reduced medical errors, stimulated prompt detection of medical emergencies, reduced healthcare cost, improved patient health outcomes, and facilitated public health interventions [3, 4]. Additionally, AI tools have facilitated workflow, improved turnaround time for patients, and improved the accuracy and reliability of patients’ data.

The successes of AI in healthcare seem promising, if not great already, but there is a need for caution. Celebrations and expectations of the capabilities of AI tools in healthcare should be moderated, because these tools also present threats yet to be fully understood and appreciated [6, 12, 13]. So far, there are serious concerns that AI tools could threaten the privacy and autonomy of patients [2, 11]. Moreover, widespread adoption and use of AI tools in healthcare could be confounded by factors such as lack of standardised patients’ data, inadequate curated datasets, and lack of robust legal regimes that clearly define standards for professional practice using AI tools [11]. Additionally, socio-cultural differences, lack of government commitment, proliferation of AI-savvy persons with malicious intents, irregular supply of electric power, and poverty (especially in the global south) are but a few of the many factors that may work against the potential of AI tools in healthcare [14]. For instance, algorithms on which AI tools operate can be weaponised to perpetuate discrimination based on race, age, gender, sexual identity, socio-cultural background, social status, and political identity [15, 16]. Notwithstanding their immense capabilities, AI tools are but a means to an end and not an end in themselves.

There is also a growing concern over how AI tools could facilitate and perpetuate an unprecedented “infodemic” of misinformation via online social media networks that threatens global public health efforts [17–20]. In fact, the pandemic of disinformation has led to the coining of the term “infodemiology”, now acknowledged by WHO and other public health organisations globally as an important scientific field and critical area of practice, especially during major disease outbreaks [17–20]. Recognising the consequences of disinformation for patients’ rights and safety and the potential of AI tools to facilitate it, public health experts have suggested tighter control over patients’ information, and advocated for eHealth literacy and science and technology literacy [17–20]. The experts also suggested the need to encourage peer review and fact-checking systems to help improve the knowledge and quality of information regarding patient care [17–20]. Furthermore, there is a need to eliminate delays in the translation and transmission of knowledge in healthcare to mitigate distorting factors such as political, commercial, or malicious influences, as was widely reported during the SARS-CoV-2 outbreak [17–20].

Moreover, it is difficult to demonstrate how the deployment of AI tools in healthcare is contributing to the realisation of the Sustainable Development Goals (SDGs) 3.8, 11.7, and 16. For instance, SDG 11.7 provides for universal access to safe, inclusive and accessible public spaces, especially for women and children, older persons and persons with disabilities [24]. SDG 3.8 calls for the realisation of universal health coverage, including access to quality essential healthcare services and essential medicines and vaccines for all. SDG 16 advocates for peaceful, inclusive, and just societies for all and building effective, accountable and inclusive institutions at all levels [24]. Thus, to achieve these and many others, there are many questions to be answered.

For instance, will the usage of AI tools in their present state help achieve these SDGs by 2030? What constitutes professional negligence of AI tools in healthcare? Who takes responsibility for the commissions and omissions of AI tools in healthcare? What remedies accrue to patients who suffer serious adverse events from care provided by AI tools? What are the implications of using AI tools in healthcare for the insurance policies of patients? To what extent is an AI tool developer liable for the actions and inactions of these intelligent tools? What constitutes informed consent when AI tools provide care to patients? In the event of conflicting decisions between AI tools and human clinicians, which would hold sway? Obviously, a lot more research, including reviews, is needed to clearly and confidently respond to these and several other nagging questions. Despite considerable research globally on AI, the majority of this research has been done in non-clinical settings [22, 23]. For instance, randomised controlled studies, the gold standard in medicine, are yet to provide further and better evidence on how AI adversely impacts patients [23]. Therefore, the objective of this review is to map existing evidence on the perceived threats posed by AI tools in healthcare to patients’ rights and safety.

Considering the social implications, this review is envisaged to positively impact the development, deployment, and utilisation of AI tools in patient care services [3, 25–29]. This is anticipated because the review interrogates the main concerns of patients and the general public regarding the use of these intelligent machines. The proposition is that these tools have the possibility for unpredictable errors which, coupled with an inadequate policy and regulatory regime, may increase healthcare cost and create disparities in insurance coverage, breach the privacy and data security of patients, and provide biased and discriminatory services, which can be worrying [2, 7, 10, 25]. Therefore, the review envisages that manufacturers of AI tools will pay attention and factor these concerns into the production of more responsible and patient-friendly AI tools and software. Additionally, medical facilities would subject newly procured AI tools and software to a more rigorous machine learning regime that would allay the concerns of patients and guarantee their rights and safety [25–27]. Moreover, the review may trigger the formulation and review of policies at the national and medical facility levels, which would provide adequate promotion and protection of the rights and safety of patients from the adverse effects of AI tools [26–28].

Furthermore, this review has practical implications for the deployment and application of AI tools in patient care. For instance, this review would remind healthcare managers of the need to conduct rigorous machine learning and simulation exercises for AI tools before deploying them in the care process [1–8, 27–29]. Moreover, medical professionals would have to scrutinise decisions of the AI tools before making final judgements on patients’ conditions. Again, healthcare professionals would find a way to make patients active participants in the care process. Finally, the review would draw the attention of researchers to the issues that could undermine the acceptance of AI tools in patient care services [1–8]. For instance, this review may inform future research that explores potential threats posed by AI tools to patients’ rights and safety.

Several reviews were published recently (between January 1, 2022 and June 25, 2024) on the application of AI tools and software in healthcare [30–48] (see Table 1). Almost half (9 articles) of these recent reviews [30–38] explored the positive impacts of AI tools on healthcare services, while almost half (9 articles) [39–47] examined both the positive impacts and the potential threats. Of these recent reviews, only one article [48] studied the challenges pertaining to the adoption of AI tools in healthcare. The current review therefore provides a more focused and comprehensive perspective on the threats posed by AI tools to patients’ rights and safety. Specifically, it interrogates and collates diverse, rich evidence from the perspectives of patients, healthcare workers, and the general public regarding the perceived threats posed by AI tools to patients’ rights and safety.

Table 1.

Comparison between key findings in the current and previous reviews on the use of artificial intelligence tools in healthcare

| Ser | Author of previous review | Purpose of previous review | How the current review compares/differs from previous ones: previous papers | How the current review compares/differs from previous ones: current paper | Novelty of current review |

|---|---|---|---|---|---|

| 1 | Al Kuwaiti et al. (2023) | Application of AI tools in in-clinical and out-clinic patient care services | Broad overview of AI tools in clinical, administrative, and virtual patient care services | Focused on only the threats posed by AI tools to patients’ rights and safety | Provided diverse perspectives of patients, healthcare workers, and the general public about AI tools regarding patients’ rights and safety |

| 2 | Alnasser (2023) | Explored the potential cost savings and efficiency of AI tools in healthcare | Looked at the positive economic impacts of AI tools in healthcare | Focused on only the threats posed by AI tools to patients’ rights and safety | Provided comprehensive and diverse perspectives of patients, healthcare workers, and the general public about patients’ rights and safety |

| 3 | Botha et al. (2024) | Looked at the positive effects of AI tools in patient care | Focused on only the benefits of AI tools on patient care | Focused on only the threats posed by AI tools to patients’ rights and safety | Provided a more comprehensive overview of threats of AI tools to patients’ rights and safety |

| 4 | Kitsios et al. (2023) | Explored the benefits and reservations about AI tools in healthcare | Focused on the benefits of AI tools in clinical care and the related barriers | Focused on only the threats posed by AI tools to patients’ rights and safety | Provided a more comprehensive overview of threats of AI tools to patients’ rights and safety |

| 5 | Krishnan et al. (2023) | Gave extensive overview of the use of AI tools in patient care and related research | Focused on the benefits of AI tools to patient care and related research | Focused on the threats posed by AI tools to patients’ rights and safety | Provided a comprehensive overview of concerns of patients, healthcare workers, and the general public about patients’ rights and safety |

| 6 | Lambert et al. (2023) | Explored the factors influencing healthcare workers’ acceptance of AI tools | Looked at the factors that influence the adoption of AI tools by healthcare workers | Focused on only the threats posed by AI tools to patients’ rights and safety | Provided a more comprehensive overview of threats of AI tools to patients’ rights and safety |

| 7 | Tucci et al. (2022) | Examined healthcare workers’ trust in AI tools | Looked at the factors that influence healthcare workers’ trust in AI tools | Focused on the threats posed by AI tools to patients’ rights and safety | Provided a more comprehensive overview of threats of AI tools to patients’ rights and safety |

| 8 | WHO (2023) | Examined current and emerging applications of AI tools in healthcare financing | Looked at the benefits of current and emerging AI tools to healthcare financing | Focused on the threats posed by AI tools to patients’ rights and safety | Provided a more comprehensive overview of threats of AI tools to patients’ rights and safety |

| 9 | Wu et al. (2023) | Utilisation of AI tools in healthcare supply chain and ethical and political issues | Focused on the benefits of AI tools in healthcare | Focused on the threats posed by AI tools to patients’ rights and safety | Provided a more comprehensive overview of threats of AI tools to patients’ rights and safety |

| 10 | Ahsan et al. (2022) | Application of machine learning in the early detection of numerous diseases | Gave a broad overview of the application of AI tools with focus on the strengths and a bit about patient’s privacy issues | Provided a comprehensive overview of only the threats posed by AI tools to patients’ rights and safety | Provided a more comprehensive overview of threats of AI tools to patients’ rights and safety |

| 11 | Ali et al. (2023) | Application of AI in healthcare | Examined the benefits, challenges, methodologies, and functionalities of AI tools, with a brief note on data integration, privacy issues, legal issues, and patient safety | Provided a comprehensive overview of only the threats posed by AI tools to patients’ rights and safety | Provided a more comprehensive overview of threats of AI tools to patients’ rights and safety |

| 12 | Alowais et al. (2023) | Gave an overview of the current state of AI in clinical practice, and associated challenges | Looked at the general application of AI tools, with a bit about the ethical and legal considerations and the need for human expertise | Provided a comprehensive overview of only the threats posed by AI tools to patients’ rights and safety | Provided a more comprehensive overview of threats of AI tools to patients’ rights and safety |

| 13 | Kooli et al. (2022) | Examining the potential ethical dilemmas about AI tools in healthcare | Looked at the ethical dilemmas and benefits of AI tools in healthcare | Focused on only the threats posed by AI tools to patients’ rights and safety | Provided comprehensive and diverse perspectives of patients, healthcare workers, and the general public about patients’ rights and safety |

| 14 | Kumar et al. (2023) | Looked at the benefits and challenges of AI tools in medical care | Focused on the benefits and related challenges of using AI tools in healthcare | Focused on the threats posed by AI tools to patients’ rights and safety | Provided a more comprehensive overview of threats of AI tools to patients’ rights and safety |

| 15 | Lindroth et al. (2024) | A comprehensive overview of AI applications (computer vision) in healthcare | Focused on the use of AI tools (computer vision) in healthcare, including privacy, safety, and ethical factors | Focused on the threats posed by AI tools to patients’ rights and safety | Provided a comprehensive overview of concerns of patients, healthcare workers, and the general public about patients’ rights and safety |

| 16 | Mohamed Fahim J (2022) | Explored the latest developments and ethical issues about AI tools in healthcare | Provided a broad overview of the application of latest AI tools in healthcare and related ethical issues | Focused on the threats posed by AI tools to patients’ rights and safety | Provided a more comprehensive overview of threats of AI tools to patients’ rights and safety |

| 17 | Olawade et al. (2023) | Benefits and challenges of AI tools to public health | Focused on the facilitators and barriers to utilization of AI tools in public health | Focused on the threats posed by AI tools to patients’ rights and safety | Provided comprehensive and diverse perspectives of patients, healthcare workers, and the general public about patients’ rights and safety |

| 18 | Rubaiyat et al. (2023) | Looked at the use of current AI tools in healthcare | Focused on the application of AI tools | Focused on only the threats posed by AI tools to patients’ rights and safety | Provided a more comprehensive overview of threats of AI tools to patients’ rights and safety |

| 19 | Aldwean and Tenney (2024) | Explored the key challenges that explain the slow adoption of AI tools in healthcare | Looked at technological capabilities, regulations and policies, data management, and the ethical landscape surrounding the use of AI | Issues raised include unpredictable errors, inadequate policy and regulatory regime, medical paternalism, increased healthcare cost and disparities in insurance coverage, breach of privacy and data security, bias and discriminatory services | Provided a more comprehensive overview of concerns of patients, healthcare workers, and the general public about patients’ rights and safety |

Methods

We scrutinised, synthesised, and analysed peer-reviewed articles according to the guidelines by Tricco et al. [49]. The process comprised seven steps: (1) definition and examination of study purpose, (2) revision and thorough examination of study questions, (3) identification and discussion of search terms, (4) identification and exploration of relevant databases/search engines and download of articles, (5) data mining, (6) data summarisation and synthesis of results, and (7) consultation.

Research questions

Six study questions guided this review: (1) What are the implications of AI tools for medical errors? (2) What are the ethicolegal implications of AI tools for patient care? (3) What are the implications of AI tools for the patient-provider relationship? (4) What are the implications of AI tools for the cost of healthcare and insurance coverage? (5) What are the potential threats of AI tools to patients’ rights and data security? and (6) What are the perceived implications of AI tools for discrimination and bias in healthcare?

Search strategy

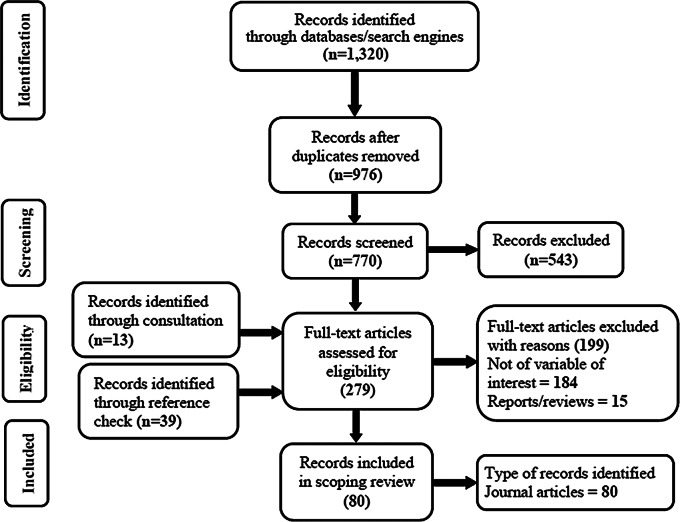

We mapped evidence on the topic using the Preferred Reporting Items for Systematic Reviews and Meta-Analyses extension for Scoping Reviews (PRISMA-ScR) [49, 50]. We searched the following databases/search engines for peer-reviewed articles: Nature, PubMed, Scopus, ScienceDirect, Dimensions, Web of Science, EBSCOhost, ProQuest, JSTOR, Semantic Scholar, Taylor & Francis, Emerald, World Health Organisation, and Google Scholar (see Fig. 1; Table 2). To ensure the search process was rigorous and detailed, we first searched PubMed using Medical Subject Headings (MeSH) terms on the topic (see Table 2). The search was conducted at two levels based on the search terms. First, the search terms “Confidentiality” OR “Artificial Intelligence” produced 4,262 articles. Second, the search was guided by 30 MeSH terms and controlled vocabularies, which yielded 1,320 articles (see Fig. 1; Table 2).
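
As a rough illustration of how search terms can be combined into a single boolean string, the sketch below joins quoted terms with OR. The function name `build_or_query` is hypothetical and the snippet is not the authors' actual search procedure; it only shows the general shape of the first-level query described above.

```python
def build_or_query(terms):
    """Join search terms into a quoted, OR-separated boolean query string."""
    seen = set()
    cleaned = []
    for term in terms:
        term = term.strip()
        # Skip empty entries and case-insensitive duplicates, keeping order.
        if term and term.lower() not in seen:
            seen.add(term.lower())
            cleaned.append(term)
    return " OR ".join(f'"{t}"' for t in cleaned)


# First-level search terms as stated in the text.
first_level_query = build_or_query(["Confidentiality", "Artificial Intelligence"])
print(first_level_query)
```

The same helper could be applied to each group of entry terms listed in Table 2, although the exact query syntax accepted by each database differs and would need to be checked individually.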

Fig. 1.

PRISMA flow diagram of articles used in the current review conducted from January 1, 2010 to December 31, 2023

Table 2.

Summary of search strategy applied in selecting articles for the current review conducted from January 1, 2010 to December 31, 2023

| Search Strategy Item | Search Strategy |

|---|---|

| Databases/Search engines | Nature (107), PubMed (132), Scopus (83), ScienceDirect (205), Dimensions (68), Web of Science (77), EBSCOhost (91), ProQuest (69), JSTOR (84), Semantic Scholar (99), Taylor & Francis (67), Emerald (45), World Health Organisation (13), and Google Scholar (180) |

| Language filter | English |

| Time filter | January 1, 2010 to December 31, 2023 |

| Spatial filter | Worldwide |

| MeSH terms used | 1. Confidentiality – “Entry Terms” OR “Secrecy” OR “Privileged Communication” OR “Communication, Privileged” OR “Communications, Privileged” OR “Privileged Communications” OR “Confidential Information” OR “Information, Confidential” OR “Privacy of Patient Data” OR “Data Privacy, Patient” OR “Patient Data Privacy” OR “Privacy, Patient Data” 2. Artificial Intelligence – “Intelligence, Artificial” OR “Computational intelligence” OR “Intelligence, Computational” OR “Machine Intelligence” OR “Intelligence, Machine” OR “Computer Reasoning” OR “Reasoning, Computer” OR “AI (Artificial Intelligence)” OR “Computer Vision Systems” OR “Computer Vision System” OR “Systems, Computer Vision” OR “System, Computer Vision” OR “Vision System, Computer” OR “Vision Systems, Computer” OR “Knowledge Acquisition (Computer)” OR “Acquisition, Knowledge (Computer)” OR “Knowledge Representation (Computer)” OR “Knowledge Representations (Computer)” OR “Representation, Knowledge (Computer)” |

| Inclusion criteria | (i) The article must be about AI use in healthcare, (ii) the article must be primary research conducted anywhere in the world, (iii) the article must be written in the English Language and apply a quantitative, qualitative, and/or mixed approach, (iv) the article must provide details on perceived threats about AI use in healthcare, (v) the article must have been conducted between January 1, 2010 and December 31, 2023, and (vi) the article must provide details on: author(s), purpose, methods, country, and conclusion |

| Exclusion criteria | (i) The article is about AI use in health but lacks details on perceived threats about AI use in healthcare, (ii) reviews about AI use in healthcare, (iii) the article is about AI use in healthcare but written in a language other than the English Language, and (iv) abstracts, incomplete articles, commentaries, grey literature, opinion pieces, and media reports about AI use in healthcare |
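
The per-source counts in Table 2 can be cross-checked against the 1,320 articles reported for the second-level search. The snippet below is a minimal sketch of that tally; it assumes the bracketed figures in Table 2 are record counts per source, and the variable names are illustrative only.

```python
# Per-source record counts as listed in Table 2.
records_per_source = {
    "Nature": 107,
    "PubMed": 132,
    "Scopus": 83,
    "ScienceDirect": 205,
    "Dimensions": 68,
    "Web of Science": 77,
    "EBSCOhost": 91,
    "ProQuest": 69,
    "JSTOR": 84,
    "Semantic Scholar": 99,
    "Taylor & Francis": 67,
    "Emerald": 45,
    "World Health Organisation": 13,
    "Google Scholar": 180,
}

# Total across all sources; this should match the figure reported in the text.
total_records = sum(records_per_source.values())

# Sources ranked from highest to lowest yield.
ranked_sources = sorted(
    records_per_source.items(), key=lambda item: item[1], reverse=True
)

print(total_records)      # 1320
print(ranked_sources[0])  # ('ScienceDirect', 205)
```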

The search covered studies conducted between January 1, 2010 and December 31, 2023, because the use of AI in healthcare is relatively new and has been largely unfamiliar to most people over the past three decades. We conducted the study between January 1 and December 31, 2023. Through a comprehensive data screening process, we separated all duplicate articles into a folder and later removed them. The removed articles also included those that did not meet the inclusion threshold (see Table 2). The initial screening was conducted by authors 4, 5, 6, 7, 8, and 9, but where the qualification of an article was in doubt, that article was referred to authors 1, 3, 4 and 10 for further assessment until consensus was reached. Authors 1 and 10 then further reviewed the data. To enhance comprehensiveness and rigour in the search process, citation chaining was conducted on all full-text articles that met the inclusion threshold to identify additional relevant articles for further assessment. Table 2 presents the inclusion and exclusion criteria used in selecting relevant articles for this review.

Quality rating

We conducted a quality rating of all selected full-text articles based on the guideline prescribed by Tricco et al. [49]. Thus, each reviewed article had to provide a research background, purpose, context, suitable method, sampling, data collection and analysis, reflexivity, value of research, and ethics. We assessed and scored all selected articles based on the set criteria [49]. Articles which scored “A” had few or no limitations, “B” had some limitations, “C” had substantial limitations but possessed value, and “D” carried substantial flaws that could compromise the study as a whole. Therefore, articles scoring “D” were removed from the review [49].
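
The grading rule described above can be expressed as a simple filter. The following is a minimal sketch under stated assumptions: the `RatedArticle` type, the `retain_for_review` function, and the sample entries are all hypothetical and do not represent the authors' actual tooling or data.

```python
from dataclasses import dataclass

VALID_GRADES = {"A", "B", "C", "D"}
EXCLUDED_GRADES = {"D"}  # substantial flaws that could compromise the study


@dataclass
class RatedArticle:
    citation: str
    grade: str  # one of "A", "B", "C", "D"


def retain_for_review(articles):
    """Return the articles whose quality grade keeps them in the review."""
    kept = []
    for article in articles:
        grade = article.grade.strip().upper()
        if grade not in VALID_GRADES:
            raise ValueError(f"Unknown grade {article.grade!r} for {article.citation}")
        if grade not in EXCLUDED_GRADES:
            kept.append(article)
    return kept


# Hypothetical entries for illustration only.
sample = [
    RatedArticle("Hypothetical study 1", "A"),
    RatedArticle("Hypothetical study 2", "C"),
    RatedArticle("Hypothetical study 3", "D"),
    RatedArticle("Hypothetical study 4", "b"),
]

kept = retain_for_review(sample)
print([article.citation for article in kept])
```

Raising an error on an unrecognised grade, rather than silently dropping the article, keeps scoring mistakes visible during screening.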

Data extraction and thematic analysis

All authors independently extracted the data. Authors 5, 6, 7, 8, and 9 extracted data on “authors, purpose, methods, and country”, while authors 1, 2, 3, 4, and 10 extracted data on “perceived threats and conclusions” (see Table 3). Drawing on Cypress [51] and Morse [52], authors 1, 2, 3, 4, and 10 conducted a qualitative thematic analysis. Data were coded, and themes emerged directly from the data consistent with the study questions [53, 54]. Specifically, the analysis included repeated reading of the articles to gain deep insight into the data. We then created initial candidate codes and identified and examined emerging themes. Candidate themes were reviewed, properly defined and named, and extensively discussed until a consensus was reached. Finally, we composed a report and extensively reviewed it to ensure internal and external cohesion of the themes (see Table 4).

Table 3.

Overview of data extracted from articles on the perceived threats of artificial intelligence tools in healthcare

| Ser | Author(s) | Purpose | Approach | Continent | Potential Threats | Conclusion |

|---|---|---|---|---|---|---|

| 1 | Al’Aref et al. (2019) | Using the New York Percutaneous Coronary Intervention Reporting System to elucidate the determinants of in-hospital mortality in patients undergoing percutaneous coronary intervention | Quantitative, 479,804 patients | North America | 1. May not apply to all cohorts of patients 2. No clear ethical and legal regime | High accuracy predictive potential for in-hospital mortality in patients undergoing percutaneous coronary intervention |

| 2 | Al’Aref et al. (2020) | Culprit Lesion (CL) precursors among Acute Coronary Syndrome (ACS) patients based on computed tomography-based plaque characteristics | Quantitative, 468 patients | North America | 1. Potential for bias 2. Potential for medical errors | A boosted ensemble algorithm can be used to predict culprit lesion from non-culprit lesion precursors on coronary Computed Tomography Angiography (CTA) |

| 3 | Aljarboa and Miah (2021) | Perceptions about Clinical Decision Support Systems (CDSS) uptake among healthcare sectors | Qualitative, 54 healthcare workers | Asia | 1. Potential for error 2. Uncertainty about the future effects | Patients’ confidence and diagnostic accuracy were new determinants of CDSS acceptability that emerged in this study |

| 4 | Alumran et al. (2020) | Electronic Canadian Triage and Acuity Scale (E-CTAS) utilisation in emergency department | Quantitative, 71 respondents | Asia | No clear regulations in place | Years of nursing experience moderated the utilisation of E-CTAS |

| 5 | Ayatollahi et al. (2019) | Positive Predictive Value (PPV) of Cardiovascular Disease using Artificial Neural Network (ANN) and Support Vector Machine (SVM) algorithms and their distinction in terms of predicting Cardiovascular Disease | Quantitative, 1,324 | Asia | No clear legal regime | The SVM algorithm presented higher accuracy and better performance than the ANN model and was characterised by higher power and sensitivity |

| 6 | Baskaran (2020) | Using machine learning to gain insight into the relative importance of variables to predict obstructive Coronary Artery Disease (CAD) | Quantitative, 719 patients | North America | No clear legal regime | Machine learning model showed BMI to be an important variable, although it is currently not included in most risk scores |

| 7 | Betriana (2021a) | Access to palliative care (PC) by integrating predictive model into a comprehensive clinical framework | Quantitative, 68,349 in-patient encounters | North America | Inadequate legal and ethical systems | A machine learning model can effectively predict the need for in-patient palliative care consult and has been successfully integrated into practice to refer new patients to palliative care |

| 8 | Betriana (2021b) | Interactions between healthcare robots and older persons | Qualitative | Asia | 1. Patients’ safety and security concerns 2. Legal and ethical concerns | Interaction between healthcare robots and older people may improve quality of care |

| 9 | Blanco et al. (2018) | Barriers and facilitators related to uptake of Computerised Clinical Decision Support (CCDS) tools as part of a Clostridium Difficile Infection (CDI) reduction bundle | Qualitative, 34 participants | North America | Perceived loss of autonomy and clinical judgement | Findings shaped the development of Clostridium Difficile Infection reduction bundle |

| 10 | Borracci et al. (2021) | Application of Neural Network (NN) algorithm-based models to improve the Global Registry of Acute Coronary Events (GRACE) score performance to predict in-hospital mortality and acute Coronary Syndrome | Quantitative, 1,255 admitted patients | South America | 1. No clear regulations 2. Faulty data can lead to inaccurate results | Treatment of individual predictors of GRACE score with NN algorithms improved accuracy and discrimination power in all models |

| 11 | Bouzid et al. (2021) | Consecutive patients evaluated for suspected Acute Coronary Syndrome | Quantitative, 554 respondents | North America | 1. Could produce biased results 2. No clear regulations | A subset of novel electrocardiograph features predictive of acute coronary syndrome with a fully interpretable model highly adaptable to clinical decision support application |

| 12 | Catho et al. (2020) | Adherence to antimicrobial prescribing guidelines and Computerised Decision Support Systems (CDSSs) adoption |

Qualitative, 29 participants |

Europe |

1. Time-consuming 2. Reduce clinicians’ critical thinking and professional autonomy and raise new medico-legal issues 3. Effective CDSSs will require specific features |

Features that could improve adoption include friendliness, ergonomics, transparency of the decision-making process and workflow |

| 13 | Davari Dolatabadi et al. (2017) | Automatic diagnosis of normal and Coronary Artery Disease conditions using Heart Rate Variability (HRV) signal extracted from electrocardiogram | Quantitative | Asia | Inadequate legal regime | Methods based on the feature extraction of the biomedical signals are an appropriate approach to predict the health situation of patients |

| 14 | Dogan et al. (2018) | Examined whether similar machine learning approaches could be used to develop a similar panel to predict Coronary Heart Disease (CHD) | Quantitative, 1,180 and 524 training and test sets, respectively | North America | Results are inconclusive | The AI tool is more sensitive than conventional risk-factor based approaches, and performs well in both males and females |

| 15 | Du et al. (2020) | Using high-precision Coronary Heart Disease (CHD) prediction model through big data and machine-learning | Quantitative, 42,676 patients | Asia | No clear ethical and legal regime | Accurate risk-prediction of coronary heart disease from electronic health records is possible given a sufficiently large population of training data |

| 16 | Elahi et al. (2020) | Traumatic Brain Injury (TBI) prognostic models | Mixed, 25 questionnaire and interview | Africa | Poor internet connectivity may undermine its utility | Addressed unmet needs to determine feasibility of TBI clinical decision support systems in low-resource settings |

| 17 | Fan et al. (2020) | Integration of unified theory of user acceptance of technology and trust theory for exploring the adoption of Artificial Intelligence-Based Medical Diagnosis Support System (AIMDSS) | Quantitative, 191 respondents (healthcare workers) | Asia | Needs specialised skills to operate | The empirical examination demonstrates a high predictive power of this proposed model in explaining AIMDSS utilisation |

| 18 | Fan et al. (2021) | Real-world utilisation of AI health chatbot for primary care self-diagnosis | Mixed, 16,519 users | Asia | Sceptical about its utility in patient care | Although the AI tool is perceived convenient in improving patient care, issues and barriers exist |

| 19 | Fritsch et al. (2022) | Investigate perception about artificial intelligence in healthcare | Quantitative survey, 452 patients and their companions | Europe | 1. Unpredictable errors/mistakes 2. Cyberattack and implications for data privacy 3. Interferes with patient-doctor relationship 4. Diagnosis should be subject to physician assessment 5. Endanger data privacy and protection 6. Limited penetration | Patients and their companions are open to AI usage in healthcare and see it as a positive development |

| 20 | Garzon-Chavez et al. (2021) | Utilisation of AI-assisted computed tomography screening tool for COVID-19 patient at triage | Quantitative, 75 chest CTs | South America | May conflict with diagnostic decisions of clinicians | There were differences in laboratory parameters between cases at the intensive care and non-intensive care units |

| 21 | Golpour et al. (2020) | Compare support vector machine, naïve Bayes and logistic regressions to determine the diagnostic factors that can predict the need for Coronary Angiography | Quantitative, 1,187 candidates | Asia | Depends on accurate and large data set | Gender, age and fasting blood sugar found to be the most important factors that predict the result of coronary angiography |

| 22 | Gonçalves (2020) | Nurses’ experiences with technological tools to support the early detection of sepsis | Qualitative | South America | Inadequate legal regime | Nurses in the technology incorporation process enable a rapid decision-making in the identification of sepsis |

| 23 | Grau et al. (2019) | Using Electronic Support Tools and Orders for Prevention of Smoking (E-STOPS) | Qualitative, 21 participants | North America | Clinical judgement is limited | Improvements in provider training and feedback as well as the timing and content of the electronic tools may increase their use by physicians |

| 24 | Horsfall et al. (2021) | Attitudes of surgeons and the wider surgical team toward the role of artificial intelligence in neurosurgery | Mixed, 33 participants and 100 respondents | North America | Not very effective during post-operative management and follow-ups | Artificial intelligence widely accepted as a useful tool in neurosurgery |

| 25 | Hu et al. (2019) | Using Rough Set Theory (RST) and Dempster-Shafer Theory (DST) of evidence to remedy Major Adverse Cardiac Event (MACE) prediction | Quantitative, 2,930 acute coronary syndrome patient samples | Asia | Needs regular, large, and accurate data | The model achieved better performance for the problem of MACE prediction when compared with the single models |

| 26 | Isbanner et al. (2022) | Assess and compare public judgments about AI use in healthcare | Quantitative survey, 4448 respondents (general public) | Australia | 1. Breach of ethical and social values 2. Interferes with patient-doctor contact | AI systems should augment rather than replace humans in the provision of healthcare |

| 27 | Jauk et al. (2021) | Machine learning-based application for predicting the risk of delirium for in-patients | Mixed, 47 questionnaire and 15 expert group (clinicians) | Europe | Not effective in detecting delirium at an early stage | In order to improve quality and safety in healthcare, computerised decision support should predict actionable events and be highly accepted by users |

| 28 | Joloudari et al. (2020) | Integrated method using random trees (RTs), decision tree of C5.0, support vector machine (SVM), and decision tree of Chi-squared automatic interaction detection (CHAID) | Quantitative | Asia | Needs regular, accurate, and large volumes of data | The random tree model yielded the highest accuracy rate among the models compared |

| 29 | Kanagasundaram et al. (2016) | Using in-patient Acute Kidney Injury (AKI) Computerised Clinical Decision Support (CCDS) | Qualitative, 24 Interviews | Australia | 1. Disrupts workflow 2. Seen as a hindrance to work | Systems intruding on workflow, particularly involving complex interactions, may be unsustainable even if there has been a positive impact on care |

| 30 | Kayvanpour et al. (2021) | Genome-wide miRNA levels in a prospective cohort of patients with clinically suspected Acute Coronary Syndromes by applying an in Silico Neural Network | Quantitative, 2,930 samples | Europe | Needs large and accurate data to produce reliable outcomes | The approach opens the possibility to include multi-modal data points to further increase precision and performance classification of other differential diagnoses |

| 31 | Khong et al. (2015) | Adoption of wound clinical decision support system as an evidence-based technology by nurses | Qualitative, 14 registered nurses | Asia | 1. Can lead to loss of essential clinical skills 2. Prone to errors | Improved knowledge about nurses’ decision to interact with the computer environment in a Singapore context |

| 32 | Kim et al. (2017) | Neural Network (NN) based prediction of Coronary Heart Disease risk using feature correlation analysis (NN-FCA) | Quantitative, 4,146 subjects | Asia | Depends on accurate and large data set | The model was better than Framingham risk score (FRS) in terms of coronary heart diseases risk prediction |

| 33 | Kitzmiller et al. (2019) | Neonatal intensive care unit clinician perceptions of a continuous predictive analytics technology | Qualitative, 22 clinicians | North America | Accuracy in doubt | Combination of physical location as well as lack of integration into workflow or procedures of using data in care decision-making may have delayed clinicians from routinely paying attention to the data |

| 34 | Krittanawong et al. (2021) | Deep neural network to predict in-hospital mortality in patients with Spontaneous Coronary Artery Dissection (SCAD) | Quantitative, 375 SCAD patients | North America | Relies on large volume, accurate, and regular patient data | The deep neural network model was associated with higher predictive accuracy and discriminative power than logistic regression or ML models for identification of patients with ACS due to SCAD prone to early mortality |

| 35 | Lee (2015) | Emergency department decision support system that couples machine learning, simulation, and optimisation to address improvement goals | Mixed | North America | Inadequate regulations | General improvement in patient care at the emergency care department |

| 36 | Liberati et al. (2017) | Barriers and facilitators to the uptake of an evidence-based Computerised Decision Support Systems (CDSS) | Qualitative, 30 participants (healthcare workers) | Europe | Undermines professional autonomy and exposes practitioners to potential medico-legal issues | Attitudes of healthcare workers towards scientific evidence and guidelines, quality of inter-disciplinary relationships, and organisational ethos of transparency and accountability need to be considered when exploring facility readiness to implement AI tools |

| 37 | Li et al. (2021) | Machine learning-aided risk stratification system to simplify the procedure of the diagnosis of Coronary Artery Disease | Quantitative, 5,819 patients | Asia | Its efficacy depends on data availability and accuracy | The model could be useful in risk stratification of prediction for the coronary artery disease |

| 38 | Liu et al. (2021) | Machine learning models for predicting mortality in Coronary Artery Disease (CAD) patients with Atrial Fibrillation (AF) | Quantitative | Asia | Faulty data can result in errors | Combining the performance of all aspects of the models, the regularisation logistic regression model was recommended to be used in clinical practice |

| 39 | Love et al. (2018) | Using AI-based Computer-Assisted Diagnosis (CADx) in training healthcare workers | Quantitative, 32 palpable breast lumps examined by 3 non-radiologists | North America | 1. Could return negative diagnosis 2. High cost of care 3. Patients are sceptical about final decisions | A portable ultrasound system with CADx software can be successfully used by first-level healthcare workers to triage palpable breast lumps |

| 40 | MacPherson et al. (2021) | Costs and yield from systematic HIV-TB screening, including computer-aided digital chest X-Ray | Quantitative, 1462 residents | Africa | High cost of healthcare | Computer-aided digital chest X-Ray with universal HIV screening significantly increased the timeliness and completeness of HIV and TB diagnosis |

| 41 | McBride et al. (2019) | Knowledge and attitudes of operating theatre staff towards robotic-assisted surgery programme | Quantitative, 164 respondents (clinicians) | Australia | 1. Sceptical about its efficacy in patient care 2. Increased cost of care | Clinicians embraced the application of the robotic-assisted surgery programme in the theatre |

| 42 | McCoy (2017) | Machine learning-based sepsis prediction algorithm to identify patients with sepsis earlier | Quantitative, 1328 respondents | North America | Disruptiveness (alert fatigue) | The machine learning-based sepsis prediction algorithm improved patient outcomes |

| 43 | Mehta et al. (2021) | Assess knowledge, perceptions, and preferences about AI use in medical education | Quantitative survey, 321 medical students | North America | 1. Create new challenges to healthcare equity 2. Create new ethical and social challenges 3. Limited in the provision of empathetic, psychiatric, personal, and counselling care | Optimistic about AI’s capabilities to carry out a variety of healthcare functions, including clinical and administrative. Sceptical about AI utility in personal counselling and empathetic care |

| 44 | Morgenstern et al. (2021) | Determine the impacts of artificial intelligence (AI) on public health practice | Qualitative (inter-continental interviews), 15 experts in public health and AI | North America and Asia | 1. Inadequate experts in AI use 2. Poor healthcare data quality for AI training and learning 3. Introduce bias 4. Escalate healthcare inequity 5. Poor AI regulation | Experts are cautiously optimistic about AI’s potential to improve diagnosis and disease surveillance. However, perceived substantial barriers like inadequate regulation exist |

| 45 | Motwani et al. (2017) | Traditional prognostic risk assessment in patients undergoing non-invasive imaging | Quantitative, 10,030 patients | North America | Efficacy depends on accurate, large, and regular data | Machine learning combining clinical and coronary computed tomographic angiography data was found to predict 5-year all-cause mortality significantly better than existing models |

| 46 | Naushad et al. (2018) | Coronary artery disease risk and percentage stenosis prediction models using ensemble machine learning algorithms, multifactor dimensionality reduction and recursive partitioning | Quantitative, 648 subjects | Asia | Needs accurate data to function well | The model exhibited higher predictability both in terms of disease prediction and stenosis prediction |

| 47 | Nydert et al. (2017) | Clinical Decision Support System (CDSS) among paediatricians | Qualitative, 17 clinicians | Europe | 1. Risk of overreliance on system 2. Cannot function independently | Generally, the system is considered very useful to patient drug management |

| 48 | O’Leary et al. (2014) | Support systems in healthcare and the concept of decision support for clinical pathways | Mixed, 19 Clinicians | Europe | 1. Lack of autonomy by clinicians 2. Complication of the care process | The success of these systems depends on factors outside the systems themselves |

| 49 | Omar et al. (2017) | Paediatrician’s acceptance, perception and use of Electronic Prescribing Decision Support System (EPDSS) using extended Technology Acceptance Model (TAM2) | Qualitative | North America | 1. Not user friendly 2. Does not cancel medications not needed | Although paediatricians are positive of the usefulness of EPDSS, there are problems with acceptance due to usability issues of the system |

| 50 | Orlenko et al. (2020) | Tree-based Pipeline Optimisation Tool (TPOT) to predict angiographic diagnoses of Coronary Artery Disease (CAD) | Quantitative | Europe | Efficacy depends on accurate and large data set | Phenotypic profile that distinguishes non-obstructive coronary artery disease patients from non-coronary artery disease patients is associated with higher precision |

| 51 | Panicker and Sabu (2020) | Factors influencing the adoption of Computer-Assisted Medical Diagnosing (CMD) systems for TB | Qualitative, 18 healthcare workers | Asia | Prone to medical errors | Human, technological, and organisational characteristics influence the adoption of CMD system for TB |

| 52 | Petersson et al. (2022) | Explore perceived challenges about AI use in healthcare | Qualitative, 26 healthcare leaders | Europe | 1. Liability and legal issues 2. Setting standards and complying with quality requirements 3. Cost of operating AI 4. Acceptance of AI by professionals | Healthcare leaders highlighted several implementation challenges in relation to AI within and beyond the healthcare system in general and their organisations in particular |

| 53 | Petitgand et al. (2020) | AI-based Decision Support System (DSS) in the emergency department | Qualitative, 20 Clinicians and AI developers | North America | System is prone to errors | The study points to the importance of considering interconnections between technical, human and organisational factors to better grasp the unique challenges raised by AI systems in healthcare |

| 54 | Pieszko (2019) | Risk assessment tool based on easily obtained features, including haematological indices and inflammation markers | Quantitative, 5,053 patients | Europe | Efficacy depends on large and accurate patient data | The machine-learning model can provide long-term predictions of accuracy comparable or superior to well-validated risk scores |

| 55 | Ploug et al. (2021) | Examine preferences for the performance and explainability of AI decision making in health care | Quantitative survey, 1027 respondents (general public) | Europe | 1. Unintentional harm 2. Potential for bias and discrimination 3. Consent issues | Physicians must take ultimate responsibility for diagnostics and treatment planning, AI decision support should be explainable, and AI systems must be tested for discrimination |

| 56 | Polero (2020) | Random forest and elastic net algorithms to improve acute coronary syndrome risk prediction tools | Quantitative, 20,078 patients’ data | South America | Performance depends on large and accurate data | Random forest significantly outperformed existing models and can perform at par with previously developed scoring metrics |

| 57 | Prakash and Das (2021) | Factors influencing the uptake and use of intelligent conversational agents in mental healthcare | Qualitative | Asia | Inadequate legal regimes to protect patients | AI tools have proven efficacious in improving the health outcomes of patients. However, there are inadequate legal regimes to guide usage |

| 58 | Pumplun et al. (2021) | Factors that influence the adoption of machine learning systems for medical diagnosis in clinics | Qualitative, 22 healthcare workers | Europe | Unclear regulations to protect patients | Many clinics still face major problems in the application of machine learning systems for medical diagnostics |

| 59 | Richardson et al. (2021) | Examining patient views of diverse applications of AI in healthcare | Qualitative (FGDs), 87 patients | North America | 1. Breach of privacy 2. Discrimination and bias 3. Hacking and manipulation 4. Trust issues 5. Data integrity 6. Unknown harm 7. Breach of choice and autonomy 8. Lack of clear opportunity to challenge AI decisions 9. Cost of care 10. Inconsistent with insurance coverage 11. Over-dependence on AI leading to loss of skills and competencies | Addressing patient concerns relating to AI applications in healthcare is essential for effective clinical implementation |

| 60 | Romero-Brufau (2020) | Reduce unplanned hospital readmissions through the use of artificial intelligence-based clinical decision support | Quantitative, 2,460 respondents | North America | Heavily dependent on quality and regular data | Six months following a successful application of intervention, readmissions rates decreased by 25% |

| 61 | Sarwar et al. (2019) | Examine perspectives on AI implementation in clinical practice | Quantitative survey, 487 pathologists (inter-continental) | North America and Europe | 1. New medical-legal issues 2. Fear of errors 3. Inadequate skills and knowledge of AI 4. Erodes skills and competencies of clinicians | Most respondents envision eventual rollout of AI-tools to complement and not replace physicians in healthcare |

| 62 | Scheetz et al. (2021) | Investigate the diagnostic performance, feasibility, and end-user experiences of AI assisted diabetic retinopathy | Mixed, 236 patients, 8 HCWs | Australia | 1. Informed consent issues 2. Unintended errors | AI in healthcare well-accepted by patients and clinicians |

| 63 | Schuh (2018) | Creation and modification of Arden-Syntax-based Clinical Decision Support Systems (CDSSs) | Quantitative | Australia | 1. Inadequate legal regime 2. Poor data interoperability | Despite its high utility in patient care, inconsistent electronic data, lack of social acceptance among healthcare personnel, and weak legislative issues remain |

| 64 | Sendak (2020) | Integration of a deep learning sepsis detection and management platform, sepsis watch, into routine clinical care | Quantitative | North America | 1. High cost of care 2. Inadequate regulations | Although there is no playbook for integrating deep learning into clinical care, learning from the sepsis watch integration can inform efforts to develop machine learning technologies at other healthcare delivery systems |

| 65 | Sherazi et al. (2020) | Propose a machine learning-based 1-year mortality prediction model after discharge in clinical patients with acute coronary syndrome | Quantitative, 10,813 subjects | Asia | May produce medical error due to faulty data | The model would be beneficial for prediction and early detection of major adverse cardiovascular events in acute coronary syndrome patients |

| 66 | Sujan et al. (2022) | Explore views about AI in healthcare | Qualitative, 26 patients, hospital staff, technology developers, and regulators | Europe | 1. Unforeseen errors 2. Inadequate ethical and legal regime 3. Inability to respond to socio-cultural diversity 4. Inadequate situation awareness between AI and humans 5. Inadequate humanity | Safety and assurance of healthcare AI need to be based on a systems approach that expands the current technology-centric focus |

| 67 | Terry et al. (2022) | Explore views about the use of AI tools in healthcare | Qualitative, 14 primary healthcare and digital health stakeholders | North America | 1. Current data system inadequate to support AI everywhere 2. Inadequate manpower and resources to rollout AI 3. Loss of control in decision making 4. Unpredictable errors and mistakes 5. Ethical, legal, and social implications | Use of AI in primary healthcare may have a positive impact, but many factors need to be considered regarding its implementation |

| 68 | Tscholl et al. (2018) | Perceptions about patient monitoring technology (visual patient) for transforming numerical and waveform data into a virtual model | Mixed, 128 interviews (anesthesiologists) with 38 online surveys | Europe | Not very effective in patient monitoring | The new avatar-based technology improves the turnaround time in patient care |

| 69 | Ugarte-Gil et al. (2020) | A socio-technical system to implement a computer-aided diagnosis | Mixed, Twelve clinicians | South America | 1. May interfere with workflow 2. May be insensitive to local context 3. Incompatible with existing technology | Several infrastructure and technological challenges impaired the effective implementation of mHealth tool, irrespective of its diagnosis accuracy |

| 70 | van der Heijden (2018) | Incorporation of IDx-diabetes retinopathy (IDx-DR 2.0) in clinical workflow, to detect retinopathy in persons with type 2 diabetes | Quantitative, 1415 respondents | Europe | Few errors recorded | High predictive validity recorded for IDx-DR 2.0 device |

| 71 | van der Zander et al. (2022) | Investigate perspectives about AI use in healthcare | Quantitative survey, 492 (377 patients and 80 clinicians) | Europe | 1. Potential loss of personal contact 2. Unintended errors | Both patients and physicians hold positive perspectives towards AI in healthcare |

| 72 | Visram et al. (2023) | Investigate attitudes towards AI and its future applications in medicine and healthcare | Qualitative (FGD), 21 young persons | Europe | 1. Lack of humanity 2. Inadequate regulations 3. Lack of trust 4. Lack of empathy | Children and young people to be included in developing AI. This requires an enabling environment for human-centred AI involving children and young people |

| 73 | Wang et al. (2021) | AI-powered clinical decision support systems in clinical decision-making scenarios | Qualitative, 22 physicians | Asia | 1. May conflict with diagnostic decisions by clinicians 2. May interfere with workflow 3. Subject to diagnostic errors 4. May be insensitive to local context 5. There is suspicion for AI tools 6. Incompatible with existing technology | Despite difficulties, there is a strong and positive expectation about the role of AI clinical decision support systems in the future |

| 74 | Wittal et al. (2022) | Survey public perception and knowledge of AI use in healthcare, therapy, and diagnosis | Quantitative survey, 2001 respondents (general public) | Europe | 1. Privacy breaches 2. Data integrity issues 3. Lack of a clear legal framework | Need to improve education and perception of medical AI applications by increasing awareness, highlighting the potentials, and ensuring compliance with guidelines and regulations to handle data protection |

| 75 | Xu (2020) | Medical-grade wireless monitoring system based on wearable and artificial intelligence technology | Quantitative | Asia | 1. Increased cost of care 2. Doubt about its suitability for all patient categories | The AI tool can provide reliable psychological monitoring for patients in general wards and has the potential to generate more personalised pathophysiological information related to disease diagnosis and treatment |

| 76 | Zhai et al. (2021) | Develop and test a model for investigating the factors that drive radiation oncologists’ acceptance of AI contouring technology | Quantitative, 307 respondents | Asia | Medical errors | Clinicians had very high perceptions about AI-assisted technology for radiation contouring |

| 77 | Zhang et al. (2020) | Provide Optimal Detection Models for suspected Coronary Artery Disease detection | Quantitative, 62 patients | Asia | Depends on large and accurate data | Multi-modal features fusion and hybrid features selection can obtain more effective information for coronary artery disease detection and provide a reference for physicians to diagnose coronary artery disease patients |

| 78 | Zheng et al. (2021) | Clinicians’ and other professional technicians’ familiarity with, attitudes towards, and concerns about AI in ophthalmology | Quantitative, 562 Clinicians (291) and other technicians (271) | Asia | Ethical concerns | AI tools are relevant in ophthalmology and would help improve patient health outcomes |

| 79 | Zhou et al. (2019) | Examine concordance between the treatment recommendation proposed by Watson for Oncology and actual clinical decisions by oncologists in a cancer centre | Quantitative, 362 cancer patients | Asia | Insensitive to local context | There is concordance between AI tools and human clinician decisions |

| 80 | Zhou et al. (2020) | Develop and internally validate a Laboratory-Based Model with data from a Chinese cohort of inpatients with suspected Stable Chest Pain | Quantitative, 8,963 patients | Asia | Needs very accurate and large volume of data | The present model provided a large net benefit compared with coronary artery diseases consortium 1/2 score (CAD1/2), Duke clinical score, and Forrester score |

Table 4.

Summary of themes emerging from articles on the perceived threats of artificial intelligence tools in healthcare

| Ser | Theme | Articles |

|---|---|---|

| 1 | Perceived unpredictable errors | 2,55–107 |

| 2 | Inadequate policy and regulatory regime | 56,58–60,78,79,89,72–94,96,97,101,108–123 |

| 3 | Perceived medical paternalism | 2,55,60,79,84,92,96,97,99–102,116,118,125–132 |

| 4 | Increased healthcare cost and disparities in insurance coverage | 2,76,77,119,122,133 |

| 5 | Breach of privacy and data security | 2,55,79,81,119,123 |

| 6 | Potential for bias and discriminatory services | 2,57,79,89,112 |

Results

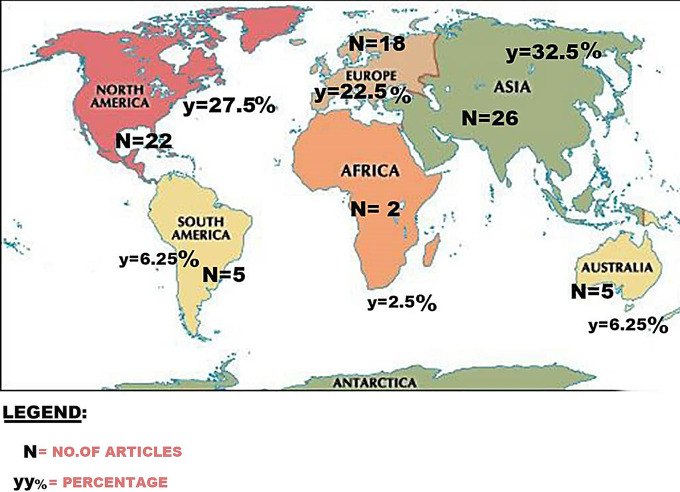

This scoping review covered literature from 2010 to 2023 on the perceived threats of AI use in healthcare to the rights and safety of patients. We screened 1,320 articles, of which 519 (39%) studied AI application in healthcare, but only 80 (15%) met the inclusion threshold, passed the quality rating and were included in this review. Of the 80 articles, 48 (60%) applied a quantitative approach, 23 (29%) a qualitative approach, and 9 (11%) mixed methods. By year of publication, the 80 articles were distributed as follows: 2023, 1 (1.25%); 2022, 7 (8.75%); 2021, 24 (30%); 2020, 21 (26.25%); 2019, 9 (11.25%); 2018, 7 (8.75%); 2017, 7 (8.75%); 2016, 1 (1.25%); 2015, 2 (2.5%); and 2014, 1 (1.25%). The years 2020 and 2021 alone therefore accounted for the majority (56.25%) of the articles under review. Furthermore, 26 (32.5%) of the articles came from Asia alone, 22 (27.5%) from North America alone, 18 (22.5%) from Europe alone, 5 (6.25%) from Australia alone, 5 (6.25%) from South America alone, 2 (2.5%) from Africa alone, 1 (1.25%) from North America and Asia, and 1 (1.25%) from North America and Europe (see Fig. 2 below).

Fig. 2.

Geographical distribution of articles used in the current review
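The study-selection figures reported above can be re-derived with a few lines of arithmetic. The following is a minimal sketch, not part of the review's method: the counts are copied from the Results paragraph, while the variable names and the helper `share` are illustrative assumptions introduced here.

```python
# Counts copied from the Results paragraph; no new data is introduced.
SCREENED = 1320          # articles screened
AI_IN_HEALTHCARE = 519   # screened articles that studied AI in healthcare
INCLUDED = 80            # articles included in the review

by_design = {"quantitative": 48, "qualitative": 23, "mixed": 9}
by_year = {2023: 1, 2022: 7, 2021: 24, 2020: 21, 2019: 9,
           2018: 7, 2017: 7, 2016: 1, 2015: 2, 2014: 1}
by_region = {"Asia": 26, "North America": 22, "Europe": 18,
             "Australia": 5, "South America": 5, "Africa": 2,
             "North America and Asia": 1, "North America and Europe": 1}


def share(count, total):
    """Return count as an unrounded percentage of total (hypothetical helper)."""
    return 100 * count / total


# Each breakdown should account for every included article exactly once.
for name, breakdown in [("design", by_design), ("year", by_year),
                        ("region", by_region)]:
    assert sum(breakdown.values()) == INCLUDED, name

print(f"AI in healthcare, of screened: {share(AI_IN_HEALTHCARE, SCREENED):.1f}%")
print(f"Included, of AI in healthcare: {share(INCLUDED, AI_IN_HEALTHCARE):.1f}%")
print(f"Published in 2020 or 2021: "
      f"{share(by_year[2020] + by_year[2021], INCLUDED):.2f}%")
for region, n in by_region.items():
    print(f"{region}: {n} ({share(n, INCLUDED):.2f}%)")
```

Note that the two screening percentages use different denominators: 39% is taken over the 1,320 screened articles, whereas 15% is taken over the 519 articles that studied AI in healthcare.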

Perceived unpredictable errors

We report that a majority of the articles reviewed revealed a widespread concern over the possibility of unpredictable errors associated with the use of AI tools in patient care. Of the 80 articles reviewed, 56 (70%) [2, 55–107] reported the concern of AI tools committing unintended errors during care. Like all machines, intelligent or not, AI tools could commit errors with potentially immeasurable consequences for patients [60–65, 100, 103, 106]. This has triggered some level of hesitation and suspicion towards AI applications in healthcare [2, 57, 63, 70]. Perhaps because the use of AI tools in healthcare is largely new and still emerging, uncertainties and suspicions about their abilities and safety persist [1, 3, 6, 25–29]. Moreover, there are centuries of personal and documented accounts of medical errors (avoidable or not) within the healthcare industry, but it remains unclear who becomes responsible or liable if AI tools commit errors (see Figs. 3 and 4).

Fig. 3.

Summary of key findings from previous reviews on the use of artificial intelligence tools in healthcare

Fig. 4.

Summary of key findings in current review on the use of artificial intelligence tools in healthcare

Inadequate policy and regulatory regime

The public was also seriously concerned about the lack of adequate policies and regulations, specifically on AI use in healthcare, that define the legal and ethical standards of practice. This is evident in 29 (36%) of the articles [56, 58–60, 78, 79, 89, 72–94, 96, 97, 101, 108–124] reviewed in this study. As with all machines, AI tools could get it wrong through malfunction [56, 78, 79, 94, 97, 101], with potentially terrible consequences for the health and well-being of patients. Where, then, does the burden of liability lie in cases of breach of duty of care, breach of privacy, trespass, or even negligence? There were no specific regulations on AI use in healthcare that define the scope and direction of liability for the ‘professional misconduct’ of these machines, whether their conduct is intelligent or unintelligent [59, 60, 78, 79, 96, 97, 99]. This finding is anticipated because the healthcare sector is already characterised by disputes between patients and medical facilities (including their agents) [12, 22, 23, 48]. Generally, patients want to be clear on what remedies accrue to them when there is a breach in duty of care. Healthcare professionals, for their part, want to be clear on who takes responsibility when AI tools provide care that is sub-optimal [12, 22, 48]. Somebody must be responsible: is it the AI tool, the manufacturer, the healthcare facility, or someone else?

Perceived medical paternalism

The application of AI tools could also interfere with traditional patient-doctor interactions and potentially undermine patient satisfaction and the overall quality of care. This was reported by 22 (27%) of the articles reviewed [2, 55, 60, 79, 84, 92, 96, 97, 99–102, 116, 118, 125–132]. We argue that AI tools lack the humanity required in patient care. Though AI tools may have the ability to better predict the moods of patients, they may not be trusted to competently provide very personal and private services, such as psychological and counselling care [2, 55, 84, 92, 101, 102, 116, 118]. Thus, the personal and human touch that defines the relationship between patients and human clinicians may not be guaranteed through AI applications [2, 97, 99, 100, 118, 125, 129, 132]. It is to be expected that patients will fear losing the opportunity to interact directly with human caregivers (through verbal and non-verbal cues) [11–15, 23]. This raises the question of whether the use of AI tools is returning patient care to the biomedical model of healthcare. The traditional human-to-human interactions between patients and clinicians may be lost when machine clinicians replace human clinicians in patient care [11–15, 23].

Increased healthcare cost and disparities in insurance coverage

Evidence from 7(9%) of the articles [2, 76, 77, 119, 122, 133] also showed that the public is concerned that the use of AI tools will increase the cost of healthcare and insurance coverage. Given that the adoption of AI tools in healthcare could be capital-intensive and could inflate the operational cost of care, patients are likely to be forced to pay far more for services than they can afford. Moreover, most health insurance policies do not yet cover services provided by AI tools, leading to disputes over the payment of bills for services relating to AI applications [2, 77, 119, 133]. Already, healthcare cost is a major concern for patients globally [7, 11, 16, 27]. Therefore, it is legitimate for patients and the public to become anxious about the possibility of AI tools worsening the rising cost of healthcare and triggering disparities in health insurance coverage. The costs of machine learning, maintenance, data, electricity, the security and safety of AI tools and software, and the training and retraining of healthcare professionals in the use of AI tools, along with many other related costs, could escalate the overhead cost of providing and receiving essential healthcare services [7, 11, 16, 27].

Breach of privacy and data security

We report that the public is concerned about the breach of patient privacy and data security by AI tools. As reported by 5(7%) of the articles [2, 55, 79, 81, 119, 123] reviewed, AI tools have the potential to gather large volumes of patient data in a split second, sometimes without the knowledge of the patients or their legal agents. As argued by Morgenstern et al. [79] and Richardson et al. [2], given the sheer complexity and automated abilities of AI tools, it will be difficult to foretell when and how a specific patient's data are acquired and used, a situation that presents a ‘black box’ for patients. Thus, apart from what the patient may be aware of, there is no certainty about what else these machine clinicians could procure, possibly unlawfully, about the patient. Furthermore, it is unclear how patient data are protected against wrongful use and manipulation [2, 119, 123]. These AI tools could, wittingly or unwittingly, disclose privileged information about a patient, with potentially dire consequences for the privacy and security of patients. It is expected that patients would be apprehensive about the privacy and security of their personal information stored by AI tools [5, 8, 10–16]. Given that these AI tools could act independently, patients would naturally be worried about what happens to their personal information.

Potential for bias and discriminatory services

The results further suggest that there is potential for discrimination and bias on a large scale when AI tools are used in healthcare. As reported by 5(6%) of the articles [2, 57, 79, 89, 112] we reviewed, the utility of AI is a function of its design and the quality of training provided [2, 57, 112]. In effect, if the data used for training these machines discriminate against a population or group, this discrimination could be perpetuated and potentially escalated when AI tools are deployed on a wider scale to provide care [2, 57, 79]. Thus, AI tools could perpetuate and escalate pre-existing biases and discrimination, leaving affected populations more marginalised than ever [2, 89, 112]. Bias and discrimination in patient care are common features of healthcare globally [8, 13, 37]. Therefore, the fear that AI tools could be set up to provide biased and discriminatory care is both real and legitimate, because their actions and inactions are based on the data and machine learning provided [8, 13, 37].

Discussion

There is steady growth in AI research across diverse disciplines and activities globally [1, 134]. However, previous studies [4, 23] raised concerns about the paucity of empirical data on AI use in healthcare. For instance, Khan et al. [23] argued that the majority of studies on AI usage in healthcare are unevenly distributed across the world and that many are conducted in non-clinical environments. Consistent with these findings, the current review showed that there is inadequate empirical evidence on the perceived threats of AI use in healthcare. Of the 519 articles on AI use in healthcare, only 80(15%) met the inclusion threshold of our study. Moreover, affirming findings from previous studies [21, 135], we found an uneven distribution of the selected articles across the continents, with the majority (n = 66; 82.5%) coming from three continents: Asia (n = 26; 32.5%), North America (n = 22; 27.5%), and Europe (n = 18; 22.5%). We discuss our review findings under perceived unpredictable errors, inadequate policy and regulatory regime, perceived medical paternalism, increased healthcare cost and disparities in insurance coverage, perceived breach of privacy and data security, and potential for bias and discriminatory services.

Perceived unpredictable errors

There is little contention about the capacity of AI tools to significantly reduce diagnostic and therapeutic errors in healthcare [10, 138–140]. For instance, the huge data processing capacity and novel epidemiological features of modern AI tools are very effective in the fight against complex infectious diseases such as SARS-CoV-2 and are a game-changer in epidemiological research [140]. However, previous studies [1, 12, 22] found that AI tools are limited by factors that could undermine their efficacy and produce adverse outcomes for patients. For instance, power surges, poor internet connectivity, flawed data, faulty algorithms, and hacking could confound the efficacy of AI applications in healthcare. Indeed, hacking and internet failure could constitute the most dangerous threats to the use of AI tools in healthcare, especially in resource-limited countries where internet speed and penetration are very poor [8–13]. Furthermore, we found that fear of unintended harm to patients by AI tools was widely reported by the articles (70%) we reviewed. For instance, the potential for unpredictable errors was raised in a study that investigated perspectives about AI use in healthcare [99]. Similarly, Meehan et al. [141] argued that the generalizability and clinical utility of most AI applications are yet to be formally proven. Concerns over AI-related errors also featured in a study on the diagnostic performance, feasibility, and end-user experiences of AI-assisted diabetic retinopathy [88], and in the application of an AI-based Decision Support System (DSS) in the emergency department [81].

The evidence is that patients feared being told “we do not know what went wrong” when AI tools produce adverse outcomes [22]. This is because errors of commission or omission are associated with all machines, including these machine clinicians, whether intelligent or not [12, 22]. Therefore, there is merit in the argument that AI tools should be closely monitored and supervised to avoid, or at least minimise, the impact of unintended harm to patients [138]. We are of the view that the attainment of universal health coverage, including access to quality essential healthcare services, medicines and vaccines for all by 2030 (SDG 3.8), could be accelerated through evidence-based application of AI tools in healthcare provision [20]. Given that the use of AI tools in healthcare is generally new and still emerging [7, 9, 15, 25–29], the uncertainties and suspicions about the trustworthiness of such tools (that is, their capabilities and safety) are natural reactions that should be expected from patients and the general public. However, these concerns could ultimately slow down the achievement of SDG 3.8. Moreover, medical errors (avoidable or not) occur frequently within the healthcare industry, with dire consequences for patients [13, 29, 30, 37]. Thus, the finding comes as no surprise, because medical care has always been characterised by uncertainties and unpredictable outcomes with dire consequences for patients, families, facilities and the health system [4, 9, 28, 31].

Inadequate policy and regulatory regime

The fragility of human life requires that those in the healthcare business are held to the highest standards of practice and accountability [13, 24, 137]. Previous studies [10, 22, 136] argued that healthcare must be delivered consistent with ethicolegal and professional standards that uphold the sanctity of life and respect for individuals. In keeping with this, our review showed that the public is worried about the lack of adequate protection against perceived infractions, deliberate or not, by AI tools in healthcare. Concerns over the lack of a clear policy regime to regulate the use of AI applications in patient care featured in a study that integrated a deep learning sepsis detection and management platform, Sepsis Watch, into routine clinical care [92]. Similar concerns were raised in a study that evaluated consecutive patients for suspected acute coronary syndrome [11]. Similar concerns were also found in a study that used neural network (NN) algorithm-based models to improve the performance of the Global Registry of Acute Coronary Events (GRACE) score in predicting in-hospital mortality and acute coronary syndrome [10].

The contention is that existing policy and legal frameworks are not adequate or clear enough on what remedies accrue to patients who suffer adverse events during AI care. Our view is that patients may be at risk of a new form of discrimination, especially targeted at minority groups, persons with disabilities, and sexual minorities [14]. The need for a robust policy and regulatory regime to protect patients from potential exploitation by AI tools is urgent and apparent. This finding is not strange, because the healthcare sector is already regulated by policies that cover the various services [11, 30, 31, 35]. Moreover, because patients are normally the vulnerable parties in the patient-healthcare provider relationship [30, 32–36], we argue that patients would seek adequate protection from the actions and inactions of AI tools, but unfortunately, these machine tools may not have the capabilities to provide it. Human clinicians should be equally concerned about who takes responsibility for infractions of these machine clinicians during patient care [8, 24, 29, 35]. Therefore, there is a need for policy that clearly defines and gives meaning to the scope and nature of liability in the relationship between human and machine clinicians during patient care.

Perceived medical paternalism

Intelligent machines hold tremendous prospects for healthcare, but human interaction is still invaluable [3, 21, 141–144]. According to Chekroud et al. [145], the overriding strength of AI models in healthcare is their exceptional ability to leverage large datasets to foretell and prescribe the most suitable course of intervention for prospective patients. Unfortunately, the ability of AI models to predict treatment outcomes in schizophrenia, for example, is highly context-dependent and has limited generalizability. Our review revealed that the public is equally worried that AI tools could limit the quality of interaction between patients and human clinicians. Through empathy and compassion, human clinicians are better able to procure effective patient participation in the care process and reach decisions that best serve the personal and cultural values, norms and perspectives of the patients [143].

We found that as AI tools provide various services and care, human clinicians may end up losing some essential skills and professional autonomy [24]. For example, concerns over a reduction in critical thinking and professional autonomy were raised in several studies, including a study that used a socio-technical system to implement computer-aided diagnosis [97], a study on adherence to antimicrobial prescribing guidelines and the adoption of Computerised Decision Support Systems (CDSSs) [12], and a study on barriers and facilitators to the uptake of an evidence-based CDSS [64]. Thus, human medics need to take a lead role in the care process and seize every opportunity to continually practise and improve their skills. We believe that patients would normally want to interact directly with human clinicians (through verbal and non-verbal cues) and be convinced that their conditions are well understood by human beings [2, 3, 16, 31]. Typically, patients want to build a cordial relationship based on trust with their human clinicians and other human healthcare professionals. However, this may not be feasible when AI clinicians are involved in the care process [11, 16, 26], especially when they act independently. Therefore, the traditional human-to-human interactions between patients and human medics may be lost when machine clinicians take over patient care.

Increased healthcare cost and disparities in insurance coverage