Abstract

The field of quantitative biology (q-bio) seeks to provide precise and testable explanations for observed biological phenomena by applying mathematical and computational methods. The central goals of q-bio are to (1) systematically propose quantitative hypotheses in the form of mathematical models, (2) demonstrate that these models faithfully capture a specific essence of a biological process, and (3) correctly forecast the dynamics of the process in new, and previously untested circumstances. Achieving these goals depends on accurate analysis and incorporating informative experimental data to constrain the set of potential mathematical representations. In this introductory tutorial, we provide an overview of the state of the field and introduce some of the computational methods most commonly used in q-bio. In particular, we examine experimental techniques in single-cell imaging, computational tools to process images and extract quantitative data, various mechanistic modeling approaches used to reproduce these quantitative data, and techniques for data-driven model inference and model-driven experiment design. All topics are presented in the context of additional online resources, including open-source Python notebooks and open-ended practice problems that comprise the technical content of the annual Undergraduate Quantitative Biology Summer School (UQ-Bio).

Keywords: stochastic gene expression, fluorescence microscopy, model inference, quantitative biology, single-cell imaging, mechanistic models

1. Introduction

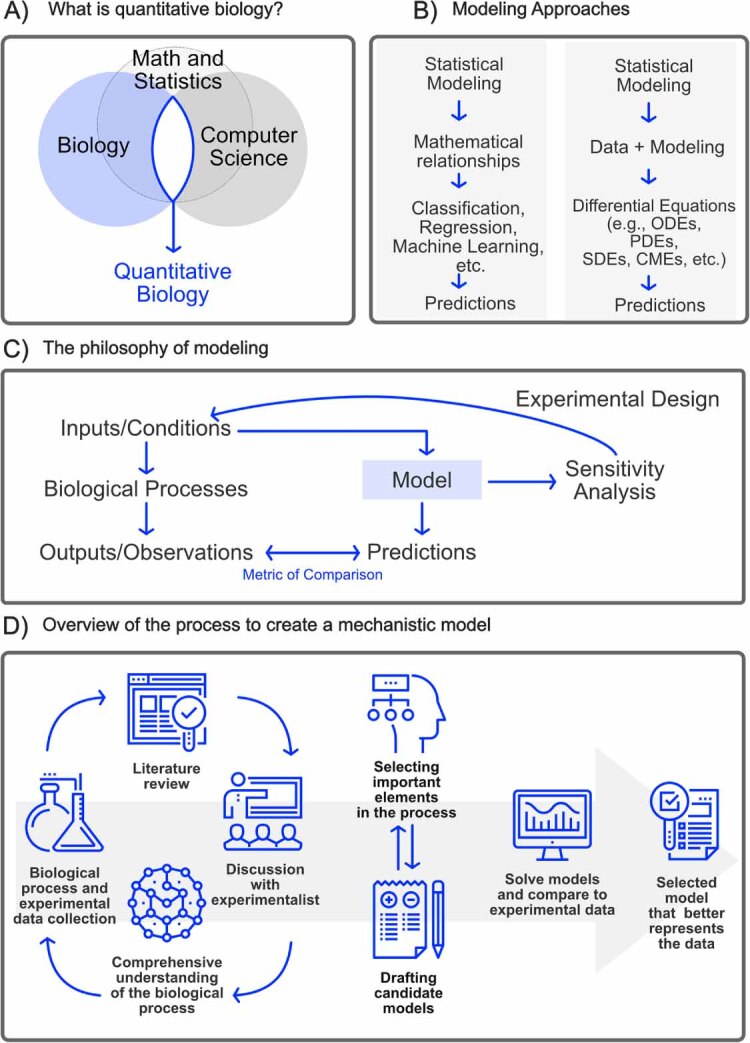

Quantitative biology is an interdisciplinary field that integrates biology, computer science, mathematics, and engineering to understand the behavior of biological systems (figure 1(A)). By employing quantitative methods, researchers have made significant strides in studying vital biological processes, including gene expression [1], signaling pathways [2], viral dynamics [3, 4], microbial community dynamics [5], and more. Quantitative biology is crucial in advancing biomedical research [6], drug development [7], and synthetic biology [8]. The models developed in quantitative biology have far-reaching implications, ranging from designing personalized medical treatments [9] to shaping public policy during pandemics [10].

Figure 1.

Overview of quantitative biology. (A) Quantitative biology lies at the intersection of biology, computer science, math and statistics. (B) Both statistical and mechanistic models attempt to infer relationships between experimental observations. The key difference is that mechanistic models also attempt to constrain these relationships to follow known or hypothesized physical laws or biochemical reactions. (C) Modeling involves an iterative procedure of collecting diverse data sets, making predictions for feasible experiments, constraining models to match resulting data, and analyzing model sensitivities to target more informative experimental conditions that are most likely to glean further insight. (D) The creating of mechanistic models involves close collaboration between experimentalists and computational scientists.

1.1. History of quantitative biology

Using quantitative methods to study life sciences is not new. Early examples can be traced back to the 19th century when Pierre-François Verhulst introduced the logistic function to model population growth [11]. In the late 19th century, Cato Guldberg and Peter Waage elucidated the principle for the Law of Mass Action to describe the stoichiometry and rates of elementary chemical reactions [12]. At the beginning of the 20th century, Leonor Michaelis and Maud Menten extended upon these to introduce the model describing the kinetics of substrate and products in enzyme-catalyzed reactions [13]. During the same period, Lotka–Volterra (or predator-prey) equations were introduced to model the evolution of interacting populations of distinct species [14, 15]. In the 1950s, Alan Hodgkin and Andrew Huxley introduced a mechanistic model to describe the initiation and propagation of action potentials in neurons [16]. During the ‘60s and ‘70s, scientists combined experimental observations with statistical models to determine genetic variation and predict quantitative traits in livestock and crops [17]. In 1965, Margaret Dayhoff, the ‘mother of bioinformatics,’ pioneered the development of computational methods in studying biological molecules [18]. Later in the ‘90s, large data sets of DNA, RNA, and protein sequences became available during the genome sequencing era, and the need to store and interpret these data sets gave rise to genomics and structural bioinformatics [19]. By the end of the ‘90s, important progress was made using mathematical models to understand the evolution of the immune system and HIV in infected patients, paving the way to developing potent antiviral drugs [20]. In parallel, physicists and biologists combined crystallographic data and molecular models to determine the 3D structure of proteins [21]. In the early 2000’s, systems biology was raised as a new paradigm to have a holistic (systems) understanding of all the metabolic processes in the cell [22]. At the same time, by combining mechanistic modeling and experimental data, important progress was achieved in understanding the sources of variability (extrinsic and intrinsic noise) in gene expression [23]. More recently, powerful technologies have been introduced to generate massive sets of biological data, which, combined with more powerful computers, has led to the development of complex models with predictive capabilities and a better understanding of ‘whole-cell’ processes [24]. At the same time, flux balance analyses have made significant strides to combine the concepts of mass balance and evolutionary optimization to study metabolic processes in genome scale models [25]. Nowadays, breakthroughs in biology have been achieved by using artificial intelligence and machine learning models. For example, image segmentation has been revolutionized after the introduction of U-net, a deep learning approach capable of automatically segmenting biomedical images [26]. In the realm of protein structure prediction, AlphaFold is an increasingly popular deep-learning model that has been demonstrated to predict protein structures with comparable scores to experimental results in Critical Assessment of Protein Structure Prediction competitions [27, 28]. The discovery of novel drugs is facilitated by the use of generative artificial intelligence [29].

1.2. History of the q-bio Conference and Summer School

As stated before, quantitative biology had been in practice for many decades before 2007, and the term ‘q-bio’ was previously coined by the physics e-print server (arXiv.org) in 2003. However, the prominence of the discipline entered a period of rapid growth following the first Annual q-bio Summer School and Conference in Cellular Information Processing (Summer 2007 at St. James College in Santa Fe, New Mexico). As Ilya Nemenman and colleagues write in their review of this inaugural event [30]:

‘[The Los Alamos based] organizers adopted the term ‘q-bio’ to succinctly refer to research efforts directed at predictive modeling of cellular regulatory systems [T]he reference ‘quantitative biology’, a long-used term, recognizes that the type of work emphasized at the conference is not new, although it does seem to be reaching a new level of maturity as technological advances allow biological systems to be probed and monitored quantitatively with unprecedented control, scope, and resolution The name reflects a hope that the conference will help spark a revolution that will bring the prominence of quantitative work in biology up to the level of that in fields such as chemistry and physics.’

As hoped by the original organizers, the conference certainly sparked a lasting interest in the field, and the Annual q-bio Summer School and Conference (recently celebrating their 17th year) have since hosted several thousand participants in discussions of how quantitative models can describe and predict biological processes.

1.3. What is this paper all about?

This review introduces some basic modeling strategies taught at the annual Undergraduate Quantitative Biology (UQ-Bio) Summer School that eventually grew from the q-bio effort. Specifically, we describe several experimental techniques that use fluorescence microscopy to visualize single-cell and single-molecule dynamics of gene expression, and we show how these data can be understood through the lenses of deterministic ODEs and discrete stochastic analyses. In section 2, we introduce the reader to a basic philosophy of quantitative modeling in biology, and we discuss some of the goals and initial strategies needed to create an appropriate model. In section 3, we review some of the modern labeling and microscopy tools used to generate single-cell images, and in section 4, we introduce the reader to some simple approaches to process these images and extract quantitative data. In section 5, we discuss modern deterministic and stochastic modeling tools used to reproduce the dynamics of single-cell processes, and section 6 presents modern tools used to integrate experimental data with these models. Due to limitations of space, we present these topic at a high level in this article, but we provide extensive and detailed online resources for the interested reader to build their skills in each topic. Specifically, the appendix provides links to comprehensive video tutorials and interactive electronic Python notebooks that demonstrate the technical material, including advice on how to choose the most appropriate modeling strategy, how to build effective procedures for managing experimental data, how to validate computational pipelines, and how to make scientific codes more accessible and reproducible. Finally, in gray boxes like the following, we describe a multipart drug discovery exercise to challenge the reader to implement all the topics discussed in this text and to build a predictive model of gene expression from simulated single-cell microscopy experiments.

1.4. UQ-Bio Summer School challenge—introduction

Throughout this tutorial, we will use a simulated case study to illustrate various steps toward analyzing data and creating mechanistic models to explain and predict the expression of a deleterious protein at the level of single cells. In the first stage of the challenge (section 2.3), you (the reader) will be asked to define the scope of a model based on initial knowledge about the system and available experiments. In the second stage of the challenge (section 4.4), you will be asked to process simulated microscopy videos to collect quantitative data for the spatial and temporal expression of RNA and protein at different times following application of the drug. In the third stage, you will be asked to create a mathematical framework to describe (section 5.2) and simulate the gene expression process under normal and perturbed conditions using deterministic (section 5.4) and stochastic approaches (sections 5.5 and 5.6). Finally, in the fourth stage (section 6.3), you will be asked to combine your mathematical model with the processed experimental data to quantify how well the model reproduces observed behaviors, and to infer model parameters.

This challenge is designed to increase in complexity, and readers are encouraged to complete the stages in the recommended order using Python code Jupyter Notebooks (links to example solutions are provided in each section). When this challenge has been used as part of the UQ-Bio Summer School, participating teams were asked to document and present all steps needed to formulate and simulate the model as well as to replicate the experimental data. For the sake of simplicity, here we consider a single simulated system containing one gene and one known drug mechanism-of-action (i.e. disruption of RNA nucleus to cytoplasm transport). In the UQ-Bio program, student teams have been asked to consider multiple drugs, each with different and unknown strengths and mechanisms, and they must iterate the presented steps in order to determine these mechanisms and strengths. Codes needed to generate data for these extensions are also provided in the appendix.

2. The basics of quantitative models

At the heart of quantitative biology is the concept of a model, which we define as a mathematical representation that aims to capture a system’s essence. Quantitative biology has two general approaches to modeling biological phenomena: statistical models (in which we include machine learning) and mechanistic models (figure 1). For either type of model, the goal of the model is typically to quantitatively integrate existing knowledge (i.e. the ‘prior’) and freshly acquired data (i.e. new ‘evidence’) to predict how certain system aspects (e.g. basic properties like chemical concentration and process energy or more collective, emergent properties such as phenotype or behavior) may change (e.g. in time or space) under different experimental circumstances (e.g. under different genetic, chemical, or environmental manipulations). Although statistical and mechanistic models share similar goals, their approaches differ in their underlying mathematical principles, assumptions, and methods needed to solve them. Statistical models seek to describe probability distributions for different relationship patterns within the data. Traditional statistical methods, including linear regression, logistic regression, Bayesian inference, time series analysis, etc and more modern machine learning methods extend these traditional approaches to allow for more complicated patterns, such as decision trees, neural networks, deep learning models, support vector machines, and so on [31]. These statistical approaches are built upon a strong foundation of algebra and geometry, with deep learning taking these to the next level using GPU-enhanced computing technology.

On the other hand, mechanistic models are built on an understanding of the underlying physical or biological processes that govern the system evolution in space and time. Mechanistic models often involve physical laws and chemical principles (e.g. Newton’s laws of motion, Fick’s laws of diffusion, or Guldberg and Waage’s Law of Mass Action) that are combined with conservation of mass and energy and conveniently stated in terms of differential equations. These include continuous, deterministic models based on ordinary differential equations (ODEs) and spatial models based on partial differential equations (PDEs). To account for unknown mechanisms, these models are often extended to include random noise, giving rise to stochastic models that must be analyzed using stochastic differential equations (SDEs) and stochastic partial differential equations (SPDEs). Because many important biological processes involve discrete quanta (e.g. individual molecules, genes, cells, organisms, etc), the principles underlying ODEs, PDEs, SDEs, and SPDEs can be solved for discrete processes using finite state machines (for discrete deterministic processes), Agent-Based Models (for discrete, deterministic and spatial processes), kinetic Monte Carlo (KMC), for discrete stochastic processes) and reaction-diffusion master equation (for discrete, stochastic, and spatial processes). See the q-bio community-written textbook [32] for more detailed introduction to these methods.

In modern practice (figures 1(C) and (D)), mechanistic modeling studies almost always employ statistical analyses to estimate model parameters and mechanisms from data [33], to quantify model uncertainties [34], and to reduce models to more computationally tractable representations [35, 36]. For example, thermodynamic models employ statistical mechanics to describe ensemble behaviors while offering detailed mechanistic insights into the energy flows underlying biological processes [37]. Similarly, there is a great deal of active research to include mechanistic detail within machine learning models, leading to Physics-Informed Machine Learning models [38, 39]. These ‘grey-box’ or ‘hybrid models’ aim to get the best of both worlds—on the one hand, they overcome limitations such as incomplete mechanistic understanding, and on the other, they reduce the need for massive data sets as required by purely statistical methods [40]. The choice of modeling approach depends on many factors, including the problem at hand, data availability, and, most importantly, how the model will be used. While both statistical models and mechanistic models are indispensable in the modern study of quantitative biology, this text will focus on mechanistic models because (1) they are generally more intuitive to the novice quantitative biologist, and (2) they can often provide a more interpretable set of predictions for how a biological process of interest may respond to new and unseen genetic, chemical, or environmental conditions.

2.1. Why create a quantitative model?

The first step in creating any mathematical model is to decide how that model will be used: what aspects of the biological process will the model be used to capture or predict? For example, perhaps the model will be used to test different mechanistic hypotheses for how a certain transcription factor regulates ‘gene X’; perhaps it will be used to predict which other genes are overexpressed when a specific drug is used to repress expression of ‘gene Y’; or maybe the model will be used to quantify the clustering of ribosomes on the mRNA transcribed from ‘gene Z’ when a particular tRNA is depleted from the cell. Because answering each question requires a different set of knowledge or experiments, you can dramatically reduce your work by asking clear questions at the outset9.

The general aim of a model is to rigorously connect three aspects of scientific exploration: the ‘mechanisms’ that affect the process of interest, the ‘controllable inputs’ that can perturb the process, and the ‘observable outputs’ that could provide data to quantify the process.

By formalizing these three aspects in different configurations, one can make clear statements about the purpose of the model, such as:

-

(i)

Model-Based Hypothesis Selection—The model will analyze the results of experiments and observations to identify important mechanisms of interest.

-

(ii)

Model-Generated Predictions—The model will integrate known biological constraints to predict responses under new experimental inputs.

-

(iii)

Model-Guided Process Design or Control—The model will be used to design inputs or perturbations to optimize responses under biological constraints.

2.2. How do I tailor my model to match my experiments and scientific questions?

To make the model more useful and to guide subsequent experimentation tasks, we must narrow the model scope. For this, it often helps to draw one or more cartoons (e.g. ‘free-body diagrams’). The purpose of these cartoons is to clearly and visually define the mechanisms under consideration in the context of controllable inputs and observable outputs that can be examined through experimentation (see figure C2 for an example cartoon). By creating such cartoons, the scientist can target their literature research and experiment designs to focus on the most essential biological processes or modeling goals. It is best to keep experimental collaborators in the loop when creating these cartoons—not all experimental assays are equal regarding feasibility, equipment availability, accuracy, or cost.

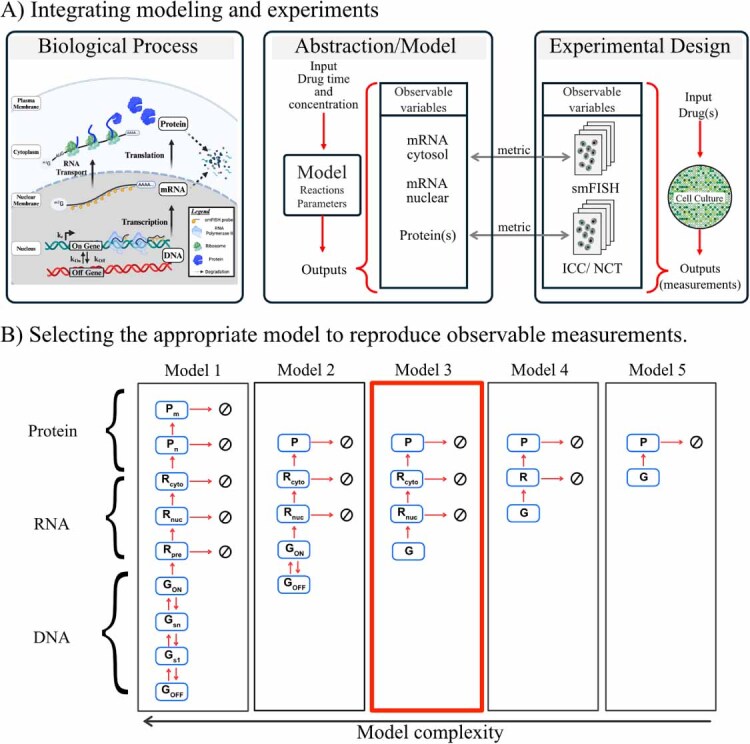

Figure C2.

Selecting the model scope. (A) Schematic containing the steps during gene expression, including (de)activation, transcription, and translation. The center and right diagrams show how the model and experiments are integrated by comparing observable variables cytosol and nuclear mRNA measured with smFISH experiments and protein measurements with immunocytochemistry (ICC) or Nascent Chain Tracking (NTC). The direct comparison between model variables and experimental variables is achieved by using a metric described in section 6. (B) Models with different complexity. In Model 1, the diagram represents a comprehensive representation of the system including nine species; in the first part of this representation, the Gene (G) can transition between n different states until reaching an active form (GON). Subsequently, multiple forms of mRNA are taken into account, including premature mRNA (Rpre), mature nuclear mRNA (Rn), cytoplasmic mRNA (Rc), nascent Protein (Pn), and mature protein (Pm). All 16 arrows connecting the different chemical species represent the system’s transition rates (parameters). From Models 2 to 5, it can be observed that only some elements are considered in these models, reducing the complexity of the model in this way. In this example, Model 3 is selected for the rest of the exercise as it contains the minimal variables needed to reproduce the observable experimental variables. Created in BioRender. Ron E (2025). https://BioRender.com/e39n811.

After defining the scope of the model and the availability of prior mechanistic knowledge and potential experiment designs, the next step is to choose the correct model resolution, which is the amount of detail that needs to be considered in the model. In this step, relevant elements from the known biology must be prioritized for their inclusion in the mathematical representation. What aspects have direct relevance and make unique contributions to the process dynamics, and which are more tangential or redundant? When choosing among these, it is important to note that there will be a trade-off between model complexity, the number of model parameters, and the computational resources needed to analyze these models. In other words, as the model’s complexity increases, so does the need for more computational resources to solve it correctly and more experimental resources to elucidate unknown parameters. The use of overly complex models can also lead to overfitting (fitting to noise and outliers in the data), reduced applicability, and decreased detectability of bias. Additionally, for a more robust modeling process, it is recommended to consider multiple models for different hypotheses. This way, multiple hypotheses can be tested to select those that best reproduce the experimental observations or result in the least prediction uncertainty. In the following exercise, you are asked to define the scope for a model of single-cell gene expression.

2.3. UQ-Bio Summer School challenge—define model scope

The first (and often most subjective) step in any modeling endeavor is to state the goals of the modeling exercise, to identify the existing prior knowledge related to those goals, and to critically evaluate the available materials or data that are relevant to the modeling goals. In this simulated challenge, your goals are clear—you have been asked to build a model that can predict how a drug affects gene expression at the single-cell level as a function of time and drug dosage. From your experience in eukaryotic single-cell biology, you know that the gene of interest may be bursty in that it may have active and inactive transcriptional states. You know that mRNA are transcribed in the nucleus from active alleles, and that mRNA must reach the cytoplasm before they can be translated. You know that both mRNA and protein degrade over time. Moreover, you are provided with prior knowledge that the mechanism of the drug is to reduce nuclear to cytoplasmic transport of the mRNA. Finally, you expect to be provided with (simulated) microscopy images containing labeled mRNA and protein under control conditions and in response to application of the drug (see figure C1). These snapshot data have a precise spatial resolution making it possible to count individual mRNA (using smFISH) and protein (using ICC), but achieving this spatial resolution requires cell fixation, which means that it impossible to track individual mRNA or protein molecules.

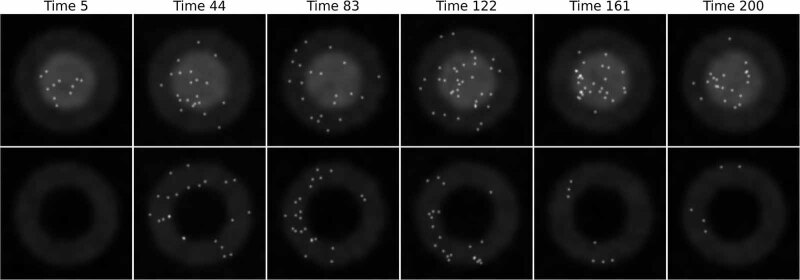

Figure C1.

Simulated data depicting RNA and protein snapshots at different times. The figure illustrates the spatial distribution of RNA and protein concentrations within a simulated cell at six different time points. The areas enclosed by the circles represent the nucleus and the surrounding cytosol. The top row shows the RNA channel, where RNA molecules are visualized as 2D Gaussian kernels in the image. The bottom row represents the protein channel, showing the accumulation and dynamics of protein molecules synthesized from the RNA. Both rows reveal the temporal progression of gene expression, and the effects of drug-induced inhibition on mRNA transport and its subsequent impact on protein production.

Note—for the sake of simplicity, these data were simulated using a two-dimensional spatiotemporal model. To create this model, we first generated a background image using random noise, simulating the cell with two circles representing the nucleus and cytoplasm. The background was constructed for the protein and RNA channels. Transcription and translation were modeled mechanistically, using a model simulating a gene oscillating between active and inactive states. mRNA molecules are synthesized in the active state, they diffuse to the cytoplasm, and there they are translated into protein. The model is solved using the Gillespie algorithm. To integrate this model into the spatial simulation, RNA and protein molecules were represented as 2D Gaussian kernels superimposed on the simulated cytoplasm and nucleus. The movement of RNA and protein spots was modeled using a two-dimensional random walk (figure C1). The simulator is available at https://github.com/luisub/qbio_paper.git. We note that more advanced and realistic single-cell microscopy simulators are available [41, 42], but we provide this relatively simple Python script so that the reader could more easily adapt the method for their own purposes.

Armed with this information, your first task in this stage is to create a detailed schematic to represent the gene expression process within the cell. This cartoon should encompass all critical stages, such as gene activation, transcription, mRNA transport, and translation into proteins. You should label all relevant molecular species (e.g. genes, mRNA, proteins) and their interactions, and you should explicitly state what are the controllable inputs and the observable outputs.

Next, you should endeavor to select the most critical components and interactions that are essential to capture the fundamental dynamics of gene expression. When possible, try to reduce the number of species and reactions by focusing on those that significantly influence the observable system’s behavior while eliminating redundant elements that cannot be affected by the allowable perturbations or resolved from the available observations.

Solution—specifying a model scope

To construct a mathematical representation of gene expression, we illustrate the process through a simplified diagram encompassing all the critical stages, such as gene activation, transcription, mRNA transport, and protein production. The cartoon depicted in figure C2 visualizes these gene expression phases. The models labeled 1 through 5 vary in complexity and detail. For instance, Model 1 comprises nine chemical entities and requires 16 parameters. In contrast, Model 5 simplifies to just two chemical entities with only two parameters. Moreover, this set of five models is already a major simplification; each of these models could be extended to include spatial diffusion of the genes, RNA, and protein molecules as well as to assign multiple post-transcriptional or post-translational states to control the activity and degradation of the molecules of interest. Any, all, or none of these models may be sufficient depending on the scientific question, the quality of the available experimental data, and the practicality of solving the model given the chosen methods and computational resources. As such, it is often crucial to consider multiple models.

For the purposes of this demonstration, Model 3 is selected for the rest of the exercise. The reason for this subjective choice is that Model 3 contains the minimal variables needed to capture the observable experimental variables, which are the number of mRNA in the nucleus, the number of mRNA in the cytoplasm, and the number of proteins in the cell. However, it should be noted that this choice should be flexible; if in later stages, we find that gene bursting is substantial (e.g. if the gene activation and deactivation rates are very slow compared to mRNA degradation events), then Model 3 may fail to capture large amounts of observed cell-to-cell heterogeneity, and we may need to use Models 1 or 2. Conversely, if we were to examine a drug that affects some process downstream from mRNA transport, then perhaps Model 4 or 5 may be sufficient to capture the relevant dynamics. If a different experiment were devised that allowed for direct tracking of mRNA or protein and if the specific mechanisms of diffusion were of particular interest, then a fully spatial model may be necessary. In practice, one would return to this stage later and ask critically if (1) does the chosen model capture all important phenomena (if not the model complexity may need to be increased), and (2) does the uncertainty in the model allow for acceptably precise predictions (if not, the model may need to be simplified).

3. Methods for single-cell analysis

Every model begins as a hypothesis that must be constrained or validated against experimental data before being trusted to describe or predict a biological phenomenon. To apply the scientific method and compare models to experiments, it is crucial to determine which experiments are needed to generate observational data, estimate errors associated with these data, and formulate quantitative metrics to rigorously compare models to data in light of these errors.

These decisions must consider the fact that different experimental techniques have vastly different powers for spatial and temporal resolution. Some techniques can measure single molecules, while others only measure average concentrations. Some techniques can measure events occurring in milliseconds, while others measure changes over long periods. Some measurements can detect the studied molecule directly, while others may use an indirect measurement such as intensity in a microscope image.

Given these differences between experimental techniques, it is necessary to determine the required spatiotemporal resolution to address the scientific question correctly and then select the appropriate modeling approach and experiment. For example, if it is known that ‘gene X’ has a large and homogeneous expression within cell populations, but it is not clear how its expression changes over time in response to chemical stimuli, then an experiment capable of measuring bulk mRNA or protein concentrations at multiple times should be sufficient to constrain a deterministic model to answer that scientific question. In contrast, if the scientific goal is to determine how the expression of ‘gene X’ is regulated, and it is known that ‘gene X’ is highly variable from one cell to the next, then experiments capable of single-molecule or single-cell resolution will be needed to constrain a stochastic model and achieve that goal. Several experimental techniques are aimed at providing bulk quantitative measurements of gene expression in terms of DNA, RNA, and proteins to satisfy the needs of the former example. These techniques include PCR, qRT-PCR, ELISA, and western blots, among others. However, in this article, we are particularly interested in gene regulation processes that lead to single-cell variations, and we will focus on single-cell experiments for the remainder of this tutorial.

3.1. Methods for single-cell imaging

Gene expression is a central biological process encoded in the DNA, which in most animal cell lines is present at levels of only one or two gene alleles per cell. DNA alleles fluctuate between active and inactive states. When active, genes are used as templates to produce mRNA, and these mRNAs are used as templates to produce proteins through transcription and translation processes, which in turn may also trigger signals to activate or deactivate other genes [43, 44]. In recent years, multiple methodologies have been developed to measure gene expression at single-cell and single-molecule resolution. These methods include flow cytometry [45], single-cell RNA and DNA seq [46–48], and single-cell imaging [49]. In the following, we will focus on single-molecule fluorescent microscopy; nevertheless, we remark that all other techniques can produce experimental data that can be integrated with mechanistic models using similar principles.

3.2. Fluorescent labels

Various fluorescent labels and activatable probes have been developed over the years to study biochemical processes at the molecular level in living and fixed cells. Most rely on combining three technologies: fluorescent molecules, methods to physically link the fluorescent molecules to a region or molecule of interest, and fluorescent microscopy. Fluorescence occurs when a fluorophore (e.g. a chemical dye molecule or bio-luminescent protein) absorbs light energy at one wavelength and then re-emits some of that energy at another lower energy wavelength [50]. Some examples of chemical fluorophores include Rhodamine [51], Alexa Fluor Dyes [52], Cyanine Dyes (Cy3, Cy5, and Cy7) [53]. It is also commonplace to utilize natural fluorophores like fluorescent proteins (e.g. the famous green fluorescent protein, GFP) [54].

3.3. Labeling techniques employed in fixed cells

Fixing and immobilizing the cell is often necessary to obtain the crispest spatially-resolved images of labeled biomolecules. Irreversibly arresting all cellular processes sacrifices temporal resolution, but it allows for applying highly specific labeling procedures, as follows. Immunolabeling allows identifying an antigen in a cell or tissue; the antigen is usually a protein, and full antibodies are used for detection. Full antibodies cannot pass the cell membrane; as a result, this approach is only relevant to fixed and permeabilized cells (which unfortunately have the side effect of killing the cell) or the extracellular side of the membrane for live cells [55–57].

Single-molecule fluorescence in situ hybridization (smFISH, figure 2(A)) quantifies endogenous transcription in single cells. This method targets RNA using multiple hybridized fluorescent oligonucleotides [58]. There are various types of smFISH depending on the probe design, such as (a) the original smFISH design, which is 20–50 bases long with 1–5 fluorophores per probe [59, 60], (b) indirect labeling by smiFISH consists of using multiple (20–30) primary probes, each containing the sequence targeting different regions within the gene of interest and a common sequence that is complementary to a fluorescently labeled secondary probe [61], and (c) multiplexed smFISH comprises primary probes with two sequences of readout. These are detected through successive rounds of hybridization and collectively form a code that identifies the bound cellular RNAs. Using this technique, hundreds of distinct RNA species can be detected [62–64].

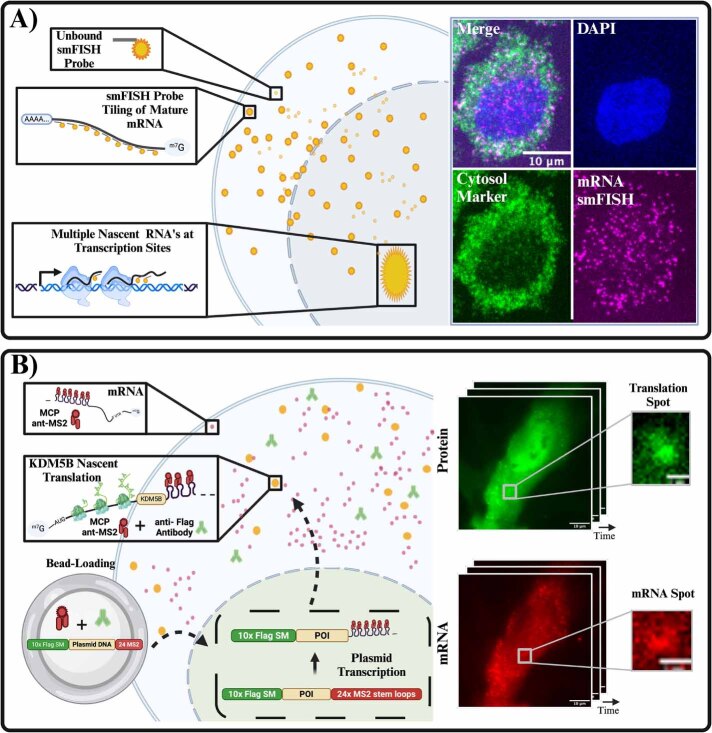

Figure 2.

Methods in single-cell imaging. (A) Schematic representation of smFISH (single-molecule fluorescence in situ hybridization) for detecting mRNA in fixed cells. The top panels show unbound smiFISH probes, hybridization to mRNA via tiling, and visualization of nascent transcripts at transcription sites. Right: Confocal image showing a nucleus stained with DAPI (blue), cytosolic marker (green), and mRNA smiFISH signal (magenta) in a single cell. (B) Cartoon depicting live-cell imaging of translation using nascent chain tracking (NCT). The plasmid construct encodes the protein of interest (POI) fused to a Flag epitope tag and 24× MS2 stem loops. The plasmid is bead-loaded into cells along with fluorescent anti-Flag antibodies and MCP (MS2 coat protein). Transcription of the plasmid produces mRNA containing 24× MS2 stem loops, which bind MCP and are visualized as diffraction-limited red spots. Nascent translation of the mRNA is tracked via the binding of anti-Flag antibodies (green) to the Flag epitope on nascent chains, resulting in colocalized green and red signals (yellow). Insets show time-lapse snapshots of protein and mRNA signals. Created in BioRender. Ron E (2025). https://BioRender.com/e39n811.

3.4. Labeling techniques employed to visualize gene expression in live cells

Live-cell imaging enables the real-time visualization of transcription and translation dynamics in live cells. These techniques consist of encoding secondary structures alongside the biomolecule of interest and detecting them with fluorescent tags. Techniques for live visualization of transcription responses include MS2 [65] and PP7 tagging-systems [66], which are naturally occurring stem-loop structures that are recognized by bacteriophage coat proteins tagged with fluorophores.

More recent techniques have also allowed for the live-cell imaging of translation dynamics. The most prominent example is Nascent Chain Tracking (NCT, figure 2(B)). NCT employs the MS2-MCP system to label RNA by inserting the stem-loops in the 3′ untranslated region to allow for the visualization of the RNA and repeat epitope tags are encoded into the gene of interest to visualize nascent peptides [67]. Fluorescent probes bind to these repeated epitopes in this technique, illuminating the nascent protein as it is being translated [49, 68]. Recent progress using this technique includes the use of genetically encoded antibody-based probes, such as MoonTag nanobody [69], anti-HA Frankenbody [70], multiple frame tags, such as the MASH tag [69], and the multi-frame tag that allows lit-up translation in different colors depending on what frame is being translated [71, 72]. See the appendix for a link to an online video tutorial detailing these methodologies and providing several example images and videos.

4. Methods for processing single-cell images

Using the labeling approaches from the previous section, the images or videos captured by a fluorescence microscope contain essential data to understand single-cell dynamics. For example, one could extract data to quantify and characterize the expression of RNA or protein over time or space, either within one cell or from one cell to the next, or one could analyze changes in cell morphology after a given treatment. This section introduces fundamental concepts and approaches to process microscopy images and extract such quantitative data.

4.1. Representing images as data arrays

A microscope image, depicted as a digital image (figure 2, Middle), is essentially a mathematical function denoted as , where x and y represent positive integer values corresponding to positions in a 2D coordinate system, specifically, the pixels [73]. To understand how information is stored in a digital image, consider it as an array of data; for example, a 2D black and white image is a matrix, a two-dimensional array with rows and columns. The matrix notation By,x will be used for simplicity. A more complex example could be a color image that can be represented as a three-dimensional array of data or a tensor with a shape of , where the c represents a dimension containing the different color channels in the image. A color image usually comprises three channels in the order RGB (red, green, and blue). Still, it is important to note that this order is arbitrary, and other conventions exist. More generally, a video is a sequence of images taken at multiple frames. A video is also a higher-order tensor with a shape , where f represents a dimension storing all the frames in the video. More complex stacks of images exist; for example, a sequence of microscope images with 3D spatial information (xyz) will result in a tensor with the shape .

Temporal, spatial, and intensity resolution define the amount of information stored in a microscope image or video. Temporal resolution indicates the frame rate of the sequence of images. Frame rate values are typically given in units of Hertz (s−1). Spatial resolution describes the physical distance each pixel represents for the real object in the image. Intensity resolution represents a range of values each pixel can take in the image. This is also known as the bit-depth and is defined as 2n, where n is the number of bits. For example, n = 1, represents a binary image with values 0 and 1, n = 8 is an 8-bit image with a range of 0 to 255, and n = 16 is a 16-bit image with a range of 0 to 65 535.

4.2. Processing images to focus on pertinent details

Image processing involves applying mathematical operations to the image to extract relevant information. Image processing can be divided into two main areas: image warping and filtering. Image warping consists of changing the domain or pixel location in the image via a coordinate transformation. Two common examples of image warping are image registration (e.g. rotating, shifting, scaling, or shearing one image to match the coordinates of another) and data augmentation (e.g. interpolating over an image to produce a higher-resolution image). The second class of manipulation is known as filtering, and these operations consist of applying logical operations, algebraic manipulations, or convolutions to adjust the intensity values of the image, often to highlight specific features within the image [73].

There are multiple instances where such transformations are used to adjust the intensity values of an image, with a couple of the most notable including thresholding and filtering. Thresholding, which is often used to remove extreme values in the image, is achieved by defining an intensity threshold value, Its, based on the image’s intensity histogram (figure 3(B)) and by reducing the intensity range as follows: . Thresholding can also segment elements in the image by removing the pixels below a threshold value considered the ‘background’ in the image. For example, a simple segmentation can be achieved by binarizing the intensity values in the image. This is done by setting pixels with values below the threshold as zero and the pixels with values above the threshold to one, that is and .

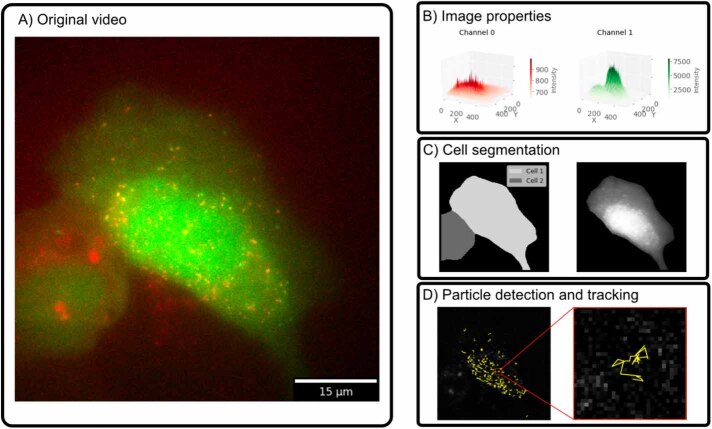

Figure 3.

Processing fluorescence microscopy images and videos. (A) Microscope image that shows an NCT experiment that detects KDM5B RNA molecules in the red channel and nascent proteins in the green channel (see figure 2(B)). (B) Each pixel in a digital image contains an intensity value, representing the properties in the original image. Spots are observed as intensity ‘spikes’, and structures such as the nucleus can be observed as extensive elevations (3D representation showing the intensity values as the z-axis). (C) Cell segmentation involves detecting and labeling the regions corresponding to independent cells. Here, cell segmentation was performed using Cellpose [74] in the green channel. (D) Detected RNA spots were detected for all time frames in the original video; after this, particle trajectories were created by linking particle positions at multiple time points. Here, spot detection and tracking were performed using TrackPy [75].

Applying convolutional filters to images is a routine process intended to reduce noise or enhance the appearance of elements in the image. Filters are convolutions between a kernel matrix and the original image applied at a given stride. The definition of the kernel matrix is problem-specific for tasks such as noise reduction, edge detection, particle detection, noise-induced feature enhancement, etc. A comprehensive list of these matrices is available in Python using the scientific library Scikit-image [76]. For example, one of the most common filters, the Gaussian filter, consists of multiplying a 2D-Gaussian square kernel matrix with a size of k (a user-defined parameter that normally ranges from 3 to 7 pixels) to each element in the image. A Gaussian filter reduces noise in the image by averaging adjacent pixels in a given area.

4.3. Extracting cells and sub-cellular features from microscopy images

In most cases, only a small portion of a given microscopy image or video is relevant, and one needs to focus attention on the specific features of the image that relate to the biological question of interest. Two of the most common feature quantification tasks in single-cell research are cell segmentation and particle detection.

Cell segmentation consists of locating the pixels containing cells in a microscope image and is usually one of the first steps in any image processing pipeline for single-cell biology. Previously, cell segmentation was a time-consuming and labor-intensive manual process in which many researchers resorted to drawing cell outlines by hand. Nowadays, sophisticated image-processing libraries have been introduced recently to automate this procedure. For example, Cellpose, Detectron2, and Segment Anything Model (SAM) are deep-learning libraries for cell segmentation [74, 77, 78]. These libraries have been proven to achieve state-of-the-art segmentation of cells in microscope images. Deep-learning approaches are highly relevant to automating and reducing user input during the process of large datasets [26]. A nuclear segmentation in our example image is shown in figure 3(C).

Particle detection consists of finding and quantifying punctae (bright spots), such as those corresponding to single mRNA in smFISH [60] or live-cell translation experiments [67]. One example library for spot detection and particle tracking is TrackPy [75], which contains many methods to detect punctae in the image, and more algorithms [79, 80]. Additionally, it contains robust methods to link trajectories and calculate their displacement. More specific libraries, such as Big-FISH [81], have also been introduced to detect spots in images with complex spot composition. Single punctae representing transcription sites are detected in our exemplary image using TrackPy in figure 3(D).

For more information on image processing of microscope images, the appendix provides links to tutorial notebooks and lecture videos introducing image processing basics, cell segmentation, and particle tracking. The following exercise suggests a hands-on exercise in which the reader can practice these skills.

4.4. UQ-Bio Summer School challenge—processing fluorescence microscopy images

The goal of the next stage of the challenge is to process the collected single-cell fluorescence microscopy images. First, you will need to create and implement a computational method to segment the cytosol and nucleus for each cell in the simulated images. For this, you should consider utilizing manual traditional techniques, such as applying combinations of filters and thresholds or by applying more automated machine learning-based segmentation routines (e.g. using Cellpose [74] or Detectron2 [77]).

Once you have segmented your images into nuclei and cytoplasm, apply particle detection algorithms to identify and locate RNA and protein spots within both the cytosol and nucleus across each color channel. Once again, traditional techniques, such as taking the differences of Gaussian filters, followed by thresholds can quickly find many spots, while programs like TrackPy [75] can be employed to detect particles based on predefined parameters such as diameter, intensity, and minimum mass. In either case, you will need to visually inspect your results to ensure that the detection parameters are optimized to accurately capture the relevant molecules without excessive false positives or negatives.

After detecting your spots, you will need to assign each detected spot to its corresponding cellular compartment (cytosol or nucleus) based on its position relative to the segmentation masks. Count the number of RNA and protein molecules detected in each compartment for every time point. Ensure that the counts are organized in a consistent structured format (e.g. use Python library Pandas Dataframes) as you are going to need to make use of these data in subsequent stages of the challenge. Write additional Python functions to generate visual representations (e.g. histograms, scatter plots, or heatmaps) to illustrate the distribution and concentration of molecules within each compartment over time.

Solution—processing fluorescence images

To segment the simulated images, we used a multi-level thresholding approach. First, the simulated image was smoothed using a Gaussian filter to reduce noise. Then, the smoothed image was segmented using the Multi-Otsu thresholding algorithm [82] into three regions, including background, cytosol, and nucleus. Masks for the nucleus and cytosol were extracted, and the cytosol mask was expanded using a binary dilation method to ensure complete coverage. mRNA and protein particles were detected using TrackPy [75], using a particle size of 5 pixels, and a threshold of 20 intensity units. Detected particles were assigned to specific regions based on their positions relative to the segmentation masks (figure C3). The final counts were reported as the number of spots detected in each RNA and protein channel localization within different cellular compartments for each time and are shown as histograms in figure C4.

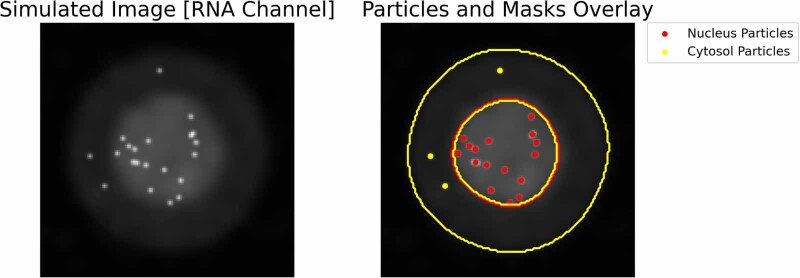

Figure C3.

Cell segmentation and spot detection. Representative image illustrating cell segmentation and particle detection in the RNA channel. The left panel displays the original simulated RNA channel image after Gaussian smoothing. The right panel overlays the detected particles and segmentation masks onto the RNA channel image. Inner yellow contour delineates the segmented nucleus, while outside yellow contours outline the segmented cytosol. Detected RNA particles within the nucleus are marked in red, and those within the cytosol are marked in yellow.

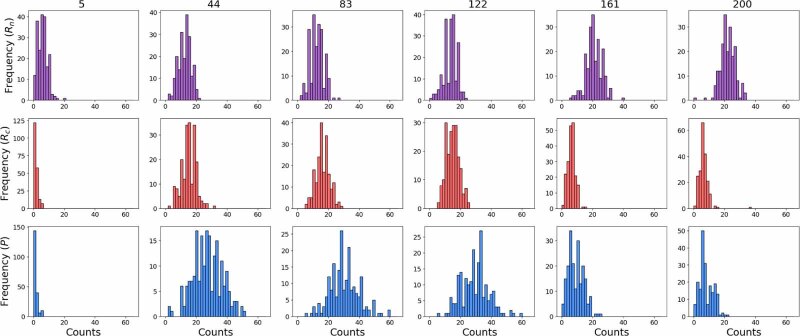

Figure C4.

Histograms of processed data. Histograms illustrating the distributions of recovered counts for the simulated data across six time points. Top panels display the distribution of nuclear mRNA molecules. The middle panels show the distribution of cytosolic mRNA molecules. The bottom panels illustrate the distribution of protein molecules in the cytosol. Each histogram is constructed with 25 bins ranging from 0 to 65 particles.

5. Quantitative mechanistic modeling

In this section, we introduce the mathematical formalism needed to represent and simulate a biological system. We start by introducing the concepts of stoichiometry and propensity functions in section 5.1. Then, we demonstrate how these can be used to simulate the system behavior in either a deterministic setting in section 5.3.1 or using a stochastic description in sections 5.4.1 and 5.5.1. Although we focus on building a quantitative description of gene expression, these same approaches can be used to describe many other biological phenomena.

5.1. Stoichiometry and propensity functions

To mathematically characterize a biological system, it is necessary to fully describe the system in terms of the different chemical species within it, the initial values of these species, the rules and probabilities that govern the interaction between these species, and the total time the system will be simulated. To simulate the system, the approximation assumption that the system is well-mixed and has a continuous time evolution is often made. If the latter is true, the system follows Markovian dynamics, where each state transition is random, discrete, and depends only upon the system’s current state.

To define the elements (species) that form our system, we introduce the population vector, , where ξ1 through ξN correspond to each of the species in the system. A particular state of the system, i, is denoted by the vector xi, which stores the count for every species in the system; hence, it contains one non-negative integer for each species.

To characterize how the species in the system change, we define the stoichiometry matrix, S, with one row for each of the N species and one column for each of the M reactions, which indicates the relative (net) change in the population of each species if that reaction occurs. The stoichiometry matrix is defined as , where is the stoichiometry column vector associated with the reaction. When the reaction occurs, the system transitions from it current state, xj, to a new state, . For example, if the hypothetical chemical reaction in a three-species system consumes two molecules of species 1 and one molecule of species 2 and produces three molecules of species 3, then the stoichiometry vector of that reaction is .

To quantify when the species in the system change, we introduce the propensity function, w, as the rate (or transition probability) associated with each state transition. The propensity function is always non-negative and is a function that typically depends upon the current reactant populations and time. The term is the probability that the reaction, initially in state x, will occur in the time-step dt. In many models, the law of mass action (LMA) can be applied to determine propensities for individual reactions, which states that the rate of a chemical reaction is proportional to the number of unique combinations by which those reactants can form products [83]. For the previous example, the LMA would suggest a propensity function of , where kµ is a rate parameter, and the quantity is the number of unique molecule triplets containing two molecules of species one and one molecule of species two. See the appendix for links to tutorial videos and Python notebooks containing more details on how to specify stoichiometry vectors and propensity functions for LMA and more complex models, and see the following example to practice the skill of defining such models.

5.2. UQ-Bio Summer School challenge—specifying stoichiometries and propensities for a gene regulation model

In the next stage of the course challenge, you are asked to build a mathematical framework to define and then simulate a quantitative representation of the mechanisms you specified in the first stage of the challenge.

First, you should make a list of all species in the model (i.e. active/inactive alleles, nuclear mRNA, cytoplasmic mRNA, protein) and possible reactions that can occur to change these populations. Then, for each reaction, you should write out the stoichiometry vector for that reaction (i.e. how the reaction causes the populations of each species to change) and the propensity function (i.e. the formula by which the rate of that reaction changes with the populations of all species). When possible, for each reaction rate or other relevant parameter within your propensity functions, specify the expected range of that parameter to ensure they are consistent with the biological process or literature values. Organize your model in the format of a stoichiometry matrix, a propensity function vector, and a parameter table list that will be suitable for subsequent computational simulations.

Solution—stoichiometry and propensity functions

To model gene expression, we can use the constitutive model (Model 3 from figure C2), in which the gene is transcriptionally active and produces nuclear mRNA molecule, Rn, through transcription. Nuclear RNA is transported from the nucleus to the cytosol (), where mRNA molecules in the cytosol can produce a protein molecule through translation. mRNA molecules and proteins are subject to degradation. Assuming that the gene concentration in the system is constant (i.e. there is a single allele and it is always active), we can define the following variables:

Table C1.

Model variables.

| Variable | Description |

|---|---|

| R n | mRNA nuclear |

| R c | mRNA cytoplasm |

| P | Protein |

The reactions for this gene expression model are:

Table C2.

Model reactions.

| Index | Reaction | Description | Reaction Rate |

|---|---|---|---|

| r 1 | Constitutive production of nuclear mRNA | k r | |

| r 2 | mRNA transport to cytoplasm | ||

| r 3 | mRNA in cytoplasm produces Protein | ||

| r 4 | Nuclear mRNA decay | ||

| r 5 | Cytoplasm mRNA decay | ||

| r 6 | Protein decay |

with a population vector of . The stoichiometry matrix is:

Note that the columns correspond to the stoichiometric coefficients for the reactions r1 through r6, and the rows to the species in the x vector. The propensity vector for this example is

5.3. Mechanistic simulation approaches

5.3.1. Deterministic modeling approach

To describe system dynamics in a bulk setting where all species populations are assumed to be large, one can use a system of coupled ODEs. In this deterministic description, each ODE in the system describes the mean behavior over time for a specific species population. The ODE description of a system is given in terms of the stoichiometry vectors and propensity functions according to:

where S is the stoichiometry matrix defined above, and is the propensity vector representing the propensity of each reaction in the system. This resulting system of ODEs can be easily solved using an ODE solver, such as those available in scipy.integrate in Python. See the appendix for additional resources and lecture videos, and complete the following example to practice building ODE analyses for gene regulation models.

5.4. UQ-Bio Summer School challenge—deterministic simulation of gene regulation

Now that you have defined the stoichiometry matrix, propensity function vector, parameters and initial conditions (section 5.2), use these to derive a system of ODEs to describe the rate of change of each species over time. Define initial concentrations and parameter values based on biological data or literature sources. Solve for the steady-state concentrations of each molecular species by setting the rate of change to zero and solving the resulting algebraic equations. Use computational tools (e.g. Python’s scipy.integrate.odeint) to numerically solve the ODEs and obtain time-course data for each species. Generate plots to illustrate the temporal dynamics of gene expression, and determine key behaviors such as steady states and responses to perturbations.

Solution—ODE representation of model

If we let denote the concentrations of the three species in our model, we can now use the stoichiometric matrix and propensity function vector to directly state the ODE that describes the process as:

By expanding the matrix vector algebra on the right hand side of this equation, we get:

System’s steady state. The analytical solution for our system at steady state can be found by setting the net change in our system equal to zero. With this, we can obtain the solution for all variables in the system as a function of the parameters. That is:

ODE simulation. To perform our simulations, we need to specify the initial values of the variables in the system, the parameter values, and the total simulation time. In this example, we will use the following values for the initial population levels:

Table C3.

Initial Conditions.

| Variable | Initial Conditions | Units |

|---|---|---|

| R n | 0 | Molecules |

| R c | 0 | Molecules |

| P | 0 | Molecules |

and the following values for the model parameters:

Table C4.

Parameter values.

| Parameter | Starting value | Units |

|---|---|---|

| k r | 2 | min−1 |

| k t | 0.083 | min−1 |

| k p | 0.5 | min−1 |

| γ r | 0.05 | min−1 |

| γ p | 0.25 | min−1 |

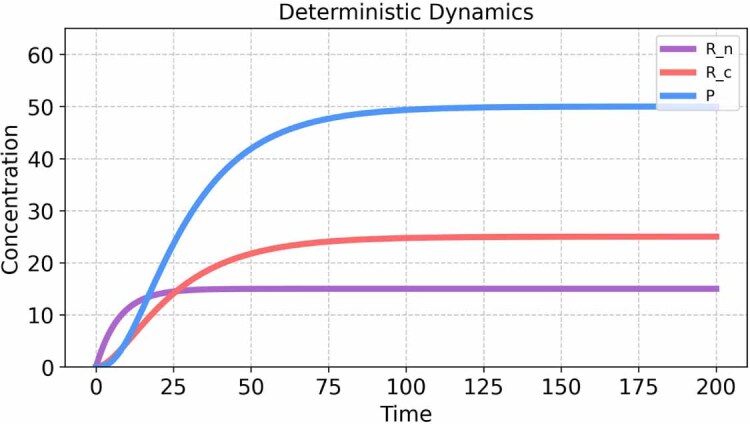

Figure C5 shows the solution of the system using the parameter values and initial conditions given in the tables above.

Figure C5.

Deterministic simulation output for the biochemical system. Species P, Rn, and Rc rapidly achieve a steady-state concentration, indicating a saturating process or equilibrium is reached quickly. This deterministic approach provides a baseline understanding of the system’s dynamics, allowing for the identification of steady states and thresholds.

5.4.1. Stochastic simulation approach

The continuous and deterministic approach discussed in section 5.3.1 fails to capture variations in system behavior due to the discrete nature of genes, RNA, and proteins or the randomness and biochemical noise that stems from the stochastic timing of bio-molecular events. Reproducing such phenomena requires a discrete description of the process and consideration of the distributions of event times. For this, we often employ KMC methods that use one or more random number generators to sample the time evolution as the process moves between different points in the discrete state space.

One such KMC approach is Gillespie’s direct stochastic simulation algorithm (SSA) [84–86]. The SSA simulates the process one reaction at a time, deciding at each step when the next reaction occurs and how the system’s state is affected by that reaction. The SSA is defined in terms of the same stoichiometry matrix, S, and propensity functions, W, introduced above. It seeks to simulate the system from some initial state, , until some final time, , through a series of jumps whose random times and directions are chosen from probability distributions defined by S and W.

Let denote the current sum of all propensity functions. Under this definition, the time of the next reaction has an exponential distribution , and a sample of this distribution is easily generated by sampling a uniform random number via the transformation:

Furthermore, the index of the chosen reaction has a categorical distribution of that can be found by sampling a second uniform random variable, , and finding the smallest k, such that:

Once samples of τ and k are generated, one can update to the new current time (), and if this time is less than , the state of the system can be updated to (). This process continues until t exceeds . To compute many trajectories, the process is repeated with new sequences of random numbers. Although easy to implement, the SSA can be computationally intensive, thus motivating the creation of more computationally efficient methods that approximate the SSA and generate stochastic trajectories, including the τ-Leaping Algorithm [87] and the Chemical Langevin equation Algorithm [83]. These methods, however, may result in a large loss of accuracy if the system species have low numbers of molecules or if the propensity functions experience quick, substantial changes.

The interested reader is invited to review the Python Tutorial and lecture videos on the SSA via the links in the appendix and to attempt to build an SSA model in the following example.

5.5. UQ-Bio Summer school challenge—stochastic simulation of gene regulation

The next step is to extend the model from before to implement the Gillespie SSA to model the stochastic dynamics of the system. Assign initial molecule counts and reaction rate constants and simulate the process for 100 repetitions to capture the variability inherent in the system. Generate histograms and time-course plots to illustrate the distribution and variability of the species. Compare the stochastic simulation results with deterministic ODE results to explore the impact of noise on the system dynamics.

Finally, extend the model to consider a drug perturbation inhibits mRNA transport at a prescribed time and amount. Formulate your model in terms of a parameter that captures the efficacy of the drug, where α = 0 denotes no effect (i.e. RNA transport occurs at the unperturbed rate) and α = 1 denotes perfect effect (i.e. RNA transport is fully repressed). After extending the mode, simulate the temporal effects of the drug in your system using both deterministic and stochastic dynamics, and show how the system adapts from the no-drug steady state to the new steady state under a 90% effective drug treatment.

Solution—stochastic simulations

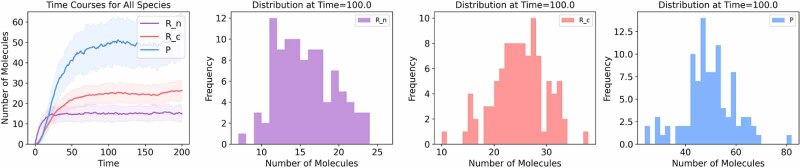

To solve the system under stochastic dynamics, we implement the SSA [85]. For this, we can implement the SSA by ourselves or use a library such as Gillespy2 [88]. In this step, ensure that your model is in the correct format that the library or your code requires. Figure C6 shows the temporal dynamics for all the variables in the system. In contrast to deterministic dynamics, the stochastic simulation returns different values for each run. It is common to run multiple repetitions (trajectories) to quantify the system variability. The simulation was performed for 100 independent trajectories, and the statistics of the different species can be obtained from the histograms representing the amount of particles for each species.

Figure C6.

Stochastic simulation analysis. A set of 100 individual trajectories were run. Each trajectory was used to build the median and standard deviation plotted on the time course in the top-right corner figure. A distribution of the values is given for each variable in the system. The simulation was performed using Gillespy2, [88]. Initial conditions, parameter values, and time span are reported in the tables above. Histograms were built by taking the species concentrations at time 100 of the simulation.

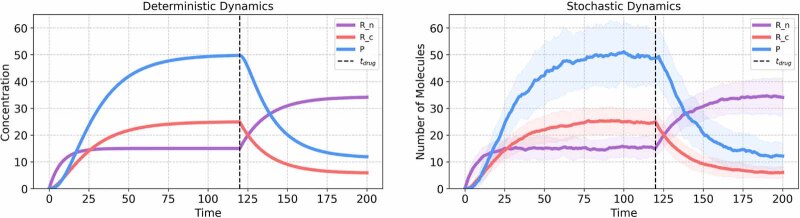

Solution—simulating drug perturbations

To model the effect of a drug that inhibits the mRNA transport, we employ a mathematical approach that allows the parameter kt, representing the rate of mRNA transport, to vary in response to drug application. This approach simulates conditions where the drug, once introduced, exerts a constant inhibitory effect throughout the duration of the simulation. The parameter kt is re-defined as follows:

Here, kt represents the baseline rate of mRNA transport in the absence of the drug. The parameter modulates the rate of mRNA transport in response to the drug’s presence. That is, a value of α = 0 would indicate complete inhibition of the mRNA transport process by the drug, while α = 1 would reflect no change in the transport rate. The time specifies the moment within the simulation timeline when the drug is introduced to the system.

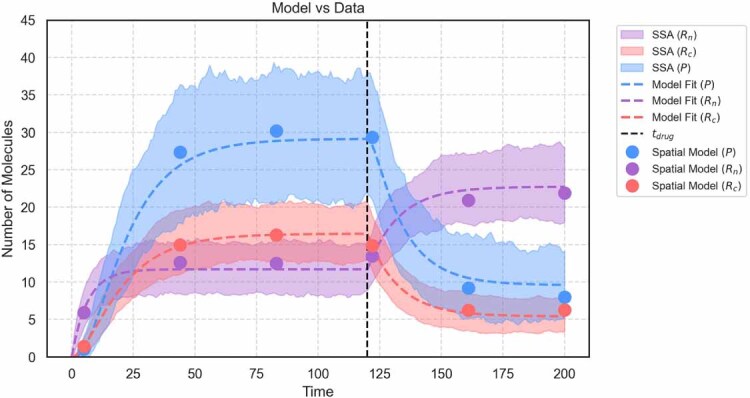

As a specific example, we reduced the transport rate as follows: . The application of the drug was simulated at time for a total simulation time, min. The model was solved under deterministic and stochastic dynamics (figure C7, top left and right, respectively).

Figure C7.

Effects of drug perturbation. Simulation of Drug inhibition at min, min−1, and min−1. For stochastic simulations, 100 trajectories were run.

5.5.1. Chemical master equation (CME) based approach

Using the SSA from the previous section, one can generate large sets of trajectories, where each trajectory is one statistical sample of the process behavior. However, because the process is random, every trajectory will be different, and none should be expected to match exactly to any real collection of data. To compare SSA models to actual data, one must generate large numbers of such samples at times and conditions of interest and then combine these data to form histograms. These histograms, when normalized to sum to one, approach the probability distributions of the system response at each point in time. For example, if one is interested in the probability of any particular state, xi, at some time, t, we can estimate the probability of that state:

where N is the number of SSA trajectories, and denotes the convergence rate of the error. Unfortunately, if there is a large number of possible states, then xi may be rare and could be very small. Because error in the estimate (equation (14)) converges with order , to obtain an accurate estimate for , one may need extremely large numbers of simulations.

Another approach to analyze the statistics of such processes is to define the Chemical Master equation (CME, also known as the forward Kolmogorov equation). The CME is a system of linear ODEs that directly describes the changes in the probability mass through time, , for all possible values for all xi that can be reached by the system. When solved, the CME contains all the information to fully describe the evolution of the system’s statistics, forgoing the need to generate SSA samples. Using standard notation, the CME can be written in terms of reactions that result in transitions out of state xi and reactions that result in a transition into state xi as follows:

Consistent with the notation described in section 5.1, the probability mass vector for the full state space can be defined as , and the CME can be written in a more condensed matrix form as:

where the infinitesimal generator matrix, A, is equal to:

Unfortunately, it is important to consider that for most realistic systems, the state space is infinite, meaning that an infinite number of ODEs are needed to formulate the CME. For this reason, approximate solution schemes are needed to solve the CME.

5.5.2. Finite state projection (FSP)

With the exception of very simple models, there is rarely an exact or analytical solution that can be computed, and the state space of the CME is often infinite or computationally intractable. To circumvent these issues, numerical approximations may be employed. One such technique is to project the CME state space onto a finite subset of states, naturally termed as finite state projection (FSP) [89–92]. FSP allows for manageable computation of the time evolution of the probability distribution over chemical reaction states while keeping track of, and enforcing bounds upon, the error that results from the truncation of the whole CME state space [93]. Additionally, the error threshold can be adjusted, and the size of the FSP subset iteratively contracted and expanded to find the optimal projection with high accuracy and low cost on computational efficiency.

Mathematically, the FSP method can be defined as follows: Let denote any enumeration of all possible states that can be reached by the system. Next, define as a finite set of indices, so that the set is a finite set of states. Also, let the complement set contain all of the states that were not included in . Using this notation, the full CME can be reordered as:

In the FSP approach, the second set of states is replaced by an absorbing sink, resulting in a new finite dimensional master equation:

where g(t) records the amount of probability mass to reach the absorbing sink. In this formulation of the master equation, once probability mass leaves , it cannot return and remains in the absorbing state, and g(t) therefore provides the exact probability mass that has left as a function of time.

In addition to providing an exact calculation for escape times, the FSP solution relates to the original CME solution in three important ways:

-

•

First, the FSP is a lower bound on the exact CME solution () for every possible state of the process. (Note: for finite CME state space, the FSP provides an exact analytical solution.)

-

•

Second, the exact total absolute error of the approximation is known, .

-

•

Third, the error of the FSP, g(t), decreases monotonically as one adds additional states to the set J.

With these three guarantees, one can then specify a maximum tolerable CME error as a monotonically increasing function, , where for every , and implement the FSP algorithm as follows [89, 92, 93]:

-

•

An initial state space XJ is selected, and the initial distribution along this space is extracted from .

-

•

The subsequent probabilities, , and the FSP approximation error, g(t), are integrated forward in time using equation (19). If g(t) exceeds a user-defined threshold function at any time, the integration is paused, and more states are added to XJ before continuing the integration.

-

•

The process is repeated until the final time, tf, at which point the approximation guarantees that the FSP solution is below the error threshold at all times.

The interested reader is invited to review the tutorial videos and Python Notebook provided in the appendix and the following example for further practice using the CME and FSP approaches to solve for gene regulation probability distributions.

5.6. UQ-Bio Summer School challenge—finite state projection analysis of gene regulation

The next step is to solve the gene regulation model system (section 5.2) using the FSP to approximate the CME solution. To this end, assume the same initial molecule counts and reaction rate constants as before, assume that the same inhibition process was simulated in drug application at time min, and simulate the system over the time interval min. The FSP solution provides the full probability distribution for each species at each time point. From these distributions, plot the mean and standard deviation of the molecular counts versus time, as well as the joint probability distributions for nuclear mRNA, cytoplasmic mRNA, and protein at the final time. Finally, compare your results with the deterministic and SSA solutions.

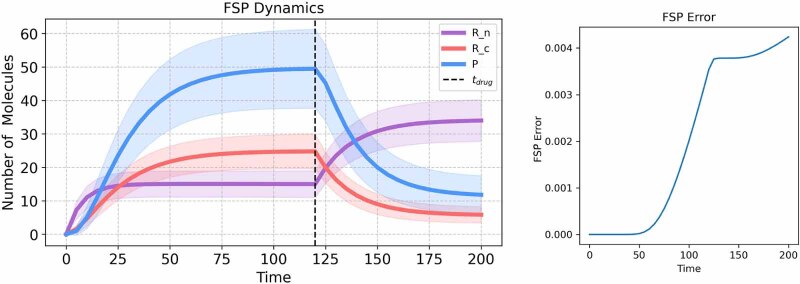

Solution—simulating the FSP

To model the system using the FSP approach, we implemented the FSP using the algorithm provided in the Github repository given in the appendix. The FSP projection space used in the simple Python implementation (which does not automatically adjust the size of the subset based on error) was limited to the set (, , array, resulting in an infinitesimal generator matrix A with 793 881 rows and columns. The initial probability mass vector, P0 was set as one for the given initial state (, , P = 0) and zero for all other states. Equation (19) is then solved using a Python implementation of Expokit [94] that uses a Krylov subspace approximation for exponentiation of large sparse matrices. We note that the codes provided in Python are meant primarily for educational purposes and are not intended for computationally intensive investigations. For example, this FSP calculation in Python took a computation time of 1 m 20.3 s on Apple M3 Pro, while a more established implementation in MATLAB (known as the Stochastic System Identification Toolkit and available at https://github.com/MunskyGroup/SSIT/) runs in approximately 0.032 s on the same computer and with the same bounds.

Upon solving the FSP for the joint distribution of all species, , it is straightforward to calculate model expected values (e.g. means) according to:

Similarly, the uncentered moments of are calculated by:

and variances and covariances are easily calculated as:

where x and y can be replaced with different combinations of Rn, Rc and P.

Using these calculations, figure C8 (left) shows the time course of the mean and standard deviation for each of the three species, which are in excellent agreement with the results from sections 5.4 and 5.5. Figure C8 (right) shows the FSP error versus time for the chosen model and choice of projection space, demonstrating that the total error is about 0.004 at the final time. To find the 2-species joint distributions, we simply marginalize over the left out species according to:

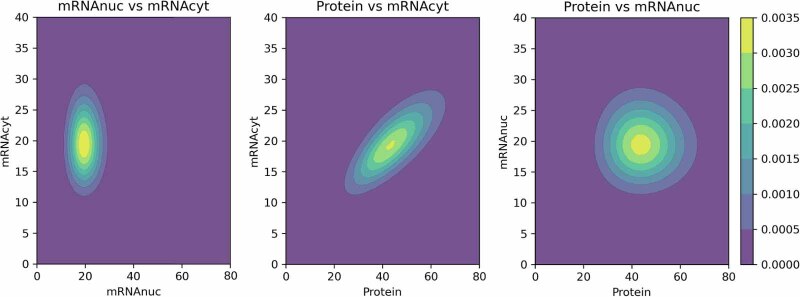

Figure C9 shows the joint probabilities between each pair of the three species calculated at the time t = 120 (min).

Figure C8.

FSP Solution and Error. (Left) Simulation of drug inhibition at min, with min−1 and min−1. (Right) FSP truncation error vs. time calculated as final term, g(t) in equation (19).

Figure C9.

The 2-species joint probability contours shown for each combination of the model species (Rn, Rc, P) at = 120 min.

6. Inferring model parameters from experimental data

With quantitative single-cell data (sections 3 and 4) and a solvable computational model (section 5), the next step is to use those data to constrain parameters of the model. In this section, we discuss a few of the simplest tools to fit models to data and extract parameters.

Model and parameter identification is the process of finding mechanisms or parameter sets that best describe or predict observed data. It is akin to finding the best-fitting line or curve that represents the relationship between variables in the dataset. Central to this process is the likelihood function, which quantifies the probability of observing the data under the specific set of model parameters [95]. Intuitively, we want to find those parameters that make the observations as plausible as possible, those that maximize the likelihood.

For example, consider observed data for a specific cell measured at time points . The likelihood function is

Note that here the data is fixed, and the likelihood depends on the parameters θ only.

For problems where an exact CME solution is computationally tractable, one can estimate the log-likelihood function in equation (24) directly. More generally, whenever the CME can be solved exactly or approximately using the FSP, likelihoods such as that in equation (24) can be computed. The most common way to do so is to rewrite the equation as a sequence of conditional probabilities:

Solving the CME up to time t1 allows us to compute , the probability distribution at the first point. Then, conditioning on the first observation and continuing the solution up to time t2 allows us to compute the second term, . This can be iterated for all time steps to arrive at the total likelihood (equation (25)). The details of this algorithm (which is generically called the forward algorithm for state-space models) can be found in [96, 97]. The general procedure of computing likelihoods of time-series as in equation (25) is called filtering, which includes the famous Kalman filter [98] and the HMM filter, another name for the algorithm outlined above.

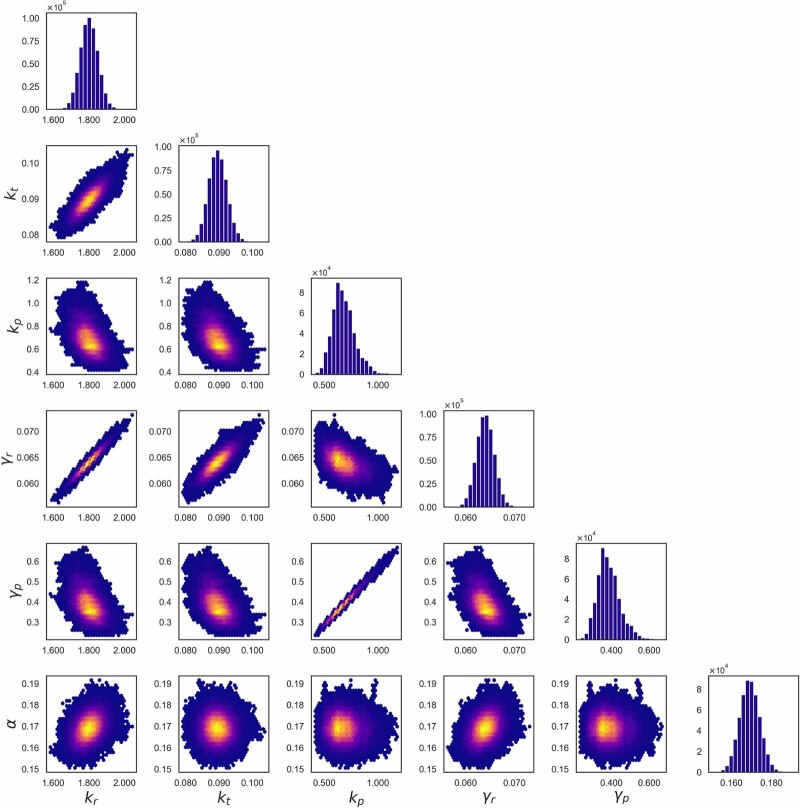

Observing a single cell does not yield much information about a system, especially for highly stochastic processes where cells can exhibit very variable behavior. For this reason, one often observes multiple independent cells at the same time; as long as these cells do not affect each other during the experiment, one can obtain the likelihood for all cells by multiplying the likelihoods in equation (24) for each cell. As a result, the computational effort for computing the likelihood increases linearly with the number of cells, but this is often a worthy trade-off given the improved estimates one usually gets. For many experimental approaches, such as smFISH or sequencing, one can only obtain a single snapshot measurement for each cell. In this case, if one assumes that every cell measurement is independent, where di refers to the cell, then the conditional distributions in equation (25) reduce to: