Abstract

Herbs have historically been central to medicinal practices, representing one of the earliest forms of therapeutic intervention. While synthetic drugs are often highly effective in treating acute conditions, their use is frequently accompanied by adverse side effects. In addition, the growing dependence on synthetic pharmaceuticals has raised concerns regarding affordability, thereby fostering a renewed interest in herbal medicine as a cost-effective and holistic alternative. In response to this need, the current study introduces a computer vision framework for accurate herb identification. A novel dataset, Herbify, was compiled from two different herb datasets and refined through rigorous cleaning, preprocessing, and quality control procedures. The resulting dataset was standardized via the Preprocessing Algorithm for Herb Detection (PAHD), producing a refined dataset of 6104 images representing 91 distinct herb species, with an average of about 67 images per species. Using transfer learning, the research employed pre-trained Convolutional Neural Networks (CNNs) and Vision Transformers (ViTs), then integrated these models into an ensemble framework that leverages the unique strengths of each architecture. Experimental results indicate that EfficientNet v2-Large achieved an F₁-score of 99.13%, while the ensemble of EfficientNet v2-Large and ViT-Large/16, termed EfficientL-ViTL, attained a higher F₁-score of 99.56%. The research also introduces the ‘Herbify’ application, an AI-driven tool that identifies herbs using the developed model. By directly tackling the principal obstacles in herb identification, the proposed system achieves a highly accurate and operationally viable classification mechanism. The experimental outcomes showcase top-tier performance in herb identification and emphasize the transformative potential of AI-based solutions in supporting botanical applications.

Supplementary Information

The online version contains supplementary material available at 10.1186/s13007-025-01421-5.

Keywords: Medicinal plants, Herb classification, Convolutional neural networks, Vision transformers, Ensemble, Transfer learning, Deep Learning

Introduction

Herbs have historically been valued for their medicinal, nutritional, and cultural significance across various regions [1]. The global herbal medicine market is substantial and continues to expand, driven by rising consumer interest in natural healthcare approaches [2]. Valued at USD 216.40 billion in 2023, this market is projected by the World Health Organization (WHO) to reach USD 5 trillion by 2050 [3]. According to a WHO report, nearly 80% of the global population relies primarily on plant-based and traditional herbal remedies for healthcare, underscoring their widespread use around the world [4].

Natural, plant-based medicines are gaining ground over synthetic drugs. A global emphasis on sustainable practices is fostering efforts to explore herbal medicine as a promising alternative to synthetic pharmaceuticals [2, 5]. Although synthetic drugs are highly effective for acute conditions, they often carry adverse side effects, including kidney and liver damage. For instance, prolonged use of certain non-steroidal anti-inflammatory drugs (NSAIDs) can increase the risk of ulcers and kidney damage [8]. Furthermore, widespread reliance on synthetic drugs raises concerns regarding affordability, thereby positioning herbal medicines as a more cost-effective alternative [6, 7].

This growing emphasis on herbal medicine is largely attributable to the minimal side effects and non-toxic attributes of many naturally occurring medicinal plants [9–11]. For example, recent research has confirmed that curcumin, a key compound found in turmeric, exhibits anti-cancer properties, underscoring its significance in the healthcare sector [12].

By 2023, smartphones had become indispensable to everyday life, with over 6.92 billion users globally, roughly 86.29% of the world’s population [13]. This reach generates substantial opportunities for innovation, particularly in plant identification. Using smartphones equipped with high-resolution cameras, powerful processors, and internet connectivity to identify medicinal plants can offer significant benefits, especially in regions where access to expert knowledge or comprehensive reference materials is limited, and it opens the way for real-time applications such as herb identification [11]. Rapid advances in computer vision, especially through models such as convolutional neural networks (CNNs) and vision transformers (ViTs), have significantly enhanced the precision and efficiency of plant recognition systems. These models can be trained on large, diverse datasets to identify a wide array of species, including medicinal plants, even under varying environmental conditions.

Convolutional neural networks excel at extracting local features such as edges, textures, and shapes through their convolutional layers, while pooling layers provide translation invariance, thereby enhancing spatial understanding. Vision transformers, by contrast, adeptly capture global context using self-attention mechanisms to analyze long-range dependencies within an image [14]. Unlike CNNs, which rely on hierarchical feature maps, ViTs segment an image into patches and treat each patch as an input token, like words in natural language processing, thus offering a flexible method of representing visual data [15]. Hybrid models exploit the complementary strengths of these architectures, for instance, by using CNNs to extract local features and ViTs for broader contextual reasoning. In some designs, both architectures process the image concurrently, with their outputs fused for superior performance. This combination enables robust solutions in tasks such as image classification, object detection, and medical imaging. By integrating CNNs’ local feature extraction with ViTs’ global comprehension, hybrid models achieve state-of-the-art performance across diverse and challenging visual domains.
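The patch-based tokenization described above can be illustrated with a minimal sketch. It is not taken from the study: the 224 × 224 input size and the `patchify` helper are assumptions chosen for illustration, while the 16 × 16 patch size follows from the "/16" in ViT-Large/16.

```python
def patchify(image, patch):
    """Split an H x W image (list of rows) into flattened, non-overlapping patches."""
    height, width = len(image), len(image[0])
    if height % patch or width % patch:
        raise ValueError("image sides must be multiples of the patch size")
    tokens = []
    for top in range(0, height, patch):
        for left in range(0, width, patch):
            # Flatten one patch row by row; a ViT would then project it linearly
            # into an embedding and add a positional encoding.
            tokens.append([image[r][c]
                           for r in range(top, top + patch)
                           for c in range(left, left + patch)])
    return tokens

# Hypothetical 224 x 224 single-channel image (synthetic values, not real data).
image = [[(r * 224 + c) % 256 for c in range(224)] for r in range(224)]
tokens = patchify(image, 16)
print(len(tokens), len(tokens[0]))  # 196 256
```

Each of the 196 tokens plays the role of a "word" in the sequence the transformer attends over, which is how self-attention can relate distant regions of a leaf in a single layer.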

Many existing research efforts in medicinal plant identification are limited by the small scale of the datasets on which their models are trained. For example, the DeepHerb dataset—developed to address data scarcity—originally comprised only 2515 images, gathered primarily through scanners and mobile phones [10]. While some studies have employed CNNs to capture local features or ViTs to grasp global context, these models often fall short by using only one of the two techniques, thereby limiting classification robustness. Even when hybrid models have been attempted, ensemble learning strategies were frequently overlooked, leading to suboptimal performance. Moreover, real-time application of these models remains largely unexplored, with few, if any, studies offering a deployable interface for practical use.

To overcome these limitations and produce a more comprehensive, uniform, and high-quality resource, this study introduces a novel dataset named ‘Herbify.’ This dataset is formed by merging two distinct repositories, DIMPSAR [9] and DeepHerb [10], resulting in a total of 6104 images spanning 91 unique classes. This research also presents a hybrid ensemble model that combines the local feature extraction capabilities of CNNs with the global context awareness provided by ViTs, thereby enhancing overall classification accuracy.

This study aims to address practical constraints in real-world scenarios and in existing research through two core strategies. First, the newly consolidated ‘Herbify’ dataset offers extensive coverage of various medicinal plants, thereby mitigating issues related to limited data. The comprehensive preprocessing module PAHD was developed to standardize the dataset and improve image quality, with a primary focus on minimizing background variance and noise, which in turn improves classification accuracy. Data augmentation further enhances both the volume and diversity of the dataset. Second, an ensemble deep learning framework that capitalizes on CNNs for local feature extraction and ViTs for global context allows for precise herb identification. Finally, to ensure real-time usability, the study translates this framework into a fully functional web-based application that not only identifies medicinal plants but also provides the scientific name and a resemblance probability. This user-friendly interface paves the way for seamless, real-time herb identification, thereby extending the benefits of the research to a broader audience.

Literature review

In recent years, the detection and classification of herbs using advanced image-based techniques have gained significant attention for promoting sustainable agriculture and safeguarding global health. Integrating sophisticated image processing methods with deep learning architectures has shown considerable success in accurately identifying and categorizing herbs.

Murugan Prasathkumar et al. (2021) spotlight various Indian medicinal plants, discussing their phytocompounds and pharmacological properties, such as antimicrobial, antioxidant, antidiabetic, and anticancer effects, across 14 families. Among these, Senna auriculata is noted for its wide range of medicinal benefits, while B. asiatica, D. metel, and A. marmelos also exhibit notable therapeutic value. The authors investigate 20 Indian medicinal plants used in traditional polyherbal formulations within Ayurveda, Unani, and Siddha, thereby providing valuable insights for the advancement of ethno-medicine [16]. Subsequently, Pushpa et al. (2023) introduced the DIMPSAR dataset [17], comprising 5900 images of 40 plant species and 6900 single-leaf images spanning 80 species, all collected under real-time Indian conditions [9]. Leveraging this dataset, they developed Ayur-PlantNet, a lightweight CNN that achieves 92.27% accuracy in Ayurvedic plant identification [9, 18]. In a related effort, Roopashree et al. (2021) curated DeepHerb, a dataset with 2515 images covering 40 herb species, enabling a vision-based identification system that attained 97.5% accuracy. Their “HerbSnap” app demonstrates practical use, although DeepHerb faces challenges when dealing with compound leaves [10].

The study by B.R. Pushpa et al. (2024) presents a hierarchical classification model for 100 Indian medicinal plants, integrating convolutional and conventional features in conjunction with Random Forest classifiers. When evaluated on both a self-built leaf dataset (GSL100) and real-time datasets (RTL80 and RTP40), the model achieved 94.54% accuracy on GSL100 and 75.46% on the real-time datasets, surpassing existing methods. This improvement underscores the model’s robustness and efficiency under real-world conditions [19]. Meanwhile, Chin Poo Lee et al. (2023) proposed a Plant-CNN-ViT ensemble that fuses Vision Transformer, ResNet-50, DenseNet-201, and Xception to address plant classification in data-scarce settings, achieving near-perfect accuracy on four plant leaf datasets: 100.00% on the Flavia, Folio, and Swedish Leaf datasets, and 99.83% on the MalayaKew Leaf dataset. Despite its higher complexity, the model effectively captures spatial details and mitigates overfitting, indicating its potential for broader applications, such as plant disease detection [20].

Mirzapour et al. (2023) proposed the Controllable Ensemble Transformer and CNN (CETC) model, which integrates CNNs and transformers to capture both local and global features for medical image classification. The architecture combines convolutional encoders, transposed-convolutional decoders, and a transformer-based classifier, achieving superior performance on two publicly available COVID-19 datasets [21]. Khan et al. (2025) proposed an optimized ensemble model for diabetic retinopathy detection, integrating advanced preprocessing (CLAHE, Gamma correction, and DWT) with three pre-trained CNNs (DenseNet169, MobileNetV1, Xception). The model uses the Salp Swarm Algorithm to dynamically assign ensemble weights, achieving 89.07% accuracy on the APTOS 2019 dataset and demonstrating strong performance across multiple evaluation metrics [22]. Hekmat et al. (2025) proposed a DE-optimized ensemble combining MobileNetV1, MobileNetV2, and ResNet50V2, achieving up to 98% accuracy in brain tumor detection, with improved interpretability using Grad-CAM [23]. Nanni et al. (2023) proposed ensembles of diverse CNN and transformer models optimized with novel Adam-based variants, demonstrating superior performance across multiple benchmarks and highlighting the benefits of combining varied architectures and optimizers for image classification [24]. Abulfaraj et al. (2025) proposed a multi-label image classification ensemble integrating a Vision Transformer (ViT) with enhanced MobileNetV2 and DenseNet201 models using parallel convolutional layers and a voting mechanism. The model achieved accuracies of 98.24% on VOC 2007, 98.89% on VOC 2012, 99.91% on MS-COCO, and 96.69% on NUS-WIDE 318. Their approach demonstrated superior performance over individual models, effectively combining global and local feature learning [25].

Amin et al. (2023) introduced a Deep Learning Based Active Learning (DLBAL) framework combining EfficientNet-B0 with an enhanced sample selection strategy to reduce manual annotation in image classification. Unlike conventional uncertainty-based methods, DLBAL incorporates high-confidence samples to improve learning efficiency. Experimental results on CACD and Caltech-256 show that the method outperforms existing approaches in both accuracy and annotation cost reduction [26]. In other work, Amin et al. (2022) proposed EADN, a lightweight deep learning model for anomaly detection in surveillance videos, combining shot segmentation, time-distributed CNN layers, and LSTM for spatiotemporal feature learning. The model achieved AUCs of 93% on UCSDped1, 97% on UCSDped2 and CUHK Avenue, and 98% on UCF-Crime, outperforming state-of-the-art methods while maintaining low computational cost and false alarm rates [27]. Amin et al. (2023) also proposed ADSV, an attention-based deep learning model combining LWCNN, LSTM, and attention mechanisms for anomaly detection in surveillance videos. Using shot segmentation and time-distributed layers, the model processes chronologically ordered frames to extract spatiotemporal features. Evaluated on the CUHK-Avenue and UCF-Crime datasets, ADSV achieved accuracy improvements of 12.9% and 14.88% over existing methods, with reduced false alarms and a compact model size of 54.1 MB. The approach demonstrates strong generalization while maintaining computational efficiency [28].

In another study, N. Rohith et al. (2023) enhanced VGG-19-BN and ResNet101 by incorporating advanced attention modules such as CBAM and ECANet, thereby allowing the networks to focus on critical regions of medicinal plant images. Employing the DIMPSAR dataset [9, 17], the authors curated a final dataset of 146 samples per class across 40 classes, demonstrating the benefits of attention mechanisms in improving classification performance [5].

Several hybrid models have also been introduced to bolster medicinal plant classification. Tiwari et al. (2024) developed MedLeafNet, a CNN-ViT architecture trained on a subset of the PlantVillage dataset containing 38 plant disease classes and 54,272 images. By concentrating on 20 targeted classes, they addressed dataset imbalance and achieved 95–96% accuracy, surpassing conventional techniques. MedLeafNet employs contrast boosting, sharpening, and color-based segmentation for preprocessing and leverages data resampling and augmentation to enhance generalization. This system is deployed as a web-based platform offering real-time disease identification and prevention recommendations [29]. Khan et al. (2025) proposed a hybrid ensemble model combining Vision Transformer (ViT-L16) with CNNs (ResNet50, EfficientNetB1, and a custom ProDense block) for breast cancer detection from mammograms. The model achieved 98.08% accuracy on the INbreast dataset, outperforming existing methods by leveraging both transformer-based global feature extraction and deep CNN features [30]. Similarly, Hajam et al. (2023) applied VGG16, VGG-19, and DenseNet201 with ensemble learning to classify leaves from 30 medicinal plant classes, comprising a total of 1835 images. Their best-performing ensemble, VGG-19 and DenseNet201, achieved a 99.12% test accuracy, underlining ensemble learning’s efficacy in reducing dependence on manual expertise for medicinal plant identification [31]. In another effort, Kunjachan et al. (2024) employed a CNN with four convolutional layers, pooling operations, a fully connected layer, and a softmax classifier for herb recognition. Using the ReLU activation function and the Adam optimizer with a time-based decay learning rate, the model achieved 96.25% accuracy, affirming the utility of deep learning, preprocessing, and augmentation for medicinal plant applications [32]. Beyond pure image analysis, Liu et al. (2022) introduced TCMBERT, a two-stage transfer learning framework for traditional Chinese medicine (TCM) prescription generation from limited clinical records. This method effectively handles sequence generation tasks, outperforming existing models in both qualitative and quantitative evaluations [33]. Lastly, Mujahid et al. (2024) achieved 92.66% accuracy in classifying Indonesian herbal leaves using CNNs on a dataset gathered via Bing Downloader Scraping, demonstrating low loss values and reliable feature extraction for herbal medicine research and biodiversity protection [34].

In our earlier work [35], we introduced an integrated framework that combines convolutional neural networks and vision transformers for precise soil‐type classification, alongside a fuzzy-logic engine to generate crop‐recommendation profiles. These methods were encapsulated in a mobile application capable of both rapid soil analysis and tailored crop‐suitability guidance. In this study, we build upon that foundation by adapting and extending our computer-vision and decision-support pipeline to the automated identification of medicinal herbs.

Despite notable progress in automated herb recognition, current research is still hampered by several persistent limitations. First, there is an acute shortage of well-curated, herb-specific image corpora. The few public collections that do exist are often small, imbalanced, and contain mislabeled or poor-quality images, all of which undermine the training of data-hungry deep networks. Second, most studies adopt either convolutional neural networks to exploit local, fine-grained texture cues or vision transformers to capture long-range contextual relationships, but rarely integrate the complementary strengths of both families of models. Consequently, many published systems perform well under controlled conditions yet generalize poorly to the heterogeneous settings encountered in the field. Finally, even when technical accuracy is satisfactory, research prototypes seldom mature into user-friendly, real-time tools, limiting their practical value for agronomists, clinicians, and end-users.

In response, the present work makes three intertwined contributions. (i) We assemble and rigorously standardize a large-scale medicinal-herb image repository, cleaning labels and removing low-quality samples to ensure reliable supervision. (ii) We introduce PAHD, a preprocessing pipeline that enhances color constancy and mitigates background noise, and we propose a hybrid ensemble in which CNN backbones and ViT components are jointly optimized to fuse local and global features. (iii) We deploy this model in “Herbify”, a cross-platform application that delivers fast, on-device inference and an intuitive interface for real-time herb identification.

Methodology

The study aims to develop an advanced artificial intelligence framework designed for the accurate identification of common herbs. Figure 1 presents a comprehensive overview of the workflow underlying the methodology. The methodology is structured into two primary phases, each focusing on distinct objectives essential for achieving a robust AI model. Phase 1 centers on the creation of a comprehensive and large-scale herb dataset, named ‘Herbify.’ This phase involves the integration and meticulous cleaning of the sub-datasets, which are processed to form the complete Herbify dataset. The dataset creation process emphasizes rigorous data standardization to ensure high-quality inputs for the model’s subsequent training and application stages. Phase 2 focuses on constructing and deploying the AI framework. It begins with normalizing the Herbify dataset into a consistent format that facilitates effective model training. Following this standardization, the dataset is partitioned into subsets for training, validation, and testing. The main emphasis of this phase is on model training, which employs state-of-the-art techniques such as convolutional neural networks (CNNs) and vision transformers (ViTs). The models are trained and then rigorously tested to assess their performance in herb classification. Following training, the framework transitions to the application stage, where CNNs and ViTs are combined through ensembling techniques to optimize classification accuracy. The best-performing ensemble model is then incorporated into a fully functional, web-based application designed for end-user accessibility, providing a seamless interface for practical herb identification.
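The partitioning step in Phase 2 can be sketched as a stratified split, which keeps every herb class represented in each subset. This section does not state the split ratios or the tooling used, so the 70/15/15 fractions, the seed, and the `stratified_split` helper below are hypothetical; the class sizes 7 and 163 are the smallest and largest Herbify classes reported later in the paper.

```python
import random
from collections import defaultdict

def stratified_split(labels, fractions=(0.7, 0.15, 0.15), seed=0):
    """Return train/val/test index lists, splitting each class separately.

    Only the validation and test fractions are used directly; the training
    split receives whatever remains of each class.
    """
    by_class = defaultdict(list)
    for index, label in enumerate(labels):
        by_class[label].append(index)
    rng = random.Random(seed)
    train, val, test = [], [], []
    for label in sorted(by_class):
        indices = by_class[label]
        rng.shuffle(indices)
        # Guarantee at least one validation and one test image per class.
        n_val = max(1, round(len(indices) * fractions[1]))
        n_test = max(1, round(len(indices) * fractions[2]))
        val.extend(indices[:n_val])
        test.extend(indices[n_val:n_val + n_test])
        train.extend(indices[n_val + n_test:])
    return train, val, test

# Two illustrative classes of very different size.
labels = ["small_class"] * 7 + ["large_class"] * 163
train, val, test = stratified_split(labels)
print(len(train), len(val), len(test))
```

Splitting per class matters for a dataset this imbalanced: a purely random split could leave a seven-image class without any validation or test examples.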

Fig. 1.

The overall two-phase workflow adopted in this study’s methodology. In Phase I (Processing), the raw DIMPSAR corpus is first cleaned and quality-verified, then standardized using the PAHD algorithm. The standardized DIMPSAR data is subsequently merged with the DeepHerb dataset to create the Herbify dataset, which undergoes further manual verification, cleaning and normalization to ensure consistency. In Phase II (Development), the datasets are preprocessed and partitioned into training, validation and test splits; multiple candidate models are then trained and evaluated. The models are then combined into various ensembles. Finally, the top-performing ensemble is selected and deployed within the herb-identification application

The study makes several notable contributions, including an advanced preprocessing pipeline, referred to as PAHD (Preprocessing Algorithm for Herb Detection), and the novel Herbify dataset. It also develops hybrid models, created by ensembling state-of-the-art CNNs and ViTs, which enhance performance by leveraging the unique strengths of each architecture. The final product, a user-friendly web application, offers reliable herb recognition capabilities, making the AI framework accessible for practical, real-world applications.
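One common way to ensemble a CNN and a ViT is soft voting, in which the class probabilities of the individual models are averaged. The fusion rule actually used in the study is not specified in this section, so the sketch below is only an illustration of the general idea; the logits, the equal weights, and the function names are hypothetical.

```python
import math

def softmax(logits):
    """Convert raw scores to probabilities (shifted by the max for stability)."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def soft_vote(prob_lists, weights=None):
    """Weighted average of per-model class probabilities."""
    weights = weights or [1.0] * len(prob_lists)
    norm = sum(weights)
    n_classes = len(prob_lists[0])
    return [sum(w * p[k] for w, p in zip(weights, prob_lists)) / norm
            for k in range(n_classes)]

# Hypothetical logits for three herb classes.
cnn_probs = softmax([2.0, 1.9, 0.1])  # CNN narrowly prefers class 0
vit_probs = softmax([0.5, 2.5, 0.2])  # ViT clearly prefers class 1
ensemble = soft_vote([cnn_probs, vit_probs])
predicted = max(range(len(ensemble)), key=ensemble.__getitem__)
print(predicted)  # 1
```

In this toy case the confident ViT prediction outweighs the CNN's marginal preference, which is the kind of complementary behavior an ensemble is meant to exploit.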

Herb datasets

The study employs two primary sub-datasets, DIMPSAR [9] and DeepHerb [10], along with the newly processed and merged Herbify dataset. The DIMPSAR dataset was individually preprocessed, formatted, and then merged with the DeepHerb dataset to create the comprehensive Herbify dataset used for the final analyses. Each dataset is organized in a standardized format, with folders representing herb classes, and all images for a given class stored within the respective folder. Overall, the research encompasses three distinct dataset variations: the processed DIMPSAR dataset, the DeepHerb dataset, and the final, integrated Herbify dataset. This progression from individual datasets to a unified, comprehensive dataset underpins the study’s robust framework for medicinal herb classification.
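The folder-per-class layout makes indexing and merging straightforward. The sketch below is an illustration rather than the authors' tooling: the helper names, the accepted file suffixes, and the choice to match class folders case-insensitively (on the assumption that capitalization may differ between sources) are all hypothetical, and the demonstration uses empty placeholder files in a temporary directory.

```python
from pathlib import Path
import tempfile

IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png"}  # assumed file types

def index_dataset(root):
    """Map normalized class name -> sorted image paths for a folder-per-class dataset."""
    index = {}
    for class_dir in sorted(Path(root).iterdir()):
        if class_dir.is_dir():
            name = class_dir.name.strip().lower()
            images = sorted(p for p in class_dir.iterdir()
                            if p.suffix.lower() in IMAGE_SUFFIXES)
            index.setdefault(name, []).extend(images)
    return index

def merge_indexes(*indexes):
    """Combine indexes so that classes present in several sources are consolidated."""
    merged = {}
    for index in indexes:
        for name, images in index.items():
            merged.setdefault(name, []).extend(images)
    return merged

# Tiny demonstration with placeholder files.
with tempfile.TemporaryDirectory() as tmp:
    layout = {
        "dimpsar": {"Azadirachta indica": 2, "Curcuma longa": 1},
        "deepherb": {"Azadirachta Indica": 3, "Santalum Album": 1},
    }
    for dataset, classes in layout.items():
        for class_name, count in classes.items():
            folder = Path(tmp, dataset, class_name)
            folder.mkdir(parents=True)
            for i in range(count):
                (folder / f"{i}.jpg").touch()
    merged = merge_indexes(index_dataset(Path(tmp, "dimpsar")),
                           index_dataset(Path(tmp, "deepherb")))
    class_counts = {name: len(paths) for name, paths in merged.items()}

print(class_counts)
```

Name matching alone would not catch botanical synonyms, so a manual verification pass would still be needed after an automated merge of this kind.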

The herbs and their plants in the datasets possess medicinal properties, with their leaves offering significant benefits for treating various human ailments. Moreover, other components of these plants, including the bark, fruits, and seeds, are crucial in producing a diverse array of medicinal compounds [10]. Their simple cultivation process makes them ideal candidates for growth in home or community gardens with minimal effort. Disseminating knowledge about these medicinal herbs and their practical applications is essential for increasing public awareness and promoting their use in natural health practices [10, 36].

DIMPSAR dataset

The DIMPSAR dataset comprises images of Indian medicinal plants, curated through fieldwork conducted in various botanical gardens across Karnataka and Kerala, India [9]. To emulate a smartphone-based image acquisition approach, a group of smartphone users captured the images, ensuring the dataset reflects real-world conditions with diverse perspectives, variations in leaf color, multiple resolutions, and differing capture distances. Images were acquired using fixed-lens smartphone cameras, with spatial resolutions ranging from 2560 × 1920 to 5312 × 2988 pixels. This acquisition process contributes to the dataset’s authenticity, capturing the challenges of real-time conditions such as fluctuating lighting, occlusions, and variable backgrounds. As a result, the DIMPSAR dataset offers substantial value for applications in plant analysis and recognition in realistic environments.

To address class imbalance issues inherent in the dataset, the dataset’s authors employed image augmentation techniques. The original DIMPSAR dataset contained two main image sets: 5900 images representing forty plant species, and a second set comprising single-leaf images of eighty species with a total of 6900 samples [9, 17]. Since this study specifically focuses on single-leaf image-based herb detection, only the latter set, which originally contained eighty plant species, was selected. Notably, this set included duplicate classes; after similar images were removed, it was reduced to seventy-nine distinct plant species. The original dataset presented several quality concerns, including low-resolution images, noise, background biases, motion artifacts, out-of-focus regions, suboptimal cropping, and occlusions. To address these issues, a thorough manual cleaning process was conducted. Images with particularly low resolution, high background bias, significant motion artifacts, or severe occlusions were removed, while those with minor issues, such as out-of-focus regions or poor cropping, were manually corrected. Given the dataset’s varied backgrounds and inherent inconsistencies, a novel preprocessing algorithm named PAHD was introduced to standardize the images, ensuring a consistent format across samples.

The DIMPSAR dataset variation produced by this study consists of 4735 images spanning seventy-nine classes, with each herb class manually cleaned and standardized using the PAHD preprocessing algorithm. This enhanced dataset provides a reliable foundation for herb detection and medicinal plant classification in real-world applications. The scientific names of the herb species included in the final, refined version of the DIMPSAR dataset are as follows: Allium cepa, Aloe barbadensis miller, Andrographis paniculata, Annona squamosa, Artocarpus heterophyllus, Azadirachta indica, Bacopa monnieri, Bambusa vulgaris, Basella alba, Brassica oleracea, Calotropis gigantea, Capsicum annuum, Cardiospermum halicacabum, Carica papaya, Catharanthus roseus, Chamaecostus cuspidatus, Cinnamomum camphora, Citrus limon, Citrus medica, Coffea arabica, Coleus amboinicus, Colocasia esculenta, Coriandrum sativum, Cucurbita, Curcuma longa, Cymbopogon, Ducati Panigale, Eclipta prostrata, Eucalyptus globulus Labill, Euphorbia hirta, Gomphrena globosa, Graptophyllum pictum, Hibiscus rosa-sinensis, Hymenaea courbaril, Ixora coccinea, Jasminum, Justicia adhatoda, Lantana camara, Lawsonia inermis, Leucas aspera, Magnolia champaca, Mangifera indica, Manilkara zapota, Mentha, Momordica dioica, Morinda citrifolia, Moringa oleifera, Murraya koenigii, Neolamarckia cadamba, Nerium oleander, Nyctanthes arbor-tristis, Ocimum basilicum, Ocimum tenuiflorum, Papaver somniferum, Phaseolus vulgaris, Phyllanthus emblica, Piper betle, Piper nigrum, Pisum sativum, Pongamia pinnata, Psidium guajava, Punica granatum, Radermachera xylocarpa, Raphanus sativus, Ricinus communis, Rosa rubiginosa, Ruta graveolens, Saraca asoca, Saraca asoca, Solanum lycopersicum, Solanum nigrum, Spinacia oleracea, Syzygium cumini, Tagetes, Tamarindus indica, Tecoma stans, Tinospora cordifolia, Wrightia tinctoria, and Zingiber officinale.

DeepHerb dataset

The DeepHerb dataset was developed to address the lack of available datasets specifically for medicinal herbs. This dataset was collected from various locations across Karnataka, India, with images captured either by mobile phone or scanner. The original dataset contained 2515 images at a resolution of 1600 × 1200 pixels, encompassing forty distinct classes [10]. The openly available version of the DeepHerb dataset has been refined to 1835 images spanning thirty of the original forty classes [37]. Since the DeepHerb dataset was already in a cleaned and standardized format suitable for the study’s requirements, no additional cleaning or processing was necessary, and it was incorporated into the current study in its existing form. The scientific names of the herb species included in the current version of the DeepHerb dataset are as follows: Alpinia Galanga, Amaranthus Viridis, Artocarpus Heterophyllus, Azadirachta Indica, Basella Alba, Brassica Juncea, Carissa Carandas, Citrus Limon, Ficus Auriculata, Ficus Religiosa, Hibiscus Rosa-sinensis, Jasminum, Mangifera Indica, Mentha, Moringa Oleifera, Muntingia Calabura, Murraya Koenigii, Nerium Oleander, Nyctanthes Arbor-tristis, Ocimum Tenuiflorum, Piper Betle, Plectranthus Amboinicus, Pongamia Pinnata, Psidium Guajava, Punica Granatum, Santalum Album, Syzygium Cumini, Syzygium Jambos, Tabernaemontana Divaricata, and Trigonella Foenum-graecum.

Herbify dataset

The Herbify dataset is a comprehensive, expanded compilation of medicinal herb images, derived from the cleaned and processed versions of the DIMPSAR and DeepHerb datasets. This merged dataset underwent additional manual verification, with any anomalies or problematic images carefully removed. Herb species common to both DIMPSAR and DeepHerb were consolidated to create a unified dataset of 91 herb species. Images within the Herbify dataset vary in spatial resolution from 103 × 94 pixels to 4236 × 4447 pixels, with an average resolution of approximately 1267 × 1135 pixels. The dataset includes a total of 6104 images. Each species class contains between 7 and 163 images, with an average of about 67 images per class.
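The reported figures can be cross-checked with simple arithmetic. The calculation below uses only numbers stated in this paper; the derived values are implications rather than figures reported by the authors, and they assume that no class was dropped entirely during verification and that no images were added after merging.

```python
# Figures reported for the three dataset variations.
dimpsar_images, dimpsar_classes = 4735, 79
deepherb_images, deepherb_classes = 1835, 30
herbify_images, herbify_classes = 6104, 91

# Classes counted twice before consolidation (species present in both sources).
shared_classes = dimpsar_classes + deepherb_classes - herbify_classes
# Images that did not survive the additional manual verification.
removed_images = dimpsar_images + deepherb_images - herbify_images
mean_per_class = herbify_images / herbify_classes

print(shared_classes, removed_images, round(mean_per_class, 2))  # 18 466 67.08
```

Under these assumptions, 18 species are shared between the two sources and 466 images were discarded during the final verification pass.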

The Appendix provides a detailed description of the Herbify dataset. For each herb, it lists a sample image, the scientific name, the common name, the number of available samples, the corresponding sub-dataset, the threat status, the medicinal properties, and the typical location.

Dataset preparation

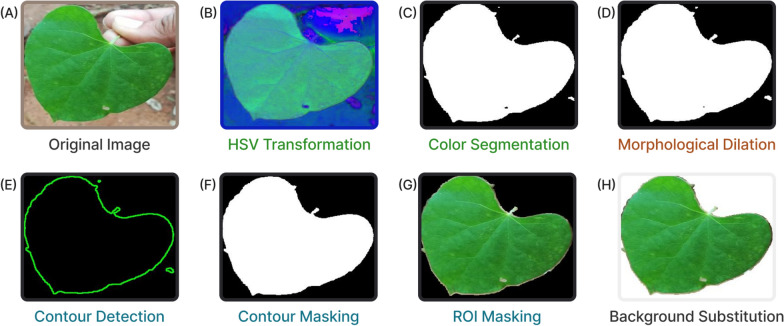

Data preprocessing represents a critical phase in deep learning pipelines, particularly when handling datasets like DIMPSAR, which present challenges due to substantial background variability, irregularities, and extraneous elements. The application of a robust preprocessing algorithm effectively addresses these issues, enhancing image quality and consistency prior to input into deep learning models. For example, images within the DeepHerb dataset are standardized, with only the herb visible against a uniform white background. In contrast, the DIMPSAR dataset presents highly varied backgrounds, often including additional elements such as human hands and other non-essential objects that introduce noise and visual clutter. To address these challenges, a novel preprocessing approach was implemented, referred to as the Preprocessing Algorithm for Herb (object) Detection (PAHD). The PAHD algorithm systematically reduces background interference and extraneous elements in DIMPSAR images, facilitating clearer input for model training. Figure 2 illustrates the step-by-step application and outcome of PAHD, while Algorithm 1 provides a detailed breakdown of each stage. An example of background clutter is shown in Fig. 2 (A), where a leaf is depicted along with a visible hand holding it. Such extraneous details can introduce inconsistencies in learning processes during model training, potentially affecting model performance.

Fig. 2.

Workflow of the Preprocessing Algorithm for Herb Detection (PAHD), comprising RGB → HSV conversion, color-based leaf segmentation, morphological dilation, contour detection and masking, ROI extraction, and background substitution

Data augmentation is a fundamental preprocessing strategy that significantly boosts both the volume and diversity of data available for model training. Applying these transformations exclusively to the training set yields multiple variants of the original data, thereby strengthening the model’s resilience and improving its generalization capabilities. Moreover, deep learning architectures typically demand input images to conform to specific size, quality, and format requirements; preprocessing steps guarantee that these conditions are satisfied [38].

This preparatory stage is vital for boosting the model’s ability to detect patterns and extract salient features, even when the dataset possesses inherent limitations. Overall, preprocessing enhances model robustness and improves generalization to unseen data.

The subsequent sections offer a comprehensive overview of the preprocessing methods utilized in this study.

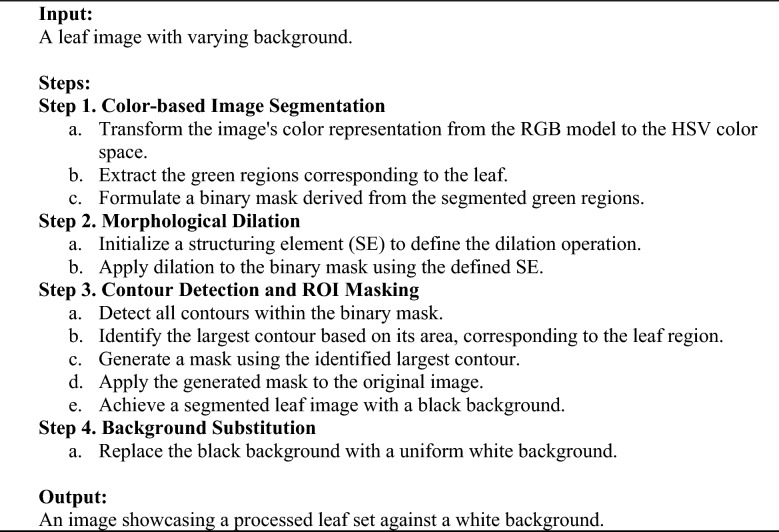

Algorithm 1.

Preprocessing Algorithm for Herb Detection (PAHD)

PAHD: color-based image segmentation

One major challenge in processing herb images is the frequent occurrence of various objects in both the foreground and background, beyond just the leaf itself. Addressing this issue effectively requires leveraging color information, which can be done through transformations in color space. The first step in the PAHD algorithm is thus color-based image segmentation, where green regions are extracted to target the segmentation of the leaf. The process starts by converting the input image from the RGB (Red, Green, and Blue) model into the HSV (Hue, Saturation, and Value) format.

The RGB color space, while prevalent in digital imaging, is suboptimal for color-based segmentation tasks due to its sensitivity to lighting variations, which often leads to inconsistent pixel intensity values for similarly colored objects. By contrast, the HSV color space separates color into three distinct channels (hue, saturation, and value), offering more stable and reliable segmentation under variable lighting conditions. In particular, hue indicates the specific color (for example, red, green, or blue), saturation measures how vivid or pure that color is, and value reflects its brightness or lightness [39]. The equations for converting an image from RGB to HSV are provided in Eqs. 1–5. In the RGB color model, the values R, G, and B indicate the intensity of the red, green, and blue channels, respectively; in the HSV model, H, S, and V correspond to hue, saturation, and value [40]. This separation enhances segmentation accuracy, making it especially useful in real-world images with uncontrolled lighting. Figure 2 (B) displays an example of an image converted to HSV.

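$$R' = \frac{R}{255}, \quad G' = \frac{G}{255}, \quad B' = \frac{B}{255} \tag{1}$$

$$C_{\max} = \max(R', G', B'), \quad C_{\min} = \min(R', G', B'), \quad \Delta = C_{\max} - C_{\min} \tag{2}$$

$$H = \begin{cases} 0^{\circ}, & \Delta = 0 \\ 60^{\circ} \times \left( \dfrac{G' - B'}{\Delta} \bmod 6 \right), & C_{\max} = R' \\ 60^{\circ} \times \left( \dfrac{B' - R'}{\Delta} + 2 \right), & C_{\max} = G' \\ 60^{\circ} \times \left( \dfrac{R' - G'}{\Delta} + 4 \right), & C_{\max} = B' \end{cases} \tag{3}$$

$$S = \begin{cases} 0, & C_{\max} = 0 \\ \dfrac{\Delta}{C_{\max}}, & C_{\max} \neq 0 \end{cases} \tag{4}$$

$$V = C_{\max} \tag{5}$$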

Once the image is converted to HSV, segmentation focuses on isolating green regions that represent the leaf while excluding extraneous elements. Since green is the dominant color in plant life, a defined range of HSV values captures the varied hues, saturations, and brightness levels of green typically found in vegetation. In particular, the following HSV thresholds are defined to encompass a wide range of green hues. The lower-bound hue is set at 35°, corresponding to a conventional green in the color spectrum. Saturation and value for the lower bound are set to 40 and 50, respectively, to eliminate very dark regions and low-chroma areas, such as background noise or shadows. For the upper bound, the hue extends to 85°, encompassing a wider range of green tones. The upper-bound saturation and value are set to their maximum (255), allowing the algorithm to capture all chromatic variations above the lower bound and to include both darker and lighter green shades. Applying these bounds in the HSV color space generates a binary mask that isolates the green regions associated with the leaf, effectively filtering out non-green elements such as the background and other extraneous objects. Figure 2 (C) illustrates a sample binary mask of a green leaf extracted through these bounds.
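The thresholding step can be illustrated with a minimal standard-library sketch. It is not the study's OpenCV implementation: the helper names `rgb_to_hsv8` and `green_mask` are hypothetical, and the bounds are read on an 8-bit HSV scale (hue 0 to 179, saturation and value 0 to 255), which is an assumption suggested by the maximum of 255 quoted above.

```python
import colorsys

# Bounds quoted in the text, read on an 8-bit HSV scale
# (H in 0..179, S and V in 0..255). The scale is an assumption.
LOWER_GREEN = (35, 40, 50)
UPPER_GREEN = (85, 255, 255)


def rgb_to_hsv8(r, g, b):
    """Convert 8-bit RGB to (H, S, V) with H in 0..179 and S, V in 0..255."""
    h, s, v = colorsys.rgb_to_hsv(r / 255.0, g / 255.0, b / 255.0)
    return round(h * 180) % 180, round(s * 255), round(v * 255)


def green_mask(image):
    """Return a binary mask (1 = inside the green range) for a nested-list RGB image."""
    mask = []
    for row in image:
        mask_row = []
        for r, g, b in row:
            hsv = rgb_to_hsv8(r, g, b)
            inside = all(
                lo <= channel <= hi
                for channel, lo, hi in zip(hsv, LOWER_GREEN, UPPER_GREEN)
            )
            mask_row.append(1 if inside else 0)
        mask.append(mask_row)
    return mask


# Toy 2 x 3 image: leaf green, skin tone, white / dark green, grey, pure green.
toy_image = [
    [(60, 160, 60), (224, 172, 105), (255, 255, 255)],
    [(20, 60, 20), (128, 128, 128), (0, 255, 0)],
]
mask = green_mask(toy_image)
print(mask)
```

In this toy image the three green pixels fall inside the range, while the skin-tone, white, and grey pixels are rejected on hue or saturation.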

PAHD: morphological dilation

The next stage of the PAHD algorithm involves applying morphological dilation, a technique essential for refining the extracted binary mask to achieve accurate representation of the regions of interest (ROIs) and address any minor gaps or imperfections. In image processing, morphological techniques like dilation, erosion, opening, and closing are extensively employed to adjust the structure of objects in binary images [41]. Dilation is a morphological operation that fuses two sets by performing vector addition with a given structuring element (SE). When this process is applied to an image I using the SE, it results in a new binary image B, as defined by Eq. 6 [42]:

$$B = I \oplus SE = \{\, p + s \mid p \in I,\ s \in SE \,\} \tag{6}$$

In simpler terms, each white (foreground) pixel in the binary mask is expanded outward, filling small gaps and linking fragmented sections of the leaf. The structuring element used for dilation, often a small matrix such as a 3 × 3 or 5 × 5 square or a circular shape, defines the extent of this expansion. This element acts as a neighborhood for each pixel and determines the degree of foreground region enlargement. The dilation operation is further described by Eq. 7, in which each output pixel takes the maximum value of I over the neighborhood covered by the SE, so that bright regions are enhanced and expanded [43]:

$$B(x, y) = \max_{(x', y') \in SE} I(x + x', y + y') \tag{7}$$

Applying dilation to the binary mask of the leaf’s green regions provides several key benefits. First, it helps smooth jagged or irregular edges that may result from noise in the original image or imperfect segmentation thresholds. Dilation also effectively bridges small, disconnected green regions, which may occur due to image noise, shadow effects, or incomplete segmentation. Furthermore, it incorporates smaller or fragmented leaf sections that might have been missed due to thresholding errors or low contrast, thereby creating a more continuous and complete representation of the leaf structure. Finally, dilation enhances the mask’s contours, facilitating more accurate contour detection in subsequent processing stages. Figure 2 (D) displays an instance of the mask after the dilation process, illustrating the improved continuity and clarity of the leaf structure.
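The gap-bridging effect described above can be sketched in a few lines of plain Python. The function name `dilate`, the square structuring element, and the toy mask are illustrative assumptions; the study itself relies on library routines.

```python
def dilate(mask, radius=1):
    """Binary dilation with a (2*radius+1) x (2*radius+1) square structuring element."""
    height, width = len(mask), len(mask[0])
    out = [[0] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            # A pixel becomes foreground if any pixel under the element is foreground.
            for dy in range(-radius, radius + 1):
                for dx in range(-radius, radius + 1):
                    ny, nx = y + dy, x + dx
                    if 0 <= ny < height and 0 <= nx < width and mask[ny][nx]:
                        out[y][x] = 1
    return out


# Two leaf fragments separated by a one-pixel gap (row 2, column 3).
fragmented = [
    [0, 0, 0, 0, 0, 0, 0],
    [0, 0, 0, 0, 0, 0, 0],
    [0, 0, 1, 0, 1, 0, 0],
    [0, 0, 0, 0, 0, 0, 0],
    [0, 0, 0, 0, 0, 0, 0],
]
dilated = dilate(fragmented)
for row in dilated:
    print(row)
```

With a 3 × 3 element the two fragments merge into a single connected block, which is what later allows one contour to enclose the whole leaf.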

PAHD: contour detection and ROI masking

Following morphological dilation, the next critical stage in the PAHD pipeline is contour detection within the processed binary mask, alongside Region of Interest (ROI) masking. Contours are essential for accurately delineating the boundaries within an image, facilitating the isolation of the leaf as the primary region of interest. By extracting contours from the dilated binary mask, the algorithm precisely outlines the leaf, effectively excluding any remaining background artifacts or non-leaf regions. The contour detection process focuses on identifying only the outermost contours, which simplifies the task by capturing the primary boundary of the leaf without unnecessary complexity [44]. Figure 2 (E) illustrates an example of the contours that have been detected in the sample leaf image.

After the contours are identified, they are sorted according to their area, calculated using Green's theorem [45]. The area A enclosed by a closed contour C, with x and y denoting the coordinates of points along C, is given by Eq. 8 [46]:

$$A = \frac{1}{2} \oint_{C} (x\,dy - y\,dx) \tag{8}$$

The largest contour, typically corresponding to the main object of interest (in this case, the leaf), is selected. Selecting the largest contour enhances robustness by reducing the likelihood of including noise or smaller, irrelevant regions, such as residual green fragments caused by lighting variations or image noise.
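For a contour stored as a list of pixel coordinates, the line integral of Green's theorem reduces to the shoelace sum. The sketch below shows that discrete form together with the largest-contour selection; the function names and the two toy contours are hypothetical.

```python
def contour_area(points):
    """Area enclosed by a closed polygon (discrete form of Green's theorem)."""
    twice_area = 0.0
    count = len(points)
    for i in range(count):
        x0, y0 = points[i]
        x1, y1 = points[(i + 1) % count]  # wrap around to close the contour
        twice_area += x0 * y1 - x1 * y0
    return abs(twice_area) / 2.0


def largest_contour(contours):
    """Return the contour enclosing the largest area."""
    return max(contours, key=contour_area)


leaf = [(0, 0), (10, 0), (10, 6), (0, 6)]   # 10 x 6 rectangle, area 60
speck = [(20, 20), (22, 20), (21, 22)]      # small triangle, area 2
selected = largest_contour([speck, leaf])
print(contour_area(leaf), contour_area(speck))
```

Sorting by this area and keeping the first element is equivalent to the `max` call used here; the small residual green fragment is discarded either way.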

With the largest contour identified, a binary mask is generated to enclose this contour, resulting in a refined representation of the leaf boundary. This contour mask is created by filling in the largest contour, producing a binary mask where all pixels within the contour are marked as foreground (white), and pixels outside the contour are designated as background (black). This high-precision mask precisely delineates the leaf and is prepared for the final ROI extraction stage [47]. Figure 2 (F) presents a sample of the binary mask.

In the final stage of this process, the original image is filtered with the contour mask to isolate the leaf using ROI masking. By overlaying the contour mask onto the original image, only the pixels corresponding to the leaf contour are preserved, while all other areas are effectively masked out, leaving a clean image of the leaf alone [48]. The equation for generating the ROI-extracted image, where I is the image and mask is the binary mask, is shown in Eq. 9:

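$$I_{\mathrm{ROI}}(x, y) = \begin{cases} I(x, y), & \mathrm{mask}(x, y) \neq 0 \\ 0, & \text{otherwise} \end{cases} \tag{9}$$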

The resulting image, depicted in Fig. 2 (G), displays the detected leaf against a black background. This final output presents a segmented image where the leaf stands out prominently, with all non-leaf areas removed.

PAHD: background substitution

In the final stage of the PAHD process, the isolated leaf previously set against a black background is transferred onto a white background to ensure consistency with the formatting of the DeepHerb dataset. This background replacement involves substituting all black pixels in the segmented image with white pixels, while preserving the color and detail of the leaf itself. The transformation is achieved by creating a uniform white background layer and applying it to areas of the image where the contour mask does not encompass the leaf. This white background provides a neutral, uniform backdrop, minimizing any background variation and enhancing the contrast between the leaf and the surroundings. This process is essential for maintaining consistency. Additionally, this step mitigates the potential influence of background color on learning algorithms, as it reduces the likelihood of overfitting to background patterns or inconsistencies, which can be especially problematic in complex deep learning models [49]. Figure 2 (H) illustrates the final result image, highlighting the major difference compared to the original image, with background inconsistencies removed and the target object, the leaf, properly isolated.
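ROI masking and background substitution differ only in the fill color used outside the contour mask, as the following sketch suggests. The helper `apply_mask` and the toy data are assumptions for illustration. Driving the replacement from the mask, rather than from pixel color, keeps genuinely dark leaf pixels untouched.

```python
WHITE = (255, 255, 255)
BLACK = (0, 0, 0)


def apply_mask(image, mask, fill):
    """Keep pixels where the mask is set; replace all others with `fill`."""
    return [
        [pixel if keep else fill for pixel, keep in zip(image_row, mask_row)]
        for image_row, mask_row in zip(image, mask)
    ]


image = [
    [(200, 180, 150), (40, 120, 40), (90, 90, 90)],
    [(35, 110, 30), (0, 0, 0), (210, 200, 190)],
]
contour_mask = [
    [0, 1, 0],
    [1, 1, 0],
]

roi = apply_mask(image, contour_mask, BLACK)           # leaf on black, cf. Fig. 2 (G)
standardized = apply_mask(image, contour_mask, WHITE)  # leaf on white, cf. Fig. 2 (H)
print(standardized)
```

The pixel at row 1, column 1 is black in the source image but lies inside the mask, so it is preserved rather than turned white.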

Once background replacement is complete, the processed DIMPSAR variant again undergoes a rigorous review and cleaning pass to ensure quality and consistency across all images. This inspection verifies that no background elements or artifacts remain and confirms that each leaf is fully isolated with sharp, accurate contours. Such quality control is essential for maintaining the dataset's integrity, because even slight discrepancies or subtle background variations can adversely influence the performance of machine learning models that depend on this data. The review also enables the identification and correction of segmentation errors introduced during the application of the PAHD algorithm.

Augmentation

A primary obstacle in crafting high-performance deep neural networks lies in sourcing sufficiently large and heterogeneous datasets [50]. These models depend on abundant training data to deliver reliable, accurate, and robust outcomes. Comprehensive datasets, by offering a wide spectrum of examples, enhance the network’s learning process and its ability to generalize. To meet these requirements, data augmentation has emerged as an indispensable preprocessing technique in neural network workflows. This method artificially enlarges the training set by applying diverse transformations to existing samples, thus increasing both the size and variability of the dataset [51].

In this study, multiple data augmentation techniques were systematically applied to the training subsets of all datasets, with transformations carefully selected to enhance the model’s adaptability to varied inputs. The applied techniques included horizontal and vertical flips, image transposition, adjustments in brightness and contrast, modifications to tone curves, gamma adjustments, and the addition of blurring effects. Noise, such as ISO and Gaussian noise, was also introduced to simulate real-world conditions [52]. These augmentations were implemented using the Albumentations library in Python, which supports a range of robust, efficient image transformation methods [53].
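To make the geometric and photometric transformations concrete, the sketch below applies flips, transposition, and a gamma adjustment to a tiny grayscale image stored as nested lists. It deliberately avoids Albumentations so that it runs with the standard library alone; the function names and gamma values are assumptions and do not reproduce the study's augmentation settings.

```python
def hflip(image):
    """Mirror the image left to right."""
    return [row[::-1] for row in image]


def vflip(image):
    """Mirror the image top to bottom."""
    return [row[:] for row in image[::-1]]


def transpose(image):
    """Swap rows and columns."""
    return [list(column) for column in zip(*image)]


def adjust_gamma(image, gamma):
    """Apply out = 255 * (in / 255) ** gamma to 8-bit intensities."""
    return [[round(255 * (pixel / 255) ** gamma) for pixel in row] for row in image]


def augment(image):
    """Return several variants of one training image (illustrative selection)."""
    return [
        hflip(image),
        vflip(image),
        transpose(image),
        adjust_gamma(image, 0.8),   # brighten mid-tones
        adjust_gamma(image, 1.25),  # darken mid-tones
    ]


sample = [
    [0, 128],
    [255, 64],
]
variants = augment(sample)
print(len(variants), variants[0], variants[2])
```

Such transformations are applied to the training subset only, so that validation and test images remain unaltered.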

Adjusting image dimensions

Before training commenced, every image in the dataset was rescaled to the spatial specifications demanded by the respective architectures. Convolutional neural networks processed inputs standardized at 256 × 256 pixels, whereas vision transformers operated on frames resized to 224 × 224 pixels—the resolution conventionally prescribed for these models. Enforcing these uniform dimensions ensured consistent data flow during both training and evaluation and bolstered each model’s capacity to generalize when faced with unseen, real-world imagery.

Image normalization

Normalization serves as another core preprocessing strategy, particularly beneficial for image datasets, and is anticipated to enhance the quality of the herb dataset [54]. This process involves standardizing a tensor image by adjusting it based on its mean and standard deviation, which ensures that the input features are uniformly scaled and centralized. Such standardization is critical for achieving improved convergence during model training. By choosing defined mean and standard deviation values, i.e., reflecting the first and second order statistics for each channel, the z-score for the data can be computed on a channel-specific basis. This process is commonly known as standardization [55], expressed as

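$$\mathrm{output}_{\mathrm{channel}} = \frac{\mathrm{input}_{\mathrm{channel}} - \mathrm{mean}_{\mathrm{channel}}}{\mathrm{std}_{\mathrm{channel}}} \tag{10}$$

Here, $\mathrm{input}_{\mathrm{channel}}$ denotes the input data, $\mathrm{mean}_{\mathrm{channel}}$ represents the channel mean, and $\mathrm{std}_{\mathrm{channel}}$ refers to the channel standard deviation. By adjusting each channel of the image data to mimic a standard normal distribution, normalization will significantly enhance the model’s ability to generalize across diverse herb image sets.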
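A channel-wise z-score of this kind can be sketched as follows. The image layout (a list of channels, each a 2-D list of floats in [0, 1]) and the mean and standard deviation values are illustrative assumptions; the statistics actually used in the study are not stated here.

```python
def normalize(image, mean, std):
    """Standardize each channel: (input - mean) / std, applied per channel."""
    return [
        [[(pixel - m) / s for pixel in row] for row in channel]
        for channel, m, s in zip(image, mean, std)
    ]


# Two-channel toy image with intensities already scaled to [0, 1].
image = [
    [[0.0, 0.5], [1.0, 0.5]],  # channel 0
    [[0.2, 0.2], [0.2, 0.2]],  # channel 1
]
normalized = normalize(image, mean=(0.5, 0.2), std=(0.25, 0.1))
print(normalized)
```

Each channel is shifted and scaled independently, so a channel whose pixels all equal its mean maps to zeros.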

Dataset splitting

To optimize herb identification with CNN and ViT architectures, the dataset was stratified into three subsets. Seventy percent was devoted to training, while the remaining thirty percent was split evenly—fifteen percent for validation and fifteen percent for testing. This structured allocation enabled evaluation at successive phases: the training portion furnished ample leaf images for pattern learning; the validation portion tracked performance during training and highlighted generalization behavior; and the independent test portion provided a rigorous assessment on unseen herb images, delivering an unbiased estimate of real-world applicability [35].
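A per-class 70/15/15 partition could be produced along the following lines. This is a sketch under assumptions: the function name, the rounding rule, and the fixed seed are hypothetical, and the study's own splitting code may differ.

```python
import random
from collections import defaultdict


def stratified_split(labels, train_frac=0.70, val_frac=0.15, seed=0):
    """Split sample indices per class into train / validation / test lists."""
    by_class = defaultdict(list)
    for index, label in enumerate(labels):
        by_class[label].append(index)

    rng = random.Random(seed)
    splits = {"train": [], "val": [], "test": []}
    for label in sorted(by_class):
        indices = by_class[label]
        rng.shuffle(indices)
        n_train = round(len(indices) * train_frac)
        n_val = round(len(indices) * val_frac)
        splits["train"] += indices[:n_train]
        splits["val"] += indices[n_train:n_train + n_val]
        splits["test"] += indices[n_train + n_val:]  # remainder goes to test
    return splits


labels = ["neem"] * 20 + ["tulsi"] * 40
splits = stratified_split(labels)
print({name: len(indices) for name, indices in splits.items()})
```

Splitting within each class keeps rare species represented in all three subsets, which matters when some classes contain as few as seven images.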

Models development

This study investigates sophisticated deep-learning schemes for identifying herbs, leveraging convolutional neural networks alongside vision transformers to achieve high-accuracy classification. The CNN suite comprises MobileNet v3-Large, VGG-19, ResNet-152, and EfficientNet v2-Large, whereas the transformer lineup includes ViT-Base/16 and ViT-Large/16. To further elevate predictive power, we constructed ensembles that merged outputs from selected CNN and ViT models, thereby yielding a sturdier and more precise classifier. Figure 3 summarizes the core architectural elements of the CNN and ViT approaches.

Fig. 3.

Schematic depiction of (A) Convolutional Neural Network architectures and (B) Vision Transformer architectures

Convolutional networks

Deep learning has driven transformative advances in many fields, with convolutional neural networks at the forefront of these achievements. CNNs have been extensively refined and widely adopted for image‐analysis tasks [56]. Their hallmark convolutional layers excel at capturing local spatial patterns in visual data. A typical CNN consists of four principal layer classes—convolution, max-pooling, fully connected, and output—arranged sequentially [35, 57]. This modular design affords substantial flexibility, allowing models to be tailored to domain-specific goals such as automated herb classification. Figure 3 (A) illustrates the core configurations of CNNs.

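Consequently, CNNs operate simultaneously as sophisticated feature encoders and discriminative classifiers. The feature value at location (i, j) in the k-th map of the l-th layer, $z^{l}_{i,j,k}$ [58], is given by

$$z^{l}_{i,j,k} = {\mathbf{w}^{l}_{k}}^{\top} \mathbf{x}^{l}_{i,j} + b^{l}_{k} \tag{11}$$

where $\mathbf{w}^{l}_{k}$ and $b^{l}_{k}$ are the weight vector and bias of the k-th filter in the l-th layer, and $\mathbf{x}^{l}_{i,j}$ is the input patch centered at (i, j). Applying the nonlinear activation a(.) produces

$$a^{l}_{i,j,k} = a\left(z^{l}_{i,j,k}\right) \tag{12}$$

Let pool(.) denote the pooling operator and $\mathcal{R}_{ij}$ the neighborhood centered at (i, j). The pooled response for feature map $a^{l}_{:,:,k}$ is then expressed as [59]

$$y^{l}_{i,j,k} = \mathrm{pool}\left(a^{l}_{m,n,k}\right), \quad \forall (m, n) \in \mathcal{R}_{ij} \tag{13}$$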

Through the combined action of convolutional and pooling layers, CNNs extract salient representations from the input, while fully connected layers leverage these abstractions for classification. To model complex patterns, CNNs employ diverse activation functions and span architectures of varying depth, width, and parameter counts [35, 60].
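The convolution, activation, and pooling stages can be traced on a toy input with the sketch below. It handles a single feature map, uses ReLU as the activation and non-overlapping max pooling, and all names and values are illustrative assumptions rather than the configuration of any model in this study.

```python
def conv2d(x, kernel, bias=0.0):
    """Valid cross-correlation of a 2-D input with one 2-D filter, plus a bias."""
    kh, kw = len(kernel), len(kernel[0])
    out_h, out_w = len(x) - kh + 1, len(x[0]) - kw + 1
    return [
        [
            bias + sum(
                kernel[di][dj] * x[i + di][j + dj]
                for di in range(kh)
                for dj in range(kw)
            )
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]


def relu(z):
    """Element-wise nonlinear activation."""
    return [[max(0.0, value) for value in row] for row in z]


def max_pool(a, size=2):
    """Non-overlapping max pooling over size x size neighborhoods."""
    return [
        [
            max(a[i + di][j + dj] for di in range(size) for dj in range(size))
            for j in range(0, len(a[0]) - size + 1, size)
        ]
        for i in range(0, len(a) - size + 1, size)
    ]


# 5 x 5 input with a bright vertical stripe; the filter responds to rising edges.
x = [[0, 0, 1, 1, 0] for _ in range(5)]
edge_filter = [[-1, 1], [-1, 1]]

z = conv2d(x, edge_filter)
pooled = max_pool(relu(z))
print(z[0], pooled)
```

The rising edge yields a positive response that survives ReLU and pooling, while the falling edge yields a negative response that ReLU suppresses.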

This study evaluates several representative CNN architectures. MobileNet v3-Large, selected for its lightweight depth-wise separable convolutions, offers rapid inference with minimal computational overhead [61]. VGG-19, developed by the Visual Geometry Group and comprising 19 weight layers with roughly 144 million parameters, is computationally demanding but delivers high precision in capturing fine textures and intricate structures, making it well suited to herb recognition [62, 63]. ResNet-152, a deep Residual Network, utilizes skip connections to mitigate vanishing-gradient issues and effectively learns complex feature hierarchies [64, 65]. EfficientNet v2-Large balances depth, width, and resolution to achieve strong accuracy while maintaining relatively modest parameter and FLOP counts [66].

Vision transformers

Transformers’ extraordinary achievements in natural-language processing have redirected computer-vision research, inspiring a detailed examination of their capacity to solve sophisticated visual tasks [67]. Their principal strength lies in modelling long-range dependencies while executing computations in parallel—something conventional recurrent neural networks (RNNs) cannot do efficiently [68, 69]. In vision, their popularity stems from the self-attention mechanism, which encodes extended contextual relationships far more effectively than RNNs or LSTMs, whose performance typically degrades when sequences grow long. By explicitly capturing pairwise interactions, self-attention yields pronounced performance gains across numerous applications [70].

Recent advances—most notably the Vision Transformer (ViT) family—demonstrate that self-attention-centric models can outperform classical convolutional neural networks on a breadth of vision benchmarks [71]. ViT splits an image into fixed-size patches, flattens each patch into a vector of raw pixel intensities, and linearly projects these vectors to the model’s input dimension. Treating the resulting sequence analogously to words in a sentence allows the network to exploit self-attention for learning spatial relationships [35, 72]. Figure 3 (B) illustrates the vision transformers architecture.

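Let $X \in \mathbb{R}^{n \times d}$ denote a sequence of n tokens $(x_1, x_2, \ldots, x_n)$, each embedded in d dimensions. Self-attention projects the sequence into query, key, and value spaces using trainable matrices $W^{Q} \in \mathbb{R}^{d \times d_q}$, $W^{K} \in \mathbb{R}^{d \times d_k}$, and $W^{V} \in \mathbb{R}^{d \times d_v}$ (with $d_q = d_k$). The projections are $Q = XW^{Q}$, $K = XW^{K}$, and $V = XW^{V}$ [73]. The layer output is

$$Z = \mathrm{softmax}\left(\frac{QK^{\top}}{\sqrt{d_q}}\right) V \tag{14}$$

which enables every token to be refined by global contextual information.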
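A single-head scaled dot-product self-attention layer can be sketched with plain lists as below. The helper names, the identity projection matrices, and the three toy tokens are assumptions chosen for readability; practical ViTs use multiple heads, learned weights, and much larger dimensions.

```python
import math


def matmul(a, b):
    """Multiply two matrices stored as nested lists."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def transpose(m):
    return [list(col) for col in zip(*m)]


def softmax(row):
    peak = max(row)  # subtract the maximum for numerical stability
    exps = [math.exp(value - peak) for value in row]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(x, w_q, w_k, w_v):
    """Return the attended outputs and the attention weights for token matrix x."""
    q, k, v = matmul(x, w_q), matmul(x, w_k), matmul(x, w_v)
    scale = math.sqrt(len(k[0]))
    scores = [[s / scale for s in row] for row in matmul(q, transpose(k))]
    weights = [softmax(row) for row in scores]
    return matmul(weights, v), weights


identity = [[1.0, 0.0], [0.0, 1.0]]
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # n = 3 tokens, d = 2
output, weights = self_attention(tokens, identity, identity, identity)
print(weights[0], output[0])
```

Every row of the weight matrix sums to one, so each output token is a convex combination of all value vectors, which is how global context reaches every patch.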

In this work we employ two ViT variants: ViT-Base/16 (ViT-B/16) and ViT-Large/16 (ViT-L/16). ViT-B/16 comprises 12 Transformer encoder blocks and offers a computationally economical choice, whereas ViT-L/16 doubles the depth to 24 encoders, trading greater resource demands for superior accuracy [74]. Consequently, ViT-B/16 is well-suited to settings with restricted hardware budgets, while ViT-L/16 targets scenarios that prioritize higher capacity and precision.

Training procedure

Developing a reliable herb-identification system necessitates training learning algorithms on extensive, high-quality image repositories. Training large-capacity networks on limited data, however, incurs significant computational costs and heightens the danger of overfitting [75]. To address these issues, the study adopts a transfer learning procedure, which repurposes models pretrained on massive datasets such as ImageNet so that their broad visual representations can be specialized for herb imagery [76].

CNNs and ViTs were initialized with ImageNet weights, after which their output layers were replaced with fully connected layers sized for each dataset: 79 classes for DIMPSAR, 30 for DeepHerb, and 91 for Herbify. An overview of this modification is shown in Fig. 4. The approach further employed fine-tuning, allowing gradients to adjust a large subset or all of the pretrained parameters rather than limiting updates to the added layers alone. Fine-tuning is especially effective when the target domain diverges substantially from the pretraining domain, as is the case here [77].

Fig. 4.

Transfer learning pipeline for herb classification: the pre-trained CNN/ViT’s original classification head is removed, replaced with a newly initialized head, and the entire model is then fine-tuned on the herb dataset

Adaptive Moment Estimation (Adam) optimized the CNNs, leveraging its ability to combine AdaGrad and RMSProp advantages for sparse-gradient, non-stationary tasks [78]. For ViTs, the AdamW variant was used because decoupling weight decay from the gradient step enhances regularization, stabilizes transformer training, and curtails overfitting [79].

Learning rate and weight decay, two crucial hyperparameters governing convergence, were exhaustively explored for Herbify via grid search, which systematically tests predefined combinations to locate the optimal setting for the architecture and dataset [80]. Meticulous tuning of these values markedly improved training efficiency and predictive accuracy.

Training relied on the multi-class cross-entropy loss [72], expressed as

$$L = -\frac{1}{N} \sum_{i=1}^{N} \sum_{j=1}^{M} y_{ij} \log\left(p_{ij}\right) \tag{15}$$

where $y_{ij}$ is the ground-truth indicator for sample i and class j, $p_{ij}$ is the predicted probability, N is the number of samples, and M is the number of herb species [81]. Minimizing this loss via the chosen optimizer progressively refines network parameters and elevates classification accuracy.
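As a worked example, the loss can be evaluated for two samples and three classes. The sketch averages over the N samples; the function name, the clipping constant `eps`, and the toy probabilities are assumptions.

```python
import math


def cross_entropy(y_true, y_pred, eps=1e-12):
    """Mean multi-class cross-entropy for one-hot targets and predicted probabilities."""
    total = 0.0
    for targets, probabilities in zip(y_true, y_pred):
        for y, p in zip(targets, probabilities):
            total += y * math.log(max(p, eps))  # clip to avoid log(0)
    return -total / len(y_true)


y_true = [[1, 0, 0], [0, 1, 0]]
y_pred = [[0.7, 0.2, 0.1], [0.1, 0.8, 0.1]]
loss = cross_entropy(y_true, y_pred)
print(round(loss, 4))
```

Only the probability assigned to the correct class contributes to each sample's term, so here the loss equals the mean of -ln 0.7 and -ln 0.8, roughly 0.29.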

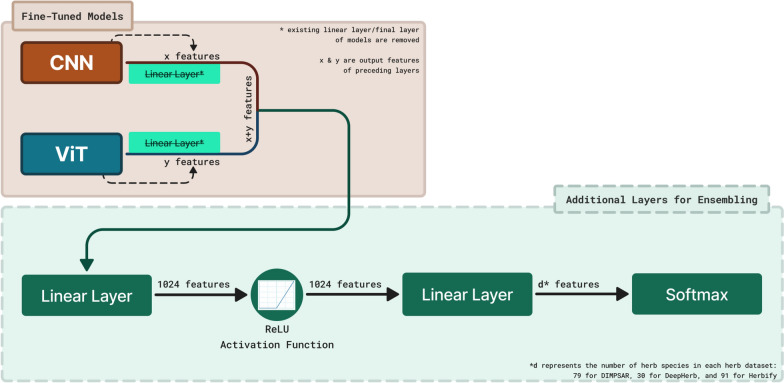

Convolutional neural networks and vision transformers ensembles

Once separate convolutional neural network and vision transformer models had been fine-tuned on the Herbify dataset, we merged them using an ensemble strategy. Harnessing the complementary strengths of CNNs and ViTs markedly improves classification accuracy, which is critical when an error could misidentify an herb species and propagate inaccurate medical information. CNNs specialize in capturing local structure through hierarchical convolutions, proving invaluable for detection and segmentation tasks [82, 83]. Fusing CNN-derived local features with the wide-context representations of ViTs yields a generalizable model that performs robustly across varied data distributions [84].

In the ensemble, the original classification heads were removed and replaced with layers dedicated to feature fusion. Feature maps from the CNN and ViT branches were concatenated, then passed into a fully connected layer generating 1024-dimensional representations.

These activations were fed through a Rectified Linear Unit (ReLU), enabling non-linear modelling [85]:

$$f(x) = \max(0, x) \tag{16}$$

A second fully connected layer projected the 1024 features to the exact class count for each dataset—79 for DIMPSAR, 30 for DeepHerb and 91 for Herbify. A soft-max layer then converted the logits into probabilities [86]:

$$\mathrm{softmax}(z_i) = \frac{e^{z_i}}{\sum_{j=1}^{N} e^{z_j}} \tag{17}$$

where N is the number of classes and $z_i$ is the i-th logit.

Figure 5 illustrates the ensemble model’s overall structure, demonstrating the integration of CNN and ViT models for enhanced herb classification. For the ensemble models, only the newly added layers were trained by applying the transfer learning procedure. The pre-trained layers were frozen to ensure that no updates were made to the previously learned features. This approach preserves the knowledge acquired during fine-tuning [35].

Fig. 5.

Ensemble architecture combining CNN and ViT backbones: trained classification heads are removed, backbone feature embeddings are combined and fed into a series of MLP layers (Linear → ReLU → Linear), with a soft-max output for final herb classification
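The fusion head (concatenation, Linear, ReLU, Linear, soft-max) can be sketched without a deep learning framework as follows. Everything numeric here is a placeholder: the feature dimensions are tiny, the hidden width of 16 stands in for the 1024 units used in the study, the weights are random rather than trained, and the helper names are hypothetical.

```python
import math
import random


def init_linear(n_in, n_out, rng):
    """Random weights (n_out x n_in) and zero biases for a fully connected layer."""
    weights = [[rng.uniform(-0.1, 0.1) for _ in range(n_in)] for _ in range(n_out)]
    return weights, [0.0] * n_out


def linear(x, layer):
    weights, bias = layer
    return [sum(w * v for w, v in zip(row, x)) + b for row, b in zip(weights, bias)]


def relu(x):
    return [max(0.0, value) for value in x]


def softmax(logits):
    peak = max(logits)
    exps = [math.exp(value - peak) for value in logits]
    total = sum(exps)
    return [e / total for e in exps]


def fusion_head(cnn_features, vit_features, fc1, fc2):
    """Concatenate backbone embeddings, then apply Linear -> ReLU -> Linear -> soft-max."""
    fused = list(cnn_features) + list(vit_features)
    hidden = relu(linear(fused, fc1))
    return softmax(linear(hidden, fc2))


rng = random.Random(0)
CNN_DIM, VIT_DIM, HIDDEN, NUM_CLASSES = 6, 4, 16, 91  # toy sizes except the class count
fc1 = init_linear(CNN_DIM + VIT_DIM, HIDDEN, rng)
fc2 = init_linear(HIDDEN, NUM_CLASSES, rng)

cnn_features = [rng.random() for _ in range(CNN_DIM)]  # stand-in for a frozen CNN embedding
vit_features = [rng.random() for _ in range(VIT_DIM)]  # stand-in for a frozen ViT embedding
probabilities = fusion_head(cnn_features, vit_features, fc1, fc2)
print(len(probabilities), round(sum(probabilities), 6))
```

Because the backbones are frozen, only `fc1` and `fc2` would receive gradient updates during ensemble training.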

Eight ensemble variants were evaluated, each engineered to capitalise on the unique capabilities of its component networks (Table 1). By aggregating multiple learners, every ensemble delivers heightened performance and resilience across diverse deployment scenarios.

MobileL-ViTB (MobL-VB) combines MobileNet v3-Large and ViT-Base/16, striking an optimal balance between efficiency and rich feature representation—ideal for light-scale cloud services demanding fast yet accurate inference.

VGG19-ViTB (V19-VB) couples VGG-19 with ViT-Base/16, excelling when meticulous, context-aware analysis is paramount and model size is a secondary concern.

Res152-ViTL (R152-VL) unites ResNet-152 with ViT-Large/16, offering deep capacity for complex imagery, while EfficientL-ViTL (EffL-VL) blends EfficientNet v2-Large with ViT-Large/16 to provide a scalable compromise between accuracy and efficiency. Both serve large-scale cloud deployments requiring state-of-the-art accuracy with moderate resource use.

MobileL-VGG19-ViTB (MobL-V19-VB) and Res152-EfficientL-ViTL (R152-EffL-VL) extend capacity further by integrating multiple high-capacity CNNs with ViTs, delivering superior precision where both speed and accuracy are critical.

The most comprehensive ensembles, MobileL-VGG19-EfficientL-ViTB (MobL-V19-EffL-VB) and VGG19-Res152-EfficientL-ViTL (V19-Res152-EffL-VL), combine virtually all leading CNN families with ViTs. These configurations target high-stakes scenarios—such as advanced herb recognition research—where maximum predictive power is prioritised and ample computational resources are available.

Table 1.

Structural overview of ensemble models used in the study

| MobileNet v3-Large (CNN) | VGG-19 (CNN) | ResNet-152 (CNN) | EfficientNet v2-Large (CNN) | ViT-Base/16 (ViT) | ViT-Large/16 (ViT) | Ensemble model |
|---|---|---|---|---|---|---|
| ✓ | × | × | × | ✓ | × | MobileL-ViTB |
| × | ✓ | × | × | ✓ | × | VGG19-ViTB |
| × | × | ✓ | × | × | ✓ | Res152-ViTL |
| × | × | × | ✓ | × | ✓ | EfficientL-ViTL |
| ✓ | ✓ | × | × | ✓ | × | MobileL-VGG19-ViTB |
| × | × | ✓ | ✓ | × | ✓ | Res152-EfficientL-ViTL |
| ✓ | ✓ | × | ✓ | ✓ | × | MobileL-VGG19-EfficientL-ViTB |
| × | ✓ | ✓ | ✓ | × | ✓ | VGG19-Res152-EfficientL-ViTL |

Together, the ensemble suite addresses deployment scenarios ranging from resource-constrained edge devices to high-throughput, high-resolution analytical platforms, providing adaptable solutions for diverse operational requirements [20, 87].

Performance evaluation

The devised models underwent a rigorous examination using a broad spectrum of metrics to ensure a thorough appraisal of their herb-classification capability [88]. Core indicators comprised Accuracy, Sensitivity (also known as Recall), Specificity, Precision, and the F₁-Score [89]. To counter the skew often present in herb datasets, the Geometric Mean (G-Mean) was likewise calculated, as it reliably reflects performance across imbalanced class distributions [90]. Together, these measures offer a multidimensional view of predictive effectiveness, each highlighting a distinct facet of classifier behavior. Equations 18–23 formalize these metrics.

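$$\mathrm{Accuracy} = \frac{TP + TN}{TP + TN + FP + FN} \tag{18}$$

$$\mathrm{Sensitivity} = \frac{TP}{TP + FN} \tag{19}$$

$$\mathrm{Specificity} = \frac{TN}{TN + FP} \tag{20}$$

$$\mathrm{Precision} = \frac{TP}{TP + FP} \tag{21}$$

$$F_1 = \frac{2 \times \mathrm{Precision} \times \mathrm{Sensitivity}}{\mathrm{Precision} + \mathrm{Sensitivity}} \tag{22}$$

$$\mathrm{G\text{-}Mean} = \sqrt{\mathrm{Sensitivity} \times \mathrm{Specificity}} \tag{23}$$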

where TP, TN, FP, and FN denote true positives, true negatives, false positives, and false negatives, respectively [91].
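The six metrics follow directly from the four confusion counts, as the sketch below shows for a single one-vs-rest class. The counts are invented for illustration, and the way per-class values are averaged over the 91 species is not specified here, so that step is left out.

```python
import math


def classification_metrics(tp, tn, fp, fn):
    """Compute the six evaluation metrics from one set of confusion counts."""
    sensitivity = tp / (tp + fn)  # also called recall
    specificity = tn / (tn + fp)
    precision = tp / (tp + fp)
    return {
        "accuracy": (tp + tn) / (tp + tn + fp + fn),
        "sensitivity": sensitivity,
        "specificity": specificity,
        "precision": precision,
        "f1": 2 * precision * sensitivity / (precision + sensitivity),
        "g_mean": math.sqrt(sensitivity * specificity),
    }


metrics = classification_metrics(tp=90, tn=85, fp=15, fn=10)
for name, value in metrics.items():
    print(f"{name}: {value:.4f}")
```

For these counts the accuracy is 0.875, while the F₁-score (about 0.878) and G-Mean (about 0.875) summarize the balance between the positive and negative classes.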

Additionally, plots of training accuracy and loss were produced to enhance interpretability. These visualizations track learning progress over time, revealing trends that help detect overfitting or underfitting and thus deepen insights into learning stability, model behavior, and anticipated generalizability [92].

Proposed application

This research introduces “Herbify,” a cross-platform web application tailored to botanists, specialists, and plant enthusiasts. Illustrated in Fig. 6, the system emphasizes an intuitive, streamlined interface that enables straightforward navigation and rapid inference. Given the widespread availability of smartphones, including in remote areas, the application is optimized for mobile use to maximize reach and usability across diverse populations [93]. Core web technologies (HTML, CSS, and JavaScript) constitute the front end. Backend tasks are orchestrated by a Flask server. To compensate for the limited computational power of mobile devices, resource-intensive operations such as image processing and herb identification are delegated to cloud servers. Users simply capture a photograph of a specimen; the image is then transmitted to the cloud for high-accuracy analysis and inference.

Fig. 6.

Pipeline overview of Herbify, a web application for herb identification. A user-captured image is uploaded to the cloud, standardized by the PAHD algorithm, classified by an ensemble model, and the predicted herb is displayed to the user

The processing pipeline commences with the Preprocessing Algorithm for Herb Detection (PAHD), which isolates the leaf, suppresses extraneous noise and background elements, and substitutes the background with a uniform white plane to prepare the image for recognition. For classification, the server deploys an advanced ensemble of CNNs and ViT, accurately identifying the plant and detailing its characteristics. After inference, the application returns the refined image alongside the specimen’s scientific and common names, furnishing users with a comprehensive identification report [94]. By combining affordability, accessibility, and ease of use, “Herbify” aspires to become a pivotal resource for botanical research, enhancing field studies through an efficient digital platform.

Setup

Development and training were carried out on a desktop workstation featuring an NVIDIA RTX 3080 Ti GPU, an Intel® Core™ i9-10900K CPU, 64 GB of RAM, and 1 TB of SSD storage. Data preprocessing was performed using the OpenCV and Scikit-image libraries [95, 96]. Deep learning architectures, including convolutional neural networks, vision transformers, and ensemble constructs, were developed and trained within the PyTorch framework [97]. Evaluation metrics were computed with the Scikit-learn library [98], while graphical visualizations were created using the Matplotlib and Seaborn libraries [99, 100]. This setup facilitated efficient model training and evaluation, leveraging the capabilities of both hardware and software to achieve high-performance results.

Results and discussion

In this section, we deliver a detailed synthesis of the study’s achievements, emphasizing the herb datasets, the stages of model development, the experimental outcomes and insights gained, and the evaluation of various deep learning architectures. We examine convolutional neural networks (CNNs), vision transformers (ViTs) and their ensemble strategies, as well as the design and implementation of a dependable, high-precision herb recognition application.

Herb datasets

The study utilized three herb datasets: the DIMPSAR dataset with 79 herb species, the DeepHerb dataset with 30 herb species, and the cleaned and merged Herbify dataset containing 91 herb species. On the DIMPSAR dataset, the Preprocessing Algorithm for Herb Detection (PAHD) was applied after initial cleaning, cropping, and error correction to bring the DIMPSAR dataset to a unified standard. For the Herbify dataset, an extensive manual inspection was conducted after the merger to ensure its resilience and reliability.

We employed a uniform partitioning scheme for all datasets, designating 70% of the samples for training, 15% for validation, and the remaining 15% for testing. To bolster model resilience, the training subsets were subjected to comprehensive augmentation. These procedures ranged from fundamental operations—such as scaling and rotation—to more advanced manipulations, including noise injection to emulate real‐world variability. Table 2 summarizes the original dataset volumes (pre‐augmentation), the exact sample counts in each partition, and the expanded sizes of the augmented training sets across the three datasets.

Table 2.

Summary of herb datasets: total size and distribution across training (with and without augmentation), testing, and validation subsets

| Dataset | Total Number of Samples | Training Split (original) | Training Split (augmented) | Validation Split | Testing Split |
|---|---|---|---|---|---|
| DIMPSAR | 4735 | 3279 | 19,674 | 746 | 710 |
| DeepHerb | 1835 | 1273 | 7638 | 290 | 272 |
| Herbify (merged) | 6104 | 4233 | 25,398 | 958 | 913 |
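The counts in Table 2 can be sanity-checked with a few lines of arithmetic. The sketch below uses only the numbers from the table; the helper name `summarize` is illustrative and not part of the study's code. It confirms that the three partitions sum to each dataset total, shows that the realized training share is slightly below the nominal 70%, and derives the multiplier between the original and augmented training sets.

```python
# Sample counts copied from Table 2:
# (total, training, augmented training, validation, testing).
TABLE_2 = {
    "DIMPSAR": (4735, 3279, 19674, 746, 710),
    "DeepHerb": (1835, 1273, 7638, 290, 272),
    "Herbify (merged)": (6104, 4233, 25398, 958, 913),
}


def summarize(total, train, augmented, val, test):
    """Return split percentages and the augmentation multiplier for one dataset."""
    if train + val + test != total:
        raise ValueError("partitions do not sum to the dataset total")
    return {
        "train_pct": round(100 * train / total, 2),
        "val_pct": round(100 * val / total, 2),
        "test_pct": round(100 * test / total, 2),
        "augmentation_factor": augmented / train,
    }


summaries = {name: summarize(*counts) for name, counts in TABLE_2.items()}
for name, summary in summaries.items():
    print(name, summary)
```

For all three datasets the augmented training set is exactly six times the size of the original training split, and the training share falls between 69% and 70%.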

Hyperparameters

Hyperparameters play a critical role in the training and optimization of deep learning models. The hyperparameters held fixed for CNNs, ViTs, and ensemble models are presented in Table 3. For CNN models on the DIMPSAR and DeepHerb datasets, however, the learning rate and weight decay were adjusted to each dataset's size and characteristics to mitigate overfitting and underfitting. These values are provided in Table 5.

Table 3.

Fixed hyperparameter settings for all models across herb datasets

| Hyperparameters | CNN | ViT | Ensemble model |
|---|---|---|---|
| Optimizer | Adam | AdamW | Adam |
| Batch Size | 16 | 16 | 16 |
| Max Epochs | 30 | 30 | 15 |

Table 5.

Learning rate and weight decay configurations for models over different herb datasets

| Hyperparameters | CNN (DIMPSAR) | CNN (DeepHerb) | CNN (Herbify, optimal) | ViT (all datasets) | Ensemble models (Herbify dataset) |
|---|---|---|---|---|---|
| Learning Rate (LR) | 1 × 10⁻⁴ | 1 × 10⁻⁴ | 1 × 10⁻⁵ | 1 × 10⁻⁵ | 1 × 10⁻⁴ |
| Weight Decay (WD) | 1 × 10⁻⁷ | 1 × 10⁻⁸ | 1 × 10⁻⁸ | 1 × 10⁻⁷ | 1 × 10⁻⁸ |

To optimize hyperparameters on the Herbify dataset, we conducted a grid search to pinpoint the optimal learning rate and weight decay. We selected the ResNet-50 architecture for convolutional models due to its favorable trade-off between computational cost and accuracy, and the ViT-Base/16 design for vision transformers. As detailed in Table 4, our grid spanned three candidate values for both learning rate and weight decay, yielding nine unique settings. We trained each configuration for ten epochs, with training times varying between approximately 45 and 120 min per run, depending on the model. Throughout the search, we consistently used the Adam optimizer for ResNet-50 and AdamW for ViT-Base/16, and maintained a batch size of 16.

Table 4.

Hyperparameter ranges for CNN and ViT architectures used in Grid Search

| Hyperparameters | Values |
|---|---|
| Learning Rate | 1 × 10⁻³, 1 × 10⁻⁴, 1 × 10⁻⁵ |
| Weight Decay | 1 × 10⁻⁷, 1 × 10⁻⁸, 1 × 10⁻⁹ |
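The grid in Table 4 can be enumerated as in the minimal sketch below. It only illustrates how three learning rates and three weight decays produce nine configurations. The `toy_score` function is a hypothetical stand-in for a ten-epoch training run followed by validation, and its values are arbitrary, not results from the study.

```python
from itertools import product
from math import log10

# Candidate values copied from Table 4.
LEARNING_RATES = (1e-3, 1e-4, 1e-5)
WEIGHT_DECAYS = (1e-7, 1e-8, 1e-9)


def grid_search(evaluate):
    """Score every (learning rate, weight decay) pair; return the best pair and all scores."""
    results = {
        (lr, wd): evaluate(lr, wd)
        for lr, wd in product(LEARNING_RATES, WEIGHT_DECAYS)
    }
    best = max(results, key=results.get)
    return best, results


def toy_score(lr, wd):
    # Hypothetical placeholder: in practice this would train a model briefly
    # and return a validation metric. The formula is arbitrary and chosen only
    # so that the example has a single, unambiguous maximum.
    return -abs(log10(lr) + 5) - abs(log10(wd) + 8)


best_config, all_results = grid_search(toy_score)
print(len(all_results), "configurations evaluated; best:", best_config)
```

Swapping `toy_score` for a function that trains and validates a real model would turn this enumeration into an actual search.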

The optimal hyperparameter configurations for CNNs are detailed in Table 5. For ViTs, the learning rate (1 × 10⁻⁵, or 1e-05) and weight decay (1 × 10⁻⁷, or 1e-07) previously used to train models on the DIMPSAR and DeepHerb datasets also turned out to be optimal on Herbify, so these values remained unchanged across all datasets.

We applied a similar hyperparameter tuning procedure to the ensemble architectures. By training only the newly appended layers, we preserved the pretrained representations and thus mitigated the risk of catastrophic forgetting. The hyperparameter values ultimately chosen for these ensembles, listed in Table 5, represent a trade-off that enables each constituent model to contribute its strengths while maintaining computational efficiency.

This structured approach to dataset preparation, augmentation, and hyperparameter tuning underpinned the robust performance observed across all models, laying the foundation for the successful deployment of an accurate herb recognition system.

Training and evaluation of models

Once preprocessing was complete, deep learning architectures were trained on three herb image collections via a fine-tuning strategy applied to both convolutional neural networks and vision transformers. The CNN architectures comprised MobileNet v3-Large, VGG-19, ResNet-152, and EfficientNet v2-Large, while the ViT variants included ViT-Base/16 and ViT-Large/16. Each model underwent fine-tuning under a standardized protocol with uniform hyperparameter settings. The DIMPSAR, DeepHerb, and Herbify datasets were split into training, validation, and test subsets, and model performance was evaluated using F₁-score, accuracy, precision, recall, specificity, and G-Mean. Due to pronounced class imbalance and distributional heterogeneity in the data, the F₁-score was selected as the primary metric for comparative analysis.
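The metrics listed above can all be derived from a multi-class confusion matrix. The sketch below is one possible formulation, not the study's code: it assumes one-vs-rest counts per class, support-weighted averaging across classes, and G-Mean defined as the square root of sensitivity times specificity. The function name and the toy matrix are illustrative only.

```python
from math import sqrt


def weighted_metrics(confusion):
    """Compute support-weighted metrics from a square confusion matrix.

    confusion[i][j] is the number of samples of true class i predicted as class j.
    """
    n_classes = len(confusion)
    total = sum(sum(row) for row in confusion)
    metrics = {"precision": 0.0, "sensitivity": 0.0, "specificity": 0.0, "f1": 0.0}
    for k in range(n_classes):
        tp = confusion[k][k]
        fn = sum(confusion[k]) - tp
        fp = sum(confusion[i][k] for i in range(n_classes)) - tp
        tn = total - tp - fn - fp
        precision = tp / (tp + fp) if tp + fp else 0.0
        sensitivity = tp / (tp + fn) if tp + fn else 0.0
        specificity = tn / (tn + fp) if tn + fp else 0.0
        denom = precision + sensitivity
        f1 = 2 * precision * sensitivity / denom if denom else 0.0
        weight = (tp + fn) / total  # class support as a fraction of all samples
        metrics["precision"] += weight * precision
        metrics["sensitivity"] += weight * sensitivity
        metrics["specificity"] += weight * specificity
        metrics["f1"] += weight * f1
    metrics["accuracy"] = sum(confusion[k][k] for k in range(n_classes)) / total
    metrics["g_mean"] = sqrt(metrics["sensitivity"] * metrics["specificity"])
    return metrics


# Toy three-class matrix for illustration only (not data from the study).
toy_confusion = [
    [8, 2, 0],
    [1, 9, 0],
    [0, 0, 10],
]
toy_metrics = weighted_metrics(toy_confusion)
print(toy_metrics)
```

Under support-weighted averaging, sensitivity is mathematically equal to accuracy, which matches the identical accuracy and sensitivity columns in Tables 6 and 7. That match is why this averaging scheme is assumed here.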

Training times varied with the complexity (size and structure) of the models and the size of the datasets. For the DIMPSAR dataset, training durations ranged from 4 to 12 h. The DeepHerb dataset required 2–8 h, whereas Herbify, being the largest dataset, demanded 6–14 h. The most resource-intensive models were EfficientNet v2-Large and ViT-Large/16, while MobileNet v3-Large was the most computationally efficient due to its smaller architecture and fewer parameters. Model checkpoints were saved at epochs that achieved high validation accuracy, and the checkpoint that performed best on the validation split was used for testing. Bar graphs of the F₁-scores of all models across the datasets are provided in Fig. 7.
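The checkpoint selection described above can be sketched as follows. The exact saving rule is not specified in the text, so this example assumes that a checkpoint is written whenever validation accuracy improves on the best value seen so far. The function name and the accuracy values are hypothetical.

```python
def select_best_checkpoint(val_accuracy_by_epoch):
    """Return the best epoch (1-indexed) and the epochs at which a checkpoint would be saved.

    Assumed rule: save whenever validation accuracy strictly improves.
    """
    best_epoch, best_accuracy = None, float("-inf")
    saved_epochs = []
    for epoch, accuracy in enumerate(val_accuracy_by_epoch, start=1):
        if accuracy > best_accuracy:
            best_epoch, best_accuracy = epoch, accuracy
            saved_epochs.append(epoch)  # a real loop would write model weights here
    return best_epoch, saved_epochs


# Hypothetical validation accuracies, for illustration only.
history = [0.81, 0.93, 0.92, 0.96, 0.95]
best_epoch, saved_epochs = select_best_checkpoint(history)
print("best epoch:", best_epoch, "checkpoints saved at:", saved_epochs)
```

Selecting on the validation split rather than the test split keeps the test results an unbiased estimate of performance on unseen images.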

Fig. 7.

Comparative analysis of F₁-Scores for CNNs, ViTs, and ensemble models (on the Herbify dataset) across herb datasets

Performance analysis on the DIMPSAR dataset

The performance of the models on the DIMPSAR dataset is summarized in Table 6. Among all models, EfficientNet v2-Large achieved the highest F₁-score of 98.16%, alongside excellent accuracy (98.16%), precision (98.41%), sensitivity (98.16%), specificity (99.97%), and a G-Mean of 0.9906. These results underscore its capacity to effectively capture complex features within the dataset.

Table 6.

Evaluation metrics for convolutional neural networks and vision transformers architectures applied to the DIMPSAR dataset

| Model | F₁-Score (%) | Accuracy (%) | Precision (%) | Sensitivity (%) | Specificity (%) | G-Mean |
|---|---|---|---|---|---|---|
| MobileNet v3-Large | 97.45 | 97.46 | 97.79 | 97.46 | 99.96 | 0.9870 |
| VGG-19 | 94.60 | 94.78 | 95.01 | 94.78 | 99.93 | 0.9732 |
| ResNet-152 | 97.36 | 97.32 | 97.70 | 97.32 | 99.96 | 0.9863 |
| EfficientNet v2-Large | 98.16 | 98.16 | 98.41 | 98.16 | 99.97 | 0.9906 |
| ViT-Base/16 | 97.03 | 97.04 | 97.33 | 97.04 | 99.96 | 0.9849 |
| ViT-Large/16 | 96.76 | 96.76 | 97.16 | 96.76 | 99.95 | 0.9834 |
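As a consistency check, the G-Mean column of Table 6 can be recomputed from the sensitivity and specificity columns. The sketch assumes the common definition of G-Mean as the square root of sensitivity times specificity. The section does not state this definition, but the reported values agree with it to four decimal places.

```python
from math import sqrt

# (sensitivity %, specificity %, reported G-Mean) copied from Table 6.
TABLE_6 = {
    "MobileNet v3-Large": (97.46, 99.96, 0.9870),
    "VGG-19": (94.78, 99.93, 0.9732),
    "ResNet-152": (97.32, 99.96, 0.9863),
    "EfficientNet v2-Large": (98.16, 99.97, 0.9906),
    "ViT-Base/16": (97.04, 99.96, 0.9849),
    "ViT-Large/16": (96.76, 99.95, 0.9834),
}


def g_mean(sensitivity_pct, specificity_pct):
    """Geometric mean of sensitivity and specificity, both given in percent."""
    return sqrt((sensitivity_pct / 100) * (specificity_pct / 100))


recomputed = {
    model: round(g_mean(sens, spec), 4)
    for model, (sens, spec, _) in TABLE_6.items()
}
largest_gap = max(
    abs(recomputed[model] - reported)
    for model, (_, _, reported) in TABLE_6.items()
)
print(recomputed)
print("largest gap from reported values:", largest_gap)
```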

MobileNet v3-Large and ResNet-152 followed closely with F₁-scores of 97.45% and 97.36%, respectively. Both models exhibited the same specificity (99.96%) and comparable G-Means (0.9870 for MobileNet v3-Large and 0.9863 for ResNet-152). Notably, MobileNet v3-Large slightly outperformed ResNet-152 in sensitivity (97.46% vs. 97.32%), indicating a better ability to identify true positive cases.

The comparison between CNN-based models and Vision Transformers revealed valuable insights. While the CNN models, particularly EfficientNet v2-Large, ResNet-152, and MobileNet v3-Large, demonstrated superior performance, the ViT architectures were competitive. ViT-B/16 achieved an F₁-score of 97.03%, marginally outperforming ViT-L/16, which scored 96.76%. Both ViT models exhibited high specificity (99.96% and 99.95%, respectively), reflecting strong capabilities in identifying negative samples. However, their slightly lower sensitivity and G-Mean values compared to the top-performing CNN models suggest that ViTs may be less effective at recognizing positive cases—a crucial factor given the dataset’s imbalance.

The observed differences between ViT-Base/16 and ViT-Large/16 are likely attributable to their architectural distinctions. ViT-B/16, with fewer parameters, demonstrated marginally better sensitivity and F₁-score, implying that a smaller ViT model may generalize better to this dataset. Conversely, the larger capacity of ViT-L/16 did not yield improved performance, indicating inefficiencies in handling this particular dataset.

VGG-19 was the weakest of all models, with the lowest accuracy (94.78%) and F₁-score (94.60%), highlighting the model's limited ability to handle class imbalance. Furthermore, trade-offs between precision and sensitivity are evident in some models. MobileNet v3-Large and ResNet-152 exhibited slightly higher precision than sensitivity, indicating a marginal bias toward avoiding false positives over capturing every positive case. Specificity remained consistently high across all models, with values of at least 99.93%, suggesting that false positives were rare on the DIMPSAR dataset.

Figure 7 (A) provides a bar plot comparison of model performance on the DIMPSAR dataset. The training and validation performance trends for the DIMPSAR dataset are illustrated in Fig. 8 (A). Training accuracy curves indicate that most models converged by the third epoch, maintaining high performance thereafter. Simultaneously, all loss values plateaued by the fifth epoch, reflecting the stability and effectiveness of the training process.

Fig. 8.

Training accuracy and loss curves for all models over: (A) DIMPSAR dataset, (B) DeepHerb dataset, and (C) Herbify dataset

Performance analysis on the DeepHerb dataset

The evaluation of deep learning models on the DeepHerb dataset demonstrates exceptional accuracy, with several models achieving near-perfect results. Table 7 presents the performance metrics for the tested models. Among these, EfficientNet v2-Large achieved perfect scores across all metrics: F₁-score, accuracy, precision, sensitivity, and specificity were each 100%, with a G-Mean of 1. This result shows that the model classified every test sample correctly, once again establishing it as the benchmark. The model's architecture, incorporating compound scaling and advanced feature extraction techniques, likely underpins these results, showcasing its capacity to handle even the dataset's most nuanced variations.

Table 7.

Evaluation metrics for convolutional neural networks and vision transformers architectures applied to the DeepHerb dataset

| Model | F₁-Score (%) | Accuracy (%) | Precision (%) | Sensitivity (%) | Specificity (%) | G-Mean |
|---|---|---|---|---|---|---|
| MobileNet v3-Large | 99.27 | 99.26 | 99.36 | 99.26 | 99.97 | 0.9961 |
| VGG-19 | 99.27 | 99.26 | 99.37 | 99.26 | 99.97 | 0.9961 |
| ResNet-152 | 99.63 | 99.63 | 99.67 | 99.63 | 99.98 | 0.9980 |
| EfficientNet v2-Large | 100 | 100 | 100 | 100 | 100 | 1 |
| ViT-Base/16 | 98.50 | 98.52 | 98.71 | 98.52 | 99.94 | 0.9923 |
| ViT-Large/16 | 99.63 | 99.63 | 99.66 | 99.63 | 99.98 | 0.9980 |

Both ResNet-152 and ViT-Large/16 delivered exceptional results, each achieving an F₁-score of 99.63% and a geometric mean (G-Mean) of 0.9980. Both models maintained high precision (99.67% for ResNet-152 and 99.66% for ViT-L/16) and sensitivity (99.63%), indicating their effectiveness in balancing accurate positive and negative classifications.

MobileNet v3-Large and VGG-19 performed almost identically, both attaining an F₁-score of 99.27% and a G-Mean of 0.9961 and differing only marginally in precision (99.36% vs. 99.37%). Although they did not surpass the top-performing models, their high specificity (99.97%) and competitive precision and sensitivity values affirm their reliability. MobileNet v3-Large, in particular, stands out for its lightweight architecture, making it a practical choice for deployment in resource-constrained environments such as mobile or edge devices.

ViT-Base/16 achieved the lowest F₁-score among all the models, 98.50%, which still reflects excellent performance. Its marginally reduced precision (98.71%) and sensitivity (98.52%) suggest a limitation in capturing subtle feature representations compared to larger models such as ViT-L/16 or CNN-based architectures like EfficientNet v2-Large. Nevertheless, its high G-Mean of 0.9923 and specificity of 99.94% confirm its strong generalization ability, particularly in identifying negative samples accurately.

Across all models, specificity was at least 99.94%, indicating a consistently low false-positive rate. The alignment of high precision and sensitivity values demonstrates a balanced ability to minimize false positives and correctly identify true positives.

These outcomes suggest that the DeepHerb dataset is a carefully compiled and preprocessed resource for herb recognition, enabling multiple models to attain near-flawless performance. In Fig. 7 (B), a bar chart compares the models' performance on DeepHerb, while Fig. 8 (B) traces their training accuracy and loss curves. Most networks converge by the second epoch, starting with accuracies above 80% and loss values below one (with VGG-19 as the lone exception), thereby outperforming their counterparts trained on the DIMPSAR dataset. Although all architectures perform well, VGG-19 requires additional epochs to stabilize, as its loss curve plateaus later.

Performance analysis on the Herbify dataset

The Herbify dataset, created through the integration and further manual cleaning of the DIMPSAR and DeepHerb datasets, serves as a comprehensive benchmark for herb recognition tasks. The manual cleaning process significantly enhanced data quality, enabling models to extract critical features more effectively. Table 8 summarizes the performance of the CNN and ViT models on this dataset; the models consistently scored highly across all metrics.

Table 8.