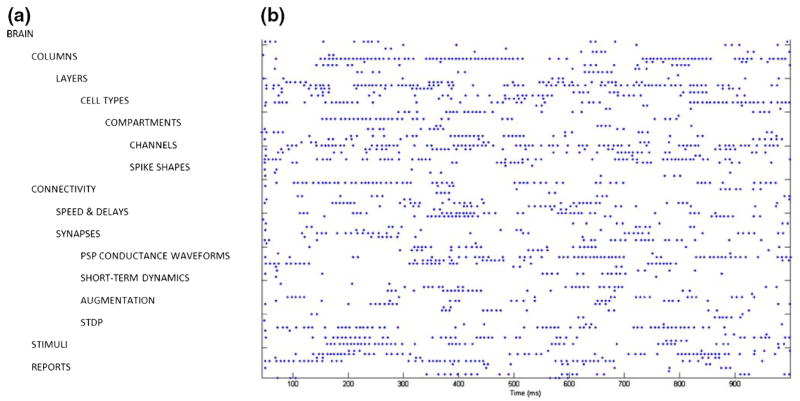

Abstract

We review different aspects of the simulation of spiking neural networks. We start by reviewing the different types of simulation strategies and algorithms that are currently implemented. We next review the precision of those simulation strategies, in particular in cases where plasticity depends on the exact timing of the spikes. We overview different simulators and simulation environments presently available (restricted to those freely available, open source and documented). For each simulation tool, its advantages and pitfalls are reviewed, with an aim to allow the reader to identify which simulator is appropriate for a given task. Finally, we provide a series of benchmark simulations of different types of networks of spiking neurons, including Hodgkin–Huxley type, integrate-and-fire models, interacting with current-based or conductance-based synapses, using clock-driven or event-driven integration strategies. The same set of models is implemented on the different simulators, and the codes are made available. The ultimate goal of this review is to provide a resource to facilitate identifying the appropriate integration strategy and simulation tool to use for a given modeling problem related to spiking neural networks.

Keywords: Spiking neural networks, Simulation tools, Integration strategies, Clock-driven, Event-driven

1 Introduction

The growing experimental evidence that spike timing may be important to explain neural computations has motivated the use of spiking neuron models, rather than the traditional rate-based models. At the same time, a growing number of tools have appeared, allowing the simulation of spiking neural networks. Such tools allow the user to obtain precise simulations of a given computational paradigm, as well as publishable figures, in a relatively short amount of time. However, the range of computational problems related to spiking neurons is very large. Some cases require detailed biophysical representations of the neurons, for example when intracellular electrophysiological measurements are to be reproduced (e.g., see Destexhe and Sejnowski 2001). In this case, one uses conductance-based (COBA) models, such as the Hodgkin and Huxley (1952) type of models. In other cases, one does not need to realistically capture the spike generating mechanisms, and simpler models, such as the integrate-and-fire (IF) model, are sufficient. IF type models are also very fast to simulate, and are particularly attractive for large-scale network simulations.

There are two families of algorithms for the simulation of neural networks: synchronous or “clock-driven” algorithms, in which all neurons are updated simultaneously at every tick of a clock, and asynchronous or “event-driven” algorithms, in which neurons are updated only when they receive or emit a spike (hybrid strategies also exist). Synchronous algorithms can be easily coded and apply to any model. Because spike times are typically bound to a discrete time grid, the precision of the simulation can be an issue. Asynchronous algorithms have been developed mostly for exact simulation, which is possible for simple models. For very large networks, the simulation time for both methods scales as the total number of spike transmissions, but each strategy has its own advantages and disadvantages.

In this paper, we start by providing an overview of different simulation strategies, and outline to what extent the temporal precision of spiking events affects the neuronal dynamics of single neurons as well as small networks of IF neurons with plastic synapses. Next, we review the currently available simulators or simulation environments, focusing only on publicly available and non-commercial tools to simulate networks of spiking neurons. For each type of simulator, we describe the simulation strategy used, outline the type of models for which it is best suited, and provide concrete examples. The ultimate goal of this paper is to provide a resource to enable the researcher to identify which strategy or simulator to use for a given modeling problem related to spiking neural networks.

2 Simulation strategies

This discussion is restricted to serial algorithms for brevity. The specific sections of NEST and SPLIT contain additional information on concepts for parallel computing.

There are two families of algorithms for the simulation of neural networks: synchronous or clock-driven algorithms, in which all neurons are updated simultaneously at every tick of a clock, and asynchronous or event-driven algorithms, in which neurons are updated only when they receive or emit a spike. These two approaches have some common features that we will first describe by expressing the problem of simulating neural networks in the formalism of hybrid systems, i.e., differential equations with discrete events (spikes). In this framework some common strategies for efficient representation and simulation appear.

Since we are going to compare algorithms in terms of computational efficiency, let us first ask ourselves the following question: how much time can it possibly take for a good algorithm to simulate a large network? Suppose there are N neurons whose average firing rate is F and average number of synapses is p. If all spike transmissions are taken into account, then a simulation lasting 1 s (biological time) must process N × p × F spike transmissions. The goal of efficient algorithm design is to reach this minimal number of operations (of course, up to a constant multiplicative factor). If the simulation is not restricted to spike-mediated interactions, e.g. if the model includes gap junctions or dendro-dendritic interactions, then the optimal number of operations can be much larger, but in this review we chose not to address the problem of graded interactions.

2.1 A hybrid system formalism

Mathematically, neurons can be described as hybrid systems: the state of a neuron evolves continuously according to some biophysical equations, which are typically differential equations (deterministic or stochastic, ordinary or partial differential equations), and spikes received through the synapses trigger changes in some of the variables. Thus the dynamics of a neuron can be described as follows:

$$\frac{dX}{dt} = f(X)$$

$$X \leftarrow g_i(X) \quad \text{upon spike from synapse } i$$

where X is a vector describing the state of the neuron. In theory, taking into account the morphology of the neuron would lead to partial differential equations; however, in practice, one usually approximates the dendritic tree by coupled isopotential compartments, which also leads to a differential system with discrete events. Spikes are emitted when some threshold condition is satisfied, for instance Vm ≥ θ for IF models (where Vm is the membrane potential and would be the first component of vector X), and/or dVm/dt ≥ θ for Hodgkin–Huxley (HH) type models. This can be summarized by saying that a spike is emitted whenever some condition X ∈ A is satisfied. For IF models, the membrane potential, which would be the first component of X, is reset when a spike is produced. The reset can be integrated into the hybrid system formalism by considering for example that outgoing spikes act on X through an additional (virtual) synapse: X ← g0(X).

With this formalism, it becomes clear that spike times need not be stored (except of course if transmission delays are included), even though it would seem so from more phenomenological formulations. For example, consider the following IF model (described for example in Gütig and Sompolinsky (2006)):

$$V(t) = \sum_i \omega_i \sum_{t_i} K(t - t_i) + V_{rest}$$

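where V(t) is the membrane potential, Vrest is the rest potential, ωi is the synaptic weight of synapse i, ti are the timings of the spikes coming from synapse i, and K(t − ti) = exp( − (t − ti)/τ) − exp( − (t − ti)/τs) is the post-synaptic potential (PSP) contributed by each incoming spike. The model can be restated as a two-variable differential system with discrete events as follows (J denotes the auxiliary synaptic variable):

$$\tau \frac{dV}{dt} = V_{rest} - V + J$$

$$\tau_s \frac{dJ}{dt} = -J$$

$$J \leftarrow J + \frac{\tau - \tau_s}{\tau_s}\,\omega_i \quad \text{upon spike from synapse } i$$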

Virtually all PSPs or currents described in the literature (e.g. α-functions, bi-exponential functions) can be expressed this way. Several authors have described the transformation from phenomenological expressions to the hybrid system formalism for synaptic conductances and currents (Destexhe et al. 1994a,b; Rotter and Diesmann 1999; Giugliano 2000), short-term synaptic depression (Giugliano et al. 1999), and spike-timing-dependent plasticity (Song et al. 2000). In many cases, the spike response model (Gerstner and Kistler 2002) is also the integral expression of a hybrid system. To derive the differential formulation of a given post-synaptic current or conductance, one way is to see the latter as the impulse response of a linear time-invariant system [which can be seen as a filter (Jahnke et al. 1998)] and use transformation tools from signal processing theory such as the Z-transform (Kohn and Wörgötter 1998) (see also Sanchez-Montanez 2001) or the Laplace transform (the Z-transform is the equivalent of the Laplace transform in the digital time domain, i.e., for synchronous algorithms).
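As a rough numerical check of this restatement, the sketch below compares the phenomenological sum over stored spike times with a two-variable system that keeps only the current state. It assumes the system τ dV/dt = Vrest − V + J, τs dJ/dt = −J with the jump J ← J + ωi (τ − τs)/τs, which reproduces the kernel K when τ ≠ τs. The function names and the variable `j` are illustrative and do not come from the original text; `v` is measured relative to Vrest.

```python
import math

def psp_sum(t, spikes, tau, tau_s):
    """Phenomenological form: V(t) - V_rest as a sum over stored spike times.

    spikes is a list of (spike_time, weight) pairs.
    """
    return sum(w * (math.exp(-(t - ts) / tau) - math.exp(-(t - ts) / tau_s))
               for ts, w in spikes if ts <= t)

def advance(v, j, h, tau, tau_s):
    """Exact solution of tau dv/dt = -v + J, tau_s dJ/dt = -J over a duration h."""
    ev, ej = math.exp(-h / tau), math.exp(-h / tau_s)
    v_new = v * ev + j * tau_s / (tau - tau_s) * (ev - ej)
    return v_new, j * ej

def hybrid_value(t, spikes, tau, tau_s):
    """Hybrid-system form: only the pair (v, J) is kept between events."""
    v, j, now = 0.0, 0.0, 0.0
    for ts, w in sorted(spikes):
        if ts > t:
            break
        v, j = advance(v, j, ts - now, tau, tau_s)   # continuous evolution
        j += w * (tau - tau_s) / tau_s               # discrete event
        now = ts
    v, _ = advance(v, j, t - now, tau, tau_s)
    return v
```

Both functions should agree up to rounding error for any input spike train, while `hybrid_value` never looks back at past spike times once they have been processed.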

2.2 Using linearities for fast synaptic simulation

In general, the number of state variables of a neuron (length of vector X) scales with the number of synapses, since each synapse has its own dynamics. This fact constitutes a major problem for efficient simulation of neural networks, both in terms of memory consumption and computation time. However, several authors have observed that all synaptic variables sharing the same linear dynamics can be reduced to a single one (Wilson and Bower 1989; Bernard et al. 1994; Lytton 1996; Song et al. 2000). For example, the following set of differential equations, describing an IF model with n synapses with exponential conductances:

is mathematically equivalent to the following set of two differential equations:

where g is the total synaptic conductance. The same reduction applies to synapses with higher dimensional dynamics, as long as the dynamics are linear and the spike-triggered changes (gi ← gi + wi) are additive and do not depend on the state of the synapse (e.g. the rule gi ← gi + wi * f(gi) would cause a problem). Some models of spike-timing dependent plasticity (with linear interactions between pairs of spikes) can also be simulated in this way (see e.g. Abbott and Nelson 2000). However, some important biophysical models are not linear and thus cannot benefit from this optimization, in particular NMDA-mediated interactions and saturating synapses.
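The reduction can be illustrated with a small sketch. It assumes that each conductance decays exponentially between spikes with a shared time constant (called `tau_s` here, an assumed name) and is incremented additively by its weight on each spike. One function tracks n separate conductances, the other a single lumped variable; the function names and the event format `(time, synapse, weight)` are illustrative only.

```python
import math

def per_synapse_total(events, n, tau_s, t):
    """Track one conductance per synapse and return their sum at time t."""
    g = [0.0] * n
    now = 0.0
    for ts, i, w in sorted(events):
        if ts > t:
            break
        decay = math.exp(-(ts - now) / tau_s)
        g = [gi * decay for gi in g]      # n state updates per event
        g[i] += w                         # g_i <- g_i + w_i
        now = ts
    decay = math.exp(-(t - now) / tau_s)
    return sum(gi * decay for gi in g)

def lumped_total(events, tau_s, t):
    """Track only the total conductance g, whatever the number of synapses."""
    g, now = 0.0, 0.0
    for ts, _i, w in sorted(events):
        if ts > t:
            break
        g = g * math.exp(-(ts - now) / tau_s) + w   # g <- g + w_i
        now = ts
    return g * math.exp(-(t - now) / tau_s)
```

The two results coincide because the decay is linear and the increments do not depend on the state of the synapse; a state-dependent rule such as gi ← gi + wi * f(gi) would break this equality.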

2.3 Synchronous or clock-driven algorithms

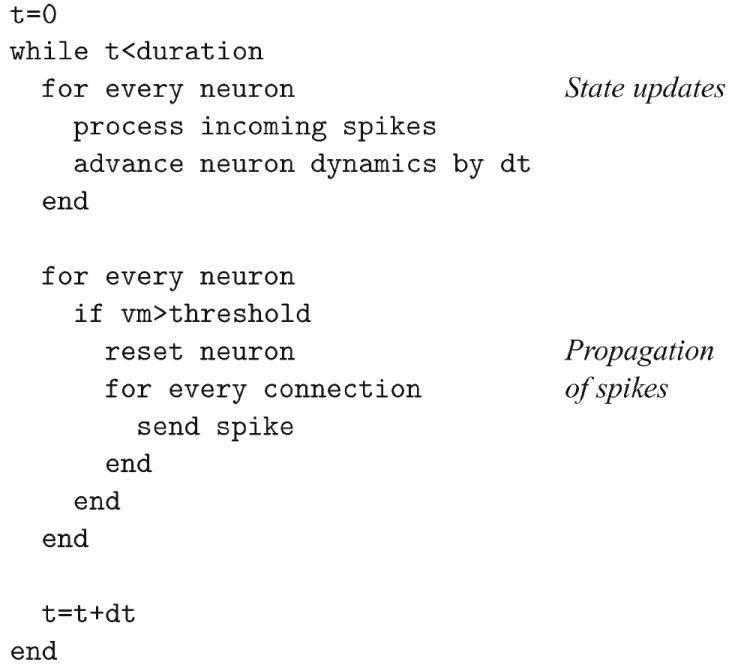

In a synchronous or clock-driven algorithm (see pseudo-code in Fig. 1), the state variables of all neurons (and possibly synapses) are updated at every tick of a clock: X(t) → X(t + dt). With non-linear differential equations, one would use an integration method such as Euler or Runge–Kutta (Press et al. 1993) or, for HH models, implicit methods (Hines 1984). Neurons with complex morphologies are usually spatially discretized and modelled as interacting compartments: they are also described mathematically by coupled differential equations, for which dedicated integration methods have been developed (for details see e.g. the specific section of Neuron in this review). If the differential equations are linear, then the update operation X(t) → X(t + dt) is also linear, which means updating the state variables amounts simply to multiplying X by a matrix: X(t + dt) = AX(t) (Hirsch and Smale 1974) (see also Rotter and Diesmann 1999, for an application to neural networks), which is very convenient in vector-based scientific software such as Matlab or Scilab. Then, after updating all variables, the threshold condition is checked for every neuron. Each neuron that satisfies this condition produces a spike which is transmitted to its target neurons, updating the corresponding variables (X ← gi(X)). For IF models, the membrane potential of every spiking neuron is reset.

Fig. 1.

A basic clock-driven algorithm
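The loop described in this section can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the pseudo-code of Fig. 1: the neurons are pulse-coupled leaky IF units with a constant drive, the linear membrane equation is propagated exactly over one time step, and delays are ignored. The function name, the `synapses` and `drive` containers and all parameter values are hypothetical.

```python
import math

def run_clock_driven(n, synapses, drive, t_end, dt,
                     tau=20.0, theta=1.0, v_reset=0.0):
    """Clock-driven simulation of n pulse-coupled leaky IF neurons.

    synapses[i] is a list of (target, weight) pairs for neuron i and
    drive[i] is its constant input. Times are in ms.
    Returns a list of (spike_time, neuron) pairs aligned to the time grid.
    """
    v = [0.0] * n
    decay = math.exp(-dt / tau)   # exact propagator of tau dv/dt = drive - v
    spikes = []
    for k in range(1, int(round(t_end / dt)) + 1):
        t = k * dt
        # 1. Update the state variables of all neurons: X(t) -> X(t + dt)
        v = [drive[i] + (v[i] - drive[i]) * decay for i in range(n)]
        # 2. Check the threshold condition for every neuron
        fired = [i for i in range(n) if v[i] >= theta]
        # 3. Propagate each spike to its targets
        for i in fired:
            spikes.append((t, i))
            for j, w in synapses[i]:
                v[j] += w
        # 4. Reset the membrane potential of every spiking neuron
        for i in fired:
            v[i] = v_reset
    return spikes
```

For a single neuron with drive 1.5 and the default parameters, the exact first spike time is 20 ln 3 ≈ 21.97 ms; with dt = 0.1 ms the loop reports it at the next tick, 22.0 ms, which illustrates the grid alignment discussed in Section 2.3.3.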

2.3.1 Computational complexity

The simulation time of such an algorithm consists of two parts: (1) state updates and (2) propagation of spikes. Assuming the number of state variables for the whole network scales with the number of neurons N in the network (which is the case when the reduction described in Section 2.2 applies), the cost of the update phase is of order N for each step, so it is O(N/dt) per second of biological time (dt is the duration of the time bin). This component grows with the complexity of the neuron models and the precision of the simulation. Every second (biological time), an average of F × N spikes are produced by the neurons (F is the average firing rate), and each of these needs to be propagated to p target neurons. Thus, the propagation phase consists in F × N × p spike propagations per second. These are essentially additions of weights wi to state variables, and thus are simple operations whose cost does not grow with the complexity of the models. Summing up, the total computational cost per second of biological time is of order

$$c_U \times \frac{N}{dt} + c_P \times F \times N \times p \qquad (*)$$

where cU is the cost of one update and cP is the cost of one spike propagation; typically, cU is much higher than cP but this is implementation-dependent. Therefore, for very dense networks, the total is dominated by the propagation phase and is linear in the number of synapses, which is optimal. However, in practice the first phase is negligible only when the following condition is met:

$$\frac{c_P}{c_U} \gg \frac{1}{F \times p \times dt}$$

For example, the average firing rate in the cortex might be as low as F = 1 Hz (Olshausen and Field 2005), and assuming p = 10,000 synapses per neuron and dt = 0.1 ms, we get F × p × dt = 1. In this case, considering that each operation in the update phase is heavier than in the propagation phase (especially for complex models), i.e., cP < cU, the update phase is likely to dominate the total computational cost. Thus, it appears that even in networks with realistic connectivity, increases in precision (smaller dt, see Section 3) can be detrimental to the efficiency of the simulation.
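The arithmetic of this example can be checked with a short helper. It evaluates the two cost terms, update cost cU × N/dt and propagation cost cP × F × N × p, per second of biological time. The function name and the unit costs passed in are placeholders, since the real values of cU and cP depend on the implementation.

```python
def clock_driven_cost(n, f, p, dt, c_u, c_p):
    """Return (update_cost, propagation_cost) per second of biological time.

    n: number of neurons, f: mean firing rate in Hz, p: synapses per neuron,
    dt: time step in seconds, c_u / c_p: cost of one update / one propagation.
    """
    update = c_u * n / dt
    propagation = c_p * f * n * p
    return update, propagation

# F = 1 Hz, p = 10,000 and dt = 0.1 ms give F * p * dt = 1:
# with equal unit costs the two phases cost the same.
update, propagation = clock_driven_cost(1000, 1.0, 10_000, 1e-4, 1.0, 1.0)
```

With these numbers any cU larger than cP makes the update phase the dominant term, and halving dt doubles that term while leaving the propagation cost unchanged.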

2.3.2 Delays

For the sake of simplicity, we ignored transmission delays in the description above. However, it is not very complicated to include them in a synchronous clock-driven algorithm. The straightforward way is to store the future synaptic events in a circular array. Each element of the array corresponds to a time bin and contains a list of synaptic events that are scheduled for that time (see e.g. Morrison et al. 2005). For example, if neuron i sends a spike to neuron j with delay d (in units of the time bin dt), then the synaptic event “i → j” is placed in the circular array at position p + d, where p is the present position. Circularity of the array means the addition p + d is modular ((p + d) mod n, where n is the size of the array, which corresponds to the largest delay in the system).
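A circular array of this kind might look as follows. This is only a sketch: the class and method names are invented for illustration, and the array is given one slot more than the largest delay so that an event with the maximal delay never lands in the slot currently being processed.

```python
class DelayBuffer:
    """Circular array of per-time-bin lists of pending synaptic events."""

    def __init__(self, max_delay_steps):
        self.n = max_delay_steps + 1          # one spare slot for the present bin
        self.slots = [[] for _ in range(self.n)]
        self.pos = 0                          # present position p

    def schedule(self, target, weight, delay_steps):
        """Store a synaptic event d bins ahead, at position (p + d) mod n."""
        if not 0 <= delay_steps < self.n:
            raise ValueError("delay exceeds buffer size")
        self.slots[(self.pos + delay_steps) % self.n].append((target, weight))

    def pop_due(self):
        """Return and clear the events scheduled for the present bin."""
        due, self.slots[self.pos] = self.slots[self.pos], []
        return due

    def tick(self):
        """Advance the present position by one time bin."""
        self.pos = (self.pos + 1) % self.n
```

In a clock-driven loop one would call `tick()` once per time step, apply the events returned by `pop_due()`, and call `schedule()` for each synapse of every neuron that fires. Each event is stored and retrieved exactly once, in constant time.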

What is the additional computational cost of managing delays? In fact, it is not very high and does not depend on the duration of the time bin. Since every synaptic event (i → j) is stored and retrieved exactly once, the computational cost of managing delays for 1 s of biological time is

$$c_D \times F \times N \times p$$

where cD is the cost of one store and one retrieve operation in the circular array (which is low). In other words, managing delays increases the cost of the propagation phase in equation (*) by a small multiplicative factor.

2.3.3 Exact clock-driven simulation

The obvious drawback of clock-driven algorithms as described above is that spike timings are aligned to a grid (ticks of the clock), thus the simulation is approximate even when the differential equations are computed exactly. Other specific errors come from the fact that threshold conditions are checked only at the ticks of the clock, implying that some spikes might be missed (see Section 3). However, in principle, it is possible to simulate a network exactly in a clock-driven fashion when the minimum transmission delay is larger than the time step. It implies that the precise timing of synaptic events is stored in the circular array (as described in Morrison et al. 2007a). Then within each time bin, synaptic events for each neuron are sorted and processed in the right order, and when the neuron spikes, the exact spike timing is calculated. Neurons can be processed independently in this way only because the time bin is smaller than the smallest transmission delay (neurons have no influence on each other within one time bin).

Some sort of clock signal can also be used in general event-driven algorithms without the assumption of a minimum positive delay. For example, one efficient data structure used in discrete event systems to store events is a priority queue known as “calendar queue” (Brown 1988), which is a dynamic circular array of sorted lists. Each “day” corresponds to a time bin, as in a classical circular array, and each event is placed in the calendar at the corresponding day; all events on a given day are sorted according to their scheduling time. If the duration of the day is correctly set, then insertions and extractions of events take constant time on average. Note that, in contrast with standard clock-driven simulations, the state variables are not updated at ticks of the clock and the duration of the days depends neither on the precision of the simulation nor on the transmission delays (it is rather linked to the rate of events)—in fact, the management of the priority queue is separated from the simulation itself.

Note however that in all these cases, state variables need to be updated at the time of every incoming spike rather than at every tick of the clock in order to simulate the network exactly (e.g. simple vector-based updates X ← AX are not possible), so that the term event-driven may be a better description of these algorithms (the precise terminology may vary between authors).

2.3.4 Noise in synchronous algorithms

Noise can be introduced in synchronous simulations by essentially two means:

Adding random external spikes

Simulating a stochastic process

Suppose a given neuron receives F random spikes per second, according to a Poisson process. Then the number of spikes in one time bin follows a Poisson distribution with mean F × dt. Thus one can simulate random external spike trains by letting each tick of the clock trigger a random number of synaptic updates. If F × dt is low, the Poisson distribution is almost a Bernoulli distribution (i.e., there is one spike with probability F × dt). It is straightforward to extend the procedure to inhomogeneous Poisson processes by allowing F to vary in time. The additional computational cost is proportional to Fext × N, where Fext is the average rate of external synaptic events for each neuron and N is the number of neurons. Note that Fext can be quite large since it represents the sum of firing rates of all external neurons (for example it would be 10,000 Hz for 10,000 external synapses per neuron with rate 1 Hz).
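A possible way to draw the number of external spikes per time bin is sketched below. The Poisson sampler uses Knuth's multiplication method, which is a choice made here for self-containedness (it is only practical for small means such as F × dt) and is not prescribed by the text; the function names are illustrative.

```python
import math
import random

def poisson_sample(mean, rng):
    """Draw one Poisson-distributed integer using Knuth's multiplication method."""
    limit = math.exp(-mean)
    k, prod = 0, rng.random()
    while prod > limit:
        k += 1
        prod *= rng.random()
    return k

def external_spike_counts(rate_hz, dt_s, n_bins, rng):
    """Number of external synaptic events to apply at each tick of the clock."""
    return [poisson_sample(rate_hz * dt_s, rng) for _ in range(n_bins)]

# 10,000 external synapses at 1 Hz and dt = 0.1 ms: one event per bin on average.
rng = random.Random(1)
counts = external_spike_counts(10_000.0, 1e-4, 20_000, rng)
```

At each tick the neuron would then receive `counts[k]` synaptic updates. For an inhomogeneous process, `rate_hz` can simply be made a function of the bin index.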

To simulate a large number of external random spikes, it can be advantageous to simulate directly the total external synaptic input as a stochastic process, e.g. white or colored noise (Ornstein–Uhlenbeck). Linear stochastic differential equations are analytically solvable, therefore the update X(t) → X(t + dt) can be calculated exactly with matrix computations (Arnold 1974) (X(t + dt) is, conditionally on X(t), a normally distributed random variable whose mean and covariance matrix can be calculated as a function of X(t)). Nonlinear stochastic differential equations can be simulated using approximation schemes, e.g. stochastic Runge–Kutta (Honeycutt 1992).
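For the scalar Ornstein–Uhlenbeck process the exact update is short enough to write out. The sketch assumes the parametrization dX = −(X − μ)/τ dt + σ √(2/τ) dW, for which the stationary standard deviation is σ; the symbols μ, σ, τ and the function name are choices made here, not notation from the text.

```python
import math
import random

def ou_step(x, dt, tau, mu, sigma, rng):
    """Exact update X(t) -> X(t + dt) of an Ornstein-Uhlenbeck process.

    Conditionally on X(t) = x, X(t + dt) is normal with
    mean mu + (x - mu) * exp(-dt / tau) and
    variance sigma**2 * (1 - exp(-2 * dt / tau)).
    """
    a = math.exp(-dt / tau)
    return mu + (x - mu) * a + sigma * math.sqrt(1.0 - a * a) * rng.gauss(0.0, 1.0)
```

Because the update is exact, the statistics of the simulated process do not depend on the size of dt, in contrast to an Euler-type scheme.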

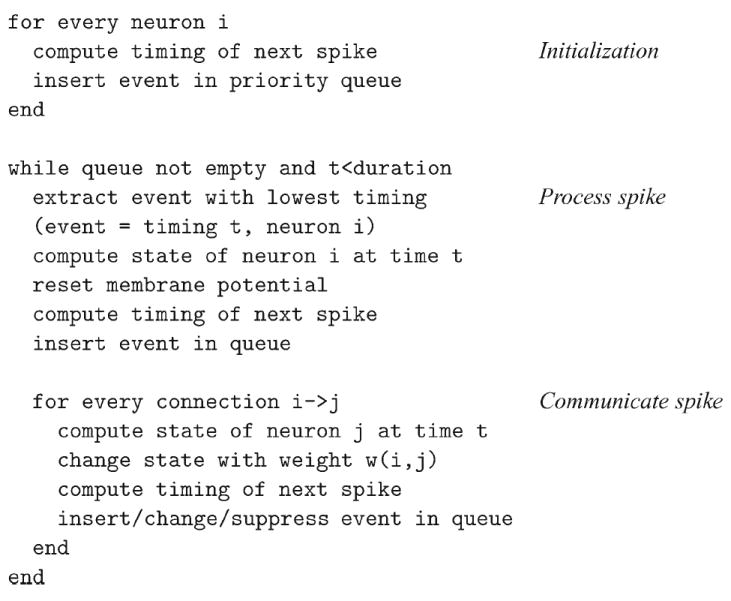

2.4 Asynchronous or event-driven algorithms

Asynchronous or event-driven algorithms are not as widely used as clock-driven ones because they are significantly more complex to implement (see pseudo-code in Fig. 3) and less universal. Their key advantages are a potential gain in speed, since no small update steps are computed for a neuron while no event arrives, and the exact computation of spike timings (but see below for approximate event-driven algorithms); in particular, spike timings are no longer aligned to a time grid (which is a source of potential errors, see Section 3).

Fig. 3.

A basic event-driven algorithm with non-instantaneous synaptic interactions

The problem of simulating dynamical systems with discrete events is a well established research topic in computer science (Ferscha 1996; Sloot et al. 1999; Fujimoto 2000; Zeigler et al. 2000) (see also Rochel and Martinez 2003; Mayrhofer et al. 2002), with appropriate data structures and algorithms already available to the computational neuroscience community. We start by describing the simple case when synaptic interactions are instantaneous, i.e., when spikes can be produced only at times of incoming spikes (no latency); then we will turn to the most general case.

2.4.1 Instantaneous synaptic interactions

In an asynchronous or event-driven algorithm, the simulation advances from one event to the next event. Events can be spikes coming from neurons in the network or external spikes (typically random spikes described by a Poisson process). For models in which spikes can be produced by a neuron only at times of incoming spikes, event-driven simulation is relatively easy (see pseudo-code in Fig. 2). Timed events are stored in a queue (which is some sort of sorted list). One iteration consists in

Extracting the next event

Updating the state of the corresponding neuron (i.e., calculating the state according to the differential equation and adding the synaptic weight)

Checking if the neuron satisfies the threshold condition, in which case events are inserted in the queue for each downstream neuron

Fig. 2.

A basic event-driven algorithm with instantaneous synaptic interactions

In the simple case of identical transmission delays, the data structure for the queue can be just a FIFO queue (First In, First Out), which has fast implementations (Cormen et al. 2001). When the delays take values in a small discrete set, the easiest way is to use one FIFO queue for each delay value, as described in Mattia and Del Giudice (2000). It is also more efficient to use a separate FIFO queue for handling random external events (see paragraph about noise below).

In the case of arbitrary delays, one needs a more complex data structure. In computer science, efficient data structures to maintain an ordered list of time-stamped events are grouped under the name priority queues (Cormen et al. 2001). The topic of priority queues is dense and well documented; examples are binary heaps, Fibonacci heaps (Cormen et al. 2001), calendar queues (Brown 1988; Claverol et al. 2002) or van Emde Boas trees (van Emde Boas et al. 1976) (see also Connolly et al. 2003, in which various priority queues are compared). Using an efficient priority queue is a crucial element of a good event-driven algorithm. It is even more crucial when synaptic interactions are not instantaneous.
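The three steps of one iteration, combined with a priority queue for arbitrary delays, can be sketched as follows. The example uses Python's `heapq` module (a binary heap) and a pulse-coupled leaky IF neuron whose membrane potential decays exponentially to zero, so that spikes can only occur at the times of incoming events. The function name, the data layout and the parameter values are assumptions made for illustration.

```python
import heapq
import math

def run_event_driven(n, synapses, external, t_end, tau=20.0, theta=1.0):
    """Event-driven simulation with instantaneous synaptic interactions.

    synapses[i]: list of (target, weight, delay) for neuron i.
    external: list of (time, target, weight) input events. Times are in ms.
    Returns a list of (spike_time, neuron) pairs.
    """
    v = [0.0] * n
    last = [0.0] * n                  # time of the last update of each neuron
    queue = list(external)
    heapq.heapify(queue)
    spikes = []
    while queue:
        # 1. Extract the next event
        t, j, w = heapq.heappop(queue)
        if t > t_end:
            break
        # 2. Update the target neuron exactly, then add the synaptic weight
        v[j] = v[j] * math.exp(-(t - last[j]) / tau) + w
        last[j] = t
        # 3. Check the threshold; if crossed, schedule events downstream
        if v[j] >= theta:
            spikes.append((t, j))
            v[j] = 0.0
            for k, wk, d in synapses[j]:
                heapq.heappush(queue, (t + d, k, wk))
    return spikes
```

With a binary heap each insertion and extraction costs O(log m) for m queued events; a FIFO queue or a calendar queue could replace it without changing the rest of the loop.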

2.4.2 Non-instantaneous synaptic interactions

For models in which spike times do not necessarily occur at times of incoming spikes, event-driven simulation is more complex. We first describe the basic algorithm with no delays and no external events (see pseudo-code in Fig. 3). One iteration consists in

Finding which neuron is the next one to spike

Updating this neuron

Propagating the spike, i.e., updating its target neurons

The general way to do that is to maintain a sorted list of the future spike timings of all neurons. These spike timings are only provisional since any spike in the network can modify all future spike timings. However, the spike with lowest timing in the list is certified. Therefore, the following algorithm for one iteration guarantees the correctness of the simulation (see Fig. 3):

Extract the spike with lowest timing in the list

Update the state of the corresponding neuron and recalculate its future spike timing

Update the state of its target neurons

Recalculate the future spike timings of the target neurons

For the sake of simplicity, we ignored transmission delays in the description above. Including them in an event-driven algorithm is not as straightforward as in a clock-driven algorithm, but it is a minor complication. When a spike is produced by a neuron, the future synaptic events are stored in another priority queue in which the timings of events are non-modifiable. The first phase of the algorithm (extracting the spike with lowest timing) is replaced by extracting the next event, which can be either a synaptic event or a spike emission. One can use two separate queues or a single one. External events can be handled in the same way. Although delays introduce complications in coding event-driven algorithms, they can in fact simplify the management of the priority queue for outgoing spikes. Indeed, the main difficulty in simulating networks with non-instantaneous synaptic interactions is that scheduled outgoing spikes can be canceled, postponed or advanced by future incoming spikes. If transmission delays are greater than some positive value τmin, then all outgoing spikes scheduled in [t, t + τmin] (t being the present time) are certified. Thus, algorithms can exploit the structure of delays to speed up the simulation (Lee and Farhat 2001).
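The basic iteration without delays (extract the earliest provisional spike, update the neuron, update its targets, recalculate their spike timings) can be illustrated on a toy model. The sketch assumes leaky IF neurons with a constant drive and pulse coupling, chosen only because the time of the next spike then has a closed form; it is not the algorithm of Fig. 3. For brevity the earliest spike is found by a linear scan where a real implementation would use a priority queue, and there is no refractory period, so mutually exciting neurons with weights above threshold would loop forever at the same time stamp. All names are hypothetical.

```python
import math

def next_spike_time(v, t, drive, tau, theta):
    """Map the current state to the time of the next spike (inf if none)."""
    if v >= theta:
        return t
    if drive <= theta:
        return math.inf
    return t + tau * math.log((drive - v) / (drive - theta))

def run_provisional(n, synapses, drive, t_end, tau=20.0, theta=1.0):
    """synapses[i]: list of (target, weight); drive[i]: constant input of neuron i."""
    v = [0.0] * n
    last = [0.0] * n
    due = [next_spike_time(v[i], 0.0, drive[i], tau, theta) for i in range(n)]
    spikes = []
    while True:
        # 1. Extract the spike with lowest timing: only this one is certified
        i = min(range(n), key=due.__getitem__)
        t = due[i]
        if t > t_end:
            break
        # 2. Update the spiking neuron and recalculate its future spike timing
        spikes.append((t, i))
        v[i], last[i] = 0.0, t
        due[i] = next_spike_time(v[i], t, drive[i], tau, theta)
        for j, w in synapses[i]:
            # 3. Update the state of each target neuron up to time t
            v[j] = drive[j] + (v[j] - drive[j]) * math.exp(-(t - last[j]) / tau)
            v[j] += w
            last[j] = t
            # 4. Recalculate the provisional spike timing of the target
            due[j] = next_spike_time(v[j], t, drive[j], tau, theta)
    return spikes
```

An inhibitory weight postpones or cancels the target's scheduled spike and an excitatory one advances it, which is why every entry of `due` other than the minimum remains provisional.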

2.4.3 Computational complexity

Putting aside the cost of handling external events (which is minor), we can subdivide the computational cost of handling one outgoing spike as follows (assuming p is the average number of synapses per neuron):

Extracting the event (in case of non-instantaneous synaptic interactions)

Updating the neuron and its targets: p + 1 updates

Inserting p synaptic events in the queue (in case of delays)

Updating the spike times of p + 1 neurons (in case of non-instantaneous synaptic interactions)

Inserting or rescheduling p + 1 events in the queue (future spikes for non-instantaneous synaptic interactions)

Since there are F × N spikes per second of biological time, the number of operations is approximately proportional to F × N × p. The total computational cost per second of biological time can be written concisely as follows:

$$(c_U + c_S + c_Q) \times F \times N \times p$$

where cU is the cost of one update of the state variables, cS is the cost of calculating the time of the next spike (non-instantaneous synaptic interactions) and cQ is the average cost of insertions and extractions in the priority queue(s). Thus, the simulation time is linear in the number of synapses, which is optimal. Nevertheless, we note that the operations involved are heavier than in the propagation phase of clock-driven algorithms (see previous section), therefore the multiplicative factor is likely to be larger. We have also assumed that cQ is O(1), i.e., that the dequeue and enqueue operations can be done in constant average time with the data structure chosen for the priority queue. In the simple case of instantaneous synaptic interactions and homogeneous delays, one can use a simple FIFO queue (First In, First Out), in which insertions and extractions are very fast and take constant time. For the general case, data structures for which dequeue and enqueue operations take constant average time (O(1)) exist, e.g. calendar queues (Brown 1988; Claverol et al. 2002), however they are quite complex, i.e., cQ is a large constant. In simpler implementations of priority queues such as binary heaps, the dequeue and enqueue operations take O(log m) operations, where m is the number of events in the queue. Overall, it appears that the crucial component in general event-driven algorithms is the queue management.

2.4.4 What models can be simulated in an event-driven fashion?

Event-driven algorithms implicitly assume that we can calculate the state of a neuron at any given time, i.e., we have an explicit solution of the differential equations (but see below for approximate event-driven simulation). This would not be the case with e.g. HH models. Besides, when synaptic interactions are not instantaneous, we also need a function that maps the current state of the neuron to the timing of the next spike (possibly +∞ if there is none).

So far, algorithms have been developed for simple pulse-coupled IF models (Watts 1994; Claverol et al. 2002; Delorme and Thorpe 2003) and more complex ones such as some instances of the Spike Response Model (Makino 2003; Marian et al. 2002; Gerstner and Kistler 2002) (note that the SRM model can usually be restated in the differential formalism of Section 2.1). Recently, Djurfeldt et al. (2005) introduced several IF models with synaptic conductances which are suitable for event-driven simulation. Brette also recently developed algorithms to simulate exactly IF models with exponential synaptic currents (Brette 2007) and conductances (Brette 2006), and extended this work to the quadratic model (Ermentrout and Kopell 1986). However, there are still efforts to be made to design suitable algorithms for more complex models [for example the two-variable IF models of Izhikevich (2003) and Brette and Gerstner (2005)], or to develop more realistic models that are suitable for event-driven simulation.

2.4.5 Noise in event-driven algorithms

As for synchronous algorithms, there are two ways to introduce noise in a simulation: (1) adding random external spikes; (2) simulating a stochastic process.

The former case is by far easier in asynchronous algorithms. It simply amounts to adding a queue with external events, which is usually easy to implement. For example, if external spikes are generated according to a Poisson process with rate F, the timing of the next event is a random variable with an exponential distribution with mean 1/F. If n neurons receive external spike trains given by independent Poisson processes with rate F, then the time of the next event is exponentially distributed with mean 1/(nF) and the label of the neuron receiving this event is picked at random in {1,2,…,n}. Inhomogeneous Poisson processes can be simulated exactly in a similar way (Daley and Vere-Jones 1988). If r(t) is the instantaneous rate of the Poisson process and is bounded by M (r(t) ≤ M), then one way to generate a spike train according to this Poisson process in the interval [0, T] is as follows: generate a spike train in [0, T] according to a homogeneous Poisson process with rate M (which yields T * M spikes on average); for each spike at time ti, draw a random number xi from a uniform distribution in [0, M]; select all spikes such that xi ≤ r(ti).
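Both procedures can be sketched with the standard `random` module. The candidate train is drawn from a homogeneous Poisson process with rate M, the bound on r(t), and then thinned. Function and parameter names are illustrative, and the sinusoidal rate in the usage lines is an arbitrary example.

```python
import math
import random

def homogeneous_poisson(rate, t_end, rng):
    """Spike times in [0, t_end]: successive intervals are exponential with mean 1/rate."""
    times = []
    t = rng.expovariate(rate)
    while t <= t_end:
        times.append(t)
        t += rng.expovariate(rate)
    return times

def inhomogeneous_poisson(rate_fn, rate_max, t_end, rng):
    """Thinning: keep a candidate spike at time t with probability rate_fn(t) / rate_max."""
    candidates = homogeneous_poisson(rate_max, t_end, rng)
    return [t for t in candidates if rng.uniform(0.0, rate_max) <= rate_fn(t)]

# Example: rate oscillating between 0 and 100 Hz, bounded by M = 100 Hz.
rng = random.Random(0)
train = inhomogeneous_poisson(lambda t: 50.0 * (1.0 + math.sin(2.0 * math.pi * t)),
                              100.0, 100.0, rng)
```

In an event-driven simulator only the next external event needs to be generated at any time, so the same interval draw can be made lazily each time an external event is extracted from its queue.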

Simulating directly a stochastic process in asynchronous algorithms is much harder because even for the simplest stochastic neuron models, there is no closed analytical formula for the distribution of the time to the next spike (see e.g. Tuckwell 1988). It is however possible to use precalculated tables when the dynamical systems are low dimensional (Reutimann et al. 2003) (i.e., not more than 2 dimensions). Note that simulating noise in this way introduces provisional events in the same way as for non-instantaneous synaptic interactions.

2.4.6 Approximate event-driven algorithms

We have described asynchronous algorithms for simulating neural networks exactly. For complex neuron models of the HH type, Lytton and Hines (2005) have developed an asynchronous simulation algorithm which consists in using for each neuron an independent time step whose width is reduced when the membrane potential approaches the action potential threshold.

3 Precision of different simulation strategies

As shown in this paper, a steadily growing number of neural simulation environments endows computational neuroscience with tools which, together with the steady improvement of computational hardware, allow the simulation of neural systems with increasing complexity, ranging from detailed biophysical models of single cells up to large-scale neural networks. Each of these simulation tools pursues the quest for a compromise between efficiency in speed and memory consumption, flexibility in the type of questions addressable, and precision or exactness in the numerical treatment of the latter. In all cases, this quest leads to the implementation of a specific strategy for numerical simulations which is found to be optimal given the set of constraints set by the particular simulation tool. However, as shown recently (Hansel et al. 1998; Lee and Farhat 2001; Morrison et al. 2007a), quantitative results and their qualitative interpretation strongly depend on the simulation strategy utilized, and may vary across available simulation tools or for different settings within the same simulator. The specificity of neuronal simulations is that spikes induce either a discontinuity in the dynamics (IF models) or have very fast dynamics (HH type models). When using approximation methods, this problem can be tackled by spike timing interpolation in the former case (Hansel et al. 1998; Shelley and Tao 2001) or integration with adaptive time step in the latter case (Lytton and Hines 2005). Specifically in networks of IF neurons, which to date remain almost exclusively the basis for accessing dynamics of large-scale neural populations (but see Section 4.7), crucial differences in the appearance of synchronous activity patterns were observed, depending on the temporal resolution of the neural simulator or the integration method used.

In this section we address this question using one of the simplest analytically solvable IF models, namely the classic leaky IF (LIF) neuron, described by the state equation

$$\tau_m \frac{dm(t)}{dt} + m(t) = 0 \qquad (1)$$

where τm = 20 ms denotes the membrane time constant and 0 ≤ m(t) ≤ 1. Upon arrival of a synaptic event at time t0, m(t) is updated by a constant Δm = 0.1 (Δm = 0.0085 in network simulations) after which it decays according to

$$m(t) = m(t_0)\, \exp\left(-\frac{t - t_0}{\tau_m}\right) \qquad (2)$$

If m exceeds a threshold mthres = 1, the neuron fires and is afterwards reset to a resting state mrest = 0 in which it stays for an absolute refractory period tref = 1 ms. The neurons were subject to non-plastic or plastic synaptic interactions. In the latter case, spike-timing-dependent synaptic plasticity (STDP) was used according to a model by Song and Abbott (2001). In this case, upon arrival of a synaptic input at time tpre, synaptic weights are changed according to

$$g \leftarrow g + F(\Delta t)\, g_{\max} \qquad (3)$$

where

$$F(\Delta t) = \pm A_{\pm} \exp\{\pm \Delta t / \tau_{\pm}\} \qquad (4)$$

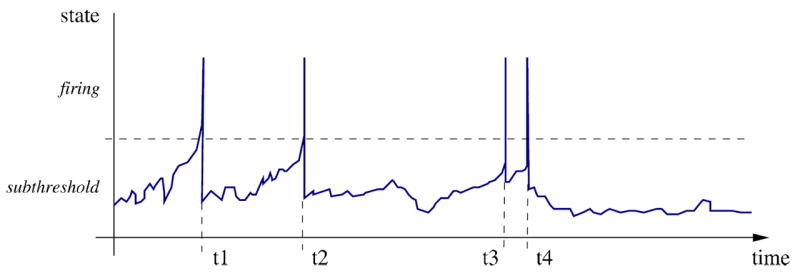

for Δt = tpre − tpost < 0 and Δt ≥ 0, respectively. Here, tpost denotes the time of the nearest postsynaptic spike, A± quantify the maximal change of synaptic efficacy, and τ± determine the range of pre- to postsynaptic spike intervals in which synaptic weight changes occur. Comparing simulation strategies at both ends of a wide spectrum, namely a clock-driven algorithm (see Section 2.3) and an event-driven algorithm (see Section 2.4), we evaluate to what extent the temporal precision of spiking events affects the dynamics of single neurons as well as small networks. These results support the argument that the speed of neuronal simulations should not be the sole criterion for evaluating simulation tools, but must be complemented by an evaluation of their exactness.
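The STDP rule of Eqs. (3) and (4) can be written down in a few lines. The sketch below assumes the usual Song and Abbott form, in which the weight changes by F(Δt)·gmax with F(Δt) = A+ exp(Δt/τ+) for Δt < 0 and F(Δt) = −A− exp(−Δt/τ−) for Δt ≥ 0. The parameter values are those quoted for the single-neuron test in Section 3.2; the function names and the clipping of weights to [0, gmax] are assumptions made for illustration.

```python
import math

A_PLUS = 0.005             # maximal facilitation
A_MINUS = 1.05 * A_PLUS    # A-/A+ = 1.05
TAU_PLUS = 20.0            # ms
TAU_MINUS = 20.0           # ms
G_MAX = 0.4                # maximal synaptic weight


def stdp_window(delta_t):
    """F(delta_t) for delta_t = t_pre - t_post (ms)."""
    if delta_t < 0:
        # Presynaptic event precedes the postsynaptic spike: facilitation.
        return A_PLUS * math.exp(delta_t / TAU_PLUS)
    # Presynaptic event coincides with or follows the spike: depression.
    return -A_MINUS * math.exp(-delta_t / TAU_MINUS)


def update_weight(g, t_pre, t_post):
    """Apply g <- g + F(delta_t) * g_max for one pre/post pairing."""
    g_new = g + stdp_window(t_pre - t_post) * G_MAX
    # Assumption: weights are kept within [0, g_max].
    return min(max(g_new, 0.0), G_MAX)
```

Because the sign of F flips at Δt = 0, an error in a spike time that is small compared with τ± can still turn facilitation into depression when it changes the order of the pre- and postsynaptic events.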

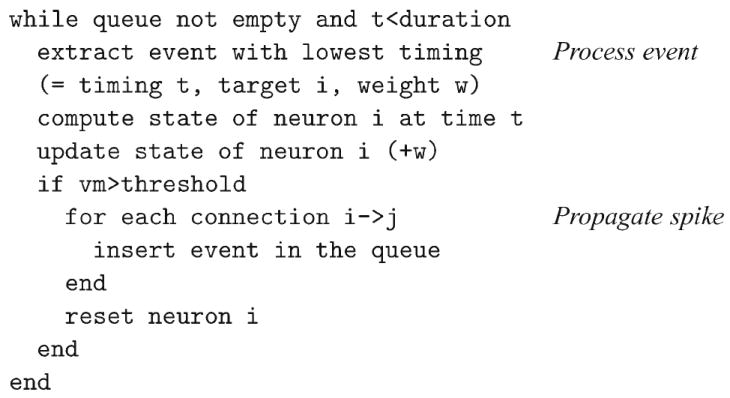

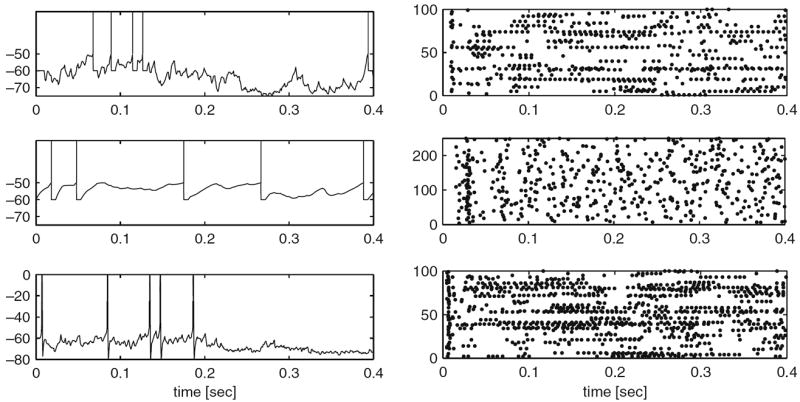

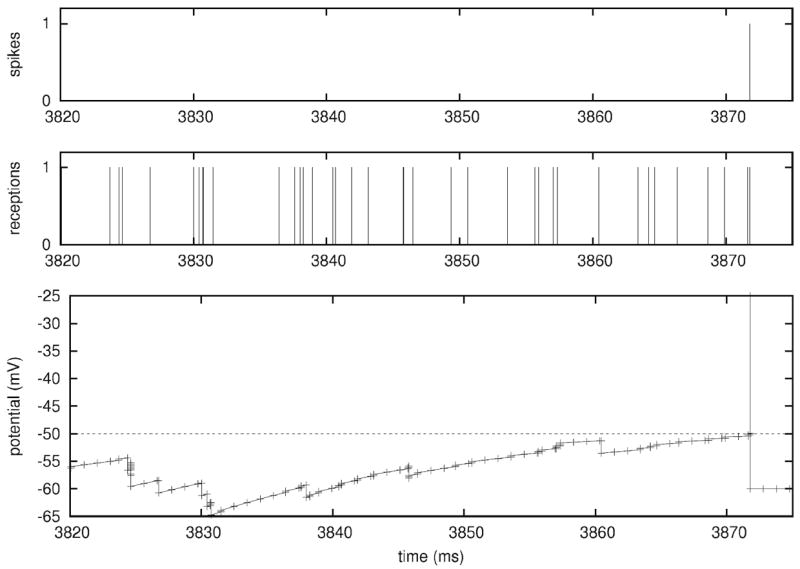

3.1 Neuronal systems without STDP

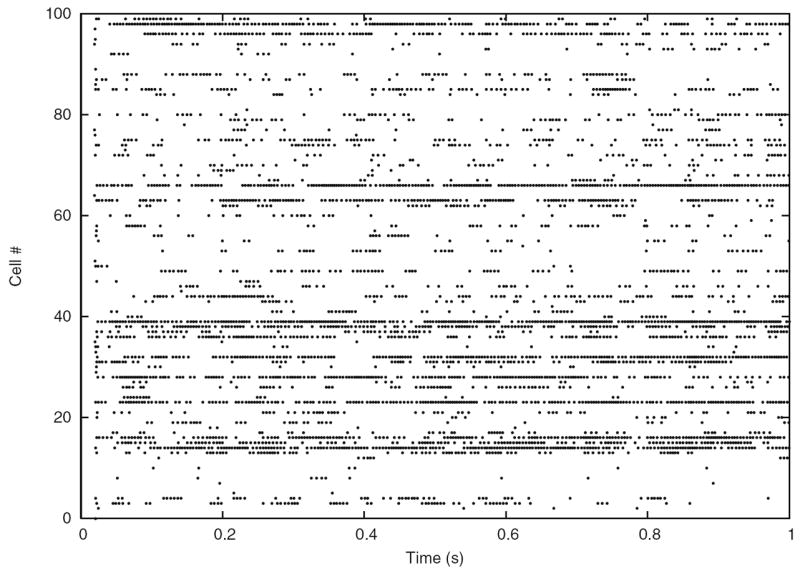

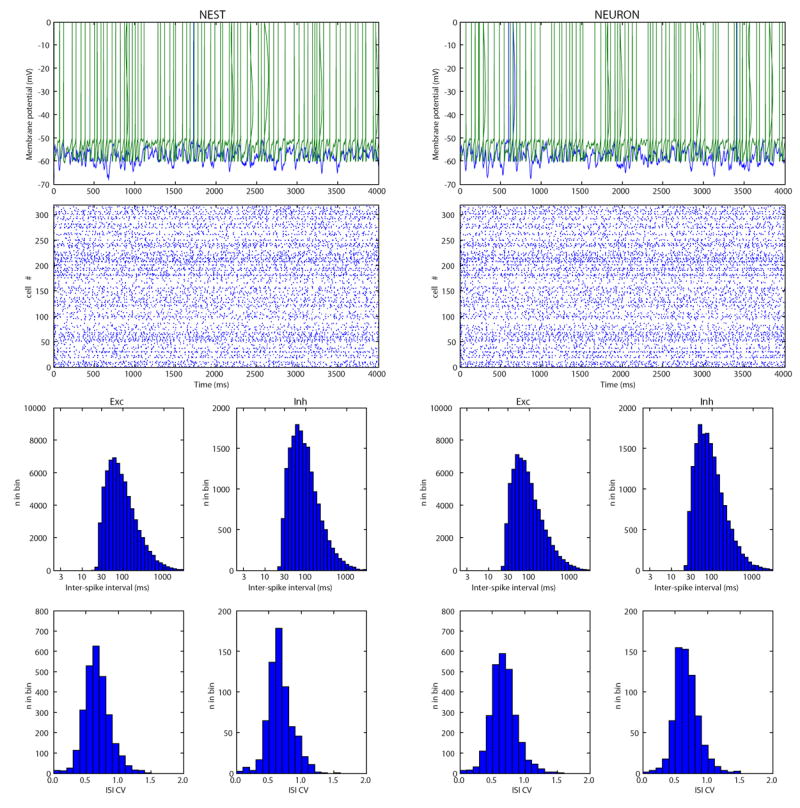

In the case of a single LIF neuron with non-plastic synapses subject to a frozen synaptic input pattern drawn from a Poisson distribution with rate νinp = 250 Hz, differences in the discharge behavior between clock-driven simulations at different resolutions (0.1 ms, 0.01 ms, 0.001 ms) and event-driven simulations occurred already after short periods of simulated neural activity (Fig. 4(a)). These deviations were caused by subtle differences in the subthreshold integration of synaptic input events due to temporal binning, and “decayed” with a constant which depended on the membrane time constant. However, for a strong synaptic drive, subthreshold deviations could accumulate and lead to marked delays in spike times, cancellation of spikes or occurrence of additional spikes. Although differences at the single cell level remained largely constrained and did not lead to changes in the statistical characterization of the discharge activity when long periods of neural activity were considered, even small differences in spike times of individual neurons can lead to crucial differences in the population activity, such as synchronization (see Hansel et al. 1998; Lee and Farhat 2001), when neural networks are concerned. We investigated this possibility using a small network of 15×15 LIF neurons with all-to-all excitatory connectivity with fixed weights and a distance-independent synaptic transmission delay (0.2 ms), driven by a fixed pattern of superthreshold random synaptic inputs to each neuron (average rate 250 Hz; weight Δm = 0.1). In such a small network, the activity remained primarily driven by the external inputs, i.e. the influence of intrinsic connectivity is small. However, small differences in spike times due to temporal binning could have severe effects on the occurrence of synchronous network events in which all (or most) cells discharge at the same time. Such events could be delayed, canceled or generated depending on the simulation strategy or temporal resolution utilized (Fig. 4(b)).

Fig. 4.

Modelling strategies and dynamics in neuronal systems without STDP. (a) Small differences in spike times can accumulate and lead to severe delays or even cancellation (see arrows) of spikes, depending on the simulation strategy utilized or the temporal resolution within clock-driven strategies used. (b) Raster-plots of spike events in a small neuronal network of LIF neurons simulated with event-driven and clock-driven approaches with different temporal resolutions. Observed differences in neural network dynamics include delays, cancellation or generation of synchronous network events [figure modified from Rudolph and Destexhe (2007)]
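The effect of temporal binning on a single LIF neuron can be explored with a short script. The sketch below is not the code used for Fig. 4. It assumes that inputs arriving during the refractory period are discarded, and it mimics a clock-driven scheme by shifting every input to the end of its time step before running the same exact update, which gives the same spike times for this model because m only increases at input events. All function names are hypothetical.

```python
import math
import random

TAU_M = 20.0     # membrane time constant (ms)
DELTA_M = 0.1    # state jump per synaptic event
M_THRES = 1.0    # firing threshold
M_REST = 0.0     # reset value
T_REF = 1.0      # absolute refractory period (ms)


def poisson_times(rate_hz, duration_ms, seed=0):
    """Frozen Poisson input: sorted event times (ms) from a fixed seed."""
    rng = random.Random(seed)
    t, times = 0.0, []
    while True:
        t += rng.expovariate(rate_hz / 1000.0)   # rate converted to events per ms
        if t >= duration_ms:
            return times
        times.append(t)


def run_lif(input_times):
    """Advance the LIF state exactly from one input time to the next."""
    m, t_last, ref_until, spikes = M_REST, 0.0, -math.inf, []
    for t in input_times:
        if t < ref_until:
            continue                      # assumption: input ignored while refractory
        m = m * math.exp(-(t - t_last) / TAU_M) + DELTA_M   # decay, then jump
        t_last = t
        if m > M_THRES:
            spikes.append(t)
            m, ref_until = M_REST, t + T_REF
    return spikes


def bin_to_grid(input_times, dt):
    """Clock-driven view: shift each event to the end of its time step."""
    return [math.ceil(t / dt) * dt for t in input_times]
```

Calling `run_lif(times)` and `run_lif(bin_to_grid(times, dt))` on the same frozen input from `poisson_times(250.0, ...)` for dt = 0.1, 0.01 and 0.001 ms gives spike trains that can be compared directly, in the spirit of Fig. 4(a).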

3.2 Neuronal systems with STDP

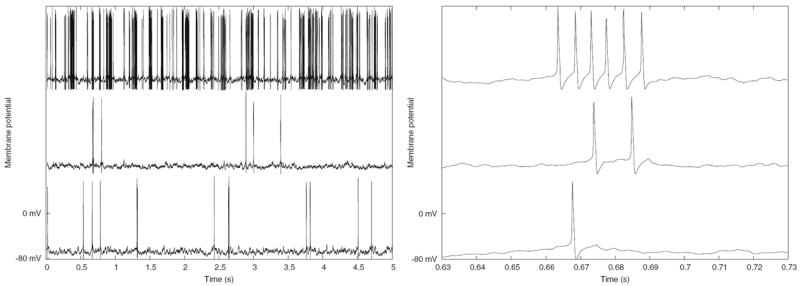

The differences described above in the behavior of neural systems simulated with different simulation strategies remain confined to the observed neuronal dynamics and are minor if statistical measures, such as average firing rates, are considered. More severe effects can be expected if biophysical mechanisms which depend on the exact times of spikes are incorporated into the neural model. One such mechanism is synaptic plasticity, in particular STDP. In this case, the self-organizing capability of the neural system will yield different paths along which the system develops, and may thus lead to neural behavior that differs not only quantitatively but also qualitatively across the tools used for numerical simulation.

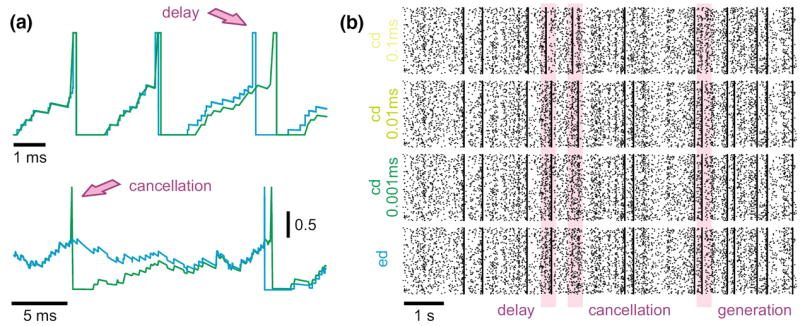

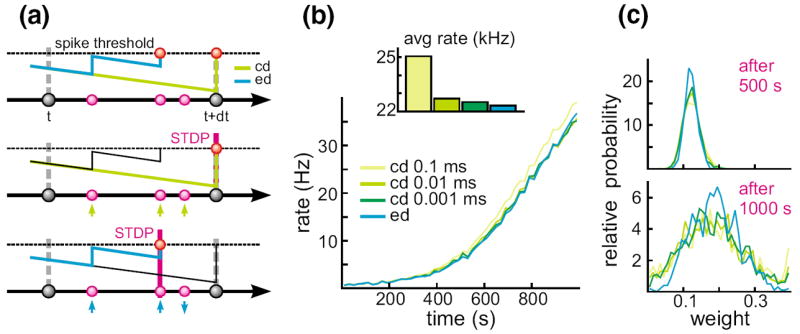

To explain why such small differences in the exact timing of events are crucial if models with STDP are considered, consider a situation in which multiple synaptic input events (three in this example) arrive between two state updates at t and t + dt in a clock-driven simulation. In this case, the times of these events are assigned to the end of the interval (Fig. 5(a)). If these inputs drive the cell over firing threshold, the synaptic weights of all three synaptic input channels will be facilitated by the same amount according to the STDP model used. In contrast, if exact times are considered, the same input pattern could cause a discharge after only two synaptic inputs. In this case the synaptic weights linked to these two inputs will be facilitated, whereas the weight of the input arriving after the discharge will be depressed.

Fig. 5.

Dynamics in neuronal systems with STDP. (a) Impact of the simulation strategy (clock-driven: cd; event-driven: ed) on the facilitation and depression of synapses. (b) Time course and average rate (inset) in a LIF model with multiple synaptic input channels for different simulation strategies and temporal resolution. (c) Synaptic weight distribution after 500 and 1,000 s [figure modified from Rudolph and Destexhe (2007)]
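The three-input scenario of Fig. 5(a) can be made concrete with a small sketch. It assumes a hypothetical initial state m0 = 0.85 at t = 0 and three inputs inside one time step of 0.1 ms. Following the description in the text, inputs that are integrated before or at the threshold crossing are labelled as facilitated and inputs arriving after it as depressed. The function names are illustrative only.

```python
import math

TAU_M = 20.0     # membrane time constant (ms)
DELTA_M = 0.1    # state jump per synaptic event
M_THRES = 1.0    # firing threshold


def event_driven(m0, inputs):
    """Apply each input at its exact time; return (t_post, label per input)."""
    m, t_last, t_post, labels = m0, 0.0, None, []
    for t in inputs:
        if t_post is not None:
            labels.append("depressed")        # arrives after the spike
            continue
        m = m * math.exp(-(t - t_last) / TAU_M) + DELTA_M
        t_last = t
        labels.append("facilitated")          # arrives before, or triggers, the spike
        if m > M_THRES:
            t_post = t
    if t_post is None:
        labels = ["unchanged"] * len(inputs)  # no spike, so no pairing in this step
    return t_post, labels


def clock_driven(m0, inputs, dt):
    """Apply all inputs of the step (0, dt] together at the end of the step."""
    m = m0 * math.exp(-dt / TAU_M) + DELTA_M * len(inputs)
    if m > M_THRES:
        return dt, ["facilitated"] * len(inputs)
    return None, ["unchanged"] * len(inputs)
```

With `m0 = 0.85` and inputs at 0.02, 0.05 and 0.08 ms, the event-driven version fires at the second input, so the third input is depressed, whereas the clock-driven version fires at 0.1 ms and facilitates all three.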

Although the chance for the occurrence of situations such as those described above may appear small, a single instance is enough to push the neural system onto a different path in its self-organization. This may lead to systems whose qualitative behavior, after some time, differs markedly from that of a system with the same initial state but simulated with another, temporally more or less precise simulation strategy. Such a scenario was investigated by using a single LIF neuron (τm = 4.424 ms) with 1,000 plastic synapses (A+ = 0.005, A−/A+ = 1.05, τ+ = 20 ms, τ− = 20 ms, gmax = 0.4) driven by the same pattern of Poisson-distributed random inputs (average rate 5 Hz, Δm = 0.1). Simulating only 1,000 s of neural activity led to marked differences in the temporal development of the average rate between clock-driven simulations with a temporal resolution of 0.1 ms and event-driven simulations (Fig. 5(b)). Considering the average firing rate over the whole simulated window, clock-driven simulations led to a value about 10% higher than the event-driven approach, and approached the value observed in event-driven simulations only when the temporal resolution was increased by two orders of magnitude. Moreover, different simulation strategies and temporal resolutions also led to a significant difference in the synaptic weight distribution at different times (Fig. 5(c)).

Both findings show that small differences in the precision of synaptic events can have a severe impact even on statistically very robust measures, such as average rate or weight distribution. Considering the temporal development of individual synaptic weights, both depression and facilitation were observed depending on the temporal precision of the numerical simulation. Indeed, the latter could have a severe impact on the qualitative interpretation of the temporal dynamics of structured networks, as this result suggests that synaptic connections in otherwise identical models can be strengthened or weakened due to the influence of the utilized simulation strategy or simulation parameters.

In conclusion, the results presented in this section suggest that the strategy and temporal precision used for neural simulations can severely alter simulated neural dynamics. Although dependent on the neural system modeled, observed differences may turn out to be crucial for the qualitative interpretation of the results of numerical simulations, in particular in simulations involving biophysical processes depending on the exact order or time of spike events (e.g. as in STDP). Thus, the search for an optimal neural simulation tool or strategy for the numerical solution of a given problem should be guided not only by its absolute speed and memory consumption, but also by its numerical exactness.

4 Overview of simulation environments

4.1 NEURON

4.1.1 NEURON’s domain of utility

NEURON is a simulation environment for creating and using empirically-based models of biological neurons and neural circuits. Initially it earned a reputation for being well-suited for COBA models of cells with complex branched anatomy, including extracellular potential near the membrane, and biophysical properties such as multiple channel types, inhomogeneous channel distribution, ionic accumulation and diffusion, and second messengers. In the early 1990s, NEURON was already being used in some laboratories for network models with many thousands of cells, and over the past decade it has undergone many enhancements that make the construction and simulation of large-scale network models easier and more efficient.

To date, more than 600 papers and books have described NEURON models that range from a membrane patch to large scale networks with tens of thousands of COBA or artificial spiking cells.1 In 2005, over 50 papers were published on topics such as mechanisms underlying synaptic transmission and plasticity (Banitt et al. 2005), modulation of synaptic integration by subthreshold active currents (Prescott and De Koninck 2005), dendritic excitability (Day et al. 2005), the role of gap junctions in networks (Migliore et al. 2005), effects of synaptic plasticity on the development and operation of biological networks (Saghatelyan et al. 2005), neuronal gain (Azouz 2005), the consequences of synaptic and channel noise for information processing in neurons and networks (Badoual et al. 2005), cellular and network mechanisms of temporal coding and recognition (Kanold and Manis 2005), network states and oscillations (Wolf et al. 2005), effects of aging on neuronal function (Markaki et al. 2005), cortical recording (Moffitt and McIntyre 2005), deep brain stimulation (Grill et al. 2005), and epilepsy resulting from channel mutations (Vitko et al. 2005) and brain trauma (Houweling et al. 2005).

4.1.2 How NEURON differs from other neurosimulators

The chief rationale for domain-specific simulators over general purpose tools lies in the promise of improved conceptual control, and the possibility of exploiting the structure of model equations for the sake of computational robustness, accuracy, and efficiency. Some of the key differences between NEURON and other neurosimulators are embodied in the way that they approach these goals.

4.1.2.1 Conceptual control

The cycle of hypothesis formulation, testing, and revision, which lies at the core of all scientific research, presupposes that one can infer the consequences of a hypothesis. The principal motivation for computational modeling is its utility for dealing with hypotheses whose consequences cannot be determined by unaided intuition or analytical approaches. The value of any model as a means for evaluating a particular hypothesis depends critically on the existence of a close match between model and hypothesis. Without such a match, simulation results cannot be a fair test of the hypothesis. From the user’s viewpoint, the first barrier to computational modeling is the difficulty of achieving conceptual control, i.e. making sure that a computational model faithfully reflects one’s hypothesis.

NEURON has several features that facilitate conceptual control, and it is acquiring more of them as it evolves to meet the changing needs of computational neuroscientists. Many of these features fall into the general category of “native syntax” specification of model properties: that is, key attributes of biological neurons and networks have direct counterparts in NEURON. For instance, NEURON users specify the gating properties of voltage- and ligand-gated ion channels with kinetic schemes or families of HH style differential equations. Another example is that models may include electronic circuits constructed with the LinearCircuitBuilder, a GUI tool whose palette includes resistors, capacitors, voltage and current sources, and operational amplifiers. NEURON’s most striking application of native syntax may lie in how it handles the cable properties of neurons, which is very different from any other neurosimulator. NEURON users never have to deal directly with compartments. Instead, cells are represented by unbranched neurites, called sections, which can be assembled into branched architectures (the topology of a model cell). Each section has its own anatomical and biophysical properties, plus a discretization parameter that specifies the local resolution of the spatial grid. The properties of a section can vary continuously along its length, and spatially inhomogeneous variables are accessed in terms of normalized distance along each section (Hines and Carnevale 1997) (Chapter 5 in Carnevale and Hines 2006). Once the user has specified cell topology, and the geometry, biophysical properties, and discretization parameter for each section, NEURON automatically sets up the internal data structures that correspond to a family of ODEs for the model’s discretized cable equation.

4.1.2.2 Computational robustness, accuracy, and efficiency

NEURON’s spatial discretization of COBA model neurons uses a central difference approximation that is second order correct in space. The discretization parameter for each section can be specified by the user, or assigned automatically according to the d_lambda rule (see Hines and Carnevale 1997) (Chapters 4 and 5 in Carnevale and Hines 2006).
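As an illustration of the d_lambda rule, the sketch below reproduces its commonly documented form for a uniform cylinder: the number of segments is chosen so that each segment is no longer than a fraction d_lambda of the AC length constant at 100 Hz, and is forced to be odd. The Python function names are hypothetical and the code runs without NEURON; it is a stand-alone reading of the rule, not NEURON's own implementation.

```python
import math


def lambda_f(freq_hz, diam_um, ra_ohm_cm, cm_uf_cm2):
    """AC length constant (um) of a uniform cylinder at frequency freq_hz."""
    return 1e5 * math.sqrt(diam_um / (4.0 * math.pi * freq_hz * ra_ohm_cm * cm_uf_cm2))


def nseg_d_lambda(length_um, diam_um, ra_ohm_cm, cm_uf_cm2,
                  d_lambda=0.1, freq_hz=100.0):
    """Odd number of segments so that each spans at most d_lambda length constants."""
    lam = lambda_f(freq_hz, diam_um, ra_ohm_cm, cm_uf_cm2)
    return int((length_um / (d_lambda * lam) + 0.9) / 2) * 2 + 1
```

For a section 500 µm long and 1 µm in diameter with Ra = 100 Ω·cm and cm = 1 µF/cm², the length constant at 100 Hz is about 282 µm and the rule yields 19 segments; a 10 µm stub with the same properties gets a single segment.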

For efficiency, NEURON’s computational engine uses algorithms that are tailored to the model system equations (Hines 1984, 1989; Hines and Carnevale 1997). To advance simulations in time, users have a choice of built-in clock driven (fixed step backward Euler and Crank-Nicolson) and event driven methods (global variable step and local variable step with second order threshold detection); the latter are based on CVODES and IDA from SUNDIALS (Hindmarsh et al. 2005). Networks of artificial spiking cells are solved analytically by a discrete event method that is several orders of magnitude faster than continuous system simulation (Hines and Carnevale 1997). NEURON fully supports hybrid simulations, and models can contain any combination of COBA neurons and analytically computable artificial spiking cells. Simulations of networks that contain COBA neurons are second order correct if adaptive integration is used (Lytton and Hines 2005).
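The difference between the two fixed step methods named above can be seen on a scalar test problem. The sketch below integrates the passive decay dv/dt = −v/τ, which is an assumption chosen for simplicity and not NEURON's cable equation solver, and measures how the error at a fixed end time shrinks when the step is halved.

```python
import math


def integrate(method, tau, dt, t_end, v0=1.0):
    """Integrate dv/dt = -v/tau with a fixed step and return v(t_end)."""
    a = dt / tau
    if method == "backward_euler":
        factor = 1.0 / (1.0 + a)                    # slope evaluated at the new time
    elif method == "crank_nicolson":
        factor = (1.0 - a / 2.0) / (1.0 + a / 2.0)  # average of old and new slopes
    else:
        raise ValueError(f"unknown method: {method}")
    v = v0
    for _ in range(round(t_end / dt)):
        v *= factor
    return v


def error_ratio(method, tau=20.0, dt=0.1, t_end=20.0):
    """Error at t_end with step dt divided by the error with step dt / 2."""
    exact = math.exp(-t_end / tau)
    err_coarse = abs(integrate(method, tau, dt, t_end) - exact)
    err_fine = abs(integrate(method, tau, dt / 2.0, t_end) - exact)
    return err_coarse / err_fine
```

Halving the step roughly halves the backward Euler error (first order) and roughly quarters the Crank-Nicolson error (second order), which is what `error_ratio` reports for the two methods.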

Synapse and artificial cell models accept discrete events with input stream specific state information. It is often extremely useful for artificial cell models to send events to themselves in order to implement refractory periods and intrinsic firing properties; the delivery time of these “self events” can also be adjusted in response to intervening events. Thus instantaneous and non-instantaneous interactions of Section 2.4 are supported.

Built-in synapses exploit the methods described in Section 2.2. Arbitrary delay between generation of an event at its source, and delivery to the target (including 0 delay events), is supported by a splay-tree queue (Sleator and Tarjan 1983) which can be replaced at configuration time by a calendar queue. If the minimum delay between cells is greater than 0, self events do not use the queue and parallel network simulations are supported. For the fixed step method, when queue handling is the rate limiting step, a bin queue can be selected. For the fixed step method with parallel simulations, when spike exchange is the rate limiting step, six-fold spike compression can be selected.
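The interplay of delayed events and self events can be sketched with a small discrete event loop. The example below uses Python's `heapq` binary heap as a stand-in for the splay-tree or calendar queue mentioned above, and a hypothetical artificial cell that schedules a self event to end its refractory period. It illustrates the general mechanism only; none of the names correspond to NEURON's API.

```python
import heapq
import itertools
import math

_counter = itertools.count()   # tie-breaker so equal-time events keep insertion order


def send(queue, t, target, kind):
    """Schedule an event of the given kind for delivery to target at time t."""
    heapq.heappush(queue, (t, next(_counter), target, kind))


class Cell:
    """Artificial IF cell whose state is updated only when an event is delivered."""

    def __init__(self, name, tau=20.0, weight=0.6, t_ref=1.0):
        self.name, self.tau, self.weight, self.t_ref = name, tau, weight, t_ref
        self.m, self.t_last, self.refractory = 0.0, 0.0, False
        self.targets = []          # list of (cell, delay) pairs
        self.spikes = []

    def deliver(self, queue, t, kind):
        if kind == "end_refractory":          # self event
            self.refractory = False
            self.t_last = t
            return
        if self.refractory:
            return                            # input ignored while refractory
        self.m = self.m * math.exp(-(t - self.t_last) / self.tau) + self.weight
        self.t_last = t
        if self.m > 1.0:
            self.spikes.append(t)
            self.m, self.refractory = 0.0, True
            send(queue, t + self.t_ref, self, "end_refractory")
            for cell, delay in self.targets:
                send(queue, t + delay, cell, "spike")


def run(queue, t_stop):
    """Deliver events in time order until the queue is empty or t_stop is reached."""
    while queue and queue[0][0] <= t_stop:
        t, _, cell, kind = heapq.heappop(queue)
        cell.deliver(queue, t, kind)
```

A two-cell chain is built by appending `(b, 0.5)` to `a.targets`, seeding the queue with `send(queue, 1.0, a, "spike")`, and calling `run(queue, t_stop)`; no state is touched between events.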

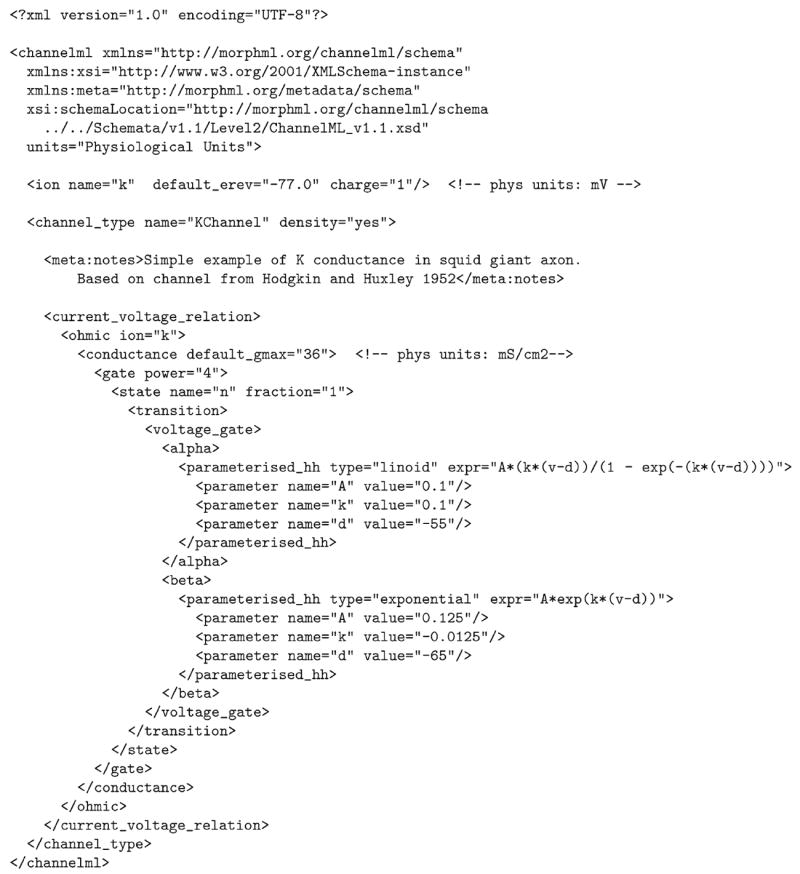

4.1.3 Creating and using models with NEURON

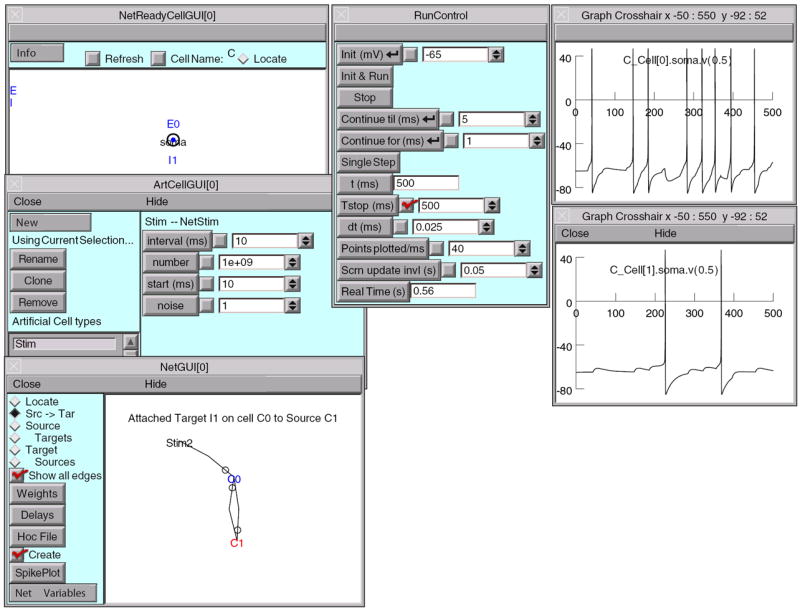

Models can be created by writing programs in an interpreted language based on hoc (Kernighan and Pike 1984), which has been enhanced to simplify the task of representing the properties of biological neurons and networks. Users can extend NEURON by writing new function and biophysical mechanism specifications in the NMODL language, which is then compiled and dynamically linked (Hines and Carnevale 1997) (Chapter 9 in Carnevale and Hines 2006). There is also a powerful GUI for conveniently building and using models; this can be combined with hoc programming to exploit the strengths of both (Fig. 6).

Fig. 6.

NEURON graphical user interface. In developing large scale networks, it is helpful to start by debugging small prototype nets. NEURON’s GUI, especially its Network Builder (shown here), can simplify this task. Also, at the click of a button the Network Builder generates hoc code that can be reused as the building blocks for large scale nets [see Chapter 11, “Modeling networks” in Carnevale and Hines (2006)]

The past decade has seen many enhancements to NEURON’s capabilities for network modeling. First and most important was the addition of an event delivery system that substantially reduces the computational burden of simulating spike-triggered synaptic transmission and enables the creation of analytic IF cell models which can be used in any combination with COBA cells. Just in the past year the event delivery system was extended so that NEURON can now simulate models of networks and cells that are distributed over parallel hardware (see Section 4.1.4).

4.1.3.1 The GUI

The GUI contains a large number of tools that can be used to construct models, exercise simulations, and analyze results, so that no knowledge of programming is necessary for the productive use of NEURON. In addition, many GUI tools provide functionality that would be quite difficult for users to replicate by writing their own code. Some examples are:

- Model specification tools
  - Channel builder: specifies voltage- and ligand-gated ion channels in terms of ODEs (HH-style, including Borg–Graham formulation) and/or kinetic schemes. Channel states and total conductance can be simulated as deterministic (continuous in time), or stochastic (countably many channels with independent state transitions, producing abrupt conductance changes).
  - Cell builder: manages anatomical and biophysical properties of model cells.
  - Network builder: prototypes small networks that can be mined for reusable code to develop large-scale networks (Chapter 11 in Carnevale and Hines 2006).
  - Linear circuit builder: specifies models involving gap junctions, ephaptic interactions, dual-electrode voltage clamps, dynamic clamps, and other combinations of neurons and electrical circuit elements.
- Model analysis tools
  - Import3D: converts detailed morphometric data (Eutectic, Neurolucida, and SWC formats) into model cells. It automatically fixes many common errors, and helps users identify complex problems that require judgment.
  - Model view: automatically discovers and presents a summary of model properties in a browsable textual and graphical form. This aids code development and maintenance, and is increasingly important as code sharing grows.
  - Impedance: computes and displays voltage transfer ratios, input and transfer impedance, and the electrotonic transformation.
- Simulation control tools
  - Variable step control: automatically adjusts the state variable error tolerances that regulate adaptive integration.
  - Multiple run fitter: optimizes function and model parameters.

4.1.4 NEURON in a parallel environment

NEURON supports three kinds of parallel processing.

- Multiple simulations distributed over multiple processors, each processor executing its own simulation. Communication between master processor and workers uses a bulletin-board method similar to Linda (Carriero and Gelernter 1989).
- Distributed network models with gap junctions.
- Distributed models of individual cells (each processor handles part of the cell). At present, setting up distributed models of individual cells requires considerable effort; in the future it will be made much more convenient.

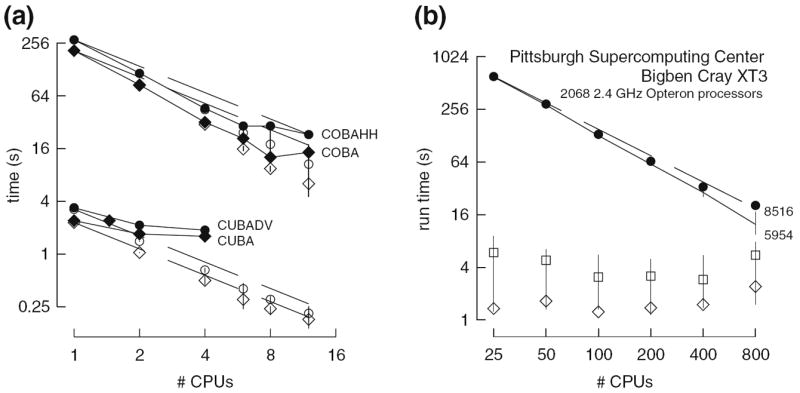

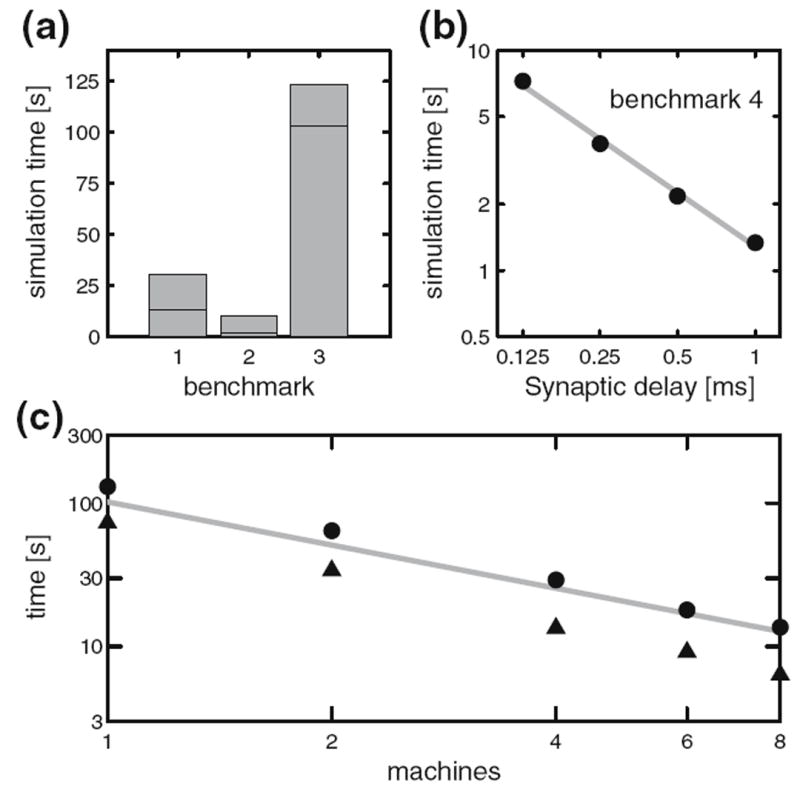

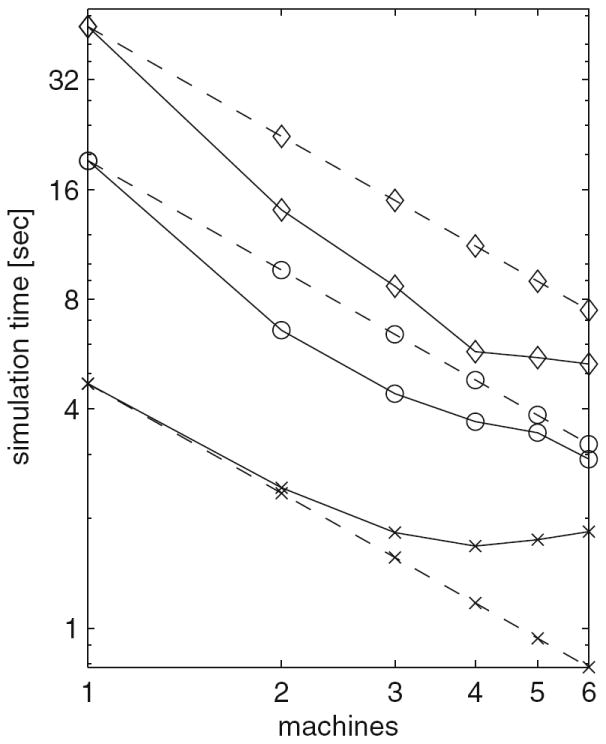

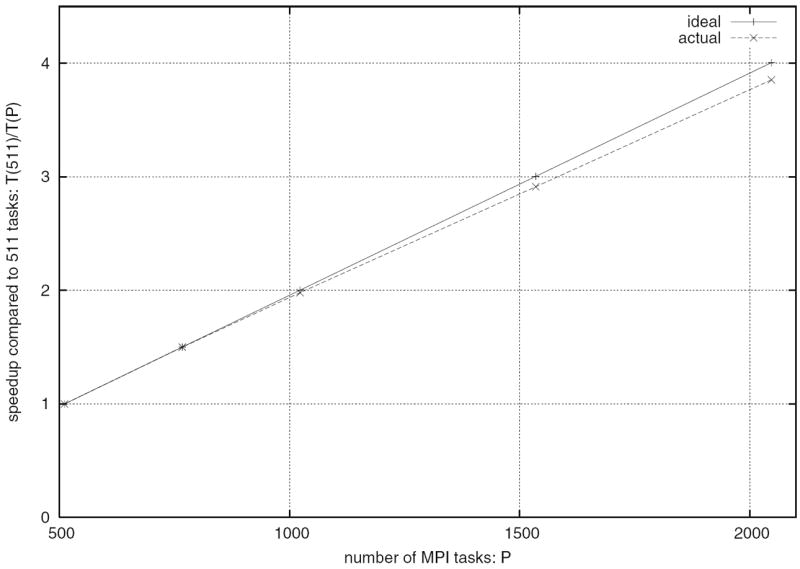

The four benchmark simulations of spiking neural networks (see Appendix B) were implemented under NEURON. Figure 7(a) demonstrates the speedup that NEURON can achieve with distributed network models of the four types [COBA, current-based (CUBA), HH, event-based; see Appendix B] on a Beowulf cluster (dashed lines are “ideal”, i.e. run time inversely proportional to number of CPUs, and solid symbols are actual run times). Figure 7(b) shows that performance improvement scales with the number of processors and the size and complexity of the network; for this figure we ran a series of tests using a NEURON implementation of the single column thalamocortical network model described by Traub et al. (2005) on the Cray XT3 at the Pittsburgh Supercomputing Center. Similar performance gain has been documented in extensive tests on parallel hardware with dozens to thousands of CPUs, using published models of networks of conductance-based neurons (Migliore et al. 2006). Speedup is linear with the number of CPUs, or even superlinear (due to larger effective high speed memory cache), until there are so many CPUs that each one is solving fewer than 100 equations.

Fig. 7.

Parallel simulations using NEURON. (a) Four benchmark network models were simulated on 1, 2, 4, 6, 8, and 12 CPUs of a Beowulf cluster (6 nodes, dual CPU, 64-bit 3.2 GHz Intel Xeon with 1024 KB cache). Dashed lines indicate “ideal speedup” (run time inversely proportional to number of CPUs). Solid symbols are run time, open symbols are average computation time per CPU, and vertical bars indicate variation of computation time. The CUBA and CUBADV models execute so quickly that little is gained by parallelizing them. The CUBA model is faster than the more efficient CUBADV because the latter generates twice as many spikes (spike counts are COBAHH 92,219, COBA 62,349, CUBADV 39,280, CUBA 15,371). (b) The Pittsburgh Supercomputing Center’s Cray XT3 (2.4 GHz Opteron processors) was used to simulate a NEURON implementation of the thalamocortical network model of Traub et al. (2005). This model has 3,560 cells in 14 types, 3,500 gap junctions, 5,596,810 equations, and 1,122,520 connections and synapses, and in 100 ms of model time it generates 73,465 spikes and 19,844,187 delivered spikes. The dashed line indicates “ideal speedup” and solid circles are the actual run times. The solid black line is the average computation time, and the intersecting vertical lines mark the range of computation times for each CPU. Neither the number of cell classes nor the number of cells in each class were multiples of the number of processors, so load balance was not perfect. When 800 CPUs were used, the number of equations per CPU ranged from 5,954 to 8,516. Open diamonds are average spike exchange times. Open squares mark average voltage exchange times for the gap junctions, which must be done at every time step; these lie on vertical bars that indicate the range of voltage exchange times. This range is large primarily because of synchronization time due to computation time variation across CPUs. The minimum value is the actual exchange time
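The load-balance figures quoted in the caption of Fig. 7(b) allow a quick back-of-the-envelope check. The sketch below defines speedup and parallel efficiency in the usual way and computes the load imbalance for the 800-CPU run. It assumes, for illustration only, that computation time per CPU is proportional to the number of equations it solves; the variable and function names are hypothetical.

```python
TOTAL_EQUATIONS = 5_596_810   # from the caption of Fig. 7(b)
N_CPUS = 800
MIN_EQ_PER_CPU = 5_954
MAX_EQ_PER_CPU = 8_516


def speedup(t_serial, t_parallel):
    """Ratio of single-CPU run time to parallel run time."""
    return t_serial / t_parallel


def efficiency(t_serial, t_parallel, n_cpus):
    """Fraction of the ideal speedup (run time inversely proportional to n_cpus)."""
    return speedup(t_serial, t_parallel) / n_cpus


# Average number of equations per CPU if the load were perfectly balanced.
mean_eq_per_cpu = TOTAL_EQUATIONS / N_CPUS

# The slowest CPU sets the pace, so the imbalance is the maximum over the mean.
imbalance = MAX_EQ_PER_CPU / mean_eq_per_cpu

# Assumption: cost proportional to equation count, ignoring communication.
efficiency_bound = 1.0 / imbalance
```

Under that assumption the mean load is about 6,996 equations per CPU and the most heavily loaded CPU carries roughly 22% more than the mean, which caps the achievable efficiency at roughly 82% before any communication cost is counted.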

4.1.5 Future plans

NEURON undergoes a continuous cycle of improvement and revision, much of which is devoted to aspects of the program that are not immediately obvious to the user, e.g. improvement of computational efficiency. More noticeable are new GUI tools, such as the recently added Channel Builder. Many of these tools exemplify a trend toward “form-based” model specification, which is expected to continue. The use of form-based GUI tools increases the ability to exchange model specifications with other simulators through the medium of Extensible Markup Language (XML). With regard to network modeling, the emphasis will shift away from developing simulation infrastructure, which is reasonably complete, to the creation of new tools for network design and analysis.

4.1.6 Software development, support, and documentation

Michael Hines directs the NEURON project, and is responsible for almost all code development. The other members of the development team have varying degrees of responsibility for activities such as documentation, courses, and user support. NEURON has benefited from significant contributions of time and effort by members of the community of NEURON users who have worked on specific algorithms, written or tested new code, etc. Since 2003, user contributions have been facilitated by adoption of an “open source development model” so that source code, including the latest research threads, can be accessed from an on-line repository.2

Support is available by email, telephone, and consultation. Users can also post questions and share information with other members of the NEURON community via a mailing list and The NEURON Forum.3 Currently the mailing list has more than 700 subscribers with “live” email addresses; the Forum, which was launched in May, 2005, has already grown to 300 registered users and 1700 posted messages.

Tutorials and reference material are available.4 The NEURON Book (Carnevale and Hines 2006) is the authoritative book on NEURON. Four books by other authors have made extensive use of NEURON (Destexhe and Sejnowski 2001; Johnston and Wu 1995; Lytton 2002; Moore and Stuart 2000), and several of them have posted their code online or provide it on CD with the book.

Source code for published NEURON models is available at many WWW sites. The largest code archive is ModelDB,5 which currently contains 238 models, 152 of which were implemented with NEURON.

4.1.7 Software availability

NEURON runs under UNIX/Linux/OS X, MSWin 98 or later, and on parallel hardware including Beowulf clusters, the IBM Blue Gene and Cray XT3. NEURON source code and installers are provided free of charge,6 and the installers do not require “third party” software. The current standard distribution is version 5.9.39. The alpha version can be used as a simulator/controller in dynamic clamp experiments under real-time Linux7 with a National Instruments M series DAQ card.

4.2 GENESIS

4.2.1 GENESIS capabilities and design philosophy

GENESIS (the General Neural Simulation System) was given its name because it was designed, at the outset, to be an extensible general simulation system for the realistic modeling of neural and biological systems (Bower and Beeman 1998). Typical simulations that have been performed with GENESIS range from subcellular components and biochemical reactions (Bhalla 2004) to complex models of single neurons (De Schutter and Bower 1994), simulations of large networks (Nenadic et al. 2003), and systems-level models (Stricanne and Bower 1998). Here, “realistic models” are defined as those models that are based on the known anatomical and physiological organization of neurons, circuits and networks (Bower 1995). For example, realistic cell models typically include dendritic morphology and a large variety of ionic conductances, whereas realistic network models attempt to duplicate known axonal projection patterns.

Parallel GENESIS (PGENESIS) is an extension to GENESIS that runs on almost any parallel cluster, SMP, supercomputer, or network of workstations where MPI and/or PVM is supported, and on which serial GENESIS itself is runnable. It is customarily used for large network simulations involving tens of thousands of realistic cell models (for example, see Hereld et al. 2005).

GENESIS has a well-documented process for users themselves to extend its capabilities by adding new user-defined GENESIS object types (classes), or script language commands without the need to understand or modify the GENESIS simulator code. GENESIS comes already equipped with mechanisms to easily create large scale network models made from single neuron models that have been implemented with GENESIS.

While users have added, for example, the Izhikevich (2003) simplified spiking neuron model (now built in to GENESIS), and they could also add IF or other forms of abstract neuron models, these forms of neurons are not realistic enough for the interests of most GENESIS modelers. For this reason, GENESIS is not normally provided with IF model neurons, and no GENESIS implementations have been provided for the IF model benchmarks (see Appendix B). Typical GENESIS neurons are multicompartmental models with a variety of HH type voltage- and/or calcium-dependent conductances.

4.2.2 Modeling with GENESIS

GENESIS is an object-oriented simulation system, in which a simulation is constructed of basic building blocks (GENESIS elements). These elements communicate by passing messages to each other, and each contains the knowledge of its own variables (fields) and the methods (actions) used to perform its calculations or other duties during a simulation. GENESIS elements are created as instantiations of a particular precompiled object type that acts as a template. Model neurons are constructed from these basic components, such as neural compartments and variable conductance ion channels, linked with messages. Neurons may be linked together with synaptic connections to form neural circuits and networks. This object-oriented approach is central to the generality and flexibility of the system, as it allows modelers to easily exchange and reuse models or model components. Many GENESIS users base their simulation scripts on the examples that are provided with GENESIS or in the GENESIS Neural Modeling Tutorials package (Beeman 2005).
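The element and message pattern described above can be sketched in Python. The classes below are hypothetical stand-ins written for illustration: they are not the GENESIS script language or its API, the field and message names are chosen loosely by analogy, and units are arbitrary. Each element holds its own fields, reads values from the elements that send it messages, and updates itself through a single action.

```python
class Element:
    """Hypothetical element: named fields plus a list of incoming messages."""

    def __init__(self, name, **fields):
        self.name = name
        self.fields = dict(fields)
        self.incoming = []   # (source element, source field, message type)

    def add_msg(self, source, source_field, msg_type):
        """Register that `source` sends the value of `source_field` to this element."""
        self.incoming.append((source, source_field, msg_type))

    def gather(self):
        """Collect the current values of all incoming messages, grouped by type."""
        inputs = {}
        for source, field, msg_type in self.incoming:
            inputs.setdefault(msg_type, []).append(source.fields[field])
        return inputs

    def process(self, dt):
        """The element's action for one time step; defined by subclasses."""
        raise NotImplementedError


class Channel(Element):
    def process(self, dt):
        vm = self.gather()["VOLTAGE"][0]
        # Ohmic current through a fixed conductance Gk with reversal potential Ek.
        self.fields["Ik"] = self.fields["Gk"] * (self.fields["Ek"] - vm)


class Compartment(Element):
    def process(self, dt):
        channel_current = sum(self.gather().get("CHANNEL", []))
        leak = (self.fields["Em"] - self.fields["Vm"]) / self.fields["Rm"]
        # Forward Euler update, used here only to keep the sketch short.
        self.fields["Vm"] += dt * (leak + channel_current) / self.fields["Cm"]
```

A model is assembled by creating elements and linking them with `add_msg` in both directions (the compartment sends its voltage to the channel, the channel sends its current back), after which a scheduler calls `process` on every element at each step.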

GENESIS uses an interpreter and a high-level simulation language to construct neurons and their networks. This use of an interpreter with pre-compiled object types, rather than a separate step to compile scripts into binary machine code, gives the advantage of allowing the user to interact with and modify a simulation while it is running, with no sacrifice in simulation speed. Commands may be issued either interactively to a command prompt, by use of simulation scripts, or through the graphical interface. The 268 scripting language commands and the 125 object types provided with GENESIS are powerful enough that only a few lines of script are needed to specify a sophisticated simulation. For example, the GENESIS “cell reader” allows one to build complex model neurons by reading their specifications from a data file.

GENESIS provides a variety of mechanisms to model calcium diffusion and calcium-dependent conductances, as well as synaptic plasticity. There are also a number of “device objects” that may be interfaced to a simulation to provide various types of input to the simulation (pulse and spike generators, voltage clamp circuitry, etc.) or measurements (peristimulus and interspike interval histograms, spike frequency measurements, auto- and cross-correlation histograms, etc.). Object types are also provided for the modeling of biochemical pathways (Bhalla and Iyengar 1999). A list and description of the GENESIS object types, with links to full documentation, may be found in the “Objects” section of the hypertext GENESIS Reference Manual, downloadable or viewable from the GENESIS web site.

4.2.3 GENESIS graphical user interfaces

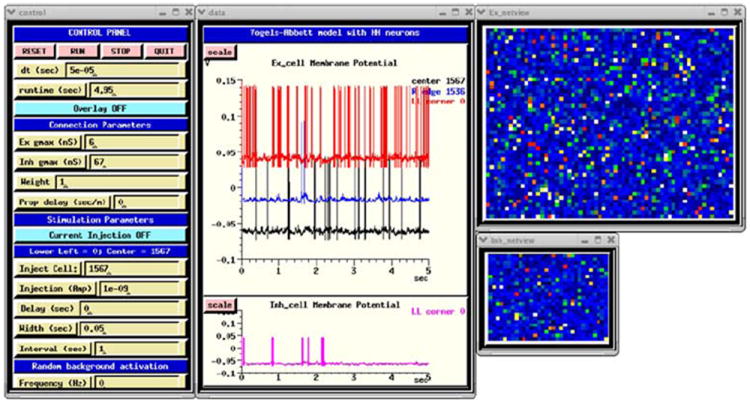

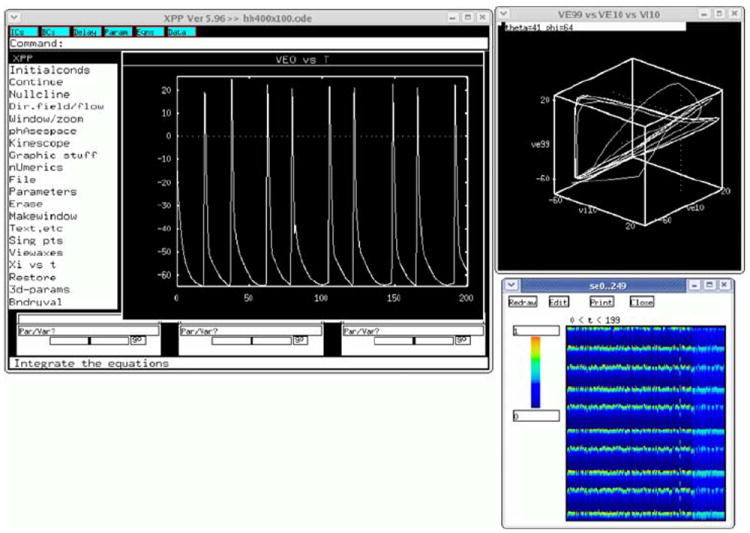

Very large scale simulations are often run with no GUI, with the simulation output to either text or binary format files for later analysis. However, GENESIS is usually compiled to include its graphical interface XODUS, which provides object types and script-level commands for building elaborate graphical interfaces, such as the one shown in Fig. 8 for the dual exponential variation of the HH benchmark simulation (Benchmark 3 in Appendix B). GENESIS also contains graphical environments for building and running simulations with no scripting, such as Neurokit (for single cells) and Kinetikit (for modeling biochemical reactions). These are themselves created as GENESIS scripts, and can be extended or modified. This allows for the creation of the many educational tutorials that are included with the GENESIS distribution (Bower and Beeman 1998).

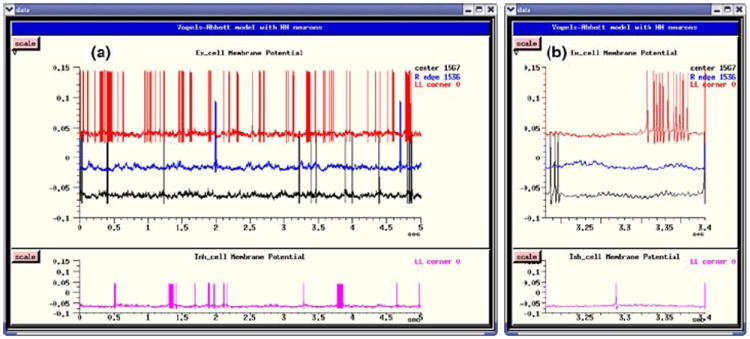

Fig. 8.

The GUI for the GENESIS implementation of the HH benchmark, using the dual-exponential form of synaptic conductance

4.2.4 Obtaining GENESIS and user support

GENESIS and its graphical front-end XODUS are written in C and are known to run under most Linux or UNIX-based systems with the X Window System, as well as Mac OS/X and MS Windows with the Cygwin environment. The current release of GENESIS and PGENESIS (ver. 2.3, March 17, 2006) is available from the GENESIS web site8 under the GNU General Public License. The GENESIS source distribution contains full source code and documentation, as well as a large number of tutorial and example simulations. Documentation for these tutorials is included along with online GENESIS help files and the hypertext GENESIS Reference Manual. In addition to the source distribution, precompiled binary versions are available for Linux, Mac OS/X, and Windows with Cygwin. The GENESIS Neural Modeling Tutorials (Beeman 2005) are a set of HTML tutorials intended to teach the process of constructing biologically realistic neural models with the GENESIS simulator, through the analysis and modification of provided example simulation scripts. The latest version of this package is offered as a separate download from the GENESIS web site.

Support for GENESIS is provided through email to genesis@genesis-sim.org, and through the GENESIS Users Group, BABEL. Members of BABEL receive announcements and exchange information through a mailing list, and are entitled to access the BABEL web page. This page serves as a repository for the latest contributions by GENESIS users and developers, and contains hypertext archives of postings from the mailing list.

Rallpacks are a set of benchmarks for evaluating the speed and accuracy of neuronal simulators for the construction of single cell models (Bhalla et al. 1992). However, they do not provide benchmarks for network models. The package contains scripts for both GENESIS and NEURON, as well as full specifications for implementation on other simulators. It is included within the GENESIS distribution, and is also available for download from the GENESIS web site.

4.2.5 GENESIS implementation of the HH benchmark

The HH benchmark network model (Benchmark 3 in Appendix B) provides a good example of the type of model that should probably NOT be implemented with GENESIS. The Vogels and Abbott (2005) IF network on which it is based is an abstract model designed to study the propagation of signals under very simplified conditions. The identical excitatory and inhibitory neurons have no physical location in space, and no distance-dependent axonal propagation delays in the connections. The benchmark model simply replaces the IF neurons with single-compartment cells containing fast sodium and delayed rectifier potassium channels that fire tonically and display no spike frequency adaptation. Such models offer no advantages over IF cells for the study of the situation explored by Vogels and Abbott.

Nevertheless, it is a simple matter to implement such a model in GENESIS, using a simplification of existing example scripts for large network models, and the performance penalty for “using a sledge hammer to crack a peanut” is not too large for a network of this size. The simulation script for this benchmark illustrates the power of the GENESIS scripting commands for creating networks. Three basic commands are used for filling a region with copies of prototype cells, making synaptic connections with a great deal of control over the connectivity, and setting propagation delays.

The instantaneous rise in the synaptic conductances makes this a very efficient model to implement with a simulator specialized for IF networks, but such a non-biological conductance is not normally provided by GENESIS. Therefore, two implementations of the benchmark have been provided. The Dual Exponential VA HH Model script implements synaptic conductances with a dual exponential form having a 2 ms time-to-peak, and the specified exponential decay times of 5 ms for excitatory connections and 10 ms for inhibitory connections. The Instantaneous Conductance VA HH Model script uses a user-added isynchan object type that can be compiled and linked into GENESIS to provide the specified conductances with an instantaneous rise time. There is little difference in the behavior of the two versions of the simulation, although the Instantaneous Conductance model executes somewhat faster.
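The link between the 2 ms time-to-peak and the two time constants of a dual exponential conductance can be made explicit with a short calculation. The sketch below assumes the common form g(t) ∝ exp(−t/τ_decay) − exp(−t/τ_rise) with τ_rise < τ_decay, whose peak occurs at t_peak = τ_decay τ_rise / (τ_decay − τ_rise) · ln(τ_decay / τ_rise). The rise constants used in the benchmark scripts are not given in the text, so the values computed here follow only from this assumed form; the function names are hypothetical.

```python
import math

def time_to_peak(tau_decay, tau_rise):
    """Peak time of exp(-t/tau_decay) - exp(-t/tau_rise), tau_rise < tau_decay."""
    return (tau_decay * tau_rise / (tau_decay - tau_rise)
            * math.log(tau_decay / tau_rise))

def rise_constant(tau_decay, t_peak, tol=1e-12):
    """Bisect for the tau_rise that gives the requested time-to-peak.

    time_to_peak grows monotonically from 0 to tau_decay as tau_rise goes
    from 0 to tau_decay, so a solution exists whenever t_peak < tau_decay.
    """
    lo, hi = 1e-9 * tau_decay, tau_decay * (1.0 - 1e-9)
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if time_to_peak(tau_decay, mid) < t_peak:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)

def dual_exp(t, tau_decay, tau_rise):
    """Dual exponential conductance, normalised to a peak value of 1."""
    tp = time_to_peak(tau_decay, tau_rise)
    peak = math.exp(-tp / tau_decay) - math.exp(-tp / tau_rise)
    return (math.exp(-t / tau_decay) - math.exp(-t / tau_rise)) / peak

T_PEAK = 2e-3                                 # s, time-to-peak from the text
TAU_RISE_EXC = rise_constant(5e-3, T_PEAK)    # excitatory, 5 ms decay
TAU_RISE_INH = rise_constant(10e-3, T_PEAK)   # inhibitory, 10 ms decay
```

Under this assumed form the rise constants come out close to 1 ms for the excitatory synapses and close to 0.7 ms for the inhibitory ones. The instantaneous-rise variant corresponds to the limit τ_rise → 0, where the conductance reduces to a single decaying exponential.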

Figure 8 shows the implementation of the Dual Exponential VA HH Model with a GUI that was created by making small changes to the example RSnet.g, protodefs.g, and graphics.g scripts, which are provided in the GENESIS Modeling Tutorial (Beeman 2005) section “Creating large networks with GENESIS”.

These scripts and the tutorial specify a rectangular grid of excitatory neurons. An exercise suggests adding a layer of inhibitory neurons. The GENESIS implementations of the HH benchmark use a layer of 64 × 50 excitatory neurons and a layer of 32 × 25 inhibitory neurons. A change of one line in the example RSnet.g script allows the change from the nearest-neighbor connectivity of the model to the required infinite-range connectivity with 2% probability.
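To give a feel for the size of the resulting network, the following Python sketch builds a random connectivity with the layer sizes and the 2% probability quoted above. It is not the GENESIS script: the function names, the seed, and the choice to allow self-connections are assumptions made for illustration. Sources are drawn by geometric skipping, which is equivalent to an independent 2% coin flip per candidate pair but avoids testing all 16 million pairs one by one.

```python
import math
import random

N_EXC = 64 * 50      # excitatory layer size from the text
N_INH = 32 * 25      # inhibitory layer size from the text
P_CONNECT = 0.02     # connection probability from the text

def bernoulli_indices(n, p, rng):
    """Indices in range(n), each kept independently with probability p.

    The gap to the next kept index is geometrically distributed, so the
    gaps are sampled directly instead of flipping a coin for every index.
    """
    picked = []
    log_q = math.log(1.0 - p)
    i = -1
    while True:
        u = 1.0 - rng.random()                 # uniform on (0, 1]
        i += int(math.log(u) / log_q) + 1      # geometric gap, at least 1
        if i >= n:
            return picked
        picked.append(i)

def build_inputs(n_cells, p, seed=1234):
    """For every target cell, list the source cells that project to it."""
    rng = random.Random(seed)                  # arbitrary seed (assumption)
    return [bernoulli_indices(n_cells, p, rng) for _ in range(n_cells)]

n_cells = N_EXC + N_INH
inputs = build_inputs(n_cells, P_CONNECT)
n_synapses = sum(len(sources) for sources in inputs)
mean_in_degree = n_synapses / n_cells
```

With 4000 cells and a 2% probability, each cell receives about 80 inputs on average, for roughly 320,000 synapses in total.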

The identical excitatory and inhibitory neurons used in the network are implemented as specified in Appendix B. For both versions of the model, Poisson-distributed random spike inputs with a mean frequency of 70 Hz were applied to the excitatory synapses of all the excitatory neurons. The simulation was run for 0.05 s, the random input was removed, and it was then run for an additional 4.95 s.
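The initial stimulation can be sketched in Python as one homogeneous Poisson spike train per excitatory neuron, using the 70 Hz rate and 0.05 s duration given above. How GENESIS generates these inputs internally is not described in the text; drawing exponentially distributed inter-spike intervals, as done here, is one standard way to sample a Poisson process, and the function name and seed are assumptions.

```python
import random

RATE_HZ = 70.0       # mean input frequency from the text
T_INPUT = 0.05       # s, duration of the random input from the text
N_EXC = 64 * 50      # number of excitatory neurons from the text

def poisson_train(rate, duration, rng):
    """Spike times of a homogeneous Poisson process on [0, duration)."""
    times = []
    t = rng.expovariate(rate)          # first inter-spike interval
    while t < duration:
        times.append(t)
        t += rng.expovariate(rate)     # next exponential interval
    return times

rng = random.Random(42)                # arbitrary seed (assumption)
trains = [poisson_train(RATE_HZ, T_INPUT, rng) for _ in range(N_EXC)]
mean_count = sum(len(train) for train in trains) / len(trains)
```

The expected number of input spikes per neuron is 70 Hz × 0.05 s = 3.5, so the network is started by only a handful of random events per cell before the input is removed.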

The Control Panel at the left is used to run the simulation and to set parameters such as maximal synaptic conductances, synaptic weight scaling, and propagation delays. There are options to provide current injection pulses, as well as random synaptic activation. The plots in the middle show the membrane potentials of three excitatory neurons (0, 1536, and 1567), and inhibitory neuron 0. The netview displays at the right show the membrane potentials of the excitatory neurons (top) and inhibitory neurons (bottom). With no propagation delays, the positions of the neurons on the grid are irrelevant. Nevertheless, this two-dimensional representation of the network layers makes it easy to visualize the number of cells firing at any time during the simulation.

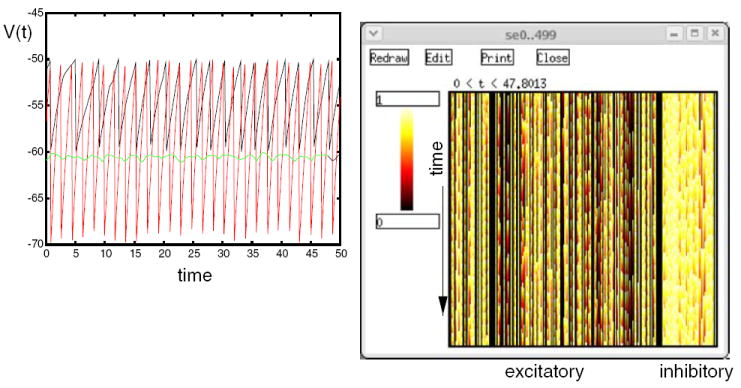

Figure 9 shows the plots for the membrane potential of the same neurons as those displayed in Fig. 8, but produced by the Instantaneous Conductance VA HH Model script. The plot at the right shows a zoom of the interval between 3.2 and 3.4 s.

Fig. 9.

Membrane potentials for four selected neurons of the Instantaneous Conductance VA HH Model in GENESIS. (a) The entire 5 s of the simulation. (b) Detail of the interval 3.2–3.4 s

In both figures, excitatory neuron 1536 has the lowest ratio of excitatory to inhibitory inputs of the four neurons plotted. It fires only rarely, whereas excitatory neuron 0, which has the highest ratio, fires most frequently.

4.2.6 Future plans for GENESIS

The GENESIS simulator is now undergoing a major redevelopment effort, which will result in GENESIS 3. The core simulator functionality is being reimplemented in C++ using an improved scheme for messaging between GENESIS objects, and with a platform-independent and browser-friendly Java-based GUI. This will not only improve performance and portability to MS Windows and non-UNIX platforms, but will also allow the use of alternate script parsers and user interfaces, as well as the ability to communicate with other modeling programs and environments. The GENESIS development team is participating in the NeuroML (Goddard et al. 2001; Crook et al. 2005) project,9 along with the developers of NEURON. This will enable GENESIS 3 to export and import model descriptions in a common simulator-independent XML format. Development versions of GENESIS are available from the SourceForge GENESIS development site.10

4.3 NEST

4.3.1 The NEST initiative

The problem of simulating neuronal networks of biologically realistic size and complexity has long been underestimated. This is reflected in the limited number of publications on suitable algorithms and data structures in high-level journals. The lack of awareness of researchers and funding agencies of the need for progress in simulation technology and sustainability of the investments may partially originate from the fact that a mathematically correct simulator for a particular neuronal network model can be implemented by an individual in a few days. However, this has routinely resulted in a cycle of unscalable and unmaintainable code being rewritten in unmaintainable fashion by novices, with little progress in the theoretical foundations.

Due to the increased availability of computational resources, simulation studies are becoming ever more ambitious and popular. Indeed, many neuroscientific questions are presently only accessible through simulation. An unfortunate consequence of this trend is that it is becoming ever harder to reproduce and verify the results of these studies. The ad hoc simulation tools of the past cannot provide us with the appropriate degree of comprehensibility. Instead we require carefully crafted, validated, documented and expressive neuronal network simulators with a wide user community. Moreover, the current progress towards more realistic models demands correspondingly more efficient simulations. This holds especially for the nascent field of studies on large-scale network models incorporating plasticity. This research is entirely infeasible without parallel simulators with excellent scaling properties, which is outside the scope of ad hoc solutions. Finally, to be useful to a wide scientific audience over a long time, simulators must be easy to maintain and to extend.

On the basis of these considerations, the NEST initiative was founded as a long term collaborative project to support the development of technology for neural systems simulations (Diesmann and Gewaltig 2002). The NEST simulation tool is the reference implementation of this initiative. The software is provided to the scientific community under an open source license through the NEST initiative’s website.11 The license asks researchers to cite the initiative in work derived from the original code and, more importantly, in scientific results obtained with the software. The website also provides references to material relevant to neuronal network simulations in general and is meant to become a scientific resource of network simulation information. Support is provided through the NEST website and a mailing list. At present NEST is used in teaching at international summer schools and in regular courses at the University of Freiburg.

4.3.2 The NEST simulation tool

In the following we give a brief overview of the NEST simulation tool and its capabilities.

4.3.2.1 Domain and design goals

The domain of NEST is large neuronal networks with biologically realistic connectivity. The software easily copes with the threshold network size of 10⁵ neurons (Morrison et al. 2005) at which each neuron can be supplied with the natural number of synapses and simultaneously a realistic sparse connectivity can be maintained. Typical neuron models in NEST have one or a small number of compartments. The simulator supports heterogeneity in neuron and synapse types. In networks of realistic connectivity the memory consumption and work load are dominated by the number of synapses. Therefore, much emphasis is placed on the efficient representation and update of synapses. In many applications network construction has the same computational costs as the integration of the dynamics. Consequently, NEST parallelizes both. NEST is designed to guarantee strict reproducibility: the same network is required to generate the same results independent of the number of machines participating in the simulation. It is considered an important principle of the project that the development work is carried out by neuroscientists operating on a joint code base. No developments are made without the code being directly tested in neuroscientific research projects. This implements an incremental and iterative development cycle. Extensibility and long-term maintainability are explicit design goals.

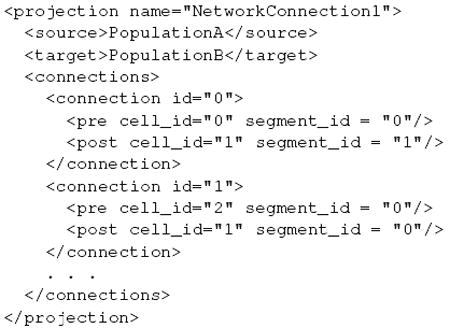

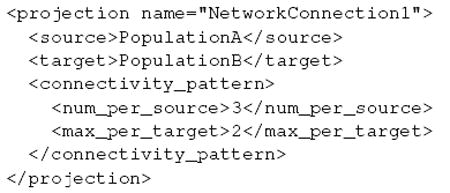

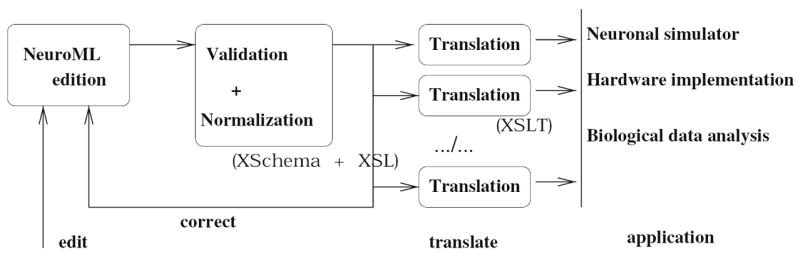

4.3.2.2 Infrastructure