Abstract

The amygdala is critical for associating predictive cues with primary rewarding and aversive outcomes. This is particularly evident in tasks in which information about expected outcomes is required for normal responding. Here we used a pavlovian overexpectation task to test whether outcome signaling by amygdala might also be necessary for changing those representations in the face of unexpected outcomes. Rats were trained to associate several different cues with a food reward. After learning, two of the cues were presented together, in compound, followed by the same reward. Before each compound training session, rats received infusions of 2,3-dioxo-6-nitro-1,2,3,4-tetrahydrobenzo[f]quinoxaline-7-sulfonamide or saline into either the basolateral (ABL) or central nucleus (CeN) of amygdala. We found that infusions into CeN abolished the normal decline in responding to the compounded cue in a later probe test, whereas infusions into ABL had no effect. These results are inconsistent with the proposal that signaling of information about expected outcomes by ABL contributes to learning, at least in this setting, and instead implicate the CeN in this process, perhaps attributable to the hypothesized involvement of this area in attention and variations in stimulus processing.

Introduction

The amygdala is critical for associating predictive cues with primary rewarding and aversive outcomes (Maren and Quirk, 2004; Murray, 2007). This is particularly evident in tasks in which information about expected outcomes is required for normal responding (Hatfield et al., 1996; Málková et al., 1997; Blundell et al., 2001; Corbit and Balleine, 2005; Wellman et al., 2005; Machado and Bachevalier, 2007; Johnson et al., 2009). This function depends on basolateral amygdala (ABL), which is required for reinforcer-specific devaluation and pavlovian-to-instrumental transfer (Corbit and Balleine, 2005; Johnson et al., 2009). Although such studies reveal a role for ABL in the acquisition and use of value representations during initial learning, they do not address whether signaling of outcome information by ABL is necessary for changing associative representations when outcomes differ from expectations (Belova et al., 2008).

Pavlovian overexpectation is well suited to address this question. In this task, rats learn that several cues are independent predictors of reward. After learning, two cues are presented together, in compound, followed by the same reward. Normal rats exhibit a spontaneous decline in responding to these cues when they are presented separately later during a probe test. This decline is thought to reflect learning induced by violations of the summed expectations for reward in the compound phase (Rescorla, 1999, 2006, 2007). By dissociating learning in the face of unexpected outcomes in compound training, from the use of the new information, in the probe test, this task allows one to test whether a given brain region has a specific role in learning driven by changes in expected outcomes. For example, inactivation of orbitofrontal cortex (OFC) during the compound phase prevents the later decline in responding (Takahashi et al., 2009), suggesting that information about expected outcomes signaled by neurons in OFC facilitates learning in the face of unexpected outcomes (Schoenbaum et al., 2009). Notably, the OFC has close anatomical and functional relationships to ABL (Krettek and Price, 1977; Shi and Cassell, 1998; Ongür and Price, 2000; Ghashghaei and Barbas, 2002); like ABL, OFC is critical for normal behavior when information about expected outcomes is required (Gallagher et al., 1999; Izquierdo et al., 2004; McDannald et al., 2005b; Machado and Bachevalier, 2007; Ostlund and Balleine, 2007).
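The error-driven account of overexpectation described above can be illustrated with a minimal simulation under the Rescorla–Wagner rule. This is a sketch only: the learning rate, reward magnitude, and trial counts are illustrative assumptions rather than values fitted to this task, the function names are hypothetical, and the separate presentations of the visual cue during the compound phase are omitted for simplicity.

```python
def rescorla_wagner_update(strengths, present, reward, rate=0.1):
    """Apply one Rescorla-Wagner trial and return the updated strengths.

    strengths: dict mapping cue name -> associative strength
    present:   cues shown on this trial
    reward:    outcome magnitude delivered on this trial
    rate:      combined learning-rate parameter (assumed value)
    """
    prediction = sum(strengths[cue] for cue in present)  # summed expectation
    error = reward - prediction                          # prediction error
    updated = dict(strengths)
    for cue in present:
        updated[cue] += rate * error
    return updated


def simulate_overexpectation(n_single=200, n_compound=40, reward=1.0, rate=0.1):
    """Return (strengths before compound phase, strengths after it)."""
    v = {"A1": 0.0, "V1": 0.0, "A2": 0.0}
    # Simple conditioning: each cue alone, followed by the same reward.
    for _ in range(n_single):
        for cue in ("A1", "V1", "A2"):
            v = rescorla_wagner_update(v, [cue], reward, rate)
    before = dict(v)
    # Compound phase: A1 and V1 together, still followed by a single reward.
    # The summed expectation exceeds the reward, so the error is negative.
    for _ in range(n_compound):
        v = rescorla_wagner_update(v, ["A1", "V1"], reward, rate)
        v = rescorla_wagner_update(v, ["A2"], reward, rate)
    return before, v
```

Under these assumptions, the strength of the compounded cue A1 falls toward half of its trained value while the control cue A2 is unchanged, which is the pattern that the probe test is designed to detect.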

Here we used pavlovian overexpectation to test whether outcome signaling by amygdala neurons might also be necessary for learning when actual outcomes differ from expectations. Rats were trained as described above; before each compound training session, they received infusions of saline or 2,3-dioxo-6-nitro-1,2,3,4-tetrahydrobenzo[f]quinoxaline-7-sulfonamide (NBQX), an AMPA receptor antagonist, into either ABL or the central nucleus (CeN) of amygdala. We found that inactivation of AMPA receptors in ABL had no effect on the normal decline in responding to the compounded cue in the later probe test, whereas inactivation in CeN abolished this decline. These results suggest that signaling of outcome expectancies by ABL neurons is not required for learning, at least in this setting, and instead implicate the CeN in this process, perhaps because of its role in attention and variations in stimulus processing (Holland and Gallagher, 1999).

Materials and Methods

Subjects.

Experiments used male Long–Evans rats (Charles River), tested at the University of Maryland School of Medicine in accordance with University and National Institutes of Health guidelines.

Cannula location.

Cannulae (23 gauge; Plastics One) were implanted bilaterally in CeN (2.3 mm posterior to bregma, 4.0 mm lateral, and 6.0 mm ventral) in 18 rats or in ABL (2.7 mm posterior to bregma, 5.0 mm lateral, and 6.5 mm ventral) in 16 rats to allow infusion of inactivating agents or saline vehicle before some testing sessions. Actual infusions were made at 2.3 mm posterior, 4.0 mm lateral, and 8.0 mm ventral in CeN and 2.7 mm posterior, 5.0 mm lateral, and 8.5 mm ventral in ABL.

Apparatus.

Training was done in 16 standard behavioral chambers from Coulbourn Instruments, each enclosed in a sound-resistant shell. A food cup was recessed in the center of one end wall. Entries were monitored by photobeam. A food dispenser containing 45 mg sucrose pellets (plain, banana flavored, or grape flavored; Bio-Serv) allowed delivery of pellets into the food cup. White noise or a tone, each measuring ∼76 dB, was delivered via a wall speaker. Also mounted on that wall were a clicker (2 Hz) and a 6 W bulb that could be illuminated to provide a light stimulus during the otherwise dark session.

Pavlovian overexpectation training.

Rats were shaped to retrieve food pellets, and then they underwent 10 conditioning sessions. In each session, the rats received eight 30-s presentations of three different auditory stimuli (A1, A2, and A3) and one visual stimulus (V1), in a blocked design in which the order of cue blocks was counterbalanced. For all conditioning, V1 consisted of a cue light, and A1, A2, and A3 consisted of a tone, clicker, or white noise (counterbalanced). Two differently flavored sucrose pellets (banana and grape, designated O1 and O2, counterbalanced) were used as rewards. V1 and A1 terminated with delivery of three pellets of O1, and A2 terminated with delivery of three pellets of O2. A3 was paired with no food. After completion of the 10 d of simple conditioning, rats received 4 consecutive days of compound conditioning in which A1 and V1 were presented together as a 30-s compound cue terminating with three pellets of O1, and V1, A2, and A3 continued to be presented as in simple conditioning. Cues were again presented in a blocked design, with order counterbalanced. For each cue, there were 12 trials on the first 3 d of compound conditioning and six trials on the last day of compound conditioning. One day after the last compound conditioning session, rats received a probe test session consisting of eight non-reinforced presentations of A1, A2, and A3 stimuli, with the order mixed and counterbalanced.

CeN/ABL inactivation.

On each compound conditioning day, cannulated rats received bilateral infusions of inactivating agents (CeN, n = 11; ABL, n = 10) or the same amount of PBS (CeN, n = 7; ABL, n = 6) immediately before the cue block in which A1 and V1 were presented in compound. Procedures were identical to those used previously (Takahashi et al., 2009), except with volumes and a concentration of NBQX, an AMPA receptor antagonist, based on previous work by McDannald et al. (2005a). Briefly, dummy cannulae were removed, and 30 gauge injector cannulae extending 2.0 mm beyond the end of the guide cannulae were inserted. Each injector cannula was connected with polyethylene PE20 tubing (Thermo Fisher Scientific) to a Hamilton syringe placed in an infusion pump (Orion M361; Thermo Fisher Scientific). Each infusion consisted of 4 μg of NBQX (Sigma). The drug was dissolved in 0.2 μl of PBS and infused at a flow rate of 0.2 μl/min. At the end of each infusion, the injector cannulae were left in place for another 2–3 min to allow diffusion of the drugs away from the injector. Approximately 10 min after removal of the injector cannulae, rats underwent compound conditioning.

Response measures.

The primary measure of conditioning to cues was the percentage of time that each rat spent with its head in the food cup during the 30 s conditioned stimulus (CS) presentation, as indicated by disruption of the photocell beam. We also measured the percentage of time that each rat showed rearing behavior during the 30 s CS period. To correct for time spent rearing, the percentage of responding during the 30 s CS was calculated as follows: % of responding = 100 × (% of time in food cup)/(100 − % of time rearing). There was neither a significant main effect nor any interactions with group in rearing behavior in any phase of training (F values <1.7, p values >0.2).
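The rearing correction above amounts to expressing food cup time as a percentage of the CS time during which the rat was not rearing. A minimal sketch of that calculation follows; the function name and the input checks are illustrative assumptions and are not part of the original analysis code.

```python
def corrected_responding(pct_food_cup, pct_rearing):
    """Percentage of non-rearing CS time spent with the head in the food cup.

    pct_food_cup: percentage of the CS period spent in the food cup (0-100)
    pct_rearing:  percentage of the CS period spent rearing (0-100)
    """
    if not 0 <= pct_food_cup <= 100 or not 0 <= pct_rearing <= 100:
        raise ValueError("percentages must lie between 0 and 100")
    available = 100.0 - pct_rearing  # percentage of CS time not spent rearing
    if available == 0:
        raise ValueError("rat reared for the entire CS; measure is undefined")
    return 100.0 * pct_food_cup / available
```

For example, a rat that spends 30% of the CS in the food cup and 25% rearing would receive a corrected score of 40%, whereas a rat that never rears keeps its raw food cup percentage.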

Results

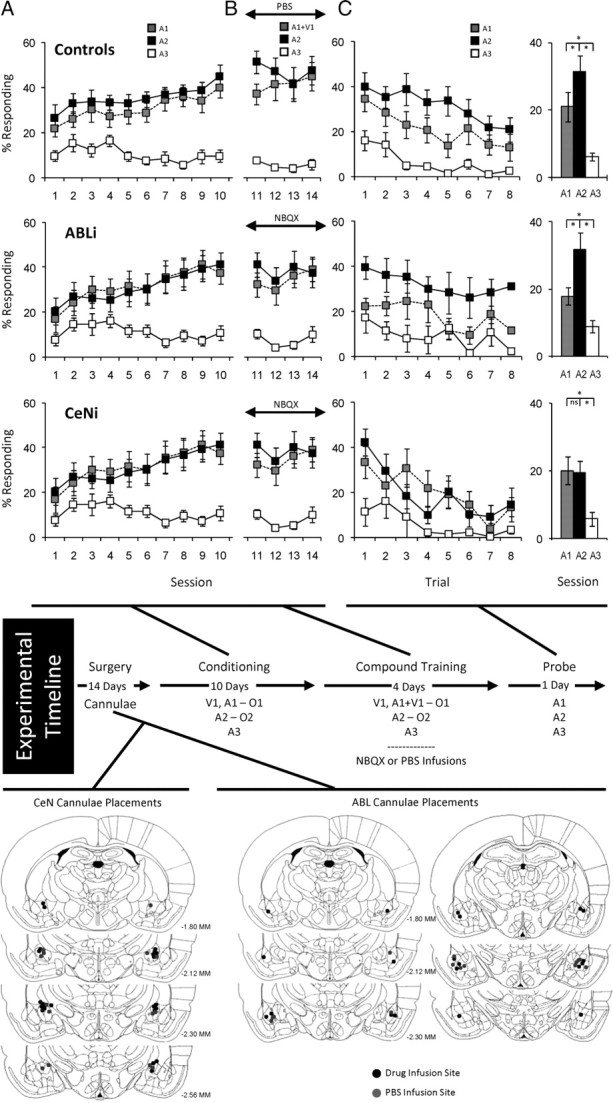

Thirty-four rats were trained as illustrated by the experimental timeline in Figure 1. Before training, all rats underwent surgery to implant cannulae bilaterally in either ABL (n = 16) or CeN (n = 18). These rats were divided into three groups: ABLi rats (n = 10) and CeNi rats (n = 11) that later received NBQX infusions and controls that later received infusions of PBS vehicle (seven CeN and six ABL). Cannula location is illustrated in Figure 1. The controls for CeN and ABL infusions exhibited no differences in any of the subsequent measures of conditioning, and thus they were combined into a single group.

Figure 1.

Effect of inactivation of ABL or CeN on changes in behavior after overexpectation. Shown is the experimental timeline linking conditioning, compound conditioning, and probe phases to data from each phase. Top, middle, and bottom rows indicate results from control, ABLi, and CeNi groups, respectively. In timeline and figures, V1 is a visual cue (a cue light), A1, A2, and A3 are auditory cues (tone, white noise, and clicker, counterbalanced), and O1 and O2 are different flavored sucrose pellets (banana and grape, counterbalanced). Positions of cannulae within ABL or CeN are shown beneath the timeline for the rats that received PBS (gray dot) or NBQX (black dot) before each compound conditioning session. A, Percentage of responding to food cup during cue presentation across 10 d of conditioning. Gray, black, and white squares indicate A1, A2, and A3 cues, respectively. B, Percentage of responding to food cup during cue presentation across 4 d of compound training. Gray, black, and white squares indicate A1/V1, A2, and A3 cues, respectively. C, Percentage of responding to food cup during cue presentation in the probe test. Line graph shows responding across the eight trials, and the bar graph shows average responding in these eight trials. Gray, black, and white colors indicate A1, A2, and A3 cues, respectively (*p < 0.05 or better).

After surgery and a 2 week recovery period, all rats underwent 10 d of conditioning, during which cues were paired with flavored sucrose pellets (banana and grape, designated as O1 and O2, counterbalanced). Three unique auditory cues (tone, white noise, and clicker, designated A1, A2, and A3, counterbalanced) were the primary cues of interest. A1 served as the “overexpected cue” and was associated with three pellets of O1. A2 served as a control cue and was associated with three pellets of O2. A3 was associated with no reward and thus served as a CS−.

Acquisition of conditioned responding to the critical auditory cues is shown in Figure 1A. Rats in all three groups developed equally elevated responding to A1 and A2 compared with A3 across the 10 sessions. In accord with this impression, ANOVA (group × cue × session) revealed significant main effects of cue and session (cue, F(2,62) = 75.3, p < 0.0001; session, F(9,279) = 9.1, p < 0.0001) and a significant interaction between cue and session (F(18,558) = 12.0, p < 0.0001); however, there were no main effects nor any interactions with group (F values <0.81, p values >0.52). A step-down ANOVA comparing responding to A1 and A2 revealed no statistical effects of either cue or group (F values <1.7, p values >0.205), indicating that rats learned to respond to these two cues equally. Furthermore, there were no effects of session in the final 2 d of training (F values <1.4, p values >0.26), indicating that responding was at ceiling.
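As a consistency check on the statistics reported above, the degrees of freedom for the within-subject effects can be recomputed from the design. The sketch below assumes a standard mixed-design ANOVA with one between-subject factor (group), two fully crossed within-subject factors, no missing cells, and no sphericity correction; the source does not state these details, and the function name is hypothetical.

```python
def within_effect_dfs(n_subjects, n_groups, levels_a, levels_b):
    """Return (numerator df, denominator df) for within-subject effects.

    n_subjects: total number of subjects across all groups
    n_groups:   number of levels of the between-subject factor
    levels_a:   number of levels of within-subject factor A (e.g., cue)
    levels_b:   number of levels of within-subject factor B (e.g., session)
    """
    error_between = n_subjects - n_groups  # subjects-within-groups df
    df_a = levels_a - 1
    df_b = levels_b - 1
    return {
        "a": (df_a, df_a * error_between),
        "b": (df_b, df_b * error_between),
        "a_x_b": (df_a * df_b, df_a * df_b * error_between),
    }
```

With 34 rats in three groups, three cues, and 10 sessions, `within_effect_dfs(34, 3, 3, 10)` gives (2, 62) for cue, (9, 279) for session, and (18, 558) for their interaction, matching the values reported for initial conditioning.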

Rats were also trained to associate a visual cue (cue light, V1) with three pellets of O1. V1 was to be paired with A1 in the compound phase to induce overexpectation; therefore, a nonauditory cue was used to discourage the formation of compound representations. All three groups of rats responded identically to the V1 cue used to induce overexpectation during compound training (data not shown). An ANOVA (group × day) during initial and compound training revealed a significant main effect of day during initial conditioning (F(9,279) = 16.0, p < 0.0001). There were no other significant effects or interactions in other analyses of the V1 cue (F values <0.8, p values >0.5).

After initial conditioning, the rats underwent 4 d of compound training. These sessions were the same as preceding sessions, except that V1 was delivered simultaneously with A1. V1 also continued to be presented separately to support its associative strength and thereby maximize the effect of overexpectation on A1. A2 and A3 continued to be presented as before. Immediately before each session, rats in the two experimental groups received bilateral infusions of NBQX to inactivate ABL or CeN; rats in the control groups received PBS vehicle infusions.

Responding during compound training is shown in Figure 1B. Rats in all three groups maintained elevated responding to A1/V1 and A2 compared with A3 across the four sessions. ANOVA (group × cue × session) revealed a significant main effect of cue (cue, F(2,62) = 94.1, p < 0.0001) and a significant interaction between cue and session (F(6,186) = 5.19, p < 0.0001); however, there was no main effect nor any interactions with group (F values <0.9, p values >0.5). A step-down ANOVA comparing responding to A1 and A2 showed neither main effects nor any interactions of cue or group during compound training (F values <1.9, p values >0.18).

Finally, all rats received a probe test in which A1, A2, and A3 were presented alone without reinforcement. Conditioned responding in this session is shown in Figure 1C. Rats in each group showed elevated responding to A1 and A2 compared with A3, and this responding extinguished across the session. A three-factor ANOVA (group × cue × trial) revealed significant main effects of cue and trial (cue, F(2,62) = 35.463, p < 0.0001; trial, F(7,217) = 10.616, p < 0.0001).

In addition, rats in both the control and ABLi groups exhibited a significant and sudden decline in responding to the A1 cue in the probe test. Reduced responding was evident throughout the extinction session. Accordingly, an ANOVA comparing responding to A1 and A2 in ABLi and control rats revealed significant main effects of cue (F(1,21) = 14.9, p < 0.001) and trial (F(7,147) = 4.2, p < 0.001) but no significant main effect nor any interactions with group (F values <0.75, p values >0.6). Post hoc comparisons showed that both groups responded significantly more to A2 than to A1 (p values <0.05). Importantly, there were no interactions between cue and trial; thus, the difference in responding between A1 and A2 in each group was stable across the session, as expected if it resulted from previous compound training rather than new learning in the session.

In contrast, rats in the CeNi group extinguished normally in the probe test but failed to show any selective decline in responding to the A1 cue. Consistent with this interpretation, an ANOVA comparing responding to A1 and A2 in the CeNi and control rats revealed a significant main effect of trial (F(7,154) = 8.41, p < 0.0001), a near significant main effect of cue (F(1,22) = 3.49, p < 0.07), and a significant interaction between cue and group (F(1,22) = 4.62, p < 0.04). Post hoc comparisons showed that, unlike controls, CeNi rats responded similarly to A1 and A2 (p = 0.85).

Discussion

Here we show that AMPA receptor blockade in CeN but not ABL disrupts learning in response to overexpectation. Rats that received NBQX infusions into CeN failed to learn in response to the compound training, exhibiting similar levels of responding to both the compounded and control cues in the probe test.

In contrast, rats that received NBQX infusions into ABL before the critical compound training learned as well as controls that received saline infusions. This was evident in the subsequent probe test, in which both groups showed a decline in responding to the cue that had been presented in compound but maintained responding to the control cue. Importantly, other laboratories have found behavioral effects of NBQX infusions in ABL (Walker et al., 2005; Rabinak et al., 2009), and we have recently used an identical approach in another study to disrupt behavioral changes correlated with changes in single-unit activity in ABL (Roesch et al., 2010). As a result, we do not believe that this negative result is attributable to any lack of effect of our manipulation, although of course it is possible that some other manipulation, such as dopamine blockade or neurotoxic lesions, could reveal a role for ABL in overexpectation.

The failure of ABL inactivation to affect learning in this setting is inconsistent with proposals that neural correlates of cue value or outcome expectancies or even putative error signals reported in ABL neurons play a general role in the recognition and calculation of reward prediction errors by neurons in other brain regions. Although such firing patterns may explain the role of ABL in guiding behavior, they do not appear to be necessary for learning driven by negative errors in reward prediction.

Of course, these results do not directly address whether ABL is involved in learning driven by errors in punishment prediction or by positive errors in reward prediction. Potential evidence for a role in learning from positive reward prediction errors comes from pavlovian reinforcer devaluation studies, in which damage to basolateral amygdala appears to cause deficits in the acquisition of the original associations (Pickens et al., 2003). However, these deficits seem more likely to reflect a direct role in storage rather than any contribution to error signaling, particularly because signaling of outcome expectancies should retard rather than accelerate learning in this setting. Similarly, although ABL is important for learning in an aversive overexpectation task (Cole and McNally, 2009), this was shown using NMDA antagonists, again suggesting a role in storage of the new information rather than any contributions to error signaling.

To the extent that overexpectation is comparable with extinction learning, showing spontaneous recovery and extinction for example (Rescorla, 1999, 2006, 2007), the failure of ABL inactivation to affect learning in this setting is in accord with reports showing that ABL is typically not necessary for extinction (or reversal) of responding (Lindgren et al., 2003; Schoenbaum et al., 2003; Izquierdo and Murray, 2005, 2007) (but see McLaughlin and Floresco, 2007). Our results build on these reports by using a paradigm that requires summation of reward expectancies for normal learning and by manipulating ABL function only at the time of learning.

The failure of ABL inactivation to affect this process stands in contrast to the effects of OFC inactivation, which impairs learning in response to overexpectation (Takahashi et al., 2009). This dissociation is notable because single-unit activity and blood oxygenation level-dependent response in both OFC and ABL represent information about expected outcomes (Schoenbaum et al., 1998; Tremblay and Schultz, 1999; O'Doherty et al., 2002; Gottfried et al., 2003; Padoa-Schioppa and Assad, 2006; Paton et al., 2006; Tye and Janak, 2007; Belova et al., 2008; Tye et al., 2008), and both areas have been associated with comparable behavioral deficits in tasks that require the use of outcome expectancies for normal responding (Hatfield et al., 1996; Málková et al., 1997; Blundell et al., 2001; Corbit and Balleine, 2005; Wellman et al., 2005; Machado and Bachevalier, 2007; Johnson et al., 2009).

It is only recently that a limited number of dissociations have begun to appear. The dissociations are generally consistent with the proposal that ABL is preferentially involved in the acquisition of the original associative information, whereas OFC plays an ongoing role in integrating that simple retrospective information with additional inputs to drive behavior and new learning. For example, although both OFC and ABL are involved in changes in responding to cues after reinforcer devaluation, OFC is particularly critical for using the information at the time of the final probe test (Pickens et al., 2003, 2005). This function requires the real-time integration of the original association with information regarding the new value of the outcome. Likewise, although both OFC and ABL are implicated in temporal discounting, the role of ABL appears consistent with encoding the original associative strengths of the different responses (or cues), whereas OFC seems to play a more variable role consistent with the use of that information at the time a decision must be made (Mobini et al., 2002; Kheramin et al., 2003; Winstanley et al., 2004; Rudebeck et al., 2006). The current data are consistent with this dichotomy, because overexpectation provides a situation in which new learning requires the integration of existing simple associative representations. This is because the reward given in the compound phase is appropriate for either individual cue; it requires the real-time integration of the individual expectancies for an error in reward prediction to be perceived. OFC is required for this integration, whereas ABL is not.

Perhaps more surprising is the involvement of CeN in overexpectation, particularly in the absence of effects of ABL inactivation. CeN is often viewed as a relay station, operating in serial with ABL in the expression of learned behavior (LeDoux, 2000). This reflects the primarily unidirectional flow of information from ABL to CeN and the role of CeN in the expression of fear-associated behaviors. However, growing evidence suggests that CeN can also function in parallel with the ABL, independently encoding critical aspects of associative relationships in the environment (Balleine and Killcross, 2006; Wilensky et al., 2006). For example, ABL is critical for normal performance in a variety of settings that do not require CeN, including second-order conditioning, outcome-specific transfer, and also pavlovian devaluation tasks (Hatfield et al., 1996; Killcross et al., 1997; Corbit and Balleine, 2005). Likewise, CeN is involved in more general learning and attention-related behaviors, including learning in unblocking settings, which are not dependent on ABL (Gallagher et al., 1990; Holland and Gallagher, 1993a,b; Hatfield et al., 1996; Killcross et al., 1997; Corbit and Balleine, 2005; Lu et al., 2005; McDannald et al., 2005a). Our results provide another example of a behavioral setting in which ABL and CeN function in parallel rather than in serial.

We would suggest, however, that in this case the role of CeN in overexpectation likely reflects the involvement of this area in supporting variations in the processing of environmental stimuli rather than any selective role in representing certain types of associative information. Variations in stimulus processing are a key component of a class of learning theories exemplified by the theory of Pearce and Hall (1980). Unlike Rescorla and Wagner (1972) or temporal difference reinforcement learning (Sutton and Barto, 1998), which treat the associability or salience of conditioned (and perhaps unconditioned) stimuli as fixed quantities, Pearce–Hall and related theories propose that associability or salience changes in response to errors in reward prediction, increasing when errors are large and decreasing when errors are small. Highly associable or salient cues drive learning; thus, when errors are large and associability is high, learning occurs, whereas when errors are small and associability is low, learning is retarded.
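The contrast between fixed and variable associability can be made concrete with a simplified sketch. The code below is not the full Pearce–Hall model, which maintains separate excitatory and inhibitory ("no reward") associations; it collapses these into a single signed strength and uses a running-average associability update, both of which are simplifying assumptions. All parameter values and function names are illustrative.

```python
def pearce_hall_trial(v, alpha, reward, gamma=0.5, rate=0.5):
    """One trial of a simplified Pearce-Hall-style update.

    v:      current associative strength of the cue
    alpha:  current associability of the cue
    reward: outcome magnitude on this trial
    gamma:  weight given to the most recent absolute error (assumed value)
    rate:   fixed scaling of the strength update (assumed value)
    """
    error = reward - v
    # Learning on this trial is scaled by the associability carried in.
    new_v = v + rate * alpha * error
    # Associability then moves toward the size of the error just experienced.
    new_alpha = gamma * abs(error) + (1.0 - gamma) * alpha
    return new_v, new_alpha


def run_pearce_hall(rewards, v=0.0, alpha=1.0, gamma=0.5, rate=0.5):
    """Return a list of (strength, associability) pairs, one per trial."""
    history = []
    for reward in rewards:
        v, alpha = pearce_hall_trial(v, alpha, reward, gamma, rate)
        history.append((v, alpha))
    return history
```

Running `run_pearce_hall([1.0] * 50 + [0.0])` shows associability shrinking as the reward becomes well predicted and then rising sharply after the single surprising omission, whereas a fixed-associability rule would keep the same learning rate throughout.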

Variations in stimulus processing can explain learning in a variety of settings, including some that cannot be easily accounted for by other models. One key example is increased responding induced by declines in the value of a predicted reward, as occurs in unblocking tasks when the reward predicted by a cue is reduced in conjunction with the addition of a novel second cue. On subsequent testing, rats will show increased responding to this novel second cue, despite the fact that it essentially predicts less reward. This increased responding cannot be easily explained by Rescorla and Wagner (1972) or temporal difference theories, because these theories posit a negative prediction error and declines in associative strength for the cues that are present in this situation. However, this result is readily explained by a mechanism in which associability increases, and learning can occur, whenever rewards are poorly predicted.

Holland and Gallagher (1993a,b) have shown that a circuit of structures, including CeN, is critical for learning in this setting. Thus, rats with CeN lesions do not show unblocking when reward is reduced. This effect was interpreted as showing a role for CeN in incrementing attention and cue processing, as posited by Pearce and Hall (1980), in the face of decrements in expected reward. Like overexpectation (Takahashi et al., 2009), learning in this setting also depends on midbrain dopamine neurons (Han et al., 1999; Lee et al., 2008). More recent work has suggested that this may also reflect variations in processing of the unconditioned stimulus (Holland and Kenmuir, 2005). This deficit was not observed when reward was increased, either because increased responding could be equally well supported by simple error learning mechanisms or because CeN is only involved in processing decrements in reward value.

The effects of CeN inactivation in pavlovian overexpectation, reported here, could be explained by a similar mechanism. Pearce and Hall (1980) originally proposed that incrementing attention and processing of available stimuli could lead to inhibition of behavior by pairing with an explicit “no reward” representation, explicitly defined as the absence of reward when it was expected (Pearce and Hall, 1980). Although such learning might be difficult to isolate in an unblocking task, in which the putative associations with no reward would have to outcompete new associations with the remaining reward (Holland and Kenmuir, 2005), learning capable of inhibiting behavior should be readily apparent in the overexpectation task, in which the cues are already heavily trained. According to this model, CeN may promote changes in behavior as a result of overexpectation by increasing the associability of the compounded cues when the expected rewards fail to materialize, thereby facilitating acquisition of an association with the imaginary missing reward. We would suggest that this is a more parsimonious account of these data than proposing a novel role for CeN in either signaling negative prediction errors or contributing information (expectancies) necessary for their calculation, although we cannot directly rule out these alternative accounts.

In addition to extending the current account of the role of CeN in attentional processing, our results are noteworthy for two other reasons. First, in using a temporally specific manipulation (inactivation during learning) rather than pretraining lesions, our results provide stronger evidence that CeN is not critical to the storage of the original associative information. Furthermore, because CeN was back online during probe testing, this also rules out a critical role in the final use of the new information. Second, by inducing changes in expected reward by manipulating abstract expectations rather than by changing the timing, amount, or quantity of reward actually delivered, our results avoid potential confounds whereby the omission of one reward might inflate the value of what remains (Holland and Kenmuir, 2005). As a result, these findings provide strong support for proposals implicating CeN as an important part of a circuit of structures that support learning via attentional mechanisms validated through empirical studies of animal learning.

Footnotes

This work was supported by grants from the National Institute on Drug Abuse (G.S., Kathryn Burke, Matthew Roesch), the Human Frontier Science Program (Y.K.T.), and the National Institute of Mental Health (G.S.).

References

- Balleine BW, Killcross S. Parallel incentive processing: an integrated view of amygdala function. Trends Neurosci. 2006;29:272–279. doi: 10.1016/j.tins.2006.03.002.

- Belova MA, Paton JJ, Salzman CD. Moment-to-moment tracking of state value in the amygdala. J Neurosci. 2008;28:10023–10030. doi: 10.1523/JNEUROSCI.1400-08.2008.

- Blundell P, Hall G, Killcross S. Lesions of the basolateral amygdala disrupt selective aspects of reinforcer representation in rats. J Neurosci. 2001;21:9018–9026. doi: 10.1523/JNEUROSCI.21-22-09018.2001.

- Cole S, McNally GP. Complementary roles for amygdala and periaqueductal gray in temporal-difference fear learning. Learn Mem. 2009;16:1–7. doi: 10.1101/lm.1120509.

- Corbit LH, Balleine BW. Double dissociation of basolateral and central amygdala lesions on the general and outcome-specific forms of pavlovian-instrumental transfer. J Neurosci. 2005;25:962–970. doi: 10.1523/JNEUROSCI.4507-04.2005.

- Gallagher M, Graham PW, Holland PC. The amygdala central nucleus and appetitive Pavlovian conditioning: lesions impair one class of conditioned behavior. J Neurosci. 1990;10:1906–1911. doi: 10.1523/JNEUROSCI.10-06-01906.1990.

- Gallagher M, McMahan RW, Schoenbaum G. Orbitofrontal cortex and representation of incentive value in associative learning. J Neurosci. 1999;19:6610–6614. doi: 10.1523/JNEUROSCI.19-15-06610.1999.

- Ghashghaei HT, Barbas H. Pathways for emotion: interactions of prefrontal and anterior temporal pathways in the amygdala of the rhesus monkey. Neuroscience. 2002;115:1261–1279. doi: 10.1016/s0306-4522(02)00446-3.

- Gottfried JA, O'Doherty J, Dolan RJ. Encoding predictive reward value in human amygdala and orbitofrontal cortex. Science. 2003;301:1104–1107. doi: 10.1126/science.1087919.

- Han JS, Holland PC, Gallagher M. Disconnection of the amygdala central nucleus and substantia innominata/nucleus basalis disrupts increments in conditioned stimulus processing in rats. Behav Neurosci. 1999;113:143–151. doi: 10.1037//0735-7044.113.1.143.

- Hatfield T, Han JS, Conley M, Gallagher M, Holland P. Neurotoxic lesions of basolateral, but not central, amygdala interfere with Pavlovian second-order conditioning and reinforcer devaluation effects. J Neurosci. 1996;16:5256–5265. doi: 10.1523/JNEUROSCI.16-16-05256.1996.

- Holland PC, Gallagher M. Effects of amygdala central nucleus lesions on blocking and unblocking. Behav Neurosci. 1993a;107:235–245. doi: 10.1037//0735-7044.107.2.235.

- Holland PC, Gallagher M. Amygdala central nucleus lesions disrupt increments, but not decrements, in conditioned stimulus processing. Behav Neurosci. 1993b;107:246–253. doi: 10.1037//0735-7044.107.2.246.

- Holland PC, Gallagher M. Amygdala circuitry in attentional and representational processes. Trends Cogn Sci. 1999;3:65–73. doi: 10.1016/s1364-6613(98)01271-6.

- Holland PC, Kenmuir C. Variations in unconditioned stimulus processing in unblocking. J Exp Psychol Anim Behav Process. 2005;31:155–171. doi: 10.1037/0097-7403.31.2.155.

- Izquierdo A, Murray EA. Opposing effects of amygdala and orbital prefrontal cortex lesions on the extinction of instrumental responding in macaque monkeys. Eur J Neurosci. 2005;22:2341–2346. doi: 10.1111/j.1460-9568.2005.04434.x.

- Izquierdo A, Murray EA. Selective bilateral amygdala lesions in rhesus monkeys fail to disrupt object reversal learning. J Neurosci. 2007;27:1054–1062. doi: 10.1523/JNEUROSCI.3616-06.2007.

- Izquierdo A, Suda RK, Murray EA. Bilateral orbital prefrontal cortex lesions in rhesus monkeys disrupt choices guided by both reward value and reward contingency. J Neurosci. 2004;24:7540–7548. doi: 10.1523/JNEUROSCI.1921-04.2004.

- Johnson AW, Gallagher M, Holland PC. The basolateral amygdala is critical to the expression of pavlovian and instrumental outcome-specific reinforcer devaluation effects. J Neurosci. 2009;29:696–704. doi: 10.1523/JNEUROSCI.3758-08.2009.

- Kheramin S, Body S, Ho MY, Velazquez-Martinez DN, Bradshaw CM, Szabadi E, Deakin JFW, Anderson IM. Role of the orbital prefrontal cortex in choice between delayed and uncertain reinforcers: a quantitative analysis. Behav Process. 2003;64:239–250. doi: 10.1016/s0376-6357(03)00142-6.

- Killcross S, Robbins TW, Everitt BJ. Different types of fear conditioned behavior mediated by separate nuclei within amygdala. Nature. 1997;388:377–380. doi: 10.1038/41097.

- Krettek JE, Price JL. Projections from the amygdaloid complex to the cerebral cortex and thalamus in the rat and cat. J Comp Neurol. 1977;172:687–722. doi: 10.1002/cne.901720408.

- LeDoux JE. The amygdala and emotion: a view through fear. In: Aggleton JP, editor. The amygdala: a functional analysis. New York: Oxford UP; 2000. pp. 289–310.

- Lee HJ, Youn JM, Gallagher M, Holland PC. Temporally limited role of substantia nigra-central amygdala connections in surprise-induced enhancement of learning. Eur J Neurosci. 2008;27:3043–3049. doi: 10.1111/j.1460-9568.2008.06272.x.

- Lindgren JL, Gallagher M, Holland PC. Lesions of basolateral amygdala impair extinction of CS motivational value, but not of explicit conditioned responses, in Pavlovian appetitive second-order conditioning. Eur J Neurosci. 2003;17:160–166. doi: 10.1046/j.1460-9568.2003.02421.x.

- Lu L, Hope BT, Dempsey J, Liu SY, Bossert JM, Shaham Y. Central amygdala ERK signaling pathway is critical to incubation of cocaine craving. Nat Neurosci. 2005;8:212–219. doi: 10.1038/nn1383.

- Machado CJ, Bachevalier J. The effects of selective amygdala, orbital frontal cortex or hippocampal formation lesions on reward assessment in nonhuman primates. Eur J Neurosci. 2007;25:2885–2904. doi: 10.1111/j.1460-9568.2007.05525.x.

- Málková L, Gaffan D, Murray EA. Excitotoxic lesions of the amygdala fail to produce impairment in visual learning for auditory secondary reinforcement but interfere with reinforcer devaluation effects in rhesus monkeys. J Neurosci. 1997;17:6011–6020. doi: 10.1523/JNEUROSCI.17-15-06011.1997.

- Maren S, Quirk GJ. Neuronal signaling of fear memory. Nat Rev Neurosci. 2004;5:844–852. doi: 10.1038/nrn1535.

- McDannald MA, Saddoris MP, Gallagher M, Holland PC. Lesions of orbitofrontal cortex impair rats' differential outcome expectancy learning but not conditioned stimulus-potentiated feeding. J Neurosci. 2005b;25:4626–4632. doi: 10.1523/JNEUROSCI.5301-04.2005.

- McDannald M, Kerfoot E, Gallagher M, Holland PC. Amygdala central nucleus function is necessary for learning but not expression of conditioned visual orienting. Eur J Neurosci. 2005a;20:240–248. doi: 10.1111/j.0953-816X.2004.03458.x.

- McLaughlin RJ, Floresco SB. The role of different subregions of the basolateral amygdala in cue-induced reinstatement and extinction of food-seeking behavior. Neuroscience. 2007;146:1484–1494. doi: 10.1016/j.neuroscience.2007.03.025.

- Mobini S, Body S, Ho MY, Bradshaw CM, Szabadi E, Deakin JF, Anderson IM. Effects of lesions of the orbitofrontal cortex on sensitivity to delayed and probabilistic reinforcement. Psychopharmacology. 2002;160:290–298. doi: 10.1007/s00213-001-0983-0.

- Murray EA. The amygdala, reward and emotion. Trends Cogn Sci. 2007;11:489–497. doi: 10.1016/j.tics.2007.08.013.

- O'Doherty JP, Deichmann R, Critchley HD, Dolan RJ. Neural responses during anticipation of a primary taste reward. Neuron. 2002;33:815–826. doi: 10.1016/s0896-6273(02)00603-7.

- Ongür D, Price JL. The organization of networks within the orbital and medial prefrontal cortex of rats, monkeys and humans. Cereb Cortex. 2000;10:206–219. doi: 10.1093/cercor/10.3.206.

- Ostlund SB, Balleine BW. Orbitofrontal cortex mediates outcome encoding in pavlovian but not instrumental learning. J Neurosci. 2007;27:4819–4825. doi: 10.1523/JNEUROSCI.5443-06.2007.

- Padoa-Schioppa C, Assad JA. Neurons in orbitofrontal cortex encode economic value. Nature. 2006;441:223–226. doi: 10.1038/nature04676.

- Paton JJ, Belova MA, Morrison SE, Salzman CD. The primate amygdala represents the positive and negative value of visual stimuli during learning. Nature. 2006;439:865–870. doi: 10.1038/nature04490.

- Pearce JM, Hall G. A model for Pavlovian learning: variations in the effectiveness of conditioned but not of unconditioned stimuli. Psychol Rev. 1980;87:532–552.

- Pickens CL, Saddoris MP, Setlow B, Gallagher M, Holland PC, Schoenbaum G. Different roles for orbitofrontal cortex and basolateral amygdala in a reinforcer devaluation task. J Neurosci. 2003;23:11078–11084. doi: 10.1523/JNEUROSCI.23-35-11078.2003.

- Pickens CL, Saddoris MP, Gallagher M, Holland PC. Orbitofrontal lesions impair use of cue-outcome associations in a devaluation task. Behav Neurosci. 2005;119:317–322. doi: 10.1037/0735-7044.119.1.317.

- Rabinak CA, Orsini CA, Zimmerman JM, Maren S. The amygdala is not necessary for unconditioned stimulus inflation after Pavlovian fear conditioning in rats. Learn Mem. 2009;16:645–654. doi: 10.1101/lm.1531309.

- Rescorla RA. Summation and overexpectation with qualitatively different outcomes. Anim Learn Behav. 1999;27:50–62.

- Rescorla RA. Spontaneous recovery from overexpectation. Learn Behav. 2006;34:13–20. doi: 10.3758/bf03192867.

- Rescorla RA. Renewal from overexpectation. Learn Behav. 2007;35:19–26. doi: 10.3758/bf03196070.

- Rescorla RA, Wagner AR. A theory of Pavlovian conditioning: variations in the effectiveness of reinforcement and nonreinforcement. In: Black AH, Prokasy WF, editors. Classical conditioning II: current research and theory. New York: Appleton-Century-Crofts; 1972. pp. 64–99.

- Roesch MR, Calu DJ, Esber GR, Schoenbaum G. Neural correlates of variations in event processing during learning in basolateral amygdala. J Neurosci. 2010;30:2464–2471. doi: 10.1523/JNEUROSCI.5781-09.2010.

- Rudebeck PH, Walton ME, Smyth AN, Bannerman DM, Rushworth MF. Separate neural pathways process different decision costs. Nat Neurosci. 2006;9:1161–1168. doi: 10.1038/nn1756.

- Schoenbaum G, Chiba AA, Gallagher M. Orbitofrontal cortex and basolateral amygdala encode expected outcomes during learning. Nat Neurosci. 1998;1:155–159. doi: 10.1038/407.

- Schoenbaum G, Setlow B, Nugent SL, Saddoris MP, Gallagher M. Lesions of orbitofrontal cortex and basolateral amygdala complex disrupt acquisition of odor-guided discriminations and reversals. Learn Mem. 2003;10:129–140. doi: 10.1101/lm.55203.

- Schoenbaum G, Roesch MR, Stalnaker TA, Takahashi YK. A new perspective on the role of the orbitofrontal cortex in adaptive behaviour. Nat Rev Neurosci. 2009;10:885–892. doi: 10.1038/nrn2753.

- Shi CJ, Cassell MD. Cortical, thalamic, and amygdaloid connections of the anterior and posterior insular cortices. J Comp Neurol. 1998;399:440–468. doi: 10.1002/(sici)1096-9861(19981005)399:4<440::aid-cne2>3.0.co;2-1.

- Sutton RS, Barto AG. Reinforcement learning: an introduction. Cambridge, MA: Massachusetts Institute of Technology; 1998.

- Takahashi YK, Roesch MR, Stalnaker TA, Haney RZ, Calu DJ, Taylor AR, Burke KA, Schoenbaum G. The orbitofrontal cortex and ventral tegmental area are necessary for learning from unexpected outcomes. Neuron. 2009;62:269–280. doi: 10.1016/j.neuron.2009.03.005.

- Tremblay L, Schultz W. Relative reward preference in primate orbitofrontal cortex. Nature. 1999;398:704–708. doi: 10.1038/19525.

- Tye KM, Janak PH. Amygdala neurons differentially encode motivation and reinforcement. J Neurosci. 2007;27:3937–3945. doi: 10.1523/JNEUROSCI.5281-06.2007.

- Tye KM, Stuber GD, de Ridder B, Bonci A, Janak PH. Rapid strengthening of thalamo-amygdala synapses mediates cue-reward learning. Nature. 2008;453:1253–1257. doi: 10.1038/nature06963.

- Walker DL, Paschall GY, Davis M. Glutamate receptor antagonist infusions into the basolateral and medial amygdala reveal differential contributions to olfactory vs context fear conditioning and expression. Learn Mem. 2005;12:120–129. doi: 10.1101/lm.87105.

- Wellman LL, Gale K, Malkova L. GABAA-mediated inhibition of basolateral amygdala blocks reward devaluation in macaques. J Neurosci. 2005;25:4577–4586. doi: 10.1523/JNEUROSCI.2257-04.2005.

- Wilensky AE, Schafe GE, Kristensen MP, LeDoux JE. Rethinking the fear circuit: the central nucleus of the amygdala is required for the acquisition, consolidation, and expression of pavlovian fear conditioning. J Neurosci. 2006;26:12387–12396. doi: 10.1523/JNEUROSCI.4316-06.2006.

- Winstanley CA, Theobald DE, Cardinal RN, Robbins TW. Contrasting roles of basolateral amygdala and orbitofrontal cortex in impulsive choice. J Neurosci. 2004;24:4718–4722. doi: 10.1523/JNEUROSCI.5606-03.2004.