Abstract

Previous event-related potential research has suggested that the N170 component has a larger amplitude to faces and words than to other stimuli, but it remains unclear whether it indexes the same cognitive processes for faces and for words. The present study investigated how category-level repetition effects on the N170 differ across stimulus categories. Faces, cars, words, and non-words were presented in homogeneous (1 category) or mixed blocks (2 intermixed categories). We found a significant repetition effect on N170 amplitude for successively presented faces and cars (in homogeneous blocks), but not for words and unpronounceable consonant strings, suggesting that the N170 indexes different underlying cognitive processes for objects (including faces) and orthographic stimuli. The N170 amplitude was significantly smaller when multiple faces or multiple cars were presented in a row than when these stimuli were preceded by a stimulus of a different category. Moreover, the large N170 repetition effect for faces may be important to consider when comparing the relative N170 amplitude for different stimulus categories. Indeed, a larger N170 deflection for faces than for other stimulus categories was observed only when stimuli were preceded by a stimulus of a different category (in mixed blocks), suggesting that an enhanced N170 to faces may be more reliably observed when faces are presented within the context of some non-face stimuli.

Keywords: adaptation, habituation, event-related potentials, face processing, objects, words

Introduction

Since it was first observed that the N170 is larger for faces than for other object categories (Botzel et al., 1995; Bentin et al., 1996), many event-related potential (ERP) studies have attempted to clarify its associated cognitive processes (Bentin and Deouell, 2000; Eimer, 2000; Tanaka and Curran, 2001; Rossion et al., 2002, 2003). The face-sensitivity of the N170 has been interpreted as an indication of the existence of brain mechanisms specialized for face processing (Kanwisher and Yovel, 2006), or as the result of the higher level of expertise that typical adults have with faces than with other object categories (Tanaka and Curran, 2001; Rossion et al., 2002). Recently, it has been reported that the N170 can be of equal, or even larger, amplitude for written words than for faces (Mercure et al., 2008), although it differs in lateralization for these two stimulus categories (being larger over the left than the right hemiscalp when elicited by words, and tending to be larger over the right than the left hemiscalp when elicited by faces; Rossion et al., 2003; Maurer et al., 2008; Mercure et al., 2008). It has also been suggested that visual expertise plays a significant role in the establishment of the word-elicited N170 during reading acquisition (Maurer et al., 2005b, 2006). This raises the important issue of whether a high level of visual expertise is the common mechanism underlying the enhanced amplitude of the N170 elicited by both faces and words.

One indication that the N170 might index different cognitive processes for faces and for words comes from the recent observation that the N170 amplitude is smaller when multiple faces of different identities are presented in a row (compared to faces intermixed with words), while no such effect was found for words. Indeed, a recent ERP study compared the N170 amplitude to faces and words in two presentation conditions: blocks that alternated faces and words, and homogeneous blocks in which only one stimulus category was presented. Thirty-six different items of each category were presented. The results suggested that the face-elicited N170 is larger when face stimuli alternate with word stimuli than when only faces (of different identities) are presented. In contrast, the N170 did not change in amplitude when different words were successively presented (Maurer et al., 2008). This reduction in amplitude represents a repetition effect, also referred to as adaptation by some authors. Adaptation techniques rely on the phenomenon of decreased neural activity to repeated stimulus presentation (Wiggs and Martin, 1998; Henson, 2003; Grill-Spector et al., 2006) and have been widely used since the late 1990s in functional magnetic resonance imaging (fMRI) studies to probe the differential cognitive processes associated with different brain areas (Grill-Spector et al., 1999, 2006; Grill-Spector and Malach, 2001; Cohen Kadosh et al., 2010). It is assumed that a brain area sensitive to a specific aspect of a stimulus will show reduced responses when a series of stimuli sharing this characteristic is presented, whereas a change in the relevant stimulus aspect triggers a release from adaptation. For example, Winston et al.
(2004) found that repetition of emotional expression across different stimuli reduced activation in the anterior superior temporal sulcus, whereas repetition of face identity reduced activation in the fusiform gyrus and posterior temporal sulcus, suggesting that these areas are sensitive to facial expression and face identity, respectively. In ERPs, it has been observed that the N170 amplitude decreases when the same face stimulus (same identity) is repeated (Itier and Taylor, 2002, 2004; Henson et al., 2003; Heisz et al., 2006a,b; Jacques et al., 2007; Kovács et al., 2007). The paradigm used by Maurer et al. (2008) differs slightly from the most commonly used repetition paradigm in that it involves category-level repetition, as opposed to repetition of a specific item of a category or of a specific characteristic of a stimulus. In other words, specific stimuli are not repeated; instead, in some conditions, different stimuli from the same category are presented sequentially (Face A, Face B, Face C…) and compared with conditions in which they are mixed with stimuli of a different category (Face A, Word A, Face B, Word B…). The smaller amplitude observed for sequential presentation is attributed to the repetition of the stimulus category and is referred to as a category-level repetition effect. The results of Maurer et al.'s (2008) study suggest that while the N170 is sensitive to the inclusion of individual face stimuli into the category of faces, this type of category-level sensitivity is not observed for words. More generally, these results raise the hypothesis that the N170 reflects different underlying processing for words and for faces.

The present study investigated category-level repetition effects on the N170 in order to clarify how different stimulus categories are processed at this stage. It further aimed to confirm and extend the finding that the N170 shows a different pattern of category-level repetition effect for faces and for words. There are several possible explanations for Maurer et al.'s (2008) results, which our experiment can help to distinguish. One possibility is that a category-level repetition effect on the N170 is a special characteristic of face processing, which would support the claim that the N170 reflects face-sensitive cognitive mechanisms. This possibility is congruent with the results of ERP studies that have found an N170 repetition suppression effect to individual upright faces (Itier and Taylor, 2002; Henson et al., 2003; Heisz et al., 2006a,b; Jacques et al., 2007; Kovács et al., 2007), but not to inverted faces (Heisz et al., 2006a; Jacques et al., 2007). The N170 might show a larger repetition effect in response to the face category because upright faces represent a special class of objects with which adults have a high level of experience from very early in life. Maurer et al.'s (2008) results also suggest that the N170 repetition effect might be absent or weaker for the word category, a result that we hypothesize might be attributable to the fact that these stimuli are pronounceable. Participants might automatically retrieve the phonological form of each written word, which could sustain a high level of activity for words even when they are presented in series. Alternatively, the results of Maurer et al. (2008) could be an artifact of their experimental design. The authors suggest that the difference in repetition effect between faces and words might be explained by a difference in participants' familiarity with the stimuli. Indeed, they compared familiar, frequently encountered words with unfamiliar faces.
It is therefore possible that a category-level repetition effect is present only for unfamiliar stimuli. If this were the case, a category-level repetition effect should also be observed for unfamiliar alphabetic stimuli, such as consonant strings. The present study tested these hypotheses.

Moreover, the present study examined the impact of homogeneous versus mixed stimulus presentation on the relative amplitude of the N170 to faces, words, and other stimulus categories. It was previously observed that whether the N170 amplitude was larger for words or for faces was highly dependent on presentation conditions, including stimulus size, resolution, and presentation time (Mercure et al., 2008). A repetition effect in blocked presentation of faces, but not of words, might further influence the relative amplitude of the N170 to faces and words. This could have contributed to the fact that Rossion et al. (2003) found a larger N170 amplitude for faces than for words (with mixed stimulus presentation), whereas Mercure et al. (2008) generally found a larger N170 amplitude for words than for faces (with blocked stimulus presentation).

In the present study, four stimulus categories were presented to explore the category-level N170 repetition effect. Faces and words were presented to investigate whether we could reproduce a difference in the category-level repetition effect between these stimulus categories. Words were compared to unfamiliar and unpronounceable non-words to test how stimulus familiarity influences the category-level repetition effect, and whether retrieval of a phonological form helps maintain a large N170 amplitude when words are presented successively. Cars were presented as a non-face object category to test the hypothesis that the category-level repetition effect is specific to faces. Note that the current design only tests the impact of familiarity on alphabetic stimuli and does not allow the effects of familiarity and pronounceability to be distinguished. Two types of experimental blocks were presented: homogeneous blocks (1 stimulus category) and mixed blocks (2 stimulus categories). For each trial in the mixed blocks, we kept track of the stimulus category presented in the previous trial. Stimuli preceded by a stimulus of a different category served as the baseline condition, in which no repetition effect was expected. A significant repetition effect (a significant difference in N170 amplitude between stimuli in homogeneous blocks and the baseline condition) was expected for faces and possibly for cars and non-words. A short-term repetition effect could also be observed within mixed blocks, as a significant difference in N170 amplitude for stimuli preceded by a stimulus of the same versus a different category. This analysis aimed to clarify the time scale of the N170 repetition effect by testing whether two successive items of the same stimulus category were sufficient to create a significant repetition effect.
Jeffreys (1996) found that the presentation of two successive faces was sufficient to reduce the amplitude of the vertex positive potential (VPP), but it remains unclear whether the same effect would be observed for the N170 and whether it would also be observed for other stimulus categories. This within-block comparison also aimed to rule out the possibility, raised by Maurer and colleagues, that between-block differences in attention could explain the finding of a category-level repetition effect for faces but not for words. Finally, within each presentation condition, the amplitude of the N170 was compared across categories in order to examine the impact of the category-level repetition effect on the relative N170 amplitude for the different stimulus categories.

Materials and Methods

Participants

Eighteen adult participants aged between 18 and 31 years (mean age = 24 years; 9 females) with normal or corrected-to-normal vision were paid for their participation. No participant reported taking any psychoactive medication. The data from two participants were rejected because of excessive artifacts (see rejection criteria below), leaving 16 participants for analyses. This experiment was undertaken with the understanding and written consent of each participant and was approved by the Birkbeck ethics committee.

Stimuli

Stimuli were grayscale pictures of faces, cars, words, and non-words in a fixed-size gray rectangle (30 stimuli per category; see Figure 1 for examples of stimuli). These rectangles were 140 × 182 pixels (7.5 × 10 cm) and subtended 4.9° horizontally and 6.4° vertically at a viewing distance of 90 cm. Faces all depicted Caucasian females unfamiliar to the participants, with direct gaze and a neutral facial expression, displayed on a gray rectangle with the eyes at the center of the picture. Face stimuli were adapted from the face databases of the Centre for Brain and Cognitive Development, the Nim Stim Face Stimulus Set (neutral facial expression only)1, and the CVL Face Database (Solina et al., 2003). Car stimuli depicted grayscale cars occupying the center of a gray rectangle. Word and non-word stimuli were presented in uppercase black Arial font on a gray rectangle. Word stimuli were 5-letter nouns with 4–5 phonemes, 1–2 syllables, and one morpheme. Based on the MRC Psycholinguistic Database (Wilson, 1987), words were rated between 200 and 600 for familiarity, concreteness, and imageability (on a scale ranging from 100 to 700) and had eight or fewer orthographic neighbors. These words had a written frequency of occurrence between 20 and 150 per million words (Kucera and Francis, 1967). Non-words consisted of strings of five consonants matched to the consonants of the word stimuli for frequency of occurrence. Finally, a grayscale picture of a butterfly on a gray rectangle was used as the target stimulus. Whole images of each category (stimulus and background) were equated in luminance using average image luminosity in Photoshop (see Table 1). A Sekonic luminance meter pointed at the center of the image, but encompassing the whole image, revealed no significant difference in luminance between stimulus categories when presented on the screen in the testing room.
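The visual angles above follow from the standard relation θ = 2·arctan(s / 2d) for an object of size s centred on the line of sight at viewing distance d. A minimal Python sketch of this calculation, using the rectangle size and viewing distance given above (the function name is our own), is:

```python
import math


def visual_angle_deg(size_cm: float, distance_cm: float) -> float:
    """Full visual angle (degrees) subtended by an object centred on the line of sight."""
    return math.degrees(2 * math.atan(size_cm / (2 * distance_cm)))


if __name__ == "__main__":
    viewing_distance_cm = 90.0
    width_deg = visual_angle_deg(7.5, viewing_distance_cm)    # rectangle width
    height_deg = visual_angle_deg(10.0, viewing_distance_cm)  # rectangle height
    print(f"{width_deg:.2f} deg x {height_deg:.2f} deg")
```

With these rounded physical dimensions the formula gives about 4.8° by 6.4°; the slightly larger reported horizontal angle may reflect rounding of the physical width.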

Figure 1.

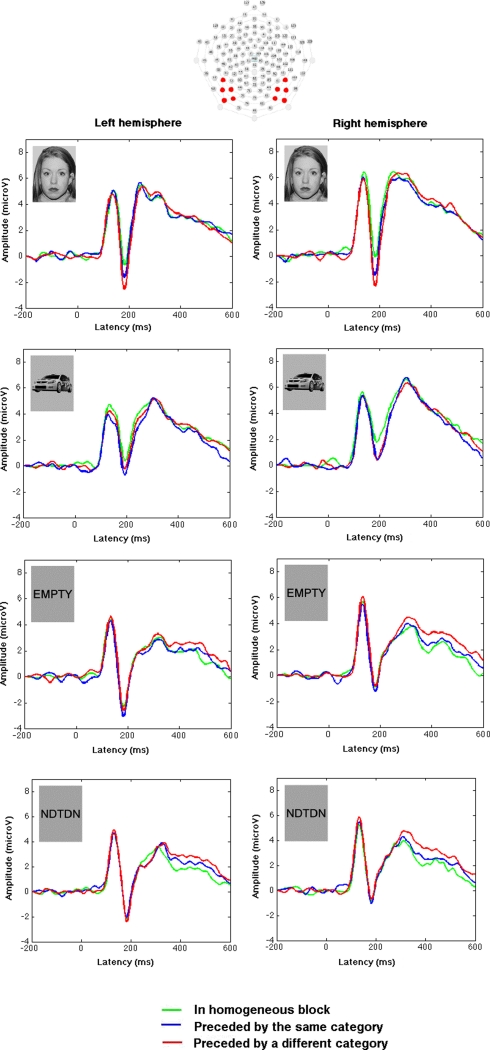

Grand average waveforms in the left and right occipitotemporal regions (channels: 58, 64, 65, 68, 69, 89, 90, 94, 95, 96) for faces, cars, words, and non-words in three presentation conditions: in homogeneous blocks, in mixed blocks when preceded by a stimulus of the same category (Mixed PrSame), or in mixed blocks when preceded by a stimulus of another category (Mixed PrOther). These waveforms are based on all trials included for analyses (see Materials and Methods) in each experimental category.

Table 1.

Detailed stimulus characteristics.

| | Faces | Cars | Words | Non-words |
|---|---|---|---|---|
| Degrees of visual angle of the stimulus within the image | 4° × 6° | 4.75° × 2.5° | 4.75° × 1° | 4.75° × 1° |
| Number of pixels of the stimulus within the image | 19684 | 6291 | 2319 | 1915 |
| Mean image luminosity (0–255 scale) ± SD | 152.2 ± 7.8 | 154.8 ± 1.3 | 153.8 ± 0.8 | 154.2 ± 1.0 |
| Mean background brightness (0–255 scale) | 204 | 177 | 162 | 162 |

Procedure

Participants performed a butterfly detection task, in which they pressed a button on a joystick whenever they recognized a butterfly. Ten blocks of stimuli were presented. Block order was fully randomized for each participant. Four blocks were "homogeneous" blocks, in which 60 stimuli of the same category (Faces, Cars, Words, or Non-Words) and 3 targets were presented2. Six blocks were "mixed" blocks, in which two stimulus categories were intermixed (Faces – Cars; Faces – Words; Faces – Non-Words; Cars – Words; Cars – Non-Words; Words – Non-Words), with 40 stimuli of one category, 40 stimuli of the other category, and 4 targets3. Of the 40 stimuli of a given category, approximately 20 were preceded by a stimulus of the same category, whereas approximately 20 were preceded by a stimulus of a different category. Over the whole experiment, each stimulus category was presented in three conditions [Stimulus in a mixed block preceded by a stimulus of another category (Mixed PrOther), Stimulus in a mixed block preceded by a stimulus of the same category (Mixed PrSame), Stimulus in a homogeneous block (Homogeneous)], with 54 to 64 trials in each condition (see Table 2 for a summary of experimental conditions). The PrOther condition included trials in which stimuli were preceded by any other stimulus category or by a butterfly. The first trial of each block was not included in these analyses. The total number of trials did not differ between stimulus categories (p = 0.4). Each trial began with the presentation of a white fixation cross on a black background for 1000 ms, followed by the stimulus in a gray rectangle on a black background for 500 ms (SOA = 1500 ms). The stimuli were displayed using E-Prime 1.2.
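The assignment of each analysed trial to a presentation condition can be made explicit with a short sketch. It assumes, as a simplification, that each block is available as an ordered list of category labels, with the hypothetical label "butterfly" marking targets. Target trials and the first trial of each block are left unlabelled, and a stimulus preceded by a butterfly counts as Mixed PrOther, as stated above. How trials following a target were treated in homogeneous blocks is not specified here, so the sketch simply labels them Homogeneous (an assumption).

```python
from collections import Counter
from typing import List, Optional

TARGET = "butterfly"  # hypothetical label used for target trials


def classify_trials(block: List[str], homogeneous: bool) -> List[Optional[str]]:
    """Label each trial of one block with its presentation condition.

    Returns None for trials excluded from the ERP analyses: the first
    trial of the block and the butterfly targets themselves.
    """
    labels: List[Optional[str]] = []
    for i, category in enumerate(block):
        if i == 0 or category == TARGET:
            labels.append(None)
        elif homogeneous:
            # Assumption: every analysed trial of a homogeneous block is
            # labelled "Homogeneous", even when it follows a target.
            labels.append("Homogeneous")
        elif block[i - 1] == category:
            labels.append("Mixed PrSame")
        else:
            # Preceded by the other category or by a butterfly target.
            labels.append("Mixed PrOther")
    return labels


def count_conditions(block: List[str], homogeneous: bool) -> Counter:
    """Count analysed trials per (category, condition) pair in one block."""
    return Counter(
        (category, label)
        for category, label in zip(block, classify_trials(block, homogeneous))
        if label is not None
    )


if __name__ == "__main__":
    mixed_block = ["face", "car", "car", TARGET, "face", "face"]
    for category, label in zip(mixed_block, classify_trials(mixed_block, homogeneous=False)):
        print(category, label)
    print(count_conditions(mixed_block, homogeneous=False))
```

When two equally frequent categories are randomly intermixed, roughly half of the analysed stimuli of each category follow a stimulus of the same category, which is in line with the approximately 20/20 split described above.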

Table 2.

Summary of experimental conditions.

| Stimulus | Presentation condition | Number of trials per block |
|---|---|---|
| Faces | Homogeneous (face presented in a homogeneous block) | 60 in a homogeneous block of faces |
| | Mixed PrSame (face preceded by a face within a mixed block) | ≈20 in a mixed block of faces and cars; ≈20 in a mixed block of faces and words; ≈20 in a mixed block of faces and non-words |
| | Mixed PrOther (face preceded by a car, word, non-word, or butterfly within a mixed block) | ≈20 in a mixed block of faces and cars; ≈20 in a mixed block of faces and words; ≈20 in a mixed block of faces and non-words |
| Cars | Homogeneous (car presented in a homogeneous block) | 60 in a homogeneous block of cars |
| | Mixed PrSame (car preceded by a car within a mixed block) | ≈20 in a mixed block of cars and faces; ≈20 in a mixed block of cars and words; ≈20 in a mixed block of cars and non-words |
| | Mixed PrOther (car preceded by a face, word, non-word, or butterfly within a mixed block) | ≈20 in a mixed block of cars and faces; ≈20 in a mixed block of cars and words; ≈20 in a mixed block of cars and non-words |
| Words | Homogeneous (word presented in a homogeneous block) | 60 in a homogeneous block of words |
| | Mixed PrSame (word preceded by a word within a mixed block) | ≈20 in a mixed block of words and faces; ≈20 in a mixed block of words and cars; ≈20 in a mixed block of words and non-words |
| | Mixed PrOther (word preceded by a face, car, non-word, or butterfly within a mixed block) | ≈20 in a mixed block of words and faces; ≈20 in a mixed block of words and cars; ≈20 in a mixed block of words and non-words |
| Non-words | Homogeneous (non-word presented in a homogeneous block) | 60 in a homogeneous block of non-words |
| | Mixed PrSame (non-word preceded by a non-word within a mixed block) | ≈20 in a mixed block of non-words and faces; ≈20 in a mixed block of non-words and cars; ≈20 in a mixed block of non-words and words |
| | Mixed PrOther (non-word preceded by a face, car, word, or butterfly within a mixed block) | ≈20 in a mixed block of non-words and faces; ≈20 in a mixed block of non-words and cars; ≈20 in a mixed block of non-words and words |

ERP recording and waveform analysis

The EEG signal was recorded using a 128-electrode HydroCel Geodesic Sensor Net with the vertex as reference; horizontal and vertical electro-oculograms were used to monitor eye movements. An EGI NetAmps 200 amplifier was used (gain = 1000); data were digitized at a sampling rate of 500 Hz and band-pass filtered between 0.1 and 100 Hz. Experimenters aimed to keep impedance below 100 kΩ for each channel by adding an electrolyte solution and by making sure the sensors were resting directly on the participant's skin.

Each trial was segmented from the continuous EEG data (from 200 ms before to 600 ms after stimulus onset). Segments with EEG exceeding ±100 μV or EOG exceeding ±55 μV were excluded. A channel was excluded from the whole recording if it was rejected in more than 20% of the trials. If more than 12 of the 128 channels were rejected, the trial was excluded from the average. The signal from rejected electrodes was replaced using the "bad channel replacement" algorithm in Netstation 4.2.4, which interpolates the signal of a rejected channel from the signal of the remaining channels using spherical splines. A minimum of 35 good trials in each stimulus category was required for a participant to be retained in the analyses.
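The rejection rules above can be expressed as a short sketch. It rests on several interpretive assumptions: that the ±100 μV criterion is applied per channel within each segment (so that channels, rather than whole trials, are marked as rejected), that an EOG excursion beyond ±55 μV rejects the whole trial, and that channels dropped from the whole recording count towards the 12-channel limit. The data layout and all names are hypothetical, and the spherical-spline interpolation performed in Netstation is not reproduced.

```python
from typing import Dict, List, Sequence, Set

EEG_LIMIT_UV = 100.0      # per-channel amplitude criterion
EOG_LIMIT_UV = 55.0       # eye-channel amplitude criterion
MAX_BAD_FRACTION = 0.20   # drop a channel rejected in more than 20% of trials
MAX_BAD_CHANNELS = 12     # drop a trial with more than 12 rejected channels
MIN_GOOD_TRIALS = 35      # minimum good trials per stimulus category

Trial = List[List[float]]  # [channel][sample], in microvolts


def exceeds(samples: Sequence[float], limit: float) -> bool:
    """True if any sample lies outside +/- limit."""
    return any(abs(v) > limit for v in samples)


def reject_trials(eeg: List[Trial], eog: List[Trial]) -> List[bool]:
    """Return True for each trial kept and False for each trial excluded."""
    n_trials, n_channels = len(eeg), len(eeg[0])
    # Channel-by-trial rejection flags from the EEG amplitude criterion.
    bad = [[exceeds(channel, EEG_LIMIT_UV) for channel in trial] for trial in eeg]
    # Channels rejected in more than 20% of trials are dropped from the whole recording.
    dropped: Set[int] = {
        c for c in range(n_channels)
        if sum(bad[t][c] for t in range(n_trials)) > MAX_BAD_FRACTION * n_trials
    }
    keep: List[bool] = []
    for t in range(n_trials):
        if any(exceeds(channel, EOG_LIMIT_UV) for channel in eog[t]):
            keep.append(False)  # eye-movement artifact
            continue
        rejected = dropped | {c for c in range(n_channels) if bad[t][c]}
        keep.append(len(rejected) <= MAX_BAD_CHANNELS)
    return keep


def participant_retained(keep: Sequence[bool], categories: Sequence[str]) -> bool:
    """True if every stimulus category has at least MIN_GOOD_TRIALS kept trials."""
    good: Dict[str, int] = {cat: 0 for cat in categories}
    for kept, cat in zip(keep, categories):
        if kept:
            good[cat] += 1
    return all(n >= MIN_GOOD_TRIALS for n in good.values())
```

In a kept trial, channels still marked as rejected would subsequently be interpolated from their neighbours, a step this sketch leaves out.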

Waveforms were baseline-corrected using the 200-ms pre-stimulus interval. Averages were computed for each participant in each experimental condition, and the data were re-referenced to the average of all channels. Based on visual inspection of the grand average, a montage of electrodes was created over the occipitotemporal regions where the P1 and N170 components were maximal (channels 58, 64, 65, 68, 69, 89, 90, 94, 95, 96; see Figure 1), which is similar to earlier observations (Rossion et al., 2003; Bentin et al., 2006). Based on visual inspection of the individual data, the P1 time window was defined as 76–168 ms and the N170 time window as 128–206 ms. These time windows were centered on the peak of the component of interest and were sufficiently restricted to avoid including time points from adjacent components of the same polarity in each individual (Handy, 2005). The resulting time windows are generally congruent with the P1 and N170 time windows described in the literature (Rossion et al., 2003; Maurer et al., 2005a; Halit et al., 2006; Mercure et al., 2008). The peak amplitude of each component within its time window was extracted for each participant, condition, and electrode. To reduce the number of levels in the statistical analyses, these peak amplitudes were then averaged across all channels, separately for the left and the right occipitotemporal montage. Analyses of the P1 amplitude aimed to ensure that differences in N170 amplitude could not be attributed to between-condition differences in the amplitude of this earlier component, which has a very similar topography and the opposite polarity.
P1 and N170 peak amplitudes were each analyzed in a repeated-measures multivariate analysis of variance (MANOVA) with the factors Stimulus Category (Faces, Cars, Words, Non-Words), Presentation Condition [Stimulus in a mixed block preceded by a stimulus of another category (Mixed PrOther), Stimulus in a mixed block preceded by a stimulus of the same category (Mixed PrSame), Stimulus in a homogeneous block (Homogeneous)], and Hemisphere (Left, Right). The same MANOVA was also performed on the P1–N170 peak-to-peak difference. The PrOther condition can be considered the baseline, in which no repetition effect was expected, since the stimuli were preceded by a stimulus of a different category. This baseline condition was compared with the PrSame condition to study the impact of a single repetition of stimulus category, and with the Homogeneous condition to study the impact of multiple repetitions of stimulus category. The term "repetition effect" used throughout this manuscript refers to a significant difference in N170 amplitude between a condition in which stimulus category was repeated (PrSame or Homogeneous) and the baseline condition in which there was no repetition of stimulus category (PrOther).
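The amplitude measures described above (baseline correction, peak picking within a fixed window, averaging across a montage, and the P1–N170 peak-to-peak difference) can be sketched as follows. The sketch assumes averaged, already re-referenced waveforms sampled at 500 Hz from −200 ms, in microvolts, with one list per channel of one hemisphere's montage; all names are hypothetical and it is not the software actually used.

```python
from typing import List, Sequence, Tuple

SAMPLING_RATE_HZ = 500
EPOCH_START_MS = -200.0
P1_WINDOW_MS = (76.0, 168.0)
N170_WINDOW_MS = (128.0, 206.0)


def sample_times_ms(n_samples: int) -> List[float]:
    """Latency of each sample relative to stimulus onset."""
    step = 1000.0 / SAMPLING_RATE_HZ
    return [EPOCH_START_MS + i * step for i in range(n_samples)]


def baseline_correct(wave: Sequence[float]) -> List[float]:
    """Subtract the mean of the pre-stimulus samples (t < 0 ms)."""
    times = sample_times_ms(len(wave))
    pre = [v for t, v in zip(times, wave) if t < 0]
    offset = sum(pre) / len(pre)
    return [v - offset for v in wave]


def peak_in_window(wave: Sequence[float], window: Tuple[float, float], positive: bool) -> float:
    """Most positive (P1) or most negative (N170) value within the window."""
    times = sample_times_ms(len(wave))
    values = [v for t, v in zip(times, wave) if window[0] <= t <= window[1]]
    return max(values) if positive else min(values)


def montage_measures(waves: Sequence[Sequence[float]]) -> Tuple[float, float, float]:
    """Return (P1 peak, N170 peak, P1-N170 peak-to-peak), averaged over channels."""
    p1 = [peak_in_window(w, P1_WINDOW_MS, positive=True) for w in waves]
    n170 = [peak_in_window(w, N170_WINDOW_MS, positive=False) for w in waves]
    p1_mean = sum(p1) / len(p1)
    n170_mean = sum(n170) / len(n170)
    # Averaging per-channel peak-to-peak values gives the same result as this difference.
    return p1_mean, n170_mean, p1_mean - n170_mean
```

Because averaging is linear, computing the peak-to-peak difference per channel and then averaging gives the same value as subtracting the montage-averaged peaks.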

Results

P1 amplitude

The MANOVA described above was performed on the peak amplitude of the P1 (see Table 3 for detailed statistics). Only the main effect of stimulus category was significant, and post hoc t-tests revealed that the P1 was larger for faces than for the other stimulus categories [Faces versus Cars: t(15) = 2.83; p = 0.013; Faces versus Words: t(15) = 3.28; p = 0.005; Faces versus Non-Words: t(15) = 3.50; p = 0.003]. These P1 differences could be explained by the fact that the face stimuli occupied a larger part of the whole image than the other stimulus categories, or by the slightly lighter mean background brightness for faces than for the other categories (see Table 1).

Table 3.

Statistical results of a 4 categories × 3 presentation conditions × 2 hemispheres repeated-measures MANOVA on the peak amplitude of the P1, N170, and P1 to N170 peak-to-peak difference.

| Effect | P1: F | P1: p (ηp²) | N170: F | N170: p (ηp²) | P1–N170: F | P1–N170: p (ηp²) |
|---|---|---|---|---|---|---|
| Category | F(3, 13) = 3.7 | 0.040* (0.460) | F(3, 13) = 4.0 | 0.033* (0.478) | F(3, 13) = 4.0 | 0.031* (0.483) |
| Presentation | F(2, 14) = 1.1 | 0.362 | F(2, 14) = 7.3 | 0.007* (0.510) | F(2, 14) = 3.8 | 0.047* (0.354) |
| Hemisphere | F(1, 15) = 2.6 | 0.131 | F(1, 15) = 2.6 | 0.126 | F(1, 15) < 0.1 | 0.993 |
| Category × presentation | F(6, 10) = 1.1 | 0.445 | F(6, 10) = 6.7 | 0.005* (0.801) | F(6, 10) = 3.8 | 0.032* (0.693) |
| Category × hemisphere | F(3, 13) = 2.3 | 0.120 | F(3, 13) = 2.8 | 0.083 | F(3, 13) = 1.0 | 0.446 |
| Presentation × hemisphere | F(2, 14) = 0.1 | 0.918 | F(2, 14) = 0.8 | 0.490 | F(2, 14) = 1.5 | 0.247 |
| Category × presentation × hemisphere | F(6, 10) = 2.0 | 0.173 | F(6, 10) = 1.0 | 0.484 | F(6, 10) = 1.3 | 0.340 |

* Indicates p-values lower than 0.05, which were considered significant; the partial eta squared (ηp²) is given in parentheses for significant effects.
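For an effect with degrees of freedom df_effect and df_error, partial eta squared can be recovered from the F ratio as ηp² = F·df_effect / (F·df_effect + df_error). The values in Table 3 agree with this relation to within rounding, as the short Python check below illustrates; residual differences of a few thousandths are expected because the F values are reported to one decimal. The function and table names are our own.

```python
def partial_eta_squared(f_value: float, df_effect: int, df_error: int) -> float:
    """Partial eta squared recovered from an F ratio and its degrees of freedom."""
    return (f_value * df_effect) / (f_value * df_effect + df_error)


# (effect, F, df_effect, df_error, partial eta squared as reported in Table 3)
TABLE_3_SIGNIFICANT = [
    ("P1: Category", 3.7, 3, 13, 0.460),
    ("N170: Category", 4.0, 3, 13, 0.478),
    ("N170: Presentation", 7.3, 2, 14, 0.510),
    ("N170: Category x presentation", 6.7, 6, 10, 0.801),
    ("P1-N170: Category", 4.0, 3, 13, 0.483),
    ("P1-N170: Presentation", 3.8, 2, 14, 0.354),
    ("P1-N170: Category x presentation", 3.8, 6, 10, 0.693),
]

if __name__ == "__main__":
    for effect, f, df1, df2, reported in TABLE_3_SIGNIFICANT:
        recomputed = partial_eta_squared(f, df1, df2)
        print(f"{effect}: recomputed {recomputed:.3f}, reported {reported:.3f}")
```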

N170 amplitude

The MANOVA described above was also performed on the peak amplitude of the N170 (see Table 3 for detailed statistics). Significant main effects of stimulus category and of presentation condition were found, as well as a highly significant interaction between these two factors. Separate MANOVAs for each stimulus category revealed that presentation condition influenced the amplitude of the N170 for faces [F(2, 14) = 11.80; p = 0.001] and cars [F(2, 14) = 5.84; p = 0.014], but not for words [F(2, 14) = 1.80; p = 0.202] or non-words [F(2, 14) = 0.245; p = 0.786]. Thus, no N170 repetition effect was found for words or non-words.

Post hoc t-tests revealed that the N170 was larger for faces preceded by another category (Mixed PrOther) than for faces presented in homogeneous blocks [Homogeneous; t(15) = 4.47; p < 0.001; 15 of 16 participants showed data congruent with this effect] or for faces preceded by a face within mixed blocks [Mixed PrSame; t(15) = 4.10; p < 0.001; 14 of 16 participants showed data congruent with this effect]. The mean repetition effect was −0.95 μV (95% confidence interval = −1.44, −0.46) for two successive faces (Face Mixed PrOther versus Face Mixed PrSame) and −1.91 μV (95% confidence interval = −2.83, −1.00) for multiple successive faces (Face Mixed PrOther versus Face Homogeneous). These results suggest that a highly significant N170 repetition effect occurred when faces were presented sequentially, and that the presentation of two successive faces was sufficient to create this effect. For cars, the N170 was larger when presented in a mixed block than in a homogeneous block, but the preceding stimulus had no influence within mixed blocks [Car Mixed PrSame versus Car Homogeneous: t(15) = 3.33; p = 0.005; 12 of 16 participants showed data congruent with this effect; Car Mixed PrOther versus Car Homogeneous: t(15) = 3.27; p = 0.005; 12 of 16 participants showed data congruent with this effect; Car Mixed PrSame versus Car Mixed PrOther: t(15) = 1.04; p = 0.313; 8 of 16 participants showed data congruent with this effect]. The mean repetition effect was −1.20 μV (95% confidence interval = −1.98, −0.41) for multiple successive cars (Car Mixed PrOther versus Car Homogeneous), but was not significant for two successive cars (Car Mixed PrOther versus Car Mixed PrSame; mean difference = −0.26 μV; 95% confidence interval = −0.78, +0.27).
This suggests that a significant N170 repetition effect was present for the car category, but that it required more than two sequential items, unlike the face repetition effect, which was also significant when only two sequential faces were presented. This difference in repetition effect was confirmed by a significant Stimulus Category × Presentation Condition interaction [F(2, 14) = 6.5; p = 0.010] in a separate MANOVA including only faces and cars.
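The 95% confidence intervals and paired t tests reported above rest on the same quantities: for per-participant differences with mean d̄ and standard error SE, t = d̄/SE and the interval is d̄ ± t_crit·SE, with t_crit ≈ 2.131 for 15 degrees of freedom (16 participants). The Python sketch below, whose names are our own, computes both from a list of differences and, conversely, backs out the t value implied by a reported interval. It assumes the intervals were t-based and symmetric; applied to the values above, it yields t statistics within about 0.03 of those reported, as expected from rounding.

```python
import math
from statistics import mean, stdev
from typing import Sequence, Tuple

T_CRIT_DF15 = 2.131  # two-tailed 5% critical value of t with 15 degrees of freedom


def paired_summary(diffs: Sequence[float], t_crit: float = T_CRIT_DF15) -> Tuple[float, Tuple[float, float], float]:
    """Mean difference, 95% CI, and paired t statistic for per-participant differences.

    t_crit must match len(diffs) - 1 degrees of freedom.
    """
    m = mean(diffs)
    se = stdev(diffs) / math.sqrt(len(diffs))
    return m, (m - t_crit * se, m + t_crit * se), m / se


def t_from_ci(mean_diff: float, lower: float, upper: float, t_crit: float = T_CRIT_DF15) -> float:
    """Back out the t statistic implied by a symmetric, t-based 95% CI."""
    se = (upper - lower) / (2 * t_crit)
    return mean_diff / se


# (contrast, mean difference, CI lower, CI upper, reported |t|)
REPORTED = [
    ("Faces: Mixed PrOther vs Mixed PrSame", -0.95, -1.44, -0.46, 4.10),
    ("Faces: Mixed PrOther vs Homogeneous", -1.91, -2.83, -1.00, 4.47),
    ("Cars: Mixed PrOther vs Homogeneous", -1.20, -1.98, -0.41, 3.27),
    ("Cars: Mixed PrOther vs Mixed PrSame", -0.26, -0.78, 0.27, 1.04),
]

if __name__ == "__main__":
    for contrast, m, lo, hi, t_rep in REPORTED:
        print(f"{contrast}: implied |t| = {abs(t_from_ci(m, lo, hi)):.2f}, reported {t_rep:.2f}")
```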

All interactions and main effects involving Hemisphere were non-significant (see Table 3 for detailed statistics). However, as previously observed (Rossion et al., 2003; Maurer et al., 2008; Mercure et al., 2008), the Category × Hemisphere interaction was significant when only face and word stimuli were analyzed [F(1, 15) = 8.6; p = 0.010]. This result was driven by a larger N170 in the left than in the right hemisphere for words [t(15) = −2.5; p = 0.022], while no significant difference was observed between the N170 in the left and right hemispheres for faces [t(15) = −0.1; p = 0.921]. This result suggests that these two stimulus categories differ in lateralization. No significant interaction was found between Presentation Condition and Hemisphere, or between Category, Presentation Condition, and Hemisphere, suggesting that the present repetition effect did not reliably influence lateralization.

Analyzing how the different presentation conditions modified the relative amplitude of the N170 for the different stimulus categories required breaking down the significant Stimulus Category × Presentation Condition interaction in a separate MANOVA for each presentation condition. This analysis revealed that the amplitude of the N170 tended to be influenced by stimulus category for stimuli preceded by another category within mixed blocks [Mixed PrOther; F(3, 13) = 3.3; p = 0.056]. In this presentation condition, faces elicited the largest N170, but this difference was only significant relative to cars [Faces versus Cars: t(15) = 3.2; p = 0.006; Faces versus Words: t(15) = 1.6; p = 0.126; Faces versus Non-Words: t(15) = 1.7; p = 0.102]. For stimuli preceded by a stimulus of the same category within mixed blocks (Mixed PrSame), the N170 amplitude was again influenced by stimulus category [F(3, 13) = 3.9; p = 0.035], but in this presentation condition the N170 was (or tended to be) larger for orthographic stimuli than for faces [Faces versus Words: t(15) = 1.7; p = 0.116; Faces versus Non-Words: t(15) = 0.5; p = 0.647], and even the difference between faces and cars failed to reach significance [Faces versus Cars: t(15) = 1.8; p = 0.091]. Finally, a significant effect of stimulus category [F(3, 13) = 4.1; p = 0.029] was observed for stimuli in homogeneous blocks [F(3, 13) = 5.1; p = 0.015]. Post hoc t-tests revealed that in this presentation condition, orthographic stimuli elicited a larger N170 than faces [Faces versus Words: t(15) = 2.2; p = 0.040; Faces versus Non-Words: t(15) = 2.4; p = 0.027], while the N170 was not significantly larger for faces than for cars [t(15) = 1.4; p = 0.194]. The fact that the P1 amplitude was larger for faces than for the other stimulus categories could have influenced these results by reducing the amplitude of the face-elicited N170.
In order to clarify this matter, the amplitude of the negative deflection was assessed in a P1 to N170 peak-to-peak analysis.

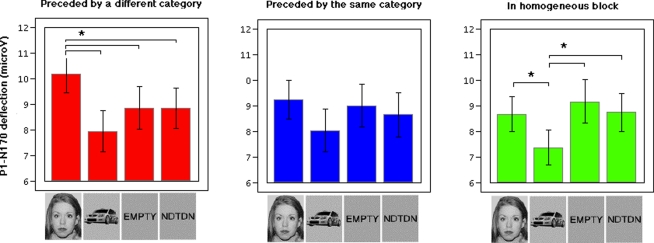

P1-N170 peak-to-peak amplitude

A 4 Stimulus Categories × 3 Presentation Conditions × 2 Hemispheres repeated-measures MANOVA was performed on the amplitude of the P1 to N170 peak-to-peak difference (see Table 3 for detailed statistics and Figure 2 for illustration). As for the N170 peak amplitude, significant main effects of stimulus category and of presentation condition were observed, as well as a significant interaction between stimulus category and presentation condition. Separate MANOVAs for each presentation condition revealed that the amplitude of the P1–N170 deflection was strongly influenced by stimulus category for stimuli preceded by another category within mixed blocks [Mixed PrOther; F(3, 13) = 9.0; p = 0.002]. In this presentation condition, faces elicited a larger P1–N170 deflection than all other stimulus categories [Faces versus Cars: t(15) = 5.2; p < 0.001; Faces versus Words: t(15) = 2.8; p = 0.013; Faces versus Non-Words: t(15) = 3.1; p = 0.007]. No influence of stimulus category was observed on the amplitude of the P1–N170 deflection for stimuli preceded by a stimulus of the same category within mixed blocks [Mixed PrSame; F(3, 13) = 1.5; p = 0.261]. Finally, a significant effect of stimulus category [F(3, 13) = 4.1; p = 0.029] was observed for stimuli in homogeneous blocks. In the Homogeneous presentation condition, all other categories elicited a larger deflection than cars, but there was no difference between faces and words or between faces and non-words [Faces versus Cars: t(15) = 2.2; p = 0.041; Words versus Cars: t(15) = 3.1; p = 0.007; Non-Words versus Cars: t(15) = 2.4; p = 0.031; Faces versus Words: t(15) = 0.6; p = 0.539; Faces versus Non-Words: t(15) = 0.1; p = 0.910].

Figure 2.

Average of individual P1–N170 deflection (or peak-to-peak difference) for each stimulus category, in each presentation condition.

Discussion

This experiment investigated the category-level repetition effect on the N170 and how this effect differed across stimulus categories. The results confirmed Maurer et al.’s (2008) finding of a smaller N170 amplitude when faces were presented successively than when they were mixed with other categories, whereas no such category-level repetition effect was present for words. The present study extended and clarified Maurer et al.’s findings in three ways. A first novel finding was that the presentation of two successive faces was sufficient to create a significant N170 repetition effect. In the current design, the Homogeneous and Mixed PrOther conditions were presented in different experimental blocks, over the course of which the N170 amplitude could have varied in different ways. It is therefore difficult to interpret the repetition effects obtained in this contrast in terms of suppression and/or enhancement. The most likely explanation is that the smaller N170 amplitude for faces in the Homogeneous than in the Mixed PrOther condition reflects a decrease in N170 amplitude over the course of the homogeneous block (a repetition suppression effect). From these data alone, however, it is impossible to completely rule out the alternative explanation that the N170 showed less enhancement over the course of the homogeneous block than over the course of the mixed block. The contrast between Mixed PrSame and Mixed PrOther, which is a within-block comparison, does not suffer from this limitation. The smaller N170 amplitude for faces in Mixed PrSame than in Mixed PrOther can be interpreted more confidently as a repetition suppression effect, since these two conditions were randomly intermixed within the same experimental blocks and differed only in whether the stimulus category was repeated.
This within-block comparison also ruled out the possibility that the difference between the category-level repetition suppression effects for faces and for words resulted from between-block differences in attention level. A second new finding was that a category-level repetition effect was observed for a non-face object category (cars), but this effect was weaker than the face repetition effect and required more successive items of the category to emerge. Unfortunately, the data collected in the present study do not allow us to determine how many successive cars would be necessary to create a significant repetition effect; this question could be addressed in a follow-up study. A third new finding was that orthographic stimuli did not elicit a category-level repetition effect, whether they were pronounceable (words) or not (consonant strings). This rules out the possibility that retrieval of the phonological form helped to sustain the N170 amplitude to sequentially presented words. Moreover, neither words (familiar, meaningful, and frequently encountered alphabetic stimuli) nor consonant strings (unfamiliar and meaningless alphabetic strings) showed a repetition effect, suggesting that the difference in the category-level repetition effect between words and faces is unlikely to be attributable to a difference in stimulus familiarity.
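The within-block contrast described above amounts to labeling every trial of a mixed block by the category of the trial that preceded it. A minimal Python sketch of that bookkeeping follows; the block-type strings, the treatment of the first trial of a mixed block (left unlabeled here, since it has no predecessor), and the omission of target trials are assumptions for illustration, not the study's documented procedure. The sketch also records each trial's position within a run of same-category items, the kind of variable a follow-up study would need in order to ask how many successive cars are required for a repetition effect.

```python
def label_trials(block_type, categories):
    """Assign a presentation condition to each trial of one block.

    block_type: "homogeneous" or "mixed" (hypothetical labels).
    categories: stimulus category of each trial, in presentation order.
    Returns (category, condition, run_position) tuples, where run_position
    counts consecutive same-category trials up to and including this one.
    """
    labels = []
    previous = None
    run_position = 0
    for category in categories:
        run_position = run_position + 1 if category == previous else 1
        if block_type == "homogeneous":
            condition = "Homogeneous"
        elif previous is None:
            condition = None  # assumption: first mixed-block trial is left unlabeled
        elif category == previous:
            condition = "Mixed PrSame"
        else:
            condition = "Mixed PrOther"
        labels.append((category, condition, run_position))
        previous = category
    return labels


# Hypothetical mixed block with two intermixed categories.
for trial in label_trials("mixed", ["face", "car", "car", "face", "face", "face"]):
    print(trial)
# ('face', None, 1)
# ('car', 'Mixed PrOther', 1)
# ('car', 'Mixed PrSame', 2)
# ('face', 'Mixed PrOther', 1)
# ('face', 'Mixed PrSame', 2)
# ('face', 'Mixed PrSame', 3)
```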

Henson (2003) suggested that neural repetition suppression occurs when the same cognitive processes are performed on the first and second presentations of a stimulus, whereas an increase in activity can occur when stimulus repetition causes a new process to be performed on the second presentation. For example, repetition of individual faces elicits a reduction in fusiform gyrus activation when the faces are familiar (Henson et al., 2000, 2003), whereas it elicits an increase in the activation of this area when the faces are unfamiliar (Henson et al., 2000). According to the authors, this repetition enhancement for unfamiliar faces can be explained by a change in the cognitive processes applied to the first and second presentations of previously unknown individual faces. Indeed, the initial presentation of an unfamiliar face might be sufficient to form a face representation, which would allow recognition at the individual level when this face is repeated.

Following Henson's hypothesis (Henson, 2003), it could be argued that category-level repetition suppression occurs when the same processes are applied to sequential items of a stimulus category, but not when different cognitive processes are applied to them. In accordance with this idea, the smaller N170 amplitude for sequentially presented faces could reflect the repetition of the cognitive processes underlying the N170 for each subsequent face. The face-elicited N170 is thought to reflect the structural encoding of face stimuli (Bentin and Deouell, 2000; Eimer, 2000). Since all faces share the same first-order configuration (two eyes above a nose above a mouth; Maurer et al., 2002), the presentation of one face configuration might prime (or facilitate) the structural encoding of subsequent faces, resulting in reduced activity at the level of the N170. In contrast, previous studies showed that the N170 elicited by words could be larger than that elicited by symbol strings or pseudowords in a one-back task, suggesting that it might index the processes of visual word form recognition (Maurer et al., 2005a). Since different words do not share a common configuration that differs from that of pseudowords or symbol strings, the presentation of one word might not facilitate access to the visual word form of a different, unrelated word. Each retrieval of a visual word form would represent a new search, and a sequence of unrelated words would not facilitate the individual searches. Unlike faces, which can be categorized as such on the basis of a first-order configuration, each word would need to be read or recognized in order to be categorized as a word. As a consequence, the N170 amplitude would remain unaffected by the sequential presentation of words. However, it could be predicted that the repetition of an individual word would facilitate the second retrieval of that particular visual word form.
Indeed, repetition of individual words has been observed to elicit repetition suppression effects on ERP components as early as the N170 (Holcomb and Grainger, 2006).

Like faces, cars have a first-order configuration that could prime the encoding of subsequent stimuli of the same category. However, this priming effect might be weaker than that elicited by upright faces because of the special expertise typical adults have with the face configuration. A difference in the construction of the face and car stimuli offers an alternative explanation. Because the car stimuli differed slightly in orientation whereas the face stimuli did not, the items of the car category were probably visually more heterogeneous than the items of the face category. It has been observed that the N170 amplitude can be reduced by interstimulus pixelwise heterogeneity caused by differences in size, orientation, and position (Thierry et al., 2007), although this issue is controversial (Bentin et al., 2007; Rossion and Jacques, 2008). According to the hypothesis of Thierry et al. (2007), the slight orientation differences among the car stimuli could potentially explain why the amplitude of the N170 was larger for faces than for cars in the present study. However, it is unclear how (and whether) these differences in interstimulus pixelwise heterogeneity could also affect the N170 repetition effect. The variability in the orientation of the car stimuli may have reduced the priming power of the car configuration by requiring mental rotation to a canonical configuration. Indeed, various behavioral measures, including priming studies, have suggested that object processing might be position- and viewpoint-dependent (Schyns, 1998; Kravitz et al., 2008). Although this factor could potentially explain the difference in the size of the repetition effect between faces and cars, it cannot account for the absence of a repetition effect for orthographic stimuli.
Since words and non-words were all five letters long and never varied in size or orientation, the items of these categories were at least as visually similar to one another as the items of the face category. Despite this low interstimulus pixelwise heterogeneity, orthographic stimuli did not show any repetition effect. Low heterogeneity alone is therefore not sufficient to produce a category-level repetition effect, which suggests that the difference between the repetition effects for faces and for cars may not be entirely explained by differences in interstimulus pixelwise heterogeneity. Further studies are required to better understand the respective influences of object orientation, stimulus heterogeneity, and expertise with a stimulus category on the category-level N170 repetition effect. Nevertheless, the results of the present study suggest that the N170 indexes different cognitive mechanisms for objects (including faces) and orthographic stimuli.
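To make the notion of interstimulus pixelwise heterogeneity concrete, the following Python sketch computes one simple proxy: the mean Euclidean distance between all pairs of equally sized grayscale images within a category. This proxy is an illustrative assumption and is not necessarily the measure used by Thierry et al. (2007); the toy 4 × 4 images and the use of horizontal position shifts (rather than rotations) as the source of variability are likewise hypothetical. Identical items give a heterogeneity of zero, whereas the same item shown at slightly different positions gives a positive value, which is the sense in which small geometric variations make a category more heterogeneous.

```python
import math
from itertools import combinations


def mean_pairwise_pixel_distance(images):
    """Mean Euclidean distance over all pairs of equally sized grayscale images.

    images: list of 2-D lists (rows of pixel intensities)."""
    flat = [[px for row in img for px in row] for img in images]
    distances = [math.dist(a, b) for a, b in combinations(flat, 2)]
    return sum(distances) / len(distances)


def shift_right(img, k):
    """Shift an image k pixels to the right, filling vacated pixels with 0."""
    return [[0] * k + row[: len(row) - k] for row in img]


# Toy 4 x 4 "category exemplar": a vertical bar (hypothetical, not study stimuli).
template = [[0, 1, 1, 0] for _ in range(4)]

aligned = [template, template, template]
shifted = [template, shift_right(template, 1), shift_right(template, 2)]

print(mean_pairwise_pixel_distance(aligned))            # 0.0
print(round(mean_pairwise_pixel_distance(shifted), 3))  # 2.764
```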

The present results also showed that homogeneous versus mixed stimulus presentation can be a crucial factor in determining the relative amplitude of the N170 to different stimulus categories. Many studies have found that the N170 is larger for faces than for other stimulus categories (e.g., Rossion et al., 2003). In the present study, however, the N170 deflection was larger for faces than for other stimulus categories only when stimuli were preceded by a stimulus of a different category. In other words, the category-level repetition effect for faces was strong enough to significantly reduce the amplitude of the face-elicited N170 deflection relative to other stimulus categories, an effect observed both on N170 peak amplitudes and on the P1 to N170 peak-to-peak difference. As in Mercure et al. (2008), the N170 deflection was larger for words than for faces when stimuli were blocked by category. When absolute N170 amplitudes were considered, there was even a tendency for the N170 to be larger for words than for faces when stimuli were preceded by the same category within mixed blocks (Mixed PrSame). These results highlight the importance of taking homogeneous/mixed presentation conditions into account when comparing the relative amplitude of the N170 to different stimulus categories across studies. The relative amplitude of the N170 for faces and other stimulus categories has also been shown to depend on a complex interaction of factors such as stimulus presentation size and resolution (Mercure et al., 2008) and the amount of interstimulus pixelwise heterogeneity within each category (Thierry et al., 2007). Thus, the N170 can be larger for faces or for words depending on the exact presentation conditions.
More importantly, the results of the present study suggest that the “special” amplitude of the N170 for faces may be more reliably observed when faces are presented within the context of non-face stimuli. The unanticipated presentation of a face might elicit increased cortical activity at the level of the N170, possibly via a subcortical face-detection pathway (Johnson, 2005). The activation of this subcortical route might be considerably reduced when many faces are presented in a row and their presentation therefore becomes expected.

The present study also replicates the difference in N170 lateralization observed for words and faces. In the present study, as in previous ones (Rossion et al., 2003; Maurer et al., 2008; Mercure et al., 2008), a larger N170 was found in the left than in the right hemisphere for words, whereas the N170 was not significantly lateralized for faces. This N170 lateralization effect could be the ERP analog of the lateralized activation of the fusiform gyrus for the same stimulus categories. Functional magnetic resonance imaging (fMRI) studies have revealed an area of the left fusiform gyrus that is sensitive to written words (Cohen et al., 2002; Cohen and Dehaene, 2004), whereas a face-sensitive area of the fusiform gyrus shows stronger activation, and is more consistently found, in the right than in the left hemisphere (Kanwisher, 2006). Nevertheless, the important differences in temporal and spatial resolution between the ERP and fMRI techniques call for caution in directly comparing these two signals.

To conclude, the results of the present study suggest that the N170 indexes different cognitive mechanisms for objects (including faces) and orthographic stimuli. Although a high level of visual expertise might influence the general amplitude of the N170 (Tanaka and Curran, 2001; Rossion et al., 2002), expertise for faces and for words seems to be reflected differently in the N170. Because of these differences in the category-level repetition effect, homogeneous/mixed stimulus presentation significantly influenced the relative amplitude of the N170 to different stimulus categories: only faces presented within the context of non-face stimuli yielded a larger N170 deflection than other stimulus categories. More research is required to better characterize the changes in processing that occur when faces and words are presented successively. An interesting avenue for further research would be to compare ERP repetition effects for specific aspects of these stimulus categories, such as identity, configuration, or features. While the present results clarified the repetition effect elicited by categorization processes, comparing the ERP repetition effects that occur when individual faces and individual words are repeated might allow a better understanding of the ERP signatures of face and word processing.

Conflict of Interest Statement

The authors declare that the research was conducted in the absence of any commercial or financial relationships that could be construed as a potential conflict of interest.

Acknowledgments

We would like to thank Bruce McCandliss and Urs Maurer for fruitful discussions on this subject, as well as Orsola Rosa Salva for help with testing participants. This work was supported by MRC grants G9715587 to Mark Johnson and G0400341 to Frederic Dick, and by an Economic and Social Research Council Postdoctoral Fellowship (PTA-026-27-2329) to Kathrin Cohen Kadosh.

Footnotes

1. Development of the MacBrain Face Stimulus Set was overseen by Nim Tottenham and supported by the John D. and Catherine T. MacArthur Foundation Research Network on Early Experience and Brain Development. Please contact Nim Tottenham at tott0006@tc.umn.edu for more information concerning the stimulus set.

2. Thirty individual stimuli were presented twice per block. The order of all 63 items (60 stimuli + 3 targets) was randomized for each participant. Immediate repetitions of individual stimuli could have occurred, but their expected frequency was around one per block. A pilot study of 12 blocks established that the frequency of immediate stimulus repetition was 0.7 repetitions per block, with a maximum of 2 repetitions observed in a block. In theory, immediate stimulus repetitions could lower the N170 amplitude for a stimulus category, but it is very unlikely that 0–2 trials out of 60 had a major impact on the averaged ERPs.
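The estimate of around one immediate repetition per block can be checked with a short calculation, assuming that the 63 items were shuffled uniformly at random and that the 3 targets were distinct from each other and from the 30 stimuli. The two copies of a given stimulus occupy a uniformly random pair among 63 × 62 / 2 = 1953 possible position pairs, of which 62 are adjacent, so the probability of an immediate repetition for that stimulus is 62/1953 = 2/63. Summing over the 30 stimuli gives an expected 30 × 2/63 = 20/21 ≈ 0.95 immediate repetitions per block. The Python sketch below computes this exactly and cross-checks it with a seeded Monte Carlo simulation; the function names and simulation settings are hypothetical.

```python
import random
from fractions import Fraction


def expected_immediate_repeats(n_stimuli=30, n_targets=3):
    """Exact expected number of immediate repetitions per block when each of
    n_stimuli items appears twice and is shuffled with n_targets distinct items."""
    n_items = 2 * n_stimuli + n_targets
    n_pairs = n_items * (n_items - 1) // 2  # unordered position pairs
    n_adjacent = n_items - 1                 # pairs of neighboring positions
    # Linearity of expectation: sum P(two copies adjacent) over all stimuli.
    return n_stimuli * Fraction(n_adjacent, n_pairs)


def simulate_immediate_repeats(n_stimuli=30, n_targets=3, n_blocks=20_000, seed=1):
    """Monte Carlo estimate of the same quantity using seeded uniform shuffles."""
    rng = random.Random(seed)
    items = [f"s{i}" for i in range(n_stimuli)] * 2 + [f"t{i}" for i in range(n_targets)]
    total = 0
    for _ in range(n_blocks):
        rng.shuffle(items)
        # Count neighboring positions that hold the same stimulus.
        total += sum(a == b for a, b in zip(items, items[1:]))
    return total / n_blocks


print(expected_immediate_repeats())                  # 20/21
print(f"{float(expected_immediate_repeats()):.3f}")  # 0.952
print(f"{simulate_immediate_repeats():.3f}")         # close to 0.952
```

The exact expectation of about 0.95 agrees with the estimate of around one repetition per block; the pilot value of 0.7 is an empirical average over only 12 blocks and would be expected to fluctuate around this value.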

3. Of these 40 trials, 30 were different items (different “identities” of the same category), 10 of which were randomly selected to be presented twice per block, with each item being repeated in only one block throughout the experiment. Stimuli were presented in one of two pseudorandom orders. No individual stimulus repetitions were presented.

References

- Bentin S., Allison T., Puce A., Perez E., McCarthy G. (1996). Electrophysiological studies of face perception in humans. J. Cogn. Neurosci. 8, 551–565

- Bentin S., Deouell L. Y. (2000). Structural encoding and identification in face processing: ERP evidence for separate mechanisms. Cogn. Neuropsychol. 17, 35–54

- Bentin S., Golland Y., Flevaris A., Robertson L. C., Moscovitch M. (2006). Processing the trees and the forest during initial stages of face perception: electrophysiological evidence. J. Cogn. Neurosci. 18, 1406–1421

- Bentin S., Taylor M. J., Rousselet G. A., Itier R. J., Caldara R., Schyns P., Jacques C., Rossion B. (2007). Controlling interstimulus perceptual variance does not abolish N170 face sensitivity. Nat. Neurosci. 10, 801–802 10.1038/nn0707-801

- Botzel K., Schulze S., Stodieck S. R. G. (1995). Scalp topography and analysis of intracranial sources of face-evoked potentials. Exp. Brain Res. 104, 135–143

- Cohen L., Dehaene S. (2004). Specialization within the ventral stream: the case for the visual word form area. Neuroimage 22, 466–476 10.1016/j.neuroimage.2003.12.049

- Cohen L., Lehericy S., Chochon F., Lemer C., Rivaud S., Dehaene S. (2002). Language-specific tuning of visual cortex? Functional properties of the visual word form area. Brain 125, 1054–1069 10.1093/brain/awf094

- Cohen Kadosh K., Henson R. N. A., Cohen Kadosh R., Johnson M. H., Dick F. (2010). Task-dependent activation of face-sensitive cortex: an fMRI adaptation study. J. Cogn. Neurosci. 22, 903–917

- Eimer M. (2000). Event-related brain potentials distinguish processing stages involved in face perception and recognition. Clin. Neurophysiol. 111, 694–705 10.1016/S1388-2457(99)00285-0

- Grill-Spector K., Henson R., Martin A. (2006). Repetition and the brain: neural models of stimulus-specific effects. Trends Cogn. Sci. 10, 14–23

- Grill-Spector K., Kushnir T., Edelman S., Avidan G., Itzchak Y., Malach R. (1999). Differential processing of objects under various viewing conditions in the human lateral occipital complex. Neuron 24, 187–203 10.1016/S0896-6273(00)80832-6

- Grill-Spector K., Malach R. (2001). fMR-Adaptation: a tool for studying the functional properties of human cortical neurons. Acta Psychol. 107, 293–332 10.1016/S0001-6918(01)00019-1

- Halit H., de Haan M., Schyns P. G., Johnson M. H. (2006). Is high-spatial frequency information used in the early stages of face detection? Brain Res. 1117, 154–161

- Handy T. C. (2005). Event-related Potentials. A Methods Handbook. Cambridge, MA: The MIT Press

- Heisz J. J., Watter S., Shedden J. M. (2006a). Automatic face identity encoding at the N170. Vision Res. 46, 4604–4614 10.1016/j.visres.2006.09.026

- Heisz J. J., Watter S., Shedden J. M. (2006b). Progressive N170 habituation to unattended repeated faces. Vision Res. 46, 47–56 10.1016/j.visres.2005.09.028

- Henson R. (2003). Neuroimaging studies of priming. Prog. Neurobiol. 70, 53–81

- Henson R., Goshen-Gottstein Y., Ganel T., Otten L. J., Quayle A., Rugg M. D. (2003). Electrophysiological and haemodynamic correlates of face perception, recognition and priming. Cereb. Cortex 13, 793–805 10.1093/cercor/13.7.793

- Henson R., Shallice T., Dolan R. (2000). Neuroimaging evidence for dissociable forms of repetition priming. Science 287, 1269–1272 10.1126/science.287.5456.1269

- Holcomb P. J., Grainger J. (2006). On the time course of visual word recognition: an event-related potential investigation using masked repetition priming. J. Cogn. Neurosci. 18, 1631–1643

- Itier R., Taylor M. J. (2004). Effects of repetition learning on upright, inverted and contrast-reversed face processing using ERPs. Neuroimage 21, 1518–1532 10.1016/j.neuroimage.2003.12.016

- Itier R. J., Taylor M. (2002). Inversion and contrast polarity reversal affect both encoding and recognition processes of unfamiliar faces: a repetition study using ERPs. Neuroimage 15, 353–372 10.1006/nimg.2001.0982

- Jacques C., d'Arripe O., Rossion B. (2007). The time course of the inversion effect during individual face discrimination. J. Vis. 7, 1–9

- Jeffreys D. A. (1996). Evoked potential studies of face and object processing. Vis. Cogn. 3, 1–38

- Johnson M. H. (2005). Subcortical face processing. Nat. Rev. Neurosci. 6, 766–774

- Kanwisher N. (2006). What's in a face? Science 311, 617–618 10.1126/science.1123983

- Kanwisher N., Yovel G. (2006). The fusiform face area: a cortical region specialized for the perception of faces. Philos. Trans. R. Soc. Lond. B Biol. Sci. 361, 2109–2128

- Kovács G., Zimmer M., Harza I., Vidnyánszky Z. (2007). Adaptation duration affects the spatial selectivity of facial aftereffects. Vision Res. 47, 3141–3149

- Kravitz D. J., Vinson L. D., Baker C. I. (2008). How position dependent is visual object recognition? Trends Cogn. Sci. 12, 114–122

- Kucera H., Francis N. (1967). Computational Analysis of Present-day American English. Providence, RI: Brown University Press

- Maurer D., Le Grand R., Mondloch C. J. (2002). The many faces of configural processing. Trends Cogn. Sci. 6, 255–260

- Maurer U., Brandeis D., McCandliss B. (2005a). Fast, visual specialization for reading in English revealed by the topography of the N170 ERP response. Behav. Brain Funct. 1, 13

- Maurer U., Brem S., Bucher K., Brandeis D. (2005b). Emerging neurophysiological specialization for letter strings. J. Cogn. Neurosci. 17, 1532–1552 10.1162/089892905774597218

- Maurer U., Brem S., Kranz F., Bucher K., Benz R., Halder P., Steinhausen H.-C., Brandeis D. (2006). Coarse neural tuning for print peaks when children learn to read. Neuroimage 33, 749–758 10.1016/j.neuroimage.2006.06.025

- Maurer U., Rossion B., McCandliss B. D. (2008). Category specificity in early perception: face and word N170 responses differ in both lateralization and habituation properties. Front. Hum. Neurosci. 2:18 10.3389/neuro.09.018.2008

- Mercure E., Dick F., Halit H., Kaufman J., Johnson M. H. (2008). Differential lateralization for words and faces: category or psychophysics? J. Cogn. Neurosci. 20, 2070–2087

- Rossion B., Gauthier I., Goffaux V., Tarr M. J., Crommelinck M. (2002). Expertise training with novel objects leads to left-lateralized facelike electrophysiological responses. Psychol. Sci. 13, 250–257

- Rossion B., Jacques C. (2008). Does physical interstimulus variance account for early electrophysiological face sensitive responses in the human brain? Ten lessons on the N170. Neuroimage 39, 1959–1979 10.1016/j.neuroimage.2007.10.011

- Rossion B., Joyce C. A., Cottrell G. W., Tarr M. J. (2003). Early lateralization and orientation tuning for face, word, and object processing in the visual cortex. Neuroimage 20, 1609–1624 10.1016/j.neuroimage.2003.07.010

- Schyns P. G. (1998). Diagnostic recognition: task constraints, object information, and their interactions. Cognition 67, 147–179 10.1016/S0010-0277(98)00016-X

- Solina F., Peer P., Batagelj B., Juvan S., Kovač J. (2003). Color-based face detection in the “15 seconds of fame” art installation. Paper Presented at the Mirage 2003: Conference on Computer Vision/Computer Graphics Collaboration for Model-based Imaging, Rendering, Image Analysis and Graphical Special Effects, Rocquencourt, France

- Tanaka J. W., Curran T. (2001). A neural basis for expert object recognition. Psychol. Sci. 12, 43–47

- Thierry G., Martin C. D., Downing P., Pegna A. J. (2007). Controlling for interstimulus perceptual variance abolishes N170 face selectivity. Nat. Neurosci. 10, 505–511

- Wiggs C. L., Martin A. (1998). Properties and mechanisms of perceptual priming. Curr. Opin. Neurobiol. 8, 227–233

- Wilson M. (1987). MRC Psycholinguistic Database: Machine Usable Dictionary, version 2.00.

- Winston J. S., Henson R., Fine-Goulden M. R., Dolan R. (2004). fMRI-adaptation reveals dissociable neural representations of identity and expression in face perception. J. Neurophysiol. 92, 1830–1839 10.1152/jn.00155.2004