Abstract

Emblems are meaningful, culturally-specific hand gestures that are analogous to words. In this fMRI study, we contrasted the processing of emblematic gestures with meaningless gestures by pre-lingually Deaf and hearing participants. Deaf participants, who used American Sign Language, activated bilateral auditory processing and associative areas in the temporal cortex to a greater extent than the hearing participants while processing both types of gestures relative to rest. The hearing non-signers activated a diverse set of regions, including those implicated in the mirror neuron system, such as premotor cortex (BA 6) and inferior parietal lobule (BA 40) for the same contrast. Further, when contrasting the processing of meaningful to meaningless gestures (both relative to rest), the Deaf participants, but not the hearing, showed greater response in the left angular and supramarginal gyri, regions that play important roles in linguistic processing. These results suggest that whereas the signers interpreted emblems to be comparable to words, the non-signers treated emblems as similar to pictorial descriptions of the world and engaged the mirror neuron system.

Keywords: ASL, Deaf, gestures, emblems, fMRI, meaningful, meaning, normal hearing, non-signer

1. Introduction

Emblems are stand-alone hand gestures that convey semantic information and are analogous to spoken words (Ekman and Friesen, 1969). Commonly occurring examples of emblems are the ‘thumbs-up’ sign (meaning ‘success’ or ‘good-job’) and the ‘thumbs-down’ sign (meaning ‘failure’ or ‘poor-job’). However, emblems differ from spoken words or signs of a manual language because they do not occur in syntactic sequences (Venus and Canter, 1987) or exhibit morphology (Aronoff et al., 2005). Emblematic gestures have been characterized as socially regulated signs that are one step removed from a full-fledged sign language (Kendon, 1988; McNeill, 1992). Because emblems are produced by both Deaf signers and non-signing, hearing speakers and are meaningful to both groups, they provide a means to investigate the common elements of perceiving gestures and recognizing linguistic signs, thus allowing us to speculate about links between gestural processing and language. The aim of the present study was to dissociate the processing of meaningful emblematic gestures from meaningless gestures in both hearing non-signers and Deaf signers using functional magnetic resonance imaging (fMRI).

In McNeill’s (1992) framework of gestures and their relationship to speech, speech-accompanying gesticulations occur at one extreme, followed by language-like-gestures that are transformed into pantomimes, then emblems and finally sign languages. As we progress from gesticulation to sign languages along this continuum, the presence of speech declines, the presence of language properties increases and idiosyncratic gestures are transformed into socially regulated signs. All languages, including manual languages such as American Sign Language (ASL), share a system which incorporates segmentation, composition, lexicon, syntax, arbitrariness, standards of form and a community of users (see Klima and Bellugi, 1979). In Arbib’s (2005) conceptualization of language development, manual gestures, including pantomimes and emblematic gestures, provided the seed or scaffolding for development of vocal language. In whichever manner language arose, emblems, which are understood by both hearing and Deaf populations, provide a means to test common gestural processing capabilities of the two populations.

Semantic processing of meaningful gestures may be related to semantic processing of both words and pictures. In a PET study, Decety et al. (1997) investigated the processing of meaningful and meaningless action sequences by hearing non-signers. The subjects were asked to watch the pantomimed gestures with the intention of recognizing or imitating the gestures. There were differences in brain activation patterns due to the stimulus-type (whether meaningful or meaningless) and the task required of the participants. Processing of meaningful pantomimes activated a left fronto-temporal network of regions whereas processing of meaningless action sequences activated a right occipito-parietal pathway. Recognizing actions led to the activation of memory encoding systems whereas observing with the intent to imitate led to the activation of brain regions involved in preparing and initiating motor actions. In an ERP study, Gunter and Bach (2004) had subjects categorize emblematic and closely-matched, made-up, gestures as being meaningful or meaningless in order to determine the semantic processing of emblems. The researchers focused on the N300 (reflecting stimulus-specific processing) and N400 (reflecting amodal semantic processing) components of the evoked potentials to determine the differences between the processing of the two types of gestures. Compared to meaningless gestures, emblems showed a reduced N400, an effect the authors argued is similar to that elicited by words when compared to pseudo-words (Bentin et al., 1985). Further, the N300 component elicited for emblems, although it was prolonged, showed some of the characteristics of picture-related semantic processing (e.g. Hamm et al., 2002). The authors concluded that meaningful hand gestures such as emblems, although more pictorial than words, may access a common semantic system. However, the investigators did not explicitly contrast processing of meaningful gestures with word processing in the same experiment. 
A more recent ERP study (Wu and Coulson, 2011) contrasted gestural processing with pictorial interpretation. Wu and Coulson determined that both depictive gestures and photographs of real-world objects evoked similar ERPs around 300 ms and between 400 and 600 ms in normal-hearing non-signers; there were differences in the ERPs between dynamic and static gestures, with the latter having a stronger effect at 300 ms. In contrast, Xu et al. (2009) found common activation patterns for gestures (pantomimes, emblems) and spoken glosses of these gestures in the left-lateralized perisylvian language-processing areas in hearing non-signers. It appears therefore that, for hearing non-signers, processing of meaningful gestures may engage language or word processing networks, with a few studies alluding to engagement of the pictorial representation network.

A few brain imaging studies have investigated processing of emblems by Deaf signers. In a companion fMRI study (Husain et al., 2009), we investigated neural correlates of four types of gestures, varying along linguistic and semantic dimensions: (1) linguistic and meaningful ASL, (2) non-meaningful pseudo-ASL, (3) meaningful emblematic, and (4) nonlinguistic, meaningless, made-up gestures. The last two are part of the current study and their processing is discussed in the current article and contrasted with the processing in hearing non-signers. The results of the previous study provided partial support for the conceptualization of different gestures as belonging to a continuum (McNeill, 2005) and the variance in the fMRI results was best explained by differences in the processing of gestures along the semantic dimension. We found that brain activation patterns for the nonlinguistic, non-meaningful gestures (meaningless gestures of the present study) were the most different compared to the ASL gestures. The activation patterns for the emblems were most similar to those of the ASL gestures and those of the pseudo-ASL were most similar to the meaningless gestures, with the first two types of gestures resulting in greater response in bilateral middle and superior temporal gyri compared to the last two types. Corina et al. (2007) investigated passive viewing of three types of gestures, including linguistic signs, intransitive self-oriented actions, and transitive object-oriented actions in Deaf signers and hearing non-signers using PET. Although the study did not explicitly investigate emblematic gestural processing, the study provides important evidence about similarities and differences in gestural processing among signers and non-signers. The non-signers engaged a frontal/parietal/superior-temporal-sulcus network for recognizing all three types of gestures.
The Deaf signers distinguished the linguistic ASL gestures from the non-linguistic gestures, with the latter invoking response in the middle occipital temporal-ventral regions rather than the network engaged by the non-signers.

The goal of the present study was to characterize differences and similarities in the processing of meaningful and meaningless gestures by both hearing and Deaf participants. Are the neural correlates of processing emblematic gestures similar in hearing non-signers and Deaf signers? If they are different, is the difference illustrative of how gestures or linguistic units are processed in non-signers and signers? We attempted to answer these questions by comparing processing of both meaningful and meaningless gestures in signers and non-signers. Based on our previous work (Husain et al., 2009) and the work of others (Corina et al., 2007) that compared processing of linguistic signs and gestures in the Deaf, we presumed that emblematic, but not meaningless, gestures would be treated as linguistic units. We also know from the Decety et al. (1997) and Gunter and Bach (2004) studies that meaningful gestures are treated possibly as words and perceived differently than meaningless gestures, in hearing non-signers. Therefore we hypothesized that both signers and non-signers would interpret emblems as words and engage linguistic processing.

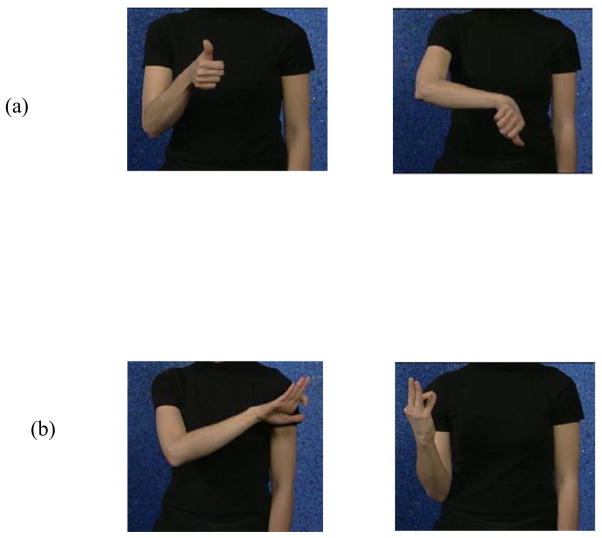

The emblematic meaningful gestures used in the current study were “thumbs-up” and “thumbs-down” gestures understood by all participants. The meaningless gestures were not part of any sign language, particularly American Sign Language, known by the Deaf participants and are further described in the Methods section (Figure 1) and our previous paper (Husain et al., 2009). We expected differences in the fMRI activation patterns for the meaningless gestures in the two groups: the meaningless gestures would be treated as pseudo-words by the signing group and as body movements by the hearing non-signing group. Contrasting the processing of meaningful and meaningless gestures would allow us to identify the contribution of meaning to gestural processing, for both signers and non-signers.

Figure 1.

Pictures of the two types of stimuli: (a) meaningful emblematic and (b) meaningless gestures.

The subjects performed a visual or identity discrimination (IDN) and a category discrimination (CAT) task employing pairs of exemplars of these stimulus types. We have previously used similar tasks (Husain et al., 2006; Husain et al., 2009) to probe differences in levels of processing and their associated neural correlates for the same set of stimuli. The CAT task would allow the participants to process the gestures at a whole ‘word’ or semantic-level of analysis, whereas the IDN task would likely engage neural sources involved in ‘phonological-level’ of processing for signers and possibly at a visuo-spatial processing level for non-signers. In our previous work (Husain et al., 2009) with the same group of signers as in this study, but with a larger set of gestural types, we found that the CAT task resulted in a greater response in the left ventral middle and inferior frontal gyrus, among other regions, compared to the IDN task, in concordance with the idea of semantic processing. The reverse comparison of IDN > CAT task resulted in increased response in bilateral intraparietal sulcus, supporting the idea of feature-based or phonological-level processing of gestures. Note that these data were obtained from Deaf signers; hearing non-signers may show different results.

2. Results

Hearing non-signers and Deaf signers were imaged and their behavioral responses recorded during an fMRI study. Participants watched videos of hand gestures and performed one of two tasks: IDN or identity discrimination task (are the two gestures identical?) and CAT or category discrimination task (do the two gestures belong to the same category?). See Figure 2 for the timeline of individual trials and a scanning session.
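The two task questions can be made concrete with a minimal sketch. It assumes that each video clip can be described by a category label and a clip identifier; the `Gesture` class, its field names and the example values are hypothetical and are not taken from the study materials.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Gesture:
    category: str  # hypothetical category label, e.g. "thumbs-up"
    exemplar: str  # hypothetical identifier of one specific video clip


def idn_response(first: Gesture, second: Gesture) -> bool:
    """IDN task: are the two gestures identical?"""
    return first == second


def cat_response(first: Gesture, second: Gesture) -> bool:
    """CAT task: do the two gestures belong to the same category?"""
    return first.category == second.category


# Two different clips of the same (hypothetical) category.
a = Gesture("thumbs-up", "clip-01")
b = Gesture("thumbs-up", "clip-02")
print(idn_response(a, b), cat_response(a, b))
```

Under these assumptions the same pair of clips calls for different correct answers in the two tasks, which is why the tasks can probe different levels of processing with identical stimuli.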

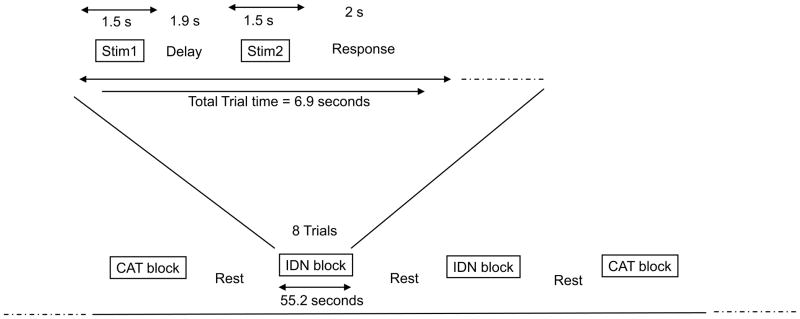

Figure 2.

Timeline of a trial and a scanning session.

2.1 Behavioral Results

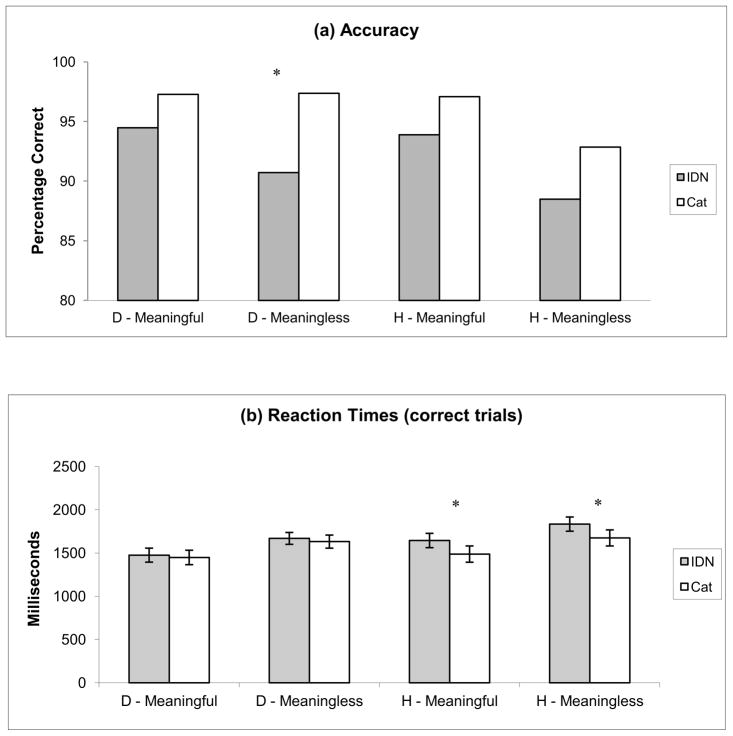

The behavioral results are shown in Figure 3. The reaction time and accuracy were analyzed for 16 hearing and 13 Deaf subjects (the behavioral responses of one Deaf subject were not recorded due to technical problems). We performed a 3-way ANOVA (group, task, stimulus condition) for both accuracy and reaction times. The analysis of correct responses showed a main effect of task type (F [1,108] = 15.5, p=0.0001) and stimulus condition (F [1,108] = 6.89, p=0.009) but not of group (F [1,108] = 2.93, p = 0.09). Individual comparisons of tasks for a given stimulus type revealed a trend of the CAT task being more accurate than the IDN task; this was significant (p<0.05) for the Deaf group for the meaningless stimulus type and almost significant for the hearing group for the meaningful condition. The analysis of reaction times of correct trials showed a main effect of stimulus condition (F [1, 108] = 9.5, p = 0.003), but not of task or group. All participants showed the following trend – the reaction times for the CAT task were shorter than for the IDN task for all stimulus types. This trend was significant (p<0.05) for the hearing group for both stimulus types.
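One accounting that reproduces the reported degrees of freedom, F [1,108], is sketched below. It assumes that each subject contributed one summary value per task and stimulus cell and that the model was a full factorial ANOVA with all observations treated as independent; the text does not state either point, so this is an illustration and not a description of the analysis actually run.

```python
# Subjects with behavioral data, as reported in the text.
n_hearing, n_deaf = 16, 13

# Number of levels of each factor in the 3-way ANOVA.
levels = {"group": 2, "task": 2, "stimulus": 2}

# Assumption: one summary value per subject for each task x stimulus cell.
n_observations = (n_hearing + n_deaf) * levels["task"] * levels["stimulus"]

# Assumption: full factorial model, so one fitted mean per design cell.
n_cells = levels["group"] * levels["task"] * levels["stimulus"]

# Numerator df for each main effect is (number of levels - 1).
df_effect = {name: k - 1 for name, k in levels.items()}

# Error df is the number of observations minus the number of cell means.
df_error = n_observations - n_cells

print(df_effect, df_error)
```

With 29 subjects and four within-subject cells this gives 116 observations and 8 cell means, hence 1 and 108 degrees of freedom for each main effect.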

Figure 3.

Behavioral results: (a) accuracy and (b) reaction times of correct trials. D: Deaf, H: Hearing. Asterisks denote significant results of paired t-tests for the IDN and CAT tasks.

2.2 fMRI Results

The results reported here are for data from sixteen hearing and twelve Deaf subjects. Of the fifteen Deaf participants enrolled in the study, data from two were excluded from the analysis due to excessive head motion (defined as ≥4 mm in translation and ≥2 degrees in rotation) in any one of the scan runs, and one Deaf participant did not complete the study.
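The exclusion rule can be sketched as a simple check on per-run motion estimates. The function names, the use of peak absolute displacement, and the example values are assumptions made for illustration; the sketch also reads the criterion literally, requiring both the translation and the rotation limit to be reached, although the text could equally be read as either one.

```python
def exceeds_motion_limit(translations_mm, rotations_deg,
                         max_translation_mm=4.0, max_rotation_deg=2.0):
    """Return True if one run reaches both motion limits quoted in the text."""
    # Assumption: motion is summarized by the peak absolute displacement.
    peak_translation = max(abs(v) for v in translations_mm)
    peak_rotation = max(abs(v) for v in rotations_deg)
    # Literal reading of the criterion: translation AND rotation limits.
    return (peak_translation >= max_translation_mm
            and peak_rotation >= max_rotation_deg)


def exclude_subject(runs):
    """Exclude a subject if any single run exceeds the motion limits."""
    return any(exceeds_motion_limit(t, r) for t, r in runs)


# Hypothetical motion estimates for two runs of one subject.
runs = [
    ([0.3, -0.8, 1.1], [0.2, -0.4, 0.1]),   # well within limits
    ([0.5, -4.2, 1.0], [0.1, 2.5, -0.3]),   # reaches both limits
]
print(exclude_subject(runs))
```

Applying the rule per run, and excluding on any failing run, follows the phrase "in any one of the scan runs".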

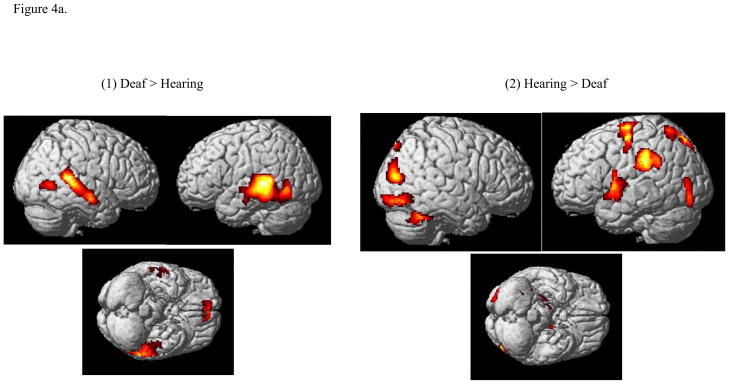

Across groups

In the first analysis, in order to consider the effects of gestural processing in general, no distinction was made between categorical and visual discrimination tasks. We performed a two-way ANOVA with group and stimulus conditions as factors. The positive effect of the Deaf signing group compared to the hearing group resulted in bilateral activation of secondary auditory cortex (superior and middle temporal gyri) and the inferior temporal gyrus. See Figure 4a and Table 1. The positive effect of hearing group compared to the Deaf group resulted in a predominantly left lateralized activation pattern. The clusters within this pattern were in the following regions: inferior parietal lobule, precentral and postcentral gyri, middle and superior frontal gyri, extrastriate regions including the lingual gyrus, precuneus, occipital gyrus and parahippocampal gyrus.

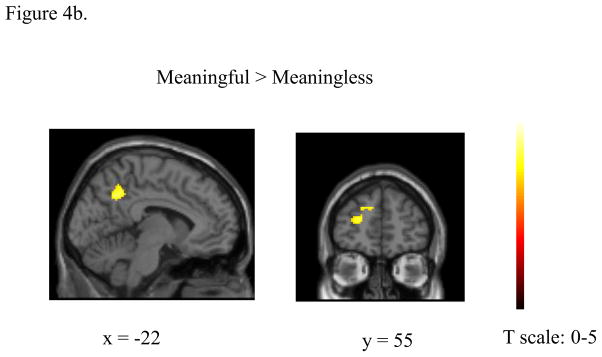

Figure 4.

Statistical parametric maps computed using two-way ANOVA (with stimulus and group as factors) rendered on surface of a template brain. (a) Effect of group. Results from (1) Deaf>Hearing contrast and (2) Hearing > Deaf contrast. (b) Effect of stimulus types. Results from the MEANINGFUL>MEANINGLESS contrast. The reverse contrast (MEANINGLESS>MEANINGFUL) resulted in 0 suprathreshold voxels and is not shown here. The results were corrected for cluster-wise multiple comparisons (p<0.05 FWE).

Table 1.

Effect of the group factor from the ANOVA analysis.

| Contrast | MNI x | MNI y | MNI z | Z score | Cluster size | Gyrus (Brodmann area) |
|---|---|---|---|---|---|---|
| (a) Deaf>Hearing | −50 | −32 | 2 | 6.92 | 1330 | Superior temporal gyrus (BA 22), middle temporal gyrus (BA 22, 37) |
| | −64 | −40 | 2 | 6.04 | | |
| | −50 | −40 | −6 | 5.49 | | |
| | −42 | 12 | 50 | 6.47 | 523 | Middle temporal gyrus (BA 21), superior temporal gyrus (BA 22) |
| | −26 | −2 | 61 | 5.6 | | |
| | 48 | −14 | −14 | 5.18 | 71 | Inferior temporal gyrus (BA 21) |
| | 62 | −8 | −18 | 4.89 | | |
| | 6 | 54 | −12 | 5.14 | 41 | Medial frontal gyrus (BA 11) |
| | −56 | −62 | −10 | 4.78 | 39 | Inferior temporal gyrus (BA 37) |
| (b) Hearing>Deaf | −46 | −30 | 28 | 5.79 | 2874 | Inferior parietal lobule (BA 40) |
| | −60 | −30 | 38 | 4.63 | | |
| | −52 | −46 | 30 | 4.51 | | |
| | −42 | −4 | 6 | 5.75 | 1146 | Insula (BA 13), precentral gyrus (BA 6) |
| | −58 | 2 | 16 | 3.86 | | |
| | 36 | −58 | −38 | 5.53 | 4217 | Middle occipital gyrus (BA 19), inferior occipital gyrus (BA 18, 19), parahippocampal gyrus (BA 19), cerebellum |
| | 36 | −80 | 14 | 5.24 | | |
| | 30 | −50 | −6 | 5.1 | | |
| | −24 | −58 | 68 | 5.27 | 1951 | Postcentral gyrus (BA 7), precuneus (BA 7) |
| | −2 | −78 | 42 | 5.11 | | |
| | −4 | −54 | 52 | 5.09 | | |
| | −30 | −62 | −8 | 4.93 | 578 | Lingual gyrus (BA 19), parahippocampal gyrus (BA 19, 30) |
| | −26 | −50 | −6 | 4.67 | | |
| | −28 | −56 | 4 | 3.66 | | |
| | −28 | −10 | 66 | 4.47 | 962 | Middle frontal gyrus (BA 6), precentral gyrus (BA 6), superior frontal gyrus (BA 6) |
| | −42 | −12 | 54 | 4.33 | | |
| | −16 | −16 | 70 | 4.14 | | |
| | −42 | −82 | −18 | 4.41 | 298 | Inferior occipital gyrus (BA 18), middle occipital gyrus (BA 19), middle temporal gyrus (BA 39) |
| | −46 | −82 | −2 | 4.33 | | |
| | −48 | −78 | 10 | 4.01 | | |

Results from (a) Deaf>Hearing contrast and (b) Hearing>Deaf contrast

Combining the two subject groups, no voxels survived correction in determining the effect of the processing of meaningless compared to meaningful stimuli. For the positive effect of processing meaningful stimuli compared to meaningless stimuli, again combining both groups, two clusters survived the threshold: left superior frontal gyrus and bilateral precuneus (Figure 4b).

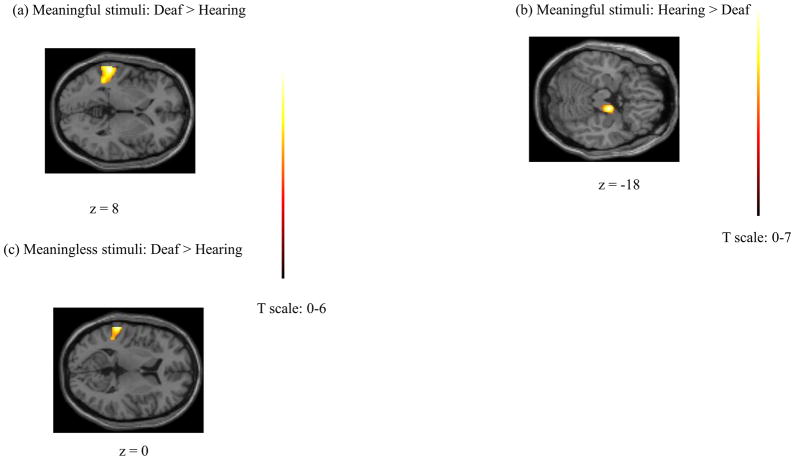

We next examined the effect of specific stimuli on the two groups by performing two-sample t-tests, as depicted in Figure 5. The processing of meaningful stimuli activated the left posterior STG (BA 42/22), planum temporale, to a greater extent in the Deaf group compared to the hearing group. Areas in left posterior STG, among a diverse set of regions, exhibited heightened response for within-group comparison of Deaf participants (but not hearing) for the meaningful stimuli (both tasks) relative to rest. The reverse contrast of hearing greater than Deaf subject populations for the meaningful gestures did not result in any suprathreshold voxels, although there was a 302-voxel cluster just below threshold in the right parahippocampal gyrus and hypothalamus at p=0.06. The processing of meaningless stimuli activated the left STG to a greater extent in the Deaf compared to the hearing group. However, the extent of this activation was less than in the similar contrast for the meaningful emblems. No voxels survived the correction in the reverse contrast for the hearing group relative to the Deaf group.
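For a single voxel, a between-group comparison of this kind reduces to a two-sample t statistic on one contrast value per subject. The sketch below assumes a pooled-variance (equal-variance) test, which the text does not specify; the function name is hypothetical, and the cluster-wise correction applied to the actual maps is not shown.

```python
from math import sqrt
from statistics import mean, variance


def two_sample_t(group_a, group_b):
    """Pooled-variance two-sample t statistic and its degrees of freedom."""
    n_a, n_b = len(group_a), len(group_b)
    df = n_a + n_b - 2
    # Pool the two sample variances, weighted by their degrees of freedom.
    pooled_var = ((n_a - 1) * variance(group_a)
                  + (n_b - 1) * variance(group_b)) / df
    # Standard error of the difference between the two group means.
    standard_error = sqrt(pooled_var * (1 / n_a + 1 / n_b))
    return (mean(group_a) - mean(group_b)) / standard_error, df


# Degrees of freedom for the group sizes in the fMRI analysis
# (twelve Deaf and sixteen hearing subjects).
df_study = 12 + 16 - 2
print(df_study)
```

A positive t for `two_sample_t(deaf_values, hearing_values)` at a voxel would correspond to the Deaf>Hearing direction, and a negative t to the reverse contrast.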

Figure 5.

Results of two-sample t-tests for the groups for a particular stimulus condition: (a) Meaningful stimuli: Deaf>Hearing, (b) Meaningful stimuli: Hearing> Deaf, and (c) Meaningless stimuli: Deaf>Hearing contrasts.

Within groups

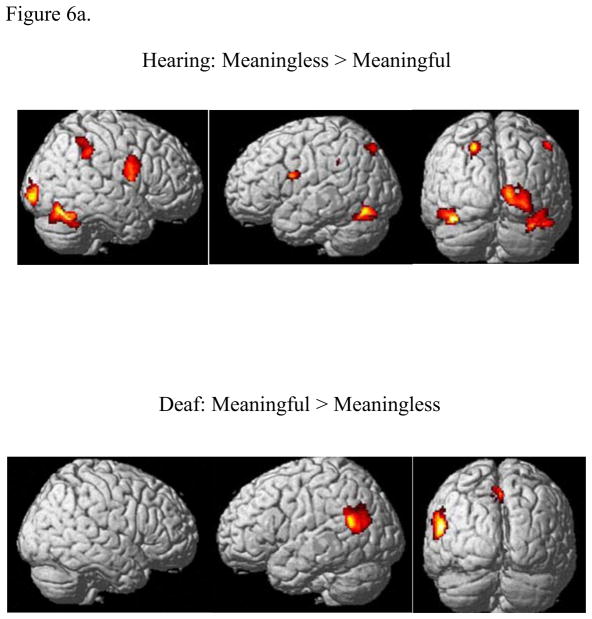

A two-way ANOVA (stimulus and task as factors) was computed separately for the two groups to test for the effect of stimulus and task types. For the hearing group, the MEANINGLESS>MEANINGFUL contrast, regardless of the task, activated a network of regions including bilateral premotor cortex (BA 6), extrastriate cortex, fusiform gyrus, insula, cerebellum and right inferior frontal gyrus, supramarginal gyrus, and inferior parietal lobule (Figure 6 and Table 2). There were no activations in the reverse contrast. In contrast to this, for the Deaf group, there were no suprathreshold voxels for the MEANINGLESS>MEANINGFUL contrast, but a predominantly left lateralized activation pattern with clusters at superior temporal gyrus, supramarginal gyrus, angular gyrus and precuneus was activated for the MEANINGFUL>MEANINGLESS contrast (Figure 6 and Table 2).

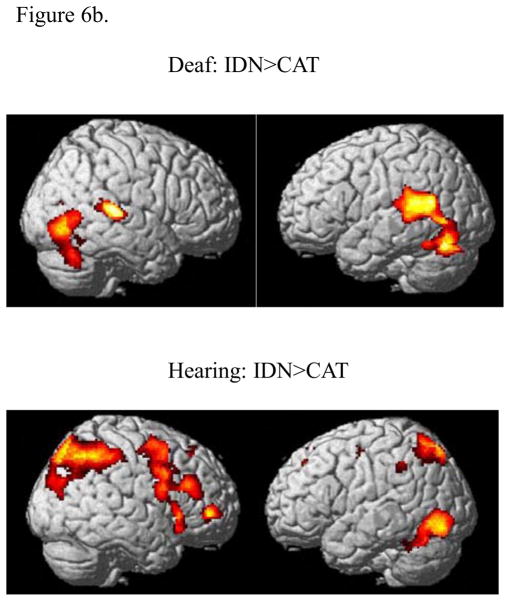

Figure 6.

Statistical parametric maps computed using two-way ANOVA (with stimulus and task as factors) rendered on surface of a template brain: (a) Effect of stimulus type, (b) Effect of task. The results were corrected for cluster-wise multiple comparisons (p<0.05 FWE). In sub-figure (a), the effect of the stimulus condition is depicted by the contrast MEANINGLESS>MEANINGFUL for the hearing group (top), and the effect of the stimulus condition depicted by the contrast MEANINGFUL>MEANINGLESS for the Deaf group (bottom). No suprathreshold voxels remained for the opposite contrasts in either case, MEANINGFUL>MEANINGLESS for the hearing group and the MEANINGLESS>MEANINGFUL for the Deaf group. In sub-figure (b), the effect of the contrast IDN>CAT is shown for the hearing group (top) and the Deaf group (bottom). No suprathreshold voxels remained for the contrast CAT>IDN for either group.

Table 2.

Local maxima from the contrasts highlighting the semantics of the stimuli within groups.

| Contrast | MNI x | MNI y | MNI z | Z score | Cluster size | Gyrus (Brodmann area) |
|---|---|---|---|---|---|---|
| (a) Meaningless>Meaningful (hearing group) | −96 | −4 | 26 | 5.13 | 561 | Cuneus (BA 18, 17) |
| | −100 | −2 | 16 | 4.2 | | |
| | −88 | 8 | 12 | 4.16 | | |
| | 34 | −70 | −22 | 4.78 | 688 | Inferior occipital gyrus (BA 19), fusiform gyrus (BA 37) |
| | 48 | −72 | −12 | 3.86 | | |
| | 46 | −56 | −22 | 3.69 | | |
| | −42 | −76 | −18 | 4.36 | 375 | Fusiform gyrus (BA 19) |
| | −50 | −72 | −16 | 4.24 | | |
| | −38 | −62 | −22 | 4.15 | | |
| | −24 | −50 | 42 | 4.18 | 542 | Precuneus (BA 7), postcentral gyrus (BA 2) |
| | −34 | −34 | 34 | 3.87 | | |
| | −16 | −80 | 50 | 3.56 | | |
| | 52 | 0 | 22 | 4.14 | 529 | Inferior frontal gyrus (BA 44, 9), insula (BA 13) |
| | 50 | 2 | 34 | 3.53 | | |
| | 38 | 4 | 16 | 3.46 | | |
| | −42 | 2 | 20 | 3.94 | 343 | Insula (BA 13), precentral gyrus (BA 6) |
| | −34 | −4 | 30 | 3.69 | | |
| | −32 | −14 | 34 | 3.33 | | |
| | 50 | −48 | 52 | 3.82 | 321 | Inferior parietal lobule (BA 40), supramarginal gyrus (BA 40) |
| | 44 | −42 | 36 | 3.64 | | |
| | 56 | −36 | 46 | 3.15 | | |
| (b) Meaningful>Meaningless (Deaf group) | −58 | −64 | 14 | 5.38 | 525 | Superior temporal gyrus (BA 22), supramarginal gyrus (BA 40), angular gyrus (BA 39) |
| | −60 | −56 | 28 | 4.26 | | |
| | −54 | −68 | 30 | 3.7 | | |
| | −2 | −56 | 48 | 4.64 | 459 | Precuneus (BA 7) |

All are p ≤ 0.05, FWE (family-wise error) corrected for multiple comparisons.

Considering the effect of tasks in both groups, both groups showed statistically significant increased activation for the IDN>CAT task comparison but not for the CAT>IDN task comparison (Figure 6b and Table 3). The Deaf group showed increased activation for the IDN>CAT contrast in the left supramarginal gyrus and superior temporal gyrus and right superior temporal gyrus, insula, precuneus, inferior occipital gyrus, fusiform gyrus and middle temporal gyrus. For the same IDN>CAT contrast, the hearing group exhibited greater response in a different set of regions: bilateral middle frontal gyrus, bilateral superior and inferior parietal lobules, medial frontal gyrus and anterior and posterior cingulate gyri along the central axis and left inferior occipital gyrus and left inferior temporal gyrus.

Table 3.

Local maxima from the contrasts comparing IDN and CAT tasks for the stimuli within each group.

| Contrast | MNI x | MNI y | MNI z | Z score | Cluster size | Gyrus (Brodmann area) |
|---|---|---|---|---|---|---|
| (a) IDN>CAT (hearing group) | 40 | 50 | −2 | 5.39 | 2581 | Middle frontal gyrus (BA 10, 46, 6) |
| | 44 | 30 | 24 | 4.35 | | |
| | 34 | −2 | 56 | 4.35 | | |
| | 48 | −54 | 52 | 5.37 | 3093 | Inferior parietal lobule (BA 40), superior parietal lobule (BA 7) |
| | 24 | −76 | 58 | 4.76 | | |
| | 32 | −70 | 56 | 4.74 | | |
| | 8 | 30 | 44 | 5.19 | 1734 | Medial frontal gyrus (BA 6), anterior cingulate (BA 32) |
| | 8 | 36 | 26 | 4.54 | | |
| | −10 | 34 | 28 | 3.95 | | |
| | 8 | −8 | −4 | 4.86 | 3538 | Hypothalamus, substantia nigra, posterior cingulate (BA 23) |
| | 8 | −16 | −12 | 4.56 | | |
| | 12 | −38 | 18 | 4.29 | | |
| | −38 | −78 | −10 | 5.52 | 1114 | Inferior occipital gyrus (BA 19), inferior temporal gyrus (BA 37) |
| | −44 | −70 | −4 | 5.23 | | |
| | −40 | −60 | −22 | 5.15 | | |
| | −16 | −76 | 58 | 4.69 | 1838 | Superior parietal lobule (BA 7) |
| | −24 | −58 | 30 | 4.33 | | |
| | −26 | −58 | 50 | 4.3 | | |
| | −24 | −16 | 40 | 3.85 | 315 | Cingulate gyrus (BA 24), middle frontal gyrus (BA 6) |
| | −22 | −6 | 46 | 3.72 | | |
| | −30 | −8 | 46 | 3.66 | | |
| (b) IDN>CAT (Deaf group) | −38 | −74 | −26 | 4.84 | 2440 | Cerebellum, supramarginal gyrus (BA 40), superior temporal gyrus (BA 22) |
| | −48 | −54 | 18 | 4.8 | | |
| | −54 | −46 | 16 | 4.47 | | |
| | 62 | −32 | 10 | 4.56 | 535 | Superior temporal gyrus (BA 42, 22), insula (BA 13) |
| | 68 | −44 | 12 | 3.62 | | |
| | 48 | −40 | 16 | 3.22 | | |
| | 28 | −58 | 20 | 4.29 | 481 | Precuneus (BA 7) |
| | 28 | −68 | 26 | 3.84 | | |
| | 26 | −56 | 36 | 3.48 | | |
| | 46 | −76 | −8 | 4.13 | 961 | Inferior occipital gyrus (BA 19), fusiform gyrus (BA 19), middle temporal gyrus (BA 37) |
| | 48 | −70 | −18 | 3.89 | | |
| | 52 | −62 | 2 | 3.75 | | |

All are p ≤ 0.05, FWE (family-wise error) corrected for multiple comparisons.

3. Discussion

We investigated neural correlates of meaningful emblems and meaningless fabricated gestures in Deaf signers and hearing non-signers. Behavioral results revealed a main effect of task and stimulus type; both groups tended to be faster and more accurate for the CAT task compared to the IDN task. There was some difference in response to the different gestures, with the Deaf signers differentiating the two tasks better for the meaningless gestures and the hearing group for the meaningful gestures. As expected, the brain activation patterns for gestural processing varied between the two groups: a stark difference was the heightened response of bilateral non-primary auditory areas in the temporal cortex for the Deaf group but not the hearing group for the tasks compared to the baseline rest task. The common areas of activation for both types of gestures across the two groups were in the left superior frontal gyrus and bilateral precuneus. We also found distinct patterns of activation for the groups for the meaningless and meaningful gestures that are discussed in greater detail below. Briefly, our results suggest that meaningful gestures were processed as linguistic units by the Deaf group but not by the hearing non-signing group. The hearing group, contrary to our expectations, tended to regard emblems as non-linguistic hand gestures.

The Deaf group activated mostly bilateral non-primary auditory areas to a greater extent than did the hearing group during processing of the manual gesture videos. The hearing subjects activated a diverse set of regions, mostly in the right and left hemispheres, involved in visual-spatial processing, to a greater extent than the Deaf group. These results suggest that, not surprisingly, there are differences in how the Deaf signers and hearing non-signers perceive and process gestures. Of particular interest was the activation in the Deaf group of non-primary auditory areas for visual processing. The participants in the group were pre-lingually Deaf and the result attests to the plasticity of the cortex, specifically in the superior and middle temporal cortex. For the meaningful stimuli, the locus of activation encompassed the posterior portion of superior temporal cortex (planum temporale), which is the putative Wernicke’s area. Wernicke’s area has long been considered a polymodal semantic processing region, with the planum temporale acting as a computational hub, albeit primarily for auditory stimuli in the hearing (Griffiths and Warren, 2002). Our results suggest that the planum temporale may play a semantic processing and computational role in processing of gestures in Deaf signers. These results are consistent with the findings of earlier studies (MacSweeney et al., 2004) that found the involvement of non-primary auditory areas in gestural processing, with the response of the planum temporale heightened for meaningful gestures (relative to meaningless ones) in Deaf signers.

We found increased activation in left BA 6 and in left inferior parietal lobule (BA 40) by the hearing group when they were observing hand gestures compared to the Deaf group. This activation was further enhanced for the meaningless stimuli relative to the meaningful ones, but was lateralized to the right hemisphere. Together with BA 44, BA 6 and BA 40 constitute the ‘mirror neuron’ system described by Rizzolatti and colleagues (di Pellegrino et al., 1992; Fadiga et al., 1995; Rizzolatti et al., 1996). They found evidence for a mirror neuron system in monkeys and humans wherein neurons located in a dorsal pathway show increased activation during observation of hand movements. In monkeys the primary site of mirror neurons is frontal region F5 and its homolog in humans is considered to be BA 44 (pars opercularis of the inferior frontal gyrus) and lateral BA 6 (ventral premotor cortex). More recently, mirror neurons have also been described in the inferior parietal lobule (di Pellegrino et al., 1992; Fadiga et al., 1995; Rizzolatti et al., 1996; Rizzolatti et al., 2001) that might code the observed action and also allow the monkey to understand the intentions of the agent performing the actions. This suggests that processing of gestures used in the study by non-signers may involve the mirror neuron system, as has been found for emblems and other types of hand gestures in several recent imaging studies that varied in their tasks and experimental paradigms. In a recently published fMRI study involving hearing non-signers, Villarreal et al. (2012) found a similar diverse set of mirror-neuron system nodes in processing of emblems (inferior frontal gyrus, the superior parietal cortex and the temporoparietal junction in the right hemisphere) with additional regions (bilateral precuneus and posterior cingulate) being recruited when the context of the gestures became important. Similarly, Montgomery et al.
(2007) determined that viewing, producing, and imitating hand gestures, including emblems, evoked response in the mirror neuron system. The greatest difference between communicative gestures or emblems and object-oriented gestures was in the superior temporal sulcus for the production task; neither the inferior parietal lobule nor the frontal operculum exhibited any difference in response for the two types of gestures. Emmorey et al. (2010) found that pantomimes, when compared to visual fixation, exhibited greater response in the fronto-parietal mirror neuron regions in hearing non-signers but not in Deaf signers; the latter group showed greater activation in the bilateral middle temporal gyri. An alternate explanation of the fMRI results is that the hearing non-signers may have interpreted the emblems as pictorial descriptions rather than as words. Depictive or iconic gestures have been shown to evoke interpretations comparable to those of other image-based representations (Wu and Coulson, 2011).

Contrary to our expectation that emblems would invoke the linguistic system in both signers and non-signers, only the Deaf signers activated left angular gyrus and supramarginal gyrus (part of the inferior parietal lobule) to a greater extent for the meaningful emblems compared to the meaningless gestures. The results for the Deaf group are in keeping with our initial hypothesis and support the suggestion of McNeill (2005) that the emblematic gestures have linguistic properties. Both supramarginal and angular gyri have been associated with lexical processing (Joubert et al., 2004). The angular gyrus has been implicated in reading (Rumsey et al., 1997), and its disordered functional connectivity can be considered as a marker for dyslexia (Horwitz et al., 1998). In particular, it has been suggested that the angular gyrus is useful for character-to-phonological conversion in letter perception (Callan et al., 2005). Sakai et al. (2005) ascertained that both sign and oral language activated the angular gyrus to a greater extent during sentence comprehension compared to sentential non-word detection. The supramarginal gyrus has been implicated in phonological processing, not only of words (Sigman et al., 2007) but also of signs (Emmorey et al., 2003).

One possible reason that emblems did not engage linguistic processing in non-signers is stimulus repetition. Unlike other investigations of meaningful gesture processing (Gunter and Bach, 2004; Villarreal et al., 2012; Xu et al., 2009), our study used only two gestures (thumbs-up, thumbs-down) and repetitions of them that varied in the orientation of the thumb and hand. Both repetition priming (enhanced behavioral indices) and repetition suppression (reduced fMRI BOLD effects) may have resulted in differential effects of the same meaningful stimuli in the two subject groups. A recent behavioral study by Corina and Grosvald (2012) explicitly examined the effect of repetition and inversion using a categorization task. Video clips of signs and non-linguistic gestures were presented in rapid order, either in the correct orientation or with 180° inversion. Participants were told to categorize the actions shown as an ASL sign or a non-linguistic gesture. The results showed that Deaf signers were faster than their hearing counterparts and also more sensitive to the inversion manipulation. The non-linguistic gestures benefited more from repetition than did the signs for both groups; these gestures were of the self-grooming type and therefore more familiar than the signs for the hearing non-signers, thus supporting the assertion that repetition of familiar actions is associated with increased priming relative to that with unfamiliar objects or actions. The results for Deaf signers are harder to interpret because they did not show increased priming for signs either on the basis of lexical representation or on the basis of familiarity. The authors explain the results by suggesting that sign processing makes use of a general action recognition system and that greater expertise in it translates to more efficient recognition of human actions and gestures.
In the context of our study, the Corina and Grosvald results would suggest that repetition effects of familiar emblematic stimuli had a priming effect on the non-signers but not on the signers. This would translate into the non-signers not invoking the semantic network for the repetitious emblems whereas the signers, because of the greater facility with processing signs, would not show an effect of repetition. As can be noted in our results, the contrast MEANINGFUL>MEANINGLESS resulted in suprathreshold voxels only for the Deaf group.

In the current study, the effect of tasks was similar for the two groups, with the fMRI response patterns for the IDN task involving a more diverse set of regions than those for the CAT task. It is possible that the discrimination of one set of gestures versus another was easier and more automated than the task requiring discrimination of small orientation changes within and across gestures. The claim is supported by behavioral data that showed, for the most part, greater accuracy and shorter reaction times for the CAT relative to the IDN task for both groups in the present study and in our previous gestural processing study (Husain et al., 2009). The behavioral and fMRI results for the visual studies are in contrast to our study of categorization and discrimination of sounds in young normal hearing adults (Husain et al., 2006). We found that categorical discrimination of simple speech and non-speech sounds took longer but was more accurate than discrimination based on minor pitch changes. The fMRI response patterns for the categorical task were more widespread for all sounds relative to the auditory discrimination task and these differences were centered in left inferior frontal gyrus, dorsomedial frontal gyrus, and inferior parietal sulcus. Because the sounds used were meaningless, the categorization task evoked phonological processing and the auditory discrimination task possibly used acoustic feature detection processes. In the present study, the categorization task involved semantic processing and the identity discrimination task could be considered to include phonological processes, at least for the Deaf group. The Deaf group showed increased activation for the IDN>CAT comparison in a different set of brain regions, such as the supramarginal gyrus, insula, and fusiform gyrus, that may play a role in reading or other aspects of linguistic processing of whole words rather than of phoneme or syllable-type segments.
This result further supports the idea that the Deaf group considered the emblems as word gestures and is corroborated by findings from our previous study (Husain et al., 2009) where we explicitly compared emblems with ASL gestures and found no statistically significant difference in their neural correlates.

4. Methods and Materials

4.1 Subjects

Sixteen right-handed hearing normal volunteers (6 women, 10 men), 30 ± 5 years in age, participated in the study. Fifteen right-handed Deaf normal volunteers (6 women, 9 men), who became Deaf before the age of three and who learned ASL as their first language, 27 ± 5 years in age, also participated in the study. Individuals with any condition that may have compromised their visual ability in the scanner were excluded from the study. Subjects had normal vision or wore corrective contact lenses in the scanner. One Deaf subject was excluded from the study because of discomfort in the scanner. Subjects gave their informed consent to the study, which was approved by the NIDCD-NINDS IRB (protocol NIH 92-DC-0178), and were suitably compensated for their participation in the study. An ASL interpreter was present throughout each experimental session.

4.2 Stimuli

We used two types of hand gestures that differed from each other in being meaningful or meaningless (Figure 1). Each stimulus type was divided into two classes or categories. For instance, for the meaningful gestures, the two classes were the gestures “thumbs-up” and “thumbs-down”. The meaningless gestures were made-up gestures that were not part of ASL. There were 5 exemplars within each category, with the exemplars varying in orientation. Thus, the five exemplars of the “thumbs-up” category were all identifiable as “thumbs-up” but differed in the orientation of the final hand gesture relative to the shoulder.

4.3 Tasks

Subjects performed a delayed-match-to-sample (DMS) task (Figure 2). The subjects saw videos of two gestures separated by an interval, and decided if the two gestures were the same or different. During separate blocks, the decision was based on one of two criteria: (1) are the two gestures exactly the same? and (2) do the two gestures belong to the same category? The first type of task is referred to as visual Identity Discrimination or IDN and the second type as Category Discrimination or CAT. Each trial was 6.9 seconds in duration, with 2 stimuli of 1500 ms each, an inter-stimulus interval of 1900 ms, and 2000 ms of subject-response time. The response period was fixed regardless of the time taken by the subject to press the button, and responses that took longer than 2 seconds were considered incorrect.
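
The trial structure can be illustrated with a short sketch. The Python below is only an illustration under stated assumptions, not the Presentation script used in the study: the names `Trial`, `trial_duration_ms`, and `score_response` are hypothetical, and scoring a missing response as incorrect is an assumption that the text does not state explicitly.

```python
from dataclasses import dataclass
from typing import Optional

# Timing constants taken from the task description (milliseconds).
STIMULUS_MS = 1500          # duration of each of the two gesture videos
ISI_MS = 1900               # inter-stimulus interval
RESPONSE_WINDOW_MS = 2000   # fixed response period


def trial_duration_ms() -> int:
    """Total duration of one delayed-match-to-sample trial."""
    return 2 * STIMULUS_MS + ISI_MS + RESPONSE_WINDOW_MS


@dataclass
class Trial:
    task: str             # "IDN" (identity) or "CAT" (category)
    same_exemplar: bool   # the two videos show the identical exemplar
    same_category: bool   # the two videos belong to the same category

    def correct_answer(self) -> bool:
        """True means the correct response is 'same'."""
        return self.same_exemplar if self.task == "IDN" else self.same_category


def score_response(trial: Trial, said_same: Optional[bool],
                   rt_ms: Optional[float]) -> bool:
    """Return True if the response is scored as correct.

    Responses slower than the response window count as incorrect.
    Assumption: a missing response (None) is also scored as incorrect.
    """
    if said_same is None or rt_ms is None or rt_ms > RESPONSE_WINDOW_MS:
        return False
    return said_same == trial.correct_answer()


# Two different exemplars of the same category: "same" is correct for CAT
# but incorrect for IDN.
pair = dict(same_exemplar=False, same_category=True)
print(trial_duration_ms())                                  # 6900
print(score_response(Trial("CAT", **pair), True, 850.0))    # True
print(score_response(Trial("IDN", **pair), True, 850.0))    # False
```

The same stimulus pair therefore yields different correct answers under the two criteria, which is what allows the two tasks to be compared on identical stimuli.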

4.4 Training

Subjects were trained outside the magnet prior to being scanned. Training on the two tasks for the two stimulus types took approximately 15–20 minutes. During the training session for a particular stimulus type, subjects passively viewed the exemplars from the two categories, and then performed tasks that required discriminating between pairs of gestures based on either criterion (1) or (2). The categories were identified only as ‘A’ and ‘B’ so as to be uniform (not all stimulus types had easily identifiable categories). Although there were separate training sessions for each stimulus type, the instructions and training were identical in each case. Subjects were given feedback for the tasks and repeated a specific training session until their performance was better than a threshold of 85% (62 correct/72 trials) for that stimulus type. During scanning, before each scan run, subjects were familiarized with the type of gestures that they would discriminate in the following run by passively viewing examples of the two categories of that stimulus type.
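
As a worked check of the training criterion, the following sketch computes the smallest number of correct trials that strictly exceeds a percentage threshold. The function names are hypothetical and integer arithmetic is used to avoid rounding issues.

```python
def min_correct_to_pass(threshold_pct: int, n_trials: int) -> int:
    """Smallest count of correct trials whose percentage is strictly above threshold_pct."""
    return (threshold_pct * n_trials) // 100 + 1


def passed_training(n_correct: int, n_trials: int = 72, threshold_pct: int = 85) -> bool:
    """True if performance is better than the threshold."""
    return 100 * n_correct > threshold_pct * n_trials


print(min_correct_to_pass(85, 72))  # 62
print(passed_training(62))          # True  (62/72 is about 86.1%)
print(passed_training(61))          # False (61/72 is about 84.7%)
```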

4.5 Scanning

Subjects were scanned using echo planar imaging (EPI) in a block design paradigm in which 26 axial slices were collected on a 3 Tesla Signa scanner (General Electric, Waukesha, WI). Stimuli were presented using Presentation software (Neurobehavioral Systems, Albany, CA) on a PC laptop computer. There were separate functional EPI runs, one for each type of stimulus. Within an EPI run, subjects underwent 9 rest blocks and 8 test blocks. Within a given test block, there were 8 DMS trials, all of the same type, either IDN or CAT. The instructions as to which criterion to use for the DMS tasks within a given block were given visually 10 seconds prior to the beginning of the test block (during the preceding rest block). The first rest block (fixating on a ‘+’ on screen) was 38 seconds; subsequent rest blocks were 30 seconds long. Each test block was 55.2 seconds long. The last rest block was 32 seconds long. Total time was 12 minutes and 10 seconds (or 365 repetitions of 2 second TR) for each run. The first four and the last volumes were discarded, resulting in 360 volumes available for analysis. The functional scans consisted of 26 interleaved axial (inferior-to-superior) slices that were 5 mm in thickness and with 3.75 × 3.75 mm in-plane resolution (TE=40ms, matrix size 64×64, FOV = 240 mm, 90 degrees flip angle). Sagittal localizer and anatomical scans were run prior to the T2-weighted functional EPI scans.
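
Several of the acquisition figures follow from one another, and the sketch below recomputes them from the stated parameters. The variable names are hypothetical, and the reading that exactly one final volume was discarded is inferred from the stated total of 360 analyzed volumes.

```python
# All values below are taken from the scanning and task descriptions.
TR_MS = 2000                      # repetition time
RUN_MS = (12 * 60 + 10) * 1000    # 12 min 10 s per run
TRIAL_MS = 6900                   # one DMS trial
TRIALS_PER_BLOCK = 8
FOV_MM = 240.0
MATRIX_SIZE = 64
DISCARDED_FIRST = 4
DISCARDED_LAST = 1                # inferred: 365 - 4 - 1 = 360

volumes_acquired = RUN_MS // TR_MS
volumes_analyzed = volumes_acquired - DISCARDED_FIRST - DISCARDED_LAST
test_block_s = TRIALS_PER_BLOCK * TRIAL_MS / 1000
in_plane_mm = FOV_MM / MATRIX_SIZE

print(volumes_acquired)   # 365
print(volumes_analyzed)   # 360
print(test_block_s)       # 55.2
print(in_plane_mm)        # 3.75
```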

4.6 Analysis

The analysis of the fMRI data was carried out using the statistical parametric mapping (SPM) software (SPM2, SPM5, Wellcome Dept. of Cognitive Neurology, London, http://www.fil.ion.ucl.ac.uk/spm). The image volumes were corrected for slice time differences, realigned, normalized into standard stereotactic space (using the MNI EPI template provided with SPM2 software) and smoothed with a Gaussian kernel (10 mm full-width-half-maximum). On inspection, we found that four of our 13 subjects had movement artifacts in at least one of the 4 EPI scan runs. To control for these movement artifacts, we used the robust weighted least squares method of Diedrichsen and Shadmehr (2005). This method utilizes a restricted maximum likelihood approach to obtain unbiased estimates of image variance from unsmoothed data and applies these weights to the smoothed data or observations, resulting in optimal model estimates from the noisy data.
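
The idea of down-weighting noisy volumes can be illustrated with a generic weighted least squares fit. The sketch below is not the robust weighted least squares toolbox of Diedrichsen and Shadmehr (2005): it assumes the per-volume noise variances are already known, whereas their method estimates them by restricted maximum likelihood. The function name `wls_fit`, the toy boxcar regressor, and all numerical values are hypothetical.

```python
from typing import List, Tuple


def wls_fit(x: List[float], y: List[float],
            variances: List[float]) -> Tuple[float, float]:
    """Weighted least squares for the model y = b0 + b1 * x + noise.

    Each observation (image volume) is weighted by the inverse of its
    noise variance, so volumes corrupted by movement contribute less.
    Returns (b0, b1).
    """
    w = [1.0 / v for v in variances]
    sw = sum(w)
    # Weighted means of the regressor and the data.
    x_bar = sum(wi * xi for wi, xi in zip(w, x)) / sw
    y_bar = sum(wi * yi for wi, yi in zip(w, y)) / sw
    # Weighted covariance and variance give the slope.
    sxy = sum(wi * (xi - x_bar) * (yi - y_bar) for wi, xi, yi in zip(w, x, y))
    sxx = sum(wi * (xi - x_bar) ** 2 for wi, xi in zip(w, x))
    b1 = sxy / sxx
    b0 = y_bar - b1 * x_bar
    return b0, b1


# Toy time series for one voxel: boxcar regressor (0 = rest, 1 = task),
# baseline of 100.0 and a true task effect of 2.0. The sixth volume
# carries a large simulated movement artifact.
boxcar = [0.0, 0.0, 0.0, 0.0, 1.0, 1.0, 1.0, 1.0]
signal = [100.0, 100.0, 100.0, 100.0, 102.0, 110.0, 102.0, 102.0]
noise_var = [1.0, 1.0, 1.0, 1.0, 1.0, 1000.0, 1.0, 1.0]  # assumed known here

ols_b0, ols_b1 = wls_fit(boxcar, signal, [1.0] * len(signal))  # equal weights
wls_b0, wls_b1 = wls_fit(boxcar, signal, noise_var)
print(f"Unweighted task effect: {ols_b1:.2f}")  # 4.00
print(f"Weighted task effect:   {wls_b1:.2f}")  # 2.00
```

In this toy case the single corrupted volume doubles the unweighted estimate, while the weighted fit stays close to the true effect.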

The data were rescaled for variations in global signal intensity and high-pass filtered to remove the effect of any low-frequency drift. Using a general linear model (Friston et al., 1995), a mixed effects analysis was conducted at two levels. First, a fixed effects analysis was performed for individual subjects (p<0.001, uncorrected) and contrast images created for the different task conditions. Next, the contrast images from the first level were used to perform a further second-level analysis using SPM5. The second level analyses were of multiple types: one-sample t test, two-sample t test and two-way ANOVA. In all cases we used the statistical threshold p<0.05 corrected for multiple comparisons using family-wise error at either the voxel-level or the cluster-level. At the cluster-level, different sizes (number of voxels in a cluster) were used but only those clusters that met the statistical criterion were included in the results.
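
The second-level step treats each subject's first-level contrast value as a single observation. A minimal sketch for one voxel is given below, assuming made-up contrast values and a pooled-variance (equal-variance) two-sample test; it is not SPM code, the function names are hypothetical, and it omits the family-wise error correction that SPM applies across voxels or clusters.

```python
import math
from statistics import mean, variance
from typing import Sequence


def one_sample_t(values: Sequence[float]) -> float:
    """t statistic testing whether the group mean contrast differs from zero."""
    n = len(values)
    return mean(values) / math.sqrt(variance(values) / n)


def two_sample_t(a: Sequence[float], b: Sequence[float]) -> float:
    """t statistic for a group difference, using a pooled variance estimate.

    Assumption: both groups share the same variance.
    """
    na, nb = len(a), len(b)
    pooled = ((na - 1) * variance(a) + (nb - 1) * variance(b)) / (na + nb - 2)
    return (mean(a) - mean(b)) / math.sqrt(pooled * (1 / na + 1 / nb))


# Hypothetical per-subject contrast values at one voxel (one value per subject).
group_a = [1.0, 2.0, 3.0]
group_b = [0.0, 1.0, 2.0]

print(f"{one_sample_t(group_a):.3f}")           # 3.464
print(f"{two_sample_t(group_a, group_b):.3f}")  # 1.225
```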

5. Conclusion

In this fMRI study, we contrasted the processing of emblematic gestures with meaningless gestures by Deaf and hearing participants. Subjects performed a visual discrimination and a categorical discrimination task involving both types of stimuli. Deaf participants activated bilateral auditory processing and associative areas in the temporal cortex to a greater extent than the hearing participants while processing both types of gestures. The hearing participants activated a diverse set of regions, predominantly in the left hemisphere, including those implicated in the mirror neuron system, such as premotor cortex (BA 6) and inferior parietal lobule (BA 40). Further, when contrasting the processing of meaningful and meaningless gestures, the Deaf participants (but not the hearing participants) activated the left angular and supramarginal gyri, which play important roles in linguistic processing. One possible explanation of the last result is that whereas emblems are treated as linguistic signs by the Deaf signers, they may be considered to be more closely related to pictorial descriptions and object recognition by the non-signers.

Highlights.

We investigate processing of gestures by Deaf signers and hearing non-signers.

These gestures are of two types: meaningful to both groups or meaningless.

The two types of gestures are processed differently by the two groups.

Meaningful gestures are perceived as linguistic units by Deaf signers alone.

Gestural processing engages the ‘mirror neuron’ system in hearing non-signers.

Acknowledgments

The research was supported by the NIH-NIDCD Intramural Research Program. The authors are grateful to Nathan Pajor for assistance with the analysis of behavioral data.

References

- Arbib MA. From monkey-like action recognition to human language: an evolutionary framework for neurolinguistics. Behav Brain Sci. 2005;28:105–24; discussion 125–67. doi: 10.1017/s0140525x05000038.

- Aronoff M, Meir I, Padden C, Sandler W. Morphological universals and the sign language type. In: Booij G, Marle J, editors. Yearbook of Morphology 2004. Springer; Netherlands: 2005. pp. 19–39.

- Bentin S, McCarthy G, Wood CC. Event-related potentials, lexical decision and semantic priming. Electroencephalogr Clin Neurophysiol. 1985;60:343–55. doi: 10.1016/0013-4694(85)90008-2.

- Callan AM, Callan DE, Masaki S. When meaningless symbols become letters: neural activity change in learning new phonograms. Neuroimage. 2005;28:553–62. doi: 10.1016/j.neuroimage.2005.06.031.

- Corina D, Chiu YS, Knapp H, Greenwald R, San Jose-Robertson L, Braun A. Neural correlates of human action observation in hearing and deaf subjects. Brain Res. 2007;1152:111–29. doi: 10.1016/j.brainres.2007.03.054.

- Corina DP, Grosvald M. Exploring perceptual processing of ASL and human actions: effects of inversion and repetition priming. Cognition. 2012;122:330–45. doi: 10.1016/j.cognition.2011.10.011.

- Decety J, Grezes J, Costes N, Perani D, Jeannerod M, Procyk E, Grassi F, Fazio F. Brain activity during observation of actions. Influence of action content and subject’s strategy. Brain. 1997;120(Pt 10):1763–77. doi: 10.1093/brain/120.10.1763.

- di Pellegrino G, Fadiga L, Fogassi L, Gallese V, Rizzolatti G. Understanding motor events: a neurophysiological study. Exp Brain Res. 1992;91:176–80. doi: 10.1007/BF00230027.

- Diedrichsen J, Shadmehr R. Detecting and adjusting for artifacts in fMRI time series data. Neuroimage. 2005;27:624–34. doi: 10.1016/j.neuroimage.2005.04.039.

- Ekman P, Friesen WV. The repertoire of nonverbal communication: categories, origins, usage, and coding. Semiotica. 1969;1:49–98.

- Emmorey K, Grabowski T, McCullough S, Damasio H, Ponto LL, Hichwa RD, Bellugi U. Neural systems underlying lexical retrieval for sign language. Neuropsychologia. 2003;41:85–95. doi: 10.1016/s0028-3932(02)00089-1.

- Emmorey K, Xu J, Braun A. Neural responses to meaningless pseudosigns: evidence for sign-based phonetic processing in superior temporal cortex. Brain Lang. 2010;117:34–8. doi: 10.1016/j.bandl.2010.10.003.

- Fadiga L, Fogassi L, Pavesi G, Rizzolatti G. Motor facilitation during action observation: a magnetic stimulation study. J Neurophysiol. 1995;73:2608–11. doi: 10.1152/jn.1995.73.6.2608.

- Friston KJ, Holmes AP, Worsley KJ, Poline JP, Frith CD, Frackowiak RSJ. Statistical parametric maps in functional imaging: A general linear approach. Hum Brain Mapp. 1995;2:189–210.

- Griffiths TD, Warren JD. The planum temporale as a computational hub. Trends Neurosci. 2002;25:348–53. doi: 10.1016/s0166-2236(02)02191-4.

- Gunter TC, Bach P. Communicating hands: ERPs elicited by meaningful symbolic hand postures. Neurosci Lett. 2004;372:52–6. doi: 10.1016/j.neulet.2004.09.011.

- Hamm JP, Johnson BW, Kirk IJ. Comparison of the N300 and N400 ERPs to picture stimuli in congruent and incongruent contexts. Clin Neurophysiol. 2002;113:1339–50. doi: 10.1016/s1388-2457(02)00161-x.

- Horwitz B, Rumsey JM, Donohue BC. Functional connectivity of the angular gyrus in normal reading and dyslexia. Proc Natl Acad Sci U S A. 1998;95:8939–44. doi: 10.1073/pnas.95.15.8939.

- Husain FT, Fromm SJ, Pursley RH, Hosey LA, Braun AR, Horwitz B. Neural bases of categorization of simple speech and nonspeech sounds. Hum Brain Mapp. 2006;27:636–651. doi: 10.1002/hbm.20207.

- Husain FT, Patkin DJ, Thai-Van H, Braun AR, Horwitz B. Distinguishing the processing of gestures from signs in deaf individuals: An fMRI study. Brain Res. 2009 doi: 10.1016/j.brainres.2009.04.034.

- Joubert S, Beauregard M, Walter N, Bourgouin P, Beaudoin G, Leroux JM, Karama S, Lecours AR. Neural correlates of lexical and sublexical processes in reading. Brain Lang. 2004;89:9–20. doi: 10.1016/S0093-934X(03)00403-6.

- Kendon A. How gestures can become like words. In: Poyatos F, editor. Cross-cultural perspectives in nonverbal communication. Hogrefe; Toronto: 1988. pp. 131–141.

- Klima E, Bellugi U. Signs of language. Harvard University Press; Cambridge, MA: 1979.

- MacSweeney M, Campbell R, Woll B, Giampietro V, David AS, McGuire PK, Calvert GA, Brammer MJ. Dissociating linguistic and nonlinguistic gestural communication in the brain. Neuroimage. 2004;22:1605–18. doi: 10.1016/j.neuroimage.2004.03.015.

- McNeill D. Hand and Mind. The University of Chicago Press; Chicago, IL: 1992.

- McNeill D. Gesture and Thought. The University of Chicago Press; Chicago, IL: 2005.

- Montgomery KJ, Isenberg N, Haxby JV. Communicative hand gestures and object-directed hand movements activated the mirror neuron system. Soc Cogn Affect Neurosci. 2007;2:114–22. doi: 10.1093/scan/nsm004.

- Rizzolatti G, Fadiga L, Gallese V, Fogassi L. Premotor cortex and the recognition of motor actions. Brain Res Cogn Brain Res. 1996;3:131–41. doi: 10.1016/0926-6410(95)00038-0.

- Rizzolatti G, Fogassi L, Gallese V. Neurophysiological mechanisms underlying the understanding and imitation of action. Nat Rev Neurosci. 2001;2:661–70. doi: 10.1038/35090060.

- Rumsey JM, Horwitz B, Donohue BC, Nace K, Maisog JM, Andreason P. Phonological and orthographic components of word recognition. A PET-rCBF study. Brain. 1997;120(Pt 5):739–59. doi: 10.1093/brain/120.5.739.

- Sakai KL, Tatsuno Y, Suzuki K, Kimura H, Ichida Y. Sign and speech: amodal commonality in left hemisphere dominance for comprehension of sentences. Brain. 2005;128:1407–17. doi: 10.1093/brain/awh465.

- Sigman M, Jobert A, Lebihan D, Dehaene S. Parsing a sequence of brain activations at psychological times using fMRI. Neuroimage. 2007;35:655–68. doi: 10.1016/j.neuroimage.2006.05.064.

- Venus CA, Canter GJ. The effect of redundant cues on comprehension of spoken messages by aphasic adults. J Commun Disord. 1987;20:477–91. doi: 10.1016/0021-9924(87)90035-9.

- Villarreal MF, Fridman EA, Leiguarda RC. The effect of the visual context in the recognition of symbolic gestures. PLoS One. 2012;7:e29644. doi: 10.1371/journal.pone.0029644.

- Xu J, Gannon PJ, Emmorey K, Smith JF, Braun AR. Symbolic gestures and spoken language are processed by a common neural system. Proc Natl Acad Sci U S A. 2009;106:20664–9. doi: 10.1073/pnas.0909197106.