Abstract

Feature-based attention (FBA) enhances the representation of image characteristics throughout the visual field, a mechanism that is particularly useful when searching for a specific stimulus feature. Even though most theories of visual search implicitly or explicitly assume that FBA is under top-down control, we argue that the role of top-down processing in FBA may be limited. Our review of the literature indicates that all behavioural and neuro-imaging studies investigating FBA suffer from the shortcoming that they cannot rule out an effect of priming. The mere attending to a feature enhances the mandatory processing of that feature across the visual field, an effect that is likely to occur in an automatic, bottom-up way. Studies that have investigated the feasibility of FBA by means of cueing paradigms (e.g. prepare for red) suggest that the role of top-down processing in FBA is limited. Instead, the actual processing of the stimulus is needed to cause the mandatory tuning of responses throughout the visual field. We conclude that it is likely that all FBA effects reported previously are the result of bottom-up priming.

Keywords: selective attention; feature-based attention; priming; top-down control

1. Introduction

Since the late 1970s, there has been agreement that visual selective attention can be directed to a non-fixated location in space (e.g. [1–3]). In his classic paper, Posner [4] compared the effective use of spatial information to a mechanism that operates analogously to a ‘beam of light’. Metaphorically, Posner et al. [3] described visual selective attention as a ‘spotlight that enhances the efficiency of the detection of events within its beam’ (p. 172). When shifting attention from one location to another, the spotlight of attention ‘highlights’ the new location in the environment. The processing of information presented at that location is better and more efficient than at the previously attended location or at any other non-attended locations. Shifts of spatial attention are usually (but not necessarily) accompanied by eye movements. In many cases, observers shift their attention from one location to the next at will, implying that the observer is controlling the focus of attention. In other circumstances, events in the environment (for instance, the sudden appearance of an object) may capture attention, indicating that attention is automatically and reflexively drawn to the location of the object (for a review, see [5]).

In addition to selecting information on the basis of spatial information, it is also possible to direct attention to particular feature properties throughout the visual field independently of spatial attention. This so-called feature-based attention (FBA) makes it possible to direct limited processing resources to those sensory inputs that are most relevant for the task at hand (e.g. a particular colour, particular shape, etc.). This is important because in everyday life we often know what we are looking for (e.g. a particular book that is large and red) but not exactly where it is. Even though such a concept is intuitively plausible, one needs to ask whether FBA outside the focus of attention does in fact improve the detection of a signal. It is obvious that once spatial attention is directed towards a particular object, FBA helps in deciding that the object that you selected is indeed the object you were looking for. In other words, knowing that the book that you need is red and large helps tremendously in deciding that the object you selected is in fact the book that you wanted. Still, it is not immediately clear whether knowledge about the relevant features (that it is large and red) directly improves the efficiency of selection, as does knowledge about the location of an object.

In the early 1980s, Posner et al. [3] also posed the question about the efficiency of FBA. The question asked was whether ‘the entry of information concerning the presence of a signal into a system’ can be improved by any type of information, be it spatial or non-spatial. The underlying notion is that any information regarding the target should help in disentangling the signal from noise (see also [6]). Posner et al. [3] concluded that the detection of signals can only be improved by information about their location and not by other information, such as their shape or colour. Since the seminal paper of Posner et al. [3], the question whether all features are equal or whether location has a special status in separating signal from noise has been a matter of debate.

Many theories suggest that all features are equal. In order to select a target, one has to set up a target template that needs to be matched to a sensory signal. This target template may consist of target properties representing its shape, colour and location. Most theories assume the setting of particular weights which are assigned proportionally to the degree of the match (e.g. [7–9]). The higher the weight, the higher the probability that the stimulus is selected for further processing. Weights can be set on the basis of any criterion, be it its colour, shape, movement, location, etc. According to these theories, FBA should be, in principle, as effective as spatial attention.

Since Posner's seminal paper of 1980, there has been discussion regarding the question whether all features are equal or whether location information has a special status in separating signal from noise (see [10] for a recent discussion of this issue). Even though the role of location information is undisputed in visual selection [3,11,12], there is debate whether information about non-spatial features has a similar effect. Some studies have provided evidence that prior knowledge regarding non-spatial features has no effect on visual selection (e.g. [13–16]), whereas others provided evidence that non-spatial features may improve the entry of information into the brain (e.g. [6,17–19]).

In this respect, it is also important to mention findings obtained with the partial report paradigm [7,20–23], as these studies seem to provide unequivocal evidence for selection on the basis of non-spatial features. For example, classic studies of von Wright [21] showed efficient selection in a partial report task on the basis of simple attributes, such as colour, luminance and shape. These findings are interpreted as evidence for selection on the basis of non-spatial features. However, it should be realized that these findings do not necessarily indicate that non-spatial information is used directly to select information (as, for example, assumed by Bundesen's theory of visual attention (TVA) [7]). It has been argued that non-spatial information is used to direct spatial attention to a location in space (similar to a bar marker indicating a location). In this sense, location information is still ultimately used as the means to select the relevant item [24] (see also [15]).

Shih & Sperling [25] came to a similar conclusion. They used superimposed stimulus arrays in a rapid serial visual presentation task. Observers were better at detecting a target digit when it was in the colour (or size) they expected, but only when the target was in a frame with distractors that all had different colours. Clearly in this condition, the expected non-spatial feature provided spatial information about the target. In conditions in which the elements in a single frame had the same colour and the expected non-spatial feature provided temporal but not spatial information, participants could not use this information to improve performance. Shih & Sperling [25] concluded that non-spatial information does not directly affect visual selection but only guides spatial attention to the relevant location. Along similar lines, Moore & Egeth [26] concluded that direct selection on the basis of a non-spatial feature, colour for instance, was not effective. In their experiments, observers were required to detect a target digit among letters and were told the probability of the target being in one of these colours. The higher the probability of a specific colour, the faster the responses to targets in that specific colour, indicating that selection by colour was effective. However, in subsequent experiments, the display was presented briefly and masked, rendering colour cueing ineffective. Moore and Egeth argued that masking the brief display prevented a shift of spatial attention to the relevant colour. Therefore, they concluded that colour cannot affect selection directly but only by guiding attention to the relevant location.

Even though selection on the basis of location is undisputed, there is discussion regarding selection on the basis of non-spatial features. In addition to space-based attention and FBA, there is also object-based attention, a notion which holds that the deployment of spatial attention is affected by the object structure in the visual field. Even though this is clearly an important concept, one may argue that objects occupy space and as such object-based attention may be nothing other than a variant of a space-based account (see [27] for a recent review). As such, we will not discuss object-based attention further in this review.

2. Spatial attention

When discussing the difference between space-based attention and FBA, one has to recognize the way these different modes of attention are controlled. When selection is controlled by the goals of the person in an active, volitional way, one speaks of top-down control. When selection is driven by features in the environment in a passive, automatic way, one speaks of bottom-up control (see reviews [5,28,29]). Even though in the past 20 years, we have described compelling conditions in which salient events capture attention [30–32] or the eyes [33,34] against the intentions of the observer in a bottom-up way, most theories assume that visual selection is basically under volitional top-down control. In other words, at any point in time, we determine what we select from the environment [9,35,36]. Indeed, at any time it feels like we are controlling what we are searching for and looking at; for example, when searching for your favourite coffee in the supermarket or when searching for your car at the parking lot.

When considering space-based attention, it has been known since the classic study of Posner et al. [3] that observers can direct attention to a location in space ‘at will’. In the Posner et al. study, before display onset observers received a central symbolic cue (e.g. an arrow) that indicated the location of the upcoming target with a validity of 80%. In other words, in 80% of trials, the centrally presented arrow pointed to the location where the target would appear. In 20% of the trials, the target appeared at the ‘invalid’ location (i.e. at the location opposite to that indicated by the arrow). The results showed that observers were faster and more accurate when the target appeared at the cued location than when it occurred at the non-cued location. The interpretation of these results is that observers use the cue (the arrow) to voluntarily direct spatial attention to the location indicated by the cue. It is important to realize that in these types of experiments, the location indicated by the cue varies randomly from trial to trial. This implies that on each trial observers have to shift attention to the indicated location at will, which rules out an explanation in terms of location priming.
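The trial structure of such an endogenous cueing task can be made concrete with a minimal sketch. It assumes a two-location display (left/right), 100 trials and a fixed random seed; the function name `make_cueing_trials` and all parameter values other than the 80% validity are illustrative assumptions and are not taken from Posner et al. [3].

```python
import random


def make_cueing_trials(n_trials=100, validity=0.8,
                       locations=("left", "right"), seed=0):
    """Build a shuffled trial list for a two-location central-cue task.

    Hypothetical helper: only the 80% cue validity comes from the text.
    """
    rng = random.Random(seed)
    n_valid = round(n_trials * validity)
    trials = []
    for i in range(n_trials):
        cue_idx = i % 2                  # alternate cue direction so both occur equally often
        is_valid = i < n_valid           # the first n_valid trials are valid before shuffling
        target_idx = cue_idx if is_valid else 1 - cue_idx
        trials.append({
            "cue": locations[cue_idx],
            "target": locations[target_idx],
            "valid": is_valid,
        })
    rng.shuffle(trials)                  # cued location now varies unpredictably across trials
    return trials


trials = make_cueing_trials()
proportion_valid = sum(t["valid"] for t in trials) / len(trials)
print(proportion_valid)
```

Because the cued location changes unpredictably from trial to trial, a benefit on valid trials in such a sequence cannot simply reflect repetition of the previous target location.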

In the exogenous version of the location cueing paradigm, spatial attention is not directed at will to the cued location, but instead attention is captured by the cue. In this paradigm, before the appearance of the target an uninformative peripheral event (usually an abrupt increase in luminance) is presented either at the location of the target or at a location where the target does not appear. The usual finding is that when the target happens to appear at the location of the cue (i.e. valid trials), response times are fast and accuracy is high relative to a condition in which the target appears at a non-cued location (i.e. invalid trials). The finding that a cue that has no predictive value regarding the upcoming target can induce spatial cueing effects is considered to be evidence that exogenous cueing is bottom-up and automatic [11,37,38].

In a recent paper, we [39] questioned whether the dichotomy between top-down and bottom-up control of attention (sometimes referred to as ‘endogenous’ and ‘exogenous’ control, respectively) is useful. Even though this dichotomy may work for interpreting space-based attentional effects, it fails when considering FBA. We argued that there is a growing body of literature indicating strong selection biases towards particular features that can be explained by neither current selection goals nor the physical salience of potential targets.

3. Feature-based attention

Leaving aside the discussion whether attentional set towards a feature affects selection directly or whether it guides spatial attention to the feature-relevant locations, it is generally assumed that it is possible to direct attention to a specific feature or feature dimension at will. Indeed, most theories, such as Guided Search [9], Attentional Engagement [8], TVA [7], FBA [40], Contingent Capture [36] and Dimensional Weighting [41], adhere to the notion that observers can actively prepare themselves for the upcoming target by selectively enhancing those features (or dimensions) that define the target. For example, in a visual search task in which observers know that the target is going to be red, they actively prepare for the colour red, causing objects that are red to be prioritized in selection. In other words, top-down FBA causes a bias to those image components that are related to the target throughout the visual field.

This basic notion of top-down FBA is present in most contemporary theories of visual search. For example, the contingent capture hypothesis assumes that observers can set themselves on each trial for a particular colour [36]. According to Guided Search, a top-down feature set increases the salience of the relevant feature dimension (in this example: the feature ‘red’) so that attention is (primarily) guided to relevant features only [9,42]. Other theories claim a selection bias towards some type of short-term description of information that stipulates what is needed for the task at hand [8]. An attentional template ensures that stimuli that match the description are selected over those that do not match [7].

To investigate whether initial attentional selection can be set specifically for one feature or another, it is crucial that the feature under investigation is evidently present in the display. For example, when one wants to know whether attention can be selectively directed to a red object in a display, it is crucial that this red object is salient enough and not confusable with other objects that look like the target. Indeed, when investigating top-down control of FBA on early (feed-forward) processing, one has to design a task that taps this initial selection, and is not confounded by processes that occur after an object has been selected. For example, when searching serially through a natural scene or searching for an object that consists of a conjunction of basic features, focal spatial attention visits each object in turn, matching each visited object with a top-down template, deciding whether it is the target or not. This process is not about initial feature selection but refers to those processes that occur following selection (i.e. post-selection processes). Clearly, when considering this process, FBA (i.e. knowing the features of the object one is looking for) plays a large role as it helps in deciding whether the object selected is indeed the target.

To investigate FBA on initial selection, however, one has to ensure that the findings of various studies are not open to alternative interpretations involving post-selection processes. For example, Wolfe et al. [43] showed that in conjunction search, a word cue (e.g. a cue saying ‘black vertical’ or ‘big red’) instructing observers what to look for helped the search dramatically. This result is not surprising given that observers had to decide whether each item selected was indeed the ‘big red’ or ‘black vertical’. Clearly, these are post-selection processes (matching a selected object with a template) that do not address the issue of the efficiency of FBA (see also [44] for a similar result). Other studies using, for example, a partial report technique [22] also do not address the issue of initial selection as these effects are typically ascribed to post-selective processes involved in retrieval from short-term memory.

To study the role of FBA in initial selection, one has to use a task which addresses attentional modulation on early (feed-forward) vision while excluding later post-selectional modulations arising from massive recurrent top-down processing from extrastriate areas to primary visual areas. One such task is the feature singleton search task. In this task, the target is unique in a basic feature dimension (e.g. a red element surrounded by green elements) and therefore ‘pops out’ from the display. Pop-out detection has been attributed to the first stage of visual processing, and single-unit studies have implicated primary visual cortex in mediating bottom-up pop-out saliency computations [45].

(a). Evidence for feature-based attention

This section reviews the most important evidence for FBA both from behavioural and neuro-imaging studies.

(i). Behavioural studies

In the classic work of Treisman [46], observers had to search for a singleton with a unique colour or shape. These trial types were mixed and compared with blocks in which observers searched only for a unique shape or colour. The pure blocks were about 100 ms faster than the mixed blocks which led Treisman to conclude that knowing the dimension of the target helps pop-out search.

Müller et al. [41,47,48] also provided evidence that knowing the dimension one is looking for speeds up search. For example, Found & Müller [48] investigated search for singleton targets within and across stimulus dimensions. Observers had to search for three possible targets, which were all defined within one dimension (e.g. orientation) or were defined across dimensions (e.g. orientation, colour and size). They showed that the detection of a common right-tilted target was 60 ms slower in the cross-dimension condition relative to both the intra-dimension condition and the control condition. These (and other) findings led to the dimensional weighting account of Müller et al., which assumes a top-down mechanism ‘that modifies the processing system by allocating selection weight to the various dimensions that potentially define the target’ ([41], p. 1021).

The Guided Search model of Wolfe [9] is very similar to the Dimensional Weighting account except that it focuses on feature values (e.g. red, green, vertical) instead of feature dimensions (e.g. colour, shape). Wolfe et al. [42] conducted experiments similar to those of Treisman [46] and Müller et al. [41]. In one of the conditions of Wolfe et al., observers searched a whole block of trials for a red target between green non-targets (i.e. colour singleton) or for a vertical line between horizontal line segments (i.e. shape singleton). These blocked conditions were compared with mixed conditions consisting of blocks of trials in which the target could either be red, green, vertical or horizontal. Wolfe et al. explain these experiments in the same vein as Müller and colleagues: in a blocked condition in which the target is always the same, observers can put as much weight as possible on one feature (e.g. orientation), allowing for a strong signal to guide search. In a mixed condition, all features have some weight. When in the mixed condition the target happens to be an orientation singleton, there is a weaker signal to guide search and noise from other dimensions (colour and size) may slow search. Note that both in Müller's and Wolfe's accounts, top-down knowledge guides the search process, i.e. top-down knowledge influences the selection process of the feature.

Kumada [49] also explicitly examined FBA, addressing the question whether prior knowledge of a target feature dimension guides spatial attention to the target. Kumada showed that in visual search reaction times for blocks in which the target was defined within a single feature dimension were faster than in blocks in which the target varied across dimensions, a result similar to those of Müller et al. [41]. Kumada argued for knowledge-based dimensional weighting in which weights are assigned on the basis of explicit knowledge of the observer. He contrasts this with response-based weighting which is implicit priming of feature dimensions used for responding in the preceding trial.

Rossi & Paradiso [50] used a different approach to establish FBA. This study examined the effect of performing a foveal discrimination task on the sensitivity for detecting a near threshold Gabor patch in the periphery. The results showed that the sensitivity for the peripheral grating was dependent on the spatial frequencies and orientations that had to be discriminated in the centrally presented foveal task. The study provides clear evidence for FBA across the visual field.

A study by Saenz et al. [51] examined FBA for motion and colour. This study consisted of a dual task in which observers had to divide their attention across two spatially separate stimuli presented on either side of fixation. The results show that observers performed better when they had to perform their luminance discrimination task on separate stimuli sharing a common feature (same direction of motion or same colour) compared with opposing features. The results are consistent with a notion of FBA in which the attended feature tunes the response of cortical neurons throughout the visual field.

(ii). Neuro-imaging studies

The classic study of Corbetta et al. [52] used positron emission tomography (PET) to measure changes in cerebral blood flow when observers discriminated different features (shape, colour and velocity). Observers had to discriminate a stimulus change of either shape, colour or velocity (the selective attention condition), and this was compared with a condition in which a change could occur in any of the three feature attributes (divided attention). The critical finding was that discrimination sensitivity was higher in the selective attention condition than in the divided attention condition. The PET results showed that attention to a specific feature attribute (shape, colour, velocity) enhanced activity in the corresponding extrastriate cortex. FBA was inferred on the basis of a comparison of pure blocks (discriminate a change in one specific feature across a block of trials) with mixed blocks (discriminate a change in any of features across a block of trials).

In an fMRI study, Chawla et al. [53] manipulated the level of FBA by examining transient V4 and V5 responses to either motion or colour stimuli. Observers viewed a stationary monochromatic random dot display in which dots intermittently changed colour and motion. Before the visual display was presented, a cue instructed observers to attend to either the motion or the colour feature. In the motion condition, observers discriminated the slower dots from the faster dots; in the colour condition, they had to detect the pinker dots. It was demonstrated that dependent on the attentional set for either colour or motion there was an increase in baseline activity for motion and colour sensitive areas in extrastriate cortex. Chawla et al. argued that these baseline shifts reflect top-down expectation towards a particular stimulus feature. Even though this study used cues to instruct observers to attend to either the motion or colour feature in a truly top-down way, it should be realized that observers performed a series of trials in mini-blocks in which they performed either the colour or the motion task. In other words, during these mini-blocks observers always attended the same feature.

In an fMRI study by O'Craven et al. [54], observers viewed transparent stimuli consisting of a face and a house, one moving while the other was stationary. During a whole block of trials observers directed their attention to one stimulus attribute (the face, the house or the motion). The fMRI results showed that, depending on the attribute attended, there was an enhanced neural representation not only of the attribute attended but also of the other attribute of the same object. For example, if observers attended the motion of the face, there was enhanced processing both in the middle temporal/medial superior temporal (MT/MST) area (movement) and in the fusiform face area (faces). These findings suggest that attention to one feature may enhance the processing not only of that feature but also of other features of the whole object.

An fMRI study by Saenz et al. [55] investigated FBA to motion (exp. 1) and colour (exp. 2). On one side of fixation, there was a target field with overlapping fields of upward and downward moving dots. On the other, to-be-ignored, side, there were dots that moved consistently in one direction. Observers had to attend to either the upward or downward moving dots in the target field. The fMRI data showed that all visual areas responded more strongly to the ignored stimulus when the dots in the ignored stimulus field moved in the same direction as the attended dots in the target field. The same effect was found for attending colour (red or green) with stationary dots. The results demonstrate FBA, which is assumed to modulate the gain of cortical neurons tuned to the attended features.

Serences & Boynton [40] used multi-voxel pattern analysis (MVPA) in an fMRI study and showed that FBA (to one of two directions of motion) spreads across the visual field, even to regions that do not contain a stimulus. In this experiment, observers monitored two invisible apertures containing dots moving in two different directions (half the dots moved at 45° and the other half moved at 135°). Observers attended to one specific direction of motion in one of the apertures. The task was to detect the slowing of the attended dots. The crucial finding, obtained by means of MVPA, was that the effect of continuously monitoring a direction of motion on one side of the visual field spread across the visual field, even to unstimulated regions, providing strong evidence for feature-selective modulations.

Zhang & Luck [56] conducted an event-related potential (ERP) study showing that colour-based attention affected the feed-forward flow of information within 100 ms after stimulus onset. Crucially, this study shows that this type of FBA is independent of spatial attention. Observers fixated a central fixation point. On one side of fixation, a continuous stream of intermixed red and green dots was presented. Observers were instructed to attend either the red or the green dots and detect an occasional luminance decrement in the attended colour. On the other side of fixation, a stimulus field was presented in which there were flashed dots (probes). These task-irrelevant, intermittently flashing, probes were either all red or all green dots. This stimulus field was unattended. ERPs were measured to the onset of the ignored flashes. The results showed a larger P1 amplitude contralateral to the flashed stimuli for stimuli in the attended colour relative to stimuli presented in the unattended colour. The effect was seen within 100 ms following stimulus presentation. As colour-based attention modulated the very early component P1, even for unattended stimuli, Zhang & Luck concluded that their study was the first to demonstrate that FBA can affect early feed-forward sensory processing. Zhang & Luck interpreted their results in terms of early effects of top-down FBA. Even though this is unequivocal evidence for FBA, one design aspect should be highlighted: in their study, observers were instructed to attend to a particular colour (red or green) throughout a whole block of trials. The colour to attend never varied within a block of trials.

(b). Summary of the evidence for feature-based attention

The review of the literature regarding FBA indicates that the feature attended tunes the response of cortical neurons throughout the visual field. Owing to the feature-based selective modulations, observers are faster to respond to the feature, show increased sensitivity in detecting the feature across the visual field, and exhibit various increased neural responses in those brain areas that are associated with the attended feature. The review indicates that the studies use either of two methods to infer FBA. Several studies use a blocked design in which observers search consistently during a whole (pure) block of trials for a target of a specific feature (e.g. a red target). For example, the behavioural experiments of Treisman [46], Müller et al. [47], Wolfe et al. [42] and Kumada [49] have such a design. The neuro-imaging studies of Corbetta et al. [52], Chawla et al. [53] and Zhang & Luck [56] also have such a design. The other studies use a design in which observers are instructed to attend to an actual stimulus feature, and in many cases focus their attention on one of two available features (one direction of motion, for example). This design is seen in the behavioural experiments of Rossi & Paradiso [50] and Saenz et al. [51]. Some of the neuro-imaging studies also have this design, such as those of O'Craven et al. [54], Serences & Boynton [40] and Saenz et al. [55]. The crucial aspect to note is that in all experiments the observer attends the actual (physical) stimulus feature, either on the previous trial (in the blocked design) or during the current trial (e.g. focusing on one feature in the centre while the FBA effect spreads across the visual field).

Even though not all authors make it explicit that the FBA effects they reported are driven by a top-down attentional set, it is more or less implied in all studies. Some authors are very explicit. Wolfe et al. [42], for example, claim ‘top-down information makes a substantial contribution to reaction time (RT) even for the simplest of feature searches. Fully mixed RTs are about 80 ms slower than are blocked RTs’ (p. 485). Müller et al. [47] assume a top-down weighting mechanism allowing observers to put more weight on one or the other feature dimension. Zhang & Luck [56] indicate that the effect that they report provides clear evidence for top-down FBA leading to enhanced feed-forward transmission. In a review paper, Maunsell & Treue [57] compare FBA to top-down space-based attention, implicitly suggesting that FBA may possibly be controlled in the same way as space-based attention.

The question that needs to be addressed, however, is whether FBA effects as reported in all these studies are in fact under volitional, top-down control. In all designs used in these FBA experiments, it is possible that feature priming played a role. In the blocked designs, this is evident as observers search on each and every trial for the same target. But also in the designs in which observers focus on one of the features, priming may drive the cortical neurons throughout the visual field. So despite the suggestion in most FBA studies that FBA is a knowledge-based, expectancy-driven top-down effect, the effect may merely be the result of passive bottom-up priming [58,59]. Granted, the act of attending to a feature is top-down, yet subsequent tuning of the response of cortical neurons throughout the visual field may merely be the passive and mandatory consequence of attending the feature.
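The confound between blocked presentation and intertrial priming can be illustrated with a small sketch that counts how often the target feature repeats from one trial to the next. The three sequences below are hypothetical examples constructed for illustration, not data from any of the studies reviewed, and `repetition_rate` is an assumed helper name.

```python
def repetition_rate(targets):
    """Proportion of trials (from the second onwards) whose target
    feature is identical to that of the immediately preceding trial."""
    pairs = list(zip(targets, targets[1:]))
    return sum(prev == curr for prev, curr in pairs) / len(pairs)


# Hypothetical target sequences (illustrative only).
blocked = ["red"] * 8                     # pure block: same target on every trial
switch = ["red", "green"] * 4             # target alternates predictably
mixed = ["red", "green", "green", "red",  # target varies unpredictably
         "red", "red", "green", "red"]

for name, seq in [("blocked", blocked), ("switch", switch), ("mixed", mixed)]:
    print(name, round(repetition_rate(seq), 2))
```

In a pure block every trial is a repetition of the previous target feature, so any advantage over a mixed block is equally compatible with a top-down set and with passive priming from the preceding trial.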

Maljkovic & Nakayama [58] demonstrated the strength of bottom-up priming. They showed that it is impossible to counteract the priming of a previous trial. Intertrial facilitation could not be abolished or reduced even when participants knew exactly which target would be presented at the next trial (see also [60–62]; see also figure 3). Participants could not actively set themselves for a target that was different from that of the previous trial.

Figure 3.

Data from J. Theeuwes & E. van der Burg (2011, unpublished data). Observers had to either search consistently for a red or green singleton (repetition condition) or consistently switch between red and green (switch condition). In the repetition condition, selection was perfect; in the switch condition there was a significant congruency effect, suggesting that observers were not able to switch their search set from red to green and vice versa, even though they knew they had to switch from red to green, to red, to green, and so on, during the whole experiment.

4. Top-down feature-based attention

To really demonstrate that FBA is top-down and similar to endogenous spatial attention, a cueing procedure similar to the one commonly used in spatial attention studies should be applied. As outlined in the classic Posner-like studies, before display onset observers receive a central symbolic cue (e.g. an arrow, or a word ‘left’) that indicates the likely location of the upcoming target. For example, the centrally presented arrow may indicate, in 80% of trials, the location where the target will appear. A similar procedure should be applied to FBA. In other words, before each trial, the likely feature to be searched for should be presented as a cue.

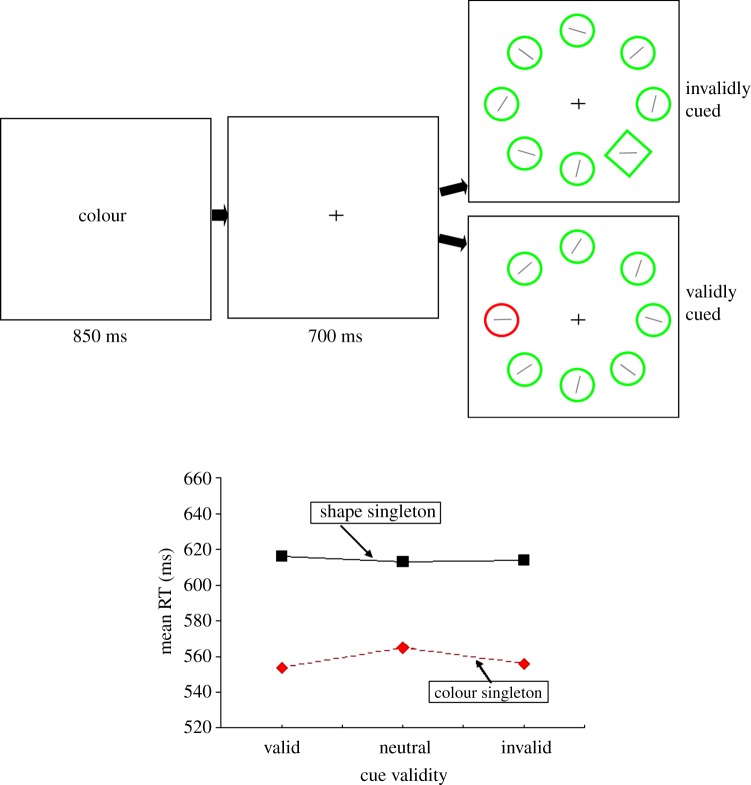

Among the first studies to test the viability of FBA in this way was a study by Theeuwes et al. [63]. In this study, observers were cued with a cue that had a validity of 83% about the likely feature property of the upcoming target singleton (figure 1). There was 1.5 s to optimally prepare for the feature that would be the target of the upcoming trial. For example, as shown in figure 1, observers received the word ‘colour’ as a cue and knew with 83% certainty that the target line segment (horizontal or vertical) they were looking for would be presented within the red circle. In 17% of the trials, the target line segment would appear in the shape singleton (the green diamond). In the neutral condition, no information about the property of the target singleton was provided. As is clear from the data, providing this information has no effect on the efficiency of target selection. Knowing whether the target is red or a diamond did not improve performance. These results suggest that when one cues in a similar way as is typically done in the endogenous spatial cueing paradigm, FBA appears to be ineffective. There is no evidence that it is possible to tune the cortical neurons throughout the visual field by endogenously preparing for the upcoming feature (see also [62]).

Figure 1.

Stimuli and data adapted from Theeuwes et al. [63]. The cue indicated with a validity of 83% the likely target singleton dimension for the upcoming trial (the word cue ‘shape’, ‘colour’ or ‘neutral’). Observers responded to the orientation of the line segment inside the singleton, which was either a pop-out red circle or a pop-out green diamond. The RT data show that observers were faster to respond to the colour singleton than to the shape singleton. Importantly, however, the validity of the cue had no effect on responding. These data indicate that top-down FBA is not effective in guiding search.

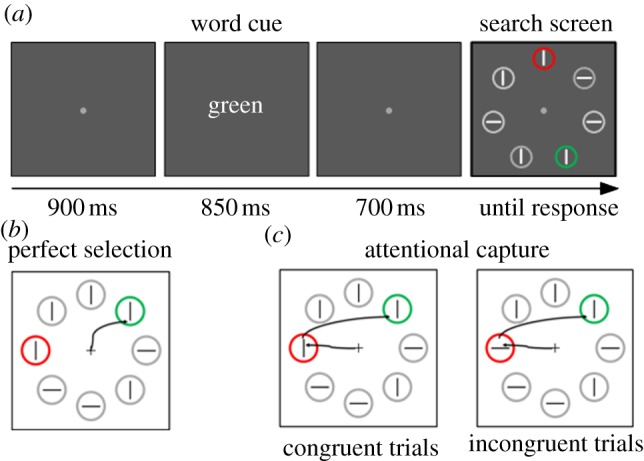

In a more recent study, we tried to push the notion of FBA further [61]. This study employed a very simple visual search task with displays in which two salient singletons were simultaneously present (for example, a red and a green target circle among a number of grey circles). Within all circles of the display there were vertical or horizontal line segments, and observers responded to the orientation of the line segment located within one of the uniquely coloured target singletons. In each trial, before the search display was presented, observers received a cue telling them which circle to select in the upcoming trial. This cue was a simple word, ‘red’ or ‘green’, i.e. whatever colour was the target colour in the upcoming trial. The interval between cue and search display was long enough (about 1.5 s) for observers to set their featural attentional bias towards the relevant colour (see figure 2 for the paradigm). The cue was 100% valid, so there was every reason for an observer to use that cue. In essence, the task was similar to a location cueing task, except that observers needed to set themselves in each trial to prepare for a particular colour.

Figure 2.

(a) Observers were asked to search for one of two colour singletons and respond to the line segment inside the one that was indicated by the cue (in this example: search for the green singleton). (b) Perfect selection: observers direct attention only to the green singleton and respond to the line segment. (c) Attention is captured by the red irrelevant singleton. The line inside the irrelevant distractor singleton could be congruent (e.g. both vertical) or incongruent (e.g. one horizontal and one vertical) with the orientation of the line segment inside the (cued) target singleton. If a congruency effect is found, one can only conclude that at some point attention was directed to the irrelevant singleton (adapted from [61]).

To determine the efficiency of selection, we used a technique known as ‘the identity intrusion technique’ first used by Theeuwes & Burger [64]. The underlying notion is that if observers are perfectly able to select only the target singleton (the singleton indicated by the cue) and respond to the line segment inside it, then the identity of the line segment positioned in the distractor singleton (the other popping-out element) should not affect responding. If, however, selection is not perfect one expects that, at least in a subset of trials, attention will be captured by the distractor singleton before attention is directed to the target singleton. If that is the case, one expects a congruency effect (see figure 2 for a further explanation). In other words, if the line segment in the distractor singleton is identical to that inside the target singleton (i.e. congruent), observers should respond faster than when the line segments are incongruent (e.g. a horizontal line segment inside the target singleton and a vertical line segment in the distractor singleton), in which case observers should be relatively slow (see also [32,64–66]).
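The logic of the congruency measure can be made concrete with a minimal sketch. The function name, the trial format and the RT values below are purely illustrative assumptions and are not taken from any of the studies discussed; the sketch only shows how a congruency effect would be computed as the difference in mean RT between incongruent and congruent trials.

```python
from statistics import mean

def congruency_effect(trials):
    """Return mean RT (ms) on incongruent trials minus mean RT on congruent trials.

    Each trial is a dict with the hypothetical keys 'rt' and 'congruent',
    where 'congruent' states whether the line in the distractor singleton
    matched the line in the target singleton.
    """
    congruent = [t["rt"] for t in trials if t["congruent"]]
    incongruent = [t["rt"] for t in trials if not t["congruent"]]
    return mean(incongruent) - mean(congruent)

# Made-up RTs for illustration only (not data from any experiment).
trials = [
    {"rt": 520, "congruent": True},
    {"rt": 540, "congruent": True},
    {"rt": 570, "congruent": False},
    {"rt": 590, "congruent": False},
]

effect = congruency_effect(trials)
print(f"Congruency effect: {effect} ms")
```

Under perfect selection the value would be close to zero; a reliably positive value would indicate that the distractor singleton was processed in at least a subset of trials.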

Even though the cue was 100% valid and observers had plenty of time to set themselves for the colour of the upcoming target, a clear congruency effect was found, suggesting that selection was not perfect. Finding a congruency effect suggests that, at least in a subset of trials, attention was erroneously directed towards the irrelevant colour singleton before it was directed to the target. Theeuwes & Van der Burg [61] concluded that top-down feature-based selection is limited. Because the two singletons were about equally salient (there was no reliable difference in the absolute RTs between the various colours in the experiments), even the smallest increase in top-down weight on the target colour should have tipped the balance in favour of the target and should have selectively biased attention only to the target singleton. Their results showed that this top-down bias was not able to prevent attentional capture, i.e. the erroneous processing of the irrelevant distractor singleton.

In subsequent studies, Theeuwes and Van der Burg further tested the limits of top-down FBA. In one study, observers were told that they had to switch each trial from red to green, then to red, then to green, etc. Observers were also reminded in each trial by a word cue (the same as in figure 2) giving the colour of search. Even though observers knew that they had to switch the attentional set throughout the whole experiment, they were unable to prevent attentional capture by the irrelevant singleton as is evidenced by the large congruency effect (see figure 3, red line). Crucially, when the attentional set did not switch (red, red, red, or green, green, green) observers were not only fast, but also there was no evidence for a congruency effect, suggesting that search was perfect and no attentional capture was observed.

The finding that there is no attentional capture by the irrelevant singleton when the colour remained the same over the whole block of trials is important, because it indicates that when the target feature stays the same across a block of trials, FBA is effective in guiding attention. This is consistent with the FBA studies that we discussed earlier that all used a blocked design to infer effective FBA. In this study, FBA prevents attentional capture by an irrelevant singleton.

5. Feature-based attention and history

The studies discussed above suggest that feature-selective attention is not efficient when observers are asked to set themselves in each trial for the upcoming target feature (red, green, etc.). Clearly, the congruency effect indicates that it was impossible for observers to prevent, in a top-down way, attentional capture by the irrelevant singleton. However, as is clear from figure 3, when the target feature (in this case the colour) is repeated from trial to trial, selection is perfect and there is no capture by the irrelevant singleton. These findings clearly show the effectiveness of FBA; yet this effect may not be under top-down control but instead may be driven by what happened in the previous trial. This implies that feature-based selection may be nothing other than feature priming, driven by what was selected in the previous trial. This intertrial priming effect is likely to be automatic and bottom-up driven [61,62,67,68].

In subsequent studies [61,62], we showed that this effect works not only through intertrial priming but also through cueing, i.e. by showing the search feature as a cue. Just like intertrial priming, this results in perfect selection (no congruency effect). Figure 4 gives an example of this paradigm. The results indicated that when the search object is shown beforehand, observers are able to select the relevant feature (in this example, the red circle) without interference from the irrelevant distractor (in this example, the green circle).

Figure 4.

Observers were asked to search for one of two colour singletons and respond to the line segment inside the one that was indicated by the cue (in this example: search for the colour singleton). In this particular task, observers are able to select the circle indicated by the cue without any interference of the irrelevant distractor (adapted from [61]).

An important question that needs to be addressed is whether this (intertrial) priming effect is indeed automatic and bottom-up. One could, for example, argue that a symbolic cue (as in figure 4) contains more information than a word cue (as in figure 2), which could explain the difference in cueing efficiency. A few studies have addressed this question directly. In one study [63], we also cued the upcoming target by means of a symbolic cue, but instead of being 100% predictive (as in the experiment described above) the cue had a validity of 83%. In that study, we did not examine selectivity between two colours but instead between a colour and a shape (e.g. red between green versus a diamond between circles). We looked at cue validity effects and showed that a matching cue (a diamond as a cue and a diamond as a target) resulted in faster reaction times than when the cue was invalid (a diamond as cue and a red circle as a target). Crucially, we repeated this experiment and made the cue counterpredictive. For example, when a red circle was shown as a cue, there was a high chance (83%) that the target would be a green diamond. In other words, a red circle as a cue indicated that observers should prepare for a green diamond, because in the majority of trials the target was a green diamond. The results indicated that even when the cue was counterpredictive it had an effect on the efficiency of selection. For example, when a red circle was presented as a cue (which indicated that a green diamond would be presented as a target in 83% of the trials), the cue (the red circle) still had an effect on RT such that observers were faster when the ‘unlikely’ red target singleton was presented. The same was true for the reverse (green diamond cue, red circle target). Importantly, an across-experiment analysis showed that the RT benefits owing to the cue were the same regardless of whether the cue was counterpredictive (17%) or highly predictive (83%).
These findings indicate that the mere processing of the cue (regardless of its predictive validity) results in processing benefits (see also [62] for a similar effect). It is therefore unlikely that this cueing effect is the result of top-down volitional control as the predictive value had basically no effect. As such, this confirms the idea that priming is automatic, basically beyond volitional control [58,69].
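The across-experiment comparison described above can be sketched as follows. This is an illustrative sketch under stated assumptions: the data structure, the function name `cue_match_benefit` and all RT values are hypothetical and are not the values reported in [63]. The sketch computes, for each experiment, how much faster responses are when the target matches the cue than when it does not, irrespective of how likely that match was.

```python
from statistics import mean

def cue_match_benefit(trials):
    """Mean RT (ms) when the target mismatches the cue minus mean RT when it matches."""
    match = [rt for rt, matches_cue in trials if matches_cue]
    mismatch = [rt for rt, matches_cue in trials if not matches_cue]
    return mean(mismatch) - mean(match)

# Hypothetical (rt, target_matches_cue) pairs; numbers are made up for illustration.
experiments = {
    # Cue matches the target on most trials (predictive cue).
    "predictive": [(500, True), (510, True), (540, False), (550, False)],
    # Cue matches the target on only a minority of trials (counterpredictive cue).
    "counterpredictive": [(505, True), (515, True), (545, False), (555, False)],
}

benefits = {name: cue_match_benefit(trials) for name, trials in experiments.items()}
for name, benefit in benefits.items():
    print(f"{name}: benefit of a matching cue = {benefit} ms")
```

If the benefit were driven by volitional use of the cue, it should shrink or reverse when the cue is counterpredictive; a benefit of similar size in both experiments is the pattern one would expect from automatic priming by the cue.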

Other studies investigating the role of priming in serial visual search (e.g. [70]) showed that priming did not affect the search slopes but only the overall reaction times, suggesting that priming does not ‘guide’ search. As noted, it is hard to reconcile findings of serial search (i.e. Kristjansson et al. [70] involved conjunction search) with the present account, as in serial search post-selection processes (verifying that the object selected is indeed the target) play a major role. Other studies have also shown that when search becomes difficult, priming may play a less dominant role than strategy-based top-down search (e.g. [71]).

A recent study by Leonard & Egeth [72] illustrates the role of post-selection processes in a study that examined the role of top-down feature and priming in visual search. Similar to the studies of Theeuwes and colleagues, observers received a cue telling them that the target of the upcoming trial would be either red or green. There was also a non-informative condition (the word ‘either’). The search display consisted of three, five or seven items, one of which had a unique colour constituting the target. The results show that the effect of foreknowledge was large when there were very few items in the display (e.g. three). However, with many items in the display (e.g. seven) preknowledge about the upcoming target feature had a negligible effect above and beyond intertrial priming. These results illustrate how advance feature knowledge can contribute to visual search: only when there is ambiguity about the identity of the target, can feature-based preknowledge have an effect (see also [73]). As outlined before, such an effect is likely to be post-selectional as it reduces uncertainty about the identity of an object after it has been selected (e.g. is it the target or not?). However, consistent with the current view, when there is no ambiguity about the identity of the target, advance knowledge above and beyond priming does not help much in the initial selection of the target.

(a). A special case: feature-based attention and contingent capture

An influential notion concerning (feature-based) attentional selection is the contingent capture hypothesis [36], which states that selection towards a particular stimulus feature critically depends, at any given time, on the perceptual goals held by the observer. In their original series of experiments, Folk et al. [36] used a spatial cueing paradigm in which a cue display was followed in rapid succession by a target display. Typically, there were four elements in the target display, and observers were required to identify the unique element. In different blocks of trials, observers searched either for a uniquely coloured red item surrounded by three white items (colour condition) or for the only element that was presented with abrupt onset (onset condition). When searching for one of these targets, there were two types of cue displays preceding the search display with a stimulus-onset asynchrony (SOA) of 150 ms. The cue was defined either by colour (in which one location was surrounded by red dots and the other three locations were surrounded by white dots) or by onset (in which one location was surrounded by an abrupt onset of white dots and the remaining locations remained empty). In the critical experiment, the cue that preceded the search display could be either valid (i.e. it appeared at the same location as the target) or invalid (i.e. it appeared at a different location than the target). Among the four potential target locations, the cue was valid in 25% of the trials and invalid in the remaining trials.

The crucial finding was that when observers were looking for a target defined as an abrupt onset, observers were fast on valid trials and relatively slow on invalid trials, but only when the cue was defined by an onset too. The same was found for the conditions in which they were looking for a target defined by colour: observers were fast on valid trials and relatively slow on invalid trials, but only when the cue was defined by colour. When the cue was defined by the other feature (i.e. colour when looking for onset or the other way around), no effect of cue validity was found. The critical finding of Folk et al.'s studies was that only when the search display was preceded by a to-be-ignored featural singleton (the ‘cue’) that matched the feature for which observers were searching, did the cue capture attention.

These findings have been interpreted as evidence that a top-down attentional set towards particular features determines the selection priority: when set for a particular feature, one will select each feature that matches this top-down set; features that do not match the top-down attentional set will simply be ignored. In effect, the contingent capture notion is a prime representative of an FBA theory, as this notion claims that a top-down set towards a feature biases attention towards those image components that are related to the target throughout the visual field.

Even though the contingent capture notion has been very influential as a hypothesis of top-down attentional control, one aspect of the design of these experiments has been greatly overlooked. Crucially, in all contingent capture-like experiments, observers always search for a particular target feature throughout a whole block of trials. In other words, the attentional set is fixed over a block of trials, which gives rise to massive intertrial priming effects. If the attentional set for feature singletons as observed in the Folk et al.-like experiments is indeed the result of priming, then it is questionable whether this attentional set is top-down in nature.

To test whether contingent capture is truly driven by a top-down attentional set (as Folk et al. assume), Belopolsky et al. [69] examined whether observers were able to endogenously set themselves to search for a particular target feature in each and every trial. Belopolsky et al. [69] used exactly the same spatial cueing paradigm as Folk et al. [36]. However, instead of keeping the target feature that observers had to search for fixed over a whole block of trials (as was originally done in contingent capture experiments), observers had to adopt a particular top-down set before the start of each single trial. In other words, observers were cued at the beginning of each trial to look for either a unique colour or the unique onset. If, as claimed by the contingent capture hypothesis, a top-down attentional set determines which property captures attention, then one would expect that only properties that match the top-down set would capture attention. Belopolsky et al. showed that even though observers knew the target feature of the upcoming trial, both relevant and irrelevant feature properties captured attention. In other words, there was no sign of contingent capture; instead both the relevant feature cue that matched the target and the irrelevant feature cue summoned attention.

For the current discussion, Belopolsky et al. [69] made another important observation. An intertrial analysis of their data showed that the feature which was the target in the previous trial determined to a large extent the feature that would be selected in the current trial, suggesting a large role for intertrial priming. Because in the typical Folk et al. paradigm observers search a whole block for a particular target, it is likely that intertrial priming drove the contingent capture effect instead of the assumed top-down set. Belopolsky et al. suggested that the concept of ‘attentional set’ for a particular target feature as currently proposed by the contingent capture hypothesis is not top-down in origin. When the search feature is fixed, voluntary top-down control does not have to be present in a continuous fashion in each trial during the search task. Once the target parameters defined by instruction have led to a correct response, attentional selection can carry on based on bottom-up intertrial priming. This means that voluntary control over selection is not needed once the attentional set has been established after the first few experiences with the target.
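An intertrial analysis of the kind referred to above can be sketched in a few lines. The sketch is illustrative only: the trial format, the function name `split_by_repetition` and the RT values are hypothetical assumptions and do not reproduce the analysis or the data of Belopolsky et al. [69]. It sorts each trial according to whether its target feature repeats or switches relative to the preceding trial and then compares mean RTs.

```python
from statistics import mean

def split_by_repetition(trials):
    """Sort RTs by whether the target feature repeats the previous trial's feature.

    `trials` is an ordered list of dicts with the hypothetical keys
    'feature' and 'rt'. The first trial has no predecessor and is skipped.
    """
    repeat, switch = [], []
    for previous, current in zip(trials, trials[1:]):
        if current["feature"] == previous["feature"]:
            repeat.append(current["rt"])
        else:
            switch.append(current["rt"])
    return repeat, switch

# Made-up trial sequence for illustration only.
sequence = [
    {"feature": "colour", "rt": 500},
    {"feature": "colour", "rt": 480},  # repeat
    {"feature": "onset", "rt": 560},   # switch
    {"feature": "onset", "rt": 490},   # repeat
    {"feature": "colour", "rt": 550},  # switch
]

repeat_rts, switch_rts = split_by_repetition(sequence)
priming_benefit = mean(switch_rts) - mean(repeat_rts)
print(f"Intertrial priming benefit: {priming_benefit} ms")
```

In a blocked design every trial after the first falls in the repeat category, which is why such a design cannot separate a top-down set from intertrial priming.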

6. Conclusions

FBA is a powerful mechanism that allows us to enhance the representation of image characteristics throughout the visual field. FBA is explained in terms of the tuning of the responses of cortical neurons (increasing the gain of neurons) throughout the visual field. When searching for a particular stimulus feature, it is particularly useful when relevant features are enhanced throughout the visual field. Even though it is suggested implicitly and explicitly that this feature-based tuning is under top-down (volitional) control, we have provided evidence that the FBA effects may not be as endogenous as commonly assumed. Our review of the literature indicates that all behavioural and neuro-imaging studies suffer from the shortcoming that they cannot rule out an effect of priming, i.e. the notion that merely attending to a feature enhances the processing of that feature across the visual field, an effect that may occur in an automatic bottom-up way. Our own studies that have investigated FBA by means of a cueing paradigm (similar to endogenous spatial cueing) demonstrate that FBA cannot occur purely on the basis of a top-down set (e.g. prepare for red). Instead, the actual processing of the stimulus (as a cue or as a target in a previous trial) is needed to allow the tuning of responses throughout the visual field. This latter claim is consistent with a recent study by Kristjansson et al. [74] who showed that observers’ active processing is necessary to obtain a priming effect. Future studies that take this shortcoming into account will reveal the boundary conditions of true top-down FBA.

References

- 1.Eriksen CW, Hoffman J. 1973. The extent of processing of noise elements during selective encoding from visual displays. Percept. Psychophys. 40, 225–240 (doi:10.3758/BF03211502)

- 2.Hoffman JE. 1975. Hierarchical stages in the processing of visual information. Percept. Psychophys. 18, 348–354 (doi:10.3758/BF03211211)

- 3.Posner MI, Snyder CRR, Davidson BJ. 1980. Attention and the detection of signals. J. Exp. Psychol. Gen. 109, 160–174 (doi:10.1037/0096-3445.109.2.160)

- 4.Posner MI. 1980. Orienting of attention. Q. J. Exp. Psychol. 32, 3–25 (doi:10.1080/00335558008248231)

- 5.Theeuwes J. 2010. Top-down and bottom-up control of visual selection. Acta Psychol. 135, 77–99 (doi:10.1016/j.actpsy.2010.02.006)

- 6.Lappin JS, Uttal WR. 1976. Does prior knowledge facilitate the detection of visual targets in random noise? Percept. Psychophys. 20, 367–374 (doi:10.3758/BF03199417)

- 7.Bundesen C. 1990. A theory of visual attention. Psychol. Rev. 97, 523–547 (doi:10.1037/0033-295X.97.4.523)

- 8.Duncan J, Humphreys GW. 1989. Visual search and stimulus similarity. Psychol. Rev. 96, 433–458 (doi:10.1037/0033-295X.96.3.433)

- 9.Wolfe JM. 1994. Guided search 2.0: a revised model of visual search. Psychon. Bull. Rev. 1, 202–238 (doi:10.3758/BF03200774)

- 10.Carrasco M. 2011. Visual attention. Vis. Res. 51, 1484–1525 (doi:10.1016/j.visres.2011.04.012)

- 11.LaBerge D. 1981. Automatic information processing: a review. In Attention and performance IX (eds Long JB, Baddeley AD), pp. 173–186. Hillsdale, NJ: Erlbaum

- 12.Tsal Y, Lamy D. 2000. Attending to an object's color entails attending to its location: support for location-special views of visual attention. Percept. Psychophys. 62, 960–968 (doi:10.3758/BF03212081)

- 13.Cave KR, Pashler H. 1995. Visual selection mediated by location: selecting successive visual objects. Percept. Psychophys. 57, 421–432 (doi:10.3758/BF03213068)

- 14.Kim M-S, Cave KR. 1995. Spatial attention in visual search for features and feature conjunctions. Psychol. Sci. 6, 376–380 (doi:10.1111/j.1467-9280.1995.tb00529.x)

- 15.Tsal Y, Lavie N. 1988. Attending to color and shape: the special role of location in selective visual processing. Percept. Psychophys. 44, 15–21 (doi:10.3758/BF03207469)

- 16.Theeuwes J. 1989. Effects of location and form cueing on the allocation of attention in the visual field. Acta Psychol. 72, 177–192 (doi:10.1016/0001-6918(89)90043-7)

- 17.Brawn PT, Snowden RJ. 1999. Can one pay attention to a particular color? Percept. Psychophys. 61, 860–873 (doi:10.3758/BF03206902)

- 18.Humphreys GW. 1981. Flexibility of attention between stimulus dimensions. Percept. Psychophys. 30, 291–302 (doi:10.3758/BF03214285)

- 19.Vierck E, Miller JO. 2005. Direct selection by color for visual encoding. Percept. Psychophys. 67, 483–494 (doi:10.3758/BF03193326)

- 20.Sperling G. 1960. The information available in brief presentation. Psychol. Monogr. 74, 1–29 (doi:10.1037/h0093759)

- 21.von Wright JM. 1970. On selection in visual immediate memory. Acta Psychol. 33, 280–292 (doi:10.1016/0001-6918(70)90140-X)

- 22.Brouwer RFT, van der Heijden AHC. 1996. Identity and position: dependence originates from independence. Acta Psychol. 95, 215–237 (doi:10.1016/S0001-6918(96)00042-X)

- 23.Bundesen C, Pedersen LF, Larsen A. 1984. Measuring efficiency of selection from briefly exposed visual displays: a model for partial report. J. Exp. Psychol. Hum. Percep. Perform. 10, 329–339 (doi:10.1037/0096-1523.10.3.329)

- 24.van der Heijden AHC. 1993. The role of position in object selection in vision. Psychol. Res. 56, 44–58 (doi:10.1007/BF00572132)

- 25.Shih SI, Sperling G. 1996. Is there feature-based attentional selection in visual search? J. Exp. Psychol. Hum. Percep. Perform. 22, 758–779 (doi:10.1037/0096-1523.22.3.758)

- 26.Moore CM, Egeth H. 1998. How does feature-based attention affect visual processing? J. Exp. Psychol. Hum. Percep. Perform. 24, 1296–1310 (doi:10.1037/0096-1523.24.4.1296)

- 27.Reppa I, Schmidt WC, Leek EC. 2012. Successes and failures in obtaining attentional object-based cueing effects. Attent. Percept. Psychophys. 74, 43–69 (doi:10.3758/s13414-011-0211-x)

- 28.Corbetta M, Shulman GL. 2002. Control of goal-directed and stimulus-driven attention in the brain. Nat. Neurosci. 3, 201–215 (doi:10.1038/ni0302-201)

- 29.Van der Stigchel S, Belopolsky AV, Peters JC, Wijnen JG, Meeter M, Theeuwes J. 2009. The limits of top-down control of visual attention. Acta Psychol. 132, 201–212 (doi:10.1016/j.actpsy.2009.07.001)

- 30.Theeuwes J. 1991. Cross-dimensional perceptual selectivity. Percept. Psychophys. 50, 184–193 (doi:10.3758/BF03212219)

- 31.Theeuwes J. 1992. Perceptual selectivity for color and form. Percept. Psychophys. 51, 599–606 (doi:10.3758/BF03211656)

- 32.Schreij D, Owens C, Theeuwes J. 2008. Abrupt onsets capture attention independent of top-down control settings. Percept. Psychophys. 70, 208–218 (doi:10.3758/PP.70.2.208)

- 33.Theeuwes J, Kramer AF, Hahn S, Irwin DE. 1998. Our eyes do not always go where we want them to go: capture of the eyes by new objects. Psychol. Sci. 9, 379–385 (doi:10.1111/1467-9280.00071)

- 34.Theeuwes J, Kramer AF, Hahn S, Irwin DE, Zelinsky GJ. 1999. Influence of attentional capture on oculomotor control. J. Exp. Psychol. Hum. Percep. Perform. 25, 1595–1608 (doi:10.1037/0096-1523.25.6.1595)

- 35.Bacon WF, Egeth HE. 1994. Overriding stimulus-driven attentional capture. Percept. Psychophys. 55, 485–496 (doi:10.3758/BF03205306)

- 36.Folk CL, Remington RW, Johnston JC. 1992. Involuntary covert orienting is contingent on attentional control settings. J. Exp. Psychol. Hum. Percep. Perform. 18, 1030–1044 (doi:10.1037/0096-1523.18.4.1030)

- 37.Jonides J. 1981. Voluntary versus automatic control over the mind's eye's movement. In Attention and performance IX (eds Long JB, Baddeley AD), pp. 187–203. Hillsdale, NJ: Erlbaum

- 38.Yantis S, Jonides J. 1990. Abrupt visual onsets and selective attention: voluntary versus automatic allocation. J. Exp. Psychol. Hum. Percep. Perform. 16, 121–134 (doi:10.1037/0096-1523.16.1.121)

- 39.Awh E, Belopolsky AV, Theeuwes J. 2012. Top-down versus bottom-up attentional control: a failed theoretical dichotomy. Trends Cogn. Sci. 16, 437–443 (doi:10.1016/j.tics.2012.06.010)

- 40.Serences JT, Boynton GM. 2007. Feature-based attentional modulations in the absence of direct visual stimulation. Neuron 55, 301–312 (doi:10.1016/j.neuron.2007.06.015)

- 41.Müller HJ, Reimann B, Krummenacher J. 2003. Visual search for singleton feature targets across dimensions: stimulus- and expectancy-driven effects in dimensional weighting. J. Exp. Psychol. Hum. Percep. Perform. 29, 1021–1035 (doi:10.1037/0096-1523.29.5.1021)

- 42.Wolfe JM, Butcher SJ, Lee C, Hyle M. 2003. Changing your mind: on the contributions of top-down and bottom-up guidance in visual search for feature singletons. J. Exp. Psychol. Hum. Percep. Perform. 29, 483–502 (doi:10.1037/0096-1523.29.2.483)

- 43.Wolfe JM, Horowitz TS, Kenner N, Hyle M, Vasan N. 2004. How fast can you change your mind? The speed of top-down guidance in visual search. Vis. Res. 44, 1411–1426 (doi:10.1016/j.visres.2003.11.024)

- 44.Wilschut A, Theeuwes J, Olivers CNL. 2013. The time it takes to turn a memory into a template. J. Vis. 13, 8 (doi:10.1167/13.3.8)

- 45.Nothdurft HC, Gallant JL, Van Essen DC. 1999. Response modulation by texture surround in primate area V1: correlates of ‘popout’ under anesthesia. Vis. Neurosci. 16, 15–34 (doi:10.1017/S0952523899156189)

- 46.Treisman A. 1988. Features and objects: the fourteenth Bartlett memorial lecture. Q. J. Exp. Psychol. 40A, 201–237 (doi:10.1080/02724988843000104)

- 47.Müller HJ, Heller D, Ziegler J. 1995. Visual search for singleton feature targets within and across feature dimensions. Percept. Psychophys. 57, 1–17 (doi:10.3758/BF03211845)

- 48.Found A, Müller HJ. 1996. Searching for unknown feature targets on more than one dimension: investigating a ‘dimension-weighting’ account. Percept. Psychophys. 58, 88–101 (doi:10.3758/BF03205479) [DOI] [PubMed] [Google Scholar]

- 49. Kumada T. 1999. Limitations in attending to a feature value for overriding stimulus-driven interference. Percept. Psychophys. 61, 61–79 (doi:10.3758/BF03211949)

- 50. Rossi AF, Paradiso MA. 1995. Feature-specific effects of selective visual attention. Vis. Res. 35, 621–634 (doi:10.1016/0042-6989(94)00156-G)

- 51. Saenz MT, Buracas GT, Boynton GM. 2003. Global feature-based attention for motion and color. Vis. Res. 43, 629–637 (doi:10.1016/S0042-6989(02)00595-3)

- 52. Corbetta M, Miezin FM, Dobmeyer S, Shulman GL, Petersen SE. 1990. Attentional modulation of neural processing of shape, color, and velocity in humans. Science 248, 1556–1559 (doi:10.1126/science.2360050)

- 53. Chawla D, Rees G, Friston KJ. 1999. The physiological basis of attentional modulation in extrastriate visual areas. Nat. Neurosci. 2, 671–676 (doi:10.1038/10230)

- 54. O'Craven K, Downing P, Kanwisher N. 1999. fMRI evidence for objects as the units of attentional selection. Nature 401, 584–587 (doi:10.1038/44134)

- 55. Saenz MT, Buracas GT, Boynton GM. 2002. Global effects of feature-based attention in human visual cortex. Nat. Neurosci. 5, 631–632 (doi:10.1038/nn876)

- 56. Zhang W, Luck SJ. 2009. Feature-based attention modulates feedforward visual processing. Nat. Neurosci. 12, 24–25 (doi:10.1038/nn.2223)

- 57. Maunsell JH, Treue S. 2006. Feature-based attention in visual cortex. Trends Neurosci. 29, 317–322 (doi:10.1016/j.tins.2006.04.001)

- 58. Maljkovic V, Nakayama K. 1994. Priming of pop-out. I. Role of features. Mem. Cogn. 22, 657–672 (doi:10.3758/BF03209251)

- 59. Maljkovic V, Nakayama K. 1996. Priming of pop-out. II. The role of position. Percept. Psychophys. 58, 977–991 (doi:10.3758/BF03206826)

- 60. Theeuwes J, Van der Burg E. 2008. The role of cueing in attentional capture. Vis. Cogn. 16, 232–247 (doi:10.1080/13506280701462525)

- 61. Theeuwes J, Van der Burg E. 2011. On the limits of top-down control. Attent. Percept. Psychophys. 73, 2092–2103 (doi:10.3758/s13414-011-0176-9)

- 62. Theeuwes J, Van der Burg E. 2007. The role of spatial and nonspatial information in visual selection. J. Exp. Psychol. Hum. Percept. Perform. 33, 1335–1351 (doi:10.1037/0096-1523.33.6.1335)

- 63. Theeuwes J, Reimann B, Mortier K. 2006. Visual search for featural singletons: no top-down modulation, only bottom-up priming. Vis. Cogn. 14, 466–489 (doi:10.1080/13506280500195110)

- 64. Theeuwes J, Burger R. 1998. Attentional control during visual search: the effect of irrelevant singletons. J. Exp. Psychol. Hum. Percept. Perform. 24, 1342–1353 (doi:10.1037/0096-1523.24.5.1342)

- 65. Theeuwes J. 1995. Perceptual selectivity for color and form: on the nature of the interference effect. In Converging operations in the study of visual attention (eds Kramer AF, Coles MGH, Logan GD), pp. 297–314. Washington, DC: American Psychological Association.

- 66. Schreij D, Theeuwes J, Olivers CNL. 2010. Irrelevant onsets cause inhibition of return regardless of attentional set. Attent. Percept. Psychophys. 72, 1725–1729 (doi:10.3758/APP.72.7.1725)

- 67. Schreij D, Theeuwes J, Olivers CNL. 2010. Abrupt onsets capture attention independent of top-down control settings. II. Additivity is no evidence for filtering. Attent. Percept. Psychophys. 72, 672–682 (doi:10.3758/APP.72.3.672)

- 68. Pinto Y, Olivers CNL, Theeuwes J. 2005. Target uncertainty does not lead to more distraction by singletons: intertrial priming does. Percept. Psychophys. 67, 1354–1361 (doi:10.3758/BF03193640)

- 69. Belopolsky A, Schreij D, Theeuwes J. 2010. What is top-down about contingent capture? Attent. Percept. Psychophys. 72, 326–341 (doi:10.3758/APP.72.2.326)

- 70. Kristjánsson Á, Wang D, Nakayama K. 2002. The role of priming in conjunctive visual search. Cognition 85, 37–52 (doi:10.1016/S0010-0277(02)00074-4)

- 71. Lamy D, Zivony A, Yashar A. 2011. The role of search difficulty in intertrial feature priming. Vis. Res. 51, 2099–2109 (doi:10.1016/j.visres.2011.07.010)

- 72. Leonard CJ, Egeth HE. 2008. Attentional guidance in singleton search: an examination of top-down, bottom-up, and intertrial factors. Vis. Cogn. 16, 1078–1091 (doi:10.1080/13506280701580698)

- 73. Meeter M, Olivers C. 2006. Intertrial priming stemming from ambiguity: a new account of priming in visual search. Vis. Cogn. 13, 202–222 (doi:10.1080/13506280500277488)

- 74. Kristjánsson Á, Saevarsson S, Driver J. 2013. The boundary conditions of priming of visual search: from passive viewing through task-relevant working memory load. Psychon. Bull. Rev. 20, 514–521 (doi:10.3758/s13423-013-0375-6)