Abstract

The current study investigated the effects of presentation time and fixation to expression-specific diagnostic features on emotion discrimination performance, in a backward masking task. While no differences were found when stimuli were presented for 16.67 ms, differences between facial emotions emerged beyond the happy-superiority effect at presentation times as early as 50 ms. Happy expressions were best discriminated, followed by neutral and disgusted, then surprised, and finally fearful expressions presented for 50 and 100 ms. While performance was not improved by the use of expression-specific diagnostic facial features, performance increased with presentation time for all emotions. Results support the idea of an integration of facial features (holistic processing) varying as a function of emotion and presentation time.

Keywords: Facial expressions, Facial features, Eye-tracking, Fixation location

Facial expressions provide individuals with important emotional signals in social situations. Following the influential work by Ekman (e.g., Ekman, 1993), many theorists argue that facial expressions convey discrete emotions and six basic ones have been identified: happiness, fear, disgust, surprise, sadness, and anger. Many studies have revealed differences in our ability to discriminate these prototypic, often exaggerated, facial expressions (e.g., Palermo & Coltheart, 2004; Rapscak et al., 2000). Performance varies across emotions and studies, but happiness is always best discriminated (~88–99% accuracy) while fear and surprise are most often the worst discriminated emotions (~51–75% accuracy). Angry, disgusted, and neutral expressions fall somewhere in between.

Under certain circumstances (e.g., when being approached by another individual) little time is available for thorough processing of facial expressions; one must quickly extract facial information in order to respond quickly and appropriately. While accurate emotion judgements can be made with millisecond face-exposure durations (e.g., Kirouac & Doré, 1984), presentation time seems to impact this discrimination in important yet unclear ways. Many behavioural studies used a backward visual masking procedure where a target face is briefly presented closely followed by a masking stimulus, which prevents further processing of the target and ensures precise exposure time (see Wiens, 2006). Loffler, Gordon, Wilkinson, Goren, and Wilson (2005) compared various types of masks including upright and inverted faces, and non-face masks. They reported that for face identity discrimination tasks, the greater the similarity between the mask and target face, the stronger the masking effect, with upright faces yielding the best results. However, when using only upright masks, Bachmann, Luiga, and Põder (2005) showed that different-face masks yielded better masking effects than same-face masks. For facial expression discrimination tasks, Milders, Sahraie, and Logan (2008) demonstrated that a neutral face was a more effective mask than a dynamic checkerboard. However, to our knowledge, no facial emotion study has compared the effectiveness of other types of masking stimuli. Because neutral-face masks could cause confusion in the discrimination of neutral expressions, possibly introducing a response bias, the present study compared the effects of upright and inverted neutral-face masks to determine the most efficient mask to be used in facial expression discrimination studies. Inverted faces, while configurally disrupted, are still perceived as faces but could be less confused with (upright) neutral-face targets.

Kirouac and Doré (1984) were the first to investigate the presentation time required for accurate emotion discrimination using a backward mask. All basic emotions were presented for durations ranging from 10 ms to 50 ms, followed by a mask. High accuracy performance was seen for all emotions except fear and anger during the 10 ms condition. Results were unclear as not all statistical analyses were reported and the type of mask used was not indicated. Esteves and Öhman (1993) presented neutral, angry, and happy expressions from 20 ms to 300 ms, followed by an upright neutral-face mask and found that increasing presentation time increased discrimination performance. In particular, participants scored above chance level from 50 ms presentation or longer and below chance level during the 30 ms presentation. Results were replicated by Esteves, Parra, Dimberg, and Öhman (1994). The percentage of correct responses (hits) these studies used to evaluate discrimination performance was shown to be highly sensitive to response bias when performing forced-choice tasks (Green & Swets, 1966; MacMillan & Creelman, 1991). To avoid this bias, recent studies evaluated performance according to signal detection theory methods and used A′, a non-parametric analogue of the d′ sensitivity measure. Unlike d′, A′ does not require the assumption of a normal signal-to-noise distribution and can be used with a relatively small number of trials (MacMillan & Creelman, 1991; Maxwell & Davidson, 2004).

Using A′, Calvo and Esteves (2005) found above chance discrimination for angry, happy, and sad expressions presented longer than 20 ms, whereas Maxwell and Davidson (2004) reported above chance level discrimination for neutral, happy, and angry expressions with presentation times as short as 17 ms. In both studies, discrimination performance was higher for happiness than the other facial expressions, which did not differ from each other. Most recently, Milders et al. (2008) reported no difference between the tested facial expressions at 10 ms presentation times. At 20 ms, scores were higher for happiness than for fear, and by 40 ms happiness scores were higher than all tested emotions. However, there were no discrimination differences between the other emotions (neutral, anger, and fear) across presentation times (ranging from 10 ms to 50 ms), a result in contrast to the non-masking literature (e.g., Palermo & Coltheart, 2004; Rapscak et al., 2000).

To summarise, despite differences in participants, stimuli, and procedures, above chance-level discrimination of happy faces is seen at presentation times as short as 17 ms (Maxwell & Davidson, 2004). However, studies using backward masking and signal detection methods have not replicated the differences seen in the non-masking literature between the other facial expressions. Additionally, whether or not these differences between emotions vary with presentation time remains unknown. It is also important to note that surprise and disgust have not been investigated using this paradigm. In the present study, we report discrimination differences beyond the happy-superiority effect that vary with presentation times, when using A′ measures and neutral, disgusted, fearful, happy, and surprised facial expressions in a backward masking paradigm. These results are important as they suggest the time course of facial information extraction might vary as a function of emotion.

Recent studies suggest that individual features play an important role when discriminating facial expressions. Gosselin and Schyns (2001) have developed a new technique called Bubbles that randomly reveals portions of the face of various sizes and spatial frequencies, thereby revealing the facial information most useful or diagnostic for a given discrimination task. Studies using this technique have suggested that specific locations on the face provide the most important and diagnostic information for the accurate discrimination of the prototypic six basic expressions (Schyns, Petro, & Smith, 2007; Schyns, Petro, & Smith, 2009; van Rijsbergen & Schyns, 2009). For instance, the eyes were shown to be the primary diagnostic feature for fear, the mouth for happiness and surprise, and the corners of the nose for disgust. These findings indirectly extended and supported previous literature that claimed that the facial areas providing the best discrimination accuracy vary with each emotion when participants were presented with face parts (Boucher & Ekman, 1975; Calder, Young, Keane, & Dean, 2000, Experiment 1; Hanawalt, 1944).

Further evidence for the importance of individual features in emotion discrimination comes from visual scanning and neuropsychological studies, although findings are mixed. Many studies have reported longer viewing time and/or more fixations towards the eyes compared to the mouth and nose regardless of facial expressions (e.g., Clark, Neargarder, & Cronin-Golomb, 2010; Guo, 2012; Sullivan, Ruffman, & Hutton, 2007). These results support the idea of a special role played by the eye region in face processing in general (Itier & Batty, 2009). Other studies, however, suggest some facial features might be attended to more depending on the emotion. For example, Scheller, Büchel, and Gamer (2012) showed that participants moved their eyes less toward the eyes and more toward the mouth when the face was expressing happiness while the opposite was seen for fearful and neutral faces. Similarly, Gamer and Büchel (2009) showed that although participants usually moved their eyes more toward than away from the eyes, they did so more for fearful and neutral faces than for happy or angry faces. In contrast, Eisenbarth and Alpers (2011) showed that the eyes received more attention for sad and angry faces, the mouth for happy faces, but that both features were equally important for fearful and neutral faces. In a famous neuropsychological study, Adolphs et al. (2005) reported that instructing a patient with amygdala damage to focus on the eye region of a fearful expression improved her ability to recognise fear to the same level as control patients. However, although some of these studies support the idea of a greater attention toward expression-specific diagnostic features, it remains unclear whether fixation on these features would improve discrimination performance in the normal population and for all facial expressions, and reveal accuracy differences between emotions not yet reported.
Importantly, the eye-tracking literature supporting the idea of a differential amount of attention toward diagnostic features (i) does not speak to the impact of these features on accuracy performance and (ii) uses presentation times usually larger than the ones used in the masking literature, thus preventing a full understanding of the impact of facial features in the earliest stages of vision.

The present study extends previous work investigating the presentation time required for accurate expression discrimination (e.g., Maxwell & Davidson, 2004; Milders et al., 2008) by reporting whether performance varies with emotion and fixation on diagnostic facial features and whether this is seen at brief presentation times. Eye-tracking ensured fixation to specific locations on the face during the presentation of neutral, disgusted, fearful, happy, and surprised expressions. Due to time constraints, not all basic expressions were tested, and the ones used were chosen based on their different diagnostic features. In each experiment a specific exposure time before mask (Group 1 = 16.67 ms, Group 2 = 50 ms, Group 3 = 100 ms) and eight different locations on the face (chin, forehead, left cheek, left eye, nose, mouth, right cheek, and right eye) were used for each emotion. To test for differences in accuracy between emotions and fixation locations, presentation times were chosen in line with the previous literature (i.e., 10–50 ms; Milders et al., 2008). The refresh rate of the CRT monitor used resulted in 16.67 ms as the lowest possible exposure time. A 100 ms presentation time group was also included to search for potential differences between emotions not reported previously. Experiment 1 used inverted neutral-face masks to avoid confusion when neutral target faces were presented. In Experiment 2 an upright neutral-face mask was used to compare mask efficiency and relate better to the existing literature.

To the best of our knowledge, this is the first study to test facial expression discrimination in a backward masking paradigm while using eye-tracking to ensure fixation on specific feature locations during brief presentations of the entire face. Based on previous findings in the masking (e.g., Milders et al., 2008) and non-masking literatures (e.g., Palermo & Coltheart, 2004; Rapscak et al., 2000), a happy-superiority effect was expected at all presentation times. Additionally, based on the non-masking literature, lower discrimination performance for surprise and fear was expected. Increased performance with increased exposure time was predicted for all emotions (e.g., Esteves & Öhman, 1993). Finally, following studies demonstrating the use of facial features in expression discrimination (e.g., Schyns et al., 2009), higher performance was expected for fixation on expression-specific diagnostic facial features, relative to non-diagnostic locations. For example, fixation on the mouth of a happy face (i.e., the diagnostic cue for happy expressions) should yield a higher performance (as indexed by higher A′ values) than fixation on the forehead (i.e., non-diagnostic cue for happy expression). Other tested diagnostic cues included the eyes for fear, the nose for disgust, and the mouth for surprise. For neutral expressions, we expected to see no effect of fixation location.

EXPERIMENT 1

Method

Participants

A total of forty-nine undergraduate participants (33 females), all with normal or corrected-to-normal visual acuity were recruited from the University of Waterloo (UW) for course credit. Participants were pre-screened and only selected if born and raised in North America, as the strategy employed to extract visual information from faces differs across cultures (e.g., Blais, Jack, Scheepers, Fiset, & Caldara, 2008). Fifteen (6 females), age range 18–25 years (Mage = 20.6 years), participated in Group 1 (16.67 ms presentation time). Nineteen participants were recruited for Group 2 (50 ms presentation time). Four were rejected due to a low number of trials per condition (< 20) after removing saccade-contaminated trials, resulting in a final sample of 15 participants (9 females) aged 19–23 (Mage = 20.30 years) included in the analyses. A total of 15 students (10 females) aged 18–25 years (Mage = 19.5 years) were recruited for Group 3 (100 ms presentation time). None of the participants were included in more than one group.

Stimuli

Ten static photographs of faces (5 men, 5 women), each with neutral, disgusted, fearful, happy, and surprised expressions, were selected from the NimStim set of facial expressions (see Tottenham et al., 2009, for a full description and validation of the stimuli). Images were converted to greyscale in Photoshop CS4 Extended and cropped to be 24.02 cm (W) × 35.00 cm (H) at a resolution of 72 pixels/inch. Hair and part of the neck remained on the final stimuli to maintain ecological validity (see Figure 1) but all images excluded any easily distinguishable paraphernalia such as earrings. Each image was viewed against a grey background and subtended 19.08° of visual angle horizontally and 26.75° vertically at a viewing distance of 0.70 m. Eight neutral faces (4 men, 4 women) of different identities from the target faces, were selected from NimStim and served as masks. The mask stimuli were converted to greyscale and rotated by 180° (inverted) in Photoshop CS4 Extended. Eight different fixation-cross locations were assigned to each of the five expressions for each identity. For each stimulus, exact coordinates corresponding to eight feature locations on the face were recorded: chin, forehead, left cheek, left eye, mouth, nose, right cheek, and right eye. Fixation crosses on the forehead, nose, and chin were aligned with one another along an axis passing through the middle of the nose and face. For the forehead position, the cross was placed at the centre of the forehead vertically, between the edge of the hair line and the nasion. For the chin position, the cross was placed vertically at equidistance between the outer edge of the chin and the lower lip of the mouth. Eye coordinates were determined by placing the cross on the centre of the pupil. For the cheek positions, the cross was situated at equidistance horizontally between the centre of the nose tip and the outline of the face. Vertically, they were placed approximately in the centre of the cheeks. 
No two fixation-crosses were presented in the exact same location due to minor variations between the identities and expressions so each picture had a different set of fixation locations.

Figure 1.

Example of the stimuli used; all images were shown in greyscale. From left to right: neutral expression, disgust, fear, happiness, and surprise. Note that each of the 10 identities expressed all emotions.

Apparatus

The stimuli were presented on a Viewsonic PS790 CRT 19-inch colour monitor driven by an Intel Core 2 Quad CPU Q6700 with a refresh rate of 60 Hz. Eye movements were recorded using a remote Eyelink 1000 eye-tracker from SR Research with a sampling rate of 1,000 Hz. The eye-tracker was calibrated to each participant’s dominant eye, but viewing was binocular. Calibration was done using a nine-point automated calibration accuracy test. Calibration was repeated if the error at any point was more than 1°, or if the average for all points was greater than 0.5°. The participants’ head positions were stabilised with a head and chin rest to maintain viewing position and distance.
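Because a CRT updates the image once per refresh cycle, stimulus durations are constrained to whole multiples of the frame period. The following minimal sketch illustrates that arithmetic for the 60 Hz refresh rate and the three target durations used in this study. It is not part of the original experimental software, and the function names are hypothetical.

```python
REFRESH_HZ = 60  # refresh rate reported for the CRT monitor


def frame_period_ms(refresh_hz: float) -> float:
    """Duration of one refresh cycle in milliseconds."""
    return 1000.0 / refresh_hz


def frames_for(duration_ms: float, refresh_hz: float = REFRESH_HZ) -> int:
    """Nearest whole number of frames (at least one) for a requested duration."""
    return max(1, round(duration_ms / frame_period_ms(refresh_hz)))


# Target durations for Groups 1, 2, and 3.
for requested in (16.67, 50, 100):
    n = frames_for(requested)
    actual = n * frame_period_ms(REFRESH_HZ)
    print(f"{requested} ms -> {n} frame(s), {actual:.2f} ms on screen")
```

Under these assumptions the three durations correspond to one, three, and six refresh cycles, and a single frame is the shortest exposure the display can produce.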

Materials and procedure

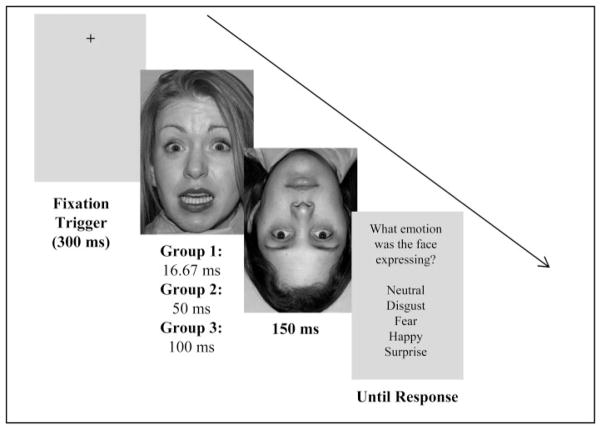

Before the experiment started, participants were given an eight-trial practice session. Each of the eight fixation-cross locations was presented so that participants became accustomed to moving their eyes to various locations on the screen between trials. During trials, participants were instructed to fixate on the black fixation-cross to initiate the trial and to remain fixated there until the response screen appeared. In the experimental session a trial started with a black fixation-cross on a grey background jittered between 1,000 and 1,500 ms. For each trial the fixation-cross appeared at one of the eight fixation locations in an unpredictable fashion. Once participants focused on the centre of the fixation-cross for 300 ms the target face was presented for 16.67 ms in Group 1, 50 ms in Group 2, and 100 ms in Group 3, and was immediately masked by one of the four inverted neutral-face masks. The 60 Hz refresh rate of the monitor limited the lowest possible presentation time of the target to 16.67 ms. The mask duration was always 150 ms (100 ms and greater have been shown to be effective masks; e.g., Esteves & Öhman, 1993). Immediately following the mask a response screen appeared with a vertically presented list of the five emotions which remained until response (see Figure 2 for a trial example). The order of the emotions on the response screen was kept constant for all trials. Participants were instructed to respond as quickly and accurately as possible. The next trial was initiated following the response made using a mouse click. Testing was carried out in 10 blocks separated by a self-paced break, during which the eye-tracker was recalibrated. During each block there were 120 images presented pseudo-randomly. Each fixation-cross location was presented 15 times and at each fixation-cross location the five expressions were presented three times each such that all identities and emotions were presented across the 10 blocks. 
The same identity and fixation location were never presented fewer than three trials apart. The order of the 10 blocks was counterbalanced across participants with a total of 1,200 trials (30 trials for each emotion and fixation location condition).

Figure 2.

Trial example with forehead fixation: Subjects were tested on 1,200 trials organised as follows. First the fixation point was displayed on the screen for a jittered amount of time (1,000–1,500 ms) with a fixation trigger of 300 ms. Then the greyscale picture was flashed for 16.67 ms, 50 ms or 100 ms, immediately followed by an inverted greyscale mask for 150 ms. Subjects had an unlimited amount of time to select the correct response using the click of the mouse on the corresponding word.
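The block structure described in the procedure can be checked with a short enumeration. The sketch below is illustrative only: it omits face identities, uses a plain shuffle rather than the pseudo-random ordering with the spacing constraint used in the experiment, and the function name `make_block` and the seed are hypothetical.

```python
import random
from collections import Counter
from itertools import product

LOCATIONS = ["chin", "forehead", "left cheek", "left eye",
             "mouth", "nose", "right cheek", "right eye"]
EMOTIONS = ["neutral", "disgust", "fear", "happiness", "surprise"]
REPS_PER_BLOCK = 3  # each emotion shown three times per location per block
N_BLOCKS = 10


def make_block(rng: random.Random) -> list[tuple[str, str]]:
    """One block: every (location, emotion) pair repeated, then shuffled."""
    trials = [pair for pair in product(LOCATIONS, EMOTIONS)
              for _ in range(REPS_PER_BLOCK)]
    rng.shuffle(trials)  # simple shuffle; the spacing constraint is NOT enforced
    return trials


rng = random.Random(0)  # arbitrary seed, for reproducibility only
session = [make_block(rng) for _ in range(N_BLOCKS)]
per_condition = Counter(trial for block in session for trial in block)

print(len(session[0]))                       # 120 trials per block
print(sum(len(block) for block in session))  # 1200 trials per session
print(set(per_condition.values()))           # {30} trials per condition
```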

Participants then completed the State-Trait Inventory for Cognitive and Somatic Anxiety (STICSA; Ree, French, MacLeod, & Locke, 2008). The STICSA is a Likert scale assessing cognitive and somatic symptoms of anxiety as they pertain to one’s mood in the moment (state; 21 items) and in general (trait; 21 items). Anxiety was measured because it is known to interact with the processing of emotions like fear (e.g., Dugas, Gosselin, & Ladouceur, 2001). Participants completed a demographic questionnaire assessing age, vision, gender, and ethnicity.

Data analysis

Any trial with one or more saccades and any participant with fewer than 20 trials per condition were removed from the analyses.
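The exclusion rule can be expressed as a two-step filter. The following is a minimal sketch under assumed data structures: each trial is a dictionary with hypothetical keys `emotion`, `location`, and `n_saccades`, which are not taken from the original analysis pipeline.

```python
from collections import Counter

MIN_TRIALS = 20  # minimum number of valid trials required per condition


def valid_trials(trials):
    """Keep only trials recorded without any saccade."""
    return [t for t in trials if t["n_saccades"] == 0]


def keep_participant(trials, conditions, min_trials=MIN_TRIALS):
    """True if every condition retains at least `min_trials` saccade-free trials."""
    counts = Counter((t["emotion"], t["location"]) for t in valid_trials(trials))
    return all(counts[c] >= min_trials for c in conditions)


# Toy data: one condition, 30 trials, 12 of them contaminated by a saccade.
conditions = [("fear", "left eye")]
trials = [{"emotion": "fear", "location": "left eye", "n_saccades": int(i < 12)}
          for i in range(30)]

print(len(valid_trials(trials)))             # 18 saccade-free trials remain
print(keep_participant(trials, conditions))  # False, because 18 < 20
```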

Expression accuracy performance was analysed using A′ measures calculated for each emotion and each fixation-cross location. First, the number of responses for each condition was tabulated. For example, for happy expressions with a left eye fixation location, the possible responses were happy and non-happy (neutral, disgusted, fearful, or surprised). Next, the number of correct happy responses and non-happy responses were each divided by the number of presentations of happy plus non-happy stimuli. This provided conditional probabilities for each emotion at each fixation location. Hit rate (H) is the probability of correctly making a response given the corresponding stimulus. False alarm rate (FA) is the probability of making a particular response when the corresponding stimulus is absent (e.g., answering “fearful” when a happy face was presented). The probability of H and FA were entered into Equation 1 to determine the A′ for conditions where H ≥ FA. For conditions where the FA ≥ H, Equation 2 was used. A′ values ranged from 0 to 1, with chance level equal to 0.5. For a discussion of the A′ sensitivity index see Haase, Theios, and Jenison (1999), MacMillan and Creelman (1991), Maxwell and Davidson (2004), and Snodgrass and Corwin (1988).

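$$A' = \frac{1}{2} + \frac{(H - \mathit{FA})(1 + H - \mathit{FA})}{4H(1 - \mathit{FA})} \tag{1}$$

$$A' = \frac{1}{2} - \frac{(\mathit{FA} - H)(1 + \mathit{FA} - H)}{4\,\mathit{FA}\,(1 - H)} \tag{2}$$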
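As an illustration of how A′ is obtained from hit and false alarm rates, the sketch below assumes that Equations 1 and 2 take the standard form of the index described by Snodgrass and Corwin (1988). The function name `a_prime` and the response counts in the example are hypothetical and are not data from this study.

```python
def a_prime(hit_rate: float, fa_rate: float) -> float:
    """Non-parametric sensitivity index A' (0.5 = chance, 1 = perfect)."""
    h, fa = hit_rate, fa_rate
    if h == fa:
        return 0.5  # chance level; also avoids 0/0 when both rates are 0 or 1
    if h > fa:
        return 0.5 + ((h - fa) * (1 + h - fa)) / (4 * h * (1 - fa))
    return 0.5 - ((fa - h) * (1 + fa - h)) / (4 * fa * (1 - h))


# Made-up counts for one condition (happy faces, one fixation location):
# 27 "happy" responses to 30 happy faces, 12 "happy" responses to 120 other faces.
hit_rate = 27 / 30
fa_rate = 12 / 120
print(f"{a_prime(hit_rate, fa_rate):.3f}")  # 0.944
```

With H = .90 and FA = .10, the first branch gives 0.5 + (0.8 × 1.8) / (4 × 0.9 × 0.9) ≈ 0.944. Swapping the two rates gives the mirror value below chance.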

The A′ values were compared in a 5 (Emotion) × 8 (Fixation Location) × 3 (Presentation Time) mixed analysis of variance (ANOVA), with Emotion and Fixation Location as within-subject factors and Presentation time as a between-subject factor. Further analyses of the interactions found were completed with separate ANOVAs for each presentation time using a 5 (Emotion) × 8 (Fixation Location) ANOVA and for each emotion comparing presentation times using an 8 (Fixation Location) × 3 (Presentation Time) ANOVA.

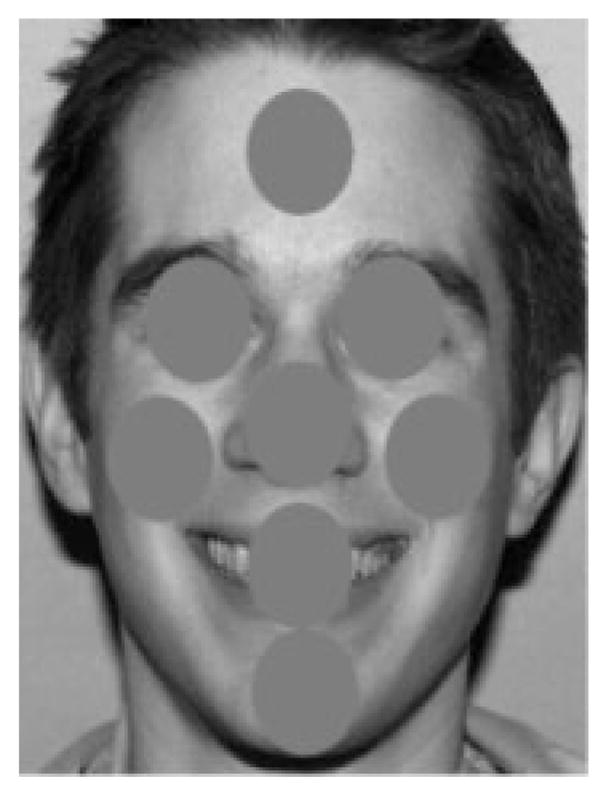

To rule out possible influences of low-level factors, we measured the mean luminance and root mean squared (RMS) contrast of each picture using a home-made Matlab program and compared them across emotions using paired sample t-tests, with p-values corrected for multiple comparisons. For each picture, an RMS contrast and luminance were also calculated for circular areas of 4° visual angle around each fixation location (i.e., foveated areas which did not overlap, see Figure 3) and were analysed using a 5 (Emotion) × 8 (Fixation Location) ANOVA.

Figure 3.

Example of areas of 4° visual angle around each fixation location (i.e., foveated areas which did not overlap) used to calculate local RMS contrast and luminance for each picture.
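The original Matlab program is not described in detail, so the following is only a sketch of one common way to compute these low-level measures. It assumes greyscale values rescaled to the range 0 to 1 and takes RMS contrast as the standard deviation of pixel intensities. All function names are hypothetical, and the radius in pixels corresponding to the foveated area is left as a parameter.

```python
from statistics import fmean, pstdev


def mean_luminance(pixels):
    """Mean of greyscale values scaled to [0, 1]."""
    return fmean(pixels)


def rms_contrast(pixels):
    """RMS contrast, taken here as the population SD of scaled intensities."""
    return pstdev(pixels)


def circular_patch(image, cx, cy, radius):
    """Pixels of a 2-D list `image` lying within `radius` pixels of (cx, cy)."""
    return [value
            for y, row in enumerate(image)
            for x, value in enumerate(row)
            if (x - cx) ** 2 + (y - cy) ** 2 <= radius ** 2]


# Toy 4 x 4 "image": left half black, right half white, rescaled to [0, 1].
image = [[v / 255 for v in row] for row in (
    [0, 0, 255, 255],
    [0, 0, 255, 255],
    [0, 0, 255, 255],
    [0, 0, 255, 255],
)]
flat = [v for row in image for v in row]

print(mean_luminance(flat))  # 0.5
print(rms_contrast(flat))    # 0.5

# Local measure around a hypothetical fixation point in the black region.
patch = circular_patch(image, cx=0, cy=0, radius=1)
print(rms_contrast(patch))   # 0.0
```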

For ANOVA analyses in all experiments, the Greenhouse–Geisser correction to the degrees of freedom was used when sphericity was violated and Bonferroni corrections were used for multiple comparisons.
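Both corrections amount to simple arithmetic, sketched below. The function names are hypothetical, the p-values are made up for illustration, and the epsilon value would in practice be estimated from the data by the statistics package.

```python
from itertools import combinations


def bonferroni(p_values):
    """Multiply each p-value by the number of comparisons, capped at 1."""
    m = len(p_values)
    return [min(1.0, p * m) for p in p_values]


def greenhouse_geisser_df(k_levels, n_subjects, epsilon):
    """Corrected (effect, error) df for a within-subject factor in one group."""
    df_effect = epsilon * (k_levels - 1)
    df_error = epsilon * (k_levels - 1) * (n_subjects - 1)
    return df_effect, df_error


emotions = ["neutral", "disgust", "fear", "happiness", "surprise"]
pairs = list(combinations(emotions, 2))
print(len(pairs))  # 10 pairwise comparisons among five emotions

raw_p = [0.001, 0.004, 0.02] + [0.2] * 7  # made-up values, one per pair
print(bonferroni(raw_p))

# With epsilon = 1 (sphericity not violated), five levels and 15 participants
# give the uncorrected degrees of freedom.
print(greenhouse_geisser_df(k_levels=5, n_subjects=15, epsilon=1.0))  # (4.0, 56.0)
```

Epsilon values below 1 shrink both degrees of freedom proportionally, which is why corrected F tests are reported with non-integer degrees of freedom.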

Results

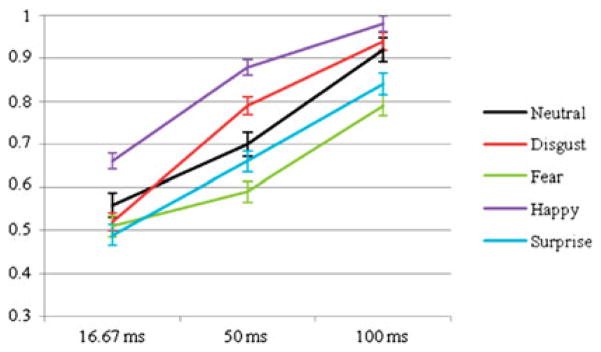

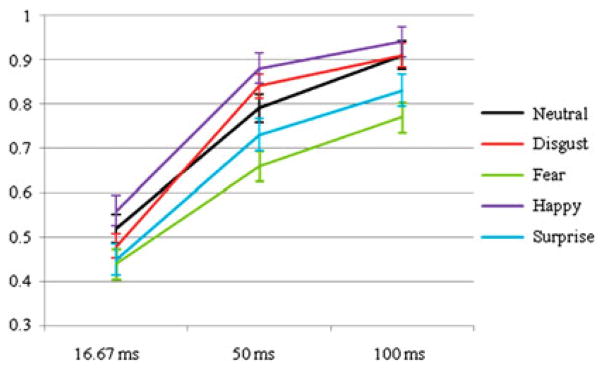

The mixed ANOVA revealed a significant main effect of Emotion, F(1.91, 80.00) = 33.77, p<.001, such that for all groups A′ values were highest for happiness, followed by neutral and disgust (which did not differ), and lowest for surprise and fear (which did not differ). The main effect of Fixation Location, F(4.84, 203.11) = 17.52, p<.001, revealed the lowest A′ values for forehead fixations and lower A′ values for right and left eye fixations compared to nose fixations. A main effect of Presentation Time, F(2, 42) = 136.02, p<.001, indicated the lowest A′ values at 16.67 ms presentation time followed by 50 ms, and highest A′ scores for the 100 ms group, as seen in Figure 4. There were significant interactions of Emotion by Presentation Time, F(3.81, 80.02) = 5.59, p<.01; Fixation Location by Presentation Time, F(9.67, 203.11) = 2.62, p<.01; and Emotion by Fixation Location, F(11.96, 502.52) = 1.36, p<.05.

Figure 4.

Mean A′ values for neutral expression, disgust, fear, happiness, and surprise presented for 16.67 ms, 50 ms and 100 ms in Experiment 1 (inverted face-mask). C: chin; F: forehead; LC: left cheek; LE: left eye; M: mouth; N: nose; RC: right cheek; RE: right eye. Error bars represent standard errors of the mean.

For the 16.67 ms presentation time group analysed separately (Figure 4), the main effect of Emotion was not significant, F(1.39, 19.52) = 3.50, p=.07, although A′ values tended to be highest for happy faces. No other effects were found.

For the 50 ms presentation time group, a significant main effect of Emotion, F(4, 56) = 54.18, p<.001, revealed highest A′ scores for happiness, followed by disgust, then neutral and surprise (which did not differ), and lastly fear (all significant pairwise comparisons at p<.05). The effect of Fixation Location, F(3.02, 42.25) = 16.36, p<.001, was due to lowest A′ scores for forehead fixations compared to all other locations and lower performance for the left eye (significantly at p<.05 compared to left cheek, mouth, nose, and right cheek). No significant interaction was found (p=.08).

For the 100 ms presentation time group, a main effect of Emotion, F(2.17, 30.43) = 39.69, p<.001, was due to the highest A′ scores seen for happiness and disgust (which did not differ), followed by neutral, and the lowest scores for fear and surprise (which did not differ). The effect of Fixation Location, F(2.75, 38.58) = 13.35, p<.001, was due to lower performance for forehead fixations compared to all other fixation locations (paired comparisons at p<.05). No interaction was found (p=.19).

To explore the Emotion by Presentation Time interaction better, separate ANOVAs were conducted for each emotion. For fearful expressions, discrimination performance was lower for the 16.67 and 50 ms groups (which did not differ) compared to the 100 ms group (Figure 5). All other emotions followed the pattern of the main effect of Presentation Time with an increase in performance between 16.67 ms, 50 ms, and 100 ms.

Figure 5.

Mean A′ values for neutral expression, disgust, fear, happiness, and surprise collapsed across fixation location when presented for 16.67 ms, 50 ms, and 100 ms in Experiment 1 (inverted face-mask). Error bars represent standard errors of the mean.

Discussion

As predicted, when faces were presented for 50 ms and 100 ms facial expression discrimination performance varied as a function of emotion. Replicating Milders et al. (2008), a happy-superiority effect emerged at 50 ms with a trend seen at 16.67 ms. In previous masking experiments using signal detection methods, differences between emotions were inconsistent and only neutral, angry, and fearful expressions were tested. The present results are thus novel as they included disgusted and surprised expressions and showed, for the first time, differences between emotions as early as 50 ms presentation time, beyond the happy-superiority effect. Indeed, happy expressions were best discriminated, followed by neutral and disgusted, and then surprised expressions. Fearful expressions were the most poorly discriminated. The lower performance seen for surprise and fear is in line with the pattern of data seen in the non-masking literature (e.g., Palermo & Coltheart, 2004; Rapscak et al., 2000) despite the variability in methodology. Comparisons between the groups (16.67, 50, and 100 ms) supported the prediction that increasing exposure time increased performance. However, for fearful faces no accuracy improvement was seen between 16.67 ms and 50 ms presentation times, suggesting a differential processing of fearful compared to the other expressions at these durations.

We predicted that fixation to an expression-specific diagnostic facial feature relative to non-diagnostic locations would result in greater accuracy performance. This was not supported for any of the tested emotions for any group. The only consistent finding was a decreased performance when participants fixated on the forehead, an effect most pronounced in the 50 ms group.

From this experiment alone where we (i) ensured fixation to specific facial features by means of an eye-tracker and (ii) tested a wider range of emotions, we showed that facial expression discrimination varies as a function of emotion beyond the happy-superiority effect. Previous research did not find such emotion differences when using an upright neutral masking stimulus (Milders et al., 2008). Experiment 1 used an inverted neutral-face mask to avoid confusion with the neutral expression target face. The inverted mask, however, may not have properly stopped the processing of the target stimuli after the desired presentation time. Experiment 2 thus used an upright-face mask.

EXPERIMENT 2

Method

Participants

A total of fifty-one undergraduate participants (28 females), all with normal or corrected-to-normal visual acuity were recruited from the UW for course credit. Participants were pre-screened and selected following the same methods as Experiment 1. Nineteen participants were recruited for Group 1 (16.67 ms) and four were rejected due to a low number of trials per condition (< 20) after removing saccade-contaminated trials, resulting in a final sample of 15 participants (9 females) aged 19–23 (Mage = 18.60 years). Seventeen participants were recruited for Group 2 (50 ms). Two were rejected due to a low number of trials per condition (<20), resulting in a final sample of 15 participants (9 females) aged 19–23 (Mage = 19.93 years). A total of 15 students (6 females) aged 18–25 (Mage = 20.13 years) were recruited for Group 3 (100 ms presentation time). None of the participants were included in more than one group.

Materials and procedure

The materials and procedure were the same as used in Experiment 1, except the inverted neutral-face masks were replaced by upright neutral-face masks. The pictures were the inverted masks used in Experiment 1, rotated by 180° in Photoshop CS4 Extended. Additionally the order of the emotions listed on the response screen was randomised between trials.

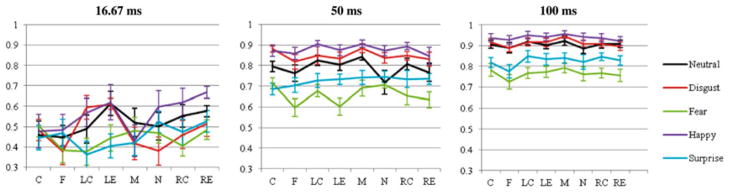

Results

The mixed ANOVA revealed a significant main effect of Emotion, F(3.07, 129.09) = 50.96, p<.001, such that for all presentation times A′ values were highest for happiness, followed by neutral and disgust (which did not differ), and lowest for surprise and fear (which did not differ; all significant pairwise comparisons at p<.05). A main effect of Fixation Location, F(4.49, 188.77) = 3.72, p<.01, revealed lower A′ values for forehead fixations compared to left eye, right eye, and right cheek fixations. A main effect of Presentation Time, F(2, 42) = 45.82, p<.001, indicated lower A′ values for 16.67 ms than for 100 ms and 50 ms (which did not differ), as seen in Figure 6. There were significant interactions of Emotion by Presentation Time, F(6.14, 129.09) = 3.39, p<.01; Fixation Location by Presentation Time, F(8.99, 188.77) = 3.06, p<.01; and Emotion by Fixation Location, F(10.42, 20.84) = 2.51, p<.01. The three-way interaction of Emotion by Fixation Location by Presentation Time was also significant, F(20.84, 437.54) = 1.85, p<.05.

Figure 6.

Mean A′ values for neutral expression, disgust, fear, happiness, and surprise presented for 16.67 ms, 50 ms, and 100 ms in Experiment 2 (upright face-mask). C: chin; F: forehead; LC: left cheek; LE: left eye; M: mouth; N: nose; RC: right cheek; RE: right eye. Error bars represent standard errors of the means.

For the 16.67 ms group analysed separately (Figure 6), a main effect of Emotion was found, F(2.59, 36.32) = 4.28, p<.05, with higher A′ scores seen for happy, neutral and disgusted faces (which did not differ) than for surprised and fearful faces (which did not differ). A main effect of Fixation Location, F(7, 56) = 2.88, p<.05, revealed lower A′ scores for forehead fixations compared to the left eye. A significant Emotion by Fixation Location interaction, F(8.14, 113.95) = 2.10, p<.05, was found. One-way ANOVAs for each emotion were thus conducted. The effect of Fixation Location was not significant for neutral (p=.08), fear (p=.30), and surprise (p=.06), and although it was significant for disgust, F(7, 98) = 2.99, p<.05, and happiness, F(7, 98) = 2.58, p<.05, no significant pairwise comparisons were found.

For the 50 ms group (Figure 6), a significant main effect of Emotion, F(4, 56) = 40.51, p<.001, was due to the highest A′ scores seen for happiness and disgust (not differing significantly), then neutral expressions, and lowest scores for surprise and fear (not differing significantly; all significant pairwise comparisons at p<.05). The effect of Fixation Location, F(2.87, 40.11) = 5.33, p<.01, was due to higher A′ scores when fixation was on the mouth compared to the forehead and right eye (significantly at p<.05), and a trend for higher scores was seen for left eye fixations. No interaction was found (p=.08).

For the 100 ms group, a significant main effect of Emotion was found, F(2.19, 30.68) = 77.34, p<.001, due to highest A′ scores seen for happiness, followed by neutral and disgust (no significant difference), and lowest scores for surprise and fear (no significant difference; all significant pairwise comparisons p<.05). An effect of Fixation Location, F(3.66, 51.30) = 5.33, p<.01, was due to higher A′ scores for mouth fixations compared to the forehead (significantly at p<.05). No interaction was found (p=.29).

To explore the Emotion by Presentation Time interaction, separate ANOVAs were conducted for each emotion. For neutral faces, discrimination performance increased with increasing presentation time. All other emotions followed the main effect of Presentation Time, with lower performance for 16.67 ms than for 50 ms and 100 ms (which did not differ; see Figure 7).

Figure 7.

Mean A′ values for neutral expression, disgust, fear, happiness, and surprise collapsed across fixation location when presented for 16.67 ms, 50 ms, and 100 ms in Experiment 2 (upright face-mask). Error bars represent standard errors of the means.

Between-groups mask comparison

A 2 (Mask: inverted or upright) × 5 (Emotion) × 8 (Fixation Location) ANOVA was conducted for each group (16.67 ms, 50 ms, and 100 ms) to compare mask efficiency. At 16.67 ms, no effect of Mask (p=.19) and no Mask by Emotion interaction (p=.82) were found. For the 50 ms groups, there was no effect of Mask (p=.60); however, there was a Mask by Emotion interaction, F(3.39, 94.87) = 2.73, p<.05, due to higher performance with the upright-face mask compared to the inverted-face mask for neutral target faces only (p<.05). For the 100 ms group, there was no main effect of Mask (p=.12) and no Mask by Emotion interaction (p=.81).

RMS contrast and luminance

Mean luminance and RMS contrast for each emotion are reported in Table 1. Paired t-tests revealed no differences between emotions (p>.05 for all comparisons).

Table 1.

Mean luminance and RMS contrast values (as calculated by Photoshop) for neutral, disgusted, fearful, happy, and surprised expressions

| Expression | Mean luminance (SD) | Mean RMS contrast (SD) |
|---|---|---|
| Neutral | 112.99 (6.86) | 0.498 (0.005) |
| Disgust | 115.13 (5.88) | 0.498 (0.002) |
| Fear | 113.78 (5.88) | 0.497 (0.003) |
| Happy | 114.69 (8.45) | 0.498 (0.003) |
| Surprise | 115.07 (8.65) | 0.496 (0.005) |

For mean RMS contrast in areas of 4° visual angle around each fixation, a main effect of Fixation Location, F(7, 63) = 97.738, p<.001, was due to largest contrast values found for the eyes and the mouth (which did not differ), then the chin, left and right cheeks (which did not differ), and smallest values for the nose and forehead (which did not differ; see Figure 8). No effect of Emotion was found (p=.69). However, a significant Emotion by Fixation Location interaction, F(28, 252) = 3.92, p<.01, was due to lower RMS contrast for surprise and disgust than the other emotions when fixation was centred on the mouth (p<.05).

Figure 8.

Mean RMS contrast (A) and luminance (B) values for neutral, disgust, fear, happiness, and surprise within 4° of visual angle surrounding each fixation location. C: chin; F: forehead; LC: left cheek; LE: left eye; M: mouth; N: nose; RC: right cheek; RE: right eye.

For mean luminance in areas of 4° visual angle around each fixation, a main effect of Fixation Location, F(7, 63) = 145.26, p<.001, was due to the highest luminance seen for the forehead, followed by the nose, chin, left and right cheek (which did not differ), then the mouth, and lowest luminance seen for the left and right eye (which did not differ). No effect of Emotion was found (p=.06), but a significant Emotion by Fixation Location interaction, F(28, 252) = 4.34, p<.01, was due to higher luminance for the area around the mouth for happy faces compared to the other emotions (p<.05).
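The local luminance and contrast measures described above can be illustrated with a minimal Python sketch. It assumes one common definition of RMS contrast (the population standard deviation of pixel intensities rescaled to the 0–1 range) and a greyscale image stored as a list of rows of 8-bit values. The values reported here were calculated in Photoshop and may rest on a different normalisation, and the function names, the square window, and its size in pixels are hypothetical choices made only for illustration.

```python
from statistics import fmean, pstdev


def crop(image, row, col, half):
    """Return the pixels of a square window centred on (row, col).

    `image` is a list of rows of grey levels; the window is clipped
    at the image borders. `half` is the half-width in pixels
    (a hypothetical stand-in for the 4 degree region in the text).
    """
    rows = image[max(0, row - half): row + half + 1]
    return [p for r in rows for p in r[max(0, col - half): col + half + 1]]


def mean_luminance(pixels):
    """Mean grey level of a flat list of 8-bit pixel values (0-255)."""
    return fmean(pixels)


def rms_contrast(pixels, max_level=255):
    """Population SD of intensities rescaled to [0, 1] (assumed definition)."""
    return pstdev([p / max_level for p in pixels])


# Toy 2 x 2 checkerboard standing in for a region around one fixation.
toy_image = [[0, 255], [255, 0]]
region = crop(toy_image, 0, 0, 1)
print(mean_luminance(region), rms_contrast(region))
```

Under this definition a uniform region has zero contrast and a two-level checkerboard reaches the maximum of 0.5, so the measure is bounded and comparable across regions of different mean luminance.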

Discussion

In line with Experiment 1, differences between emotions were present in the 50 ms and 100 ms groups, with the highest performance seen for happiness, followed by neutral and disgust, then surprise, and the lowest performance seen for fear. As predicted, accuracy increased with presentation time for all five expressions, although this increase was not significant between 50 ms and 100 ms (except for neutral faces), in contrast to what was seen in Experiment 1. Once again, these emotion differences were not due to fixation on the expression-specific diagnostic facial features. Comparisons of pictures’ RMS contrast and luminance revealed that the eyes and the mouth were areas of high contrast and low luminance, as expected. In addition, no differences in overall luminance and contrast of pictures were seen between emotions. If these low-level factors were driving the effects, we would have seen similar effects of emotion, fixation, and their interaction for contrast and luminance and for accuracy. Instead, we found no effect of fixation location or consistent emotion by fixation interactions on discrimination performance, and the overall effects of emotion seen at the accuracy level were not matched by emotion effects at the stimulus level. Therefore, luminance and contrast factors do not seem to drive the behavioural effects we found.

Mask comparison for each group revealed that the upright and inverted neutral-face masks were equally effective at preventing further processing of the facial expressions, except for neutral faces presented for 50 ms. Backward masking in face identity discrimination tasks has been shown to be more effective (i.e., to yield more disruption) if the mask is configurally similar to the target (Loffler et al., 2005). In contrast, at 50 ms presentation, we found higher performance for neutral faces when an upright mask was used compared to an inverted mask. This suggests the upright-face mask was less efficient than the inverted-face mask in disrupting processing of that emotion, likely because the target and masks were confused when both were presented upright (see also Bachmann et al., 2005, for more efficient different-face than same-face masks presented upright in face-identity judgements). However, this mask difference vanished at 100 ms, likely because enough time was provided for emotion discrimination irrespective of masking stimulus.

GENERAL DISCUSSION

The current study investigated the effect of presentation time on accurate facial expression discrimination. Faces were immediately followed by a face mask to enforce precise timing. Performance was measured using signal detection methods to avoid response bias. Participants were fixated on facial features to search for performance differences between expressions. Eye-tracking was used to enforce fixation to eight facial locations (chin, forehead, left cheek, left eye, mouth, nose, right cheek, right eye) on neutral, disgusted, fearful, happy, and surprised expressions. Three presentation times (16.67 ms, 50 ms, and 100 ms) were tested in each experiment. An inverted neutral-face mask was used in Experiment 1 and an upright neutral-face mask in Experiment 2 to test for mask efficiency.
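As an illustration of the signal detection measure reported throughout (A′), the following is a minimal Python sketch. It assumes the widely used non-parametric formula computed from the hit rate and the false-alarm rate for a given emotion (see Snodgrass & Corwin, 1988, for a discussion of such indices); the exact computation used for the present data is not restated in this section, so the function name and the handling of the equal-rates case are illustrative assumptions only.

```python
def a_prime(hit_rate: float, fa_rate: float) -> float:
    """Non-parametric sensitivity index A' (assumed standard formula).

    0.5 corresponds to chance discrimination and 1.0 to perfect
    discrimination. `hit_rate` is the proportion of target-emotion
    trials correctly labelled; `fa_rate` is the proportion of
    other-emotion trials given that label.
    """
    h, f = hit_rate, fa_rate
    if not (0.0 <= h <= 1.0 and 0.0 <= f <= 1.0):
        raise ValueError("rates must lie in [0, 1]")
    if h == f:
        # Equal rates carry no sensitivity information: chance level.
        return 0.5
    if h > f:
        return 0.5 + ((h - f) * (1 + h - f)) / (4 * h * (1 - f))
    # Below-chance case (more false alarms than hits): mirrored formula.
    return 0.5 - ((f - h) * (1 + f - h)) / (4 * f * (1 - h))


# Hypothetical rates, for illustration only (not data from the study).
print(a_prime(0.8, 0.2))
```

Because A′ combines hits and false alarms, a participant who simply answers "happy" on most trials gains hits but also false alarms, so the index is less affected by response bias than raw accuracy.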

Predictions were based on previous emotion discrimination studies using masked (Milders et al., 2008) and unmasked expressions (e.g., Palermo & Coltheart, 2004; Rapscak et al., 2000). In line with predictions and replicating previous studies, highest performance was seen for happy faces presented for 50 ms and greater. In line with Milders et al. (2008), there were no significant emotion differences when faces were presented for 16.67 ms in either experiment, but happy faces did tend to yield higher scores than other emotions. Happy expressions presented for 50 and 100 ms were significantly best discriminated, followed by neutral and disgusted, then surprised, and finally fearful expressions. This pattern was seen for both upright- and inverted-face masks. Thus, by controlling point of gaze to the target face we revealed performance differences beyond the happy-superiority effect during presentations as brief as 50 ms. Previous research (e.g., Milders et al., 2008) did not ensure correct fixation location on the target stimuli. This is important as recent eye-tracking studies have reported that initial facial fixation location affects performance and scanning patterns (Arizpe, Kravitz, Yovel, & Baker, 2012). This methodological difference, along with others (i.e., smaller stimulus size, within-subject design, and smaller range of emotions), may explain the lack of differences seen between emotions beyond the happy-superiority effect in their study. Additionally, our results revealed that surprised and fearful expressions were consistently less well discriminated than other emotions, in line with the non-masking literature on facial expression recognition (e.g., Palermo & Coltheart, 2004; Rapscak et al., 2000). Importantly, differences between emotions occurred very early on in the course of visual processing (as early as 50 ms) and did not appear to be compensated for by increasing presentation times, even though longer presentation times improved emotion discrimination for all expressions.

Based on previous research (Schyns et al., 2007, 2009; van Rijsbergen & Schyns, 2009), it was also predicted that discrimination performance would be enhanced when participants fixated on an expression-specific diagnostic facial feature, relative to non-diagnostic locations. This was not supported in either experiment. In contrast, the present results suggest fixation to diagnostic facial features does not improve discrimination performance when presenting the whole face. It thus appears that the reported differences in performance between emotions are not explained by the direct use of expression-specific diagnostic facial features. Instead, our findings support those by Guo (2012) suggesting that expressive cues from more than one feature are combined to reliably decode facial affect. Thus, (non-diagnostic) features falling outside of the fovea are processed and impact the decision outcome. These findings also suggest facial expressions are processed more holistically than on the basis of (diagnostic) features, an idea supported by studies using the composite paradigm in which participants are slower to identify the target top or bottom expression (e.g., fear) when it is spatially aligned with the complementary top or bottom of another expression (e.g., happiness) than when both are misaligned (e.g., Calder et al., 2000). Importantly, this feature integration (holistic processing) is dependent on presentation time as we showed an increase in performance with increasing presentation time.

In contrast, eye-tracking studies have shown that when the entire face is presented for 150 ms and above, participants look longer and/or make more fixations to the diagnostic features (e.g., Eisenbarth & Alpers, 2011; Gamer & Büchel, 2009; Scheller et al., 2012). However, while these studies support the idea that diagnostic features play a role in the gaze exploration of facial expressions, they do not demonstrate that this gaze pattern difference explains emotion discrimination performances. Our study suggests that diagnostic features of facial expressions do not impact discrimination outcome. Instead, we suggest it is the way all features are “glued” together (holistic processing) during the early stages of vision that impacts emotion discrimination and this is seen as early as 50 ms of visual presentation.

Results of the current study also point to the possible effect of task on the use of expression-specific diagnostic facial features. Fixation to features might provide a discrimination advantage when forcing featural processing of the image, e.g., using the Bubbles method or presenting faces upside-down. Inverting neutral (see Valentine, 1988, for a review; Yin, 1969) and emotional (Derntl, Seidel, Kainz, & Carbon, 2009; McKelvie, 2011; Prkachin, 2003) faces is known to disrupt holistic processing, forcing feature-based processing. An effect of expression-specific diagnostic features for inverted but not upright faces would support the claim that diagnostic features improve discrimination accuracy only when processing is forced to be featural. This idea will have to be tested by future studies.

The results of pictures’ RMS contrast and luminance analyses were also interesting as they revealed that, despite the classic differences found between features (with eyes being zones of high contrast and low luminance), these low-level factors did not discriminate between emotions, globally or locally (around the features). The only exception was found for the mouth which had the highest luminance in happy faces, and which we could tentatively relate to the happy-superiority effect seen. However, and most importantly, the overall patterns of RMS contrast and luminance across fixations and emotions did not parallel the effects seen at the accuracy level, suggesting that they do not play any fundamental role in emotion discrimination at these early presentation times.

A few limitations to our study need to be acknowledged. On every trial the fixation-cross moved around the centrally presented face so fixation was on a feature. Despite the fovea falling on the featural location, participants may have pre-attended to other locations. However, our finding that performance was decreased for the forehead fixation condition suggests this is unlikely. If participants had pre-attended to the centre of the screen (around the core features), performance would not have dropped. In contrast, this forehead effect fuels the holistic hypothesis, as holistic processing would be most efficient with fixation in the centre of mass of the face, where features can be most easily “glued” together. Future studies where the fixation-cross remains centred and the target face moves around it to change fixation location will have to confirm the present results.

Backward masking was necessary to measure discrimination accuracy at specific timings. We compared upright mask efficiency with an inverted neutral-face mask to avoid confusion when presenting a neutral target face. The masks resulted in similar performance; however, the possibility remains that other masks are even more effective. If this is the case, it is possible that we did not find an effect of diagnostic features because the mask did not prevent processing of all tested facial expressions equally. As this work is one of the first to compare mask effectiveness for emotional faces, future studies will have to investigate the most efficient mask for each individual expression.

The ecological validity of the experiment might also be questioned. Posed, high-intensity expressions were used while less intense expressions are more frequently seen in real life (see Guo, 2012, for a discussion), although this might depend on the emotion. For example, we encounter the full expression of happiness more frequently in our everyday lives than other emotions such as surprise. In fact, only 4–25% of adults display the full prototypic expression of surprise (Reisenzein, Bördgen, Holtbernd, & Matz, 2006). While we acknowledge this limitation, recent research suggests that expression intensity does not play a major role in facial exploration. Guo (2012) had participants freely scan emotional faces ranging from very low to high intensity and showed that the number and/or duration of fixations to internal facial features was equal across expression intensities. While our results converge with those by Guo (2012) in suggesting that facial expressions are processed holistically and thus that fixating on diagnostic features does not seem to play any major role, the high intensity of the expressions used might contribute to a larger impact of non-diagnostic parafoveal features in this holistic processing, which might explain the early differences seen between emotions. Future studies should consider administering this experiment with low-intensity faces that are more similar to those seen in our everyday lives. Importantly, the results of the current study were obtained using backward masking of still poses of overly intense facial expressions and therefore might not reflect real-life scenarios. To get a more accurate understanding of emotion discrimination, this work will have to be extended to real-life approaches (e.g., Kingstone, Eastwood, & Smilek, 2008).

In summary, our study demonstrated differences in the ability to accurately discriminate basic expressions of emotion as early as 50 ms. By using an eye-tracker to enforce fixation to the face stimuli we reported differences not yet reported in the literature at this brief presentation time. However, performance did not depend on the specific location of fixation on the face for any of the tested emotions. Performance was only systematically decreased during fixation to the forehead, leading to the possibility that performance depends on the time available to integrate the internal features (holistically). If this were true, fear and surprise then require a longer presentation time to integrate the facial features than disgusted, happy, and neutral expressions. Future studies are required to investigate whether these results were due to the parameters of the current paradigm or whether accuracy performance is truly not improved by fixation on specific diagnostic features.

Acknowledgments

This study was supported by the Canadian Institutes for Health Research (CIHR), the Canada Foundation for Innovation (CFI), the Ontario Research Fund (ORF) and the Canada Research Chair (CRC) program to RJI.

References

- Adolphs R, Gosselin F, Buchanan TW, Tranel D, Schyns P, Damasio AR. A mechanism for impaired fear recognition after amygdala damage. Nature. 2005;433:68–72. doi: 10.1038/nature03086.

- Arizpe J, Kravitz DJ, Yovel G, Baker CI. Start position strongly influences fixation patterns during face processing: Difficulties with eye movements as a measure of information use. PLoS One. 2012;7(2):e31106. doi: 10.1371/journal.pone.0031106.

- Bachmann T, Luiga I, Põder E. Variations in backward masking with different masking stimuli: II. the effects of spatially quantised masks in the light of local contour interaction, interchannel inhibition, perceptual retouch, and substitution theories. Perception. 2005;34(3):305–318. doi: 10.1068/p5276.

- Blais C, Jack RE, Scheepers C, Fiset D, Caldara R. Culture shapes how we look at faces. PLoS One. 2008;3(8):e3022. doi: 10.1371/journal.pone.0003022.

- Boucher JD, Ekman P. Facial areas and emotional information. Journal of Communication. 1975;25(2):21–29. doi: 10.1111/j.1460-2466.1975.tb00577.

- Calder AJ, Young AW, Keane J, Dean M. Configural information in facial expression perception. Journal of Experimental Psychology: Human Perception and Performance. 2000;26(2):527–551. doi: 10.1037/0096-1523.26.2.527.

- Calvo M, Esteves F. Detection of emotional faces: Low perceptual threshold and wide attentional span. Visual Cognition. 2005;12(1):13–27. doi: 10.1080/13506280444000094.

- Clark US, Neargarder S, Cronin-Golomb A. Visual exploration of emotional facial expressions in Parkinson’s disease. Neuropsychologia. 2010;48:1901–1913. doi: 10.1016/j.neuropsychologia.2010.03.006.

- Derntl B, Seidel EM, Kainz E, Carbon CC. Recognition of emotional expressions is affected by inversion and presentation time. Perception. 2009;38:1849–1862. doi: 10.1068/p6448.

- Dugas MJ, Gosselin P, Ladouceur R. Intolerance of uncertainty and worry: Investigating specificity in a nonclinical sample. Behavior Research and Therapy. 2001;25:551–558. doi: 10.1023/A:1005553414688.

- Eisenbarth H, Alpers GW. Happy mouth and sad eyes: Scanning emotional facial expressions. Emotion. 2011;11:860–865. doi: 10.1037/a0022758.

- Ekman P. Facial expression and emotion. American Psychologist. 1993;48(4):384–392. doi: 10.1037/0003-066X.48.4.384.

- Esteves F, Öhman A. Masking the face: Recognition of emotional facial expressions as a function of the parameters of backward masking. Scandinavian Journal of Psychology. 1993;34(1):1–18. doi: 10.1111/j.1467-9450.1993.tb01096.x.

- Esteves F, Parra C, Dimberg U, Öhman A. Nonconscious associative learning: Pavlovian conditioning of skin conductance responses to masked fear-relevant facial stimuli. Psychophysiology. 1994;31:393–413. doi: 10.1111/j.1469-8986.1994.tb02446.x.

- Gamer M, Büchel C. Amygdala activation predicts gaze toward fearful eyes. The Journal of Neuroscience. 2009;29(28):9123–9126. doi: 10.1523/JNEUROSCI.1883-09.2009.

- Gosselin F, Schyns PG. Bubbles: A technique to reveal the use of information in recognition tasks. Vision Research. 2001;41(17):2261–2271. doi: 10.1016/S0042-6989(01)00097-9.

- Green DM, Swets JA. Signal detection theory and psychophysics. New York, NY: Wiley; 1966.

- Guo K. Holistic gaze strategy to categorize facial expression of varying intensities. PLoS One. 2012;7(8):e42585. doi: 10.1371/journal.pone.0042585.

- Haase SK, Theios J, Jenison R. A signal detection theory analysis of an unconscious perception effect. Perception and Psychophysics. 1999;61(5):986–992. doi: 10.3758/BF03206912.

- Hanawalt NG. The role of the upper and the lower parts of the face as a basis for judging facial expressions: II. In posed expressions and “candid-camera” pictures. The Journal of General Psychology. 1944;31:23–36. doi: 10.1080/0022-3514.37.11.2049.

- Itier RJ, Batty M. Neural bases of eye and gaze processing: The core of social cognition. Neuroscience and Biobehavioral Reviews. 2009;33(6):843–863. doi: 10.1016/j.neubiorev.2009.02.004.

- Kingstone A, Eastwood JD, Smilek D. Cognitive ethology: A new approach for studying human cognition. The British Psychological Society. 2008;99(3):317–340. doi: 10.1348/000712607X251243.

- Kirouac G, Doré FY. Judgment of facial expressions of emotion as a function of exposure time. Perceptual and Motor Skills. 1984;59(1):147–150. doi: 10.2466/pms.1984.59.1.147.

- Loffler G, Gordon GE, Wilkinson F, Goren D, Wilson HR. Configural masking of faces: Evidence for high-level interactions in face perception. Vision Research. 2005;45:2287–2297. doi: 10.1016/j.visres.2005.02.009.

- MacMillan NA, Creelman CD. Signal detection theory: A user’s guide. New York, NY: Cambridge University Press; 1991.

- Maxwell JS, Davidson RJ. Unequally masked: Indexing differences in the perceptual salience of “unseen” facial expressions. Cognition and Emotion. 2004;18(8):1009–1026. doi: 10.1080/02699930441000003.

- McKelvie SJ. Emotional expression in upside-down faces: Evidence for configurational and componential processing. British Journal of Social Psychology. 2011;34(3):325–334. doi: 10.1111/j.2044-8309.1995.tb01067.x.

- Milders M, Sahraie A, Logan S. Minimum presentation time for masked facial expression discrimination. Cognition and Emotion. 2008;22(1):63–82. doi: 10.1080/02699930701273849.

- Palermo R, Coltheart M. Photographs of facial expression: Accuracy, response times, and ratings of intensity. Behavior Research Methods, Instruments & Computers. 2004;36:634–638. doi: 10.3758/BF03206544.

- Prkachin GC. The effects of orientation on detection and identification of facial expressions of emotion. British Journal of Psychology. 2003;94:45–62. doi: 10.1348/000712603762842093.

- Rapscak SZ, Galper SR, Comer JF, Reminger SL, Nielsen L, Kaszniak AW, Cogen RA. Fear recognition deficits after focal brain damage. Neurology. 2000;54:575–581. doi: 10.1212/WNL.54.3.575.

- Ree MJ, French D, MacLeod C, Locke V. Distinguishing cognitive and somatic dimensions of state and trait anxiety: Development and validation of the State-Trait Inventory for Cognitive and Somatic Anxiety (STICSA). Behavioural and Cognitive Psychotherapy. 2008;36(3):313–332. doi: 10.1017/S1352465808004232.

- Reisenzein R, Bördgen S, Holtbernd T, Matz D. Evidence for strong dissociation between emotion and facial displays: The case of surprise. Journal of Personality and Social Psychology. 2006;91(2):295–315. doi: 10.1037/0022-3514.91.2.295.

- Scheller E, Büchel C, Gamer M. Diagnostic features of emotional expressions are processed preferentially. PLoS One. 2012;7(7):e41792. doi: 10.1371/journal.pone.0041792.

- Schyns PG, Petro LS, Smith ML. Dynamics of visual information integration in the brain for categorizing facial expressions. Current Biology. 2007;17:1580–1585. doi: 10.1016/j.cub.2007.08.048.

- Schyns PG, Petro LS, Smith ML. Transmission of facial expressions of emotions co-evolved with their efficient decoding in the brain: Behavioral and brain evidence. PLoS One. 2009;4(5):e5625. doi: 10.1371/journal.pone.0005625.

- Snodgrass JG, Corwin J. Pragmatics of measuring recognition memory: Applications to dementia and amnesia. Journal of Experimental Psychology: General. 1988;117(1):34–50. doi: 10.1037/0096-3445.117.1.34.

- Sullivan S, Ruffman T, Hutton SB. Age differences in emotion recognition skills and the visual scanning of emotion faces. The Journal of Gerontology, Series B: Psychological Sciences and Social Sciences. 2007;62:53–60. doi: 10.1093/geronb/62.1.P53.

- Tottenham N, Tanaka JW, Leon AC, McCarry T, Nurse M, Hare TA, Nelson C. The NimStim set of facial expressions: Judgments from untrained research participants. Psychiatry Research. 2009;168(3):242–249. doi: 10.1016/j.psychres.2008.05.006.

- Valentine T. Upside-down faces: A review of the effect of inversion upon face recognition. British Journal of Psychology. 1988;79(4):471–491. doi: 10.1111/j.2044-8295.1988.tb02747.x.

- van Rijsbergen NJ, Schyns PG. Dynamics of trimming the content of face representations for categorization in the brain. PLoS: Computational Biology. 2009;5(11):e1000561–e1000561. doi: 10.1371/journal.pcbi.1000561.

- Wiens S. Current concerns in visual masking. Emotion. 2006;6(4):675–680. doi: 10.1037/1528-3542.6.4.675.

- Yin RK. Looking at upside-down faces. Journal of Experimental Psychology. 1969;81(1):141–145. doi: 10.1037/h0027474.