Abstract

Rising Medicaid health expenditures have hastened the development of State managed care programs. Methods to monitor and improve health care under Medicaid are changing. Under fee-for-service (FFS), the primary concern was to avoid overutilization. Under managed care, it is to avoid underutilization. Quality enhancement thus moves from addressing inefficiency to addressing insufficiency of care. This article presents a case study of Virginia's redesign of Quality Assessment and Improvement (QA/I) for Medicaid, adapting the guidelines of the Quality Assurance Reform Initiative (QARI) of the Health Care Financing Administration (HCFA). The article concludes that redesigns should emphasize Continuous Quality Improvement (CQI) by all providers and the use of multi-faceted, population-based data.

Introduction

During the 1990s, Medicaid, the primary program for meeting the medical needs of persons with low incomes, has been the fastest growing publicly funded health care program. Medicaid expenditures are rapidly consuming the lion's share of most State budgets. Thus, the majority of States have turned to managed care to control Medicaid costs. From 1991 to 1992, in the 36 States using managed care programs for Medicaid, the number of Medicaid enrollees in such programs rose by 35 percent. All States but six had Medicaid managed care programs in place in 1994 (Fisher, 1994; Hurley, Freund, and Paul, 1993; Horvath and Kaye, 1995).

At the same time, some States have also folded new populations into their Medicaid programs. Responding to the failure of the Federal Government to provide universal health insurance, seven States have expanded coverage for the poor through managed care initiatives under Medicaid. By doing so, they hope to lower total medical costs for the poor (both Medicaid recipients and patients with no insurance at all); such costs are in fact already being shifted to taxpayers. Arizona, Hawaii, Tennessee, Oregon, Rhode Island, Minnesota, and Washington now offer their poor citizens near-universal insurance coverage through Medicaid managed care, and the list of Medicaid-expansion States is growing (Schear, 1995; Lutz, 1995).

The increased use of managed care by the major health care program for poor Americans raises concerns about its accountability for quality. Equity and social justice require that quality assurance (QA) be guarded especially carefully in publicly funded programs that serve low-income persons. Concern about the quality of care available to low-income groups has been growing (Schear, 1995; Haas et al., 1994).

Concurrently with the rapid changes in publicly funded Medicaid, managed care and consumer-driven market reforms are accelerating in the private sector. The private market has forced consumers, insurers, employers, governments, and health maintenance organizations (HMOs) to seek better methods of understanding the relationship between costs, quality, and access to care (Carey and Weis, 1990; Carey, 1991). Some fear that cost controls in the private sector will inevitably reduce both access and the quality of care (Ware, 1986). Medicaid cost constraints may have been cushioned in the past by cost-shifting, but today the changes in the private sector are a new limiting factor.

These profound shifts toward managed care have led to adoption of CQI or Total Quality Management (TQM) methods, which are organized approaches to identifying quality problems, making changes to improve quality, and checking that the improvements have taken place. With the arrival of these methods on the scene, the era of reliance on credentialing, subjective peer review, and incident-triggered review as the primary external means for assuring the quality of medical care is ending. Although individual quality-improvement initiatives first occurred in a FFS system, fragmented care is inherent in FFS; a systems approach to quality improvement can be more easily facilitated within managed care organizations. The new era of QA/I relies on emerging information technologies that furnish uniform, expanded clinical data collected at relatively low cost. To supplement more structural review approaches, the twin goals of the new era are detailed quality assessment for an entire population and an improvement in the median quality of the care it receives (Goldfield, 1991; Goldman, 1992; McCarthy, Ward, and Young, 1994; Milakovich, 1991; Nash, 1993; Rutstein, 1976).

The purpose of this article is to explain new Federal guidelines for assuring quality under Medicaid managed care in the reformed era and to describe how the States are handling issues of quality and access. The article examines how one State, Virginia, has adapted the Federal suggestions for QA to its Medicaid program. The article also elucidates the principles underlying the new approach.

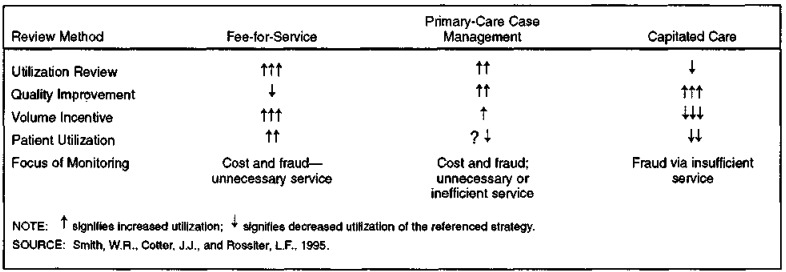

The evolution of health care delivery from FFS to managed care opens the door to a different approach to assuring quality (Figure 1). Under FFS reimbursement, providers have strong incentives to increase volume. Hence, the primary concern in terms of quality is avoiding unnecessary care and overutilization. Under capitated or risk-based reimbursement programs, while the system of care affords a choice for more organized quality oversight, the plan and, sometimes, the providers have incentives to reduce volume; the concern about quality is to prevent underutilization of medical care as well as fraud through service denial and preservation of “ghost” clients. Under transitional case-management programs, both under- and overutilization are potential problems.

Figure 1. Comparison of Methods of Monitoring Quality and Service Use Under Medicaid Fee-for-Service, Primary-Care Case Management, and Capitated Care.

The traditional Medicaid peer review or utilization review process, which was appropriate for FFS mechanisms, is less appropriate for the newer payment schemes (Luke and Modrow, 1983). In a shift from concern about controlling fraud and abuse, newer programs must monitor the adequacy of access and health outcomes. Rather than being triggered by incidents of poor care and collecting data only when certain poor outcomes occur, programs must now collect data on random samples or a census of populations to make sure that the average standard of care is improving, is acceptable to their customers, or meets the appropriate professional standard.

Federal Reform of Medicaid Quality

In the early 1990s, it became apparent that existing rules and regulations for assuring the quality of care under Medicaid managed care were no longer adequate for the growing number of enrollees. By then, most States had begun or were considering Medicaid managed care. HCFA's Medicaid Bureau, noting this growth, began to develop a system for improving the quality of health care under Medicaid managed care programs. The goal of the undertaking for State managed care programs—QARI—was to develop guidelines that were consistent with industry standards and would be used to improve the quality of care in those programs (Health Care Financing Administration, 1993).

QARI provides four elements of QA for State Medicaid managed care programs. First, QARI presents an overall framework for the design of a quality-improvement system. Second, QARI recommends design and operational criteria for internal QA programs in managed care organizations that serve Medicaid clients. Third, QARI lists the critical medical conditions likely to require attention, sample indicators of the quality of treatment, and references to published guidelines that support these indicators. Fourth, because Federal law requires external review of the quality of the services rendered under Medicaid managed care, QARI suggests the scope that the activities of the external reviewers should encompass (Health Care Financing Administration, 1993).

The QARI guidelines paralleled the growth of accreditation of managed care plans and a private managed care effort to assure quality—the health plan report card. The Health Plan Employer Data and Information Set (HEDIS) of the National Committee for Quality Assurance (NCQA) (National Academy for State Health Policy, 1994; National Committee for Quality Assurance, 1993) is designed to provide basic information on broad measures of health plan performance. Targeted toward populations with private health insurance plans through their employment, HEDIS data furnish general descriptive information for plan-by-plan comparisons. HEDIS' core performance measures are intended to inform employers and insurers about the cost-effectiveness of health plans. NCQA, along with HCFA and the American Public Welfare Association, is adapting the HEDIS measures to assess plans used by Medicaid (National Committee for Quality Assurance, 1995). It remains to be seen whether Medicaid HEDIS can fully address the special needs, quality, and circumstances of the Medicaid population, especially for plans in which Medicaid enrollment is a disproportionate share of the membership.

While awaiting Medicaid HEDIS, States with growing numbers of Medicaid enrollees in managed care programs have applied the QARI principles in their programs for QA. Three States have implemented the guidelines as part of a national demonstration project (Gold and Felt, 1995). Overall, States have welcomed the guidelines. The results of a survey conducted by HCFA in early 1994 (Table 1) show that, of the 32 State agencies responding, 90 percent were familiar with the QARI guidelines. Most of the 32 States were using or planned to use them. Two-thirds to three-quarters found the QARI components helpful, especially the guidelines on internal QA programs. These results indicate that States are willing to adapt their methods of ensuring quality care for Medicaid recipients to suit their new mechanisms for paying Medicaid providers.

Table 1. Results (Number and Percent) of HCFA Survey of States' Response to QARI Guidelines: March 1994.

| Planned Use | Using to a Great Extent | Using to Some Extent | Plan to Use | Do Not Plan to Use | Not Sure | Blank |
|---|---|---|---|---|---|---|
| Quality Improvement System Framework | 9 (28) | 8 (25) | 11 (34) | 0 (0) | 2 (6) | 2 (6) |
| Internal QA Programs | 10 (32) | 6 (19) | 10 (32) | 0 (0) | 3 (10) | 2 (6) |
| External Review Standards | 7 (22) | 10 (31) | 10 (31) | 0 (0) | 3 (9) | 2 (6) |
| Clinical Priorities/Indicators | 8 (25) | 8 (25) | 10 (31) | 0 (0) | 4 (13) | 2 (6) |

| Helpfulness | Very | Some | Not | Not Sure | Blank |
|---|---|---|---|---|---|
| Quality Improvement System Framework | 12 (38) | 11 (34) | 0 (0) | 4 (13) | 5 (16) |
| Internal QA Programs | 14 (44) | 8 (25) | 0 (0) | 5 (16) | 5 (16) |
| External Review Standards | 12 (38) | 10 (31) | 0 (0) | 5 (16) | 5 (16) |
| Clinical Priorities | 12 (38) | 9 (28) | 0 (0) | 6 (19) | 5 (16) |

NOTES: n = 32 responses. Numbers in parentheses are percent of total responses. HCFA is Health Care Financing Administration. QARI is Quality Assurance Reform Initiative. QA is quality assurance.

SOURCE: Health Care Financing Administration: Data from the Medicaid Bureau, 1994.

State Medicaid Quality Reform: A Case Study

One example of State receptivity to a change in the methods for ensuring the quality of care under Medicaid can be seen in Virginia. The Virginia Department of Medical Assistance Services (DMAS) has implemented three managed care programs: MEDALLION, OPTIONS, and MEDALLION II.

MEDALLION, a primary-care case-management program, began in 1992 and now enrolls 221,173 people, or 32 percent of all Virginia Medicaid recipients. Over 1,700 physicians participate in the program. Each MEDALLION Medicaid recipient either chooses or is assigned a primary-care physician. Primary-care physicians are the principal sources of care and provide referrals for specialized care if they identify a need. As payment for their primary-care case management, physicians receive $3 per assigned recipient per month in addition to their regular FFS billing under Medicaid.

The OPTIONS program is an HMO program with voluntary enrollment. It began in 1994 and, as of June 30, 1995, enrolled 76,600 Medicaid recipients among four HMO plans (mixed Medicaid/non-Medicaid independent practice association [IPA] models). At present, Medicaid recipients can choose whether or not to participate in the OPTIONS program and which HMO they will join, but participation in the program is expected to become mandatory, as it is now in many other States.

In 1996, the Aid to Families with Dependent Children (AFDC) population will be transitioned to a mandatory-enrollment, capitated risk program dubbed MEDALLION II. It is expected to enroll approximately 300,000 recipients in its first year of operation. Enrollment in the MEDALLION II program is mandatory for AFDC recipients; however, they are given the choice of enrolling in OPTIONS. Within each program, the recipients have the right to choose their own provider.

As of June 30, 1995, 214,047 adults and children and 7,126 aged, blind, and disabled recipients were enrolled in MEDALLION. In OPTIONS, 73,611 adults and children and 2,989 aged, blind, and disabled recipients were enrolled. Table 2 shows the enrollment in Medicaid programs in Virginia as of June 30, 1995. The majority of enrollment has been in the metropolitan areas of Norfolk-Virginia Beach-Newport News, Richmond-Petersburg, and Northern Virginia.

Table 2. Total Managed Care, Voluntary HMO, and Traditional Fee-for-Service Enrollees in the Virginia Medicaid Program: June 30, 1995.

| Demographic | Medicaid Total, Number | Medicaid Total, Percent | Primary-Care Case Management (MEDALLION), Number | Primary-Care Case Management (MEDALLION), Percent | Voluntary HMO (OPTIONS), Number | Voluntary HMO (OPTIONS), Percent | Traditional Fee-for-Service, Number | Traditional Fee-for-Service, Percent |
|---|---|---|---|---|---|---|---|---|
| Total Enrollment | 687,718 | 100 | 221,173 | 32 | 76,600 | 11 | 389,945 | 57 |
| Adults and Children | 500,110 | 73 | 214,047 | 31 | 73,611 | 11 | 212,452 | 31 |
| Blind and Disabled | 100,123 | 15 | 6,869 | 1 | 2,918 | 0.4 | 90,336 | 13 |
| Elderly | 87,485 | 13 | 257 | 0.04 | 71 | 0.01 | 87,157 | 13 |

NOTE: HMO is health maintenance organization.

SOURCE: Virginia Department of Medical Assistance Services, 1995.

An evaluation of the initial stages of the MEDALLION program found that the managed care program was effective in reducing costs (Hurley and Rossiter, 1994). Similar results have been found for primary-care case-management programs in other States (Hurley, Freund, and Paul, 1993). One result in Virginia was that unnecessary emergency room visits by Medicaid recipients were substantially reduced in parallel with rising MEDALLION enrollment (Hurley and Rossiter, 1994).

Consequently, Virginia DMAS decided to enlarge MEDALLION and implement OPTIONS and MEDALLION II, and to redesign its traditional utilization review as a QA/I system suitable for these programs. The program design efforts will change as the Virginia Medicaid program incorporates more recipients into managed care. Inherent in capitated risk programs is a transfer of accountability to the health maintenance plan, but the accountability between individual plans and their associated providers varies. Virginia is taking a lead position to focus on quality of care issues at the provider level. Given the vulnerability of the Medicaid population, this enhanced scrutiny is critical. This redesign also prepares Virginia to respond to State responsibility if Medicaid were restructured into a block grant program. Many other States are planning similar redesigns. This article examines the Virginia experience as a case study.

Program Content

The Virginia Medicaid QA/I Program relies on key structural elements of quality suggested by QARI, and utilizes three operational assessment components, each based on a different view of quality. The program thus applies a multiaxial definition of medical quality as outcomes of care, process of care, and satisfaction with care (Figure 2). The three component measures, which will be performed annually, consist of: (1) practice parameter profiles of the outcomes for sentinel conditions seen by primary-care physicians; (2) detailed chart reviews (DCRs) for selected areas of care, for example, asthma, for a large sample of patients (1,000 or more) from a representative sample of providers; and (3) a recipient household survey of 1,500 patients, asking them to report on their degree of access to care, satisfaction with care, and health.

Figure 2. Quality of Care Framework for Medicaid Managed Care.

Practice Parameter Profiles

One of the QARI design elements for a managed care organization's internal QA program is a sentinel parameter which indicates underlying quality. Virginia adapted this approach to quality, using the two suggested QARI parameters, childhood immunization rates and pregnancy outcomes. However, to implement this approach, Virginia undertook an innovative method.

The collection of quality assessment data has traditionally relied heavily on claims data on patients' encounters with medical care. Even under capitation, several States require risk-based plans and their physicians to provide traditional FFS claims data in order to monitor service use. Such claims data, encounter data, or dummy claims are not now required of Virginia risk-based plans under OPTIONS, although those data are available from the MEDALLION primary-care case-management program. Therefore, QA/I has created a patient-level data base that profiles major practice parameters, using patient-level claims data when they are available (Year 1) or using eligibility files when claims are absent (Year 2 and beyond), and combining these with other data sources described later. As an example of how this implementation strategy of a QARI system element will work, we describe the immunization profiling process.

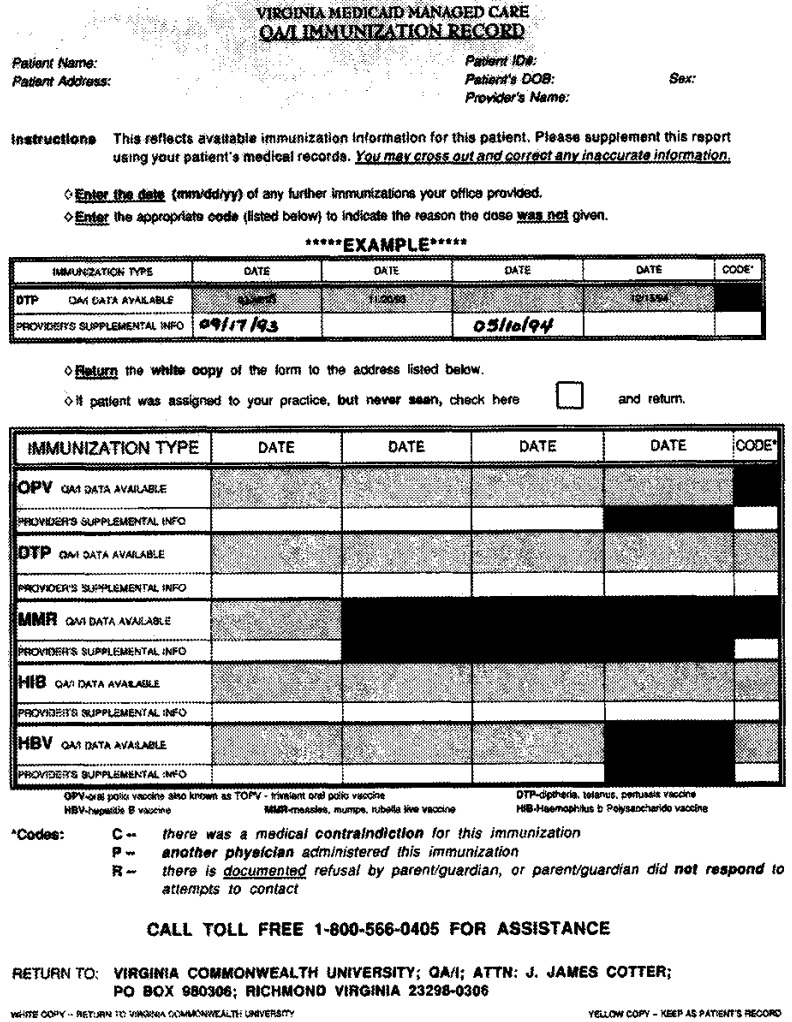

In the first year, QA/I has focused on children's immunizations, felt by many to be a sentinel indicator of the overall quality of a child's health care (Freed, 1993; Winslow, 1994; Cutts et al., 1992). The status of immunizations, based on the recommended immunization schedule of the Centers for Disease Control (1995), is being compiled for a census of over 5,000 2-year-olds cared for under Medicaid managed care. The completed patient-level immunization data base thus will contain demographic information on the recipient and data on each immunization recommended by age 2, including information on contraindications and parental refusals. The project augments available Medicaid claims data in MEDALLION, Virginia's primary-care case-management program, including data from the Early and Periodic Screening, Diagnosis, and Treatment (EPSDT) program, with information from local health departments. Currently, MEDALLION EPSDT information is captured through DMAS claims data. Current HMO contracts in the OPTIONS program require special reporting for EPSDT recipients; reporting of the EPSDT information should thus continue to be accomplished through use of encounter data analysis.

Once these existing sources of data are merged and reconciled, providers are sent reports (Figure 3) and asked to complete the immunization profile with information from their patients' charts. Once the form is completed, providers simply tear off a carbon copy, provided by the project, place it in the patient's medical record, and return the original. Project staff assist physicians with high case loads (50 patients or more) to file their reports. Final reports will consist of anonymous comparisons of provider performance for immunization rates; the exception to anonymity will be the disclosure to providers of their own rates. The process will be repeated each year to stimulate improvement in the rate of immunizations. While providers might be well served in early years by overreporting, eventually they might be poorly served by failure to show improvement due to prior overreporting.

Figure 3. Immunization Report Form.
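The merge-and-profile step described above can be illustrated with a minimal Python sketch. It is not the project's actual procedure: the record layout, the function names, and the dose counts in `REQUIRED_DOSES` are hypothetical placeholders (they are not the Centers for Disease Control schedule), and treating a documented contraindication or parental refusal as satisfying a requirement is an assumption made only for illustration.

```python
from collections import defaultdict

# Hypothetical dose counts required by age 2. Placeholders for illustration,
# NOT the recommended schedule cited in the text.
REQUIRED_DOSES = {"DTP": 4, "polio": 3, "MMR": 1}


def merge_records(*sources):
    """Combine (child_id, vaccine, date) records from several sources.

    A dose reported by two sources on the same date is counted once.
    """
    doses = defaultdict(set)
    for source in sources:
        for child_id, vaccine, date in source:
            doses[(child_id, vaccine)].add(date)
    return doses


def is_up_to_date(child_id, doses, exempt=frozenset()):
    """True when each required vaccine has enough distinct doses.

    `exempt` holds (child_id, vaccine) pairs with a documented
    contraindication or refusal (assumed here to satisfy the requirement).
    """
    return all(
        (child_id, vaccine) in exempt
        or len(doses.get((child_id, vaccine), ())) >= needed
        for vaccine, needed in REQUIRED_DOSES.items()
    )


def provider_rates(panel, doses, exempt=frozenset()):
    """Share of each provider's 2-year-olds who are up to date.

    `panel` maps a provider identifier to a non-empty list of child ids.
    """
    rates = {}
    for provider, children in panel.items():
        complete = sum(is_up_to_date(c, doses, exempt) for c in children)
        rates[provider] = complete / len(children)
    return rates
```

In such a sketch, claims records and health department records would each be passed to `merge_records`, and the rates from `provider_rates` could then be reported with provider identifiers masked, except in each provider's own report.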

In future years, QA/I will add a practice parameter profile on prenatal care and birth outcomes, as suggested by QARI. However, as with the data on immunizations, the implementation process will take advantage of existing data sources outside of traditional encounter data (in this case, the State birth registry) and will combine data sources to draw a census of all such data in the State. Other such registries in Virginia include a trauma registry and a cancer registry. Though more difficult to construct for State agencies, the merged and reconciled Medicaid and health department data dramatically ease the data reporting burden on primary-care physicians and plans. Further, the information helps them better care for their patients and maintain up-to-date medical records.

Detailed Chart Review

The DCR is a review of the process of care in ambulatory settings. Initially, the process of care for asthma will be reviewed over several visits or an interval of time. DCR will determine physicians' documentation of outcome indicators, classification of severity, education, medication, and recommendation of appropriate followup treatment. Elements in the review are based on our adaptation of the National Institutes of Health's guidelines (National Asthma Education Program Expert Panel, 1991) for asthma treatment, modified to be congruent with community practice standards in Virginia. The modification was accomplished through discussions with provider representatives who advise the DMAS.

DCR will be conducted annually by reviewing charts of a sample of primary-care physicians serving Medicaid managed care patients. Project personnel will visit physicians' offices to conduct the review, rather than burden them with more data submissions. A two-stage sampling process will be used: First, physicians who treat patients with one targeted condition will be sampled randomly within various geographic areas. Second, a random sample of patients with the target condition will be drawn from the panel of patients for each physician in that sample. For example, during the first year of the project, a total of 1,050 cases will be reviewed: Managed care cases (n = 450) will be reviewed and compared with FFS cases (n = 450); in addition, 150 cases seen by physicians who were identified as outliers during the Recipient Household Survey (discussed later) will be reviewed. Only physicians' office records will be reviewed. However, all Medicaid claims for the index condition will be linked with chart reviews by social security number, recipient identification number, and name. Initially, claims will be used to select physicians and patients. Subsequently, they will be used to help validate records, and to supplement reports to providers.

The sample size of 15-20 providers per selected program (FFS, MEDALLION, OPTIONS, or MEDALLION II) and 450-600 charts per program allows generalizations at the program level, but the number of providers examined does not allow generalization to the 600 providers involved. The number of charts per provider, representing a complete census or a sample of 30 cases of the provider's pediatric asthma care for a 1-year period, allows generalization at the individual provider level (Figure 4).
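The two-stage design can be sketched in a few lines of Python. This is an illustration under stated assumptions rather than the project's sampling program: the function name, the input layout (geographic area, then physician, then a list of patient identifiers with the target condition), and the fixed random seed are all hypothetical. With 15 physicians and 30 charts each, such a draw yields the 450 charts per program mentioned above.

```python
import random


def two_stage_sample(panels_by_area, physicians_per_area,
                     charts_per_physician, seed=0):
    """Draw physicians within each area, then patients within each panel.

    panels_by_area: {area: {physician: [patient ids with the condition]}}
    Returns {physician: [sampled patient ids]}.
    """
    rng = random.Random(seed)  # fixed seed so the draw can be reproduced
    sample = {}
    for area in sorted(panels_by_area):
        panels = panels_by_area[area]
        # Stage 1: random sample of physicians within the geographic area.
        n_physicians = min(physicians_per_area, len(panels))
        for physician in rng.sample(sorted(panels), n_physicians):
            patients = panels[physician]
            # Stage 2: random sample of the physician's patients, or a
            # complete census when the panel is smaller than the quota.
            n_charts = min(charts_per_physician, len(patients))
            sample[physician] = rng.sample(patients, n_charts)
    return sample
```

Falling back to a census for small panels mirrors the text's "complete census or a sample of 30 cases"; how the project allocates physicians across geographic areas is not stated, so the equal per-area quota here is only one possible choice.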

Figure 4. Quality Assessment and Improvement Project: Sampling Schemes for Detailed Chart Review of Primary-Care Physician (MEDALLION) and Health Maintenance Organizations (OPTIONS).

Pediatric asthma was chosen for initial review because it is a commonly occurring and potentially severe and costly disease among low-income children (Hahn and Beasley, 1994; Crain et al., 1994). During the second year, another condition will be added for review. Thereafter, the project will continue to review a total of two conditions annually, staggered so that a given condition is reviewed for two successive years. The second year's review will expect to find improved performance.

The reports on provider physicians will link chart reviews and claims data. The reports will answer the following questions:

Process Indicators—Were appropriateness-of-care standards met during the process of care?

Outcomes Indicators—Did unexpected outcomes occur? Were they preventable?

Risk and Severity Adjusters—Did unexpected outcomes occur because of the patient's illness, or because of poor care? Once severity of illness is adjusted for, are there unexplained unexpected outcomes?

As subsequent medical areas of concern are chosen, process and outcome indicators will be selected from the standards and guidelines of authoritative sources, such as the American Academy of Pediatrics, the American College of Obstetricians and Gynecologists, and the American College of Physicians. Other sources for guidelines include the Agency for Health Care Policy and Research, the National Institutes of Health, and the U.S. Public Health Service. Recognizing that adaptation of guidelines into practice algorithms is fraught with difficulty, QA/I will seek provider consensus (through the Provider Advisory Committee of DMAS) regarding the appropriateness of chart review instruments drafted using published guidelines, and accept implicit adaptations of the guideline via adaptations of the review instrument.

Several methods of adjusting for risk and severity will be used in addition to age, race, sex, and socioeconomic status. The combination of a high data completion rate for a given adjuster and the accuracy of that adjuster at mortality prediction will determine which adjuster will be utilized. Several alternative adjusters will be tested, including the primary-care physician's subjective severity classification, a baseline peak flow measurement, number of admissions annually, claims data, or history of intubation by claims data. The project will measure and adjust for comorbidity (other conditions) using either Disease Staging (available in the public domain) or Deyo's adaptation of the Charlson comorbidity score (Deyo, Cherkin, and Ciol, 1992). According to the aspect of care, disease-specific adjustments for risk and severity may also be used.
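The text does not state how completion rate and predictive accuracy will be combined when choosing an adjuster. One plausible reading, sketched below in Python purely as an assumption, is to require a minimum completion rate and then prefer the most accurate remaining adjuster. The function name, the 0.8 threshold, and the input layout are hypothetical.

```python
def choose_adjuster(candidates, min_completion=0.8):
    """Pick one severity adjuster from the candidates.

    candidates: {name: (completion_rate, accuracy)}, both between 0 and 1.
    Assumed rule: drop adjusters whose data are too often missing, then
    take the one with the highest predictive accuracy. Returns None when
    no candidate meets the completion threshold.
    """
    eligible = {
        name: accuracy
        for name, (completion, accuracy) in candidates.items()
        if completion >= min_completion
    }
    if not eligible:
        return None
    return max(eligible, key=eligible.get)
```

A weighted score of the two quantities would be an equally defensible rule; the threshold form is used here only because it keeps an accurate but rarely recorded adjuster from being selected.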

Recipient Household Survey

Annually, QA/I will select and survey a geographically diverse group of the recipients of care under each provider or plan. For the initial phase of the Recipient Household Survey, MEDALLION and FFS patients will be compared. For the next phase of the Recipient Household Survey, the perceptions of OPTIONS clients will be explored. Random samples will be picked, stratified by age and substratified by HMO. Preliminary power analyses suggest that four samples of 250 OPTIONS recipients must be selected, one from each of the HMOs providing care. A limitation is that QA/I no longer has the ability to draw huge FFS or MEDALLION samples, as these programs are being phased out. The survey will be conducted using telephone interviews of clients who call a toll-free phone number in response to a mailing promising an incentive. In addition to standard questions about patients' personal characteristics, the survey will measure their global health function, satisfaction with care, access to care, and utilization of care (Sofaer, 1993; Davies and Ware, 1988). Because obtaining responses from low-income populations such as Medicaid recipients is known to be difficult, QA/I plans to use incentives to enhance the response rate. As previously noted, certain unexpected results from a particular survey may trigger a DCR for the patient's physicians. Examples of such trigger results include functional status that is significantly lower than expected, a patient's marked dissatisfaction (e.g., more than two standard deviations from the mean), or an unfavorable answer to certain “trigger questions” (e.g., a negative answer to the question “Can you always get needed care?”). Unexpectedly high disenrollment rates, as provided by Virginia Medicaid, will also trigger DCR. Details of the survey's components follow.
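The three survey-based triggers named above can be expressed as a simple rule check. The Python sketch below is illustrative only: the field names, the numeric scales, and the `function_margin` used to stand in for "significantly lower than expected" are assumptions, since the text gives a numeric cutoff only for dissatisfaction (two standard deviations from the mean).

```python
from statistics import mean, stdev


def dcr_triggers(respondent, satisfaction_scores, expected_function,
                 function_margin=5.0, sd_cutoff=2.0):
    """List the reasons a survey response would trigger a chart review.

    respondent: dict with 'satisfaction' and 'function' scores (higher is
    better) and a boolean 'can_always_get_care' (hypothetical field names).
    satisfaction_scores: scores of all respondents (at least two values),
    used to compute the mean and standard deviation.
    """
    reasons = []
    mu = mean(satisfaction_scores)
    sigma = stdev(satisfaction_scores)
    # Marked dissatisfaction: more than sd_cutoff SDs below the mean.
    if respondent["satisfaction"] < mu - sd_cutoff * sigma:
        reasons.append("marked dissatisfaction")
    # Functional status lower than expected by more than an assumed margin.
    if respondent["function"] < expected_function - function_margin:
        reasons.append("low functional status")
    # Negative answer to the trigger question on access to care.
    if not respondent["can_always_get_care"]:
        reasons.append("trigger question")
    return reasons
```

An empty list means no review is triggered by the survey alone; disenrollment rates, the fourth trigger in the text, come from Virginia Medicaid records rather than the survey and are left out of this sketch.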

Health Status

The Medical Outcomes Institute Short Form 12 (SF-12), a newer, shorter version of the well-known SF-36, will be used to continually assess general health status among selected subgroups. This survey will assist in the validation of both the SF-12 Health Status Assessment and the Child Health Questionnaire-Parent Form 28 (New England Medical Center, 1995). Although such surveys do not measure specific outcomes of specific aspects of care, similar global measures of health function have been shown to reliably measure general health status and changes in health status (Ware and Sherbourne, 1992; Ware, 1993). SF-12 scores will measure global program benefits; since the SF-12 is based on the SF-36, which is already used worldwide, the health status of Virginia Medicaid managed care recipients can be compared with that of recipients in other plans. The SF-12 scores will not, however, be used to compare individual practitioners.

Patient Satisfaction

A second component measures basic patient satisfaction, corresponding to general satisfaction for surveys for managed care such as that used by the Group Health Association of America (1991).

Access (Symptom Response)

Based on a similar component of the Medicaid competition evaluation in the late 1980s, this section of the survey obtains the recipient's account of his or her access to primary care. The questions ask about the patient's actions in response to specific needs for immediate care. The questions also ask for the recipient's perceptions of the primary-care provider and the functioning of the provider's office (Research Triangle Institute, 1989).

Service Utilization

QA/I does not itself require claims data from HMOs. In the absence of other administrative requirements for claims, information about service use will be obtained as part of the household survey. In Virginia, encounter data will continue to be collected from the HMOs after the capitated risk program is implemented. However, the quality of those data has yet to be determined. In the beginning, QA/I will validate a client's estimates of service utilization against available records of claims, using a “fuzzy” match that compares the client's recollected date to claims data for the months before and after the remembered utilization. The client's estimates of service use will also be validated through chart review of HMO records.
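A date-window comparison of this kind might look like the following Python sketch. It assumes, for illustration, that a recalled visit counts as validated when any claim falls in the calendar month before, the same month as, or the month after the recalled date; the function names and the one-month default window are not taken from the project.

```python
from datetime import date


def month_index(d):
    """Number the months consecutively so that December and the following
    January differ by one."""
    return d.year * 12 + d.month


def fuzzy_match(recalled, claim_dates, window_months=1):
    """Return the claims dated within window_months of the recalled month."""
    target = month_index(recalled)
    return [c for c in claim_dates
            if abs(month_index(c) - target) <= window_months]


def validated_share(recalled_visits, claim_dates, window_months=1):
    """Fraction of recalled visits supported by at least one nearby claim."""
    if not recalled_visits:
        return 0.0
    hits = sum(bool(fuzzy_match(v, claim_dates, window_months))
               for v in recalled_visits)
    return hits / len(recalled_visits)
```

Comparing calendar months rather than exact days tolerates imprecise recall, which is the point of a fuzzy match; a stricter day-based window would be a straightforward variation.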

In sum, the results from year 1 of the QA/I will compare the quality of the medical care delivered in MEDALLION and OPTIONS with care delivered by the traditional FFS Medicaid program; data will be compared at the client level, the provider level, and the program level. Together, the three QA/I components described above will assess the quality of care provided in each program.

An overview of the way in which Virginia's QA/I program adapts the QARI guidelines appears in Table 3. The QA/I program is designed to produce a comprehensive evaluation by using outcomes, processes of care, and enrollee satisfaction. The program defines the key sentinel conditions whose treatment in each plan should be evaluated annually. The use of a patient satisfaction survey in QA/I strengthens the involvement of Medicaid recipients. The QA/I program, following CQI principles, also establishes a partnership with the providers of care—the primary-care physicians—to ensure improvements in the quality of care. This partnership is established through the use of practicing physicians as project investigators, the periodic review and discussion of the program's methodology and instruments by the Provider Advisory Council of the Medicaid agency, one-to-one academic detailing about the program with selected Virginia physicians, and support from the major medical societies in Virginia, manifested by letters of endorsement in project mailings.

Table 3. Comparison of the Design Elements of HCFA's Quality Assurance Reform Initiative (QARI) With the Virginia Medicaid Program's Quality Assessment and Improvement Program.

| Design Element | HCFA QARI | Virginia Medicaid QA/I Program |
|---|---|---|
| Internal QA Programs | “[A]ll coordinated care organizations contracting with State Medicaid programs under capitation or other risk payment arrangements shall have an internal program of quality assurance.” | Primary-care providers and health maintenance organizations enhance their quality of care processes based on outcomes for sentinel, “key marker” conditions. |
| State Monitoring | “[M]onitor each coordinated care organization to assess to what extent its Quality Assurance Program meets the above State specified standards.” | Through feedback on process related to norms and peer practice, improves the consistency and reduces variation in observed process indicators. |
| | “[A]nnually assess the quality of health care delivered by the managed care organization” through an “annual, independent, external review of the quality of services delivered.” | Annually assesses the quality of care improvements achieved by providers, through a review of process and outcomes. |
| Medicaid Recipient Involvement | “[E]nrollee/member grievance procedures are instituted.” | Structured multiple surveys of enrollee/members about the quality improvement process, not just about individual grievances. |
| Monitoring and Evaluating the HCQIS | “[F]ormally monitoring, evaluating, and revising the Medicaid Coordinated Care Health Care Quality Improvement System and all of its elements on a periodic and regular basis.” | Comprehensive evaluation of outcomes, processes of care, and enrollee/member satisfaction. |
| | | Biannually assesses the impact of the review process itself on the processes of care. |
| Data Collection | Claims or encounter data turned over to Medicaid. Aggregate or summary statistics provided annually according to agreed-upon standards for reporting. | Available claims or encounter data utilized by Medicaid. Access to medical records requested, recipient households surveyed, and aggregate summary statistics provided annually according to agreed-upon standards for reporting. |

SOURCES: Smith, W.R., Cotter, J.J., and Rossiter, L.F., 1995; Health Care Financing Administration, 1993.

Program Goals and Philosophy

The QA/I program stresses the importance of information for both patients and providers. Extensive discussions with primary-care physicians on the Virginia Medicaid Provider Advisory Committee have guided the design of the evaluation components, to make sure they will actually help the physician providers to improve their processes of care as well as improve patient outcomes and satisfaction.

The program uses the feedback loop that is central to CQI. For two of the components—practice parameter profiling and the Recipient Household Survey—feedback is annual and ongoing, whereas feedback inherent in DCR allows primary-care physicians to use insights offered by the previous years' study results to improve their processes of care (Daley, Gertman, and Delbanco, 1988; Lomas et al., 1989). Although the condition reviewed may change in the DCR, the feedback on processes of care continues annually. Thus, the same physician may receive feedback on two different conditions over the course of several years. The feedback is intended to expose practice variation and, where appropriate, diminish it. The program is flexible enough to monitor and improve many aspects of care; for example, within the DCR, each year one new condition is added and the conditions evaluated for the past 2 years are dropped.

An immediate, key result of QA/I will be the assessment of whether the quality of care provided under Medicaid managed care is comparable to that provided under FFS. Another important achievement will be a richer portrait of the quality of care than has previously been available in publicly funded programs. QA/I will also demonstrate how information on care can be compiled from diverse sources and shared to programmatically address gaps in care. Finally, QA/I creates a rich State data base on key health outcomes at the population level, often including coordinated information on eligibility, utilization, health outcomes, processes of care, and customer satisfaction.

Physicians who are primary-care providers serving low-income recipients under managed care need new information on how to provide care within the two constraints of a managed care program and patients with low incomes. Thus, the QA/I's feedback to providers of data on their own practice patterns and processes may allow them to check, perhaps for the first time, their now intuitive views of their practice styles, and will enhance their knowledge of their individual patients. This knowledge could not only help them to choose more cost-effective treatments, but also, it is to be hoped, help them to provide high-quality care to their Medicaid patients (Eisenberg, 1985).

Practical Issues Surrounding Medicaid Quality Reform

Strengths and Limitations of the Virginia Approach

Virginia has attempted to address a potential deficiency in the QARI model of structural quality oversight. Figure 5 shows that both financial and quality oversight arrangements exist between States, plans, and individual providers under primary-care case-management and capitated risk programs. However, especially in IPA model HMOs, the relative roles of the HMO and of the provider in QA/I are inadequately defined and have not been extensively studied. Thus, Virginia has chosen to supplement the HMO plans' quality oversight of their providers by directly observing quality at the provider level. As State Medicaid agencies continue their transition to managed care, this issue will become more important. The supplementary oversight by the State of individual providers is one model worthy of further testing.

Figure 5. Capitation and Quality Oversight Relationships in Medicaid Managed Care.

Several limitations were encountered in Virginia's adaptation of QARI guidelines. First, the use of childhood immunization rates as a sentinel for underlying quality of care across age groups may falsely represent the quality of adult health care. For this reason, QARI and QA/I have included pregnancy outcomes as an adult sentinel health indicator. Still, one limitation of Virginia's staged implementation of QARI guidelines is incomplete quality information in early years for an important segment of enrollees.

Another limitation of the QA/I project results from the lack of resources to do a DCR on specialty provider care resulting from referrals made by primary-care physicians. However, by including all providers with asthma claims in the sampling frame, there exists the potential to do a DCR on specialists.

A third limitation of QA/I is that, although it has set up assessment mechanisms, it has not yet actually improved care. QARI guidelines appear to imply that feedback, if not measurement, of performance is tantamount to inducing change. Feedback has been the chief mechanism of quality improvement inherent in QA/I approaches so far. However, work has begun on the problem of effecting lasting and significant behavior change. The provider/agency/assessor partnership, which was begun to enable the initial description of quality, has now begun to plan ways to improve quality, such as enabling strategies and reminders to patients and physicians. As yet, there are no firm solutions. It is likely that several strategies to induce behavioral change will be applied.

Sources and Limitations of Quality Data

While HMOs and other organized groups of providers may be able to create and maintain the complex internal information resources needed for population-based assessment and improvement of the quality of care, the same capacity is not usually available to individual providers who work either in primary-care case-management programs or IPAs. For these individual providers, the burden of such data collection could easily become prohibitive in terms of both time and cost. Because the design of a quality-improvement program must fit these differing individual providers, it may require assessors to rely more on claims data and less on primary data collection.

In contrast, relying on claims data from capitated or risk-based plans may prove to be futile, since claims are unnecessary under this payment mechanism. Even in HMOs where independent practices are paid FFS, subgroups may be paid on a capitated basis and have no requirement to submit claims in a uniform format. However, many HMOs already have information systems and support staff hired to collect some population-based primary data. Indeed, Federal regulations already require federally qualified HMOs to maintain an internal QA infrastructure (Health Care Financing Administration, 1993). HEDIS allows plans to draw statistical samples from these primary sources of data to report indicators of health plan performance.

QARI requires the State to serve as an outside reviewer of HMO quality plans, auditing health plans and checking on their data collection and information reporting. A Medicaid quality program must be set up to accommodate these different information sources and differing abilities to supply information. The Virginia experience suggests that a State can abide by the principles and spirit of QARI, yet not impose a heavy data collection burden on primary-care case managers, who are physicians unaccustomed to collecting internal QA data. States can anticipate that the ability of networks of providers and/or HMOs to manage data collection and reporting of quality outcomes will increase. Under that assumption, the QA/I program can phase in data collection and review. One important discovery to be made initially by the Virginia program will be the extent to which primary-care case-manager physicians under MEDALLION and health plans under OPTIONS can supply reliable, usable data for quality assessment.

Intended Uses of Quality Data

The intended use of a quality-improvement program dictates both its data structure and its report format (Table 4). A popular format for reporting quality data is the “report card” (Leatherman and Chase, 1994), which summarizes provider performance on preselected sentinel variables (e.g., mammography or immunization rates) so that consumers can compare providers. While the concept of provider accountability to consumers is said to lie behind the use of report cards, they clearly are also a potential marketing and advertising device for individual health plans. Because report cards encourage plans to compete on the basis of whatever performance parameters are cited, they can have an effect on both price and quality.

Table 4. Characteristics of Quality Assessment Programs According to Intended Use.

| For Internal Quality Improvement | For Marketing |
|---|---|
| | |

SOURCE: Smith, W.R., Cotter, J.J., and Rossiter, L.F., 1995.

Unfortunately, though, health plan report cards, with their often broad scope of indicators, lack the details that would help an individual provider improve quality in the spirit of CQI or TQM. Rather, they give a general and often insufficiently specific account of many providers' performances. Quality reports based on HEDIS indicators are ill suited for providers' internal programs of quality improvement. Whether individual plans that meet report card performance criteria are in fact improving quality at the individual practitioner level must be tested before States cede their responsibility for quality to the report card approach.

Comparisons among providers have to be convincing to the providers. To be so, they should show outcomes adjusted for severity, and base their results on appropriate samples of providers' populations. In addition, such comparative reports should not attribute performance solely to the providers when patient performance (e.g., adherence to medication regimens or followup) accounts, to some extent, for outcomes. Consumers may misinterpret results that are not severity-adjusted or epidemiologically sound, and may falsely attribute results to providers alone.
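One common way to adjust provider comparisons for severity is indirect standardization, in which each provider's observed count of adverse outcomes is divided by the count expected given the severity mix of that provider's patients. The article does not specify an adjustment method, so the sketch below is only an illustration: the severity strata, the reference rates, and the patient counts are invented for the example.

```python
# Hypothetical reference rates of an adverse outcome (for instance, an asthma
# hospitalization) by severity stratum, pooled across all providers.
REFERENCE_RATES = {"mild": 0.05, "moderate": 0.10, "severe": 0.30}


def observed_to_expected(patients_by_stratum: dict[str, int], observed_events: int) -> float:
    """Return the observed/expected ratio for one provider.

    Expected events = sum over strata of (patients in stratum * reference rate).
    A ratio near 1.0 means outcomes are in line with the provider's case mix.
    """
    expected = sum(n * REFERENCE_RATES[s] for s, n in patients_by_stratum.items())
    return observed_events / expected


# Two invented providers with the same crude event rate (12 of 100 patients)
# but different case mixes.
provider_a = {"mild": 80, "moderate": 20, "severe": 0}   # expected 4 + 2 + 0 = 6
provider_b = {"mild": 20, "moderate": 50, "severe": 30}  # expected 1 + 5 + 9 = 15

ratio_a = observed_to_expected(provider_a, 12)  # more events than the case mix predicts
ratio_b = observed_to_expected(provider_b, 12)  # fewer events than the case mix predicts
```

In this invented example both providers have the same unadjusted rate, yet the ratio is about 2.0 for the first and about 0.8 for the second, which illustrates how unadjusted comparisons can mislead.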

In contrast to consumer report cards, the reports produced by QA/I are intended for individual providers themselves, to use to improve quality. Thus, reports on individual providers would emphasize the feedback loop. The emphasis in QA/I is on clinical, not financial, outcomes. (Financial outcomes are addressed by the new payment scheme that places providers under the control of health care plans.) Because the QA/I reports on providers are repetitive and detailed, they allow providers to show improvement in outcomes and the reasons for it, when feasible. That is why the reports must be sufficiently in-depth to capture a significant amount of process data and relate it to patient outcomes and satisfaction (Axt-Adam, van der Wouden, and van der Does, 1993).

Opportunity for Medicaid

The QARI approach offered/adopted by HCFA is a starting place for States in revamping their systems to assure quality care under Medicaid and prudent spending of scarce Medicaid dollars. The QARI program is, at present, only guidance for the States; each State is approaching QARI differently. This article has described how the Virginia Medicaid program is undertaking to change its quality-improvement programs. Other States are no doubt adopting other mechanisms reflecting their own types of providers and plans and the regulatory environments and structures already in place.

The massive changes now under way in the relationship between managed care and Medicaid afford a unique opportunity for the States to markedly redesign or reengineer their QA programs. Additional impetus for redesign will come if Federal budget reforms result in Medicaid block grants to the States. Redesign may mean moving away from simply detecting excessive service use or fraud and abuse under FFS, since under a capitated system that is no longer needed. Rather, the new approach requires attention to how well the health care needs and wants of patients are being met.

Most States already apply basic standards of professional quality for their providers through licensing and certification, and malpractice liability laws provide some protection against the worst abuses. Thus, State Medicaid programs will best serve their constituencies and obtain more value in return for Medicaid expenditures if they undertake a supportive and cooperative role with providers and health plans. To borrow a phrase from the 1980s, State Medicaid programs can reengineer their QA efforts by trusting providers and health plans to provide high-quality care, but also verifying that both the process and the outcomes match the claims that providers and health plans make. If the States' transition to a new era, extending Medicaid to previously uninsured populations, is accompanied by a new era in QA/I, the States, working with providers, may yet show the Federal Government how to achieve health care reform.

Acknowledgments

The authors gratefully acknowledge the support and technical expertise of the leadership at Virginia Medicaid, especially Margot Fritts, Thomas E. McGraw, Donald McCall, and Joe Teefey.

Footnotes

The research presented in this article was performed under contract with the Virginia Department of Medical Assistance Services (DMAS), Commonwealth of Virginia. The authors are with the Medical College of Virginia, Virginia Commonwealth University. The opinions expressed are those of the authors and do not necessarily reflect those of the Virginia DMAS, Virginia Commonwealth University, or HCFA.

Reprint Requests: Wally R. Smith, M.D., Medical College of Virginia, Virginia Commonwealth University, P.O. Box 980102, Richmond, Virginia 23298-0102.

References

- Axt-Adam P, van der Wouden JC, van der Does E. Influencing Behavior of Physicians Ordering Laboratory Tests: A Literature Study. Medical Care. 1993;31:784–794. doi: 10.1097/00005650-199309000-00003.

- Carey TS. Prepaid Versus Traditional Medicaid Plans: Lack of Effect on Pregnancy Outcomes and Prenatal Care. Health Services Research. 1991;26(2):165–181.

- Carey TS, Weis K. Diagnostic Testing and Return Visits for Acute Problems in Prepaid, Case-Managed Medicaid Plans Compared With Fee-for-Service. Archives of Internal Medicine. 1990;150:2369–2372.

- Centers for Disease Control. Recommended Childhood Immunization Schedule—United States, 1995. Morbidity and Mortality Weekly Report. 1995 Jun 16;44(RR5):1–9.

- Crain EF, Weiss KB, Bijur PE, et al. An Estimate of the Prevalence of Asthma and Wheezing Among Inner-City Children. Pediatrics. 1994;94:356–362.

- Cutts FT, Zell ER, Mason D, et al. Monitoring Progress Toward U.S. Preschool Immunization Goals. Journal of the American Medical Association. 1992 Apr 8;267(14):1952–1955.

- Daley J, Gertman PM, Delbanco TL. Looking for Quality in Primary Care Physicians. Health Affairs (Millwood). 1988;7:107–113. doi: 10.1377/hlthaff.7.1.107.

- Davies AR, Ware JE Jr. Involving Consumers in Quality of Care Assessment. Health Affairs (Millwood). 1988;7:33–48. doi: 10.1377/hlthaff.7.1.33.

- Deyo RA, Cherkin DC, Ciol MA. Adapting a Clinical Comorbidity Index For Use With ICD-9-CM Administrative Databases. Journal of Clinical Epidemiology. 1992;45(6):613–619. doi: 10.1016/0895-4356(92)90133-8.

- Eisenberg JM. Physician Utilization. The State of Research About Physicians' Practice Patterns. Medical Care. 1985;23:461–493.

- Fisher R. Medicaid Managed Care: The Next Generation. Academic Medicine. 1994 May;69(5):317–322. doi: 10.1097/00001888-199405000-00001.

- Freed G. Childhood Immunization Programs: An Analysis of Policy Issues. Milbank Quarterly. 1993;71(1):65–96.

- Gold M, Felt S. Reconciling Practice and Theory: Challenges in Managing Medicaid Managed-Care Quality. Health Care Financing Review. 1995 Summer;16(4):85–105.

- Goldfield N. Measurement and Management of Quality in Managed Care Organizations: Alive and Improving. QRB—Quality Review Bulletin. 1991 Nov;17(11):343–348. doi: 10.1016/s0097-5990(16)30484-5.

- Goldman RL. The Reliability of Peer Assessments of Quality of Care. Journal of the American Medical Association. 1992 Feb 19;267(7):958–960.

- Group Health Association of America. GHAA's Consumer Satisfaction Survey and User's Manual. 2nd Edition. Washington, DC: 1991.

- Haas JS, Geary PD, Guadagnoli E, et al. The Impact of Socioeconomic Status on the Intensity of Ambulatory Treatment and Health Outcomes After Hospital Discharge for Adults With Asthma. Journal of General Internal Medicine. 1994 Mar;9:121–126. doi: 10.1007/BF02600024.

- Hahn DL, Beasley JW, The Wisconsin Research Network Asthma Prevalence Study Group. Diagnosed and Possible Undiagnosed Asthma: A Wisconsin Research Network Study. The Journal of Family Practice. 1994 Apr;38(4):373–379.

- Health Care Financing Administration. A Health Care Quality Improvement Program System for Medicaid Managed Care. Medicaid Bureau; Washington, DC: 1993.

- Horvath J, Kaye N. Medicaid Managed Care: A Guide for States. 2nd Edition. Portland, ME: National Academy for State Health Policy; 1995.

- Hurley RE, Freund DA, Paul JE. Managed Care in Medicaid: Lessons for Policy and Program Design. Ann Arbor: Health Administration Press; 1993.

- Hurley RE, Rossiter LF. Final Report of the Second Year Evaluation of the MEDALLION Program. Richmond, VA: The Williamson Institute, Virginia Commonwealth University; 1994. Prepared for the Virginia Department of Medical Assistance Services.

- Leatherman S, Chase D. Using Report Cards to Grade Health Plan Quality. The Journal of American Health Policy. 1994 Jan-Feb;4(1):32–40.

- Lomas J, Anderson G, Domnick-Pierre K, et al. Do Practice Guidelines Guide Practice? The Effect of a Consensus Statement on the Practice of Physicians. The New England Journal of Medicine. 1989 Nov 9;321(19):1306–1311. doi: 10.1056/NEJM198911093211906.

- Luke RD, Modrow RE. Anonymous Organization and Change in Health Care Quality Assurance. Rockville, MD: Aspen Systems Corporation; 1983.

- Lutz S. For Real Reform, Watch the States. Modern Healthcare. 1995;25:31–35.

- McCarthy BD, Ward RE, Young MJ. Dr. Deming and Primary Care Internal Medicine. Archives of Internal Medicine. 1994 Feb 28;154:391–384.

- Milakovich ME. Creating a Total Quality Health Care Environment. Health Care Management Review. 1991;16(2):9–20. doi: 10.1097/00004010-199101620-00005.

- Nash DB. Evaluating the Competence of Physicians in Practice: From Peer Review to Performance Assessment. Academic Medicine. 1993 Feb;68(Supplement 2):19–22. doi: 10.1097/00001888-199302000-00024.

- National Academy for State Health Policy. In QARI. Portland, ME: 1994.

- National Asthma Education Program Expert Panel. Guidelines for the Diagnosis and Management of Asthma. Bethesda, MD: U.S. Department of Health and Human Services, Public Health Service, National Institutes of Health; Aug 1991. NIH Publication Number 91-3042.

- National Committee for Quality Assurance. Health Plan Employer Data and Information Set and User's Manual, Version 2.0. Washington, DC: 1993.

- National Committee for Quality Assurance. Medicaid HEDIS: An Adaption of NCQA's Health Plan Employer Data and Information Set. Washington, DC: 1995. Circulation Draft.

- New England Medical Center. Child Health Questionnaire—Parent Form CHQ-PF28. Boston, MA: 1995.

- Research Triangle Institute. Evaluation of the Medicaid Competition Demonstrations: Integrative Final Report. Research Triangle Park, NC: 1989. Prepared for the Health Care Financing Administration under Contract Number 550-83-0250.

- Rutstein DD. Measuring the Quality of Medical Care: A Clinical Method. The New England Journal of Medicine. 1976;294(11):582–588. doi: 10.1056/NEJM197603112941104.

- Schear S. A Medicaid Miracle? National Journal. 1995 Feb 4;27(5):294–298.

- Sofaer S. Informing and Protecting Consumers Under Managed Competition. Health Affairs (Millwood). 1993;12:76–86. doi: 10.1377/hlthaff.12.suppl_1.76.

- Ware JE. Comparison of Health Outcomes at a Health Maintenance Organisation With Those of FFS Care. The Lancet. 1986 May 3;1(8488):1017–1022. doi: 10.1016/s0140-6736(86)91282-1.

- Ware JE Jr. SF-36 Health Survey: Manual and Interpretation Guide. Boston: The Health Institute, New England Medical Center; 1993.

- Ware JE Jr, Sherbourne CD. The MOS 36-Item Short-Form Health Survey (SF36): 1. Conceptual Framework and Item Selection. Medical Care. 1992;30:473–483.

- Winslow R. Big HMO Group Joins Drive to Lift Vaccination Rates. The Wall Street Journal. 1994 Feb 15.