Abstract

In this paper we report the set-up and results of the Multimodal Brain Tumor Image Segmentation Benchmark (BRATS) organized in conjunction with the MICCAI 2012 and 2013 conferences. Twenty state-of-the-art tumor segmentation algorithms were applied to a set of 65 multi-contrast MR scans of low- and high-grade glioma patients—manually annotated by up to four raters—and to 65 comparable scans generated using tumor image simulation software. Quantitative evaluations revealed considerable disagreement between the human raters in segmenting various tumor sub-regions (Dice scores in the range 74%–85%), illustrating the difficulty of this task. We found that different algorithms worked best for different sub-regions (reaching performance comparable to human inter-rater variability), but that no single algorithm ranked in the top for all sub-regions simultaneously. Fusing several good algorithms using a hierarchical majority vote yielded segmentations that consistently ranked above all individual algorithms, indicating remaining opportunities for further methodological improvements. The BRATS image data and manual annotations continue to be publicly available through an online evaluation system as an ongoing benchmarking resource.

Index Terms: MRI, Brain, Oncology/tumor, Image segmentation, Benchmark

I. Introduction

Gliomas are the most frequent primary brain tumors in adults, presumably originating from glial cells and infiltrating the surrounding tissues [1]. Despite considerable advances in glioma research, patient prognosis remains poor. The clinical population with the more aggressive form of the disease, classified as high-grade gliomas, has a median survival of two years or less and requires immediate treatment [2], [3]. The slower growing low-grade variants, such as low-grade astrocytomas or oligodendrogliomas, come with a life expectancy of several years, so aggressive treatment is often delayed as long as possible. For both groups, intensive neuroimaging protocols are used before and after treatment to evaluate the progression of the disease and the success of a chosen treatment strategy. In current clinical routine, as well as in clinical studies, the resulting images are evaluated either based on qualitative criteria only (indicating, for example, the presence of characteristic hyper-intense tissue appearance in contrast-enhanced T1-weighted MRI), or by relying on such rudimentary quantitative measures as the largest diameter visible from axial images of the lesion [4], [5].

By replacing the current basic assessments with highly accurate and reproducible measurements of the relevant tumor substructures, image processing routines that can automatically analyze brain tumor scans would be of enormous potential value for improved diagnosis, treatment planning, and follow-up of individual patients. However, developing automated brain tumor segmentation techniques is technically challenging, because lesion areas are only defined through intensity changes that are relative to surrounding normal tissue, and even manual segmentations by expert raters show significant variations when intensity gradients between adjacent structures are smooth or obscured by partial voluming or bias field artifacts. Furthermore, tumor structures vary considerably across patients in terms of size, extension, and localization, prohibiting the use of strong priors on shape and location that are important components in the segmentation of many other anatomical structures. Moreover, the so-called mass effect induced by the growing lesion may displace normal brain tissues, as do resection cavities that are present after treatment, thereby limiting the reliability of spatial prior knowledge for the healthy part of the brain. Finally, a large variety of imaging modalities can be used for mapping tumor-induced tissue changes, including T2 and FLAIR MRI (highlighting differences in tissue water relaxational properties), post-Gadolinium T1 MRI (showing pathological intratumoral take-up of contrast agents), diffusion and perfusion MRI (local water diffusion and blood flow), and MRSI (relative concentrations of selected metabolites), among others. Each of these modalities provides different types of biological information, and therefore poses somewhat different information processing tasks.

Because of its high clinical relevance and its challenging nature, the problem of computational brain tumor segmentation has attracted considerable attention during the past 20 years, resulting in a wealth of different algorithms for automated, semi-automated, and interactive segmentation of tumor structures (see [6] and [7] for good reviews). Virtually all of these methods, however, were validated on relatively small private datasets with varying metrics for performance quantification, making objective comparisons between methods highly challenging. Exacerbating this problem is the fact that different combinations of imaging modalities are often used in validation studies, and that there is no consistency in the tumor sub-compartments that are considered. As a consequence, it remains difficult to judge which image segmentation strategies may be worthwhile to pursue in clinical practice and research; what exactly the performance is of the best computer algorithms available today; and how well current automated algorithms perform in comparison with groups of human expert raters.

In order to gauge the current state-of-the-art in automated brain tumor segmentation and compare between different methods, we organized in 2012 and 2013 a Multimodal Brain Tumor Image Segmentation Benchmark (BRATS) challenge in conjunction with the international conference on Medical Image Computing and Computer Assisted Interventions (MICCAI). For this purpose, we prepared and made available a unique dataset of MR scans of low- and high-grade glioma patients with repeat manual tumor delineations by several human experts, as well as realistically generated synthetic brain tumor datasets for which the ground truth segmentation is known. Each of 20 different tumor segmentation algorithms was optimized by their respective developers on a subset of this particular dataset, and subsequently run on the remaining images to test performance against the (hidden) manual delineations by the expert raters. In this paper we report the set-up and the results of this BRATS benchmark effort. We also describe the BRATS reference dataset and online validation tools, which we make publicly available as an ongoing benchmarking resource for future community efforts.

The paper is organized as follows. We briefly review the current state-of-the-art in automated tumor segmentation, and survey benchmark efforts in other biomedical image interpretation tasks, in Section II. We then describe the BRATS set-up and data, the manual annotation of tumor structures, and the evaluation process in Section III. Finally, we report and discuss the results of our comparisons in Sections IV and V, respectively. Section VI concludes the paper.

II. Prior Work

Algorithms for Brain Tumor Segmentation

The number of clinical studies involving brain tumor quantification based on medical images has increased significantly over the past decades. Around a quarter of such studies rely on automated methods for tumor volumetry (Fig. 1). Most of the existing algorithms for brain tumor analysis focus on the segmentation of glial tumors, as recently reviewed in [6], [7]. Comparatively few methods deal with less frequent tumors such as meningioma [8]–[12] or specific glioma subtypes [13].

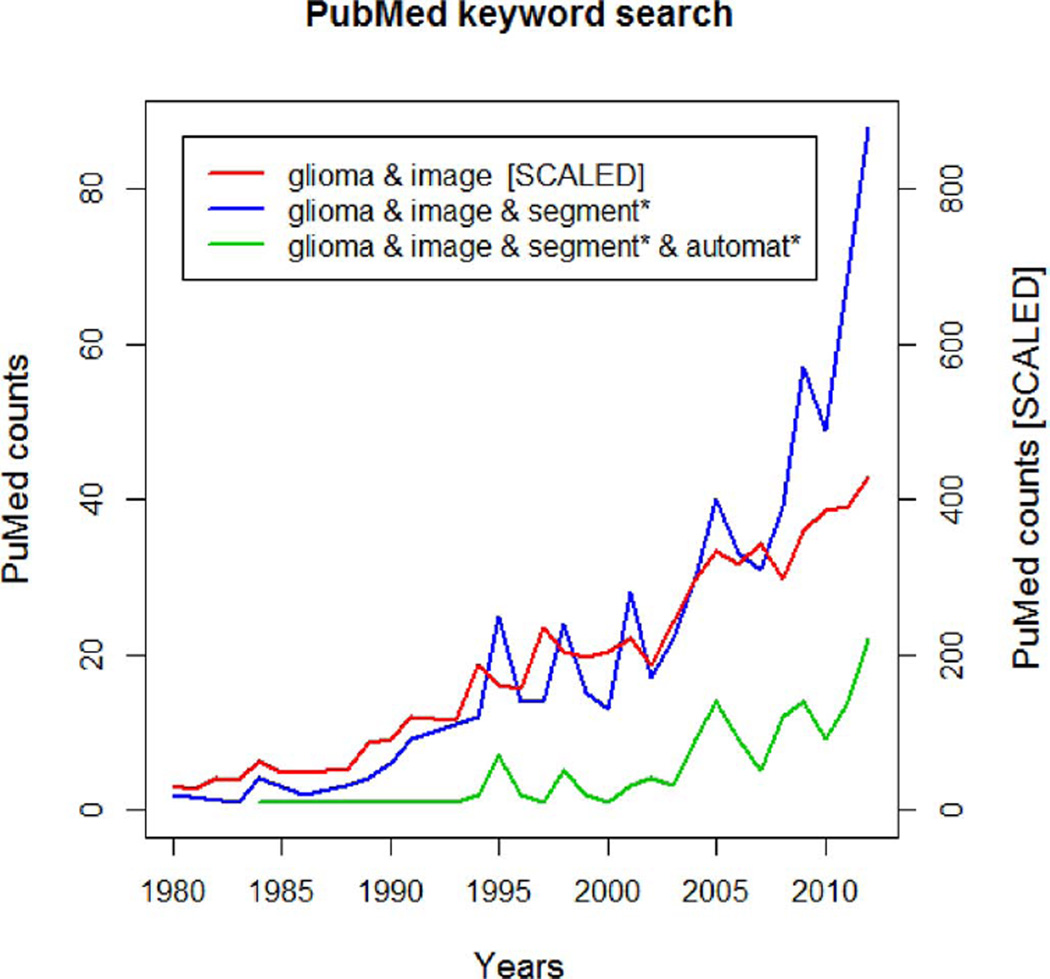

Fig. 1.

Results of PubMed searches for brain tumor (glioma) imaging (red), tumor quantification using image segmentation (blue), and automated tumor segmentation (green). While the tumor imaging literature has seen a nearly linear increase over the last 30 years, the number of publications involving tumor segmentation has grown more than linearly over the past 5–10 years. Around 25% of such publications refer to “automated” tumor segmentation.

Methodologically, many state-of-the-art algorithms for tumor segmentation are based on techniques originally developed for other structures or pathologies, most notably for automated white matter lesion segmentation that has reached considerable accuracy [14]. While many technologies have been tested for their applicability to brain tumor detection and segmentation—e.g., algorithms from image retrieval as an early example [9]—we can categorize most current tumor segmentation methods into one of two broad families. In the so-called generative probabilistic methods, explicit models of anatomy and appearance are combined to obtain automated segmentations, which offers the advantage that domain-specific prior knowledge can easily be incorporated. Discriminative approaches, on the other hand, directly learn the relationship between image intensities and segmentation labels without any domain knowledge, concentrating instead on specific (local) image features that appear relevant for the tumor segmentation task.

Generative models make use of detailed prior information about the appearance and spatial distribution of the different tissue types. They often exhibit good generalization to unseen images, and represent the state-of-the-art for many brain tissue segmentation tasks [15]–[21]. Encoding prior knowledge for a lesion, however, is difficult. Tumors may be modeled as outliers relative to the expected shape [22], [23] or image signal of healthy tissues [17], [24], which is similar to approaches for other brain lesions, such as multiple sclerosis [25], [26]. In [17], for instance, a criterion for detecting outliers is used to generate a tumor prior in a subsequent expectation-maximization segmentation which treats tumor as an additional tissue class. Alternatively, the spatial prior for the tumor can be derived from the appearance of tumor-specific “bio-markers” [27], [28], or from using tumor growth models to infer the most likely localization of tumor structures for a given set of patient images [29]. All these models rely on registration for accurately aligning images and spatial priors, which is often problematic in the presence of large lesions or resection cavities. In order to overcome this difficulty, both joint registration and tumor segmentation [18], [30] and joint registration and estimation of tumor displacement [31] have been studied. A limitation of generative models is the significant effort required for transforming an arbitrary semantic interpretation of the image, for example, the set of expected tumor substructures a radiologist would like to have mapped in the image, into appropriate probabilistic models.

Discriminative models directly learn from (manually) annotated training images the characteristic differences in the appearance of lesions and other tissues. In order to be robust against imaging artifacts and intensity and shape variations, they typically require substantial amounts of training data [32]–[38]. As a first step, these methods typically extract dense, voxel-wise features from anatomical maps [35], [39] calculating, for example, local intensity differences [40]–[42], or intensity distributions from the wider spatial context of the individual voxel [39], [43], [44]. As a second step, these features are then fed into classification algorithms such as support vector machines [45] or decision trees [46] that learn boundaries between classes in the high-dimensional feature space, and return the desired tumor classification maps when applied to new data. One drawback of this approach is that, because of the explicit dependency on intensity features, segmentation is restricted to images acquired with the exact same imaging protocol as the one used for the training data. Even then, careful intensity calibration remains a crucial part of discriminative segmentation methods in general [47]–[49], and tumor segmentation is no exception to this rule.

A possible direction that avoids the calibration issues of discriminative approaches, as well as the limitations of generative models, is the development of joint generative-discriminative methods. These techniques use a generative method in a pre-processing step to generate stable input for a subsequent discriminative model that can be trained to predict more complex class labels [50], [51].

Most generative and discriminative segmentation approaches exploit spatial regularity, often with extensions along the temporal dimension for longitudinal tasks [52]–[54]. Local regularity of tissue labels can be encoded via boundary modeling for both generative [17], [55] and discriminative models [32], [33], [35], [55], [56], potentially enforcing non-local shape constraints [57]. Markov random field (MRF) priors encourage similarity among neighboring labels in the generative context [25], [37], [38]. Similarly, conditional random fields (CRFs) help enforce—or prohibit—the adjacency of specific labels and, hence, impose constraints considering the wider spatial context of voxels [36], [43]. While all these segmentation models act locally, more or less at the voxel level, other approaches consider prior knowledge about the relative location of tumor structures in a more global fashion. They learn, for example, the neighborhood relationships between such structures as edema, Gadolinium-enhancing tumor structures, or necrotic parts of the tumor through hierarchical models of super-voxel clusters [42], [58], or by relating image patterns with phenomenological tumor growth models adapted to patient scans [31].

While each of the discussed algorithms was compared empirically against an expert segmentation by its authors, it is difficult to draw conclusions about the relative performance of different methods. This is because datasets and pre-processing steps differ between studies, and the image modalities considered, the annotated tumor structures, and the evaluation scores used all vary widely as well (Table I).

TABLE I.

Data Sets, MR Image Modalities, Evaluation Scores, and Even Tumor Types Used for Self-Reported Performances in the Brain Tumor Image Segmentation Literature Differ Widely. Shown Is a Selection of Algorithms Discussed Here and in [7]. Tumor Type Is Defined as G—Glioma (Unspecified), HG—High-Grade Glioma, LG—Low-Grade Glioma, M—Meningioma; “na” Indicates That No Information Is Reported. When Available the Number of Training and Testing Datasets Is Reported, Along With the Testing Mechanism: tt—Separate Training and Testing Datasets, cv—Cross-Validation

| Algorithm | MRI modalities | Approach | Perform. score | Tumor type | Training/testing (tt/cv) |
|---|---|---|---|---|---|
| Fletcher 2001 | T1 T2 PD | Fuzzy clustering w/ image retrieval | Match (53–91%) | na | 2/4 tt |
| Kaus 2001 | T1 | Template-moderated classification | Accuracy (95%) | LG, M | 10/10 tt |
| Ho 2002 | T1 T1c | Level-sets w/ region competition | Jaccard (85–93%) | G, M | na/5 tt |
| Prastawa 2004 | T2 | Generative model w/ outlier detection | Jaccard (59–89%) | G, M | na/3 tt |
| Corso 2008 | T1 T1c T2 FLAIR | Weighted aggregation | Jaccard (62–69%) | HG | 10/10 tt |
| Verma 2008 | T1 T1c T2 FLAIR DTI | SVM | Accuracy (34–93%) | HG | 14/14 cv |
| Wels 2008 | T1 T1c T2 | Discriminative model w/ CRF | Jaccard (78%) | G | 6/6 cv |
| Cobzas 2009 | T1c FLAIR | Level-set w/ CRF | Jaccard (50–75%) | G | 6/6 tt |
| Wang 2009 | T1 | Fluid vector flow | Tanimoto (60%) | na | 0/10 tt |
| Menze 2010 | T1 T1c T2 FLAIR | Generative model w/ lesion class | Dice (40–70%) | G | 25/25 cv |
| Bauer 2011 | T1 T1c T2 FLAIR | Hierarchical SVM w/ CRF | Dice (77–84%) | G | 10/10 cv |
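Part of the spread in Table I is purely notational. For binary masks the Jaccard and Tanimoto coefficients coincide, and Dice and Jaccard are related by a fixed monotone mapping. The following sketch illustrates this; the function names and the set-of-voxel-indices representation are illustrative choices, not taken from any of the cited works, and the empty-mask convention is an assumption.

```python
def dice(a, b):
    """Dice overlap of two binary masks given as collections of voxel indices."""
    a, b = set(a), set(b)
    if not a and not b:
        return 1.0  # assumed convention: two empty masks agree perfectly
    return 2 * len(a & b) / (len(a) + len(b))


def jaccard(a, b):
    """Jaccard (equivalently Tanimoto, for binary masks) overlap."""
    a, b = set(a), set(b)
    if not a and not b:
        return 1.0  # same assumed convention as in dice()
    return len(a & b) / len(a | b)


def dice_to_jaccard(d):
    """Convert a Dice score in [0, 1] to the corresponding Jaccard score."""
    return d / (2 - d)


def jaccard_to_dice(j):
    """Convert a Jaccard score in [0, 1] to the corresponding Dice score."""
    return 2 * j / (1 + j)


if __name__ == "__main__":
    rater = {1, 2, 3, 4}
    algorithm = {3, 4, 5, 6}
    print(dice(rater, algorithm), jaccard(rater, algorithm))
    # A Jaccard of 78% corresponds to a Dice of roughly 88%.
    print(round(jaccard_to_dice(0.78), 3))
```

Such a conversion only aligns the scale of the scores. It does not make the table entries comparable, since the underlying datasets, modalities, and annotated structures still differ.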

Image Processing Benchmarks

Benchmarks that compare how well different learning algorithms perform in specific tasks have gained a prominent role in the machine learning community. In recent years, the idea of benchmarking has also gained popularity in the field of medical image analysis. Such benchmarks, sometimes referred to as “challenges,” all share the common characteristic that different groups optimize their own methods on a training dataset provided by the organizers, and then apply them in a structured way to a common, independent test dataset. This situation is different from many published comparisons, where one group applies different techniques to a dataset of their choice, which hampers a fair assessment as this group may not be equally knowledgeable about each method and invest more effort in optimizing some algorithms than others (see [59]).

Once benchmarks have been established, their test dataset often becomes a new standard in the field on how to evaluate future progress in the specific image processing task being tested. The annotation and evaluation protocols also may remain the same even when new data are added (to overcome the risk of over-fitting this one particular dataset that may take place after a while), or when related benchmarks are initiated. A key component in benchmarking is an online tool for automatically evaluating segmentations submitted by individual groups [60], as this allows the labels of the test set never to be made public. This helps ensure that any reported results are not influenced by unintentional overtraining of the method being tested, and that they are therefore truly representative of the method's segmentation performance in practice.

Recent examples of community benchmarks dealing with medical image segmentation and annotation include algorithms for artery centerline extraction [61], [62], vessel segmentation and stenosis grading [63], liver segmentation [64], [65], detection of microaneurysms in digital color fundus photographs [66], and extraction of airways from CT scans [67]. Rather few community-wide efforts have focused on segmentation algorithms applied to images of the brain (a current example deals with brain extraction (“masking”) [68]), although many of the validation frameworks that are used to compare different segmenters and segmentation algorithms, such as STAPLE [69], [70], have been developed for applications in brain imaging, or even brain tumor segmentation [71].

III. Set-Up of the BRATS Benchmark

The BRATS benchmark was organized as two satellite challenge workshops in conjunction with the MICCAI 2012 and 2013 conferences. Here we describe the set-up of both challenges with the participating teams, the imaging data and the manual annotation process, as well as the validation procedures and online tools for comparing the different algorithms. The BRATS online tools continue to accept new submissions, allowing new groups to download the training and test data and submit their segmentations for automatic ranking with respect to all previous submissions.1 A common entry page to both benchmarks, as well as to the latest BRATS-related initiatives is www.braintumorsegmentation.org.2

A. The MICCAI 2012 and 2013 Benchmark Challenges

The first benchmark was organized on October 1, 2012 in Nice, France, in a workshop held as part of the MICCAI 2012 conference. During Spring 2012, participants were solicited through private e-mails as well as public e-mail lists and the MICCAI workshop announcements. Participants had to register with one of the online systems (cf. Section III-F) and could download annotated training data. They were asked to submit a four page summary of their algorithm, also reporting a cross-validated training error. Submissions were reviewed by the organizers and a final group of twelve participants were invited to contribute to the challenge. The training data the participants obtained in order to tune their algorithms consisted of multi-contrast MR scans of 10 low- and 20 high-grade glioma patients that had been manually annotated with two tumor labels (“edema” and “core,” cf. Section III-D) by a trained human expert. The training data also contained simulated images for 25 high-grade and 25 low-grade glioma subjects with the same two “ground truth” labels. In a subsequent “on-site challenge” at the MICCAI workshop, the teams were given a 12 h time period to evaluate previously unseen test images. The test images consisted of 11 high- and 4 low-grade real cases, as well as 10 high- and 5 low-grade simulated images. The resulting segmentations were then uploaded by each team to the online tools, which automatically computed performance scores for the two tumor structures. Of the twelve groups that participated in the benchmark, six submitted their results in time during the on-site challenge, and one group submitted their results shortly afterwards (Subbanna). During the plenary discussions it became apparent that using only two basic tumor classes was insufficient as the “core” label contained substructures with very different appearances in the different modalities. 
We therefore had all the training data re-annotated with four tumor labels, refining the initially rather broad “core” class by labels for necrotic, cystic and enhancing substructures. We asked all twelve workshop participants to update their algorithms to consider these new labels and to submit their segmentation results—on the same test data—to our evaluation platform in an “off-site” evaluation about six months after the event in Nice, and ten of them submitted updated results (Table II).

TABLE II.

Overview of the Algorithms Employed in 2012 and 2013. For a Full Description Please Refer to the Appendix and the Workshop Proceedings Available Online (see Section III-A). Three Non-Automatic Algorithms Required a Manual Initialization

| Year | Method | Description | Fully automated |
|---|---|---|---|
| 2012 | | Integrated hierarchical random forest classification and CRF regularization | Yes |
| 2012 | | Spatial decision forests with intrinsic hierarchy [42] | Yes |
| 2012 | | “Tumorcut” method [72] | No |
| 2012 | | Generative lesion segmentation model [73] | Yes |
| 2012 | | Generative-discriminative model building on top of “Menze (G)” | Yes |
| 2012 | | Generative model with latent atlases and level sets | No |
| 2012 | | Hybrid clustering and classification by logistic regression | Yes |
| 2012 | | Hierarchical MRF approach with Gabor features | Yes |
| 2012 | | Learned MRF on supervoxels clusters | Yes |
| 2012 | | Context-sensitive features with a decision tree ensemble | Yes |

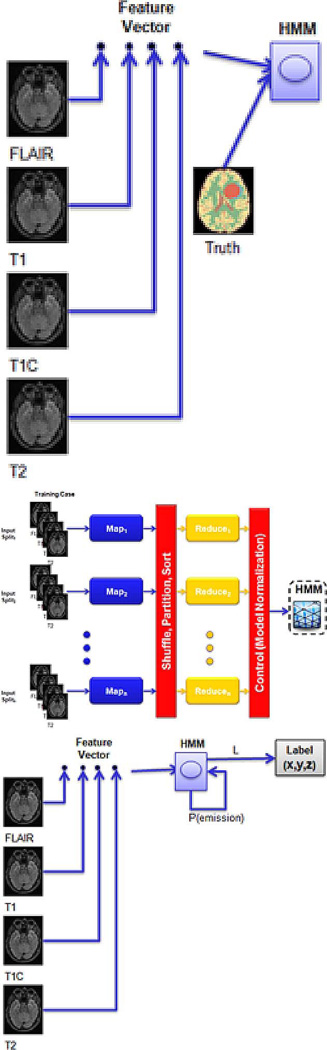

| Year | Method | Description | Fully automated |
|---|---|---|---|
| 2013 | | Bit-grouping artificial immune network | Yes |
| 2013 | | Patch-based tissue segmentation approach | Yes |
| 2013 | | Hidden Markov fields and variational EM in a generative model | Yes |
| 2013 | | Random forest classifier using neighborhood and local context features | Yes |
| 2013 | | Semi-automatic segmentation using active contours | No |
| 2013 | | Appearance- and context-sensitive features with a random forest and CRF | Yes |
| 2013 | | Texture features and random forests | Yes |
| 2013 | | “Map-Reduce Enabled” hidden Markov models | Yes |
| 2013 | | Random forest classifier using the open source ANTs/ANTsR packages | Yes |
| 2013 | | Like “Zhao (I)” with updated unary potential | Yes |

The second benchmark was organized on September 22, 2013 in Nagoya, Japan in conjunction with MICCAI 2013. Participants had to register with the online systems and were asked to describe their algorithm and report training scores during the summer, resulting in ten teams submitting short papers, all of which were invited to participate. The training data for the benchmark was identical to the real training data of the 2012 benchmark. No synthetic cases were evaluated in 2013, and therefore no synthetic training data was provided. The participating groups were also asked to submit results for the 2012 test dataset (with the updated labels) as well as for 10 new test datasets to the online system about four weeks before the event in Nagoya as part of an “off-site” leaderboard evaluation. The “on-site challenge” at the MICCAI 2013 workshop proceeded in a similar fashion to the 2012 edition: the participating teams were provided with 10 high-grade cases, which were previously unseen test images not included in the 2012 challenge, and were given a 12 h time period to upload their results for evaluation. Out of the ten groups participating in 2013 (Table II), seven groups submitted their results during the on-site challenge; the remaining three submitted their results shortly afterwards (Buendia, Guo, Taylor).

Altogether, we report three different test results from the two events: one summarizing the on-site 2012 evaluation with two tumor labels for a test set with 15 real cases (11 high-grade, four low-grade) and 15 synthetically generated images (10 high-grade, five low-grade); one summarizing the on-site 2013 evaluation with four tumor labels on a fresh set of 10 new real cases (all high-grade); and one from the off-site tests which ranks all 20 participating groups from both years, based on the 2012 real test data with the updated four labels. Our emphasis is on the last of the three tests.

B. Tumor Segmentation Algorithms Tested

Table II contains an overview of the methods used by the participating groups in both challenges. In 2012, four out of the twelve participants used generative models, one was a generative-discriminative approach, and five were discriminative; seven used some spatially regularizing model component. Two methods required manual initialization. The two automated segmentation methods that topped the list of competitors during the on-site challenge of the first benchmark used a discriminative probabilistic approach relying on a random forest classifier, boosting the popularity of this approach in the second year. As a result, in 2013 participants employed one generative model, one discriminative-generative model, and eight discriminative models out of which a total of four used random forests as the central learning algorithm; seven had a processing step that enforced spatial regularization. One method required manual initialization. A detailed description of each method is available in the workshop proceedings,3 as well as in the Appendix/Online Supporting Information.

C. Image Datasets

Clinical Image Data

The clinical image data consists of 65 multi-contrast MR scans from glioma patients, out of which 14 have been acquired from low-grade (histological diagnosis: astrocytomas or oligoastrocytomas) and 51 from high-grade (anaplastic astrocytomas and glioblastoma multiforme tumors) glioma patients. The images represent a mix of pre- and post-therapy brain scans, with two volumes showing resections. They were acquired at four different centers—Bern University, Debrecen University, Heidelberg University, and Massachusetts General Hospital—over the course of several years, using MR scanners from different vendors and with different field strengths (1.5T and 3T) and implementations of the imaging sequences (e.g., 2D or 3D). The image datasets used in the study all share the following four MRI contrasts (Fig. 2).

T1: T1-weighted, native image, sagittal or axial 2D acquisitions, with 1–6 mm slice thickness.

T1c: T1-weighted, contrast-enhanced (Gadolinium) image, with 3D acquisition and 1 mm isotropic voxel size for most patients.

T2: T2-weighted image, axial 2D acquisition, with 2–6 mm slice thickness.

FLAIR: T2-weighted FLAIR image, axial, coronal, or sagittal 2D acquisitions, 2–6 mm slice thickness.

To homogenize these data we co-registered each subject's image volumes rigidly to the T1c MRI, which had the highest spatial resolution in most cases, and resampled all images to 1 mm isotropic resolution in a standardized axial orientation with a linear interpolator. We used a rigid registration model with the mutual information similarity metric as it is implemented in ITK [74] (“VersorRigid3DTransform” with “MattesMutualInformation” similarity metric and three multi-resolution levels). No attempt was made to put the individual patients in a common reference space. All images were skull stripped [75] to guarantee anonymization of the patients.
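To give a sense of what resampling thick-slice acquisitions to a 1 mm grid with a linear interpolator involves, the sketch below resamples a single intensity profile along the slice direction. It is a deliberately simplified one-dimensional illustration in plain Python; the function name `resample_linear` and its parameters are hypothetical, and the actual pipeline operated on registered 3D volumes using ITK.

```python
def resample_linear(values, spacing_mm, new_spacing_mm=1.0):
    """Resample a 1D intensity profile to a new spacing by linear interpolation.

    values         -- intensities sampled every `spacing_mm` millimetres
    spacing_mm     -- original sample spacing (e.g. slice thickness)
    new_spacing_mm -- target spacing (1 mm in this sketch)
    """
    if len(values) < 2:
        return list(values)
    extent = (len(values) - 1) * spacing_mm
    # Number of output samples that fit inside the original extent.
    n_out = int(extent / new_spacing_mm + 1e-9) + 1
    out = []
    for i in range(n_out):
        pos = i * new_spacing_mm / spacing_mm   # position in input index units
        lo = min(int(pos), len(values) - 2)     # left neighbour, clamped at the end
        frac = pos - lo                         # weight of the right neighbour
        out.append((1 - frac) * values[lo] + frac * values[lo + 1])
    return out


if __name__ == "__main__":
    # Three samples acquired 3 mm apart, resampled to 1 mm spacing.
    print(resample_linear([0.0, 3.0, 6.0], spacing_mm=3.0))
```

Linear interpolation only fills in intermediate values; it cannot recover detail lost in acquisitions with 2–6 mm slice thickness, which is one reason the T1c volume with the highest native resolution served as the registration target.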

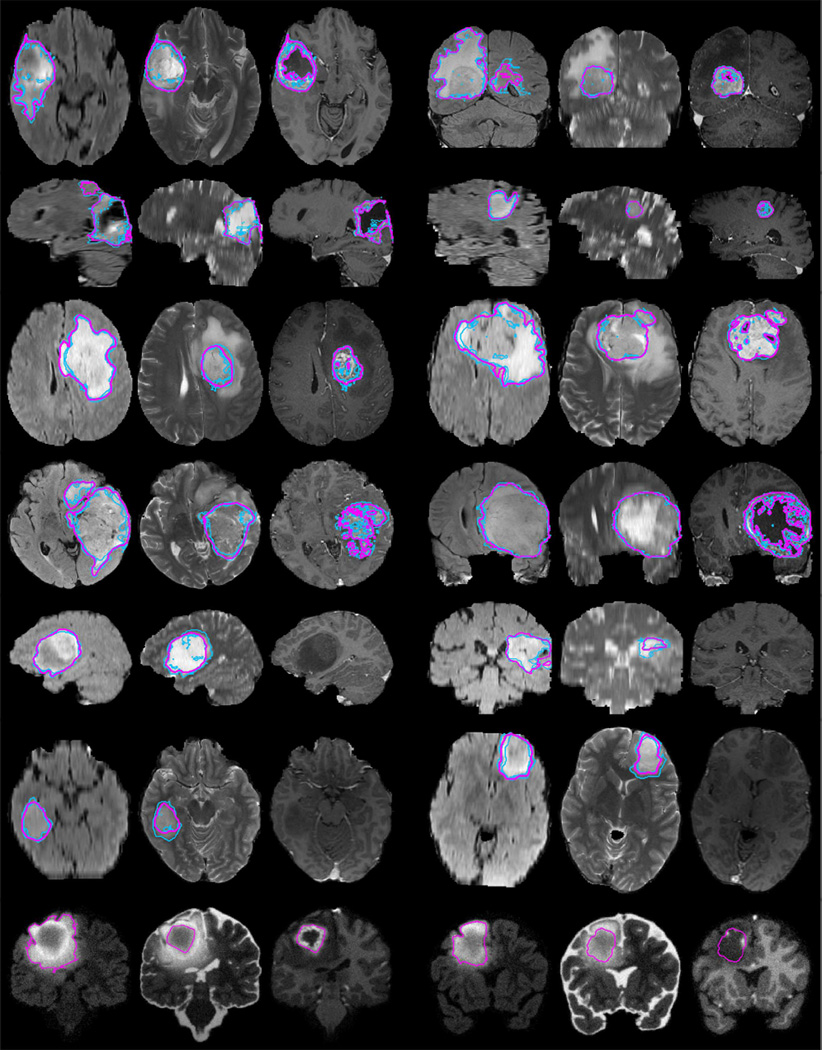

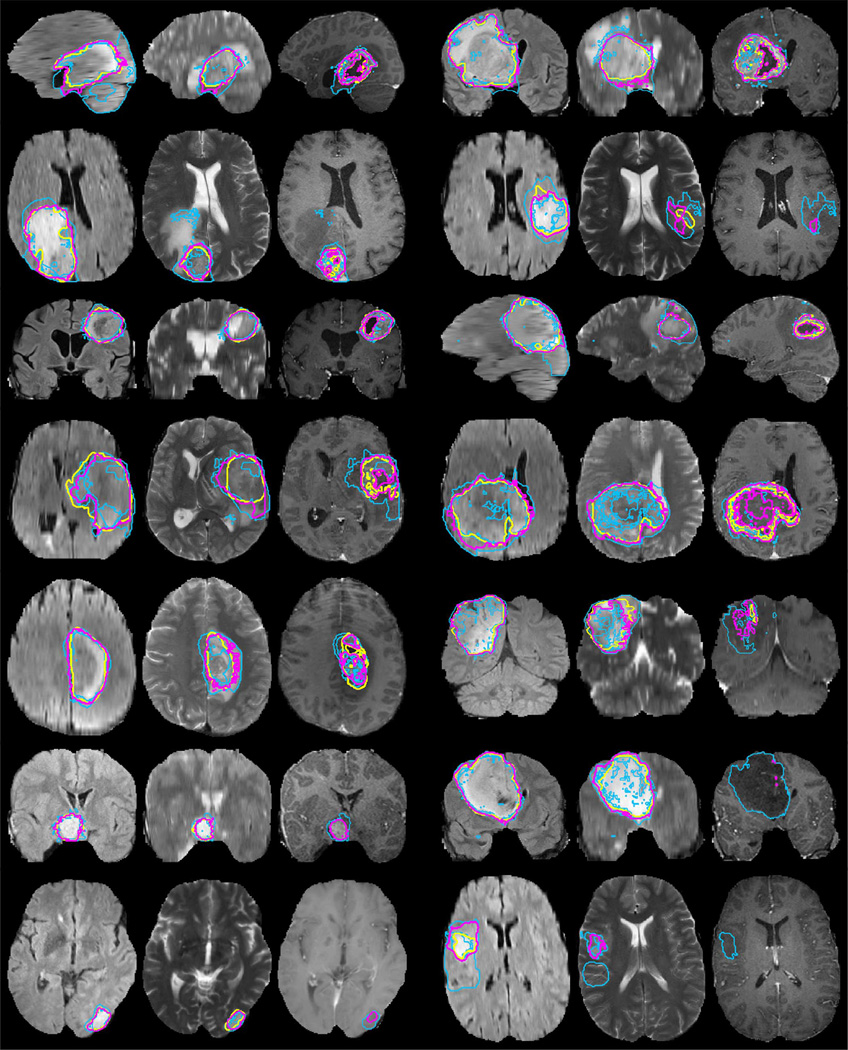

Fig. 2.

Examples from the BRATS training data, with tumor regions as inferred from the annotations of individual experts (blue lines) and consensus segmentation (magenta lines). Each row shows two cases of high-grade tumor (rows 1–4), low-grade tumor (rows 5–6), or synthetic cases (last row). Images vary between axial, sagittal, and transversal views, showing for each case: FLAIR with outlines of the whole tumor region (left); T2 with outlines of the core region (center); T1c with outlines of the active tumor region if present (right). Best viewed when zooming into the electronic version of the manuscript.

Synthetic Image Data

The synthetic data of the BRATS 2012 challenge consisted of simulated images for 35 high-grade and 30 low-grade gliomas that exhibit tissue contrast properties and segmentation challenges comparable to those of the clinical dataset (Fig. 2, last row). The same image modalities as for the real data were simulated, with similar 1 mm³ resolution. The images were generated using the TumorSim software,4 a cross-platform simulation tool that combines physical and statistical models to generate synthetic ground truth and synthesized MR images with tumor and edema [76]. It models infiltrating edema adjacent to tumors, local distortion of healthy tissue, and central contrast enhancement using the tumor growth model of Clatz et al. [77], combined with a routine for synthesizing texture similar to that of real MR images. We parameterized the algorithm according to the parameters proposed in [76], and applied it to anatomical maps of healthy subjects from the BrainWeb simulator [78], [79]. We synthesized image volumes and degraded them with different noise levels and intensity inhomogeneities, using Gaussian noise and polynomial bias fields with random coefficients.
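The degradation step can be illustrated with a small sketch. It is not the TumorSim implementation: the multiplicative form of the bias field, the second-order polynomial, the two-dimensional grid, and the names `polynomial_bias` and `degrade` are all assumptions made for illustration.

```python
import random


def polynomial_bias(x, y, coeffs):
    """Second-order polynomial in normalized coordinates x, y in [-1, 1]."""
    c0, cx, cy, cxx, cxy, cyy = coeffs
    return c0 + cx * x + cy * y + cxx * x * x + cxy * x * y + cyy * y * y


def degrade(image, noise_sigma, bias_strength, seed=0):
    """Apply a smooth multiplicative bias field and additive Gaussian noise.

    image         -- 2D list of intensities (rows of equal length)
    noise_sigma   -- standard deviation of the additive Gaussian noise
    bias_strength -- bound on the random polynomial coefficients
    seed          -- seed for reproducible random coefficients and noise
    """
    rng = random.Random(seed)
    rows, cols = len(image), len(image[0])
    # Constant term fixed to 1 so that the field varies around "no bias".
    coeffs = [1.0] + [rng.uniform(-bias_strength, bias_strength) for _ in range(5)]
    out = []
    for r in range(rows):
        y = 2 * r / (rows - 1) - 1 if rows > 1 else 0.0
        row = []
        for c in range(cols):
            x = 2 * c / (cols - 1) - 1 if cols > 1 else 0.0
            bias = polynomial_bias(x, y, coeffs)
            row.append(image[r][c] * bias + rng.gauss(0.0, noise_sigma))
        out.append(row)
    return out


if __name__ == "__main__":
    flat = [[100.0] * 3 for _ in range(3)]
    for row in degrade(flat, noise_sigma=2.0, bias_strength=0.2, seed=1):
        print([round(v, 1) for v in row])
```

Varying `noise_sigma` and `bias_strength` across cases mimics the idea of producing datasets with different noise levels and intensity inhomogeneities from the same underlying anatomy.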

D. Expert Annotation of Tumor Structures

While the simulated images came with “ground truth” information about the localization of the different tumor structures, the clinical images required manual annotations. We defined four types of intra-tumoral structures, namely “edema,” “non-enhancing (solid) core,” “necrotic (or fluid-filled) core,” and “enhancing core.” These tumor substructures meet specific radiological criteria and serve as identifiers for similar-looking regions to be recognized through algorithms processing image information rather than offering a biological interpretation of the annotated image patterns. For example, “non-enhancing core” labels may also comprise normal enhancing vessel structures that are close to the tumor core, and “edema” may result from cytotoxic or vasogenic processes of the tumor, or from previous therapeutic interventions.

Tumor Structures and Annotation Protocol

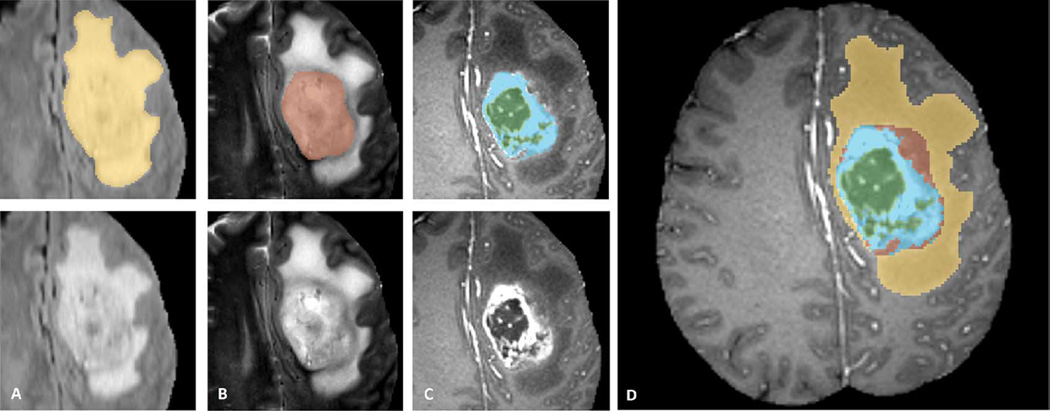

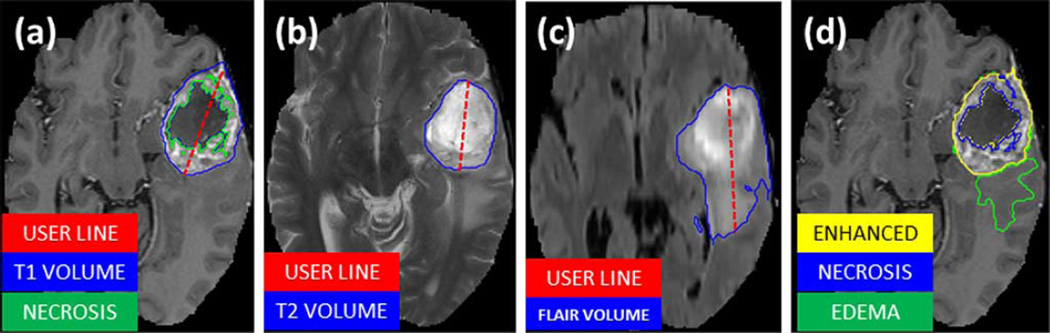

We used the following protocol for annotating the different visual structures, where present, for both low- and high-grade cases (illustrated in Fig. 3).

The “edema” was segmented primarily from T2 images. FLAIR was used to cross-check the extension of the edema and discriminate it against ventricles and other fluid-filled structures. The initial “edema” segmentation in T2 and FLAIR contained the core structures that were then relabeled in subsequent steps [Fig. 3(A)].

As an aid to the segmentation of the other three tumor substructures, the so-called gross tumor core (including both enhancing and non-enhancing structures) was first segmented by evaluating hyper-intensities in T1c (for high-grade cases) together with the inhomogeneous component of the hyper-intense lesion visible in T1 and the hypo-intense regions visible in T1 [Fig. 3(B)].

The “enhancing core” of the tumor was subsequently segmented by thresholding T1c intensities within the resulting gross tumor core, including the Gadolinium enhancing tumor rim and excluding the necrotic center and vessels. The appropriate intensity threshold was determined visually on a case-by-case basis [Fig. 3(C)].

The “necrotic (or fluid-filled) core” was defined as the tortuous, low intensity necrotic structures within the enhancing rim visible in T1c. The same label was also used for the very rare instances of hemorrhages in the BRATS data [Fig. 3(C)].

Finally, the “non-enhancing (solid) core” structures were defined as the remaining part of the gross tumor core, i.e., after subtraction of the “enhancing core” and the “necrotic (or fluid-filled) core” structures [Fig. 3(D)].
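The relabeling steps of the protocol can be sketched as set operations on voxel coordinates. This is only an illustration: the function name, the set-based representation, and the rule that the final “edema” is the initial edema segmentation minus the gross tumor core are assumptions made for the example, not a description of the tools the raters used.

```python
def derive_structure_labels(edema_init, gross_core, enhancing, necrotic):
    """Combine per-step voxel sets into four mutually exclusive structures.

    All arguments are sets of voxel identifiers (a hypothetical representation).
    """
    # Enhancing core: thresholded T1c voxels, restricted to the gross tumor core.
    enh = enhancing & gross_core
    # Necrotic core: low-intensity voxels inside the gross core, not enhancing.
    nec = (necrotic & gross_core) - enh
    # Non-enhancing core: what remains of the gross core after subtraction.
    nen = gross_core - enh - nec
    # Edema: initial T2/FLAIR segmentation with the core voxels relabeled (assumed).
    edm = edema_init - gross_core
    return {"edm": edm, "nen": nen, "nec": nec, "enh": enh}


labels = derive_structure_labels(
    edema_init=set(range(10)),
    gross_core={3, 4, 5, 6},
    enhancing={4, 5},
    necrotic={6},
)
print(labels)
```

In this toy case the four resulting sets are disjoint and together cover the initial edema segmentation.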

Fig. 3.

Manual annotation through expert raters. Shown are image patches with the tumor structures that are annotated in the different modalities (top left) and the final labels for the whole dataset (right). Image patches show from left to right: the whole tumor visible in FLAIR (A), the tumor core visible in T2 (B), the enhancing tumor structures visible in T1c (blue), surrounding the cystic/necrotic components of the core (green) (C). Segmentations are combined to generate the final labels of the tumor structures (D): edema (yellow), non-enhancing solid core (red), necrotic/cystic core (green), enhancing core (blue).

Following this protocol, the MRI scans were annotated by a trained team of radiologists and a total of seven radiographers in Bern, Debrecen and Boston. They outlined structures in every third axial slice, interpolated the segmentation using morphological operators (region growing), and visually inspected the results in order to perform further manual corrections, if necessary. All segmentations were performed using the 3D Slicer software⁵, taking about 60 min per subject. As mentioned previously, the tumor labels used initially in the BRATS 2012 challenge contained only two classes for both high- and low-grade glioma cases: “edema,” which was defined similarly to the edema class above, and “core,” representing the three core classes. The simulated data used in the 2012 challenge also had ground truth labels only for “edema” and “core.”

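Algorithm 1. The hierarchical majority vote.

The number of raters/algorithms that assigned a given voxel to one of the four tumor structures is indicated by $n_{\mathrm{edm}}$, $n_{\mathrm{nen}}$, $n_{\mathrm{nec}}$, $n_{\mathrm{enh}}$; $n_{\mathrm{all}}$ is the total number of raters/algorithms.

| Step | Comment |
| --- | --- |
| label ← “nrm” | ▷ normal tissue |
| if $(n_{\mathrm{edm}} + n_{\mathrm{nen}} + n_{\mathrm{nec}} + n_{\mathrm{enh}}) \ge n_{\mathrm{all}}/2$ then | |
| &emsp;label ← “edm” | ▷ edema |
| &emsp;if $(n_{\mathrm{nen}} + n_{\mathrm{nec}} + n_{\mathrm{enh}}) \ge n_{\mathrm{all}}/2$ then | |
| &emsp;&emsp;label ← “nen” | ▷ non-enhancing core |
| &emsp;&emsp;if $(n_{\mathrm{nec}} + n_{\mathrm{enh}}) \ge n_{\mathrm{all}}/2$ then | |
| &emsp;&emsp;&emsp;label ← “nec” | ▷ necrotic core |
| &emsp;&emsp;&emsp;if $n_{\mathrm{enh}} \ge n_{\mathrm{all}}/2$ then | |
| &emsp;&emsp;&emsp;&emsp;label ← “enh” | ▷ enhancing core |
| &emsp;&emsp;&emsp;end if | |
| &emsp;&emsp;end if | |
| &emsp;end if | |
| end if | |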

Consensus Labels

In order to deal with ambiguities in individual tumor structure definitions, especially in infiltrative tumors for which clear boundaries are hard to define, we had all subjects annotated by several experts, and subsequently fused the results to obtain a single consensus segmentation for each subject. The 30 training cases were labeled by four different raters, and the test set from 2012 was annotated by three. The additional testing cases from 2013 were annotated by one rater. For the data sets with multiple annotations we fused the resulting label maps by assuming increasing “severity” of the disease from edema to non-enhancing (solid) core to necrotic (or fluid-filled) core to enhancing core, using a hierarchical majority voting scheme that assigns a voxel to the highest class on which at least half of the raters agree (Algorithm 1). To illustrate this rule: a voxel that has been labeled as edema, edema, non-enhancing core, and necrotic core by the four annotators would be assigned to the non-enhancing core structure, as this is the most serious label that 50% of the experts agree on.
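The voting rule of Algorithm 1 can be written as a short per-voxel function. The sketch below is one possible reading, not the code used for the benchmark: the function name and the string labels are chosen for illustration, and the nested conditions are expressed as a loop that stops at the first level where fewer than half of the votes remain.

```python
from collections import Counter

# Tumor structures ordered by increasing "severity", as assumed in the text.
HIERARCHY = ["edm", "nen", "nec", "enh"]


def hierarchical_majority_vote(votes):
    """Fuse the labels given to one voxel by several raters or algorithms."""
    counts = Counter(votes)
    n_all = len(votes)
    label = "nrm"  # normal tissue unless enough votes say otherwise
    for level, name in enumerate(HIERARCHY):
        # Votes for this structure or any more severe one.
        n_at_least = sum(counts[c] for c in HIERARCHY[level:])
        if 2 * n_at_least >= n_all:
            label = name
        else:
            break  # the nested conditions of Algorithm 1 stop here
    return label


# Worked example from the text: edema, edema, non-enhancing core, necrotic core.
print(hierarchical_majority_vote(["edm", "edm", "nen", "nec"]))
```

For the four annotations in the worked example, all four votes count toward “edema or worse,” two toward “non-enhancing core or worse,” and only one toward “necrotic core or worse,” so the vote stops at non-enhancing core.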

We chose this hierarchical majority vote to include prior knowledge about the structure and the ranking of the labels. A direct application of other multi-class fusion schemes that do not consider relations between the class labels, such as the STAPLE algorithm [69], led to implausible fusion results where, for example, edema and normal voxels formed regions that were surrounded by “core” structures.

E. Evaluation Metrics and Ranking

Tumor Regions Used for Validation

The tumor structures represent the visual information of the images, and we provided the participants with the corresponding multi-class labels to train their algorithms. For evaluating the performance of the segmentation algorithms, however, we grouped the different structures into three mutually inclusive tumor regions that better represent the clinical application tasks, for example, in tumor volumetry. We obtain

the “whole” tumor region (including all four tumor structures),

the tumor “core” region (including all tumor structures except “edema”),

and the “active” tumor region (only containing the “enhancing core” structures that are unique to high-grade cases).

Examples of all three regions are shown in Fig. 2. By evaluating multiple binary segmentation tasks, we also avoid the problem of specifying misclassification costs for trading false assignments in between, for example, edema and necrotic core structures or enhancing core and normal tissue, which cannot easily be solved in a global manner.
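The grouping of the four structure labels into the three nested evaluation regions amounts to a simple lookup. The following sketch assumes a flat list of per-voxel string labels; the label abbreviations and the function name are illustrative choices.

```python
# Which structure labels belong to each evaluation region (from the list above).
REGIONS = {
    "whole": {"edm", "nen", "nec", "enh"},
    "core": {"nen", "nec", "enh"},
    "active": {"enh"},
}


def region_mask(labels, region):
    """Turn a multi-class label list into a binary mask for one region."""
    members = REGIONS[region]
    return [1 if lab in members else 0 for lab in labels]


voxels = ["nrm", "edm", "nen", "nec", "enh"]
for name in REGIONS:
    print(name, region_mask(voxels, name))
```

Each region yields its own binary segmentation task, so no misclassification costs between structures need to be specified.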

Performance Scores

For each of the three tumor regions we obtained a binary map with algorithmic predictions P ∈ {0, 1} and the experts' consensus truth T ∈ {0, 1}, and we calculated the well-known Dice score

$$\mathrm{Dice}(P, T) = \frac{|P_1 \wedge T_1|}{\left(|P_1| + |T_1|\right)/2},$$

where ∧ is the logical AND operator, |·| is the size of the set (i.e., the number of voxels belonging to it), and P1 and T1 represent the set of voxels where P = 1 and T = 1, respectively (Fig. 4). The Dice score normalizes the number of true positives to the average size of the two segmented areas. It is identical to the F1 score (the harmonic mean of precision and recall) and can be transformed monotonically to the Jaccard score.
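As a small illustration of the Dice score and its monotonic relation to the Jaccard score, J = D / (2 − D), the sketch below operates on flat lists of 0/1 values. The function names and the convention of returning 1.0 when both masks are empty are assumptions for the example.

```python
def dice_score(pred, truth):
    """Dice overlap of two binary masks given as equal-length 0/1 sequences."""
    overlap = sum(1 for p, t in zip(pred, truth) if p == 1 and t == 1)
    size_sum = sum(pred) + sum(truth)
    if size_sum == 0:
        return 1.0  # assumed convention: two empty masks agree perfectly
    return 2.0 * overlap / size_sum


def dice_to_jaccard(dice):
    """Monotonic transform from Dice to Jaccard: J = D / (2 - D)."""
    return dice / (2.0 - dice)


pred = [1, 1, 1, 0, 0, 0]
truth = [0, 1, 1, 1, 0, 0]
d = dice_score(pred, truth)
print(d, dice_to_jaccard(d))
```

Here two voxels overlap and each mask has three voxels, so the Dice score is 2/3 and the Jaccard score (intersection over union, 2/4) is 1/2.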

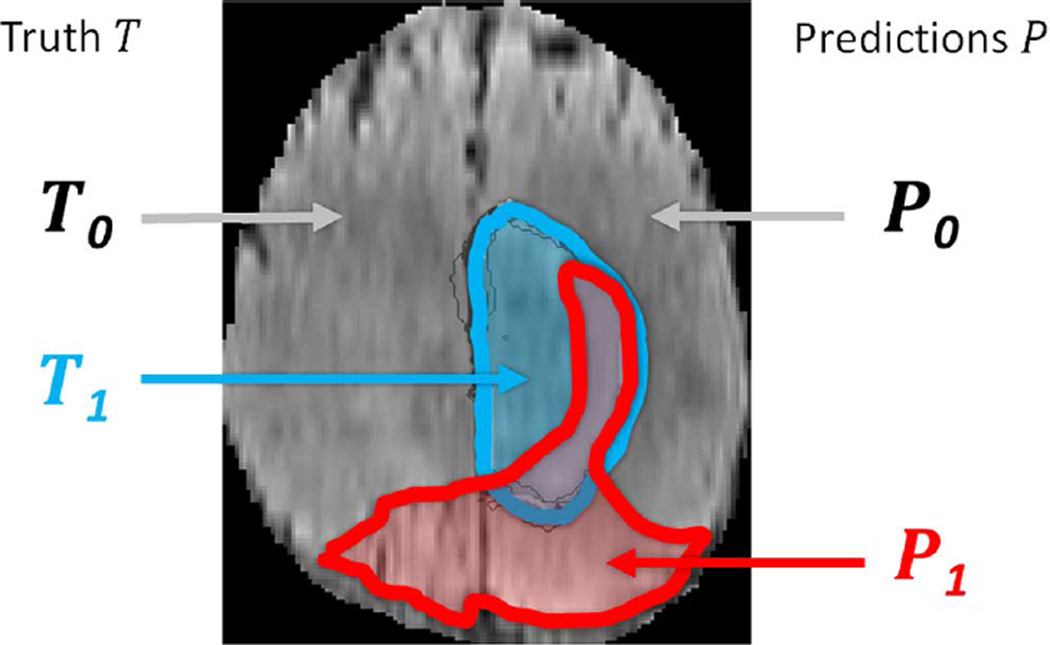

Fig. 4.

Regions used for calculating Dice score, sensitivity, specificity, and robust Hausdorff score. Region T1 is the true lesion area (outlined in blue), T0 is the remaining normal area. P1 is the area that is predicted to be lesion by, for example, an algorithm (outlined in red), and P0 is predicted to be normal. P1 has some overlap with T1 in the right lateral part of the lesion, corresponding to the area referred to as P1 ∧ T1 in the definition of the Dice score (Eq. III.E).

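We also calculated the so-called sensitivity (true positive rate) and specificity (true negative rate)

$$\mathrm{Sens}(P, T) = \frac{|P_1 \wedge T_1|}{|T_1|} \quad \text{and} \quad \mathrm{Spec}(P, T) = \frac{|P_0 \wedge T_0|}{|T_0|},$$

where P0 and T0 represent voxels where P = 0 and T = 0, respectively.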
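Sensitivity and specificity, understood as the fraction of true lesion voxels that are predicted as lesion and the fraction of true normal voxels that are predicted as normal, can be computed in the same voxel-wise fashion. The sketch below assumes flat 0/1 lists and raises an error when either class is absent from the truth, which is a choice made for the example.

```python
def sensitivity_specificity(pred, truth):
    """Return (sensitivity, specificity) for two equal-length 0/1 sequences."""
    tp = sum(1 for p, t in zip(pred, truth) if p == 1 and t == 1)
    tn = sum(1 for p, t in zip(pred, truth) if p == 0 and t == 0)
    n_t1 = sum(1 for t in truth if t == 1)  # |T1|, true lesion voxels
    n_t0 = len(truth) - n_t1                # |T0|, true normal voxels
    if n_t1 == 0 or n_t0 == 0:
        raise ValueError("truth must contain both lesion and normal voxels")
    return tp / n_t1, tn / n_t0


sens, spec = sensitivity_specificity([1, 1, 1, 0, 0, 0], [0, 1, 1, 1, 0, 0])
print(sens, spec)
```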

Dice score, sensitivity, and specificity are measures of voxel-wise overlap of the segmented regions. A different class of scores evaluates the distance between segmentation boundaries, i.e., the surface distance. A prominent example is the Hausdorff distance calculating for all points p on the surface ∂P1 of a given volume P1 the shortest least-squares distance d(p, t) to points t on the surface ∂T1 of the other given volume T1, and vice versa, finally returning the maximum value over all d

$$\mathrm{Haus}(P, T) = \max\left\{ \sup_{p \in \partial P_1} \inf_{t \in \partial T_1} d(p, t),\; \sup_{t \in \partial T_1} \inf_{p \in \partial P_1} d(t, p) \right\}.$$

Returning the maximum over all surface distances, however, makes the Hausdorff measure very susceptible to small outlying subregions in either P1 or T1. In our evaluation of the “active tumor” region, for example, both P1 and T1 may consist of multiple small areas or nonconvex structures with high surface-to-area ratio. In the evaluation of the “whole tumor,” predictions with a few false positive regions, which do not substantially affect the overall quality of the segmentation as they could be removed with appropriate postprocessing, might also have a drastic impact on the overall Hausdorff score. For this reason we used a robust version of the Hausdorff measure, reporting not the maximal surface distance between P1 and T1, but its 95% quantile.
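The effect of replacing the maximum by a 95% quantile can be seen in a toy example. The sketch below is one possible reading of the robust measure, under assumptions not fixed by the text: surfaces are given as lists of coordinate tuples, the quantile is taken by nearest rank separately for each direction, and the larger of the two values is returned.

```python
import math


def directed_distances(src, dst):
    """For each point in src, the Euclidean distance to its nearest point in dst."""
    return [min(math.dist(p, t) for t in dst) for p in src]


def quantile_nearest_rank(values, q):
    """Nearest-rank quantile; q = 1.0 returns the maximum."""
    ordered = sorted(values)
    rank = max(1, math.ceil(q * len(ordered)))
    return ordered[rank - 1]


def robust_hausdorff(surface_p, surface_t, q=0.95):
    """Symmetric surface distance using the q-quantile instead of the maximum."""
    d_pt = directed_distances(surface_p, surface_t)
    d_tp = directed_distances(surface_t, surface_p)
    return max(quantile_nearest_rank(d_pt, q), quantile_nearest_rank(d_tp, q))


# Identical contours, except for one isolated false positive point 50 units away.
truth_surface = [(i, 0) for i in range(20)]
pred_surface = truth_surface + [(0, 50)]
print(robust_hausdorff(pred_surface, truth_surface, q=1.0))   # classical maximum
print(robust_hausdorff(pred_surface, truth_surface, q=0.95))  # robust variant
```

With the maximum, the single outlying point determines the score; with the 95% quantile it is ignored, since it accounts for fewer than 5% of the surface points.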

Significance Tests

In order to compare the performance of different methods across a set of images, we performed two types of significance tests on the distribution of their Dice scores. For the first test we identified the algorithm that performed best in terms of average Dice score for a given task, i.e., for the whole tumor region, tumor core region, or active tumor region. We then compared the distribution of the Dice scores of this “best” algorithm with the corresponding distributions of all other algorithms. In particular, we used a nonparametric Cox-Wilcoxon test, testing for significant differences at a 5% significance level, and recorded which of the alternative methods could not be distinguished from the “best” method this way.

In the same way we also compared the distribution of the inter-rater Dice scores (obtained by pooling the Dice scores across each pair of human raters and across subjects, with each subject contributing six scores if there are four raters, and three scores if there are three raters) to the distribution of the Dice scores calculated for each algorithm in a comparison with the consensus segmentation. We then recorded whenever the distribution of an algorithm could not be distinguished from the inter-rater distribution this way. We note that our inter-rater score somewhat overestimates variability, as it is calculated from two manual annotations that may both be very eccentric. In the same way, a comparison between a rater and the consensus label may somewhat underestimate variability, as the same manual annotations had contributed to the consensus label it is now compared against.
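The pooling of pairwise inter-rater scores can be illustrated as follows: four raters give six unordered pairs per subject and three raters give three. The masks and helper names in this sketch are hypothetical.

```python
from itertools import combinations


def dice(a, b):
    """Dice overlap of two equal-length 0/1 sequences (1.0 if both are empty)."""
    overlap = sum(1 for x, y in zip(a, b) if x == 1 and y == 1)
    total = sum(a) + sum(b)
    return 1.0 if total == 0 else 2.0 * overlap / total


def pooled_inter_rater_dice(subjects):
    """Pool Dice scores over all rater pairs of every subject.

    `subjects` is a list with one entry per subject; each entry is a list
    of binary masks, one per rater.
    """
    scores = []
    for rater_masks in subjects:
        for mask_a, mask_b in combinations(rater_masks, 2):
            scores.append(dice(mask_a, mask_b))
    return scores


# Toy data: one subject with four raters, one subject with three raters.
four_raters = [[1, 1, 0, 0], [1, 0, 0, 0], [1, 1, 1, 0], [1, 1, 0, 0]]
three_raters = [[0, 1, 1], [0, 1, 0], [1, 1, 1]]
pooled = pooled_inter_rater_dice([four_raters, three_raters])
print(len(pooled))
```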

F. Online Evaluation Platforms

A central element of the BRATS benchmark is its online evaluation tool. We used two different platforms: the Virtual Skeleton Database (VSD), hosted at the University of Bern, and the Multimedia Digital Archiving System (MIDAS), hosted at Kitware [80]. On both systems participants can download annotated training and “blinded” test data, and upload their segmentations for the test cases. Each system automatically evaluates the performance of the uploaded label maps, and makes detailed—case by case—results available to the participant. Average scores for the different subgroups are also reported online, as well as a ranked comparison with previous results submitted for the same test sets.

The VSD⁶ provides an online repository system tailored to the needs of the medical research community. In addition to storing and exchanging medical image datasets, the VSD provides generic tools to process the most common image format types, includes a statistical shape modeling framework and an ontology-based searching capability. The hosted data is accessible to the community and collaborative research efforts. In addition, the VSD can be used to evaluate the submissions of competitors during and after a segmentation challenge. The BRATS data is publicly available at the VSD, allowing any team around the world to develop and test novel brain tumor segmentation algorithms. Ground truth segmentation files for the BRATS test data are hosted on the VSD but their download is protected through appropriate file permissions. The users upload their segmentation results through a web-interface, review the uploaded segmentation and then choose to start an automatic evaluation process. The VSD automatically identifies the ground truth corresponding to the uploaded segmentations. The evaluation of the different label overlap measures used to evaluate the quality of the segmentation (such as Dice scores) runs in the background and takes less than one minute per segmentation. Individual and overall results of the evaluation are automatically published on the VSD webpage and can be downloaded as a CSV file for further statistical analysis. Currently, the VSD has evaluated more than 10 000 segmentations and recorded over 100 registered BRATS users. We used it to host both the training and test data, and to perform the evaluations of the on-site challenges. Up-to-date ranking is available at the VSD for researchers to continuously monitor new developments and streamline improvements.

MIDAS⁷ is an open source toolkit that is designed to manage grand challenges. The toolkit contains a collection of server, client, and stand-alone tools for data archiving, analysis, and access. This system was used in parallel with VSD for hosting the BRATS training and test data in 2012, as well as managing submissions from participants and providing final scores using a collection of metrics. It was no longer used for the 2013 BRATS challenge.

The software that generates the comparison metrics between ground truth and user submissions in both VSD and MIDAS is available as the open source COVALIC (Comparison and Validation of Image Computing) toolkit.⁸

IV. Results

In a first step we evaluate the variability between the segmentations of our experts in order to quantify the difficulty of the different segmentation tasks. Results of this evaluation also serve as a baseline we can use to compare our algorithms against in a second step. As combining several segmentations may potentially lead to consensus labels that are of higher quality than the individual segmentations, we perform an experiment that applies the hierarchical fusion algorithm to the automatic segmentations as a final step.

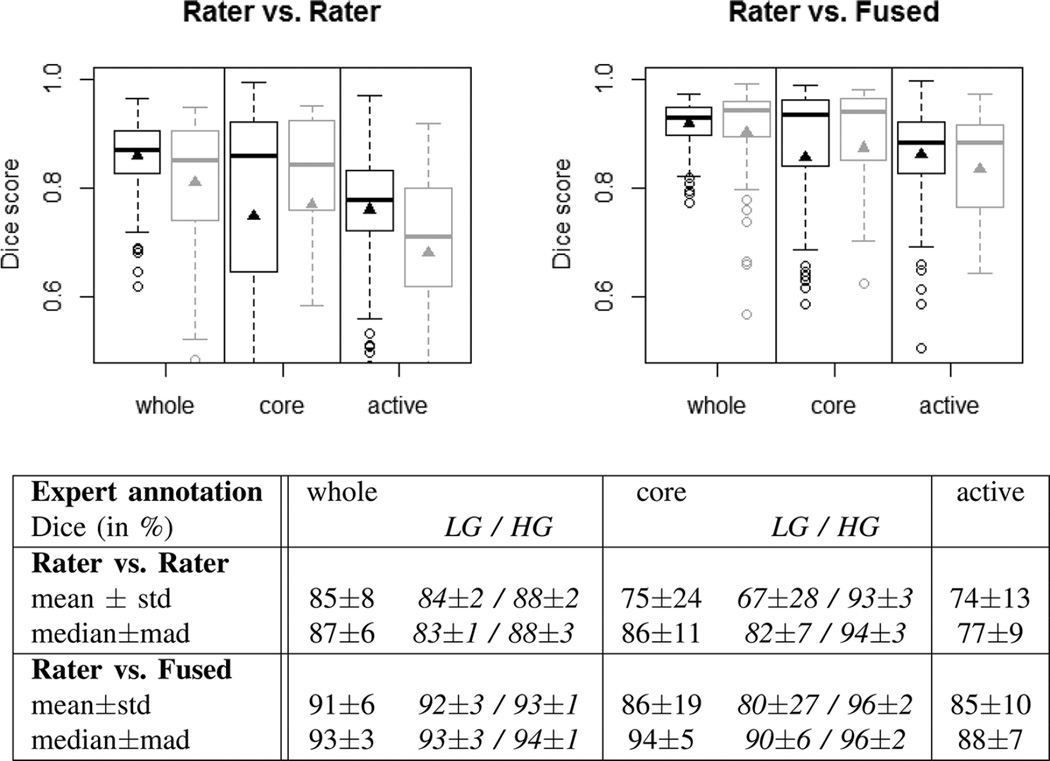

A. Inter-Rater Variability of Manual Segmentations

Fig. 5 analyzes the inter-rater variability in the four-label manual segmentations of the training scans (30 cases, four different raters), as well as of the final off-site test scans (15 cases, three raters). The results for the training and test datasets are overall very similar, although the inter-rater variability is a bit higher (lower Dice scores) in the test set, indicating that images in our training dataset were slightly easier to segment (Fig. 5, plots at the top). The scores obtained by comparing individual raters against the consensus segmentation provide an estimate of an upper limit for the performance of any algorithmic segmentation, indicating that segmenting the whole tumor region for both low- and high-grade and the tumor core region for high-grade is comparatively easy, while identifying the “core” in low-grade glioma and delineating the enhancing structures for high-grade cases is considerably more difficult (Fig. 5, table at the bottom). The comparison between an individual rater and the consensus segmentation, however, may be somewhat overly optimistic with respect to the upper limit of accuracy that can be obtained on the given datasets, as the consensus label is generated using the rater's segmentation it is compared against. So we use the inter-rater variation as an unbiased proxy that we compare with the algorithmic segmentations in the remainder. This sets the bar that has to be passed by an algorithm to Dice scores in the high 80% for the whole tumor region (median 87%), to scores in the high 80% for “core” region (median 94% for high-grade, median 82% for low-grade), and to average scores in the high 70% for “active” tumor region (median 77%) (Fig. 5, table at the bottom).

Fig. 5.

Dice scores of inter-rater variation (top left), and variation around the “fused” consensus label (top right). Shown are results for the “whole” tumor region (including all four tumor structures), the tumor “core” region (including enhancing, non-enhancing core, and necrotic structures), and the “active” tumor region (that features the T1c enhancing structures). Black boxplots show training data (30 cases); gray boxes show results for the test data (15 cases). Scores for “active” tumor region are calculated for high-grade cases only (15/11 cases). Boxes report quartiles including the median; whiskers and dots indicate outliers (some of which are below 0.5 Dice); and triangles report mean values. Table at the bottom shows quantitative values for the training and test datasets, including scores for low- and high-grade cases (LG/HG) separately; here “std” denotes standard deviation, and “mad” denotes median absolute deviation.

We note that on all datasets and in all three segmentation tasks the dispersion of the Dice score distributions is quite high, with standard deviations of 10% and more in particular for the most difficult tasks (tumor core in low-grade patients, active core in high-grade patients), underlining the relevance of comparing the distributions rather than comparing summary statistics such as the mean or the median and, for example, ranking measures thereof.

B. Performance of Individual Algorithms

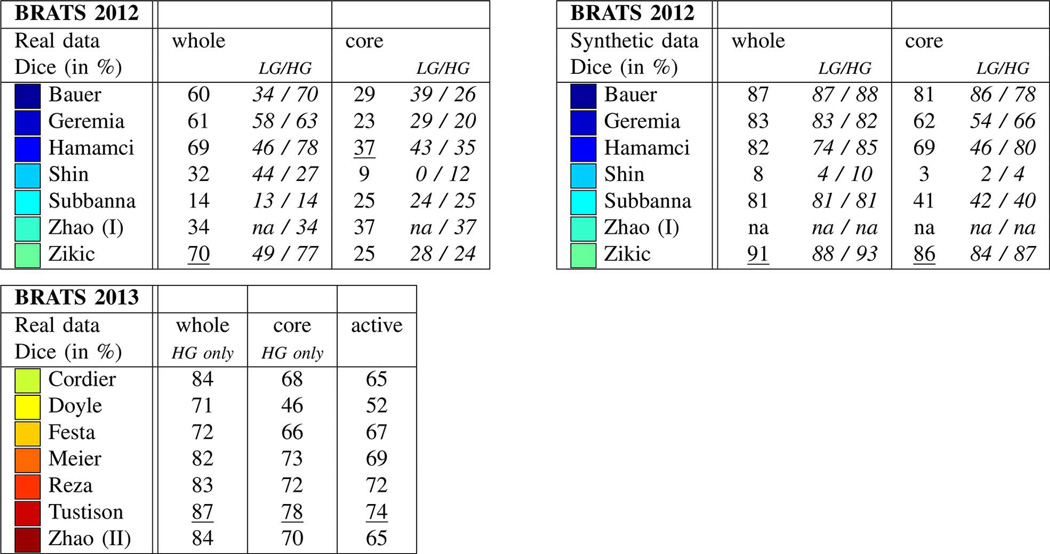

On-Site Evaluation

Results from the on-site evaluations are reported in Fig. 6. Synthetic images were only evaluated in the 2012 challenge, and the winning algorithms on these images were developed by Bauer, Zikic, and Hamamci (Fig. 6, top right). The same methods also ranked top on the real data in the same year (Fig. 6, top left), performing particularly well for whole tumor and core segmentation. Here, Hamamci required some user interaction for an optimal initialization, while the methods by Bauer and Zikic were fully automatic. In the 2013 on-site challenge, the winning algorithms were those by Tustison, Meier, and Reza, with Tustison performing best in all three segmentation tasks (Fig. 6, bottom left).

Fig. 6.

On-site test results of the 2012 challenge (top left and right) and the 2013 challenge (bottom left), reporting average Dice scores. Test data for 2012 included both real and synthetic images, with a mix of low- and high-grade cases (LG/HG): 11/4 HG/LG cases for the real images and 10/5 HG/LG cases for the synthetic scans. All datasets from the 2012 on-site challenge featured “whole” and “core” region labels only. On-site test set for 2013 consisted of 10 real HG cases with four-class annotations, of which “whole,” “core,” “active” regions were evaluated (see text). Best results for each task are underlined. Top performing algorithms of the on-site challenge were Hamamci, Zikic, and Bauer in 2012; and Tustison, Meier, and Reza in 2013.

Overall, the performance scores from the on-site test in 2013 were higher than those in the previous off-site leader-board evaluation (compare Fig. 7, top with Fig. 6, bottom left). As the off-site test data contained the test cases from the previous year, one may argue that the images chosen for the 2013 on-site evaluation were somewhat easier to segment than the on-site test images in the previous year, and one should be cautious about a direct comparison of on-site results from the two challenges.

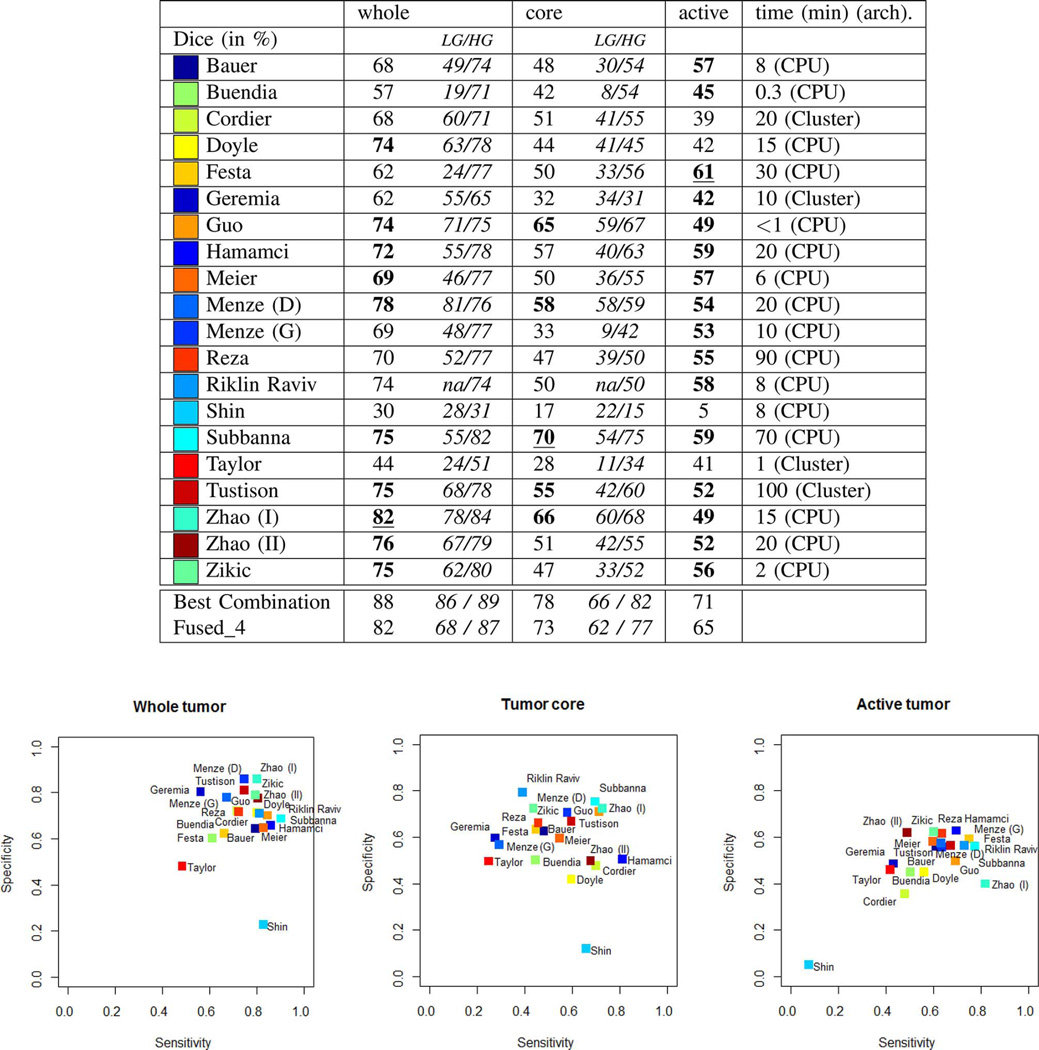

Fig. 7.

Average Dice scores from the “off-site” test, for all algorithms submitted during BRATS 2012 and 2013. The table at the top reports average Dice scores for “whole” lesion, tumor “core” region, and “active” core region, both for the low-grade (LG) and high-grade (HG) subsets combined and considered separately. Algorithms with the best average Dice score for the given task are underlined; those indicated in bold have a Dice score distribution on the test cases that is similar to the best (see also Fig. 8). “Best Combination” is the upper limit of the individual algorithmic segmentations (see text), “Fused_4” reports exemplary results when pooling results from Subbanna, Zhao (I), Menze (D), and Hamamci (see text). Reported average computation times per case are in minutes; an indication regarding CPU or Cluster based implementation is also provided. Plots at the bottom show the sensitivities and specificities of the corresponding algorithms. Colors encode the corresponding values of the different algorithms; written names have only approximate locations.

Off-Site Evaluation

Results on the off-site evaluation (Figs. 7 and 8) allow us to compare algorithms from both challenges, and also to consider results from algorithms that did not converge within the given time limit of the on-site evaluation (e.g., Menze, Geremia, Riklin Raviv). We performed significance tests on the Dice scores to identify which algorithms performed best or similar to the best one for each segmentation task (Fig. 7). We also performed significance tests on the Dice scores to identify which algorithms had a performance similar to the inter-rater variation; these are indicated by stars on top of the box plots in Fig. 8. For “whole” tumor segmentation, Zhao (I) was the best method, followed by Menze (D), which performed best on low-grade cases; Zhao (I), Menze (D), Tustison, and Doyle reported results with Dice scores that were similar to the inter-rater variation. For tumor “core” segmentation, Subbanna performed best, followed by Zhao (I), which was best on low-grade cases; only Subbanna had Dice scores similar to the inter-rater scores. For “active” core segmentation Festa performed best; with the spread of the Dice scores being rather high for the “active” tumor segmentation task, we found a high number of algorithms (Festa, Hamamci, Subbanna, Riklin Raviv, Menze (D), Tustison) to have Dice scores that did not differ significantly from those recorded for the inter-rater variation. Sensitivity and specificity varied considerably between methods (Fig. 7, bottom).

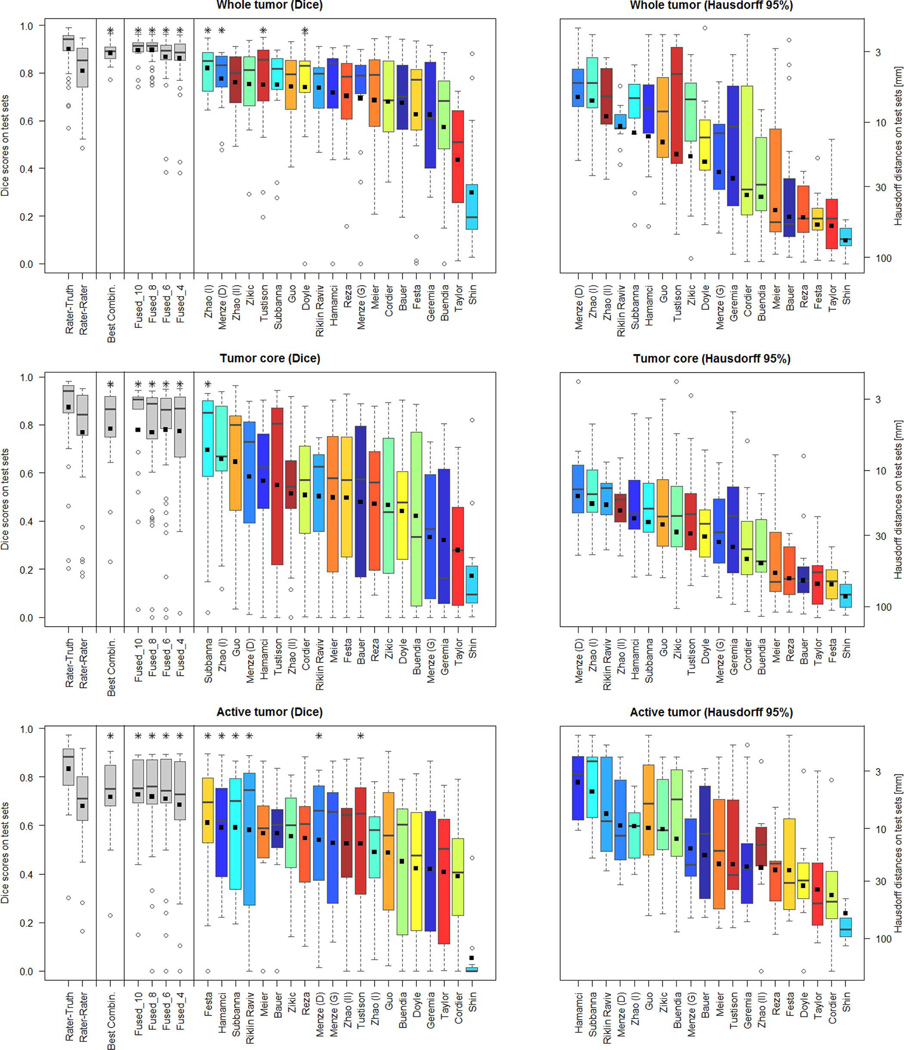

Fig. 8.

Dispersion of Dice and Hausdorff scores from the “off-site” test for the individual algorithms (color coded), and various fused algorithmic segmentations (gray), shown together with the expert results taken from Fig. 5 (also shown in gray). Boxplots show quartile ranges of the scores on the test datasets; whiskers and dots indicate outliers. Black squares indicate the mean score (for Dice also shown in the table of Fig. 7), which were used here to rank the methods. Also shown are results from four “Fused” algorithmic segmentations (see text for details), and the performance of the “Best Combination” as the upper limit of individual algorithmic performance. Methods with a star on top of the boxplot have Dice scores as high as or higher than those from inter-rater variation. Hausdorff distances are reported on a logarithmic scale.

Using the Hausdorff distance metric we observe a ranking that is overall very similar (Fig. 8, boxes on the right), suggesting that the Dice scores indicate the general algorithmic performances sufficiently well. Inspecting segmentations of the one method that is an exception to this rule (Festa), we find it to segment the active region of the tumor very well for most volumes, but also to miss all voxels in the active region of three volumes (apparently removed by a very strong spatial regularization), with low Dice scores and Hausdorff distances of more than 50 mm. Averaged over all patients, this still leads to a very good Dice score, but the mean Hausdorff distance is unfavourably dominated by the three segmentations that failed.

C. Performance of Fused Algorithms

An Upper Limit of Algorithmic Performance

One can fuse algorithmic segmentations by identifying, for each test scan and each of the three segmentation tasks, the best segmentation generated by any of the given algorithms. This set of “optimal” segmentations (referred to as “Best Combination” in the remainder) has an average Dice score of about 90% for the “whole” tumor region, about 80% for the tumor “core” region, and about 70% for the “active” tumor region (Fig. 7, top), surpassing the scores obtained for inter-rater variation (Fig. 8). However, since fusing segmentations this way cannot be performed without actually knowing the ground truth, these values can only serve as a theoretical upper limit for the tumor segmentation algorithms being evaluated. The average Dice scores of the algorithms performing best on each task are about 10% below these numbers.
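The “Best Combination” score is an oracle: for every case it picks the algorithm with the highest Dice score, which requires the ground truth. A minimal sketch with made-up Dice values (the algorithm names and numbers are purely hypothetical and are not benchmark results):

```python
def best_combination_mean(dice_by_algorithm):
    """Mean over cases of the best per-case Dice score across algorithms.

    `dice_by_algorithm` maps an algorithm name to its list of per-case
    Dice scores; all lists must cover the same cases in the same order.
    """
    score_lists = list(dice_by_algorithm.values())
    n_cases = len(score_lists[0])
    per_case_best = [max(scores[i] for scores in score_lists) for i in range(n_cases)]
    return sum(per_case_best) / n_cases


# Hypothetical per-case Dice scores for two algorithms on three cases.
toy_scores = {
    "algo_A": [0.9, 0.5, 0.7],
    "algo_B": [0.6, 0.8, 0.7],
}
oracle = best_combination_mean(toy_scores)
best_single = max(sum(s) / len(s) for s in toy_scores.values())
print(oracle, best_single)
```

In this toy case each algorithm averages 0.7 on its own, while the per-case oracle reaches 0.8, which illustrates why the oracle can only bound, not estimate, achievable performance.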

Hierarchical Majority Vote

In order to obtain a mechanism for fusing algorithmic segmentations in more practical settings, we first ranked the available algorithms according to their average Dice score across all cases and all three segmentation tasks, and then selected the best half. While this procedure guaranteed that we used meaningful segmentations for the subsequent pooling, we note that the resulting set included algorithms that performed well in one or two tasks, but performed clearly below average in the third one. Once the 10 best algorithms were identified this way, we sampled random subsets of 4, 6, and 8 of those algorithms, and fused them using the same hierarchical majority voting scheme as for combining expert annotations (Section III-D). We repeated this sampling and pooling procedure ten times. The results are shown in Fig. 8 (labeled “Fused_4,” “Fused_6,” and “Fused_8”), together with the pooled results for the full set of the 10 segmentations (named “Fused_10”). Exemplary segmentations for a Fused_4 sample are shown in Fig. 9—in this case, pooling the results from Subbanna, Zhao (I), Menze (D), and Hamamci. The corresponding Dice scores are reported in the table in Fig. 7.
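The selection and sampling procedure can be sketched as follows. The ranking input, the function names, and the fixed random seed are assumptions made for illustration; the fusion of each sampled subset would then use the hierarchical majority vote of Section III-D.

```python
import random


def select_best_half(mean_dice):
    """Rank algorithms by mean Dice (highest first) and keep the best half."""
    ranked = sorted(mean_dice, key=mean_dice.get, reverse=True)
    return ranked[: len(ranked) // 2]


def sample_subsets(algorithms, sizes=(4, 6, 8), repeats=10, seed=0):
    """Draw `repeats` random subsets of each size, without replacement."""
    rng = random.Random(seed)
    return {k: [rng.sample(algorithms, k) for _ in range(repeats)] for k in sizes}


# Hypothetical mean Dice scores for 20 algorithms named algo_00 ... algo_19.
mean_dice = {f"algo_{i:02d}": i / 100 for i in range(20)}
top_half = select_best_half(mean_dice)
subsets = sample_subsets(top_half)
print(top_half)
print(subsets[4][0])
```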

Fig. 9.

Examples from the test data set, with consensus expert annotations (yellow) and consensus of four algorithmic labels overlaid (magenta). Blue lines indicate the individual segmentations of four different algorithms (Menze (D), Subbanna, Zhao (I), Hamamci). Each row shows two cases of high-grade tumor (rows 1–5) and low-grade tumor (rows 6–7). Three images are shown for each case: FLAIR (left), T2 (center), and T1c (right). Annotated are outlines of the whole tumor (shown in FLAIR), of the core region (shown in T2), and of active tumor region (shown in T1c, if applicable). Views vary between patients with axial, sagittal and transversal intersections with the tumor center. Note that clinical low-grade cases show image changes that have been interpreted by some of the experts as enhancements in T1c.

We found that results obtained by pooling four or more algorithms always outperformed those of the best individual algorithm for the given segmentation task. The hierarchical majority voting reduces the number of segmentations with poor Dice scores, leading to very robust predictions. It provides segmentations that are comparable to or better than the inter-rater Dice score, and it reaches the hypothetical limit of the “Best Combination” of case-wise algorithmic segmentations for all three tasks (Fig. 8).

V. Discussion

A. Overall Segmentation Performance

The synthetic data was segmented very well by most algorithms, reaching Dice scores on the synthetic data that were much higher than those for similar real cases (Fig. 6, top left), even surpassing the inter-rater accuracies. As the synthetic datasets have a high variability in tumor shape and location, but are less variable in intensity and less artifact-loaded than the real images, these results suggest that the algorithms used are capable of dealing well with variability in shape and location of the tumor segments, provided intensities can be calibrated in a reproducible fashion. As intensity-calibration of magnetic resonance images remains a challenging problem, a more explicit use of tumor shape information may still help to improve the performance, for example from simulated tumor shapes [81] or simulations that are adapted to the geometry of the given patients [31].

On the real data some of the automated methods reached performances similar to the inter-rater variation. The rather low scores for inter-rater variability (Dice scores in the range 74%–85%) indicate that the segmentation problem was difficult even for expert human raters. In general, most algorithms were capable of segmenting the “whole” tumor region quite well, with some algorithms reaching Dice scores of 80% and more (Zhao (I) has 82%). Segmenting the tumor “core” region worked surprisingly well for high-grade gliomas, and reasonably well for low-grade cases (considering the absence of enhancements in T1c that guide segmentations for high-grade tumors), with Dice scores in the high 60% (Subbanna has 70%). Segmenting small isolated areas of the “active” region in high-grade gliomas was the most difficult task, with the top algorithms reaching Dice scores in the high 50% (Festa has 61%). Hausdorff distances of the best algorithms are around 5–10 mm for the “whole” and the “active” tumor region, and about 20 mm for the tumor “core” region.

B. The Best Algorithm and Caveats

This benchmark cannot answer the question of what algorithm is overall “best” for glioma segmentation. We found that no single algorithm among the ones tested ranked in the top five for all three subtasks, although Hamamci, Subbanna, Menze (D), and Zhao (I) did so for two tasks (Fig. 8; considering Dice score). The results by Guo, Menze (D), Subbanna, Tustison, and Zhao (I) were comparable in all three tasks to those of the best method for the respective task (indicated in bold in Fig. 7). Menze (D), Zhao (I), and Riklin Raviv led the ranking of the Hausdorff scores for two of the subtasks, and followed Hamamci and Subbanna for the third one.

Among the BRATS 2012 methods, we note that only Hamamci and Geremia performed comparably in the “off-site” and the “on-site” challenges, while the other algorithms performed significantly better in the “off-site” test than in the previous “on-site” evaluation. Several factors may have led to this discrepancy. Some of the groups had difficulties in submitting viable results during the “on-site” challenge and resolved them only for the “off-site” evaluation (Menze, Riklin Raviv). Others used algorithms during the “off-site” challenge that were significantly updated and reworked after the 2012 event (Subbanna, Shin). All 2012 participants had to adapt their algorithms to the new four-class labels and, if discriminative learning methods were used, to retrain their algorithms, which may also have contributed to fluctuations in performance. Finally, we cannot rule out that some cross-checking between results of updated algorithms and available test images may have taken place in between the 2012 workshop and the 2013 “off-site” test.

There is another limitation regarding the direct comparison of “off-site” results between the 2012 and the 2013 workshop participants, as the test setting was inadvertently stricter for the latter group. In particular, the 2012 participants had several months to work with the test images and improve scores before the “off-site” evaluation took place—which, they were informed, would be used in a final ranking. In contrast, the 2013 groups were permitted access to those data only four weeks before their competition and were not aware that these images would be used for a broad comparison. It is therefore worth pointing out, once again, the algorithms that performed best on the on-site tests: these were the methods by Bauer, Zikic, and Hamamci in 2012, and Tustison's method in 2013.

C. “Winning” Algorithmic Properties

A majority of the top ranking algorithms relied on a discriminative learning approach, where low-level image features were generated in a first step, and a discriminative classifier was applied in a second step, transforming local features into class probabilities with MRF regularization to produce the final set of segmentations. Both Zikic and Menze (D) used the output of a generative model as input to a discriminative classifier in order to increase the robustness of intensity features. However, other approaches that only used image intensities and standard normalization algorithms such as N4ITK [82] also did surprisingly well. The spatial processing by Zhao (I), which considers information about tumor structure at a regional “super-voxel” level, did exceptionally well for “whole” tumor and tumor “core.” One may expect that performing such a non-local spatial regularization might also improve results of other methods. Most algorithms ranking in the lower half of the list used rather basic image features and did not employ a spatial regularization strategy, featuring small false positive outliers that decreased Dice score and increased the average Hausdorff distance.

Given the excellent results by the semi-automatic methods from Hamamci and Guo (and those by Riklin Raviv for the active tumor region), and because tumor segmentations will typically be looked at in the context of a clinical workflow anyway, it may be beneficial to take advantage of some user interaction, either in an initialization or in a postprocessing phase. In light of the clear benefit of fusing multiple automatic segmentations, demonstrated in Section IV-C, user interaction may also prove helpful in selecting the best segmentation maps for subsequent fusion.

The required computation time varied significantly among the participating algorithms, ranging from a few minutes to several hours. We observed that most of the computational burden was related to the feature detection and image registration sub-tasks. In addition, we observed that a good understanding of the image resolution and the amount of image subsampling can lead to a good trade-off between speed improvements and segmentation quality.

D. Fusing Automatic Segmentations

We note that fusing segmentations from different algorithms always performed better than the best individual algorithm applied to the same task. This observation aligns well with a common concept from ensemble learning: a set of predictors that are unbiased but have high variability in their individual predictions improves when their predictions are pooled [83]. In that case, averaging over multiple predictors reduces variance and, hence, reduces the prediction error. Subselecting only the best few segmentations, i.e., those with the least bias (or average misclassification), further improves results. In general there are two extrema: variance is maximal for single observations and minimal after fusing many, while bias is minimal for the one top-ranking algorithm and maximal when including a large number of (also lesser) predictions. For many applications, an optimum is reached in between these two extrema, depending on the bias and variance of the predictors that are fused. Optimizing the ensemble prediction by balancing variability reduction (fuse many predictors) and bias removal (fuse a few selected only) can be done on a test set representing the overall population, or for the individual image volume when partial annotation is available, for example from the limited user interaction mentioned above. Statistical methods that estimate and weight the performance of individual contributions (for example, based on appropriate multi-class extensions of STAPLE [69] and related probabilistic models [19], [84]) may also be used to trade bias and variance in an optimal fashion.
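To make the fusion idea concrete, the following is a minimal sketch of a hierarchical majority vote, not the exact rule used in the benchmark. It assumes, purely for illustration, that the labels 0, 1, ..., K are nested (a voxel with label k also belongs to every region of lower level), that label maps are flattened to lists, and that ties do not promote a voxel; the function name `hierarchical_majority_vote` is hypothetical.

```python
from typing import List, Sequence


def hierarchical_majority_vote(label_maps: Sequence[Sequence[int]],
                               num_levels: int) -> List[int]:
    """Fuse flat label maps whose labels 0..num_levels are ASSUMED to be nested.

    A voxel is promoted to level k only if it already reached level k-1 and a
    strict majority of the input maps assign it a label >= k.
    """
    if not label_maps:
        raise ValueError("need at least one label map")
    n_voxels = len(label_maps[0])
    if any(len(m) != n_voxels for m in label_maps):
        raise ValueError("all label maps must have the same length")

    fused = [0] * n_voxels
    for level in range(1, num_levels + 1):
        for v in range(n_voxels):
            if fused[v] != level - 1:
                continue  # voxel did not reach the enclosing region
            votes = sum(1 for m in label_maps if m[v] >= level)
            if 2 * votes > len(label_maps):  # strict majority; ties are rejected
                fused[v] = level
    return fused


if __name__ == "__main__":
    # Three toy segmentations of six voxels (illustrative data only).
    seg_a = [0, 1, 2, 3, 3, 0]
    seg_b = [0, 1, 2, 2, 3, 1]
    seg_c = [1, 0, 1, 3, 1, 1]
    print(hierarchical_majority_vote([seg_a, seg_b, seg_c], num_levels=3))
```

In this toy example the fused map is `[0, 1, 2, 3, 3, 1]`. The nesting matters when all inputs disagree: for a single voxel labeled 1, 2, and 3 by the three maps, a plain per-label vote has no majority, whereas the nested vote settles on level 2 because two of the three maps place the voxel at level 2 or higher.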

E. Limitations of the BRATS Benchmark

When designing the BRATS study, we made several choices that may have impacted the results and that could potentially have been improved. For example, we decided to homogenize the data by co-registering and reformatting each subject's image volumes using rigid registration and linear interpolation, as described in Section III-C. Although the registration itself was found to work well (as it was always between images acquired from the same subject and in the same acquisition session), it may have been advisable to use a more advanced interpolation method, because the image resolution differed significantly between sequences, patients, and centers. Furthermore, in order to build a consensus segmentation from multiple manual annotations, we devised a simple fusion rule that explicitly respects the known spatial and (with respect to the evolution of the disease) temporal relations between the tumor substructures, as more advanced fusion schemes were found to yield implausible results. These choices can certainly be criticized; however, we believe the major challenge for the segmentation algorithms was ultimately not interpolation or label fusion details, but rather the large spatial and structural variability of the tumors in the BRATS dataset, as well as the variability in image intensities arising from differences in imaging equipment and acquisition protocols.

Although we were able to identify several overall “winning” algorithmic properties (discussed in Section V-C), one general limitation of image analysis benchmarks is that it is often difficult to explain why a particular algorithm does well or—even more difficult—why it does not do well. This is because even the best algorithmic pipeline will fail if just one element is badly parameterized or implemented. Detecting such failures would require a meticulous study of each element of every processing pipeline—for a learning-based approach, for example, of the intensity normalization, the feature extraction, the classification algorithm, and the spatial regularization. Unfortunately, while this type of analysis is extremely valuable, it requires a careful experimental design that cannot easily be pursued post hoc on a heterogeneous set of algorithms contributed by different parties in a competitive benchmark such as BRATS.

Another limitation of the current study, which is also shared by other benchmarks, pertains to the selection of an appropriate overall evaluation metric that can be used to explicitly rank all competing algorithms. Although we reported separate results for sensitivity, specificity, and Hausdorff distance, we based our overall final ranking in different tumor regions on average Dice scores. As demonstrated by the results of the Festa method in “active tumor” segmentation, however, the exact choice of evaluation metric does sometimes affect the ranking results, as different metrics are sensitive to different types of segmentation errors.
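The dependence of a ranking on the chosen metric can be illustrated with a small sketch. It computes Dice score, sensitivity, and specificity from binary masks using the standard overlap definitions. The function name `overlap_metrics`, the toy masks, and the convention of returning 1.0 for empty denominators are assumptions made for this illustration; Hausdorff distance is omitted for brevity.

```python
from typing import Dict, Sequence


def overlap_metrics(pred: Sequence[int], truth: Sequence[int]) -> Dict[str, float]:
    """Dice, sensitivity and specificity for two flat binary masks."""
    if len(pred) != len(truth):
        raise ValueError("masks must have the same length")
    tp = sum(1 for p, t in zip(pred, truth) if p and t)
    fp = sum(1 for p, t in zip(pred, truth) if p and not t)
    fn = sum(1 for p, t in zip(pred, truth) if not p and t)
    tn = sum(1 for p, t in zip(pred, truth) if not p and not t)

    # Assumed convention: an empty denominator counts as perfect agreement.
    dice = 2 * tp / (2 * tp + fp + fn) if (2 * tp + fp + fn) else 1.0
    sensitivity = tp / (tp + fn) if (tp + fn) else 1.0
    specificity = tn / (tn + fp) if (tn + fp) else 1.0
    return {"dice": dice, "sensitivity": sensitivity, "specificity": specificity}


if __name__ == "__main__":
    truth = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
    over_segmented = [1, 1, 1, 1, 1, 1, 0, 0, 0, 0]   # two false positives
    under_segmented = [1, 1, 1, 0, 0, 0, 0, 0, 0, 0]  # one false negative
    for name, mask in [("over", over_segmented), ("under", under_segmented)]:
        print(name, overlap_metrics(mask, truth))
```

In this toy case the under-segmented mask has the higher Dice score (6/7 versus 0.8) and the higher specificity, while the over-segmented mask has the higher sensitivity (1.0 versus 0.75), so the two candidates swap ranks depending on which metric is used.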

Although the number of images included in the BRATS benchmark was large, the ranking of the segmentation algorithms reported here may still have been impacted by the high variability in brain tumors. As such, it will be desirable to further increase the number of training and test cases in future brain tumor segmentation benchmarks.

We wish to point out that all the individual segmentation results by all participants are publicly available,9 so that groups interested in brain tumor segmentation can perform their own internal evaluation, focusing specifically on what they consider most important. Looking at individual segmentations can also help to better understand the advantages and drawbacks of the different algorithms under comparison, and we would strongly encourage taking advantage of this possibility. It is worth pointing out that the individual raters' manual segmentations of the training data are also available,10 so that groups that do not trust the consensus labels we provide can generate their own training labels using a fusion method of their choice.

F. Lessons Learned

There are lessons that we learned from organizing BRATS 2012 and 2013 that may also be relevant for future benchmark organizers confronted with complex and expensive annotation tasks. First, it may be recommended to generate multiple annotations for the test data—rather than for the training set as we did here—as this is where the comparisons between experts and algorithms take place. Many algorithms will be able to overcome slight inconsistencies or errors in the training data that are present when only a single rater labels each case. At the same time, most algorithms will benefit from having larger training datasets and, hence, can be improved by annotating larger amounts of data even if this comes at the price of fewer annotations per image volume.

Second, while it may be useful to make unprocessed data available as well, we strongly recommend providing participants with maximally homogenized datasets (i.e., image volumes that are co-registered, interpolated to a standard resolution, and normalized with respect to default intensity distributions) in order to ease participation, maximize the number of participants, and facilitate comparisons of the segmentation methods independently of preprocessing issues.

G. Future Work

Given that many of the algorithms that participated in this study offered good glioma segmentation quality, it would seem valuable to have their software implementations more easily accessible. Right now, only an implementation of Bauer and Meier's method11 and Tustison's code12 are freely available. The online MIDAS and VSD platforms that we used for BRATS may be extended to not only host and distribute data, but also to host and distribute such algorithms. Making the top algorithms available through appropriate infrastructures and interfaces (for example, as developed for the VISCERAL benchmark13 [86], or as used in the commercial NITRC Amazon cloud service14) may help to make thoroughly benchmarked algorithms available to the wider clinical research community.

Since our results indicate that current automated glioma segmentation methods only reach the level of consensus-rater variation in the “whole” tumor case (Fig. 8), continued algorithmic development seems warranted. Other tumor substructures may also be relevant with respect to diagnosis and prognosis, and a more refined tumor model, with more than the four classes used in this study, may be helpful, in particular when additional image modalities are integrated into the evaluation. Finally, in clinical routine the change of tumor structures over time is often of primary relevance, something the current BRATS study did not address. Evaluating the accuracy of automated routines in longitudinal settings, including both pre- and postoperative images, is an important direction for future work, along with further algorithmic developments.

VI. Summary and Conclusion

In this paper we presented the BRATS brain tumor segmentation benchmark. We generated the largest public dataset available for this task and evaluated a large number of state-of-the-art brain tumor segmentation methods. Our results indicate that, while brain tumor segmentation is difficult even for human raters, currently available algorithms can reach Dice scores of over 80% for whole tumor segmentation. Segmenting the tumor core region, and especially the active core region in high-grade gliomas, proved more challenging, with Dice scores reaching 70% and 60%, respectively. Of the algorithms tested, no single method performed best for all tumor regions considered. However, the errors of the best algorithms for each individual region fell within human inter-rater variability.

An important observation in this study is that fusing different segmenters boosts performance significantly. Decisions obtained by applying a hierarchical majority vote to fixed groups of algorithmic segmentations consistently performed better, for every single segmentation task, than the best individual segmentation algorithm. This suggests that, in addition to pushing the limits of individual tumor segmentation algorithms, future gains (and ultimately clinical implementations) may also be obtained by investigating how to implement and fuse several different algorithms, either by majority vote or by other fusion strategies.

Acknowledgments

This research was supported by the NIH NCRR (P41-RR14075), the NIH NIBIB (R01EB013565), the Academy of Finland (133611), TEKES (ComBrain), the Lundbeck Foundation (R141-2013-13117), the Swiss Cancer League, the Swiss Institute for Computer Assisted Surgery (SICAS), the NIH NIBIB NAMIC (U54-EB005149), the NIH NCRR NAC (P41-RR13218), the NIH NIBIB NAC (P41-EB-015902), the NIH NCI (R15CA115464), the European Research Council through the ERC Advanced Grant MedYMA 2011-291080 (on Biophysical Modeling and Analysis of Dynamic Medical Images), the FCT and COMPETE (FCOM-01-0124-FEDER-022674), the MICAT Project (EU FP7 Marie Curie Grant No. PIRG-GA-2008-231052), the European Union Seventh Framework Programme under grant agreement no. 600841, the Swiss NSF project Computer Aided and Image Guided Medical Interventions (NCCR CO-ME), the Technische Universität München—Institute for Advanced Study (funded by the German Excellence Initiative and the European Union Seventh Framework Programme under Grant agreement 291763), the Marie Curie COFUND program of the European Union (Rudolf Mössbauer Tenure-Track Professorship to BHM).

Appendix

Here we reproduce a short summary of each algorithm used in BRATS 2012 and BRATS 2013, provided by its authors. A more detailed description of each method is available in the workshop proceedings.15

Bauer, Wiest and Reyes (2012): Segmentation of Brain Tumor Images Based on Integrated Hierarchical Classification and Regularization

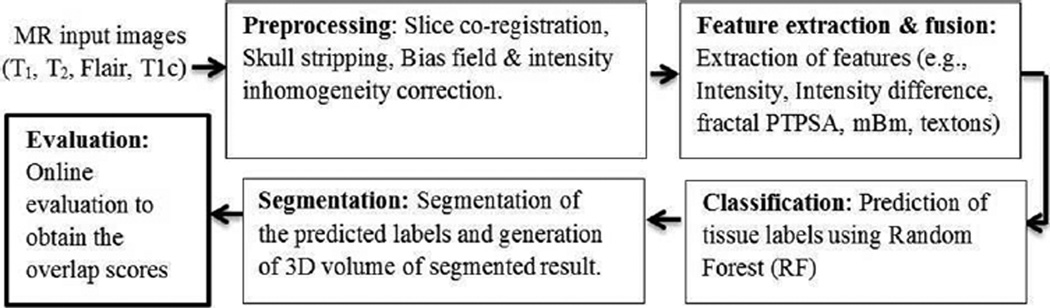

Algorithm and Data

We propose a fully automatic method for brain tumor segmentation, which is based on classification with integrated hierarchical regularization [87]. It subcategorizes healthy tissues into CSF, WM, and GM, and pathologic tissues into necrotic, active, non-enhancing, and edema compartments. The general idea is based on a previous approach presented in [43]. After pre-processing (denoising, bias-field correction, rescaling and histogram matching) [74], the segmentation task is modeled as an energy minimization problem in a conditional random field (CRF) formulation. The energy consists of the sum of the singleton potentials in the first term and the pairwise potentials in the second term of (1). The expression is minimized using [88] in a hierarchical way

$$E = \sum_{i} V(y_i, x_i) + \sum_{ij} W(y_i, y_j, x_i, x_j) \tag{1}$$

The singleton potentials V(yi, xi) are computed according to (2), where ỹi is the label output from a classifier, xi is the feature vector and δ is the Kronecker-δ function

$$V(y_i, x_i) = p(\tilde{y}_i \mid x_i) \cdot \bigl(1 - \delta(\tilde{y}_i, y_i)\bigr) \tag{2}$$

We use a decision forest as a classifier [89], which has the advantage of being able to handle multi-class problems and providing a probabilistic output. The probabilistic output is used for the weighting factor p(ỹi|xi) in (2), in order to control the degree of spatial regularization. A 44-dimensional feature vector is used for the classifier, which combines the intensities in each modality with the first-order textures (mean, variance, skewness, kurtosis, energy, entropy) computed from local patches, statistics of intensity gradients in a local neighborhood, and symmetry features across the mid-sagittal plane. The pairwise potentials W(yi, yj, xi, xj) account for the spatial regularization. In (3), ws(i, j) is a weighting function, which depends on the voxel spacing in each dimension. The term (1 − δ(yi, yj)) penalizes different labels of adjacent voxels, while the intensity term exp(−PCD(xi − xj)/(2·x̄)) regulates the degree of smoothing based on the local intensity variation, where PCD is a pseudo-Chebyshev distance and x̄ is a generalized mean intensity. D(yi, yj) allows us to incorporate prior knowledge by penalizing different tissue adjacencies individually

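$$W(y_i, y_j, x_i, x_j) = w_s(i, j) \cdot \bigl(1 - \delta(y_i, y_j)\bigr) \cdot \exp\!\left(\frac{-\mathrm{PCD}(x_i - x_j)}{2\,\bar{x}}\right) \cdot D(y_i, y_j) \tag{3}$$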

Computation time for one dataset ranges from 4 to 12 min depending on the size of the images; most of the time is needed by the decision forest classifier.
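The structure of the energy described above can be illustrated on a toy problem. The following sketch evaluates the energy of a candidate labeling on a one-dimensional chain of voxels; it is not the authors' implementation. Several simplifications are assumptions made only for this example: intensities are scalars, the pseudo-Chebyshev distance is replaced by an absolute difference, the generalized mean intensity is replaced by the arithmetic mean, the spacing weight is taken as the inverse voxel spacing, and the tissue adjacency penalty defaults to 1. The function name `crf_energy` and its parameters are hypothetical.

```python
import math
from typing import Dict, Optional, Sequence, Tuple


def crf_energy(labels: Sequence[int],
               classifier_labels: Sequence[int],
               classifier_probs: Sequence[float],
               intensities: Sequence[float],
               spacing: float = 1.0,
               adjacency_penalty: Optional[Dict[Tuple[int, int], float]] = None) -> float:
    """Energy of a labeling on a 1D voxel chain (simplified, illustrative)."""
    n = len(labels)
    if not (n == len(classifier_labels) == len(classifier_probs) == len(intensities)):
        raise ValueError("all inputs must have the same length")
    if n == 0:
        return 0.0
    mean_intensity = sum(intensities) / n  # stand-in for the generalized mean
    if mean_intensity <= 0:
        raise ValueError("mean intensity must be positive")
    penalties = adjacency_penalty or {}

    # Singleton term: pay the classifier confidence wherever we overrule it.
    unary = sum(p for y, y_cls, p in zip(labels, classifier_labels, classifier_probs)
                if y != y_cls)

    # Pairwise term: only neighboring voxels with different labels contribute.
    w_s = 1.0 / spacing  # assumed form of the spacing-dependent weight
    pairwise = 0.0
    for i in range(n - 1):
        y_i, y_j = labels[i], labels[i + 1]
        if y_i == y_j:
            continue
        # Absolute difference used here in place of the pseudo-Chebyshev distance.
        contrast = math.exp(-abs(intensities[i] - intensities[i + 1])
                            / (2.0 * mean_intensity))
        d = penalties.get((min(y_i, y_j), max(y_i, y_j)), 1.0)
        pairwise += w_s * contrast * d
    return unary + pairwise


if __name__ == "__main__":
    cls_labels = [0, 0, 1, 0, 0]            # classifier flags one isolated voxel
    cls_probs = [0.9, 0.9, 0.6, 0.9, 0.9]
    flat = [10.0] * 5                       # no intensity edge supports the outlier
    print(crf_energy(cls_labels, cls_labels, cls_probs, flat))
    print(crf_energy([0, 0, 0, 0, 0], cls_labels, cls_probs, flat))
```

With these toy values, keeping the isolated voxel costs two label boundaries (energy 2.0), whereas overruling the low-confidence classifier output costs only 0.6. This shows how the classifier probability in the singleton term controls how readily the spatial regularization removes small outliers.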

Training and Testing