Abstract

We used a multiple baseline design across behaviors to evaluate peer-mediated behavioral skills training to improve a complex repertoire of conversational skills of an undergraduate student diagnosed with a learning disability NOS. Following treatment, we observed a decrease in interrupting and content specificity and an increase in questioning. Treatment effects maintained with naïve peers during unstructured conversations and outcomes compared favorably with normative data on the conversational skills of three undergraduates without learning disabilities.

Keywords: Behavioral skills training, Conversational skills, Intraverbals, Mands, Peer mediation

A largely under-researched area of verbal behavior is that of conversational skills, which are important in developing and maintaining peer relationships (Krasny et al. 2003). Intraverbals play an important role in conversational speech. Skinner (1957) defined an intraverbal as a verbal response that has no point-to-point correspondence with the verbal stimulus that occasions the response. There is a fair amount of research on the acquisition of intraverbals, but few studies have evaluated methods to improve intraverbal behavior related to the conversational speech of adults without disabilities (Sautter and LeBlanc 2006).

With respect to conversational speech, the challenge to the learner is more than the acquisition of intraverbals; it also includes learning to balance the timing of the emission with the form of the response. Related to timing, one must learn the appropriate moment to emit the intraverbal, so that the response does not disproportionately interrupt the speaker. With respect to form, one must learn the appropriate amount of detail and autoclitics to include within the response. Lastly, the individual must learn to discriminate when it is appropriate to emit an intraverbal versus a mand for information (e.g., ask a question). Other important aspects of conversational speech include the duration spent in the listener versus speaker role, and the qualitative aspects of behavior emitted by the individual while in the listener role. Unfortunately, normative measures of conversational skills are rarely assessed. In a notable exception, Minkin et al. (1976) asked naïve judges to rate conversation samples of female college and junior high school students during unstructured conversations, and the judges rated the college students more favorably. The authors found that the college students engaged in more questioning and positive feedback than the junior high school students, suggesting that these two behaviors are important conversational skills. The authors then successfully taught questioning and positive feedback to pre-delinquent girls using behavioral skills training (BST; a teaching package that includes instructions, modeling, role play, and feedback). However, the authors did not measure interruptions or the amount of details provided within each conversation.

The better we become at teaching verbal behavior, the greater the need for establishing methods to improve conversational speech, because the same children who are learning to mand and tact now will likely need to be taught to engage in conversations later. To our knowledge, previous research on improving verbal behavior has neither evaluated methods to decrease interruptions and content specificity during a conversation nor collected normative measures on these behaviors. In our study, we evaluated the effects of peer-mediated BST plus homework on the conversational skills of interrupting and questioning and on content specificity during unstructured conversations with a college student diagnosed with learning disability not otherwise specified (NOS). We also collected normative measures of the same skills and conducted a social validity assessment.

Method

Participant and Setting

Cornelius was a 21-year-old male undergraduate student diagnosed with learning disability NOS. He solicited help with his conversational skills from the student disabilities services at the university he attended. Our study was approved by the institutional review board, and Cornelius signed an informed consent to participate. All sessions occurred 3 days per week for 1 h per day in an office at the university Cornelius attended.

Data Collection and Interobserver Agreement

We videotaped all sessions, and all dependent variables were recorded with paper and pencil, except speaking, which was recorded using data collection software. Our selection of target behaviors was based on previous research, an interview with Cornelius, and the direct observation of his conversing with confederates during baseline. Speaking was defined as any vocal utterance emitted by Cornelius and we measured the duration with data collection software. Observers pressed an assigned key when the participant began speaking and then pressed the same key 2 s after he stopped speaking. Listener role was defined as when the confederate was speaking and the participant was either engaging in positive feedback or silent. We measured the duration of the listener role. We recorded the rate of positive feedback, interrupting, and questioning. Positive feedback was defined as vocal utterances (e.g., “yeah,” “that’s cool,” “mm-hmm”) and gestures such as head nods and smiles emitted by Cornelius while the confederate spoke. Interrupting was defined as vocal initiations emitted while the confederate spoke and excluded positive feedback. Questioning was defined as vocal initiations that requested information from the confederate. If Cornelius asked a question that interrupted the confederate, the behavior was scored as a question and an interruption. Because there is limited research on measuring the amount of detail provided in an intraverbal, we collected two measures. High specificity was defined as an excess of details provided on a topic. For example, after being asked whether he had a good weekend, Cornelius reported very specific events unlikely to be of interest to a listener (e.g., “Yeah, first I woke up, walked downstairs to the kitchen and I saw my roommate sleeping on the couch, and poured some cereal, then…”). We recorded the duration of high specificity within each 5-min interval. Content specificity was a supplementary measure of high specificity. 
Content specificity was scored by rating each 5-min interval of a 20-min conversation on a scale from 1 to 4 and then averaging the ratings per session. A rating of 1 was recorded when Cornelius’ speech included 0 s of high specificity during the interval, 2 denoted 1 to 30 s of high specificity, 3 denoted 31 s to 1 min, and 4 denoted more than 1 min. Observers were trained with many examples and nonexamples until we obtained orderly and reliable data.
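The rating rule above can be sketched in a few lines of code. This is an illustrative sketch only, not the scoring tool used in the study; the function names are hypothetical, and the handling of durations that fall between the stated bands (e.g., between 0 and 1 s, or between 30 and 31 s) is an assumption.

```python
from statistics import mean


def specificity_rating(high_specificity_s):
    """Map seconds of high specificity in one 5-min interval to a 1-4 rating.

    Bands follow the text: 0 s -> 1, 1-30 s -> 2, 31 s-1 min -> 3, >1 min -> 4.
    Assumption: any nonzero duration up to 30 s is rated 2, and anything
    above 30 s up to 60 s is rated 3.
    """
    if high_specificity_s <= 0:
        return 1
    if high_specificity_s <= 30:
        return 2
    if high_specificity_s <= 60:
        return 3
    return 4


def session_rating(interval_durations_s):
    """Average the ratings of the four 5-min intervals of a 20-min session."""
    if len(interval_durations_s) != 4:
        raise ValueError("expected four 5-min intervals per 20-min session")
    return mean(specificity_rating(d) for d in interval_durations_s)


# Hypothetical session: 0 s, 12 s, 45 s, and 90 s of high specificity.
example = session_rating([0, 12, 45, 90])
```

In this hypothetical session the four intervals are rated 1, 2, 3, and 4, so the session rating is their average.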

A second observer independently collected data on 33 % of all sessions. We calculated interobserver agreement (IOA) for interrupting, questioning, content specificity rating, and positive feedback by dividing the total number of agreements per interval by the sum of agreements and disagreements and converting the ratio to a percentage. Mean IOA was 94 % for interrupting, 97 % for questioning, 92 % for content specificity (rating), and 75 % for positive feedback. For the duration measures, we divided each session into 10-s bins for speaking and into four 5-min intervals for high specificity. We then divided the smaller recorded duration by the larger recorded duration and converted the ratio to a percentage. Mean IOA was 92 % for speaking and 74 % for high specificity.
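The two agreement calculations can be illustrated as follows. This is a minimal sketch under stated assumptions rather than the procedure actually used: the function names are hypothetical, an "agreement" is assumed to mean that both observers recorded the same value for an interval, the duration ratios are assumed to be computed per bin and then averaged, and a bin in which both observers recorded 0 s is assumed to count as full agreement.

```python
def interval_agreement_ioa(obs_a, obs_b):
    """Agreements / (agreements + disagreements), as a percentage.

    Assumption: two records agree when they are identical for an interval.
    """
    if len(obs_a) != len(obs_b) or not obs_a:
        raise ValueError("observers must score the same nonempty intervals")
    agreements = sum(a == b for a, b in zip(obs_a, obs_b))
    return 100.0 * agreements / len(obs_a)


def duration_ioa(dur_a, dur_b):
    """Mean of (smaller duration / larger duration) across bins, as a percentage.

    Assumption: a bin where both observers recorded 0 s is scored as 1.0.
    """
    if len(dur_a) != len(dur_b) or not dur_a:
        raise ValueError("observers must score the same nonempty bins")
    ratios = []
    for a, b in zip(dur_a, dur_b):
        larger = max(a, b)
        ratios.append(1.0 if larger == 0 else min(a, b) / larger)
    return 100.0 * sum(ratios) / len(ratios)


# Hypothetical records: interruptions per interval from two observers.
count_ioa = interval_agreement_ioa([2, 0, 1, 3], [2, 0, 2, 3])

# Hypothetical seconds of speaking per 10-s bin from two observers.
speaking_ioa = duration_ioa([10, 0, 5], [8, 0, 5])
```

In the hypothetical count records, the observers agree on three of four intervals.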

Experimental Design and Procedures

We used a multiple baseline design across behaviors to evaluate the effects of peer-mediated BST on conversational skills. Maintenance of effects was evaluated in the absence of treatment and generalization was assessed during unstructured conversations with naïve peers. All sessions were 20 min because Cornelius reported he wanted to learn to maintain conversations. Sessions were conducted in a dyadic format, which included Cornelius and a confederate. Confederates were one graduate student and five undergraduate psychology students (male and female) enrolled in a psychology research course, all of whom were informed of the purpose of the study. Cornelius was aware that the students were assisting with the study.

Baseline

Confederates were instructed to (a) ask at least five questions, (b) discuss one predetermined participant-preferred topic, (c) discuss one participant-non-preferred topic, (d) wait approximately 20 s after answering a question or finishing a story before speaking again, (e) allow Cornelius to speak if he interrupted, (f) not interrupt Cornelius, and (g) listen attentively. Holding confederate behavior constant in this way allowed us to assess how well Cornelius answered questions, initiated interaction, interrupted during preferred and non-preferred topics, and engaged in positive feedback during repeated observations before and during treatment.

BST

Confederates implemented BST plus homework sequentially across the behaviors of interrupting, questioning, and content specificity. BST was not implemented with speaking or positive feedback. BST consisted of pre-session instructions, which included a brief rationale of the skill, modeling examples, and nonexamples of correct performance (either in session or viewing video before session), practicing the skill during the 20-min sessions, feedback following correct and incorrect performance throughout the sessions, and homework assignments, which Cornelius completed outside of sessions. Homework assignments were specific to the skills that were targeted during the sessions (e.g., when BST was introduced for interrupting, Cornelius was instructed to have conversations with three novel peers while practicing to listen without interrupting). Cornelius reported the results of homework assignments to the first author before or after each day’s direct observation session, but data were not collected on performance during homework assignments. In addition, Cornelius viewed a graph of his performance at the end of each week.

Because BST was implemented across dependent variables, the instructions, modeling, role play, and feedback varied slightly across measures. Initially, with BST for interrupting, we used visual feedback. The visual feedback consisted of placing a predetermined number of glass beads on a table next to Cornelius (initially 16 beads were used, which represented a 25 % reduction from the mean number of interruptions during baseline). Following each interruption, the confederate removed one bead without mentioning the interruption. The visual feedback allowed for the relatively smooth continuation of the conversation (as opposed to vocal feedback, which would have involved interrupting him to tell him he interrupted) and the beads had the potential to be gradually eliminated across sessions (we removed four beads per session when interrupting was below 80 % of baseline). After the visual feedback faded, and levels of interrupting had decreased, vocal feedback was introduced to simulate a more realistic environment. During vocal feedback, following each interruption, the confederate stated to Cornelius that he had just interrupted. During the role play for questioning, Cornelius was asked to come up with potential questions given a particular statement (e.g., during the role play, the confederate would ask, “What question could you ask me if I told you that I went to a party last night?”). During the role play for content specificity, immediately prior to the start of the first five sessions, Cornelius selected a topic to discuss, completed a written outline, which broke the topic down from general to specific details, and practiced discussing the topic aloud. During the session, if Cornelius began providing a lot of detail, the confederate prompted Cornelius to start the narrative again and reduce the amount of detail.
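The bead-fading rule for the visual feedback can be sketched as a simple schedule. This is an illustrative reading of the description above, not the authors' protocol: the function names are hypothetical, the baseline mean of 21.3 interruptions is a made-up value chosen only because a 25 % reduction from it rounds to the 16 beads reported, and "below 80 % of baseline" is assumed to compare each session's interruption count with 80 % of the baseline mean.

```python
def initial_beads(baseline_mean, reduction=0.25):
    """Starting bead count: the baseline mean reduced by 25 %, rounded."""
    return round(baseline_mean * (1 - reduction))


def bead_schedule(baseline_mean, session_interruptions, step=4, criterion=0.80):
    """Return the number of beads placed on the table in each session.

    Assumption: after any session with interruptions below criterion * baseline
    mean, `step` beads are removed for the next session (never below zero).
    """
    beads = initial_beads(baseline_mean)
    schedule = []
    for interruptions in session_interruptions:
        schedule.append(beads)
        if interruptions < criterion * baseline_mean:
            beads = max(0, beads - step)
    return schedule


# Hypothetical baseline mean and per-session interruption counts.
schedule = bead_schedule(21.3, [15, 18, 10, 5])
```

With these hypothetical counts, the second session does not meet the criterion (18 is not below 80 % of 21.3), so the bead count is held at 12 for one additional session before fading resumes.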

Naïve Peers

Cornelius was assessed during two 20-min unstructured conversations with two novel and untrained peers who were blind to the purpose of the study and instructed to behave as they typically would during a social interaction.

Social Validity Assessment

Following the conclusion of the study, Cornelius completed a questionnaire regarding his satisfaction with the teaching procedures and the treatment results. In addition, three respondents who did not take part in any aspect of the study and did not know Cornelius rated his conversational skills on a 7-point Likert scale (1 being “poor” and 7 being “excellent”) after watching a 5-min baseline video and a 5-min post-treatment video. The respondents were blind to which video was pre- and post-treatment, but were asked to concentrate on the number of interruptions, questions asked, and the amount of content provided during each conversation.

Normative Comparison

We collected normative data on conversational skills exhibited by three undergraduate psychology students with no diagnoses who reported to have no concerns with their social skills. Participants were recruited from undergraduate psychology courses and were blind to the purpose of the study. Each session was 20-min long, videotaped, and included two untrained participants who were instructed to behave as they typically would with peers. We collected data on the same behaviors using the same procedures described previously. IOA was collected on 20 % of sessions across participants and averaged 91 % across measures.

Results and Discussion

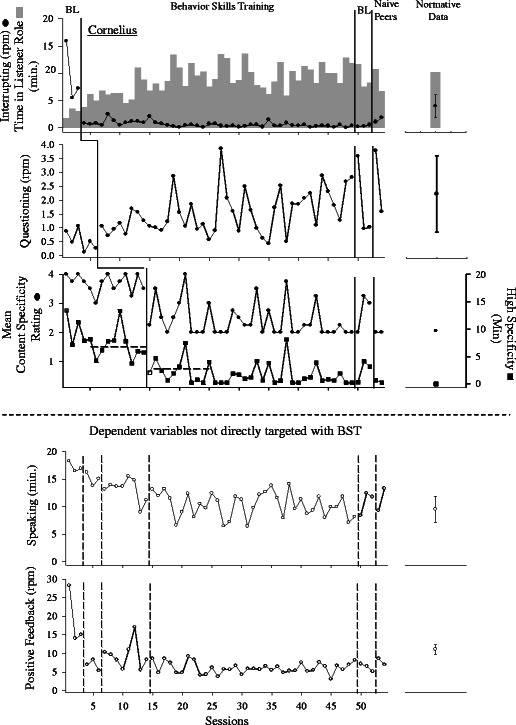

Data are displayed in Fig. 1. During baseline, we observed high and variable interruptions and little time spent in the listener role (first panel), a decreasing trend of questioning (second panel), a high mean content specificity rating and long durations of high specificity (third panel), a high duration of speaking (fourth panel), and stable amounts of positive feedback (last panel). With respect to the decreasing trend in questioning, it is important to note that the first three data points were with three different confederates and the following three data points (when the decrease is evident) were the second conversations with the same three confederates. In other words, Cornelius asked many questions when he first conversed with a confederate (“What’s your name?” “How old are you?” “What year of school are you in?” etc.), but when he conversed with the same confederate a second time, he asked fewer questions. Therefore, we introduced treatment to teach him to ask questions to occasion elaboration of particular topics introduced by the confederates (e.g., “Where did you go after the party?”). With respect to content specificity (duration), the first six data points in the baseline show a decreasing trend; however, for the last eight data points in baseline, the decreasing trend ceased and the data appeared stable with some variability, which was followed by the introduction of treatment.

Fig. 1.

Rate of interruptions and duration of listener role (top), rate of questioning (second panel), mean rating of content specificity and duration of high specificity (third panel), duration of speaking (fourth panel), and rate of positive feedback (bottom). The dashed vertical lines on the two bottom panels denote when treatment was implemented with the target behaviors. Normative data are depicted to the right of the participant’s data.

Upon the introduction of BST, we observed a decrease in interrupting, increased duration in the listener role, an increase and maintenance of questioning, a decrease in the mean rating of content specificity, and a decrease in the duration of high-specificity content. We did not implement BST for speaking amount or positive feedback, but we observed a concomitant decrease in the duration of speaking. We suppose the decrease in speaking was a function of both the decrease in interruptions and increase in questioning (i.e., the participant was allowing the confederate to speak and asking the confederate more questions). Positive feedback remained variable throughout the entire evaluation. These effects maintained with confederates (Fig. 1, BL 2) and with the naïve untrained peers after teaching was removed (Fig. 1, Naïve peers).

The normative assessment (far right panels of Fig. 1) showed that the mean rate of interruptions was 3.9 (SD = 2.1), the mean rate of questioning was 2.2 (SD = 1.4), the mean duration of speaking was 9.8 min (SD = 2.3), the mean content specificity rating was 2, the high-specificity duration was 0 min, and the mean rate of positive feedback was 11.1 (SD = 1.3). Compared to his peers, during baseline, Cornelius spent much less time in the listener role and engaged in a higher rate of interrupting, a lower rate of questioning, more content specificity and a longer duration of high-specificity content, a higher duration of speaking, and a similar level of positive feedback. After teaching, the targeted conversational skills were within the range of his peers; however, he engaged in fewer interruptions than his peers after BST. The results of the social validity questionnaire indicated that Cornelius strongly agreed that the teaching procedures and his improvement in conversational skills were acceptable. He also commented in the open-ended part of the assessment that he was more confident in social situations and in meeting and conversing with new people. The mean score for the baseline video scored by the three blind respondents was 2.3 (range, 1–4) and the mean score post-treatment was 5.3 (range, 5–6).

We observed changes in target conversational skills when we implemented teaching, which was staggered across measures, thus demonstrating that the effects were primarily a function of BST. This study extends current research by describing an effective and socially acceptable procedure for improving conversational skills to levels commensurate with those of young adults without learning disabilities attending university.

We suspect that the use of BST to improve the timing and form of intraverbals and mands for information may have also indirectly improved Cornelius’ self-editing skills. Skinner (1957) described self-editing as essential to emitting socially acceptable complex verbal behavior and as involving a process of rejecting or releasing verbal behavior. Skinner asserted that withholding speech is more than an issue of not emitting speech and instead involves engaging in behavior. Self-editing can be measured easily when emitted overtly (e.g., placing one’s hand over one’s mouth mid-sentence to stop from continuing with the sentence), but can be challenging to measure when the behavior occurs covertly (e.g., thinking about what one will say next). Although we did not directly measure self-editing, we observed some overt self-editing responses after the implementation of treatment. For example, following the introduction of BST, we observed Cornelius frequently stop mid-sentence, apologize for interrupting, and then allow his conversational partner to finish. At other times, and in the early phase of treatment, we observed him softly say to himself, “I should ask a question now,” which was then followed by a question to his partner. Although much self-editing occurs covertly and it would be difficult to obtain an accurate measure of this behavior, it is still important to consider the role of private events for a complete account of conversational behavior (Palmer 2011); if an individual lacks appropriate self-editing skills (evaluating one’s speech before emitting it), these skills will likely need to be taught to make improvements in other verbal behavior meaningful. Our intervention involved arranging contingencies and providing Cornelius, a high-functioning, language-abled adult, with rules regarding conversations. We suspect that the contingencies and rationales taught Cornelius to self-edit his verbal behavior, which led to improvements in our direct measures.

This study has several limitations. First, because Cornelius graduated, we did not assess the long-term maintenance of skills in more naturalistic contexts. Second, the repeated measures integral to the multiple baseline design across behaviors were laborious. Future researchers and practitioners should consider using a multiple-probe design to reduce the time and resources involved with data collection and analysis. Third, the word “excess” in the operational definition of high specificity might be interpreted as subjective. As noted earlier, we trained our observers with examples and nonexamples to achieve acceptable reliability (a mean reliability score of 92 % for content specificity rating and a mean reliability score of 74 % for high-specificity duration). The level of detail reinforced by a verbal community varies with context, but however difficult it is to operationalize, it remains an important variable. A speaker who provides too much or too little detail would be stigmatized. Thus, because content specificity has not, to our knowledge, been analyzed in the research literature, best practices for measuring it are still needed. We also suggest that the variables controlling abnormalities in conversational skills be functionally analyzed in future research so that more precise treatments can be developed.

References

- Krasny L, Williams BJ, Provencal S, Ozonoff S. Social skills interventions for the autism spectrum: essential ingredients and a model curriculum. Child and Adolescent Psychiatric Clinics of North America. 2003;12:107–122. doi: 10.1016/S1056-4993(02)00051-2.

- Minkin N, Braukmann CJ, Minkin BL, Timbers GD, Timbers BJ, Fixsen DL, et al. The social validation and training of conversational skills. Journal of Applied Behavior Analysis. 1976;9:127–139. doi: 10.1901/jaba.1976.9-127.

- Palmer DC. Consideration of private events is required in a comprehensive science of behavior. The Behavior Analyst. 2011;34:201–207. doi: 10.1007/BF03392250.

- Sautter RA, LeBlanc LA. Empirical applications of Skinner’s analysis of verbal behavior with humans. The Analysis of Verbal Behavior. 2006;22:35–48. doi: 10.1007/BF03393025.

- Skinner BF. Verbal behavior. Cambridge MA: Prentice Hall; 1957.