Abstract

Background

Partnerships between academic and community-based organizations can richly inform the research process and speed translation of findings. While immense potential exists to co-conduct research, a better understanding of how to create and sustain equitable relationships between entities with different organizational goals, structures, resources, and expectations is needed.

Objective

To engage community leaders in the development of an instrument to assess community-based organizations' interest and capacity to engage with academia in translational research partnerships.

Methods

Leaders from community-based organizations partnered with our research team in the design of a 50-item instrument to assess organizational experience with applying for federal funding and conducting research studies. Respondents completed a self-administered, paper/pencil survey and a follow-up structured cognitive interview (n=11). A community advisory board (n=8) provided further feedback on the survey through guided discussion. Thematic analysis of the cognitive interviews and a summary of the community advisory board discussion informed survey revisions.

Results

Cognitive interviews and discussion with community leaders identified language and measurement issues for revision. Importantly, they also revealed an unconscious bias on the part of researchers and offered an opportunity, at an early research stage, to address imbalances in the survey perspective and to develop a more collaborative, equitable approach.

Conclusions

Engaging community leaders enhanced face and content validity and served as a means to form relationships with potential community co-investigators in the future. Cognitive interviewing can enable a bi-directional approach to partnerships, starting with instrument development.

Keywords: Community Based Participatory Research, Translational Research, Organizational Capacity to Conduct Research, Community-Academic Partnerships, Community Engagement, Cognitive Interviewing

Introduction

The concept of building equitable community-academic partnerships through community engagement in research has seen increasing emphasis over the past several years as a necessary component of translational science.1 Public health researchers employ community engagement methods to better understand the context in which bench and clinical research findings are disseminated and implemented. This collaborative approach to research aims to achieve balance between academics and communities by fostering shared decision-making, co-learning, and sharing of resources.2 Community engagement in research also provides an opportunity for community members not only to offer input into policies and programs that affect their communities, but also to help define and shape the research being conducted.

While the potential impact of community-engaged research on translational science is significant, the process for establishing fruitful partnerships between communities and academia can be inhibited by incongruent goals, different levels of interest in partnering, and varied capacity to conduct research and manage research resources. Failure to recognize and understand where organizations are in terms of their interest in and capacity to co-conduct research can lead to mismatched expectations and difficulties in negotiating proposal development, research activities, and resource sharing. This is particularly true for the type of rigorous research activities expected from federal funding agencies such as the National Institutes of Health.

The Community Academic Resources for Engaged Scholarship (CARES) unit within the Translational and Clinical Sciences (TraCS) Institute (home of the NIH Clinical and Translational Science Award at UNC Chapel Hill) recognized that there are few validated measures available to evaluate successful community-engaged research, including early research partnership formation. Therefore, we launched an initiative to develop and test an instrument to assess community-based organizations' (CBOs) organizational readiness for and capacity to do research. The original instrument included a total of 44 questions and was based on the research team's knowledge of what was needed from community partners in order to successfully manage resources in research studies. The survey went through multiple iterations prior to testing with community members to refine the content based on input from other investigators experienced in community-engaged research.

A crucial step in the survey development process was to conduct cognitive interviews with community members to assess the face and content validity of the instrument. Traditionally, cognitive interviewing is done within the context of large research studies where participants are recruited and interviewed in a cognitive laboratory environment.3 In our study, we engaged participants by going to locations in or near their community. The cognitive interviewing process allowed for significant input from stakeholders outside academia and gave us an opportunity to identify areas to improve prior to fielding the survey. We assessed respondents' level of comprehension and ability to interpret the questions to make sure the items and their measurement were meaningful and made sense to our target audience. One unanticipated outcome of using cognitive interviewing was that it presented an opportunity to build partnerships and incorporate community voices at an early stage of our instrument development. The feedback informed the final 50-item instrument, which will be electronically distributed to a sample of over 800 CBOs across North Carolina. In this paper we describe the process and results of our cognitive interviewing approach, a novel approach to relationship-building that can be replicated by other investigators who are interested in both instrument development and refinement and initiating early-stage community-engaged research partnerships.

Methods

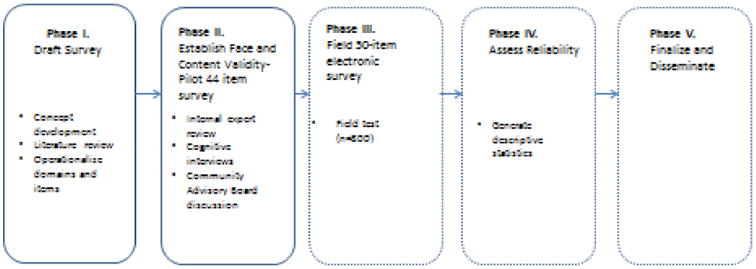

CARES' aims include encouraging translational research partnerships and fostering community and academic input in the development of best practices, measures and methods related to community engagement. In keeping with these aims, the team felt it was important to model an instrument development process (Figure 1) that considered both academic and community points of view. We first brainstormed a list of potential domains related to applying for and managing federal funding, and conducted a literature review to generate items under each domain. The draft instrument was then circulated to academic experts in community-engaged research for feedback as part of a content validation process. After the tool was revised based on their feedback, the team conducted cognitive interviews with potential respondents from the community to pilot the tool and assess its face and content validity. Assessing face validity entailed checking whether the tool measured what it intended to measure from a community stakeholder perspective.4 Assessing content validity entailed determining whether the instrument adequately represented all domains of organizational research readiness and capacity from a community stakeholder perspective.5 The final step will involve field testing the instrument with a sample of CBO representatives to further assess its validity and reliability. The study was approved by the Institutional Review Board at the University of North Carolina at Chapel Hill.

Figure 1. Survey Development – Phases I and II.

Study Organizations and Participants

The research team conducted interviews with leaders from a combination of a purposive and convenience sample of community organizations across two geographic regions with varied levels of personal and organizational research experience. Participants needed to be a potential lead contact for a research project; i.e., someone who is a decision-maker or who could be a community PI. Recruitment was conducted by phone, email, and person-to-person contact. Out of 12 organizations, 11 agreed to participate. These organizations included faith-based, advocacy, health, and human service organizations. A small sample is recommended for cognitive interviews because the intent is to spend enough time with the participants to gain an in-depth understanding of their thought process as they are taking the survey.6

Data collection procedures

Between November 2012 and January 2013, three university-affiliated research team members (ZE, SD, RT) conducted eleven in-person cognitive interviews in large and smaller urban areas and one rural area in North Carolina. Interviews took place in community and academic settings, in enclosed offices or meeting rooms to ensure participants' privacy. The sessions lasted approximately one hour (including 15 minutes for completion of the paper survey). After providing informed consent, participants filled out a paper version of the draft survey, which was followed by a structured cognitive interview. All interviews were digitally audio-recorded. Upon completion of the survey and interview, participants were given a $50 gift card.

Following the 11 interviews, a regional community advisory board (CAB) provided additional feedback and validation on the interview findings through guided discussion. The CAB consists of community leaders and clinicians, many with prior experience working on research projects, who are willing to share a community-based perspective with investigators while they are developing or implementing a research project. CAB members received the survey prior to their regular quarterly meeting. Two research team members attended the meeting; one team member presented an overview of the cognitive interview findings and asked for suggestions on how to (1) improve the survey items and (2) recruit survey participants for future field testing, while the other member took extensive notes on the discussion.

Cognitive Interview Process as a Step in Survey Development

The original 44-item survey contained 7 domains: (1) respondent's organizational role; (2) previous experience with research; (3) administrative, (4) scientific, and (5) resource capabilities for federally funded research; (6) motivational readiness to engage in research; and (7) organizational infrastructure for federally funded research. Measurement included check box lists, yes/no/don't know, 4-point Likert scales, and a space for open-ended feedback on the instrument.

To elicit information about the meaning and clarity of items in addition to comprehension of questions' intent, participants were asked to comment on confusing aspects of the instrument while they were completing it. Researchers then asked participants to “think aloud” about how they interpreted and responded to questions in each section of the survey. Participants were asked if they were clear on definitions used in the survey and to restate certain questions in their own words, comment on how well certain questions applied to their organization, and describe the reasoning behind their answers. The interviewers used probes such as “Tell me more about….” to engage participants and encourage responses, and elicited suggestions and modifications from interviewees to address language, content, and measurement issues.

Data coding and analysis

Given that the primary purpose of the cognitive interviews was to gauge the appropriateness of the language and revise content of the research readiness instrument, team members used an analytic approach for identifying and coding the main themes within and across the cognitive interviews. Three team members (ZE, SD, RT) independently analyzed the interviews and inductively identified themes from the data, noting issues related to question wording, ordering, and format. Each member then summarized the results of their individual thematic analysis in a matrix table. The team then reviewed the complete matrix of the 11 interviews, met to review the results, and reconciled any discrepancies by consensus. The notes from the CAB consultation were used to confirm themes that emerged from the cognitive interviews and provide additional contextual details to the findings.

Findings

The thematic findings from the cognitive interviews and CAB consultation facilitated a useful exchange between community and academic stakeholders and helped to identify ways the survey instrument could be refined. The interviews also unearthed important issues that were not readily apparent to the academic researchers. Findings are organized into three areas: design and measurement issues, researcher biases, and opportunities for future research capacity-building and involvement. A final section details the structural changes made to the survey.

Design/Measurement Issues

We encountered several design and measurement issues that could have potentially elicited inaccurate data. First, an early question on the survey asked participants to identify their role in their organization (“Which of the following best describes your role in the organization? (please check only one role)”; 5 check box options included: Executive Director, Management, Staff, Board Member, Other (write-in)). Some participants had difficulty articulating their role, especially those who worked with multiple organizations or filled multiple roles within a small organization.

“I take part in different organizations, you know, each one a complement of another and you ask me which role am I playing? You know, I got to pick one of those organizations to say who I am because I'm not everything at one time as far as the organizations are concerned. So in one respect, I'm a board member, and another, I'm the president of this, so which organization am I going to be representing?”

We revised the question to ask leaders to report on their role at the organization where they worked the most hours.

Second, participants equated their personal experience with research with their organization's research experience (“How confident are you that your organization could…”; Not at all—Very Confident, 4-point scale).

“This is measuring my knowledge – would our organization's answers be the same if I left?”

We revised the survey to ask specifically about their organization's research capacity.

Third, some participants noted that community organizations not involved in research would probably have different concerns and interests than those that were already involved in research partnerships. Thus, it was important to identify the different levels of potential involvement in health-related research. Participants distinguished, for example, between assisting a study with recruitment or data collection, being a subcontractor, or serving as a lead organization on a partnered research grant. We revised the survey by adding a question on partnership interest with response categories that reflected these differing levels of involvement.

Fourth, the cognitive interviews revealed difficulty in understanding federal grant terminology. Some participants were hesitant to admit they did not know something, responding that they were “somewhat confident” or “confident”, but when they described the thoughts behind their answer, there may have been a lack of clarity on certain terms. We revised the survey to include definitions for grant terms. For example, because the final survey is electronic, when the cursor hovers over the term ‘subcontractor’ a sentence appears stating, “A subcontractor conducts a portion of a research study as part of a paid contract with a University.”

Finally, the cognitive interviews revealed items containing specific fiscal details that proved unnecessary to collect. The survey asked questions to verify that participants were knowledgeable about certain grant requirements such as having a Data Universal Numbering System (DUNS) number or an indirect cost rate. Participants mentioned that questions asking about finances were unnecessary and could be considered sensitive or private. Moreover, participants expressed concern over recalling their DUNS number while taking the survey as it would interrupt survey flow:

“Ok, the questions that you had in here about the DUNS and the SAMs - You asked me for my numbers of which I don't have available now. I don't know how to, how you would legislate that when somebody is going through the survey, meaning that do you want them to stop and go and find the numbers? I mean, if I was sitting in front of my computer, I could do that, but you're asking individuals for information they might not have readily available.”

Although we kept the yes/no questions on whether the organization had a DUNS number and indirect cost rate as an indicator of their fiscal capacity, we removed questions requesting the specific DUNS number and indirect cost rate.

Researcher Bias

Another key finding that emerged from the cognitive interviews was the research team's unintentional biases reflected in the survey items. We learned that participants felt the survey items were unidirectional and did not account for the well-established professional networks CBOs had in place for sharing research information. The academic team designed the survey around skills, knowledge, and resources community organizations might need to write a research grant with academic partners and neglected to reflect on the skills and resources researchers might need to partner with a community organization.

“And this gets back to the institutional arrogance that I mentioned earlier. You know, the institution is a lot bigger than these nonprofits. It has a hell of a lot more resources. I mean, I'm taking the time to talk to you today, ‘cause I believe in research and I wanted to participate, and I told you I would. And so, the institution needs to understand what its responsibilities are? How is it going to be a good partner to the nonprofit? The institute needs to find ways to make it possible for nonprofits to participate, let me put it that way.”

From this valuable feedback, we revised the survey to query respondents on what their organization expects from the university to form an equitable research partnership that would be considered a win-win for all stakeholders.

Initially, the survey was focused on an organization's knowledge of federal grant requirements and whether they had the infrastructure to complete a grant. The research team assumed that the community organizations were not as well versed in federal grant requirements as academic institutions; however, we did not account for the social network connections that CBOs have cultivated and use to assist them in accessing and sharing research information. The key leaders we talked to were all very resourceful; if they did not know how to do something, they knew people who could help them. One respondent commented,

“We are always willing to look for partnerships and build capacity and do networking to do the work.”

Several leaders planned to complete grants in partnership and share writing tasks with an academic partner based on their skill sets and interests. For instance, one participant said they would contact their local Area Health Education Center (AHEC) and ask a librarian for help with a literature review. We revised the language in some items to “co-develop” or “co-led” to acknowledge that research activities were collaborative. We realized that community organizations were planning to collaborate and revised the item responses in the grant requirements section from measuring confidence (1=“Not at all Confident” to 4=“Very Confident”) to assessing the level of support needed to conduct activities together (1=“We could do this with a lot of support” to 3=“We could do this on our own”).

Unanticipated Outcome: Opportunities for Research Capacity-building and Involvement

The relationship building that grew throughout the course of conducting the cognitive interviews occurred because the community partners we interviewed were advocates for their organizations and were concerned about sustainability. They wanted to ensure the dialogue that started during the cognitive interviews continued after the interview was over. In addition, the survey topics dealt with building community-academic partnerships and interviewees felt that it was an opportunity to involve others, either through additional training, educational materials, or other means. Without feedback from the cognitive interviews, we may not have thought about engaging the CAB for further survey refinement or developed a plan to work with organizations in the future on training materials or feedback reports.

Given that the purpose of the survey was to encourage research partnerships, we added several new domains and items based on suggestions from our cognitive interviewees. These items included questions on academic capacity to respond to organizational research needs, interest in partnering, and organizational characteristics. We also changed the title of the survey from “Research Readiness of Community-based Organizations” to “Community-Academic Research Partnership Survey” and revised our confidence scale to acknowledge collaboration in research activities.

In addition, a few participants thought we should ask why community organizations were interested in research in the first place; what was their motivation? Knowing this information would allow academic partners to better appeal to this motivation and be better prepared when engaging in partnerships.

“Again I kept thinking back to our meetings with the CABs, so we would hear about projects and give recommendations and then sometimes they would be looking for ideas or looking for partners so we would help brainstorm different things. And so it was always interesting to me, to think, well is this something that I could help with or be involved with?”

Lastly, participants commented that there were organizational characteristics that might affect research readiness. These included organizational size, organization's level of research experience, and experience with federal grant writing. Based on these comments, we added questions in the survey to collect this information.

After taking the survey, participants were interested in hearing more about the topics mentioned in the questionnaire that could improve their organization's readiness to partner in research. They expressed the need for additional training or educational materials that explained the federal grant requirements and terminology.

Structural revisions to the survey

The revised community-academic research partnership survey now consists of 50 items, increased from 44 on the original survey. The instrument is divided into five main sections and designed with skip patterns based on an organization's level of interest in partnering with an academic institution to conduct research. The survey begins with instructions that describe who the research team is, the purpose of the survey, and how to complete the survey.

Depending on their organization's interest, survey respondents will complete different sections of the survey: 1) Respondents representing organizations interested in being the lead or co-lead on a research study are prompted to complete the entire survey; 2) Respondents who represent organizations interested in acting as subcontractors skip Section A, which includes questions on developing and writing sections of a federal research grant proposal; 3) Respondents who identify with organizations interested in participating in research activities for a University-led research study without a subcontract skip to Section C, which includes only questions about creating invoices and preparing a fiscal policy. If a respondent indicates their organization is not interested in research involvement, they skip to Section D. Section D is intended for all survey respondents and asks general questions about participants and their organizations.
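The skip patterns described above can be summarized in a short sketch. This is an illustration only, not the logic of the actual electronic survey: the interest-level keys and the function name are hypothetical, and the sketch covers only Sections A through D, the sections named in the text.

```python
# Illustrative sketch of the skip patterns; all identifiers are hypothetical.
# Only Sections A-D are modeled because those are the sections named in the text.
SECTIONS = ["A", "B", "C", "D"]

# First section shown to a respondent, keyed by the organization's
# reported level of interest in research partnership (assumed labels).
FIRST_SECTION = {
    "lead_or_co_lead": "A",             # completes the entire survey
    "subcontractor": "B",               # skips Section A
    "participant_no_subcontract": "C",  # skips to Section C
    "not_interested": "D",              # skips to Section D
}


def sections_to_complete(interest_level):
    """Return the ordered list of sections a respondent would see."""
    start = FIRST_SECTION[interest_level]
    return SECTIONS[SECTIONS.index(start):]


for level in FIRST_SECTION:
    print(level, sections_to_complete(level))
```

Under these assumptions, every path ends in Section D, consistent with the statement that Section D is intended for all respondents.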

Discussion

With a growing need for stronger community-academic partnerships in the field of translational research, finding ways to establish and cultivate these relationships is paramount. We anticipated that our cognitive interviews would serve to uncover and refine survey measurement issues and highlight implicit biases in the instrument. The process of testing the instrument with community stakeholders improved face and content validity and reduced measurement error. We did not anticipate, however, that we would be able to initiate research partnerships with community stakeholders as a result of the cognitive interviewing process. Our inquiry reflected CBPR principles of early engagement in the research process (e.g., build on the strengths and resources within the community, facilitate collaborative partnerships in all phases of the research, and integrate knowledge and action for mutual benefit),2 and allowed us to benefit from having the community's voice as part of the development of measures to promote instrument validity. In contrast to the typical approach of having a one-time, short-term exchange with a participant, our approach resulted in the involvement of community organizations in co-developing a bi-directional tool and started a face-to-face interaction that could lead to a future partnership.

For example, the cognitive interview participants for this study expressed an interest in providing technical assistance on developing community-academic workshops that are created as a result of the research findings. In addition, the organizations that the interviewees represented will likely be among the first organizations we contact when we recruit community partners for collaborative research projects. Some respondents have already engaged with our academic team's activities such as joining our community engagement metrics working group.

Our future plans are to distribute the revised instrument to a sample of over 800 CBOs across North Carolina. The team will share survey results from field testing with the CAB to discuss future directions and content of trainings and educational materials for community organizations to increase research capacity.

The final tool will enable NC TraCS to identify what is needed in terms of research readiness for specific audiences who complete the tool, then develop tailored, topic-specific trainings that address their research needs and interests.

Participants pointed out that faculty also need training in how to work with community partners, and a complementary instrument is currently being developed by the team in collaboration with a study participant and additional community partners.

Limitations

As with any research study, we recognize that limitations exist. Our cognitive interview participants were selected through a combination of purposive and convenience sampling and represent only the organizations with which they are affiliated. We may not have identified the full range of considerations with the survey due to the limited number of participants representing groups with varied research experience. Because relationship-building was an unanticipated outcome of the cognitive interview process, we did not measure whether participants' collaborative activities with academic partners increased after the interviews. Future studies can explore in a more systematic fashion whether trust or collaborations increase after conducting cognitive interviews.

References

- 1. National Research Council. The CTSA Program at NIH: Opportunities for Advancing Clinical and Translational Research. Washington, DC: The National Academies Press; 2013.

- 2. Israel BA, Eng E, Schulz AJ, Parker EA, Satcher D. Methods in community-based participatory research for health. San Francisco: Jossey-Bass; 2005.

- 3. Willis GB. Cognitive Interviewing, A “How To” Guide. Research Triangle Institute, Short course presented at the 1999 Meeting of the American Statistical Association.

- 4. Bornstein RF. Face validity. In: Lewis-Beck MS, Bryman A, Liao TF, editors. The SAGE encyclopedia of social science research methods. Thousand Oaks, CA: Sage Publications Inc; 2004. pp. 368–369.

- 5. Fitzpatrick AR. The meaning of content validity. Applied Psychological Measurement. 1983;7(1):3–13.

- 6. Willis GB. Cognitive interviewing: a tool for improving questionnaire design. Thousand Oaks, CA: Sage Publications Inc; 2005.