Abstract

This paper describes an R package named flare, which implements a family of new high dimensional regression methods (LAD Lasso, SQRT Lasso, ℓq Lasso, and Dantzig selector) and their extensions to sparse precision matrix estimation (TIGER and CLIME). These methods exploit different nonsmooth loss functions to gain modeling flexibility, estimation robustness, and tuning insensitiveness. The developed solver is based on the alternating direction method of multipliers (ADMM), which is further accelerated by the multistage screening approach. The package flare is coded in double precision C and called from R through a user-friendly interface. Memory usage is optimized by using sparse matrix output. The experiments show that flare is efficient and can scale up to large problems.

1 Introduction

As a popular sparse linear regression method for high dimensional data analysis, Lasso has been extensively studied by the machine learning and statistics communities (Tibshirani, 1996; Chen et al., 1998). It adopts the quadratic loss and ℓ1 norm regularization functions to select and estimate nonzero parameters simultaneously. Software packages such as glmnet have been developed to efficiently solve large problems (Friedman et al., 2010). Lasso has also attracted wide research interest and motivated many variants that exploit nonsmooth loss functions to gain modeling flexibility, estimation robustness, and tuning insensitiveness. These nonsmooth loss functions, however, pose a great challenge to computation. To the best of our knowledge, no efficient solver has been developed so far for these Lasso variants.

In this report, we describe a newly developed R package named flare (Family of Lasso Regression). The flare package implements a family of linear regression methods including

- LAD Lasso, which is robust to heavy-tailed random noise and outliers (Wang, 2013).
- SQRT Lasso, which is tuning insensitive: the optimal regularization parameter selection does not depend on any unknown parameter (Belloni et al., 2011).
- ℓq Lasso, which shares the advantages of LAD Lasso and SQRT Lasso.
- Dantzig selector, which can tolerate missing values in the design matrix and response vector (Candes and Tao, 2007).

By adopting the column by column regression scheme, we further extend these regression methods to sparse precision matrix estimation, including

- TIGER, which is tuning insensitive (Liu and Wang, 2012).
- CLIME, which can tolerate missing values in the data matrix (Cai et al., 2011).

The developed solver is based on the alternating direction method of multipliers (ADMM) (Gabay and Mercier, 1976; Boyd et al., 2011), which is further accelerated by a multistage screening approach. The global convergence of ADMM has been established in He and Yuan (2012a,b). The numerical simulations show that the flare package is efficient and can scale up to large problems.

2 Notation

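We first introduce some notation. Given a d-dimensional vector $v = (v_1, \ldots, v_d)^T \in \mathbb{R}^d$, we define the vector norms

$$\|v\|_q = \Big(\sum_{j=1}^{d} |v_j|^q\Big)^{1/q} \quad \text{and} \quad \|v\|_\infty = \max_{1 \le j \le d} |v_j|,$$

where 1 ≤ q ≤ 2. Given a matrix $A = [A_{jk}] \in \mathbb{R}^{d \times d}$, we use $\|A\|_2$ to denote the largest singular value of A. We also define the winsorization, univariate soft thresholding, and group soft thresholding operators as follows,

$$W_\lambda(v) = \big[\operatorname{sign}(v_j) \cdot \min\{|v_j|, \lambda\}\big]_{j=1}^{d}, \quad S_\lambda(v) = \big[\operatorname{sign}(v_j) \cdot \max\{|v_j| - \lambda, 0\}\big]_{j=1}^{d}, \quad G_\lambda(v) = \Big[\frac{v_j}{\|v\|_2} \cdot \max\{\|v\|_2 - \lambda, 0\}\Big]_{j=1}^{d}.$$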
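The three operators can be illustrated with a few lines of base R. This is only a sketch: the function names `winsorize`, `soft_threshold`, and `group_soft_threshold` are hypothetical and are not part of the flare interface. Winsorization clips each entry to [−λ, λ], univariate soft thresholding shrinks each entry toward zero by λ, and group soft thresholding shrinks the ℓ2 norm of the whole vector by λ.

```r
# Winsorization: clip each entry to the interval [-lambda, lambda].
winsorize <- function(v, lambda) sign(v) * pmin(abs(v), lambda)

# Univariate soft thresholding: shrink each entry toward zero by lambda.
soft_threshold <- function(v, lambda) sign(v) * pmax(abs(v) - lambda, 0)

# Group soft thresholding: shrink the l2 norm of v by lambda,
# returning the zero vector when the norm does not exceed lambda.
group_soft_threshold <- function(v, lambda) {
  nrm <- sqrt(sum(v^2))
  if (nrm <= lambda) return(rep(0, length(v)))
  v * (1 - lambda / nrm)
}

v <- c(3, -0.5, 1.5)
winsorize(v, 1)        # 1.0 -0.5 1.0
soft_threshold(v, 1)   # 2.0 0.0 0.5
group_soft_threshold(c(3, 4), 1)  # 2.4 3.2
```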

3 Algorithm

We are interested in solving convex programs in the following generic form,

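$$\hat{\beta} = \operatorname*{argmin}_{\beta,\, \alpha} \; L_\lambda(\alpha) + \|\beta\|_1 \quad \text{subject to} \quad r - A\beta = \alpha, \tag{1}$$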

where λ > 0 is the regularization parameter. The possible choices of Lλ(α), A, and r for different regression methods are listed in Table 1. As can be seen, LAD Lasso and SQRT Lasso are special cases of ℓq Lasso for q = 1 and q = 2 respectively. All methods above can be efficiently solved by the iterative scheme as follows,

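$$\alpha^{t+1} = \operatorname*{argmin}_{\alpha} \; \frac{1}{2}\|u^t + r - A\beta^t - \alpha\|_2^2 + \frac{1}{\rho} L_\lambda(\alpha), \tag{2}$$

$$\beta^{t+1} = \operatorname*{argmin}_{\beta} \; \frac{1}{2}\|u^t - \alpha^{t+1} + r - A\beta\|_2^2 + \frac{1}{\rho}\|\beta\|_1, \tag{3}$$

$$u^{t+1} = u^t + (r - \alpha^{t+1} - A\beta^{t+1}), \tag{4}$$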

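where u is the rescaled Lagrange multiplier, and ρ > 0 is the penalty parameter. Note that the Lagrange multiplier u is rescaled for computational convenience, and this does not affect the global convergence of the ADMM method. See Boyd et al. (2011) for more details. For LAD Lasso, SQRT Lasso, and the Dantzig selector, we can obtain a closed form solution to (2) using the univariate soft thresholding operator S, the group soft thresholding operator G, and the winsorization operator W, respectively, each subscripted by its threshold:

$$\text{LAD Lasso:} \quad \alpha^{t+1} = S_{\frac{1}{n\rho\lambda}}(u^t + r - A\beta^t), \tag{5}$$

$$\text{SQRT Lasso:} \quad \alpha^{t+1} = G_{\frac{1}{\sqrt{n}\rho\lambda}}(u^t + r - A\beta^t), \tag{6}$$

$$\text{Dantzig selector:} \quad \alpha^{t+1} = W_{\lambda}(u^t + r - A\beta^t). \tag{7}$$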

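For ℓq Lasso with 1 < q < 2, we can solve (2) by the bisection based root finding algorithm (Liu and Ye, 2010). Step (3) is a standard ℓ1 penalized least squares problem. Our solver linearizes the quadratic term at β = β^t and solves (3) approximately by

$$\beta^{t+1} = \operatorname*{argmin}_{\beta} \; (\beta - \beta^t)^T A^T (A\beta^t - u^t + \alpha^{t+1} - r) + \frac{\gamma}{2}\|\beta - \beta^t\|_2^2 + \frac{1}{\rho}\|\beta\|_1, \tag{8}$$

where $\gamma = \|A\|_2^2$. We can obtain a closed form solution to (8) by soft thresholding,

$$\beta^{t+1} = S_{\frac{1}{\rho\gamma}}\Big(\beta^t - \frac{1}{\gamma} A^T (A\beta^t - u^t + \alpha^{t+1} - r)\Big). \tag{9}$$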
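The iteration can be sketched in base R for the LAD Lasso case. This is a minimal illustration under stated assumptions, not the package's C implementation: it assumes the program is to minimize (1/(nλ))‖α‖1 + ‖β‖1 subject to y − Xβ = α, takes γ to be the squared largest singular value of X, and uses a simple stopping rule of our own choosing. The names `soft_threshold` and `lad_lasso_admm` are hypothetical.

```r
# Univariate soft thresholding: shrink each entry toward zero by `thr`.
soft_threshold <- function(v, thr) sign(v) * pmax(abs(v) - thr, 0)

# Hypothetical helper (not part of flare): linearized ADMM for LAD Lasso,
# assuming the formulation
#   minimize (1 / (n * lambda)) * ||alpha||_1 + ||beta||_1
#   subject to y - X beta = alpha.
lad_lasso_admm <- function(X, y, lambda, rho = 1, max_iter = 5000, tol = 1e-5) {
  n <- nrow(X)
  gamma <- norm(X, type = "2")^2   # squared largest singular value of X
  beta <- rep(0, ncol(X))
  u <- rep(0, n)                   # rescaled Lagrange multiplier
  for (iter in seq_len(max_iter)) {
    fitted <- drop(X %*% beta)
    # Step (2): closed-form alpha-update by soft thresholding.
    alpha <- soft_threshold(u + y - fitted, 1 / (n * rho * lambda))
    # Steps (8)-(9): linearize the quadratic at the current beta, then threshold.
    grad <- drop(crossprod(X, fitted - u + alpha - y))
    beta_new <- soft_threshold(beta - grad / gamma, 1 / (rho * gamma))
    # Step (4): update the multiplier with the constraint residual.
    resid <- y - alpha - drop(X %*% beta_new)
    u <- u + resid
    # Stopping rule (an assumption of this sketch): small change and residual.
    done <- max(abs(beta_new - beta)) < tol && max(abs(resid)) < tol
    beta <- beta_new
    if (done) break
  }
  beta
}

set.seed(1)
X <- matrix(rnorm(50 * 10), 50, 10)
y <- 3 * X[, 1] + rnorm(50)
round(lad_lasso_admm(X, y, lambda = 0.3), 3)
```

Only the α-update changes across methods: replacing the soft thresholding call with group soft thresholding or winsorization would give the corresponding sketch for SQRT Lasso or the Dantzig selector.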

Besides the pathwise optimization scheme and the active set trick, we also adopt the multistage screening approach to speed up the computation. In particular, we first select k nested subsets of coordinates 𝒜1 ⊆ 𝒜2 ⊆ … ⊆ 𝒜k = ℝd by the marginal correlation between the covariates and responses. Then the algorithm iterates over these nested subsets of coordinates to obtain the solution. The multistage screening approach can greatly boost the empirical performance, especially for the Dantzig selector.
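The construction of the nested subsets can be sketched as follows. The helper `nested_subsets` is hypothetical, and the equally spaced subset sizes are an assumption of this sketch; the text above only specifies that coordinates are ranked by marginal correlation and that the last subset contains all coordinates.

```r
# Hypothetical helper (not part of flare): build k nested coordinate subsets
# A_1, ..., A_k, ranked by absolute marginal correlation with the response.
nested_subsets <- function(X, y, k = 3) {
  d <- ncol(X)
  score <- abs(drop(cor(X, y)))                  # marginal correlations
  ranking <- order(score, decreasing = TRUE)     # most correlated first
  # Equally spaced subset sizes are an assumption; the last size is always d.
  sizes <- unique(ceiling(d * seq_len(k) / k))
  lapply(sizes, function(m) sort(ranking[seq_len(m)]))
}

set.seed(1)
X <- matrix(rnorm(100 * 9), 100, 9)
y <- X[, 3]                      # noiseless response driven by column 3
subsets <- nested_subsets(X, y)
sapply(subsets, length)          # 3 6 9
```

A solver would then be run on the columns in each subset in turn, warm-starting from the previous solution, so that most of the work is done on small problems.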

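Table 1.

All regression methods provided in the flare package. X ∈ ℝn×d denotes the design matrix, and y ∈ ℝn denotes the response vector. “L.P.” denotes the general linear programming solver, and “S.O.C.P.” denotes the second-order cone programming solver.

| Method | Loss function $L_\lambda(\alpha)$ | A | r | Existing solver |
|---|---|---|---|---|
| LAD Lasso | $\frac{1}{n\lambda}\lVert\alpha\rVert_1$ | $X$ | $y$ | L.P. |
| SQRT Lasso | $\frac{1}{\sqrt{n}\lambda}\lVert\alpha\rVert_2$ | $X$ | $y$ | S.O.C.P. |
| ℓq Lasso | $\frac{1}{\sqrt[q]{n}\lambda}\lVert\alpha\rVert_q$ | $X$ | $y$ | None |
| Dantzig selector | $\infty$ if $\lVert\alpha\rVert_\infty > \lambda$, and 0 otherwise | $\frac{1}{n}X^TX$ | $\frac{1}{n}X^Ty$ | L.P. |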

4 Examples

We illustrate the user interface by two examples. The first one is the eye disease dataset in our package.

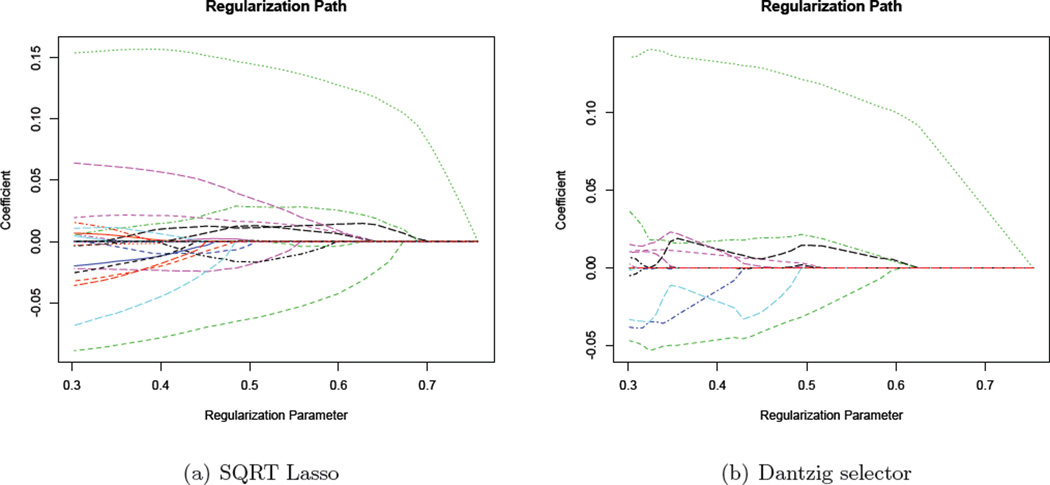

    > # Load the dataset
    > library(flare); data(eyedata)
    > # SQRT Lasso
    > out1 = slim(x,y,method="lq",nlambda=40,lambda.min.value=sqrt(log(200)/120))
    > # Dantzig selector
    > out2 = slim(x,y,method="dantzig",nlambda=40,lambda.min.ratio=0.35)
    > # Plot solution paths
    > plot(out1); plot(out2)

The program automatically generates a sequence of 40 regularization parameters and estimates the corresponding solution paths of SQRT Lasso and the Dantzig selector. For the Dantzig selector, the optimal regularization parameter is usually selected by a model selection procedure such as cross validation. Note that the theoretically consistent regularization parameter of SQRT Lasso is $C\sqrt{\log(d)/n}$, where C is some constant. Thus we manually choose its minimum regularization parameter to be $\sqrt{\log(d)/n} = \sqrt{\log(200)/120} \approx 0.2101$. We see that the minimum regularization parameter yields 19 nonzero coefficients out of 200. We further plot the two solution paths in Figure 1.

Figure 1.

Solution paths obtained by the package flare. The minimum regularization parameter of SQRT Lasso is manually set to $\sqrt{\log(d)/n} \approx 0.2101$, which yields 19 nonzero regression coefficients out of 200.

Our second example uses a simulated dataset produced by the data generator in our package.

    > # Generate data with hub structure
    > L = sugm.generator(n=400,d=200,graph="hub",g=10)
    > # CLIME
    > out1 = sugm(L$data,method="clime",nlambda=10,lambda.min.ratio=0.4)
    > # Model selection using cross validation
    > out1.opt = sugm.select(out1,criterion="cv")
    > # TIGER
    > out2 = sugm(L$data,lambda = sqrt(log(200)/400))
    > # Visualize obtained graphs
    > plot(L); plot(out1.opt); plot(out2)

For CLIME, the program automatically generates a sequence of 10 regularization parameters, estimates the corresponding graph path, and chooses the optimal regularization parameter by cross validation. Note that TIGER is also tuning insensitive. Therefore we manually choose the regularization parameter to be $\sqrt{\log(d)/n} = \sqrt{\log(200)/400} \approx 0.1151$ (this is also a theoretically consistent choice). We then compare the obtained graphs with the true graph using the visualization function in our package, and the resulting figures are presented in Figure 2. We see that TIGER achieves good graph recovery performance without any model selection procedure.
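The two manually chosen regularization parameters in this section come from the same expression, with the sample size and dimension read off the calls above (n = 120, d = 200 for the eye disease data and n = 400, d = 200 for the simulated hub graph). The helper `theory_lambda` below is a hypothetical name used only for illustration.

```r
# Hypothetical helper: theoretical regularization level C * sqrt(log(d) / n).
theory_lambda <- function(n, d, C = 1) C * sqrt(log(d) / n)

round(theory_lambda(n = 120, d = 200), 4)  # 0.2101 (SQRT Lasso example)
round(theory_lambda(n = 400, d = 200), 4)  # 0.1151 (TIGER example)
```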

Figure 2.

Graphs estimated by the package flare. The CLIME graph is selected by cross validation, and the TIGER graph is obtained by manually setting the regularization parameter to $\sqrt{\log(d)/n} \approx 0.1151$.

5 Numerical Simulation

All experiments are carried out on a PC with an Intel Core i5 3.3GHz processor, and the convergence threshold of flare is chosen to be $10^{-5}$. Timings (in seconds) are averaged over 100 replications using a sequence of 20 regularization parameters, and the range of regularization parameters is chosen so that each method produces approximately the same number of nonzero estimates.

We first evaluate the timing performance of flare for sparse linear regression. We set n = 100 and vary d from 375 to 3000, as shown in Table 2. We independently generate each row of the design matrix from a d-dimensional normal distribution N(0, Σ), where $\Sigma_{jk} = 0.5^{|j-k|}$. Then we generate the response vector using $y_i = 3X_{i1} + 2X_{i2} + 1.5X_{i4} + \varepsilon_i$, where $\varepsilon_i$ is independently generated from N(0, 1). From Table 2, we see that all methods achieve very good timing performance. The Dantzig selector and ℓq Lasso are slower due to more difficult computational formulations. For comparison, we also present the timing performance of the glmnet package for solving SQRT Lasso in Table 2. Since glmnet cannot be directly applied to SQRT Lasso, the implementation is based on the alternating minimization algorithm proposed in Sun and Zhang (2012). In particular, this algorithm obtains the minimizer by solving a sequence of Lasso problems (using glmnet). As can be seen, it also achieves good timing performance, but it is still slower than the flare package.
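The data-generating process for the regression experiment can be sketched in base R as follows. The random seed and the use of a Cholesky factor to draw correlated rows are choices made for this sketch and are not taken from the original experiments.

```r
set.seed(1)                      # seed chosen for illustration only
n <- 100
d <- 375
Sigma <- 0.5^abs(outer(1:d, 1:d, "-"))   # Sigma[j, k] = 0.5^|j - k|

# Rows of X are N(0, Sigma): multiply i.i.d. standard normals by the
# upper-triangular Cholesky factor R, which satisfies t(R) %*% R = Sigma.
X <- matrix(rnorm(n * d), n, d) %*% chol(Sigma)

# Response with three nonzero coefficients and standard normal noise.
y <- 3 * X[, 1] + 2 * X[, 2] + 1.5 * X[, 4] + rnorm(n)
```

The resulting `X` and `y` can be passed to `slim` in the same way as the eye disease data in Section 4.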

Table 2.

Average timing performance (in seconds), with standard errors in parentheses, on sparse linear regression and sparse precision matrix estimation.

Sparse Linear Regression

| Method | d = 375 | d = 750 | d = 1500 | d = 3000 |
|---|---|---|---|---|
| LAD Lasso | 1.1713 (0.2915) | 1.1046 (0.3640) | 1.8103 (0.2919) | 3.1378 (0.7753) |
| ℓ1.5 Lasso | 12.995 (0.5535) | 14.071 (0.5966) | 14.382 (0.7390) | 16.936 (0.5696) |
| Dantzig selector | 0.3245 (0.1871) | 1.5360 (1.8566) | 4.4669 (5.9929) | 17.034 (23.202) |
| SQRT Lasso (flare) | 0.4888 (0.0264) | 0.7330 (0.1234) | 0.9485 (0.2167) | 1.2761 (0.1510) |
| SQRT Lasso (glmnet) | 0.6417 (0.0341) | 0.8794 (0.0159) | 1.1406 (0.0440) | 2.1675 (0.0937) |

Sparse Precision Matrix Estimation

| Method | d = 100 | d = 200 | d = 300 | d = 400 |
|---|---|---|---|---|
| TIGER | 1.0637 (0.0361) | 4.6251 (0.0807) | 7.1860 (0.0795) | 11.085 (0.1715) |
| CLIME | 2.5761 (0.3807) | 20.137 (3.2258) | 42.882 (18.188) | 112.50 (11.561) |

We then evaluate the timing performance of flare for sparse precision matrix estimation. We set n = 100 and vary d from 100 to 400, as shown in Table 2. We independently generate the data from a d-dimensional normal distribution N(0, Σ), where $\Sigma_{jk} = 0.5^{|j-k|}$. The corresponding precision matrix $\Omega = \Sigma^{-1}$ is tridiagonal: $\Omega_{11} = \Omega_{dd} = 1.3333$, $\Omega_{jj} = 1.6667$ for 1 < j < d, $\Omega_{jk} = -0.6667$ for |j − k| = 1, and all other entries are 0. As can be seen from Table 2, TIGER and CLIME both achieve good timing performance, and CLIME is slower than TIGER due to a more difficult computational formulation.
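The structure of the precision matrix can be checked numerically. The short base R sketch below uses a small d for readability; for this covariance the inverse is tridiagonal, with diagonal entries 1.3333 in the first and last rows, 1.6667 in the interior rows, and −0.6667 on the first off-diagonals.

```r
d <- 6                                   # small dimension for illustration
Sigma <- 0.5^abs(outer(1:d, 1:d, "-"))   # Sigma[j, k] = 0.5^|j - k|
Omega <- solve(Sigma)                    # precision matrix

# Corner diagonal, interior diagonal, and first off-diagonal entries.
round(c(Omega[1, 1], Omega[2, 2], Omega[1, 2]), 4)  # 1.3333 1.6667 -0.6667

# Entries more than one step off the diagonal vanish up to rounding error.
max(abs(Omega[abs(row(Omega) - col(Omega)) > 1])) < 1e-10  # TRUE
```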

6 Discussion and Conclusions

Though the glmnet package cannot handle nonsmooth loss functions, it is much faster than flare for solving Lasso, as illustrated in Table 3. The simulation setting is the same as the sparse linear regression setting in §5. Moreover, the glmnet package can also be applied to solve ℓ1 regularized generalized linear model estimation problems, which flare cannot. Overall, the flare package serves as an efficient complement to the glmnet package for high dimensional data analysis. We will continue to maintain and support this package.

Table 3.

Quantitative comparison between the flare and glmnet packages for solving Lasso (timings in seconds, with standard errors in parentheses).

| Method | d = 375 | d = 750 | d = 1500 | d = 3000 |
|---|---|---|---|---|
| Lasso (flare) | 0.0920 (0.0013) | 0.1222 (0.0009) | 0.2328 (0.0037) | 0.6510 (0.0051) |
| Lasso (glmnet) | 0.0024 (0.0001) | 0.0038 (0.0001) | 0.0065 (0.0005) | 0.0466 (0.0262) |

Acknowledgments

Tuo Zhao and Han Liu are supported by NSF Grants III-1116730 and III-1332109, NIH R01MH102339, NIH R01GM083084, NIH R01HG06841, and FDA HHSF223201000072C. Lie Wang is supported by NSF Grant DMS-1005539. Xiaoming Yuan is supported by the General Research Fund from the Hong Kong Research Grants Council: 203311 and 203712.

References

- Belloni A, Chernozhukov V, Wang L. Square-root lasso: pivotal recovery of sparse signals via conic programming. Biometrika. 2011;98:791–806.
- Boyd S, Parikh N, Chu E, Peleato B, Eckstein J. Distributed optimization and statistical learning via the alternating direction method of multipliers. Foundations and Trends in Machine Learning. 2011;3:1–122.
- Cai T, Liu W, Luo X. A constrained ℓ1 minimization approach to sparse precision matrix estimation. Journal of the American Statistical Association. 2011;106:594–607.
- Candes E, Tao T. The Dantzig selector: Statistical estimation when p is much larger than n. The Annals of Statistics. 2007;35:2313–2351.
- Chen SS, Donoho DL, Saunders MA. Atomic decomposition by basis pursuit. SIAM Journal on Scientific Computing. 1998;20:33–61.
- Friedman J, Hastie T, Tibshirani R. Regularization paths for generalized linear models via coordinate descent. Journal of Statistical Software. 2010;33:1.
- Gabay D, Mercier B. A dual algorithm for the solution of nonlinear variational problems via finite element approximation. Computers & Mathematics with Applications. 1976;2:17–40.
- He B, Yuan X. On non-ergodic convergence rate of Douglas-Rachford alternating direction method of multipliers. Tech. rep., Nanjing University; 2012a.
- He B, Yuan X. On the O(1/n) convergence rate of the Douglas-Rachford alternating direction method. SIAM Journal on Numerical Analysis. 2012b;50:700–709.
- Liu H, Wang L. TIGER: A tuning-insensitive approach for optimally estimating Gaussian graphical models. Tech. rep., Princeton University; 2012.
- Liu J, Ye J. Efficient ℓ1/ℓq norm regularization. Tech. rep., Arizona State University; 2010.
- Sun T, Zhang C-H. Scaled sparse linear regression. Biometrika. 2012;99:879–898.
- Tibshirani R. Regression shrinkage and selection via the lasso. Journal of the Royal Statistical Society, Series B. 1996;58:267–288.
- Wang L. L1 penalized LAD estimator for high dimensional linear regression. Journal of Multivariate Analysis. 2013.