Abstract

Background

Prescription drug monitoring programs (PDMPs) are a key component of the president's Prescription Drug Abuse Prevention Plan to prevent opioid overdoses in the United States.

Purpose

To examine whether PDMP implementation is associated with changes in nonfatal and fatal overdoses; identify features of programs differentially associated with those outcomes; and investigate any potential unintended consequences of the programs.

Data Sources

Eligible publications from MEDLINE, Current Contents Connect (Clarivate Analytics), Science Citation Index (Clarivate Analytics), Social Sciences Citation Index (Clarivate Analytics), and ProQuest Dissertations indexed through 27 December 2017 and additional studies from reference lists.

Study Selection

Observational studies (published in English) from U.S. states that examined an association between PDMP implementation and nonfatal or fatal overdoses.

Data Extraction

Two investigators independently extracted data from studies and rated their risk of bias (ROB) by using established criteria. Consensus determinations involving all investigators were used to grade strength of evidence for each intervention.

Data Synthesis

Of 2661 records, 17 articles met the inclusion criteria. These articles examined PDMP implementation only (n = 8), program features only (n = 2), PDMP implementation and program features (n = 5), PDMP implementation with mandated provider review combined with pain clinic laws (n = 1), and PDMP robustness (n = 1). Evidence from 3 studies was insufficient to draw conclusions regarding an association between PDMP implementation and nonfatal overdoses. Low-strength evidence from 10 studies suggested a reduction in fatal overdoses with PDMP implementation. Program features associated with a decrease in overdose deaths included mandatory provider review, provider authorization to access PDMP data, frequency of reports, and monitoring of nonscheduled drugs. Three of 6 studies found an increase in heroin overdoses after PDMP implementation.

Limitation

Few studies, high ROB, and heterogeneous analytic methods and outcome measurement.

Conclusion

Evidence that PDMP implementation either increases or decreases nonfatal or fatal overdoses is largely insufficient, as is evidence regarding positive associations between specific administrative features and successful programs. Some evidence showed unintended consequences. Research is needed to identify a set of “best practices” and complementary initiatives to address these consequences.

Primary Funding Source

National Institute on Drug Abuse and Bureau of Justice Assistance.

The overuse of prescription opioids during the past 2 decades has evolved into a major public health issue in the United States. Opioid prescribing increased about 3.5-fold between 1999 and 2015, from 180 to 640 morphine milligram equivalents per capita (1), with parallel increases in nonmedical use (2, 3), neonatal abstinence syndrome (4), and deaths due to both prescription opioid and heroin overdose (5, 6). The age-adjusted rate of prescription opioid–related deaths rose from 1.0 to 4.4 deaths per 100 000 population between 1999 and 2016, whereas heroin-related deaths increased nearly 5-fold, rising from 1.0 to 4.9 deaths per 100 000 population between 2010 and 2016 (7).

State prescription drug monitoring programs (PDMPs) have been advanced as a critical tool to better inform clinical care, identify illegal prescribing, and reduce prescription opioid–related morbidity and mortality (8, 9). By 2017, all 50 states and the District of Columbia had an operational PDMP or had passed legislation to operate a PDMP. Although PDMPs in the United States have commonalities in terms of centralized statewide data systems that electronically transmit prescription data, the administrative features of PDMPs have varied substantially among states and over time. Programs operate under different regulatory agencies, collect different types of data, require data to be updated at different intervals, and allow access to different groups of people. Despite this variability in PDMP administrative features, previous studies found implementation of these programs to be associated with reductions in the supply (10), diversion (11), and misuse of prescription opioids (12). As such, PDMPs are increasingly promoted as valuable, user-friendly, accurate, and real-time digital resources for providers and law enforcement alike (13, 14). However, evidence for the effect of PDMPs on drug-induced overdoses remains unclear.

The objective of our review was to systematically search and review the literature to assess whether PDMPs are associated with changes in nonfatal or fatal overdoses; to evaluate whether specific administrative features of PDMPs are differentially associated with these outcomes and, if so, which features are most influential; and to investigate any potential unintended consequences associated with PDMPs.

Methods

Data Sources and Searches

We followed a predefined protocol developed in November 2016 (Supplement 1, available at Annals.org) and structured reporting of the review according to PRISMA (Preferred Reporting Items for Systematic reviews and Meta-Analyses) guidelines (15). We searched 5 online databases (MEDLINE, Current Contents Connect [Clarivate Analytics], Science Citation Index [Clarivate Analytics], Social Sciences Citation Index [Clarivate Analytics], and ProQuest Dissertations) for titles and abstracts of articles that examined an association between PDMP implementation and nonfatal or fatal drug overdoses. We did not impose a time or language restriction on searches (that is, queries surveyed the entire history of each online database). We included dissertations and peer-reviewed articles, as well as both published and in-process texts. We also examined references from the selected materials to identify additional articles and searched ClinicalTrials.gov. The search was first conducted in November 2016 and repeated in December 2017. All the resulting study titles and abstracts were exported to Covidence, a Web interface developed by Cochrane to systematize the review process (16). For the search terms and algorithm used in the literature search, see Appendix Table 1 (available at Annals.org).

Study Selection

All titles and abstracts were independently screened by 1 of 3 investigators (D.S.F., J.P.S., or K.K.G.) for eligibility, and those considered relevant by any investigator advanced to the full-text review. We included observational studies published in English if they estimated the before-and-after change in rates of nonfatal or fatal drug overdoses after a PDMP was implemented within a single U.S. state or in a set of states. No restrictions were placed on sample size or population age. A PDMP was considered implemented when a state operationalized its program and began to collect and distribute data or to make the data available to authorized users.

Data Extraction and Quality Assessment

Two researchers (J.P.S. and K.K.G.) independently read selected articles. Using a standardized article assessment form, they captured data on the specific policy studied; outcome data sources; study design; and results, including point estimates and CIs or P values. After the data were abstracted independently from each study, the 2 researchers reviewed the data for each article to ensure consistency and resolve differences. Disagreements between the researchers were reconciled by the first author (D.S.F.). Finally, 2 investigators independently assessed risk of bias (ROB) for the overdose outcomes reported in each study by using the Cochrane Risk Of Bias In Non-randomized Studies of Interventions (ROBINS-I) assessment tool (17). By answering questions provided by ROBINS-I, the investigators assessed ROB within 8 specific bias domains (confounding, selection of participants, classification, deviations from intended interventions, missing data, measurement of outcomes, selection of the reported results, and overall bias), grading each domain as low, moderate, serious, or critical. Disagreements were resolved by consensus.

Data Synthesis and Analysis

Because of substantial heterogeneity in the policies examined and the analytic methods applied, we did not do a meta-analysis. Instead, we performed a qualitative assessment and synthesis using methods outlined by the Agency for Healthcare Research and Quality (18). We categorized studies into 5 groups: PDMP implementation only, specific administrative features only, both PDMP implementation and specific administrative features, PDMP implementation with other opioid policies, and PDMP robustness. Studies examining only PDMP implementation treated all PDMPs as homogenous programs without considering how their administrative features have varied among states and over time. Studies investigating specific administrative features compared states with a PDMP having a specific feature (such as mandatory registration or use, frequency of reporting, or proactive reporting) with states that either had no PDMP or had a PDMP without the specific feature. Studies of PDMPs implemented with other, associated opioid policies examined the contribution of PDMP features to those policies. Finally, studies examining PDMP robustness presented quantitative ratings of PDMP features according to their potential effectiveness in reducing diversion and overdose. We also examined 3 outcomes: nonfatal overdoses, fatal overdoses, and unintended consequences.

The investigators assessed the overall strength of evidence (SOE), considering 5 domains: study limitations (determined by using ROBINS-I), directness (whether evidence linked interventions directly to a key question in the review), consistency (degree to which studies found the same direction of effect estimates), precision (degree of certainty surrounding an effect estimate), and reporting bias (selective publishing or reporting of findings on the basis of favorability of the direction or magnitude of effect estimates). On the basis of grades from the 5 specific domains, we rated the overall SOE for each intervention and outcome as insufficient, low, moderate, or high.

Role of the Funding Source

The National Institute on Drug Abuse (NIDA) and Bureau of Justice Assistance (BJA) had no role in the design and conduct of the study; collection, management, analysis, or interpretation of the data; or preparation, review, or approval of the manuscript.

Results

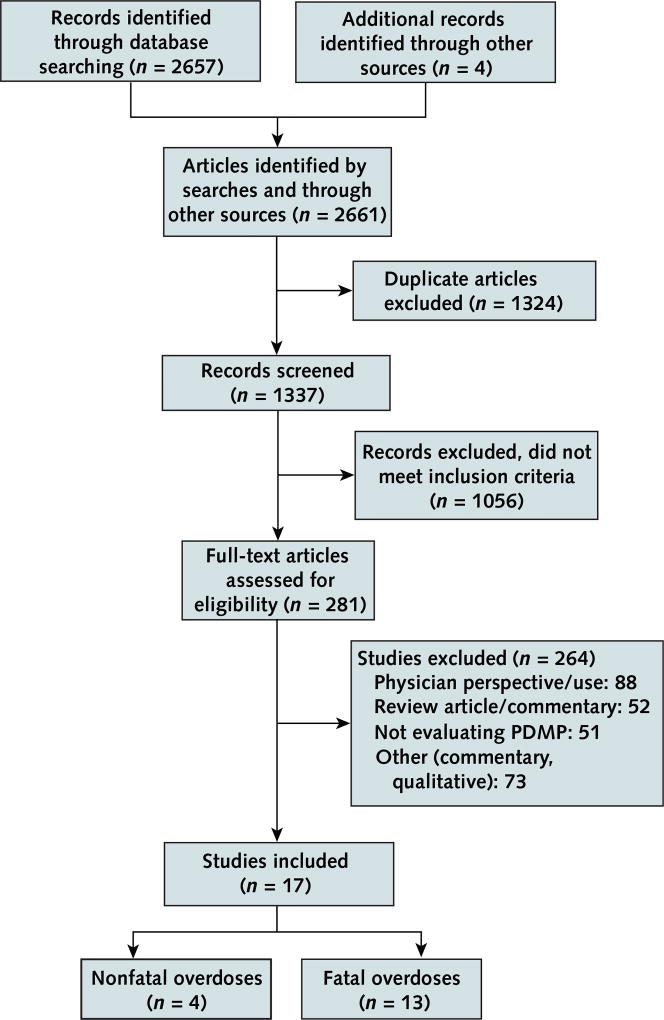

Figure 1 depicts the literature search and selection process. Seventeen articles met the inclusion criteria; 4 reported nonfatal drug overdoses, and 13 reported fatal drug overdoses. All were published between 2011 and 2018. Three were doctoral dissertations (19–21), and 14 were published in peer-reviewed journals (22–35). Of note, outcome data from 1 study were extracted from 2 publications (29, 36). Supplement 2 (available at Annals.org) presents the characteristics and Appendix Table 2 (available at Annals.org) the ROB assessments of the studies.

Figure 1. Evidence search and selection.

PDMP = prescription drug monitoring program.

The Table shows the various PDMP configurations evaluated in the 17 studies. Of these studies, 8 examined PDMP implementation in general (21, 29, 30–35), 2 looked at program features alone (23, 24), 5 analyzed both PDMP implementation and program features (19, 20, 22, 27, 28), 1 investigated PDMP implementation with mandated provider review combined with pain clinic laws (25), and 1 assessed PDMP robustness (26). The study that examined robustness generated a score of PDMP administrative strength or “robustness” by assigning weights to specific administrative features on the basis of extant evidence, or expert judgment if evidence was lacking, regarding the expected effect of the characteristic on prescribing or overdose, then summing the weights for a PDMP in a given state for a particular year (26). Among the 7 studies that examined program features, whether alone (23, 24) or in addition to PDMPs in general (19, 20, 22, 27, 28), mandatory provider use of or registration for the PDMP was the most frequently evaluated administrative feature, with 1 study examining the association with nonfatal overdoses (28), 4 studies investigating the association with fatal overdoses (20, 22, 24, 27), and 1 study looking at the association with both nonfatal and fatal overdoses (23). In addition, 2 studies examined state authorization for providers to access PDMP data (20, 22), 2 focused on proactive reporting of PDMP data to providers (19, 28), 1 looked at interstate sharing of PDMP data (19), 3 investigated the frequency of reports (19, 27, 28), 1 examined PDMP housing agency (19), and 3 analyzed the monitoring of nonscheduled drugs (19, 27, 28).

Table. SOE for PDMPs

| Outcome | Studies, n | ROB | Directness | Consistency | Precision | SOE | Publication Bias | Reference |
|---|---|---|---|---|---|---|---|---|
| PDMP implementation vs. no PDMP | | | | | | | | |
| Nonfatal overdoses | 3 | Serious | Direct | Inconsistent | Precise | Insufficient | None detected | 28, 30, 31 |
| Fatal overdoses | 10 | Moderate | Direct | Inconsistent | Imprecise* | Low | None detected | 19–22, 27, 29, 32–35 |
| Mandatory registration or use vs. no PDMP | | | | | | | | |
| Nonfatal overdoses | 2 | Serious | Direct | Inconsistent | Imprecise* | Insufficient | None detected | 23, 28 |
| Fatal overdoses | 5 | Moderate | Direct | Inconsistent | Imprecise* | Low | None detected | 20, 22–24, 27 |
| Mandatory provider use with pain clinic law vs. | | | | | | | | |
| Nonfatal overdoses | 0 | – | – | – | – | – | – | – |
| Fatal overdoses | 1 | Serious | Direct | Unknown (single study) | Imprecise* | Insufficient | None detected | 25 |
| State authorizes prescribers to access PDMP data | | | | | | | | |
| Nonfatal overdoses | 0 | – | – | – | – | – | – | – |
| Fatal overdoses | 2 | Moderate | Direct | Consistent | Precise | Low | None detected | 20, 22 |
| Frequency of reporting vs. no PDMP | | | | | | | | |
| Nonfatal overdoses | 1 | Serious | Direct | Unknown (single study) | Imprecise* | Insufficient | None detected | 28 |
| Fatal overdoses | 2 | Moderate | Direct | Inconsistent | Imprecise* | Low | None detected | 19, 27 |
| Proactive reporting vs. no PDMP | | | | | | | | |
| Nonfatal overdoses | 1 | Serious | Direct | Unknown (single study) | Imprecise* | Insufficient | None detected | 28 |
| Fatal overdoses | 1 | Moderate | Direct | Unknown (single study) | Imprecise* | Insufficient | None detected | 19 |
| Interstate sharing vs. no PDMP | | | | | | | | |
| Nonfatal overdoses | 0 | – | – | – | – | – | – | – |
| Fatal overdoses | 1 | Moderate | Direct | Unknown (single study) | Imprecise* | Insufficient | None detected | 19 |
| Housing agency vs. no PDMP | | | | | | | | |
| Nonfatal overdoses | 0 | – | – | – | – | – | – | – |
| Fatal overdoses | 1 | Moderate | Direct | Unknown (single study) | Imprecise* | Insufficient | None detected | 19 |
| Monitoring of nonscheduled drugs vs. no PDMP | | | | | | | | |
| Nonfatal overdoses | 1 | Serious | Direct | Unknown (single study) | Imprecise* | Insufficient | None detected | 28 |
| Fatal overdoses | 2 | | Direct | Consistent | Precise | Low | None detected | 19, 27 |
| PDMP robustness vs. no PDMP | | | | | | | | |
| Nonfatal overdoses | 0 | – | – | – | – | – | – | – |
| Fatal overdoses | 1 | Serious | Direct | Unknown (single study) | Unknown | Insufficient | None detected | 26 |

PDMP = prescription drug monitoring program; ROB = risk of bias; SOE = strength of evidence.

\* Imprecision based on a broad CI or a CI that crosses the decisional threshold.

Outcome data on nonfatal and fatal overdoses were obtained from both state-level and national data sets. Two studies used state-level data: 1 used information from the Florida Medical Examiners Commission regarding oxycodone-involved deaths (29); the other used data from New York health care facilities on inpatient and emergency department visits (23). National data on nonfatal overdoses came from either the Drug Abuse Warning Network (30, 31) or Truven Health MarketScan administrative claims (28), whereas information on fatal drug overdoses was obtained from the Multiple Cause of Death files produced by the National Center for Health Statistics of the Centers for Disease Control and Prevention. International Classification of Diseases, 10th Revision, codes were used to define mortality by state and year on the basis of both the manner and contributing cause of death. Manner of death included drug poisoning that was unintentional (X40 to X44), intentional (X60 to X64), or undetermined (Y10 to Y14). Contributing cause codes were used to identify whether the death was attributed to a prescription opioid analgesic (T40.2 to T40.4) or heroin (T40.1).
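As a minimal sketch of how such a case definition can be applied, the snippet below classifies a single death record using the ICD-10 code ranges listed above. The record layout (one underlying-cause code plus a list of contributing-cause codes) and all function names are assumptions made for illustration; they are not taken from the included studies or from the Multiple Cause of Death file layout.

```python
# Hypothetical helper names and record layout; only the code ranges come from the text.
MANNER_RANGES = {
    "unintentional": ("X40", "X44"),
    "intentional": ("X60", "X64"),
    "undetermined": ("Y10", "Y14"),
}
PRESCRIPTION_OPIOID_CODES = {"T40.2", "T40.3", "T40.4"}
HEROIN_CODE = "T40.1"


def manner_of_death(underlying_code):
    """Return the drug-poisoning manner for an ICD-10 underlying-cause code, or None."""
    code = underlying_code.upper().replace(".", "")[:3]
    for manner, (low, high) in MANNER_RANGES.items():
        # Three-character codes with the same letter compare correctly as strings.
        if low <= code <= high:
            return manner
    return None


def drug_categories(contributing_codes):
    """Return the set of drug categories flagged by contributing-cause codes."""
    categories = set()
    for raw in contributing_codes:
        code = raw.upper()
        if code == HEROIN_CODE:
            categories.add("heroin")
        elif code in PRESCRIPTION_OPIOID_CODES:
            categories.add("prescription opioid")
    return categories


def classify(underlying_code, contributing_codes):
    """Return (manner, categories) for a drug-poisoning death, else None."""
    manner = manner_of_death(underlying_code)
    if manner is None:
        return None
    return manner, drug_categories(contributing_codes)
```

A record can fall into both drug categories when heroin and a prescription opioid are both listed as contributing causes, which is one reason counts by drug type need not sum to the total number of overdose deaths.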

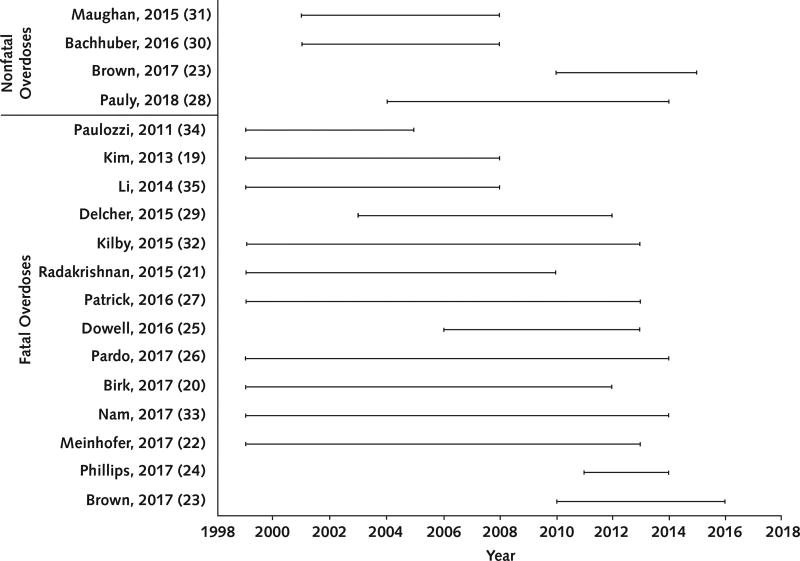

PDMP Implementation

All studies examining the association between PDMP implementation and overdose had methodological shortcomings, including inadequate adjustment for time-invariant and time-varying confounding factors and no adjustment for competing laws and policies that might affect overdoses (such as Good Samaritan laws, naloxone distribution, or medical marijuana laws). Three of the studies (all with serious ROB) estimated postimplementation changes in nonfatal overdose rates, finding mixed results (28, 30, 31). Two studies, 1 analyzing opioid-related overdoses (31) and 1 examining benzodiazepine-related cases (30), reported no change in nonfatal overdose events after PDMP implementation. Another study reported that PDMP implementation was associated with a 31% decrease (95% CI, 0.56 to 0.87) in prescription opioid–related inpatient and emergency department visits (28). Differences were found among study samples and years of data examined (Figure 2). The studies reporting a nonsignificant change in overdose events analyzed data from 11 metropolitan areas (Boston, Chicago, Denver, Detroit, Houston, Miami–Dade County, Minneapolis–St. Paul, New York City, Phoenix, San Francisco, and Seattle) from 2004 to 2011 (30, 31); the study reporting a statistically significant decrease in overdose rates used data from all 50 U.S. states from 2004 to 2014 (28).

Figure 2. Span of years analyzed in the included studies.

Ten studies (2 low, 6 moderate, and 2 serious ROB) examined the association between PDMP implementation and fatal overdoses. Three reported a decrease (27, 29, 32), 6 reported no change (19–22, 33, 34), and 1 reported an increase in overdose deaths (35). Studies that found an association between PDMP implementation and a decrease in fatal overdoses restricted their data to a subset of potential U.S. states; 1 study examined oxycodone-related events in Florida (29), and 2 studies excluded early-adopter states (that is, states that instituted a PDMP before 2003) from their analysis (27, 32). In contrast, the 6 studies that used data from all 50 U.S. states reported that PDMP implementation was not associated with a change in fatal overdoses (19–22, 33, 34). All studies that found a decrease or no change in fatal overdoses after PDMP enactment accounted for time-fixed differences between PDMP and non-PDMP states by including a state fixed effect (and did or did not account for time-varying confounders). The study that reported a postimplementation increase in fatal drug overdoses did not adequately adjust for preexisting time-fixed differences between states that did and those that did not enact PDMPs, or for time-varying differences in key factors, such as other policies that might co-occur with PDMP implementation (35).
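To illustrate why a state fixed effect matters, the following sketch compares a pooled estimate with a within-state (fixed-effect) estimate on a toy data set. All numbers, state labels, and function names are invented for illustration and do not come from any included study; the sketch also omits year effects and time-varying confounders, which the studies handled in different ways.

```python
# Synthetic, invented numbers for illustration only (not data from any included study).
TRUE_EFFECT = -0.5                # assumed change in deaths per 100 000 once a PDMP is in effect
BASELINE = {"A": 4.0, "B": 9.0}   # assumed time-fixed baseline rate in each hypothetical state
EXPOSURE = {"A": [0, 0, 0, 1], "B": [0, 1, 1, 1]}  # 1 = PDMP in effect that year

# Build (state, pdmp, rate) rows; state B has the higher baseline and adopts earlier.
rows = [
    (state, pdmp, BASELINE[state] + TRUE_EFFECT * pdmp)
    for state, years in EXPOSURE.items()
    for pdmp in years
]


def slope(xs, ys):
    """Ordinary least-squares slope of ys on xs (single predictor with intercept)."""
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    return sxy / sxx


def pooled_estimate(data):
    """Ignore state identity: compare all PDMP years with all non-PDMP years."""
    return slope([x for _, x, _ in data], [y for _, _, y in data])


def fixed_effect_estimate(data):
    """Subtract each state's own means first, which removes time-fixed state differences."""
    by_state = {}
    for state, x, y in data:
        by_state.setdefault(state, []).append((x, y))
    xs, ys = [], []
    for obs in by_state.values():
        mean_x = sum(x for x, _ in obs) / len(obs)
        mean_y = sum(y for _, y in obs) / len(obs)
        xs.extend(x - mean_x for x, _ in obs)
        ys.extend(y - mean_y for _, y in obs)
    return slope(xs, ys)


pooled = pooled_estimate(rows)
within = fixed_effect_estimate(rows)
```

In this toy data set the pooled comparison yields a positive slope even though the simulated within-state effect is negative, because the state with the higher baseline rate contributes more PDMP years. The within-state estimate recovers the simulated effect.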

Six studies (1 low, 2 moderate, and 3 serious ROB) examined the relationship between PDMP implementation and heroin-related overdose deaths (21, 22, 25, 32, 33, 36), with mixed results. Three found an association between PDMP implementation and increased rates of heroin overdose (22, 32, 36), and 3 found a statistically nonsignificant decrease in heroin overdoses (21, 25, 33).

PDMP Administrative Features

Nine studies (1 low, 3 moderate, 3 serious, and 2 critical ROB) investigated the relationship between specific PDMP administrative features and nonfatal (23, 28) or fatal (19, 20, 22–27) overdoses. The studies found reduced rates of fatal overdose in states with programs that shared data with other states (19), had mandatory provider review (20, 28), monitored noncontrolled substances (19, 27, 28), proactively reported patients' controlled substance prescription history to in-state prescribers and licensure boards (herein called proactive reporting) (28), and updated data at least weekly (27, 28).

The most frequently analyzed administrative feature—mandated provider review of PDMP data—was investigated in 6 studies (20, 22–24, 27, 28). Three of the studies (low or moderate ROB) specified a fixed effect for each state to examine the association between mandated provider review and drug overdoses after adjustment for time-invariant baseline characteristics. One of these studies found no change in the number of opioid-related deaths (27), and 2 reported an association between mandated provider review and reduced rates of fatal overdose related to prescription opioids (20, 22) and benzodiazepines (22), as well as increased rates of overdose death related to heroin and cocaine (22). The other 3 studies, which did not include state fixed effects to adjust for time-fixed sources of confounding, reported statistically significant increases in opioid-related overdoses (23, 24, 28).

One study (serious ROB) estimated the combined effect of state pain clinic laws and PDMPs that require providers to query the database (25). Using data from 38 states from 2006 to 2013, the authors found that these policies together reduced prescription opioid overdose deaths (incidence rate ratio, 0.81 [CI, 0.69 to 0.95]) (25).
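A rate ratio such as the one above can be restated as a percent change. The short sketch below does this arithmetic for the reported point estimate and interval; the function names are hypothetical and the conversion is the standard (ratio − 1) × 100, not a method described by the study authors.

```python
def percent_change(ratio):
    """Convert a rate ratio to a percent change (negative values are reductions)."""
    return (ratio - 1.0) * 100.0


def describe(ratio, lower, upper):
    """Return the point estimate and interval bounds as rounded percent changes."""
    return tuple(round(percent_change(value), 1) for value in (ratio, lower, upper))


def excludes_null(lower, upper):
    """True when the interval for a ratio does not contain 1 (no change)."""
    return not (lower <= 1.0 <= upper)


# Values reported in the text: incidence rate ratio 0.81 (CI, 0.69 to 0.95).
summary = describe(0.81, 0.69, 0.95)
```

Read this way, the estimate corresponds to a 19% reduction, with an interval running from a 31% to a 5% reduction.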

One study (serious ROB) estimated the association between PDMP robustness and prescription opioid–related deaths (26). The robustness measure of Pardo (26), grounded in an appreciation of a multifactorial characterization of PDMPs, aimed to quantify the ability of a PDMP to influence provider practices and behaviors. To create this measure, Pardo assigned different weights to administrative features that have a theoretical but subjective basis for changing provider behavior or reducing overdose deaths. For example, access to PDMPs for law enforcement and prosecutors was assigned a weight of 1, whereas requiring that providers check PDMPs before prescribing to a patient was assigned a weight of 4. Next, Pardo calculated scores by summing the total weights for each state by year, with scores ranging from 0 to 23, and found a 1.5% (CI, 0.3% to 30.0%) reduction in the opioid-related overdose death rate for each point assigned to a state's PDMP score. The author estimated that such a reduction would be associated with preventing approximately 300 deaths nationwide per year.
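The weighted-sum scoring described above can be sketched as follows. Only the two weights mentioned in the text (1 for law enforcement and prosecutor access, 4 for a required provider check) come from the source; the feature keys, the state label, and the function name are hypothetical, and the remaining features and weights in Pardo's measure are deliberately not filled in.

```python
# Only these two weights are stated in the text; the keys are hypothetical labels.
WEIGHTS = {
    "law_enforcement_access": 1,
    "mandatory_provider_check": 4,
}


def robustness_score(features, weights=WEIGHTS):
    """Sum the weights of the features a state's PDMP had in a given year."""
    present = set(features)
    unknown = present - set(weights)
    if unknown:
        raise KeyError(f"no weight defined for: {sorted(unknown)}")
    return sum(weights[name] for name in present)


# Hypothetical state-year records: features in effect for one state in two years.
state_year_features = {
    ("State A", 2010): ["law_enforcement_access"],
    ("State A", 2012): ["law_enforcement_access", "mandatory_provider_check"],
}

scores = {key: robustness_score(names) for key, names in state_year_features.items()}
```

Because the score is a simple sum, two programs with different feature sets can receive the same score, which is one way a single robustness number can hide variation in how a program is configured.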

Study Quality and SOE

The Table summarizes the overall SOE regarding PDMPs and drug overdoses. In general, the SOE was stronger for fatal than nonfatal outcomes. A common limitation across comparisons was the small number of studies. For nonfatal outcomes and specific administrative features, the paucity of studies, together with serious ROB, led to estimates that were inconsistent and imprecise, resulting in insufficient SOE. Low-grade evidence was available for the association between PDMP implementation and fatal overdoses. This judgment of low overall SOE for the relationship between PDMP implementation and fatal overdoses was based on the studies with low to moderate ROB, which were imprecise and inconsistent. In addition, low-grade evidence was available for the relationship between fatal overdoses and 4 specific administrative features: mandatory provider review, provider authorization to access PDMP data, frequency of reporting, and monitoring of nonscheduled drugs.

Discussion

Evidence that PDMP implementation either increases or decreases nonfatal or fatal overdoses is largely insufficient, as is evidence regarding any association between specific PDMP administrative features and nonfatal or fatal overdoses (Table). The only exception is low-strength evidence of a reduction in fatal overdoses after implementation of PDMPs, specifically those that have mandatory provider review, authorize providers to access PDMP data, update data frequently, and monitor nonscheduled drugs.

Seven studies with low to moderate ROB found inconsistent evidence to support an association between PDMP use and a change in fatal overdoses. The 2 studies that found a decrease in fatal overdoses after PDMP implementation (27, 32) were based on programs started after 2004, when the first Model Prescription Monitoring Program Act was published by the National Alliance for Model State Drug Laws (NAMSDL) (37). Because the NAMSDL act included language promoting the monitoring of nonscheduled drugs, proactive reporting, provider authorization to access PDMP data, and interstate sharing, it is possible that certain, more restrictive, administrative features, common in more recent PDMPs, drove the reductions in overdose deaths that were observed in those studies (27, 32). Indeed, we found low-strength evidence for a relationship between a decrease in fatal overdoses and 4 specific administrative features: mandatory provider review (20, 22), provider authorization to access data (20, 22), more frequent reporting (19, 27), and monitoring of nonscheduled drugs (19, 27). Differences in the influence of certain administrative features suggest that future studies may be more fruitful if they focus on identifying a set of PDMP “best practices” for implementing programs that confer the greatest reduction in overdoses. To identify this set of PDMP best practices, future studies will have to move beyond estimating the effect of specific administrative features or generating a subjective robustness measure. Instead, they will have to model the complex interplay among the different features that bring about the greatest reduction in overdoses, using methods such as latent class analysis or machine learning.

Implementation of PDMPs may have unintended negative outcomes—namely, increased rates of heroin-related overdose. Of the 6 studies examining the relationship between PDMP enactment and heroin-related overdoses, 3 found a statistically significant postimplementation increase in these events (22, 32, 36). Programs that have adopted best practices, such as real-time reporting and proactive provision of unsolicited patient reports to providers, may reduce the feasibility of “doctor shopping” as well as the overall supply of prescription opioids available from the illicit market. A reduction in black market prescription opioids, although generally viewed as positive, also may generate unanticipated outcomes. For example, an ethnographic study of high-risk users in Philadelphia and San Francisco found that key drivers of the progression from prescription opioid to heroin use are the rising cost of the “pill habit” and heroin's easy availability and comparatively lower cost (38). As such, changes to either the supply or cost of prescription opioids after a PDMP is instituted might reasonably drive opioid-dependent persons to substitute their preferred prescription opioid with heroin or nonpharmaceutical fentanyl. In that case, policies and laws targeting drug supplies will have to be supplemented by initiatives to better identify persons with opioid dependency and refer them to medication-assisted treatment, or by other evidence-based treatment methods, to mitigate any unintended consequences.

An English-language MEDLINE search up to January 2018 failed to reveal any systematic reviews on the association between PDMP implementation and nonfatal or fatal overdoses. However, we identified 2 narrative reviews (14, 39) that characterized the mechanisms whereby PDMPs might affect population health by influencing prescribing practices and patient behavior; both reviews found that PDMPs decrease drug diversion and doctor shopping. Although the state PDMP has become a hallmark health care technology–based intervention to address illegal opioid-prescribing behaviors and their downstream health consequences, our review highlights the dearth of evidence to inform these policies.

Our systematic review had several limitations. All the studies were observational, and many had serious or critical ROB. Studies used many different modeling strategies to account for confounding factors and often examined different years of data and different outcomes. Many did not report effect estimates with CIs or provide sufficient information to calculate a standardized effect estimate. Whether statistically nonsignificant findings were due to the absence of an association or to insufficient power often was unclear. Although publication bias and selective outcomes reporting are potential limitations, we searched for unpublished trials and outcomes at ClinicalTrials.gov and found no evidence of either of these biases. Although we required studies to be published in English because of limited resources for translation, our review of references from the identified studies did not identify any non-English publications that seemed to meet our eligibility criteria.

The limitations in the existing literature suggest a need for several areas of future study. First, more research is needed on the relationship between PDMPs and nonfatal overdoses. Only 4 of the 17 articles included in this review examined nonfatal overdoses. Expanded research into these events will provide a better understanding of how PDMPs affect a broader range of opioid-related harms. Second, research should examine the moderating influence of county-level factors, particularly the influence of area-level income. Residents of higher-income areas are more likely than those of lower-income regions to access prescription opioids through their medical providers and, in the case of prescription opioid misuse, to receive referrals to evidence-based treatment and other care (40, 41). Thus, PDMPs may affect low- and high-income populations differently, widening existing health disparities (42). Third, analysis is needed regarding how complementary drug prevention programs (such as medication-assisted therapy, naloxone distribution, and pill mill laws) interact with PDMPs to affect population health. Except for the study by Dowell and colleagues (25) that measured the combined effect of state pain clinic laws and PDMPs, extant studies have focused largely on estimating PDMPs' effect on prescribing behaviors or health outcomes while statistically adjusting for complementary drug prevention programs (such as naloxone distribution initiatives or pill mill laws), instead of investigating whether complementary programs have a synergistic (that is, more than additive) effect on overdose rates. This method of isolating the effect of PDMPs from that of other drug prevention programs probably will have limited utility for decision makers who need information about the health consequences of various options during the policy development process.

We conclude that variations in PDMP features are likely to affect outcomes differently. A PDMP's ability to influence population health probably arises from its unique set of administrative features. Future studies will have to consider this variation in features to develop a set of empirically based best practices that result in the greatest reduction in prescription opioid–related harm and mitigate any potential unintended consequences of PDMPs, such as heroin-related harms.

Acknowledgments

Grant Support: By grant R01 DA039962 from the NIDA, National Institutes of Health (NIH), and grants 2016-PM-BX-K005 and 2015-PM-BX-K001 from the BJA. Mr. Fink is supported by NIDA training grant T32DA031099. The BJA is a component of the Department of Justice's Office of Justice Programs, which includes the Bureau of Justice Statistics, National Institute of Justice, Office of Juvenile Justice and Delinquency Prevention, Office for Victims of Crime, and Office of Sex Offender Sentencing, Monitoring, Apprehending, Registering, and Tracking.

Mr. Fink and Dr. Kim report grants from the NIH during the conduct of the study. Ms. Grover is employed as a research coordinator at Duke University for an NIDA Clinical Trials Network study outside the submitted work. Dr. Delcher reports grants from the BJA (National Institute of Justice) during the conduct of the study. Dr. Martins reports grants from the NIDA during the conduct of the study.

Appendix

Appendix Table 1.

Search Strategy

| Search String | Notes |
|---|---|
| MEDLINE (via Ovid [including in-process and other nonindexed citations]) | |
| Searched on 9 November 2016 and updated on 22 December 2017 | |
| (“Humans”[MeSH]) | MeSH term for a specific search of indexed articles |
| prescription drug monitoring program*.ti,ab or prescription monitoring program*.ti,ab and prescription drug*.ti,ab or overdose.ti,ab or opioid*.ti,ab or prescription opioid*.ti,ab or heroin.ti,ab | Keyword terms for a sensitive search of nonindexed articles |
| MeSH search and Keyword search combined with AND | |
| 1946 (as far back as records go) to date of search | |
| Number of articles identified | 309 |
| MEDLINE (via Web of Science) | |
| Searched on 10 November 2016 and updated on 22 December 2017 | |
| (“Humans”[MeSH]) | MeSH term for a specific search of indexed articles |
| TS=prescription drug monitoring program* or TS=prescription monitoring program* and TS=prescription drug* or TS=overdose or TS=opioid* or TS=prescription opioid* or TS=heroin | Keyword terms for a sensitive search of nonindexed articles |
| No restriction on date range | |
| 1946 (as far back as records go) to date of search | |
| Number of articles identified | 1024 |
| Current Contents Connect | |
| Searched on 10 November 2016 and updated on 27 December 2017 | |
| (“Humans”[MeSH]) | MeSH term for a specific search of indexed articles |
| TS=prescription drug monitoring program* or TS=prescription monitoring program* and TS=prescription drug* or TS=overdose or TS=opioid* or TS=prescription opioid* or TS=heroin | Keyword terms for a sensitive search of nonindexed articles |
| MeSH search and Keyword search combined with AND | |
| No restriction on date range | |
| Number of articles identified | 577 |
| Science Citation Index and Social Sciences Citation Index (via Web of Science Core Collection) | |
| Searched on 17 November 2016 and updated on 27 December 2017 | |
| (“Humans”[MeSH]) | MeSH term for a specific search of indexed articles |
| TS=prescription drug monitoring program* or TS=prescription monitoring program* and TS=prescription drug* or TS=overdose or TS=opioid* or TS=prescription opioid* or TS=heroin | Keyword terms for a sensitive search of nonindexed articles |
| MeSH search and Keyword search combined with AND | |
| No date limits | |
| Number of articles identified | 703 |
| ProQuest Dissertations | |
| Searched on 12 December 2016 and updated on 27 December 2017 | |
| (“Humans”[MeSH]) | MeSH term for a specific search of indexed articles |
| prescription drug monitoring program*.ti,ab or prescription monitoring program*.ti,ab and prescription drug*.ti,ab or overdose.ti,ab or opioid*.ti,ab or prescription opioid*.ti,ab or heroin.ti,ab | Keyword terms for a sensitive search of nonindexed articles |
| MeSH search and Keyword search combined with AND | |
| No date limits | |
| Number of articles identified | 41 |
| ClinicalTrials.gov | |
| Searched on 27 December 2017 | |
| (prescription drug monitoring program* OR prescription monitoring program*) AND (prescription drug* OR overdose OR opioid* OR prescription opioid* OR heroin) \| Completed Studies \| Studies that accept healthy volunteers | Keyword terms for a sensitive search of nonindexed articles |
| Completed studies | |
| Number of articles identified | 3 |

MeSH = Medical Subject Heading.
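As a quick arithmetic check on Appendix Table 1, the per-source counts can be tallied. This is a minimal Python sketch; the dictionary name `hits` and its abbreviated source labels are illustrative, and the numbers are transcribed from the "Number of articles identified" rows. The raw total is a sum of database hits only, so it may differ from the number of records screened, which also reflects studies found in reference lists and any handling of duplicates.

```python
# Hits per source, transcribed from the "Number of articles identified" rows.
hits = {
    "MEDLINE (via Ovid)": 309,
    "MEDLINE (via Web of Science)": 1024,
    "Current Contents": 577,
    "Citation indexes (via Web of Science Core Collection)": 703,
    "ProQuest dissertations": 41,
    "ClinicalTrials.gov": 3,
}

total_hits = sum(hits.values())

# Print sources from largest to smallest yield, then the raw total.
for source, n in sorted(hits.items(), key=lambda item: item[1], reverse=True):
    print(f"{source}: {n}")
print(f"Total database hits: {total_hits}")
```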

Appendix Table 2.

ROB Assessment in Studies That Reported on the Association Between PDMPs and Nonfatal and Fatal Drug Overdoses

| Criteria for ROB Assessment in Studies That Reported on PDMP Effects, by Study, Year (Reference) | Maughan, 2015 (31) | Bachhuber, 2016 (30) | Brown, 2017 (23) | Pauly, 2018 (28) |
|---|---|---|---|---|
| Bias due to confounding | Serious – GEE model adjusted for calendar quarter, metropolitan area, interaction between calendar quarter and metropolitan area, area unemployment rate. Inadequate adjustment for co-implemented policies | Serious – GEE model adjusted for calendar quarter, metropolitan area, interaction between calendar quarter and metropolitan area, area unemployment rate. Inadequate adjustment for co-implemented policies | Critical – Failure to account for competing interventions that might have affected the rate of nonfatal and fatal opioid-related overdose events is likely to differentially affect the pre-intervention or post-intervention rate of events. Focus on slopes, instead of intercept, increases risk for time-varying confounding. | Serious – GEE model adjusted for time, geographic region, rate of diagnosed substance use disorder, percentage of population male, percentage aged 25–35 years, and insured population counts. Inadequate adjustment for time-invariant factors and co-implemented policies. |
| Bias in selection of participants into the study | Low – Selection of metropolitan areas based on data availability | Low – Selection of metropolitan areas based on data availability | Low – Single state examined pre-/post-intervention | Moderate – Data from Truven Health MarketScan administrative claims data. Sample is representative of the privately insured and employed U.S. population |
| Bias in classification of interventions | Low – Intervention was clearly defined. | Low – Intervention was clearly defined. | Low – Intervention was clearly defined. | Low – Data from NAMSDL and PDAPS |
| Bias due to deviations from intended interventions | Low – No deviation from the intended intervention. | Low – No deviation from the intended intervention. | Low – No deviation from the intended intervention. | Low – No deviation from the intended intervention. |
| Bias due to missing data | Low – No missing data were reported. | Low – No missing data were reported. | Low – No missing data were reported. | Low – No missing data were reported. |
| Bias in measurement of outcomes | Low – Measurement of outcome independent of policy | Low – Measurement of outcome independent of policy | Low – Measurement of outcome independent of policy | Low – Measurement of outcome independent of policy |
| Bias in selection of the reported results | Low – Expected analyses were reported. | Low – Expected analyses were reported. | Moderate – Post-implementation change in intercept was not reported. | Low – Expected analyses were reported. |
| Overall bias | Serious – Inadequate adjustment of time-invariant and time-varying factors | Serious – Inadequate adjustment of time-invariant and time-varying factors | Critical – Inadequate adjustment for key competing interventions. No alternative specifications were explored, e.g., date of legislation vs. implementation. | Serious – Inadequate adjustment of time-invariant and time-varying factors; sample unlikely to represent U.S. population. |

| Criteria for ROB Assessment, by Study, Year (Reference) | Paulozzi, 2011 (34) | Kim, 2013 (19) | Li, 2014 (35) | Delcher, 2015 (29) and Delcher, 2016 (36) |
|---|---|---|---|---|
| Bias due to confounding | Moderate – State fixed-effects adjust for time-invariant differences and adjustment for time, state, and spatial autocorrelation; however, inadequate adjustment for co-implemented policies | Moderate – State fixed-effects adjust for time-invariant differences, adjustment for demographic, socioeconomic status, health/health care, and gun control laws; however, limited adjustment for time-varying factors | Critical – GEE model adjusted for calendar quarter, demographic characteristics (percentage male, percentage aged 35–54 years, percentage white), geographic region, unemployment rate. Inadequate adjustment for time-invariant state differences and co-implemented policies | Serious – Misspecification of ARIMA model poses serious risk; however, numerous sensitivity analyses were performed; minimal adjustment for co-implemented policies except pill mill laws. |
| Bias in selection of participants into the study | Low – Selection of 50 U.S. states and D.C. | Low – Selection of 50 U.S. states and D.C. | Low – Selection of 50 U.S. states and D.C. | Moderate – Study limited to state of Florida, unlikely to represent U.S. effect |
| Bias in classification of interventions | Low – Intervention determined from IJIS Institute. | Low – Intervention determined from IJIS Institute and NAMSDL | Low – Intervention from DEA | Low – Implementation date ascertained from state |
| Bias due to deviations from intended interventions | Low – No deviation from the intended intervention | Low – No deviation from the intended intervention | Low – No deviation from the intended intervention | Low – No deviation from the intended intervention |
| Bias due to missing data | Low – No missing data were reported. | Low – No missing data were reported. | Low – No missing data were reported. | Low – Minimal missing data |
| Bias in measurement of outcomes | Low – Measurement of outcome independent of policy | Low – Measurement of outcome independent of policy | Low – Measurement of outcome independent of policy | Low – Measurement of outcome independent of policy |
| Bias in selection of the reported results | Low – Expected analyses were reported. | Low – Expected analyses were reported. | Low – Expected analyses were reported. | Low – Expected analyses were reported. |
| Overall bias | Moderate – Inadequate adjustment of time-varying factors, but assessment of spatial autocorrelation | Moderate – Inadequate adjustment of time-varying factors | Serious – Inadequate adjustment of time-invariant and time-varying factors | Serious – Misspecification of ARIMA model will produce biased estimates; sensitivity analyses reduce some ROB. |

| Criteria for ROB Assessment, by Study, Year (Reference) | Radakrishnan, 2015 (21) | Kilby, 2015 (32) | Patrick, 2016 (27) | Birk, 2017 (20) |
|---|---|---|---|---|
| Bias due to confounding | Low – State and year fixed-effects adjust for time-invariant differences; robust adjustment for sociodemographics and adjustment for co-implemented policies | Moderate – State and fixed-effects adjust for time-invariant differences, adjustment for unemployment rate and population over 60 years; limited adjustment for time-varying factors | Moderate – State fixed-effects adjust for time-invariant differences, adjusted for unemployment, education attainment rate; extensive sensitivity analyses | Low – State fixed-effects adjust for time-invariant differences; adjustment for pill mill laws, Good Samaritan laws, naloxone distribution, and medical marijuana legalization |
| Bias in selection of participants into the study | Low – Selection of 50 U.S. states and D.C. | Moderate – 12 states that adopted PDMPs before 2003 were excluded. | Low – 15 states that did not implement a law during study period were excluded. | Low – Selection of 50 U.S. states and D.C. |
| Bias in classification of interventions | Low – Intervention determined from NAMSDL | Low – Intervention determined from NAMSDL | Low – Intervention from Law Atlas and NAMSDL | Low – Intervention determined from The Network of Public Health Laws and the PDMP Center for Excellence |
| Bias due to deviations from intended interventions | Low – No deviation from the intended intervention | Low – No deviation from the intended intervention | Low – No deviation from the intended intervention | Low – No deviation from the intended intervention |
| Bias due to missing data | Low – No missing data were reported. | Moderate – 12 states that adopted PDMP before 2003 | Moderate – Excluded Florida and West Virginia due to influence of co-implemented laws; some suppressed data, multiple imputation | Low – No missing data were reported. |
| Bias in measurement of outcomes | Low – Measurement of outcome independent of policy | Low – Measurement of outcome independent of policy | Low – Measurement of outcome independent of policy | Low – Measurement of outcome independent of policy |
| Bias in selection of the reported results | Low – Expected analyses were reported. | Low – Expected analyses were reported. | Low – Expected analyses were reported. | Low – Expected analyses were reported. |
| Overall bias | Low – Adequate adjustment for time-invariant factors (fixed effects) and robust control for co-implemented policies | Moderate – Inadequate adjustment of time-varying factors; exclusion of 12 early-adopter states | Moderate – Inadequate adjustment of time-varying factors; exclusion of states with time-varying confounding instead of adjustment | Low – Robust adjustment of confounding and sensitivity analyses |

| Criteria for ROB Assessment, by Study, Year (Reference) | Nam, 2017 (33) | Meinhofer, 2017 (22) | Dowell, 2016 (25) | Pardo, 2017 (26) |
|---|---|---|---|---|
| Bias due to confounding | Moderate – State and year fixed-effects adjust for time-invariant differences and secular trends; adjustment for sociodemographics and health care access; however, inadequate adjustment for co-implemented policies | Moderate – State and year fixed-effects adjust for time-invariant differences and secular trends; however, adjustment for co-implemented policies | Moderate – State and year fixed-effects adjust for time-invariant differences and secular trends, adjustment for opioid prescribing rate, pending death rates; however, inadequate adjustment for co-implemented policies | Low – State and year fixed-effects adjust for time-invariant differences and secular trends; adjustment for pill mill laws, Good Samaritan laws, naloxone distribution, and medical marijuana legalization |
| Bias in selection of participants into the study | Moderate – 15 states that adopted PDMPs before 2000 were excluded. | Low – Selection of 50 U.S. states and D.C. | Low – 12 states were excluded for low outcome counts and complex policy situations. | Low – Selection of 50 U.S. states and D.C. |
| Bias in classification of interventions | Moderate – No information on how date of intervention was determined | Low – Intervention determined from NAMSDL, TTAC, and ONCHIT | Low – Intervention from WestLaw, TTAC, and NAMSDL | Critical – The continuous score that was the primary independent variable of interest was determined on the basis of factors previously found to reduce the outcome. |
| Bias due to deviations from intended interventions | Low – No deviation from the intended intervention | Low – No deviation from the intended intervention | Low – No deviation from the intended intervention | Low – No deviation from the intended intervention |
| Bias due to missing data | Moderate – Some data suppressed due to small counts; 15 states that adopted PDMPs before 2000 were excluded. | Moderate – States with zero counts were imputed as 1 death. | Moderate – 12 states with low number of deaths were excluded. | Low – No missing data were reported. |
| Bias in measurement of outcomes | Low – Measurement of outcome independent of policy | Low – Measurement of outcome independent of policy | Low – Measurement of outcome independent of policy | Low – Measurement of outcome independent of policy |
| Bias in selection of the reported results | Low – Expected analyses were reported. | Low – Expected analyses were reported. | Low – Expected analyses were reported. | Serious – The use of a subjective exposure increases the likelihood that many analyses were conducted to determine the best model; however, no information on such analyses was reported. |
| Overall bias | Moderate – Inadequate adjustment for time-varying factors, exclusion of states that adopted PDMP before 2000 | Moderate – Inadequate adjustment of time-varying factors; unexpected handling of missing data | Serious – Inadequate adjustment of time-varying factors; unexpected handling of missing data | Serious – Subjective measure of intervention; inadequate information on multiple tests; robust adjustment of confounding |

| Criteria for ROB Assessment, by Study, Year (Reference) | Phillips, 2017 (24) |
|---|---|
| Bias due to confounding | Critical – Random state and year variables fail to account for time-invariant differences among states or secular trends; adjustment for education, unemployment, percentage of population on disability, and medical marijuana laws; however, no adjustment for other time-varying policies |
| Bias in selection of participants into the study | Low – Selection of 50 U.S. states and D.C. |
| Bias in classification of interventions | Low – Intervention determined from NAMSDL |
| Bias due to deviations from intended interventions | Low – No deviation from the intended intervention |
| Bias due to missing data | Low – No missing data were reported. |
| Bias in measurement of outcomes | Low – Measurement of outcome independent of policy |
| Bias in selection of the reported results | Low – Expected analyses were reported. |
| Overall bias | Critical – Inadequate adjustment of time-invariant and time-varying factors |

ARIMA = autoregressive integrated moving average; DEA = U.S. Drug Enforcement Administration; GEE = generalized estimating equation; IJIS = Integrated Justice Information Systems; NAMSDL = National Alliance for Model State Drug Laws; ONCHIT = Office of the National Coordinator for Health Information Technology; PDAPS = Prescription Drug Abuse Policy System; PDMP = prescription drug monitoring program; ROB = risk of bias; TTAC = Brandeis University's PDMP Training and Technical Assistance Center.
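The "Overall bias" rows of Appendix Table 2 can be summarized by counting how many table columns fall into each rating. The following is a minimal Python sketch; the variable names are illustrative, the ratings are transcribed from the table, and the two Delcher articles are counted once because they share a single column.

```python
from collections import Counter

# Overall ROB rating per table column, transcribed from Appendix Table 2.
overall_rob = {
    "Maughan, 2015": "Serious",
    "Bachhuber, 2016": "Serious",
    "Brown, 2017": "Critical",
    "Pauly, 2018": "Serious",
    "Paulozzi, 2011": "Moderate",
    "Kim, 2013": "Moderate",
    "Li, 2014": "Serious",
    "Delcher, 2015 and 2016": "Serious",
    "Radakrishnan, 2015": "Low",
    "Kilby, 2015": "Moderate",
    "Patrick, 2016": "Moderate",
    "Birk, 2017": "Low",
    "Nam, 2017": "Moderate",
    "Meinhofer, 2017": "Moderate",
    "Dowell, 2016": "Serious",
    "Pardo, 2017": "Serious",
    "Phillips, 2017": "Critical",
}

counts = Counter(overall_rob.values())

# Report ratings from least to most severe.
for level in ["Low", "Moderate", "Serious", "Critical"]:
    print(f"{level}: {counts[level]}")
```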

Footnotes

Note: Mr. Fink had full access to all the data in the study and takes responsibility for the integrity of the data and the accuracy of the data analysis.

Disclaimer: Viewpoints or opinions in this document are those of the authors and do not necessarily represent the official position or policies of the U.S. Department of Justice.

Disclosures: Authors not named here have disclosed no conflicts of interest. Disclosures can also be viewed at www.acponline.org/authors/icmje/ConflictOfInterestForms.do?msNum=M17-3074.

Reproducible Research Statement: Study protocol: See Supplement 1. Statistical code and data set: Not available.

Current author addresses and author contributions are available at Annals.org.

Author Contributions: Conception and design: D.S. Fink, S.S. Martins, M. Cerdá.

Analysis and interpretation of the data: D.S. Fink, J.P. Schleimer, C. Delcher, A. Castillo-Carniglia, S.G. Henry.

Drafting of the article: D.S. Fink, J.P. Schleimer, A. Sarvet, C. Delcher.

Critical revision for important intellectual content: D.S. Fink, J.P. Schleimer, A. Sarvet, C. Delcher, A. Castillo-Carniglia, J.H. Kim, A.E. Rivera-Aguirre, S.G. Henry, S.S. Martins, M. Cerdá.

Final approval of the article: D.S. Fink, J.P. Schleimer, A. Sarvet, K.K. Grover, C. Delcher, A. Castillo-Carniglia, J.H. Kim, A.E. Rivera-Aguirre, S.G. Henry, S.S. Martins, M. Cerdá.

Provision of study materials or patients: D.S. Fink.

Statistical expertise: D.S. Fink, J.P. Schleimer.

Obtaining of funding: S.S. Martins, M. Cerdá.

Administrative, technical, or logistic support: D.S. Fink.

Collection and assembly of data: D.S. Fink, J.P. Schleimer, K.K. Grover.

References

- 1. Guy GP, Jr, Zhang K, Bohm MK, et al. Vital signs: changes in opioid prescribing in the United States, 2006–2015. MMWR Morb Mortal Wkly Rep. 2017;66:697–704. doi: 10.15585/mmwr.mm6626a4. [PMID: 28683056]

- 2. Han B, Compton WM, Jones CM, Cai R. Nonmedical prescription opioid use and use disorders among adults aged 18 through 64 years in the United States, 2003–2013. JAMA. 2015;314:1468–78. doi: 10.1001/jama.2015.11859. [PMID: 26461997]

- 3. McCabe SE, West BT, Veliz P, McCabe VV, Stoddard SA, Boyd CJ. Trends in medical and nonmedical use of prescription opioids among US adolescents: 1976–2015. Pediatrics. 2017;139. doi: 10.1542/peds.2016-2387. [PMID: 28320868]

- 4. Patrick SW, Schumacher RE, Benneyworth BD, Krans EE, McAllister JM, Davis MM. Neonatal abstinence syndrome and associated health care expenditures: United States, 2000–2009. JAMA. 2012;307:1934–40. doi: 10.1001/jama.2012.3951. [PMID: 22546608]

- 5. Rudd RA, Aleshire N, Zibbell JE, Gladden RM. Increases in drug and opioid overdose deaths—United States, 2000–2014. MMWR Morb Mortal Wkly Rep. 2016;64:1378–82. doi: 10.15585/mmwr.mm6450a3. [PMID: 26720857]

- 6. Hedegaard H, Warner M, Minino AM. NCHS Data Brief no. 273. Hyattsville, MD: National Center for Health Statistics; 2017. Drug Overdose Deaths in the United States, 1999–2015.

- 7. Hedegaard H, Warner M, Minino AM. NCHS Data Brief no. 294. Hyattsville, MD: National Center for Health Statistics; 2017. Drug Overdose Deaths in the United States, 1999–2016.

- 8. National Center for Injury Prevention and Control. From Epi to Policy: Prescription Drug Overdose. State Health Department Training and Technical Assistance Meeting. Atlanta: Centers for Disease Control and Prevention; 2013.

- 9. Office of National Drug Control Policy; U.S. Executive Office of the President. Epidemic: Responding to America's Prescription Drug Abuse Crisis. Washington, DC: Office of National Drug Control Policy; 2011.

- 10. Reisman RM, Shenoy PJ, Atherly AJ, Flowers CR. Prescription opioid usage and abuse relationships: an evaluation of state prescription drug monitoring program efficacy. Subst Abuse. 2009;3:41–51. doi: 10.4137/sart.s2345. [PMID: 24357929]

- 11. Surratt HL, O’Grady C, Kurtz SP, et al. Reductions in prescription opioid diversion following recent legislative interventions in Florida. Pharmacoepidemiol Drug Saf. 2014;23:314–20. doi: 10.1002/pds.3553. [PMID: 24677496]

- 12. Reifler LM, Droz D, Bailey JE, et al. Do prescription monitoring programs impact state trends in opioid abuse/misuse? Pain Med. 2012;13:434–42. doi: 10.1111/j.1526-4637.2012.01327.x. [PMID: 22299725]

- 13. Islam MM, McRae IS. An inevitable wave of prescription drug monitoring programs in the context of prescription opioids: pros, cons and tensions [Editorial]. BMC Pharmacol Toxicol. 2014;15:46. doi: 10.1186/2050-6511-15-46. [PMID: 25127880]

- 14. Finley EP, Garcia A, Rosen K, McGeary D, Pugh MJ, Potter JS. Evaluating the impact of prescription drug monitoring program implementation: a scoping review. BMC Health Serv Res. 2017;17:420. doi: 10.1186/s12913-017-2354-5. [PMID: 28633638]

- 15. Moher D, Liberati A, Tetzlaff J, Altman DG; PRISMA Group. Preferred reporting items for systematic reviews and meta-analyses: the PRISMA statement. Ann Intern Med. 2009;151:264–9. doi: 10.7326/0003-4819-151-4-200908180-00135. [PMID: 19622511]

- 16. Kaucher S, Leier V, Deckert A, et al. Time trends of cause-specific mortality among resettlers in Germany, 1990 through 2009. Eur J Epidemiol. 2017;32:289–98. doi: 10.1007/s10654-017-0240-4. [PMID: 28314982]

- 17. Sterne JA, Hernán MA, Reeves BC, et al. ROBINS-I: a tool for assessing risk of bias in non-randomised studies of interventions. BMJ. 2016;355:i4919. doi: 10.1136/bmj.i4919. [PMID: 27733354]

- 18. Berkman ND, Lohr KN, Ansari M, et al. Methods Guide for Effectiveness and Comparative Effectiveness Reviews. Rockville: Agency for Healthcare Research and Quality; 2008. Grading the Strength of a Body of Evidence When Assessing Health Care Interventions for the Effective Health Care Program of the Agency for Healthcare Research and Quality: An Update.

- 19. Kim M. The Impact of Prescription Drug Monitoring Programs on Opioid-Related Poisoning Deaths. Baltimore: Johns Hopkins Univ Pr; 2013.

- 20. Birk EG. Prescription Drug Monitoring Programs and the Abuse of Prescription Drugs. Eugene, OR: Univ Oregon; 2017.

- 21. Radakrishnan S. Essays in the Economics of Risky Health Behaviors. Ithaca, NY: Cornell University; 2015.

- 22. Meinhofer A. Prescription drug monitoring programs: the role of asymmetric information on drug availability and abuse. Am J Health Econ. 2017 [Forthcoming].

- 23. Brown R, Riley MR, Ulrich L, et al. Impact of New York prescription drug monitoring program, I-STOP, on statewide overdose morbidity. Drug Alcohol Depend. 2017;178:348–54. doi: 10.1016/j.drugalcdep.2017.05.023. [PMID: 28692945]

- 24. Phillips E, Gazmararian J. Implications of prescription drug monitoring and medical cannabis legislation on opioid overdose mortality. J Opioid Manag. 2017;13:229–39. doi: 10.5055/jom.2017.0391. [PMID: 28953315]

- 25. Dowell D, Zhang K, Noonan RK, Hockenberry JM. Mandatory provider review and pain clinic laws reduce the amounts of opioids prescribed and overdose death rates. Health Aff (Millwood). 2016;35:1876–83. doi: 10.1377/hlthaff.2016.0448. [PMID: 27702962]

- 26. Pardo B. Do more robust prescription drug monitoring programs reduce prescription opioid overdose? Addiction. 2017;112:1773–83. doi: 10.1111/add.13741. [PMID: 28009931]

- 27. Patrick SW, Fry CE, Jones TF, Buntin MB. Implementation of prescription drug monitoring programs associated with reductions in opioid-related death rates. Health Aff (Millwood). 2016;35:1324–32. doi: 10.1377/hlthaff.2015.1496. [PMID: 27335101]

- 28. Pauly NJ, Slavova S, Delcher C, Freeman PR, Talbert J. Features of prescription drug monitoring programs associated with reduced rates of prescription opioid-related poisonings. Drug Alcohol Depend. 2018;184:26–32. doi: 10.1016/j.drugalcdep.2017.12.002. [PMID: 29402676]

- 29. Delcher C, Wagenaar AC, Goldberger BA, Cook RL, Maldonado-Molina MM. Abrupt decline in oxycodone-caused mortality after implementation of Florida's Prescription Drug Monitoring Program. Drug Alcohol Depend. 2015;150:63–8. doi: 10.1016/j.drugalcdep.2015.02.010. [PMID: 25746236]

- 30. Bachhuber MA, Maughan BC, Mitra N, Feingold J, Starrels JL. Prescription monitoring programs and emergency department visits involving benzodiazepine misuse: early evidence from 11 United States metropolitan areas. Int J Drug Policy. 2016;28:120–3. doi: 10.1016/j.drugpo.2015.08.005. [PMID: 26345658]

- 31. Maughan BC, Bachhuber MA, Mitra N, Starrels JL. Prescription monitoring programs and emergency department visits involving opioids, 2004–2011. Drug Alcohol Depend. 2015;156:282–8. doi: 10.1016/j.drugalcdep.2015.09.024. [PMID: 26454836]

- 32. Kilby AA. Opioids for the Masses: Welfare Tradeoffs in the Regulation of Narcotic Pain Medications. Cambridge, MA: Massachusetts Institute of Technology; 2015.

- 33. Nam YH, Shea DG, Shi Y, Moran JR. State prescription drug monitoring programs and fatal drug overdoses. Am J Manag Care. 2017;23:297–303. [PMID: 28738683]

- 34. Paulozzi LJ, Kilbourne EM, Desai HA. Prescription drug monitoring programs and death rates from drug overdose. Pain Med. 2011;12:747–54. doi: 10.1111/j.1526-4637.2011.01062.x. [PMID: 21332934]

- 35. Li G, Brady JE, Lang BH, Giglio J, Wunsch H, DiMaggio C. Prescription drug monitoring and drug overdose mortality. Inj Epidemiol. 2014;1:1–8. doi: 10.1186/2197-1714-1-9.

- 36. Delcher C, Wang Y, Wagenaar AC, Goldberger BA, Cook RL, Maldonado-Molina MM. Prescription and illicit opioid deaths and the prescription drug monitoring program in Florida [Letter]. Am J Public Health. 2016;106:e10–1. doi: 10.2105/AJPH.2016.303104. [PMID: 27153025]

- 37. National Alliance for Model State Drug Laws. Components of a strong prescription monitoring/status program. 2004. Accessed at www.namsdl.org/library/2B94937F-1372-636C-DD57FE97A1B0345C on 2 January 2018.

- 38. Mars SG, Bourgois P, Karandinos G, Montero F, Ciccarone D. “Every ‘never’ I ever said came true”: transitions from opioid pills to heroin injecting. Int J Drug Policy. 2014;25:257–66. doi: 10.1016/j.drugpo.2013.10.004. [PMID: 24238956]

- 39. Worley J. Prescription drug monitoring programs, a response to doctor shopping: purpose, effectiveness, and directions for future research. Issues Ment Health Nurs. 2012;33:319–28. doi: 10.3109/01612840.2011.654046. [PMID: 22545639]

- 40. Clark JD. Chronic pain prevalence and analgesic prescribing in a general medical population. J Pain Symptom Manage. 2002;23:131–7. doi: 10.1016/s0885-3924(01)00396-7. [PMID: 11844633]

- 41. Joynt M, Train MK, Robbins BW, Halterman JS, Caiola E, Fortuna RJ. The impact of neighborhood socioeconomic status and race on the prescribing of opioids in emergency departments throughout the United States. J Gen Intern Med. 2013;28:1604–10. doi: 10.1007/s11606-013-2516-z. [PMID: 23797920]

- 42. King NB, Fraser V, Boikos C, Richardson R, Harper S. Determinants of increased opioid-related mortality in the United States and Canada, 1990–2013: a systematic review. Am J Public Health. 2014;104:e32–42. doi: 10.2105/AJPH.2014.301966. [PMID: 24922138]
