Abstract

Evaluation of resident operative skills is challenging in the fast-paced operating room environment and is limited by a lack of validated assessment metrics. We describe a smartphone-based app that enables rapid assessment of operative skills. Accreditation Council for Graduate Medical Education (ACGME) otolaryngology taxonomy surgical procedures (n = 593) were uploaded to the software platform. The app was piloted over 1 month. Outcomes included (1) completion of evaluation, (2) time spent completing the evaluation, and (3) quantification of case complexity, operative autonomy, and performance. During the study, 12 of 12 procedures, corresponding to 3 paired evaluations, were evaluated by the resident/attending dyad. Mean ± SD time of evaluation completion was 98.0 ± 24.2 and 123.0 ± 14.0 seconds for the resident and attending, respectively. Mean time between resident and attending evaluation completion was 27.9 ± 26.8 seconds. Resident and attending scores for case complexity, operative autonomy, and performance were strongly correlated (P < .0001). Rapid evaluation of resident intraoperative performance is feasible using smartphone-based technology.

Keywords: otolaryngology residency, education, mobile, surgical education

Residency is a critical period for the acquisition of surgical skills. Surgical education has changed from the Halstedian model of “See One, Do One, Teach One” to a competency-based, graduated system of training and evaluation.1,2 The Accreditation Council for Graduate Medical Education (ACGME) mandates that otolaryngology residents “perform a sufficient number, variety and complexity of surgical procedures to ensure education in the entire scope of the specialty.”3 To achieve ACGME objectives, the Residency Case Log Database is organized into Key Indicator cases.4 In addition, the ACGME Milestones Project (Milestones) is designed to assess resident progression toward competency in otolaryngology-specific areas of patient care, including the acquisition of surgical skills.5

There is a growing need for objective assessment of otolaryngology trainee operative performance.2 Notably, Milestones emphasizes competency-based educational innovation and invites educators to develop new ways to ensure appropriate resident education. To this end, we customized and piloted a novel smartphone-based evaluation tool called System for Improving and Measuring Procedural Learning (SIMPL).6 In this study, we used SIMPL to collect otolaryngology resident operative performance data. We hypothesized that the otolaryngology case taxonomy can be readily adapted for use in the SIMPL app, which may enable rapid assessment of resident operative performance.

Method

SIMPL App

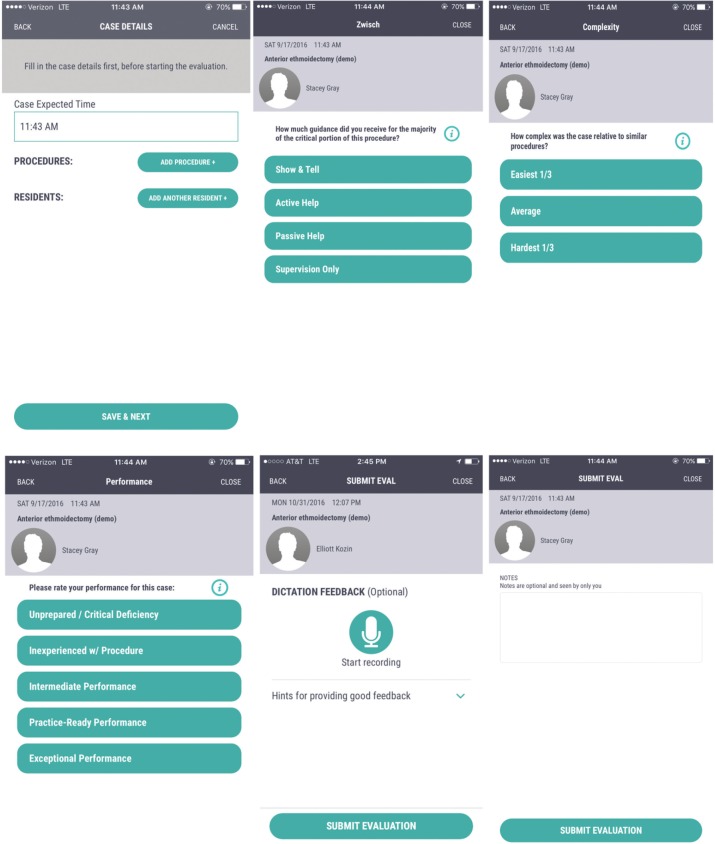

The SIMPL app is a smartphone-based surgical assessment tool that was developed by the Procedural Learning and Safety Collaborative (PLSC), a multi-institutional nonprofit research consortium. Information about SIMPL and PLSC can be found at http://www.procedurallearning.org. Each SIMPL evaluation consists of 3 questions. The first question uses the validated 4-level “Zwisch scale” to assess resident surgical autonomy5,7 (Table 1). The Zwisch scale is a 1-dimensional behavioral ordinal scale that is used by raters to grade the degree of guidance the attending surgeon provides to a trainee during the critical portions of a procedure.5 The scale ranges from the attending performing the critical portion to the resident performing the critical portion (even while the attending is present to ensure patient safety).7 The second question assesses intraoperative performance on a 5-level scale8 (Figure 1). The final question assesses the complexity of the procedure on a 3-level scale (Figure 1). The resident initiates the evaluation and then completes the 3-question evaluation. The faculty member is notified of the pending evaluation and completes an identical assessment (Figure 1).
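As an illustration only, the 3-question evaluation described above could be represented as a small record type. This is a minimal sketch and not the SIMPL app's actual data model: the class and field names (`Zwisch`, `Evaluation`, `is_paired`) are hypothetical, and the performance and complexity questions are stored as plain integers because their level labels are not listed in the text. The four Zwisch stage names are taken from Table 1.

```python
from dataclasses import dataclass
from enum import IntEnum


class Zwisch(IntEnum):
    """Four-level Zwisch autonomy scale; stage names follow Table 1."""
    SHOW_AND_TELL = 1
    ACTIVE_HELP = 2
    PASSIVE_HELP = 3
    SUPERVISION_ONLY = 4


@dataclass(frozen=True)
class Evaluation:
    """One rater's answers to the three questions (hypothetical structure)."""
    rater: str         # "resident" or "attending"
    autonomy: Zwisch   # question 1: 4-level Zwisch scale
    performance: int   # question 2: 5-level scale, stored as 1..5
    complexity: int    # question 3: 3-level scale, stored as 1..3

    def __post_init__(self):
        # Reject values that fall outside the scales described in the text.
        if self.rater not in ("resident", "attending"):
            raise ValueError("rater must be 'resident' or 'attending'")
        Zwisch(self.autonomy)  # raises ValueError if not a valid stage
        if not 1 <= self.performance <= 5:
            raise ValueError("performance must be between 1 and 5")
        if not 1 <= self.complexity <= 3:
            raise ValueError("complexity must be between 1 and 3")


def is_paired(a: Evaluation, b: Evaluation) -> bool:
    """True when one evaluation is the resident's and the other the attending's."""
    return {a.rater, b.rater} == {"resident", "attending"}
```

Storing the ordinal answers as integers keeps the resident and attending responses directly comparable when the paired evaluations are later analyzed.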

Table 1.

Zwisch Rating Scale of Resident Autonomy.a

| Zwisch Stage of Supervision | Attending Behaviors | Resident Behaviors Commensurate with This Level of Supervision |
|---|---|---|
| Show and Tell | • Does majority of key portions as the surgeon • Narrates the case • Demonstrates key concepts, anatomy, and skills | • Opens and closes • First assists and observes |
| Active Help | • Leads the resident (active assist) for >50% of the critical portion • Identifies key anatomy • Optimizes the surgical field and demonstrates plane • Coaches technical skills and next steps | • Actively assists • Practices component technical skills |
| Passive Help | • Follows the lead of the resident (passive assist) for >50% of the critical portion • Acts as a first assistant • Coaches for polish, refinement of skills, and safety | • Can accomplish next steps • Recognizes critical transition points |
| Supervision Only | • Provides no unsolicited advice for >50% of the critical portion • Monitors progress and patient safety | • Mimics independence • Can safely complete case without faculty guidance • Recovers from most errors • Recognizes when to seek advice/help |

Reproduced from Journal of Surgical Education, Vol 70, Debra A. DaRosa, Joseph B. Zwischenberger, Shari L. Meyerson, Brian C. George, Ezra N. Teitelbaum, Nathaniel J. Soper, Jonathan P. Fryer, A Theory-Based Model for Teaching and Assessing Residents in the Operating Room, pp 24-30, Copyright 2013, with permission from Elsevier.5

Reproduced with permission from Edward Kobraei, Jordan Bohnen, Brian George, et al. Uniting Evidence-Based Evaluation with the ACGME Plastic Surgery Milestones: A Simple and Reliable Assessment of Resident Operative Performance. Plastic and Reconstructive Surgery. 2016;138(2):349e-57e. http://journals.lww.com/plasreconsurg.6

Figure 1.

Demonstration of System for Improving and Measuring Procedural Learning (SIMPL) app evaluation. Top right describes the validated “Zwisch” scale. Bottom left and middle screens ask questions related to case complexity and preparation.

Otolaryngology Case Taxonomy

The ACGME-codified list of otolaryngology procedures, including Key Indicators, was entered into the SIMPL taxonomy database and integrated with otolaryngology-specific search terms.

Study Design and Analysis

The SIMPL evaluation application was prospectively piloted for use with a single otolaryngology resident and faculty surgeon over a 1-month period. The faculty surgeon and resident completed a training session to calibrate to the evaluation scales and ensure reliability of ratings by individuals.9 The resident and faculty member were blinded to results.

Outcomes included rate of completion of evaluation, time spent completing the evaluation, and quantification of case complexity, operative autonomy, and performance. Descriptive statistics and Spearman correlation coefficients were calculated. The study was deemed exempt by the Massachusetts Eye and Ear Infirmary Institutional Review Board.
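The descriptive statistics and Spearman correlation named above can be sketched in a few lines of standard-library Python. This is an illustrative sketch rather than the analysis code used in the study: the function names are hypothetical, and the numbers in the usage note are made-up placeholders, not study data. Spearman's coefficient is computed here as the Pearson correlation of the ranks, with tied values assigned their average rank.

```python
from statistics import mean, stdev


def mean_sd(values):
    """Return (mean, sample standard deviation) for a list of numbers."""
    return mean(values), stdev(values)


def average_ranks(values):
    """Rank values from 1..n, giving tied values the average of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j across a run of tied values.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1  # average of the 1-based positions i+1..j+1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def spearman(x, y):
    """Spearman rank correlation: Pearson correlation of the ranked data."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("x and y must have the same length (at least 2)")
    rx, ry = average_ranks(x), average_ranks(y)
    mx, my = mean(rx), mean(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    vx = sum((a - mx) ** 2 for a in rx)
    vy = sum((b - my) ** 2 for b in ry)
    if vx == 0 or vy == 0:
        raise ValueError("correlation is undefined when one input is constant")
    return cov / (vx * vy) ** 0.5
```

For example, with placeholder ratings, `spearman([1, 2, 3], [2, 4, 9])` returns `1.0` because the two lists are ordered identically, and `mean_sd([2, 4, 6])` returns a mean of 4 and a standard deviation of 2.0. Note that the coefficient is undefined when either rater gives the same score for every case, which the sketch reports as an error rather than returning a value.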

Results

The SIMPL app was modified by developers to incorporate the customized otolaryngology case taxonomy. ACGME’s otolaryngology taxonomy (n = 593) and Current Procedural Terminology (CPT) codes were uploaded to the SIMPL platform.

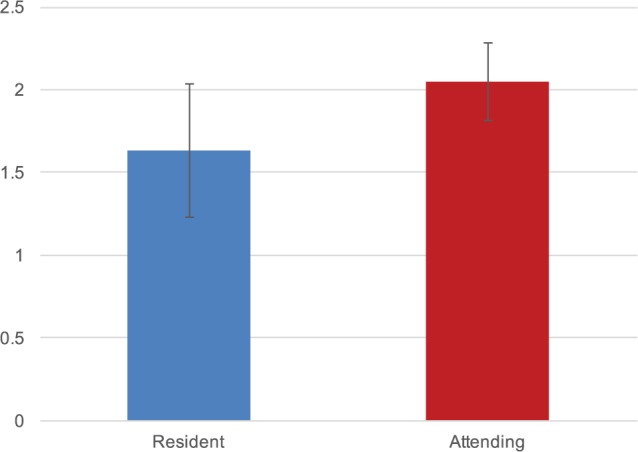

After an initial training session, 12 of 12 (100%) endoscopic sinus procedures were evaluated over the 1-month study period, corresponding to 3 completed paired evaluations by the resident and attending. Each evaluation assessed 4 distinct sinus procedures. Mean ± SD time to complete each evaluation was 98.0 ± 24.2 and 123.0 ± 14.0 seconds for the resident and attending, respectively (Figure 2). Mean time between resident and attending evaluation completion was 27.9 ± 26.8 seconds.

Figure 2.

Time to complete evaluations on the System for Improving and Measuring Procedural Learning (SIMPL) app (n = 12 procedures and 3 paired evaluations).

Resident and faculty evaluations of case complexity, operative autonomy, and performance demonstrated a strong correlation (correlation coefficient = 1.0, P < .0001).

Discussion

In this pilot study, results suggest that rapid evaluation of resident intraoperative performance is feasible using smartphone-based technology. All evaluations were initiated prospectively and successfully completed. Mean time to complete each evaluation was less than 3 minutes for both the resident and attending. From a qualitative perspective and similar to others,6 there was a noticeable shift in emphasis toward common assessment language. Results confirm findings in the general8 and plastic9 surgery literature, where SIMPL was feasibly integrated into the training environment, and assessed in relation to Milestones evaluations, respectively.

High-quality evaluations are essential to training, but there remain challenges in providing residents with real-time feedback. Multiple otolaryngology residency programs have trialed Objective Structured Assessment of Technical Skills (OSATS) to evaluate surgical skill.10 Although there are limited data, relatively few OSATS evaluations are completed compared with total cases performed during residency, quality of the feedback may vary depending on the evaluator, and OSATS can be time-consuming for trainees and faculty.11,12 Further, Milestones evaluations occur every 6 months, and faculty on the Clinical Competency Committee may be asked to assess residents on surgical skills that they observed months ago, limiting the utility of the feedback.6,13,14 Biannual assessment of resident performance does not provide granular data that would enable trainees to improve more efficiently. Last, not all types of otolaryngologic cases are evaluated in the Milestones system. Creation of an accessible evaluative tool that can be completed in a short period of time allows faculty to provide immediate feedback.

This study has several limitations. First, this pilot study used only a single faculty-resident combination, potentially limiting generalizability. Second, the SIMPL app was not compared with other assessment tools, such as the Objective Structured Assessment of Technical Skill15 or Ottawa Surgical Competency OR Evaluation.16 Finally, the resident and attending were familiar with the app from prior testing and development, so their evaluation times may be shorter than those of users less familiar with the software.

Conclusion

Rapid evaluation of otolaryngology residents’ intraoperative performance is feasible using smartphone technology. Smartphone technology may be an effective mechanism for trainee evaluation as otolaryngology programs devise strategies to meet ACGME educational objectives.

Author Contributions

Elliott D. Kozin, conception, data collection, analysis, writing of manuscript, final approval; Jordan D. Bohnen, conception, data collection, analysis, writing of manuscript, final approval; Brian C. George, conception, data collection, analysis, writing of manuscript, final approval; Natalie Justicz, data collection, analysis, writing of manuscript, final approval; C. Alessandra Colaianni, data collection, analysis, writing of manuscript, final approval; Maria Duarte, data collection, analysis, writing of manuscript, final approval; Stacey T. Gray, conception, data collection, analysis, writing of manuscript, final approval.

Disclosures

Competing interests: None.

Sponsorships: None.

Funding source: None.

Footnotes

No sponsorships or competing interests have been disclosed for this article.

This article was presented at the 2016 AAO-HNSF Annual Meeting and OTO EXPO; September 18-21, 2016; San Diego, California.

References

- 1. Kotsis SV, Chung KC. Application of the “see one, do one, teach one” concept in surgical training. Plast Reconstr Surg. 2013;131:1194-1201.

- 2. Nasca TJ, Philibert I, Brigham T, et al. The next GME accreditation system—rationale and benefits. N Engl J Med. 2012;366:1051-1056.

- 3. Accreditation Council for Graduate Medical Education (ACGME). Program requirements for graduate medical education in otolaryngology. http://www.acgme.org/. Accessed September 1, 2016.

- 4. Accreditation Council for Graduate Medical Education (ACGME). Case log coding guidelines. http://www.acgme.org/. Accessed September 1, 2016.

- 5. DaRosa DA, Zwischenberger JB, Meyerson SL, et al. A theory-based model for teaching and assessing residents in the operating room. J Surg Educ. 2013;70:24-30.

- 6. Kobraei EM, Bohnen JD, George BC, et al. Uniting evidence-based evaluation with the ACGME plastic surgery milestones: a simple and reliable assessment of resident operative performance. Plast Reconstr Surg. 2016;138:349e-357e.

- 7. George BC, Teitelbaum EN, Meyerson SL, et al. Reliability, validity, and feasibility of the Zwisch scale for the assessment of intraoperative performance. J Surg Educ. 2014;71:e90-e96.

- 8. Bohnen JD, George BC, Williams RG, et al. The feasibility of real-time intraoperative performance assessment with SIMPL (System for Improving and Measuring Procedural Learning): early experience from a multi-institutional trial. J Surg Educ. 2016;73:e118-e130.

- 9. George BC, Teitelbaum EN, DaRosa DA, et al. Duration of faculty training needed to ensure reliable OR performance ratings. J Surg Educ. 2013;70:703-708.

- 10. Martin JA, Regehr G, Reznick R, et al. Objective structured assessment of technical skill (OSATS) for surgical residents. Br J Surg. 1997;84:273-278.

- 11. Ahmed K, Miskovic D, Darzi A, et al. Observational tools for assessment of procedural skills: a systematic review. Am J Surg. 2011;202:469-480.e6.

- 12. van Hove PD, Tuijthof GJ, Verdaasdonk EG, et al. Objective assessment of technical surgical skills. Br J Surg. 2010;97:972-987.

- 13. Williams RG, Chen XP, Sanfey H, et al. The measured effect of delay in completing operative performance ratings on clarity and detail of ratings assigned. J Surg Educ. 2014;71:e132-e138.

- 14. Kim MJ, Williams RG, Boehler ML, et al. Refining the evaluation of operating room performance. J Surg Educ. 2009;66:352-356.

- 15. Williams RG, Verhulst S, Colliver JA, et al. A template for reliable assessment of resident operative performance: assessment intervals, numbers of cases and raters. Surgery. 2012;152:517-527.

- 16. Gofton WT, Dudek NL, Wood TJ, et al. The Ottawa Surgical Competency Operating Room Evaluation (O-SCORE): a tool to assess surgical competence. Acad Med. 2012;87:1401-1407.