Abstract

Real-world information is primarily sensory in nature, and understandably people attach value to the sensory information to prepare for appropriate behavioral responses. This review presents research from value-based, perceptual, and social decision-making domains, which have so far been studied using isolated paradigms and their corresponding computational models. For example, in perceptual decision making, sensory evidence accumulation rather than value computation is central to choice behavior. Furthermore, we identify cross-linkages between the perceptual and value-based domains to help us better understand generic processes pertaining to individual decision making. The purpose of this review is 2-fold. First, we identify the need for integrated study of different domains of decision making. Second, given that both our perception and valuation are influenced by the surrounding context, we suggest that the integration of different types of information in decision making could be achieved by studying contextual influences in decision making. Future research should aim at a system-level understanding of the various subprocesses involved in decision making.

Keywords: Decision making, neuroeconomics, computational models

Introduction

Decision making is the process of choosing an option among several alternatives. Day-to-day life involves many decision-making scenarios, ranging from decisions at the level of an individual (eg, purchases in a supermarket) to those of a group (eg, collaborative decision making in companies) or an organization (eg, recruitment of personnel). In individual decision making, choice behavior reveals the subjective and relative preferences of an individual among the multiple alternatives available. It is therefore imperative to understand the mechanisms by which people arrive at such decisions. Consider an example in which you are asked to choose between 1 apple and 2 apples, which can be considered a value judgment based on the quantity of the reward. Compare this with a situation where you are asked to choose between 1 apple and 1 orange. In such scenarios, the more rewarding option is not necessarily greater (or smaller) in quantity but is instead a different type of reward altogether. Hence, to understand a decision-making phenomenon, we need to understand the computation of subjective values of the available alternatives. Decision-making scenarios encountered in real-life situations are often also repetitive in nature. Thus, understanding the dynamics of value computation over time can help us better understand why people make decisions the way they do and how these decisions can be improved.

Decision making forms a key link between sensation and action, in which a decision transformation maps sensory evidence onto the selection of an action, followed by its execution. Thus, studying decision making has the potential to open a window onto cognition in general.1 Real-world information is primarily sensory in nature, and understandably people attach value to the sensory information to prepare for appropriate behavioral responses. How does the perceptual judgment of alternatives in such scenarios differ from value-based preferences?2 The value computation step distinguishes a simple perceptual discrimination task from those that involve value-based decision making.3 Theoretical accounts have suggested 2 distinct systems for valuation and action selection.4 At a neuronal level, decision-related signals can be easily distinguished from sensory signals, but it is more difficult to isolate them from action signals.5 The selected action reveals the choice made, and the cognitive process that precedes the choice can be termed a decision.6 Choices are formed through the computations that are performed when samples of information (either sensory or value-related) are integrated toward selecting one of several alternatives.7 Thus, choices form a key measure for studying individual preferences.

As pointed out by Marr,8 to understand the mechanisms of any cognitive phenomenon, it is imperative to investigate it at the computational, algorithmic, and implementational levels. Accordingly, the purpose of this review is 2-fold. First, we identify the need for integrated study of different domains of decision making, so far studied in an isolated fashion.9 Second, we review and suggest that the integration of different types of information in decision making could be achieved by studying contextual influences in decision making.

In the next 2 sections, we further review literature from value-based and perceptual decision-making domains and identify cross-linkages between the 2 domains to help us better understand decision-making processes in general.

Value-based decision making

As noted above, valuation of alternatives is central to theoretical, empirical, and computational approaches to decision making. For optimal decision making, subjective evaluation of available alternatives is necessary prior to making a choice. A general mechanism for decision making can be understood through the notion of a “common currency,” in which competing alternatives are compared on the same relative scale.10 Value-based decision making is mostly investigated in the context of “free-choice” or preference tasks that allow subjects to choose freely among the available options. The alternatives provided to the participants vary in reward magnitude or reward probability, and choice behavior is then studied with reference to these manipulations.

Valuation has also been central to classical and modern economic theories that provide a rich account of human decision-making behavior and subjective preferences. Studies of decision making started with describing outcomes of people’s choices when faced with uncertain or risky decision-making scenarios, in which the choice outcomes are probabilistic in nature and are drawn from a known set of distributions.6 The underlying principle used to explain people’s choices was the maximization of the expected monetary value of the available alternatives. The expected value of an alternative is the sum of its possible payoffs, each multiplied by its probability of occurrence. Bernoulli11 demonstrated that people do not simply maximize expected monetary value; their choices also depend on other factors such as their perception of the likelihood of winning with the chosen alternative. Kahneman and Tversky12 showed how difficult it is in practice to formulate a model that satisfies both normative and descriptive accounts, and how in real life people violate the normative principle of maximization. Prospect theory was thus proposed as a descriptive theory. The fundamental idea behind prospect theory is that utility is determined by gains and losses relative to a reference point. The value function looks like an asymmetric S-curve around the reference point: it is typically concave above the reference point, reflecting potential gains, and convex below it, reflecting potential losses. This shape implies that people behave as risk-averse in the gains domain and risk-seeking in the loss domain, and the asymmetry reflects that the curve is steeper for losses than for gains.
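
The contrast between expected value and a prospect-theory value function can be illustrated with a minimal sketch. The power-function form, the exponent of 0.88, and the loss-aversion coefficient of 2.25 are assumptions chosen for illustration and are not taken from this review; probability weighting is omitted for brevity.

```python
def expected_value(outcomes):
    """Sum of payoffs weighted by their probabilities.

    outcomes: list of (payoff, probability) pairs whose probabilities sum to 1.
    """
    return sum(payoff * prob for payoff, prob in outcomes)


def prospect_value(x, alpha=0.88, beta=0.88, loss_aversion=2.25):
    """Illustrative S-shaped value function around a reference point of 0.

    Concave for gains, convex and steeper for losses. The parameter
    values are assumptions for illustration only.
    """
    if x >= 0:
        return x ** alpha
    return -loss_aversion * ((-x) ** beta)


def subjective_value(outcomes):
    """Probability-weighted sum of prospect values (no probability weighting)."""
    return sum(prob * prospect_value(payoff) for payoff, prob in outcomes)


gamble = [(100.0, 0.5), (0.0, 0.5)]   # 50% chance of winning 100
sure_gain = [(50.0, 1.0)]             # 50 for certain

# Equal expected values, but the sure gain has the higher subjective value.
print(expected_value(gamble), expected_value(sure_gain))
print(subjective_value(gamble), subjective_value(sure_gain))
```

Under these assumed parameters, the two prospects have the same expected value, yet the concave gains branch favors the sure option, which is the risk-averse pattern described above. Mirroring the payoffs into losses reverses the preference.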

Reward prediction error and reinforcement learning

Economic theories have suggested that choice behavior is determined by the values attached to different alternatives in decision-making scenarios. The complementary perspective on how these values are acquired is provided by reward-related learning theories.13 Seminal work by Schultz et al14 showed that the phasic activity of dopaminergic midbrain neurons corresponds to a prediction error, which signals the difference between actual and expected rewards. This process is similar to computational reinforcement learning (RL) algorithms, which model how an agent learns the values of states and actions.15 The agent’s task is to learn an optimal policy that maximizes the expected sum of rewards, with future rewards discounted exponentially by their delay. Hence, an agent begins without any knowledge of the environment and learns through experience over time, discovering the most rewarding outcomes by balancing exploration and exploitation. Temporal difference (TD) learning uses the differences between predictions over successive time steps to model the learning process. The discounted sum of all future rewards is computed by an exponential weighting such that reinforcement at a distant time step becomes less important. This can be expressed as follows:

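$$V(s_t) \leftarrow V(s_t) + \alpha \left[ R_t + \gamma V(s_{t+1}) - V(s_t) \right]$$

where $s_t$ is the state visited at time $t$, $R_t$ is the reward received after transitioning from $s_t$ to $s_{t+1}$, $\gamma$ is the discount factor, and $\alpha$ is the learning rate parameter.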
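
A minimal sketch of a tabular TD update may help make the learning rule concrete. The 3-step chain of states, the reward schedule, and the parameter values below are hypothetical and chosen only for illustration.

```python
def td_update(values, state, next_state, reward, alpha=0.1, gamma=0.9):
    """Apply one TD update to values[state] and return the prediction error."""
    delta = reward + gamma * values[next_state] - values[state]
    values[state] += alpha * delta
    return delta


# Hypothetical chain: cue -> delay -> outcome -> end, with reward on the last step.
values = {"cue": 0.0, "delay": 0.0, "outcome": 0.0, "end": 0.0}
episode = [
    ("cue", "delay", 0.0),
    ("delay", "outcome", 0.0),
    ("outcome", "end", 1.0),
]

for _ in range(500):
    for state, next_state, reward in episode:
        td_update(values, state, next_state, reward)

# Earlier states inherit the reward prediction, discounted by their delay.
print(values)
```

With repeated experience, the value of each state approaches the reward discounted by the number of steps that separate the state from it, which is the exponential weighting described above.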

Q-learning is a modified version of TD learning, where the values of state-action pairs are learned directly instead of updating values of each state with respect to the previously experienced rewards. Q-learning can be formally expressed as follows:

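$$Q(s_t, a_t) \leftarrow Q(s_t, a_t) + \alpha \left[ R_t + \gamma \max_{a} Q(s_{t+1}, a) - Q(s_t, a_t) \right]$$

where $Q(s_t, a_t)$ is the value of the current state-action pair, $\alpha$ is the learning rate, $\gamma$ is the discount factor, and $R_t$ is the reward received on trial $t$.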
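
The following sketch illustrates how values of state-action pairs can be learned directly. The small task, its rewards, and the parameter values are hypothetical, and every state-action pair is swept in turn rather than explored, an assumption made only to keep the example deterministic.

```python
def q_update(q, state, action, reward, next_state, alpha=0.1, gamma=0.9):
    """One Q-learning update for the pair (state, action)."""
    # Value of the best action available in the next state (0 if terminal).
    best_next = max(q[next_state].values()) if q[next_state] else 0.0
    delta = reward + gamma * best_next - q[state][action]
    q[state][action] += alpha * delta
    return delta


# Hypothetical task: from "start", "left" ends with reward 1, while "right"
# leads to "mid", where "go" ends with reward 2. "end" is terminal.
transitions = {
    ("start", "left"): (1.0, "end"),
    ("start", "right"): (0.0, "mid"),
    ("mid", "go"): (2.0, "end"),
}
q = {"start": {"left": 0.0, "right": 0.0}, "mid": {"go": 0.0}, "end": {}}

for _ in range(500):
    for (state, action), (reward, next_state) in transitions.items():
        q_update(q, state, action, reward, next_state)

# "right" pays nothing immediately but leads to the larger delayed reward.
print(q["start"])
```

In this toy task the action with no immediate reward ends up with the higher learned value, because the update propagates the discounted value of the best subsequent action.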

Another class of models, also based on the RL framework, is the actor-critic model, which consists of 2 modules: an actor and a critic. The critic module is responsible for calculating the prediction error, and the actor module is responsible for using the prediction error to update the action values and select an optimal choice from the available alternatives. Daw et al16 proposed 2 distinct types of RL mechanisms that could explain the difference between habitual and goal-directed behavior during learning and decision making. In model-free learning, the agent learns through reward prediction error signals without relying on a model of the environment, ie, learning is based on actual experience of rewards or punishments. In model-based learning, by contrast, knowledge of the underlying structure of the environment (eg, the reward and transition probabilities from a given state-action pair to a new state) helps to predict the future outcomes of action sequences.
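
The division of labor between the critic and the actor can be sketched on a one-state choice task. The softmax choice rule, the fixed payoffs, the learning rates, and the function names are assumptions made for illustration and are not a specific published implementation.

```python
import math
import random


def softmax(prefs):
    """Convert action preferences into choice probabilities."""
    exps = [math.exp(p) for p in prefs]
    total = sum(exps)
    return [e / total for e in exps]


def run_actor_critic(rewards, n_trials=200, alpha_critic=0.1, alpha_actor=0.1, seed=0):
    """Train on a one-state task; rewards[a] is the fixed payoff of action a."""
    rng = random.Random(seed)
    value = 0.0                       # critic: expected reward in the single state
    prefs = [0.0] * len(rewards)      # actor: one preference per action
    for _ in range(n_trials):
        probs = softmax(prefs)
        action = rng.choices(range(len(rewards)), weights=probs)[0]
        delta = rewards[action] - value        # critic computes the prediction error
        value += alpha_critic * delta          # critic updates its value estimate
        prefs[action] += alpha_actor * delta   # actor strengthens or weakens the action
    return value, prefs


value, prefs = run_actor_critic(rewards=[1.0, 0.0])
print(value, softmax(prefs))
```

The same prediction error drives both modules: it corrects the critic's expectation and shifts the actor's preferences toward actions that turned out better than expected.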

Current Models of Decision Making

The process of decision making involves at least 2 steps: we first need to represent the various alternatives and then compute the value of these alternatives. The valuation step in decision making depends on environmental factors such as context and time pressure. Contextual knowledge, which must be either provided beforehand or learned through experience over time, enables an individual to make optimal choices. The value computation can be considered to be either a bottom-up process or a top-down process. The bottom-up process of valuation depends on the frequency and quantity of reinforcement (reward) of the available alternatives. The top-down process takes into account factors such as expected outcomes or cognitive and emotional states.6 This has been proposed earlier in terms of visual processing mechanisms, where “low-level information extraction of visual properties is followed by higher level decision process of evaluating goals and expectations of the participant to prepare for an appropriate behavioral response.”17(p454)

Gold and Stocker18 recently reviewed visual decision making, offering a holistic perspective that spans events occurring long before the decision through to postdecision processes. More specifically, statistical regularities and perceptual learning provide contextual information in addition to the top-down and bottom-up factors that influence a decision. The decision process is described in terms of evidence accumulation and deliberation culminating in commitment to a choice. Postdecision evaluative processes inform future decisions.

Doya19 proposed that a decision-making process is composed of 4 steps: the first is the accumulation of evidence to recognize the present state; the second is the evaluation of the available actions in terms of rewards and punishments; the third is choosing one of the options based on the costs and benefits calculated in the previous step; and the last is reevaluating the chosen option according to the outcome and updating the rewards or losses associated with it.

Rangel et al,20 in their review of value-based decision making, proposed a multistage framework for understanding the processing steps involved. The components of the framework do not necessarily correspond to separate brain regions; rather, the brain structures involved overlap. The framework has 3 components. First, the process of decision making is divided into 5 basic subcomponents: (1) representation, (2) valuation, (3) action selection, (4) outcome evaluation, and (5) learning. Representation includes identifying internal and external states, such as hunger level and threat level, respectively, and also the potential courses of action associated with each of these states. The valuation step is responsible for assigning a value to each action given the internal and external states, ie, for associating rewards (or losses) with each action. The action selection step compares the values of the actions and makes a choice. After a choice is made, the next step is outcome evaluation, in which the brain measures the desirability of the outcome of the action taken in the previous step. Finally, in the learning step, the representation, valuation, and action selection processes are updated to improve future decisions. Second, on the basis of human and animal behavioral evidence, the framework distinguishes 3 types of valuation systems: (1) Pavlovian, (2) habitual, and (3) goal-directed. Pavlovian valuation systems are “hard-wired” responses to a small set of environmental stimuli. Habitual valuation systems learn and assign values on the basis of past experience, through trial and error. Goal-directed systems assign values to actions by computing action-outcome mappings and the reward associated with each of these mappings. Goal-directed systems incorporate modulating variables that affect the decision-making process, such as risk and uncertainty and delay discounting.

In another review, Kable and Glimcher,21 drawing on primate and human neurophysiology studies in addition to neuroeconomic models, formulated another multistage decision-making framework. This framework includes 2 major stages: (1) valuation and (2) choice. During the valuation stage, different attributes of each available alternative are taken into consideration, and a subjective value is assigned to each option. The valuation circuits are primarily located in the ventromedial prefrontal cortex (vmPFC) and striatum. In the choice stage, the subjective values from the previous stage are compared to decide on the best alternative. Neural representations of choice are found in the lateral prefrontal and parietal cortex. The valuation and choice stages are similar to the valuation and action selection steps of the framework of Rangel et al. The other components of that framework, ie, representation, outcome evaluation, and learning, are not explicitly identified in Kable and Glimcher’s framework, but the processes performed in these stages are implied in the model.

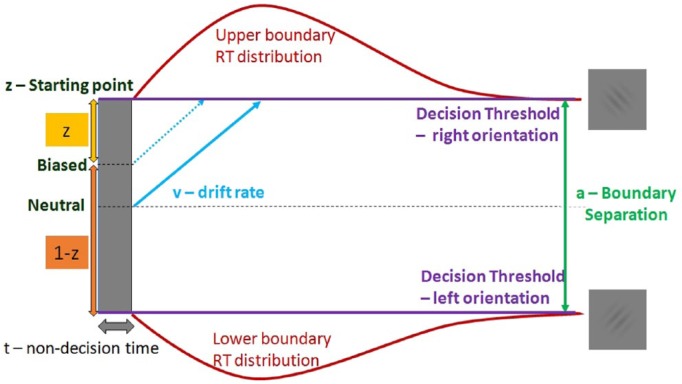

There is a growing consensus that key brain systems associated with the subjective valuation network include the ventromedial and dorsolateral part of the prefrontal cortex, posterior cingulate cortex, and striatum.21 The subjective value network converts the different competing alternatives into a common currency to facilitate a decision. The valuation system is proposed to be subserved by vmPFC that bridges the sensory information with choice execution. It receives input from the dopaminergic systems that have been shown to encode reward prediction error (see Schultz et al,22 for a recent review). More recently, the lateral intraparietal cortex has been shown to play a key role in the transformation of value (Figure 1).23

Figure 1.

Neural model of decision making.

Adapted from Levy and Glimcher.10

The domain of value-based decision making has identified computation of value as a common currency for guiding the choice process. The next section reviews perceptual decision making, where the sensory evidence accumulation rather than value computation becomes central to choice behavior.

Perceptual decision making

Consider a scenario where, on a clear sunny morning, you are commuting from home to work. You are effortlessly able to see most information, for example, the shops on the sides of the road, signboards to turn left or right, or pedestrians waiting to cross the street. However, during foggy weather, the sensory information is noisy, and hence to reach a particular decision, for example, to take a particular turn or to stop at a traffic light, you take longer to accumulate the incomplete or ambiguous sensory information from the surroundings. This type of decision making, in which you make categorical judgments over the accumulated sensory evidence, is referred to as perceptual decision making. The accumulated sensory information is then translated into actions that guide how we behave in the world.

In laboratory settings, perceptual decisions are investigated by simulating uncertain environments through controlled tasks, for example, by asking participants to discriminate the direction of motion of a noisy patch of random dots or the orientation of a Gabor patch consisting of alternating gratings. However, in real life, various other factors appear simultaneously with the visual stimulus of interest and may or may not influence the decision-making process. Some of these factors are the difficulty of the task, the prior probability of the event, and knowledge of the outcome of the task. Because perceptual decisions are easier to control and quantify than other decisions, the perceptual decision-making domain has been studied extensively using various behavioral, computational, and neural approaches (see Hanks and Summerfield,24 for a recent review). We now discuss various experimental approaches used to understand the process of perceptual decision making in humans and nonhuman primates. The most commonly used approaches to investigate perceptual decision making are based on visual discrimination tasks in which the participant has to identify a certain stimulus or pattern in a noisy environment. The random dot motion task is perhaps the most widely used task to investigate perceptual decision mechanisms. In this paradigm, participants are asked to identify the direction of motion of coherently moving dots within a patch of dots moving in random directions. In nonhuman primate studies, the identified direction is usually reported by eye movements. In human participants, the response is usually recorded as a key press corresponding to a particular direction of motion. Typically, such studies use a 2-alternative forced choice (2AFC) setup in which the minority of coherent dots move either left or right. The random dot motion task has 2 variants. The first is where subjects are allowed to report the direction of the coherently moving dots as soon as they recognize it. This is known as the reaction time variant. Such an experimental setup shows not only when the participant decided on the stimulus direction but also how much evidence was required to reach that decision. This is a good setup for investigating the speed-accuracy trade-off in perception. The second variant of the task is where the stimulus is displayed for a fixed amount of time, usually 1 or 2 seconds.

Several computational models have been used to characterize performance and explain how humans and animals arrive at a decision when faced with a noisy environment. Signal detection theory (SDT) offers a simple explanation of how noisy stimulus information is used to arrive at a decision. It is a mathematical procedure that quantifies the ability to discriminate between the signal (stimuli of interest) and noise (background stimuli of no interest). The main advantage of using SDT over other popular mathematical frameworks such as information theory and game theory approaches is that it can specify how a single observation leads to a single response. SDT can also be applied to both behavioral and neuronal data. In this framework, the representation of sensory evidence gives rise to a decision variable, to which a decision rule is applied.1
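
As an illustration of how SDT separates signal from noise, the sketch below computes the standard sensitivity index d′ and the response criterion from hit and false alarm rates. It assumes equal-variance Gaussian signal and noise distributions, which is an assumption of this example rather than something stated in the review. The rates used are hypothetical.

```python
from statistics import NormalDist


def d_prime(hit_rate, false_alarm_rate):
    """Sensitivity: separation of the signal and noise distributions in z units."""
    z = NormalDist().inv_cdf
    return z(hit_rate) - z(false_alarm_rate)


def criterion(hit_rate, false_alarm_rate):
    """Response bias: 0 means unbiased, positive means a conservative observer."""
    z = NormalDist().inv_cdf
    return -0.5 * (z(hit_rate) + z(false_alarm_rate))


# Hypothetical observer: 84% hits, 16% false alarms.
print(d_prime(0.84, 0.16))
print(criterion(0.84, 0.16))
```

Rates of exactly 0 or 1 are not valid inputs to the inverse normal function, so in practice such rates are usually adjusted slightly before the computation.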

The SDT suggests that a decision is reached on the basis of a single sample of information, whereas another prominent framework of perceptual decision making is sequential sampling models (SSMs) that assume multiple samples are integrated over time until a decision boundary is reached.25 Sequential sampling models of decision making can be seen as a time domain extension of the SDT. All SSMs repeatedly sample and accumulate evidence over time to reach a decision. Sequential sampling models are separated into 2 classes: diffusion models26 that assume relative evidence accumulation over time and race models that assume independent evidence accumulation and response commitment once the first accumulator crosses a boundary.27

Diffusion Decision Models

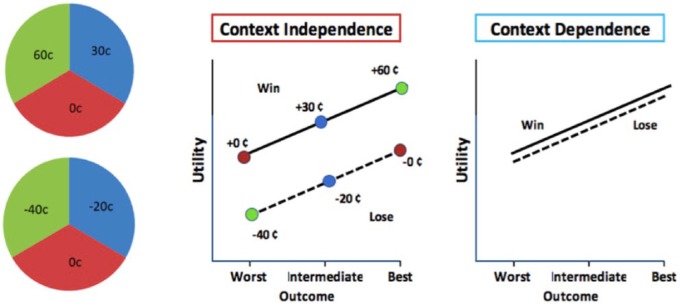

Diffusion models are used to model fast 2-choice decisions (see Ratcliff et al,26 for a recent review). These models assume accumulation of noisy information over time toward decision criteria representing the 2 available choices. The 2 choices are represented as upper and lower boundaries. Once the accumulated evidence crosses 1 of the 2 decision boundaries, the corresponding option is chosen. This assumption yields not only the choice distributions but also the response time (RT) distributions, ie, the distributions of the time taken by the model to reach a decision boundary. The speed with which the evidence accumulation process approaches 1 of the 2 decision boundaries represents the relative evidence toward that boundary and is termed the drift rate (v). Due to noise within each trial of the drift process, the time taken to reach a particular boundary varies across trials. When drift rates differ consistently across conditions, the drift rate reflects task difficulty, with smaller drift rates representing more difficult tasks. When participants’ performances are compared, the drift rate is a measure of individual cognitive or perceptual speed of information processing. The distance between the 2 boundaries is called the threshold and is denoted by “a.” The threshold determines the amount of evidence that must be accumulated before a response can be executed. A lower threshold leads not only to faster responses but also to a greater influence of noise on judgments, making decisions impulsive and more error prone. A higher threshold leads to careful responses (slower and more accurate, but with skewed RT distributions). Different studies have shown that the parameter “a” is sensitive to speed versus accuracy instructions. In addition, age-related slowing in response times has been shown to be partially explained by more conservative response styles. Response time on each trial does not solely comprise the decision process but also includes perception, movement initiation, and execution, which together form the nondecision time parameter (t) of the drift diffusion process. The drift diffusion model also includes a bias parameter z, which represents the starting point of the drift process relative to the 2 boundaries and thus determines where evidence accumulation begins on each trial. Differences in the bias parameter across conditions can reflect choices made under different payoff matrices (Figure 2). For example, the starting point moves toward a response threshold when the corresponding response leads to greater rewards (see Voss et al,28 for a review).
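
The roles of the drift rate, threshold, starting point, and nondecision time can be made concrete with a minimal simulation sketch. The discrete-time approximation, the step size, the noise scale, the coding of z as a fraction of the threshold, and the parameter values are all assumptions made for illustration.

```python
import math
import random


def simulate_ddm(v, a, z, t0, noise=1.0, dt=0.001, rng=None, max_time=10.0):
    """Simulate one trial and return (choice, response_time).

    v: drift rate, a: boundary separation, z: starting point as a fraction
    of a (0.5 = unbiased), t0: nondecision time in seconds.
    """
    rng = rng or random.Random()
    x = z * a                   # evidence starts between the boundaries 0 and a
    t = 0.0
    while 0.0 < x < a and t < max_time:
        # Deterministic drift plus Gaussian noise scaled for the time step.
        x += v * dt + noise * math.sqrt(dt) * rng.gauss(0.0, 1.0)
        t += dt
    if x >= a:
        choice = "upper"
    elif x <= 0.0:
        choice = "lower"
    else:
        choice = None           # no boundary reached within max_time
    return choice, t + t0


rng = random.Random(1)
trials = [simulate_ddm(v=2.0, a=1.0, z=0.5, t0=0.3, rng=rng) for _ in range(200)]
p_upper = sum(choice == "upper" for choice, _ in trials) / len(trials)
mean_rt = sum(rt for _, rt in trials) / len(trials)
print(p_upper, mean_rt)
```

Rerunning the simulation with a smaller `a` should give faster but less accurate responses, and moving `z` toward 1 should bias choices toward the upper boundary, in line with the parameter interpretations given above.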

Figure 2.

Schematic diagram of a drift diffusion model.

There are also other models of perceptual decision making that are inspired by underlying biological phenomena such as inhibition mechanisms of neurons and information decay over time. The leaky competing accumulator (LCA) model is one such leaky diffusion model. The LCA is a connectionist network model of decision making. Preferences in this model are based on the sequential evaluation of the advantages and disadvantages of each prospect.29 Another model similar to the LCA is the linear ballistic accumulator (LBA). The LBA has 2 accumulators, as in the LCA, but assumes that noise acts only over longer timescales (ie, across trials), whereas the evidence accumulation process within a trial is noise-free.30
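
A minimal sketch of a linear ballistic race shows what noise-free accumulation within a trial means in practice. The uniform starting points, Gaussian drift variability, function names, and parameter values are assumptions chosen for illustration.

```python
import random


def lba_trial(starts, drifts, b, t0=0.2):
    """Return (winner_index, response_time) for one noise-free race.

    starts: starting evidence of each accumulator, drifts: their slopes,
    b: common response threshold, t0: nondecision time in seconds.
    """
    times = [
        (b - k) / d if d > 0 else float("inf")   # non-positive drifts never finish
        for k, d in zip(starts, drifts)
    ]
    winner = min(range(len(times)), key=times.__getitem__)
    return winner, times[winner] + t0


def sample_lba_trial(mean_drifts, b=1.0, start_max=0.5, drift_sd=0.3, t0=0.2, rng=None):
    """Draw trial-to-trial variability, then run the deterministic race."""
    rng = rng or random.Random()
    starts = [rng.uniform(0.0, start_max) for _ in mean_drifts]
    drifts = [rng.gauss(m, drift_sd) for m in mean_drifts]
    return lba_trial(starts, drifts, b, t0)


print(lba_trial(starts=[0.0, 0.0], drifts=[1.0, 2.0], b=1.0))
print(sample_lba_trial([1.0, 0.6], rng=random.Random(0)))
```

Because each accumulator rises linearly, the finishing time follows directly from the distance to the threshold divided by the drift, and all variability in choices and response times comes from the values drawn at the start of the trial.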

As described in the previous 2 sections, research in perceptual decision making has mainly relied on experimental paradigms and computational models that are different from those of value-based decision making. Most of the decisions pertaining to social situations involve more than one individual, which forms yet another domain of study in decision-making research, expanded in the following section.

Social decision making

Traditionally, decision making has been investigated at the level of the individual. Experiments are designed such that participants are asked to choose between different available options of monetary gambles or goods. Participants’ choices in such settings depend only on their individual preferences and valuation. However, in real-life scenarios, individuals do not make decisions in isolation; their decisions are the outcome of complex social interactions and sometimes also depend on the choices of other individuals. In recent years, scientists have begun investigating social decisions using approaches from game theory, which is a part of experimental economics. Game-theoretic constructs are useful for investigating situations that involve conflict between short-term rewards and more distant, but potentially larger, rewards. For example, would I be willing to bear the immediate costs attached to altruism to keep the long-term benefits of a sustained cooperative relationship? (See Rilling and Sanfey,31 for a review.) A variety of neuroscientific methods are also being used to obtain a more detailed picture of the psychological and neural correlates underlying social decision making.

Experimental games such as the ultimatum game and the trust game have been widely used to study strategic interactions during fair versus unfair behaviors. In an ultimatum game, 2 players are given an opportunity to divide a given sum of money between themselves. One of the players, called the proposer, decides how the money should be divided. The other player, called the responder, can either accept or reject the proposer’s offer. If the responder accepts the offer, then the money is split as proposed. If the responder rejects the offer, both proposer and responder end up receiving nothing. According to the Nash equilibrium prediction, if people make choices purely out of self-interest, then any nonzero offer made by the proposer should be accepted by the responder, and the proposer should therefore always offer the smallest nonzero amount. However, offers below a 20% split are rejected about half of the time32 possibly owing to the feeling of being mistreated. Thus, participants’ choices are motivated not only by self-interest but also by other factors. Neuroscientific methods can help unravel these complex decision-making interactions. The trust game is useful for studying reciprocity, which is another essential element of social interaction. The trust game involves 2 participants: one player is the investor and the other is the trustee. The investor decides how much money to invest with the trustee. The amount that the investor decides on is then multiplied by some factor, and the new increased amount is then at the disposal of the trustee to return (or not) to the investor. According to game theory predictions, a rational and self-interested trustee would never honor the investor’s trust and would return no money to the investor, and the investor, knowing this, would never invest anything in the first place. However, in real-life scenarios, it is seen that trustees do transfer some amount of money and the trust is reciprocated.33 The prisoner’s dilemma is another experimental game used for investigating reciprocity. This game helps us better understand competition and cooperation in complex social settings. In this game, each player decides whether or not to trust the other player without knowing the other’s intent. In the prisoner’s dilemma, the highest payoff goes to a player who defects while the partner cooperates, and the cooperating partner receives the least. If both participants decide to cooperate, each receives a moderate payoff, and if both decide to defect, each receives a lower payoff than under mutual cooperation.
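
The payoff structures of these games can be written down compactly, as in the sketch below. All amounts, the multiplier, and the prisoner's dilemma payoffs are hypothetical; the latter follow the conventional ordering in which the temptation payoff exceeds the mutual cooperation payoff, which exceeds the mutual defection payoff, which exceeds the payoff for cooperating with a defector (T > R > P > S).

```python
def ultimatum(total, offer, accepted):
    """Return (proposer_payoff, responder_payoff)."""
    if not accepted:
        return 0.0, 0.0               # rejection leaves both players with nothing
    return total - offer, offer


def trust_game(endowment, invested, multiplier, returned):
    """Return (investor_payoff, trustee_payoff).

    The invested amount is multiplied before reaching the trustee, who then
    decides how much of it to send back.
    """
    pot = invested * multiplier
    if not 0 <= invested <= endowment or not 0 <= returned <= pot:
        raise ValueError("amounts must be within the available money")
    return endowment - invested + returned, pot - returned


# Prisoner's dilemma payoffs as (row player, column player); the numbers are
# hypothetical and only need to satisfy T > R > P > S.
T, R, P, S = 5, 3, 1, 0
PRISONERS_DILEMMA = {
    ("cooperate", "cooperate"): (R, R),
    ("cooperate", "defect"): (S, T),
    ("defect", "cooperate"): (T, S),
    ("defect", "defect"): (P, P),
}

print(ultimatum(total=10, offer=2, accepted=False))
print(trust_game(endowment=10, invested=5, multiplier=3, returned=7))
print(PRISONERS_DILEMMA[("defect", "cooperate")])
```

In the trust game example, both players end up better off than if nothing had been invested, which is the outcome that purely self-interested play would forgo.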

The paradigms mentioned above involving social decision making extend individual decisions to highly complex social scenarios. Thus, in addition to the valuation of multiple alternatives, the decisions in social interactions also depend on choices of others, which affect both the individual as well as others.31

How context-dependent perception affects valuation and choice

In the visual perception domain, it has long been known that the context of surrounding objects in the field of view affects the perception of the target object. Perceptual attributes of an object such as perceived size, shape, and color are liable to change with changes in the context despite the actual physical attributes remaining constant. For example, in the Ebbinghaus illusion (or Titchener circles), 2 objects that are equal in size are perceived to be of different sizes when they are surrounded by other objects.

However, in the field of value-based decision making, these ideas of context as a modulator have not been considered by the standard economic theories. The expected utility theory and optimal foraging theory34 assume that irrelevant information (or context) does not affect the decision outcome. This also means that the value calculation step is performed strictly on the relevant alternatives, and contextual information such as temporal history and available resources does not bias decision making. Contrary to the above-mentioned theories, a number of studies have shown that context strongly affects choice behavior, violating the assumption of normative theories that humans are rational decision makers. In multialternative choice, inclusion of other options can alter choices among a fixed set of options.35,36 The attraction effect is the enhancement of the preference for one of the options by introducing a similar but inferior decoy option. The similarity effect is the increased probability of selecting the dissimilar option when an added option is similar to one of the original options, and the compromise effect is the increased probability of selecting a third option that is intermediate between the 2 original options.37 Studies, for example, show that context can lead to a bias in subjective valuation and hence to a change in preferences38; choice behavior was also shown to be biased when the same alternatives were worded differently (framing effect39).

The decision field theory40 is based on the idea of sequential sampling of information that is accumulated over time to make a decision in uncertain environments. Kőszegi and Rabin41 developed a reference-dependent model of preferences. They assume that a person’s reference point is determined by the context of rational expectations about outcomes formed in the recent past. They illustrated that the endowment effect (ie, people ascribe more value to things merely because they own them) observed in laboratory settings disappears in real markets due to the reference-dependent expectations of sellers and buyers in the context of trade. Stewart et al42 proposed the theory of decision by sampling, in which the valuation of alternatives depends on both the immediate context and memory (ie, outcomes from previous decisions). This suggests that people do not use internal scales for value but rather rely on simple cognitive processes such as comparing the relative rank of an alternative. Therefore, subjective values are constructed online. More recently, Bayesian models have been proposed that explain choice behavior in terms of incentive values reflecting the relative values of rewards in a given context.43,44 The theory suggests that the value of an option corresponds to a precision-weighted prediction error. The predictions are based on the expectations of the reward. This theory could explain contextual effects of options that were presented in the past as well as of options currently available.
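
The relative-rank idea behind decision by sampling can be sketched in a few lines. The function name and the comparison samples are hypothetical, and counting only strictly smaller values is a simplifying assumption of this example.

```python
def relative_rank(target, sample):
    """Fraction of sampled comparison values that the target exceeds."""
    if not sample:
        raise ValueError("sample must contain at least one comparison value")
    return sum(target > other for other in sample) / len(sample)


# The same amount is judged against two hypothetical contexts
# (values recalled from memory plus those currently on offer).
modest_context = [5, 10, 15, 20, 30]
lavish_context = [40, 60, 80, 100, 150]

print(relative_rank(25, modest_context))
print(relative_rank(25, lavish_context))
```

The same amount ranks high in one context and low in the other, so its subjective value is constructed from the comparison sample rather than read off a fixed internal scale.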

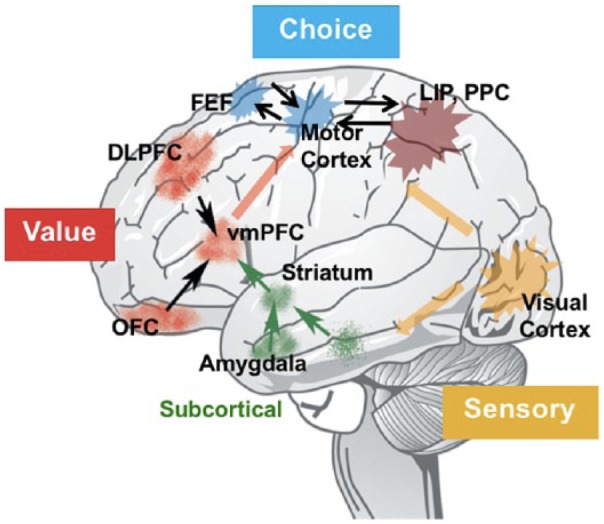

Examples from both perceptual and value-based domains suggest that decision processes depend on the specific context a decision maker faces when encountering choices. This context could, for example, be the history of reward experiences or the relative value of the other alternatives being presented. Breiter et al45 presented 3 kinds of prospects in which participants could win or lose money (good: US $10, US $2.50, US $0; intermediate: US $2.50, US $0, −US $1.50; and bad: US $0, −US $1.50, −US $6). An outcome of US $0 in a good prospect is experienced as a loss, whereas the same outcome in a bad prospect is experienced as a win. Accordingly, responses in the amygdala and nucleus accumbens were found to be context dependent. A similar finding of context dependence across a number of brain regions was reported by Nieuwenhuis et al,46 who compared win and loss gambles involving a common outcome of winning or losing nothing (Figure 3).

Figure 3. Context gambles. Adapted from Nieuwenhuis et al.46

Context can manifest in 2 different ways: as spatial context and as temporal context. Spatial context refers to the value of other simultaneously available options within a trial, whereas temporal context refers to the outcomes experienced over time or across trials.47 A study by Tremblay and Schultz48 illustrates a temporal context manipulation in orbitofrontal neurons. A monkey was presented with 3 different cues that predicted 3 different rewards: cereal, apple, and raisins. In one block, the pair of rewards available was cereal or apple, and the vigorous firing of neurons for apple indicated a higher preference for apple. However, in another block, when the less preferred reward, cereal, was replaced with a more preferred reward, raisin, the neurons fired vigorously for raisin, indicating neuronal coding of the preferred choice. The neuronal firing for the previously preferred reward (apple) decreased when it was paired with a different alternative. This indicates that the neuronal activity encodes a relative preference over time in the context of the other available rewards. Similarly, Padoa-Schioppa49 demonstrated that neuronal firing in the orbitofrontal cortex adapted to different ranges of reward magnitude when compared with no reward. Related findings by Tobler et al50 and Kobayashi et al51 suggest that dopaminergic neurons and orbitofrontal neurons adapt to the range of input reward magnitudes. This phenomenon can be explained using the efficient coding hypothesis.

The efficient coding hypothesis is inspired by the information theory used for communication networks. Scientists have suggested that neurons use a similar process to encode information efficiently.52,53 The hypothesis states that a group of neurons adjusts its spiking rates to encode the maximum information with the available resources, similar to communication systems that attempt to transfer information in the fewest possible bits. A large body of literature in visual neuroscience has shown that neurons in sensory circuits adapt to the properties of the surroundings. Some researchers now suggest that, like the visual system, the decision-making circuitry in the brain also follows the efficient coding hypothesis.53 For example, suppose a monkey is presented with 2 choices: one drop of juice versus a full jug of juice. Here, the decision is easy because the neuronal spiking for the drop would be far lower than that for the full jug. Now consider a choice between a jug of juice that is almost full and a jug that is completely full. If neurons were to encode exact (absolute) information, the choice would become quite difficult, with, say, one neuron firing at 80 spikes per second and the other at 100 spikes per second. Scientists now propose that the brain avoids this problem by encoding subjective value in terms of the relative difference between the 2 available choices. In the above example, the neurons would encode the almost full jug with a low firing rate because it is currently the worse of the 2 options. The choice between the 2 options then becomes easy again for the monkey. Neuronal adaptation, therefore, can involve additive (shift) and multiplicative (gain) normalization that scales reward values relative to the other available alternatives (ie, context). Indeed, normalization has been proposed to form the basis for context-dependent decision making.54
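The shift and gain operations described above can be illustrated with a small Python sketch. It rests on stated assumptions: juice volumes are expressed in arbitrary units (1 for a drop, 80 for an almost full jug, 100 for a full jug), `max_rate` and `sigma` are hypothetical parameters, and the 2 functions are generic textbook forms of range adaptation and divisive normalization rather than models fitted to neuronal data.

```python
def range_adapted_rate(value, context_values, max_rate=100.0):
    """Shift by the context minimum and scale by the context range.

    The subtraction is the additive (shift) component and the division by
    the range is the multiplicative (gain) component.
    """
    low, high = min(context_values), max(context_values)
    if high == low:
        return max_rate / 2.0  # no range to adapt to (arbitrary midpoint)
    return max_rate * (value - low) / (high - low)


def divisively_normalized_rate(value, context_values, max_rate=100.0, sigma=1.0):
    """Divide each value by the summed value of all options plus a constant."""
    return max_rate * value / (sigma + sum(context_values))


# Hypothetical juice volumes in arbitrary units.
drop, almost_full, full = 1.0, 80.0, 100.0

easy_context = [drop, full]         # drop versus full jug
hard_context = [almost_full, full]  # almost full jug versus full jug

for label, context in (("easy", easy_context), ("hard", hard_context)):
    adapted = [range_adapted_rate(v, context) for v in context]
    normalized = [divisively_normalized_rate(v, context) for v in context]
    print(label, "range adapted:", adapted)
    print(label, "divisively normalized:", [round(r, 1) for r in normalized])
```

Under range adaptation, the almost full jug is assigned the lowest rate when the only alternative is the full jug, so the 2 options become as discriminable as a drop versus a full jug. Under divisive normalization, the rate assigned to the full jug itself drops when the alternative is valuable, which is one way the same option can be represented differently across contexts.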

Context modulation is one such efficient coding mechanism, and it operates in both decision and sensory circuitry.55 The current literature, however, offers no specific definition of context or its modulation. Broadly, context modulation can be defined by the interaction between 2 kinds of inputs: the first consists of the feed-forward connections from earlier areas in the processing stream, and the second consists of the modulatory system that controls the system’s response to the driving inputs. A theory of context modulation would also explain the optimality of behavior, in contrast to the earlier axiom of independence of irrelevant alternatives.

Recent studies by Rigoli et al43 manipulated context in value-based decisions and found a role for the hippocampus and dopaminergic midbrain in mediating the corresponding neural adaptations. Their manipulation involved partially overlapping high- and low-value contexts. The reward values common to both contexts were used to identify the corresponding context-sensitive neural mechanisms.

Toward a Domain-Generic Understanding of Decision Making

For decades, research in decision making has relied on paradigms and computational models that investigate perceptual, value-based, or social decision-making scenarios in isolation. However, real-life decisions rarely belong to only 1 of these 3 domains. We do not always encounter choices that are simply more or less rewarding; we also come across alternatives or rewards of different types. Furthermore, perceptual decisions can ultimately be seen as reward driven and, in the same vein, economic decisions might require perceptual valuation of the available options.9 Recent studies have provided supportive neural and behavioral evidence from monkeys23,56,57 for investigating perceptual and economic decisions in an integrated way.

Given that contextual effects are apparent in both sensory perception and valuation, the current literature has a gap in understanding contextual influences in decision making. As elaborated earlier, the subcomponents of decision making involve both sensory encoding (representation) and valuation before choice execution. It is therefore imperative to understand which subcomponents of the decision process are modulated by contextual information. We propose that it is necessary to identify not only the existence of context-induced bias but also how contextual information is used, to understand which stages of the decision-making process are influenced. Specifically, the integration of simultaneously available contextual information can be distinguished from experience-based or temporal context. These context-sensitive mechanisms are similar to the widely known simultaneous and successive contrast effects in vision. Contextual influences in valuation have been proposed to involve spatial and temporal contexts, in line with the efficient coding hypothesis.53 In the Bayesian observer framework, individuals incorporate experience in the form of a prior. Therefore, studying the subprocesses of decision making involves not only the valuation of alternatives and the incorporation of simultaneously available contextual information but also the prior, which reflects the temporal context of the decision-making scenario. There is also a need to establish the role of the common currency hypothesis in decisions that do not explicitly invoke valuation processes, such as perceptual judgments. A key question then is to what extent the cognitive processes of decision making are similar or different across domains: perceptual and economic (value-based). This can be studied using experimental paradigms that integrate these 2 domains. A relatively new line of investigation studies the integration of reward values with perceptual decisions.9,23,57–60
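As an illustration of how a Bayesian observer might carry temporal context forward as a prior, the following Python sketch applies the standard conjugate update for a Gaussian prior and a Gaussian observation. It is a sketch under stated assumptions: the function names, the variances, and the outcome sequence are hypothetical, and the update is the generic textbook form, not the specific models of the studies cited above.

```python
def gaussian_posterior(prior_mean, prior_var, observation, obs_var):
    """Combine a Gaussian prior with one Gaussian observation.

    The posterior mean equals the prior mean plus a precision-weighted
    prediction error (observation minus prior mean).
    """
    prior_precision = 1.0 / prior_var
    obs_precision = 1.0 / obs_var
    posterior_var = 1.0 / (prior_precision + obs_precision)
    weight = obs_precision * posterior_var  # share of precision from the data
    posterior_mean = prior_mean + weight * (observation - prior_mean)
    return posterior_mean, posterior_var


def update_over_trials(outcomes, prior_mean=0.0, prior_var=100.0, obs_var=4.0):
    """Use each trial's posterior as the prior for the next trial."""
    mean, var = prior_mean, prior_var
    history = []
    for outcome in outcomes:
        mean, var = gaussian_posterior(mean, var, outcome, obs_var)
        history.append((mean, var))
    return history


# Hypothetical reward outcomes experienced across trials.
outcomes = [8.0, 12.0, 9.0, 11.0, 10.0]
for trial, (mean, var) in enumerate(update_over_trials(outcomes), start=1):
    print(f"trial {trial}: expected reward {mean:.2f}, uncertainty {var:.2f}")
```

Because the posterior of one trial serves as the prior of the next, the expectation against which a new outcome is judged depends on the sequence of outcomes already experienced, which is one simple way to formalize temporal context.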

Akin to perceptual decisions, the valuation process can also be considered to involve an accumulation of evidence. Recently, there have been successful attempts to use the drift diffusion model to investigate value-based decisions.61 Although evidence accumulation is intuitive in perceptual decision making, there is little explanation of how such an accumulation of evidence might take place for valuation. Value-based decision making has so far been studied mostly by prespecifying the probabilities and associated outcome values. Chawla and Miyapuram62 studied value-based decisions in an experience-based, repetitive decision-making setup. Participants had to repeatedly sample from 2 cards, left or right, each of which revealed a corresponding outcome. That study investigated how past experience shapes expectations. This experimental approach is also suitable for studying a variety of reinforcement types and can hence be used for domain-generic studies of decision making. Using meta-analysis of brain imaging studies, we investigated the neural mechanisms involved in different types of decision making.63–65 If a single system supports the computation of a (common) decision variable, then we should find overlapping regions of brain activation. However, if value-based and perceptual decisions involve different processes, then we would observe different sets of brain activations. According to the common currency coding hypothesis, the neural substrates of decision making would involve a common circuit across different domains of decision making.10 One challenge in verifying this hypothesis is that different paradigms are used for different domains of decision making, and these domains therefore cannot be compared within a single study. Although very few studies examine the cross-linkages between different decision-making domains, we found common (domain-generic) as well as domain-specific brain activations.64 The common network revealed by the conjunction analysis comprises the basal ganglia (caudate, putamen, pallidum) and insula, together with the inferior frontal region, supplementary motor area, and thalamus. These regions could form the common currency evaluation and choice execution networks in the brain. The inferior parietal area appears to be involved in both perceptual and value-based decision making, but not necessarily in social decision making. The anterior cingulate cortex and medial prefrontal cortex show selectivity toward social and value-based decision making. It remains open to future work to identify the specific cognitive and neural mechanisms supporting the temporal dynamics corresponding to the different stages of decision making.
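The idea of evidence accumulation in value-based choice, mentioned above in connection with the drift diffusion model, can be sketched in a few lines of Python. This is a minimal simulation under stated assumptions: the drift rate is taken to be proportional to the difference in subjective value between the 2 options, and every name and parameter value (`drift_scale`, `threshold`, `noise_sd`, `non_decision_time`, and so on) is hypothetical rather than estimated from any data set.

```python
import random


def simulate_ddm_trial(value_left, value_right, drift_scale=0.1, threshold=1.0,
                       noise_sd=1.0, dt=0.001, non_decision_time=0.3,
                       max_time=5.0, rng=None):
    """Simulate one two-alternative trial; return (choice, response_time)."""
    rng = rng or random.Random()
    # Assumption: drift is proportional to the value difference.
    drift = drift_scale * (value_left - value_right)
    evidence, elapsed = 0.0, 0.0
    while abs(evidence) < threshold and elapsed < max_time:
        # Euler step: deterministic drift plus Gaussian diffusion noise.
        evidence += drift * dt + noise_sd * (dt ** 0.5) * rng.gauss(0.0, 1.0)
        elapsed += dt
    if abs(evidence) < threshold:
        return None, None  # no boundary reached within max_time
    choice = "left" if evidence > 0 else "right"
    return choice, elapsed + non_decision_time


def choice_proportion_left(value_left, value_right, n_trials=200, seed=0):
    """Fraction of decided trials on which the left option was chosen."""
    rng = random.Random(seed)
    choices = [simulate_ddm_trial(value_left, value_right, rng=rng)[0]
               for _ in range(n_trials)]
    decided = [c for c in choices if c is not None]
    if not decided:
        return float("nan")
    return sum(c == "left" for c in decided) / len(decided)


# Hypothetical subjective values: a large and a small value difference.
p_easy = choice_proportion_left(30.0, 0.0)
p_hard = choice_proportion_left(11.0, 10.0)
print(f"P(left), large value difference: {p_easy:.2f}")
print(f"P(left), small value difference: {p_hard:.2f}")
```

In such a sketch, the same accumulation process could be driven by sensory evidence or by value differences simply by changing what determines the drift, which is one reason sequential sampling models are attractive candidates for a domain-generic account.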

Conclusions, Limitations, and Future Research

Through this review, we propose that models of decision making should not only consider the evaluation of simultaneously available alternatives but also explicitly include the context or prior information gained from experience. In other words, we can envisage a multistage decision model that segregates stimulus encoding, choice or action selection, and execution processes from the computation of a decision variable. Furthermore, outcome evaluation should be fed back to update the prior for future decisions.19 We can arrive at 2 kinds of models for decision making. First, a cognitive model needs to specify the various stages and their roles in decision making. For example, previous literature has not attempted to tease apart whether top-down information influences the stimulus encoding stage or the choice execution stage. Future work needs to reconcile the decision process (ie, the computation of a decision variable) with the parallel accounts of a common currency in valuation models. Second, a neural model can specify which regions of the brain participate in these different stages of decision making. Neural computation is too complex a phenomenon to be understood solely in terms of spatially segregated brain regions subserving various functions over time or in parallel. It is possible that, over different timescales, multiple functions are performed by a network of overlapping brain regions (see also Schultz et al22). The study of decision making as presented in this review reflects a small subset of experiments suitable for a laboratory environment. Other research in the field of decision making has already addressed questions pertaining to the ecological validity of laboratory-based research.66 Behavioral measures such as the choice made and response times capture only the outcomes of a decision process. Computational modeling approaches are suitable for simulating the decision process that results in these behavioral outcomes. However, the computational modeling approach has a crucial inherent limitation: it can match the experimental observations but cannot make claims about the mechanisms underlying the cognitive phenomenon being investigated, which in our case is decision making. For ease and rigor of scientific investigation, cognitive phenomena are studied largely in isolation. Taking decision making as an example, this review appeals for a holistic, system-level understanding of cognitive phenomena.

Footnotes

Funding: The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: MC was supported by a doctoral fellowship from Tata Consultancy Services. KPM thanks the Department of Science and Technology, Government of India, for funding from the Cognitive Science Research Initiative (SR/CSRI/70/2014).

Declaration of conflicting interests: The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Author Contributions: MC conceptualized the review and prepared the draft manuscript. MC and KPM finalized the manuscript and made critical revisions.

ORCID iD: Manisha Chawla https://orcid.org/0000-0001-5779-2342

References

- 1. Shadlen MN, Kiani R. Decision making as a window on cognition. Neuron. 2013;80:791–806.

- 2. Smith DV, Huettel SA. Decision neuroscience: neuroeconomics. Wiley Interdiscip Rev Cogn Sci. 2010;1:854–871.

- 3. Montague PR, King-Casas B, Cohen JD. Imaging valuation models in human choice. Annu Rev Neurosci. 2006;29:417–448.

- 4. Dayan P, Balleine BW. Reward, motivation, and reinforcement learning. Neuron. 2002;36:285–298.

- 5. Sugrue LP, Corrado GS, Newsome WT. Choosing the greater of two goods: neural currencies for valuation and decision making. Nat Rev Neurosci. 2005;6:363–375.

- 6. Miyapuram KP, Pammi VS. Understanding decision neuroscience: a multidisciplinary perspective and neural substrates. Prog Brain Res. 2013;202:239–266.

- 7. Tsetsos K, Chater N, Usher M. Salience driven value integration explains decision biases and preference reversal. Proc Natl Acad Sci U S A. 2012;109:9659–9664.

- 8. Marr D. Vision: A Computational Approach. San Francisco, CA: Freeman; 1982.

- 9. Summerfield C, Tsetsos K. Building bridges between perceptual and economic decision-making: neural and computational mechanisms. Front Neurosci. 2012;6:70.

- 10. Levy DJ, Glimcher PW. The root of all value: a neural common currency for choice. Curr Opin Neurobiol. 2012;22:1027–1038.

- 11. Bernoulli D. Specimen theoriae novae de mensura sortis. In: Commentarii Academiae Scientiarum Imperialis Petropolitanae. Vol. 5; 1738. Translated by Sommer L and reprinted as Exposition of a new theory on the measurement of risk. Econometrica. 1954;22:23–36.

- 12. Kahneman D, Tversky A. Prospect theory: an analysis of decision under risk. Econometrica. 1979;47:263–291.

- 13. O’Doherty JP, Cockburn J, Pauli WM. Learning, reward, and decision making. Annu Rev Psychol. 2017;68:73–100.

- 14. Schultz W, Dayan P, Montague PR. A neural substrate of prediction and reward. Science. 1997;275:1593–1599.

- 15. Sutton RS. Learning to predict by the methods of temporal differences. Mach Learn. 1988;3:9–44.

- 16. Daw ND, Niv Y, Dayan P. Uncertainty-based competition between prefrontal and dorsolateral striatal systems for behavioral control. Nat Neurosci. 2005;8:1704–1711.

- 17. Van Rullen R, Thorpe SJ. The time course of visual processing: from early perception to decision-making. J Cogn Neurosci. 2001;13:454–461.

- 18. Gold JI, Stocker AA. Visual decision-making in an uncertain and dynamic world. Annu Rev Vis Sci. 2017;3:227–250.

- 19. Doya K. Modulators of decision making. Nat Neurosci. 2008;11:410–416.

- 20. Rangel A, Camerer C, Montague PR. A framework for studying the neurobiology of value-based decision making. Nat Rev Neurosci. 2008;9:545–556.

- 21. Kable JW, Glimcher PW. The neurobiology of decision: consensus and controversy. Neuron. 2009;63:733–745.

- 22. Schultz W, Stauffer WR, Lak A. The phasic dopamine signal maturing: from reward via behavioural activation to formal economic utility. Curr Opin Neurobiol. 2017;43:139–148.

- 23. Rorie AE, Gao J, McClelland JL, Newsome WT. Integration of sensory and reward information during perceptual decision-making in lateral intraparietal cortex (LIP) of the macaque monkey. PLoS ONE. 2010;5:e9308.

- 24. Hanks TD, Summerfield C. Perceptual decision making in rodents, monkeys, and humans. Neuron. 2017;93:15–31.

- 25. Forstmann BU, Ratcliff R, Wagenmakers EJ. Sequential sampling models in cognitive neuroscience: advantages, applications, and extensions. Annu Rev Psychol. 2016;67:641–666.

- 26. Ratcliff R, Smith PL, Brown SD, McKoon G. Diffusion decision model: current issues and history. Trends Cogn Sci. 2016;20:260–281.

- 27. Wiecki TV. Sequential sampling models in computational psychiatry: Bayesian parameter estimation, model selection and classification. arXiv preprint, arXiv:1303.5616; 2013.

- 28. Voss A, Nagler M, Lerche V. Diffusion models in experimental psychology: a practical introduction. Exp Psychol. 2013;60:385–402.

- 29. Usher M, McClelland JL. The time course of perceptual choice: the leaky, competing accumulator model. Psychol Rev. 2001;108:550–592.

- 30. Brown SD, Heathcote A. The simplest complete model of choice response time: linear ballistic accumulation. Cogn Psychol. 2008;57:153–178.

- 31. Rilling JK, Sanfey AG. The neuroscience of social decision-making. Annu Rev Psychol. 2011;62:23–48.

- 32. Sanfey AG, Rilling JK, Aronson JA, Nystrom LE, Cohen JD. The neural basis of economic decision-making in the ultimatum game. Science. 2003;300:1755–1758.

- 33. Camerer CF. Behavioral Game Theory: Experiments in Strategic Interaction. Princeton, NJ: Princeton University Press; 2011.

- 34. Stephens DW, Krebs JR. Foraging Theory. Princeton, NJ: Princeton University Press; 1987.

- 35. Trueblood JS, Brown SD, Heathcote A, Busemeyer JR. Not just for consumers: context effects are fundamental to decision making. Psychol Sci. 2013;24:901–908.

- 36. Srivastava N, Schrater P. Learning what to want: context-sensitive preference learning. PLoS ONE. 2015;10:e0141129.

- 37. Noguchi T, Stewart N. In the attraction, compromise, and similarity effects, alternatives are repeatedly compared in pairs on single dimensions. Cognition. 2014;132:44–56.

- 38. Ariely D, Norton MI. How actions create – not just reveal – preferences. Trends Cogn Sci. 2008;12:13–16.

- 39. Tversky A, Kahneman D. The framing of decisions and the psychology of choice. Science. 1981;211:453–458.

- 40. Busemeyer JR, Townsend JT. Decision field theory: a dynamic-cognitive approach to decision making in an uncertain environment. Psychol Rev. 1993;100:432–459.

- 41. Kőszegi B, Rabin M. A model of reference-dependent preferences. Quart J Econ. 2006;121:1133–1165.

- 42. Stewart N, Chater N, Brown GD. Decision by sampling. Cogn Psychol. 2006;53:1–26.

- 43. Rigoli F, Friston KJ, Martinelli C, Selaković M, Shergill SS, Dolan RJ. A Bayesian model of context-sensitive value attribution. eLife. 2016;5:e16127.

- 44. Rigoli F, Mathys C, Friston KJ, Dolan RJ. A unifying Bayesian account of contextual effects in value-based choice. PLoS Comput Biol. 2017;13:e1005769.

- 45. Breiter HC, Aharon I, Kahneman D, Dale A, Shizgal P. Functional imaging of neural responses to expectancy and experience of monetary gains and losses. Neuron. 2001;30:619–639.

- 46. Nieuwenhuis S, Heslenfeld DJ, von Geusau NJA, Mars RB, Holroyd CB, Yeung N. Activity in human reward-sensitive brain areas is strongly context dependent. Neuroimage. 2005;25:1302–1309.

- 47. Louie K, Glimcher PW, Webb R. Adaptive neural coding: from biological to behavioral decision-making. Curr Opin Behav Sci. 2015;5:91–99.

- 48. Tremblay L, Schultz W. Relative reward preference in primate orbitofrontal cortex. Nature. 1999;398:704–708.

- 49. Padoa-Schioppa C. Range-adapting representation of economic value in the orbitofrontal cortex. J Neurosci. 2009;29:14004–14014.

- 50. Tobler PN, Fiorillo CD, Schultz W. Adaptive coding of reward value by dopamine neurons. Science. 2005;307:1642–1645.

- 51. Kobayashi S, de Carvalho OP, Schultz W. Adaptation of reward sensitivity in orbitofrontal neurons. J Neurosci. 2010;30:534–544.

- 52. Barlow HB. Possible principles underlying the transformations of sensory messages. In: Rosenblith WA, ed. Sensory Communication. Cambridge, MA: The MIT Press; 1961:217–234.

- 53. Louie K, Glimcher PW. Efficient coding and the neural representation of value. Ann N Y Acad Sci. 2012;1251:13–32.

- 54. Louie K, Khaw MW, Glimcher PW. Normalization is a general neural mechanism for context-dependent decision making. Proc Natl Acad Sci U S A. 2013;110:6139–6144.

- 55. Louie K, De Martino B. The neurobiology of context-dependent valuation and choice. In: Glimcher PW, Fehr E, eds. Neuroeconomics. 2nd ed. Cambridge, MA: Academic Press; 2014:455–476.

- 56. Feng S, Holmes P, Rorie A, Newsome WT. Can monkeys choose optimally when faced with noisy stimuli and unequal rewards? PLoS Comput Biol. 2009;5:e1000284.

- 57. Gao J, Tortell R, McClelland JL. Dynamic integration of reward and stimulus information in perceptual decision-making. PLoS ONE. 2011;6:e16749.

- 58. Chen MY, Jimura K, White CN, Maddox WT, Poldrack RA. Multiple brain networks contribute to the acquisition of bias in perceptual decision-making. Front Neurosci. 2015;9:63.

- 59. Mulder MJ, Wagenmakers EJ, Ratcliff R, et al. Bias in the brain: a diffusion model analysis of prior probability and potential payoff. J Neurosci. 2012;32:2335–2343.

- 60. Mahesan D, Chawla M, Miyapuram KP. The effect of reward information on perceptual decision-making. Paper presented at: International Conference on Neural Information Processing; October 16-21, 2016:156-163; Kyoto, Japan. Cham: Springer.

- 61. Pedersen ML, Frank MJ, Biele G. The drift diffusion model as the choice rule in reinforcement learning. Psychon Bull Rev. 2017;24:1234–1251.

- 62. Chawla M, Miyapuram KP. Influence of previous choice and outcome in a two-alternative decision-making task. Paper presented at: International Conference on Neural Information Processing; November 9-12, 2015:467-474; Istanbul. Cham: Springer.

- 63. Chawla M, Miyapuram KP. Meta-analysis of functional neuroimaging data. Paper presented at: IEEE 2nd International Conference on Image Information Processing; December 9-11, 2013:256-260; Shimla, India. New York: IEEE.

- 64. Chawla M, Miyapuram KP. Comparison of meta-analysis approaches for neuroimaging studies of reward processing: a case study. Paper presented at: International Joint Conference on Neural Networks (IJCNN); July 12-16, 2015:1-5; Killarney. New York: IEEE.

- 65. Chawla M, Miyapuram KP. Common neural coding across domains of decision making identified by meta-analysis. Front Neuroinform. 2016. https://www.frontiersin.org/10.3389/conf.fninf.2016.20.00011/event_abstract.

- 66. Padoa-Schioppa C. Neurobiology of economic choice: a good-based model. Annu Rev Neurosci. 2011;34:333–359.