Abstract

To make evidence-based treatments deliverable, effective, and scalable in community settings, it is critical to develop a workforce that can deliver evidence-based treatments as designed with skill. However, the science and practice of clinician training and consultation lags behind other areas of implementation science. In this paper, we present the Longitudinal Education for Advancing Practice (LEAP) model designed to help span this gap. The LEAP model is a mechanistic model of clinician training and consultation that details how training inputs, training and consultation strategies, and mechanisms of learning influence training outcomes. We first describe the LEAP model and then discuss how key implications of the model can be used to develop effective training and consultation strategies.

Keywords: Training, Consultation, Mechanisms of Learning, Clinician, Community Settings

Critical to the long-term goal of transporting evidence-based treatments (EBTs) to community settings (e.g., community mental health and school-based settings) is developing a workforce that can deliver EBTs with high integrity and skill. A lack of clinician expertise and availability in community settings is a likely barrier to maximizing the effectiveness of EBTs for individuals with psychological and behavioral needs (e.g., Fairburn & Patel, 2014; Kazdin & Rabbitt, 2013). Despite the integral role of the clinician in delivering EBTs, the science and practice of training and consultation lags behind other areas of implementation science (McGaghie, 2010; McHugh & Barlow, 2010). Thus, a challenge for the field is to develop clinician training and consultation strategies that are effective (i.e., produce meaningful, long-lasting changes in clinician behavior; Becker & Stirman, 2011; Chambers, Glasgow, & Stange, 2013), scalable (Fairburn & Patel, 2014; Kazdin & Rabbitt, 2013), and sustainable.

The past decade has witnessed calls for research on clinician training and consultation (e.g., Beidas & Kendall, 2010; Herschell, Kolko, Baumann, & Davis, 2010). Efforts to heed these calls have evaluated the impact that training and consultation strategies have on clinician behavior (e.g., Bearman, Schneiderman, & Zoloth, 2017; Kolko et al., 2012) as well as the downstream impact that training and consultation strategies have on clinical outcomes (e.g., Edmunds, Beidas, & Kendall, 2013; Schoenwald, Sheidow, & Chapman, 2009). Though this work has advanced knowledge, it has also underscored the challenges associated with developing a workforce that can skillfully deliver EBTs. In isolation, the most common training strategies (e.g., treatment protocol, didactic workshop) produce small effects (Bearman et al., 2017; Sholomskas et al., 2005) and need to be followed by ongoing consultation to meaningfully impact clinician behavior (Sholomskas et al., 2005; Walters, Matson, Baer, & Ziedonis, 2005). Moreover, the benefits of training may not be sustained once consultation ends (Chambers & Azrin, 2013; Stirman et al., 2012). Thus, more work is needed to identify effective training and consultation strategies that produce sustainable changes in clinician behavior.

In this paper, we make the case that adopting a mechanistic approach to the study of clinician training and consultation may advance the field. Theoretical models of training and consultation exist (e.g., Edmunds et al., 2013; Milne & Dunkerley, 2010), but most models do not specify the mechanisms through which training and consultation strategies produce changes in clinician behavior (see Bennett-Levy, 2006 and Johnston & Milne, 2012 for notable exceptions). By contrast, theoretical models and research from fields outside mental health and school-based intervention, such as adult learning and industrial-organizational psychology, focus on the identification of such mechanisms and describe the processes by which learning occurs and behavior changes over time (Lyon, Stirman, Kerns, & Bruns, 2011). Thus, we draw on the theory and research from these fields to develop a model of clinician training and consultation that we place within an implementation framework to help inform research efforts designed to span the science-practice gap in community settings.

Definition of Terms: Training, Supervision, and Consultation

Though the terms “training,” “supervision,” and “consultation” are commonly used in the mental health and school intervention fields, they are not consistently defined. Our definition of training is inspired by definitions used in the learning literature (i.e., instruction aimed at providing new skills and knowledge regarding a specific task, such as delivering an EBT; Soderstrom & Bjork, 2015). This definition focuses on the initial training provided to clinicians about a particular treatment model (e.g., EBT), which is considered distinct from ongoing learning opportunities.

In the mental health field, both “consultation” and “supervision” are used to refer to learning opportunities provided after the initial training (Bearman et al., 2013; Falender & Shafranske, 2014). Though consultation and supervision have some similar goals, status differences in the role of the trainee and trainer have distinguished the two terms. Supervisors are often employed at the same agency as the trainee whereas consultants are not typically part of the same agency. In supervision, there often exists a status difference such that the supervisor bears responsibility for the services provided by the trainee. Towards this end, Milne (2007) defines supervision as “relationship-based education, and training that is work-focused and which manages, supports, and evaluates work of colleagues” (p. 439). Supervision may focus on the delivery of an intended treatment, but likely also focuses on other clinical and administrative topics (e.g., risk assessment, attendance, billing; Accurso et al., 2011; Dorsey et al., 2018; Falender & Shafranske, 2014). In contrast, consultants are not typically responsible for care. The focus of consultation is typically limited to the delivery of an intended treatment, which under preferred circumstances is an EBT. To date, very little research has focused on the content, strategies, and outcomes of workplace-based supervision of EBTs provided in community service settings (Dorsey et al., 2018), but the available evidence suggests that relatively little time is devoted to the delivery of treatment (Accurso et al., 2011; Dorsey et al., 2017). Thus, consultation and supervision may differ in the amount of time dedicated to focusing on treatment delivery. In this paper, our focus is on training clinicians to deliver EBTs and not the more general competencies that might be addressed by supervisors (see Accurso et al., 2011; Dorsey et al., 2018; Falender & Shafranske, 2014). Thus, we use the term consultation to refer to ongoing learning opportunities provided by consultants or supervisors designed to support the development of skills and knowledge related to delivering an EBT (Caplan & Caplan, 1993).

What We Know: Training, Consultation, and Supervision Strategies

As the empirical base for treatments moved from efficacy to effectiveness trials (e.g., Bearman et al., 2013; Schoenwald et al., 2009), researchers began focusing on training community and school-based clinicians in EBTs. Initially, researchers used training methods commonly used in efficacy trials: provision of a specific treatment protocol, a workshop-based training, and consultation (Sholomskas et al., 2005). However, some early effectiveness studies found few differences between usual clinical care and the EBT (e.g., Southam-Gerow et al., 2010; Weisz et al., 2009; Weisz et al., 2013). Closer inspection revealed that when clinicians in community settings were trained in EBTs using these methods, they did not always achieve the same levels of treatment integrity (adherence or competence) as clinicians working in research settings (McLeod et al., 2017; Smith et al., 2017). Researchers thus suggested that identifying ways to train clinicians more effectively might help boost treatment integrity in community settings (Powell et al., 2012; Proctor et al., 2011; Wood, McLeod, Klebanoff, & Brookman-Frazee, 2015).

Difficulties transporting EBTs led, in part, to an increased focus on boosting treatment integrity in community settings. The goal of most training and consultation strategies is to foster treatment integrity (Powell et al., 2012). Defined as the quantity (i.e., adherence) and quality (i.e., competence) of EBT delivery, integrity is a key outcome of implementation strategies, such as training and consultation (Proctor et al., 2011). This is because treatment integrity is an indicator of training effectiveness (Crits-Christoph et al., 1998; Rakovshik & McManus, 2010; Sholomskas et al., 2005) and a predictor of clinical outcomes (Hogue et al., 2008; Schoenwald, Sheidow, & Letourneau, 2004).

Many efforts to understand the relation between training, consultation, and treatment integrity have occurred within the context of effectiveness trials. Within this context, a few studies have found a relation between the provision of consultation and higher treatment integrity (e.g., Bearman et al., 2013; Schoenwald et al., 2009). For example, Bearman et al. (2013) found that the use of active strategies in consultation (e.g., modeling, role-play) predicted higher treatment integrity. Though these findings help demonstrate a relation between consultation strategies and treatment integrity, the time and financial costs of the training and consultation models typically used in effectiveness trials have raised concerns about sustainability (e.g., Hogue, Ozechowski, Robbins, & Waldron, 2013). As a result, researchers have sought to develop more cost-effective models.

Learning Collaboratives and Train-the-Trainer models have been commonly used to train clinicians in EBTs (Herschell et al., 2010) given their potential to be time- and cost-effective (Hogue et al., 2013; Kazdin & Rabbitt, 2013) as well as to improve participant EBT knowledge, skill, and attitudes. Initially developed by the Institute for Healthcare Improvement, a Learning Collaborative is a systematic process that includes an engagement and readiness period followed by staggered learning sessions (i.e., training) and action (i.e., consultation) periods (Institute for Healthcare Improvement, 2003). Typically, Learning Collaboratives emphasize learning across organizations and within different levels of an organization (e.g., clinicians, supervisors, and senior leaders) with the goal of supporting change at multiple levels (e.g., organization and clinical levels; Institute for Healthcare Improvement, 2003; Kilo, 1998). At the clinician level, Learning Collaboratives have been associated with changing professional networks (i.e., peer advice seeking; Bunger et al., 2016) as well as improving engagement in clinical training and compliance with program requirements (Nadeem, Weiss, Olin, Hoagwood, & Horwitz, 2016).

Studies of Learning Collaboratives focused on client outcomes have found that this method is associated with improved engagement in behavioral health services (Cavaleri et al., 2006, 2007, 2010; Rutkowski et al., 2010), including initiating (Cavaleri et al., 2006) and sustaining (Cavaleri et al., 2007) gains in initial appointment attendance rates. It also has been associated with improvements in children’s behavioral support services in schools (Stephan, Conners, Arora, & Brey, 2013) and among trauma-focused service providers (Dopp et al., 2017; Lang, Franks, Epstein, Stover, & Oliver, 2015).

In contrast, Train-the-Trainer models involve an EBT expert providing clinical training to a community-based clinician who in turn replicates that training with other clinicians in their organization. Though this approach is widely used (e.g., Hawkins & Sinha, 1998; Rogers, Cohen, Danley, Hutchinson, & Anthony, 1986) and is considered affordable and feasible (e.g., Hoagwood et al., 2018), the effectiveness of the model is not well understood. Within behavioral health, some studies have reported a “watering down” effect from consultants to staff (Shore, Iwata, Vollmer, Lerman, & Zarcone, 1995), whereas others have reported no differences (Martino et al., 2010). Recent studies focused on the effectiveness of the models have found pre- to post-training gains in knowledge about mental health services and self-efficacy for family peer advocates (Hoagwood et al., 2018), reductions in eating disorder risks for undergraduates (e.g., Greif, Becker, & Hildebrandt, 2015), and improvements in opioid prescribing practices (Zisblatt et al., 2017). However, key questions about the effectiveness of this model remain.

A few general features of the training and consultation literature bear mentioning. Most training models are complex, include multiple components (e.g., face-to-face training, consultation), are poorly described, and are variable in their procedures (e.g., inclusion of consultation methods, online training; Roth, Pilling, & Turner, 2010). As a result, there has been little focus on identifying the critical components for producing change in clinician behavior (Chu, 2008; Rakovshik & McManus, 2010). However, a few recent experimental studies have found that consultation provided after training leads to greater knowledge, change in attitudes towards EBTs, and higher treatment integrity than a control group (e.g., Bearman et al., 2017; Martino et al., 2016; Webster-Stratton, Reid, & Marsenich, 2014). This research is important as experimental studies can address key questions related to the impact of training and consultation strategies on mechanisms and training outcomes. That said, more research is needed to identify how training and consultation work to produce change in training outcomes so that the critical training and consultation strategies can be identified.

One reason relatively little research has investigated how and why training and consultation strategies work is that most existing conceptual models do not identify the mechanisms through which training and consultation strategies influence clinician behavior (Edmunds et al., 2013; Milne & Dunkerley, 2010). Two notable exceptions exist. The first is a cognitive model of clinician skill acquisition (Bennett-Levy, 2006) that draws from adult learning theory (Kolb, 1984) and states that skill acquisition in clinicians depends on engaging three cognitive systems (i.e., declarative, procedural, and reflective). Designed to help stimulate research on clinician training, this model was intended to specify the mechanisms by which novice and more experienced clinicians learn. This model, and the research used to inform its development (see Binder, 1993, 1999; Skovholt, 2001), represents an important contribution as information processing research and theory were used to help understand skill acquisition in clinicians. The second is a developmental model designed to delineate how supervisees learn during CBT supervision (Johnston & Milne, 2012). Using grounded theory methodology to study the CBT supervision process, the authors proposed a model of supervisee learning that emphasized the progression through stages that unfolded over the course of supervision. Both models emphasize the importance of cognitive processes and a sequential progression of learning.

In this paper, we attempt to build on and extend this previous work. Our aim is to propose a mechanistic model of clinician learning that fits within an implementation framework. A mechanistic model that leads to theory-based research could help advance the field in at least two ways (Chu, 2008; Kazdin, 2007). First, understanding how mechanisms impact treatment integrity can help identify effective training and consultation strategies. Second, identifying specific effective training and consultation strategies that impact mechanisms of learning could help reduce the number of strategies needed. Adopting a mechanistic approach to the study of clinician training and consultation may thus help advance the field.

Longitudinal Education for Advancing Practice (LEAP) Model

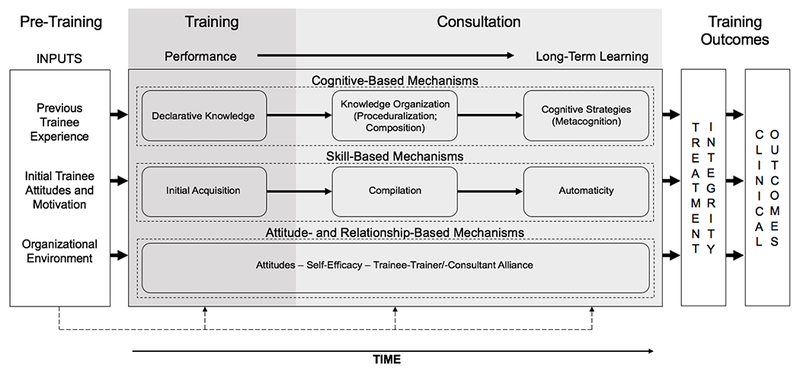

Figure 1 depicts the Longitudinal Education for Advancing Practice (LEAP) model, a mechanistic model that includes training inputs, mechanisms of learning, and training outcomes. The LEAP model synthesizes theory and research from industrial-organizational psychology (Blume, Ford, Baldwin, & Huang, 2010; Ford & Weissbein, 1997), adult learning (Kolb, 1984; Lewin, 1946; Soderstrom & Bjork, 2015), and implementation research (Aarons, Hurlburt, & Horwitz, 2011; Beidas & Kendall, 2010; Herschell et al., 2010). Our goal in introducing the LEAP model is to provide the field with a new mechanistic model of training and consultation to guide and stimulate research in mental health. It is important to note, however, that this is a working model. Though the elements of the LEAP model are based on theory and research, the connections among these elements are largely untested within mental health. The model is thus likely to evolve as research does, or does not, support different elements of the LEAP model.

Figure 1: Longitudinal Education for Advancing Practice (LEAP) Model

The left section lists inputs that influence and moderate training outcomes: previous trainee experience (Bennett-Levy, 2006), initial trainee attitudes and motivation (e.g., attitudes, motivation, self-efficacy; Colquitt, LePine, & Noe, 2000; Sitzmann, Brown, Casper, Ely, & Zimmerman, 2008), and organizational environment (e.g., organizational climate, support; Cannon-Bowers, Salas, Tannenbaum, & Mathieu, 1995; Tracey, Tannenbaum, & Kavanagh, 1995). To achieve the goal of implementing EBTs in community contexts, the field will need to develop a better understanding of how individual and organizational factors present at baseline influence the training process. From a learning perspective, clinicians with previous experience may need less instruction. Of course, some more seasoned clinicians may have prior experience with training models that could impact their attitudes towards a new treatment or motivation to participate in training. To our knowledge, no research in mental health has investigated how these baseline factors, in isolation and in combination with other factors, influence the learning process.

The middle section depicts mechanisms of learning and consultation and is organized longitudinally to represent changes that occur as learning progresses. Following initial training, clinicians demonstrate changes in “performance” (i.e., retention of material and demonstration of skills that can be assessed during training; Soderstrom & Bjork, 2015), and eventually achieve “long-term learning” (i.e., lasting changes in comprehension and skills that lead to long-term retention of information; Soderstrom & Bjork, 2015) with sufficient consultation in how to deliver an EBT. The transition from performance to long-term learning is called “transfer of learning” (Blume et al., 2010) and requires clinicians to take the skills and knowledge from the initial training and determine how to deploy them in the work environment. For clinicians, long-term learning equates to demonstrating high adherence (i.e., delivery of core interventions found in an EBT protocol) and competence (i.e., quality of delivery of the core intervention in an EBT) with clients in treatment sessions (i.e., treatment integrity). The transfer of learning progression can be tracked via cognitive-, skill-, and attitude/relationship-based mechanisms that change with practice and feedback (i.e., consultation).

Within this model, the training and consultation processes act as the engine that drives and supports the advancement of learning over time in a complex mediational relationship. The extent to which the trainee learns depends on the strategies employed first by the trainer and then by the consultant: both must choose strategies that are developmentally appropriate and apply them in adequate quantity and with sufficient quality (Falender & Shafranske, 2014; Johnston & Milne, 2012). For example, to achieve long-term learning, clinicians must have opportunities to practice new skills and receive feedback in order to promote the development of procedural knowledge and compilation.

Only a handful of studies have investigated some relations in the middle portion of the LEAP model. For example, research has demonstrated that training plus consultation leads to more knowledge, better attitudes towards EBTs, and better performance in behavioral rehearsals than training alone (e.g., Beidas, Edmunds, Marcus, & Kendall, 2012; Bearman et al., 2017). As another example, research has found that more experienced clinicians perform better on clinical decision-making tasks (Carpenter et al., 2016). Overall, however, little research has investigated the extent to which these mechanisms change over the course of training and consultation and predict training outcomes.

The right side of the model depicts training outcomes, represented by treatment integrity and clinical outcomes (Falender, 2014; Lewis, Scott, & Hendricks, 2014). Long-term learning in the LEAP model is demonstrated via high treatment adherence and competence with clients in sessions. High treatment integrity is, in turn, hypothesized to predict better clinical outcomes. To date, most research has focused on relations between training and consultation strategies and treatment integrity (e.g., Beidas et al., 2012; Martino et al., 2016; Sholomskas et al., 2005; Webster-Stratton et al., 2014) or client outcomes (Martino et al., 2016; Schoenwald et al., 2009). Though this research is important, it will not advance an understanding of how training and consultation strategies work to promote long-term learning in clinicians. Thus, our goal in proposing the LEAP model is to add a critical piece to existing models by specifying how training and consultation strategies influence treatment integrity via specific mechanisms.
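One way to make the hypothesized chain from strategy to mechanism to treatment integrity concrete is a standard single-mediator regression specification. The sketch below is an illustration only and is not part of the LEAP model as described in this paper. It assumes a single training or consultation strategy variable $X$, a single mechanism of learning $M$ (e.g., a procedural knowledge score), a single treatment integrity outcome $Y$, linear effects, and no moderation by training inputs. All symbols are introduced here for illustration.

```latex
\begin{align}
  M &= i_M + a\,X + e_M                 && \text{(strategy $\rightarrow$ mechanism)} \\
  Y &= i_Y + c'\,X + b\,M + e_Y         && \text{(mechanism $\rightarrow$ integrity, adjusting for strategy)} \\
  \text{indirect effect} &= a\,b, \qquad \text{total effect } c = c' + a\,b
\end{align}
```

Under these simplifying assumptions, the product $ab$ corresponds to the portion of a strategy's effect on treatment integrity that operates through the mechanism, and $c'$ to the portion that does not. Training inputs such as previous experience or organizational climate would, in a fuller specification, enter as moderators of $a$ or $b$.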

Training and Consultation Inputs

Although the main focus of the LEAP model is on mechanisms of learning and training outcomes, the learning and industrial-organizational psychology literatures consistently note that individual and organizational factors act as inputs (i.e., factors present before training or consultation begins) or moderators of the learning process. Moreover, theory and research emphasize that individual and organizational factors both play an important role in the implementation of EBTs (Aarons et al., 2011). An exhaustive review of the factors that might serve as inputs or moderators is beyond the scope of this paper, so we attempt to highlight factors that have particular relevance for mental health and school-based intervention research.

Previous trainee experience.

Adult learning and industrial-organizational psychology research has identified previous trainee experience as a factor that influences learning (Colquitt et al., 2000). Previous experience is important to consider in mental health because training may target both new learners (e.g., graduates in training) and the existing workforce. It is possible that less training may be needed for clinicians who have had previous experience with a similar intervention model (e.g., introduced to the foundational components of cognitive-behavioral therapy [CBT]; Bennett-Levy, 2006) because they may begin training with greater knowledge and skills. Clinicians with previous experience may also require less training due to the development of foundational competencies that facilitate the learning process (e.g., ability to self-assess; Falender & Shafranske, 2014; Johnston & Milne, 2012). In contrast, clinicians who have been trained in an alternate intervention model (e.g., short-term dynamic treatment) may develop attitudes that interfere with learning a new model (e.g., CBT). As implementation strategies can be tailored for clinicians with varying levels of experience, it is important to focus on how experience impacts learning.

Initial trainee attitudes and motivation.

To date, research has identified individual factors that are relevant to clinicians, including motivation, self-efficacy, and attitudes (Gallo & Barlow, 2012). Individual motivation to participate in training and consultation is one of the most consistent predictors of training outcomes in the learning literature (e.g., Bauer, Orvis, Ely, & Surface, 2016; Colquitt et al., 2000). Self-efficacy in performing a task and an individual’s attitudes derived from previous experience with a task are also associated with training outcomes (Colquitt et al., 2000). Given the consistency of the findings for motivation, self-efficacy, and attitudes towards the training in other fields, researchers may want to study these factors when evaluating the impact of training and consultation on clinician training outcomes. Similar to the notion that trainees with higher initial levels of knowledge and skills might need less training, it is possible that individuals who begin training with more positive attitudes or more motivation might be trained more efficiently.

Organizational environment.

The importance of organizational factors as training inputs and moderators of training outcomes cannot be overstated (Cannon-Bowers et al., 1995). Prior to training, the culture and climate of an organization can influence an individual’s self-efficacy and motivation (Cannon-Bowers et al., 1995). The level of commitment an organization demonstrates for a particular training (e.g., expectations set for training) can also play a role in determining training outcomes (Colquitt et al., 2000; Noe & Colquitt, 2002; Sitzmann & Ely, 2011). In community settings, organizations that have a more positive climate (e.g., Ditty, Landes, Doyle, & Beidas, 2015) and culture (e.g., Glisson et al., 2008) and minimize barriers to implementation (e.g., unsupportive leadership, high staff turnover, insufficient financial resources) have better training outcomes (Brunette et al., 2008). Given the consistent findings linking organizational factors to training outcomes, researchers should attend to organizational culture and climate when seeking to understand the impact of training on clinician learning mechanisms and training outcomes.

A Learning Perspective on Training and Consultation

Critical to developing effective clinician training and consultation strategies is understanding how to match the appropriate learning strategies to the type of skills required for EBT delivery. Towards this end, the adult learning literature distinguishes between “closed” and “open” skills (Yelon & Ford, 1999). Closed skills are simple tasks (e.g., filling out billing paperwork) that can be replicated in training (e.g., mechanics can be provided with an engine to repair). Open skills, in contrast, are more complex as they can be completed in multiple ways (Yelon, Ford, & Bhatia, 2014). For example, teaching a manager how to motivate employees represents an open skill as various strategies are needed for different employees (Yelon & Ford, 1999). Open skills require trainees to perform tasks in flexible ways depending on contextual cues. Thus, open skills comprise skill-based (i.e., how to do a task) and cognitive-based (i.e., when to do the task) dimensions.

EBT delivery is best considered an open skill. Competent delivery of an EBT depends, in part, on the skillful delivery of specific components of an intervention adjusted to meet the unique needs of a client (Barber, Sharpless, Klostermann, & McCarthy, 2007; Chu & Kendall, 2009). Considering EBT delivery an open skill has important implications for training and consultation as learning strategies must facilitate the development of the skill- (e.g., steps involved in an exposure for anxiety) and cognitive-based (e.g., knowing how to adjust the delivery of an exposure based on client response) dimensions required to deliver an EBT.

Transfer of learning: Performance versus long-term learning.

The goal of instruction in open skills is to achieve a relatively permanent change in the skills and knowledge that support long-term learning (Soderstrom & Bjork, 2015). To achieve this goal, the trainee must transfer what is learned from the initial training to the work environment. This process unfolds over time, is referred to as transfer of learning, and is depicted in Figure 1 by the arrow linking performance to long-term learning. Performance is defined as a temporary fluctuation in skills and knowledge that can be measured during or immediately after initial training. Long-term learning, in turn, is defined as lasting changes in skills and knowledge.

Soderstrom and Bjork (2015) note that the ultimate goal of instruction is to provide trainees with skills and knowledge that are robust and flexible. Robust refers to the extent to which the trainee retains the skills and knowledge following periods of disuse. Flexible refers to whether the trainee can access and use the skills and knowledge across relevant contexts (e.g., different settings, client presentations). To reflect the longitudinal nature of learning, the LEAP model depicts transfer of learning as the change in skills and knowledge that occurs as a trainee progresses from performance to long-term learning (see Figure 1). Thus, in this model, the goal of training and consultation is to produce changes in the comprehension, understanding, and skills of clinicians in a way that will lead to the long-term retention and flexible delivery of an EBT across contexts.

Framework for Training Evaluation

The LEAP model builds on work by Kraiger, Ford, and Salas (1993) who proposed a framework for training evaluation grounded in cognitive, social, and instructional psychology. Building from previous work (Bennett-Levy, 2006; Johnston & Milne, 2012), our goal was to include mechanisms of learning and provide a framework that could be used to evaluate the success of training and consultation strategies. We next define each mechanism of learning, discuss how each mechanism can be assessed, and identify some tools that have been used to assess each mechanism in mental health research.

Cognitive-based mechanisms.

Learning how to deliver an EBT involves acquiring new knowledge and developing ways to organize and apply the knowledge. Knowledge acquisition is dynamic and evolves over time as information is obtained, organized, and applied (Kolb, 1984; Kraiger et al., 1993). As trainees gain experience, they form heuristics that guide organization and use of knowledge across contexts. Consistent with Kraiger et al. (1993), we draw on a model proposed by Gagne (1984) that places cognitive mechanisms into three longitudinal categories, reflecting changes expected to be seen in trainees over time: (a) declarative knowledge, (b) knowledge organization, and (c) cognitive strategies.

Declarative knowledge.

The first step in the learning process is acquiring declarative knowledge (i.e., factual knowledge and information about an EBT). Changes in declarative knowledge are expected to occur as a result of an initial training, or soon thereafter. Tests of declarative knowledge are one of the most common means to assess training outcomes and often focus on assessing the amount of knowledge, recall accuracy, and accessibility of knowledge (Kraiger et al., 1993). In mental health and school intervention research, tests of declarative knowledge typically use multiple choice questions to assess recall of core concepts related to an EBT treatment protocol (e.g., Beidas, Koerner, Weingardt, & Kendall, 2011; see Simons, Rozek, & Serrano, 2013 for a review).

Knowledge organization.

The transfer of learning process begins as trainees form procedural knowledge (i.e., knowledge about how to deliver an EBT) and decision-making heuristics that are used to organize and guide the application of emerging knowledge (Rouse & Morris, 1986). Procedural knowledge and heuristics develop as trainees practice applying lessons from training as this allows them to determine how skills and knowledge can be used together to achieve goals. Two cognitive processes, proceduralization and composition (Anderson, 1982), are instrumental to the formation of heuristics. In proceduralization, a trainee identifies and organizes the discrete steps required to deliver an EBT into a larger routine, whereas in composition, a trainee groups steps by linking them into a more complex process. Applying procedural knowledge and developing heuristics requires cognitive resources. As a result, trainees are slow to recognize problems and identify potential solutions. Assessment involves comparing how trainees and experts organize knowledge (e.g., cognitive maps; Goldsmith, Johnson, & Acton, 1991). Although this domain is rarely assessed in clinical research (Rakovshik & McManus, 2010), a few instruments exist. For example, the Assessment of Clinical Decision-Making in Evidence-Based Treatment for Child Anxiety and Related Disorders (ACE CARD; Carpenter et al., 2016) uses clinical vignettes to assess both procedural knowledge and metacognitive strategies (i.e., how to apply CBT interventions to different clinical situations) related to delivering CBT for youth anxiety and depression.
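The comparison of trainee and expert knowledge organization described above can be illustrated with a small sketch. The code below is an assumption made for illustration, not the scoring procedure of the ACE CARD or of any instrument cited here. It treats each cognitive map as a set of undirected links between concepts and scores agreement as the average overlap (intersection over union) of each concept's linked neighbors. The function names and the example concepts are hypothetical.

```python
from collections import defaultdict


def neighbors(edges):
    """Map each concept to the set of concepts it is linked to (undirected)."""
    adj = defaultdict(set)
    for a, b in edges:
        adj[a].add(b)
        adj[b].add(a)
    return adj


def map_similarity(trainee_edges, expert_edges):
    """Average neighborhood overlap between two concept maps.

    Returns a value in [0, 1]; 1.0 means every concept has identical links
    in both maps. Illustrative index only, not a validated measure.
    """
    t_adj = neighbors(trainee_edges)
    e_adj = neighbors(expert_edges)
    concepts = set(t_adj) | set(e_adj)
    if not concepts:
        return 0.0
    scores = []
    for concept in concepts:
        t = t_adj.get(concept, set())
        e = e_adj.get(concept, set())
        # Each concept appears in at least one edge, so the union is non-empty.
        scores.append(len(t & e) / len(t | e))
    return sum(scores) / len(scores)


# Hypothetical maps of how the components of an exposure-based EBT relate.
expert_map = [
    ("psychoeducation", "fear hierarchy"),
    ("fear hierarchy", "exposure"),
    ("exposure", "homework"),
]
trainee_map = [
    ("psychoeducation", "exposure"),
    ("fear hierarchy", "exposure"),
    ("exposure", "homework"),
]

similarity = map_similarity(trainee_map, expert_map)
```

In a study, an index of this kind could be computed at several points during consultation to track whether a trainee's organization of knowledge converges on that of an expert; whether such convergence predicts treatment integrity is an empirical question.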

Cognitive strategies.

The last cognitive domain focuses on the development and application of higher-order cognitive strategies (Anderson, 1982; Kanfer & Ackerman, 1989). As trainees gain experience they develop faster, more sophisticated heuristics for organizing information. As trainees continue to practice and gain experience with complex, open skills, they internalize the application of knowledge, forming habits. Once knowledge is internalized, fewer cognitive resources are required to perform a task, leaving the individual free to apply their attention elsewhere (e.g., tracking client emotion while conducting an intervention procedure). Metacognition, the awareness and regulation of one’s own cognitive processes and of the effects of one’s behavior on others, is developed during this phase (Brown, 1975; Leonesio & Nelson, 1990). Metacognition allows trainees to track progress towards accomplishing goals, determine when an approach is not working, and problem-solve while delivering interventions during a treatment session. Bennett-Levy (2006) called this the “reflective” system and suggested that it plays a key role in helping clinicians determine how and when to use specific clinical interventions. Cognitive strategies play a role in helping clinicians generalize knowledge to new situations, which is central to becoming a competent clinician. The assessment of cognitive strategies involves determining if trainees are (a) aware of the steps required to accomplish a task, and (b) able to monitor their progress. Though it may be possible to use a clinical vignette approach, such as the ACE CARD (Carpenter et al., 2016), to assess cognitive strategies, existing tools in EBT research do not attempt to distinguish between the declarative knowledge, knowledge organization, and cognitive strategies dimensions.

Skill-based mechanisms.

This dimension focuses on the technical skills associated with performing a task. In terms of EBT delivery, this includes skills involved in the delivery of the therapeutic interventions (e.g., how to construct a goal chart). Individuals developing open skills must learn how to perform specific skills and how to sequence those skills to accomplish a task (Weiss, 1990). Learning new skills necessitates the acquisition of new knowledge, so developing open skills requires that changes in cognitive- and skill-based mechanisms co-occur. For skill acquisition, this process is represented in a three-phase model (Anderson, 1982): (a) initial skill acquisition, (b) compilation, and (c) automaticity. As with the cognitive mechanisms, the skill-based mechanisms develop longitudinally.

Initial skill acquisition.

Initial skill acquisition occurs as trainees start to develop procedural knowledge, which is required to reproduce a skill. Following the initial training, trainees may be able to demonstrate some facets of a skill, but only in a rudimentary way. This is because during skill acquisition trainees require successive cognitive processing of tasks as they must rely on cognitive resources (e.g., working memory, mental rehearsal) to produce skills (Weiss, 1990). At this stage, trainees have difficulty integrating the skill and cognitive components of a new task and thus have trouble multi-tasking, recognizing errors, and identifying when a skill is not successful. Feedback at this stage is particularly conducive to facilitating skill development. Performance-based role-plays are a common method of evaluating skill acquisition in mental health research (Beidas et al., 2011; Dimeff et al., 2009). These role-plays assess skill in delivering EBT interventions in a simulated clinical setting and oftentimes require a clinician to prepare a confederate for a specific therapeutic intervention (e.g., an exposure). The role-plays are typically recorded and then coded using a treatment integrity system (e.g., Sholomskas et al., 2005) to assess skill acquisition.

Compilation.

As trainees gain experience, errors are less frequent and behavior becomes increasingly fluid as they rely less on mental rehearsal and working memory. Two cognitive processes noted above, proceduralization and composition, help facilitate the transition from skill acquisition to compilation (Anderson, 1982). Trainees become more accurate in grouping discrete behaviors into larger categories, allowing them to see how the components relate to the overarching task goals. For example, clinicians learning to implement positive behavioral supports for children in elementary school classrooms may learn to conceptually group separate therapeutic interventions, such as positive reinforcement and extinction, together as they observe how the combination of interventions contributes to improvements in compliance and engagement. These processes also allow trainees to anticipate the need for a certain skill and generalize skills to new situations. Compilation is most typically assessed via performance-based role-plays (e.g., Beidas et al., 2012; Sholomskas et al., 2005), videotaped intervention sessions, or structured situational interviews (e.g., asking about the steps required to complete a task).

Automaticity.

Trainees progress to automaticity after they have gained sufficient experience performing a skill. Skill performance is fluid, accomplished, and individualized as the trainee has obtained mastery of the skills and knowledge needed to perform the task. Automaticity thus represents a shift from controlled to automatic processing (Schneider & Shiffrin, 1977), whereby controlled cognitive processing is totally or nearly removed, allowing the trainee to attend to other contextual features. Automaticity can be assessed by requiring a trainee to perform a task while simultaneously doing another activity and judging how their speed and accuracy are impacted (e.g., a performance-based role-play with a very difficult confederate). To our knowledge, no tests of automaticity exist in community mental health or school-based intervention research.
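The dual-task logic described above can be made concrete with a proportional dual-task cost. The following Python sketch is illustrative only: the cost formula is a common convention in the cognitive psychology literature rather than a measure proposed in the LEAP model, and the function name `dual_task_cost`, the trainee labels, and the scores are hypothetical.

```python
def dual_task_cost(single_task_score: float, dual_task_score: float) -> float:
    """Proportional drop in performance when a second demand is added.

    Scores are assumed to be "higher is better" (e.g., a coded rating from
    a role-play). 0.0 means no drop; 0.25 means a 25% drop.
    """
    if single_task_score <= 0:
        raise ValueError("single_task_score must be positive")
    return (single_task_score - dual_task_score) / single_task_score


# Hypothetical ratings: a standard role-play versus a role-play with a
# very difficult confederate.
novice_cost = dual_task_cost(single_task_score=4.0, dual_task_score=2.0)
expert_cost = dual_task_cost(single_task_score=6.0, dual_task_score=5.7)
```

Under these assumptions, a smaller cost would be read as performance that depends less on controlled processing, which is the pattern expected as a trainee approaches automaticity.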

Attitude and relationship-based mechanisms.

The third dimension focuses on attitude and relationship processes that might change as a result of training. Gagne (1984) argued that internal states (i.e., affective states) that influence behavior should be considered to be a training outcome (e.g., positive feelings). Kraiger et al. (1993) broadened the category to include such cognitive-affective factors as attitudes and self-efficacy. Though some of the factors falling within the dimension were traditionally studied as predictors of training outcomes, Kraiger et al. (1993) noted that these variables could, and in some cases should, change due to training. We thus followed the recommendations of Kraiger et al. (1993) and postulated that attitude and self-efficacy dimensions can change as a result of training and represent a key node in the transfer of learning process in clinician training (see Figure 1). In selecting attitude- and relationship-based components that might represent a target of training and consultation, we relied upon models and findings from the clinical supervision literature (e.g., Falender & Shafranske, 2014; Lewis et al., 2014) and implementation science (e.g., Aarons et al., 2011).

Attitudes.

Some training programs attempt to change trainee attitudes. For example, some police training programs have the explicit goal of promoting attitude change, since police act as socialization agents (Feldman, 1984; Kraiger et al., 1993). Given that attitudes towards EBTs may influence uptake (Addis & Krasnow, 2000), clinician training and consultation may explicitly address trainee attitudes towards EBTs. However, even if training does not purposefully target clinician attitudes, changes may still result from training and consultation (e.g., Bearman et al., 2017). For example, when new behavioral patterns are found to be effective in the work environment, a shift in attitudes is sometimes observed (Goldstein & Sorcher, 1974; Kraut, 1976). As another example, a positive shift in attitudes has been observed in clinicians following EBT training and consultation (Allen, Wilson, & Armstrong, 2014; Bearman et al., 2017). We thus included attitudes as an indicator of learning in the LEAP model, as attitudes may shift over the course of training and consultation.

Self-efficacy.

Self-efficacy has long been a variable of interest in a number of fields. Defined as the perception an individual holds of their ability to perform a specific task (Bandura, 1983), the level of an individual’s baseline self-efficacy is believed to impact training outcomes. However, self-efficacy can also change as an individual gains experience applying skills and knowledge. In fact, some models of supervision explicitly state that a goal of supervision is to enhance clinician self-efficacy (Falender & Shafranske, 2014). Given the robust relation between self-efficacy and task performance, it seems important to gauge whether self-efficacy changes as a result of training. It is also possible that self-efficacy may predict long-term learning–for example, an individual’s self-efficacy at the end of consultation may determine, in part, the extent to which they continue to use an EBT with clients (Bandura, 1983; Kraiger et al., 1993; Marx, 1982). For this reason, self-efficacy is a key component of the attitude and relationship-based factors in the LEAP model.

Trainee-consultant alliance.

Emerging evidence suggests that the quality of the trainee-consultant relationship may influence training outcomes (Johnson, Pas, Bradshaw, & Ialongo, 2017). Originally conceived as a construct relevant to progress in psychosocial intervention, the alliance has also been applied to the trainee-consultant relationship (e.g., Falender & Shafranske, 2014; Johnson, Pas, & Bradshaw, 2016). The field has yet to establish a singular definition of the alliance for training and consultation (Falender & Shafranske, 2014; Johnson et al., 2016). At present, most definitions emphasize an affective component (e.g., affective quality of the trainee-consultant relationship) and a task-oriented component (e.g., agreement between the trainee and consultant on the tasks and goals of consultation; see Johnson et al., 2016 for a discussion). This research emphasizes that a strong alliance may motivate trainees to engage in learning activities, especially for consultation models that emphasize collaboration (e.g., collaborative coaching models; Frank & Kratochwill, 2014). The alliance can be used to operationalize the degree to which trainees and consultants trust each other and agree about the goals of training. Conceptual models, particularly of supervision, strongly emphasize the importance of the alliance (Falender & Shafranske, 2014; Roth et al., 2010). Moreover, some preliminary research in school settings suggests that the quality of the alliance predicts better implementation and training outcomes (Johnson et al., 2017; Wehby et al., 2012). For these reasons, measuring the alliance from the perspective of both the trainee and consultant may help clarify if the alliance changes as a result of training and the extent to which the alliance predicts training outcomes.

Training outcomes.

Treatment integrity based on a clinician’s delivery of an EBT with a client in a specific community context (e.g., school clinic) represents the ultimate training outcome. This is because the extent to which a clinician can demonstrate high treatment integrity with a client in a specific community context represents the degree to which transfer of learning has occurred. Treatment integrity is a broad term used to mean the degree to which an intervention was delivered as intended (Perepletchikova & Kazdin, 2005; Southam-Gerow & McLeod, 2013). The field has yet to settle on a single definition of treatment integrity (Hagermoser Sanetti & Kratochwill, 2009; McLeod, Southam-Gerow, Tully, Rodríguez, & Smith, 2013) and a number of definitions and conceptual models have been proposed (e.g., Dane & Schneider, 1998; Fixsen, Naoom, Blasé, Friedman, & Wallace, 2005; Jones, Clarke, & Power, 2008; Waltz, Addis, Koerner, & Jacobson, 1993). Two components found throughout the literature are particularly relevant to assessing training outcomes: adherence and competence. Adherence refers to the extent to which the clinician delivers interventions indicated in the protocol whereas clinician competence refers to the level of skill and degree of responsiveness demonstrated by the clinician when delivering the technical and relational elements of an intervention. Each component is thought to capture a unique aspect of the content and quality of treatment delivery that together, or in isolation, may be responsible for positive change in target outcomes (Perepletchikova & Kazdin, 2005).
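To illustrate how adherence and competence can diverge for the same session, the Python sketch below scores a hypothetical coded session. It is a simplification under stated assumptions, not an existing treatment integrity coding system: the intervention names, the 1-7 rating scale, and the functions `adherence` and `competence` are all invented for illustration, and responsiveness is not modeled.

```python
from statistics import mean
from typing import Dict, Optional, Set


def adherence(prescribed: Set[str], delivered: Dict[str, int]) -> float:
    """Proportion of protocol-indicated interventions that were delivered."""
    if not prescribed:
        raise ValueError("prescribed must contain at least one intervention")
    return len(prescribed & set(delivered)) / len(prescribed)


def competence(prescribed: Set[str], delivered: Dict[str, int]) -> Optional[float]:
    """Mean skill rating over the protocol-indicated interventions delivered.

    Returns None when none of the prescribed interventions were delivered.
    """
    ratings = [rating for name, rating in delivered.items() if name in prescribed]
    return mean(ratings) if ratings else None


# Hypothetical coded session: intervention name -> skill rating (assumed 1-7 scale).
prescribed = {"psychoeducation", "exposure", "homework review"}
delivered = {"exposure": 5, "homework review": 3, "relaxation": 6}

session_adherence = adherence(prescribed, delivered)    # 2 of 3 prescribed interventions
session_competence = competence(prescribed, delivered)  # mean of ratings 5 and 3
```

Keeping the two scores separate reflects the point that each component may capture a unique aspect of treatment delivery.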

Client outcomes represent a distal training outcome (Lewis et al., 2014). The ultimate goal of clinician training and consultation is to improve the quality of care in community settings. Though the evidence is limited, some findings suggest a relation between supervision characteristics and client outcomes (Schoenwald et al., 2009). Thus, it is important for research to consider the impact of training and consultation on both treatment integrity and client outcomes.

Training and Consultation Strategies

Though the main focus of this paper is presenting the LEAP model, here we present training and consultation strategies that map onto the learning mechanisms. With psychosocial treatments, it can be difficult to draw conclusions regarding the impact of specific training and consultation strategies on training outcomes as most studies use multiple strategies (Rakovshik & McManus, 2010). We thus use findings from adult learning, industrial-organizational psychology, and mental health research to identify training and consultation strategies that are likely to impact training outcomes via the cognitive- and skill-based mechanisms specified in the LEAP model. Our recommendations take into account (a) recent efforts to specify and evaluate supervision microskills (e.g., summarizing, giving feedback, experiential learning; Bearman et al., 2017; James, Milne, & Morse, 2008); (b) evidence that passive strategies (e.g., lectures, reading material) may be important for the acquisition of declarative knowledge whereas active strategies (e.g., role-playing, Socratic questioning) may facilitate acquisition of procedural knowledge (Bennett-Levy, 2006); and (c) the view that the opportunity for feedback regarding performance is critical for learning and the development of meta-cognitive skills (Falender & Shafranske, 2014; Lewis et al., 2014). That said, research has not investigated the influence of each specific strategy listed below on mechanisms or training outcomes. The strategies below are intended to help facilitate specific aspects of the learning process during training and consultation, but it is possible that some of the strategies listed below may be useful in either training or consultation. Indeed, it is likely that the list of strategies that are effective for training and consultation will evolve as research progresses.

Training strategies.

In community mental health and school-based intervention settings, most training for EBTs occurs in multi-day workshops that introduce clinicians to the treatment model and the core interventions. Training workshops vary in the extent to which more passive (e.g., lectures) and active (e.g., role-plays) learning strategies are utilized. The literature suggests that a combination of passive and active strategies is important to present the foundational information regarding the EBT model (declarative knowledge) and provide opportunities for clinicians to practice applying the new knowledge in a variety of situations (i.e., start forming procedural knowledge; Beidas & Kendall, 2010; Herschell et al., 2010). Thus, a key goal of training is to establish declarative knowledge and provide opportunities to form procedural knowledge relevant to delivering an EBT in the clinician’s work setting. Towards this end, we have identified five core strategies (see Table 1) that blend passive and active strategies designed to help clinicians acquire declarative knowledge and initial skills (Rakovshik & McManus, 2010; Salas, Tannenbaum, Kraiger, Smith-Jentsch, & Thayer, 2012): (a) Engage learners in self-regulation activities: cognitive strategies used during training to promote sustained attention on the learning process (Sitzmann & Ely, 2011). These skills include knowing how to self-monitor performance and compare progress to an end goal (e.g., “Are you learning what you need to learn?”, “Could you take a test on this material?”, “Would you know how to deliver this intervention to a client?”); (b) Behavioral rehearsal: opportunities to practice core therapeutic interventions contained in an EBT and receive feedback from a trainer regarding initial skill acquisition; (c) Cognitive rehearsal: opportunities to practice applying knowledge regarding the treatment model and core therapeutic interventions to sample cases (e.g., to develop a case formulation) and receive feedback from a trainer regarding declarative knowledge; (d) Error training: placing trainees into increasingly difficult behavioral and cognitive rehearsals in which errors are likely to occur in order to provide opportunities to identify errors and develop cognitive and emotion management strategies to deal with errors. Teaching clinicians how to anticipate and deal with common errors that occur when delivering an EBT helps to promote procedural knowledge and thus facilitates transfer of learning; (e) Approximate work conditions: the use of examples and confederates that represent the types of clients seen in the work environment so that the practice of skills approximates conditions seen in typical clinical practice. Over the course of training, information should be presented in multiple formats (information, model, feedback) and steps should be taken to ensure that active and passive learning strategies are used (Taylor, Russ-Eft, & Chan, 2005; Zapp, 2001) in order to cover a wide range of learning styles (Franzoni & Assar, 2009; Taylor et al., 2005).

Table 1.

Training and Consultation Strategies

| Strategy | Definition | Targeted mechanism: Cognitive | Targeted mechanism: Skill |
|---|---|---|---|
| Training Strategies | | | |
| Self-regulation activities | Cognitive strategies used to promote sustained attention to the learning process by helping clinicians learn how to self-monitor performance, compare progress to an end goal, and adjust learning effort/strategies | X | |
| Behavioral rehearsal | Engage in role-plays to practice core EBT interventions | X | |
| Cognitive rehearsal | Engage in activities designed to help clinicians practice developing and revising case formulations | X | |
| Error training | Placing clinicians in increasingly difficult behavioral and cognitive rehearsals in which errors are likely to occur to provide opportunities to identify and develop strategies to deal with common errors | X | X |
| Approximate work conditions | Constructing behavioral and cognitive rehearsals on the types of clients typically seen in the work environment | X | X |
| Consultation Strategies | | | |
| Behavioral rehearsal | Engage in role-plays to practice core EBT interventions | X | |
| Cognitive rehearsal | Engage in dialog in which the case formulation is discussed and applied to the specific circumstances of the client | X | |
| Variability & distribution of practice | Vary the conditions of practice of behavioral and cognitive rehearsals over time | X | X |
| Retrieval practice | Test and provide feedback on clinician behavioral skills, cognitive skills, and knowledge | X | X |
| Difficult cognitive- & skill-based practice | Engage clinicians in increasingly challenging behavioral and cognitive rehearsals with the types of clinical situations that they are likely to encounter in their clinical setting | X | X |

Consultation.

A key goal of consultation is to promote transfer of learning by providing an opportunity for a clinician to practice and receive feedback on treatment delivery. Though consultation is considered critical to transfer of learning, there is little consensus in the mental health field regarding the structure and focus of consultation. That said, to promote long-term learning, consultation must help clinicians further develop procedural knowledge along with the meta-cognitive skills required to apply that knowledge and those skills to novel situations (Bennett-Levy, 2006; Falender & Shafranske, 2014). When promoting transfer of training, it may help for consultation meetings to include agenda setting, case material review, planning for the next intervention session, and a meeting summary. Within this structure, we believe it is important to embed two core active strategies (Rakovshik, McManus, Vazquez-Montes, Muse, & Ougrin, 2016; Sholomskas et al., 2005): (a) Behavioral rehearsals of the core therapeutic interventions found in the EBT, and (b) Cognitive rehearsal in which the case formulation for a client is discussed and applied to the particular circumstances of the client. Across consultation, the delivery of these active strategies should be guided by three principles from adult learning theory (Rakovshik & McManus, 2010; Soderstrom & Bjork, 2015): (a) Variability and distribution of practice–this principle involves having clinicians engage in the skill- and cognitive-based tasks across different conditions (e.g., asking a clinician to formulate a case conceptualization based on a child with depression, with or without conduct problems), over time (i.e., rehearsal of specific skills is spread over weeks), and with multiple clients (Jackson, Herschell, Schaffner, Turiano, & McNeil, 2017). 
Typical consultation focuses on a single set of therapeutic interventions each week related to the content of the treatment protocol to be delivered next; however, massed practice (i.e., intense practice of the same skill in a single meeting) does not promote long-term learning (Soderstrom & Bjork, 2015). To optimize long-term learning it thus may be important to distribute cognitive and behavioral rehearsals related to core therapeutic interventions over different conditions and time; (b) Retrieval practice–this principle involves asking clinicians to demonstrate core cognitive- and skill-based tasks in different conditions to help promote long-term learning. Research indicates that testing trainees over time helps to solidify learning gains and promote long-term learning (Soderstrom & Bjork, 2015); (c) Difficult cognitive- and skill-based practice–this principle involves posing “what if” questions regarding complex clinical situations in cognitive rehearsals and engaging clinicians in challenging behavioral rehearsals (e.g., by varying the level of difficulty of the client) to help with skill acquisition and the development of active encoding processes. This strategy is similar to error training in that the activity encourages clinicians to take existing procedural knowledge and apply it to new situations to help promote the development of meta-cognitive skills required for long-term learning (see Table 1).
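The variability and distribution of practice principle can be sketched as a simple consultation schedule. The Python example below is a hypothetical illustration, not a procedure drawn from the cited literature: the function `distributed_schedule`, the skill names, and the rotation rule are assumptions chosen only to show rehearsal of each skill spread over weeks and paired with changing conditions.

```python
from typing import List, Sequence, Tuple


def distributed_schedule(
    skills: Sequence[str], conditions: Sequence[str], weeks: int
) -> List[Tuple[int, str, str]]:
    """Return (week, skill, condition) rows for spaced, varied rehearsal.

    Skills rotate week to week, so practice of any one skill is spread over
    time rather than massed in a single meeting. Each time a skill comes
    around again, it is paired with the next condition in the list.
    """
    if not skills or not conditions:
        raise ValueError("skills and conditions must not be empty")
    schedule = []
    for week in range(weeks):
        skill = skills[week % len(skills)]
        condition = conditions[(week // len(skills)) % len(conditions)]
        schedule.append((week + 1, skill, condition))
    return schedule


# Hypothetical consultation plan.
schedule = distributed_schedule(
    skills=["case formulation", "goal chart"],
    conditions=["depression only", "depression with conduct problems"],
    weeks=4,
)
for row in schedule:
    print(row)
```

In this sketch each skill is revisited two weeks later under a different clinical presentation. A massed schedule would instead place every rehearsal of a skill in the same meeting.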

Implications of the LEAP Model for Future Research

The past decade has witnessed increased pressure for researchers and stakeholders from community settings to work together to implement EBTs. A goal of implementation research is to increase the speed of information transmission between the science and practice of clinical care. Powell et al. (2012) identified six broad implementation strategies used to accomplish this goal: planning, educating, financing, restructuring, quality management, and attending to policy contexts. The LEAP model has the potential to inform the education strategy and thus aid efforts to improve the quality of care in community settings.

Education targeted at clinicians is a key implementation strategy used to impact the clinical workforce. One way this strategy can be enacted is via graduate training (Baker, McFall, & Shoham, 2008; Frazier, Bearman, Garland, & Atkins, 2014). Several efforts have been made to incorporate training in EBTs into graduate curriculum (e.g., Barth, Kolivoski, Lindsey, Lee, & Collins, 2013; Weisz, Ng, & Bearman, 2014). However, little guidance from the literature is provided on how best to structure training and consultation within a graduate curriculum to cultivate trainee EBT competence.

Another target of education strategies is the existing workforce. This involves clinicians who may have been trained in and regularly practice non-evidence-based strategies. Further, previous exposure to and training in EBTs is likely to vary across age cohorts (e.g., Karekla, Lundgren, & Forsyth, 2004) and by academic discipline (Barth et al., 2013). Thus, many trainees from the workforce are asked to adopt a novel set of interventions that may contradict their current approach and to do so while they are actively engaged in clinical work. Addressing the training needs of this population requires strategies that can be delivered in community settings, while surmounting the existing perceptual (e.g., attitudes toward EBTs) and organizational barriers (e.g., competing time demands; Accurso et al., 2011; Dorsey et al., 2017) to transfer of learning and sustained EBT delivery.

Given existing gaps in our knowledge base, a challenge going forward for implementation research is to develop feasible, efficient, and effective education strategies for students and seasoned clinicians (Chu, 2008; Rakovshik & McManus, 2010). To accomplish these goals, evidence-based clinician training and consultation strategies are needed. Yet, as a field, mental health research is far from achieving this goal. Adopting a mechanistic approach to training and consultation may contribute to the goals of implementation science. The LEAP model has the potential to identify the specific training and consultation strategies needed to support educational opportunities offered to graduate students or the existing workforce. As noted earlier, the LEAP model is intended to help guide programmatic research that will lead to the identification of feasible, efficient, and effective training and consultation strategies. To achieve this goal, the following four key questions raised by the model need to be addressed:

Which cognitive- and skill-based changes are required for learning? Research and theory suggest that both initial training and consultation are required for learning (Beidas & Kendall, 2010; Herschell et al., 2010; Walters et al., 2005). However, it is not clear how changes in cognitive- and skill-based mechanisms relate to long-term learning. Thus, one goal is to identify the specific mechanistic changes required to promote clinician long-term learning. Understanding the role of each mechanism may allow us to narrow the range of possible mechanisms that need to be targeted, representing an important step toward creating efficient and feasible training and consultation strategies.

What are the effective training and consultation strategies that promote change in cognitive- and skill-based mechanisms? Specific training and consultation strategies seem to be critical to long-term learning (Soderstrom & Bjork, 2015). However, it is not clear which training and consultation strategies promote changes in specific cognitive- and skill-based mechanisms. Thus, a goal for research is to identify specific strategies that support the development of skill- and cognitive-based mechanisms.

What training and consultation strategies help promote long-term learning? Learning is clearly a longitudinal process (Ford & Weissbein, 1997; Soderstrom & Bjork, 2015), but how long it takes specific training and consultation strategies to promote learning is not clear. Clarifying the typical temporal parameters of long-term learning in clinicians is critical to informing education strategies.

What individual and organizational factors influence long-term learning? Research and theory suggest that individual and organizational factors may influence training outcomes, but how specific factors influence the learning process is unclear. Better understanding of how individual (experience, attitudes, motivation) and organizational (climate, culture) factors present at baseline influence the learning process can inform education strategies targeted at graduate students and the existing workforce.

To address these questions, specific measurement approaches and methodologies are required. Regarding measurement, a key aspect of the LEAP model is the hypothesis that mechanisms and training outcomes are multidimensional. We assert that EBT delivery represents an open skill that requires the acquisition of new skills and knowledge. To fully understand the process by which initial training and ongoing consultation influence training outcomes, it is critical that research assesses multiple learning dimensions. The LEAP model delineates how the transfer of learning process depends on certain changes in skill-, cognitive-, and attitude/relationship-based mechanisms (Kraiger et al., 1993). Thus, these dimensions should be assessed together in order to understand the transfer of learning process.

The LEAP model also posits that the transfer of learning process unfolds over time. It follows that in order to capture the transfer of learning, both mechanisms of learning and training outcomes need to be assessed on multiple occasions. Understanding this unfolding process of the development of learning mechanisms and subsequent EBT implementation mastery will lead to greater theoretical specificity regarding the timing of change in clinical training and consultation. At present, a significant challenge for the field is determining the appropriate time interval during which to administer instruments.

Experimental methods can be used to directly evaluate the effects of manipulating specific strategies on the mechanisms and training outcomes. Two types of experimental designs may be particularly useful for addressing key questions about the relationships among training and consultation strategies, learning mechanisms, and training outcomes: single case designs and micro-trials (i.e., randomized experiments designed to help determine what strategies and mechanisms provide maximum impact for specific participants; Howe, Beach, & Brody, 2010). Barlow, Nock, and Hersen (2009) have advocated for the use of single case designs for developing and evaluating interventions. Micro-trials are increasingly used to test the impact of specific strategies on mediators and outcomes (Howe et al., 2010). These designs avoid many of the practical constraints posed by traditional RCTs (e.g., need for a large number of clinicians). A key strength of each design is that it can help determine if the introduction of a training or consultation strategy is associated with changes in mediators and outcomes. Although we do not know of any study that uses micro-trials in the training and consultation literature, some researchers have used single case designs to evaluate the influence of consultation on clinician behavior (e.g., Milne, Pilkington, Gracie, & James, 2003; Milne, Reiser, & Cliffe, 2013).

Summary.

In this paper, we introduced the LEAP model. LEAP is a mechanistic model of training and consultation that can help guide research on EBT learning and mastery in community settings. We hope that the model will serve as a roadmap for a new generation of studies that may ultimately improve the quality of care for as many children and families as possible.

Acknowledgements:

Preparation of this article was supported by a grant from the National Institute of Mental Health (R34 MH110591; McLeod & Wood).

Footnotes

Conflicts of Interest: The authors have no conflicts to disclose.

Contributor Information

Bryce D. McLeod, Virginia Commonwealth University, Department of Psychology.

Julia R. Cox, Virginia Commonwealth University, Department of Psychology.

Amanda Jensen-Doss, University of Miami, Department of Psychology.

Amy Herschell, West Virginia University.

Jill Ehrenreich-May, University of Miami, Department of Psychology.

Jeffrey J. Wood, Departments of Education and Psychiatry, University of California

References

- Aarons GA, Hurlburt M, & Horwitz SM (2011). Advancing a conceptual model of evidence-based practice implementation in public service sectors. Administration and Policy in Mental Health and Mental Health Services Research, 38(1), 4–23. doi: 10.1007/s10488-010-0327-7

- Accurso EC, Taylor RM, & Garland AF (2011). Evidence-based practices addressed in community-based children’s mental health clinical supervision. Training and Education in Professional Psychology, 5(2), 88–96. doi: 10.1037/a0023537

- Addis ME, & Krasnow AD (2000). A national survey of practicing psychologists’ attitudes towards psychotherapy treatment manuals. Journal of Consulting and Clinical Psychology, 68(2), 331–339. doi: 10.1037/0022-006X.68.2.331

- Allen B, Wilson KL, & Armstrong NE (2014). Changing clinicians’ beliefs about treatment for children experiencing trauma: The impact of intensive training in an evidence-based trauma-focused treatment. Psychological Trauma: Theory, Research, Practice, and Policy, 6(4), 384–389. doi: 10.1037/a0036533

- Anderson JR (1982). Acquisition of cognitive skills. Psychological Review, 89(4), 369–406. doi: 10.1037/0033-295X.89.4.369

- Bandura A (1983). Self-efficacy determinants of anticipated fears and calamities. Journal of Personality and Social Psychology, 45, 464–469. doi: 10.1037/0022-3514.45.2.464

- Barber JP, Sharpless B, Klostermann S, & McCarthy KS (2007). Assessing intervention competence and its relation to therapy outcome: A selected review derived from the outcome literature. Professional Psychology: Research and Practice, 38(5), 493–500. doi: 10.1037/0735-7028.38.5.493

- Baker TB, McFall RM, & Shoham V (2008). Current status and future prospects of clinical psychology: Toward a scientifically principled approach to mental and behavioral health care. Psychological Science in the Public Interest, 9(2), 67–103. doi: 10.1111/j.1539-6053.2009.01036.x

- Barlow DH, Nock MK, & Hersen M (2009). Single case experimental designs: Strategies for studying behavior change (3rd ed.). Upper Saddle River, NJ: Pearson.

- Barth RP, Kolivoski KM, Lindsey MA, Lee BR, & Collins KS (2013). Translating the common elements approach: Social work’s experiences in education, practice, and research. Journal of Clinical Child & Adolescent Psychology, 43, 301–311. doi: 10.1080/15374416.2013.848771

- Bauer KN, Orvis KA, Ely K, & Surface EA (2016). Re-examination of motivation in learning contexts: Meta-analytically investigating the role type of motivation plays in the prediction of key training outcomes. Journal of Business and Psychology, 31(1), 33–50. doi: 10.1007/s10869-015-9401-1

- Bearman SK, Schneiderman RL, & Zoloth E (2017). Building an evidence base for effective supervision practices: An analogue experiment of supervision to increase EBT fidelity. Administration and Policy in Mental Health and Mental Health Services Research, 44(2), 293–307. doi: 10.1007/s10488-016-0723-8

- Bearman SK, Weisz JR, Chorpita BC, Hoagwood KE, Ward A, Ugueto AM, & Bernstein A (2013). More practice, less preach? The role of supervision processes and therapist characteristics in EBP implementation. Administration and Policy in Mental Health and Mental Health Services Research, 40(6), 518–529. doi: 10.1007/s10488-013-0485-5

- Becker KD, & Stirman SW (2011). The science of training in evidence-based treatments in the context of implementation programs: Current status and prospects for the future. Administration and Policy in Mental Health and Mental Health Services Research, 38(4), 217–22. doi: 10.1007/s10488-011-0361-0

- Beidas RS, Edmunds JM, Marcus SC, & Kendall PC (2012). Training and consultation to promote implementation of an empirically supported treatment: A randomized trial. Psychiatric Services, 63(7), 660–665. doi: 10.1176/appi.ps.201100401b

- Beidas RS, & Kendall PC (2010). Training therapists in evidence-based practice: A critical review of studies from a systems-contextual perspective. Clinical Psychology: Science and Practice, 17(1), 1–30. doi: 10.1111/j.1468-2850.2009.01187.x

- Beidas RS, Koerner K, Weingardt KR, & Kendall PC (2011). Training research: Practical recommendations for maximum impact. Administration and Policy in Mental Health and Mental Health Services Research, 38(4), 223–37. doi: 10.1007/s10488-011-0338-z

- Bennett-Levy J (2006). Therapist skills: A cognitive model of their acquisition and refinement. Behavioural and Cognitive Psychotherapy, 34(1), 57–78. doi: 10.1017/S1352465805002420

- Binder JL (1993). Is it time to improve psychotherapy training? Clinical Psychology Review, 13, 301–318. doi: 10.1016/0272-7358(93)90015-E

- Binder JL (1999). Issues in teaching and learning time-limited psychodynamic psychotherapy. Clinical Psychology Review, 19, 705–719. doi: 10.1016/S0272-7358(98)00078-6

- Blume BD, Ford JK, Baldwin TT, & Huang JL (2010). Transfer of training: A meta-analytic review. Journal of Management, 36(4), 1065–1105. doi: 10.1177/0149206309352880

- Brown A (1975). The development of memory: Knowing, knowing about knowing, and knowing how to know. In Reese H (Ed.), Advances in child development and behavior (pp. 103–152). San Diego, CA: Academic Press.

- Brunette MF, Asher D, Whitley R, Lutz WJ, Wieder BL, Jones AM, & McHugo GJ (2008). Implementation of integrated dual disorders treatment: A qualitative analysis of facilitators and barriers. Psychiatric Services, 59(9), 989–995. doi: 10.1176/appi.ps.59.9.989

- Bunger AC, Hanson RF, Doogan NJ, Powell BJ, Cao Y, & Dunn J (2016). Can Learning Collaboratives support implementation by rewiring professional networks? Administration and Policy in Mental Health and Mental Health Services Research, 43, 79–92. doi: 10.1007/s10488-014-0621-x

- Cannon-Bowers JA, Salas E, Tannenbaum SI, & Mathieu JE (1995). Toward theoretically based principles of training effectiveness: A model and initial empirical investigation. Military Psychology, 7(3), 141–164. doi: 10.1207/s15327876mp0703_1

- Caplan G, & Caplan RB (1993). Mental health consultation and collaboration. San Francisco, CA: Jossey-Bass Publishers, Inc.

- Carpenter AL, Pincus DB, Conklin PH, Wyszynski CM, Chu BC, & Comer JS (2016). Assessing cognitive-behavioral clinical decision-making among trainees in the treatment of childhood anxiety. Training and Education in Professional Psychology, 10(2), 109–116. doi: 10.1037/tep0000111

- Cavaleri MA, Franco LM, McKay MM, Appel A, Bannon WM, Bigley MF, & Thaler S (2007). The sustainability of a learning collaborative to improve mental health service use among low-income urban youth and families. Best Practices in Mental Health, 3, 52–61.

- Cavaleri MA, Gopalan G, McKay MM, Appel A, Bannon WM, Bigley MF, & Thaler S (2006). Impact of a learning collaborative to improve child mental health service use among low-income urban youth and families. Best Practices in Mental Health, 2, 67–79.

- Cavaleri MA, Gopalan G, McKay MM, Messam T, Velez E, & Elwyn L (2010). The effect of a learning collaborative to improve engagement in child mental health services. Children and Youth Services Review, 32, 281–285. doi: 10.1016/j.childyouth.2009.09.007

- Chambers DA, & Azrin ST (2013). Research and services partnerships: A fundamental component of dissemination and implementation research. Psychiatric Services, 64(6), 509–511. doi: 10.1176/appi.ps.201300032

- Chambers DA, Glasgow RE, & Stange KC (2013). The dynamic sustainability framework: Addressing the paradox of sustainment amid ongoing change. Implementation Science, 8, 117. doi: 10.1186/1748-5908-8-117

- Chu BC (2008). Empirically supported training approaches: The who, what, and how of disseminating psychological interventions. Clinical Psychology: Science and Practice, 15(4), 308–312. doi: 10.1111/j.1468-2850.2008.00142.x

- Chu BC, & Kendall PC (2009). Therapist responsiveness to child engagement: Flexibility within manual-based CBT for anxious youth. Journal of Clinical Psychology, 65(7), 736–754. doi: 10.1002/jclp

- Colquitt JA, LePine JA, & Noe RA (2000). Toward an integrative theory of training motivation: A meta-analytic path analysis of 20 years of research. Journal of Applied Psychology, 85(5), 678–707. doi: 10.1037//0021-9010.85.5.678

- Crits-Christoph P, Siqueland L, Chittams J, Barber JP, Beck AT, Frank A, … Woody G (1998). Training in cognitive, supportive-expressive, and drug counseling therapies for cocaine dependence. Journal of Consulting and Clinical Psychology, 66(3), 484–492. doi: 10.1037/0022-006X.66.3.484

- Dane AV, & Schneider BH (1998). Program integrity in primary and early secondary prevention: Are implementation effects out of control? Clinical Psychology Review, 18, 23–45. doi: 10.1016/S0272-7358(97)00043-3

- Dimeff LA, Koerner K, Woodcock EA, Beadnell B, Brown MZ, Skutch JM, … Harned MS (2009). Which training method works best? A randomized controlled trial comparing three methods of training clinicians in dialectical behavior therapy skills. Behaviour Research and Therapy, 47(11), 921–930. doi: 10.1016/j.brat.2009.07.011

- Ditty MS, Landes SJ, Doyle A, & Beidas RS (2015). It takes a village: A mixed method analysis of inner setting variables and dialectical behavior therapy implementation. Administration and Policy in Mental Health and Mental Health Services Research, 42(6), 672–681. doi: 10.1007/s10488-014-0602-0

- Dopp AR, Hanson RF, Saunders BE, Dismuke CE, & Moreland AD (2017). Community-based implementation of trauma-focused interventions for youth: Economic impact of the learning collaborative model. Psychological Services, 14, 57–65. doi: 10.1037/ser0000131

- Dorsey S, Kerns SEU, Lucid L, Pullmann MD, Harrison JP, Berliner L, … Deblinger E (2018). Objective coding of content and techniques in workplace-based supervision of an EBT in public mental health. Implementation Science, 13(1), 1–12. doi: 10.1186/s13012-017-0708-3

- Dorsey S, Pullmann MD, Kerns SEU, Jungbluth N, Meza R, Thompson K, & Berliner L (2017). The juggling act of supervision in community mental health: Implications for supporting evidence-based treatment. Administration and Policy in Mental Health and Mental Health Services Research, 44(6), 838–852. doi: 10.1007/s10488-017-0796-z

- Edmunds JM, Beidas RS, & Kendall PC (2013). Dissemination and implementation of evidence-based practices: Training and consultation as implementation strategies. Clinical Psychology: Science and Practice, 20(2), 152–165. doi: 10.1111/cpsp.12031

- Fairburn CG, & Patel V (2014). The global dissemination of psychological treatments: A road map for research and practice. American Journal of Psychiatry, 171(5), 495–498. doi: 10.1176/appi.ajp.2013.13111546

- Falender CA (2014). Supervision outcomes: Beginning the journey beyond the emperor’s new clothes. Training and Education in Professional Psychology, 8(3), 143–148. doi: 10.1037/tep0000066

- Falender CA, & Shafranske EP (2014). Clinical supervision: The state of the art. Journal of Clinical Psychology, 70(11), 1030–1041. doi: 10.1002/jclp.22124

- Feldman D (1984). The development and enforcement of group norms. Academy of Management Review, 9, 47–53. doi: 10.2307/258231

- Fixsen DL, Naoom SF, Blase KA, Friedman RM, & Wallace F (2005). Implementation research: A synthesis of the literature. Tampa, FL: University of South Florida, The National Implementation Research Network.

- Ford J, & Weissbein D (1997). Transfer of training: An updated review and analysis. Performance Improvement Quarterly, 10, 22–41. doi: 10.1111/j.1937-8327.1997.tb00047.x