Abstract

Recognition of actions and complex movements is fundamental for social interactions and action understanding. While the relationship between motor expertise and visual recognition of body movements has received considerable interest, the role of visual learning remains largely unexplored. Combining psychophysics and functional magnetic resonance imaging (fMRI) experiments, we investigated neural correlates of visual learning of complex movements. Subjects were trained to visually discriminate between very similar complex movement stimuli generated by motion morphing that were either compatible (experiments 1 and 2) or incompatible (experiment 3) with human movement execution. Employing an fMRI adaptation paradigm as an index of discriminability, we scanned human subjects before and after discrimination training. The results of experiment 1 revealed three different effects of training: (1) emerging fMRI-selective adaptation in general motion-related areas (hMT/V5+, KO/V3b) for the differences between human-like movements; (2) enhancement of the fMRI-selective adaptation already present before training in biological motion-related areas (pSTS, FBA); and (3) changes covarying with task difficulty in frontal areas. Moreover, the observed activity changes were specific to the trained movement patterns (experiment 2). The results of experiment 3, testing artificial movement stimuli, were strikingly similar to the results obtained for human movements: after training, general and biological motion-related areas showed movement-specific changes in fMRI-selective adaptation for the differences between the stimuli. These results support the existence of a powerful visual machinery for the learning of complex motion patterns that is independent of motor execution. We thus propose a key role of visual learning in action recognition.

Introduction

Recognition and understanding of complex movements and actions are critical for survival and social interactions in the dynamic environment we inhabit. Thus, it is no surprise that the human visual system is remarkably skilled at recognizing actions, even from highly impoverished stimuli such as point-light displays (for review, see Blake and Shiffrar, 2007).

Since the discovery of the “mirror system” (Rizzolatti et al., 1996), many studies have investigated the interplay between observation and execution of movements and its neural substrates in the human and monkey brain (Decety et al., 1997; Iacoboni et al., 1999; Buccino et al., 2001; Johnson-Frey et al., 2003; Rizzolatti and Craighero, 2004). More recently, several studies have focused on the specific relationship between movement observation and one's own motor expertise, showing a direct influence of motor learning on the visual recognition of actions (Hecht et al., 2001; Calvo-Merino et al., 2006; Casile and Giese, 2006; Cross et al., 2006). However, the role of visual learning in the processing of action patterns remains largely unexplored. Does learning of nonimitable complex movements affect cortical areas in the same way as learning of natural human movements? Previous studies have reported minor differences between the neural substrates representing human and nonhuman movements (Pelphrey et al., 2003; Pyles et al., 2007). However, it remains unclear whether these differences reflect disjoint neural machineries or different levels of visual expertise within a common neural representation.

To address these questions, we tested whether training to discriminate movements results in changes in functional magnetic resonance imaging (fMRI)-selective adaptation (Kourtzi and Kanwisher, 2000; Grill-Spector and Malach, 2001) in visual areas involved in processing of human actions, including the human middle temporal complex, the kinetic occipital area, biological motion-selective areas in temporal cortex, and action-responsive regions in prefrontal cortex. The stimuli comprised point-light walkers (Johansson, 1973) generated by morphing between three prototypical movements (Giese and Poggio, 2000; Jastorff et al., 2006). We parametrically controlled the spatiotemporal similarity of the movements by varying the weights of the prototypes. In experiments 1 and 2, prototypes were drawn from original human movements (e.g., walking), whereas in experiment 3 the prototypes were artificial complex movements that could not be executed by humans. These artificial movements shared some basic properties of human movements (articulation, average speed) but were based on skeletons that did not resemble any naturally occurring species. To test whether learning shapes the neural representations of movements with and without relevance for motor execution, we scanned observers before and after training on a discrimination task. We reasoned that areas involved in the discrimination of movements would show fMRI-selective adaptation, that is, higher fMRI responses for different movements than for the same movement presented repeatedly. We predicted that enhanced fMRI-selective adaptation for similar movements after training would indicate learning-dependent changes in the neural representations of movements. We further predicted that this effect might be more prominent for human movements than for artificial stimuli in frontal and temporal areas, whereas general motion-related areas might not differ in their response to the two stimulus groups.

Materials and Methods

Participants

Thirty-three students from the University of Tübingen participated in this study, eleven in each of the three experiments. The data from one subject in experiment 1 and two subjects in experiment 3 were excluded because of either excessive head movement or poor psychophysical performance in the training sessions. All experiments were approved by the local ethics committee, and informed consent was obtained from all participants.

Stimuli

All stimuli (5 × 10 degrees of visual angle) were point-light displays, presented as 10 white dots (0.5 degrees of visual angle) on a black background. To minimize the effect of low-level position cues, the points were not presented exactly at the joint positions, but were randomly jittered along the segments of the skeleton. The displacement varied randomly between 0% and 20% of the segment length in every frame of the animation. Previous psychophysical studies had shown the suitability of these stimuli for discrimination learning of complex movements (Jastorff et al., 2006).
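As an illustration of the jittering procedure, the following Python sketch places each dot on its skeleton segment at a random distance from the joint, redrawn in every frame. The joint names, the `segments` list, and the choice to measure the displacement from the joint toward the adjacent joint are assumptions for illustration; the text specifies only that the displacement varied between 0% and 20% of the segment length.

```python
import math
import random


def jitter_along_segment(joint_a, joint_b, rng, max_fraction=0.2):
    """Return a dot on segment joint_a -> joint_b, displaced from joint_a by a
    uniformly drawn fraction in [0, max_fraction] of the segment length."""
    f = rng.uniform(0.0, max_fraction)
    return (joint_a[0] + f * (joint_b[0] - joint_a[0]),
            joint_a[1] + f * (joint_b[1] - joint_a[1]))


def jittered_frame(joints, segments, rng, max_fraction=0.2):
    """joints: name -> (x, y) for one frame; segments: (dot_joint, neighbor_joint)
    pairs, one per displayed dot (hypothetical layout). Call once per frame so
    that the displacement is redrawn in every frame of the animation."""
    return [jitter_along_segment(joints[a], joints[b], rng, max_fraction)
            for a, b in segments]


def fraction_along(dot, joint_a, joint_b):
    """Distance of `dot` from joint_a as a fraction of the segment length."""
    return math.dist(dot, joint_a) / math.dist(joint_a, joint_b)


# Hypothetical leg joints for a single frame (arbitrary units).
rng = random.Random(0)
frame_joints = {"hip": (0.0, 0.0), "knee": (0.05, -1.0), "ankle": (0.0, -2.0)}
leg_segments = [("knee", "hip"), ("ankle", "knee")]
dots = jittered_frame(frame_joints, leg_segments, rng)
```

Because every dot stays on its segment, the jitter removes fixed joint positions as a cue while leaving the articulated structure intact.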

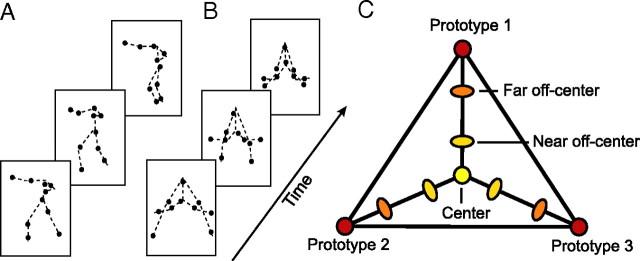

The natural human-like stimuli used for experiments 1 and 2 (Fig. 1A) were obtained by tracking the two-dimensional joint positions in video movies of a human actor facing orthogonally to the view axis of the camera while performing different movements. Eighteen movements were recorded, including locomotion, dancing, aerobics, and martial arts sequences. Twelve points were tracked manually (head, shoulders, elbows, wrists, hip, knees, and ankles), but the positions of the shoulder and head markers were averaged for stimulus presentation.

Figure 1.

Stimuli and morphing space. A, B, Individual frames of a natural human-like point-light stimulus (A) and an artificial pattern (B) (the dashed lines connecting the joints were not shown during the experiment). C, Metric space defined by motion morphing. Morphs were generated by linear combination of the joint trajectories of three prototypical patterns (Prototypes 1–3). Three groups of stimuli were generated by choosing different combinations of linear weights: (1) Center Stimuli, for which each of the three prototypes contributed to the resulting morph with equal weights (33.33%); (2) near Off-Center Stimuli, for which the weight for one of the prototypes was on average 60% and for each of the other two prototypes 20%; and (3) far Off-Center Stimuli, for which the weight for one of the prototypes was on average 75% and for each of the other two prototypes 12.5%.

The artificial movement stimuli used in experiment 3 (Fig. 1B) were generated by animation of 18 different artificial skeleton models with 9 segments. The skeletons were chosen to be highly dissimilar from naturally occurring body structures. Debriefing of the observers showed that the stimuli did not result in any consistent interpretation, in contrast with the natural human-like stimuli that were recognized accurately as human movements by all observers. None of the observers interpreted any artificial pattern as a human action.

The joint angle trajectories $\alpha_n(t)$ of each skeleton were given by sinusoidal functions of the form $\alpha_n(t) = a_n + b_n \sin(\omega t + \phi_n)$. Their frequency $\omega$ and amplitudes $b_n$ were matched to those of typical joint trajectories of human actors during natural movements. Moreover, we ensured that the movement of the individual points fulfilled the “two-thirds power law” that links the curvature and the speed of approximately planar human movements (Viviani and Stucchi, 1992) (for details, see Jastorff et al., 2006). Following this procedure, we generated 18 artificial movement prototypes. In addition, the segment lengths and the area covered by the artificial stimuli were matched to those of the natural human-like movements to control for differences in the low-level properties of the two stimulus classes.
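A minimal Python sketch of the two constraints described above: sinusoidal joint-angle trajectories, and a check of the two-thirds power law estimated as the slope of log speed against log curvature (the law predicts a slope of −1/3, i.e., $v = K\kappa^{-1/3}$). The planar chain with relative joint angles and all parameter values are hypothetical and are not the skeletons used in the study. As a sanity check, an ellipse traced with harmonic motion satisfies the power law exactly.

```python
import math


def joint_angle(t, a_n, b_n, omega, phi_n):
    """Joint angle alpha_n(t) = a_n + b_n * sin(omega * t + phi_n), in radians."""
    return a_n + b_n * math.sin(omega * t + phi_n)


def chain_endpoint(angles, lengths):
    """Planar forward kinematics for a serial chain rooted at the origin.
    Assumption: each angle is measured relative to the preceding segment."""
    x = y = heading = 0.0
    for angle, length in zip(angles, lengths):
        heading += angle
        x += length * math.cos(heading)
        y += length * math.sin(heading)
    return x, y


def power_law_exponent(xs, ys, dt):
    """Least-squares slope of log(speed) vs. log(curvature), using central
    finite differences. The two-thirds power law predicts -1/3."""
    log_v, log_k = [], []
    for i in range(1, len(xs) - 1):
        vx = (xs[i + 1] - xs[i - 1]) / (2 * dt)
        vy = (ys[i + 1] - ys[i - 1]) / (2 * dt)
        ax = (xs[i + 1] - 2 * xs[i] + xs[i - 1]) / dt ** 2
        ay = (ys[i + 1] - 2 * ys[i] + ys[i - 1]) / dt ** 2
        speed = math.hypot(vx, vy)
        if speed < 1e-12:
            continue  # curvature is undefined where the point stops
        curvature = abs(vx * ay - vy * ax) / speed ** 3
        if curvature > 1e-12:
            log_v.append(math.log(speed))
            log_k.append(math.log(curvature))
    mk = sum(log_k) / len(log_k)
    mv = sum(log_v) / len(log_v)
    cov = sum((k - mk) * (v - mv) for k, v in zip(log_k, log_v))
    var = sum((k - mk) ** 2 for k in log_k)
    return cov / var


# Hypothetical two-segment limb driven by sinusoidal joint angles (one 1 Hz cycle).
dt = 0.001
omega = 2 * math.pi
ts = [i * dt for i in range(1001)]
limb = [chain_endpoint([joint_angle(t, 0.3, 0.4, omega, 0.0),
                        joint_angle(t, 0.5, 0.6, omega, 1.0)], [1.0, 0.8])
        for t in ts]
limb_exponent = power_law_exponent([p[0] for p in limb], [p[1] for p in limb], dt)

# Sanity check: an ellipse traced harmonically obeys the law exactly.
ellipse_x = [2.0 * math.cos(omega * t) for t in ts]
ellipse_y = [1.0 * math.sin(omega * t) for t in ts]
ellipse_exponent = power_law_exponent(ellipse_x, ellipse_y, dt)  # close to -1/3
```

Comparing `limb_exponent` with −1/3 gives one simple way to screen candidate parameter sets; how closely the study's stimuli were required to match the law is described in Jastorff et al. (2006), not here.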

All stimuli were generated using motion morphing. We applied an algorithm known as spatiotemporal morphable models (Giese and Poggio, 2000; Giese and Lappe, 2002) that generates new trajectories by linear combination of prototypical movement patterns in space-time. Each stimulus was defined as a linear combination of three prototypical movements:

$$\text{New motion pattern} = c_1 \cdot (\text{Prototype 1}) + c_2 \cdot (\text{Prototype 2}) + c_3 \cdot (\text{Prototype 3}).$$
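The linear-combination step can be sketched in Python as follows. This is a deliberate simplification rather than the spatiotemporal morphable model itself: the published algorithm additionally establishes spatiotemporal correspondence between the prototypes (including their time alignment), whereas here the prototypes are assumed to be already time-aligned and sampled with the same number of frames and dots. The function name `morph` and the toy prototypes are hypothetical.

```python
def morph(prototypes, weights, tol=1e-9):
    """Weighted sum of time-aligned prototype trajectories (simplified sketch).

    prototypes: list of trajectories; trajectory[frame][dot] = (x, y).
    weights: one weight per prototype; must sum to 1 (c1 + c2 + c3 = 1).
    """
    if len(weights) != len(prototypes):
        raise ValueError("need one weight per prototype")
    if abs(sum(weights) - 1.0) > tol:
        raise ValueError("morph weights must sum to 1")
    n_frames, n_dots = len(prototypes[0]), len(prototypes[0][0])
    result = []
    for f in range(n_frames):
        frame = []
        for d in range(n_dots):
            x = sum(c * p[f][d][0] for c, p in zip(weights, prototypes))
            y = sum(c * p[f][d][1] for c, p in zip(weights, prototypes))
            frame.append((x, y))
        result.append(frame)
    return result


# Hypothetical one-dot, two-frame prototypes.
p1 = [[(0.0, 0.0)], [(1.0, 0.0)]]
p2 = [[(0.0, 3.0)], [(1.0, 3.0)]]
p3 = [[(3.0, 0.0)], [(4.0, 0.0)]]
center = morph([p1, p2, p3], (1 / 3, 1 / 3, 1 / 3))
```

With weights (1, 0, 0) the morph reproduces Prototype 1; with equal weights it yields the center stimulus.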

The weights $c_i$ determined how much the individual prototypes contributed to the morph: when the weight of one prototype was very high, the linear combination strongly resembled this prototype. Weight combinations always fulfilled $c_1 + c_2 + c_3 = 1$. The weight vectors $(c_1, c_2, c_3)$ defined a Euclidean space of movement patterns that provided a metric of the spatiotemporal similarity between the patterns. This has been verified in studies applying multidimensional scaling to similarity judgments between stimuli generated by this method (Giese et al., 2008). The metric space allowed us to precisely manipulate the difficulty of the discrimination task by varying the distance between the movements in morphing space.

The morphing technique was used to generate three different classes of stimuli: (1) Center Stimuli, for which each of the three prototypes contributed to the resulting morph with equal weights (33.33%); (2) near Off-Center Stimuli, for which the weight for one of the prototypes slightly exceeded the weights for the other two prototypes; and (3) far Off-Center Stimuli, for which the weight for one of the prototypes was much higher than the weights for the other two prototypes (Fig. 1C). These weights resulted in a gradual change in the physical similarity between morphs, as indicated by measurements of the Euclidean distances between the stimulus trajectories. For natural human-like movements, the mean distance between the trajectories was 0.073 for the center and near off-center stimuli, 0.117 for the center and far off-center stimuli, and 0.172 for the center and prototype stimuli. For the artificial movements, the Euclidean distance between the center and near off-center stimuli was 0.085, indicating that the physical differences in the stimulus spaces generated for the human-like movements and the artificial patterns were very similar.
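For intuition about the three stimulus classes, the following Python sketch computes Euclidean distances in the weight space $(c_1, c_2, c_3)$, using the average weights listed in the Figure 1C caption and taking the first prototype as the dominant one for illustration. These weight-space distances are not the trajectory distances reported above, but they follow the same ordering; the condition labels in parentheses follow the scanning design of experiment 1.

```python
import math

# Average morph weights (c1, c2, c3) from the Figure 1C caption; Prototype 1
# is assumed to be the dominant prototype for illustration.
CENTER = (1 / 3, 1 / 3, 1 / 3)
STIMULI = {
    "near off-center (Very Similar)": (0.60, 0.20, 0.20),
    "far off-center (Less Similar)": (0.75, 0.125, 0.125),
    "prototype (Dissimilar)": (1.0, 0.0, 0.0),
}


def weight_distance(w1, w2):
    """Euclidean distance between two weight vectors in morphing space."""
    return math.dist(w1, w2)


distances = {name: weight_distance(CENTER, w) for name, w in STIMULI.items()}
for name, d in distances.items():
    print(f"{name}: {d:.3f}")
```

The near and far off-center weights lie on the line from the center toward the dominant prototype, at 40% and 62.5% of the way (about 0.327, 0.510, and 0.816 weight units from the center, respectively).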

To ensure that the morphed human movements appeared natural, we morphed between prototypes from the same movement category (e.g., running, limping and marching or three different types of boxing movements). Previous studies have shown that the technique of spatiotemporal morphing interpolates smoothly between quite dissimilar gait patterns (e.g., walking and running), resulting in motion morphs that look highly natural (Giese and Lappe, 2002). In addition, we collected naturalness ratings in a pilot experiment (scale 1: unnatural, 5: natural) for each of the morphed stimuli. Only stimuli with high naturalness ratings (4 or 5) were used in experiments 1 and 2.

Finally, the scrambled point-light stimuli used for the localizer of biological motion-related areas were generated by randomizing the starting position of every point in the intact point-light displays of the prototypical actions while preserving the original motion vector of each individual point. The scrambled and intact point-light displays were matched for area and dot density (Grossman et al., 2000).
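The scrambling procedure can be sketched in Python as follows. Matching for area is approximated here by drawing each dot's new starting position uniformly within the bounding box of the intact display; this choice, and the toy two-dot display, are assumptions for illustration rather than the exact procedure used to build the localizer stimuli.

```python
import random


def bounding_box(trajectories):
    """(xmin, xmax, ymin, ymax) over all dots and frames of a display."""
    xs = [x for traj in trajectories for x, _ in traj]
    ys = [y for traj in trajectories for _, y in traj]
    return min(xs), max(xs), min(ys), max(ys)


def scramble(trajectories, bbox, rng):
    """Scrambled point-light display: every dot keeps its own frame-to-frame
    motion vectors, but its starting position is redrawn uniformly inside bbox."""
    xmin, xmax, ymin, ymax = bbox
    scrambled = []
    for traj in trajectories:
        x0, y0 = traj[0]
        new_x0, new_y0 = rng.uniform(xmin, xmax), rng.uniform(ymin, ymax)
        # Shift the whole trajectory so that it starts at the new random position.
        scrambled.append([(x - x0 + new_x0, y - y0 + new_y0) for x, y in traj])
    return scrambled


# Hypothetical two-dot, three-frame display.
intact = [[(0.0, 0.0), (0.1, 0.2), (0.3, 0.1)],
          [(1.0, 1.0), (1.2, 0.9), (1.1, 1.3)]]
box = bounding_box(intact)
scrambled = scramble(intact, box, random.Random(3))
```

Because only the starting offsets change, local motion signals are identical in the intact and scrambled displays, while the global body configuration is destroyed.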

Design and procedure

In addition to the experimental runs, each subject participated in five localizer runs. These were used to map the early retinotopic areas (1 run), the kinetic occipital area KO/V3b (1 run), the human middle temporal complex hMT/V5+ (1 run), and biological motion-related areas (2 runs), namely the posterior superior temporal sulcus (pSTS) and the extrastriate and fusiform body areas (EBA/FBA).

Experiment 1: learning of novel natural movements

Pretraining scanning session (day 1).

Six different groups of natural human-like movements were presented. Each group consisted of movement morphs between three prototypical natural human actions from the same category (e.g., three different locomotion patterns or three kinds of boxing movements). Four different conditions were tested: (1) Identical: the same center stimulus was presented twice, (2) Very Similar: a center stimulus was presented, followed by a near off-center stimulus, (3) Less Similar: a center stimulus was followed by a far off-center stimulus, and (4) Dissimilar: a center stimulus was followed by a prototype (Fig. 1C).

The scanning session consisted of four event-related runs without feedback. The participants were instructed to judge whether two successively presented stimuli were the same or different. Each run started and ended with an 8 s fixation epoch. Every run consisted of 20 experimental trials for each of the four conditions and 20 fixation trials, interleaved with the experimental trials. Each trial lasted 4 s: the first stimulus (one movement cycle) was presented for 1300 ms, followed by a 100 ms blank, the second stimulus for 1300 ms, and a final blank interval of 1300 ms. The history of the conditions was matched so that each condition, including the fixation condition, was preceded equally often by trials from each of the other conditions [analogous to the experiment by Kourtzi and Kanwisher (2000)]. Over the whole scanning session, four stimulus groups were shown 13 times and two stimulus groups were shown 14 times for each condition. The two groups that were presented 14 times differed between subjects.
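The run structure described above can be made concrete with a short Python sketch. The sequence generator builds a random Eulerian circuit (Hierholzer's algorithm) on the complete directed graph of the five conditions, a standard way to obtain first-order counterbalancing; it is not necessarily how the original sequences were constructed. Counting self-transitions, each of the 25 ordered condition pairs then occurs exactly four times when the 100-trial run is read cyclically.

```python
import random
from collections import Counter

# Trial timing from the text (ms): stimulus 1, blank, stimulus 2, final blank.
TRIAL_MS = 1300 + 100 + 1300 + 1300
CONDITIONS = ["Fixation", "Identical", "Very Similar", "Less Similar", "Dissimilar"]


def counterbalanced_sequence(n_conditions, reps, seed=None):
    """Trial sequence of length n_conditions**2 * reps in which every ordered pair
    (previous, current), self-pairs included, occurs exactly `reps` times when the
    sequence is read cyclically. Built as a random Eulerian circuit (Hierholzer's
    algorithm) on the complete directed graph of conditions with self-loops."""
    rng = random.Random(seed)
    # Each condition has `reps` outgoing edges to every condition (itself included).
    out_edges = {c: [d for d in range(n_conditions) for _ in range(reps)]
                 for c in range(n_conditions)}
    for edges in out_edges.values():
        rng.shuffle(edges)  # randomizes which circuit is found
    stack = [rng.randrange(n_conditions)]
    circuit = []
    while stack:
        node = stack[-1]
        if out_edges[node]:
            stack.append(out_edges[node].pop())  # follow an unused edge
        else:
            circuit.append(stack.pop())  # no edges left: node is finalized
    circuit.reverse()
    return circuit[:-1]  # the circuit ends where it started; drop the repeat


def transition_counts(seq, cyclic=True):
    """Count (previous, current) condition pairs in a trial sequence."""
    pairs = list(zip(seq, seq[1:]))
    if cyclic:
        pairs.append((seq[-1], seq[0]))
    return Counter(pairs)


seq = counterbalanced_sequence(len(CONDITIONS), reps=4, seed=1)
run_seconds = 8 + len(seq) * TRIAL_MS / 1000 + 8  # 8 s fixation at start and end
print([CONDITIONS[c] for c in seq[:5]])
print(run_seconds)  # 416.0
```

By this arithmetic, each run of 100 trials plus the two fixation epochs lasts 416 s; read linearly rather than cyclically, one of the 25 pairs occurs three rather than four times.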

Training sessions (days 2–4).

Each subject participated in three training sessions in the laboratory on consecutive days. Each session consisted of a total of 150 trials, 25 trials per movement group. In all trials, participants had to compare center stimuli with off-center stimuli in a paired-comparison paradigm (Fig. 1C). Each trial started with the presentation of a center stimulus for four movement cycles (5200 ms), followed either by the same center stimulus or by an off-center stimulus (generated from the same triple of prototypes, presented for four movement cycles). The prototype contributing with the highest weight to the off-center stimulus was chosen randomly. Participants were not trained in the Dissimilar condition, as their discrimination performance was already high before training. In a two-alternative forced-choice paradigm, participants had to report whether the second stimulus matched the first one.

On each day, the training session consisted of three test blocks interleaved with two training blocks. In the test blocks, center stimuli had to be discriminated from near off-center stimuli. Each stimulus group was presented three times in random order, resulting in 18 trials per test block. No feedback about correct discrimination was provided during test trials. Based on a pilot experiment with a different set of observers, we adjusted the similarity of the near off-center to the center stimuli to achieve an average performance level of ∼50% before training. The morphing weights for the other types of stimuli were rescaled accordingly. The training blocks consisted of 48 trials (8 repetitions per stimulus group). During training, participants had to discriminate between center and far off-center stimuli and received feedback about their performance. After each training session, the observers' performance was tested in one experimental run without feedback, matching the situation during scanning (supplemental Fig. S1, available at www.jneurosci.org as supplemental material).
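The block structure of one training day can be sketched in Python as follows; it reproduces the trial counts given above (3 × 18 test trials plus 2 × 48 training trials = 150, i.e., 25 per movement group). The trial dictionary fields are hypothetical. Whether the second stimulus in a given trial was the same center stimulus or the off-center stimulus, and in what proportion, is not stated in the text and is therefore not modeled.

```python
import random
from collections import Counter

N_GROUPS = 6  # movement groups (prototype triples) in experiment 1


def make_block(kind, reps_per_group, rng):
    """One block of trials. Test blocks: center vs. near off-center, no feedback.
    Training blocks: center vs. far off-center, with feedback."""
    off_center = "near" if kind == "test" else "far"
    trials = [
        {"block": kind, "group": g, "off_center": off_center,
         "feedback": kind == "train",
         # prototype with the highest weight in the off-center morph, chosen at random
         "dominant_prototype": rng.randrange(3)}
        for g in range(N_GROUPS) for _ in range(reps_per_group)
    ]
    rng.shuffle(trials)
    return trials


def training_session(rng):
    """Three test blocks (3 repetitions per group = 18 trials) interleaved
    with two training blocks (8 repetitions per group = 48 trials)."""
    session = []
    for i in range(3):
        session += make_block("test", 3, rng)
        if i < 2:
            session += make_block("train", 8, rng)
    return session


session = training_session(random.Random(42))
per_group = Counter(t["group"] for t in session)
```

Placing the feedback-free test blocks at the start, middle, and end of each session allows performance on the near off-center stimuli to be tracked within and across days.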

Post-training scanning session (day 5).

After completion of the training sessions, the subjects were tested on the following day in the scanner in the same way as in the pretraining scanning session.

Experiment 2: trained versus untrained natural movements

The experiment consisted of three days of training, followed by one scanning session (day 4). The training procedure was similar to that of experiment 1, with the exception that three of the six movement groups were selected for training (trained stimuli), while the other three groups were presented only during the scanning session without any training (untrained stimuli). The stimuli used for training and the untrained stimuli were counterbalanced across subjects.

Four conditions were tested in the post-training scanning session: (1) Identical Trained: the same trained center stimulus was presented twice in a trial; (2) Very Similar Trained: a trained center stimulus was followed by a trained near off-center stimulus; (3) Identical Untrained: the same untrained center stimulus was presented twice in a trial; and (4) Very Similar Untrained: an untrained center stimulus was followed by an untrained near off-center stimulus. Apart from the conditions, the design of the runs was identical to that of experiment 1.

Experiment 3: learning of novel artificial movements

The experiment consisted of a pretraining scanning session (day 1), followed by three consecutive days of training (days 2–4) and a post-training scanning session (day 5). The procedure and design for the training and scanning sessions were the same as in experiment 2, with the exception that artificial movement stimuli were presented.

Imaging

Data were collected with a 3T Siemens scanner (University Clinic, Tübingen) using a gradient echo pulse sequence [repetition time (TR) = 2 s, echo time (TE) = 30 ms] for 24 axial slices (voxel size: 3 × 3 mm in-plane, 5 mm thickness) and a standard head coil. The 24 slices in one volume covered the entire brain from the cerebellum to the vertex. A three-dimensional (3D) high-resolution T1-weighted image covering the entire brain was acquired in each scanning session and used for anatomical reference. A single scanning session lasted ∼90 min.

fMRI data analysis

The fMRI data were processed using the Brain Voyager 2000/QX software package. After linear trend removal, temporal filtering, and head motion correction, the 2D functional data were aligned to the 3D anatomical data and transformed into Talairach space. The anatomical scans across sessions were carefully aligned to each other, ensuring good correspondence of voxels across scanning sessions. In this way, the regions of interest (ROIs) defined in one scanning session could also be used for the second session.

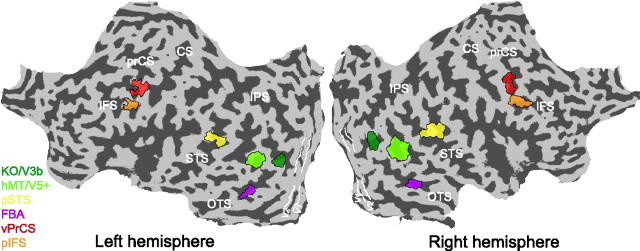

For each individual subject, eight regions of interest were defined. The definition was based on the overlap of functional activations and anatomical landmarks reported in previous studies as characteristic of the cortical locus of each area. The retinotopic visual areas V1 and V2 were mapped based on standard procedures (Engel et al., 1994). The radius of the rotating wedge was 5 degrees of visual angle. hMT/V5+ was localized by contrasting moving and static random dot texture patterns (Tootell et al., 1995), and area KO/V3b was localized by contrasting activation for motion-defined contours and transparent motion, as described by Van Oostende et al. (1997). Area pSTS was defined as the set of voxels on the posterior extent of the superior temporal sulcus that showed significantly stronger activation (p < 10⁻⁴) for intact than scrambled point-light walkers, consistent with previous studies (Grossman et al., 2000) (supplemental Table S1, available at www.jneurosci.org as supplemental material). Similarly, stronger activation (p < 10⁻⁴) for intact than scrambled point-light walkers was observed in a region in the fusiform gyrus, corresponding to the fusiform body area (FBA) (Peelen and Downing, 2005). In a recent study, Peelen and Downing (2006) showed that even though areas FFA and FBA are in close proximity to one another, voxelwise correlation analysis reveals that only FBA, not FFA, correlates significantly with biological motion processing.

We also observed significantly stronger activation for intact compared with scrambled point-light walkers in the posterior ITS, corresponding to area EBA (Downing et al., 2001). Analyzing the fMRI signal within this ROI (avoiding overlap with hMT/V5+) revealed activation changes very similar to those in area FBA (see supplemental Fig. S2, available at www.jneurosci.org as supplemental material). Given this result, we decided to focus on areas FBA and pSTS as examples of biological motion-related areas.

The biological motion localizer was also used to map the ROIs in frontal cortex. In accordance with a study by Pyles et al. (2007), we did not obtain significantly stronger activation in frontal areas when directly comparing the fMRI signal for intact versus scrambled biological motion. However, Saygin et al. (2004) reported frontal activations selective to point-light biological motion. To investigate changes in these areas, we compared fMRI activation for intact point-light walkers with the fixation baseline. This contrast resulted in circumscribed activations in both the ventral precentral sulcus and the posterior inferior frontal sulcus, in close proximity to the locations reported by Saygin et al. (2004) (supplemental Table S1, available at www.jneurosci.org as supplemental material). Figure 2 displays the locations of the individual ROIs for a single subject, superimposed on that subject's flattened left and right hemispheres.

Figure 2.

Analyzed regions of interest. Regions of interest for a single subject visualized on the flattened cortical surface of the left and the right hemisphere of this subject. The color-coded areas represent the human MT complex (hMT/V5+), the kinetic occipital area (KO/V3b), the pSTS, the FBA, and action-responsive regions in the vPrCS and the pIFS. White lines delineate early visual areas V1 and V2. Dark gray, sulci; light gray, gyri. prCS, Precentral sulcus; CS, central sulcus; STS, superior temporal sulcus; IPS, intraparietal sulcus; OTS, occipitotemporal sulcus.

In a first step of the analysis, all trials in which subjects answered incorrectly were excluded to prevent any influence of the different numbers of error trials across conditions. We then calculated the fMRI response for each ROI by extracting the signal intensity for all remaining trials from trial onset (0–14 s) and averaging across trials for each condition. The resulting time courses were converted to percentage signal change relative to the fixation condition and averaged across runs and hemispheres (supplemental Fig. S3, available at www.jneurosci.org as supplemental material). Fitting the percentage signal change in each ROI with a hemodynamic response model based on the difference of two gamma functions, as described by Boynton and Finney (2003), together with an ANOVA across time points for each ROI, indicated that fMRI responses peaked between 4 and 6 s after trial onset, consistent with the hemodynamic response properties (supplemental Figs. S3, S4, available at www.jneurosci.org as supplemental material). Thus, we used the average fMRI response at these time points for further statistical analysis of the differences between conditions. Subsequently, we used a standard normalization procedure to estimate relative differences in the fMRI responses (rebound index) independent of overall signal differences across subjects, sessions, and ROIs. In this procedure, we subtracted the mean response across conditions individually for each subject and added the grand mean across subjects separately for each ROI. Repeated-measures ANOVAs and subsequent contrast analyses on significant main effects and interactions (with Greenhouse–Geisser correction) for training (Pretraining, Post-training scanning session), condition (Identical, Very Similar, Less Similar, Dissimilar), and stimulus (Trained, Untrained) were used for the analysis of the psychophysical and fMRI time course data.
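The time-course steps above (percentage signal change, a difference-of-gammas response model, averaging at the peak time points, and within-subject normalization) can be sketched in Python as follows. The gamma parameters are common SPM-style defaults chosen only for illustration; the study fitted the model of Boynton and Finney (2003), whose exact parameterization is not reproduced here. With TR = 2 s, the 0–14 s window yields eight samples, of which the 4 s and 6 s samples form the peak window.

```python
import math

TR_S = 2.0  # repetition time (s), from the Imaging section


def gamma_pdf(t, shape, scale=1.0):
    """Gamma probability density; zero for t <= 0."""
    if t <= 0:
        return 0.0
    return t ** (shape - 1) * math.exp(-t / scale) / (math.gamma(shape) * scale ** shape)


def double_gamma_hrf(t, peak_shape=6.0, undershoot_shape=16.0, undershoot_ratio=1 / 6):
    """Difference of two gamma densities. Parameter values are illustrative
    SPM-style defaults, not values fitted in this study."""
    return gamma_pdf(t, peak_shape) - undershoot_ratio * gamma_pdf(t, undershoot_shape)


def percent_signal_change(timecourse, fixation_baseline):
    """Convert a raw signal time course to % change relative to fixation."""
    return [100.0 * (x - fixation_baseline) / fixation_baseline for x in timecourse]


def peak_window_mean(timecourse, tr=TR_S, window=(4.0, 6.0)):
    """Mean of the samples whose time after trial onset (index * tr) lies in `window`."""
    values = [x for i, x in enumerate(timecourse) if window[0] <= i * tr <= window[1]]
    return sum(values) / len(values)


def normalize_within_subject(responses):
    """responses[s][c]: peak response of subject s in condition c (one ROI, one session).
    Subtracts each subject's mean across conditions and adds back the grand mean."""
    grand_mean = sum(sum(row) for row in responses) / sum(len(row) for row in responses)
    return [[x - sum(row) / len(row) + grand_mean for x in row] for row in responses]


# Hypothetical % signal change time course sampled at 0, 2, ..., 14 s.
example_tc = [0.0, 0.1, 0.5, 0.7, 0.3, 0.0, -0.1, 0.0]
peak = peak_window_mean(example_tc)
```

The normalization removes between-subject offsets in overall signal level while preserving each subject's differences between conditions, which is what the subsequent repeated-measures comparisons rely on.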

Results

Experiment 1: learning of novel natural movements

Experiment 1 was designed to assess how discrimination training changes the neural representation of movements that are relevant for human motor behavior. Subjects were scanned before and after training with stimuli derived from natural human movements by morphing (Fig. 1A).

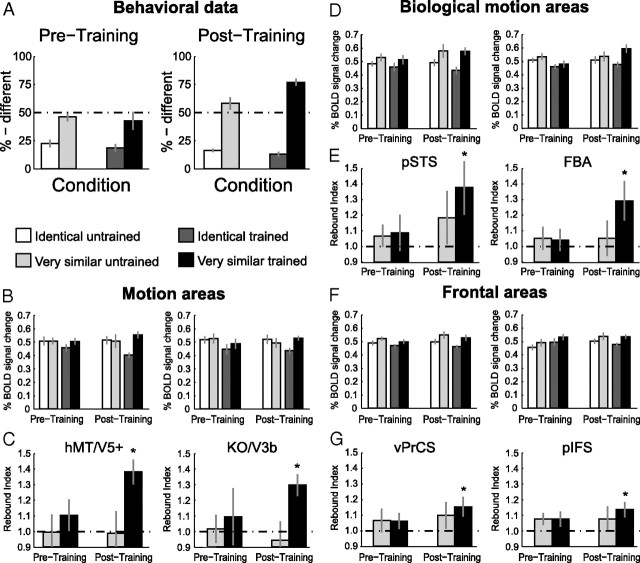

Behavioral performance

Figure 3A shows the observers' performance (percentage of “different” responses) inside the scanner for the discrimination between novel human-like movements before (Pretraining) and after (Post-training) training. Statistical analysis showed a significant interaction between training and condition (F(3,27) = 26.7, p < 0.001). Subsequent contrast analysis revealed no significant changes in performance for the Identical and Dissimilar conditions but significantly improved performance for the Very Similar and Less Similar conditions after training (Very Similar: F(1,9) = 30.7, p < 0.001; Less Similar: F(1,9) = 23.1, p < 0.001). Interestingly, the learning generalized from the Less Similar to the Very Similar condition, even though observers never received feedback training on the Very Similar condition (see Materials and Methods).

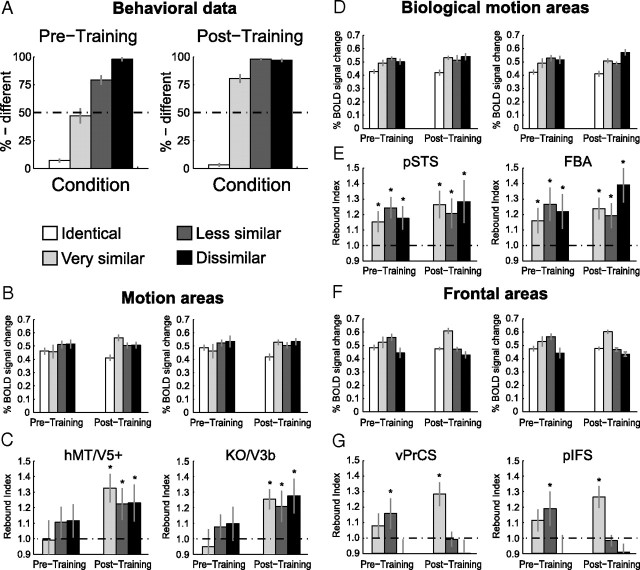

Figure 3.

Results of experiment 1: Learning of novel natural movements. A, Psychophysical data obtained during the scanning sessions before training (Pretraining) and after training (Post-training). The data are expressed as percentage of “different” judgments for the different conditions. Error bars indicate SEM across subjects. B, Average fMRI response across subjects in general motion-related areas (hMT/V5+, KO/V3b) before and after training (±SEM). C, Rebound indices for the fMRI responses in general motion-related areas before and after training. Rebound values were calculated by dividing the average fMRI responses for each condition by the fMRI responses for the Identical condition. An index of 1 indicates adaptation, while an index >1 indicates sensitivity to changes in the stimulus. Error bars represent SEM; because they incorporate the error estimates of both numerator and denominator, they appear rather large. D, Average fMRI response across subjects (±SEM) in biological motion-related areas (pSTS, FBA) before and after training. E, Rebound indices (±SEM) for the fMRI responses in biological motion-related areas before and after training. F, Average fMRI response across subjects (±SEM) in frontal areas (vPrCS/pIFS) before and after training. G, Rebound indices (±SEM) for the fMRI responses in frontal areas before and after training. Asterisks indicate rebound indices significantly higher than one.

fMRI data: general motion-related areas

Figure 3B shows the fMRI responses in the localized general motion-related areas hMT/V5+ and KO/V3b before and after training. We observed fMRI-selective adaptation only after training. That is, a one-way repeated-measures ANOVA comparing the activation for the different conditions showed that differences in fMRI responses across conditions were significant after training (hMT/V5+: F(3,27) = 5.5, p < 0.01; KO/V3b: F(3,27) = 4.0, p < 0.05), but not before training (hMT/V5+: F(3,27) = 1.1, p = 0.36; KO/V3b: F(3,27) = 1.1, p = 0.39). Comparing the fMRI signal across scanning sessions (Pre- and Post-training) resulted in a significant interaction between training and condition (hMT/V5+: F(3,27) = 3.0, p < 0.05; KO/V3b: F(3,27) = 3.3, p < 0.05). Detailed statistics are reported in supplemental Table S2, available at www.jneurosci.org as supplemental material.

To quantify the effect of learning on fMRI responses across conditions, we computed a rebound index (Fig. 3C). This index was calculated by dividing fMRI responses in each condition by the response to the Identical condition. An index of one indicates adaptation due to repetition of the same stimulus, while an index higher than one indicates recovery from adaptation and thus neural sensitivity to differences between the stimuli presented in a trial. In agreement with the analyses of the fMRI responses, we observed rebound indices significantly higher than one only after training (Table 1). This indicates recovery from adaptation and suggests emerging neural sensitivity to the perceived differences between the stimuli after training. This interpretation was supported by a repeated-measures ANOVA comparing the rebound indices before and after training, which showed a significant main effect of training (hMT/V5+: F(1,9) = 6.3, p < 0.05; KO/V3b: F(1,9) = 9.2, p < 0.05).
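A minimal Python sketch of the rebound index and the one-sample t test against one reported in Table 1. It assumes that indices are computed per subject and then tested across subjects (df = n − 1, i.e., 9 for the ten subjects of experiment 1); the response values below are hypothetical. p values would additionally require the t distribution (e.g., from SciPy), which is omitted to keep the sketch dependency-free.

```python
import math
import statistics


def rebound_indices(responses, reference="Identical"):
    """responses: condition -> peak fMRI response (one subject, one ROI).
    Divides each non-reference condition by the Identical-condition response."""
    ref = responses[reference]
    return {cond: value / ref for cond, value in responses.items() if cond != reference}


def t_against_one(indices):
    """One-sample t statistic for H0: mean rebound index = 1 (df = n - 1)."""
    n = len(indices)
    return (statistics.mean(indices) - 1.0) / (statistics.stdev(indices) / math.sqrt(n))


# Hypothetical responses (% signal change) for three subjects in one ROI.
subjects = [
    {"Identical": 0.50, "Very Similar": 0.60, "Less Similar": 0.55},
    {"Identical": 0.40, "Very Similar": 0.50, "Less Similar": 0.44},
    {"Identical": 0.60, "Very Similar": 0.66, "Less Similar": 0.63},
]
per_subject = [rebound_indices(s) for s in subjects]
t_very_similar = t_against_one([r["Very Similar"] for r in per_subject])
```

An index reliably above one (positive t) indicates recovery from adaptation, i.e., a population response that distinguishes the two stimuli of a trial.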

Table 1.

Statistical analysis of the rebound indices of Experiment 1

| Area | Condition | Before training: t(9) | Before training: p | After training: t(9) | After training: p |
|---|---|---|---|---|---|
| hMT/V5+ | Very Similar | 0.2 | 0.82 | **4.5** | **<0.01** |
| | Less Similar | 1.7 | 0.12 | **2.7** | **<0.05** |
| | Dissimilar | 1.8 | 0.11 | **2.5** | **<0.05** |
| KO/V3b | Very Similar | 0.8 | 0.45 | **4.6** | **<0.01** |
| | Less Similar | 1.3 | 0.23 | **2.7** | **<0.05** |
| | Dissimilar | 1.2 | 0.26 | **3.1** | **<0.05** |
| pSTS | Very Similar | **2.5** | **<0.05** | **3.6** | **<0.01** |
| | Less Similar | **4.9** | **<0.01** | **2.4** | **<0.05** |
| | Dissimilar | **2.6** | **<0.05** | **3.1** | **<0.05** |
| FBA | Very Similar | **2.4** | **<0.05** | **3.9** | **<0.01** |
| | Less Similar | **3.3** | **<0.01** | **2.9** | **<0.05** |
| | Dissimilar | **2.5** | **<0.05** | **4.0** | **<0.01** |
| vPrCS | Very Similar | 1.1 | 0.30 | **4.1** | **<0.01** |
| | Less Similar | **2.5** | **<0.05** | 0.1 | 0.98 |
| | Dissimilar | 1.2 | 0.26 | 1.4 | 0.20 |
| pIFS | Very Similar | 1.7 | 0.11 | **4.2** | **<0.01** |
| | Less Similar | **2.6** | **<0.05** | 0.3 | 0.79 |
| | Dissimilar | 0.9 | 0.37 | 1.6 | 0.14 |

Bold font marks rebound indices significantly larger than 1.

fMRI data: biological motion-related areas

In contrast to the general motion-related areas, the biological motion-related areas pSTS and FBA (Fig. 3D) showed a significant difference between the conditions both before (pSTS: F(3,27) = 6.4, p < 0.01, FBA: F(3,27) = 3.3, p < 0.05) and after training (pSTS: F(3,27) = 6.1, p < 0.01, FBA: F(3,27) = 10.0, p < 0.001). Statistical comparisons across scanning sessions showed a significant interaction between training and condition in area pSTS (F(3,27) = 3.2, p < 0.05) and a similar trend in area FBA (F(3,27) = 2.4, p = 0.08), indicating that training affected the processing of the different conditions in biological motion-related areas.

Statistical analysis of the rebound indices confirmed the results of the above analyses (Fig. 3E). Rebound indices significantly larger than one were obtained for all conditions containing different stimuli, both before and after training (Table 1). Importantly, comparison of the rebound indices before and after training showed a significant main effect of training (pSTS: F(1,9) = 5.7, p < 0.05, FBA: F(1,9) = 5.9, p < 0.05), whereas neither the main effect of condition nor the interaction between these factors was significant. Thus, fMRI-selective adaptation was enhanced after training, and this enhancement did not differ significantly across stimulus conditions.

fMRI data: frontal areas

Figure 3F shows the results from the ventral precentral sulcus (vPrCS) and the posterior inferior frontal sulcus (pIFS). As in the biological motion-related areas, we obtained significant differences in fMRI signal between the conditions before (vPrCS: F(3,27) = 3.2, p < 0.05, pIFS: F(3,27) = 3.4, p < 0.05) and after training (vPrCS: F(3,27) = 12.7, p < 0.001, pIFS: F(3,27) = 19.8, p < 0.001). Further, both frontal areas showed a significant interaction between training and condition across scanning sessions (vPrCS: F(3,27) = 3.1, p < 0.05, pIFS: F(3,27) = 3.0, p < 0.05).

However, unlike pSTS and FBA, frontal regions showed recovery from adaptation only for specific stimulus conditions (Fig. 3G). Before training, the only condition with rebound indices significantly higher than one was the Less Similar condition (vPrCS: t(9) = 2.5, p < 0.05; pIFS: t(9) = 2.7, p < 0.05). After training, by contrast, only the Very Similar condition showed rebound indices significantly higher than one (vPrCS: t(9) = 4.1, p < 0.01; pIFS: t(9) = 4.2, p < 0.01). This observation was supported by a repeated-measures ANOVA comparing the rebound indices before and after training, which showed a significant interaction between training and condition (vPrCS: F(2,18) = 6.5, p < 0.01, pIFS: F(3,27) = 4.0, p < 0.01). Additional contrast analyses revealed that in vPrCS the rebound index for the Very Similar condition was significantly higher after than before training (F(1,9) = 6.9, p < 0.05), whereas the opposite was true for the Less Similar condition (F(1,9) = 6.6, p < 0.05). In pIFS, the rebound index for the Less Similar condition likewise decreased after training (F(1,9) = 4.7, p < 0.05), but the rebound index for the Very Similar condition did not differ significantly between sessions (F(1,9) = 1.9, p = 0.19). This response pattern differs substantially from that obtained for occipitotemporal areas. Whereas rebound indices in occipitotemporal areas showed similar training effects across conditions, frontal areas showed differential effects of training across stimulus conditions.

In particular, rebound effects in frontal areas seem to reflect the difficulty of the discrimination task rather than the perceived differences between stimuli. Before training, observers' performance was high for the Identical and the Different conditions but low for the Very Similar condition. The most difficult condition was the Less Similar condition, with performance of ∼75%. After training, the most difficult condition was the Very Similar condition, with performance of ∼75%, whereas performance in all other conditions was very high (Fig. 3A).

Together, our results suggest that learning facilitates the discrimination of human-like movements and shapes their neural representations across areas through potentially different mechanisms. In particular, we observed: (1) emerging sensitivity to the differences between human-like movements in general motion-related areas after training, (2) enhancement of the already present sensitivity to movement differences in biological motion-related areas, and (3) recruitment of prefrontal areas when the discrimination task is most demanding. In addition, we observed generalization of these learning-dependent effects beyond movements trained with feedback (the Less Similar condition) to stimuli that were not trained with feedback (Very Similar condition).

Experiment 2: pattern specificity of the learning process

The results of experiment 1 indicated learning-dependent changes across different cortical areas during discrimination training. In experiment 2, we evaluated whether these changes were specific to the trained stimulus space or simply reflected nonspecific improvement due to familiarity with the task. To this end, we tested for transfer of learning-dependent changes to stimuli generated from different prototypical movements that were not presented during training (Fig. 1C).

In particular, we investigated fMRI responses after training (Post-training) to movement patterns with which the observers had been trained before the scanning (Trained) and movements generated from different prototypes, with which the observers were not trained (Untrained). Since the critical condition in experiment 1 was the Very Similar condition, we tested only the Identical and the Very Similar conditions for both trained and untrained stimuli. fMRI-selective adaptation for trained but not untrained stimuli would suggest stimulus-specific learning-dependent changes in the representation of movements, while similar effects for trained and untrained stimuli would suggest transfer of learning across different movement patterns.

Behavioral performance

Consistent with previous psychophysical results (Jastorff et al., 2006), the observers' ability to discriminate between the stimuli in the Very Similar condition improved for the trained stimuli compared to the untrained ones (Fig. 4A). This was supported by a significant interaction between stimulus similarity (Identical vs Very Similar) and stimulus group (trained vs untrained) (F(1,10) = 33.4, p < 0.001), suggesting stimulus-specific learning effects.
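Stimulus similarity × stimulus group is a 2 × 2 within-subject design. For such a design, the interaction F(1, n − 1) equals the square of a one-sample t statistic computed on each subject's difference of differences. The sketch below illustrates this equivalence. It takes per-subject cell means as input, and the helper name is hypothetical. With 11 subjects it yields the F(1,10) degrees of freedom reported above.

```python
from math import sqrt
from statistics import fmean, stdev


def interaction_2x2(cells):
    """Interaction F for a 2 x 2 within-subject design.

    cells: one tuple per subject with the cell means
    (trained-Identical, trained-VerySimilar,
     untrained-Identical, untrained-VerySimilar).
    Returns (F, (1, n - 1)).
    """
    # difference of differences: similarity effect for trained minus untrained
    contrasts = [(tv - ti) - (uv - ui) for ti, tv, ui, uv in cells]
    n = len(contrasts)
    t_value = fmean(contrasts) / (stdev(contrasts) / sqrt(n))
    return t_value ** 2, (1, n - 1)
```

Working with the per-subject contrast makes the logic explicit. A positive mean contrast means the Identical/Very Similar difference is larger for trained than for untrained stimuli.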

Figure 4.

Results of experiment 2: Learning specificity versus generalization. A, Psychophysical data (% different) during the scanning session after training (Post-training) for the four conditions. B–G, Average fMRI response and rebound indices across subjects in general motion-related areas (B, C), biological motion-related areas (D, E) and frontal areas (F, G) for the trained and the untrained stimuli after training. Error bars represent SEM and asterisks indicate rebound indices significantly higher than one.

fMRI data: general motion-related areas

fMRI responses in general motion-related areas (Fig. 4B) showed learning effects specific to the trained human-like movements as indicated by a significant interaction between stimulus similarity and stimulus group in hMT/V5+ (F(1,10) = 5.1, p < 0.05) and a significant main effect of stimulus group in area KO/V3b (F(1,10) = 5.0, p < 0.05). Detailed statistics are reported in supplemental Table S3, available at www.jneurosci.org as supplemental material.

This finding was supported by the rebound indices plotted in Figure 4C. Rebound indices for the trained stimuli were significantly higher than one (hMT/V5+: t(10) = 4.9, p < 0.001; KO/V3b: t(10) = 3.2, p < 0.01). In contrast, rebound indices for the untrained stimuli did not differ significantly from one (hMT/V5+: t(10) = −0.3, p = 0.80; KO/V3b: t(10) = 1.0, p = 0.34). Moreover, direct comparison of the rebound indices showed significantly higher indices for trained compared to untrained stimuli (hMT/V5+: t(10) = 2.7, p < 0.05; KO/V3b: t(10) = 2.3, p < 0.05).

fMRI data: biological motion-related areas

Similar to the results for the general motion-related regions, pSTS and FBA did not show transfer of learning-dependent effects to the untrained stimuli (Fig. 4D). A repeated-measures ANOVA revealed a significant main effect of stimulus similarity (pSTS: F(1,10) = 32.3, p < 0.001, FBA: F(1,10) = 10.5, p < 0.001) and stimulus group (pSTS: F(1,10) = 5.4, p < 0.05, FBA: F(1,10) = 6.3, p < 0.05).

Rebound indices (Fig. 4E) for the Very Similar condition were significantly higher than one for both trained and untrained stimuli. However, a direct comparison between trained and untrained stimuli showed a significantly higher rebound index for trained stimuli in pSTS (t(10) = 2.4, p < 0.05) and a similar trend in FBA (t(10) = 2.0, p = 0.07). These results agree with the findings from experiment 1 showing that fMRI-selective adaptation for very similar movements was present before training and further enhanced after training. Importantly, this learning-dependent enhancement in neural sensitivity was specific to the perceived differences between trained movements.

fMRI data: frontal areas

Learning-dependent changes in fMRI-selective adaptation were specific to trained stimuli also in frontal areas. An ANOVA comparing fMRI responses (Fig. 4F) showed a main effect of stimulus similarity (vPrCS: F(1,10) = 6.7, p < 0.05, pIFS: F(1,10) = 5.5, p < 0.05).

However, statistical analysis of the rebound indices (Fig. 4G) indicated rebound indices significantly higher than one only for the trained (vPrCS: t(10) = 3.7, p < 0.01; pIFS: t(10) = 2.8, p < 0.05), but not for the untrained stimuli (vPrCS: t(10) = 1.4, p = 0.19; pIFS: t(10) = 1.5, p = 0.17). Moreover, rebound indices were significantly higher for trained than untrained stimuli in vPrCS (t(10) = 2.4, p < 0.05) but not in pIFS (t(10) = 1.3, p = 0.23), consistent with the results in experiment 1.

In summary, the results of experiment 2 complement those of experiment 1, highlighting that learning-dependent changes in fMRI-selective adaptation across areas are stimulus-specific. Generalization may occur between stimuli from the same stimulus space (as in experiment 1), but not between stimuli generated from different stimulus spaces (i.e., spaces based on different sets of prototypes).

Experiment 3: learning novel artificial complex movements

Experiments 1 and 2 showed that discrimination learning of novel stimuli compatible with human movements leads to changes in fMRI sensitivity to the perceived differences between movements across cortical areas. Previous studies have suggested that biological motion-related areas show enhanced activation for human-like movements compared to other complex motion patterns (Pyles et al., 2007). In experiment 3, we tested whether areas involved in the processing of human movements are recruited for the learning of motion trajectories that have similar complexity but are not compatible with human movements.

In particular, we used articulated artificial motion stimuli that did not resemble human or animal bodies (Fig. 1B) (see Materials and Methods for details). These stimuli were constructed by animating artificial skeleton models with sinusoidal joint angle motion. The skeletons were chosen to be highly dissimilar from naturally occurring body structures and were thus not consistently interpreted as humans or animals (Jastorff et al., 2006). The joint trajectories of these artificial skeletons were motion-morphed in the same way as the human trajectories to construct similar morph spaces and to control for similarity across stimuli. As in experiment 2, observers were tested with the Identical and the Very Similar conditions on trained and untrained stimuli. Unlike in experiment 2, however, participants were scanned both before and after training.

Behavioral performance

As illustrated in Figure 5A, observers showed similar performance for the trained and the untrained stimuli before training. There was neither a significant main effect of stimulus group (F(1,8) = 0.90, p = 0.37) nor a significant interaction between stimulus group and training (F(1,8) < 0.1, p = 0.97). After training, however, performance for the trained stimuli increased significantly compared to the untrained ones (contrast analysis: F(1,8) = 24.3, p < 0.01). This indicates stimulus-specific learning of the artificial stimuli, similar to the results obtained for the natural human-like stimuli.

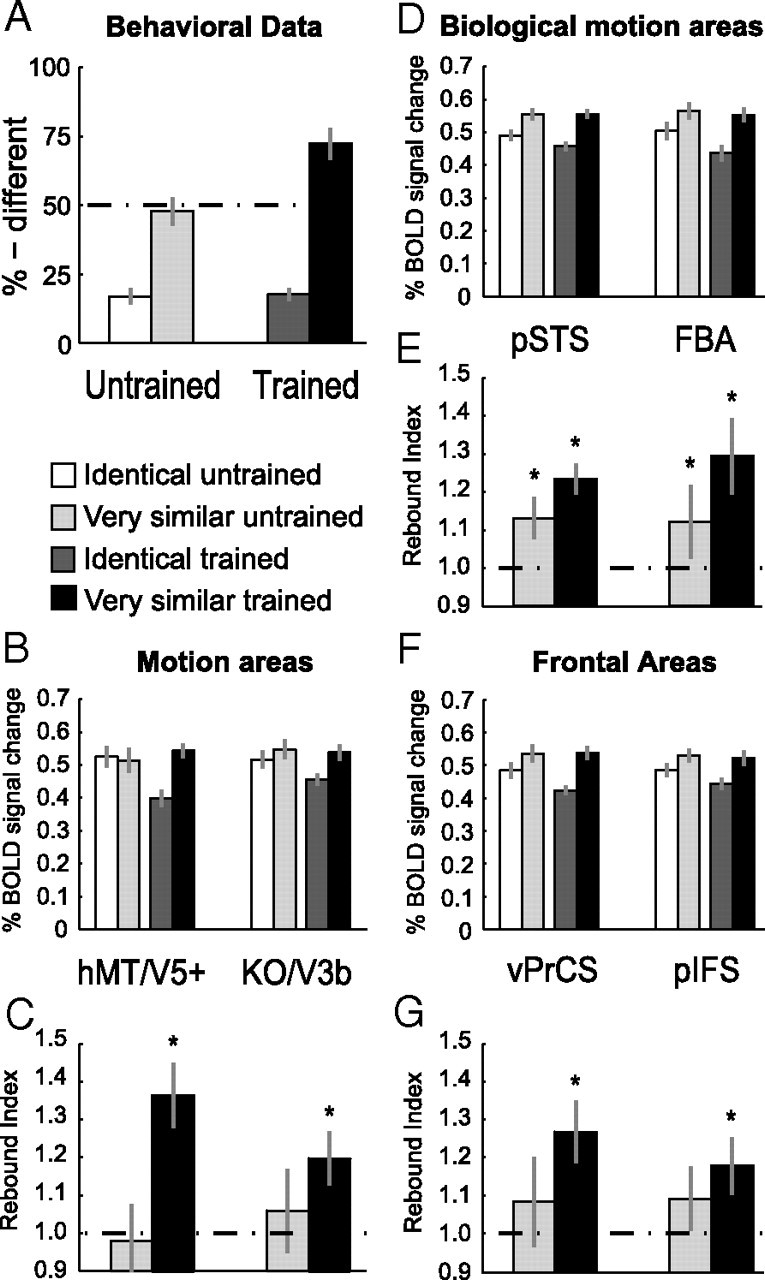

Figure 5.

Results of experiment 3: Learning of artificial complex movements. A, Psychophysical data (% different) during scanning before (Pretraining) and after (Post-training) training. B–G, Average fMRI response and rebound indices across subjects in general motion-related areas (B, C), biological motion-related areas (D, E) and frontal areas (F, G) for the trained and the untrained stimuli, separately for the two scanning sessions. Error bars represent SEM and asterisks indicate rebound indices significantly higher than one.

fMRI data: general motion-related areas

Similar to the results for the human-like stimuli, fMRI-selective adaptation specific to the trained movements was observed in general motion-related areas after, but not before, training. That is, significantly stronger fMRI responses for the Very Similar compared to the Identical condition were observed when participants were tested with trained compared to untrained stimuli. This is indicated by a significant interaction of stimulus group and training in hMT/V5+ (F(1,8) = 4.9, p < 0.05) as well as in KO/V3B (F(1,8) = 4.7, p < 0.05) after training (Fig. 5B). Detailed statistics can be found in supplemental Table S4, available at www.jneurosci.org as supplemental material.

Rebound indices shown in Figure 5C support this finding. Rebound indices were significantly higher than one only after training and only for the trained stimuli (hMT/V5+: t(8) = 5.3, p < 0.01; KO/V3B: t(8) = 3.6, p < 0.01). Moreover, a repeated-measures ANOVA comparing rebound indices across scanning sessions (Pre- and Post-training) showed a significant interaction between stimulus group and stimulus similarity in hMT/V5+ (F(1,8) = 5.7, p < 0.05) and a similar trend in KO/V3B (F(1,8) = 4.8, p = 0.06). Thus, in general motion-related areas, neural sensitivity to the differences between artificial movement patterns emerged only after training, and this learning was specific to the trained stimuli.

fMRI data: biological motion-related areas

Figure 5D shows fMRI responses for the biological motion-related areas. In contrast to experiments 1 and 2, these areas showed fMRI-selective adaptation only after, but not before, training. This observation is supported by a significant main effect of stimulus similarity after but not before training in pSTS (after: F(1,8) = 8.1, p < 0.05; before: F(1,8) = 1.9, p = 0.21) and a similar trend in FBA (after: F(1,8) = 4.5, p = 0.06; before: F(1,8) = 0.8, p = 0.39).

Moreover, rebound indices (Fig. 5E) were significantly higher than one after training for the trained stimuli (pSTS: t(8) = 2.9, p < 0.05; FBA: t(8) = 2.8, p < 0.05) but not for the untrained stimuli (pSTS: t(8) = 1.4, p = 0.18; FBA: t(8) = 0.8, p = 0.43). As in the general motion-related areas, repeated-measures ANOVAs across scanning sessions showed a significant interaction in FBA (F(1,8) = 6.1, p < 0.05) and a similar trend in pSTS (F(1,8) = 4.8, p = 0.06), indicating stimulus-specific changes after training.

fMRI data: frontal areas

Figure 5F shows fMRI responses for vPrCS and pIFS. Before training, we did not obtain any significant difference in fMRI signal between conditions. After training, however, we obtained a significant main effect of stimulus similarity in both frontal areas (vPrCS: F(1,8) = 8.5, p < 0.05, pIFS: F(1,8) = 6.0, p < 0.05).

However, statistical analysis of the rebound indices (Fig. 5G) suggested that training affected frontal areas to a lesser extent than occipitotemporal areas. Although rebound indices were significantly higher than one after training for the trained stimuli (vPrCS: t(8) = 2.8, p < 0.05; pIFS: t(8) = 3.1, p < 0.05), the interaction between stimulus conditions and scanning sessions was not significant (vPrCS: F(1,8) = 0.3, p = 0.61; pIFS: F(1,8) = 0.2, p = 0.65).

In summary, the results obtained for the novel artificial motion stimuli differ in two respects from those obtained for the natural human-like stimuli. First, in biological motion-related areas, the differences between conditions were significant both before and after training for natural stimuli, but only after training for artificial stimuli. Similarly, significant recovery from adaptation for artificial stimuli was observed only after training, whereas for natural stimuli it was already present before training. This suggests that biological motion-related areas do not differentiate between very similar artificial stimuli before training but become sensitive to the perceived differences between these movement trajectories after training. To test the difference between natural and artificial stimuli before training statistically, we conducted an ANOVA comparing fMRI responses before training for the Identical and the Very Similar conditions between experiments 1 and 3. This ANOVA did not reveal a significant interaction between stimulus type (natural and artificial) and stimulus similarity (Identical and Very Similar) in pSTS and FBA (pSTS: F(1,34) = 1.9, p = 0.18; FBA: F(1,34) = 2.6, p = 0.11). However, this result is difficult to interpret, as different observers participated in the two experiments and the experiments also differed in the conditions tested.

Second, even though frontal areas showed significant recovery from adaptation for the trained artificial stimuli, we did not obtain a significant interaction across scanning sessions. Furthermore, rebound indices for the trained stimuli were not significantly higher after than before training (vPrCS: F(1,8) = 1.5, p = 0.17; pIFS: F(1,8) = 0.7, p = 0.48). This differs from the results obtained for the natural stimuli, for which the rebound index for the Very Similar condition in vPrCS was significantly higher after than before training (experiment 1: F(1,9) = 6.9, p < 0.05). To test this difference statistically, we compared fMRI signals across experiments 1 and 3 after training using a factorial ANOVA. This ANOVA showed a trend toward a significant interaction between stimulus type (natural and artificial) and stimulus similarity (Identical and Very Similar) in vPrCS (F(1,34) = 3.7, p = 0.06). However, the limitations discussed above for comparisons between the two experiments also apply to this finding. Together, these results suggest an efficient visual learning mechanism that operates in a very similar way for stimuli with and without relevance for human motor execution.

Relation between fMRI signal and behavioral performance

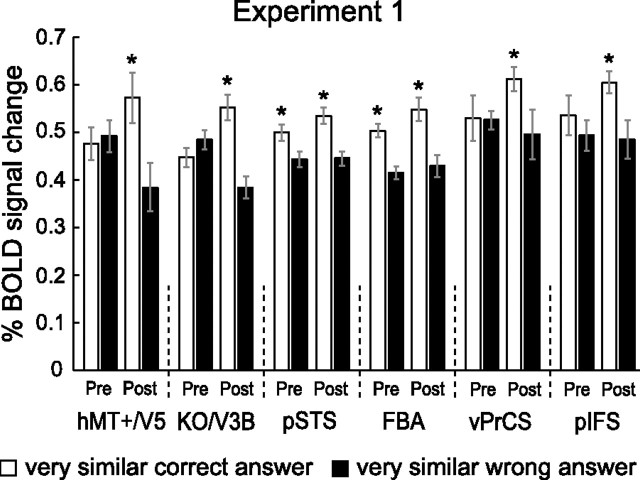

To further quantify the relationship between the behavioral and fMRI learning effects, we conducted three additional analyses. First, we analyzed the trials of experiment 1 on which subjects answered incorrectly. For this analysis we concentrated on the Very Similar condition, because this condition yielded the most incorrect trials. We then compared the activation for incorrect trials with the activation for correct trials (plotted in Fig. 3). Note that the stimuli for correct and incorrect trials were physically identical. We reasoned as follows: if fMRI responses reflect the observers' behavioral judgment rather than the physical stimulus similarity, the signal for incorrect trials (i.e., when observers failed to discriminate different stimuli) should be lower than for trials on which observers perceived the difference between the stimuli. Such a difference, however, should emerge only in areas involved in the discrimination process.
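The trial sorting described above can be sketched as follows. The sketch assumes that a hypothetical per-trial response amplitude is available for each subject, for example from an event-related model. Trials in the Very Similar condition are then binned by the observer's judgment. The tuple layout and function name are illustrative assumptions, not the study's code.

```python
from statistics import fmean


def split_by_response(trials):
    """Mean response for Very Similar trials, split by the judgment.

    trials: (condition, response, amplitude) tuples for one subject,
    where response is "different" (correct for Very Similar pairs) or
    "identical" (incorrect). Returns (mean_correct, mean_incorrect);
    a value is None if no trials fall into that bin.
    """
    vs = [(resp, amp) for cond, resp, amp in trials if cond == "Very Similar"]
    correct = [amp for resp, amp in vs if resp == "different"]
    incorrect = [amp for resp, amp in vs if resp == "identical"]
    return (fmean(correct) if correct else None,
            fmean(incorrect) if incorrect else None)
```

Because both bins contain physically identical stimulus pairs, any reliable difference between the two means can only reflect the observer's judgment.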

Figure 6 shows that this prediction was supported by the data. Plotted are the fMRI responses before and after training for the Very Similar condition (natural stimuli). These are shown separately for trials on which subjects answered correctly (white bars, identical to Fig. 3) and trials on which subjects incorrectly perceived the stimuli as identical (black bars). Repeated-measures ANOVAs with the factors response (identical and different) and training (prescan and postscan) showed a significant main effect of response in all ROIs (hMT/V5+: F(1,9) = 8.4, p < 0.05; KO/V3B: F(1,9) = 5.5, p < 0.05; pSTS: F(1,9) = 10.2, p < 0.05; FBA: F(1,9) = 13.9, p < 0.01; vPrCS: F(1,9) = 6.8, p < 0.05; pIFS: F(1,9) = 8.1, p < 0.05). This result suggests that, even though the stimuli were physically identical, the fMRI signal was modulated by the subjects' perception. This modulation was significant in biological motion-related areas already before training (contrast analysis: pSTS: F(1,9) = 6.0, p < 0.05; FBA: F(1,9) = 10.3, p < 0.05). It became significant in general motion-related areas and frontal areas after training (contrast analysis: hMT/V5+: F(1,9) = 15.9, p < 0.01; KO/V3B: F(1,9) = 12.1, p < 0.01; vPrCS: F(1,9) = 5.4, p < 0.01; pIFS: F(1,9) = 6.2, p < 0.05). The result of this independent analysis confirms our interpretation of the findings of experiment 1. Biological motion-related areas are involved in the discrimination between natural human-like stimuli before training, whereas general motion-related areas are recruited after training. In addition, this result establishes a direct link between behavioral judgments and fMRI signal changes.

Figure 6.

Relation between behavioral response and fMRI signal. Average fMRI response across subjects (±SEM) in the different ROIs for the Very Similar condition. White bars indicate the fMRI response for trials where subjects answered correctly (identical to light gray bars in Fig. 3) and black bars show the response for trials where subjects answered incorrectly. Note that the stimuli presented in the two cases are physically identical. Asterisks indicate significant differences in fMRI signals between the two cases within one scanning session.

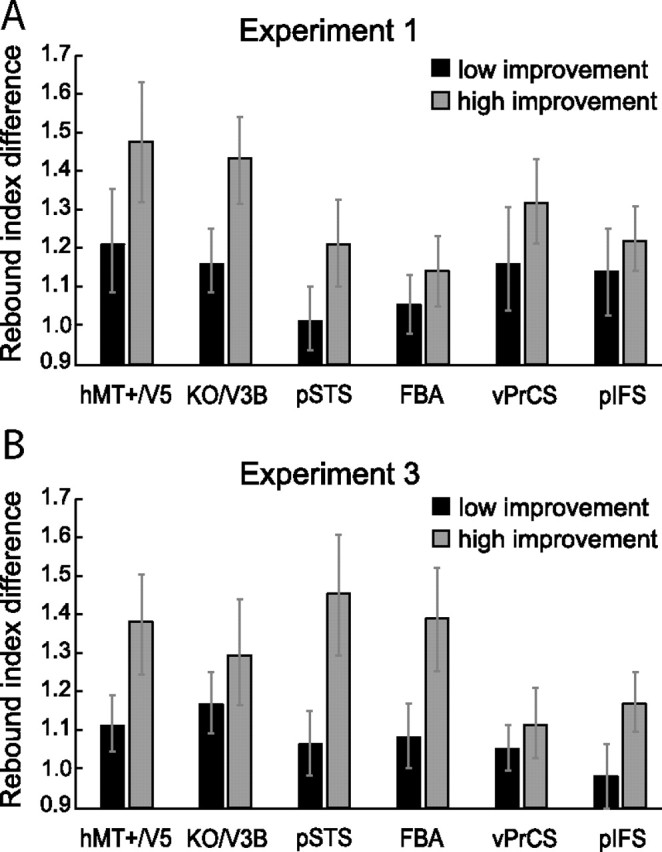

In a second analysis (Fig. 7), we focused on each subject's improvement in performance between the pre- and post-training scans. We computed the difference in correct responses for the Very Similar condition before and after training for experiments 1 and 3. We then divided subjects by a median split into groups with higher versus lower performance enhancement. Each group thus contained five subjects for experiment 1 and four subjects for experiment 3 (here the subject with the median performance increase was excluded because only nine subjects participated). Subsequently, we computed the ratio between the rebound indices after and before training (rebound index after training/rebound index before training) for each subject independently (Fig. 7). In all areas, the mean ratio for the group with higher performance enhancement exceeded that for the group with lower performance enhancement. This effect was observed for both human-like and artificial movements. An ANOVA showed a significant main effect of performance enhancement for the natural (F(1,48) = 4.4, p < 0.05) and artificial stimuli (F(1,36) = 5.6, p < 0.05). Neither the main effect of ROI nor the interaction between ROI and performance enhancement was significant (natural: ROI F(5,48) = 1.6, p = 0.15; interaction F(5,48) = 0.4, p = 0.88; artificial: ROI F(5,36) = 0.5, p = 0.79; interaction F(5,36) = 0.8, p = 0.55). This analysis showed that increases in neural sensitivity to the differences between stimuli were significantly higher for observers who showed stronger behavioral training effects. These results provide an additional link between behavioral improvement and learning-dependent changes in fMRI signals.
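The grouping step and the ratio computation can be sketched as follows. Subjects are ranked by their performance gain and split at the median. With an odd number of subjects, as in experiment 3, the median subject is dropped. Each group's mean ratio of rebound indices (after/before training) is then computed. The dictionary layout and function names are hypothetical.

```python
from statistics import fmean


def median_split(improvement):
    """Split subjects into lower and higher improvement groups.

    improvement: dict subject_id -> performance gain (post minus pre).
    With an odd number of subjects, the median subject is dropped.
    """
    ranked = sorted(improvement, key=improvement.get)
    half = len(ranked) // 2
    low = ranked[:half]
    high = ranked[half + 1:] if len(ranked) % 2 else ranked[half:]
    return low, high


def mean_rebound_ratio(group, ri_pre, ri_post):
    # ratio used in the text: rebound index after / before training
    return fmean(ri_post[s] / ri_pre[s] for s in group)
```

With ten subjects this yields two groups of five. With nine subjects it yields two groups of four, matching the group sizes given above.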

Figure 7.

Relation between behavioral improvement and selectivity changes. A, B, Ratio of the rebound indices after to before training (rebound index after training/rebound index before training), separately for two groups of subjects for experiment 1 (A) and experiment 3 (B). The first group (dark gray bars) contains subjects with a lower performance increase after training and the second group (light gray bars) contains subjects with a higher performance increase after training (±SEM).

Third, we performed a regression analysis on the individual subjects' psychophysical and fMRI data of experiment 1 (supplemental Fig. S5, available at www.jneurosci.org as supplemental material). This analysis concentrated on occipitotemporal areas because, unlike the frontal areas, these areas showed a significant main effect of training on the rebound indices (supplemental Table S2, available at www.jneurosci.org as supplemental material). Before training, a significant correlation between behavioral performance and fMRI responses was observed only in biological motion-related areas, not in general motion-related areas (pSTS: r = 0.41, p < 0.05; FBA: r = 0.38, p < 0.05; hMT/V5+: r = 0.24, p = 0.16; KO/V3B: r = 0.20, p = 0.24). After training, however, the correlations were significant in all occipitotemporal areas (pSTS: r = 0.51, p < 0.01; FBA: r = 0.58, p < 0.01; hMT/V5+: r = 0.51, p < 0.01; KO/V3B: r = 0.45, p < 0.01). This analysis provides additional evidence for a link between behavioral improvement and experience-dependent fMRI changes. Moreover, it supports our finding of differential processing in biological motion-related and general motion-related areas before training.
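The sketch below shows the correlation test in minimal form. It computes Pearson's r from paired lists and converts it to a t statistic with n − 2 degrees of freedom using the standard formula t = r·√(n − 2)/√(1 − r²). Supplemental Fig. S5 describes how behavioral and fMRI values were paired for the reported correlations, and the sketch does not assume any particular pairing.

```python
from math import sqrt
from statistics import fmean


def pearson_with_t(behavior, fmri):
    """Pearson r between paired lists, with its t statistic on n - 2 df."""
    mx, my = fmean(behavior), fmean(fmri)
    sxy = sum((x - mx) * (y - my) for x, y in zip(behavior, fmri))
    sxx = sum((x - mx) ** 2 for x in behavior)
    syy = sum((y - my) ** 2 for y in fmri)
    r = sxy / sqrt(sxx * syy)
    n = len(behavior)
    t_value = r * sqrt(n - 2) / sqrt(1 - r * r)  # undefined when |r| == 1
    return r, t_value, n - 2
```

The conversion makes explicit that the significance of a given r depends on the number of paired observations.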

Learning of complex movements in retinotopic visual cortex

We further examined whether early retinotopic areas (V1 and V2) might be engaged in the learning of complex articulated movements. We tested fMRI responses to human-like and artificial movements in experiments 2 and 3, which contained comparable conditions (trained vs untrained stimuli). For experiment 2 (supplemental Fig. S6A, available at www.jneurosci.org as supplemental material), neither V1 nor V2 showed significant main effects or a significant interaction (supplemental Table S3, available at www.jneurosci.org as supplemental material). Likewise, the rebound indices did not differ significantly from one (supplemental Fig. S6B, available at www.jneurosci.org as supplemental material). The same results were obtained for the novel artificial stimuli used in experiment 3 (supplemental Fig. S7C–F; supplemental Table S4, available at www.jneurosci.org as supplemental material). The lack of fMRI-selective adaptation for very similar human and artificial movements in V1 and V2 suggests that our findings in higher visual areas reflect learning of differences in the global structure, rather than the local features, of the movements. Furthermore, this finding suggests that learning-dependent changes in neural sensitivity in higher visual areas cannot simply be attributed to adaptation of input responses from primary visual cortex. Moreover, the lack of adaptation in these areas makes it unlikely that our results can be explained simply by general alertness or arousal, which would affect signals not only in higher areas but also in early visual cortex (Kastner and Ungerleider, 2000; Ress et al., 2000).

Learning-related plasticity changes outside the defined regions of interest

Our main analyses concentrated on regions of interest that were localized independently for each subject to maximize sensitivity. However, to test whether learning-dependent plasticity might occur in areas not included in our ROIs, we performed two whole-brain fixed-effects GLM analyses. The first focused on experiment 1 and combined all scans (pre- and post-training) from all subjects in a single model. Contrasting the fMRI signal for the Identical condition with the fMRI signals for all other conditions (Very Similar, Less Similar, and Different) confirmed that adaptation effects were predominantly confined to the areas already included in our ROI analysis (supplemental Fig. S7A, available at www.jneurosci.org as supplemental material). Moreover, it suggested that recovery from adaptation was more pronounced in the right hemisphere. This agrees with several studies showing more consistent activation of the right hemisphere for biological movements (Grossman et al., 2000; Peuskens et al., 2005; Peelen et al., 2006; Pyles et al., 2007; Jastorff and Orban, 2009).
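The Identical-versus-all-other-conditions contrast can be expressed as a zero-sum weight vector applied to each voxel's condition parameter estimates. The weights below (−3, 1, 1, 1) are one common choice and are our assumption. Any zero-sum scaling of the same pattern tests the same hypothesis. The GLM fitting itself is not shown, and the names are hypothetical.

```python
# Identical vs. all conditions with different stimuli; weights sum to zero
CONTRAST = {
    "Identical": -3.0,
    "Very Similar": 1.0,
    "Less Similar": 1.0,
    "Different": 1.0,
}


def contrast_value(betas):
    """Apply the contrast to one voxel's condition parameter estimates.

    betas: dict condition -> GLM beta. Positive values indicate larger
    responses to different-stimulus conditions than to Identical,
    i.e., recovery from adaptation.
    """
    assert abs(sum(CONTRAST.values())) < 1e-12, "contrast must sum to zero"
    return sum(weight * betas[cond] for cond, weight in CONTRAST.items())
```

A voxel responding equally to all four conditions yields a contrast value of zero, so only relative differences from the Identical condition contribute.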

The second analysis focused on the learning effects for the synthetic stimuli in experiment 3. Pyles et al. (2007) obtained stronger responses for movements of Creatures (bodies built from rod-like shape primitives linked by rigid and nonrigid joints) than for human movements in an area located in the inferior occipital sulcus. This led to the hypothesis that this area might be involved in the representation of novel and dynamic objects. It therefore seemed possible that areas outside our localized ROIs showed learning-induced activity changes for our artificial stimuli. To test this possibility, we conducted a fixed-effects analysis combining the post-training scans from all subjects of experiment 3. We searched for regions showing a significant interaction between condition and training, i.e., regions showing stronger recovery from adaptation for the trained than for the untrained stimuli after training. However, this analysis did not reveal any significant activation beyond the network of ROIs investigated in our study (supplemental Fig. S7B, available at www.jneurosci.org as supplemental material). Both whole-brain analyses thus confirmed that learning-dependent activity changes were primarily confined to the areas included in our ROI analyses.

Reaction times and eye movements

To control for the possibility that differences in fMRI responses across the conditions were due to differences in general alertness or differential attentional allocation, we analyzed reaction times during scanning (supplemental Fig. S8, available at www.jneurosci.org as supplemental material). We obtained no significant differences in reaction times across conditions in experiment 1 (Pretraining: F(3,27) = 2.56; p = 0.07; Post-training: F(3,27) = 1.42; p = 0.26), experiment 2 (Post-training: F(1,10) = 3.34; p = 0.12) or experiment 3 (Pretraining: F(1,8) = 3.22; p = 0.11; Post-training: F(1,8) < 1; p = 0.82). In experiment 3, reaction times in the post-training scan were significantly faster for the trained than for the untrained stimuli (F(1,8) = 9.07; p = 0.02). These results make it unlikely that differential attentional allocation could account for the observed pattern of fMRI responses. In contrast to our fMRI results, an attentional load explanation would predict higher fMRI responses when the discrimination task was hardest, as difficult conditions require prolonged, focused attention resulting in higher fMRI responses (Ress et al., 2000). We observed the opposite effect: fMRI responses were similar for conditions in which the discrimination was easiest and subjects responded fastest (Different condition in experiment 1, supplemental Fig. S8, available at www.jneurosci.org as supplemental material). Further, the quick succession of randomly interleaved trials ensured that the observers could not attend selectively to particular conditions. Moreover, the observed fMRI learning effects are unlikely to be explained by greater attentiveness after training or for the trained movements. The task was in fact more demanding before training and for the untrained stimuli. Reaction times in these difficult discrimination conditions were the slowest, whereas very fast responses would be expected if observers had given up and were simply guessing. These psychophysical data indicate that observers were engaged in the task rather than responding randomly, both before and after training.

Eye movement recordings showed that the subjects were able to fixate for long periods of time. Saccades did not differ systematically in number, amplitude, or duration across conditions before and after training. Moreover, we did not observe a significant difference in the number of saccades across scanning sessions. The relevant statistics are presented in supplemental Figure S9, available at www.jneurosci.org as supplemental material.

Across-trial adaptation

Throughout all experiments, every trial started with the presentation of a center stimulus. Therefore, within a full training or scanning session, the center stimuli were presented more often than the off-center and the prototype stimuli (Fig. 1C). This design may lead to priming effects for the center stimuli, but this is unlikely to confound our results, because Ganel et al. (2006) have shown that priming and adaptation effects are additive rather than interactive. They compared activation for identical and different trials whose stimuli had either been primed in preceding sessions or were presented for the first time. The net amount of adaptation for identical trials was the same regardless of whether the stimuli were primed. The only difference between primed and nonprimed stimuli was a reduction in overall activation for primed stimuli. Such an overall reduction does not affect the interaction between training and stimulus condition, which we defined as the critical measure of changes in adaptation related to discrimination training. Therefore, priming could not affect the interpretation of our findings.
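The additivity argument can be illustrated with a small numerical sketch. Assume, following the additive account attributed above to Ganel et al. (2006), that priming lowers the responses to identical and different trials by the same amount. That offset then cancels in a difference score between the two trial types. The response values and the offset below are arbitrary illustrative numbers, and the function names are hypothetical.

```python
def adaptation_effect(resp_identical, resp_different):
    # recovery from adaptation expressed as a difference score
    return resp_different - resp_identical


def primed(resp, offset):
    # ASSUMPTION (additive account): priming lowers every response
    # by the same amount, regardless of trial type
    return resp - offset


unprimed_effect = adaptation_effect(1.0, 1.5)
primed_effect = adaptation_effect(primed(1.0, 0.3), primed(1.5, 0.3))
```

Both effects come out equal (0.5 up to floating-point rounding). Under additivity, a uniform priming offset therefore leaves the difference between identical and different trials unchanged.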

Discussion

Our findings reveal localized changes in fMRI activation as a result of learning novel complex motion patterns. Most importantly, we found similar learning effects for natural human-like and artificial movements of similar complexity across multiple areas involved in action processing. Improvement of observers' performance in discriminating movements after training was associated with three types of stimulus-specific activity changes: (1) emerging sensitivity to movement differences in general motion-related areas (hMT/V5+ and KO/V3B); (2) increased sensitivity after training with human-like movements, and emerging sensitivity to novel artificial stimuli, in biological motion-selective areas (pSTS and FBA); (3) changes reflecting task difficulty in frontal areas, related to learning to discriminate between human-like movements.

General motion-related areas

Our results reveal that experience-dependent plasticity occurs not only in areas known to be involved in the processing of biological motion, but also in areas involved in the processing of simpler motion patterns. After training, sensitivity to the differences between stimuli emerged for both natural and artificial stimuli. Our results suggest that these areas contribute to the discrimination of complex motion patterns and may optimize their neural representations in the context of discrimination learning. This is consistent with studies indicating a role of hMT/V5+ in the learning of global motion configurations (Zohary et al., 1994; Vaina et al., 1998). Moreover, work in neural modeling and computer vision shows that the performance of hierarchical models of motion and object recognition can be substantially improved when the properties of mid-level feature detectors are optimized by learning (Wersing and Körner, 2003; Jhuang et al., 2007; Serre et al., 2007; Ullman, 2007).

General motion-related areas predominantly extract local stimulus features and might therefore be less invariant to local stimulus changes. This might explain why these areas did not show selectivity for novel human-like movements before training: such local features may vary more strongly between stimuli of the same class (i.e., stimuli generated from the same prototypes).

Biological motion-related areas

Interestingly, biological motion-related areas exhibited differences in the learning of different classes of complex movements. In particular, before training, these areas showed a small but significant sensitivity to differences between similar human-like movements, but not between artificial movements. One explanation might be that pre-existing representations of human movements generalize to novel human-like stimuli. Similar mechanisms have been implicated in the representation of novel object categories based on learned example views (Edelman et al., 1999). In contrast, such generalization might not be possible for novel artificial patterns, as their general appearance deviates strongly from existing representations. The enhanced specificity for natural movements after training could reflect increased sensitivity to differences between stimulus features in neural populations that were already involved in discriminating these movements before training (Schoups et al., 2001; Baker et al., 2002; Sigala and Logothetis, 2002; Freedman et al., 2003).

Although biological motion-related areas did not show sensitivity to differences between novel artificial stimuli before training, they showed increased sensitivity after training. Jastorff and Orban (2009) showed that activation in pSTS depends on the biological kinematics of the individual dots in point-light displays rather than on configural information. In their study, even scrambled point-light walkers, which preserve the inherent biological kinematics, elicited significantly stronger pSTS activation than presentations of a translating full body. The trajectories of the individual points in our artificial stimuli were coarsely matched in frequency and amplitude to natural human movements and obeyed the two-thirds power law (Lacquaniti et al., 1983; Viviani and Stucchi, 1992). Therefore, the same neural machinery that processes human kinematics may be recruited for learning novel artificial movements.
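The two-thirds power law states that angular velocity A scales with curvature C as A = K * C^(2/3); since A = v * C for tangential velocity v, this is equivalent to v = K * C^(-1/3). The sketch below estimates the exponent from a sampled 2D trajectory by a log-log fit of speed against curvature. It is an illustration under stated assumptions, not the procedure used to generate or verify the stimuli: the helper name is hypothetical, and the test trajectory is harmonic motion along an ellipse, a standard case that satisfies the law exactly.

```python
import numpy as np


def power_law_exponent(x, y, dt):
    """Estimate beta in v = K * curvature**(-beta) for a sampled 2D trajectory.

    x, y: positions sampled at a constant time step dt (hypothetical helper).
    The two-thirds power law corresponds to beta = 1/3.
    """
    dx, dy = np.gradient(x, dt), np.gradient(y, dt)        # velocity
    ddx, ddy = np.gradient(dx, dt), np.gradient(dy, dt)    # acceleration
    speed = np.hypot(dx, dy)
    curvature = np.abs(dx * ddy - dy * ddx) / speed**3
    # Drop edge samples, where np.gradient falls back to one-sided differences
    inner = slice(2, -2)
    slope, _intercept = np.polyfit(np.log(curvature[inner]), np.log(speed[inner]), 1)
    return -slope


# Illustrative trajectory (an assumption, not the study's stimuli):
# harmonic motion along an ellipse with semi-axes a = 3 and b = 1
t = np.linspace(0.0, 2.0 * np.pi, 2000)
x, y = 3.0 * np.cos(t), 1.0 * np.sin(t)
beta = power_law_exponent(x, y, t[1] - t[0])
print(f"estimated beta = {beta:.3f}")  # close to 1/3
```

For this ellipse, v^3 = a * b / C holds exactly, so the fitted slope deviates from -1/3 only by finite-difference error; a trajectory traversed at constant speed would instead give a slope near zero.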

Pyles et al. (2007) reported lower overall activation in the pSTS for the presentation of “Creatures” (moving artificial articulated shapes) compared to the presentation of human movements and suggested specialized neural processing for human movements in pSTS. However, the Creature movement was not explicitly matched with human kinematics and their experiment did not involve a training phase, allowing for the possibility that enhanced activation in pSTS might be observed after extensive training.

While neural populations within pSTS might develop sensitivity to differences in movement kinematics, neural populations in FBA might develop sensitivity to differences in body structure between the stimuli. Pyles et al. (2007) reported similar FBA activation for humans and Creatures presented as stick figures, but weaker activation for Creatures presented as point-light displays. This finding, together with the finding that subjects had more difficulty detecting point-light Creatures than human point-light walkers in noise, suggests that subjects were unable to use prior knowledge about body structure to facilitate recognition of the Creatures. In our study, observers might have learned a “body representation” for the artificial stimuli during training, which could explain the increased sensitivity to differences between artificial movements in FBA.

Frontal areas

The localized frontal areas seem to exhibit a response pattern different from that of occipital and temporal areas. General and biological motion-related areas showed fMRI-selective adaptation effects for all conditions in which different stimuli were presented, independent of stimulus similarity. In contrast, frontal areas showed significant release from adaptation only for specific conditions. This effect was more prominent in vPrCS than in pIFS and was related to task difficulty, as indicated by the observers' behavioral performance. Although significant recovery from adaptation was also observed after training with artificial movements, the interaction between scanning session and stimulus similarity was not significant (supplemental Table S4, available at www.jneurosci.org as supplemental material), suggesting that training with artificial stimuli affected frontal areas to a lesser extent than training with natural stimuli.

Recruitment of frontal areas for the conditions in which discrimination is most demanding is consistent with studies showing significantly higher fMRI activation in the ventral precentral sulcus and posterior IFS for point-light stimuli when subjects performed a one-back task than during passive observation (Jastorff and Orban, 2009). Thus, our results in frontal areas may reflect a consequence of the learning-induced changes in occipital and temporal areas.

Comparison between human-like and artificial movements

Comparison of the results between natural and artificial stimuli revealed two main differences in fMRI-selective adaptation: a lack of sensitivity before training to differences between artificial stimuli in biological motion-related areas, and no significant training effects for artificial stimuli in frontal areas. However, statistical comparisons across experiments 1 and 3 did not indicate that these differences were significant. This null result is difficult to interpret because the experiments tested different conditions and the number and identity of the participants differed. Future studies that test both stimulus classes within a single experiment are needed to directly compare responses to natural and artificial stimuli in biological motion-related and frontal areas.

Top-down influences

As subjects performed a discrimination task during the scanning sessions, we cannot completely rule out the possibility that top-down signals contributed to the effects discussed. However, we believe that the diversity of effects we obtained across experiments and ROIs argues against a purely top-down explanation of our findings. Moreover, without the subjects' behavioral data from inside the scanner, the direct link between behavioral improvements and changes in fMRI-selective adaptation could not have been established.

Conclusions

Our study advances our understanding of experience-based plasticity mechanisms involved in the learning of novel complex biological movements in several respects. First, our experiments investigated the role of visual learning in the discrimination of novel biological movements, which mediates recognition of individual actions, rather than in their detection in random noise backgrounds (Grossman et al., 2004), a task that engages scene segmentation processes. Such learning entails analysis of the distinctive movement features that are critical for discriminating between motion patterns. Second, by using complex artificial movements that were matched to human movements in low-level and biological motion features, we were able to show that, contrary to previous suggestions (Pyles et al., 2007), areas involved in the processing of human movements become involved in the processing of artificial movements. Third, by combining spatiotemporal morphing techniques with an fMRI adaptation paradigm as an index of discriminability, we were able to trace how changes in behavioral performance are reflected in changes in the sensitivity of neural populations in several brain areas involved in the processing of action stimuli.
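Spatiotemporal morphing (Giese and Poggio, 2000) first establishes spatiotemporal correspondence between prototype movements and then forms weighted linear combinations of them, so that the weights control how similar two morphed stimuli are. The sketch below covers only a simplified version of the combination step: it assumes the prototypes are already frame-aligned (the temporal warping is skipped) and averages joint positions frame by frame. The function names, array layout and random prototypes are hypothetical and serve only to illustrate how a graded morph continuum is produced.

```python
import numpy as np


def morph_linear(prototypes, weights):
    """Weighted linear combination of frame-aligned prototype trajectories.

    prototypes: array of shape (n_prototypes, n_frames, n_joints, 2), assumed to be
    in spatiotemporal correspondence already (frame i matches across prototypes).
    weights: one linear weight per prototype, summing to 1.
    """
    prototypes = np.asarray(prototypes, dtype=float)
    w = np.asarray(weights, dtype=float)
    if w.shape != (prototypes.shape[0],):
        raise ValueError("need exactly one weight per prototype")
    if not np.isclose(w.sum(), 1.0):
        raise ValueError("weights must sum to 1")
    # Contract the prototype axis: result has shape (n_frames, n_joints, 2)
    return np.tensordot(w, prototypes, axes=1)


def morph_series(proto_a, proto_b, n_steps):
    """Morph continuum from proto_a (lambda = 0) to proto_b (lambda = 1)."""
    pair = np.stack([proto_a, proto_b])
    return [morph_linear(pair, [1.0 - lam, lam]) for lam in np.linspace(0.0, 1.0, n_steps)]


# Hypothetical prototypes: 60 frames x 12 joints x (x, y), random for illustration
rng = np.random.default_rng(1)
proto_a, proto_b = rng.normal(size=(2, 60, 12, 2))
series = morph_series(proto_a, proto_b, 5)
print(len(series), series[0].shape)  # -> 5 (60, 12, 2)
```

Neighbouring steps of such a continuum differ only slightly, which is the kind of graded stimulus similarity an fMRI adaptation design can exploit.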

In sum, our results provide important new insights into the recognition of complex movements and action patterns. Our findings provide evidence for a fast and efficient visual learning process for complex articulated motion patterns that seems to be independent of the compatibility of these movements with human kinematics or human body structure. This suggests that the processing of biological motion might be a special instance of a more general function of these areas. Future combined fMRI and neurophysiological studies are necessary to investigate the specific neural plasticity mechanisms at the level of single neurons and their interactions within and across cortical areas involved in the visual analysis of movements and the planning of actions.

Footnotes

This work was supported by the Deutsche Forschungsgemeinschaft (DFG) (SFB 550), the Max Planck Society, Human Frontier Science Program, European Community FP6 Project COBOL, and DFG Grant TH 812/1-1 for the imaging facilities. We thank H. P. Thier, C. F. Altman, and T. Cooke for support with this work. We are grateful to G. Orban for comments on an earlier version of this manuscript. We also thank M. Pavlova and E. Grossman for stimulating discussions.

References

- Baker CI, Behrmann M, Olson CR. Impact of learning on representation of parts and wholes in monkey inferotemporal cortex. Nat Neurosci. 2002;5:1210–1216. doi: 10.1038/nn960.

- Blake R, Shiffrar M. Perception of human motion. Annu Rev Psychol. 2007;58:47–73. doi: 10.1146/annurev.psych.57.102904.190152.

- Boynton GM, Finney EM. Orientation-specific adaptation in human visual cortex. J Neurosci. 2003;23:8781–8787. doi: 10.1523/JNEUROSCI.23-25-08781.2003.

- Buccino G, Binkofski F, Fink GR, Fadiga L, Fogassi L, Gallese V, Seitz RJ, Zilles K, Rizzolatti G, Freund HJ. Action observation activates premotor and parietal areas in a somatotopic manner: an fMRI study. Eur J Neurosci. 2001;13:400–404.

- Calvo-Merino B, Grèzes J, Glaser DE, Passingham RE, Haggard P. Seeing or doing? Influence of visual and motor familiarity in action observation. Curr Biol. 2006;16:1905–1910. doi: 10.1016/j.cub.2006.07.065.

- Casile A, Giese MA. Nonvisual motor training influences biological motion perception. Curr Biol. 2006;16:69–74. doi: 10.1016/j.cub.2005.10.071.

- Cross ES, Hamilton AF, Grafton ST. Building a motor simulation de novo: observation of dance by dancers. Neuroimage. 2006;31:1257–1267. doi: 10.1016/j.neuroimage.2006.01.033.

- Decety J, Grèzes J, Costes N, Perani D, Jeannerod M, Procyk E, Grassi F, Fazio F. Brain activity during observation of actions. Influence of action content and subject's strategy. Brain. 1997;120:1763–1777. doi: 10.1093/brain/120.10.1763.

- Downing PE, Jiang Y, Shuman M, Kanwisher N. A cortical area selective for visual processing of the human body. Science. 2001;293:2470–2473. doi: 10.1126/science.1063414.

- Edelman S, Bülthoff HH, Bülthoff I. Effects of parametric manipulation of inter-stimulus similarity on 3D object categorization. Spat Vis. 1999;12:107–123. doi: 10.1163/156856899x00067.

- Engel SA, Rumelhart DE, Wandell BA, Lee AT, Glover GH, Chichilnisky EJ, Shadlen MN. fMRI of human visual cortex. Nature. 1994;369:525. doi: 10.1038/369525a0.

- Freedman DJ, Riesenhuber M, Poggio T, Miller EK. A comparison of primate prefrontal and inferior temporal cortices during visual categorization. J Neurosci. 2003;23:5235–5246. doi: 10.1523/JNEUROSCI.23-12-05235.2003.

- Ganel T, Gonzalez CL, Valyear KF, Culham JC, Goodale MA, Köhler S. The relationship between fMRI adaptation and repetition priming. Neuroimage. 2006;32:1432–1440. doi: 10.1016/j.neuroimage.2006.05.039.

- Giese MA, Lappe M. Measurement of generalization fields for the recognition of biological motion. Vision Res. 2002;42:1847–1858. doi: 10.1016/s0042-6989(02)00093-7.

- Giese MA, Poggio T. Morphable models for the analysis and synthesis of complex motion patterns. Int J Comput Vis. 2000;38:59–73.

- Giese MA, Thornton I, Edelman S. Metrics of the perception of body movement. J Vis. 2008;8(9):13.1–18. doi: 10.1167/8.9.13.