Abstract

Time perception in the supra-second range is crucial for fundamental cognitive processes like decision making, rate calculation, and planning. In the vast majority of species and across behavioral and neurophysiological manipulations, interval timing is scale invariant: the time-estimation errors are proportional to the estimated duration. The origin and mechanisms of this fundamental property are unknown. We discuss the computational properties of a circuit consisting of a large number of (input) neural oscillators projecting onto a small number of (output) coincidence detector neurons, which allows time to be coded by the pattern of coincidental activation of its inputs. We show that time-scale invariance emerges from neural noise, such as small fluctuations in the firing patterns of the input neurons and in the errors with which information is encoded and retrieved by the output neurons. In this architecture, time-scale invariance is resistant to manipulations, as it depends neither on the details of the input population nor on the probability distribution of the noise.

I. INTRODUCTION

The perception and use of durations in the seconds-to-hours range (interval timing) is essential for survival and adaptation, and is critical for fundamental cognitive processes like decision making, rate calculation, and planning of action [1]. The fixed-interval (FI) procedure is the classic interval timing experiment, in which a subject’s behavior is reinforced for the first response (e.g., lever press) made after a pre-programmed interval has elapsed since the previous reinforcement. Although no external time cues are provided, subjects trained on an FI procedure typically pause after the delivery of reinforcement and start responding after a fixed proportion of the interval has elapsed. A widely used discrete-trial variant of the FI procedure is the peak-interval (PI) procedure [2, 3]. In a PI procedure, a stimulus such as a tone or light is turned on to signal the beginning of the to-be-timed interval, and in a proportion of trials the subject’s first response after the criterion time is reinforced. In the remaining trials, known as probe trials, no reinforcement is given and the stimulus remains on for about three to four times the criterion time. The mean response rate over a very large number of trials has a Gaussian shape whose peak location measures the accuracy of criterion time estimation and whose spread measures its precision. In the vast majority of species, protocols, and manipulations to date, interval timing is both accurate and time-scale invariant, i.e., time-estimation errors increase linearly with the estimated duration [4–7] (Fig. 1). Accurate and time-scale invariant interval timing has been observed in many species [1, 4], from invertebrates to fish, birds, and mammals, such as rats [8] (Fig. 1A), mice [9], and humans [10] (Fig. 1B). Time-scale invariance is stable over behavioral (Fig. 1B), lesion [11], pharmacological [12, 13] (Fig. 1C), and neurophysiological manipulations [14] (Fig. 1D).

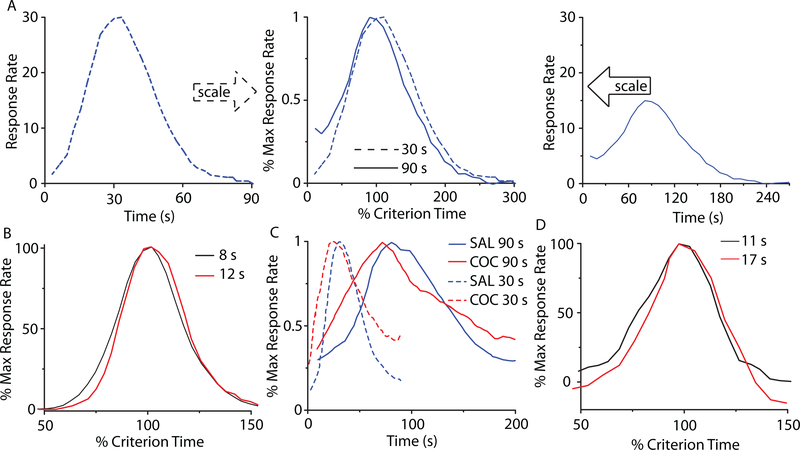

FIG. 1. (Color online) Accurate and time-scale invariant interval timing.

(A) The response rates of rats timing a 30s interval (left) or a 90s interval (right) overlap (center) when normalized by the maximum response rate (vertical axis) and by the criterion time (horizontal axis), respectively; redrawn from [8]. (B) Time-scale invariance in human subjects for 8s and 21s criteria; redrawn from [10]. (C) Systemic cocaine (COC) administration speeds up timing proportionally (scalar) to the original criteria of 30s and 90s; redrawn from [8]. (D) The hemodynamic response associated with a subject’s active time reproduction scales with the timed criterion, 11s vs. 17s; redrawn from [14].

In 1963, Treisman introduced the elements of one of the most influential interval timing paradigms: the pacemaker-accumulator (pacemaker-counter) clock. According to Treisman (1963), the interval timing mechanism that links the internal clock to external behavior also requires some kind of store of reference times and a comparison mechanism for time judgement. Treisman’s pacemaker-counter model was rediscovered two decades later and became the Scalar Expectancy Theory (SET) [6, 15]. According to SET, interval timing emerges from the interaction of three abstract blocks: a clock, an accumulator (working or short-term memory), and a comparator. The internal pacemaker generates pulses that accumulate in the working memory until the occurrence of an important event, such as reinforcement. At the time of reinforcement, the number of accumulated clock pulses is transferred from the working (short-term) memory to the reference (or long-term) memory. A response (output) is produced by computing the ratio between the value stored in the reference memory and the current accumulator total; when this ratio falls below a threshold, responding begins. One of the major shortcomings of SET is that it predicts greater relative accuracy at longer time intervals: “If there is no error in the accumulator, or if the error is independent of accumulator value, and if there is pulse-by-pulse variability in the pacemaker rate, then by the law of large numbers, relative error (standard deviation divided by mean, coefficient of variation) must be less at longer time intervals.” (see Staddon and Higa [16] for details). This theoretical prediction of SET contradicts the time-scale invariance observed in experiments.
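The law-of-large-numbers argument quoted above can be checked with a short simulation. This is a minimal sketch under stated assumptions, not the SET model itself: the pacemaker is taken to be Poisson with a hypothetical rate of 5 pulses/s, and the accumulator is error-free, so the only variability is pulse-by-pulse timing. Under these assumptions the count at time T has a coefficient of variation of 1/sqrt(rate·T), which shrinks as T grows instead of staying constant.

```python
import math
import random


def pulse_count(rate, duration, rng):
    """Number of pulses a Poisson pacemaker emits in [0, duration)."""
    n = 0
    t = rng.expovariate(rate)  # exponential inter-pulse intervals
    while t < duration:
        n += 1
        t += rng.expovariate(rate)
    return n


def count_cv(rate, duration, trials=2000, seed=0):
    """Coefficient of variation (SD / mean) of the accumulated count at `duration`."""
    rng = random.Random(seed)
    counts = [pulse_count(rate, duration, rng) for _ in range(trials)]
    mean = sum(counts) / trials
    var = sum((c - mean) ** 2 for c in counts) / (trials - 1)
    return math.sqrt(var) / mean


if __name__ == "__main__":
    rate = 5.0  # hypothetical pacemaker rate, pulses per second
    for T in (10.0, 30.0, 90.0):
        # Simulated CV next to the Poisson prediction 1/sqrt(rate*T).
        print(T, round(count_cv(rate, T), 3), round(1 / math.sqrt(rate * T), 3))
```

The relative error at 90 s comes out about three times smaller than at 10 s, which is the non-scalar behavior the quotation describes.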

Another influential interval timing paradigm is the Behavioral Timing (BeT) theory [17, 18]. BeT assumes a “clock” consisting of a fixed sequence of states with the transition from one state to the next driven by a Poisson pacemaker. Each state is associated with different classes of behavior, and, the theory claims, these behaviors serve as discriminative stimuli that set the occasion for appropriate operant responses (although there is not a 1:1 correspondence between a state and a class of behavior). The added assumption that pacemaker rate varies directly with reinforcement rate allows the model to handle some experimental results not covered by SET, although it has failed some specific tests (see [16] for a review).
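To see why tying the pacemaker rate to the reinforcement rate helps, the sketch below measures when a Poisson pacemaker drives the clock into a given state. All parameters are hypothetical: the state that controls responding is taken to be the 8th, and the pacemaker rate is set to k/interval with k = 10, i.e., proportional to the FI reinforcement rate 1/interval. The waiting time is a sum of n exponential intervals, so its mean scales with the interval while its CV stays at 1/sqrt(n).

```python
import math
import random


def time_to_state(n_states, rate, rng):
    """Time a Poisson pacemaker needs to advance the clock through n_states transitions."""
    return sum(rng.expovariate(rate) for _ in range(n_states))


def bet_timing(interval, n_states=8, k=10.0, trials=4000, seed=1):
    """Mean and CV of the time at which the clock reaches state `n_states`.

    Hypothetical assumption: pacemaker rate = k / interval, i.e. proportional
    to the reinforcement rate 1/interval of an FI schedule.
    """
    rate = k / interval
    rng = random.Random(seed)
    times = [time_to_state(n_states, rate, rng) for _ in range(trials)]
    mean = sum(times) / trials
    sd = math.sqrt(sum((x - mean) ** 2 for x in times) / (trials - 1))
    return mean, sd / mean


if __name__ == "__main__":
    for interval in (30.0, 90.0):
        mean, cv = bet_timing(interval)
        print(interval, round(mean / interval, 3), round(cv, 3))
```

Both intervals give a CV near 1/sqrt(8) ≈ 0.35. With a rate that did not depend on the interval, the state reached at the criterion would grow with the interval and the CV would shrink.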

A handful of neurobiologically-inspired models explain accurate timing and time-scale invariance as a property of the information flow in neural circuits [19, 20]. Shadlen was the first to suggest that timing of sub-second intervals may be addressed at the level of single neurons [21], though how such a mechanism accounts for timing of supra-second durations is unclear. Killeen and Taylor [22] attempted to solve this issue by explaining timing in terms of information transfer between noisy counters, although the biological mechanisms were not addressed. Meck and associates [4, 23] (Fig. 2A) proposed a different approach, the Striatal Beat Frequency (SBF) model, in which timing is coded by the coincidental activation of neurons, which produces firing beats with periods spanning a much wider range of durations than single neurons [24]. As Matell and Meck (2004) suggested, interval timing could be the product of multiple and complementary mechanisms, and the same neuroanatomical structure could use different mechanisms for interval timing. A possible common ground for all interval timing models could be the threshold accommodation phenomenon, which allows stimulus selectivity [25, 26] and promotes coincidence detection [9]. Farries (2010) showed that dynamic threshold changes allow the subthalamic nucleus (STn), which projects to the output nuclei of the basal ganglia (BG), to act either as an integrator of rate-coded inputs or as a coincidence detector [27] (see Fig. 2).

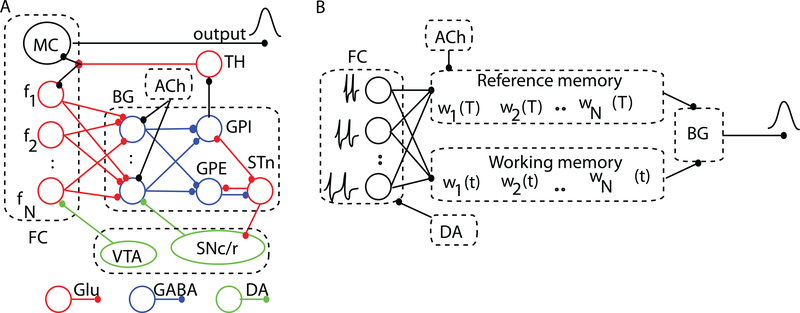

FIG. 2. (Color online) The neurobiological structures involved in interval timing and the corresponding simplified SBF architecture.

(A) Schematic representation of some neurobiological structures involved in interval timing. The color-coded connections among different areas emphasize the corresponding neuromodulatory pathways. The two main areas involved in interval timing are the frontal cortex (FC) and the basal ganglia (BG). (B) In our implementation of the SBF model, the states of the N cortical oscillators (input neurons) at reinforcement time T are stored in the reference memory as a set of weights wi(T). During test trials, the working memory stores the current weights wi(t) and, together with the reference memory, projects its content onto spiny (output) neurons of the BG. FC: frontal cortex; MC: motor cortex; BG: basal ganglia; TH: thalamus. GPE: globus pallidus external; GPI: globus pallidus internal; STn: subthalamic nucleus; SNc/r: substantia nigra pars compacta/reticulata; VTA: ventral tegmental area; Glu: glutamate; DA: dopamine; GABA: gamma-aminobutyric acid; ACh: acetylcholine.

Here we show analytically that, in the context of the proposed SBF neural circuitry, time-scale invariance emerges naturally from variability (noise) in the model’s parameters. We also show that time-scale invariance is independent of the type of input neuron and of both the probability distribution and the sources of the noise.

This paper is organized as follows. The SBF model is described in Sec. II. A brief neurobiological justification is provided for each major functional block of the SBF model. The analytical and numerical results are described in Sec. III. In the absence of noise, the SBF network produces accurate interval timing but violates time-scale invariance. At least one source of noise added to the SBF model produces both accurate and time-scale invariant interval timing. We conclude in Sec. IV with a discussion of our results. Detailed analytical derivations of time-scale invariance in the presence of noise are summarized in the Appendices.

II. THE STRIATAL BEAT FREQUENCY MODEL

Our paradigm for interval timing is inspired by the SBF model [4, 23], which assumes that durations are coded by the coincidental activation of a large number, N, of cortical (input) neurons projecting onto No spiny (output) neurons in the striatum that selectively respond to particular reinforced patterns [28–30] (Fig. 2A).

A. The cortical oscillators

Neurobiological justification

There is strong experimental evidence that oscillatory activity is a hallmark of neuronal activity in various brain regions, including the olfactory bulb [31–33], thalamus [34, 35], hippocampus [36, 37], and neocortex [38]. Cortical oscillators in the alpha band (8-13 Hz) were previously considered as pacemakers for temporal accumulation [39], as they reset upon the occurrence of to-be-remembered stimuli [40].

Numerical implementation

Neurons that produce stable membrane potential oscillations are mathematically described as limit cycle oscillators, i.e., they possess a closed and stable phase space trajectory [41]. Since the oscillations repeat identically, it is often convenient to map the high-dimensional state space of a periodic oscillator onto a phase variable that continuously covers the interval [0, 2π). Phase oscillator models have several advantages: they (1) provide analytical insights into the response of complex networks, (2) can describe any neural oscillator, since any neural oscillator can be reduced to a phase oscillator near a bifurcation point [42], and (3) allow numerical checks in a reasonable time. All neurons operate near a bifurcation, i.e., a point past which the neuron produces large membrane potential excursions, called action potentials [41].

In this SBF-sin implementation, the cortical neurons are represented by N (input) phase oscillators with intrinsic frequencies fi (i = 1, …, N) uniformly distributed over the interval (fmin, fmax), projecting onto No (output) spiny neurons [23] (Fig. 2B). A sinusoid is the simplest possible phase oscillator that mimics the periodic transitions between hyperpolarized and depolarized states observed in single-cell recordings. For analytical purposes, the membrane potential of the ith cortical neuron was approximated by vi(t) = a cos(2πfit), where a is the amplitude of the oscillations. We also implemented an SBF-ML network in which the input neurons are conductance-based Morris-Lecar (ML) model neurons with two state variables: the membrane potential and a slowly varying potassium conductance [43, 44] (see Appendix C for detailed model equations).
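The SBF-sin input layer can be sketched in a few lines. Two choices here are assumptions rather than details from the text: the frequencies sit on an evenly spaced grid with spacing df = (fmax − fmin)/N starting at fmin, and the 8-13 Hz alpha band is used only as an example range. The `phases` helper gives the equivalent phase-oscillator description of the same inputs.

```python
import math


def oscillator_bank(n, f_min, f_max):
    """Intrinsic frequencies f_i on an evenly spaced grid inside [f_min, f_max)."""
    df = (f_max - f_min) / n
    return [f_min + i * df for i in range(n)]


def membrane_potentials(freqs, t, a=1.0):
    """SBF-sin input layer: v_i(t) = a cos(2 pi f_i t) for every oscillator."""
    return [a * math.cos(2 * math.pi * f * t) for f in freqs]


def phases(freqs, t):
    """Phase-oscillator view of the same inputs: theta_i = 2 pi f_i t wrapped into [0, 2 pi)."""
    return [(2 * math.pi * f * t) % (2 * math.pi) for f in freqs]


if __name__ == "__main__":
    freqs = oscillator_bank(100, 8.0, 13.0)  # example alpha-band range
    v = membrane_potentials(freqs, t=1.7, a=2.0)
    print(len(freqs), min(freqs), max(freqs), max(abs(x) for x in v) <= 2.0)
```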

B. The memory of criterion time

Neurobiological justification

Among the potential areas involved in storing brain states related to salient features of stimuli in interval timing trials are the hippocampus (see [45] and references therein) and the striatum, which we mimic in our simplified neural circuitry (see Fig. 2A).

Numerical implementation

The memory of the criterion time T is modeled by the set of state parameters (or weights) wij that characterize the state of cortical oscillator i during FI trial j. In our implementation of the noiseless SBF-sin model, the weights wij ∝ vi(Tj), where Tj is the stored value of the criterion time T in FI trial j. The state wi(T) of cortical oscillator i at the reinforcement time is the normalized average over all memorized values Tj of the criterion time, wi(T) = (1/Trials) Σj vi(Tj)/a, where dividing by the amplitude a bounds the normalized weight, |wi(T)| ≤ 1 (see Fig. 2B). We found no difference between the responses of the SBF model obtained with the above weights and with positively-defined weights.
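A sketch of this memory step follows. It assumes (our reading of the normalization) that each stored state vi(Tj) is divided by the amplitude a before averaging over trials; the frequency grid, the trial counts, and the 10% Gaussian jitter in the example are hypothetical. With criterion noise, the per-trial states add with scattered phases, so the averaged weights shrink in magnitude while staying bounded by 1.

```python
import math
import random


def reference_weights(freqs, criterion_times):
    """w_i(T) = (1/Trials) * sum_j cos(2 pi f_i T_j).

    cos(2 pi f_i T_j) is v_i(T_j)/a, the oscillator state divided by its amplitude,
    so every weight is an average of numbers in [-1, 1] and |w_i(T)| <= 1.
    """
    n_trials = len(criterion_times)
    return [
        sum(math.cos(2 * math.pi * f * tj) for tj in criterion_times) / n_trials
        for f in freqs
    ]


if __name__ == "__main__":
    freqs = [8.0 + 0.05 * i for i in range(100)]  # hypothetical 8-13 Hz grid
    T = 10.0
    clean = reference_weights(freqs, [T] * 20)  # noise-free memory: every T_j = T
    rng = random.Random(3)
    noisy = reference_weights(freqs, [T * (1 + rng.gauss(0.0, 0.1)) for _ in range(50)])
    # Mean |w_i| for the noise-free and the noisy memory.
    print(sum(abs(w) for w in clean) / len(clean), sum(abs(w) for w in noisy) / len(noisy))
```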

C. Coincidence detection with spiny neurons

Neurobiological justification

Support for the involvement of the striato-frontal dopaminergic system in timing comes from imaging studies in humans [46–49], lesion studies in humans and rodents [50, 51], and drug studies in rodents [52, 53], all pointing towards the basal ganglia as having a central role in interval timing (see also [54] and references therein). Striatal firing patterns are peak-shaped around a trained criterion time, a pattern consistent with substantial striatal involvement in interval timing processes [55]. Lesions of the striatum result in deficiencies in both temporal-production and temporal-discrimination procedures [56]. There is also neurophysiological evidence that the striatum can engage reinforcement learning to perform pattern comparisons (see [57]). Another reason we ascribed coincidence detection to medium spiny neurons is their bistability, which permits selective filtering of incoming information [58, 59]. Each striatal spiny neuron integrates a very large number of afferents (between 10,000 and 30,000) [28, 29, 59], the vast majority of which (≈ 72%) are cortical [60, 61]. The comparison between a stored representation of an event, e.g., the set of states of the cortical oscillators at the reinforcement (criterion) time wi(T), and the current state of the same cortical oscillators during the ongoing test trial wi(t) is believed to be distributed over many areas of the brain [62] (Fig. 2A).

Numerical implementation

Based on neurobiological data, in our implementation of the striato-cortical interval timing network we have a ratio of 1000:1 between the input (cortical) oscillators and output (spiny) neurons in the BG (Fig. 2B). The output neurons, which mimic the spiny neurons in the BG, act as coincidence detectors: They fire when the pattern of activity (or the state of cortical oscillators) wi(t) at the current time t matches the memorized reference weights wi(T). Numerically, the coincidence detection was modeled using the product of the two sets of weights:

$$O(t) = \sum_{i=1}^{N} w_i(T)\, w_i(t) \qquad \text{(1)}$$

The purpose of the coincidence detection rule in Eq. (1) is to produce a strong output when the two vectors wi(T) and wi(t) coincide and a weaker response when they are dissimilar. Although there are many choices, such as sigmoidal functions (which are numerically expensive because they involve exponentials), we implemented the simplest rule that fulfills this requirement, i.e., the dot product of the vectors wi(T) and wi(t). Without loss of generality, and in agreement with experimental findings [61], we considered only one output neuron in Eq. (1) for the analytical derivations.
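The dot-product rule can be sketched directly. Hypothetical choices: one output neuron, N = 500 inputs on an evenly spaced 8-13 Hz grid, a noise-free memory of T, normalized current states wi(t) = cos(2πfit), and a 10 ms scan step over [0, 2T]. The scan returns the time at which the output is largest, which should coincide with the stored criterion time.

```python
import math


def coincidence_output(t, ref_weights, freqs):
    """Dot product O(t) = sum_i w_i(T) w_i(t), with current state w_i(t) = cos(2 pi f_i t)."""
    return sum(w * math.cos(2 * math.pi * f * t) for w, f in zip(ref_weights, freqs))


def peak_time(T, n=500, f_min=8.0, f_max=13.0, steps_per_s=100):
    """Scan O(t) on [0, 2T] for a noise-free memory of T; return the time of the maximum."""
    df = (f_max - f_min) / n
    freqs = [f_min + i * df for i in range(n)]
    ref = [math.cos(2 * math.pi * f * T) for f in freqs]  # noise-free reference weights
    n_steps = int(round(2 * T * steps_per_s))
    times = [k / steps_per_s for k in range(n_steps + 1)]
    return max(times, key=lambda t: coincidence_output(t, ref, freqs))


if __name__ == "__main__":
    print(peak_time(10.0))
```

The grid spacing matters in this sketch: evenly spaced frequencies make the output repeat with period 1/df (here 100 s), so the scan window is kept well inside that period.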

D. Noise in the SBF model

Neurobiological justification

Variability in the SBF model could be ascribed to channel gating fluctuations [63, 64], noisy synaptic transmission [65], and background network activity [66–68]. Single-cell recordings support the hypothesis that irregular firing in cortical interneurons is determined by the intrinsic stochastic properties (channel noise) of individual neurons [69, 70]. At the same time, fluctuations in the presynaptic currents that drive cortical spiking neurons contribute significantly to the large variability of the interspike intervals [71, 72]. For example, in spinal neurons, synaptic noise alone fully accounts for output variability [71]. Additional variability affects either the storage (writing) or the retrieval (reading) of the criterion time to or from memory [73, 74]. Another source of criterion time variability comes from the way animals are trained [75, 76]. In this paper, we were not concerned with the biophysical mechanisms that generate irregular firing of cortical oscillators, nor did we investigate how reading/writing errors of the criterion time occur. Rather, we investigated whether the assumed variability in the SBF model’s parameters can produce accurate and time-scale invariant interval timing.

Numerical implementation

Two sources of variability (noise) were considered in this SBF implementation: 1) Frequency variability, which was modeled by allowing the intrinsic frequencies fi to fluctuate according to a specified probability density function pdff, e.g., Gaussian, Poisson, etc. Computationally, the noise in the firing frequency of the respective neurons was introduced by varying either the frequency, fi (in the SBF-sin implementation), or the bias current Ibias required to bring the ML neuron to the excitability threshold (in the SBF-ML implementation). 2) Memory variability was modeled by allowing the criterion time T to be randomly distributed according to a probability density function pdfT.
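The two noise sources can be sketched as samplers. Assumptions that are ours: both perturbations are multiplicative (relative), only Gaussian and uniform pdfs are shown (the Poisson case is left out), and the frequency jitter is the SBF-sin version; the ML bias-current variant is not modeled.

```python
import math
import random


def jitter_frequencies(freqs, rel_sd, rng):
    """Source 1, frequency variability: f_i -> f_i (1 + G(0, rel_sd))."""
    return [f * (1.0 + rng.gauss(0.0, rel_sd)) for f in freqs]


def sample_criterion_times(T, n_trials, rel_sd, rng, pdf="gaussian"):
    """Source 2, memory variability: T_j = T (1 + x_j), x_j with zero mean and SD rel_sd."""
    if pdf == "gaussian":
        xs = [rng.gauss(0.0, rel_sd) for _ in range(n_trials)]
    elif pdf == "uniform":
        half = math.sqrt(3.0) * rel_sd  # uniform on [-half, half] has SD rel_sd
        xs = [rng.uniform(-half, half) for _ in range(n_trials)]
    else:
        raise ValueError(f"unsupported pdf: {pdf}")
    return [T * (1.0 + x) for x in xs]


if __name__ == "__main__":
    rng = random.Random(7)
    print(sample_criterion_times(30.0, 5, 0.1, rng, pdf="uniform"))
```

Matching the SD rather than the range of the two pdfs makes runs with different noise shapes directly comparable.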

III. RESULTS

A. No time-scale invariance in a noiseless SBF model

In the absence of noise (variability) in the SBF-sin model, the output given by Eq. (1) for one spiny (output) neuron (No = 1) becomes:

| (2) |

The first term produces a sharp output when the current time t approaches the criterion time T; we dropped the second, symmetrical term, which peaks at t = −T. The cos(Nπ(fmax + fmin)(t − T)) factor is a very fast oscillating function that fills out the envelope of the output function, i.e., sin(Nπ(fmax − fmin)(t − T))/(2 sin(π(fmax − fmin)(t − T))). Based on Eq. (2), we found that O(t = T) = N, and the width σo of the output function in the absence of noise can be determined from O(T + σo/2) = O(t = T)/2, i.e.,

| (3) |

Equation (3), which determines the width of the output function in the absence of noise, contains only the number of cortical oscillators N and the limits of the frequency range, fmin and fmax. Therefore, σo is independent of the criterion time, which violates time-scale invariance.
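The claim that σo does not depend on T can be checked numerically. The sketch below assumes N = 1000 inputs on an evenly spaced 8-13 Hz grid and a noise-free memory, and it measures the full width at half maximum of the output envelope, taken (our choice) as the modulus of the complex sum Σi wi(T) exp(i2πfit), for two criterion times.

```python
import math


def envelope(t, ref_weights, df):
    """Modulus of sum_k w_k exp(i 2 pi f_k t) for f_k = f_min + k df.

    exp(i 2 pi f_min t) has unit modulus and is dropped; the remaining polynomial in
    z = exp(i 2 pi df t) is evaluated with Horner's rule.
    """
    z = complex(math.cos(2 * math.pi * df * t), math.sin(2 * math.pi * df * t))
    acc = 0j
    for w in reversed(ref_weights):
        acc = acc * z + w
    return abs(acc)


def noise_free_width(T, n=1000, f_min=8.0, f_max=13.0, step=0.001):
    """Full width sigma_o at half maximum of the output envelope for a noise-free memory of T."""
    df = (f_max - f_min) / n
    ref = [math.cos(2 * math.pi * (f_min + k * df) * T) for k in range(n)]
    half = envelope(T, ref, df) / 2
    tau = 0.0
    while envelope(T + tau, ref, df) > half:  # walk right from the peak at t = T
        tau += step
    return 2 * tau


if __name__ == "__main__":
    for T in (5.0, 15.0):
        print(T, round(noise_free_width(T), 3))
```

For these parameters both widths should come out close to 0.24 s, a value set by the frequency range rather than by T.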

To numerically verify the above predictions, the envelope of the output function of a noise-free SBF-sin model (Fig. 3A) was fitted with a Gaussian whose mean and standard deviation were compared against the theoretically predicted values (Fig. 3B). Although the standard deviations obtained from fitting the envelope (Fig. 3A) were not strictly constant (Fig. 3B, filled rectangles), they were close to the theoretically predicted values (Fig. 3B, filled circles) within fitting errors.

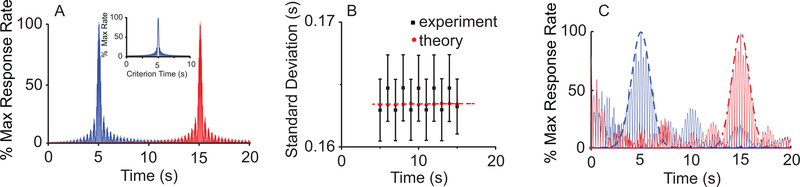

FIG. 3. (Color online) A noise-free SBF model does not produce time-scale invariance.

(A) Analytically predicted (inset) and numerically generated output of a noise-free SBF-sin model with N = 100 for T = 5s and T = 15s. (B) As predicted analytically (theoretical), the width of the output function of a noise-free SBF-sin model is independent of the criterion time. (C) A noise-free SBF-ML model does not produce time-scale invariance either. The width of the Gaussian envelopes (dashed line for T = 5s and dash-dotted line for T = 15s) remains constant.

The above result regarding the absence of time-scale invariance in the noise-free SBF-sin model extends to any type of input neuron. Indeed, according to Fourier’s theorem, any periodic train of action potentials can be decomposed into discrete sine-wave components. It follows that, irrespective of the type of input neuron, a noise-free SBF model cannot produce time-scale invariant outputs. We verified this prediction by replacing the sine-wave oscillator inputs with biophysically realistic noise-free ML neurons. Numerical simulations confirmed that the envelope of the output function can be reasonably fitted by a Gaussian, but the width of the Gaussian output does not increase with the timed interval (see Fig. 3C), thus violating time-scale invariance (the scalar property).

B. Time-scale invariance emerges from noise in the SBF model

Many sources of noise (variability) may affect the functioning of an interval timing network, such as small fluctuations in the intrinsic frequencies of the inputs and errors in encoding and retrieving the weights wi(T) by the output neuron(s) [23, 24, 77, 78]. Here we show analytically that one noise source is sufficient to produce time-scale invariance [23]. Without loss of generality, in the following we examine the role of variability in the encoding and retrieval of the criterion time by the output neuron(s). The cumulative effect of all noise sources (trial-to-trial variability, neuromodulatory inputs, etc.) on the memorized weights wi(T) was modeled by the stochastic variable Tj, distributed around T according to a given pdfT. For only one spiny (output) neuron (No = 1), the output function given by Eq. (1) becomes:

| (4) |

which has the first term centered at t = +Tj and the second, symmetrical one at the unphysical value t = −Tj. By the central limit theorem, the output function given by Eq. (4), which is a sum over a (very) large number, Trials, of terms that depend on the stochastic variables Tj, is always Gaussian regardless of the pdfT of the criterion time.

To examine time-scale invariance, we again estimate the relationship between the width of the output function σo and the criterion time T. Briefly, using trigonometric identities, we rewrote the output function in terms of the relative stochastic displacement x of a particular realization Tj = T(1 + x) from T, through the new variable θ(x) = π(t − T − Tx)df (see Appendix A). The function θ(x) is a phase difference between the current (running) time t and the memorized criterion time Tj.

To investigate time-scale invariance of the results given in Eq. (4), the physically realizable term centered around t = +Tj was transformed to:

| (5) |

where θ(x) = π(t − T − Tx)df and df = (fmax − fmin)/N. The pdf of the new stochastic variable z = h(x) is related to the pdf of the criterion time displacement, pX(x), through pZ(z) = pX(h−1(z))|dx/dz| (see [79]). For a very large number of cortical (input) oscillators, and irrespective of the noise distribution pdfT of the criterion time, the expected value of the output function given by Eq. (5) becomes:

| (6) |

where the range (xmin, xmax) depends on the specific pdfX(x) considered. To estimate the expression in Eq. (6), we used the mean value theorem for integrals, which states that there always exists a number γ with xmin < γ < xmax such that the integral in Eq. (6) is approximated by:

| (7) |

To find the width of the time-dependent expected value of the output function in Eq. (7), we require that ⟨O(T + σo/2)⟩ = ⟨O(T)⟩/2, i.e., the expected value at the time t = T + σo/2 that corresponds to the half-width of the output function must be half of the expected value at the criterion time t = T. By solving Eq. (7) (see Appendix A), we found that σo/(2Tγ) = const., i.e., σo ∝ T. Therefore, in the presence of at least one source of noise (variability in the encoding/decoding of the criterion time T), the SBF-sin model has: (1) a Gaussian output function centered at T (accurate interval timing) that (2) obeys the scalar property.
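The scaling argument can be probed with a small simulation. The sketch makes several assumptions that are ours, not the text’s: N = 1000 inputs on an evenly spaced 8-13 Hz grid, 200 memorized trials, criterion noise uniform on ±20% (a deliberately non-Gaussian pdfT), and a width estimated as the standard deviation of t weighted by the squared output envelope, averaged over four runs, in place of a Gaussian fit. If σo ∝ T holds for this pdf, σo/T should be roughly the same for different T and close to the noise SD, 0.2/√3 ≈ 0.115.

```python
import math
import random


def envelope(t, ref_weights, df):
    """Modulus of sum_k w_k exp(i 2 pi f_k t) for f_k = f_min + k df.

    exp(i 2 pi f_min t) has unit modulus and is dropped; the remaining polynomial in
    z = exp(i 2 pi df t) is evaluated with Horner's rule.
    """
    z = complex(math.cos(2 * math.pi * df * t), math.sin(2 * math.pi * df * t))
    acc = 0j
    for w in reversed(ref_weights):
        acc = acc * z + w
    return abs(acc)


def uniform_x(rng, half_range=0.2):
    """Hypothetical relative criterion noise: x uniform on [-half_range, half_range]."""
    return rng.uniform(-half_range, half_range)


def noisy_width(T, sample_x, n=1000, f_min=8.0, f_max=13.0, trials=200,
                runs=4, half_window=0.4, step=0.05, seed=0):
    """Width of the output around T when the memory holds T_j = T (1 + x_j).

    The width is the standard deviation of t weighted by the squared envelope on
    [T - half_window*T, T + half_window*T], averaged over independent runs; this
    moment estimate stands in for the Gaussian fit used in the text.
    """
    df = (f_max - f_min) / n
    freqs = [f_min + k * df for k in range(n)]
    n_pts = int(round(2 * half_window * T / step)) + 1
    ts = [T - half_window * T + k * step for k in range(n_pts)]
    rng = random.Random(seed)
    widths = []
    for _ in range(runs):
        tj = [T * (1.0 + sample_x(rng)) for _ in range(trials)]
        ref = [sum(math.cos(2 * math.pi * f * x) for x in tj) / trials for f in freqs]
        power = [envelope(t, ref, df) ** 2 for t in ts]
        total = sum(power)
        mean = sum(p * t for p, t in zip(power, ts)) / total
        var = sum(p * (t - mean) ** 2 for p, t in zip(power, ts)) / total
        widths.append(math.sqrt(var))
    return sum(widths) / runs
```

For example, `noisy_width(10.0, uniform_x) / 10.0` and `noisy_width(40.0, uniform_x) / 40.0` should both be close to 0.12; the small excess over 0.115 comes from the finite width of the noise-free kernel.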

1. Particular case: Time-scale invariance in the presence of Gaussian noise

Although we already showed that, for the SBF-sin model and an arbitrary pdfT of the criterion time noise, the output function is always Gaussian and produces accurate interval timing that also obeys the scalar property, it is illuminating to grasp the meaning of the theoretical coefficients in our general result by investigating a biologically relevant particular case. If the criterion time is affected by Gaussian noise with zero mean and standard deviation σT (expressed as a fraction of T), one can show that, in the limit of a large pool of inputs, the standard deviation of the output function becomes:

$$\sigma_o = \sigma_T\, T \qquad \text{(8)}$$

Briefly (see Appendix B for details), in this particular case, the reference weights are normalized sums of the stochastic variable y = cos(2πfiT(1 + G(0, σT))) ∈ [−1, 1], where G(0, σT) is a Gaussian stochastic variable with zero mean and σT standard deviation. By replacing the stochastic vector of reference weights wi(T) in Eq. (1) with its expected value the output function is given by:

$$O(t) \propto e^{-(t-T)^2/(2\sigma_T^2 T^2)} + e^{-(t+T)^2/(2\sigma_T^2 T^2)} \qquad \text{(9)}$$

which has two Gaussian terms centered at t = ±T with the standard deviation given by Eq. (8). Equation (9) shows that the SBF-sin model produces accurate interval timing with a Gaussian output whose width is given by the scaling law in Eq. (8), i.e., it obeys the time-scale invariance property.

We used the SBF-sin implementation to numerically verify the prediction of Eq. (8) over multiple trials (runs) of this stochastic process and for different values of T. The output functions (continuous lines in Fig. 4A) for T = 30s and T = 90s are reasonably fitted by Gaussian curves (dashed lines in Fig. 4A). Our numerical results show a linear relationship between the σo of the Gaussian fit of the output and T (Fig. 4B). The slope of this linear relationship matched the theoretical prediction of Eq. (8): for example, for σT = 10%, the average slope was 11.3% ± 4.5%, with a coefficient of determination R2 = 0.93 (p < 10−4).
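A sketch of this check, with hypothetical parameters throughout: Gaussian criterion noise with σT = 10%, N = 1000 inputs on an evenly spaced 8-13 Hz grid, 200 memorized trials, and four runs per criterion. The width is estimated from the second moment of the squared output envelope instead of a Gaussian fit, and a least-squares line through (T, σo) gives the slope that the text compares with σT.

```python
import math
import random


def envelope(t, ref_weights, df):
    """Modulus of sum_k w_k exp(i 2 pi f_k t) for f_k = f_min + k df (Horner's rule in z)."""
    z = complex(math.cos(2 * math.pi * df * t), math.sin(2 * math.pi * df * t))
    acc = 0j
    for w in reversed(ref_weights):
        acc = acc * z + w
    return abs(acc)


def gaussian_x(rng, sigma_T=0.1):
    """Hypothetical relative criterion noise x ~ G(0, sigma_T), so T_j = T (1 + x)."""
    return rng.gauss(0.0, sigma_T)


def noisy_width(T, sample_x, n=1000, f_min=8.0, f_max=13.0, trials=200,
                runs=4, half_window=0.4, step=0.05, seed=0):
    """Power-weighted SD of t around T, averaged over runs (stand-in for a Gaussian fit)."""
    df = (f_max - f_min) / n
    freqs = [f_min + k * df for k in range(n)]
    n_pts = int(round(2 * half_window * T / step)) + 1
    ts = [T - half_window * T + k * step for k in range(n_pts)]
    rng = random.Random(seed)
    widths = []
    for _ in range(runs):
        tj = [T * (1.0 + sample_x(rng)) for _ in range(trials)]
        ref = [sum(math.cos(2 * math.pi * f * x) for x in tj) / trials for f in freqs]
        power = [envelope(t, ref, df) ** 2 for t in ts]
        total = sum(power)
        mean = sum(p * t for p, t in zip(power, ts)) / total
        var = sum(p * (t - mean) ** 2 for p, t in zip(power, ts)) / total
        widths.append(math.sqrt(var))
    return sum(widths) / runs


def fit_slope(xs, ys):
    """Ordinary least-squares slope of ys against xs."""
    mx = sum(xs) / len(xs)
    my = sum(ys) / len(ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    return sxy / sxx
```

Calling `fit_slope(Ts, [noisy_width(T, gaussian_x) for T in Ts])` with `Ts = [10.0, 20.0, 30.0, 40.0]` should give a slope near σT = 0.1.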

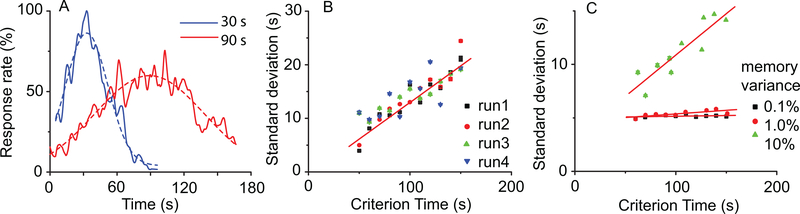

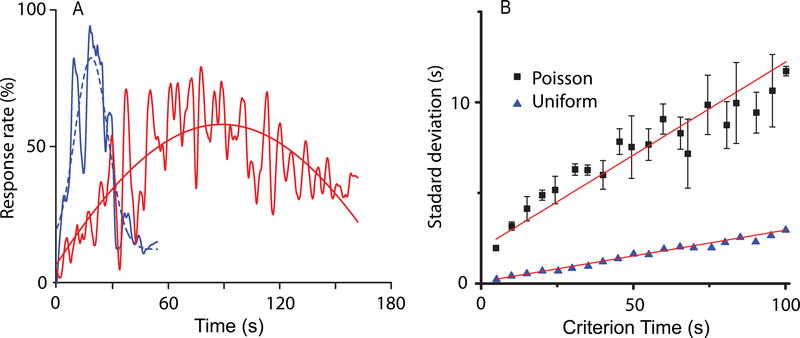

FIG. 4. (Color online) Time-scale invariance emerges from criterion time noise in the SBF model.

(A) Time-scale invariance emerges spontaneously in a noisy SBF-sin model; here the two criteria are T = 30s and T = 90s. The output functions (continuous lines) were fitted with Gaussian curves (dashed lines) to estimate the position of the peak and the width of the output function. (B) In an SBF-sin model, the standard deviation increases linearly with the criterion time in all four trials, shown with different symbols. (C) In an SBF-ML implementation, low levels of Gaussian noise (solid rectangles represent σT = 0.1% and circles σT = 1%) produce an almost constant standard deviation of the output function, similar to the noise-free case. At high enough levels of noise (solid triangles represent σT = 10%), the SBF-ML model with criterion time variance produces a standard deviation σo that increases linearly with the criterion time T, which is the hallmark of time-scale invariance.

We noticed that the SBF-ML implementation is less sensitive to the level of criterion time noise. For example, a noise level of 0.1%, which would lead to a linear dependence of the standard deviation on the criterion time in the SBF-sin implementation, produced no significant change in the standard deviation in the SBF-ML implementation (Fig. 4C). The scalar property does hold (Fig. 4C), but it emerges only at levels of (memory) variance that were not accessible to the SBF-sin implementation. The slope of the standard deviation was insignificant, 0.001 ± 0.001 (R2 = 0.342), for 0.1% memory variance, 0.007 ± 0.002 (R2 = 0.789) for 1% variance, and 0.07 ± 0.01 (R2 = 0.898) for 10% memory variance. The fact that the slope of the standard deviation versus criterion time increases tenfold (from 0.007 to 0.07) when the memory variance increases tenfold (from 1% to 10%) suggests that σo ∝ σTT for SBF-ML, as we predicted and already checked for the SBF-sin model.

We also checked numerically that time-scale invariance is preserved when other sources of noise, e.g., small fluctuations in fi, are added besides the noise in encoding the criterion time, pdfT (Fig. 5A). Not only was time-scale invariance preserved, but the output function also became slightly skewed (longer tail), as often described in the literature (see Fig. 1), suggesting that other properties of the output function may also derive from noise. These results further suggest that the more sources of noise are considered, the more stable the scalar property is, thus explaining its ubiquity [1, 4, 12]. Only when all the noise sources are eliminated does the output cease to be time-scale invariant, as shown by Eq. (2).

FIG. 5. (Color online) Time-scale invariance is robust to noise manipulation in the SBF model.

(A) In the presence of both criterion time and frequency variance, the SBF-ML model produces accurate and time-scale invariant output. At the same time, multiple sources of noise produce a long tail in the output function, similar to experimental findings. (B) The scalar property is also preserved regardless of the type of criterion time variability (uniform noise: solid triangles; Poisson noise: solid squares).

IV. DISCUSSION

Computational models of interval timing vary widely with respect to the hypothesized mechanisms and the assumptions by which temporal processing, time-scale invariance, or drug effects are explained. The putative mechanisms of timing rely on pacemaker/accumulator processes [6, 7, 80, 81], sequences of behaviors [18], pure sine oscillators [8, 23, 82–84], memory traces [16, 85–89], or cell and network-level models [21, 90]. For example, neurometric functions from both single neurons and ensembles of neurons successfully paralleled the psychometric functions for to-be-timed intervals shorter than one second [21]. Reutimann et al. (2004) also considered interacting populations that are subject to neuronal adaptation and synaptic plasticity, based on the general principle of firing rate modulation in single cells. Balanced LTP and LTD mechanisms are thought to modulate the firing rate of neural populations, with the net effect that adaptation leads to a linear decay of the firing rate over time. Therefore, the linear relationship between time and the number of clock ticks of the pacemaker-accumulator model in SET [6] was translated into a linearly decaying firing rate model that maps time onto a variable firing rate.

By and large, to address time-scale invariance, current behavioral theories assume convenient computations, rules, or coding schemes. Scalar timing is explained as deriving either from computation of ratios of durations [6, 7, 91], from adaptation of the speed at which perceived time flows [18], or from processes and distributions that conveniently scale up in time [16, 82, 85, 87, 88]. Some neurobiological models share computational assumptions with behavioral models and continue to address time-scale invariance by specific computations or embedded linear relationships [92]. Some assume that timing involves neural integrators capable of linearly ramping up their firing rate in time [90], while others assume LTP/LTD processes whose balance leads to a linear decay of the firing rate in time [93]. It is unclear whether such models can account for time-scale invariance in a large range of behavioral or neurophysiological manipulations.

Neurons are often viewed as communication channels that respond even to precisely delivered stimulus sequences in a random manner consistent with Gaussian noise [94]. Biological noise has been shown to play important functional roles, e.g., enhancing signal detection through stochastic resonance [95, 96] and stabilizing synchrony [97, 98]. Firing rate variability in neural oscillators also results from ongoing cortical activity (see [98, 99] and references therein), which may appear noisy simply because it is not synchronized with obvious stimuli. Our theoretical predictions based on an SBF model show that time-scale invariance emerges as a property of a (very) large and noisy network. Furthermore, we showed that the output function of an SBF model always resembles the Gaussian shape found in behavioral experiments, regardless of the type of noise affecting the timing network. Our results regarding the effect of noise on interval timing support and extend the speculation [23] that an SBF model requires at least one source of variance (noise) to address time-scale invariance. Rather than being a signature of higher-order cognitive processes or specific neural computations related to timing, time-scale invariance naturally emerges in a massively connected brain from the intrinsic noise of neurons and circuits [4, 21]. This provides the simplest explanation for the ubiquity of scale invariance of interval timing in a large range of behavioral, lesion, and pharmacological manipulations.

ACKNOWLEDGMENTS

This work was supported by grants from the National Science Foundation (IOS CAREER award 1054914 to S.A.O.) and the National Institutes of Health (National Institute of Mental Health grants MH065561 and MH073057 to C.V.B.).

Appendix A: General case: Time-scale invariance from noise with arbitrary distribution

To estimate the width of the output function using Eq. (7), we note that at t = T the function θ becomes θ(γ) = −πTγdf, which leads to:

| (A1) |

where we used the notation a = πTγ(fmax − fmin), b = πTγ(fmax + fmin). Similarly, the estimated value of the output function at t = T + σo/2, i.e., the half width of the output function, is

| (A2) |

where x = σo/(2Tγ) is proportional to the coefficient of variation of the criterion time. By solving , we find that:

| (A3) |

Using a Taylor series expansion of the right-hand side of Eq. (A3), we obtain:

| (A4) |

where the constant , and O(x²) collects the terms of second and higher order. From Eq. (A4), the first-order approximation of the expected width of the output function is:

| (A5) |

which shows that, to first order, x = σo/(2Tγ) is a constant, i.e., σo ∝ T. Hence the scalar property holds regardless of the pdf of the criterion-time noise, pdfT.
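The first-order result σo ∝ T can be checked numerically on a toy version of the SBF circuit. The sketch below is not the authors' simulation code: it assumes that oscillator i contributes cos(2πfi t) to the output and that its reference weight is the trial average of cos(2πfi T(1 + ε)), where ε is the relative error in the stored criterion time. The frequency band, the number of trials, and the symmetric triangular noise pdf (chosen only because it is not Gaussian) are hypothetical, as are the helper names sbf_output, fwhm, triangular_inv_cdf, and no_noise. Noise values are taken as evenly spaced quantiles of the pdf, a deterministic stand-in for averaging over many trials.

```python
import numpy as np

# Hypothetical parameters (not taken from the paper).
FREQS = np.linspace(0.005, 5.0, 1000)  # N = 1000 oscillator frequencies, arbitrary units
DT = 0.02                              # time step of the output grid
N_TRIALS = 400                         # trials averaged into the reference weights


def sbf_output(T, inv_cdf, freqs=FREQS, n_trials=N_TRIALS):
    """Toy SBF output on t in [0, 2T) when the stored criterion time is T * (1 + eps).

    eps is taken as evenly spaced quantiles of the pdf whose inverse CDF is inv_cdf.
    """
    u = (np.arange(n_trials) + 0.5) / n_trials
    t_stored = T * (1.0 + inv_cdf(u))
    # Reference weight of each oscillator: cosine of its phase at the stored time, trial-averaged.
    w = np.cos(2 * np.pi * np.outer(freqs, t_stored)).mean(axis=1)
    t = np.arange(0.0, 2 * T, DT)
    # Coincidence detection: project the current oscillator states onto the weights.
    out = np.cos(2 * np.pi * np.outer(t, freqs)) @ w
    return t, out


def fwhm(t, y):
    """Full width at half maximum of y around its global peak (grid resolution)."""
    k = int(np.argmax(y))
    half = y[k] / 2.0
    lo, hi = k, k
    while lo > 0 and y[lo] >= half:
        lo -= 1
    while hi < len(y) - 1 and y[hi] >= half:
        hi += 1
    return t[hi] - t[lo]


def triangular_inv_cdf(u, a=0.3):
    """Inverse CDF of a symmetric triangular pdf on [-a, a] (deliberately non-Gaussian)."""
    return np.where(u < 0.5, -a + a * np.sqrt(2 * u), a - a * np.sqrt(2 * (1 - u)))


def no_noise(u):
    """Noiseless case: the criterion time is stored exactly on every trial."""
    return np.zeros_like(u)


if __name__ == "__main__":
    for T in (10.0, 20.0):
        w_noisy = fwhm(*sbf_output(T, triangular_inv_cdf))
        w_clean = fwhm(*sbf_output(T, no_noise))
        print(f"T={T:5.1f}  noisy width/T={w_noisy / T:.3f}  noiseless width={w_clean:.3f}")
```

With noise, the width divided by T should stay close to the same value for both criterion times (near 0.3 for the assumed triangular pdf). Without noise, the width is set by the highest oscillator frequency and does not grow with T, in line with the argument that at least one source of variance is needed for scale invariance.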

Appendix B: Particular case: Time-scale invariance from Gaussian noise

When the criterion time is affected by Gaussian noise, the reference weights wi(T) become:

| (B1) |

The stochastic variable y = cos(2πfiT(1 + G(0, σT))) ∈ [−1, 1] that appears under the summation in Eq. (B1) has the pdf [100]:

The mean of y, E[y] = ∫ y pdfy(y) dy with the integral taken over [−1, 1], can be rewritten as:

| (B2) |
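For a zero-mean Gaussian error with standard deviation σT, the mean of y follows from a standard identity: E[cos(θ(1 + G))] = cos θ · exp(−θ²σT²/2) with θ = 2πfiT, i.e., E[y] = cos(2πfiT) exp(−2π²fi²T²σT²). Because the original Eq. (B2) is not reproduced here, we only assume that it expresses this result. The short check below compares a Monte Carlo estimate with the closed form; the function names, random seed, and sample size are hypothetical.

```python
import numpy as np


def mc_mean_cos(f, T, sigma, n=200_000, seed=0):
    """Monte Carlo mean of y = cos(2*pi*f*T*(1 + G)) with G ~ Normal(0, sigma)."""
    rng = np.random.default_rng(seed)
    g = rng.normal(0.0, sigma, size=n)
    return np.cos(2 * np.pi * f * T * (1.0 + g)).mean()


def closed_form_mean_cos(f, T, sigma):
    """E[cos(theta * (1 + G))] = cos(theta) * exp(-theta**2 * sigma**2 / 2), theta = 2*pi*f*T."""
    theta = 2 * np.pi * f * T
    return np.cos(theta) * np.exp(-0.5 * (theta * sigma) ** 2)


if __name__ == "__main__":
    for f in (0.05, 0.1, 0.2, 0.4):
        print(f, mc_mean_cos(f, 10.0, 0.1), closed_form_mean_cos(f, 10.0, 0.1))
```

The exponential factor damps the weights of high-frequency oscillators, and its cutoff frequency scales as 1/(TσT), so the effective bandwidth of the weights shrinks as the criterion time grows.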

By replacing the stochastic vector of reference weights wi(T) in Eq. (1) with its expected value from Eq. (B2), one obtains:

| (B3) |

which, for a very broad spectrum of frequencies (theoretically 0 < f < ∞) and a very large pool of neural oscillators (theoretically N → ∞), becomes:

| (B4) |

which is equivalent to Eq. (8).
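To see why the continuum limit yields a Gaussian whose width grows with T, the frequency integral can be carried out explicitly. The derivation below assumes that Eq. (1) has the form Out(t) = Σi wi(T) cos(2πfi t), that the frequencies are spread uniformly with density ρ (oscillators per unit frequency), and that each weight is replaced by its expected value cos(2πfiT) exp(−2π²fi²T²σT²). The symbols Out and ρ are introduced here for illustration and may differ from the notation of Eqs. (1) and (8). The last step uses the standard integral ∫₀^∞ cos(βf) exp(−αf²) df = ½√(π/α) exp(−β²/(4α)).

```latex
\begin{aligned}
\mathrm{Out}(t) &\approx \rho \int_0^\infty \cos(2\pi f t)\,\cos(2\pi f T)\,e^{-\alpha f^2}\,df,
  \qquad \alpha = 2\pi^2 T^2 \sigma_T^2, \\
&= \frac{\rho}{2} \int_0^\infty \Big[\cos\big(2\pi f (t-T)\big) + \cos\big(2\pi f (t+T)\big)\Big]\, e^{-\alpha f^2}\,df \\
&= \frac{\rho}{4}\sqrt{\frac{\pi}{\alpha}}\Big[e^{-\pi^2 (t-T)^2/\alpha} + e^{-\pi^2 (t+T)^2/\alpha}\Big]
 = \frac{\rho}{4\sqrt{2\pi}\,\sigma_T T}\Big[e^{-\frac{(t-T)^2}{2\sigma_T^2 T^2}} + e^{-\frac{(t+T)^2}{2\sigma_T^2 T^2}}\Big].
\end{aligned}
```

For t > 0 and σT ≪ 1 the second exponential is negligible, leaving a Gaussian centered at t = T with standard deviation σT T, so under these assumptions the width of the output function scales linearly with the criterion time.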

Appendix C: Morris-Lecar model equations

We used a dimensionless Morris-Lecar model [43, 101] described by the following equations:

| (C1) |

where x1 is the membrane potential, x2 is the slow potassium activation, and all ionic currents have the form Ix = gx(x1 − Ex), where gx is the conductance of the voltage-gated channel x and Ex is the corresponding reversal potential. In particular, the calcium current is ICa = gCa m∞(x1)(x1 − ECa), the potassium current is IK = gK x2(x1 − EK), and the leak current is IL = gL(x1 − EL). The reversal potentials for the calcium, potassium, and leak currents are ECa = 1.0, EK = −0.7, and EL = −0.5, respectively. The steady-state activation function for calcium channels is m∞(x1) = (1 + tanh((x1 − V1)/V2))/2, where V1 = −0.01 and V2 = 0.15; the steady-state activation function for potassium channels is w∞(x1) = (1 + tanh((x1 − V3)/V4))/2, where V3 = 0.1 and V4 = 0.145; and the inverse time constant of potassium channels is λ0(x1) = cosh((x1 − V3)/(2V4)). The potassium and leak conductances are gK = 2.0 and gL = 0.5, respectively, and ξ = 1/3.

The two control parameters that can switch the ML model from a Class I excitable cell [102] to a Class II excitable cell are the calcium conductance gCa and the bias current I0. For example, for gCa = 1.0 and 0.083 < I0 < 0.242, Eqs. (C1) describe what A. L. Hodgkin classified as a Class I excitable cell, whereas for gCa = 0.5 and 0.138 < I0 < 0.303 they describe a Class II excitable cell. In our simulations, we used a Class II ML model neuron whose membrane potential waveform is very close to a sine wave.
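The model is straightforward to integrate numerically. Because Eq. (C1) is not reproduced here, the sketch below assumes the standard dimensionless Morris-Lecar form consistent with the currents described above, dx1/dt = I0 − ICa − IK − IL and dx2/dt = ξλ0(x1)(w∞(x1) − x2), with all parameter values taken from the text. The fixed-step RK4 integrator, the initial condition, the run length, and the function names are illustrative choices, not the authors' simulation setup. With gCa = 1.0, a bias current inside the quoted Class I range (I0 = 0.15) should produce sustained oscillations, whereas I0 = 0 should leave the cell at rest.

```python
import math

# Parameter values quoted in the text for the dimensionless Morris-Lecar model.
E_CA, E_K, E_L = 1.0, -0.7, -0.5
V1, V2, V3, V4 = -0.01, 0.15, 0.1, 0.145
G_K, G_L = 2.0, 0.5
XI = 1.0 / 3.0


def m_inf(x1):
    return 0.5 * (1.0 + math.tanh((x1 - V1) / V2))


def w_inf(x1):
    return 0.5 * (1.0 + math.tanh((x1 - V3) / V4))


def lam0(x1):
    return math.cosh((x1 - V3) / (2.0 * V4))


def rhs(x1, x2, g_ca, i0):
    """Assumed form of Eq. (C1): returns (dx1/dt, dx2/dt)."""
    i_ca = g_ca * m_inf(x1) * (x1 - E_CA)
    i_k = G_K * x2 * (x1 - E_K)
    i_l = G_L * (x1 - E_L)
    return i0 - i_ca - i_k - i_l, XI * lam0(x1) * (w_inf(x1) - x2)


def simulate(g_ca, i0, t_end=800.0, dt=0.05, x1=-0.5, x2=0.0):
    """Fixed-step RK4 integration; returns the membrane-potential trace x1(t)."""
    trace = [x1]
    for _ in range(int(round(t_end / dt))):
        k1 = rhs(x1, x2, g_ca, i0)
        k2 = rhs(x1 + 0.5 * dt * k1[0], x2 + 0.5 * dt * k1[1], g_ca, i0)
        k3 = rhs(x1 + 0.5 * dt * k2[0], x2 + 0.5 * dt * k2[1], g_ca, i0)
        k4 = rhs(x1 + dt * k3[0], x2 + dt * k3[1], g_ca, i0)
        x1 += dt / 6.0 * (k1[0] + 2 * k2[0] + 2 * k3[0] + k4[0])
        x2 += dt / 6.0 * (k1[1] + 2 * k2[1] + 2 * k3[1] + k4[1])
        trace.append(x1)
    return trace


if __name__ == "__main__":
    for i0 in (0.0, 0.15):
        late = simulate(g_ca=1.0, i0=i0)[-8000:]  # keep the second half, discard the transient
        print(f"I0={i0:.2f}: x1 range over second half = {max(late) - min(late):.3f}")
```

A fixed-step integrator keeps the sketch dependency-free; an adaptive solver such as scipy.integrate.solve_ivp would be a natural replacement for longer or stiffer runs.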

Contributor Information

Sorinel A. Oprisan, Department of Physics and Astronomy, College of Charleston, 66 George Street, Charleston, SC 29624, U.S.A.

Catalin V. Buhusi, Department of Psychology, Utah State University, Logan, UT

References

- [1] Gallistel C, The organization of learning (MIT Press, Cambridge, MA, 1990).

- [2] Catania AC, "Reinforcement schedules and psychophysical judgments: A study of some temporal properties of behavior," in The theory of reinforcement schedules, edited by Schoenfeld W (Appleton-Century-Crofts, New York, 1970) pp. 1–42.

- [3] Roberts S, Journal of Experimental Psychology: Animal Behavior Processes 7, 242 (1981).

- [4] Buhusi C and Meck W, Nature Reviews Neuroscience 6, 755 (2005).

- [5] Mauk M and Buonomano D, Annual Review of Neuroscience 27, 307 (2004).

- [6] Gibbon J, Psychological Review 84, 279 (1977).

- [7] Gibbon J, Church R, and Meck W, Annals of the New York Academy of Sciences 423, 52 (1984).

- [8] Matell M, King G, and Meck W, Behavioral Neuroscience 118, 150 (2004).

- [9] Buhusi C and Aziz D, Behavioral Neuroscience 123, 1102 (2009).

- [10] Rakitin B, Gibbon J, Penney T, Malapani C, Hinton S, and Meck W, Journal of Experimental Psychology: Animal Behavior Processes 24, 15 (1998).

- [11] Meck W, Church R, Wenk G, and Olton D, Journal of Neuroscience 7, 3505 (1987).

- [12] Buhusi C and Meck W, "Timing behavior," in Encyclopedia of Psychopharmacology, Vol. 2, edited by Stolerman I (Springer, Berlin, 2010) pp. 1319–1323.

- [13] Buhusi C and Meck W, Behavioral Neuroscience 116, 291 (2002).

- [14] Meck W and Malapani C, Cognitive Brain Research 21, 133 (2004).

- [15] Church R, Meck W, and Gibbon J, Journal of Experimental Psychology: Animal Behavior Processes 20, 135 (1994).

- [16] Staddon J and Higa J, Journal of the Experimental Analysis of Behavior 71, 215 (1999).

- [17] Killeen PR, "Behavior's time," in The psychology of learning and motivation, Vol. 27, edited by Bower G (Academic Press, New York, 1991) pp. 294–334.

- [18] Killeen P and Fetterman J, Psychological Review 95, 274 (1988).

- [19] Sendiña-Nadal I, Leyva I, Buldú JM, Almendral JA, and Boccaletti S, Phys. Rev. E 79, 046105 (2009).

- [20] Talathi SS, Abarbanel HDI, and Ditto WL, Phys. Rev. E 78, 031918 (2008).

- [21] Leon MI and Shadlen MN, Neuron, 317 (2003).

- [22] Killeen P and Taylor T, Psychological Review 107, 430 (2000).

- [23] Matell M and Meck W, Cognitive Brain Research 21, 139 (2004).

- [24] Miall R, Neural Computation 1, 359 (1989).

- [25] Wilent W and Contreras D, Nature Neuroscience 8, 1364 (2005).

- [26] Abarbanel HD and Talathi SS, Phys. Rev. Lett. 96, 148104 (2006).

- [27] Farries MA, Kita H, and Wilson CJ, Journal of Neuroscience 30, 13180 (2010).

- [28] Beiser D and Houk J, Journal of Neurophysiology 79, 3168 (1998).

- [29] Houk J, "Information processing in modular circuits linking basal ganglia and cerebral cortex," in Models of Information Processing in the Basal Ganglia, edited by Houk J, Davis J, and Beiser D (MIT Press, Cambridge, MA, 1995) pp. 3–10.

- [30] Houk J, Davis J, and Beiser D, Computational Neuroscience, Models of Information Processing in the Basal Ganglia (MIT Press, Cambridge, MA, 1995).

- [31] Beshel J, Kopell N, and Kay LM, Journal of Neuroscience 27, 8358 (2007).

- [32] Martin C, Houitte D, Guillermier M, Petit F, Bonvento G, and Gurden H, Frontiers in Neural Circuits 6 (2012).

- [33] Kay LM and Lazzara P, Journal of Neurophysiology 104, 1768 (2010).

- [34] Hughes S, Lorincz M, Parri H, and Crunelli V, Progress in Brain Research 193, 145 (2011).

- [35] Steriade M, Jones EG, and Llinas RR, Thalamic oscillations and signaling (John Wiley and Sons, Oxford, England, 1990).

- [36] Buzsaki G, Neuron 33, 325 (2002).

- [37] Pignatelli M, Beyeler A, and Leinekugel X, Journal of Physiology-Paris 106, 81 (2012).

- [38] Worrell GA, Parish L, Cranstoun SD, Jonas R, Baltuch G, and Litt B, Brain 127, 1496 (2004).

- [39] Anliker J, Science, 1307 (1963).

- [40] Rizzuto D, Madsen J, Bromfield E, Schulze-Bonhage A, Seelig D, Aschenbrenner-Scheibe R, and Kahana M, Proceedings of the National Academy of Sciences USA 100, 7931 (2003).

- [41] Izhikevich EM, Dynamical Systems in Neuroscience: The Geometry of Excitability and Bursting (MIT Press, Cambridge, MA, 2007).

- [42] Ermentrout G, "Losing amplitude and saving phase," in Lecture Notes in Biomathematics, Vol. 66 (Springer, Berlin, New York, 1986).

- [43] Morris C and Lecar H, Biophysical Journal 35, 193 (1981).

- [44] Rinzel J and Ermentrout B, "Analysis of neural excitability and oscillations," in Methods of Neuronal Modeling, edited by Koch C and Segev I (MIT Press, Cambridge, MA, 1998).

- [45] Lisman JE and Grace AA, Neuron 46, 703 (2005).

- [46] Coull J, Vidal F, Nazarian B, and Macar F, Science 303, 1506 (2004).

- [47] Stevens M and Kiehl K, Human Brain Mapping 28, 394 (2007).

- [48] Harrington D and Haaland K, Reviews in the Neurosciences 10, 91 (1999).

- [49] Hinton S, Meck W, and MacFall J, NeuroImage 3, S224 (1996).

- [50] Malapani C, Rakitin B, Levy R, Meck W, Deweer B, Dubois B, and Gibbon J, Journal of Cognitive Neuroscience 10, 316 (1998).

- [51] Meck W, Journal of Experimental Psychology: Animal Behavior Processes, 171 (1983).

- [52] Meck W, Cognitive Brain Research 3, 227 (1996).

- [53] Neil D and Herndon J Jr., Brain Research 153, 529 (1978).

- [54] Lapish C, Kroener S, Durstewitz D, Lavin A, and Seamans J, Psychopharmacology 191, 609 (2007).

- [55] Matell M, Meck W, and Nicolelis M, Behavioral Neuroscience 117, 760 (2003).

- [56] Jin X and Costa R, Nature 466, 457 (2010).

- [57] Sutton R and Barto A, Reinforcement learning: an introduction (MIT Press, Cambridge, MA, 1998).

- [58] Stern E, Jaeger D, and Wilson C, Nature 394, 475 (1998).

- [59] Wilson C, "The contribution of cortical neurons to the firing pattern of striatal spiny neurons," in Models of Information Processing in the Basal Ganglia, edited by Houk J, Davis J, and Beiser D (MIT Press, Cambridge, MA, 1995) pp. 29–50.

- [60] Brunner F, Kacelnik A, and Gibbon J, Animal Behaviour 44, 597 (1992).

- [61] Doig NM, Moss J, and Bolam JP, Journal of Neuroscience 30, 14610 (2010).

- [62] Squire L, Stark C, and Clark R, Annual Review of Neuroscience 27, 279 (2004).

- [63] Fellous J, Tiesinga P, Thomas P, and Sejnowski T, Journal of Neuroscience 24, 2989 (2004).

- [64] White J, Rubinstein J, and Kay A, Trends in Neurosciences 23, 99 (2000).

- [65] Faisal A, Selen L, and Wolpert D, Nature Reviews Neuroscience 9, 292 (2008).

- [66] Destexhe A, Rudolph M, and Pare D, Nature Reviews Neuroscience 4, 739 (2003).

- [67] Matsumura M, Cope T, and Fetz E, Experimental Brain Research 70, 463 (1988).

- [68] Steriade M, Timofeev I, and Grenier F, Journal of Neurophysiology 85, 1969 (2001).

- [69] Englitz B, Stiefel K, and Sejnowski T, Neural Computation 20, 44 (2008).

- [70] Markram H, Toledo-Rodriguez M, Wang Y, Gupta A, Silberberg G, and Wu C, Nature Reviews Neuroscience 5, 793 (2004).

- [71] Calvin W and Stevens C, Journal of Neurophysiology 31, 574 (1968).

- [72] Stevens CF and Zador AM, Nature Neuroscience 1, 210 (1998).

- [73] Droit-Volet S, Wearden J, and Delgado-Yonger M, Journal of Experimental Child Psychology 97, 246 (2007).

- [74] Fortin C and Couture E, Canadian Journal of Experimental Psychology 56, 120 (2002).

- [75] Jones L and Wearden J, Quarterly Journal of Experimental Psychology 56, 321 (2003).

- [76] Jones L and Wearden J, Quarterly Journal of Experimental Psychology 57B, 55 (2004).

- [77] Oprisan S and Buhusi C, Frontiers in Integrative Neuroscience 5, 52 (2011).

- [78] Sobie C, Babul A, and de Sousa R, Phys. Rev. E 83, 051912 (2011).

- [79] Spiegel M, Theory and Problems of Probability and Statistics (McGraw-Hill, New York, 1992).

- [80] Gibbon J and Allan L, Annals of the New York Academy of Sciences 423, 1 (1984).

- [81] Gibbon J and Church R, "Sources of variance in an information processing theory of timing," in Animal cognition, edited by Roitblat H, Bever T, and Terrace H (Erlbaum, Hillsdale, NJ, 1984) pp. 465–488.

- [82] Church R and Broadbent H, Cognition 37, 55 (1990).

- [83] Matell M, Chelius C, Meck W, and Sakata S, Society for Neuroscience Abstracts 26 (2000).

- [84] Matell MS and Meck WH, BioEssays 22, 94 (2000).

- [85] Grossberg S and Schmajuk N, Neural Networks 2, 79 (1989).

- [86] Grossberg S and Merrill J, Cognitive Brain Research 1, 3 (1992).

- [87] Machado A, Psychological Review 104, 241 (1997).

- [88] Buhusi C and Schmajuk NA, Behavioural Processes 45, 33 (1999).

- [89] Staddon J, Higa J, and Chelaru I, Journal of the Experimental Analysis of Behavior 71, 293 (1999).

- [90] Simen P, Balci F, deSouza L, Cohen JD, and Holmes P, Journal of Neuroscience 31, 9238 (2011).

- [91] Gallistel C and Gibbon J, Psychological Review 107, 289 (2000).

- [92] Karmarkar U and Buonomano D, Neuron 53, 427 (2007).

- [93] Reutimann J, Yakovlev V, Fusi S, and Senn W, Journal of Neuroscience 24, 3295 (2004).

- [94] Borst A and Theunissen FE, Nature Neuroscience 2, 947 (1999).

- [95] Gong P-L and Xu J-X, Phys. Rev. E 63, 031906 (2001).

- [96] Benayoun M, Cowan JD, van Drongelen W, and Wallace E, PLoS Computational Biology 6, e1000846 (2010).

- [97] Braun H, Wissing H, Schafer K, and Hirsch M, Nature 367, 270 (1994).

- [98] Kaluza P, Strege C, and Meyer-Ortmanns H, Phys. Rev. E 82, 036104 (2010).

- [99] Kenet T, Bibitchkov D, Tsodyks M, Grinvald A, and Arieli A, Nature 425, 954 (2003).

- [100] Papoulis A and Pillai SU, Probability, Random Variables and Stochastic Processes, 4th ed. (McGraw-Hill, 2002).

- [101] Ermentrout G, Neural Computation 8, 979 (1996).

- [102] Hodgkin A, Journal of Physiology 107, 165 (1948).