Abstract

Objective. In the course of daily teaching responsibilities, pharmacy educators collect rich data that can provide valuable insight into student learning. This article describes the qualitative data analysis method of content analysis, which can be useful to pharmacy educators because of its application in the investigation of a wide variety of data sources, including textual, visual, and audio files.

Findings. Both manifest and latent content analysis approaches are described, with several examples used to illustrate the processes. This article also offers insights into the variety of relevant terms and visualizations found in the content analysis literature. Finally, common threats to the reliability and validity of content analysis are discussed, along with suitable strategies to mitigate these risks during analysis.

Summary. This review of content analysis as a qualitative data analysis method will provide clarity and actionable instruction for both novice and experienced pharmacy education researchers.

Keywords: content analysis, educational research, latent, manifest, qualitative data analysis

INTRODUCTION

The Academy’s growing interest in qualitative research indicates an important shift in the field’s scientific paradigm. Whereas health science researchers have historically looked to quantitative methods to answer their questions, this shift signals that a purely positivist, objective approach is no longer sufficient to answer pharmacy education’s research questions. Educators who want to study their teaching and students’ learning will find content analysis an easily accessible, robust method of qualitative data analysis that can yield rigorous results for both publication and the improvement of their educational practice. Content analysis is a method designed to identify and interpret meaning in recorded forms of communication by isolating small pieces of the data that represent salient concepts and then applying or creating a framework to organize the pieces in a way that can be used to describe or explain a phenomenon.1 Content analysis is particularly useful in situations where there is a large amount of unanalyzed textual data, such as those many pharmacy educators have already collected as part of their teaching practice. Because of its accessibility, content analysis is also an appropriate qualitative method for pharmacy educators with limited experience in educational research. This article will introduce and illustrate the process of content analysis as a way to analyze existing data, but also as an approach that may lead pharmacy educators to ask new types of research questions.

Content analysis is a well-established data analysis method that has evolved in its treatment of textual data. Content analysis was originally introduced as a strictly quantitative method, recording counts to measure the observed frequency of pre-identified targets in consumer research.1 However, as the naturalistic qualitative paradigm became more prevalent in social sciences research and researchers became increasingly interested in the way people behave in natural settings, the process of content analysis was adapted into a more interesting and meaningful approach. Content analysis has the potential to be a useful method in pharmacy education because it can help educational researchers develop a deeper understanding of a particular phenomenon by providing structure in a large amount of textual data through a systematic process of interpretation. It also offers potential value because it can help identify problematic areas in student understanding and guide the process of targeted teaching. Several research studies in pharmacy education have used the method of content analysis.2-7 Two studies in particular offer noteworthy examples: Wallman and colleagues employed manifest content analysis to analyze semi-structured interviews in order to explore what students learn during experiential rotations,7 while Moser and colleagues adopted latent content analysis to evaluate open-ended survey responses on student perceptions of learning communities.6 To elaborate on these approaches further, we will describe the two types of qualitative content analysis, manifest and latent, and demonstrate the corresponding analytical processes using examples that illustrate their benefit.

Qualitative Content Analysis

Content analysis rests on the assumption that texts are a rich data source with great potential to reveal valuable information about particular phenomena.8 It is the process of considering both the participant and context when sorting text into groups of related categories to identify similarities and differences, patterns, and associations, both on the surface and implied within.9-11 The method is considered high-yield in educational research because it is versatile and can be applied in both qualitative and quantitative studies.12 While it is important to note that content analysis has application in visual and auditory artifacts (eg, an image or song), for our purposes we will largely focus on the most common application, which is the analysis of textual or transcribed content (eg, open-ended survey responses, print media, interviews, recorded observations, etc). The terminology of content analysis can vary throughout quantitative and qualitative literature, which may lead to some confusion among both novice and experienced researchers. However, there are also several agreed-upon terms and phrases that span the literature, as found in Table 1.

Table 1.

Terms and Definitions Used in Qualitative Content Analysis

Disagreement on terminology is more common in the methodological approaches to content analysis, though the most common differentiation is between the two types of content: manifest and latent. In much of the literature, manifest content analysis is defined as describing what is occurring on the surface, what is literally present, and as “staying close to the text.”8,13 Manifest content analysis is concerned with data that are easily observable both to researchers and the coders who assist in their analyses, without the need to discern intent or identify deeper meaning. It is content that can be recognized and counted with little training. Early applications of manifest analysis focused on identifying easily observable targets within text (eg, the number of instances a certain word appears in newspaper articles), film (eg, the occupation of a character), or interpersonal interactions (eg, tracking the number of times a participant blinks during an interview).14 This application, in which frequency counts are used to understand a phenomenon, reflects a surface-level analysis and assumes there is objective truth in the data that can be revealed with very little interpretation. The number of times a target (ie, code) appears within the text is used as a way to understand its prevalence. Quantitative content analysis always describes a positivist manifest content analysis, in that the nature of truth is believed to be objective, observable, and measurable. Qualitative research, which favors the researcher’s interpretation of an individual’s experience, may also be used to analyze manifest content. In that case, however, the intent of the application is to describe a dynamic reality that cannot be separated from the lived experiences of the researcher.
Although qualitative content analysis can be conducted whether knowledge is thought to be innate, acquired, or socially constructed, the purpose of qualitative manifest content analysis is to transcend simple word counts and delve into a deeper examination of the language in order to organize large amounts of text into categories that reflect a shared meaning.15,16 The practical distinction between quantitative and qualitative manifest content analysis is the intention behind the analysis. The quantitative method seeks to generate a numerical value to either cite prevalence or use in statistical analyses, while the qualitative method seeks to identify a construct or concept within the text using specific words or phrases for substantiation, or to provide a more organized structure to the text being described.
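The quantitative form of manifest content analysis described above, in which pre-identified targets are counted to gauge prevalence, can be sketched in a few lines of Python. This is a minimal illustration only: the function name `count_targets`, the target words, and the course-evaluation comments are hypothetical and do not come from any study cited here.

```python
import re
from collections import Counter


def count_targets(text, targets):
    """Count how often each pre-identified target word appears in the text."""
    # Lowercase the text and split it into words so matching is case-insensitive.
    words = re.findall(r"[a-z']+", text.lower())
    counts = Counter(words)
    return {target: counts[target.lower()] for target in targets}


# Hypothetical course-evaluation comments, invented for illustration.
comments = (
    "The cases were helpful. The lectures felt rushed, "
    "but the cases made the material stick. More cases, please."
)

frequencies = count_targets(comments, ["cases", "lectures", "exams"])
print(frequencies)
```

A count of this kind serves the quantitative intent (citing prevalence); a qualitative manifest analysis would instead use the located words or phrases to substantiate a construct or to organize the text.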

Latent content analysis is most often defined as interpreting what is hidden deep within the text. In this method, the role of the researcher is to discover the implied meaning in participants’ experiences.8,13 For example, in a transcribed exchange in an office setting, a participant might say to a coworker, “Yeah, here we are…another Monday. So exciting!” The researcher would apply context in order to discover the emotion being conveyed (ie, the implied meaning). In this example, the comment could be interpreted as genuine, it could be interpreted as a sarcastic comment made in an attempt at humor in order to develop or sustain social bonds with the coworker, or the context might imply that the sarcasm was meant to convey displeasure and end the interaction.

Latent content analysis acknowledges that the researcher is intimately involved in the analytical process and that their role is to actively use mental schema, theories, and lenses to interpret and understand the data.10 Whereas manifest analyses are typically conducted in such a way that the researcher is thought to maintain distance and separation from the objects of study, latent analyses underscore the importance of the researcher co-creating meaning with the text.17 Adding nuance to this type of content, Potter and Levine‐Donnerstein argue that within latent content analysis, there are two distinct types: latent pattern and latent projective.14 Latent pattern content analysis seeks to establish a pattern of characteristics in the text itself, while latent projective content analysis leverages the researcher’s own interpretations of the meaning of the text. While both approaches rely on codes that emerge from the content using the coder’s own perspectives and mental schema, the distinction between these two types of analyses is in their foci.14 Though we do not agree, some researchers believe that all qualitative content analysis is latent content analysis.11 These disagreements typically occur where there are differences in intent and where there are areas of overlap in the results. For example, both qualitative manifest and latent pattern content analyses may identify patterns as a result of their application. In the research design, however, the researcher would have approached the content differently, with a manifest approach seeking only to describe what is observed and a latent pattern approach seeking to discover an unseen pattern. At this point, these distinctions may seem too philosophical to serve a practical purpose, so we will attempt to clarify these concepts by presenting three types of analyses for illustrative purposes, beginning with a description of how codes are created and used.

Creating and Using Codes

Codes are the currency of content analysis. Researchers use codes to organize and understand their data. Through the coding process, pharmacy educators can systematically and rigorously categorize and interpret vast amounts of text for use in their educational practice or in publication. Codes themselves are short, descriptive labels that symbolically assign a summative or salient attribute to more than one unit of meaning identified in the text.18 To create codes, a researcher must first become immersed in the data, which typically occurs when a researcher transcribes recorded data or conducts several readings of the text. This process allows the researcher to become familiar with the scope of the data, which spurs nascent ideas about potential concepts or constructs that may exist within it. If studying a phenomenon that has already been described through an existing framework, codes can be created a priori using theoretical frameworks or concepts identified in the literature. If there is no existing framework to apply, codes can emerge during the analytical process. However, emergent codes can also be created as addenda to a priori codes that were identified before the analysis begins if the a priori codes do not sufficiently capture the researcher’s area of interest.

The process of detecting emergent codes begins with identification of units of meaning. While there is no one way to decide what qualifies as a meaning unit, researchers typically define units of meaning differently depending on what kind of analysis is being conducted. As a general rule, when dialogue is being analyzed, such as interviews or focus groups, meaning units are identified as conversational turns, though a code can be as short as one or two words. In written text, such as student reflections or course evaluation data, the researcher must decide if the text should be divided into phrases or sentences, or remain as paragraphs. This decision is usually made based on how many different units of meaning are expressed in a block of text. For example, in a paragraph, if there are several thoughts or concepts being expressed, it is best to break up the paragraph into sentences. If one sentence contains multiple ideas of interest, making it difficult to separate one important thought or behavior from another, then the sentence can be divided into smaller units, such as phrases or sentence fragments. These phrases or sentence fragments are then coded as separate meaning units. Conversely, longer or more complex units of meaning should be condensed into shorter representations that still retain the original meaning in order to reduce the cognitive burden of the analytical process. This could entail removing verbal tics (eg, “well, uhm…”) from transcribed data or simplifying a compound sentence. Condensation does not ascribe interpretation or implied meaning to a unit, but only shortens a meaning unit as much as possible while preserving the original meaning identified.18 After condensation, a researcher can proceed to the creation of codes.

Many researchers begin their analyses with several general codes in mind that help guide their focus as defined by their research question, even in instances where the researcher has no a priori model or theory. For example, if a group of instructors are interested in examining recorded videos of their lectures to identify moments of student engagement, they may begin with using generally agreed upon concepts of engagement as codes, such as students “raising their hands,” “taking notes,” and “speaking in class.” However, as the instructors continue to watch their videos, they may notice other behaviors which were not initially anticipated. Perhaps students were seen creating flow charts based on information presented in class. Alternatively, perhaps instructors wanted to include moments when students posed questions to their peers without being prompted. In this case, the instructors would allow the codes of “creating graphic organizers” and “questioning peers” to emerge as additional ways to identify the behavior of student engagement.

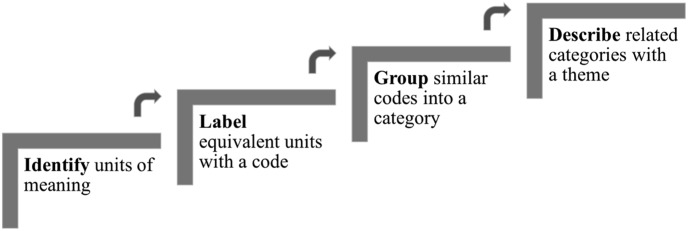

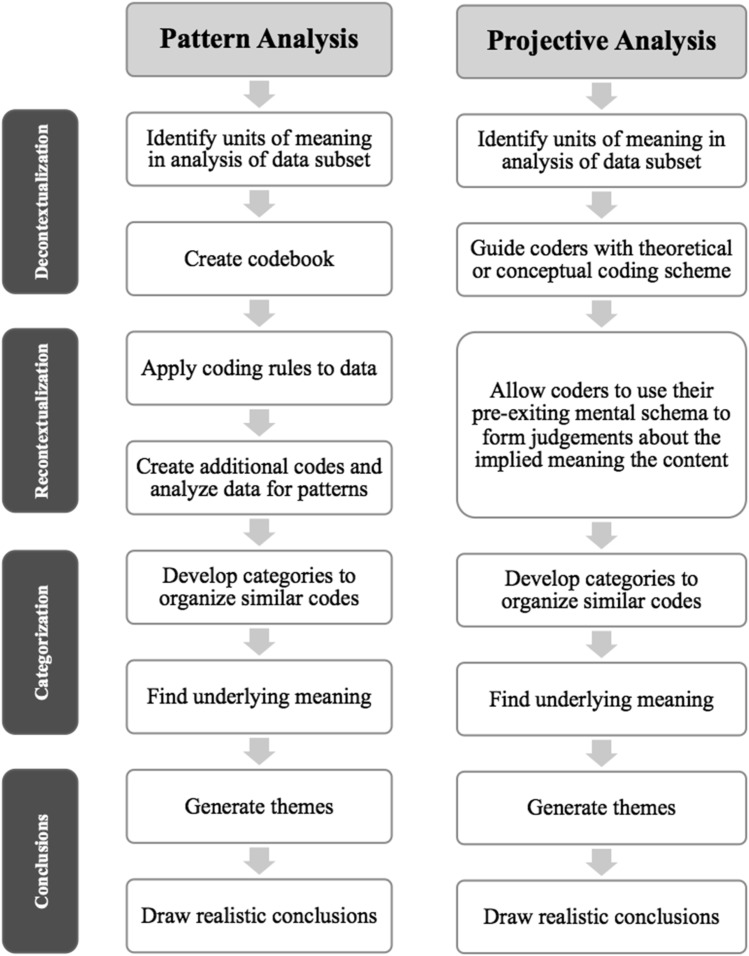

Once a researcher has identified condensed units of meaning and labeled them with codes, the codes are then sorted into categories, which can help provide more structure to the data. In the above example of recorded lectures, perhaps the category of “verbal behaviors” could be used to group the codes of “speaking in class” and “questioning peers.” For complex analyses, subcategories can also be used to better organize a large number of codes, but solely at the discretion of the researcher. Two or more categories of codes are then used to identify or support a broader underlying meaning, which develops into themes. Themes are most often employed in latent analyses; however, they are appropriate in manifest analyses as well. Themes describe behaviors, experiences, or emotions that occur throughout several categories.18 Figure 1 illustrates this process. Using the same videotaped lecture example, the instructors might identify two themes of student engagement, “active engagement” and “passive engagement,” where the theme of “active engagement” is supported by the category of “verbal behaviors” and also a category that includes the code of “raising their hands” (perhaps something along the lines of “pursuing engagement”), and the theme of “passive engagement” is supported by a category used to organize the behaviors of “taking notes” and “creating graphic organizers.”

Figure 1.

The Process of Qualitative Content Analysis
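For readers who keep their coding in a script or spreadsheet, the progression from codes to categories to themes in the recorded-lecture example can be represented as two nested lookups. The sketch below is one possible representation and not a prescribed tool; the codes, the “verbal behaviors” and “pursuing engagement” categories, and the two themes come from the example, while the label for the third category is a placeholder because the example does not name it.

```python
# Codes grouped into categories (lecture example from the text).
category_to_codes = {
    "verbal behaviors": ["speaking in class", "questioning peers"],
    "pursuing engagement": ["raising their hands"],
    # The text does not name this category; the label is a hypothetical placeholder.
    "recording information (placeholder)": [
        "taking notes",
        "creating graphic organizers",
    ],
}

# Categories grouped into themes.
theme_to_categories = {
    "active engagement": ["verbal behaviors", "pursuing engagement"],
    "passive engagement": ["recording information (placeholder)"],
}


def theme_for_code(code):
    """Return the theme that a code ultimately supports, or None if unassigned."""
    for theme, categories in theme_to_categories.items():
        for category in categories:
            if code in category_to_codes[category]:
                return theme
    return None


print(theme_for_code("questioning peers"))
print(theme_for_code("taking notes"))
```

Keeping the hierarchy explicit in this way makes it easy to see when an emergent code has not yet been placed in any category.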

To more fully demonstrate the process of content analysis and the generation and use of codes, categories, and themes, we present and describe examples of both manifest and latent content analysis. Given that there are multiple ways to create and use codes, our examples illustrate both processes of creating and using a predetermined set of codes. Regardless of the kind of content analysis instructors want to conduct, the initial steps are the same. The instructor must analyze the data using codes as a sense-making process.

Manifest Content Analysis

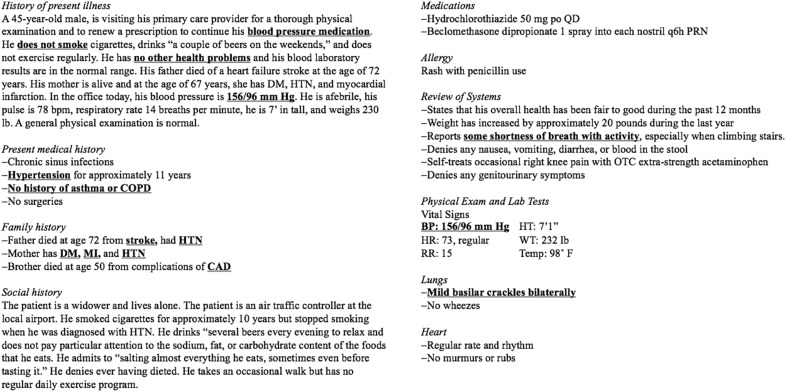

The first form of analysis, manifest content analysis, examines text for elements that exist on the surface of the text, the meaning of which is taken at face value. Schools and colleges of pharmacy may benefit from conducting manifest content analyses at a programmatic level, including analysis of student evaluations to determine the value of certain courses, or analysis of recruitment materials for addressing issues of cultural humility in a uniform manner. Such uses for manifest content analysis may help administrators make more data-based decisions about students and courses. However, for our example of manifest content analysis, we illustrate the use of content analysis in informing instruction for a single pharmacy educator (Figure 2).

Figure 2.

A Student’s Completed Beta-blocker Case with Codes in Underlined Bold Text

In the example, a pharmacology instructor is trying to assess students’ understanding of three concepts related to the beta-blocker class of drugs: indication of the drug, relevance of family history, and contraindications and precautions. To do so, the instructor asks the students to write a patient case in which beta-blockers are indicated. The instructor gives the students the following prompt: “Reverse-engineer a case in which beta-blockers would be prescribed to the patient. Include a history of the present illness, the patients’ medical, family, and social history, medications, allergies, and relevant lab tests.” Figure 2 is a hypothetical student’s completed assignment, in which they demonstrate their understanding of when and why a beta-blocker would be prescribed.

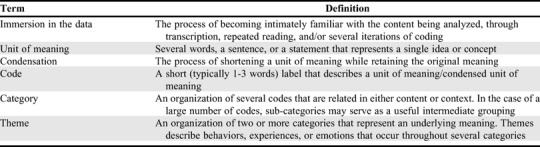

The student-generated cases are then treated as data and analyzed for the presence of the three previously identified indicators of understanding in order to help the instructor make decisions about where and how to focus future teaching efforts related to this drug class. Codes are created a priori out of the instructor’s interest in analyzing students’ understanding of the concepts related to beta-blocker prescriptions. A codebook (Table 2) is created with the following columns: name of code, code description, and examples of the code. This codebook helps an individual researcher to approach their analysis systematically, but it can also facilitate coding by multiple coders who would apply the same rules outlined in the codebook to the coding process.

Table 2.

Example Code Book Created for Manifest Content Analysis
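A codebook with the three columns described above (name of code, code description, and examples of the code) can also be kept in a structured form so that every coder applies the same rules. The following is a minimal sketch under stated assumptions: the three code names come from the beta-blocker example, but the descriptions, the example phrases, and the helper `codes_present` are hypothetical and do not reproduce Table 2.

```python
from dataclasses import dataclass, field


@dataclass
class CodebookEntry:
    name: str
    description: str
    examples: list = field(default_factory=list)


# Code names come from the text; descriptions and examples are hypothetical
# placeholders and do not reproduce Table 2.
codebook = [
    CodebookEntry(
        "indication",
        "The case names a condition for which a beta-blocker is prescribed.",
        ["history of hypertension"],
    ),
    CodebookEntry(
        "family history",
        "The case includes family history relevant to the prescription.",
        ["father had a heart attack at age 52"],
    ),
    CodebookEntry(
        "contraindications and precautions",
        "The case addresses conditions that argue against or complicate use.",
        ["no history of asthma"],
    ),
]


def codes_present(coded_units, codebook):
    """Report, for each codebook entry, whether any coded unit carries that code."""
    valid = {entry.name for entry in codebook}
    unknown = {code for _, code in coded_units if code not in valid}
    if unknown:
        # Coders may only assign codes that are defined in the shared codebook.
        raise ValueError(f"Codes not in codebook: {sorted(unknown)}")
    assigned = {code for _, code in coded_units}
    return {entry.name: entry.name in assigned for entry in codebook}


# Hypothetical coded units from one student's case: (meaning unit, code).
coded_units = [
    ("history of hypertension", "indication"),
    ("father had a heart attack at age 52", "family history"),
]
summary = codes_present(coded_units, codebook)
print(summary)
```

A summary like this, produced for each student case, would show the instructor which of the three concepts a student did not demonstrate.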

Using multiple coders introduces complexity to the analysis process, but it is oftentimes the only practical way to analyze large amounts of data. To ensure that all coders are working in tandem, they must establish inter-rater reliability as part of their training process. This process requires that a single form of text be selected, such as one student evaluation. After reviewing the codebook and receiving instruction, everyone on the team individually codes the same piece of data. While calculating percentage agreement has sometimes been used to establish inter-rater reliability, most publication editors require more rigorous statistical analysis (eg, Krippendorff’s alpha or Cohen’s kappa).19 Detailed descriptions of these statistics fall outside the scope of this introduction, but it is important to note that the choice depends on the number of coders, the sample size, and the type of data to be analyzed.
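To make the contrast between percentage agreement and a chance-corrected statistic concrete, the sketch below computes both for two coders who each assigned one code to the same ten meaning units. It implements the standard Cohen's kappa formula, kappa = (p_o - p_e) / (1 - p_e), where p_o is the observed agreement and p_e is the agreement expected by chance from each coder's code frequencies. The coder data are invented for illustration, and Cohen's kappa applies only to two coders; a team with more coders would need a different statistic.

```python
from collections import Counter


def percent_agreement(coder_a, coder_b):
    """Proportion of meaning units to which both coders assigned the same code."""
    matches = sum(a == b for a, b in zip(coder_a, coder_b))
    return matches / len(coder_a)


def cohens_kappa(coder_a, coder_b):
    """Cohen's kappa for two coders who each assign one code per unit."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("Both coders must code the same non-empty set of units.")
    n = len(coder_a)
    observed = percent_agreement(coder_a, coder_b)
    freq_a = Counter(coder_a)
    freq_b = Counter(coder_b)
    # Chance agreement: probability both coders pick the same code independently.
    expected = sum(freq_a[code] * freq_b[code] for code in freq_a) / (n * n)
    if expected == 1:
        raise ValueError("Kappa is undefined when chance agreement equals 1.")
    return (observed - expected) / (1 - expected)


# Hypothetical codes assigned by two coders to the same ten meaning units.
coder_a = ["indication", "indication", "family history", "contraindications",
           "contraindications", "indication", "family history", "family history",
           "contraindications", "indication"]
coder_b = ["indication", "indication", "family history", "contraindications",
           "indication", "indication", "family history", "contraindications",
           "contraindications", "indication"]

agreement = percent_agreement(coder_a, coder_b)
kappa = cohens_kappa(coder_a, coder_b)
print(f"Percent agreement: {agreement:.2f}")
print(f"Cohen's kappa: {kappa:.2f}")
```

In this invented example the kappa value is lower than the raw percentage agreement because part of the observed agreement is attributed to chance, which is why the raw percentage alone can overstate reliability.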

Latent Content Analysis

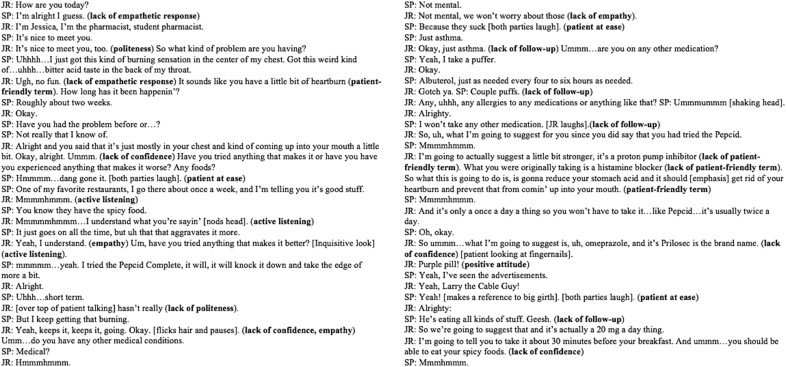

Latent content analysis is another option for pharmacy educators, especially when there are theoretical frameworks or lenses the educator proposes to apply. Such frameworks describe and provide structure to complex concepts and may often be derived from relevant theories. Latent content analysis requires that the researcher is intimately involved in interpreting and finding meaning in the text because meaning is not readily apparent on the surface.10 To illustrate a latent content analysis using a combination of a priori and emergent codes, we will use the example of a transcribed video excerpt from a student pharmacist interaction with a standardized patient. In this example, the goal is for first-year students to practice talking to a customer about an over-the-counter medication. The case is designed to simulate a customer at a pharmacy counter, who is seeking advice on a medication. The learning objectives for the pharmacist in-training are to assess the customer’s symptoms, determine if the customer can self-treat or if they need to seek out their primary care physician, and then prescribe a medication to alleviate the patient’s symptoms.

To begin, pharmacy educators conducting educational research should first identify what they are looking for in the video transcript. In this case, because the primary outcome for this exercise is aimed at assessing the “soft skills” of student pharmacists, codes are created using the counseling rubric created by Horton and colleagues.20 Four a priori codes are developed using the literature: empathy, patient-friendly terms, politeness, and positive attitude. However, because the original four codes are inadequate to capture all areas representing the skills the instructor is looking for during the process of analysis, four additional codes are also created: active listening, confidence, follow-up, and patient at ease. Figure 3 presents the video transcript with each of the codes assigned to the meaning units in bolded parentheses.

Figure 3.

A Transcript of a Student’s (JR) Experience with a Standardized Patient (SP) in Which the Codes are Bolded in Parentheses

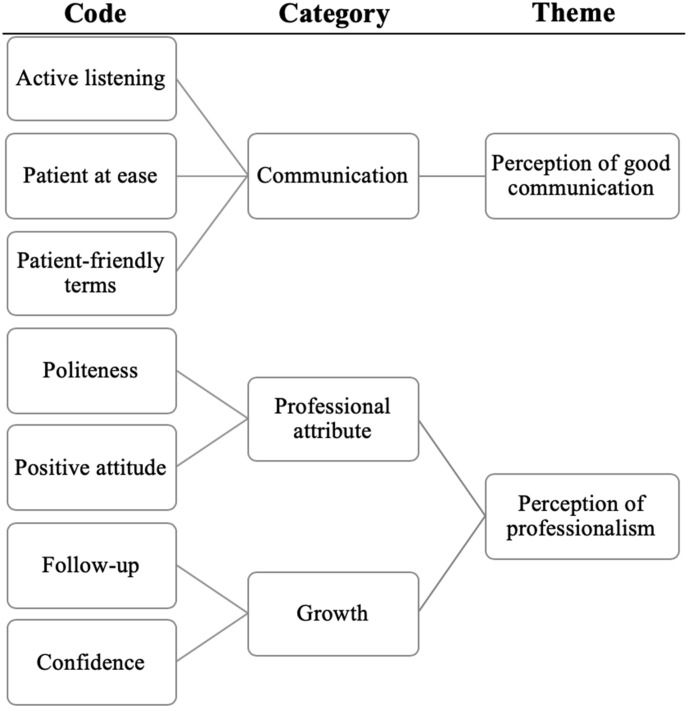

Following the initial coding using these eight codes, the codes are consolidated to create categories, which are depicted in the taxonomy in Figure 4. Categories are relationships between codes that represent a higher level of abstraction in the data.18 To reach conclusions and interpret the fundamental underlying meaning in the data, categories are then organized into themes (Figure 1). Once the data are analyzed, the instructor can assign value to the student’s performance. In this case, the coding process determines that the exercise demonstrated both positive and negative elements of communication and professionalism. Under the category of professionalism, the student generally demonstrated politeness and a positive attitude toward the standardized patient, indicating to the reviewer that the theme of perceived professionalism was apparent during the encounter. However, there were several instances in which confidence and appropriate follow-up were absent. Thus, from a reviewer perspective, the student's performance could be perceived as indicating an opportunity to grow and improve as a future professional. Typically, there are multiple codes in a category and multiple categories in a theme. However, as seen in the example taxonomy, this is not always the case.

Figure 4.

Example of a Latent Content Analysis Taxonomy

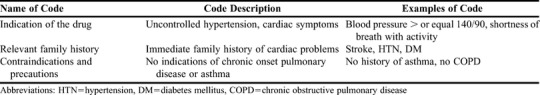

If the educator is interested in conducting a latent projective analysis, after identifying the construct of “soft skills,” the researcher allows for each coder to apply their own mental schema as they look for positive and negative indicators of the non-technical skills they believe a student should develop. Mental schema are the cognitive structures that provide organization to knowledge, which in this case allows coders to categorize the data in ways that fit their existing understanding of the construct. The coders will use their own judgment to identify the codes they feel are relevant. The researcher could also choose to apply a theoretical lens to more effectively conceptualize the construct of “soft skills,” such as Rogers' humanism theory, and more specifically, concepts underlying his client-centered therapy.21 The role of theory in both latent pattern and latent projective analyses is at the discretion of the researcher, and often is determined by what already exists in the literature related to the research question. Typically, however, theory is used for deductive coding in latent pattern analyses, whereas in latent projective analyses underdeveloped theory is used first to deduce codes and then, through induction from the results, to strengthen the theory applied. For our example, Rogers describes three salient qualities to develop and maintain a positive client-professional relationship: unconditional positive regard, genuineness, and empathetic understanding.21 For the third element, specifically, the educator could look for units of meaning that imply empathy and active listening. For our video transcript analysis, this is evident when the student pharmacist demonstrated empathy by responding, "Yeah, I understand," when discussing aggravating factors for the patient's condition. The outcome for both latent pattern and latent projective content analysis is to discover the underlying meaning in a text, such as social rules or mental models.
In this example, both pattern and projective approaches can discover interpreted aspects of a student’s abilities and mental models for constructs such as professionalism and empathy. The difference in the approaches is where the precedence lies: in the belief that a pattern is recognizable in the content, or in the mental schema and lived experiences of the coder(s). To better illustrate the differences in the processes of latent pattern and projective content analyses, Figure 5 presents a general outline of each method beginning with the creation of codes and concluding with the generation of themes.

Figure 5.

Flow Chart of the Stages of Latent Pattern and Latent Projective Content Analysis

How to Choose a Methodological Approach to Content Analysis

To determine which approach a researcher should take in their content analysis, two decisions need to be made. First, researchers must determine their goal for the analysis. Second, the researcher must decide where they believe meaning is located.14 If meaning is located in the discrete elements of the content that are easily identified on the surface of the text, then manifest content analysis is appropriate. If meaning is located deep within the content and the researcher plans to discover context cues and make judgements about implied meaning, then latent content analysis should be applied. When designing the latent content analysis, a researcher then must also identify their focus. If the analysis is intended to identify a recognizable truth within the content by uncovering connections and characteristics that all coders should be able to discover, then latent pattern content analysis is appropriate. If, on the other hand, the researcher will rely heavily on the judgment of the coders and believes that interpretation of the content must leverage the mental schema of the coders to locate deeper meaning, then latent projective content analysis is the best choice.
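The two decisions just described can be summarized as a short decision rule. The function below is only a schematic restatement of this paragraph; the function name `choose_approach` and the argument values ("surface", "deep", "pattern", "coder schema") are labels introduced here for illustration and are not terms from the literature.

```python
def choose_approach(meaning_location, focus=None):
    """Map where meaning is located, and the focus of the analysis, to an approach.

    meaning_location: "surface" or "deep" (hypothetical labels).
    focus: "pattern" or "coder schema"; required only when meaning is "deep".
    """
    if meaning_location == "surface":
        # Meaning sits in discrete, easily identified elements of the text.
        return "manifest content analysis"
    if meaning_location == "deep":
        if focus == "pattern":
            # A recognizable truth that all coders should be able to discover.
            return "latent pattern content analysis"
        if focus == "coder schema":
            # Interpretation leverages the mental schema of the coders.
            return "latent projective content analysis"
        raise ValueError("For deep meaning, focus must be 'pattern' or 'coder schema'.")
    raise ValueError("meaning_location must be 'surface' or 'deep'.")


print(choose_approach("surface"))
print(choose_approach("deep", focus="pattern"))
print(choose_approach("deep", focus="coder schema"))
```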

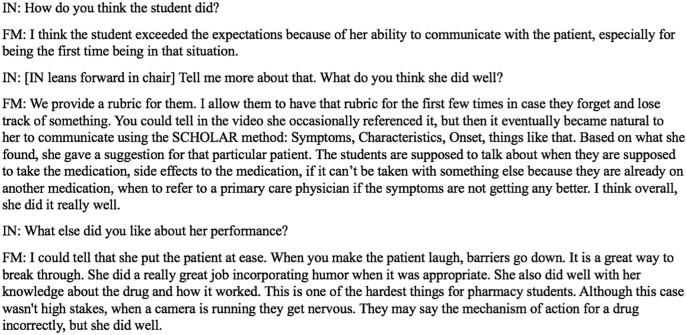

To demonstrate how a researcher might choose a methodological approach, we have presented a third example of data in Figure 6. In our two previous examples of content analysis, we used student data. However, faculty data can also be analyzed as part of educational research or for faculty members to improve their own teaching practices. Recall in the video data analyzed using latent content analysis, the student was tasked to identify a suitable over-the-counter medication for a patient complaining of heartburn symptoms. We have extended this example by including an interview with the pharmacy educator supervising the student who was videotaped. The goal of the interview is to evaluate the educator’s ability to assess the student’s performance with the standardized patient. Figure 6 is an excerpt of the interview between the course instructor and an instructional coach. In this conversation, the instructional coach is eliciting evidence to support the faculty member’s views, judgements, and rationale for the educator’s evaluation of the student’s performance.

Figure 6.

A Transcript of an Interview in Which the Interviewer (IN) Questions a Faculty Member (FM) Regarding Their Student’s Standardized Patient Experience

Manifest content analysis would be a valid choice for this data if the researcher was looking to identify evidence of the construct of “instructor priorities” and defined discrete codes that described aspects of performance such as “communication,” “referrals,” or “accurate information.” These codes could be easily identified on the surface of the transcribed interview by identifying keywords related to each code, such as “communicate,” “talk,” and “laugh,” for the code of “communication.” This would allow coders to identify evidence of the concept of “instructor priorities” by sorting through a potentially large amount of text with predetermined targets in mind.
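The keyword-based coding described above could be carried out along the following lines. This is a rough sketch, not the procedure used for Figure 6: the keywords for “communication” come from the text, but the keywords for the other two codes, the sample utterances, and the function `code_units` are hypothetical. Keywords are matched at the start of a word so that forms such as “talked” and “laughed” are also captured.

```python
import re

keywords_by_code = {
    "communication": ["communicate", "talk", "laugh"],  # keywords from the text
    "referrals": ["refer", "physician"],                # hypothetical keywords
    "accurate information": ["accurate", "correct"],    # hypothetical keywords
}


def code_units(units, keywords_by_code):
    """Assign to each meaning unit every code whose keywords appear in it."""
    coded = []
    for unit in units:
        lowered = unit.lower()
        codes = [
            code
            for code, keywords in keywords_by_code.items()
            # \b anchors the keyword at a word start, so "talk" matches "talked".
            if any(re.search(r"\b" + re.escape(word), lowered) for word in keywords)
        ]
        coded.append((unit, codes))
    return coded


# Hypothetical faculty utterances, invented for illustration.
units = [
    "She talked with the patient easily and even laughed with him.",
    "I wanted her to refer him to his physician sooner.",
    "Overall it was a reasonable first attempt.",
]

coded = code_units(units, keywords_by_code)
for unit, codes in coded:
    print(codes, "-", unit)
```

Units that receive no code, such as the third utterance here, are a useful prompt for coders to check whether the keyword list is too narrow or whether the unit simply falls outside the construct.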

To conduct a latent pattern analysis of this interview, researchers would first immerse themselves in the data to identify a theoretical framework or concepts that represent the area of interest so that coders could discover an emerging truth underneath the surface of the data. After immersion in the data, a researcher might believe it would be interesting to more closely examine the strategies the coach uses to establish rapport with the instructor as a way to better understand models of professional development. These strategies could not be easily identified in the transcripts if read literally, but by looking for connections within the text, codes related to instructional coaching tactics emerge. A latent pattern analysis would require that the researcher code the data in a way that looks for patterns, such as a code of “facilitating reflection,” that could be identified in open-ended questions and other units of meaning where the coder saw evidence of probing techniques, or a code of “establishing rapport” for which a coder could identify nonverbal cues such as “[IN leans forward in chair].”

Conducting latent projective content analysis might be useful if the researcher was interested in using a broader theoretical lens, such as Mezirow’s theory of transformative learning.22 In this example, the faculty member is understood to have attempted to change a learner’s frame of reference by facilitating cognitive dissonance or a disorienting experience through a standardized patient simulation. To conduct a latent projective analysis, the researcher could analyze the faculty member’s interview using concepts found in this theory. This kind of analysis will help the researcher assess the level of change that the faculty member was able to perceive, or expected to witness, in their attempt to help their pharmacy students improve their interactions with patients. The units of meaning and subsequent codes would rely on the coders to apply their own knowledge of transformative learning because of the absence in the theory of concrete, context-specific behaviors to identify. For this analysis, the researcher would rely on their interpretations of what challenging educational situations look like, what constitutes cognitive dissonance, or what the faculty member is really expecting from his students’ performance. The subsequent analysis could provide evidence to support the use of such standardized patient encounters within the curriculum as a transformative learning experience and would also allow the educator to self-reflect on his ability to assess simulated activities.

OTHER ASPECTS TO CONSIDER

Navigating Terminology

Researchers may come across other terms for content analysis in the methodological literature. Hsieh and Shannon10 proposed three qualitative approaches to content analysis: conventional, directed, and summative. These categories were intended to explain the role of theory in the analysis process. In conventional content analysis, the researcher does not use preconceived categories because existing theory or literature is limited. In directed content analysis, the researcher attempts to further describe a phenomenon already addressed by theory, applying a deductive approach and using identified concepts or codes from existing research to validate the theory. In summative content analysis, a descriptive approach is taken, identifying and quantifying words or content in order to describe their context. These three categories roughly map to the terms of latent projective, latent pattern, and manifest content analyses, respectively, though not precisely enough to suggest that they are synonyms.
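The counting step of a summative analysis, identifying and quantifying words and then looking at their context, can be sketched in a few lines of Python. This is a minimal illustration rather than a procedure prescribed by Hsieh and Shannon: the function names, the sample text, and the use of a fixed window of surrounding words are all assumptions made here.

```python
import re
from collections import Counter


def keyword_counts(text, keywords):
    """Count case-insensitive whole-word occurrences of each keyword."""
    tokens = re.findall(r"[a-z']+", text.lower())
    counts = Counter(tokens)
    return {kw: counts[kw.lower()] for kw in keywords}


def keyword_in_context(text, keyword, window=3):
    """Return each occurrence of `keyword` with `window` words on either side."""
    tokens = re.findall(r"[A-Za-z']+", text)
    hits = []
    for i, tok in enumerate(tokens):
        if tok.lower() == keyword.lower():
            start = max(0, i - window)
            hits.append(" ".join(tokens[start:i + window + 1]))
    return hits


# Hypothetical student reflection, invented for illustration only.
sample = (
    "The patient seemed anxious, so I slowed down. "
    "I asked the patient what worried her most about the new medication."
)

counts = keyword_counts(sample, ["patient", "medication"])
contexts = keyword_in_context(sample, "patient", window=2)
print(counts)
print(contexts)
```

The counts describe how often a term appears, while the context snippets give the researcher material for interpreting how the term is being used, which is where the descriptive work of a summative analysis takes place.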

Graneheim and colleagues9 reference the inductive, deductive, and abductive methods of interpretation of content analysis, which are data-driven, concept-driven, and fluid between both data and concepts, respectively. Manifest content analysis produces phenomenological descriptions most often (but not always) through deductive interpretation, whereas latent content analysis produces interpretations most often (but not always) through inductive or abductive interpretation. Erlingsson and Brysiewicz23 refer to content analysis as a continuum, progressing as the researcher develops codes, then categories, and then themes. We present these alternative conceptualizations of content analysis to illustrate that the literature on content analysis, while incredibly useful, presents a multitude of interpretations of the method itself. However, these complexities should not dissuade readers from using content analysis. Identifying what you want to know (ie, your research question) will effectively direct you toward your methodological approach. That said, we have found the most helpful aid in learning content analysis is the application of the methods we have presented.

Ensuring Quality

The standards used to evaluate quantitative research are seldom used in qualitative research. The terms “reliability” and “validity” are typically not used because they reflect the positivist quantitative paradigm. In qualitative research, the preferred term is “trustworthiness,” which comprises the concepts of credibility, transferability, dependability, and confirmability, and researchers can take steps in their work to demonstrate that their findings are trustworthy.24 Though establishing trustworthiness is outside the scope of this article, novice researchers should be familiar with the necessary steps before publishing their work. This suggestion includes exploration of the concept of saturation, the idea that researchers must demonstrate they have collected and analyzed enough data to warrant their conclusions, which has been a focus of recent debate in qualitative research.25

There are several threats to the trustworthiness of content analysis in particular.14 We will use the terms “reliability” and “validity” to describe these threats, as they are conceptualized this way in the formative literature, and it may be easier for researchers with a quantitative research background to recognize them. Though some of these threats may be particular to the type of data being analyzed, in general, there are risks specific to the different methods of content analysis. In manifest content analysis, reliability is necessary but not sufficient to establish validity.14 Because there is little judgment required of the coders, a lack of high inter-rater agreement among coders will render the data invalid.14 Additionally, coder fatigue is a common threat to manifest content analysis because the coding is clerical and repetitive in nature.
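Inter-rater agreement between two coders can be checked with a short calculation. The sketch below computes simple percent agreement and Cohen's kappa, which corrects for agreement expected by chance. Kappa is offered here as one commonly used statistic, not as the measure recommended by the sources cited in this article, and the code labels and coder data are hypothetical.

```python
import math
from collections import Counter


def percent_agreement(coder_a, coder_b):
    """Proportion of units to which both coders assigned the same code."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("Both coders must code the same non-empty set of units.")
    matches = sum(a == b for a, b in zip(coder_a, coder_b))
    return matches / len(coder_a)


def cohens_kappa(coder_a, coder_b):
    """Cohen's kappa for two coders assigning one nominal code per unit."""
    n = len(coder_a)
    p_observed = percent_agreement(coder_a, coder_b)
    freq_a, freq_b = Counter(coder_a), Counter(coder_b)
    # Chance agreement: product of each coder's marginal proportion, summed over codes.
    p_expected = sum(
        freq_a[code] * freq_b[code] for code in set(freq_a) | set(freq_b)
    ) / (n * n)
    if p_expected == 1.0:
        # Both coders used one identical code throughout; kappa is undefined.
        return math.nan
    return (p_observed - p_expected) / (1 - p_expected)


# Hypothetical codes assigned by two coders to the same ten units.
coder_a = ["open", "open", "closed", "open", "closed",
           "closed", "open", "closed", "open", "open"]
coder_b = ["open", "open", "closed", "open", "closed",
           "open", "open", "closed", "open", "closed"]

agreement = percent_agreement(coder_a, coder_b)
kappa = cohens_kappa(coder_a, coder_b)
print(f"agreement={agreement:.2f}, kappa={kappa:.2f}")
```

In this invented example the coders agree on 8 of 10 units, yet kappa is noticeably lower than the raw agreement because half of that agreement would be expected by chance given how often each coder used each code.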

For latent pattern content analysis, validity and reliability are inversely related.14 Greater reliability is achieved through more detailed coding rules to improve consistency, but these rules may diminish the accessibility of the coding to consumers of the research. This reduced accessibility is referred to as low ecological validity. Higher ecological validity is achieved through greater reliance on coder judgment to increase the resonance of the results with the audience, yet this often decreases the inter-rater reliability. In latent projective content analysis, reliability and validity are equivalent.14 Consistent interpretations among coders both establish and validate the constructed norm; construction of an accurate norm is evidence of consistency. However, because of this equivalence, issues with low validity or low reliability cannot be isolated. A lack of consistency may result from coding rules, lack of a shared schema, or issues with a defined variable. Reasons for low validity cannot be isolated, but will always result in low consistency.

Any good analysis starts with a codebook and coder training. It is important for all coders to share the mental model of the skill, construct, or phenomenon being coded in the data. However, when conducting latent pattern or projective content analysis in particular, micro-level rules and definitions of codes increase the threat to ecological validity, so it is important to leave enough room in the codebook and during the training to allow for a shared mental schema to emerge in the larger group rather than being strictly directed by the lead researcher. A lack of stability is another threat, which occurs when coders make different judgments as time passes. To reduce this risk, researchers can allow for recoding at a later date, which can increase the consistency and stability of the codes. Reproducibility is not typically a goal of qualitative research,15 but for content analysis, codes that are defined both prior to and during analysis should retain their meaning. Researchers can increase the reproducibility of their codebook by creating a detailed audit trail, including descriptions of the methods used to create and define the codes, materials used for the training of the coders, and steps taken to ensure inter-rater reliability.
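One way to keep an audit trail for a codebook is to store each code together with a dated record of how its definition changed. The sketch below is a hypothetical structure, not a standard tool or the authors' own workflow. The class name, fields, definitions, and date are invented for illustration; only the code name “facilitating reflection” comes from the earlier example.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class CodeEntry:
    """One code in the codebook, with a running record of its revisions."""
    name: str
    definition: str
    example: str
    history: list = field(default_factory=list)

    def revise(self, new_definition: str, reason: str, when: date) -> None:
        # Keep the superseded definition so reviewers can trace how the code evolved.
        self.history.append(
            {"date": when.isoformat(), "previous": self.definition, "reason": reason}
        )
        self.definition = new_definition


entry = CodeEntry(
    name="facilitating reflection",
    definition="Coach asks an open-ended question.",  # hypothetical definition
    example="What did you notice about the students' responses?",  # hypothetical
)
entry.revise(
    new_definition=(
        "Coach asks an open-ended or probing question that invites "
        "the instructor to evaluate their own teaching."
    ),
    reason="Coders disagreed on whether probing follow-ups counted.",
    when=date(2024, 1, 15),  # hypothetical date
)
print(entry.definition)
print(entry.history)
```

Recording the reason for each revision alongside the previous wording gives later readers the description of how codes were created and refined that an audit trail is meant to provide.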

In all forms of qualitative analysis, coder fatigue is a common threat to trustworthiness, even when the instructor is coding individually. Over time, the cases may start to look the same, making it difficult to refocus and look at each case with fresh eyes. To guard against this, coders should maintain a reflective journal and write analytical memos to help stay focused. Memos might include insights that the researcher has, such as patterns of misunderstanding, areas to focus on when considering re-teaching specific concepts, or specific conversations to have with students. Fatigue can also be mitigated by occasionally talking to participants (eg, meeting with students and listening for their rationale on why they included specific pieces of information in an assignment). These are just examples of potential exercises that can help coders mitigate cognitive fatigue. Most researchers develop their own ways to prevent the fatigue that can seep in after long hours of looking at data. But above all, a sufficient amount of time should be allowed for analysis, so that coders do not feel rushed, and regular breaks should be scheduled and enforced.

CONCLUSION

Qualitative content analysis is both accessible and high-yield for pharmacy educators and researchers. Though some of the methods may seem abstract or fluid, the nature of qualitative content analysis encompasses these concerns by providing a systematic approach to discover meaning in textual data, both on the surface and implied beneath it. As with most research methods, the surest path towards proficiency is through application and intentional, repeated practice. We encourage pharmacy educators to ask questions suited for qualitative research and to consider the use of content analysis as a qualitative research method for discovering meaning in their data.

REFERENCES

- 1. Kolbe RH, Burnett MS. Content-analysis research: an examination of applications with directives for improving research reliability and objectivity. J Consumer R. 1991;18(2):243-250.

- 2. Bird E, Anderson HM, Anaya G, Moore DL. Beginning an assessment project: a case study using data audit and content analysis. Am J Pharm Educ. 2005;69(3):53.

- 3. Danielson J, Craddick K, Eccles D, Kwasnik A, O’Sullivan TA. A qualitative analysis of common concerns about challenges facing pharmacy experiential education programs. Am J Pharm Educ. 2015;79(1):6.

- 4. Fjortoft N. Students' motivations for class attendance. Am J Pharm Educ. 2005;69(1):15.

- 5. Margolis AR, Porter AL, Pitterle ME. Best practices for use of blended learning. Am J Pharm Educ. 2017;81(3):49.

- 6. Moser L, Berlie H, Salinitri F, McCuistion M, Slaughter R. Enhancing academic success by creating a community of learners. Am J Pharm Educ. 2015;79(5):70.

- 7. Wallman A, Sporrong SK, Gustavsson M, Lindblad ÅK, Johansson M, Ring L. Swedish students' and preceptors' perceptions of what students learn in a six-month advanced pharmacy practice experience. Am J Pharm Educ. 2011;75(10):197.

- 8. Kondracki NL, Wellman NS, Amundson DR. Content analysis: review of methods and their applications in nutrition education. J Nutr Educ Behav. 2002;34(4):224-230.

- 9. Graneheim UH, Lindgren BM, Lundman B. Methodological challenges in qualitative content analysis: a discussion paper. Nurse Educ Today. 2017;56:29-34.

- 10. Hsieh HF, Shannon SE. Three approaches to qualitative content analysis. Qual Health Res. 2005;15(9):1277-1288.

- 11. Julien H. Content analysis. In: Given LM, ed. The SAGE Encyclopedia of Qualitative Research Methods. Vol 2. Thousand Oaks, CA: SAGE; 2008:121-123.

- 12. Krippendorff K. Content Analysis: An Introduction to Its Methodology. Thousand Oaks, CA: SAGE Publications; 2012.

- 13. Graneheim UH, Lundman B. Qualitative content analysis in nursing research: concepts, procedures, and measures to achieve trustworthiness. Nurse Educ Today. 2004;24(2):105-112.

- 14. Potter WJ, Levine-Donnerstein D. Rethinking validity and reliability in content analysis. J Applied Comm Res. 1999;27(3):258-284.

- 15. Lincoln YS, Guba EG. Naturalistic Inquiry. Beverly Hills, CA: SAGE; 1985.

- 16. Weber RP. Basic Content Analysis. Beverly Hills, CA: SAGE; 1990.

- 17. Mishler EG. The analysis of interview-narratives. In: Sarbin TR, ed. Narrative Psychology: The Storied Nature of Human Conduct. Westport, CT: Praeger; 1986:233-255.

- 18. Saldana J. The Coding Manual for Qualitative Researchers. Thousand Oaks, CA: SAGE; 2009.

- 19. Krippendorff K. Reliability in content analysis. Human Communication Research. 2004;30(3):411-433.

- 20. Horton N, Payne KD, Jernigan M, et al. A standardized patient counseling rubric for a pharmaceutical care and communications course. Am J Pharm Educ. 2013;77(7):1-9.

- 21. Rogers CR. Client-Centered Therapy. Washington, DC: American Psychological Association; 1966.

- 22. Mezirow J. Transformative Dimensions of Adult Learning. San Francisco, CA: Jossey-Bass; 1991.

- 23. Erlingsson C, Brysiewicz P. A hands-on guide to doing content analysis. African J Emergency Med. 2017;7(3):93-99.

- 24. Guba EG, Lincoln YS. Effective Evaluation: Improving the Usefulness of Evaluation Results Through Responsive and Naturalistic Approaches. San Francisco, CA: Jossey-Bass; 1981.

- 25. Saunders B, Sim J, Kingstone T, et al. Saturation in qualitative research: exploring its conceptualization and operationalization. Quality & Quantity. 2018;52(4):1893-1907.