Summary

The fluent production of a signed language requires exquisite coordination of sensory, motor, and cognitive processes. Similar to speech production, language produced with the hands by fluent signers appears effortless, but reflects the precise coordination of both large-scale and local cortical networks. The organization and representational structure of the sensorimotor features underlying sign language phonology in these networks are unknown. Here, we present a unique case study of high-density electrocorticography (ECoG) recordings from the cortical surface of a profoundly deaf signer during awake craniotomy. While neural activity was recorded from sensorimotor cortex, the participant produced a large variety of movements in linguistic and transitional movement contexts. We found that at both the single-electrode and neural-population levels, high-gamma activity reflected tuning for particular hand, arm, and face movements, which were organized along dimensions that are relevant for phonology in sign language. Decoding of manual sensorimotor features revealed a clear functional organization and population dynamics for these highly-practiced movements. Furthermore, neural activity clearly differentiated linguistic and transitional movements, demonstrating encoding of language-relevant articulatory features. These results provide a novel view of the fine-scale dynamics of complex and meaningful sensorimotor actions.

Graphical Abstract

Introduction

Unlike spoken languages, which are produced with the vocal tract articulators and understood through the auditory system, signed languages are articulated through the hands and body and perceived through the visual system [1]. Despite these differences, signed languages evolve naturally in communities of Deaf persons, and have historical [2], linguistic [3,4], and developmental [5,6] patterns that are nearly indistinguishable from spoken languages.

Signed languages provide a unique opportunity to explore how highly-practiced sensorimotor representations that underlie human language production are encoded in the brain. The complete visibility of all articulators used in sign language production allows analysis of linguistic representations at the gestural level, which is more difficult in speech, where articulators are not easily accessible [7]. In addition, sign languages provide a rich set of movements that require complex control of the arms, hands, and body compared to typical motor control experiments involving reaching and grasping [8-10]. Thus, alongside studies of speech and movement, signed languages can provide a critical complementary window into the neural computations that underlie highly skilled manual movements.

Linguistic analysis of signed languages has revealed that the manual actions used in sign formation have compositional structure. Analogous to spoken segmental features (e.g., syllables, consonants, vowels), signs are constructed from fixed inventories of handshapes, locations (place of articulation), and discrete arm and hand movement trajectories [11]. At a basic motor control level, these movements reflect planning and execution that is similar to reaching and grasping [12], where decades of work have revealed both individual neuron tuning for specific articulator and movement features, and complex movement dynamics encoded in neural populations [13]. However, little work, particularly in humans, has examined the representation of movements in which basic features (e.g., phonological features) are combined to produce complex representations like words and phrases.

The fact that fluent signers produce these kinds of highly-practiced movements in coordination with higher level cognitive and linguistic representations allows for a detailed examination of language production that does not use the vocal tract. While there have been descriptions of the broad cortical regions involved in sign language processing, particularly as it relates to speech [14-19], the fine-scale coordinated dynamics of these regions have not been explored, especially for production. Specifically, there has been little work on the neural processes involved in representing the compositional substructure of signed language [20,21]. It is unknown how motor, sensory, and linguistic representations are coordinated in dynamic brain activity to allow for fluent sign production.

Here, we present a unique case study of a profoundly deaf signer who underwent awake craniotomy with language mapping for resection of a brain tumor. He volunteered to participate in an intraoperative research study in which high-density electrocorticographic signals were recorded directly from his brain. The participant viewed videos of ASL (Figure S1A), and was asked to perform a lexical decision task (real/pseudo signs). In addition to “yes/no” responses, he produced a variety of spontaneous natural responses, including linguistic signs, transitional movements (Figure S1B), and fingerspelling, a manual representation of the English alphabet that occurs in a central space in front of the signer’s body (distinct from the lexical sign neutral location). The participant’s manual behaviors were recorded and annotated frame-by-frame to provide nearly 8000 events that were aligned to the neural data. This unique opportunity allowed us to examine the neural encoding of sensorimotor and linguistic properties of American Sign Language (ASL), extending our understanding of how skilled motor behaviors are controlled in human cortex [22-24].

Results

Selectivity for hand and arm movements in sensorimotor cortex

While performing a lexical decision task, the participant produced a range of location/handshape combinations, both in the context of indicating his responses on each trial, and in a variety of comments he made to the experimenters. This provided a unique opportunity to examine how high-gamma (HG; 70-150Hz) neural activity encodes specific movements in sensorimotor cortex. We chose to examine articulatory location (the spatial locations in front of and on the body where a sign is produced), and handshape (the configuration of the fingers of the hand(s)) because they are the most prominent features of these movements, and at a theoretical level, they constitute two of the most critical phonological features in sign language [11,25].

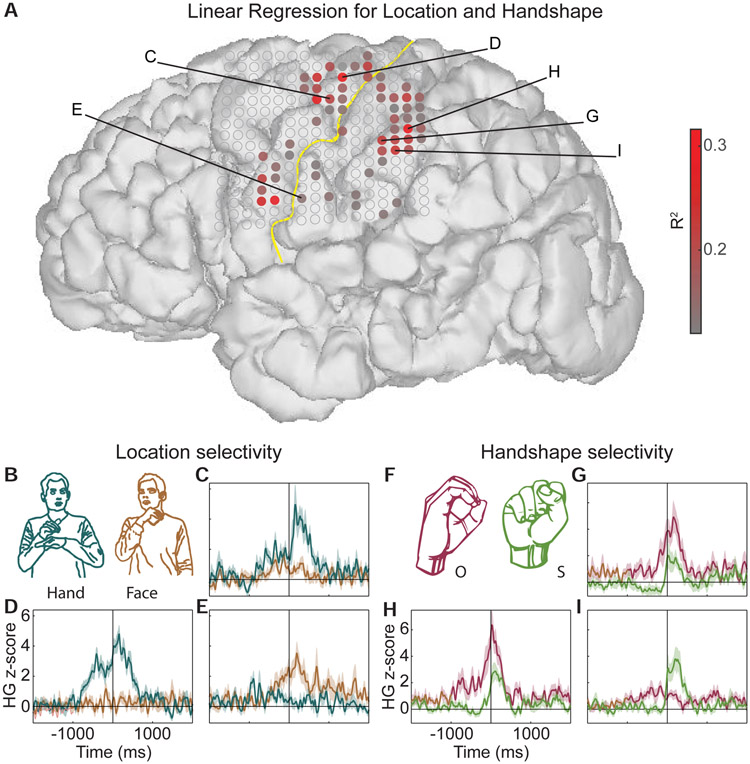

We identified electrodes that encode location and handshape using multiple linear regression. We found 61 electrodes over pre-central gyrus, post-central gyrus, and supramarginal gyrus (SMG) with activity that was significantly correlated with location and handshape features, explaining up to ~32% of the variance (p<0.01, Bonferroni corrected for multiple electrodes; minimum p-value = 0.000039; Figure 1A).
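The per-electrode regression can be sketched as follows. This is a minimal illustration, not the authors' code: it assumes one peak HG value per event, a one-hot design matrix of location and handshape labels, and an overall F-test of each electrode's model against an intercept-only model. The function name, array shapes, and simulated data are hypothetical.

```python
import numpy as np
from scipy import stats


def electrode_r2(hg, features):
    """Fit HG ~ features separately for every electrode.

    hg:       (n_events, n_electrodes) peak high-gamma amplitude per event
    features: (n_events, n_features) one-hot location and handshape labels
    Returns R^2 and the overall F-test p-value for each electrode.
    """
    n = hg.shape[0]
    X = np.column_stack([np.ones(n), features])      # add an intercept column
    k = np.linalg.matrix_rank(X) - 1                 # effective number of predictors
    beta, *_ = np.linalg.lstsq(X, hg, rcond=None)    # least squares, all electrodes at once
    resid = hg - X @ beta
    ss_res = (resid ** 2).sum(axis=0)
    ss_tot = ((hg - hg.mean(axis=0)) ** 2).sum(axis=0)
    r2 = 1 - ss_res / ss_tot
    f = (r2 / k) / ((1 - r2) / (n - k - 1))          # F-test against intercept-only model
    return r2, stats.f.sf(f, k, n - k - 1)


rng = np.random.default_rng(0)
n_events, n_elec = 200, 5
loc = rng.integers(0, 4, n_events)                   # 4 hypothetical location labels
shape = rng.integers(0, 8, n_events)                 # 8 hypothetical handshape labels
features = np.column_stack([np.eye(4)[loc], np.eye(8)[shape]])
hg = rng.normal(size=(n_events, n_elec))
hg[:, 0] += 2.0 * (loc == 1)                         # electrode 0 is tuned to one location
r2, pvals = electrode_r2(hg, features)
significant = pvals < 0.01 / n_elec                  # Bonferroni across electrodes
```

Because each one-hot group sums to the intercept column, the design is rank-deficient; taking the degrees of freedom from the matrix rank keeps the F-test valid.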

Figure 1: Individual electrodes show sensorimotor selectivity for relevant linguistic features of ASL.

(A) Linear regression R2 values showing electrodes that respond significantly to location and handshape features. The yellow line marks the central sulcus. Filled electrodes passed a statistical cutoff for the regression analysis (p<0.01, Bonferroni corrected for multiple comparisons across electrodes). (B) Comparison of single electrode activity for movements occurring at hand and face locations. (C-E) Single electrodes respond selectively to hand (C,D) and face (E) locations, controlling for handshape. Some responses begin at movement onset (video frame when the participant begins to move; C), and others begin up to ~1sec before movement onset (D,E). (F) Comparison of single electrode activity for ‘O’ and ‘S’ handshapes, which are physically similar. (G-I) Single electrodes respond selectively to ‘O’ (G,H) and ‘S’ (I) handshapes, controlling for location. See Figure S1, Table S1, and Video S1.

To examine encoding of location features, we compared movements where the dominant hand contacted the non-dominant hand (‘hand’ location) with movements where the dominant hand contacted the face (‘face’ location), controlling for handshape (Figure 1B). We found example electrodes over dorsal pre-central gyrus that showed stronger activity for the ‘hand’ location (Figure 1C-D). In contrast, an example electrode over ventral central sulcus showed significantly greater responses to movements in the ‘face’ location (Figure 1E). These sites corresponded clearly to dorsal and ventral sensorimotor areas, and specifically to the hand and face representations of the homunculus (see Figure 3). Since these comparisons were matched for handshape, we conclude that these electrodes are selective for location.

Figure 3: Direct electrical stimulation reveals sensorimotor and language organization.

Sites with evoked motor, sensory, and language errors are marked on the participant’s brain. Sites with multiple effects have markers offset for visualization. Black line indicates the central sulcus. No sites showed only motor hand effects.

Next, we examined the encoding of specific handshapes. We compared the ‘O’ and ‘S’ handshapes, which are of interest because they are physically similar (Figure 1F; most ASL handshapes are labeled according to the fingerspelling alphabet). We found two example electrodes over SMG that showed stronger activity for ‘O’ than for ‘S’ (Figure 1G-H). These contrasted with an example electrode, also located over SMG, that showed a greater response for the ‘S’ handshape (Figure 1I). Since responses were matched for location, we conclude that these electrodes are selective for handshape. Together, these examples demonstrate that within less than 1cm of cortex, neural responses encode fine-grained sensorimotor information that is highly relevant to linguistic features of ASL.

Among these responses, we also observed different temporal dynamics during sign production. Some electrodes showed sharp responses that were locked to movement onset (the video frame when the participant begins to move his hand for the corresponding movement/feature; e.g., Figure 1C,I), while others showed more sustained responses that began prior to movement onset (e.g., Figure 1D,E,G,H). The latter response type is typical of motor-related activity, reflecting the preparation and planning that precede the actual movement. In contrast, responses closely locked to movement onset may reflect a combination of motor activity and sensory/proprioceptive feedback, such as contact between the thumb and the middle phalanx of the middle finger (Figure 1I). These responses were not likely due to purely somatosensory activity: a qualitative comparison between movements that made contact at the target location and non-contacting movements (those that either failed to make contact or were “uncontacted” ASL signs) revealed overlapping response profiles. This suggests that while these electrodes may encode sensory and proprioceptive features, those features are part of the motor action that drives neural activity during movement.

Hierarchies for sensorimotor features in cortex

Across all location and handshape combinations for which there was sufficient data, we used an unsupervised clustering approach to describe the neural organization of these sensorimotor features (which were defined a priori based on the ASL linguistic literature). We calculated the Euclidean distance between every location/handshape combination based on the peak activity across all 61 significant electrodes.
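A sketch of the clustering step is shown below, assuming that events are first averaged within each location/handshape combination and that average linkage is used (the linkage rule is not specified in the text, so it is an assumption). Condition names and data are simulated so that the fingerspelling conditions share a distinct pattern.

```python
import numpy as np
from scipy.cluster.hierarchy import fcluster, linkage
from scipy.spatial.distance import pdist


def cluster_conditions(peak_hg, labels, n_clusters=2):
    """Hierarchically cluster conditions by their mean peak HG across electrodes.

    peak_hg: (n_events, n_electrodes); labels: condition name for each event.
    """
    labels = np.asarray(labels)
    conditions = sorted(set(labels.tolist()))
    # One vector per condition: mean peak response of every electrode
    centroids = np.array([peak_hg[labels == c].mean(axis=0) for c in conditions])
    dists = pdist(centroids, metric="euclidean")     # condensed pairwise distances
    tree = linkage(dists, method="average")          # linkage rule is an assumption
    assignment = fcluster(tree, t=n_clusters, criterion="maxclust")
    return conditions, tree, assignment


rng = np.random.default_rng(1)
names = ["fingerspell-A", "fingerspell-S", "face-B", "hand-B"]   # hypothetical conditions
labels = np.repeat(names, 10)
peak_hg = rng.normal(scale=0.5, size=(40, 61))                   # 61 electrodes, as in the study
peak_hg[:20, :10] += 5.0        # fingerspelling events share a distinct pattern
conditions, tree, assignment = cluster_conditions(peak_hg, labels)
```

The same `tree` can be passed to `scipy.cluster.hierarchy.dendrogram` to draw a tree like the one in Figure 2A.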

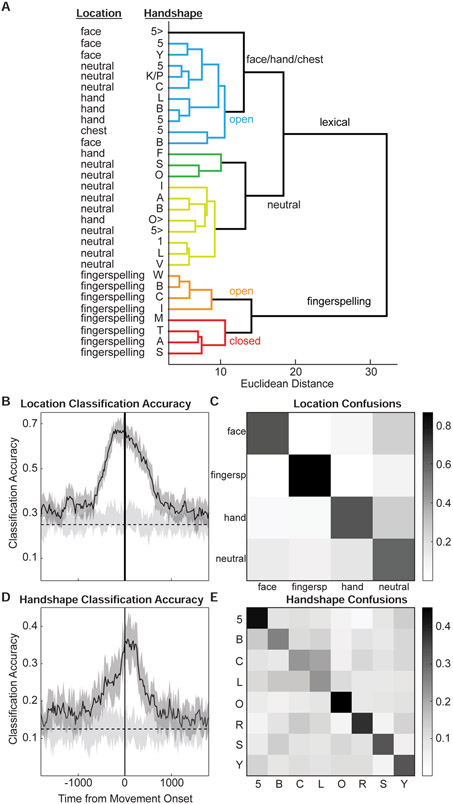

We found two primary clusters, which separated movements made during the production of lexical signs in ASL from movements made during the production of fingerspelled sequences (Figure 2A). Fingerspelling has different sensorimotor and visual dynamics compared to sign: it requires the signer’s hand to be held at a stationary location in front of the body (often proximal to the shoulder; Supplementary Video S1), and words are spelled through the sequential articulation of a constrained set of handshapes corresponding to the 26 letters of the English alphabet. Furthermore, fingerspelling is considered linguistically distinct from sign language, since it is a manual representation of spelling based on spoken language. In contrast, lexical signs entail a larger set of phonological features, which include a more varied set of articulatory locations, discrete movements, and handshapes that are constrained by sign-language-specific properties.

Figure 2: Hierarchical clustering of sensorimotor features.

Unsupervised clustering of neural data reveals hierarchical structure of linguistically-defined phonological features. (A) Fingerspelling location is distinct from all other locations. Larger branches are generally organized according to location, while the smallest distances reflect handshape similarity. (B-C) Neural data was used to classify four locations. The timecourse of classification accuracy revealed a peak prior to movement onset (B). At the peak, all four locations are classified above chance, with poorer performance for “neutral” (C). (D-E) Neural data was used to classify eight handshapes. The timecourse of classification accuracy revealed a peak just after movement onset (D). At the peak, handshape confusions were largely driven by sensorimotor similarity (E). Light grey in B and D reflects chance distributions. See Figure S2 and Table S1.

Within the fingerspelling branch, we observed clear clustering based on handshape. The largest branch within this cluster divides the handshapes {‘W’, ‘B’, ‘C’, ‘I’} (Figure 2A; orange branch) and {‘M’, ‘T’, ‘A’, ‘S’} (Figure 2A; red branch). These two sub-clusters are defined primarily by whether the hand is open or closed, where closed handshapes tend to make contact between the fingers and palm. Within the closed hand cluster, the only difference between handshapes is the position of the thumb.

The lexical sign branch had two main sub-clusters, the first of which was composed mostly of signs in the ‘neutral’ location (Figure 2A; yellow and green branches). Neutral location is defined as an articulatory area in front of the signer where signs are produced without contacting the body. A sub-cluster of this branch contained handshapes that are defined by an extended index finger (‘1’, ‘L’, ‘V’). The second main sub-cluster contained all non-fingerspelling locations (‘face’, ‘neutral’, ‘hand’, and ‘chest’), and was organized primarily according to open handshapes that do not involve contact between the digits and the palm (e.g., ‘5’, ‘B’, ‘C’; Figure 2A; blue branches).

We also examined the organization of location and handshape features together with another phonological feature of sign: internal hand movements (Figure S2C-D). Internal movements refer to changes that affect the distal properties of hand articulation, including wrist motion (rotation and flexion) and changes in handshape and finger position (hand closure, finger oscillation). These are typically repeated movements that may be superimposed upon the larger ballistic movements of the arms. While classification accuracy was less robust than for location and handshape, we found patterns that were related primarily to wrist movements (e.g., flex, rotate, shake) and finger movements (e.g., close, oscillate, circle).

In summary, unsupervised clustering revealed structure in the neural representation of sensorimotor features. These features are important phonological components of signing systems [4,11,26], and these analyses show a representational and temporal hierarchy for distinct phonological features.

Sensorimotor Features can be Classified from Neural Activity

To examine the neural separability of sensorimotor/linguistic features, we trained a series of linear support vector machine (SVM) classifiers. The data from all 61 electrodes at each timepoint were used to classify each articulation as a particular location or handshape. Separate 10-fold cross-validated classifiers were trained in a 20ms sliding window to generate a timecourse of classification accuracy relative to movement onset.
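The sliding-window decoding can be sketched with scikit-learn's `LinearSVC`. The sampling rate (100 Hz, so a 20ms window spans two samples), the use of the window-mean HG of each electrode as features, the function name, and the simulated data are all assumptions for illustration.

```python
import numpy as np
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.svm import LinearSVC


def decoding_timecourse(hg, y, fs=100.0, win_ms=20.0, n_folds=10, seed=0):
    """Cross-validated linear-SVM accuracy in a sliding window.

    hg: (n_trials, n_electrodes, n_times) HG, time-locked to movement onset.
    y:  (n_trials,) class labels (e.g., location or handshape).
    fs: sampling rate in Hz (100 Hz is an assumption, not from the study).
    """
    win = max(1, int(round(win_ms * fs / 1000)))     # window length in samples
    cv = StratifiedKFold(n_splits=n_folds, shuffle=True, random_state=seed)
    acc = []
    for start in range(hg.shape[2] - win + 1):
        # Features: mean HG of every electrode within the current window
        X = hg[:, :, start:start + win].mean(axis=2)
        clf = LinearSVC(C=1.0, max_iter=10000)
        acc.append(cross_val_score(clf, X, y, cv=cv).mean())
    return np.array(acc)


rng = np.random.default_rng(0)
y = np.repeat(np.arange(4), 20)                  # 4 hypothetical locations, 20 trials each
hg = rng.normal(size=(80, 20, 30))               # simulated noise: 20 electrodes, 30 samples
class_means = rng.normal(scale=2.0, size=(4, 20))
hg[:, :, 10:20] += class_means[y][:, :, None]    # class information only in samples 10-19
acc = decoding_timecourse(hg, y)
```

Accuracy stays near chance (25% for four classes) in the noise-only windows and rises where the simulated class information is present, which is the shape of curve plotted in Figure 2B.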

For location, classification accuracy peaked at 67.1% (chance≈25%; Figure 2B). The classifier performed above chance from ~650ms before to ~650ms after movement onset, and peaked ~100-200ms before movement onset. Averaged over a 200ms window around the peak classification accuracy, the confusion matrix for the 4 locations revealed high accuracy for each location (Figure 2C, diagonal). Consistent with hierarchical clustering (Figure 2A), fingerspelling was highly distinct (86.4%) and was rarely confused with the other locations. The ‘face’ (64.5%), ‘hand’ (63.8%), and ‘neutral’ (58.8%) locations were also all classified well above chance.
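The window-averaged confusion matrix can be sketched in plain NumPy, assuming cross-validated predictions are already available at each timepoint (for example from `sklearn.model_selection.cross_val_predict`). The function name and toy labels are hypothetical. Rows are normalized so that each diagonal entry is a per-class accuracy, the form of the percentages reported above.

```python
import numpy as np


def windowed_confusion(y_true, y_pred_by_time, peak_idx, half_width, n_classes):
    """Average row-normalized confusion matrices over timepoints around the peak.

    y_true:         (n_trials,) integer labels
    y_pred_by_time: (n_times, n_trials) cross-validated predictions per timepoint
    Assumes every class occurs in y_true (otherwise a row sum would be zero).
    """
    lo = max(0, peak_idx - half_width)
    hi = min(len(y_pred_by_time), peak_idx + half_width + 1)
    cms = []
    for y_pred in y_pred_by_time[lo:hi]:
        cm = np.zeros((n_classes, n_classes))
        np.add.at(cm, (y_true, y_pred), 1)               # rows: true, columns: predicted
        cms.append(cm / cm.sum(axis=1, keepdims=True))    # per-class proportions
    return np.mean(cms, axis=0)


y_true = np.array([0, 0, 1, 1])
preds = np.array([[0, 0, 1, 1],     # timepoint before the peak: all correct
                  [0, 1, 1, 1],     # peak: one class-0 trial misclassified
                  [0, 0, 1, 1]])    # timepoint after the peak: all correct
cm = windowed_confusion(y_true, preds, peak_idx=1, half_width=1, n_classes=2)
```

With 20ms steps, a 200ms window around the peak would correspond to `half_width=5`.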

Handshape classification followed a similar pattern, with a peak accuracy of ~36.6% (chance≈12.5%; Figure 2D). The classifier performed above chance from ~400ms before to ~450ms after movement onset, and peaked ~50-150ms after movement onset. Averaged over a 200ms window around the peak, the confusion matrix for the 8 handshapes revealed high accuracy for each handshape (Figure 2E, diagonal). Classifier confusions were consistent with sensorimotor similarity between handshapes. For example, ‘B’, ‘C’, and ‘5’ are forms in which all five fingers are prominent, but they differ in curvature (‘C’ versus ‘B’) and in whether the fingers are separated or together (‘5’ versus ‘C’ and ‘B’).

We next asked which brain regions contribute to location and handshape classification by examining the weights of the linear SVM models at the peak of the classification curves. The weights were averaged across all 10 folds and all of the one-vs-all classifiers for each condition. We observed that the weights for both location (Figure S2A) and handshape (Figure S2B) were highly distributed across sensorimotor cortex. Consistent with the example electrodes in Figure 1, we observed stronger weights for handshape in post-central areas, and largely overlapping localization for location, particularly in ventral pre-central areas.
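One way to average weights across folds and one-vs-all classifiers is sketched below. Taking absolute values before averaging is an assumption (signed one-vs-rest weights can cancel across classes), as are the function name and the simulated data, in which only electrodes 0-2 carry class information.

```python
import numpy as np
from sklearn.model_selection import StratifiedKFold, cross_validate
from sklearn.svm import LinearSVC


def electrode_weights(X, y, n_folds=10, seed=0):
    """Mean absolute linear-SVM weight per electrode at one timepoint.

    X: (n_trials, n_electrodes) HG at the peak decoding time; y: class labels.
    """
    cv = StratifiedKFold(n_splits=n_folds, shuffle=True, random_state=seed)
    res = cross_validate(LinearSVC(max_iter=10000), X, y, cv=cv,
                         return_estimator=True)
    # With 3+ classes, coef_ has one row per one-vs-rest classifier
    w = np.stack([np.abs(est.coef_) for est in res["estimator"]])
    return w.mean(axis=(0, 1))   # average over folds and one-vs-rest classifiers


rng = np.random.default_rng(2)
y = np.repeat(np.arange(4), 20)
X = rng.normal(size=(80, 10))                         # 10 hypothetical electrodes
signal = np.array([[3, 0, 0], [0, 3, 0], [0, 0, 3], [-3, -3, -3]], dtype=float)
X[:, :3] += signal[y]                                 # only electrodes 0-2 carry class info
w = electrode_weights(X, y)
```

Plotting `w` on the electrode grid gives a weight map of the kind shown in Figures S2A-B.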

We also examined the classification performance for internal movements. Overall, classification accuracy was lower, but still well above chance, reaching 56.5% (chance≈25%; Figure S2E). Performance was above chance from ~50ms before movement onset to ~350ms after movement onset, and peaked ~20ms before movement onset. Averaged over a 200ms window around the peak classification accuracy, the confusion matrix showed that ‘close’ (57.4%), ‘flex’ (39.2%), ‘oscillate’ (47.6%), and ‘rotate’ (55.4%) were all classified well above chance (Figure S2F).

In summary, high-gamma neural activity in left-hemisphere peri-rolandic and parietal cortex encodes fine-scale articulatory features that are associated with phonological features of sign language. As discussed below, the hierarchical and temporally distributed patterns of activity may reflect different requirements for the planning and execution of skilled movements: early, pre-movement activity may encode the goal-directed targets of a signed action, while later (i.e., post-movement-onset) activity may reflect the proprioceptive information necessary for fine motor error monitoring related to handshapes.

Stimulation Reveals Sensorimotor Map

The neurophysiology results thus far show a distributed set of focal representations for specific sensorimotor features related to handshape and the spatial locations in which movements take place. A notable aspect of these results is the inter-mixed nature of face and hand representations, and the fact that the timing of activity suggests both sensory and motor representations on both the pre- and post-central gyri.

To definitively map the functions of these sensorimotor areas, we performed direct electrical stimulation (DES) mapping of the entire area of cortex exposed by the craniotomy. DES provides a way to test the causal role of focal neural populations in various behaviors by precisely inducing or disrupting activity patterns, causing somatotopically-specific movements and percepts.

We found that stimulating sites throughout the pre- and post-central gyri caused a variety of specific motor and sensory effects (Figure 3). In particular, three sites on ventral sensorimotor cortex caused both facial movements and sensations in the jaw and tongue (sites 1, 2, and 7). We also found two sites over precentral gyrus that did not evoke movements but did evoke facial sensations (sites 3 and 11). Three post-central sites caused only sensory effects, for either the face (site 4) or the hand (sites 5 and 6). Notably, relatively few sites caused motor or sensory effects for the hands, even in dorsal regions of sensorimotor cortex, which may reflect differences between ECoG and DES in how they define the functional properties of local tissue.

We also stimulated while the participant performed continuous counting and picture naming tasks. Stimulation of a site on ventral pre-central gyrus (near the anterior bank; site 11) caused counting arrest. Notably, this area has been implicated in speech arrest, hand movement arrest, and music arrest in patients playing piano or guitar [27,28]. Two other sites caused naming errors, one on SMG (site 13) and one over posterior middle temporal gyrus (site 12), consistent with naming errors during speech in hearing patients [29].

Together, these results demonstrate that the areas in which we observe neurophysiological activity that correlates with sign production overlap with areas that are causally related to sensorimotor and language function.

Linguistic and Transitional Movements Have Distinct Activity Patterns

To determine the extent to which these cortical responses are unique to language production, we asked whether neural activity in these regions is different during the production of linguistic versus transitional movements that are matched closely for sensorimotor features. During the task, the participant produced three main types of movements: lexical signs, fingerspellings, and transitional gestures. Lexical signs and fingerspellings constitute linguistic movements that carry meaning and occur in highly structured sequences. In contrast, transitional gestures are movements that occur between linguistic movements, and are characterized by the physical properties of both the preceding and subsequent linguistic content. While they contain information about the lexical sign or fingerspelling movements surrounding them, these dynamic actions are not considered part of the underlying representation of linguistic (i.e., phonological) signs. To minimize the possibility that any observed effects are due to fine-scale sensorimotor differences between linguistic and transitional movements, both conditions were matched closely for handshape information. Furthermore, electrodes that significantly encoded location information (Figure 1A) were excluded from the analysis.

We found that specific electrodes showed stronger responses to linguistic movements (Figure 4A-B), while a smaller subset showed stronger responses to transitional movements (Figure 4C). Across electrodes, responses were generally stronger for linguistic movements, with significant differences particularly in ventral and dorsal precentral gyrus and SMG (Figure 4D; see Figure S3A for electrode timecourses and statistics; rank-sum test, p<0.05, Bonferroni corrected for electrodes). Three distinct regions showed greater responses to transitional movements: dorsal pre-central gyrus, ventral SMG, and middle pre-central gyrus (Figure 4D). In some cases, neural responses actually decreased for linguistic movements, while transitional movements evoked stronger activity (e.g., Figure 4C). Although electrodes generally showed evoked activity for both linguistic and transitional movements, these results demonstrate that differences in response amplitude distinguish these categories (Figure S3A).
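The electrode-wise comparison can be sketched with SciPy's Wilcoxon rank-sum test and a Bonferroni threshold. Using one peak HG value per event, taking the median difference as the sign of the effect, the function name, and the simulated data are assumptions.

```python
import numpy as np
from scipy.stats import ranksums


def compare_conditions(hg_a, hg_b, alpha=0.05):
    """Rank-sum test per electrode, Bonferroni corrected across electrodes.

    hg_a: (n_a, n_electrodes), hg_b: (n_b, n_electrodes) peak HG per event.
    """
    n_elec = hg_a.shape[1]
    pvals = np.array([ranksums(hg_a[:, e], hg_b[:, e]).pvalue for e in range(n_elec)])
    diff = np.median(hg_a, axis=0) - np.median(hg_b, axis=0)   # sign: which is larger
    return diff, pvals, pvals < alpha / n_elec


rng = np.random.default_rng(4)
ling = rng.normal(size=(100, 3))      # simulated "linguistic" events, 3 electrodes
trans = rng.normal(size=(100, 3))     # simulated "transitional" events
ling[:, 0] += 1.5                     # electrode 0: stronger for linguistic movements
trans[:, 1] += 1.5                    # electrode 1: stronger for transitional movements
diff, pvals, sig = compare_conditions(ling, trans)
```

The sign of `diff` on significant electrodes is what a map like Figure 4D colors.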

Figure 4: Localization of linguistic features of sign.

Transitional movements (not part of lexical signs or fingerspelling) were compared to linguistic movements. (A-C) Example electrodes showing timecourses for linguistic signs and transitional movements (matched for handshape). Electrodes are labeled in D. (D) The peak difference between transitional and linguistic movements reveals generally larger responses to linguistic signs throughout pre-central, post-central, and parietal cortex. The dorsal portion of post-central gyrus and anterior SMG respond more strongly to transitional movements. (E) The peak latency of the difference between transitional and linguistic movements reveals an anterior-to-posterior gradient of activity across sensorimotor cortex. (F) Producing real signs evokes stronger activity in most areas except posterior dorsal parietal cortex. Pseudo signs are matched to real signs for handshape. See Figure S3.

The timing of the peak differences between linguistic and transitional movements revealed an anterior-to-posterior pattern, with pre-central activity peaking up to ~250ms before movement onset, and post-central activity peaking up to ~250ms after movement onset (Figure 4E; see also Figure 4A-B). There was a significant correlation between the magnitude of the peak amplitude difference and the latency at which that peak occurred (Pearson r = 0.39, p < 0.007; Figure S3C). This suggests that as activity propagates from motor to sensory cortex, linguistic movements evoke increasingly greater activity compared to transitional movements. Note that transitional movement effects do not always precede linguistic effects, since transitional movements occur both before and after each linguistic event. Together, these results suggest that post-central and supramarginal gyri may contain specific linguistic representations for meaningful movements in sign and fingerspelling.
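The peak-difference analysis can be sketched as follows, assuming trial-averaged HG for each condition on a common time axis. The peak is taken as the largest absolute difference; its signed value and latency are then correlated across electrodes. The function name and the toy Gaussian bumps (built so that latency grows with amplitude) are hypothetical.

```python
import numpy as np
from scipy.stats import pearsonr


def peak_difference(mean_ling, mean_trans, times):
    """Signed peak difference and its latency for each electrode.

    mean_ling, mean_trans: (n_electrodes, n_times) trial-averaged HG
    times: (n_times,) in ms relative to movement onset
    """
    diff = mean_ling - mean_trans
    idx = np.argmax(np.abs(diff), axis=1)             # peak |difference| per electrode
    peak = diff[np.arange(diff.shape[0]), idx]        # keep the sign of that peak
    return peak, times[idx]


times = np.arange(-500, 501, 10)
amps = np.array([0.5, 1.0, 1.5, 2.0, 2.5])
centers = np.array([-200, -100, 0, 100, 200])
mean_ling = amps[:, None] * np.exp(-((times[None, :] - centers[:, None]) / 50.0) ** 2)
mean_trans = np.zeros_like(mean_ling)
peak, latency = peak_difference(mean_ling, mean_trans, times)
r, p = pearsonr(peak, latency)
```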

We also compared the production of real signs with pseudo signs, which were created by replacing a single handshape feature of a real sign, rendering it meaningless. On a subset of trials, the participant repeated the real and pseudo signs, allowing us to compare the production of these two movement types while controlling for low-level sensorimotor differences. As with linguistic versus transitional movements, the majority of electrodes showed stronger responses to real signs (Figure 4F; see Figure S3B for electrode timecourses and statistics; rank-sum test, p<0.05, Bonferroni corrected for electrodes). The same three areas that showed greater responses to transitional movements also showed stronger responses to pseudo signs. Again, most electrodes that showed evoked activity for real signs also showed evoked activity for pseudo signs; however, the relative response amplitude differed, usually with higher activity for real signs (Figure S3B). The durations of real and pseudo signs did not differ significantly (Kolmogorov-Smirnov D = 0.13, p = 0.86), although the participant was faster to start producing real signs (Kolmogorov-Smirnov D = 0.38, p = 0.007).
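For reference, the Kolmogorov-Smirnov D statistic is the largest vertical gap between two empirical cumulative distribution functions. The sketch below computes it directly and checks it against `scipy.stats.ks_2samp`; the function name is hypothetical and the onset latencies are simulated, not the participant's.

```python
import numpy as np
from scipy.stats import ks_2samp


def ks_statistic(a, b):
    """Two-sample Kolmogorov-Smirnov D: largest gap between the empirical CDFs."""
    a, b = np.sort(a), np.sort(b)
    grid = np.concatenate([a, b])                    # the ECDFs only jump at these values
    cdf_a = np.searchsorted(a, grid, side="right") / len(a)
    cdf_b = np.searchsorted(b, grid, side="right") / len(b)
    return np.max(np.abs(cdf_a - cdf_b))


rng = np.random.default_rng(6)
# Hypothetical response-onset latencies (ms) for real vs pseudo signs
onset_real = rng.normal(1200, 200, size=40)
onset_pseudo = rng.normal(1450, 200, size=40)
d = ks_statistic(onset_real, onset_pseudo)
res = ks_2samp(onset_real, onset_pseudo)             # same D, plus a p-value
```

Because D compares whole distributions, a small D with a large p-value (as for durations) indicates no detectable difference rather than proof that the distributions are identical.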

Lexical Frequency and Age of Acquisition of ASL Signs Modulate Activity

Finally, we wanted to understand whether neural activity during signing is influenced by factors that are known to modulate activity during speech and other motor actions [30]. Here, we focused on task difficulty, which we quantified using the lexical variables of sign frequency and age of acquisition (AoA) [31]. The original task design (lexical decision) focused more on perceptual processes related to sign language, and the stimuli were designed to span a range of statistical properties of ASL, including lexical frequency and AoA. Therefore, we initially examined neural responses time-locked to stimulus presentation.

Although response times were on average long enough to separate putative perceptual and motor activity (Figure S1C), we found that most active electrodes showed response patterns that were not clearly attributable to perception (Figure S4A). Some electrodes exhibited multi-peak responses suggestive of distinct perception- and production-related activity; however, at the single-trial level, the variability in response times made these responses hard to interpret. Furthermore, when we examined the main stimulus manipulation in the task (real vs. pseudo signs), we found that most significant differences between these conditions occurred during time windows more clearly attributable to production (Figure S4B). Thus, while there may be perception-related activity in sensorimotor cortex in a Deaf signer, the present data do not allow us to disambiguate perceptual and motor activity.

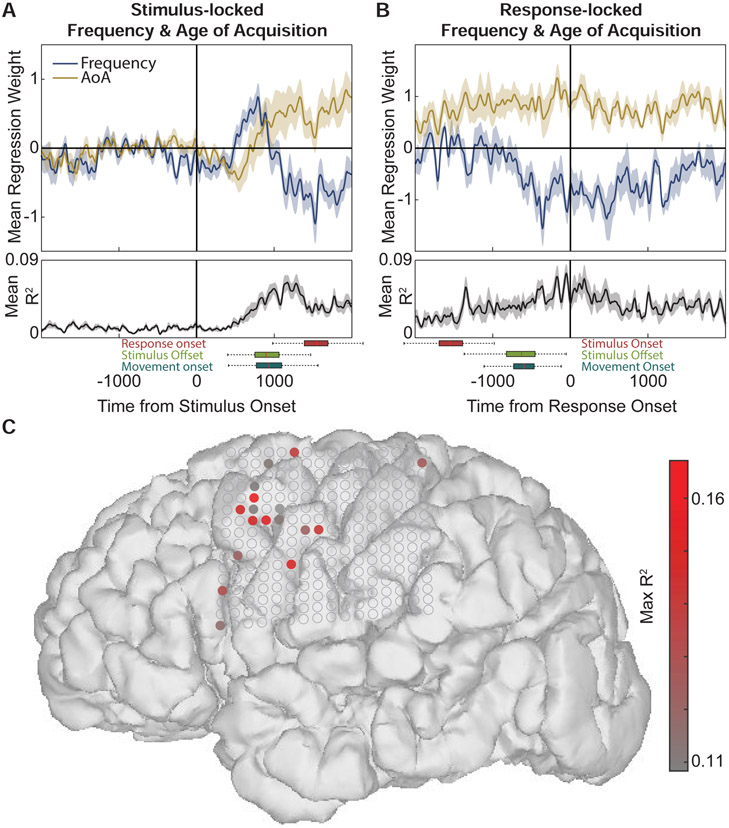

However, we found evidence that stimulus-related statistical features are correlated with activity during both the early and later time periods putatively associated with perception and production. Whether time-locked to stimulus onset (Figure 5A) or response onset (Figure 5B), neural activity showed a significant negative correlation with frequency and a positive correlation with AoA (p<0.05, Bonferroni corrected for timepoints). As expected, frequency and AoA are negatively correlated across the stimuli (Figure S5; Spearman’s ρ = −0.58, p = 1.3 × 10⁻¹⁸), since higher-frequency signs/words tend to be learned earlier. Nevertheless, this relationship leaves enough independent variability for the neural regression analysis to characterize, yielding distinct timecourses for the two effects.
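A time-resolved regression on the two correlated predictors might look like the sketch below. Z-scoring the predictors, using an overall F-test at each timepoint, the function name, and the simulated data are assumptions; the Spearman correlation mirrors the stimulus check reported above.

```python
import numpy as np
from scipy import stats


def timecourse_regression(hg, freq, aoa):
    """Regress one electrode's HG on frequency and AoA at every timepoint.

    hg: (n_trials, n_times); freq, aoa: (n_trials,) stimulus properties.
    Returns per-timepoint weights for frequency and AoA, R^2, and F-test p-values.
    """
    def z(v):
        return (v - v.mean()) / v.std()

    n, k = len(freq), 2
    X = np.column_stack([np.ones(n), z(freq), z(aoa)])   # intercept + 2 predictors
    beta, *_ = np.linalg.lstsq(X, hg, rcond=None)        # shape (3, n_times)
    resid = hg - X @ beta
    r2 = 1 - (resid ** 2).sum(axis=0) / ((hg - hg.mean(axis=0)) ** 2).sum(axis=0)
    f = (r2 / k) / ((1 - r2) / (n - k - 1))
    return beta[1], beta[2], r2, stats.f.sf(f, k, n - k - 1)


rng = np.random.default_rng(5)
n_trials, n_times = 150, 60
freq = rng.normal(size=n_trials)
aoa = -0.6 * freq + 0.8 * rng.normal(size=n_trials)  # negatively correlated, like the stimuli
hg = rng.normal(size=(n_trials, n_times))
hg[:, 20:40] += -0.8 * freq[:, None]                 # simulated negative frequency effect
b_freq, b_aoa, r2, p = timecourse_regression(hg, freq, aoa)
sig = p < 0.05 / n_times                              # Bonferroni across timepoints
rho, _ = stats.spearmanr(freq, aoa)
```

Fitting both predictors jointly lets each weight reflect variance not shared with the other, which is what allows distinct frequency and AoA timecourses despite their correlation.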

Figure 5: Lexical frequency and age of acquisition (AoA) effects on stimulus- and response- locked activity.

(A) At ~1000ms after stimulus onset, there is a negative effect of frequency and a positive effect of AoA, explaining a maximum of ~8% of the variance on average. Box plots beneath axes indicate interquartile range and 1.5 times interquartile range for response onset (red), stimulus offset (green), and movement onset (blue). (B) Response-locked (production) neural activity was also modulated by frequency and AoA, with a broader timecourse peaking around the onset of each movement. (C) Electrodes significantly modulated by frequency and AoA during the post-stimulus window were primarily localized to dorsal prefrontal cortex. A subset of the same electrodes was modulated during production. See Figure S4 and Figure S5.

The stimulus-locked regression weights showed the pattern more clearly, and also revealed an initial positive frequency and negative AoA effect (Figure 5A). Furthermore, the frequency effect preceded the AoA effect, starting ~500ms before average movement onset, and nearly 1000ms before the onset of the participant’s production. These results indicate that although we did not observe clear event-related neural responses to the presentation of the sign videos, activity after the stimulus onset was modulated by processes related to both frequency and AoA, effects that persisted throughout the duration of the sign production.

In contrast to the previous analyses showing movement- and linguistic-related activity in sensorimotor cortex, frequency and AoA effects were localized primarily to prefrontal cortex (Figure 5C). Electrodes were considered significant only if their R2 values reached p < 0.05 (Bonferroni corrected for multiple timepoints) for at least 50ms of contiguous timepoints (their average regression weights and R2 values are shown in Figure 5A-B). On these electrodes, frequency and AoA accounted for up to ~17% of the variance.
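The 50ms contiguity criterion can be expressed as a run-length filter on the per-timepoint significance mask. The 100 Hz sampling rate (so 50ms spans five samples) and the function name are assumptions for illustration.

```python
import numpy as np


def contiguous_significant(sig, fs=100.0, min_ms=50.0):
    """Keep only runs of True in `sig` that last at least `min_ms` milliseconds."""
    min_len = int(np.ceil(min_ms * fs / 1000))       # minimum run length in samples
    out = np.zeros_like(sig, dtype=bool)
    start = None
    for i, s in enumerate(np.append(sig, False)):    # sentinel closes a trailing run
        if s and start is None:
            start = i                                 # a run of significance begins
        elif not s and start is not None:
            if i - start >= min_len:
                out[start:i] = True                   # run is long enough: keep it
            start = None
    return out


sig = np.array([1, 1, 1, 0, 1, 1, 1, 1, 1, 0, 1, 1, 1, 1, 1], dtype=bool)
kept = contiguous_significant(sig)   # the 3-sample run is dropped, 5-sample runs kept
```

An electrode would then count as significant if `kept.any()` is true.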

Discussion

We examined the neural basis of sign language production in a unique case of a profoundly deaf ASL signer using high-density ECoG directly on the cortical surface. These recordings provide the highest resolution description of fluent ASL production to date, and allow us to characterize the fine-scale spatiotemporal dynamics of sensorimotor and linguistic manual movements. The participant provided a rich, complex, and relatively natural set of behaviors, which gave us the opportunity to understand the cortical organization of highly-practiced sensorimotor control in the human brain.

Cortical organization of manual sensorimotor features

Non-invasive neuroimaging has revealed the broad cortical networks involved in single sign and sentence production. Our results overlap with the frontal and parietal areas that have been observed previously, including supplementary motor area, precentral gyrus, postcentral gyrus, paracentral lobule (BA 5,7), and SMG (BA 40) [18,20,21,32]. SMG is a multi-modal region that contains an integrated representation of the body, and in monkeys, neurons there exhibit complex body-centered responses [33-36]. Overall, this frontal-parietal network is associated with motor and linguistic behaviors, including action planning.

To our knowledge, there is only one previous report of intracranial neurophysiology in humans during sign language production [15]. In that case report, a hearing patient with epilepsy who was a proficient, late learner of ASL performed picture naming, word reading, and word repetition in both English and ASL. The authors observed highly overlapping high-gamma activity between speech and sign, except in sensorimotor and parietal regions. Across sensorimotor cortex, speech and sign were localized to areas that reflected the articulators used (e.g., tongue vs hand). In parietal cortex, there were unique responses during signing, which is consistent with previous reports of lesions to these areas causing sign language aphasia [37].

Here, we confirm many of these activity patterns [15] and extend them by characterizing what activity in these sensorimotor regions encodes. We observed that high-gamma neural activity in left-hemisphere peri-rolandic and parietal cortex encodes fine-scale articulatory features that map onto the crucial phonological features of sign language [38]. Discrete cortical locations in parietal cortex differentiated handshapes that were similar in articulatory form (e.g., “O” and “S”), where subtle differences (e.g., open vs closed fist) are the distinctive feature that represents different linguistic categories. This is consistent with a previous report demonstrating that sign language paraphasias, including formational errors in handshape, were evoked by stimulation of the left-hemisphere inferior parietal lobe in an intraoperative mapping study of a deaf signer [39]. In addition, both the previous stimulation mapping and the present ECoG results demonstrated cortical specificity for distinct body locations, which represent another contrastive feature of signed languages in addition to handshape.

Distinct representations of linguistically-meaningful features

Here, classification and hierarchical clustering provide detailed evidence for neural representations of linguistically-relevant features of ASL, which may be combined compositionally during signing. First, the distinction between articulatory locations used in lexical signs versus fingerspelling demonstrates a key dissociation between two mutually exclusive signing systems. This agrees with a recent PET study that showed cortical activation differences during the production of fingerspelled words and body-anchored lexical signs in ASL [21].

Second, we found neural representations of ASL handshapes, for example distinguishing open postures (e.g., B, C, 5) from more compact postures (e.g., S, A, T). Previous behavioral analyses have demonstrated that the degree of handshape closure factors significantly in phonetic and phonological models of ASL [40-42]. The distinct (but partially overlapping) representations of location and handshape (and, to a lesser degree, internal movements) provide evidence for a spatial encoding of phonological features in ASL that are analogous to the articulatory synergies recently described for speech [43]. Furthermore, differences in the timing of location and handshape classification support a hierarchical organization of compositional elements in sign language.

Third, we found that linguistic movements were associated with greater responses in ventral and dorsal precentral gyrus and SMG. We confirmed that this effect was specific to movements produced in a linguistic context by comparing real lexical signs and pseudosigns, which differed only in a single handshape. These results provide further evidence for encoding of linguistically-relevant articulatory features throughout the sensorimotor network, which are also modulated by the lexical properties of sign frequency and age of acquisition.

Conclusions

We present a unique case study that allows us to characterize the dynamics of manual articulations in sign language at a scale that has never before been possible. The confluence of circumstances here (profound deafness, early exposure to sign language, and the unfortunate development of a brain tumor requiring awake surgery) is rare and unlikely to be replicated directly. For decades, sign language has provided a crucial test of the generality of language representation across modalities [44]. As new methodological advances allow for characterizations of the underlying representational structure of speech, studies using similar approaches in sign language have great potential to reveal the amodal features that constitute language. Particularly where our results agree with recent work in speech production [45], it is now possible to characterize the organization and representations underlying linguistically-relevant movements, regardless of language modality or hearing status.

STAR ★ Methods

RESOURCE AVAILABILITY

Lead Contact

Further information and requests for resources and reagents should be directed to and will be fulfilled by the Lead Contact, Edward F. Chang (Edward.Chang@ucsf.edu).

Materials availability

This study did not generate unique reagents.

Data and Code Availability

The datasets/code supporting the current study have not been deposited in a public repository because they contain personally identifiable patient information, but are available in an anonymized form from the corresponding author on request after signing a data transfer agreement.

EXPERIMENTAL MODEL AND SUBJECT DETAILS

Participant

One 48-year-old right-handed male participated in the study. He had been profoundly deaf in both ears since age 2, having lost his hearing due to spinal meningitis. He had attended residential schools for the deaf and worked as a state employee. American Sign Language (ASL) was his primary and preferred language. At age 48, he was diagnosed with glioblastoma multiforme (GBM) located in the left temporoparietal area. Prior to this diagnosis, he had no other major medical conditions and no other known cognitive or neurological deficits. The patient gave written informed consent prior to surgery and experimental testing. All protocols were approved by the Committee on Human Research at UCSF, and experiments and data in this study complied with all relevant ethical regulations.

METHOD DETAILS

ECoG Data Acquisition

A high-density 256-channel grid with 4mm inter-electrode spacing was placed on the cortical surface covering frontal and parietal areas. The grid was connected to a multichannel pre-amplifier connected to a digital signal processor (Tucker-Davis Technologies) and digitized at 3052 Hz. Signals were monitored in real time and recorded without any hardware filtering.

Data Preprocessing

Data were visually inspected offline for noisy channels and time points containing artifacts (e.g., electrographic spikes, DC shifts), which were excluded from all subsequent analyses. The remaining channels were common average referenced, and notch filters were used to remove 60 Hz line noise and harmonics.
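A minimal sketch of the referencing and line-noise steps, assuming a `(channels, samples)` voltage array and a list of channel indices already marked as noisy. SciPy's `iirnotch` and `filtfilt` stand in for the notch filters; the quality factor `q=30` and the helper name `car_and_notch` are hypothetical, since the text does not specify the filter design.

```python
import numpy as np
from scipy.signal import filtfilt, iirnotch


def car_and_notch(data, fs, bad_channels=(), line_freq=60.0, q=30.0):
    """Common average reference + line-noise notch filtering (sketch).

    data : (n_channels, n_samples) voltages; bad_channels : indices to exclude.
    Returns a filtered copy with excluded channels set to NaN.
    """
    data = np.array(data, dtype=float)                 # work on a copy
    bad = np.asarray(bad_channels, dtype=int)
    good = np.setdiff1d(np.arange(data.shape[0]), bad)
    # CAR: subtract the mean of the good channels at every time sample.
    data[good] -= data[good].mean(axis=0, keepdims=True)
    # Notch the line frequency and each harmonic below Nyquist.
    for f0 in np.arange(line_freq, fs / 2.0, line_freq):
        b, a = iirnotch(f0, q, fs=fs)
        data[good] = filtfilt(b, a, data[good], axis=-1)  # zero-phase filtering
    data[bad] = np.nan                                  # excluded from all analyses
    return data
```

Zero-phase filtering (`filtfilt`) is used so that the notch does not shift the timing of neural events relative to the behavioral codes.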

The broadband ECoG voltages were filtered using logarithmically-spaced band-pass filters and the Hilbert transform, described in previously published procedures [45] to extract signals in the high-gamma (70-150 Hz) range [46,47]. High-gamma (HG) responses are of interest in the present study because they are known to correlate with multi-unit firing [48], and have strong evoked relationships with speech perception [49-51] and production [45]. The HG time series on each channel was normalized with z-scores relative to the period from 1000 to 500ms before stimulus onset. This window was chosen to provide a baseline that is not contaminated by either sensory or motor components of the task.
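The high-gamma extraction and baseline normalization might look like the sketch below. It follows the general idea of a bank of log-spaced band-pass filters plus the Hilbert transform, implemented here in the frequency domain: each Gaussian filter is applied to the FFT, negative frequencies are zeroed and positive ones doubled so that the inverse FFT is the analytic signal, and amplitudes are averaged across bands. The number of bands (8), the Gaussian width (10% of each center frequency), and pooling baseline samples across trials for the z-score are all assumptions; a per-trial baseline is an equally plausible reading of the text. Both function names are hypothetical.

```python
import numpy as np


def high_gamma_envelope(x, fs, n_bands=8, f_lo=70.0, f_hi=150.0, rel_sd=0.1):
    """Mean analytic amplitude across log-spaced Gaussian bands (sketch).

    x : (n_channels, n_samples). n_bands and rel_sd are assumed values.
    """
    x = np.asarray(x, dtype=float)
    freqs = np.fft.fftfreq(x.shape[-1], d=1.0 / fs)
    spectrum = np.fft.fft(x, axis=-1)
    # Zero negative and double positive frequencies: the inverse FFT is then
    # the analytic signal, i.e. the Hilbert transform step.
    one_sided = np.where(freqs > 0, 2.0, 0.0)
    centers = np.logspace(np.log10(f_lo), np.log10(f_hi), n_bands)
    env = np.zeros_like(x)
    for cf in centers:
        gauss = np.exp(-0.5 * ((freqs - cf) / (rel_sd * cf)) ** 2)
        env += np.abs(np.fft.ifft(spectrum * gauss * one_sided, axis=-1))
    return env / n_bands


def baseline_zscore(hg, times, t0=-1.0, t1=-0.5):
    """z-score (n_trials, n_channels, n_times) HG against the [t0, t1) s window
    before stimulus onset, pooling baseline samples across trials per channel."""
    base = (times >= t0) & (times < t1)
    b = hg[..., base]
    mu = b.mean(axis=(0, 2), keepdims=True)
    sd = b.std(axis=(0, 2), keepdims=True)
    return (hg - mu) / sd
```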

Task and Behavior

The participant viewed short video clips of single ASL signs and pseudosigns (Figure S1A) on a computer screen placed in his field of view. There were 79 videos (49 real signs and 30 pseudosigns, which were created by changing a single handshape feature of real signs), and the participant viewed each video 4 times. Videos were 931.3 ± 324.4 ms in duration. He was instructed to perform a lexical decision task, responding to each video with the ASL sign ‘yes’ (real sign) or ‘no’ (pseudosign). Lexical decision stimuli were presented using Presentation (version 6.1.3) on a Dell Latitude 620 laptop, which sent event codes to an analog channel in the ECoG recordings, providing millisecond synchronization between the visual stimuli and the neural data. Two cameras recording at 30 fps captured the participant’s responses during the procedure. Video data were analyzed using ELAN (version 4.4) with a multi-tiered coding scheme that documented relevant features (e.g., stimulus onset, movement onset, sign gloss, handshape, location; see Table S1). These annotations were time-aligned to the Presentation stimulus time codes. All ECoG analyses were performed on data downsampled to 100 Hz, providing ~10 ms temporal resolution, and each coded event was synchronized to the neural data within ±3 neural samples.
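As a rough sanity check on the synchronization precision: at 30 fps one video frame lasts about 33 ms, which spans roughly 3.3 samples at 100 Hz, so an event coded to the nearest frame is localized on the neural time base only to within about 3 samples. This is one plausible reading of the ±3-sample figure, not a statement of the authors' procedure. The helper below is hypothetical, including the `video_offset_s` term (the time of video frame 0 on the neural clock), which in practice would be recovered from the stimulus codes.

```python
import numpy as np

VIDEO_FPS = 30.0   # camera frame rate
NEURAL_FS = 100.0  # ECoG sampling rate after downsampling


def frame_to_sample(frame_idx, video_offset_s=0.0):
    """Map coded video frame(s) to the nearest 100 Hz neural sample index.

    video_offset_s (hypothetical): neural-clock time of video frame 0, in seconds.
    """
    t = video_offset_s + np.asarray(frame_idx) / VIDEO_FPS  # frame -> seconds
    return np.rint(t * NEURAL_FS).astype(int)               # seconds -> samples
```

For example, frame 30 (1 s into the video) maps to sample 100, and consecutive frames land 3 or 4 samples apart.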

Although the participant understood the task instructions and was awake and alert, on most trials he did not respond only to the lexical decision question. Instead, he produced a variety of responses, including repeating the sign, fingerspelling it (e.g., “C-A-N-D-Y” for “candy”; Supplementary Video S1), repairing pseudosigns into real signs, and making other comments such as jokes. This resulted in a large and varied set of linguistic and transitional movements that could be used to investigate the neural basis of ASL production in a deaf signer (Figure S1A,B). To capitalize on this rare, naturalistic data set, a fluent, linguistically trained ASL signer carefully coded the video of the participant’s behavior frame by frame, and uncertain events were discussed in detail with a second fluent, linguistically trained ASL signer. Twenty-six parameters were coded for each event, including the onset and offset times of the event (onset being defined as the frame in which the participant began to move for that particular feature), the movement type (lexical sign, fingerspelling, transitional movement, etc.), the response type (repetition, comment, yes/no, etc.), and a series of sensorimotor and linguistically relevant phonological features of ASL: handshape, location, internal movement, movement path, and movement direction (see Table S1 for the complete list of coded features). Fingerspelling occurred in a location centered on the participant’s chest, because of the constraints of the operating setup and to allow the experimenters to see his articulations clearly. These features were chosen to describe the sensorimotor actions the participant performed, so that they could then be related to the linguistic structure of ASL. ECoG data were segmented into windows spanning [−2, 2] s around the onset of each behavior. See Supplementary Video S1 for an example trial in which the participant produced both a fingerspelling and a lexical sign for the word ‘candy’.
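Segmenting the data into [−2, 2] s windows around each coded onset can be sketched as below, assuming a `(channels, samples)` high-gamma array at 100 Hz and onsets already converted to sample indices. Dropping events whose window runs past the edges of the recording is our assumption, and the `epoch` helper is hypothetical.

```python
import numpy as np


def epoch(hg, onsets, fs=100.0, tmin=-2.0, tmax=2.0):
    """Cut (n_kept, n_channels, n_times) epochs around onset sample indices.

    Returns the epochs, the time axis in seconds (0 = behavior onset),
    and the onsets that were kept.
    """
    pre, post = int(round(-tmin * fs)), int(round(tmax * fs))  # 200 and 200 samples
    onsets = np.asarray(onsets, dtype=int)
    # Keep only events whose full window lies inside the recording.
    keep = (onsets - pre >= 0) & (onsets + post <= hg.shape[1])
    kept = onsets[keep]
    epochs = np.stack([hg[:, o - pre:o + post] for o in kept])
    times = np.arange(-pre, post) / fs                         # 400 samples = 4 s
    return epochs, times, kept
```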

We measured response time as the interval from the start of each video stimulus to the first movement onset within that trial (subsequent movements within a trial were not counted). The participant’s mean response time was 953.5 ms (±250.3 ms; Figure S1C). The inter-trial interval (ITI; measured from the last movement onset to the next stimulus onset) was 4.13 s (±1.32 s; Figure S1D), providing sufficient time for neural activity to return to baseline between trials.
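Both timing measures reduce to simple differences between event times. A sketch under a hypothetical data layout, with stimulus onsets in seconds and one list of movement onsets (also in seconds) per trial:

```python
import numpy as np


def rt_and_iti(stim_onsets, movement_onsets_by_trial):
    """Response times and inter-trial intervals from event times (seconds).

    RT  = first movement onset in a trial - that trial's stimulus onset.
    ITI = next trial's stimulus onset - last movement onset of the current trial.
    """
    stim = np.asarray(stim_onsets, dtype=float)
    first = np.array([min(m) for m in movement_onsets_by_trial])
    last = np.array([max(m) for m in movement_onsets_by_trial])
    rt = first - stim
    iti = stim[1:] - last[:-1]   # one fewer ITI than trials
    return rt, iti
```

The reported values would then be `rt.mean()`, `rt.std()`, `iti.mean()`, and `iti.std()`.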

Stimulation Mapping

To map the function of local neural populations, we used direct electrical stimulation (DES) of the exposed cortical surface. Current was delivered using a bipolar stimulator wand (Integra LifeSciences, Plainsboro, NJ) with biphasic pulses at 60 Hz and a current amplitude of 3-5 mA. Amplitude was determined based on the ability to elicit sensorimotor or sign arrest effects without after-discharges [27,29,52]. Signing errors were measured during counting and picture naming tasks. During the picture naming task, the participant viewed images on a screen and produced the ASL sign and/or fingerspelled name of the object. As with speech, signing errors were classified as arrest (complete involuntary cessation of movement until the offset of stimulation), slowing, or dysarthria of the manual articulators. The entire exposed area of the craniotomy was stimulated over ~1 hour of mapping. Negative sites (sites with no errors or elicited responses) were not marked on the brain during mapping, but were evaluated in the video recording of the entire session, which was captured with the camera focused on the exposed brain.

QUANTIFICATION AND STATISTICAL ANALYSIS

Location/Handshape Linear Regression Analyses

To examine how each electrode encoded the behavioral variables of interest, neural activity was regressed against feature matrices describing the articulatory features of each behavior (e.g., handshape, location, internal movement). Categorical variables such as handshape were coded as a series of binary dummy variables (1 if present during a particular behavior, 0 if absent). For the location/handshape regression, each location/handshape combination had to occur at least 10 times to be included in the analysis.
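A sketch of the dummy coding with the minimum-occurrence rule, assuming each behavior carries a single combined label such as a location/handshape pair. Dropping behaviors whose combination falls below the threshold is our reading of the inclusion criterion; the label format and the function name are hypothetical.

```python
import numpy as np


def dummy_code(labels, min_count=10):
    """Binary (0/1) columns for categories with at least `min_count` occurrences.

    labels : one category label per behavior, e.g. "chest|B" (hypothetical format).
    Returns the design matrix, the kept categories, and a mask of kept behaviors.
    """
    labels = np.asarray(labels)
    cats, counts = np.unique(labels, return_counts=True)
    kept = cats[counts >= min_count]          # enforce the minimum-occurrence rule
    rows = np.isin(labels, kept)              # drop behaviors of rare categories
    # Column k is 1 where a behavior's label equals kept[k], else 0.
    X = (labels[rows][:, None] == kept[None, :]).astype(float)
    return X, kept, rows
```

Because every kept behavior has exactly one active column, this coding is collinear with an intercept; a regression would either omit the intercept or drop one reference column.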

Separate regression models were fit for each electrode across the 4 seconds of segmented ECoG trials using a sliding window. Electrodes were considered to show significant effects of location/handshape if their R² values reached P < 0.01, Bonferroni corrected for 256 electrodes, for at least 100 ms of contiguous samples. This procedure identified 61 significant electrodes distributed across sensorimotor and parietal cortex.
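One way to implement the per-electrode sliding-window regression is ordinary least squares on window-averaged high-gamma, with an F-test on the model R². The window length (10 samples, i.e. 100 ms at 100 Hz), the 1-sample step, and the use of window means are assumptions, since the text does not state them; `sliding_r2` is a hypothetical name. The electrode-wise Bonferroni threshold would be `p < 0.01 / 256`, with the 100-ms contiguity requirement then applied to the resulting p-value timecourse.

```python
import numpy as np
from scipy import stats


def sliding_r2(y, X, win=10, step=1):
    """Sliding-window OLS for one electrode (hypothetical sketch).

    y : (n_trials, n_times) high-gamma for one electrode.
    X : (n_trials, n_features) design, e.g. dummy-coded features.
    Returns window centers (samples), R^2, and F-test p-values.
    """
    n_trials = y.shape[0]
    D = np.column_stack([np.ones(n_trials), X])  # add intercept
    rank = np.linalg.matrix_rank(D)              # robust to redundant dummy columns
    df_model, df_resid = rank - 1, n_trials - rank
    starts = np.arange(0, y.shape[1] - win + 1, step)
    r2 = np.empty(starts.size)
    pvals = np.empty(starts.size)
    for i, s in enumerate(starts):
        target = y[:, s:s + win].mean(axis=1)    # window-averaged HG per trial
        beta, *_ = np.linalg.lstsq(D, target, rcond=None)
        resid = target - D @ beta
        ss_tot = np.sum((target - target.mean()) ** 2)
        r2[i] = 1.0 - (resid @ resid) / ss_tot
        # Overall-model F statistic expressed through R^2.
        f_stat = (r2[i] / df_model) / ((1.0 - r2[i]) / df_resid)
        pvals[i] = stats.f.sf(f_stat, df_model, df_resid)
    return starts + win // 2, r2, pvals
```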

Hierarchical Clustering

To examine structural relationships among the feature properties identified in the regression analysis, we used hierarchical clustering, an unsupervised method for finding structure in the neural data. Ideally, location, handshape, and internal movement features would be clustered simultaneously. However, the participant did not produce enough movements in which all three features co-occurred a reasonable number of times. Therefore, we performed separate clustering for 3 combinations: (1) location/handshape, (2) location/internal movement, and (3) handshape/internal movement. Each combination had to occur at least 8 times to be included in the analysis.

Hierarchical clustering was performed on data from all 61 electrodes identified in the regression analysis, averaged over a 200 ms window centered on the onset of the linguistic movement. This window was chosen because it generally contained the peak of the R² in the regression analysis. Clustering used Ward’s method, and the leaves were ordered to minimize the distances between neighboring feature combinations. In the visualization of the hierarchy, branches joined at distances below 40% of the maximum linkage distance were assigned distinct colors.
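A sketch of this clustering step using SciPy, assuming epochs on the 100 Hz time base with movement onset at time 0 and one combined feature label per trial. SciPy's `linkage(..., method="ward", optimal_ordering=True)` reorders leaves to minimize the distance between adjacent leaves, which corresponds to the ordering criterion described above, and `color_threshold` reproduces the 40%-of-maximum coloring rule. The analyses in this study were run in MATLAB, so this is an analogous rather than identical implementation, and the function name is hypothetical.

```python
import numpy as np
from scipy.cluster.hierarchy import dendrogram, linkage


def cluster_feature_combos(epochs, times, labels, half_width=0.1):
    """Ward clustering of feature-combination response profiles (sketch).

    epochs : (n_trials, n_electrodes, n_times) high-gamma, onset at time 0.
    times  : (n_times,) seconds relative to movement onset.
    labels : one feature-combination label per trial.
    """
    labels = np.asarray(labels)
    # 200-ms window centered on onset: [-0.1, 0.1) s.
    sel = (times >= -half_width) & (times < half_width)
    win = epochs[..., sel].mean(axis=-1)                 # (n_trials, n_electrodes)
    combos = np.unique(labels)
    # One profile per combination: mean across its trials.
    profiles = np.stack([win[labels == c].mean(axis=0) for c in combos])
    # Ward linkage; optimal_ordering minimizes distances between adjacent leaves.
    Z = linkage(profiles, method="ward", optimal_ordering=True)
    # Branches merging below 40% of the maximum linkage distance get distinct colors.
    tree = dendrogram(Z, labels=[str(c) for c in combos], no_plot=True,
                      color_threshold=0.4 * Z[:, 2].max())
    return Z, tree
```

`tree["ivl"]` holds the leaf labels in display order.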

Classification

Location, handshape, and internal movements were examined using supervised classification. The 4 locations and 8 handshapes with at least 50 occurrences each were classified separately using linear support vector machines (SVMs). For internal movements, 4 categories had at least 35 occurrences. ECoG data from the 61 significant electrodes identified in the regression analysis were used as features in a series of classifiers.

A 100ms window was advanced by 20ms increments along the 4 second timecourse of neural activity, producing timecourses of classification accuracy. Since there were more than 2 classes in each analysis, we used a one-vs-all approach, where the data were assigned a score for each possible class using a uniform prior across classes. Each data point was assigned to the class with the highest classification score.
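The windowing and the one-vs-all decision rule can be sketched as follows, assuming 100 Hz epochs (so a 100-ms window is 10 samples and a 20-ms step is 2 samples) and that each window's features are the per-electrode mean high-gamma; the text does not say whether samples were averaged or concatenated. The per-class scores would come from binary classifiers such as linear SVMs; here they are simply an input array, and both helper names are hypothetical.

```python
import numpy as np


def window_features(epochs, fs=100.0, win_ms=100.0, step_ms=20.0):
    """Yield (start_sample, features) for each sliding window.

    epochs   : (n_trials, n_electrodes, n_times) high-gamma at `fs` Hz.
    features : (n_trials, n_electrodes) mean HG within the window (assumption).
    """
    win = int(round(win_ms * fs / 1000.0))    # 10 samples at 100 Hz
    step = int(round(step_ms * fs / 1000.0))  # 2 samples at 100 Hz
    for start in range(0, epochs.shape[-1] - win + 1, step):
        yield start, epochs[..., start:start + win].mean(axis=-1)


def one_vs_all_predict(scores, classes):
    """Assign each trial to the class whose 'this class vs. rest' score is highest.

    scores : (n_trials, n_classes); column k comes from the binary model for classes[k].
    """
    return np.asarray(classes)[np.argmax(scores, axis=1)]
```

Over a 4-s epoch (400 samples) this gives 196 windows, one classification accuracy value per window.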

Classification accuracy was defined as the average classification accuracy of each class. This was done by counting the total number of confusions for each class, normalizing for class size, and calculating the proportion of correct classifications. This approach assigns the same weight to smaller categories as it does to larger categories. We used this approach rather than a more basic tally of the total number of correct classifications because we were interested in the classifier’s ability to discriminate between feature classes, and the more basic approach would have skewed the accuracy to reflect the ability to discriminate the largest classes.
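The class-balanced accuracy described above is the mean per-class recall, which can be computed from a confusion matrix as in this sketch (the helper name is hypothetical, and every class is assumed to appear at least once in `y_true`).

```python
import numpy as np


def balanced_accuracy(y_true, y_pred, classes):
    """Mean per-class recall from a confusion matrix.

    Each row (true class) is normalized by its class size, so small classes
    carry the same weight as large ones.
    """
    idx = {c: i for i, c in enumerate(classes)}
    cm = np.zeros((len(classes), len(classes)))
    for t, p in zip(y_true, y_pred):
        cm[idx[t], idx[p]] += 1                 # rows: true class, cols: predicted
    per_class = np.diag(cm) / cm.sum(axis=1)    # recall for each class
    return per_class.mean(), cm
```

For instance, with 8 trials of class A and 2 of class B, a classifier that always predicts A scores 0.8 by a raw tally but 0.5 by this measure, which is the skew toward large classes that the class-balanced definition avoids.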

To assess generalization to held-out data and to quantify significance, we used 10-fold cross-validation. For classification accuracy timecourses, the mean and standard deviation across the 10 folds of held-out test data are shown. We also generated a null distribution of 10-fold cross-validated test accuracies by randomly shuffling the class labels of each training set. Classification accuracy was considered above chance at timepoints where the mean ± 1 standard deviation ranges of the null and actual distributions did not overlap.
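The cross-validation folds, the label-shuffled null, and the non-overlap criterion can be sketched as below, reading "standard deviations do not overlap" as the real mean minus one SD exceeding the null mean plus one SD. The fold assignment here is a plain random split (not stratified by class), and all helper names are hypothetical.

```python
import numpy as np


def kfold_indices(n, k=10, seed=0):
    """Split trial indices 0..n-1 into k random, roughly equal test folds."""
    perm = np.random.default_rng(seed).permutation(n)
    return np.array_split(perm, k)


def shuffled_labels(y_train, rng):
    """Null model: permute the training labels, breaking the label-data link."""
    return rng.permutation(y_train)


def above_chance(acc_real, acc_null):
    """Windows where real and null mean +/- 1 SD ranges do not overlap.

    acc_real, acc_null : (n_folds, n_windows) held-out accuracies.
    """
    lo_real = acc_real.mean(axis=0) - acc_real.std(axis=0)
    hi_null = acc_null.mean(axis=0) + acc_null.std(axis=0)
    return lo_real > hi_null
```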

To examine which electrodes contributed most strongly to each classification model, we extracted the linear weights of the SVM score function:

$$f(x) = \left(\frac{x}{s}\right)^{\top}\beta + b$$

where x is the vector of electrode features for an observation, β is the set of linear predictor coefficients used to define the separating hyperplane for the classes, s is a scaling parameter, and b is the bias term. The model weights were normalized together for the location and handshape classifiers for visualization, which was justified given that the L2 norm of each weight vector was similar (location = 1.51, handshape = 1.71).

Supplementary Material

Video S1: Example ASL production of “candy”, with both fingerspelling and lexical sign movements. Related to Figure 1.

KEY RESOURCES TABLE

| REAGENT or RESOURCE | SOURCE | IDENTIFIER |
|---|---|---|
| Software and Algorithms | | |
| MATLAB (Mathworks) | http://www.mathworks.com/products/matlab/ | RRID: SCR_001622 |
| Presentation (Neurobehavioral Systems) | https://www.neurobs.com/ | RRID: SCR_002521 |
| ELAN (Max Planck Institute for Psycholinguistics) | https://archive.mpi.nl/tla/elan | N/A |

Highlights

- Sign language production requires coordinating sensorimotor and cognitive processes
- We recorded electrocorticography (ECoG) while a deaf signer produced signs
- Phonological sign features were distinctly represented in sensorimotor cortex
- Population neural activity reflected linguistically-relevant sensorimotor features

Producing sign language requires coordination of phonologically-organized hand movements. Leonard et al. record direct neural activity in a deaf signer undergoing brain surgery while he produces signs and other movements. Location and handshape are distinctly represented in sensorimotor cortex, revealing neural organization for sign articulation.

Acknowledgements

The authors thank the participant for his invaluable contributions to this study. This work was supported by the National Institutes of Health (F32-DC013486 to M.K.L., R00-NS065120, DP2-OD00862, and R01-DC012379 to E.F.C., and R01-DC014767 and R01-DC011538 to D.C.), and a Kavli Institute for Brain and Mind Innovative Research grant to M.K.L. E.F.C. was supported by The New York Stem Cell Foundation, The McKnight Foundation, The Shurl and Kay Curci Foundation, and The William K. Bowes Foundation.

Footnotes

Declaration of Interests

The authors declare no competing interests.

Publisher's Disclaimer: This is a PDF file of an unedited manuscript that has been accepted for publication. As a service to our customers we are providing this early version of the manuscript. The manuscript will undergo copyediting, typesetting, and review of the resulting proof before it is published in its final form. Please note that during the production process errors may be discovered which could affect the content, and all legal disclaimers that apply to the journal pertain.

References

1. Bellugi U, and Klima ES (1979). The Signs of Language (Harvard University Press).
2. Sandler W, Meir I, Padden C, and Aronoff M (2005). The emergence of grammar: Systematic structure in a new language. Proc. Natl. Acad. Sci. U. S. A. 102, 2661–2665.
3. Emmorey K (2001). Language, cognition, and the brain: Insights from sign language research (Psychology Press).
4. Sandler W, and Lillo-Martin D (2006). Sign language and linguistic universals (Cambridge University Press).
5. Lillo-Martin D (1999). Modality effects and modularity in language acquisition: The acquisition of American Sign Language. Handb. Child Lang. Acquis., 531–567.
6. Newport EL, and Meier RP (1985). The acquisition of American Sign Language (Lawrence Erlbaum Associates, Inc.).
7. Bouchard KE, Conant DF, Anumanchipalli GK, Dichter B, Chaisanguanthum KS, Johnson K, and Chang EF (2016). High-Resolution, Non-Invasive Imaging of Upper Vocal Tract Articulators Compatible with Human Brain Recordings. PLoS One 11, e0151327.
8. Wolpert DM, and Ghahramani Z (2000). Computational principles of movement neuroscience. Nat. Neurosci. 3, 1212–1217.
9. Shadmehr R, and Wise SP (2005). The computational neurobiology of reaching and pointing: a foundation for motor learning (MIT Press).
10. Chestek CA, Gilja V, Blabe CH, Foster BL, Shenoy KV, Parvizi J, and Henderson JM (2013). Hand posture classification using electrocorticography signals in the gamma band over human sensorimotor brain areas. J. Neural Eng. 10, 026002.
11. Stokoe W (1965). Dictionary of the American Sign Language based on scientific principles.
12. Rizzolatti G, and Luppino G (2001). The cortical motor system. Neuron 31, 889–901.
13. Shenoy KV, Sahani M, and Churchland MM (2013). Cortical control of arm movements: a dynamical systems perspective. Annu. Rev. Neurosci. 36.
14. Corina DP (1999). On the nature of left hemisphere specialization for signed language. Brain Lang. 69, 230–240.
15. Crone N, Hao L, Hart J, Boatman D, Lesser R, Irizarry R, and Gordon B (2001). Electrocorticographic gamma activity during word production in spoken and sign language. Neurology 57, 2045–2053.
16. Emmorey K, Damasio H, McCullough S, Grabowski T, Ponto LL, Hichwa RD, and Bellugi U (2002). Neural systems underlying spatial language in American Sign Language. NeuroImage 17, 812–824.
17. Campbell R, MacSweeney M, and Waters D (2007). Sign language and the brain: a review. J. Deaf Stud. Deaf Educ.
18. MacSweeney M, Capek CM, Campbell R, and Woll B (2008). The signing brain: the neurobiology of sign language. Trends Cogn. Sci. 12, 432–440.
19. Leonard MK, Ramirez NF, Torres C, Travis KE, Hatrak M, Mayberry RI, and Halgren E (2012). Signed words in the congenitally deaf evoke typical late lexicosemantic responses with no early visual responses in left superior temporal cortex. J. Neurosci. 32, 9700–9705.
20. Jose-Robertson S, Corina DP, Ackerman D, Guillemin A, and Braun AR (2004). Neural systems for sign language production: mechanisms supporting lexical selection, phonological encoding, and articulation. Hum. Brain Mapp. 23, 156–167.
21. Emmorey K, Mehta S, McCullough S, and Grabowski TJ (2016). The neural circuits recruited for the production of signs and fingerspelled words. Brain Lang. 160, 30–41.
22. Wolpert DM, Ghahramani Z, and Jordan MI (1995). An internal model for sensorimotor integration. Science 269, 1880.
23. Hickok G, Houde J, and Rong F (2011). Sensorimotor integration in speech processing: computational basis and neural organization. Neuron 69, 407–422.
24. Houde JF, and Nagarajan SS (2011). Speech production as state feedback control. Front. Hum. Neurosci. 5, 82.
25. Sandler W (1989). Phonological representation of the sign: Linearity and nonlinearity in American Sign Language (Walter de Gruyter).
26. Brentari D (1998). A prosodic model of sign language phonology (MIT Press).
27. Leonard MK, Desai M, Hungate D, Cai R, Singhal NS, Knowlton RC, and Chang EF (2019). Direct cortical stimulation of inferior frontal cortex disrupts both speech and music production in highly trained musicians. Cogn. Neuropsychol. 36, 158–166.
28. Breshears JD, Southwell DG, and Chang EF (2019). Inhibition of manual movements at speech arrest sites in the posterior inferior frontal lobe. Neurosurgery 55, E496–E501.
29. Corina DP, Loudermilk BC, Detwiler L, Martin RF, Brinkley JF, and Ojemann G (2010). Analysis of naming errors during cortical stimulation mapping: implications for models of language representation. Brain Lang. 115, 101–112.
30. Jescheniak JD, and Levelt WJ (1994). Word frequency effects in speech production: Retrieval of syntactic information and of phonological form. J. Exp. Psychol. Learn. Mem. Cogn. 20, 824.
31. Corina D. Frequency and Age of Acquisition Values. Available at: http://corinalab.ucdavis.edu/sign-language-research.html.
32. Emmorey K, Mehta S, and Grabowski TJ (2007). The neural correlates of sign versus word production. NeuroImage 36, 202–208.
33. Hyvarinen J, and Shelepin Y (1979). Distribution of visual and somatic functions in the parietal associative area 7 of the monkey. Brain Res. 169, 561–564.
34. Petrides M (2015). The Ventrolateral Frontal Region. Neurobiol. Lang., 25.
35. Robinson C, and Burton H (1980). Organization of somatosensory receptive fields in cortical areas 7b, retroinsula, postauditory and granular insula of M. fascicularis. J. Comp. Neurol. 192, 69–92.
36. Taira M, Mine S, Georgopoulos A, Murata A, and Sakata H (1990). Parietal cortex neurons of the monkey related to the visual guidance of hand movement. Exp. Brain Res. 83, 29–36.
37. Corina D (1998). The processing of sign language: Evidence from aphasia. In Handbook of neurolinguistics (Elsevier), pp. 313–329.
38. Branco MP, Freudenburg ZV, Aarnoutse EJ, Bleichner MG, Vansteensel MJ, and Ramsey NF (2017). Decoding hand gestures from primary somatosensory cortex using high-density ECoG. NeuroImage 147, 130–142.
39. Corina DP, McBurney SL, Dodrill C, Hinshaw K, Brinkley J, and Ojemann G (1999). Functional roles of Broca’s area and SMG: evidence from cortical stimulation mapping in a deaf signer. NeuroImage 10, 570–581.
40. Brentari D (1990). Licensing in ASL handshape change. Sign Lang. Res. Theor. Issues, 57–68.
41. Jean A (1993). A linguistic investigation of the relationship between physiology and handshape.
42. Keane J (2014). Towards an articulatory model of handshape: What fingerspelling tells us about the phonetics and phonology of handshape in American Sign Language.
43. Chartier J, Anumanchipalli GK, Johnson K, and Chang EF (2018). Encoding of articulatory kinematic trajectories in human speech sensorimotor cortex. Neuron 98, 1042–1054.
44. Poeppel D, Emmorey K, Hickok G, and Pylkkanen L (2012). Towards a new neurobiology of language. J. Neurosci. 32, 14125–14131.
45. Bouchard KE, Mesgarani N, Johnson K, and Chang EF (2013). Functional organization of human sensorimotor cortex for speech articulation. Nature 495, 327–332.
46. Crone NE, Boatman D, Gordon B, and Hao L (2001). Induced electrocorticographic gamma activity during auditory perception. Clin. Neurophysiol. 112, 565–582.
47. Crone NE, Miglioretti DL, Gordon B, and Lesser RP (1998). Functional mapping of human sensorimotor cortex with electrocorticographic spectral analysis. II. Event-related synchronization in the gamma band. Brain 121, 2301–2315.
48. Ray S, and Maunsell JH (2011). Different origins of gamma rhythm and high-gamma activity in macaque visual cortex. PLoS Biol. 9, e1000610.
49. Leonard MK, and Chang EF (2014). Dynamic speech representations in the human temporal lobe. Trends Cogn. Sci. 18, 472–479.
50. Mesgarani N, Cheung C, Johnson K, and Chang EF (2014). Phonetic feature encoding in human superior temporal gyrus. Science 343, 1006–1010.
51. Leonard MK, Baud MO, Sjerps MJ, and Chang EF (2016). Perceptual restoration of masked speech in human cortex. Nat. Commun. 7, 13619.
52. Leonard MK, Cai R, Babiak MC, Ren A, and Chang EF (2019). The peri-Sylvian cortical network underlying single word repetition revealed by electrocortical stimulation and direct neural recordings. Brain Lang. 193, 58–72.
