Abstract

Deep learning methods, which can predict the binding affinity of a drug–target protein interaction, reduce the time and cost of drug discovery. In this study, we propose a novel deep convolutional neural network called SE-OnionNet, with two squeeze-and-excitation (SE) modules, to computationally predict the binding affinity of a protein–ligand complex. The OnionNet is used to extract a feature map from the three-dimensional structure of a protein–drug molecular complex. The SE module is added to the second and third convolutional layers to improve the non-linear expression of the network to improve model performance. Three different optimizers, stochastic gradient descent (SGD), Adam, and Adagrad, were also used to improve the performance of the model. A majority of protein–molecule complexes were used for training, and the comparative assessment of scoring functions (CASF-2016) was used as the benchmark. Experimental results show that our model performs better than OnionNet, Pafnucy, and AutoDock Vina. Finally, we chose the macrophage migration inhibitor factor (PDB ID: 6cbg) to test the stability and robustness of the model. We found that the prediction results were not affected by the docking position, and thus, our model is of acceptable robustness.

Keywords: protein-ligand binding affinity, molecular docking, deep learning, convolutional neural network, drug repositioning

Introduction

The binding affinity of small molecules to receptor proteins is the key to drug discovery and drug repositioning (David Hecht, 2009; Ru et al., 2020; Zeng et al., 2020a). Chemical prediction methods are often time-consuming and costly. The development of accurate prediction models for calculating binding affinity is imperative. The OnionNet model (Zheng et al., 2019) was proposed for predicting binding affinity using the three-dimensional structure of complexes. In the search for a favorable docking pose, a specific scoring function is used to estimate the binding affinity often with a low accuracy and a high false-positive rate. For example, data experiments have been conducted using the comparative assessment of scoring functions (CASF) (Li et al., 2014a,b). We have also previously tested the performance of AutoDock Vina on the CASF-2013 benchmark (Gaillard, 2018). Additionally, the molecular mechanics Poisson–Boltzmann surface area method (Rd et al., 2012) was developed to calculate the binding free energy. This method is computationally intensive and is generally superior to the docking scoring function (Shoichet, 2004).

It was found that the performance of machine learning methods for predicting binding affinity is heavily dependent on the way proteins and ligands are represented. In virtual screening methods, for example, the output is usually analyzed using a docking software to generate or manually extract features of the protein–ligand interaction. This is a laborious and complicated process, and it cannot be efficiently applied in machine learning methods, particularly for large-scale data (Lheureux et al., 2017).

Deep learning technology aims to minimize the time taken for the feature extraction process. The non-linear transformation of the original dataset can reveal the principles hidden in a large-scale dataset. Recently, deep learning technology has attracted the attention of academia and has become a viable option for pharmaceutical research. Dahl et al. developed a multitask deep learning model to predict the chemical structure of molecules, the pharmacophore of the active site, and drug levels toxic to the active site (Lv et al., 2019; Lin et al., 2020; Zeng et al., 2020b). Ramsundar et al. proposed a deep neural network model that efficiently predicts drug activity and structure (Wallach et al., 2015; Jain and Kumar, 2019; Zhao et al., 2019).

In this study, we propose a modified deep learning model, called SE-OnionNet, with two Squeeze-and-Excitation (SE) (Hu et al., 2017) modules to estimate the binding affinity of a protein–ligand complex. Specifically, the SE module is used to increase the non-linear expression ability of the network. We first extract the feature map from the three-dimensional structure of the complex. The local and non-local interactions between each pair of proteins and ligands are identified by dividing the contact characteristics between the protein and the ligand into different distance ranges. Then, the feature map is inputted into the network, and a predicted value is obtained as the output. We tested our SE-OnionNet using the scoring functions on PDBbind (v. 2018) and CSAF-2016 benchmark (Altae-Tran et al., 2017) and found that our model performs better than the classical OnionNet model. In addition, we compare our model with AutoDock Vina's ranking function (Oleg and Olson, 2009). We found that our model can predict significantly higher number of complexes than AutoDock Vina. Our model can also use predicted ligand structures, from a docking simulation, as its input, indicating its robustness.

Materials and Methods

The OnionNet Model

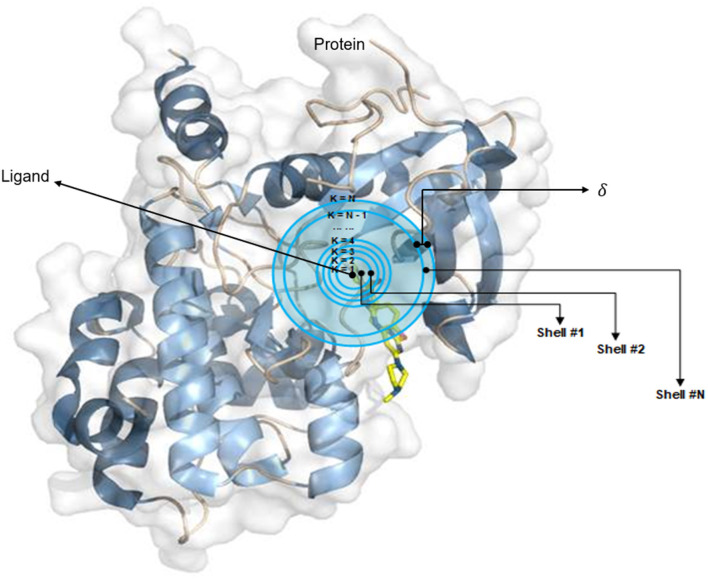

The OnionNet model was obtained by improving the characterization of protein–ligand complex data by Pafnucy, which used CASF-2013 as the benchmark. The three-dimensional structure of a protein–ligand complex is used as the input of the network. It defines each 1 Å as a three-dimensional box; extracts chemical information, centered on all ligand atoms in that box; and yields a high-dimensional (21 × 21 × 21 × 10) feature map. This is, then, inputted into the convolutional neural network model, which yields an affinity prediction ranking as the output. In addition, the OnionNet model defines “shell” as the boundary of each atom around a series of ligands. The “shell” is defined as the space between boundary K – 1 and K, with a thickness of δ (Figure 1). The nth shell is defined as the space between boundaries k = n – 1 and k = n, 1 ≤ n ≤ N. Intermolecular interactions between the ligand and the protein are expressed as the number of contacts between atoms in the nth shell.

Figure 1.

The definition of “shell.” The interaction between proteins and ligands is defined layer by layer in a three-dimensional space.

They selected eight types of elements (EL), C, N, O, H, P, S, halogens, and all the remaining elements (ARE), to measure the types of contact between a ligand and the atoms in a protein. To maintain the generalization ability of the model, we define halogens to represent any one of the four elements F, Cl, Br, and I. For the nth shell, considering the different binding orientations of ligands and proteins, we used 64 features to represent the contact between the ligand and the protein.

| (1) |

| (2) |

| (3) |

For each element pair, ECTSTt, the number of contacts is the sum of Cr,l between Rn,Ts and LTt, where Cr,l, Rn,Ts, and LTt are the number of contacts, atoms in the protein, and atoms in the ligand, respectively. The dr,l represents the distance between atom r and atom l, and the distance between the atom in the ligand to the nearest point of the boundary is defined as d0, if dr,l is within (k – 2)δ + d0 ≤ dr,l < (k – 1)δ + d0, then r and l is equal to 1; otherwise, it is equal to 0. In our study, we used the same values of d0 and δ as those used in OnionNet.

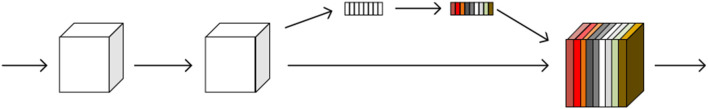

The SE Module

The SE module is inspired by SENet, which was the champion of the ImageNet Large Scale Visual Recognition Challenge 2017. It allows for simple yet easy expansion within the existing network structure. The SENet network focuses on the relationships between channels, aiming to automatically learn the importance of different channel features. The SE module is shown in Figure 2.

Figure 2.

A squeeze-and-excitation block. The squeeze operation compresses the features along the spatial dimension and turns each two-dimensional feature channel into a real number. The excitation operation, which is similar to the gate mechanism in the recurrent neural network, generates weights for each feature channel. The reweight operation completes the recalibration of the original feature in the channel dimension.

The SE module performs a squeeze operation on the feature map to obtain the channel-level global features, and an excitation operation is performed on the global features to learn the relationship between the channels. The weight of the different channels is multiplied by the original feature map to obtain the final feature map. Essentially, the SE module performs an attention or a gating operation on the channel dimension. This mechanism can pay more attention to channel features, which have a large amount of information, while suppressing unimportant channel features. The SE module was embedded in our original network architecture.

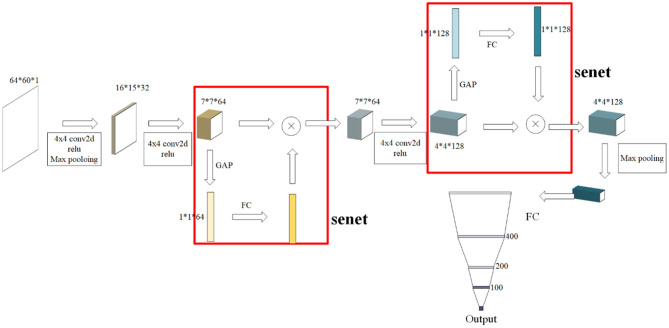

Our SE-OnionNet Model

In general, the SE-OnionNet model was designed by embedding SE modules, for their ability to perform attention operations, in the OnionNet network. Specifically, for each complex, two-dimensional information, as a feature map, is extracted from the three-dimensional structure. Then, the feature map is entered into a three-layer convolutional network to flatten and pass them onto the four fully connected layers with 400, 200, and 100 units, respectively. Finally, an output layer is generated with the predicted protein–ligand binding affinity score, pKa. The SE module is added to the second and third convolutional layers to improve the non-linear expression of the network. The structure of the SE-OnionNet model is shown in Figure 3.

Figure 3.

The structure of our model. It is composed of three layers of convolution, two SE blocks, and four layers of dense layer. SE blocks can not only effectively enhance the performance but also are computationally lightweight and impose only a slight increase in model complexity and computational burden.

We trained our model using the loss function in OnionNet, shown as follows:

| (4) |

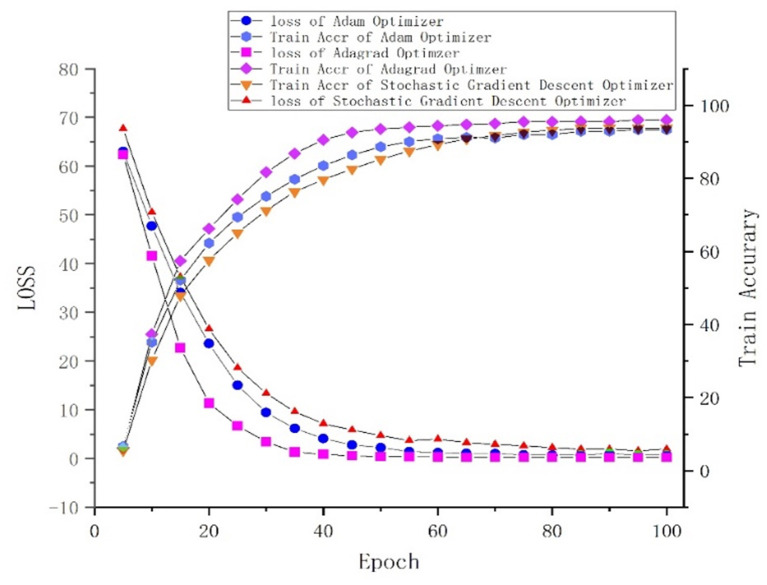

Where R and RMSE are the Pearson's correlation coefficient and root mean square error, respectively. α denotes an adjustable positive parameter that is <1. The value of α is set to be 0.8 in our model. The purpose of training is to obtain a higher R value and a lower RMSE value. We applied batch regularization to all layers except the last one, in order to avoid overfitting. For this, we tried many methods and finally chose to apply regularization between the convolutional layer and the dense layer. Adagrad was selected as the optimizer of SE-OnionNet after comparison with stochastic gradient descent (SGD) and Adam (Kingma and Ba, 2015).

Evaluation Metrics

The Pearson's correlation coefficient (R), denoted in Equation (9), and the standard deviation (SD) are used to evaluate the performance of the model during the training process. The binding affinity, pKa, is expressed as the negative logarithm of Kx, as follows:

| (5) |

Where Kx represents the inhibition constant (Ki), dissociation constant (Kd), or semi-inhibitory concentration (IC50).

The accuracy of the model is evaluated by RMSE, calculated using Equation (6), to quantify the relative deviation between the predicted and experimentally measured values of pKa.

| (6) |

We also estimated the regression SD, calculated using the following equation:

| (7) |

where a and b are the slope and intercept of the linear regression line between the predicted pKa and actual pKa values.

The mean absolute error (MAE), calculated using Equation (8), is used to evaluate the prediction error.

| (8) |

Finally, R calculated by Equation (9) is used to estimate the relationship between the predicted pKa and actual pKa.

| (9) |

where and are the standard deviations of the pKa predicted by our network and the actual pKa, respectively.

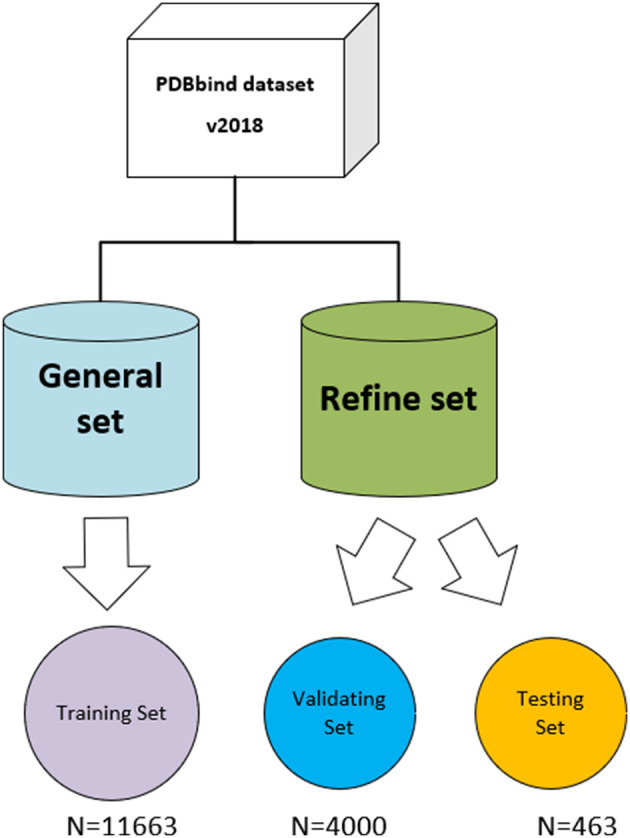

Datasets

The three-dimensional complexes used for the training and testing of our model are from the PDBbind database (v. 2018) (http://www.pdbbind.org.cn/). The dataset consists of both the general set, which includes 11,663 complexes, and the refined set, which includes 4,463 complexes, and the general set was used to train our model. We then randomly selected 4,000 complexes from the refined set for validation, and the rest were used as testing sets. The datasets used in the model are shown in Figure 4.

Figure 4.

The datasets used in the model. The original PDBbind v.2018 dataset was filtered to retain only protein–ligand complexes with measured Ki or Kd binding affinity and divided into training set, validating set, and testing set.

The CASF-2016 benchmark was selected to verify our model (Su et al., 2018). Compared with CASF-2013, CASF-2016 has improved in several aspects such as test set construction, evaluation method, and selection of scoring function. The CASF-2016 benchmark offers the following: (1) A larger and higher quality test set can be constructed; (2) there is an improved series of evaluation methods; and (3) 25 scoring functions can be tested for exemplary application.

Results

Performance Comparison With Different Optimizers

One of the central steps of the contemporary deep learning pipeline is to select an optimizer. Considering the sparsity of the feature map, we tried three popularly used optimizers: SGD, Adam (Kingma and Ba, 2015), and Adagrad (Duchi et al., 2011). The number of iterations for the optimization algorithm was set to 100, and the learning rate was set to 0.001. We found that Adagrad was the fastest optimizing algorithm with an accuracy higher than that of SGD and Adam. The loss and accuracy of the three optimizers, from 0 to 100 epoch(s), are shown in Figure 5.

Figure 5.

Loss and accuracy under different optimizers.

Performance of the SE-OnionNet Model

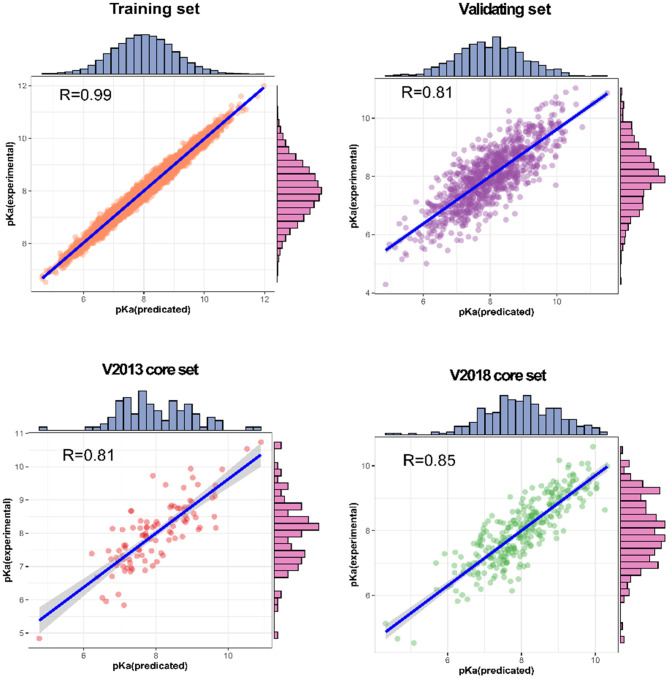

Our SE-OnionNet model performed well in the experiments conducted using the v. 2013 core and v. 2018 core datasets (see Table 1).

Table 1.

Performance of SE-OnionNet.

| Dataset | R | RMSE | MAE | SD |

|---|---|---|---|---|

| Training set | 0.990 | 0.256 | 0.152 | 0.198 |

| Validating set | 0.814 | 1.584 | 0.823 | 1.221 |

| V2013 core set | 0.812 | 1.692 | 1.323 | 1.423 |

| V2018 core set | 0.853 | 1.592 | 0.912 | 1.253 |

For each complex in the dataset, the affinity was predicted and compared with the real value. The prediction accuracy of the model was evaluated based on the values of R, RMSE, SD, and MAE. The R values of our model were found to be 0.990, 0.814, 0.812, and 0.853 for the training set, validating set, and two testing sets, respectively, and these values are higher than those of the original model. The RMSE values calculated using Equation (6) were 1.584, 1.692, and 1.592 for the validating set and two testing sets, respectively, demonstrating that the pKa predicted by our model is highly correlated with the actual pKa value.

We also analyzed the correlation between predicted pKa and measured pKa of the different datasets using a scatter plot (Figure 6). As expected, the values were highly correlated not only in the training set but also in the validating and testing sets.

Figure 6.

Predictions for two test sets (core sets from PDBbind v. 2013 and v. 2018): training set and validation set.

Discussion

Stability of SE-OnionNet

It is well-known that the stability of the deep learning model plays an important role. To test the model's stability, we compared the SD and R values of SE-OnionNet, OnionNet, Pafnucy, and AutoDock Vina using CASF-2016. The results are shown in Table 2. The SD and R values of our model are 1.20 and 0.83, while in the OnionNet, these are 1.26 and 0.82, respectively, indicating that our model is slightly better than OnionNet. Simultaneously, the indices SD and R of our model are better than those of Pafnucy and AutoDock Vina. Overall, our model based on deep learning performed better than other traditional scoring models.

Table 2.

Performance comparison of different scoring functions.

| Scoring functions | SD | R |

|---|---|---|

| SE-OnionNet | 1.20 | 0.83 |

| OnionNet | 1.26 | 0.82 |

| Pafnucy | 1.37 | 0.78 |

| AutoDock Vina | 1.61 | 0.62 |

Robustness of SE-OnionNet

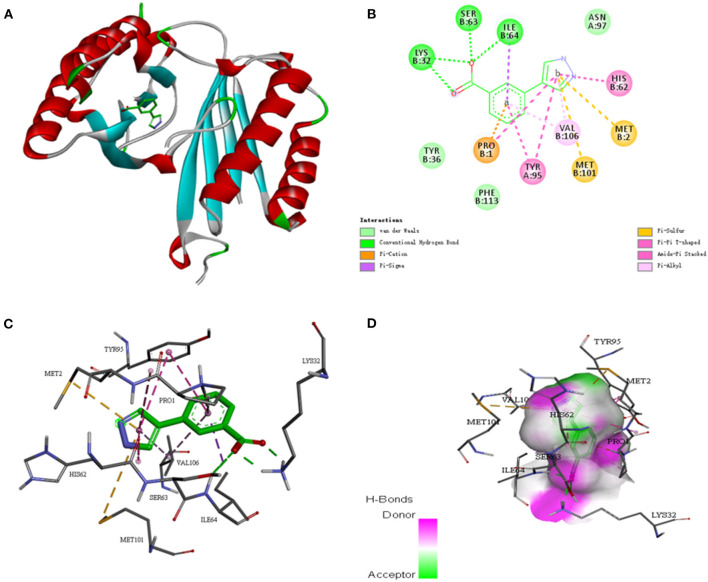

To further investigate whether the SE-OnionNet model improved the robustness of the original model, we selected the macrophage migration inhibitor factor (PDB ID: 6cbg) and its ligand is 3-(1H-pyrazol-4-yl)benzoic acid (EWG) from the PDB refined dataset. The index pKd (pKa for our study) provided in the PDBbind database is 3.95. We first extracted the proto-ligand (EWG) from the protein and redocked it using AutoDock Vina (Figure 7). This was used as an input for our model to obtain the pKa value. The predicted index pKa of the complex, docked using AutoDock Vina, by our model was 5.645 and was not lower than the indexed pKd provided in the PDBbind database.

Figure 7.

The interaction diagram between the macrophage migration inhibitor factor (PDB ID: 6cbg) and 3-(1H-pyrazol-4-yl)benzoic acid (EWG). (A) Molecular docking between the macrophage migration inhibitor factor (PDB ID: 6cbg) and 3-(1H-pyrazol-4-yl)benzoic acid (EWG). (B) Local two dimensional display of the interaction diagram between the macrophage migration inhibitor factor (PDB ID: 6cbg) and 3-(1H-pyrazol-4-yl)benzoic acid (EWG). (C) Three dimensional display of the interaction diagram between the macrophage migration inhibitor factor (PDB ID: 6cbg) and 3-(1H-pyrazol-4-yl)benzoic acid (EWG). (D) Hydrogen bond coloring display of the interaction diagram between the macrophage migration inhibitor factor (PDB ID: 6cbg) and 3-(1H-pyrazol-4-yl)benzoic acid (EWG).

Conclusion

In this study, a modified deep learning model SE-OnionNet, with an attention mechanism to improve the performance of the model, is constructed. Based on the SENet model, we added SE modules to each of the two convolutional layers, except the first one, to improve the non-linear expression of the network and, thus, the performance of the model. We used three different optimizers, SGD, Adam, and Adagrad, to optimize the network, and finally, chose the superior Adagrad as our optimizer. Using CASF-2016, we found that the SE-OnionNet model outperforms the original model. Finally, for the purpose of testing the stability and robustness of the network, we chose the macrophage migration inhibitor factor (PDB ID: 6cbg) as an example. We found that our model is robust and the prediction results are not affected by docking orientation. We plan to add more modules to improve the performance of the model. Additionally, our study also motivates the formulation of innovative approaches to process the three-dimensional structure of a protein–ligand complex. Furthermore, it is worthwhile to use spiking neural networks [e.g., spiking neural P systems (Song et al., 2016, 2017, 2018, 2019a,b)] for drug discovery.

Data Availability Statement

Publicly available datasets were analyzed in this study. This data can be found here: CASF-2016 (https://pubs.acs.org/doi/10.1021/acs.jcim.8b00545).

Author Contributions

All authors listed have made a substantial, direct and intellectual contribution to the work, and approved it for publication.

Conflict of Interest

RZ was employed by company Trinity Earth Technology Co. Ltd. The remaining authors declare that the research was conducted in the absence of any commercial or financial relationships that could be construed as a potential conflict of interest.

Footnotes

Funding. This work was supported by the National Natural Science Foundation of China (Grant Nos. 61873280, 61873281, 61672033, 61672248, 61972416, and 61772376), Taishan Scholarship (tsqn201812029), Natural Science Foundation of Shandong Province (No. ZR2019MF012), Fundamental Research Funds for the Central Universities (18CX02152A and 19CX05003A-6), and Key Scientific Research Project of Beijing Educational Committee (No. SZ20171001105).

References

- Altae-Tran H., Ramsundar B., Pappu A. S., Pande V. (2017). Low data drug discovery with one-shot learning. ACS Central Sci. 3:283. 10.1021/acscentsci.6b00367 [DOI] [PMC free article] [PubMed] [Google Scholar]

- David Hecht G. B. F. (2009). Computational intelligence methods for docking scores. Curr. Comp. Aided Drug Design. 5:63. 10.2174/157340909787580863 [DOI] [Google Scholar]

- Duchi J., Hazan E., Singer Y. (2011). Adaptive subgradient methods for online learning and stochastic optimization. J. Machine Learn Res. 12, 2121–2159. [Google Scholar]

- Gaillard T. (2018). Evaluation of autodock and autodock vina on the casf-2013 benchmark. J. Chem. Inform. Model. 58, 1697–1706. 10.1021/acs.jcim.8b00312 [DOI] [PubMed] [Google Scholar]

- Hu J., Shen L., Albanie S., Sun G., Wu E. (2017). Squeeze-and-excitation networks. IEEE Trans Pattern Anal Machine Intelligence 99. [DOI] [PubMed] [Google Scholar]

- Jain K., Kumar A. (2019). An optimal RSSI-based cluster-head selection for sensor networks. Int. J. Adapt. Innovat. Syst. 2, 349–361. 10.1504/IJAIS.2019.108428 [DOI] [Google Scholar]

- Kingma D., Ba J. (2015). Adam: a method for stochastic optimization, in Proceedings of the 3rd International Conference for Learning Representations (San Diego: ). [Google Scholar]

- Lheureux A., Grolinger K., Elyamany H. F., Capretz M. (2017). Machine learning with big data: challenges and approaches. IEEE Access. 65, 7776–7797. 10.1109/ACCESS.2017.2696365 [DOI] [Google Scholar]

- Li Y., Han L., Liu Z., Wang R. (2014a). Comparative assessment of scoring functions on an updated benchmark: 2. evaluation methods and general results. J. Chem. Inform. Model. 54, 1717–1736. 10.1021/ci500081m [DOI] [PubMed] [Google Scholar]

- Li Y., Liu Z., Li J., Han L., Liu J., Zhao Z., et al. (2014b). Comparative assessment of scoring functions on an updated benchmark: 1. compilation of the test set. J. Chem. Inform. Model. 54:1700. 10.1021/ci500080q [DOI] [PubMed] [Google Scholar]

- Lin X., Quan Z., Wang Z. J., Ma T., Zeng X. (2020). Kgnn: knowledge graph neural network for drug-drug interaction prediction. IJCAI 380, 2739–2745. 10.24963/ijcai.2020/380 [DOI] [Google Scholar]

- Lv Z., Ao C., Zou Q. (2019). Protein function prediction: from traditional classifier to deep learning. Proteomics 19:1900119. 10.1002/pmic.201900119 [DOI] [PubMed] [Google Scholar]

- Oleg T., Olson A. J. (2009). Autodock vina: improving the speed and accuracy of docking with a new scoring function, efficient optimization, and multithreading. J. Comput. Chem. 31, 455–461. 10.1002/jcc.21334 [DOI] [PMC free article] [PubMed] [Google Scholar]

- Rd M. B., Jr M. G. T., Swails J. M., Homeyer N., Gohlke H., Roitberg A. E. (2012). Mmpbsa.py: an efficient program for end-state free energy calculations. J. Chem. Theory Comput. 8:3314. 10.1021/ct300418h [DOI] [PubMed] [Google Scholar]

- Ru X., Wang L., Li L., Ding H., Ye X., Zou Q. (2020). Exploration of the correlation between gpcrs and drugs based on a learning to rank algorithm. Compt. Biol. Med. 119:103660. 10.1016/j.compbiomed.2020.103660 [DOI] [PubMed] [Google Scholar]

- Shoichet K. (2004). Virtual screening of chemical libraries. Nature 432, 862–865. 10.1038/nature03197 [DOI] [PMC free article] [PubMed] [Google Scholar]

- Song T., Pan L., Wu T., Zheng P., Wong M. D., Rodriguez-Paton A. (2019b). Spiking neural p systems with learning functions. IEEE Trans. Nanobiosci. 18, 176–190. 10.1109/TNB.2019.2896981 [DOI] [PubMed] [Google Scholar]

- Song T., Rodriguez-Paton A., Zheng P., Zeng X. (2017). Spiking neural p systems with colored spikes. IEEE Trans. Cogn. Dev. Syst. 10, 1106–1115. 10.1109/TCDS.2017.2785332 [DOI] [PubMed] [Google Scholar]

- Song T., Zeng X., Zheng P., Jiang M., Rodriguez-Paton A. (2018). A parallel workflow pattern modeling using spiking neural p systems with colored spikes. IEEE Trans. Nanobiosci. 17, 474–484. 10.1109/TNB.2018.2873221 [DOI] [PubMed] [Google Scholar]

- Song T., Zheng P., Dennis Wong M. L., Wang X. (2016). Design of logic gates using spiking neural P systems with homogeneous neurons and astrocytes-like control. Inform. Sci. 372, 380–391. 10.1016/j.ins.2016.08.055 [DOI] [Google Scholar]

- Song T., Zheng P., Wong M. D., Jiang M., Zeng X. (2019a). On the computational power of asynchronous axon membrane systems. IEEE Trans. Emerg. Top. Comput. Intelligence. 4, 696–704. 10.1109/TETCI.2019.2907724 [DOI] [Google Scholar]

- Su M., Yang Q., Du Y., Feng G., Liu Z., Li Y., et al. (2018). Comparative assessment of scoring functions: the casf-2016 update. J. Chem. Inform. Model. 59, 895–913. 10.1021/acs.jcim.8b00545 [DOI] [PubMed] [Google Scholar]

- Wallach I., Dzamba M., Heifets A. (2015). Atomnet: a deep convolutional neural network for bioactivity prediction in structure-based drug discovery. arXiv [preprint]. arXiv:1510.02855. [Google Scholar]

- Zeng X., Song X., Ma T., Pan X., Zhou Y., Hou Y., et al. (2020b). Repurpose open data to discover therapeutics for covid-19 using deep learning. J Proteome Res. 11, 4624–4636. 10.1021/acs.jproteome.0c00316 [DOI] [PubMed] [Google Scholar]

- Zeng X., Zhu S., Lu W., Liu Z., Huang J., Zhou Y., et al. (2020a). Target identification among known drugs by deep learning from heterogeneous networks. Chem. Sci. 11, 1775–1797. 10.1039/C9SC04336E [DOI] [PMC free article] [PubMed] [Google Scholar]

- Zhao Y., Liu X., Sun W. (2019). A chain membrane model with application in cluster analysis. Int. J. Adapt Innovat. Syst. 2:324. 10.1504/IJAIS.2019.108417 [DOI] [Google Scholar]

- Zheng L., Fan J., Mu Y. (2019). Onionnet: a multiple-layer inter-molecular contact based convolutional neural network for protein-ligand binding affinity prediction. ACS Omega. 4:9b01997. 10.1021/acsomega.9b01997 [DOI] [PMC free article] [PubMed] [Google Scholar]

Associated Data

This section collects any data citations, data availability statements, or supplementary materials included in this article.

Data Availability Statement

Publicly available datasets were analyzed in this study. This data can be found here: CASF-2016 (https://pubs.acs.org/doi/10.1021/acs.jcim.8b00545).