Abstract

The problem of estimating high-dimensional network models arises naturally in the analysis of many biological and socio-economic systems. In this work, we aim to learn a network structure from temporal panel data, employing the framework of Granger causal models under the assumptions of sparsity of its edges and inherent grouping structure among its nodes. To that end, we introduce a group lasso regression regularization framework, and also examine a thresholded variant to address the issue of group misspecification. Further, the norm consistency and variable selection consistency of the estimates are established, the latter under the novel concept of direction consistency. The performance of the proposed methodology is assessed through an extensive set of simulation studies and comparisons with existing techniques. The study is illustrated on two motivating examples coming from functional genomics and financial econometrics.

Keywords: Granger causality, high dimensional networks, panel vector autoregression model, group lasso, thresholding

1. Introduction

We consider the problem of learning a directed network of interactions among a number of entities from time course data. A natural framework to analyze this problem uses the notion of Granger causality (Granger, 1969). Originally proposed by C.W. Granger, this notion provides a statistical framework, based on a series of statistical tests, for determining whether a time series X is useful in forecasting another one, Y. It has found wide applicability in economics, including testing relationships between money and income (Sims, 1972), government spending and taxes on economic output (Blanchard and Perotti, 2002), stock price and volume (Hiemstra and Jones, 1994), etc. More recently, the Granger causal framework has found diverse applications in the biological sciences, including functional genomics, systems biology and neuroscience, to understand the structure of gene regulation, protein-protein interactions and brain circuitry, respectively.

It should be noted that the concept of Granger causality is based on associations between time series, and only under very stringent conditions can true causal relationships be inferred (Pearl, 2000). Nonetheless, this framework provides a powerful tool for understanding the interactions among random variables based on time course data.

Network Granger causality (NGC) extends the notion of Granger causality between two variables to a wider class of p variables. Such extensions involving multiple time series are handled through the analysis of vector autoregressive (VAR) processes (Lütkepohl, 2005). Specifically, for p stationary time series $\{X_1^t\}, \ldots, \{X_p^t\}$, with $X^t = (X_1^t, \ldots, X_p^t)'$, one considers the class of models

$$X^t = A^1 X^{t-1} + A^2 X^{t-2} + \cdots + A^d X^{t-d} + \epsilon^t, \tag{1}$$

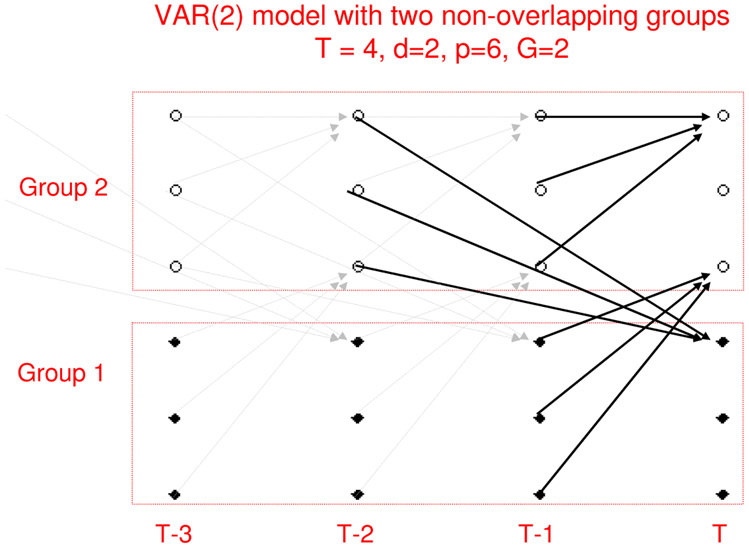

where $A^1, A^2, \ldots, A^d$ are p × p real-valued matrices, d is the unknown order of the VAR model and the innovation process satisfies $\epsilon^t \sim N(0, \sigma^2 I)$. In this model, the time series $\{X_j^t\}$ is said to be Granger causal for the time series $\{X_i^t\}$ if $A^h_{ij} \neq 0$ for some h = 1, … , d. Equivalently, there exists an edge $X_j^{t-h} \to X_i^t$ in the underlying network model comprising (d + 1) × p nodes (see Figure 1). We call $A^1, \ldots, A^d$ the adjacency matrices from lags 1, … , d. Note that the entries of the adjacency matrices are not binary indicators of the presence or absence of an edge between two nodes $X_j^{t-h}$ and $X_i^t$; rather, they represent the direction and strength of influence from one node to the other.
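To make model (1) and the edge definition concrete, the following Python sketch (our illustration, not code from this study) simulates n independent replicates of a VAR(d) process and reads the Granger causal edges off the non-zero entries of $A^1, \ldots, A^d$. The function names `simulate_panel_var` and `granger_edges`, the zero initial state and the burn-in length are hypothetical choices; stability of the supplied VAR is assumed, not checked.

```python
import numpy as np

def simulate_panel_var(A, n, T, sigma=1.0, burn_in=100, seed=0):
    """Simulate n independent replicates of X^t = A^1 X^{t-1} + ... + A^d X^{t-d} + eps^t.

    A is the list [A^1, ..., A^d] of p x p matrices (assumed stable).
    Returns an array of shape (T, n, p): one n x p observation matrix per time point.
    """
    rng = np.random.default_rng(seed)
    d, p = len(A), A[0].shape[0]
    X = np.zeros((burn_in + T, n, p))
    for t in range(d, burn_in + T):
        # rows are replicates, so each lag enters as X^{t-h} (A^h)'
        X[t] = sum(X[t - h] @ A[h - 1].T for h in range(1, d + 1))
        X[t] += sigma * rng.standard_normal((n, p))
    return X[burn_in:]  # drop the burn-in so the retained series is close to stationary

def granger_edges(A, tol=0.0):
    """Edges (j, i, h): series j Granger-causes series i at lag h, i.e. |A^h_{ij}| > tol (0-based)."""
    return {(int(j), int(i), h)
            for h, Ah in enumerate(A, start=1)
            for i, j in zip(*np.nonzero(np.abs(Ah) > tol))}

A = [np.array([[0.5, 0.0], [0.3, 0.0]]),   # A^1
     np.array([[0.0, 0.0], [0.0, 0.2]])]   # A^2
X = simulate_panel_var(A, n=5, T=4)
print(X.shape, sorted(granger_edges(A)))
```

Here series 0 influences itself and series 1 at lag 1, and series 1 influences itself at lag 2, so the implied network has edges from two different lags.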

Figure 1:

An example of a Network Granger causal model with two non-overlapping groups observed over T = 4 time points

The temporal structure induces a natural partial order among the nodes of this network, which in turn significantly simplifies the corresponding problem of estimating a directed acyclic graph (Shojaie and Michailidis, 2010a). Nevertheless, one still has to estimate a high-dimensional network (e.g., hundreds of genes) from a limited number of samples.

The traditional asymptotic framework for estimating VAR models requires observing a long, stationary realization $\{X^1, \ldots, X^T\}$ of the p-dimensional time series, with T → ∞ and p, d fixed. This is not appropriate in many biological applications, for two reasons. First, long stationary time series are rarely observed in these contexts. Second, when the number of time series p is large compared to T, consistent selection of the order d using standard criteria (e.g., AIC or BIC) becomes challenging. Similar issues arise in many econometric applications, where empirical evidence suggests a lack of stationarity over long time horizons, although the multivariate time series exhibits locally stable distributional properties.

A more suitable framework comes from the study of panel data, where one observes several replicates of the time series, with possibly short T, across a panel of n subjects. In biological applications, replicates are obtained from test subjects; in the analysis of macroeconomic variables, households or firms typically serve as replicates. After removing panel-specific fixed effects, one treats the replicates as independent samples, performs regression analysis under the assumption of a common slope structure and studies the asymptotic properties under the regime n → ∞. Recent works of Cao and Sun (2011) and Binder et al. (2005) analyze theoretical properties of short panel VARs in the low-dimensional setting (n → ∞, T, p fixed).

The focus of this work is on estimating a high-dimensional NGC model in the panel data context (p, n large, T small to moderate). This work is motivated by two application domains, functional genomics and financial econometrics. In the first application (presented in Section 6) one is interested in reconstructing a gene regulatory network structure from time course data, a canonical problem in functional genomics (Michailidis, 2012). The second motivating example examines the composition of balance sheets of the n = 50 largest US banks by size, over T = 9 quarterly periods, which provides insight into their risk profile.

The high dimensionality in these two examples comes not only from the need to estimate p² coefficients for each of the adjacency matrices A1, … , Ad, but also from the fact that the order d of the time series is often unknown. Thus, in practice, one must either "guess" the order of the time series (often, it is assumed that the data are generated from a VAR(1) model, which can result in significant loss of information) or include all past time points, which substantially increases the number of variables when d ⪡ T. Efficient estimation of the order of the time series is therefore crucial.

Latent-variable-based dimension reduction techniques, such as principal component analysis or factor models, are of limited use in this context, since our goal is to reconstruct a network among the observed variables. To achieve dimension reduction, we impose a group sparsity assumption on the structure of the adjacency matrices A1, … , Ad. In many applications, structural grouping information about the variables exists. For example, genes can be naturally grouped according to their function or chromosomal location, stocks according to their industry sectors, assets/liabilities according to their class, etc. This information can be incorporated into the Granger causality framework through a group lasso penalty. If the group specification is correct, it enables estimation of denser networks with limited sample sizes (Bach, 2008; Huang and Zhang, 2010; Lounici et al., 2011). However, the group lasso penalty can achieve model selection consistency only at the group level. In other words, if the groups are misspecified, this procedure cannot perform within-group variable selection (Huang et al., 2009), an important feature in many applications.

Over the past few years, several authors have adopted the framework of network Granger causality to analyze multivariate temporal data. For example, Fujita et al. (2007) and Lozano et al. (2009) employed NGC models coupled with penalized ℓ1 regression methods to learn gene regulatory mechanisms from time course microarray data. Specifically, Lozano et al. (2009) proposed to group all the past observations, using a variant of group lasso penalty, in order to construct a relatively simple Granger network model. This penalty takes into account the average effect of the covariates over different time lags and connects Granger causality to this average effect being significant. However, it suffers from significant loss of information and makes the consistent estimation of the signs of the edges difficult (due to averaging). Shojaie and Michailidis (2010b) proposed a truncating lasso approach by introducing a truncation factor in the penalty term, which strongly penalizes the edges from a particular time lag, if it corresponds to a highly sparse adjacency matrix.

Despite the recent use of NGC in applications involving high-dimensional data, the theoretical properties of the resulting estimators have not been fully investigated. For example, Lozano et al. (2009) and Shojaie and Michailidis (2010b) discuss asymptotic properties of the resulting estimators, but neither examines norm consistency in depth, nor the vector autoregressive structures under which the obtained results hold.

In this paper, we develop a general framework that accommodates different variants of group lasso penalties for NGC models. It allows for the simultaneous estimation of the order of the time series and the Granger causal effects; further, it allows for variable selection even when the groups are misspecified. In summary, the key contributions of this work are: (i) an in-depth investigation of sufficient conditions that explicitly take into consideration the structure of the VAR(d) model to establish norm consistency, (ii) the novel notion of direction consistency, which generalizes the concept of sign consistency and provides insight into the properties of group lasso estimates within a group, and (iii) an easy-to-compute thresholded variant of group lasso, based on the latter notion, that performs within-group variable selection in addition to group sparsity pattern selection, even when the group structure is misspecified.

All the obtained results are non-asymptotic in nature, and hence help provide insight into the properties of the estimates under different asymptotic regimes arising from varying growth rates of T, p, n, group sizes and the number of groups.

2. Model and Framework

Notation.

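Consider a VAR model

$$X^t = A^1 X^{t-1} + A^2 X^{t-2} + \cdots + A^d X^{t-d} + \epsilon^t, \qquad \epsilon^t \sim N(0, \sigma^2 I), \tag{2}$$

observed over T time points t = 1, … , T, across n panels. The index set of the variables $\mathbb{N}_p = \{1, \ldots, p\}$ can be partitioned into G non-overlapping groups $\mathcal{G}_1, \ldots, \mathcal{G}_G$, i.e., $\mathbb{N}_p = \bigcup_{g=1}^{G} \mathcal{G}_g$ and $\mathcal{G}_g \cap \mathcal{G}_{g'} = \emptyset$ if g ≠ g′. Also, $k_g = |\mathcal{G}_g|$ denotes the size of the gth group, with $k_{\max} = \max_{1 \le g \le G} k_g$. In general, we use λmin and λmax to denote the minimum and maximum of a finite collection of numbers λ1, … , λm.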

For any matrix A, we denote the ith row by Ai:, the jth column by A:j and the collection of rows (columns) corresponding to the gth group by A[g]: (A:[g]). The transpose of a matrix A is denoted by A′ and its Frobenius norm by ∥A∥F. For a symmetric/Hermitian matrix Σ, its minimum and maximum eigenvalues are denoted by Λmin(Σ) and Λmax(Σ), respectively. The symbol A1:h is used to denote the concatenated matrix [A1 : ⋯ : Ah], for any h > 0. For any matrix or vector D, ∥D∥0 denotes the number of non-zero coordinates in D. For notational convenience, we reserve the symbol ∥.∥ to denote the ℓ2 norm of a vector and/or the spectral norm of a matrix. For any vector β, we use βj to denote its jth coordinate and β[g] to denote the coordinates corresponding to the gth group. For a pre-defined set of non-overlapping groups on {1, … , p}, the mixed norms of vectors are defined as $\|v\|_{2,1} = \sum_{g=1}^{G} \|v_{[g]}\|$ and ∥v∥2,∞ = max1≤g≤G∥v[g]∥. We also use supp(v) to denote the support of v, i.e., supp(v) = {j ∈ {1, … , p} ∣ vj ≠ 0}.
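As a small illustration of this notation, the sketch below computes the two mixed norms and the support of a vector split into non-overlapping groups. It assumes the standard definition $\|v\|_{2,1} = \sum_{g} \|v_{[g]}\|$ for the first mixed norm and uses 0-based indices; the helper names are hypothetical.

```python
import numpy as np

def mixed_norms(v, groups):
    """Return (||v||_{2,1}, ||v||_{2,inf}) for non-overlapping groups given as index lists."""
    block_norms = [np.linalg.norm(v[np.asarray(idx)]) for idx in groups]
    return sum(block_norms), max(block_norms)

def support(v):
    """supp(v) = {j : v_j != 0}, with 0-based indices."""
    return {int(j) for j in np.flatnonzero(v)}

v = np.array([3.0, 4.0, 0.0, 1.0])
print(mixed_norms(v, [[0, 1], [2, 3]]))  # the two group norms are 5 and 1
print(support(v))
```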

Network Granger causal (NGC) estimates with group sparsity.

Consider n replicates from the NGC model (2), and denote the n × p observation matrix at time t by $\mathcal{X}^t$. In econometric applications, the data on p economic variables across n panels (firms, households, etc.) can be observed over T time points. For time course microarray data, one typically observes the expression levels of p genes across n subjects over T time points. After removing the panel-specific fixed effects, one assumes a common slope structure and independence across the panels. The data are high-dimensional if either T or p is large compared to n. In such a scenario, we assume the existence of an underlying group sparse structure, i.e., for every i = 1, … , p, the support of the ith row of A1:(T−1) = [A1 : ⋯ : AT−1] in model (2) can be covered by a small number si of groups, where si ⪡ (T − 1)G. Note that the groups can be misspecified, in the sense that the coordinates of a group covering the support need not all be non-zero. Hence, for a properly specified group structure we shall expect . On the contrary, with many misspecified groups, si can be of the same order, or even larger than .

Learning the network of Granger causal effects $\{(i, j) \in \{1, \ldots, p\}^2 : A^t_{ij} \neq 0 \text{ for some } t\}$ is equivalent to recovering the correct sparsity pattern in A1:(T−1) and consistently estimating the non-zero effects $A^t_{ij}$. In high-dimensional regression problems, this is achieved by operators such as lasso and group lasso that perform regularization and selection simultaneously. The group Granger causal estimates of the adjacency matrices A1, … , AT−1 are obtained by solving the following optimization problem

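$$(\hat{A}^1, \ldots, \hat{A}^{T-1}) = \operatorname*{arg\,min}_{A^1, \ldots, A^{T-1} \in \mathbb{R}^{p \times p}} \frac{1}{n} \Big\| \mathcal{X}^T - \sum_{t=1}^{T-1} \mathcal{X}^{T-t} (A^t)' \Big\|_F^2 + \lambda \sum_{t=1}^{T-1} \sum_{i=1}^{p} \sum_{g=1}^{G} w^t_{i,g} \, \|A^t_{i[g]}\|, \tag{3}$$

where $\mathcal{X}^t$ is the n × p observation matrix at time t, constructed by stacking n replicates from model (2), $A^t_{i[g]}$ denotes the entries of the ith row of $A^t$ in the columns indexed by $\mathcal{G}_g$, $w^t = (w^t_{i,g})$ is a p × G matrix of suitably chosen weights and λ is a common regularization parameter. The optimization problem can be separated into the following p penalized regression problems, one for each i = 1, … , p, where $\theta^t$ plays the role of $(A^t_{i:})'$:

$$(\hat{\theta}^1, \ldots, \hat{\theta}^{T-1}) = \operatorname*{arg\,min}_{\theta^1, \ldots, \theta^{T-1} \in \mathbb{R}^{p}} \frac{1}{n} \Big\| \mathcal{X}^T_{:i} - \sum_{t=1}^{T-1} \mathcal{X}^{T-t} \theta^t \Big\|^2 + \lambda \sum_{t=1}^{T-1} \sum_{g=1}^{G} w^t_{i,g} \, \|\theta^t_{[g]}\|. \tag{4}$$

The order d of the VAR model is estimated as $\hat{d} = \max\{t : \hat{A}^t \neq 0\}$.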

Different choices of weights lead to different variants of NGC estimates. The regular NGC estimates correspond to the choices $w^t_{i,g} = 1$ or $w^t_{i,g} = \sqrt{k_g}$, while for adaptive group NGC estimates the weights are chosen as $w^t_{i,g} = \|\hat{A}^t_{i[g]}\|^{-1}$, where $\hat{A}^t$ are obtained from a regular NGC estimation. For $\|\hat{A}^t_{i[g]}\| = 0$, the weight is infinite, which is interpreted as discarding the variables in group g (at lag t) from the optimization problem.
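A minimal sketch of the ith regression in (4) follows. It assumes a squared-error loss scaled by 1/n and solves the problem by proximal gradient descent with group soft-thresholding, one standard algorithm for group lasso (the simulations in Section 5 used the R package grpreg instead). The names `ngc_row`, `estimate_order` and `adaptive_weights`, the fixed iteration count and the absence of a convergence check are our simplifications.

```python
import numpy as np

def group_soft_threshold(z, thresh):
    """Proximal map of thresh * ||.||: zero the block or shrink it toward the origin."""
    norm = np.linalg.norm(z)
    if not np.isfinite(thresh) or norm <= thresh:  # infinite weight discards the group
        return np.zeros_like(z)
    return (1.0 - thresh / norm) * z

def ngc_row(y, X_lags, groups, lam, weights=None, n_iter=500):
    """Group lasso for one node i (sketch of problem (4)).

    y       : (n,) response, column i of the observation matrix at time T
    X_lags  : [X^{T-1}, ..., X^1], each n x p, so block t pairs with A^t
    groups  : list of index lists partitioning {0, ..., p-1}
    weights : (T-1) x G array of w^t_{i,g} (defaults to all ones)
    Returns a (T-1) x p array whose row t-1 estimates row i of A^t.
    """
    n, p = X_lags[0].shape
    n_lags = len(X_lags)
    X = np.hstack(X_lags)  # common n x (T-1)p design matrix
    w = np.ones((n_lags, len(groups))) if weights is None else np.asarray(weights)
    step = n / (2 * np.linalg.norm(X, 2) ** 2)  # 1 / Lipschitz constant of the loss gradient
    theta = np.zeros(n_lags * p)
    for _ in range(n_iter):
        # gradient step on (1/n)||y - X theta||^2
        z = theta + step * (2.0 / n) * (X.T @ (y - X @ theta))
        # proximal step, separable over the (lag, group) blocks
        for t in range(n_lags):
            for g, idx in enumerate(groups):
                cols = t * p + np.asarray(idx)
                theta[cols] = group_soft_threshold(z[cols], step * lam * w[t, g])
    return theta.reshape(n_lags, p)

def estimate_order(A_hat, tol=0.0):
    """d_hat = largest lag t with a non-zero estimate (0 if every lag is empty)."""
    return max((t for t, At in enumerate(A_hat, start=1) if np.abs(At).max() > tol), default=0)

def adaptive_weights(theta_hat, groups):
    """w^t_{i,g} = 1 / ||theta_hat^t_[g]||, infinite for groups estimated as zero."""
    norms = np.array([[np.linalg.norm(row[np.asarray(idx)]) for idx in groups]
                      for row in theta_hat])
    with np.errstate(divide="ignore"):
        return 1.0 / norms
```

For the adaptive variant, one would call `ngc_row` a second time with `weights=adaptive_weights(theta_hat, groups)`; groups zeroed in the first pass then stay at zero.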

Thresholded NGC estimates are calculated by a two-stage procedure. The first stage involves a regular NGC estimation procedure. The second stage applies a bi-level thresholding strategy to the estimates $\hat{A}^t_{ij}$. First, estimated groups $\hat{A}^t_{i[g]}$ with ℓ2 norm less than a threshold δgrp = cλ (c > 0) are set to zero. The second level of thresholding (within groups) is applied if the a priori available grouping information is not entirely reliable: an estimate $\hat{A}^t_{ij}$ within an estimated group $\hat{A}^t_{i[g]}$ is thresholded to zero if $|\hat{A}^t_{ij}| / \|\hat{A}^t_{i[g]}\|$ is less than a threshold δmisspec ∈ (0, 1). So, for every t = 1, … , T − 1, if $j \in \mathcal{G}_g$, the thresholded NGC estimates are

$$\tilde{A}^t_{ij} = \hat{A}^t_{ij} \; \mathbf{1}\big\{\|\hat{A}^t_{i[g]}\| \ge \delta_{grp}\big\} \; \mathbf{1}\big\{|\hat{A}^t_{ij}| \ge \delta_{misspec} \, \|\hat{A}^t_{i[g]}\|\big\}.$$

The tuning parameters δgrp and δmisspec are chosen via cross-validation. The rationale behind this thresholding strategy is discussed in Section 4.
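The bi-level rule can be sketched as follows for a single node i, taking as input the (T − 1) × p array of regular NGC estimates for that node (row t − 1 holding row i of $\hat{A}^t$). The thresholds δgrp and δmisspec are passed in directly; how they are tuned is outside the sketch, and the function name is hypothetical.

```python
import numpy as np

def threshold_ngc(theta_hat, groups, delta_grp, delta_misspec=None):
    """Bi-level thresholding of regular NGC estimates for one node i.

    theta_hat     : (T-1) x p array; row t-1 holds the estimate of row i of A^t
    groups        : list of index lists partitioning {0, ..., p-1}
    delta_grp     : groups with l2 norm below this value are set to zero
    delta_misspec : if given, entry j of a retained group is zeroed when
                    |theta_j| / ||theta_[g]|| < delta_misspec
    """
    out = np.array(theta_hat, dtype=float)  # work on a copy
    for row in out:                          # row is a view, so edits write into `out`
        for idx in groups:
            idx = np.asarray(idx)
            block = row[idx]                 # fancy indexing returns a copy
            norm = np.linalg.norm(block)
            if norm < delta_grp:
                row[idx] = 0.0               # group-level thresholding
            elif delta_misspec is not None:
                block[np.abs(block) < delta_misspec * norm] = 0.0  # within-group thresholding
                row[idx] = block
    return out
```

Dividing by the group norm makes the second threshold act on the coordinates of the estimated direction vector, which is why a single δmisspec can be shared across groups of very different magnitudes.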

3. Estimation Consistency of NGC estimates

In this section, we establish the norm consistency of regular group NGC estimates. The regular NGC estimates in (3) are obtained by solving p separate group lasso programs with the common design matrix $X = [\mathcal{X}^{T-1} : \cdots : \mathcal{X}^{1}]$. This design matrix has (T − 1)p columns, which can be partitioned into (T − 1)G groups, one for each lag t and each group $\mathcal{G}_g$. We denote the sample Gram matrix by $\hat{\Sigma} = X'X/n$. For the ith optimization problem, these groups are penalized by $\lambda w^t_{i,g}$, 1 ≤ t ≤ T − 1, 1 ≤ g ≤ G, with the choice of weights described in Section 2. Following Lounici et al. (2011), one can establish a non-asymptotic upper bound on the ℓ2 estimation error of the NGC estimates under certain restricted eigenvalue (RE) assumptions. These assumptions are common in the high-dimensional regression literature (Lounici et al., 2011; Bickel et al., 2009; van de Geer and Bühlmann, 2009) and are known to be sufficient to guarantee consistent estimation of the regression coefficients even when the design matrix is singular. The main interest, however, lies in investigating the validity of these assumptions in the context of NGC models, which is addressed in Proposition 3.2.

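For L > 0, we say that a Restricted Eigenvalue (RE) assumption RE(s, L) is satisfied if there exists a positive number ϕRE = ϕRE(s) > 0 such that

$$\min \left\{ \frac{\|X\Delta\|}{\sqrt{n}\, \|\Delta_{[J]}\|} \; : \; |J| \le s, \; \Delta \neq 0, \; \sum_{g \notin J} \lambda_g \|\Delta_{[g]}\| \le L \sum_{g \in J} \lambda_g \|\Delta_{[g]}\| \right\} \ge \phi_{RE}, \tag{5}$$

where J ranges over sets of at most s groups of the design, $\Delta_{[J]}$ collects the coordinates of Δ in the groups of J, and λg denotes the penalty attached to group g.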

The following proposition provides a non-asymptotic upper bound on the ℓ2-estimation error of the group NGC estimates under RE assumptions. The proof follows along the lines of Lounici et al. (2011) and is delegated to Appendix C.

Proposition 3.1 Consider a regular NGC estimation problem (4) with smax = max1≤i≤p si and . Suppose λ in (3) is chosen large enough so that for some α > 0,

| (6) |

Also assume that the common design matrix in the p regression problems (4) satisfies RE(2smax, 3). Then, with probability at least ,

| (7) |

Remark. Consider a high-dimensional asymptotic regime where for some B > 0, kmax/kmin = O(1), $s = O(n^{a_1})$ and $k_{\max} = O(n^{a_2})$ with 0 < a1, a2 < a1 + a2 < 1, so that the total number of non-zero effects is o(n). If are bounded above (often accomplished by standardizing the data) and is bounded away from zero (see Proposition 3.2 for more details), then the NGC estimates are norm consistent for any choice of α > 2 + a2/B.

Note that group lasso achieves faster convergence rate (in terms of estimation and prediction error) than lasso if the groups are appropriately specified. For example, if all the groups are of equal size k and λg = λ for all g, then group lasso can achieve an ℓ2 estimation error of order . In contrast, lasso’s error is known to be of the order , which establishes that group lasso has a lower error bound if s ⪡ ∥A1:d∥0. On the other hand, lasso will have a lower error bound if s ≍ ∥A1:d∥0, i.e., if the groups are highly misspecified.

Validity of RE assumption in Group NGC problems.

In view of Proposition 3.1, it is important to understand how stringent the RE condition is in the context of NGC problems. It is also important to find a lower bound on the RE coefficient ϕRE, as it affects the convergence rate of the NGC estimates. For the panel-VAR setting, we can rigorously establish that the RE condition holds with overwhelming probability, as long as n and p grow at the same rate required for ℓ2-consistency.

The following proposition achieves this objective in two steps. Note that each row of the design matrix X (common across the p regressions) is independently distributed as N(0, Σ), where Σ is the variance-covariance matrix of the (T − 1)p-dimensional random variable ((X1)′, … , (XT−1)′)′. First, we exploit the spectral representation of the stationary VAR process to provide a lower bound on the minimum eigenvalue of Σ. In the next step, we establish a suitable deviation bound between the sample Gram matrix X′X/n and Σ, to prove that X satisfies the RE condition with high probability for sufficiently large n.

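Proposition 3.2 (a) Suppose the VAR(d) model of (2) is stable and stationary. Let Σ be the variance-covariance matrix of the (T−1)p-dimensional random variable $((X^1)', \ldots, (X^{T-1})')'$. Then the minimum eigenvalue of Σ satisfies

$$\Lambda_{\min}(\Sigma) \;\ge\; \frac{\sigma^2}{\max_{|z|=1} \Lambda_{\max}\big(\mathcal{A}^*(z)\, \mathcal{A}(z)\big)} \;\ge\; \frac{\sigma^2}{\big(1 + (v_{in} + v_{out})/2\big)^2},$$

where $\mathcal{A}(z) = I - \sum_{t=1}^{d} A^t z^t$ is the reverse characteristic polynomial of the VAR(d) process, and vin, vout are the maximum incoming and outgoing effects at a node, cumulated across different lags:

$$v_{in} = \max_{1 \le i \le p} \sum_{t=1}^{d} \sum_{j=1}^{p} |A^t_{ij}|, \qquad v_{out} = \max_{1 \le j \le p} \sum_{t=1}^{d} \sum_{i=1}^{p} |A^t_{ij}|.$$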

(b) In addition, suppose the replicates from different panels are i.i.d. Then, for any s > 0, there exist universal positive constants ci such that if the sample size n satisfies

then X satisfies RE(s, L) with with probability at least 1 − c2 exp(−c3 n).

Remark. Proposition 3.2 has two interesting consequences. First, it provides a lower bound on the RE constant ϕRE which is independent of T. So if the high dimensionality in the Granger causal network arises only from the time domain and not the cross-section (T → ∞, p, G fixed), the stationarity of the VAR process guarantees that the rate of convergence depends only on the true order (d), and not T. Second, this result shows that the NGC estimates are consistent even if the node capacities vin and vout grow with n, p at an appropriate rate.
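Part (a) of Proposition 3.2 can be checked numerically in the simplest case d = 1, where the reverse characteristic polynomial is $\mathcal{A}(z) = I - A^1 z$. The sketch below assumes a stable VAR(1) with $\epsilon^t \sim N(0, \sigma^2 I)$, builds Σ for m = T − 1 consecutive time points from the stationary autocovariances $\Gamma(h) = (A^1)^h \Gamma(0)$, and compares $\Lambda_{\min}(\Sigma)$ with the spectral bound $\sigma^2 / \max_{|z|=1} \Lambda_{\max}(\mathcal{A}^*(z)\mathcal{A}(z))$ (the maximum is approximated on a grid of the unit circle) and with the cruder bound $\sigma^2/(1 + (v_{in} + v_{out})/2)^2$, taking vin and vout as the largest absolute row and column sums of $A^1$. The example matrix and helper names are hypothetical.

```python
import numpy as np

def var1_block_covariance(A, sigma2, m):
    """Covariance of (X^1', ..., X^m')' for a stable VAR(1) X^t = A X^{t-1} + eps^t, eps^t ~ N(0, sigma2 I)."""
    p = A.shape[0]
    # Gamma(0) solves Gamma(0) = A Gamma(0) A' + sigma2 I, i.e. (I - A kron A) vec(Gamma(0)) = vec(sigma2 I)
    gamma0 = np.linalg.solve(np.eye(p * p) - np.kron(A, A), sigma2 * np.eye(p).ravel()).reshape(p, p)
    Sigma = np.empty((m * p, m * p))
    for r in range(m):
        for c in range(m):
            G = np.linalg.matrix_power(A, abs(r - c)) @ gamma0  # Gamma(|r - c|) = A^{|r-c|} Gamma(0)
            Sigma[r * p:(r + 1) * p, c * p:(c + 1) * p] = G if r >= c else G.T
    return Sigma

def lambda_min_bounds(A, sigma2, n_grid=2001):
    """Spectral bound sigma2 / max_{|z|=1} Lambda_max(A*(z) A(z)) and the (v_in, v_out) bound."""
    p = A.shape[0]
    max_eig = 0.0
    for theta in np.linspace(-np.pi, np.pi, n_grid):  # grid approximation of the unit circle
        Az = np.eye(p) - A * np.exp(1j * theta)         # reverse characteristic polynomial at z = e^{i theta}
        max_eig = max(max_eig, np.linalg.eigvalsh(Az.conj().T @ Az).max())
    v_in = np.abs(A).sum(axis=1).max()    # largest total incoming effect (row sums)
    v_out = np.abs(A).sum(axis=0).max()   # largest total outgoing effect (column sums)
    return sigma2 / max_eig, sigma2 / (1 + (v_in + v_out) / 2) ** 2

A = np.array([[0.5, 0.2, 0.0], [0.0, 0.4, 0.3], [0.1, 0.0, 0.3]])
spec_bound, v_bound = lambda_min_bounds(A, sigma2=1.0)
for m in (1, 2, 4, 8):  # m plays the role of T - 1
    lam_min = np.linalg.eigvalsh(var1_block_covariance(A, 1.0, m)).min()
    print(m, lam_min, spec_bound, v_bound)
```

Neither lower bound involves m, which mirrors the first consequence noted in the remark: the bound on the RE constant does not deteriorate as T grows.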

4. Variable Selection Consistency of NGC estimates

In view of (4), to study the variable selection properties of NGC estimates it suffices to analyze the variable selection properties of p generic group lasso estimates with a common design matrix.

The problem of group sparsity selection has been thoroughly investigated in the literature (Wei and Huang, 2010; Lounici et al., 2011). The issue of selection and sign consistency within a group, however, is still unclear. Since group lasso does not impose sparsity within a group, all the group members are selected together (Huang et al., 2009) and it is not clear which ones are recovered with correct signs. This also leads to inconsistent variable selection if a group is misspecified, i.e., not all the members within a group have non-zero effect. Several alternate penalized regression procedures have been proposed to overcome this shortcoming (Breheny and Huang, 2009; Huang et al., 2009). The main idea behind these procedures is to combine ℓ2 and ℓ1 norms in the penalty to encourage sparsity at both group and variable level. These estimators involve nonconvex optimization problems and are computationally expensive. Also their theoretical properties in a high dimensional regime are not well studied.

We take a different approach to deal with the issue of group misspecification. Although the group lasso penalty does not perform exact variable selection within groups, it performs regularization and shrinks the individual coefficients. We utilize this regularization to detect misspecification within a group. To this end, we formulate a generalized notion of sign consistency, henceforth referred as “direction consistency”, that provides insight into the properties of group lasso estimates within a single group. Subsequently, these properties are used to develop a simple, easy to compute, thresholded variant of group lasso which, in addition to group selection, achieves variable selection and sign consistency within groups.

We consider a generic group lasso regression problem of the linear model y = Xβ0 + ϵ with p variables partitioned into G non-overlapping groups of size kg, g = 1, … , G. Without loss of generality, we assume that $\beta^0_{[g]} \neq 0$ for g ∈ S = {1, 2, … , s} and $\beta^0_{[g]} = 0$ for all g ∉ S, and consider the following group lasso estimate of β0:

| (8) |

| (9) |

| (10) |

Direction Consistency.

For an m-dimensional vector τ ≠ 0, define its direction vector D(τ) = τ/∥τ∥, and set D(0) = 0. In the context of a generic group lasso regression (10), for a group g ∈ S of size kg, $D(\beta^0_{[g]})$ indicates the direction of influence of $\beta^0_{[g]}$ at the group level, in the sense that it reflects the relative importance of the influential members within the group. Note that for kg = 1, the function D(·) reduces to the usual sgn(·) function.

Definition.

An estimate of a generic group lasso problem (8) is direction consistent at a rate δn, if there exists a sequence of positive real numbers δn → 0 such that

| (11) |

Now suppose $\hat{\beta}$ is a direction consistent estimator. Consider the set $\mathcal{S}^{(n)}_g = \{j \in \mathcal{G}_g : |D(\beta^0_{[g]})_j| > \delta_n\}$, where $D(\beta^0_{[g]})_j$ is the coordinate of $D(\beta^0_{[g]})$ corresponding to variable j. The set $\mathcal{S}^{(n)}_g$ can be viewed as the collection of influential members within a group g ∈ S that are "detectable" with a sample of size n. Then, it readily follows from the definition that

$$P\left( \mathrm{sgn}(\hat{\beta}_j) = \mathrm{sgn}(\beta^0_j) \text{ for all } j \in \mathcal{S}^{(n)}_g, \; g \in S \right) \to 1. \tag{12}$$

The latter observation connects the precision of group lasso estimates to the accuracy of the a priori available grouping information. In particular, if the pre-specified grouping structure is correct, i.e., all the members within a group have non-zero effects, then for a sufficiently large sample size we have $\mathcal{S}^{(n)}_g = \mathcal{G}_g$ for all g ∈ S. Hence, if the group lasso estimate is direction consistent, it will correctly estimate the sign of all the variables in the support. On the other hand, in the case of a misspecified a priori grouping structure (numerous zero coordinates in $\beta^0_{[g]}$ for g ∈ S), group lasso will correctly estimate only the signs of the influential group members. This argument on zero vs. non-zero effects can be generalized to strong vs. weak effects as well.
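The step from direction consistency to (12) is elementary: if $|D(\beta^0_{[g]})_j| > \delta_n$ and $\|D(\hat{\beta}_{[g]}) - D(\beta^0_{[g]})\| < \delta_n$, then coordinate j of $D(\hat{\beta}_{[g]})$ cannot cross zero. The sketch below checks this on a hypothetical group with one strong, one weak and one zero coefficient, taking the detectable set to be $\{j : |D(\beta^0_{[g]})_j| > \delta_n\}$ with 0-based positions; the estimate and δn are made up for illustration.

```python
import numpy as np

def direction(tau):
    """Direction vector D(tau) = tau / ||tau||, with the convention D(0) = 0."""
    tau = np.asarray(tau, dtype=float)
    norm = np.linalg.norm(tau)
    return np.zeros_like(tau) if norm == 0 else tau / norm

def detectable_set(beta0_g, delta_n):
    """Positions j in the group with |D(beta0_g)_j| > delta_n (assumed form of the detectable set)."""
    return {int(j) for j in np.flatnonzero(np.abs(direction(beta0_g)) > delta_n)}

beta0_g = np.array([3.0, 0.2, 0.0])       # hypothetical true group: strong, weak, zero
beta_hat_g = np.array([2.5, 0.3, -0.05])  # hypothetical group lasso estimate
delta_n = 0.1
gap = np.linalg.norm(direction(beta_hat_g) - direction(beta0_g))
S = detectable_set(beta0_g, delta_n)
# When gap < delta_n, every detectable member has the correct estimated sign.
print(gap < delta_n, S, all(np.sign(beta_hat_g[j]) == np.sign(beta0_g[j]) for j in S))
```

Only the strong member is detectable here; the zero member receives a small spurious negative estimate, which is exactly the kind of coordinate the within-group threshold is meant to remove.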

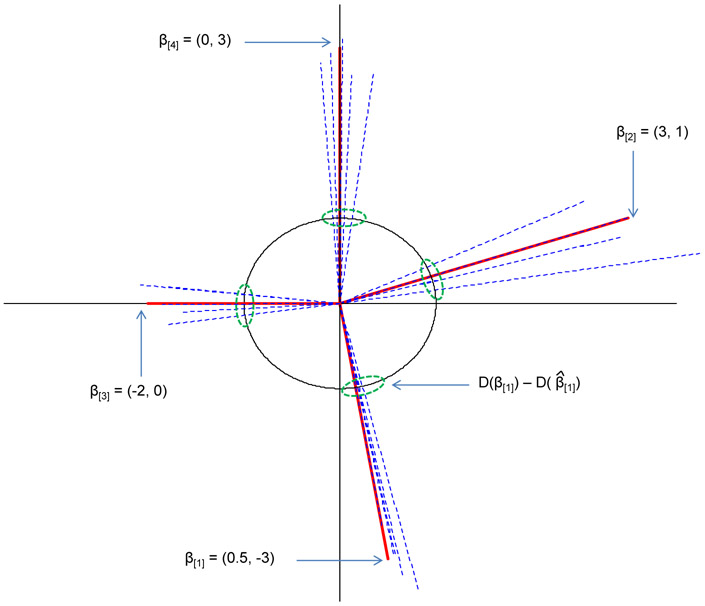

Example. We demonstrate the property of direction consistency using a small example. Consider a linear model with 8 predictors

The coefficient vector β0 is partitioned into four groups of size 2, viz., (0.5, −3), (3, 1), (−2, 0) and (0, 3). The last two groups are misspecified. We generated n = 25 samples from this model and ran group lasso regression with the above group structure. Figure 2 shows the true coefficient vectors (solid) and their estimates (dashed) from five iterations of the above exercise. Note that even though the ℓ2 errors between $\hat{\beta}_{[g]}$ and $\beta^0_{[g]}$ vary widely across the four groups, the distances between their projections on the unit circle, $\|D(\hat{\beta}_{[g]}) - D(\beta^0_{[g]})\|$, are comparatively stable across groups. In fact, Theorem 4.1 shows that under certain irrepresentable conditions (IC) on the design matrix, it is possible to find a uniform (over all g ∈ S) upper bound δn on the ℓ2 gap between these direction vectors. This motivates a natural thresholding strategy to correct for misspecification in groups (cf. Theorem 4.2). Even when a group is misspecified (i.e., $\beta^0_{[g]}$ lies on a coordinate axis), direction consistency ensures, with high probability, that the coordinate of $D(\hat{\beta}_{[g]})$ corresponding to the zero coefficient is smaller in absolute value than a threshold δn that is common across all groups in the support.

Figure 2:

Example demonstrating direction consistency
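The exercise behind Figure 2 can be imitated with the sketch below. The text does not specify the design, the noise distribution or λ, so we assume i.i.d. standard normal predictors, unit-variance Gaussian noise and λ = 0.5 under the objective (1/n)∥y − Xβ∥² + λ Σg ∥β[g]∥, solved by proximal gradient descent. The numbers it produces will not reproduce Figure 2; the point is to compare, across groups, the spread of the ℓ2 errors with that of the direction gaps.

```python
import numpy as np

def group_lasso(X, y, groups, lam, n_iter=2000):
    """Proximal-gradient group lasso for (1/n)||y - X b||^2 + lam * sum_g ||b_[g]|| (assumed scaling)."""
    n, p = X.shape
    step = n / (2 * np.linalg.norm(X, 2) ** 2)  # 1 / Lipschitz constant of the gradient
    b = np.zeros(p)
    for _ in range(n_iter):
        z = b + step * (2.0 / n) * (X.T @ (y - X @ b))
        for idx in groups:
            norm = np.linalg.norm(z[idx])
            # group soft-thresholding: zero the block or shrink it toward the origin
            b[idx] = 0.0 if norm <= step * lam else (1.0 - step * lam / norm) * z[idx]
    return b

def direction(tau):
    norm = np.linalg.norm(tau)
    return np.zeros_like(tau) if norm == 0 else tau / norm

def direction_example(n=25, lam=0.5, n_rep=5, seed=0):
    """Per replicate and group: (l2 error, direction gap ||D(b_hat_g) - D(beta0_g)||)."""
    beta0 = np.array([0.5, -3.0, 3.0, 1.0, -2.0, 0.0, 0.0, 3.0])
    groups = [np.arange(2 * g, 2 * g + 2) for g in range(4)]
    rng = np.random.default_rng(seed)
    out = np.empty((n_rep, len(groups), 2))
    for r in range(n_rep):
        X = rng.standard_normal((n, beta0.size))  # assumed N(0, I) design
        y = X @ beta0 + rng.standard_normal(n)    # assumed unit-variance noise
        b = group_lasso(X, y, groups, lam)
        for g, idx in enumerate(groups):
            out[r, g] = (np.linalg.norm(b[idx] - beta0[idx]),
                         np.linalg.norm(direction(b[idx]) - direction(beta0[idx])))
    return out

res = direction_example()
print(res[..., 0].std(axis=0))  # spread of l2 errors, per group
print(res[..., 1].std(axis=0))  # spread of direction gaps, per group
```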

Group Irrepresentable Conditions (IC).

Next, we define the IC required for direction consistency of group lasso estimates. Irrepresentable conditions are common in the literature on high-dimensional regression problems (Zhao and Yu, 2006; van de Geer and Bühlmann, 2009) and have been shown to be sufficient (and essentially necessary) for selection consistency of lasso estimates. Further, these conditions are known to be satisfied with high probability if the population analogue of the Gram matrix belongs to the Toeplitz family (Zhao and Yu, 2006; Wainwright, 2009). In NGC estimation, the population analogue of the Gram matrix, Σ = Var(X1:(T−1)), is block Toeplitz, so the irrepresentable assumptions are natural candidates for studying selection consistency of the estimates. Consider the notation of (8) and (10). Define K = diag(λ1Ik1, λ2Ik2, … , λsIks).

Uniform Irrepresentable Condition (IC) is satisfied if there exists 0 < η < 1 such that for all with ,

| (13) |

Note that the definition reverts to the usual IC for lasso when all groups are singletons.

The IC is more stringent than the RE condition and is rarely met if the underlying model is not sparse. It can be shown that a slightly weaker version of this condition is necessary for direction consistency. We refer the readers to Appendix D for further discussion on the different irrepresentable assumptions and their properties. Numerical evidence suggests that the group IC tends to be less stringent than the IC required for the selection consistency of lasso. We illustrate this using three small simulated examples.

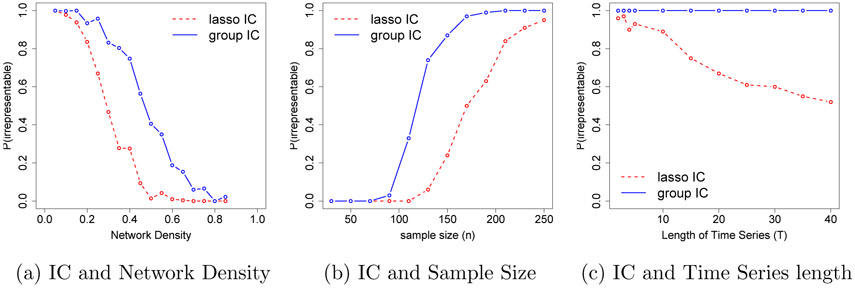

Simulation 1. We constructed group sparse NGC models with T = 5, p = 21, G = 7, kg = 3 and different levels of network densities, where the network edges were selected at random and scaled so that ∥A1∥ = 0.1. For each of these models, we generated 100 samples of size n = 150 and calculated the proportions of times the two types of irrepresentable conditions were met. The results are displayed in Figure 3a.

Figure 3:

Comparison of lasso and group irrepresentable conditions in the context of group sparse NGC models. (a) group ICs tend to be met for dense networks where lasso IC fails to meet. (b) For the same network group IC is met with smaller sample size than required by lasso. (c) For longer time series group IC is satisfied more often than lasso IC.

Simulation 2. We selected a VAR(1) model from the above class and generated samples of size n = 20, 50, … , 250. Figure 3b displays the proportions of times (based on 100 simulations) the two ICs were met.

Simulation 3. We generated n = 200 samples from the VAR(1) model of Simulation 2 for T = 2, 3, 4, 5, 10, … , 40. Figure 3c displays the proportion of times (based on 100 simulations) the two ICs were met.
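To make the comparison concrete, the sketch below evaluates two irrepresentable-type quantities on a given Gram matrix C. For lasso it uses the strong irrepresentable condition of Zhao and Yu (2006), $\|C_{S^cS}C_{SS}^{-1}\mathrm{sgn}(\beta^0_S)\|_\infty < 1$. For groups it evaluates, with equal weights, $\max_{g \notin S} \|C_{gS}C_{SS}^{-1}D_S\| < 1$, where $D_S$ stacks the direction vectors $D(\beta^0_{[g]})$ of the active groups. This group quantity is a non-uniform analogue chosen for illustration; it is not the uniform condition (13), which must hold over a whole set of vectors. The 3-variable Gram matrix in the usage lines is hypothetical.

```python
import numpy as np

def lasso_ic(C, beta0):
    """Strong irrepresentable quantity ||C_{S^c S} C_{SS}^{-1} sgn(beta_S)||_inf (condition met if < 1)."""
    S, Sc = np.flatnonzero(beta0 != 0), np.flatnonzero(beta0 == 0)
    v = C[np.ix_(Sc, S)] @ np.linalg.solve(C[np.ix_(S, S)], np.sign(beta0[S]))
    return np.abs(v).max()

def group_ic(C, beta0, groups):
    """Equal-weight group analogue max_{g not in S} ||C_{gS} C_{SS}^{-1} D_S|| (condition met if < 1)."""
    active = [idx for idx in groups if np.any(beta0[idx] != 0)]
    inactive = [idx for idx in groups if not np.any(beta0[idx] != 0)]
    S = np.concatenate(active)
    # stack the direction vectors D(beta0_[g]) of the active groups
    D_S = np.concatenate([beta0[idx] / np.linalg.norm(beta0[idx]) for idx in active])
    u = np.linalg.solve(C[np.ix_(S, S)], D_S)
    return max(np.linalg.norm(C[np.ix_(idx, S)] @ u) for idx in inactive)

r = 0.6
C = np.array([[1.0, 0.0, r], [0.0, 1.0, r], [r, r, 1.0]])  # hypothetical Gram matrix
beta0 = np.array([1.0, 1.0, 0.0])
print(lasso_ic(C, beta0))                 # equals 2r = 1.2 here, so the lasso IC fails
print(group_ic(C, beta0, [[0, 1], [2]]))  # equals sqrt(2) r, about 0.85, so the group analogue holds
```

In this toy case, grouping the two active variables averages their correlations with the inactive one, which is one intuition for why group ICs can be met where the lasso IC fails.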

Selection consistency for generic group lasso estimates.

For simplicity, we discuss the selection consistency properties of a generic group lasso regression problem with a common tuning parameter across groups, i.e., λg = λ for every g = 1, … , G. Similar results can be obtained for more general choices of the tuning parameters.

Theorem 4.1 Assume that the group uniform IC holds with 1 − η for some η > 0. Then, for any choice of α > 0,

with probability greater than $1 - 4G^{1-\alpha}$, there exists a solution satisfying

1. $\hat{\beta}_{[g]} = 0$ for all g ∉ S,

2. , and hence , for all g ∈ S. If δn < 1, then for all g ∈ S.

Remark. The tuning parameter λ can be chosen of the same order as required for ℓ2 consistency to achieve selection consistency within groups in the sense of (12). Further, with the above choice of λ, δn can be chosen of the order of . Thus, group lasso correctly identifies the group sparsity pattern and is direction consistent if , the same scaling required for ℓ2 consistency.

Thresholding in Group NGC estimators.

As described in Section 2, regular group NGC estimates can be thresholded both at the group and coordinate levels. The first level of thresholding is motivated by the fact that lasso can select too many false positives [cf. van de Geer et al. (2011), Zhou (2010) and the references therein]. The second level of thresholding employs the direction consistency of regular group NGC estimates to perform within-group variable selection with high probability. The following theorem demonstrates the benefit of these two types of thresholding. The second result is an immediate corollary of Theorem 4.1. The proof of the first result (thresholding at the group level) requires some additional notation and is deferred to Appendix E.

Theorem 4.2 Consider a generic group lasso regression problem (8) with common tuning parameter λg = λ.

(i) Assume the RE(s, 3) condition of (5) holds with a constant ϕRE and define . If , then , with probability at least $1 - 2G^{1-\alpha}$.

(ii) Assume that uniform IC holds with 1 − η for some η > 0. Choose λ and δn as in Theorem 4.1 and define

Then, with probability at least $1 - 4G^{1-\alpha}$, if for all , i.e., if the effect of every non-zero member in a group is "visible" relative to the total effect from the group.

5. Performance Evaluation

We evaluate the performances of regular, adaptive and thresholded variants of the group NGC estimators through an extensive simulation study, and compare the results to those obtained from lasso estimates. The R package grpreg (Breheny and Huang, 2009) was used to obtain the group lasso estimates. The settings considered are:

(a) Balanced groups of equal size: i.i.d. samples of size n = 60, 110, 160 are generated from lag-2 (d = 2) VAR models on T = 5 time points, comprising p = 60, 120, 200 nodes partitioned into groups of equal size in the range 3–5.

(b) Unbalanced groups: the setting is the same as in (a), except that the node set is partitioned into one larger group of size 10 and many groups of size 5.

(c) Misspecified balanced groups: i.i.d. samples of size n = 60, 110, 160 are generated from lag-2 (d = 2) VAR models on T = 10 time points, comprising p = 60, 120 nodes partitioned into groups of size 6. Further, each group has a 30% misspecification rate: for every parent group of a downstream node, 30% of the group members do not exert any effect on it.

Using a 19 : 1 sample split, the tuning parameter λ is chosen from an interval of the form [C1λe, C2λe], C1, C2 > 0, where for lasso and for group lasso. The thresholding parameters are set to δgrp = 0.7λσ at the group level and $\delta_{misspec} = n^{-0.2}$ within groups. These values were chosen by 20-fold cross-validation on independent tuning data sets of the same sizes, using intervals of the form [C3λ, C4λ] for δgrp and $\{n^{-\delta} : \delta \in [0, 1]\}$ for δmisspec. Finally, within-group thresholding is applied only when the group structure is misspecified.

The following performance metrics were used for comparison: (i) Precision = TP/(TP + FP), (ii) Recall = TP/(TP + FN) and (iii) the Matthews correlation coefficient (MCC), defined as

$$\mathrm{MCC} = \frac{TP \cdot TN - FP \cdot FN}{\sqrt{(TP + FP)(TP + FN)(TN + FP)(TN + FN)}},$$

where TP, TN, FP and FN correspond to the true positives, true negatives, false positives and false negatives in the estimated network, respectively. The averages and standard deviations (over 100 replicates) of the performance metrics are reported for each setting.
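The three metrics can be computed from the true and estimated adjacency matrices as in the following sketch. It assumes that every entry of the boolean adjacency matrices is a candidate edge and reports values in percent, as in the tables; the helper name `edge_metrics` is hypothetical.

```python
import numpy as np

def edge_metrics(true_adj, est_adj):
    """Precision, recall and MCC (in percent) of an estimated directed network.

    true_adj, est_adj : arrays of the same shape; a nonzero / True entry (i, j)
    means the corresponding edge is present.
    """
    t = np.asarray(true_adj, dtype=bool).ravel()
    e = np.asarray(est_adj, dtype=bool).ravel()
    tp = np.sum(t & e)
    tn = np.sum(~t & ~e)
    fp = np.sum(~t & e)
    fn = np.sum(t & ~e)
    precision = tp / (tp + fp) if tp + fp > 0 else 0.0
    recall = tp / (tp + fn) if tp + fn > 0 else 0.0
    # convert to float before multiplying to avoid integer overflow on large networks
    denom = np.sqrt(float(tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    mcc = (float(tp) * tn - float(fp) * fn) / denom if denom > 0 else 0.0
    return 100 * precision, 100 * recall, 100 * mcc
```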

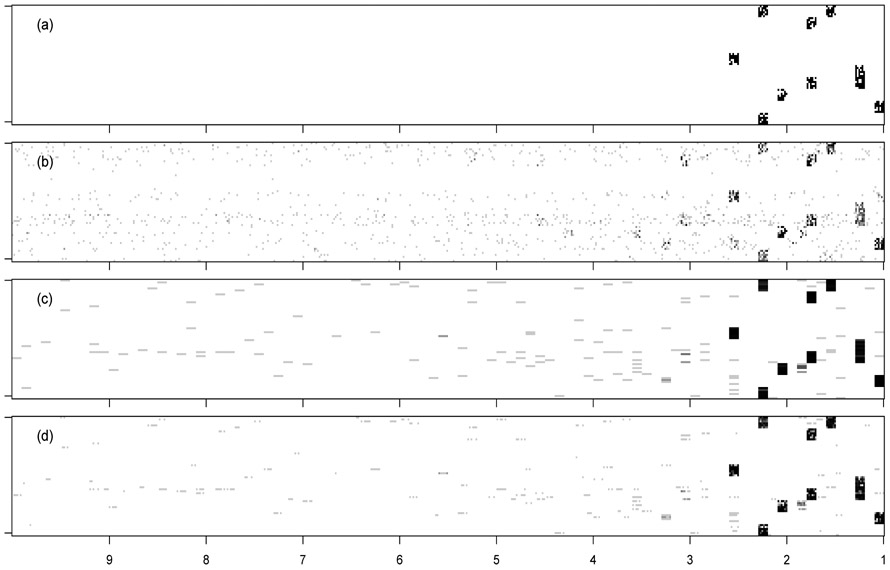

The results for the balanced settings are given in Table 1. The recall values for p = 60 show that even for a network with 60 × (5 − 1) = 240 nodes and ∣E∣ = 351 true edges, the group NGC estimators recover about 71% of the true edges with a sample size as low as n = 60, while lasso-based NGC estimates recover only 31%. The three group NGC estimates perform comparably in all cases, although thresholded group lasso shows slightly higher precision than the other group NGC variants for smaller sample sizes (e.g., n = 60, p = 200). The results for p = 60, n = 110 also show that the lower precision of lasso is partially caused by its inability to estimate the order of the VAR model correctly, as measured by ERR LAG, the number of falsely connected edges from lags beyond the true order of the VAR model divided by the number of edges in the network (∣E∣). This finding is illustrated in Figure 4 and Table 1. The group penalty encourages edges from nodes of the same group to be selected together; since the nodes of the same group also belong to the same time lag, the group variants have substantially lower ERR LAG. For example, the average ERR LAG of lasso for p = 200, n = 160 is 19.79%, while the average ERR LAGs of the group lasso variants range from 3.06% to 4.21%.
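ERR LAG can be computed as in the sketch below. It assumes the fitted model is stored as a stack of lag-specific boolean adjacency matrices for lags 1, …, T − 1 (up to lag 4 when T = 5), so that every edge found at a lag beyond the true order d counts as a false connection; the array layout and the function name `err_lag` are hypothetical.

```python
import numpy as np

def err_lag(est_adj_by_lag, true_order, n_true_edges):
    """ERR LAG in percent.

    est_adj_by_lag : boolean array of shape (max_lag, p, p); entry [k - 1, i, j]
                     is True if node j at lag k is estimated to affect node i
    true_order     : true VAR order d (only lags 1..d can carry true edges)
    n_true_edges   : number of edges |E| in the true network
    """
    est = np.asarray(est_adj_by_lag, dtype=bool)
    # every edge estimated at a lag beyond the true order is a false connection
    spurious = int(est[true_order:].sum())
    return 100.0 * spurious / n_true_edges
```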

Table 1:

Performance of different regularization methods in estimating graphical Granger causality with balanced group sizes and no misspecification; d = 2, T = 5, SNR = 1.8. Precision (P), Recall (R), MCC are given in percentages (numbers in parentheses give standard deviations). ERR LAG gives the error associated with incorrect estimation of VAR order.

Settings: p = 60 (∣E∣ = 351, group size 3); p = 120 (∣E∣ = 1404, group size 3); p = 200 (∣E∣ = 3900, group size 5).

| Metric | Method | p = 60, n = 160 | p = 60, n = 110 | p = 60, n = 60 | p = 120, n = 160 | p = 120, n = 110 | p = 120, n = 60 | p = 200, n = 160 | p = 200, n = 110 | p = 200, n = 60 |
|---|---|---|---|---|---|---|---|---|---|---|
| P | Lasso | 80(2) | 75(2) | 66(4) | 69(1) | 62(2) | 52(2) | 52(1) | 47(1) | 38(1) |
| | Grp | 95(2) | 91(4) | 83(7) | 91(3) | 80(5) | 68(7) | 78(4) | 72(3) | 59(6) |
| | Thgrp | 96(1) | 92(3) | 86(6) | 93(3) | 83(5) | 70(7) | 82(4) | 76(3) | 64(6) |
| | Agrp | 96(2) | 92(4) | 83(7) | 92(3) | 82(5) | 69(7) | 81(3) | 74(3) | 60(6) |
| R | Lasso | 71(2) | 54(2) | 31(2) | 54(1) | 40(1) | 22(1) | 38(1) | 28(1) | 15(1) |
| | Grp | 99(1) | 93(3) | 71(7) | 91(2) | 81(2) | 48(8) | 84(1) | 70(2) | 41(4) |
| | Thgrp | 99(1) | 93(3) | 71(7) | 91(2) | 81(2) | 48(8) | 84(2) | 69(2) | 41(3) |
| | Agrp | 99(1) | 93(3) | 71(7) | 91(2) | 81(2) | 47(8) | 84(1) | 69(2) | 40(4) |
| MCC | Lasso | 75(2) | 63(2) | 45(3) | 60(1) | 49(1) | 33(1) | 43(1) | 35(1) | 23(1) |
| | Grp | 97(1) | 92(3) | 76(5) | 91(1) | 80(2) | 56(2) | 81(2) | 70(2) | 48(2) |
| | Thgrp | 98(1) | 93(2) | 78(5) | 92(1) | 81(2) | 57(3) | 83(2) | 72(2) | 50(3) |
| | Agrp | 97(1) | 92(3) | 76(5) | 91(1) | 81(2) | 56(3) | 82(2) | 71(2) | 48(2) |
| ERR LAG (%) | Lasso | 10.5 | 11.3 | 13.9 | 16.63 | 17.37 | 16.69 | 19.79 | 20 | 18.52 |
| | Grp | 3.19 | 6.95 | 12.76 | 4.86 | 10.77 | 12.65 | 4.21 | 5.27 | 7.8 |
| | Thgrp | 2.83 | 5.87 | 10.01 | 3.98 | 9.03 | 11.19 | 3.06 | 3.91 | 5.68 |
| | Agrp | 3.13 | 6.89 | 12.59 | 4.63 | 10.37 | 12.34 | 3.58 | 4.87 | 7.59 |

Figure 4:

Estimated adjacency matrices of a misspecified NGC model with p = 60, T = 10, n = 60: (a) True, (b) Lasso, (c) Group Lasso, (d) Thresholded Group Lasso. The grayscale represents the proportion of times an edge was detected in 100 simulations.

The results for the unbalanced networks are given in Table 2. As in the balanced setting, the group NGC variants outperform the lasso estimates on all three performance metrics in almost all simulation settings. The different variants of group NGC again perform comparably, but tend to have higher standard deviations than the lasso estimates. The average ERR LAGs of the group NGC variants are also lower than that of lasso in most settings, demonstrating the advantage of the group penalty. Although the conclusions of the comparison between lasso and group NGC estimates remain unchanged, the performance of all estimators is clearly affected by the presence of one large group, which breaks the uniform structure of the network. For example, the MCC of the group NGC estimates for n = 160 is 97% to 98% in the balanced network with p = 60 and ∣E∣ = 351, and drops to 89% to 90% when the groups are unbalanced (p = 60, ∣E∣ = 450).

Table 2:

Performance of different regularization methods in estimating graphical Granger causality with unbalanced group sizes and no misspecification; d = 2, T = 5, SNR = 1.8. Precision (P), Recall (R), MCC are given in percentages (numbers in parentheses give standard deviations). ERR LAG gives the error associated with incorrect estimation of VAR order.

Settings: p = 60 (∣E∣ = 450; groups 1 × 10, 11 × 5); p = 120 (∣E∣ = 1575; groups 1 × 10, 23 × 5); p = 200 (∣E∣ = 4150; groups 1 × 10, 39 × 5).

| Metric | Method | p = 60, n = 160 | p = 60, n = 110 | p = 60, n = 60 | p = 120, n = 160 | p = 120, n = 110 | p = 120, n = 60 | p = 200, n = 160 | p = 200, n = 110 | p = 200, n = 60 |
|---|---|---|---|---|---|---|---|---|---|---|
| P | Lasso | 72(2) | 69(3) | 62(2) | 51(1) | 48(1) | 41(1) | 61(1) | 53(1) | 42(2) |
| | Grp | 84(4) | 79(6) | 76(9) | 55(5) | 47(5) | 40(6) | 86(3) | 77(5) | 66(7) |
| | Thgrp | 86(4) | 82(7) | 78(11) | 60(6) | 50(7) | 40(5) | 88(2) | 79(6) | 69(6) |
| | Agrp | 85(3) | 81(5) | 77(9) | 59(5) | 51(5) | 42(6) | 88(2) | 78(5) | 67(6) |
| R | Lasso | 45(2) | 35(2) | 22(2) | 43(1) | 34(1) | 22(1) | 23(1) | 15(0) | 7(0) |
| | Grp | 94(3) | 87(5) | 61(8) | 88(2) | 75(5) | 48(6) | 73(3) | 49(6) | 22(5) |
| | Thgrp | 95(2) | 88(4) | 62(8) | 89(3) | 77(4) | 50(5) | 73(3) | 50(6) | 21(5) |
| | Agrp | 94(3) | 87(5) | 61(8) | 88(2) | 75(5) | 48(6) | 73(3) | 49(6) | 22(5) |
| MCC | Lasso | 56(2) | 48(2) | 35(2) | 46(1) | 39(1) | 29(1) | 36(1) | 28(1) | 17(1) |
| | Grp | 89(3) | 82(4) | 67(5) | 68(3) | 58(3) | 42(3) | 79(1) | 61(3) | 37(3) |
| | Thgrp | 90(3) | 84(4) | 68(6) | 72(4) | 61(4) | 43(2) | 80(1) | 62(3) | 37(3) |
| | Agrp | 89(3) | 83(4) | 67(6) | 71(3) | 60(3) | 43(3) | 79(1) | 61(3) | 37(3) |
| ERR LAG (%) | Lasso | 10.59 | 10.74 | 11.76 | 18.3 | 18.72 | 18.76 | 11.54 | 10.93 | 9.29 |
| | Grp | 7.04 | 9.85 | 13.04 | 12.53 | 14.71 | 13.06 | 4.8 | 6.41 | 6.85 |
| | Thgrp | 6.58 | 8.98 | 11.1 | 9.6 | 11.9 | 10.9 | 4.06 | 5.65 | 5.7 |
| | Agrp | 6.74 | 9.19 | 12.96 | 10.81 | 12.78 | 11.79 | 4.55 | 6.2 | 6.81 |

The results for misspecified groups are given in Table 3. Note that for larger sample sizes n, the MCC of lasso and regular group lasso are comparable. However, the thresholded version of group lasso achieves substantially higher MCC than the rest. This demonstrates the advantage of using the direction consistency of group lasso estimators to perform within-group variable selection. We note that a more careful choice of the thresholding parameters δgrp and δmisspec via cross-validation may further improve the performance of thresholded group lasso; however, we do not pursue this here, as it requires a grid search over many tuning parameters or an efficient estimator of the degrees of freedom of group lasso.

Table 3:

Performance of different regularization methods in estimating graphical Granger causality with misspecified groups (30% misspecification); d = 2, T = 10, SNR = 2. Precision (P), Recall (R), MCC are given in percentages (numbers in parentheses give standard deviations). ERR LAG gives the error associated with incorrect estimation of VAR order.

Settings: p = 60 (∣E∣ = 246, group size 6); p = 120 (∣E∣ = 968, group size 6).

| Metric | Method | p = 60, n = 160 | p = 60, n = 110 | p = 60, n = 60 | p = 120, n = 160 | p = 120, n = 110 | p = 120, n = 60 |
|---|---|---|---|---|---|---|---|
| P | Lasso | 88(2) | 85(3) | 77(5) | 59(1) | 55(1) | 49(2) |
| | Grp | 65(2) | 66(2) | 66(3) | 43(3) | 44(4) | 38(4) |
| | Thgrp | 87(3) | 88(3) | 85(3) | 56(6) | 56(6) | 51(7) |
| | Agrp | 65(2) | 66(2) | 66(3) | 45(2) | 45(4) | 39(4) |
| R | Lasso | 80(3) | 63(3) | 37(2) | 66(1) | 54(1) | 35(1) |
| | Grp | 100(0) | 98(2) | 82(6) | 87(2) | 78(3) | 59(4) |
| | Thgrp | 100(0) | 98(2) | 79(6) | 86(2) | 79(3) | 57(4) |
| | Agrp | 100(0) | 98(2) | 82(6) | 86(2) | 78(3) | 58(3) |
| MCC | Lasso | 84(2) | 73(2) | 53(3) | 62(1) | 54(1) | 41(1) |
| | Grp | 81(1) | 80(2) | 74(4) | 61(2) | 58(3) | 47(2) |
| | Thgrp | 93(2) | 93(2) | 82(4) | 69(4) | 66(4) | 53(3) |
| | Agrp | 81(1) | 80(2) | 74(4) | 62(2) | 59(2) | 47(2) |
| ERR LAG (%) | Lasso | 12.63 | 17.05 | 22.41 | 45.09 | 49.68 | 53.4 |
| | Grp | 9.43 | 8.78 | 15.12 | 18.22 | 18.43 | 29.26 |
| | Thgrp | 6.45 | 5.34 | 8.02 | 11.81 | 12.84 | 15.57 |
| | Agrp | 9.11 | 8.78 | 14.96 | 16.32 | 16.9 | 27.69 |

In summary, the results show that all variants of group lasso NGC outperform the lasso-based estimates whenever the grouping structure of the variables is known and correctly specified, although their performance depends on the composition of group sizes. On the other hand, if the a priori known group structure is moderately misspecified, lasso estimates produce results comparable to the regular and adaptive group NGC estimates for larger sample sizes, while the thresholded group estimates outperform all other methods, as expected.

6. Application

Example: T-cell activation.

Estimation of gene regulatory networks from expression data is a fundamental problem in functional genomics (Friedman, 2004). Time course data coupled with NGC models are informationally rich enough for this task. The data for this application come from Rangel et al. (2004), where expression patterns of genes involved in T-cell activation were studied with the goal of discovering the regulatory mechanisms that govern them in response to external stimuli. Activated T-cells are involved in the regulation of effector cells (e.g., B-cells) and play a central role in mediating immune responses. The available data, comprising n = 44 samples of p = 58 genes, measure the cells' response at 10 time points, t = 0, 2, 4, 6, 8, 18, 24, 32, 48, 72 hours after their stimulation with a T-cell receptor independent activation mechanism. We concentrate on the data from the first 5 time points, which correspond to early response mechanisms in the cells.

Genes are often grouped into biological pathways based on their function and activity patterns. Thus, knowledge of gene functions and of their membership in biological pathways can serve as the inherent grouping structure in the proposed group lasso estimates of NGC. To this end, we used available biological knowledge to define groups of genes based on their biological function. Reliable information on biological function was found in the literature for 38 genes, which were retained for further analysis. These 38 genes were grouped into 13 groups, with group sizes ranging from 1 to 5 genes.

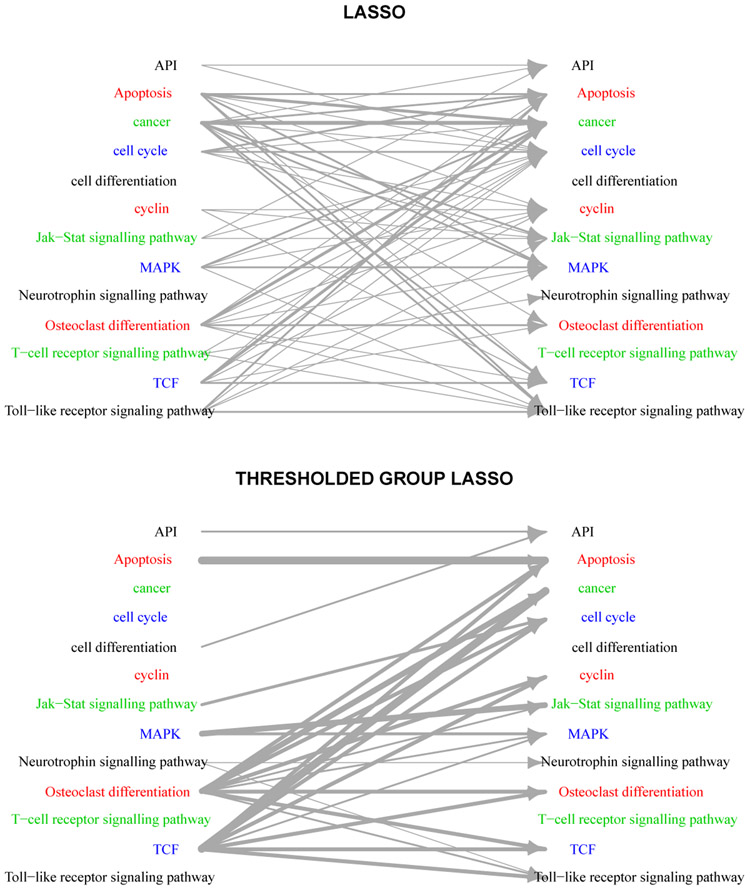

Figure 5 shows the estimated networks based on lasso and thresholded group lasso estimates, where for ease of representation the nodes of the network correspond to groups of genes. In this case, the estimates from the variants of the group NGC estimator were all similar, and included a number of known regulatory mechanisms in T-cell activation that are not present in the regular lasso estimate. For instance, Waterman et al. (1990) suggest that TCF plays a significant role in the activation of T-cells, which may explain the dominant role of this group of genes in the activation mechanism. On the other hand, Kim et al. (2005) suggest that activated T-cells exhibit high levels of osteoclast-associated receptor activity, which may account for the large number of associations between members of the osteoclast differentiation group and other groups. Finally, the estimated networks based on variants of the group lasso estimator also offer improved estimation accuracy in terms of mean squared error (MSE), despite having complexity comparable to their regular lasso counterpart (Table 4), which further confirms the findings of the other numerical studies in this paper.

Figure 5:

Estimated gene regulatory networks of T-cell activation. Edge width represents the number of effects between two groups, and the network represents the aggregated regulatory network over 3 time points.

Table 4:

Mean and standard deviation of MSE for different NGC estimates

| | Lasso | Grp | Agrp | Thgrp |

|---|---|---|---|---|

| mean | 0.649 | 0.456 | 0.457 | 0.456 |

| stdev | 0.340 | 0.252 | 0.251 | 0.252 |

Example: Banking balance sheets application.

In this application, we examine the structure of the balance sheets, in terms of assets and liabilities, of the n = 50 largest (by total balance sheet size) US banking corporations. The data cover 9 quarters (September 2009 to September 2011) and were obtained directly from the Federal Deposit Insurance Corporation (FDIC) database (available at www.fdic.gov). The p = 21 variables correspond to different assets (US and foreign government debt securities, equities, loans (commercial, mortgages), leases, etc.) and liabilities (domestic and foreign deposits from households and businesses, deposits from the Federal Reserve Board, deposits of other financial institutions, non-interest bearing liabilities, etc.). We have organized them into four categories: two for the assets (loans and securities) and two for the liabilities (Balances Due and Deposits, based on the $250K FDIC reporting threshold). Amongst the 50 banks examined, one discerns large integrated ones with significant retail, commercial and investment activities (e.g., Citibank, JP Morgan, Bank of America, Wells Fargo), banks primarily focused on investment business (e.g., Goldman Sachs, Morgan Stanley, American Express, E-Trade, Charles Schwab), and regional banks (e.g., Banco Popular de Puerto Rico, Comerica Bank, Bank of the West).

The raw data are reported in thousands of dollars. The few missing values were imputed by a nearest-neighbor method with k = 5: banks were compared according to their total assets in the most recent quarter of the data collection period (September 2011), and every missing observation for a particular bank was imputed by the median of the corresponding observations of its five nearest neighbors. The data were log-transformed to reduce nonstationarity. The data set was then restructured as a panel with p = 21 variables and n = 50 replicates observed over T = 9 time points, and every column of replicates was scaled to have unit variance.
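The preprocessing steps can be sketched as follows, assuming the raw panel is stored as an array of shape (banks, quarters, variables) with NaN marking missing entries. Two details are our assumptions rather than the authors' specification: neighbors are found by absolute difference in total assets, and log(1 + x) is used in place of log x so that zero-valued balance sheet items stay finite. Columns are scaled by their sample standard deviation across banks and are not centered, following the text. Function names are hypothetical.

```python
import numpy as np

def knn_median_impute(raw, total_assets, k=5):
    """Fill each missing entry of a bank with the median of the same entry
    (same quarter, same variable) over its k nearest banks by total assets.

    raw          : array (n_banks, T, p); NaN marks a missing value
    total_assets : array (n_banks,) of total assets in the reference quarter
    """
    raw = np.array(raw, dtype=float)  # private copy of the input
    assets = np.asarray(total_assets, dtype=float)
    filled = raw.copy()
    for b in range(raw.shape[0]):
        missing = np.isnan(raw[b])
        if not missing.any():
            continue
        dist = np.abs(assets - assets[b])
        dist[b] = np.inf  # a bank is not its own neighbor
        neighbors = np.argsort(dist)[:k]
        median = np.nanmedian(raw[neighbors], axis=0)  # shape (T, p)
        filled[b][missing] = median[missing]
    return filled

def preprocess_panel(raw, total_assets, k=5):
    """Impute, log-transform and scale every (quarter, variable) column."""
    imputed = knn_median_impute(raw, total_assets, k)
    logged = np.log1p(imputed)  # assumption: log(1 + x) keeps zero entries finite
    sd = logged.std(axis=0, ddof=1)  # spread across banks for each column
    return logged / np.where(sd > 0, sd, 1.0)  # constant columns are left unscaled
```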

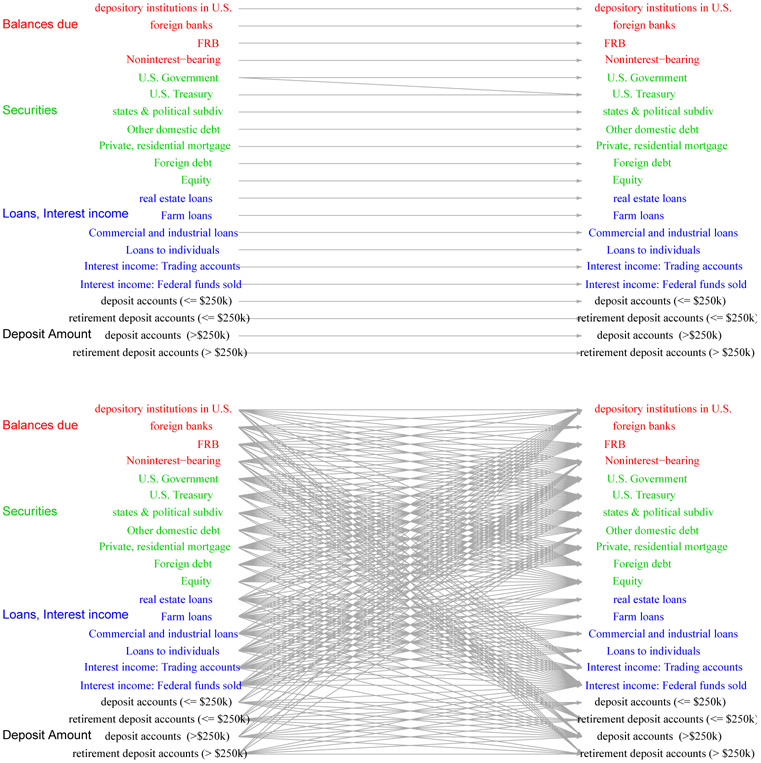

We applied the proposed variants of the NGC estimates to the first T = 6 time points (Sep 2009 to Dec 2010) of the above panel data set. The parameters λ and δgrp were chosen using a 19 : 1 sample split, and the misspecification threshold δmisspec was set to zero, since the grouping structure was reliable. We calculated the MSE of the fitted model in predicting the outcomes in the four quarters from December 2010 to September 2011. The prediction MSEs (PMSE; MSE in the case of Dec 2010) are listed in Table 5, and the estimated network structures are shown in Figure 6.

Table 5:

Mean and standard deviation (in parentheses) of PMSE (MSE in case of Dec 2010) for prediction of banking balance sheet variables.

| Quarter | Lasso | Grp | Agrp | Thgrp |

|---|---|---|---|---|

| Dec 2010 | 1.59 (0.29) | 0.36 (0.05) | 0.36 (0.05) | 0.37 (0.05) |

| Mar 2011 | 1.46 (0.30) | 0.47 (0.23) | 0.47 (0.23) | 0.46 (0.22) |

| Jun 2011 | 1.33 (0.26) | 0.36 (0.11) | 0.36 (0.11) | 0.35 (0.11) |

| Sep 2011 | 1.72 (0.32) | 0.50 (0.18) | 0.50 (0.18) | 0.47 (0.16) |

Figure 6:

Estimated Networks of banking balance sheet variables using (a) lasso and (b) group lasso. The networks represent the aggregated network over 5 time points.

It can be seen that the lasso estimates recover a very simple temporal structure amongst the variables; namely, past values (in this case lag-1) influence present ones. Given the structure of the balance sheets of large banks, this is an anticipated result, since a balance sheet cannot be radically altered over a short time period due to business relationships and past commitments to the bank's customers. However, the (adaptive) group lasso estimates reveal a richer and more nuanced structure. Examining the fitted values of the adjacency matrices A_t, we notice that the dominant effects remain those discovered by the lasso estimates. However, fairly strong effects are also estimated within each group, as well as between the two asset groups (loans and securities) on the balance sheet. This suggests rebalancing of the balance sheet for risk management purposes between relatively low-risk securities and potentially riskier loans. Given the period covered by the data (the post-crisis period starting in September 2009), when credit risk management became of paramount importance, the analysis picks up interesting patterns. On the other hand, significantly fewer associations are discovered on the liabilities side of the balance sheet. Finally, there exist relationships between deposits and securities such as US Treasuries and other domestic ones (primarily municipal bonds); the latter indicates an effort on behalf of the banks to manage the credit risk of their balance sheets, namely by allocating to low-risk assets as opposed to riskier loans.

It is also worth noting that the group lasso model exhibits superior predictive performance over the lasso estimates, even 4 quarters into the future. Finally, in this case the thresholded estimates did not provide any additional benefits over the regular and adaptive variants, given that the specification of the groups was based on accounting principles and hence correctly structured.

7. Discussion

In this paper, the problem of estimating Network Granger Causal (NGC) models with inherent grouping structure is studied when replicates are available. Norm consistency, as well as both group-level and within-group variable selection consistency, are established under fairly mild assumptions on the structure of the underlying time series. To establish within-group selection consistency, the novel concept of direction consistency is introduced.

NGC models of the type discussed in this study have wide applicability in different areas, including genomics and economics. However, in many contexts replicates are not available at each time point (e.g., rates of return of stocks or other macroeconomic variables), while a grouping structure is still present (e.g., grouping of stocks by industry sector). Hence, it is of interest to study the behavior of group lasso estimates in such a setting and to address the technical challenges arising from such a pure time series (dependent) data structure.

Acknowledgments

We thank the action editor and three anonymous reviewers for their helpful comments. The work of SB and GM was supported in part by DoD grant W81XWH-12-1-0130, and that of GM by NSF DMS-1106695 and NSA H98230-10-1-0203. The work of AS was partially supported by NSF grant DMS-1161565 and NIH grant 1R21GM101719-01A1.

Appendix

Appendix A. Auxiliary Lemmas

Lemma A.1 (Characterization of the Group lasso estimate) A vector is a solution to the convex optimization problem

| (14) |

if and only if satisfies, for some with max_{1≤g≤G} ∥τ[g]∥ ≤ 1, . Further, whenever .

Proof Follows directly from the KKT conditions for the optimization problem (14). ■
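Lemma A.1 is what makes a numerical check of a group lasso solution possible. The sketch below assumes the objective (1/(2n))∥Y − Xβ∥² + Σ_g λ_g∥β[g]∥ (the scaling used in (14) may differ), solves it by proximal gradient descent with block soft-thresholding, and then measures how far the result is from the optimality conditions: for an active group, X[g]′(Y − Xβ̂)/n must equal λ_g β̂[g]/∥β̂[g]∥, and for an inactive group its norm must not exceed λ_g. This is a generic solver written for illustration, not the algorithm implemented in grpreg; the function names are hypothetical.

```python
import numpy as np

def group_lasso_ista(X, y, groups, lambdas, n_iter=5000):
    """Proximal gradient (ISTA) for (1/(2n))||y - X b||^2 + sum_g lambdas[g] * ||b[g]||_2."""
    n, p = X.shape
    step = n / np.linalg.norm(X, 2) ** 2  # 1 / Lipschitz constant of the gradient
    beta = np.zeros(p)
    for _ in range(n_iter):
        z = beta - step * X.T @ (X @ beta - y) / n  # gradient step on the loss
        for g, lam in zip(groups, lambdas):
            norm_z = np.linalg.norm(z[g])
            shrink = max(0.0, 1.0 - step * lam / norm_z) if norm_z > 0 else 0.0
            z[g] = shrink * z[g]  # block soft-thresholding (prox of the penalty)
        beta = z
    return beta

def kkt_violation(X, y, beta, groups, lambdas):
    """Largest violation of the group lasso optimality conditions."""
    n = X.shape[0]
    grad = X.T @ (y - X @ beta) / n
    worst = 0.0
    for g, lam in zip(groups, lambdas):
        norm_b = np.linalg.norm(beta[g])
        if norm_b > 0:
            # active group: correlation must equal lam times the group direction
            gap = np.linalg.norm(grad[g] - lam * beta[g] / norm_b)
        else:
            # inactive group: correlation norm must not exceed lam
            gap = max(0.0, np.linalg.norm(grad[g]) - lam)
        worst = max(worst, gap)
    return worst
```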

Lemma A.2 (Concentration bound for multivariate Gaussian) Let Z be a k × 1 random vector with Z ~ N(0, Σ). Then, for any t > 0, the following inequalities hold:

Proof The first inequality can be found in Ledoux and Talagrand (1991, equation (3.2)). To establish the second inequality, note that,

■

Lemma A.3 Let β, . Let and . Then ∥r∥ < 2δ whenever .

Proof It follows from that

which implies that . Now,

since and . ■

Lemma A.4 Let , … , be any partition of {1, …, p} into G non-overlapping groups and λ1, … , λG be positive real numbers. Define the cone sets for any subset of groups . Also define the set of group s-sparse vectors , for some , ∣J∣ ≤ s}. Then

| (15) |

where L′ = Lλmax/λmin, is the ball of unit norm vectors in and cl{.}, conv{.} respectively denote the closure and convex hull of a set.

Proof Note that for any , ∣J∣ ≤ s, and , we have

which implies

Hence the union of the cone sets on the left hand side of (15) is a subset of .

We will show that the set A is a subset of , the closed convex hull on the right hand side of (15). Since both sets A and B are closed convex, it is enough to show that the support function of A is dominated by the support function of B.

The support function of A is given by ϕA(z) = sup_{θ∈A} 〈θ, z〉. For any , let S ⊆ {1, … , G} be the set of the s groups with the largest ℓ2 norms ∥z[g]∥. Thus, ∥z[S^c]∥_{2,∞} ≤ ∥z[g]∥ for all g ∈ S. This implies . So, we have

| (16) |

| (17) |

On the other hand, the support function of is given by

This concludes the proof. ■

Lemma A.5 Consider an n × p matrix X with rows independently distributed as N(0, Σ), Λmin(Σ) > 0. Let , … , be any partition of {1, … , p} into G non-overlapping groups of size k1, … , kG, respectively. Let C = X′X/n denote the sample Gram matrix and denote the set of group s-sparse vectors defined in Lemma A.4. Then, for any integer s ≥ 1 and any η > 0, we have

| (18) |

for some universal positive constants Ci.

Proof We consider a fixed vector with ∥v∥ ≤ 1, the support of which can be covered by a set J of at most s groups, i.e., , , ∣J∣ ≤ s. Define Y = Xv. Then each coordinate of Y is independently distributed as , where .

Then, for any η > 0, the Hanson-Wright inequality of Rudelson and Vershynin (2013) ensures

Next, we extend this deviation bound to all vectors v in the sparse set

| (19) |

For a given , ∣J∣ = 2s, we define and note that . For an ϵ > 0 to be specified later, we construct an ϵ-net of . Since ∑_{g∈J} k_g ≤ 2s k_max, it is possible to construct such a net with cardinality at most (1 + 2/ϵ)^{2s k_max} (Vershynin, 2009).

We want a tail inequality for , where Δ = C − Σ. Since is an ϵ-cover of , for any , there exists such that w = v − v0 satisfies ∥w∥ ≤ ϵ. Then

Taking supremum over all , and noting that , we obtain

| (20) |

To upper bound the third term, note that , and

Hence

From equation (20), we now have

Choosing ϵ > 0 small enough so that (1 − 6ϵ − ϵ^2) > 1/2, we obtain

Taking supremum over choices of J, we get

In order to extend this deviation inequality to , we note that any v in the convex hull of can be expressed as , where v1, … , vm are in and 0 ≤ αi ≤ 1, ∑ αi = 1. Then

Also, for every i, j, , and

Hence

Together with the continuity of quadratic forms, this implies

The result then readily follows from the above deviation inequality. ■

Appendix B. Proof of Main Results

Proof [Proof of Proposition 3.2] (a) Note that Σ is a p(T − 1) × p(T − 1) block Toeplitz matrix whose (i, j)th block is Σij := Γ(i − j), 1 ≤ i, j ≤ T − 1, where the p × p matrix Γ(ℓ) is the autocovariance function of lag ℓ for the zero-mean VAR(d) process (2), defined as .

We consider the cross spectral density of the VAR(d) process (2)

| (21) |

From standard results of spectral theory we know that , for every ℓ.

We want to find a lower bound on the minimum eigenvalue of Σ, i.e., inf∥x∥=1 x′Σx. Consider an arbitrary p(T − 1)-variate unit norm vector x, formed by stacking the p-tuples x1, … , xT−1.

For every θ ∈ [−π, π], define and note that

Also let μ(θ) be the minimum eigenvalue of the Hermitian matrix f(θ). Following Parter (1961) we have the result

So . Since is the (matrix-valued) characteristic polynomial of the VAR(d) model (2), we have the following representation of the spectral density (see Priestley, 1981, eqn 9.4.23):

Thus, . But for every θ ∈ [−π, π]. The result then follows at once from the standard matrix norm inequality (see e.g., Golub and Van Loan, 1996, Cor 2.3.2)

where

(b) The first part of the proposition ensures that . If the replicates available from different panels are i.i.d., each row of the design matrix is independently and identically distributed according to a N(0, Σ) distribution.

To show that RE(s, L) of (5) holds with high probability for sufficiently large n, it is enough to show that

| (22) |

holds with high probability, where the cone sets are defined as

| (23) |

for all with ∣J∣ ≤ s. Denote the ball of unit norm vectors in by . By scale invariance of ∥Xv∥2/n∥v∥2, it is enough to show that with high probability

| (24) |

where C = X′X/n is the sample Gram matrix.

By part (a), we already know that v′Σv ≥ Λmin(Σ) > 0 for all . So we only need to show that ∣v′ (C − Σ) v∣ ≤ Λmin(Σ)/2 with high probability, uniformly on the set

| (25) |

The proof relies on two key parts. In the first part, we use an extremal representation to show that the above union of the cone sets sits within the closed convex hull of a suitably defined set of group s-sparse vectors. In particular, it follows from Lemma A.4 that

| (26) |

where , for some , ∣J∣ ≤ s}, L′ = Lλmax/λmin and cl{.}, conv{.} respectively denote the closure and convex hull of a set.

The next part of the proof is an upper bound on the tail probability of v′(C − Σ)v, uniformly over all , presented in Lemma A.5. In particular, setting η = Λmin(Σ)/[12∥Σ∥(2 + L′)^2] in the above lemma yields

| (27) |

for the proposed choice of n. Together with the lower bound on Λmin(Σ) established in part (a), this concludes the proof. ■
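The lower bound of part (a) can be checked numerically in the simplest case. The sketch below assumes a VAR(1) process X_t = A X_{t−1} + ε_t with Cov(ε_t) = σ²I, a special case of (2) chosen for illustration. It builds the covariance matrix of (X_1, …, X_m) in the time domain and compares its smallest eigenvalue with the spectral lower bound 2π min_θ μ(θ), which under the normalization f(θ) = (2π)^{−1}(I − Ae^{−iθ})^{−1} σ² (I − Ae^{−iθ})^{−*} equals σ²/max_θ ∥I − Ae^{−iθ}∥². The frequency grid and the function names are our own choices.

```python
import numpy as np

def stacked_covariance_var1(A, sigma2, m):
    """Covariance of (X_1', ..., X_m')' for a stationary VAR(1) X_t = A X_{t-1} + e_t."""
    p = A.shape[0]
    # Gamma(0) solves Gamma0 = A Gamma0 A' + sigma2 * I (vectorized Lyapunov equation)
    vec = np.linalg.solve(np.eye(p * p) - np.kron(A, A), sigma2 * np.eye(p).ravel())
    gamma0 = vec.reshape(p, p)
    Sigma = np.zeros((m * p, m * p))
    for i in range(m):
        for j in range(m):
            if i >= j:
                block = np.linalg.matrix_power(A, i - j) @ gamma0  # Cov(X_i, X_j)
            else:
                block = gamma0 @ np.linalg.matrix_power(A.T, j - i)
            Sigma[i * p:(i + 1) * p, j * p:(j + 1) * p] = block
    return Sigma

def spectral_lower_bound(A, sigma2, n_grid=4096):
    """sigma2 / max_theta ||I - A exp(-i theta)||_2^2, maximized over a grid of frequencies."""
    p = A.shape[0]
    thetas = np.linspace(-np.pi, np.pi, n_grid)
    worst = max(np.linalg.norm(np.eye(p) - A * np.exp(-1j * t), 2) for t in thetas)
    return sigma2 / worst ** 2
```

Because the bound does not depend on m, it also illustrates why Λmin(Σ) stays bounded away from zero as the number of time points grows.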

Proof [Proof of Theorem 4.1] Consider any solution of the restricted regression

| (28) |

and set . We show that such an augmented vector satisfies the statements of Theorem 4.1 with high probability.

Let . In view of Lemmas A.1 and A.3, it suffices to show that the following events happen with probability at least 1 − 4G^{1−α}:

| (29) |

| (30) |

Note that, in view of Lemma A.1, for some with ∥τ[g]∥ ≤ 1 for all g ∈ S, and . Thus, for any g ∈ S,

Note that V = (C11)^{−1} Z(1) ~ N(0, σ^2 (C11)^{−1}). So , where Σ[g][g] := (Σ^{−1})[g][g]. Also, by the second statement of Lemma A.2, we have . Therefore is bounded above by

For the proposed choice of δn, this expression is bounded above by 2G^{−α}. Next, for any g ∉ S, we get

Defining , the uniform irrepresentable condition implies that the above probability is bounded above by .

It can then be seen that , where denotes the Schur complement of C22. As before, Lemma A.2 establishes that

and the last probability is bounded above by 2G^{−α} for the proposed choice of λ.

The results of the theorem follow by applying the union bound to the two sets of probability statements made across all . ■

Appendix C. Proof of results on ℓ2-consistency

We first note that each of the p optimization problems in (4) is essentially a generic group lasso regression on n independent samples from a linear model Y = Xβ0 + ϵ, ϵ ~ N(0, σ^2):

| (31) |

where , , , , , and . In Proposition C.1, we first establish upper bounds on the estimation error for a generic group lasso penalized regression problem. The results for regular group NGC then follow readily by applying this proposition to the p separate regressions.

Recall the Restricted Eigenvalue assumption required for the derivation of ℓ2 estimation and prediction error. Following van de Geer and Bühlmann (2009), we introduce a slightly weaker notion called Group Compatibility (GC). For a constant L > 0 we say that GC(S, L) condition holds, if there exists a constant ϕcompatible = ϕcompatible(S, L) > 0 such that

| (32) |

The fact that GC(S, L) holds whenever RE(s, L) is satisfied (with ϕRE ≤ ϕcompatible) follows at once from the Cauchy-Schwarz inequality. We shall derive upper bounds on the prediction and ℓ2,1 estimation error of group lasso estimates involving the compatibility constant. This notion will also be used later to connect the irrepresentable conditions to the consistency results of group lasso estimators.

Proposition C.1 Suppose the GC condition (32) holds with L = 3. Choose α > 0 and denote λmin = min1≤g≤Gλg. If

for every , then the following statements hold with probability at least 1 − 2G^{1−α}:

| (33) |

| (34) |

If, in addition, RE(2s, 3) holds, then, with the same probability we get

| (35) |

Proof [Proof of Proposition C.1] Since is a solution of the optimization problem (31), for all , we have

Plugging in Y = Xβ0 + ϵ, and simplifying the resulting equation, we get

Fix and consider the event . Note that . So Z[g] ~ N(0, σ^2 C[g][g]). Then,

where the last inequality follows from the second statement of Lemma A.2. Now, let . Then, for xg > 0, if

we get

But this happens if,

which is ensured by the proposed choice of λg.

Next, define . Then, , and on the event , we have, for all ,

Note that vanishes if g ∉ S and is bounded above by if g ∈ S.

This leads to the following sparsity oracle inequality, for all ,

| (36) |

The sparsity oracle inequality (36) with β = β0, and leads to the following two useful bounds on the prediction and ℓ2,1-estimation errors:

| (37) |

| (38) |

Now, assume the group compatibility condition (32) holds. Then,

| (39) |

which implies the first inequality of Proposition C.1. The second inequality follows from

where the last step uses (39).

The proof of the last inequality of Proposition C.1, i.e., the upper bound on the ℓ2 estimation error under RE(2s, 3), is the same as that of Theorem 3.1 in Lounici et al. (2011) and is omitted. ■

Proof [Proof of Proposition 3.1] Applying the ℓ2-estimation error of (35) on the ith group lasso regression problem of regular group NGC, we have

with probability at least . Combining the bounds for all i = 1, … , p and noting that , we have the required result. ■

Appendix D. Irrepresentable assumptions and consistency

In this section, we discuss two results involving the compatibility and irrepresentable conditions for group lasso. We first show that a stronger version of the uniform irrepresentable assumption implies the group compatibility (32), and hence, consistency in ℓ2,1 norm. Next we argue that a weaker version of the irrepresentable assumption is indeed necessary for the direction consistency of the group lasso estimates. These results generalize analogous properties of lasso (van de Geer and Bühlmann, 2009; Zhao and Yu, 2006) to the group penalization framework. The proofs are given under a special choice of tuning parameter . Similar results can be derived for the general choice of λg, although their presentation is more involved.

Proposition D.1 Assume the uniform irrepresentable condition (13) holds with η ∈ (0,1), and Λmin(C11) > 0. Then the group compatibility condition GC(S, L) of (32) holds whenever .

Proof First note that with the above choice of λg the Group Compatibility (S, L) condition simplifies to

| (40) |

Also, the uniform irrepresentable condition guarantees that there exists 0 < η < 1 such that with , we have,

Here K0 = K/λ is a q × q block diagonal matrix with diagonal blocks . Define

| (41) |

Note that , and introduce two Lagrange multipliers λ and λ′ corresponding to the equality and inequality constraints for solving the optimization problem in (41). Also, partition and X = [X(1) : X(2)] into signal and nonsignal parts as in (10). The first q linear equations of the KKT conditions imply that there exists such that

| (42) |

and, for every g ∈ S,

It readily follows that .

Multiplying both sides of (42) by we get

| (43) |

Also, (42) implies

| (44) |

Multiplying both sides of (44) by (K0τ0)′ = (τ0)′K0, we obtain

| (45) |

Note that the absolute value of the first term,

| (46) |

is bounded above by

| (47) |

by virtue of the uniform irrepresentable condition and the Cauchy-Schwarz inequality. Assuming the minimum eigenvalue of C11, i.e., Λmin (C11), is positive and considering , the second term is at most λq/Λmin (C11). So (45) implies

| (48) |

In particular, the lower bound λ ≥ Λmin(C11)(1 − (1 − η)L)/q is positive whenever L < 1/(1 − η). Next, multiply both sides of (44) by to get

| (49) |

Using the upper bound in (47), the right-hand side is at least −λ(1 − η)L.
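The lower bound on λ obtained from (48) is easy to evaluate numerically. In this minimal sketch, C11, η, L and q are supplied by the user, and the function name is hypothetical; it shows the bound changing sign at the critical value L = 1/(1 − η).

```python
import numpy as np


def lambda_lower_bound(C11, eta, L, q):
    """Bound Lambda_min(C11) * (1 - (1 - eta) * L) / q on the multiplier lambda."""
    lam_min = np.linalg.eigvalsh(C11).min()   # smallest eigenvalue of C11
    return lam_min * (1.0 - (1.0 - eta) * L) / q


C11 = np.eye(4)      # illustrative signal Gram block with Lambda_min = 1
eta, q = 0.5, 4
print(1.0 / (1.0 - eta))                        # critical value of L
print(lambda_lower_bound(C11, eta, 1.5, q))     # positive, since L < 2
print(lambda_lower_bound(C11, eta, 2.5, q))     # negative: positivity of lambda not guaranteed
```

With η = 0.5 the critical value is L = 2, so the argument yields a positive lower bound on λ only for L < 2.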

Also, a simple consequence of the block inversion formula for the non-negative definite matrix C is that the Schur complement C22 − C21 (C11)−1 C12 is non-negative definite. Hence,

Putting all the pieces together, we get

Plugging in the lower bound for λ we obtain the result; namely,

for any . ■
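The step of the proof that relies on the block inversion formula can be checked numerically. The sketch below assumes a Gram matrix C = XᵀX/n whose first s coordinates form the signal block (an ordering chosen purely for illustration). It computes the Schur complement C22 − C21(C11)⁻¹C12, confirms that its smallest eigenvalue is non-negative, and compares it with the inverse of the (2,2) block of C⁻¹, which is what the block inversion formula gives when C is invertible.

```python
import numpy as np


def schur_complement(C, s):
    """C22 - C21 C11^{-1} C12 for the split of C into its first s coordinates
    (signal block) and the remaining ones (non-signal block)."""
    C11, C12 = C[:s, :s], C[:s, s:]
    C21, C22 = C[s:, :s], C[s:, s:]
    # Solve C11 Z = C12 rather than forming C11^{-1} explicitly.
    return C22 - C21 @ np.linalg.solve(C11, C12)


rng = np.random.default_rng(1)
n, p, s = 50, 8, 3
X = rng.standard_normal((n, p))
C = X.T @ X / n                          # Gram matrix: non-negative definite
schur = schur_complement(C, s)
schur = (schur + schur.T) / 2            # symmetrize away rounding error
print(np.linalg.eigvalsh(schur).min())   # non-negative up to rounding
# Block inversion: the (2,2) block of C^{-1} is the inverse of the Schur complement.
print(np.allclose(schur, np.linalg.inv(np.linalg.inv(C)[s:, s:])))
```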

In this section, we investigate the necessity of the irrepresentable assumptions for the direction consistency of group lasso estimates. To this end, we first introduce the notion of weak irrepresentability.

For a q-dimensional vector τ, define the stacked direction vector

The weak irrepresentable condition is satisfied if

| (50) |
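The direction vector and a weak-irrepresentability quantity can be sketched in code. Because the displays defining the stacked direction vector and (50) are not reproduced here, this sketch assumes D(v) = v/∥v∥₂ for v ≠ 0 and D(0) = 0, and it assumes equal group weights, under which a natural reading of the condition is that the maximum over g ∉ S of ∥C_{g,S}(C11)⁻¹D(β_S)∥₂ is at most 1. The weighting in (50) itself may differ, and all function names are hypothetical.

```python
import numpy as np


def direction(v):
    """Assumed direction map: D(v) = v / ||v||_2 if v != 0, and D(0) = 0."""
    norm_v = np.linalg.norm(v)
    return v / norm_v if norm_v > 0 else np.zeros_like(v)


def stacked_direction(tau, groups):
    """Stack D(tau[g]) over the given groups, in the order listed."""
    return np.concatenate([direction(tau[idx]) for idx in groups])


def irrepresentable_value(C, signal_groups, other_groups, beta):
    """max_{g not in S} || C_{g,S} C_{S,S}^{-1} D_S(beta) ||_2 (equal weights assumed)."""
    S = np.concatenate(signal_groups)
    d_S = stacked_direction(beta, signal_groups)
    w = np.linalg.solve(C[np.ix_(S, S)], d_S)
    return max(np.linalg.norm(C[np.ix_(idx, S)] @ w) for idx in other_groups)


beta = np.array([1.0, 0.0, 0.0, 0.0])
signal_groups = [np.array([0, 1])]
other_groups = [np.array([2, 3])]
C = np.eye(4)
C[0, 2] = C[2, 0] = 0.5      # one signal column correlated with one non-signal column
print(irrepresentable_value(C, signal_groups, other_groups, beta))   # 0.5, below 1
```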

We argue that the weak irrepresentable condition is necessary for group sparsity selection and direction consistency under two regularity conditions on the design matrix, as n, p → ∞:

(A1) The minimum eigenvalue of the signal part of the Gram matrix, viz. Λmin(C11), is bounded away from zero.

(A2) The matrices C21 and C22 are bounded above in spectral norm.
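Conditions (A1) and (A2) are asymptotic, so they cannot be verified from a single design. The sketch below only computes the three quantities they involve for one Gram matrix, so that these can be tracked along a sequence of designs. It assumes C is the Gram matrix and S an integer array of signal coordinates (hypothetical names); the equicorrelated matrix in the usage example is purely illustrative.

```python
import numpy as np


def design_diagnostics(C, S):
    """Quantities in (A1)-(A2) for Gram matrix C and signal index array S:
    (Lambda_min(C11), ||C21||_2, ||C22||_2), with ||.||_2 the spectral norm."""
    N = np.setdiff1d(np.arange(C.shape[0]), S)    # non-signal indices
    C11 = C[np.ix_(S, S)]
    C21 = C[np.ix_(N, S)]
    C22 = C[np.ix_(N, N)]
    return (np.linalg.eigvalsh(C11).min(),
            np.linalg.norm(C21, 2),
            np.linalg.norm(C22, 2))


# Illustrative equicorrelated Gram matrix with correlation rho.
p, rho = 6, 0.3
C = (1 - rho) * np.eye(p) + rho * np.ones((p, p))
print(design_diagnostics(C, np.arange(3)))   # approximately (0.7, 0.9, 1.6)
```

For this equicorrelated design with s signal coordinates, Λmin(C11) = 1 − ρ regardless of s, whereas ∥C21∥₂ = ρ√(s(p − s)) and ∥C22∥₂ = 1 − ρ + ρ(p − s) grow with p − s. A sequence of such designs would therefore satisfy (A1) but violate (A2).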

As in Proposition D.1, we set and K0 = K/λ. Suppose that the weak irrepresentable condition does not hold, i.e., for some g ∉ S and ξ > 0, we have,

for infinitely many n. Also suppose that there exists a sequence of positive reals δn → 0 such that the event

satisfies as p, n → ∞.

Note that for n large enough that δn < min_g ∥D(β[g])∥, we have , ∀g ∈ S, on the event En.

Then, as in the proof of Theorem 4.1, we have, on the event En,

| (51) |

| (52) |

Substituting the value of from (51) into (52), we have, on the event En,

which implies that

| (53) |

Now note that, for large enough n and with ∥C21∥ bounded above as in (A2), direction consistency guarantees that the expression on the right is larger than

which in turn is larger than , in view of the weak irrepresentable condition.

This contradicts , since the left-hand side of (53) is the norm of a centered Gaussian random vector with bounded covariance, and is therefore bounded in probability, while diverges with .

Appendix E. Thresholding Group Lasso Estimates.

Proof [Proof of Theorem 4.2] We use the notation developed in the proof of Proposition C.1. First, note that (ii) follows directly from Theorem 4.1. For (i), since the falsely selected groups survive the initial thresholding, we get for every such group. Next, we obtain an upper bound on the number of such groups. Specifically, denoting , we get

| (54) |

Next, note that from the sparsity oracle inequality (37), the following holds on the event ,

It readily follows that

where the last inequality follows from the ℓ2,1-error bound of (34). Using this inequality together with (54) gives the result. ■
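Theorem 4.2 concerns thresholded group lasso estimates, and its proof bounds the number of falsely selected groups. The sketch below shows one two-level thresholding scheme, a group-level threshold followed by a within-group threshold, together with a count of falsely selected groups. The thresholds `delta_group` and `delta_coord` and the exact form of the rule are assumptions for illustration, not necessarily the rule analyzed in Theorem 4.2.

```python
import numpy as np


def threshold_group_lasso(beta_hat, groups, delta_group, delta_coord):
    """Hypothetical two-level rule: drop groups with ||b_g||_2 < delta_group,
    then, inside the surviving groups, drop coordinates with |b_j| < delta_coord."""
    beta = beta_hat.copy()
    for idx in groups:
        block = beta[idx]                     # fancy indexing returns a copy
        if np.linalg.norm(block) < delta_group:
            block[:] = 0.0                    # group-level thresholding
        else:
            block[np.abs(block) < delta_coord] = 0.0   # within-group thresholding
        beta[idx] = block
    return beta


def count_false_groups(beta, groups, true_groups):
    """Number of groups with a nonzero coefficient that are not truly active."""
    return sum(1 for g, idx in enumerate(groups)
               if np.any(beta[idx] != 0) and g not in true_groups)


groups = [np.array([0, 1]), np.array([2, 3]), np.array([4, 5])]
beta_hat = np.array([0.9, 0.02, 0.5, 0.05, 0.04, 0.0])
beta_thr = threshold_group_lasso(beta_hat, groups, delta_group=0.1, delta_coord=0.1)
print(beta_thr)                                   # groups 0 and 1 kept, group 2 removed
print(count_false_groups(beta_thr, groups, {0}))  # 1 (group 1 is a false selection)
```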

Contributor Information

Sumanta Basu, Department of Statistics, University of Michigan, Ann Arbor, MI 48109-1092, USA.

Ali Shojaie, Department of Biostatistics, University of Washington, Seattle, WA, USA.

George Michailidis, Department of Statistics, University of Michigan, Ann Arbor, MI 48109-1092, USA.

References

- Bach FR. Consistency of the group lasso and multiple kernel learning. J. Mach. Learn. Res, 9:1179–1225, 2008. ISSN 1532-4435.

- Bickel PJ, Ritov Y, and Tsybakov AB. Simultaneous analysis of lasso and Dantzig selector. The Annals of Statistics, 37(4):1705–1732, 2009.

- Binder M, Hsiao C, and Pesaran MH. Estimation and inference in short panel vector autoregressions with unit roots and cointegration. Econometric Theory, 21:795–837, 2005. ISSN 1469-4360. doi: 10.1017/S0266466605050413.

- Blanchard O and Perotti R. An empirical characterization of the dynamic effects of changes in government spending and taxes on output. The Quarterly Journal of Economics, 117(4):1329–1368, 2002.

- Breheny P and Huang J. Penalized methods for bi-level variable selection. Stat. Interface, 2(3):369–380, 2009. ISSN 1938-7989.

- Cao B and Sun Y. Asymptotic distributions of impulse response functions in short panel vector autoregressions. Journal of Econometrics, 163(2):127–143, 2011. ISSN 0304-4076.

- Friedman N. Inferring cellular networks using probabilistic graphical models. Science’s STKE, 303(5659):799, 2004.

- Fujita A, Sato J, Garay-Malpartida H, Yamaguchi R, Miyano S, Sogayar M, and Ferreira C. Modeling gene expression regulatory networks with the sparse vector autoregressive model. BMC Systems Biology, 1(1):39, 2007. ISSN 1752-0509.

- Golub GH and Van Loan CF. Matrix Computations. Johns Hopkins Studies in the Mathematical Sciences. Johns Hopkins University Press, Baltimore, MD, third edition, 1996. ISBN 0-8018-5413-X; 0-8018-5414-8.

- Granger CWJ. Investigating causal relations by econometric models and cross-spectral methods. Econometrica, 37(3):424–438, 1969.

- Hiemstra C and Jones JD. Testing for linear and nonlinear Granger causality in the stock price-volume relation. Journal of Finance, pages 1639–1664, 1994.

- Huang J and Zhang T. The benefit of group sparsity. Ann. Statist, 38(4):1978–2004, 2010. ISSN 0090-5364.

- Huang J, Ma S, Xie H, and Zhang C-H. A group bridge approach for variable selection. Biometrika, 96(2):339–355, 2009. ISSN 0006-3444.

- Kim K, Kim JH, Lee J, Jin HM, Lee SH, Fisher DE, Kook H, Kim KK, Choi Y, and Kim N. Nuclear factor of activated T cells c1 induces osteoclast-associated receptor gene expression during tumor necrosis factor-related activation-induced cytokine-mediated osteoclastogenesis. Journal of Biological Chemistry, 280(42):35209–35216, 2005.

- Ledoux M and Talagrand M. Probability in Banach Spaces, volume 23 of Ergebnisse der Mathematik und ihrer Grenzgebiete (3) [Results in Mathematics and Related Areas (3)]. Springer-Verlag, Berlin, 1991. ISBN 3-540-52013-9. Isoperimetry and processes.

- Lounici K, Pontil M, van de Geer S, and Tsybakov AB. Oracle inequalities and optimal inference under group sparsity. Ann. Statist, 39(4):2164–2204, 2011.

- Lozano A, Abe N, Liu Y, and Rosset S. Grouped graphical Granger modeling for gene expression regulatory networks discovery. Bioinformatics, 25(12):i110, 2009.

- Lütkepohl H. New Introduction to Multiple Time Series Analysis. Springer, 2005.

- Michailidis G. Statistical challenges in biological networks. Journal of Computational and Graphical Statistics, 21(4):840–855, 2012. doi: 10.1080/10618600.2012.738614.

- Parter SV. Extreme eigenvalues of Toeplitz forms and applications to elliptic difference equations. Trans. Amer. Math. Soc, 99:153–192, 1961. ISSN 0002-9947.

- Pearl J. Causality: Models, Reasoning, and Inference, volume 47. Cambridge, 2000.

- Priestley MB. Spectral Analysis and Time Series. Vol. 2. Academic Press Inc. [Harcourt Brace Jovanovich Publishers], London, 1981. ISBN 0-12-564902-9. Multivariate series, prediction and control, Probability and Mathematical Statistics.

- Rangel C, Angus J, Ghahramani Z, Lioumi M, Sotheran E, Gaiba A, Wild DL, and Falciani F. Modeling T-cell activation using gene expression profiling and state-space models. Bioinformatics, 20(9):1361, 2004.

- Rudelson M and Vershynin R. Hanson-Wright inequality and sub-Gaussian concentration. Electron. Commun. Probab, 18:no. 82, 1–9, 2013. ISSN 1083-589X. doi: 10.1214/ECP.v18-2865.

- Shojaie A and Michailidis G. Penalized likelihood methods for estimation of sparse high dimensional directed acyclic graphs. Biometrika, 97(3):519–538, 2010a.

- Shojaie A and Michailidis G. Discovering graphical Granger causality using a truncating lasso penalty. Bioinformatics, 26(18):i517–i523, 2010b.

- Sims CA. Money, income, and causality. The American Economic Review, 62(4):540–552, 1972.

- van de Geer S and Bühlmann P. On the conditions used to prove oracle results for the Lasso. Electron. J. Stat, 3:1360–1392, 2009. ISSN 1935-7524.

- van de Geer S, Bühlmann P, and Zhou S. The adaptive and the thresholded Lasso for potentially misspecified models (and a lower bound for the Lasso). Electron. J. Stat, 5:688–749, 2011. ISSN 1935-7524.

- Vershynin R. Lectures in Geometric Functional Analysis. available at http://www-personal.umich.edu/romanv/papers/GFA-book/GFA-book.pdf, 2009.

- Wainwright MJ. Sharp thresholds for high-dimensional and noisy sparsity recovery using ℓ1-constrained quadratic programming (Lasso). IEEE Transactions on Information Theory, 55(5):2183–2202, May 2009. ISSN 0018-9448.

- Waterman ML, Jones KA, et al. Purification of TCF-1 alpha, a T-cell-specific transcription factor that activates the T-cell receptor C alpha gene enhancer in a context-dependent manner. The New Biologist, 2(7):621, 1990.

- Wei F and Huang J. Consistent group selection in high-dimensional linear regression. Bernoulli, 16(4):1369–1384, 2010. ISSN 1350-7265.

- Zhao P and Yu B. On model selection consistency of lasso. J. Mach. Learn. Res, 7:2541–2563, December 2006. ISSN 1532-4435.

- Zhou S. Thresholded lasso for high dimensional variable selection and statistical estimation. arXiv preprint arXiv:1002.1583, 2010.