Abstract

We quantify the propagation and absorption of large-scale publicly available news articles from the World Wide Web to financial markets. To extract publicly available information, we use the news archives from the Common Crawl, a non-profit organization that crawls a large part of the web. We develop a processing pipeline to identify news articles associated with the constituent companies in the S&P 500 index, an equity market index that measures the stock performance of US companies. Using machine learning techniques, we extract sentiment scores from the Common Crawl News data and employ tools from information theory to quantify the information transfer from public news articles to the US stock market. Furthermore, we analyse and quantify the economic significance of the news-based information with a simple sentiment-based portfolio trading strategy. Our findings support the hypothesis that information in publicly available news on the World Wide Web has a statistically and economically significant impact on events in financial markets.

Keywords: complex systems, financial markets, sentiment analysis, machine learning, transfer entropy

1. Introduction

Studies of the impact of speculation and information arrival on the price dynamics of financial securities have a long history, going back to the early work of Bachelier [1] in 1900 and Mandelbrot [2] in 1963 (see Jarrow & Protter [3] for a historical account of these and related developments). In 1970, Fama [4] formulated the efficient market hypothesis in financial economics, stating that security prices reflect all publicly available information. Shortly afterwards, in 1973, Clark [5] proposed the mixture of distribution hypothesis, which asserts that the dynamics of price returns are governed by the information flow available to traders. Subsequently, novel models were introduced, such as the sequential information arrival model [6] and news jump dynamics [7], both in the 1980s, and truncated Lévy processes from econophysics [8] in the 1990s, to name a few examples. With the rise of the World Wide Web and social media came an ever-increasing abundance of available data, allowing for more detailed studies of the impact of news on financial markets at different time-scales [9–17]. Big data, coupled with advancements in machine learning (ML) [18] and complex systems research [19–22], enabled more efficient analysis of financial data [23], such as web data [24–39], social media [40–44], web search queries [45–47], online blogs [48,49] and other alternative data sources [50].

In this article, we use news articles from the Common Crawl News, a subset of the Common Crawl’s petabytes of publicly available World Wide Web archives, to measure the impact of the arrival of new information about the constituent stocks in the S&P 500 index at the time of publishing. To the best of our knowledge, our study is the first one to use the Common Crawl News data in this way. We develop a cloud-based processing pipeline that identifies news articles in the dataset that are related to the companies in the S&P 500. As the Common Crawl public data archives grow, they open doors for many real-world ‘data-hungry’ applications, including transformer models such as GPT [51] and BERT [52], a recent class of deep learning language models. We believe that public sources of news data are important not only in natural language processing (NLP) and financial markets, but also in complex systems and computational social sciences that aim to characterize (mis)information propagation and dynamics in techno-socio-economic systems. The abundance of high-frequency data in financial markets gives complex systems researchers access to microscopic observables, allowing them to test and verify hypotheses and theories in ways that were previously not possible.

Using ML methods from NLP [53,54] we analyse and extract sentiment for each news article in the Common Crawl News repository, assigning a score in the range from zero to one that represents sentiment from most negative (zero) to most positive (one). To quantify the information propagation from the publicly available news articles on the World Wide Web to companies in the S&P 500 index, we use two different approaches. First, we employ tools from information theory of complex systems [20,22,55] to measure the impact of information transfer of the news sentiment scores on the returns of the constituent companies in the S&P 500 index at an intraday level. Second, we implement and simulate the daily portfolio returns resulting from a simple trading strategy based on extracted news sentiment scores for each company. We use the returns from this strategy as an econometric instrument and compare it with several benchmark strategies that do not incorporate news sentiments. Our findings support the hypothesis that information in publicly available news on the World Wide Web has a statistically and economically significant impact on events in financial markets.

2. Methods

2.1. Common Crawl data

News data are freely and publicly available from many online sources including newspapers, news outlets and news aggregators. Assembling a dataset that covers a representative subset of these sources is a major task. For example, it took about three and a half years for Google to develop a release-ready version of Google News.¹ In this article, we use a large public dataset from the Common Crawl, a non-profit organization that collects data from the World Wide Web. Since its start in 2008, the Common Crawl has collected petabytes of data, and its developers have continued to improve the system and expand the number of websites visited by the crawler.

We performed our analysis using the Common Crawl News, a subset of the Common Crawl dataset containing only news articles.² From its start in 2016 until the end of February 2020, Common Crawl collected about eight terabytes of news from publicly available news sources on the World Wide Web, corresponding to a total of about 400 million articles covering a wide range of topics in different languages.

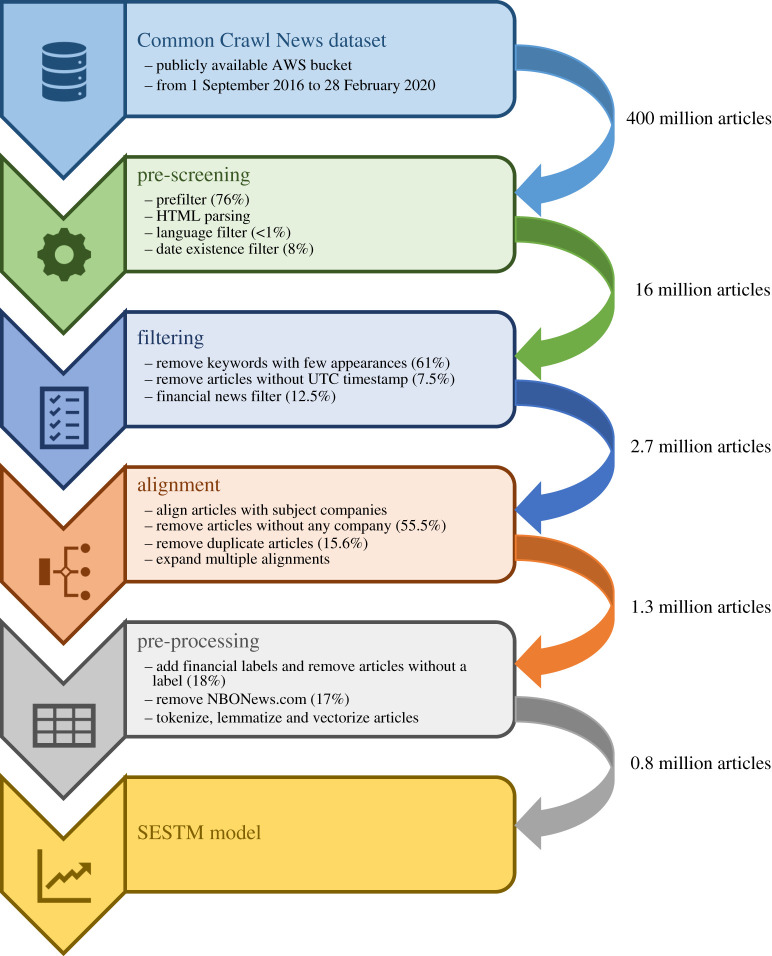

Figure 1 depicts the steps we took in processing the Common Crawl News dataset. Processing this dataset is complicated due to its size, the content’s raw format, the different HTML formats used on websites and the broad coverage of different topics and languages. Furthermore, as part of the processing we had to address several issues including (i) extracting the main text and additional meta-information such as timestamps from the HTML strings of each article, (ii) matching each article to the relevant company names and tickers, (iii) deciding which articles relate to the financial performance of the subject company, and (iv) removing any duplicate news articles present in the data. After the processing, we have a dataset of about 1.3 million financial articles written in English covering S&P 500 companies from August 2016 to February 2020. For each article, we identify its URL, publication time, crawling time, title and all referenced constituent companies in the S&P 500 index. We describe the details of our processing pipeline in the electronic supplementary material, Methods S1 and table S1.

Figure 1.

The pipeline deployed to process and transform the Common Crawl News dataset into the dataset used by the sentiment model. Each box represents one stage of the pipeline where data transformation and filtering steps are applied. The numbers next to the arrows show how many articles are passed on from one stage to the next. The percentages in the brackets after each filtering step show the proportion of articles removed in that specific step.

2.2. Transfer entropy

To avoid making specific assumptions about the relationship between sentiment and stock returns, we use transfer entropy rather than classical Granger causality. Transfer entropy is a model-free measure from information theory that is not restricted to linear dynamics or Gaussian assumptions [21,56]. For a random variable X, the Shannon entropy $H(X) = -\sum_x p(x) \log p(x)$ measures the expected level of ‘information’ or ‘uncertainty’ associated with its outcomes. Intuitively, if the logarithm is expressed in base two then H(X) represents the number of bits of the optimal code length for a lossless data compression of events from data source X. In order to quantify information content H(Xt) from a time-dependent stochastic process, {Xt}, one needs to analyse transition probabilities [57] of the underlying stochastic process. In particular, using the idea of finite-order Markov processes, Schreiber [20] introduced transfer entropy (TE), a measure of information transfer of systems evolving through time. For each company in the S&P 500 index, we compute the TE from company news sentiment {st} to stock returns {rt}, defined as

$$T_{s \to r} = H\big(r_{t+1} \mid r_t^{(k)}\big) - H\big(r_{t+1} \mid r_t^{(k)}, s_t^{(l)}\big), \tag{2.1}$$

where $H(\cdot \mid \cdot)$ denotes the conditional Shannon entropy [22]. The transfer entropy (2.1) can be expressed as the KL divergence

$$T_{s \to r} = \sum p\big(r_{t+1}, r_t^{(k)}, s_t^{(l)}\big) \log \frac{p\big(r_{t+1} \mid r_t^{(k)}, s_t^{(l)}\big)}{p\big(r_{t+1} \mid r_t^{(k)}\big)}, \tag{2.2}$$

where we define $r_t^{(k)} = (r_t, \ldots, r_{t-k+1})$ and $s_t^{(l)} = (s_t, \ldots, s_{t-l+1})$, which makes explicit that transfer entropy measures the log deviation from the generalized Markov property $p\big(r_{t+1} \mid r_t^{(k)}, s_t^{(l)}\big) = p\big(r_{t+1} \mid r_t^{(k)}\big)$.
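As a rough illustration of the transfer entropy defined above, the following sketch computes a plug-in (frequency-count) estimate for two already-discretized series with history length one. The function name `transfer_entropy`, the restriction to a single lag and the requirement that both inputs are discrete symbols are assumptions made for this example; it is not the estimator or the discretization used in the study.

```python
from collections import Counter
from math import log2


def transfer_entropy(source, target):
    """Plug-in estimate (in bits) of the transfer entropy from `source`
    to `target`, using one lag of each series (illustrative assumption)."""
    if len(source) != len(target):
        raise ValueError("series must have equal length")
    # Observed triples (r_{t+1}, r_t, s_t).
    triples = list(zip(target[1:], target[:-1], source[:-1]))
    n = len(triples)
    c_xyz = Counter(triples)                          # (r_next, r_now, s_now)
    c_yz = Counter((r, s) for _, r, s in triples)     # (r_now, s_now)
    c_xy = Counter((rn, r) for rn, r, _ in triples)   # (r_next, r_now)
    c_y = Counter(r for _, r, _ in triples)           # r_now
    te = 0.0
    for (rn, r, s), c in c_xyz.items():
        p_joint = c / n
        p_full = c / c_yz[(r, s)]          # p(r_next | r_now, s_now)
        p_own = c_xy[(rn, r)] / c_y[r]     # p(r_next | r_now)
        te += p_joint * log2(p_full / p_own)
    return te


# Toy example: the target simply copies the source with a one-step delay.
sentiment = [0, 1, 1, 0, 1, 0, 0, 1, 1, 1, 0, 0]
returns = [0] + sentiment[:-1]
te_copy = transfer_entropy(sentiment, returns)
te_const = transfer_entropy([0] * len(returns), returns)
```

In the toy example the source fully determines the next target value, so the estimate is strictly positive, whereas a constant source carries no information and gives exactly zero. Plug-in estimates of this kind are biased in finite samples, which is why a correction is needed in practice.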

2.3. News sentiment model

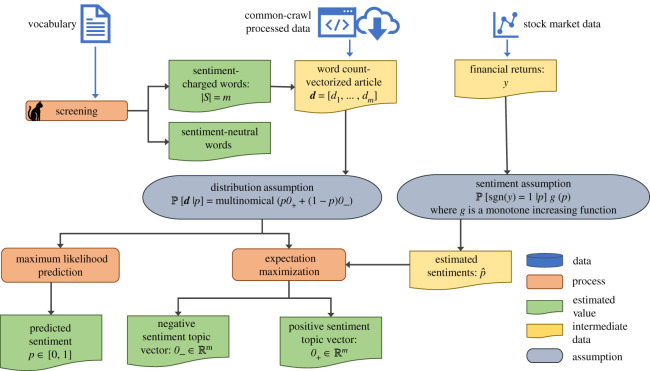

To assign sentiment scores to each news article, we use an approach referred to as sentiment extraction via screening and topic modelling (SESTM) [54]. SESTM is closely related to latent Dirichlet allocation (LDA) [58] and to vector-based representations such as word2vec [59] and GloVe [60]. Unlike these models, however, SESTM is trained in a supervised fashion, which facilitates interpretability and provides theoretical guarantees on the accuracy of estimates under minimal assumptions [54]. Figure 2 depicts the main steps of the SESTM model we use in this article. We briefly describe our model below; a more detailed description can be found in the electronic supplementary material, Methods S2.

Figure 2.

Process chart of the sentiment model. The two assumptions underlying the sentiment model are depicted in the middle. The data used in fitting the model is shown at the top. We apply the predicted sentiment scores (bottom-left corner) to analyse transfer entropy and simulate several simple trading strategies.

Before training the SESTM model, we apply standard pre-processing steps from NLP to turn articles into document-term vectors, including stopword removal, tokenization and lemmatization. For each article i in our dataset, we assign a sentiment score pi ∈ [0, 1] that reflects the financial sentiment the article bears towards the subject company, where pi = 0 and pi = 1 denote the most negative and most positive sentiment achievable, respectively. We view a sentiment of pi = 0.5 as neutral. We assume that positive (negative) sentiment for a company most likely leads to a positive (negative) return for the company’s stock in the following sense:

$$\mathbb{P}\big(\operatorname{sgn}(r_i) = 1\big) = g(p_i), \tag{2.3}$$

where $\mathbb{P}$ denotes the probability, g(·) is a monotonically increasing function and ri is the corresponding financial return of the company pertinent to news article i.

We assume that only a subset of the words in the corpus’s dictionary is relevant and refer to these as sentiment-charged. The remaining words are referred to as sentiment-neutral. The model determines the sentiment-charged vocabulary of words, S, by including only those words that occur sufficiently frequently in our corpus and that are predominantly associated with either positive or negative returns. We remove all sentiment-neutral words from our original dictionary.
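The screening idea described above can be sketched as follows: a word is kept if it appears in enough articles and if the share of those articles accompanied by a positive return is far enough from one half. The function name `screen_sentiment_words` and the thresholds `min_count` and `margin` are hypothetical choices for illustration, not the hyperparameters used in the study.

```python
from collections import Counter


def screen_sentiment_words(articles, returns, min_count=2, margin=0.25):
    """Return the set of words kept as sentiment-charged.

    articles: list of token lists; returns: matching list of stock returns.
    min_count and margin are hypothetical thresholds for this sketch.
    """
    n_docs = Counter()  # number of articles containing each word
    n_pos = Counter()   # of those, how many came with a positive return
    for tokens, r in zip(articles, returns):
        for word in set(tokens):
            n_docs[word] += 1
            if r > 0:
                n_pos[word] += 1
    charged = set()
    for word, k in n_docs.items():
        frac_pos = n_pos[word] / k
        # Keep frequent words that lean clearly positive or clearly negative.
        if k >= min_count and abs(frac_pos - 0.5) >= margin:
            charged.add(word)
    return charged


articles = [
    ["profit", "beats", "the"],
    ["loss", "the"],
    ["profit", "the", "up"],
    ["loss", "warning", "the"],
]
returns = [0.02, -0.01, 0.01, -0.03]
vocabulary = screen_sentiment_words(articles, returns)
```

In this toy corpus, "profit" and "loss" are retained, "the" is dropped because it is equally associated with both return signs, and the remaining words are dropped because they occur only once.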

For each article, we associate a document-term vector, di, of the occurrences of the sentiment-charged words and assume that it has a mixture multinomial distribution of the form

$$d_i \sim \operatorname{Multinomial}\big(n_i,\; p_i O_+ + (1 - p_i) O_-\big), \tag{2.4}$$

where $n_i$ scales the distribution and $p_i O_+ + (1 - p_i) O_-$ is a mixture of two topics that determines the probability distribution over the sentiment-charged words. $O_+$ describes the probability of the words in a maximally positive article, $p_i = 1$. Similarly, $O_-$ describes the probability of the words in a maximally negative article, $p_i = 0$. We assume that $O_+$ and $O_-$ are normalized such that $\|O_+\|_1 = \|O_-\|_1 = 1$. For articles with sentiment not on the boundary, $0 < p_i < 1$, word frequencies are convex combinations of those from the two topics. We train our model by estimating the vectors $O_+$ and $O_-$ via expectation maximization (EM), denoting their estimates by $\hat{O}_+$ and $\hat{O}_-$.

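The sentiment associated with the ith news article is determined by maximum likelihood estimation (MLE) applied to the multinomial distribution of the news article’s document-term vector, $d_i$. In other words, we determine $\hat{p}_i$ by solving

$$\hat{p}_i = \arg\max_{p \in [0,1]} \left\{ \frac{1}{n_i} \sum_{j \in S} d_{i,j} \log\big(p\,\hat{O}_{+,j} + (1 - p)\,\hat{O}_{-,j}\big) + \lambda \log\big(p(1 - p)\big) \right\}, \tag{2.5}$$

where $n_i = \sum_{j \in S} d_{i,j}$ and λ > 0 is a regularization parameter. The choice of regularization is equivalent to imposing a beta prior on the sentiment, thereby pulling the estimated values toward the neutral score (p = 0.5).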
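To make the penalized estimation step concrete, here is a minimal sketch that maximizes a length-normalized multinomial log-likelihood plus a λ log(p(1 − p)) penalty over a grid of candidate values. The function name `estimate_sentiment`, the grid search and the toy topic vectors are assumptions for this example; the normalization by the word count follows the SESTM formulation in [54], and the study may solve the problem differently.

```python
from math import log


def estimate_sentiment(counts, o_pos, o_neg, lam=1.0, grid=999):
    """Grid-search the penalized log-likelihood over p in (0, 1).

    counts: occurrences of each sentiment-charged word in one article.
    o_pos, o_neg: word distributions of the positive and negative topics
    (assumed strictly positive wherever counts are non-zero).
    """
    total = sum(counts)
    if total == 0:
        return 0.5  # no sentiment-charged words: fall back to neutral

    def objective(p):
        loglik = sum(
            d * log(p * op + (1 - p) * on)
            for d, op, on in zip(counts, o_pos, o_neg)
            if d > 0
        )
        # The penalty is the log-density of a symmetric beta prior, up to a constant.
        return loglik / total + lam * log(p * (1 - p))

    candidates = [(k + 1) / (grid + 1) for k in range(grid)]
    return max(candidates, key=objective)


# Toy topics over three words: word 0 is "positive", word 2 is "negative".
o_pos = [0.7, 0.2, 0.1]
o_neg = [0.1, 0.2, 0.7]
p_positive = estimate_sentiment([5, 1, 0], o_pos, o_neg, lam=0.1)
p_shrunk = estimate_sentiment([5, 1, 0], o_pos, o_neg, lam=10.0)
p_negative = estimate_sentiment([0, 1, 5], o_pos, o_neg, lam=0.1)
p_balanced = estimate_sentiment([2, 0, 2], o_pos, o_neg)
```

Increasing `lam` moves the estimate for the same article closer to 0.5, which is the shrinkage toward the neutral score described above.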

While our assumptions are the same as the SESTM model [54], we deviate from the original parameter estimation procedure due to the smaller size of our dataset. Specifically, the model parameters are estimated at the beginning of each month based on all the articles observed up until that point. The fitted model is then used to predict sentiments during the whole month before being updated again. In addition, we keep the hyperparameters used for the sentiment-charged words selection and the prediction regularization parameter, λ, fixed rather than considering them as a part of the periodic estimation.

3. Results

3.1. The Common Crawl and financial news coverage

Using the Common Crawl News, a subset of the Common Crawl exclusively for news articles, we process and extract news articles related to the constituent companies in the S&P 500 index over the time period from 26 August 2016 to 27 February 2020. We choose the end of August 2016 as our starting point because prior periods have insufficient news coverage for the companies in the S&P 500 index. An article is matched to a company if and only if the company is mentioned in the title or the first paragraph (see the electronic supplementary material, Methods S1 for a detailed description of our data processing pipeline).

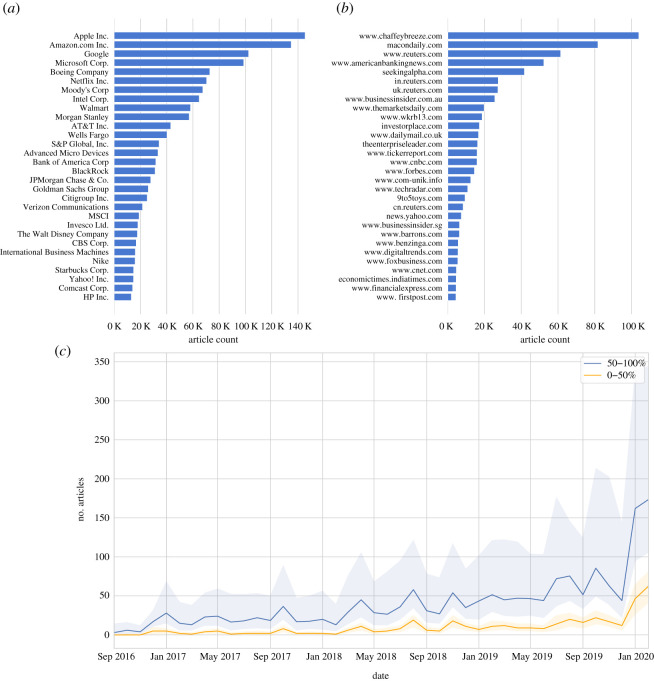

Figure 3a shows the 30 most frequently occurring companies in our dataset as measured by the number of distinct articles that mention each company at least once. Figure 3b shows the 30 top news sources as measured by the number of unique articles.³ Not surprisingly, well-known publicly available financial news websites, such as www.reuters.com, www.seekingalpha.com, www.businessinsider.com and www.cnbc.com, appear amongst the most frequent sources. Other frequent sources, including www.chaffeybreeze.com, www.macondaily.com and www.americanbankingnews.com, are less well known. We note that www.chaffeybreeze.com and www.macondaily.com redirect to www.marketbeat.com, which publishes articles about specific companies and investor ratings. However, as Common Crawl only accesses publicly available sources with free content, subscription-based news services, such as the Wall Street Journal (https://www.wsj.com) or Barron’s (https://www.barrons.com), are not part of our dataset. Figure 3c depicts the median number of articles per company published each month throughout our time period. We divide the companies into upper and lower halves by total article count. The shaded regions show the 25th and 75th percentiles for each half by month. We observe that the distribution of published articles is right skewed, as some companies have significant news coverage while others are mentioned less frequently. The shaded percentile regions illustrate that the top 50% of the companies receive significantly more coverage than the bottom 50%. In addition, we emphasize that the amount of news is increasing month over month, as Common Crawl continues to increase the number of websites that are crawled.

Figure 3.

Summary of the news dataset used in this article. (a) The most frequently mentioned companies as measured by the number of distinct articles. (b) The most frequent news sources as measured by the number of distinct articles associated with each source. (c) The median number of articles published per company and month. The companies are divided into top and bottom halves by the total number of articles published about them. The shaded regions represent the 25th and 75th percentiles of each half.

3.2. Information transfer from news to stock returns

After processing all articles, for each company i in the S&P 500 index we have an inhomogeneous time series of article sentiment scores (from the SESTM model), $\{p^i_{\tau_1}, \ldots, p^i_{\tau_n}\}$, occurring at irregular timestamps $\tau_1 < \cdots < \tau_n$ that correspond to the publication times of n articles. For our subsequent analysis, we need the sentiment scores to occur at regular time intervals. We achieve this by binning the sentiment series into hourly intervals and taking the average score inside each bin. For each company i we thereby obtain a time series of hourly average sentiment scores $\{s^i_t\}_{t=1}^{m}$, where {1, 2, …, m} represent the hourly binned timestamps of total length m. To simplify the notation, we drop the superscript i referencing the company; the hourly price returns of a particular stock are likewise denoted by $r_t$ (see electronic supplementary material, Data S1, for more details). We characterize the information transfer from the news sentiment series of each company in the S&P 500 index to its stock price returns by computing its transfer entropy as described in formula (2.1). In particular, we use transfer entropy to quantify the amount of uncertainty reduction in the future return, $r_{t+1}$, for each stock given the information of lagged news sentiment and price returns, $(s_t, r_t)$.
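The binning step can be sketched as below. The function name `hourly_mean_sentiment`, the alignment of bins to clock hours and the choice to leave hours without articles absent are assumptions of this example; the source does not specify how bin edges are aligned or how empty bins are treated.

```python
from collections import defaultdict
from datetime import datetime


def hourly_mean_sentiment(observations):
    """Average (timestamp, score) pairs within each clock hour.

    Returns a dict mapping the start of each hour to the mean score of the
    articles published in that hour; hours without articles are absent.
    """
    buckets = defaultdict(list)
    for ts, score in observations:
        hour_start = ts.replace(minute=0, second=0, microsecond=0)
        buckets[hour_start].append(score)
    return {hour: sum(v) / len(v) for hour, v in sorted(buckets.items())}


observations = [
    (datetime(2019, 3, 1, 9, 35), 0.8),
    (datetime(2019, 3, 1, 9, 50), 0.6),
    (datetime(2019, 3, 1, 11, 5), 0.3),
]
hourly = hourly_mean_sentiment(observations)
```

Here the two articles published between 9.00 and 10.00 are averaged into a single value, and the 10.00 bin stays empty because no article was published in that hour.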

To address any non-stationarity of the time series of sentiment scores, we apply the difference operator on our time series and perform an augmented Dickey–Fuller test to detect the presence of unit roots with p-values (<0.01) obtained through regression surface approximation [61,62].

To compute the statistical significance (p-values) of the transfer entropy, we employ a non-parametric bootstrap method of the underlying Markov process [56,63] and use effective transfer entropy [64] to perform finite sample corrections.

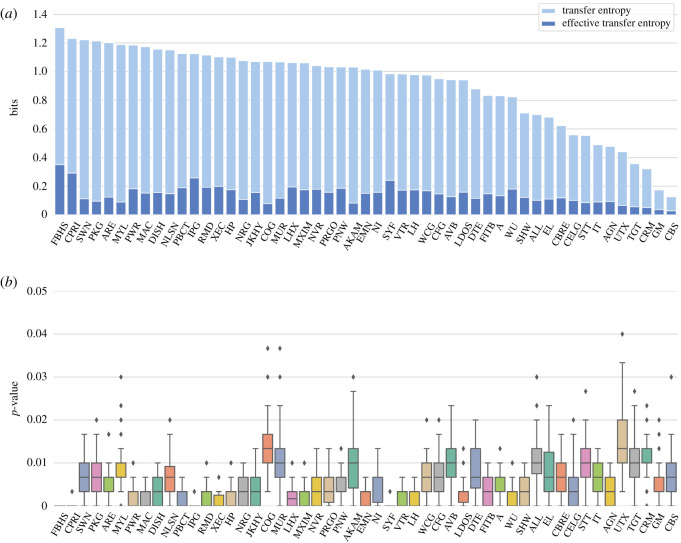

Using our dataset over the period from 3 January 2018 to 27 February 2020,⁴ for each company we compute the transfer entropy between hourly sentiment score differences and hourly price returns. Figure 4a,b displays companies with statistically significant transfer entropy (p-value < 0.01) and the estimated distribution of p-values. We observe that the distributions of p-values for the depicted stocks lie below the 0.05 level. In the electronic supplementary material, figures S4 and S5, we show the results with the control of false discovery rate (FDR < 0.05) and again find the presence of a statistically significant reduction of uncertainty for 1 h and 2 h old sentiment signals.

Figure 4.

(a) Companies and corresponding significant Shannon transfer entropy (and effective transfer entropy) from hourly sentiment score differences to hourly price returns. The unit of transfer entropy is bits (logarithm with base 2), corresponding to the reduction of the average optimal code length needed to encode stock returns with lagged sentiment. Transfer entropy was calculated for the period from January 2018 to February 2020 using time series of hourly returns from 9.30 to 15.30 Eastern Time and corresponding lagged average sentiment scores. The statistical significance (p-value < 0.01) of transfer entropy was estimated with 300 bootstrap samples and 100 shuffles to obtain the effective transfer entropy. (b) Box and whisker plots of estimated distributions of the p-values for selected company tickers. The box and whisker plots show Q1, median, Q3, minimum, maximum and estimated outliers.

Our analysis corroborates the contribution of public news to information dissemination and price discovery at different time-scales [65]. The existence of multiple time-scales in financial markets is connected to the heterogeneous market hypothesis [65] and arises from heterogeneity across market participants, including different trading constraints, risk profiles, locations, information processing and decision frequencies [66].

3.3. Economic significance of news sentiment from Common Crawl News data

In the previous section, we demonstrated that public news sentiment has a statistically significant impact on the uncertainty reduction of future stock returns. This result raises the question of whether this impact is also economically significant. To address this question, we analyse the performance of the following simple sentiment-based trading strategy. For each day in our sample, we rank all S&P 500 companies based on their news sentiment scores from news articles published between 9.30 on the previous trading day and 9.00 of the current day, where times reflect Eastern Time (ET) throughout this article. For companies with multiple news articles, we average their news sentiments to obtain a single sentiment score for each company. Each day, we form a portfolio with long positions of equal amounts in the 20 companies with the most positive sentiment scores and short positions of equal amounts in the 20 companies with the most negative sentiment scores.⁵ We refer to this daily rebalanced portfolio as the Day 1 sentiment strategy, where ‘Day 1’ denotes the one-day lag of the sentiment scores. We track its daily open-to-open return over time, i.e. from 9.30 on the day of portfolio formation to 9.30 the following day. Similarly, we form Day 0 and Day −1 sentiment portfolios and track their daily returns through time.⁶ Note that the Day 0 and Day −1 portfolios are ‘look-ahead’ portfolios as they rely on receiving news ahead of its publishing time. Nevertheless, we use these for comparison purposes below.
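The portfolio formation rule described above can be sketched in a few lines. The function names, the weight convention (each leg sums to one in absolute value) and the toy tickers are assumptions for this example and are not taken from the study's code.

```python
def long_short_weights(sentiment, n_leg=20):
    """Equal-weight long the n_leg highest and short the n_leg lowest scores.

    sentiment: dict mapping ticker to its averaged daily sentiment score.
    """
    if len(sentiment) < 2 * n_leg:
        raise ValueError("need at least 2 * n_leg scored companies")
    ranked = sorted(sentiment, key=sentiment.get)  # ascending by score
    weights = {t: -1.0 / n_leg for t in ranked[:n_leg]}        # short leg
    weights.update({t: 1.0 / n_leg for t in ranked[-n_leg:]})  # long leg
    return weights


def portfolio_return(weights, returns):
    """One-period portfolio return given per-ticker open-to-open returns."""
    return sum(w * returns[t] for t, w in weights.items())


# Toy universe of five hypothetical tickers with two names per leg.
scores = {"A": 0.9, "B": 0.1, "C": 0.5, "D": 0.7, "E": 0.2}
weights = long_short_weights(scores, n_leg=2)
day_return = portfolio_return(
    weights, {"A": 0.02, "B": -0.01, "D": 0.0, "E": 0.01}
)
```

With `n_leg=20` and one score per S&P 500 constituent, the same function yields the 20-long, 20-short portfolio; repeating it each trading day with fresh scores gives the daily rebalanced return series.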

Importantly, we emphasize that our experiment here is not meant to reflect implementable real-world trading strategies used by professional money managers and hedge funds. For that, we would also have to include the analysis of trading costs, which is beyond the scope of this study. Instead, we use a simple trading exercise to study the interplay between public news and financial markets from an economic point of view as well.

We compare the performance of our sentiment-based trading strategies with the SPDR S&P 500 trust (ticker symbol: SPY) and a set of random portfolios as a null-model benchmark. SPY is an exchange-traded fund tracking the S&P 500 index. Each random portfolio is rebalanced daily at the same time as the Day 1 sentiment strategy and consists of long and short legs, each with positions of equal amounts in 20 randomly chosen stocks from the S&P 500 index. We simulate 500 random portfolio histories and use their resulting return series to bootstrap performance metrics.

In figure 5, we depict the cumulative return of the Day 1 sentiment strategy relative to our benchmarks from January 2018 to February 2020. We summarize the performance statistics of the Day 1 sentiment trading strategy and benchmarks in table 1. We use the annualized Sharpe ratio as one of our performance metrics, defined as the annualized average return divided by the annualized volatility of return. The Day 1 sentiment strategy outperforms the SPY and random portfolios, obtaining an annualized Sharpe ratio of 1.64 as compared with 0.48 and −0.01 for SPY and random portfolios, respectively. Regressing the returns of the Day 1 sentiment strategy on the returns of SPY, we observe that the intercept, denoted by α, and the R2 of this regression are 20.69% (annualized) and 0.4%, respectively. α is significant at the 1%-level. We conclude that the Day 1 sentiment strategy (i) outperforms the market and (ii) is uncorrelated with the market. This supports the view that there is economically and statistically significant information in public news sources. However, note that, in contrast to the transfer entropy measure, which quantifies the average uncertainty reduction during the whole period, the trading strategy is an econometric method based on a dynamically rebalanced portfolio that adapts to the arrival of new information through time.
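The two performance metrics discussed above can be sketched as follows. The annualization factor of 252 trading days, the function names and the toy return series are assumptions for this example; the source does not state which annualization convention was used.

```python
from math import sqrt
from statistics import mean, stdev

TRADING_DAYS = 252  # assumed annualization factor


def annualized_sharpe(daily_returns):
    """Annualized average return divided by annualized volatility."""
    annual_mean = mean(daily_returns) * TRADING_DAYS
    annual_vol = stdev(daily_returns) * sqrt(TRADING_DAYS)
    return annual_mean / annual_vol


def alpha_and_r2(strategy, market):
    """OLS of strategy returns on market returns: (daily intercept, R^2)."""
    mx, my = mean(market), mean(strategy)
    sxx = sum((x - mx) ** 2 for x in market)
    syy = sum((y - my) ** 2 for y in strategy)
    sxy = sum((x - mx) * (y - my) for x, y in zip(market, strategy))
    beta = sxy / sxx
    alpha = my - beta * mx
    r2 = (sxy * sxy) / (sxx * syy)
    return alpha, r2


# Toy data: a strategy that is an exact linear function of the market.
market = [0.01, -0.02, 0.015, 0.0, -0.005]
strategy = [0.001 + 0.5 * x for x in market]
alpha, r2 = alpha_and_r2(strategy, market)
sharpe = annualized_sharpe([0.01, 0.02, 0.0, 0.01])
```

A daily intercept would be multiplied by the annualization factor to obtain an annualized α. An R² close to zero, as reported for the sentiment strategies, indicates that market returns explain almost none of the variation in strategy returns.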

Figure 5.

Cumulative returns of trading strategies and benchmarks. ‘Day 1’ represents the cumulative returns of the Day 1 sentiment strategy based on the Common Crawl News dataset from January 2018 to February 2020. SPY is the SPDR S&P 500 trust. ‘Random’ denotes the average of the random strategies along with 1 s.d. confidence bands obtained from 500 simulations. ‘Day 0’ and ‘Day −1’ are the ‘look-ahead’ sentiment strategies relying on future information.

Table 1.

Performance statistics of the Day 1 sentiment trading strategy and benchmarks from January 2018 to February 2020. The sentiment trading strategy is based on news articles from the Common Crawl News dataset. SPY is the SPDR S&P 500 trust. ‘Random’ denotes the baseline strategy where each day we randomly select companies to invest in. ‘Day 0’ and ‘Day −1’ are ‘look-ahead’ sentiment strategies, reported for comparison purposes. Statistics are computed using daily returns (n = 542). MDD is the maximum daily drawdown defined as the maximum observed decline from a historical peak of the price until a new peak is attained. The p-values < 0.001 are denoted with symbol ***, p-values < 0.01 with symbol **, and p-values < 0.02 with symbol *. The p-values for the Sharpe ratios were bootstrapped from 500 random backtests. We obtain α (the intercept) and R2 by regressing the daily returns of the portfolios on the daily returns of the SPY. The performance metrics of the random portfolios were bootstrapped from 500 random backtests. For daily turnover, we use , where wi,t denotes portfolio weight at time t and ri,t+1 represents daily return at time t + 1 of stock i.

| | Day −1 | Day 0 | Day 1 | SPY | random |
|---|---|---|---|---|---|
| annualized average return | 48.95% | 45.42% | 21.02% | 7.25% | |
| annualized volatility | 11.65% | 12.43% | 12.85% | 15.06% | |
| annualized Sharpe ratio | 4.20*** | 3.66*** | 1.64** | 0.48 | |
| MDD | 8.82% | 8.34% | 10.31% | 21.04% | |
| annualized α | 47.88%*** | 45.36%*** | 20.69%* | 0 | |
| R2 | 0.004 | 0.001 | 0.004 | 1 | |
| daily turnover | 82.12% | 82.27% | 82.43% | 0% | |

As expected, both the Day −1 and Day 0 strategies outperform the Day 1 strategy, achieving Sharpe ratios of 4.20 and 3.66, respectively. Similarly, they strongly outperform both baselines and display no significant correlation with the market (R2 when regressing on SPY returns is 0.4% and 0.1%, respectively). While these two ‘look-ahead’ strategies rely on future information, they provide additional support for the claim that there is significant correlation between stock returns and sentiment derived from the Common Crawl News dataset.

3.4. Comparing the information transfer of private and public news to financial markets

We investigate to what extent there is a difference in the information transfer of publicly available news as compared with commercially available news data to financial security prices. As noted above, the Common Crawl News data consists of only freely available public news. That is, the dataset does not contain news content from web pages and news providers that are subscription-based or require registration.

To measure the effect of non-public news sources, we obtained the Alexandria dataset,⁷ a commercial news database consisting of financial news curated from about a dozen subscription-based data sources, including Dow Jones News Wire, Wall Street Journal and Barron’s. Alexandria uses a proprietary algorithm to associate each news article with the companies in question and to assign sentiment scores.

We use the same simple trading strategy as above to build a daily sentiment portfolio using the Alexandria dataset and compare its trading results with those based on the Common Crawl News dataset. The trading strategy using the Alexandria dataset obtains a Sharpe ratio of 1.51 over our time horizon (other performance metrics are available in the electronic supplementary material, table S3). Importantly, the correlation of the return series of the two strategies is only 0.07 (p-value < 0.1). That the correlation is not statistically different from zero suggests the sentiment scores derived from the Common Crawl News and Alexandria datasets are based on different underlying information. In fact, the average Jaccard indices⁸ between the long and short stock positions based on the sentiment scores from the Alexandria and Common Crawl News datasets are 0.020 ± 0.022 and 0.019 ± 0.023, respectively. This means that, on average, the portfolios formed on the Alexandria and Common Crawl News sentiment scores have fewer than one company in common.
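To see why Jaccard indices of about 0.02 correspond to an overlap of less than one company, the following sketch computes the index for two 20-stock legs that share exactly one name. The function name `jaccard`, the convention of returning zero for two empty sets and the integer stand-ins for tickers are assumptions for this example.

```python
def jaccard(a, b):
    """Jaccard index |A ∩ B| / |A ∪ B| of two collections of tickers."""
    a, b = set(a), set(b)
    union = a | b
    # Convention for this sketch: two empty sets have similarity 0.
    return len(a & b) / len(union) if union else 0.0


# Two 20-name legs (integers stand in for tickers) sharing a single name.
leg_one = range(0, 20)
leg_two = range(19, 39)
one_shared = jaccard(leg_one, leg_two)  # 1 shared name out of 39 distinct names
```

A single shared name already gives an index of 1/39, roughly 0.026, which is above the reported averages of 0.020 and 0.019.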

We conclude that there is valuable information present in both datasets to predict future returns. However, the information in the two datasets is different (see electronic supplementary material, figure S3) and the Common Crawl News dataset provides complementary information to that of the Alexandria dataset (see electronic supplementary material, Discussion S1). There are two main reasons why the datasets differ: (i) they use different news sources and (ii) the computed sentiment scores are determined from different models. Alexandria relies on subscription-based financial news, whereas Common Crawl only accesses publicly available sources. Alexandria deploys a proprietary ML approach to compute sentiment scores for each news article, whereas we use the SESTM model to determine sentiment scores for the news articles from the Common Crawl News dataset.

4. Discussion

Processing roughly 400 million articles from the Common Crawl News data comes with many non-trivial engineering challenges, including parsing the different HTML formats used on the websites, identifying and removing duplicate articles, and aligning each article and financial return to the corresponding company. The SESTM sentiment model was chosen for its balance of complexity, interpretability and theoretical foundations in supervised learning and topic modelling in NLP (see electronic supplementary material, Discussion S3 for details). In the electronic supplementary material, table S2 and figure S2, we explore the effects of deep learning models for sentiment extraction, using the pre-trained [67] bidirectional encoder representations from transformers model (BERT) [68]. It is important to emphasize that the focus of this paper is not on what the best sentiment model is, but rather on the analysis of the interaction of news from the World Wide Web and the financial market as a prototype of an efficient ‘information absorbing’ system. By analysing the time series of sentiment scores and price returns, we find evidence of statistically significant transfer of information on the intraday level over the period from January 2018 to February 2020. In this study, we did not analyse possible confounding effects [69,70] between multivariate time series of sentiment and stock price returns, as we were not focused on causal inference [71,72] but on bivariate transfer of information from news to the corresponding stocks. The multivariate case of transfer entropy can be further extended with partial transfer entropy [69,73] in future work. Using a simple sentiment-based trading strategy purely as an econometric tool, and not as a realistic and implementable trading strategy, we find that our sentiment signals from public news carry both economic value and complementary information compared with non-public and commercial news data providers. Our analysis supports the view that public news contributes to information dissemination and price discovery at different time-scales during a single trading day.

Acknowledgements

F.F., M.J. and B.P. thank Prof. Zhang Ce and Prof. Andreas Krause for their helpful comments and AWS credits during the Data Science Lab course.

Footnotes

This version of the dataset is available at https://commoncrawl.org/2016/10/news-dataset-available.

We omitted the domain www.nbonews.com. While a frequently occurring source, it is no longer accessible and we were unable to verify its legitimacy. We provide summaries that include nbonews.com in the electronic supplementary material, figure S1.

The period from August 2016 to December 2017 is used as a ‘warm-up’ period for the sentiment model. For the exact fitting procedure, see the electronic supplementary material, Methods S2.

A long position refers to the investor having bought, and therefore owning, shares. A short position refers to the investor having borrowed shares and sold them on the open market, expecting to buy them back later at a lower price.

Specifically, denoting the current trading day by t, we use news from 9.30 on day t − 1 to 9.00 on day t for the Day 1 portfolio, from 9.30 on day t to 9.00 on day t + 1 for the Day 0 portfolio and from 9.30 on day t + 1 to 9.00 on day t + 2 for the Day −1 portfolio. For all portfolios, we enter the market at 9.30 on day t and hold our positions until 9.30 on day t + 1.
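The windows described in this footnote can be written down as a small helper. The sketch below is only illustrative: the names `news_window` and `WINDOW_OFFSETS` are hypothetical, days are treated as consecutive calendar days (weekends and exchange holidays are ignored), and times are naive local times with no time zone handling.

```python
from datetime import date, datetime, time, timedelta

WINDOW_START = time(9, 30)  # start of each news window
WINDOW_END = time(9, 0)     # end of each news window, on the following day

# Offset (in days, relative to trading day t) at which each news window starts.
WINDOW_OFFSETS = {"Day 1": -1, "Day 0": 0, "Day -1": 1}


def news_window(trading_day, portfolio):
    """Return (start, end) of the news window for the given portfolio label."""
    offset = WINDOW_OFFSETS[portfolio]
    start = datetime.combine(trading_day + timedelta(days=offset), WINDOW_START)
    end = datetime.combine(trading_day + timedelta(days=offset + 1), WINDOW_END)
    return start, end


def holding_period(trading_day):
    """Positions are entered at 9.30 on day t and held until 9.30 on day t + 1."""
    entry = datetime.combine(trading_day, WINDOW_START)
    return entry, entry + timedelta(days=1)


t = date(2019, 3, 5)  # an arbitrary example day
for label in WINDOW_OFFSETS:
    start, end = news_window(t, label)
    print(f"{label}: news from {start} to {end}")
print("holding period:", holding_period(t))
```

Under these conventions, the Day 1 window closes before the position is entered, whereas the Day 0 and Day −1 windows cover news arriving after entry.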

The Jaccard index, a measure of the similarity between two discrete sets A and B, is defined as $J(A, B) = |A \cap B| / |A \cup B|$. It takes values in the range [0, 1]. The higher the index, the greater the similarity between the two sets.
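As a brief illustration, the Jaccard index is the size of the intersection of two sets divided by the size of their union. In the sketch below, the function name `jaccard_index`, the convention of returning 1.0 for two empty sets and the example ticker sets are assumptions made for the example and are not taken from the study.

```python
def jaccard_index(a, b):
    """Jaccard index |A ∩ B| / |A ∪ B| of two collections, treated as sets."""
    a, b = set(a), set(b)
    union = a | b
    if not union:
        # Convention assumed here: two empty sets are treated as identical.
        return 1.0
    return len(a & b) / len(union)


# Hypothetical example: two sets of tickers sharing two of four distinct names.
set_a = {"AAPL", "MSFT", "XOM"}
set_b = {"MSFT", "XOM", "JPM"}
print(jaccard_index(set_a, set_b))  # 2 shared / 4 distinct = 0.5
```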

Contributor Information

Nino Antulov-Fantulin, Email: anino@ethz.ch.

Petter N. Kolm, Email: petter.kolm@nyu.edu.

Data accessibility

Data and relevant code for this research work are stored in GitHub: https://github.com/ffaltings/news_and_markets/tree/v0.1 and have been archived within the Zenodo repository: https://doi.org/10.5281/zenodo.5013220.

Authors' contributions

F.F., M.J. and B.P. contributed equally to the work. All authors contributed to the writing and editing of the manuscript. P.N.K. and N.A.-F. designed and supervised this project. F.F., M.J. and B.P. processed the Common Crawl News data. All authors contributed to the modelling and analysis.

Competing interests

We declare we have no competing interests.

Funding

N.A.-F. acknowledges financial support from SoBigData++ through Grant Agreement no. 871042. M.J. acknowledges financial support from Public Scholarship and Development Fund of the Republic of Slovenia.

References

- 1. Bachelier L. 1900. Théorie de la spéculation. Annales scientifiques de l’École Normale Supérieure 17, 21–86.

- 2. Mandelbrot B. 1963. The variation of certain speculative prices. J. Bus. 36, 394–419. (doi:10.1086/294632)

- 3. Jarrow R, Protter P. 2004. A short history of stochastic integration and mathematical finance: the early years, 1880–1970. In A festschrift for Herman Rubin (ed. A DasGupta), pp. 75–91. Beachwood, OH: Institute of Mathematical Statistics.

- 4. Fama EF. 1970. Efficient capital markets: a review of theory and empirical work. J. Finance 25, 383–417. (doi:10.2307/2325486)

- 5. Clark PK. 1973. A subordinated stochastic process model with finite variance for speculative prices. Econometrica 41, 135–155. (doi:10.2307/1913889)

- 6. Jennings RH, Starks LT, Fellingham JC. 1981. An equilibrium model of asset trading with sequential information arrival. J. Finance 36, 143–161. (doi:10.1111/j.1540-6261.1981.tb03540.x)

- 7. Jorion P. 1988. On jump processes in the foreign exchange and stock markets. Rev. Financ. Stud. 1, 427–445. (doi:10.1093/rfs/1.4.427)

- 8. Stanley H, Mantegna R. 2000. An introduction to econophysics. Cambridge, UK: Cambridge University Press.

- 9. Chan WS. 2003. Stock price reaction to news and no-news: drift and reversal after headlines. J. Financ. Econ. 70, 223–260. (doi:10.1016/S0304-405X(03)00146-6)

- 10. Maheu JM, McCurdy TH. 2004. News arrival, jump dynamics, and volatility components for individual stock returns. J. Finance 59, 755–793. (doi:10.1111/j.1540-6261.2004.00648.x)

- 11. Vega C. 2006. Stock price reaction to public and private information. J. Financ. Econ. 82, 103–133. (doi:10.1016/j.jfineco.2005.07.011)

- 12. Tetlock PC. 2007. Giving content to investor sentiment: the role of media in the stock market. J. Finance 62, 1139–1168. (doi:10.1111/j.1540-6261.2007.01232.x)

- 13. Groß-Klußmann A, Hautsch N. 2011. When machines read the news: using automated text analytics to quantify high frequency news-implied market reactions. J. Empir. Finance 18, 321–340. (doi:10.1016/j.jempfin.2010.11.009)

- 14. Birz G, Lott JR Jr. 2011. The effect of macroeconomic news on stock returns: new evidence from newspaper coverage. J. Bank. Finance 35, 2791–2800. (doi:10.1016/j.jbankfin.2011.03.006)

- 15. Vlastakis N, Markellos RN. 2012. Information demand and stock market volatility. J. Bank. Finance 36, 1808–1821. (doi:10.1016/j.jbankfin.2012.02.007)

- 16. Engelberg JE, Reed AV, Ringgenberg MC. 2012. How are shorts informed? Short sellers, news, and information processing. J. Financ. Econ. 105, 260–278. (doi:10.1016/j.jfineco.2012.03.001)

- 17. Lillo F, Miccichè S, Tumminello M, Piilo J, Mantegna RN. 2015. How news affects the trading behaviour of different categories of investors in a financial market. Quant. Finance 15, 213–229. (doi:10.1080/14697688.2014.931593)

- 18. Goodfellow I, Bengio Y, Courville A. 2016. Deep learning. New York, NY: MIT Press.

- 19. Newman ME. 2011. Complex systems: a survey. (http://arxiv.org/abs/1112.1440)

- 20. Schreiber T. 2000. Measuring information transfer. Phys. Rev. Lett. 85, 461–464. (doi:10.1103/PhysRevLett.85.461)

- 21. Barnett L, Barrett AB, Seth AK. 2009. Granger causality and transfer entropy are equivalent for Gaussian variables. Phys. Rev. Lett. 103, 238701. (doi:10.1103/PhysRevLett.103.238701)

- 22. Jizba P, Kleinert H, Shefaat M. 2012. Rényi’s information transfer between financial time series. Physica A 391, 2971–2989. (doi:10.1016/j.physa.2011.12.064)

- 23. Fang B, Zhang P. 2016. Big data in finance. In Big data concepts, theories, and applications (eds S Yu, S Guo), pp. 391–412. Berlin, Germany: Springer.

- 24. Ranco G, Bordino I, Bormetti G, Caldarelli G, Lillo F, Treccani M. 2016. Coupling news sentiment with web browsing data improves prediction of intra-day price dynamics. PLoS ONE 11, e0146576. (doi:10.1371/journal.pone.0146576)

- 25. Heiberger RH. 2015. Collective attention and stock prices: evidence from Google trends data on Standard and Poor’s 100. PLoS ONE 10, e0135311. (doi:10.1371/journal.pone.0135311)

- 26. Bordino I, Kourtellis N, Laptev N, Billawala Y. 2014. Stock trade volume prediction with yahoo finance user browsing behavior. In 2014 IEEE 30th Int. Conf. on Data Engineering, Chicago, IL, 31 March, pp. 1168–1173. New York, NY: IEEE.

- 27. Piškorec M, Antulov-Fantulin N, Novak PK, Mozetič I, Grčar M, Vodenska I, Šmuc T. 2014. Cohesiveness in financial news and its relation to market volatility. Sci. Rep. 4, 5038. (doi:10.1038/srep05038)

- 28. Zhang Y, Feng L, Jin X, Shen D, Xiong X, Zhang W. 2014. Internet information arrival and volatility of SME price index. Physica A 399, 70–74. (doi:10.1016/j.physa.2013.12.034)

- 29. Curme C, Stanley HE, Vodenska I. 2015. Coupled network approach to predictability of financial market returns and news sentiments. Int. J. Theor. Appl. Finance 18, 1550043. (doi:10.1142/S0219024915500430)

- 30. Alanyali M, Moat HS, Preis T. 2013. Quantifying the relationship between financial news and the stock market. Sci. Rep. 3, 3578. (doi:10.1038/srep03578)

- 31. Dos Rieis JCS, de Souza FB, de Melo VPOS, Prates RO, Kwak H, An J. 2015. Breaking the news: first impressions matter on online news. In 9th Int. AAAI Conf. on Web and Social Media, Oxford, UK, 21 April.

- 32. Yang SY, Mo SYK, Liu A, Kirilenko AA. 2017. Genetic programming optimization for a sentiment feedback strength based trading strategy. Neurocomputing 264, 29–41. (doi:10.1016/j.neucom.2016.10.103)

- 33. Ruiz-Martínez JM et al. 2012. Semantic-based sentiment analysis in financial news. In Proc. of the 1st Int. Workshop on Finance and Economics on the Semantic Web, Heraklion, Greece, 27–28 May, pp. 38–51. Extended Semantic Web Conference.

- 34. Wang C-J, Tsai M-F, Liu T, Chang C-T. 2013. Financial sentiment analysis for risk prediction. In Proc. of the 6th Int. Joint Conf. on Natural Language Processing, Nagoya, Japan, October, pp. 802–808. Asian Federation of Natural Language Processing.

- 35. Schumaker RP, Chen H. 2009. Textual analysis of stock market prediction using breaking financial news: the AZFin text system. ACM Trans. Inf. Syst. (TOIS) 27, 1–19. (doi:10.1145/1462198.1462204)

- 36. Amin SA, Rai DS. 2019. Sentiment analysis and stock market prediction—using news to predict stock markets. J. Artif. Intell. Res. Adv. 6, 17–24.

- 37. Hisano R, Sornette D, Mizuno T, Ohnishi T, Watanabe T. 2013. High quality topic extraction from business news explains abnormal financial market volatility. PLoS ONE 8, e64846. (doi:10.1371/journal.pone.0064846)

- 38. Ding X, Zhang Y, Liu T, Duan J. 2015. Deep learning for event-driven stock prediction. In 24th Int. Joint Conf. on Artificial Intelligence, Buenos Aires, Argentina, 25–31 July. AAAI Press.

- 39. Luss R, d’Aspremont A. 2015. Predicting abnormal returns from news using text classification. Quant. Finance 15, 999–1012. (doi:10.1080/14697688.2012.672762)

- 40. Ranco G, Aleksovski D, Caldarelli G, Grčar M, Mozetič I. 2015. The effects of Twitter sentiment on stock price returns. PLoS ONE 10, e0138441. (doi:10.1371/journal.pone.0138441)

- 41. Rao T, Srivastava S. 2012. Analyzing stock market movements using Twitter sentiment analysis. In Proc. of the 2012 Int. Conf. on Advances in Social Networks Analysis and Mining (ASONAM 2012), ASONAM ’12, (USA), Istanbul, Turkey, August, pp. 119–123. Washington, DC: IEEE Computer Society.

- 42. Mao H, Counts S, Bollen J. 2011. Predicting financial markets: comparing survey, news, Twitter and search engine data. (http://arxiv.org/abs/1112.1051)

- 43. Zheludev I, Smith R, Aste T. 2014. When can social media lead financial markets? Sci. Rep. 4, 4213. (doi:10.1038/srep04213)

- 44. Broadstock DC, Zhang D. 2019. Social-media and intraday stock returns: the pricing power of sentiment. Finance Res. Lett. 30, 116–123. (doi:10.1016/j.frl.2019.03.030)

- 45. Bordino I, Battiston S, Caldarelli G, Cristelli M, Ukkonen A, Weber I. 2012. Web search queries can predict stock market volumes. PLoS ONE 7, e40014. (doi:10.1371/journal.pone.0040014)

- 46. Preis T, Moat HS, Stanley HE. 2013. Quantifying trading behavior in financial markets using google trends. Sci. Rep. 3, 1684. (doi:10.1038/srep01684)

- 47. Zhang W, Shen D, Zhang Y, Xiong X. 2013. Open source information, investor attention, and asset pricing. Econ. Model. 33, 613–619. (doi:10.1016/j.econmod.2013.03.018)

- 48. De Choudhury M, Sundaram H, John A, Seligmann DD. 2008. Can blog communication dynamics be correlated with stock market activity? In Proc. of the 19th ACM Conf. on Hypertext and Hypermedia, Pittsburgh, PA, 19–21 June, pp. 55–60. New York, NY: Association for Computing Machinery.

- 49. Ruiz EJ, Hristidis V, Castillo C, Gionis A, Jaimes A. 2012. Correlating financial time series with micro-blogging activity. In Proc. of the 5th ACM Int. Conf. on Web Search and Data Mining, pp. 513–522.

- 50. Kolanovic M, Krishnamachari RT. 2017. Big data and AI strategies: machine learning and alternative data approach to investing (ed. M Kolanovic). JP Morgan Global Quantitative & Derivatives Strategy Report. New York, NY: JP Morgan.

- 51. Brown TB et al. 2020. Language models are few-shot learners. (http://arxiv.org/abs/2005.14165)

- 52. Wang S, Zhang Z. 2019. Construction of financial news sentiment indices using deep neural networks. Available at SSRN 3477690.

- 53. Blei DM, Ng AY, Jordan MI. 2003. Latent Dirichlet allocation. J. Mach. Learn. Res. 3, 993–1022.

- 54. Ke Z, Kelly BT, Xiu D. 2019. Predicting returns with text data. Available at SSRN: https://ssrn.com/abstract=3489226.

- 55. Shannon CE. 1948. A mathematical theory of communication. Bell Syst. Tech. J. 27, 379–423. (doi:10.1002/j.1538-7305.1948.tb01338.x)

- 56. Dimpfl T, Peter FJ. 2013. Using transfer entropy to measure information flows between financial markets. Stud. Nonlinear Dyn. Econom. 17, 85–102. (doi:10.1515/snde-2012-0044)

- 57. Hlavackova-Schindler K, Palus M, Vejmelka M, Bhattacharya J. 2007. Causality detection based on information-theoretic approaches in time series analysis. Phys. Rep. 441, 1–46. (doi:10.1016/j.physrep.2006.12.004)

- 58. Blei DM, Ng AY, Jordan MI. 2003. Latent Dirichlet allocation. J. Mach. Learn. Res. 3, 993–1022.

- 59. Mikolov T, Sutskever I, Chen K, Corrado G, Dean J. 2013. Distributed representations of words and phrases and their compositionality. In Proc. of the 26th Int. Conf. on Neural Information Processing Systems – vol. 2, NIPS’13, Red Hook, NY, USA, pp. 3111–3119. Curran Associates Inc.

- 60. Pennington J, Socher R, Manning C. 2014. GloVe: global vectors for word representation. In Proc. of the 2014 Conf. on Empirical Methods in Natural Language Processing (EMNLP), Doha, Qatar, pp. 1532–1543. Association for Computational Linguistics.

- 61. MacKinnon JG. 1994. Approximate asymptotic distribution functions for unit-root and cointegration tests. J. Bus. Econ. Stat. 12, 167–176. (doi:10.1080/07350015.1994.10510005)

- 62. MacKinnon JG. 2010. Critical values for cointegration tests. Technical Report, Queen’s Economics Department Working Paper.

- 63. Simon B, Thomas D, Franziska JP, David JZ. 2019. Rtransferentropy–quantifying information flow between different time series using effective transfer entropy. SoftwareX 10, 1–9. (doi:10.1016/j.softx.2019.100265)

- 64. Marschinski R, Kantz H. 2002. Analysing the information flow between financial time series. Eur. Phys. J. B-Condens. Matter Complex Syst. 30, 275–281. (doi:10.1140/epjb/e2002-00379-2)

- 65. Müller UA, Dacorogna MM, Davé RD, Olsen RB, Pictet OV, Von Weizsäcker JE. 1997. Volatilities of different time resolutions—analyzing the dynamics of market components. J. Empir. Finance 4, 213–239. (doi:10.1016/S0927-5398(97)00007-8)

- 66. Corsi F. 2009. A simple approximate long-memory model of realized volatility. J. Financ. Econom. 7, 174–196. (doi:10.1093/jjfinec/nbp001)

- 67. Araci D. 2019. FinBERT: financial sentiment analysis with pre-trained language models. (http://arxiv.org/abs/1908.10063)

- 68. Devlin J, Chang M-W, Lee K, Toutanova K. 2018. BERT: pre-training of deep bidirectional transformers for language understanding. (http://arxiv.org/abs/1810.04805)

- 69. Verdes PF. 2005. Assessing causality from multivariate time series. Phys. Rev. E 72, 026222. (doi:10.1103/PhysRevE.72.026222)

- 70. Vakorin VA, Krakovska OA, McIntosh AR. 2009. Confounding effects of indirect connections on causality estimation. J. Neurosci. Methods 184, 152–160. (doi:10.1016/j.jneumeth.2009.07.014)

- 71. Sugihara G, May R, Ye H, Hsieh C-h, Deyle E, Fogarty M, Munch S. 2012. Detecting causality in complex ecosystems. Science 338, 496–500. (doi:10.1126/science.1227079)

- 72. Yang AC, Peng C-K, Huang NE. 2018. Causal decomposition in the mutual causation system. Nat. Commun. 9, 1–10. (doi:10.1038/s41467-017-02088-w)

- 73. Kugiumtzis D. 2013. Partial transfer entropy on rank vectors. Eur. Phys. J. Spec. Top. 222, 401–420. (doi:10.1140/epjst/e2013-01849-4)
