Abstract

Hand gesture recognition is viewed as a significant field of exploration in computer vision with assorted applications in the human–computer communication (HCI) community. The significant utilization of gesture recognition covers spaces like sign language, medical assistance and virtual reality–augmented reality and so on. The underlying undertaking of a hand gesture-based HCI framework is to acquire raw data which can be accomplished fundamentally by two methodologies: sensor based and vision based. The sensor-based methodology requires the utilization of instruments or the sensors to be genuinely joined to the arm/hand of the user to extract information. While vision-based plans require the obtaining of pictures or recordings of the hand gestures through a still/video camera. Here, we will essentially discuss vision-based hand gesture recognition with a little prologue to sensor-based data obtaining strategies. This paper overviews the primary methodologies in vision-based hand gesture recognition for HCI. Major topics include different types of gestures, gesture acquisition systems, major problems of the gesture recognition system, steps in gesture recognition like acquisition, detection and pre-processing, representation and feature extraction, and recognition. Here, we have provided an elaborated list of databases, and also discussed the recent advances and applications of hand gesture-based systems. A detailed discussion is provided on feature extraction and major classifiers in current use including deep learning techniques. Special attention is given to classify the schemes/approaches at various stages of the gesture recognition system for a better understanding of the topic to facilitate further research in this area.

Keywords: Human–computer interaction (HCI), Vision-based gesture recognition (VGR), Static and dynamic gestures, Deep learning methods

Introduction

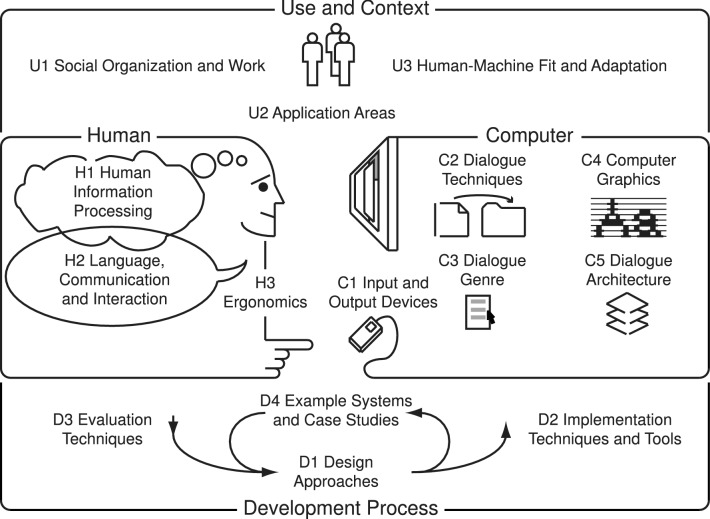

In this period of innovation, where we are profound into the information age, technological progression has arrived at such a point that nearly everybody in each nook and corner of the world independent of any discipline, has interacted with computers somehow or the other. However, in general, a typical user ought not to need to secure computer education to utilize computers for basic undertakings in regular day-to-day life. Human–computer interaction (HCI) is a field of study which plans to encourage the communication of clients, regardless of whether specialists or fledglings, with computers in a simple way. It improves user experience by distinguishing factors that help to diminish the expectation to learn and adapt for new users and furthermore gives arrangements like console easy routes and other navigational guides for common users. In designing an HCI system, three main factors should be considered: functionality, usability and emotion [73]. Functionality denotes actions or services a system avails the user. However, a system’s functionality is only useful if the user can exploit it effectively and efficiently. The usability of a system denotes the extent to which a system can be used effectively and efficiently to fulfill user requirements. A proper balance between functionality and usability results in good system design. Taking account of emotion in HCI includes designing interfaces that are pleasurable to use from a physiological, psychological, social, and aesthetic perspective. Considering all three factors, an interface should be designed to fit optimally between the user, device, and required services. Figure 1 illustrates this concept.

Fig. 1.

Overview of human–computer interaction [73]

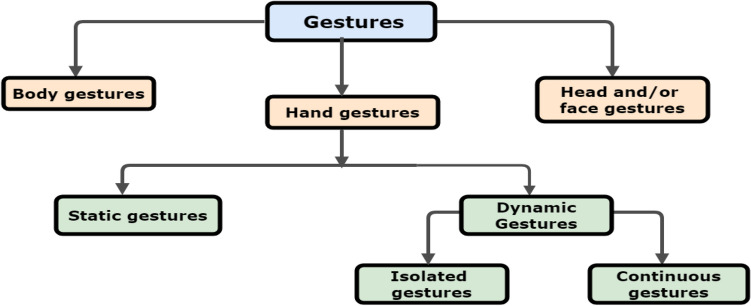

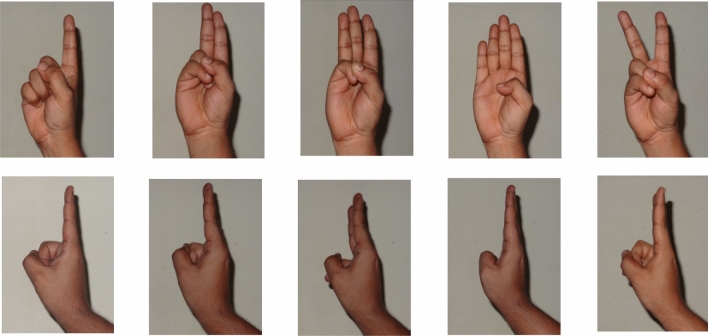

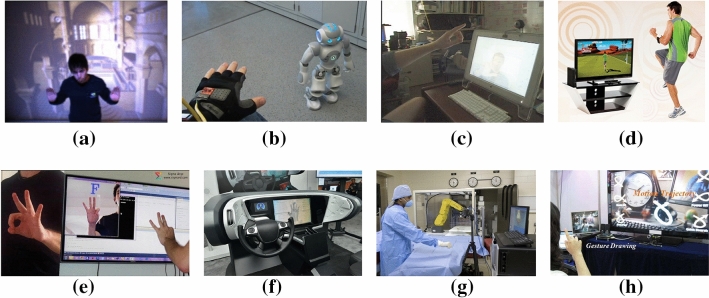

In recent years, significant effort has been devoted to body motion analysis and gesture recognition. With the increased interest in human–computer interaction (HCI), research related to gesture recognition has grown rapidly. Along with speech, they are the obvious choice for natural interfacing between a human and a computer. Human gestures constitute a common and natural means for nonverbal communication. A gesture-based HCI system enables a person to input commands using natural movements of the hand, head, and other parts of the body [171] (Fig. 2). And since the hand is the most widely used body part for gesturing apart from face [93], hand gesture recognition from visual images forms an important part of this research. Generally, hand gestures are classified as static gestures or simply postures and dynamic or trajectory-based gestures. Again, dynamic or trajectory-based gestures can be isolated or continuous.

Fig. 2.

Classification of different gestures based on used body-part

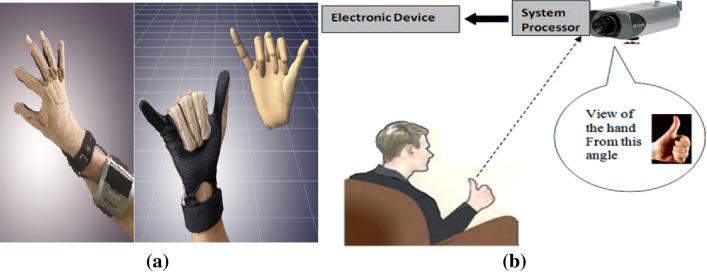

Gesture Acquisition

Before going into more depth, we want to first see how to acquire data or information for hand gesture recognition. The task of acquiring raw data for hand gesture-based HCI systems can be achieved mainly by two approaches [36]: sensor based and vision based (Fig. 3).

Fig. 3.

Human–computer interaction using: a CyberGlove-II (picture courtesy: https://www.cyberglovesystems.com/products/cyberglove-II/photos-video), b vision-based system

Sensor-based approaches require the use of sensors or instruments physically attached to the arm/hand of the user to capture data consisting of position, motion and trajectories of fingers and hand. Sensor-based methods are mainly as follows:

Glove-based approach measures position, acceleration, degree of freedom and bending of the hand and fingers. Glove-based sensors generally constitute flex sensors, gyroscope, accelerometer, etc.

Electromyography (EMG) measures human muscle’s electrical pulses and decode the bio-signal to detect finger movements.

WiFi and radar use radio-waves, broad-beam radar or spectrogram to detect the changes in signal strength.

Others utilize ultrasonic, mechanical, electromagnetic and other haptic technologies.

Vision-based approaches require the acquisition of images or videos of the hand gestures through video cameras.

Single camera—it includes webcams, different types of video cameras and smart-phone cameras.

Stereo-camera and multiple camera-based systems—a pair of standard color video or still cameras capture two simultaneous images to give depth measurement. Multiple monocular cameras can better capture the 3D structure of an object.

Light coding techniques—projection of light to capture the 3D structure of an object. Such devices include PrimeSense, Microsoft Kinect, Creative Senz-3D, Leap Motion Sensor, etc.

Invasive techniques—body markers such as hand color, wrist bands, and finger marker. But the term vision based is generally used for capturing images or videos of the bare hand without any glove and/or marker. The sensor-based approach reduces the need for pre-processing and segmentation stage, which is essential to classical vision-based gesture recognition systems.

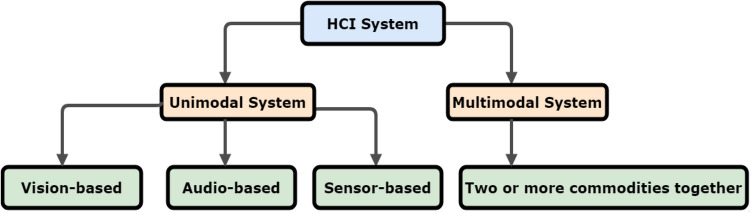

HCI Systems Architecture

The architecture of HCI systems can be broadly categorized into two groups based on their number and diversity of inputs and outputs: unimodal HCI systems and multimodal HCI systems [83] (Fig. 4).

Unimodal HCI systems Unimodal systems can be (a) vision based (e.g., body movement tracking [147], gesture recognition [146], facial expression recognition [115, 189], gaze detection [206], etc.), (b) audio based (e.g., auditory emotion recognition [47], speaker recognition [105], speech recognition [125], etc.), or (c) based on different types of sensors [113].

Multimodal HCI systems Individuals for the most part utilize different modalities during human to human correspondence. Subsequently, to survey a user’s expectation or conduct extensively, HCI frameworks ought to likewise incorporate data from numerous modalities [162]. Multimodal interfaces can be arranged utilizing blends of data sources, for example, gesture and speech [161] or facial posture and speech [86] and so forth. Some of the major applications of multimodal systems are assistance for people with disabilities [106], driver monitoring [204], e-commerce [9], intelligent games [188], intelligent homes and offices [144], and smart video conferencing [142].

Fig. 4.

General taxonomy of HCI system based on input channels

Major Problems

It is an essential ability for computers to perceive the gestures of the hand visually for the future advancement of vision-based HCI. Static gesture recognition or pose estimation of the isolated hand, in constrained conditions, is roughly a solved problem to quite an extent. Notwithstanding, there are as yet numerous aspects of dynamic hand gestures that must be addressed, and it is an interdisciplinary challenge mainly due to three difficulties:

Dynamic hand gestures vary spatio-temporally with assorted and different implications;

The human hand has a complex non-unbending design making it hard to perceive; and

There are as yet numerous difficulties in computer vision itself making it a poorly presented problem.

A gesture recognition system depends on certain subsystems associated in arrangement. In view of the arrangement of subsystems, the general exhibition of the framework is reliant on the precision of every subsystem. Along these lines, generally execution is profoundly influenced by a subsystem that is a “feeble connection”. All the gesture-based applications are dependent on the ability of the device to read gestures efficiently and correctly from a stream of continuous gestures. To develop human–computer interfaces using the human hand has motivated researchers for continuous hand gesture recognition. Two major challenges present in the process of continuous hand gesture recognition are—constraints related to segmentation and problems in spotting the hand gestures perfectly in a continuous stream of gestures. But there are many other challenges apart from these which we will discuss now. More on constraints in hand gesture recognition can be found in [32] by the same authors.

- Challenges in segmentation Exact segmentation of the hand or the gesturing body part from the caught recordings or pictures still remains a challenge in computer vision for some limitations like illumination variation, background complexity, and occlusion.

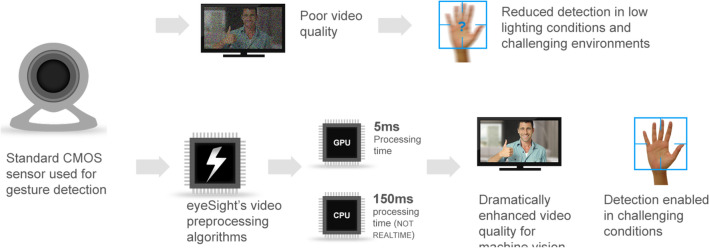

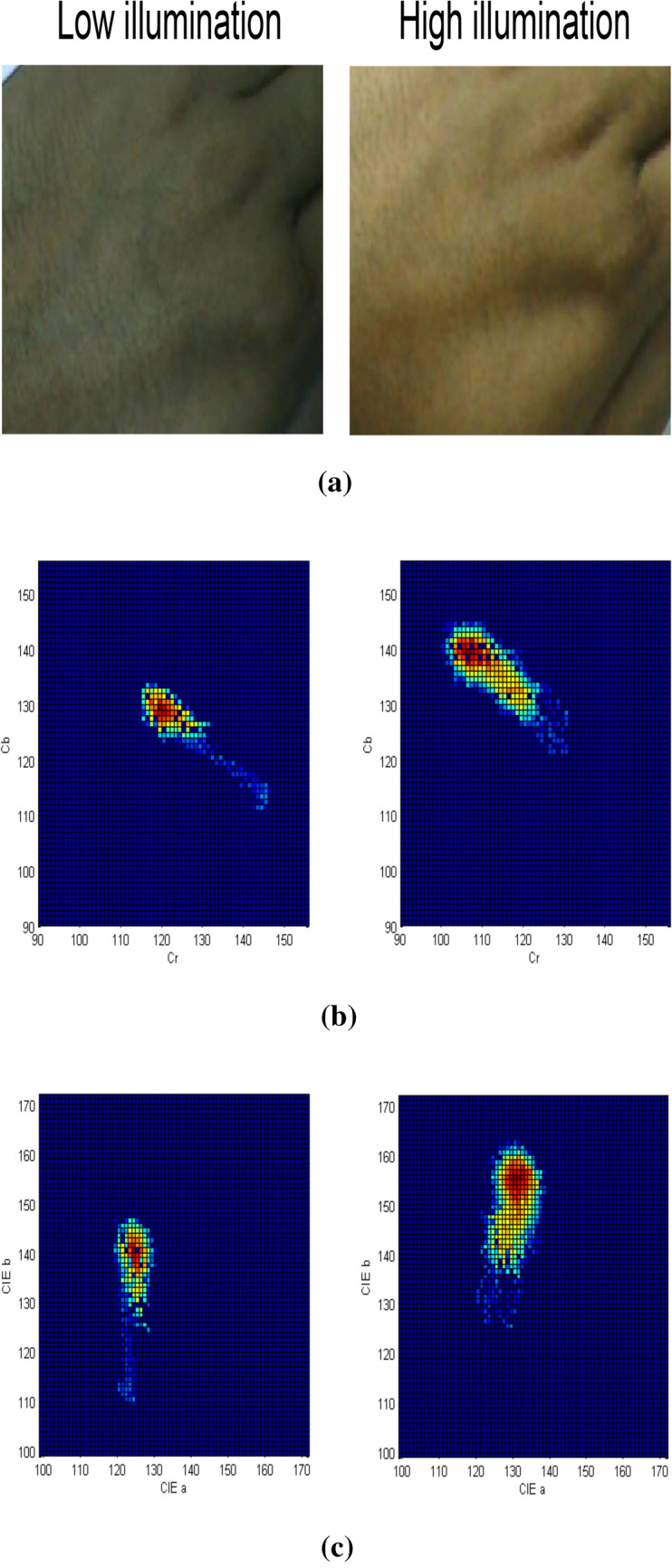

- Illumination variation The precision of skin color segmentation techniques is generally influenced by illumination variation. Because of light changes, the chrominance properties of the skin tones may change, and the skin color will appear different from the original color. Many methods use luminance invariant color spaces to accommodate varying illuminations [27, 66, 89, 90, 173]. However, these methods are useful only for a very narrow range of illumination changes. Moritz et al. found that the skin reflectance locus and the illuminant locus are directly related, which means that the perceived color is not independent of illumination changes [209]. Sigal et al. used dynamic histogram segmentation technique to counter illumination changes [199, 200]. In the dynamic histogram method, a second-order Markov model is used to predict the histogram’s time-evolving nature. The method is applicable only for a set of images with predefined skin-probability pixel values. This method is very promising for videos with smooth illumination changes but fails for abrupt illumination changes. Also, this method is applicable to the time progression of illumination changes. In many cases where the illumination change is discrete, and input data is a set of skin samples obtained under randomly changed illumination conditions, this method performs poorly. Stern et al. used color space switching to track the human face under varying illumination [208]. King et al. used RGB color space and normalized it, and then converted it to YCbCr space. Finally, the Cb-Cr components are chosen to represent the skin pixel to reduce illumination effects. Kuiaski et al. performed a comparative study of the illumination dependency over skin-color segmentation methods [111]. They used naïve Bayesian classifier and histogram-based classification [87] over different skin samples obtained under four different illumination conditions. It was observed that dropping the illumination component of a color space significantly reduces the illumination vulnerability of segmentation methods as compared with methods based on standard RGB color space. However, from the ROC curves obtained under different illumination conditions, it is evident that no color space is fully robust to illumination condition changes. Guoliang et al. grouped the skin-colored pixels according to their illumination component (Y) values in YCbCr color space into a finite number of illumination ranges [236]. It is evident from their analysis and previous literature review that the chrominance components are not independent of the illumination component. As shown in Fig. 5, the shape and position of the color histogram of the image change significantly due to the changes in illumination and the notion of independence can only be applied for a very narrow range of illumination changes. A Back Propagation Neural Network (BPNN) can be used to fit the data, which consists of the mean value of Cb and Cr, namely , co-variance matrix and the mean value of the interval of Y, i.e., as given below

where, , are the Cb-Cr samples belong to illumination range. Here, s are used as input and the Gaussian model are the output. The model is then used to classify the skin and non-skin pixels for a particular illumination level. Bishesh et al. used a log-chromaticity color space (LCCS) by taking the logarithm of ratios of color channels and obtained intrinsic images to reduce the effect of illumination variations in skin color segmentation [53, 100]. However, LCCS gives a correct detection rate (CDR) of and a false detection rate (FDR) of , which are not so good results. Liu et al. used face detection to get the sample skin colors and then applied a dynamic thresholding technique to update the skin color model based on a Bayesian decision framework [130]. This method is dependent on the accuracy of the detected skin pixels from the face detection method, and it may fail if the face is not detected perfectly or the detected face has a mustache, beard, spectacles, or hair falling over it. Although a color correction strategy is used to convert the colors of the frame in the absence of a face, this solution is temporary and prone to error. In [190], the authors converted RGB color-space into HSV and YCbCr color-cues to compensate illumination variation in the skin-segmentation method to segment the hand portion from the background. Biplab et al. has utilized a fusion-based picture explicit model for skin division to deal with the issue of segmentation under differing enlightenment conditions [31]. - Background complexity Another serious issue in gesture recognition is the appropriate division of skin-shaded items (e.g., hands, face) against an intricate static/dynamic background. An example of a complex background is shown in Fig. 6. Different types of complex backgrounds exist:

- Cluttered background (Static) Although the background statistics are fairly constant, the background color and texture are highly varied. This kind of background can be modeled using Gaussian mixture models (GMMs). However, to model backgrounds of increasing complexity, more Gaussians should be included in the GMM.

- Dynamic background The background color and texture change with time. Although hidden Markov models (HMMs) are often used to model signals that have a time-varying structure, unless they follow a well-defined stochastic process, their application to background modeling is computationally complex. The precision of skin division strategies is restricted because of the presence or movement of skin-colored objects behind the scenes which increment false positives.

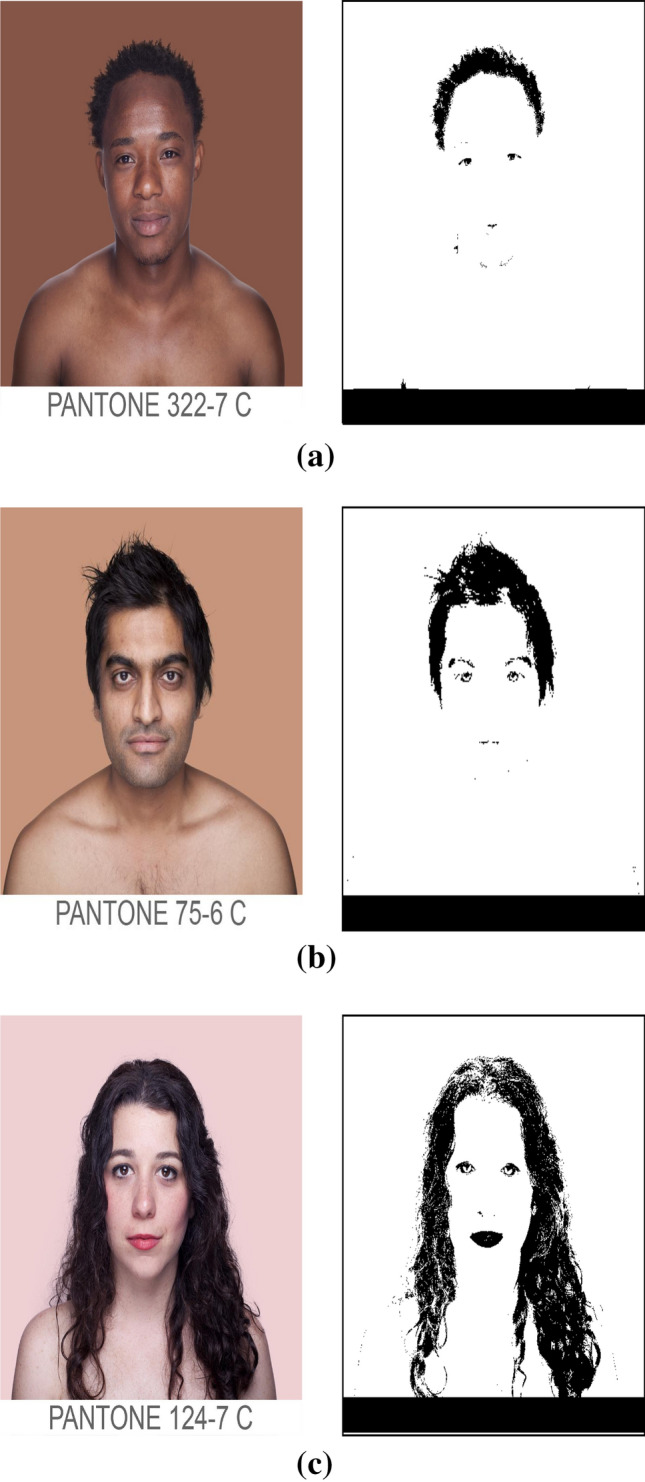

- Camouflage The background is skin-colored or contains skin-colored regions, which may abut the region of interest (e.g., the face, hands). For example, when a face appears behind a hand, this complicates hand gesture recognition, and when a hand appears behind a face, this complicates face region segmentation. These kinds of cases render it nearly impossible to segment the hand or face regions solely from pixel color information. Figure 7 shows a case of camouflage. The major problem with almost all segmentation methods based on the color space is that the feature space lacks spatial information on the objects, such as their shape.

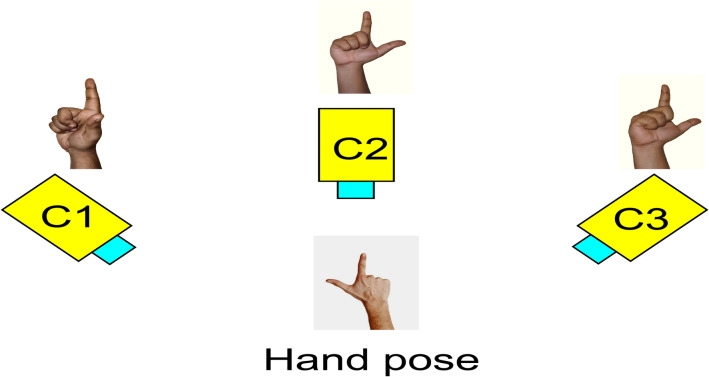

- Occlusion Another major challenge is mitigating the effects of occlusion in gesture recognition. In single-handed gestures, the hand may occlude itself apart from some other objects. The problem is more severe in two-handed gestures where one hand may occlude the other while doing the gestures. The appearance of the hand is affected by both kinds of occlusion subsequently hampering recognition of gestures. In monocular vision-based gesture recognition, the appearance of gesturing hands is view dependent. As shown in Fig. 8, different hand poses appear to be similar in a particular view of observation due to self-occlusions. To solve occlusion problems there are some possible approaches:

- Use of multiple cameras for static/dynamic gestures.

- Use of tracking-based systems for dynamic gestures.

- Use of multiple cameras + tracking-based system for dynamic gestures.

- Multiple camera-based gesture recognition Utsumi et al. captured the hand with multiple cameras, selecting for gesture recognition the camera whose the principal axis is closest to normal to the palm. The hand rotation angle is then estimated using an elliptical model of the palm [218]. Alberola et al. used a pair of cameras to construct a 3D hand model with an occlusion analysis from the stereoscopic image [6]. In this model, a label is added to each of the joints, indicating its degree of visibility from a camera’s viewpoint. The value of each joint’s label range from fully visible to fully occluded. Ogawara et al. fitted a 26-DOF kinematic model to a volumetric model of the hand, constructed from images obtained using multiple infrared cameras arranged orthogonally [158]. Gupta et al. used occlusion maps to improve body pose estimations with multiple views [63].

- Tracking-based gesture recognition Lathuiliere et al. tracked the hand in real time by wearing a dark glove marked with colored cues [120]. The pose of the hand and the postures of the fingers were reconstructed using the position of the color markers in the image. Occlusion was handled by predicting the finger positions and by validating 3D geometric visibility conditions.

- Multiple cameras with tracking-based gesture recognition Instead of using multiple cameras and hand tracking separately, a fusion-based approach using both of them may be suitable for occlusion handling. Utsumi et al. used an asynchronous multicamera tracking system for hand gesture recognition [219]. Though multiple camera-based systems are one solution for this problem, these devices are not purely accurate. View-invariant 3D models or depth measuring sensors can provide some more insight into this problem (Fig. 9).

-

Difficulties related to the articulated shape of the hand The accurate detection and segmentation of the gesturing hand are significantly affected by variations in illumination and shadows, the presence of skin-colored objects in the background, occlusion, background complexity, and different other issues. The complex articulated shape of the hand makes it further tough to model the appearance of the hand for both static and dynamic gestures. Moreover, in the case of dynamic or trajectory-based gestures, the tracking of physical movement of the hand is quite challenging due to the varied size, shape and color of the hand. Generally, it is expected that a generic gesture recognition system should be invariant to the shape, size and appearance of the gesturing body part.

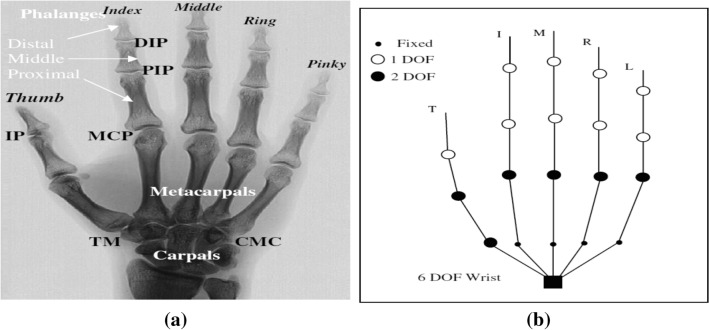

The human hand has 27 bones—14 in the fingers, 5 in the palm, and 8 in the wrist (Fig. 10a). The 9 interphalangeal (IP) joints have one degree of freedom (DOF) each for flexion and extension. The 5 metacarpophalangeal (MCP) joints have 2 DOFs each: one for flexion and extension and the other for abduction or adduction (spreading the fingers) in the palm plane. The carpometacarpal (CMC) joint of the thumb, which is also called the trapeziometacarpal (TM), has 2 DOFs along nonorthogonal and nonintersecting rotation axes [74]. The palm is assumed to be rigid. Lee et al. proposed a 27-DOF hand model, assuming that the wrist has 6 DOFs (Fig. 10b). As evident from Fig. 10, the hand is an articulated object with more than 20 DOF. Now, because of the interdependencies between the fingers, the effective number of DOF reduces to approximately six. Their estimation—in addition to the location and orientation of the hand—results in a large number of parameters to be estimated. Estimation of hand configuration is extremely difficult because of occlusion and the high degrees of freedom. Even data gloves are not able to acquire the hand state perfectly. Compared with sensors for glove-based recognition, computer vision methods are generally at a disadvantage. To get rid of these constraints, [150] has tracked air-written gestures only through finger-tip detection. But it has the limitation that the detection of sign language is not possible. For monocular vision, it is impossible to know the full state of the hand unambiguously for all hand configurations, as several joints and finger parts may be hidden from the view of the camera. Applications in vision-based interfaces need to keep these limitations in mind and focus on gestures that do not require full hand pose information. General hand detection in unconstrained settings is a largely unsolved problem. In view of this, systems often locate and track hands in images using color segmentation, motion flow, background subtraction, or a combination of these techniques.

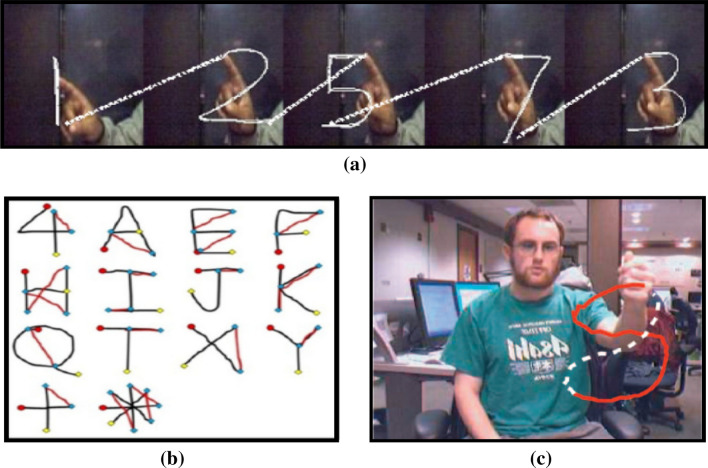

Gesture spotting problem Gesture spotting means locating the beginning and the end-points of a gesture in a nonstop stream of gestures. When gesture boundaries are resolved, the gesture can be extracted and grouped. In any case, spotting significant patterns from a stream of gestures is an exceptionally troublesome errand mainly because of two issues: segmentation ambiguity and spatiotemporal variability. For sign language recognition, the framework should uphold the natural gesturing of the user to empower unhindered collaboration with the entity. Prior to taking care of the video into the recognition framework, the non-gestural movements ought to be taken out from the video sequence since these movements regularly blend a motion grouping. Instances of non-gestural movements incorporate ”movement epenthesis” and ”gesture co-articulation” (appeared in Fig. 11). Movement epenthesis occurs between two gestures and the current gesture is affected by the preceding or the following gesture. Gesture co-articulation is an unwanted movement that occurs in the middle of performing a gesture. In some cases, a gesture could be similar to a sub-part of a longer gesture, referred to as the “sub-gesture problem” [7]. When a user tries to repeat the same gesture, spatiotemporal variations in the shape and speed of the hands will occur. The system must accommodate these variations while maintaining an accurate representation of the gestures. Though static hand gesture recognition problem [52, 59, 60, 156, 174] is almost a solved one, but to date, there are only a handful of works are there dealing with these three problems of continuous hand gesture recognition system [16–18, 95, 133, 211, 240].

- Problems related to two-handed gesture recognition The inclusion of two-handed gestures in a gesture vocabulary can make HCI more natural and expressive for the user. It can greatly increase the size of the vocabulary because of the different combinations of left and right-hand gestures. Previously proposed methods include template-based gesture recognition with motion estimation [78] and two-hand tracking with colored gloves [10]. Despite its advantages, two-handed gesture recognition faces some major difficulties:

- Computational complexity The inclusion of two-handed gestures can be computationally expensive because of their complicated nature.

- Inter-hand overlapping The hands can overlap or occlude each other, thus impeding recognition of the gestures.

- Simultaneous tracking of both hands The accurate tracking of two interacting hands in a real environment is still an unsolved problem. If the two hands are clearly separated, the problem can be solved as two instances of the single-hand tracking problem. However, if the hands interact with each other, it is no longer possible to use the same method to solve the problem because of overlapping hand surfaces [160].

-

Hand gestures with facial expressions Incorporating facial expressions into the hand gesture vocabulary can make it more expressive as it can enhance the discrimination of different gestures with similar hand movements. A major application of hand and face gesture recognition is sign language. Little work has been reported in this research direction. Von Agris et al. used facial and hand gesture features to recognize sign language automatically [2].

This approach also has the following challenges:- The simultaneous tracking of both hand and face.

- Higher computational complexity compared with the recognition of only hand gestures.

Difficulties associated with extracted features It is generally not recommended to consider all the image pixel values in a gesture video as the feature vector. This will not only be time-consuming but also it would take a great many examples to span the space variation, particularly if multiple viewing conditions and multiple users are considered. The standard approach is to compute some features from each image and concatenate these as a feature vector to the gesture model. Both the spatial and temporal movements of the hand along with its characteristics should be considered by a gesture model. No two samples of the same gesture will bring about the very same hand and arm movements or similar arrangement of visual pictures, i.e., gestures experience the ill effects of spatio-transient variety. Spatio-temporal variety exists in any event when the same user plays out a similar gesture on various occasions. Each time the user performs a gesture, the shape, position of the hand and speed of the motion normally change. Accordingly, extracted features ought to be rotation-scaling-translation (RST) invariant. Yet, different image processing strategies have their own imperatives to deliver RST-invariant features. Another limitation is that the processing of a lot of image information is tedious, and thus a real-time application might be troublesome.

Fig. 5.

Effect of illumination variations on perceived skin color: a skin color in low and high illumination conditions, b 2D color histogram in YCbCr space, and c 2D color histogram in CIE-Lab space

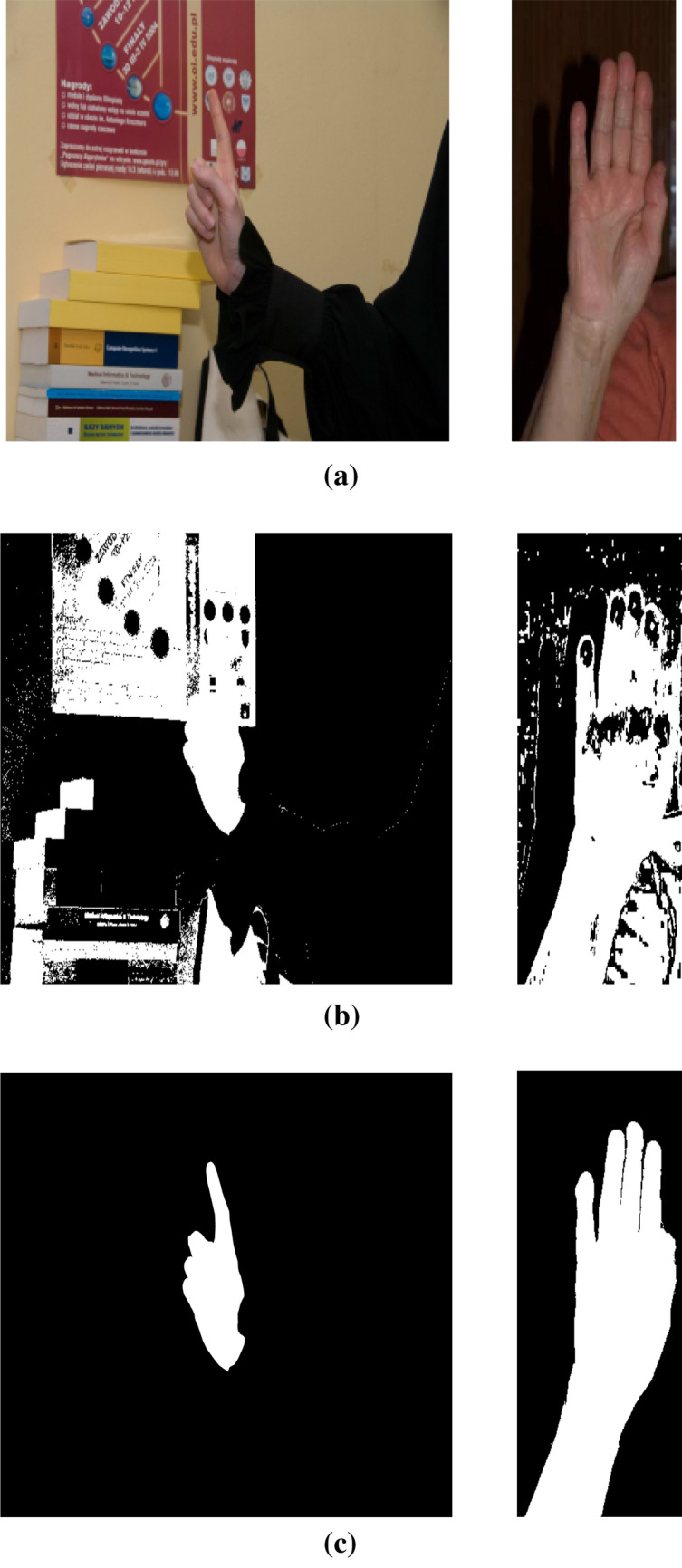

Fig. 6.

Effect of complex background on skin color segmentation: a original images, b segmentation results, and c ground truth

Fig. 7.

Effect of camouflage on skin color segmentation (left column: original image, right column: segmented image): a African, b Asian, and c Caucasian

Fig. 8.

Different hand poses and their side views

Fig. 9.

Multiple camera-based gesture recognition

Fig. 10.

Skeletal hand model: a hand anatomy [48], b the kinematic model [123]

Fig. 11.

a Movement epenthesis problem [18] b Gesture co-articulation (marked with redline) [202] c sub-gesture problem (here gesture ‘5’ is a sub-gesture of gesture ‘8’) [7]

Overview of Vision-Based Hand Gesture Recognition System

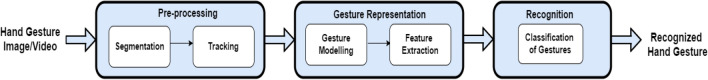

The essential part of vision-based frameworks is to identify and perceive visual signs for correspondence. A vision-based plan is more helpful than a glove-based one on account of its natural methodology. It tends to be utilized any place inside a camera’s field of view and simple to convey. The fundamental undertaking of vision-based gesture recognition is to get visual data in a specific scene and attempt to separate the vital motions. This methodology should be acted in a progression of succession, in particular, acquisition, detection and pre-processing; gesture representation and feature extraction; and recognition (Fig. 12).

Acquisition, detection and pre-processing The acquisition and detection of the gesturing body part is vital for a productive VGR framework. The procurement incorporates capturing gestures utilizing imaging gadgets. The fundamental assignment of discovery and pre-processing is essentially the segmentation of the gesturing body part from images or videos as precisely as could really be expected.

Gesture representation and feature extraction The assignment of the following subsystem in a hand gesture recognition system is to model or represent the gesture. The performance of a gestural interface is directly related to the proper representation of hand gestures. After gesture modeling, a bunch of features should be extricated for gesture recognition. Diverse sorts of features have been distinguished for addressing specific sorts of gestures [25].

Recognition The last subsystem of an recognition framework has the assignment of recognition or classification of gestures. A reasonable classifier perceives the incoming gesture parameters or features and gathers them into either predefined classes (supervised) or by their closeness (unsupervised) [146]. There are numerous classifiers utilized for both static and dynamic gestures, every one with its own benefits and constraints.

Fig. 12.

The basic architecture of a typical gesture recognition system

Acquisition, Detection and Pre-processing

Gesture acquisition involves capturing images or videos using imaging gadgets. The detection and classification of moving objects present in a scene is key research in the field of action/gesture recognition. The most important research challenges are segmentation, detection, and tracking of moving objects from a video sequence. The detection and pre-processing stage mainly deals with localizing gesturing body parts in images or videos. Since dynamic gesture analysis consists of all these subtasks, so this very portion can be subdivided into segmentation and tracking or combining both of them together. Moreover, in static gestures also segmentation is a vital step.

-

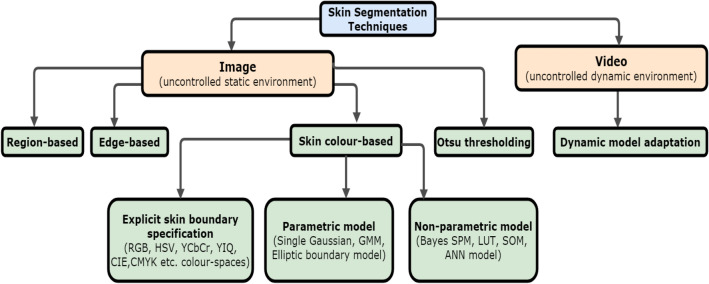

Segmentation Segmentation is the way toward partitioning an image into various distinct parts and in this way discovering the region of interest (ROI), which is hand for our situation. Precise segmentation of the hand or the body parts from the captured images actually stays a challenge for some engrossed limitations in computer vision like illumination variation, background complexity, and occlusion. A large portion of the segmentation strategies can be extensively delegated as follows (Fig. 13): (a) skin color-based segmentation, (b) region based, (c) edge based, (d) Otsu thresholding and so on. The simplest method to recognize skin districts of a picture is through an explicit boundary specification for skin tone in a particular color space, e.g., RGB [69], HSV [205], YCbCr [28] or CMYK [193]. Numerous analysts drop the luminance segment and have utilized just the chrominance segment since chrominance signals contain skin color information. This is on the grounds that the hue-separation space is less sensitive to illumination changes when contrasted with RGB shading space [190]. Also, color cues show variations in the skin color in different illumination conditions, and also skin color changes with the change in human races, and so segmentation is more constrained in the presence of skin-colored objects in the background. Occlusion also leads to many issues in the segmentation process.

Recently published works of literature show that the performance of the model-based approaches (parametric and non-parametric) is better than explicit boundary specification-based methods [97]. To improve the detection accuracy, many researchers have used parametric and non-parametric model-based approaches for skin detection. For example, Yang et al. [237] used a single multivariate Gaussian to model skin color distribution. But, skin color distribution possesses multiple co-existing modes. So, the Gaussian mixture model (GMM) [238] is more appropriate than a single Gaussian function. Lee and Yoo [124] proposed an elliptical modeling-based approach for skin detection. The elliptical modeling has less computational complexity as compared to GMM modeling. However, many true skin pixels may get rejected if the ellipse is small. Whereas if the ellipse is larger, many non-skin pixels may be detected as skin pixels. Out of different non-parametric model-based approaches for skin detection, Bayes skin probability map (Bayes SPM) [88], self-organizing map (SOM) [22], k-means clustering [154], artificial neural network (ANN) [33], support vector machine (SVM) [69], random forest [99] are noteworthy. The region-based approach involves region growing techniques, region splitting and region merging techniques. Rotem et al. [184] combined patch-based information with edge cues under a probabilistic framework. In an edge-based technique, basic edge-detecting approaches like Prewitt filter, Canny edge detector, Hough transforms are used. Otsu thresholding is a clustering-based image thresholding method that converts a gray-level image to a binary image using any edge detecting or tracking technique so that we have only two objects, i.e., one is hand and the other is background [145]. In the case of videos, all these methods can be applied with dynamic adaptation.

Fig. 13.

Different skin segmentation techniques

-

2.

Tracking Tracking can also be considered as a part of pre-processing in the hand detection process as both tracking and segmentation together help to extract the hand from the background. Despite the fact that skin segmentation is perhaps the most favored technique for segmentation or detection, still, it is not so viable for different imperatives like scene illumination variation, background complexity, and occlusion [190]. Fundamentally, when earlier information on moving objects like appearance and shape is not known, pixel-level change can, in any case, give viable motion-based cues for detecting and localizing objects. Different methodologies for moving item discovery utilizing pixel-level change can be background subtraction, inter-frame difference, or three-frame difference [241]. Stabilized background detection consistently is an expensive matter making it defenseless for long and fluctuated video groupings [241]. Aside from this, the choice of temporal distance between frames is a tricky question. It essentially relies upon the size and speed of the moving object. Despite the fact that interframe difference methods can easily detect motion, it shows terrible performance in localizing the object. The three-frame difference [92] approach uses previous, current and future frames to localize the object in the current frame. The utilization of future frames presents a slack in the global positioning framework, and this slack is adequate just if the object is far away from the camera or moves slowly comparative with the high catch pace of the camera.

Tracking of the hand can be restricted due to the fast movement of the hand and its appearance can alter immensely within a few frames. In such cases, model-based algorithms like mean-shift [56], Kalman filter [44], particle filter [30] are some of the methods used for tracking. The mean-shift is a purely non-parametric mode-seeking algorithm that iteratively shifts a data point to the average of data points in its neighborhood (similar to clustering). However, tracking often converges to an incorrect object when the object changes its position very quickly in the two neighboring frames. Because of this problem, a conventional mean-shift tracker fails to position a fast-moving object. [152, 185, 190] used a modified mean-shift algorithm called continuous adaptive mean-shift (CAMShift) where the window size is adjusted so as to fit the gesture area reflected by any variation in the distance between the camera and the hand. Though CAMShift performs well with objects that have a simple and consistent appearance, it is not powerful in more perplexing scenes. The movement model for the Kalman filter depends on the understanding that the speed is moderately little when items are moving, and thus, it is demonstrated by a zero mean and low variance white noise. One restriction of the Kalman filter is the supposition that the state variables depend on Gaussian distribution, and along these lines, the Kalman filter will give inaccurate assessments for state variables that do not follow a linear Gaussian environment. The particle filter is for the most part a preferred strategy over the Kalman filter since it can consider non-linearity and non-Gaussianity. The fundamental thought of the particle filter is to apply a weighted sample particle set to approximate the probability distribution, i.e., the necessary posterior density function is addressed by a bunch of arbitrary examples with related weights and estimation is done based on these samples and weights. Both Kalman filter and particle filter have the disadvantage of the requirement of previous knowledge in modeling the system. Kalman filter or particle filter can be combined with the mean shift tracker for precise tracking. In [224], authors have detected hand movement using Adaboost with the histogram of gradient (HOG) method.

-

3.

Combined segmentation and tracking Here the first step is object labeling by segmentation and the second step is object tracking. Accordingly, an update for tracking is done by calculating the distribution model with various label values. Skin-segmentation and tracking together can give quite a good performance [68], but researchers have adopted other methods too where skin segmentation is not so efficient.

Gesture Representation and Feature Extraction

Based on spatio-temporal variation, gestures are mainly classified as static or dynamic. Static gestures are simply the pose or orientation of the gesturing part (e.g., hand pose) in the space and hence sometimes simply called posture. On the other hand, dynamic gestures are defined by trajectory or temporal deformation (e.g., shape, position, motion, etc.) of body parts. Again dynamic gestures can be either single isolated trajectory type or continuous type, occurring in a stream, one after another.

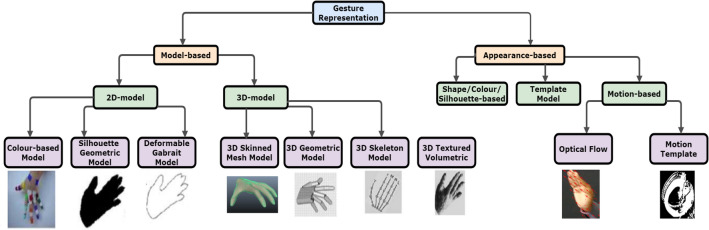

- Gesture representation A gesture must be represented using a suitable model for its recognition. Based on feature extraction methods, the following are the types of gesture representations: model based and appearance based (Fig. 14).

- Model based Here, gestures can be modeled utilizing either a 2D model or a 3D model. The 2D model essentially relies upon either different color-based models like RGB, HSV, YCbCr, and so forth, or silhouettes or contours obtained from 2D images. The deformable Gabarit model relies upon the arrangement of active deformable shaping. Then again, 3D models can be classified into mesh model [98], geometric model, volumetric models and skeletal models [198]. The volumetric model addresses hand motions with high exactness. The skeletal model diminishes the hand signals into a bunch of identical joint angle parameters with fragment length. For instance, Rehg and Kanade [179] utilized a 27-level degree-of-freedom (DOF) model of the human hand in their framework called ‘Digiteyes’. Local image-based trackers are utilized to adjust the extended model lines to the finger edges against a solid background. Crafted by Goncalves et al. [61] advanced three-dimensional tracking of the human arm utilizing a two cone arm model and a single camera in a uniform background. One significant drawback of model-based portrayal utilizing a single camera is self-occlusion [61] that often happens in articulated objects like a hand. To stay away from it, a few frameworks utilize multiple/stereo cameras and restrict the motion to small regions [179]. But it also has its own disadvantages like precision, accuracy, etc. [32].

- Appearance based The appearance-based model attempts to distinguish gestures either straightforwardly from visual images/videos or from the features derived from the raw data. Highlights of such models might be either the image sequences or a few features obtained from the images which can be utilized for hand-tracking or classification purposes. For instance, Wilson and Bobick [228] introduced results utilizing activities, generally hand motions, where the genuine gray-scale images (with no background) are utilized in real-life portrayal. Rather than utilizing raw gray-scale images, Yamato et al. [234] utilized body silhouettes, and Akita [5] utilized body shapes/edges. Yamato et al. [234] used low-level silhouettes of human activities in a hidden Markov model (HMM) system, where binary silhouettes of background-subtracted images are vector quantized and used as input to the HMMs. In Akita’s work [5], the utilization of edges and some straightforward two-dimensional body setup information were utilized to decide the body parts in a progressive way (first, discover legs, then the head, arms, trunk) in light of steadiness. While utilizing two or three-dimensional primary data, there is a prerequisite of individual features or properties to be extracted and tracked from each frame of the video sequence. Consequently, movement understanding is truly cultivated by perceiving an arrangement of static setups that require previous detection and segmentation of the item. Furthermore, since the good old days, sequential state-space models like generative hidden Markov models (HMMs) [122] or discriminative conditional random fields (CRFs) [19] have been proposed to demonstrate elements of activity/gesture recordings. Temporal ordering models like dynamic time warping (DTW) [7] have likewise been applied with regards to dynamic activity/gesture recognition where matching of an incoming gesture is done to a set of pre-defined representations.

- Optical flow Optical flow is the apparent movement or displacement of items/pixels as seen by a spectator. Optical flow shows the adjustment in speed of a point moving in the scene, likewise called a movement field. Here the objective is to assess the motion field (velocity vector) which can be figured from horizontal and vertical flow fields. Preferably, the motion field addresses the 3D movement of the points of an article across 2D image frame for a specific frame interval. Out of various optical stream procedures found in the literature, the most well-known strategies are: (a) Lucas–Kanade [134], (b) Horn–Schunk [76], (c) Brox 04 [23] and (5) Brox 11 [24], and (d) Farneback [51]. The choice of the optical flow technique principally relies upon the power of generating a histogram of optical flow (HOF) or motion boundary histogram (MBH) descriptor. HOF gives the optical flow vectors in horizontal and vertical directions. The natural thought of MBH is to address the oriented gradients computed over the vertical and horizontal optical flow components. When horizontal and vertical optical flow segments are acquired, histograms of oriented gradients are computed on each image component. The result of this interaction is a couple of horizontal (MBHx) and vertical (MBHy) descriptors. Laptev et al. [119] executed a blend of HOG-HOF for taking insensible human activity from motion pictures. [39] additionally proposed to ascertain changes of optical flow that focus on optical flow differences between frames (motion boundaries). Yacoob and Davis [233] utilized optical flow estimations to follow predefined polygonal patches set on interest areas for facial expression recognition. [229] introduced an incorporated methodology where the optical flow is coordinated frame-by-frame over time by considering the consistency of direction. In [135], the optical flow was used to detect the direction of motion along with the RANSAC algorithm which in turn helped to further localize the motion points. In [95], authors have used optical flow guided trajectory images for dynamic hand gesture recognition using deep learning-based classifier.

- Motion templates Basically, motion templates are the compact representation of a gesture video where the dynamics of motion of a gesture video is encoded into an image. These templates are compact representations of videos where a single image illustrates the motion information of the whole video useful for video analysis. Hence, these images are named motion fused images or temporal templates or motion templates. There are three widely used motion fusion strategies namely motion energy image(MEI) and motion history image (MHI) [3, 21], dynamic images (DI) [20] and methods based on PCA [49]. We will not go into the details of these methods, but the same can be found in [191] by the same authors.

Feature extraction After modeling a gesture, the next step is to extract a bunch of features for gesture recognition. For static gestures, features are obtained from image data like color and texture or posture data like direction, orientation, shape, and so forth. There are three basic features for spatio-temporal patterns of dynamic gestures namely location, orientation and velocity [242], based on which different features or descriptors are utilized in the cutting edge techniques. For instance, a few features depend on movement and additionally disfigurement data like position, skewness, and the speed of hands. Features for dynamic hand signals are spatial-transient examples. A static hand gesture might be seen as a special instance of a dynamic gesture with no temporal variation of the hand position as well as shape. A gesture model ought to think about both spatial and temporal changes of the hand and its motion. Generally, no two examples of the same gesture will bring about the very same hand and arm movements or generate a similar arrangement of visual information, i.e., motions experience the ill effects of spatial-transient variety. There exists spatial-transient variety when a user plays out the same gesture on various occasions. Each time the user plays out a motion, the shape and the speed of the motion for the most part shift. Regardless of whether a similar individual attempt to play out a similar sign twice, a little variety in speed and position of the hands may happen. Subsequently, separated features ought to be rotation–scaling–translation (RST) invariant. Different features or descriptors are utilized in the classification stage of VGR frameworks. These features can be comprehensively classified depending on their technique for extraction, for example, spatial domain features, transform domain features, curve fitting-based features, histogram-based descriptors, and interest point-based descriptors. Also, the classifier ought to have the capacity to deal with spatio-temporal variations. As of late, feature extraction procedures based on deep learning have frequently been applied for various applications. Kong et al. [109] proposed a view-invariant feature extraction technique utilizing deep learning for multi-view activity acknowledgment. Table 1 gives a short review of the properties of various features utilized for both static and dynamic motion acknowledgment.

Fig. 14.

Different hand models for hand gesture representation

Table 1.

Major features used in gesture recognition

| Feature type | Examples | Static | Dynamic | Advantages | Limitations |

|---|---|---|---|---|---|

| Spatial domain (2D) | Fingertips location, finger direction, and silhouette [156] | ✓ | ✓ | • Easy to extract | • Unreliable under occlusion or varying illumination |

| • Rotation invariant | • Object view dependent | ||||

| • Distorted hand trajectory distorts MCC also | |||||

| Motion chain code (MCC) [19, 122] | ✓ | ||||

| Spatial domain (3D) | Joint angles, hand location, surface texture and surface illumination [118] | ✓ | ✓ | • 3D modeling can most accurately represent the state of a hand, and thus can give higher recognition accuracy | • Difficult to accurately estimate 3D shape information of a hand |

| Transform domain | Fourier descriptor [70], DCT descriptor [4], Wavelet descriptor [79] | ✓ | ✓ | • RST invariant | • Not able to perfectly distinguish different gestures |

| Moments | Geometric moments, orthogonal moments [174] | ✓ | ✓ | • Moments can be used to derive RST invariant global features | • Moments are in general global features. So, moments cannot effectively represent an occluded hand |

| Curve fitting based | Curvature scale space [231] | ✓ | • RST invariant | • Sensitive to distortion in the boundary | |

| • Resistant to noise | |||||

| Histogram based | Histogram of gradient (HoG) features [52] | ✓ | ✓ | • Invariant to geometry and illumination changes | • Performance is not so satisfactory for images with a complex background and noise |

| Interest point based | Scale-invariant feature transform (SIFT) [40], Speeded up robust features (SURF) [247] | ✓ | ✓ | • RST and illumination invariant | • They are not the best choice for real-time applications because they are computationally expensive |

| Mixture of features | Combined features [60] | ✓ | ✓ | • Incorporates the advantages of different types of features | • Classification performance may degrade due to curse of dimensionality |

Recognition

The last subsystem of a gesture framework has the assignment of recognition where a reasonable classifier perceives the incoming gesture parameters or features and gathers them into either predefined classes (supervised) or by their closeness (unsupervised). Here, the hand gesture recognition techniques have been tried to classify into some categories for easy understanding. And based on the type of input data and the method, the hand gesture recognition process can be broadly categorized into three sections:

Conventional methods on RGB data

Depth-based methods on RGB-D data

Deep networks—a new era in computer vision

Conventional Methods on RGB Data

Vision-based gesture recognition generally depends on three stages where the third module consists of a classifier, which typically classifies the input gestures. However, each classifier has its own advantages as well as limitations. Here, we discuss the conventional methods of classification for static and dynamic gestures on RGB data.

- Static gesture recognition Static gestures are basically finger-spelled signs in still images without any time frame. Unsupervised k-means and supervised k-NN, SVM, ANN are the major classifiers for static gesture recognition.

- k-means It is an unsupervised classifier that evaluates k center points to minimize error in the clustering defined by the sum of the distances of all data points to their respective cluster centers. For a set of observations , in a d-dimensional real vector space, k-means clustering partitions the n observations into a set of k clusters or groups and their centers are given by

The classifier arbitrarily finds k cluster centers in the feature space. Each point in the information dataset is assigned to the closest cluster center, and their locations are refreshed to the average location value for each group. This cycle is then rehashed until a halting condition is met. The halting condition could be either a user indicated of maximum number of cycles or a distance edge for the development of the group communities. Ghosh and Ari [59] utilized a k means clustering-based radial basis function neural network (RBFNN) for static hand gesture recognition. In this work, k means grouping is utilized to decide the RBFNN centers.1 - k-nearest neighbors (k-NN) k-NN is a non-parametric algorithm where information in the feature space can be multidimensional. It is a supervised learning scheme with a bunch of labeled vectors as training data. The number k essentially decides the number of neighbors (close feature vectors) that impact the characterization. Commonly, an odd estimation of k is picked for two-class characterization. Each neighbor might be given a similar weight or more weight might be given to those nearest to the input information by applying a Gaussian distribution. In uniform voting, a new feature vector is allocated to the class to which the majority of its neighbors belongs. Hall et al. expected two statistical distributions (Poisson and binomial) for the sample data to get the ideal estimation of k [67]. The k-NN can be utilized in various applications, for example, hand gesture-based media player control [138], sign language recognition [64], and so on.

- Support vector machine (SVM) An SVM is a supervised classifier for both linearly separable and nonseparable data. When it is not possible to linearly separate the input data in the current feature space, then SVM maps this non-linear data to some higher dimensional space where the data can be linearly separated. This mapping from lower to higher dimensional space makes the order of the information more straightforward and recognition more precise. On several occasions SVM has been utilized for hand gesture recognition [41, 98, 132, 183]. SVMs were initially intended for two-class grouping, and an expansion for multi-class arrangement is vital for many instances. Dardas et al. [41] applied SVM along with bag-of-visual-words for hand gesture recognition. Weston and Watkins [226] proposed an SVM design to settle a multi-class pattern recognition problem using a single optimization stage. Be that as it may, their optimization procedure found to be extremely convoluted to be executed for real-life pattern recognition problems [77]. Rather than utilizing a single optimization method, various paired classifiers can be utilized to take care of multi-class grouping issues, for example, ”one-against-all” and ”one-against-one” techniques. Murugeswari and Veluchamy [151] utilized “one-against-one” multi-class SVM for gesture recognition. It was tracked down that the ”one-against-one” strategy performs better compared to the remainder of the strategies [77].

- Artificial neural network (ANN) ANN is a statistical learning algorithm utilized for different errands like functional approximation, pattern recognition and classification. ANNs can be used as a biologically inspired supervised classifier for gesture recognition where training is performed utilizing a bunch of marked input data. The trained ANN arranges new input data into the labeled classes. ANNs can be utilized to perceive both static [59] as well as dynamic hand gestures [157, 163]. [157] applied ANN to classify gesture motions utilizing a 3D articulated hand model. A dataset collected using Kinect® sensor [163] was used for this. Obtaining info from data glove, Kim et al. [102] applied ANNs to perceive Korean sign language from the movement of hand and fingers. A restriction of traditional ANN design is its failure to deal with temporal arrangements of features proficiently and successfully [165]. Primarily, it cannot make up for changes in transient moves and scales, particularly in real-time applications [177]. Out of a few altered structures, multi-state time-delay neural networks [239] can deal with such changes somewhat utilizing dynamic programming. Fuzzy-based neural networks have likewise been utilized to perceive gestures [220].

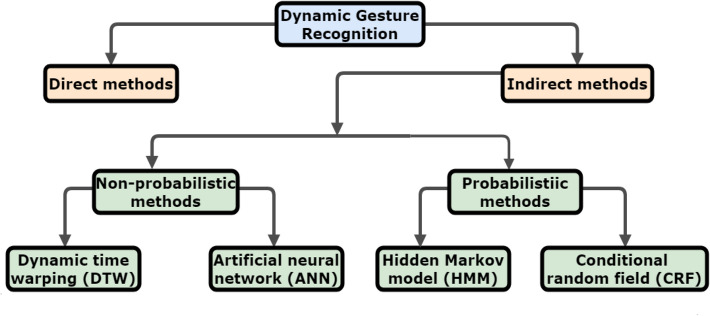

- Dynamic gesture recognition Dynamic gestures or trajectory-based gestures are gestures having trajectories with temporal information in terms of video frames. Dynamic gestures can be either a single isolated trajectory type or continuous type occurring one after another in a stream. Recognition performance of dynamic gestures, especially the continuous gestures, is basically dependent on gesture spotting schemes. Dynamic gesture recognition schemes can be categorized into direct or indirect methods [7]. The approaches in direct method first detect the boundaries in time for the performed gestures and then apply standard techniques same as isolated gesture recognition. Typically, motion cues like speed, acceleration and trajectory curvature [242]) or specific starting–ending marks [7], an open/closed palm can be applied for boundary detection. Whereas, in the indirect approach temporal segmentation is intertwined with recognition. In indirect methods, typically gesture boundaries are detected by finding time intervals that give good scores when matched with one of the gesture classes in the input sequence. Such procedures are too vulnerable to false positives and recognition errors as they have to deal with two vital constraints of dynamic gesture recognition [146]: 1) spatiotemporal variability, i.e., a user cannot reproduce the same gesture at the exact same shape and duration and 2) segmentation ambiguity, i.e., problems faced due to erroneous boundary detection. Through indirect methods, we try to minimize these problems as much as possible. Indirect methods can be of two types (Fig. 15): non-probabilistic, i.e., (a) dynamic programming/dynamic time warping, (b) ANN; and probabilistic, i.e., (c) HMM and other statistical methods, (d) CRF and its variants. Some other common techniques are eigenspace-based methods [164], curve fitting [196], finite-state machine (FSM) [16, 19] and graph-based methods [194].

- Dynamic programming/dynamic time warping (DTW) A template matching approach of dynamic programming is dynamic time warping (DTW) and it has been extensively used in isolated gesture recognition. It can find the optimal alignment of two signals in the time domain. Each element in a time series is represented by a feature vector. So, the DTW algorithm calculates the distance between each possible pair of points in two time series in terms of their feature vectors. The steps in a DTW are as follows:

- Two time series P and Q:

where , are feature vectors for the ith element of the corresponding time sequences. - Construct matrix D with distances .

- Warping path W is a contiguous set of matrix elements

- Define warping between and

where - Find:

-

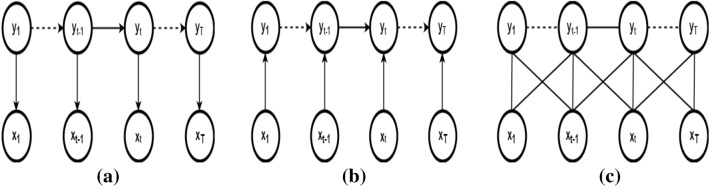

Hidden Markov model (HMM) Though HMM originally emerged in the field of speech recognition, now, it is one of the most widely used techniques for gesture recognition with its numerous variants. HMM is extensively used because it can be applied for modeling the spatiotemporal variability of the gesture videos. Since trajectory-based gesture is a series of images, so there is a need for past knowledge to help the system to recognize gestures and an HMM can help us in this. Before we elaborate on HMM, let us understand a traditional Markov process. A stochastic process has the nth order Markov property if the current event’s conditional probability density is dependent only on the n most recent events. For , the process is called a first-order Markov process, where the current event depends only on the previous event. This is a useful assumption for hand gestures, where the positions and orientations of the hands are treated as events. HMM has two special properties for encoding hand gestures—a) it assumes a first-order model, i.e., it encodes the present time (t) in terms of the previous time ()—the Markov property of underlying unobservable finite-state Markov process and b) a set of random functions, each associated with a state, that produces an observable output at discrete intervals. In this way, an HMM is a “doubly stochastic” process [176]. The states in the hidden stochastic layers are governed by a set of probabilities:

-

i.The state transition probability distribution , which gives the probability of transition from the current state to the next possible state.

-

ii.The observation symbol probability distribution , which gives the probability of observation for the present state of the model.

-

iii.The initial state distribution , which gives the probability of a state being an initial state.

- Let there be a set of N states ; with a sequence of states , where . For a gesture with M observable states, the set of observed symbol or feature is given by .

- The state-transition matrix is , where is the state-transition probability from state at time t to state at time .

- The observation symbol probability matrix , where is the probability of symbol at state .

- The initial probability distribution , where

For a given observation sequence, the key issues of HMM are,- Evaluation Given the model . What is the probability of occurrence of a particular observation sequence This is the heart of the classification/recognition problem. Determination of the probability that a particular model will generate the observed sequence when there is a trained model for each of a set of classes (forward–backward algorithm).

- Decoding Optimal state sequence to produce an observation sequence Determination of the optimal state sequence that produces the observation sequence (Viterbi algorithm).

- Learning Determine model , given a training set of observations, i.e., find , such that is maximal. Train and adjust the model to maximize the observation sequence probability such that HMM should identify a similar observation sequence in the future (Baum–Welch algorithm).

-

i.

-

Conditional random field (CRF) CRF is basically a variant of the Markov model with some added advantages. HMM requires strict independence assumptions across multivariate features and conditional independence between observations. This is generally violated in continuous gestures where observations are not only dependent on the state, but also on the past observations. Another disadvantage of using HMM is that the estimation of the observation parameters needs a huge amount of training data. The distinction between HMM and CRF is that HMM is a generative model that defines a joint probability distribution to solve a conditional problem thus focusing on modeling the observation to compute the conditional probability. Moreover, one HMM is constructed per label or pattern where HMM assumes that all the observations are independent. On the other hand, CRF is a discriminative model that uses a single model of the joint probability of the label sequence to find conditional densities from the given observation sequence. CRFs can effortlessly address contextual dependencies and have computationally alluring properties. CRFs support proficient recognition utilizing dynamic programming, and their parameters can be learned utilizing convex optimization.Both HMM and CRF can be used for labeling sequential data. For this, we define a statement for a given observation sequence x that, we want to choose a label sequence such that the conditional probability P(y|x) is maximized, that is:

Maximum entropy Markov models (MEMMs) are discriminative models, where each state has an exponential model that takes the observation sequence as input and outputs a probability distribution over the next possible states.2

Each of the , is an exponential model of the form:3

where Z is a normalization constant and the summation is overall features. But MEMM suffers from Label Bias Problem, i.e., the transition probabilities of leaving a given state are normalized for only that state (local normalization). MEMMs have a non-linear decision surface in light of the fact that the current observation is simply ready to choose what successor state has chosen; however, the probability mass do not move to that state. To stay away from this impact, a CRF utilizes an undirected graphical model that characterizes a single log-linear distribution over the joint vector of a whole class label sequence given a specific observation sequence and accordingly the model has a linear decision surface. Let be a graph such that so that Y is indexed by vertices of G. Then (X, Y) is a conditional random field, when conditioned on X, the random variables obey the Markov property with respect to the graph: , where means that w and v are neighbors in G. Given by Hammersley and Clifford, it states that the probability distribution of x satisfies the Markov property with respect to graph G(V, E) if and only if, it can be factored according to G:4

where Z is the normalization constant and is the potential function over clique C.5

where f(.) is the feature vector defined over the clique and is the corresponding weight vector for those features. Bhuyan et al. [19] proposed a recognition method applying CRF through a novel set of motion chain code features. Sminchisescu et al. [203] have compared performance analysis applying algorithms based on CRF and MEMM for discerning human motion in video sequences. Undirected conditional model CRF and directed conditional model MEMM with different windows of observations are compared with HMM. Both MEMM and HMM have trouble in perceiving long-range observation dependencies that become useful in discriminating among various gestures. It is seen that CRFs have better recognition performance compared to MEMMs, which in turn, typically outperformed traditional HMMs. This is because CRF applies an undirected graphical model to overcome the problem of label bias present in maximum entropy Markov models (MEMMs) where states with low-entropy transition distributions effectively ignore their observations. The main constraint of CRF is that training is more time-consuming ranging from several minutes to several hours for models having longer windows of observations (as compared to seconds for HMMs, or minutes for MEMMs), on a standard desktop PC.6 - Some other classification methods Here, we discuss some other classification techniques that have also been used in the classification of gestures. Patwardhan and Roy [164] presented an eigenspace-based methodology to represent trajectory-based hand gestures containing both shape and trajectory information which are rotation, scale and translation (RST) invariant. Shin et al. [196] presented a curve-fitting based geometric framework utilizing Bezier curves by fitting the curve to the 3D motion trajectory of the hand. The gesture velocity is interlinked in the algorithm to enable trajectory analysis and classification of dynamic gestures having variations in velocity. Bhuyan et al. [16, 19] represented the keyframes of a gesture trajectory as a sequence of states ordered in the spatial-transient space, which constitutes a finite state machine (FSM) that classifies the input. Graph-based frameworks are also applied as a powerful scheme for pattern recognition problems but have been practically left unused for a long period of time due to their high computational cost. [194] used graphs for gestures matching in an eigenspace to handle hand occlusion (Fig. 16).

Fig. 15.

Conventional dynamic gesture recognition techniques

Fig. 16.

a HMM b a directed conditional model or MEMM c a conditional random field accommodates arbitrary overlapping features or long-term dependency of observation sequence [203]

Depth-Based Methods on RGB-D Data

Depth information is largely invariant to illumination variation and skin colors and offers a quite clear segmentation from the background. So, the major problems in segmentation like illumination variation and occlusion can be handled nicely with the help of depth information to a great extent. Due to these advantages, depth measuring cameras have been used in the field of computer vision for many years. However, the applicability of depth cameras was restricted because of their excessive cost and low quality. With the introduction of low-cost color-depth (RGB-D) cameras like Kinect® by Microsoft, Leap Motion Controller (LMC) by Leap Motion, Intel RealSense®, Senz3D® by Creative and DVS128® by iniLabs, a new revolution was evolved in gesture recognition by providing high-quality depth images that can handle issues like complex background and variation in illumination. Out of all these, hand gesture recognition on Kinect®-based dataset and ‘one-shot learning’ with RGB-D data, are the prominent methods mostly discussed in depth-based hand gesture recognition.

Kinect®-based methods Kinect® has a combined RGB and IR camera along with depth sensor [248]. It uses the infrared projector and sensor for depth computation and an RGB camera for capturing RGB data only. The infrared projector projects a predefined pattern on the items and a CMOS sensor captures the deformations in the reflected pattern. Depth information is then calculated by mapping a three-dimensional view of the scene obtained from the deformation information. Kinect® acquire RGB-D information by consolidating organized light with two exemplary computer vision strategies: depth from focus and depth from the stereo. The skeletal information got from these RGB-D sensors is changed over to more significant and undeniable features, and algorithms are created for the robust gesture classification. Classification of hand gestures is particularly difficult because of the complex articulation and relatively smaller area of the hand region. Kinect® is helpful in tending to these central issues in computer vision [163, 181, 212]. It has also diverse applications ranging from gaming to classroom [71, 116].

Other depth sensor-based methods Leap motion controller (LMC) and Intel RealSense® are the most used RGB-D sensor for HCI applications apart from Kinect®. RealSense® is more robust to self-occlusions and it can capture pinching gestures. LMC is another RGB-D sensor and its purpose is to locate 3D fingertip positions instead of the whole-body depth information as the case with Kinect® sensor. It can detect only fingertips lying parallel to the sensor plane, but with high accuracy. In [133] feature vector with depth information is computed using a leap motion sensor and fed into the hidden conditional neural field (HCNF) to classify dynamic hand gestures. Leap motion sensors can also be applied in different utilization, e.g., virtual environments [178] and sign language recognition [172].

One-shot learning methods on RGB-D data Using Deep Learning, human-level performance has become achieveable on complex image classification tasks. However, these models rely on a supervised training paradigm and their achievement is heavily dependent on the availability of labeled training data. Also, the classes that the models can recognize are limited to those they were trained on. This makes these models less useful in realistic scenarios for the classes where enough labeled data is not available during training. Also, since it is practically not possible to train on images of all possible objects, so the model is expected to recognize images from classes with a limited amount of data in the training phase or precisely with a single example. So, in the case of a small dataset, ‘one-shot learning’ may be very useful. Various researchers [108, 221, 230] have used one-shot learning in both deep learning and non-deep learning paradigm for recognition of hand gestures, especially with RGB-D data. Wu et al. [230] presented a framework to learn gestures from just one learning sample for each class, in particular, ’one-shot learning’. Features are obtained depending on extended motion history image (Extended MHI) and the gestures are recognized based on the maximum correlation coefficient. The extended MHI is used to improve the presentation of MHI by making up for the immobile regions and repeated activities. A multi-view spectral embedding (MSE) scheme is utilized to meld the RGB and depth information in an actually significant way. The MSE calculation finds the natural connection among RGB and depth features, improving the recognition rate of the algorithm. In [136], authors used a methodology consolidating MHI with statistical measures and frequency domain transformation on depth images for one-shot-learning hand gesture recognition. Due to the availability of the depth information, the background-subtracted silhouette images were obtained using a simple mask threshold.

Deep Networks: A New Era in Computer Vision

Though the idea of artificial intelligence (AI) is quite ancient, modern AI first came into the picture around the mid-twentieth century. The AI aims at developing intelligence in machines so as to make them work and respond like humans. This can be achieved when the machines are made to have certain traits, e.g., reasoning, problem solving, perception, learning, etc. Machine learning (ML) is one of the cores of AI. There are a large number of applications of ML in many aspects of modern human society. Consumer products like cameras and smartphones are the best examples where ML techniques are being employed increasingly. In the field of computer vision, ML techniques have been vigorously used in different applications like object detection, image classification, face recognition, gesture and activity recognition, semantic segmentation, and many more. In conventional ML, engineers and data scientists have to identify useful features and they have to handcraft the feature extractor manually which requires considerable engineering skills and domain knowledge. To identify important and powerful features, they must have considerable domain expertise. The issue of “handcrafting features” can be addressed if good features can be learned automatically. This automatic learning of features can be done by a learning method called “representation learning”. These are methods that enables a machine to automatically learn the representations that are crucial for detection or classification.

Recently, deep learning has shown outstanding performance outperforming “non-deep” state-of-the-art methods in action and gesture recognition fields. Deep learning, a subfield of ML, is based on representation learning methods having multiple levels of representation. Deep learning is a part of ML algorithms, in which extraction of multiple levels of features is possible. In several fields, such as computer vision, deep learning methods have been proved to have much better performance than conventional ML methods. The main reason for deep learning having an upper hand over ML is the fact that the feature learning mechanism at these different levels of representation is fully automatic, thereby allowing the computational model to implicitly capture intricate structures embedded in the data. The deep learning methods are said to have deep architecture because of the non-uniform processing of information at different levels of abstraction where higher-level features are interpreted in the form of lower-level features. This has propelled the advancement of learning powerful and successful portrayals straightforwardly from raw data and deep learning gives a conceivable method of naturally learning different levels of image specific features by utilizing different layers. Deep networks are fit for discovering remarkable dormant constructions inside unlabeled and unstructured raw data and can be utilized for both feature extraction as well as classification [110]. The recent popular deep learning methods like convolutional neural network (CNN), recurrent neural network (RNN) and long short-term memory (LSTM) have demonstrated competitive performance in both image/video representation as well as classification. But deep learning approaches have mainly two inherent requirements: huge data for training purposes and expensive computation. But in this modern era, the abundance of high quality, easily available labeled datasets from different sources along with parallel graphics processing unit (GPU) computing, also played a vital role in the success of deep learning by fulfilling its requirements. We will see all these methods one by one, but before that let’s talk about one major problem of deep learning which is the requirement of huge data and how various researchers have tried to overcome it through the data augmentation process when the database is limited.

The need for data augmentation in deep learning methods Contrary to the hand-crafted features, there is developing interest towards feature learned and represented by deep neural networks [12, 29, 37, 43, 58, 85, 94, 101, 110, 121, 129, 148, 149, 153, 169, 201, 215, 217, 223, 249, 250]. But the fundamental necessity in deep learning methods is loads of data set examples. Various researchers have stressed the significance of utilizing diverse training samples for CNNs/RNNs [110]. For datasets with restricted variety, they have proposed data augmentation techniques in the training stage to forestall CNNs/RNNs from overfitting. Krizhevsky et al. [110] utilized different data augmentation procedures in the preparation of the recognition problem of 1000 groups. Simonyan and Zisserman [201] utilized some spatial augmentation on every image frame to prepare CNNs for video-based human action classification. Notwithstanding, these data augmentation strategies were restricted to only spatial varieties. Pigou et al. [169] transiently deciphered video outlines apart from applying spatial changes to add varieties to the video sequences containing dynamic movement. Molchanov et al. [148] applied space-time video augmentation methods to keep away 3D-CNN from overfitting.

Convolutional neural networks (CNN) In 1962, D.H. Hubel and T.N. Weisel proposed the prototype of Cat’s visual cortex, which later on helped in the development of CNNs. The first neural network architecture for visual pattern recognition was presented by K. Fukushima in 1980 and was given the nickname “neocognitron” [57]. This network was based on unsupervised learning. Finally, in the late 90s, Yann LeCunn and his collaborators developed CNN which showed exciting results in various recognition tasks [121]. But till 2012, CNN was not that much evolved due to the requirements of deep learning methods mentioned above. After the work of Krizhevsky et al. [110], various researchers applied CNN in various domains for classification as well as other purposes. Generally, 2D-CNN is used in the case of images that can access only spatial information, whereas, for video processing, 3D-CNN (C3D) is quite effective which can extract both spatial as well as temporal information. A fusion-based approach with CNN as trajectory shape extractor of a gesture video and CRF as temporal feature extractor is proposed by [235]. In [190], the authors used CNN for recognition of hand gestures using trajectory-to-contour-based images obtained through skin segmentation and tracking method. In [245], the authors used pseudo-color-based MHI images as input to convolutional networks. [96] proposed a model for isolated gesture recognition using optical flow where the trajectory-contour of the moving hand with varied shape, size and color is detected and the hand gesture is classified through a VGG16 CNN framework.