Summary

Reproducible computational research (RCR) is the keystone of the scientific method for in silico analyses, packaging the transformation of raw data to published results. In addition to its role in research integrity, improving the reproducibility of scientific studies can accelerate evaluation and reuse. This potential and wide support for the FAIR principles have motivated interest in metadata standards supporting reproducibility. Metadata provide context and provenance to raw data and methods and are essential to both discovery and validation. Despite this shared connection with scientific data, few studies have explicitly described how metadata enable reproducible computational research. This review employs a functional content analysis to identify metadata standards that support reproducibility across an analytic stack consisting of input data, tools, notebooks, pipelines, and publications. Our review provides background context, explores gaps, and discovers component trends of embeddedness and methodology weight from which we derive recommendations for future work.

Keywords: reproducible research, reproducible computational research, RCR, reproducibility, replicability, metadata, provenance, workflows, pipelines, ontologies, notebooks, containers, software dependencies, semantic, FAIR

The bigger picture

A recent confluence of technologies has enabled scientists to effectively transfer runnable analyses, addressing a long-standing challenge of reproducible research. The implementation of reproducible research for in silico analyses requires extensive metadata to describe both scientific concepts and the underlying computing environment. This review covers the wide range of metadata standards relevant to reproducible computational research across an “analytic stack” consisting of input data, tools, reports, pipelines, and publications. Legacy and cutting-edge metadata support a wide range of data annotations, analytic approaches, and interpretation across virtually all scientific disciplines. This review is designed to bridge the metadata and reproducible research communities. We identify competing approaches of embedded and connected metadata, discuss gaps, and make recommendations with implications for the future of journals and peer review.

Leipzig et al. review the wide range of metadata standards for input data, tools, reports, pipelines, and publications that are enabling reproducible computational analyses with implications for the future of journals and peer review.

Introduction

Digital technology and computing have transformed the scientific enterprise. Many scientific workflows and methods have become fully digital, from the problem scoping stage and data collection tasks to analyses, reporting, storage, and preservation. Other key factors include federal1 and institutional2,3 recommendations and mandates to build a sustainable research infrastructure and to support FAIR principles4 and reproducible computational research (RCR). Metadata have emerged as a crucial component of these advances, with standards supporting each stage of the research life cycle. Reflecting this change, there have been many case studies on reproducibility,5 although few studies have systematically examined the role of metadata in supporting computational reproducibility. Our aim in this work was to review metadata developments that are directly applicable to computational reproducibility, identify gaps, and recommend further steps involving metadata toward building a more robust infrastructure. To lay the groundwork for these recommendations, we first review reproducible computational research and metadata, examine how they relate across different stages of an analysis, and discuss what common trends emerge from this approach.

Intended audience

This review is designed primarily to bridge the metadata and reproducible research communities. The practitioners working in this area may be considered information scientists or data engineers working in the life, physical, and social sciences. Readers most interested in the representation of scientific data and results will find the sections on input and publication most relevant, while those most closely aligned with analysis and data engineering may be more interested in the sections on tools, reports, and pipelines. During the development of this article, it became evident that many important efforts that could be useful and applicable to other domains will wither in isolation if not discovered by a wider audience. Furthermore, many homologous areas of research are not identified as such simply because of differences in terminology. Though much of the battleground of reproducibility has involved the fields of bioinformatics and psychology, these are by no means the only affected areas. It should be mentioned that while the reproducibility crisis has played out on a public stage involving high-profile papers and journals and is often connected to challenges in peer review processes, the burden on the home front of reproducibility is borne by individuals working in smaller settings who need to reproduce analyses written by immediate colleagues, or even by themselves.

Reproducible computational research

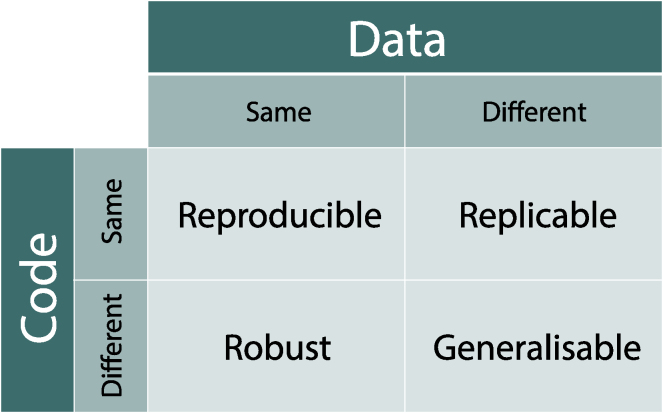

“Reproducible research” is an umbrella term that encompasses many forms of scientific quality, from generalizability of underlying scientific truth, through exact recreation of an experiment with or without communicating intent, to the open sharing of analysis for reuse. Specific to computational facets of scientific research, RCR6 encompasses all aspects of in silico analyses, from the propagation of raw data collected from the wet lab, field, or instrumentation, through intermediate data structures and computational hardware, to open code and statistical analysis, and finally publication. Here, our emphasis is on the scholarly record, with results reported in a journal article, conference proceeding, white paper, or report as the final output, although we clearly recognize the importance of reproducibility across the full scope of scientific output, including data, software, tools, and even data papers.7 Reproducible research points to several underlying concepts of scientific validity, terms that should be unpacked to be understood. Stodden et al.8 devised a five-level hierarchy of research, classifying it as reviewable, replicable, confirmable, auditable, and open or reproducible. Whitaker9 described an analysis as "reproducible" in the narrow sense that a user can produce identical results provided the data and code from the original, and "generalizable" if it produces similar results both when the data are swapped out for similar data ("replicability") and when the underlying code is swapped out with comparable replacements ("robustness") (Figure 1).

Figure 1.

Whitaker’s matrix of reproducibility

Whitaker's matrix of reproducibility;10 made available under the Creative Commons Attribution license (CC-BY 4.0).
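
The two-by-two structure of the matrix can be expressed as a small lookup. The following Python sketch is illustrative only: the function name `classify_analysis` and its boolean arguments are hypothetical and are not drawn from Whitaker's materials; the labels follow the definitions given in the text.

```python
def classify_analysis(same_data: bool, same_code: bool) -> str:
    """Return the matrix label for a re-analysis (hypothetical helper)."""
    matrix = {
        (True, True): "reproducible",     # same data, same code
        (False, True): "replicable",      # different data, same code
        (True, False): "robust",          # same data, different code
        (False, False): "generalizable",  # different data, different code
    }
    return matrix[(same_data, same_code)]


# A third party reruns the authors' code on the authors' data.
print(classify_analysis(same_data=True, same_code=True))
```

In the terminology adopted in this review, only the first cell corresponds to "narrow-sense" reproducibility.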

While these terms may confuse those new to reproducibility, a review by Barba disentangled the terminology while providing a historical context of the field.11 One major conflicted use of terms (reproducible/replicable) has since then been harmonized.12 A wider perspective places reproducibility as a first-order benefit of applying FAIR principles: Findability, Accessibility, Interoperability, and Reusability. In the next sections, we engage reproducibility in the general sense and use "narrow-sense" to refer to the same data, same code condition.

Reproducibility crisis

The scientific community's challenge with irreproducibility in research has been extensively documented.13 Two events in the life sciences stand as watershed moments in this crisis: the publication of manipulated and falsified predictive cancer therapeutic signatures by a biomedical researcher at Duke and subsequent forensic investigation by Keith Baggerly and Kevin Coombes,14 and a review by scientists at Amgen who could replicate the results of only 6 of 53 cancer studies.15 These events involved different aspects: poor data structures and missing protocols, respectively. Together with related studies,16 they underscore recurring reproducibility problems due to a lack of detailed methods, missing controls, and other protocol failures. Inadequate understanding or misuse of statistics, including inappropriate statistical tests and/or misinterpretation of results, also plays a recurring role in irreproducibility.17 Regardless of intent, these activities fall under the umbrella term of "questionable research practices." It bears speculation whether these types of incidents are more likely to occur in novel statistical or computational approaches compared with conventional ones. Subsequent surveys of researchers13 have identified selective reporting as a contributing factor, while theory papers18 have emphasized the insidious combination of underpowered designs and publication bias, essentially a multiple testing problem on a global scale. We contend that metadata have an undervalued role to play in addressing all of these issues and in shifting the narrative from a crisis to opportunities.19

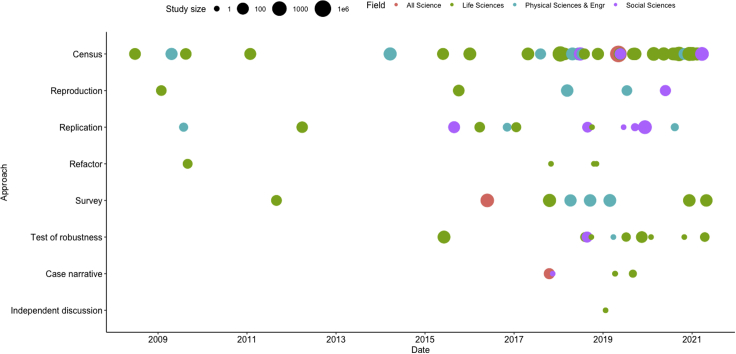

In the wake of this newfound interest in reproducibility, both the variety and volume of related case studies increased after 2015 (Figure 2). Likert-style surveys and high-level publication-based censuses (see Figure 3) in which authors tabulate data or code availability are most prevalent. In addition, low-level reproductions, in which code is executed, replications in which new data are collected and used, tests of robustness in which new tools or methods are used, and refactors to best practices are also becoming more popular. While the life sciences have generated more than half of these case studies, areas of the social and physical sciences are increasingly the subjects of important reproduction and replication efforts. These case studies have provided the best source of empirical data for understanding reproducibility and will likely continue to be valuable for evaluating the solutions we review in the next sections.

Figure 2.

Case studies in reproducible research

The term "case studies" is used in a general sense to describe any study of reproducibility.5 A reproduction is an attempt to arrive at comparable results with identical data using computational methods described in a paper. A refactor involves refactoring existing code into frameworks and reproducible best practices while preserving the original data. A replication involves generating new data and applying existing methods to achieve comparable results. A test of robustness applies various protocols, workflows, statistical models, or parameters to a given dataset to study their effect on results. A census is a high-level tabulation conducted by a third party. A survey is a questionnaire sent to practitioners. A case narrative is an in-depth first-person account. An independent discussion uses a secondary independent author to interpret the results of a study as a means to improve inferential reproducibility.

Figure 3.

Reproducibility

Censuses like this one by Obels et al. measure data and code availability and reproducibility, in this case over a corpus of 118 studies, 62 of which were psychology studies that had preregistered a Registered Report (RR).20

Big data, big science, and open data

The inability of third parties to reproduce results is not new to science,21 but the scale of scientific endeavor and the level of data and method reuse suggest replication failures may damage the sustainability of certain disciplines, hence the term "reproducibility crisis." The problem of irreproducibility is compounded by the rise of "big data," in which very large, new, and often unique, disparate, or unformatted sources of data have been made accessible for analysis by third parties, and "big science," in which terabyte-scale datasets are generated and analyzed by multi-institutional collaborative research projects on specialized and possibly unique infrastructure. Metadata aspects of big data have been quantitatively studied concerning reuse,22,23 but not reproducibility, despite some evidence that big data may play a role in spurious results associated with reporting bias.24 Big data and big science have increased the demand for high-performance computing, specialized tools, and complex statistics, with attention to the growing popularity and application of machine learning and deep learning (ML/DL) techniques to these data sources. Such techniques typically train models on specific data subsets, and the models, as the end product of these methods, are often "black boxes," i.e., their internal predictors are not explainable (unlike older techniques such as regression) though they provide a good fit for the test data. 
Properly evaluating and reproducing studies that rely on such algorithms presents new challenges not previously encountered with inferential statistics.25,26 Computational reproducibility typically focuses on the last analytic steps of a labor-intensive scientific process that often originates from wet-lab protocols, fieldwork, or instrumentation. These last in silico steps present some of the more difficult problems, from both technical and behavioral standpoints, because of the amount of entropy introduced by the sheer number of decisions made by an analyst. Developing solutions to make ML/DL workflows transparent, interpretable, and explorable to outsiders, such as peer reviewers, is an active area of research.27

The ability of third parties to reproduce studies relies on access to the raw data and methods employed by authors. Much to the exasperation of scientists, statisticians, and scientific software developers, the rise of "open data" has not been matched by "open analysis," as evidenced by several case studies.20,28,29,30

Missing data and code can obstruct the peer-review process, where proper review requires the authors to put forth the effort necessary to share a reproducible analysis. Software development practices, such as documentation and testing, are not a standard requirement of the doctoral curriculum, the peer-review process, or the funding structure, and as a result, the scientific community suffers from diminished reuse and reproducibility.31 Sandve et al.32 identified the most common sources of these oversights in "Ten Simple Rules for Reproducible Computational Research": lack of workflow frameworks, missing platform and software dependencies, manual data manipulation or forays into web-based steps, lack of versioning, lack of intermediates and plot data, and lack of literate programming or context, any of which can derail a reproducible analysis.

An issue distinct from the availability of source code and raw data is the lack of metadata to support reproducible research. We have observed that many of the findings from case studies in reproducibility point to missing methods details in an analysis, which can include software-specific elements such as software versions and parameters,33 but also steps along the entire scientific process, including data collection and selection strategies, data processing provenance including hardware and statistical methods, and linking these elements to publication. We find the key concept connecting all of these issues is metadata.
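
As a minimal illustration of the software-specific elements mentioned above, the following Python sketch records interpreter, platform, package versions, and analysis parameters as structured metadata. The function `capture_environment`, the field names, and the example parameters are hypothetical and do not follow any of the standards reviewed here; it relies only on the Python standard library.

```python
import json
import platform
import sys
from importlib import metadata


def capture_environment(packages, parameters):
    """Collect interpreter, platform, package versions, and parameters."""
    versions = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None  # package is not installed here
    return {
        "python_version": platform.python_version(),
        "platform": platform.platform(),
        "executable": sys.executable,
        "package_versions": versions,
        "parameters": parameters,
    }


# Hypothetical analysis settings; the second package name is deliberately absent.
record = capture_environment(
    packages=["pip", "a-package-that-is-not-installed"],
    parameters={"alpha": 0.05, "random_seed": 42},
)
print(json.dumps(record, indent=2, sort_keys=True))
```

A record of this kind, saved alongside results, would answer some of the "which version, which parameters" questions that case studies repeatedly report as missing.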

An ensemble of dependency management and containerization tools already exists to accomplish narrow-sense reproducibility34: the ability to execute a packaged analysis with little effort from a third party. But context to allow for robustness and replicability, "broad-sense reproducibility," is limited without endorsement and integration of necessary metadata standards that support discovery, execution, and evaluation. Despite the growing availability of open-source tools, training, and better executable notebooks, reproducibility is still challenging.35 In the following sections, we address these issues by first defining metadata, then defining an "analytic stack" to abstract the steps of an in silico analysis, and finally identifying and categorizing standards, both established and in development, that foster reproducibility.

Metadata

Over the past 25 years, metadata have gained acceptance as a key component of research infrastructure design. This trend is defined by numerous initiatives supporting the development and sustainability of hundreds of metadata standards, each with varying characteristics.36,37 Across these developments, there is a general high-level consensus regarding the following three types of metadata standards38,39:

1. Descriptive metadata, supporting the discovery and general assessment of a resource (e.g., the format, content, and creator of the resource).
2. Administrative metadata, supporting technical and other operational aspects affiliated with resource use. Administrative metadata include technical, preservation, and rights metadata.
3. Structural metadata, supporting the linking among the components of a resource, so it can be fully understood.
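
The three types can be made concrete with a small data structure. The sketch below is illustrative only: the class names, field names, and example values are hypothetical and do not correspond to any particular metadata standard.

```python
from dataclasses import asdict, dataclass, field


@dataclass
class DescriptiveMetadata:
    title: str
    creator: str
    file_format: str


@dataclass
class AdministrativeMetadata:
    license: str          # rights metadata
    checksum_sha256: str  # preservation metadata
    size_bytes: int       # technical metadata


@dataclass
class StructuralMetadata:
    part_of: str                                   # parent resource
    related_files: list = field(default_factory=list)


@dataclass
class ResourceRecord:
    descriptive: DescriptiveMetadata
    administrative: AdministrativeMetadata
    structural: StructuralMetadata


# Invented example values for a single file in a study.
resource = ResourceRecord(
    descriptive=DescriptiveMetadata("Expression matrix", "A. Researcher", "text/csv"),
    administrative=AdministrativeMetadata("CC-BY-4.0", "0" * 64, 1024),
    structural=StructuralMetadata("study-001", ["samples.csv", "protocol.pdf"]),
)
print(asdict(resource))
```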

There is also general agreement that metadata are a key aspect in supporting FAIR, as demonstrated by the FAIRsharing project (https://fairsharing.org), which divides standards types into "reporting standards" (checklists or templates, e.g., MIAME),40 "terminology artifacts or semantics" (formal taxonomies or ontologies to disambiguate concepts, e.g., Gene Ontology),41 "models and formats" (e.g., FASTA),42 "metrics" (e.g., FAIRMetrics)43 and "identifier schemata" (e.g., DOI)44,45 (see Table 1).

Table 1.

Types of FAIRsharing data and metadata standards

| Type of standard | Purpose |
|---|---|
| Reporting standards | Ensure adequate metadata for reproduction |
| Terminology artifacts or semantics | Concept disambiguation and semantic relationships |
| Models and formats | Interoperability |
| Identifier schemata | Discovery |

Metadata are by definition structured. However, structured intermediates and results that are used as part of scientific analyses and employ encoding languages such as JSON or XML are recognized as primary data, not metadata. While an exhaustive distinction is beyond the scope of this paper, we define reproducible computational research metadata broadly as any structured data that aid reproducibility and that can conform to a standard. While this definition may seem liberal, we contend that metadata are the "glue" of reproducibility and are best identified by their function rather than their origins. This general understanding of metadata as a necessary component for research and data management, together with the growing interest in reproducible computational research and the fact that few studies target metadata about the analytic stack, motivated the research presented in this paper.

Goals and methods

The overall goal of this work is to review existing metadata standards and new developments that are directly applicable to reproducible computational research, identify gaps, discuss common threads among these efforts, and recommend next steps toward building a more robust infrastructure.

Our method is framed as a state-of-the-art review based on literature and ongoing software development in the scientific community. Review steps included: (1) defining key components of the analytic stack, and functions that metadata can support; (2) selecting exemplary metadata standards that address aspects of the identified functions; (3) assessing the applicability of these standards for supporting computational reproducibility functions; and (4) designing the corresponding metadata hierarchy. Our approach was informed, in part, by the Qin LIGO case study,46 catalogs of metadata standards such as FAIRsharing, and comprehensive projects to bind semantic science such as Research Objects.47 Compilation of core materials was accomplished mainly through literature searches but also through perusal of code repositories, ontology catalogs, presentations, and Twitter posts. A "word cloud" of the terms used most often in the abstracts of the cited papers, which reveals the most general terms, is available in the code repository.

The RCR metadata stack

To define the key aspects of reproducible computational research, we have found it useful to break down the typical scientific computational analysis workflow, or "analytic stack," into five levels: (1) input, (2) tools, (3) reports, (4) pipelines, and (5) publications. These levels correspond loosely to the CRISP-DM data science process model (understanding, prep, modeling, evaluation, deployment),48 scientific method (formulation, hypothesis, prediction, testing, analysis), and various research lifecycles as proposed by data curation communities (data search, data management, collection, description, analysis, archival, and publication)49 and software development communities (plan, collect, quality control, document, preserve, use). However, unlike the steps in the life cycle, we do not emphasize a strong temporal order to these layers, but instead consider them simply interactive components of any scientific output.

Results

In the course of our research, we found most standards, projects, and organizations were intended to address reproducibility issues that corresponded to specific activities in the analytic stack. However, metadata standards were unevenly distributed among the levels. Standards that could arguably be classified or repurposed into two or more areas were placed closest to their original intent. While we present the standards as a linear list of elements for the sake of clarity and comprehensibility, it is impossible to ignore their strongly intertwined nature. Pipelines, for example, also include data and code, journal articles, especially executable papers, and encompass metadata standards across many components. If communities are to embrace the RCR model, agreement is needed not just for individual metadata standards but also for elements that are used in concert.

The synthesis below first presents a summary table (Table 2), followed by a more detailed description of each of the five levels, specific examples, and a forecast of future directions.

Table 2.

High-level summary

| Metadata level | Description | Examples of metacontent | Examples of standards | Projects and organizations |
|---|---|---|---|---|
| 1. Input | metadata related to raw data and intermediates | sequencing parameters, instrumentation, spatiotemporal extent | MIAME,∗ EML,∗ DICOM,∗ GBIF, CIF, ThermoML, CellML, DATS, FAANG, ISO/TC 276, NetCDF, OGC, GO | OBO, NCBO, FAIRsharing, Allotrope |
| 2. Tools | metadata related to executable and script tools | version, dependencies, license, scientific domain | CRAN DESCRIPTION file,∗ Conda∗ meta.yaml/environment.yml, pip requirements.txt,∗ pipenv Pipfile/Pipfile.lock, Poetry pyproject.toml/poetry.lock, EDAM,∗ CodeMeta,∗ Biotoolsxsd, DOAP, ontosoft, SWO | Dockstore, Biocontainers |
| 3. Statistical reports and notebooks | literate statistical analysis documents in Jupyter or knitr, overall statistical approach or rationale | session variables, ML parameters, inline statistical concepts | OBCS, STATO,∗ SDMX, DDI, MEX,∗ MLSchema, MLFlow,∗ Rmd YAML∗ | Neural Information Processing Systems Foundation |
| 4. Pipelines, preservation, and binding | dependencies and deliverables of the pipeline, provenance | file intermediates, tool versions, deliverables | CWL,∗ CWLProv,∗ RO-Crate,∗ RO, WICUS, OPM, PROV-O, ReproZip Config, ProvOne, WES, BagIt, BCO, ERC | GA4GH, ResearchObjects, WholeTale, ReproZip |
| 5. Publication | research domain, keywords, attribution | bibliographic, scientific field, scientific approach (e.g., "GWAS") | BEL,∗ Dublin Core, JATS, ONIX, MeSH, LCSH, MP, Open PHACTS, SWAN, SPAR, PWO, PAV | NeuroLibre, JOSS, ReScience, Manubot |

Metadata standards, including MIAME,40 EML,50 DICOM,51 GBIF,52 CIF,53 ThermoML,54 CellML,55 DATS,56 FAANG,57 ISO/TC 276,58 GO,41 Biotoolsxsd,59 meta.yaml,60 DOAP,61 ontosoft,62 EDAM,63 SWO,64 OBCS,65 STATO,66 SDMX,67 DDI,68 MEX,69 MLSchema,70 CWL,71 WICUS,72 OPM,73 PROV-O,74 CWLProv,75 ProvOne,76 PAV,77 BagIt,78 RO,47 RO-Crate (abstract by Sefton et al., 2019), BCO,79 Dublin Core,80 JATS,81 ONIX,82 MeSH,83 LCSH,84 MP,85 Open PHACTS,86 BEL,87 SWAN,88 SPAR,89 PWO.90 ∗Standards that are featured within this article. Examples of all standards can be found at https://github.com/leipzig/metadata-in-rcr.

Input

Input refers to raw data from wet lab, field, instrumentation, or public repositories; intermediate processed files; and results from manuscripts. Compared with other layers of the analytic stack, input data garner the majority of metadata standards. Descriptive standards (metadata) enable the documentation, discoverability, and interoperability of scientific research and make it possible to execute and repeat experiments. Descriptive metadata, along with provenance metadata, also provides context and history regarding the source, authenticity, and life cycle of the raw data. These basic standards are usually embodied in the scientific output of tables, lists, and trees, which take form in files of innumerable file and database formats as input to reproducible computational analyses, filtering down to visualizations and statistics in published journal articles. Most instrumentation, field measurements, and wet lab protocols can be supported by metadata used for detecting anomalies such as batch effects and sample mix-ups.

Input metadata also serves to characterize gestalt aspects of datasets that may explain failures to replicate, such as a lack of population diversity in genomic studies,91 or those that can quickly inform peer reviewers whether appropriate methods were employed for an analysis.

While metadata are often recorded from firsthand knowledge of the technician performing an experiment or the operator of an instrument, many forms of input metadata are in fact metrics that can be derived from the underlying data. This fact does not undermine the value of "derivable" metadata in terms of its importance for discovery, evaluation, and reproducibility.
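
To make the notion of "derivable" metadata concrete, the following Python sketch computes a checksum, row count, column list, and temporal extent directly from a small table. The data, column names, and the function `derive_metadata` are hypothetical and serve only as an illustration.

```python
import csv
import hashlib
import io

# Hypothetical field observations; the columns are illustrative only.
RAW_CSV = """site,date,temperature_c
A,2019-06-01,14.2
A,2019-06-02,15.1
B,2019-06-01,13.8
"""


def derive_metadata(text: str) -> dict:
    """Compute descriptive metrics from the data themselves."""
    rows = list(csv.DictReader(io.StringIO(text)))
    dates = sorted(row["date"] for row in rows)  # ISO dates sort lexically
    return {
        "sha256": hashlib.sha256(text.encode("utf-8")).hexdigest(),
        "row_count": len(rows),
        "columns": list(rows[0].keys()),
        "temporal_extent": (dates[0], dates[-1]),
        "sites": sorted({row["site"] for row in rows}),
    }


derived = derive_metadata(RAW_CSV)
print(derived)
```

None of these values requires firsthand knowledge of the experiment, yet each supports discovery (columns, extent) or validation (checksum, row count).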

Formal semantic ontologies represent one facet of metadata. The OBO Foundry92 and NCBO BioPortal serve as catalogs of life science ontologies. The usage of these ontologies appears to follow a steep Pareto distribution, with the most popular ontologies generating thousands of citations, whereas the vast majority of NCBO's 883 ontologies have never been cited or mentioned.

Examples

In addition to being the oldest and arguably most visible of reproducibility metadata standards, input metadata standards serve as a watershed for downstream reproducibility. To understand what input means for computational reproducibility, we examine three well-established examples of metadata standards from different scientific fields. Considering that each of these standards reflects the different goals and practical constraints of its field, their longevity merits investigating what characteristics they have in common.

DICOM: An embedded file header. Digital Imaging and Communications in Medicine (DICOM) is a medical imaging standard introduced in 1985.93 DICOM images require extensive technical metadata to support image rendering, and descriptive metadata to support clinical and research needs. These metadata coexist in the DICOM file header, which uses a group/element namespace to distinguish public, restricted standard DICOM tags from private metadata. Extensive standardization of data types, called value representations (VRs) in DICOM, also follows this public/private scheme.94 The public tags, standardized by the National Electrical Manufacturers Association (NEMA), have served the technical needs of both 2- and 3-dimensional images, as well as multiple frames, and multiple associated DICOM files or "series." Conversely, descriptive metadata have suffered from "tag entropy" in the form of missing, incorrectly filled, nonstandard, or misused tags entered manually by technicians.95 This can pose problems both for clinical workflows and for efforts to aggregate imaging data for data mining and machine learning. Advanced annotations supporting image segmentation and quantitative analysis have to conform to data structures imposed by the DICOM header format. This has made it necessary for programs such as 3DSlicer96 and its associated plugins, such as dcmqi,97 to develop solutions such as serializations to accommodate complex or hierarchical metadata.
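
The group/element scheme can be sketched in a few lines of Python. This is a simplified illustration, not a DICOM parser: it assumes the convention from the DICOM standard that odd group numbers denote private elements, and the `Tag` class, the toy header, and its values are hypothetical.

```python
from typing import NamedTuple


class Tag(NamedTuple):
    """A DICOM data element tag: a (group, element) pair of 16-bit numbers."""

    group: int
    element: int

    def is_private(self) -> bool:
        # Odd group numbers mark private (vendor-defined) elements;
        # even group numbers are used by the standard.
        return self.group % 2 == 1

    def label(self) -> str:
        # Conventional (gggg,eeee) hexadecimal notation.
        return f"({self.group:04X},{self.element:04X})"


# Toy header: tag -> (value representation, value). Values are invented.
header = {
    Tag(0x0008, 0x0060): ("CS", "MR"),              # Modality
    Tag(0x0010, 0x0010): ("PN", "DOE^JANE"),        # Patient's Name
    Tag(0x0029, 0x1010): ("LO", "vendor payload"),  # hypothetical private tag
}

standard = {t.label(): v for t, v in header.items() if not t.is_private()}
private = {t.label(): v for t, v in header.items() if t.is_private()}
print("standard:", standard)
print("private:", private)
```

Aggregation efforts of the kind described above typically rely on the standard tags and must treat private tags as opaque unless the vendor documents them.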

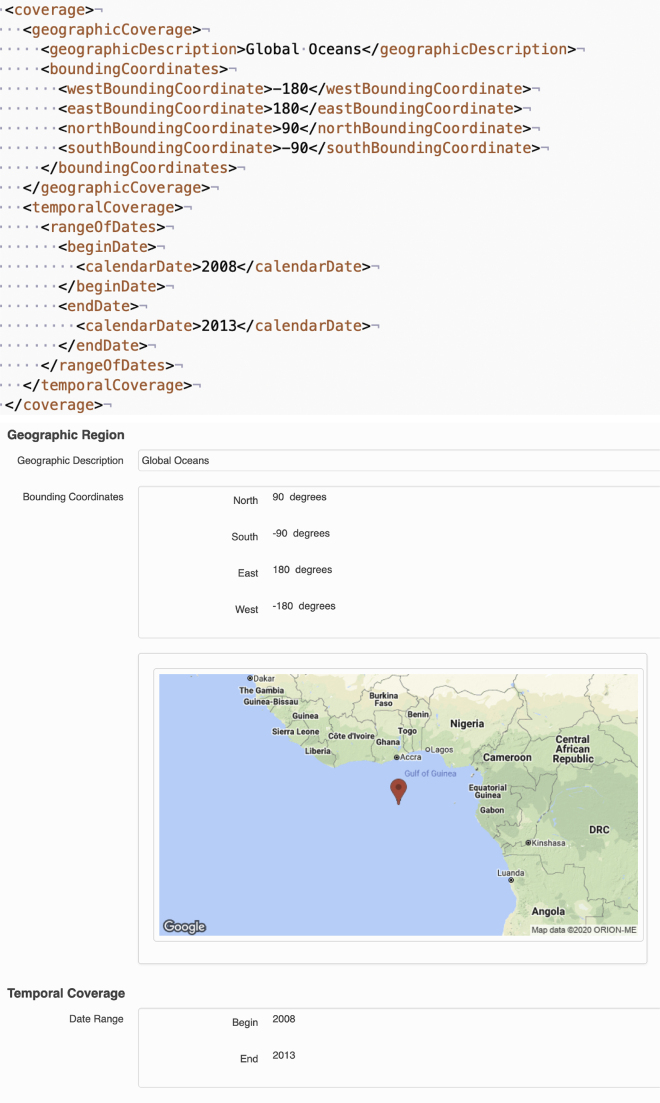

EML: Flexible user-centric data documentation. Ecological Metadata Language (EML) is a common language for sharing ecological data.50 EML was developed in 1997 by the ecology research community and is used for describing data in notable databases, such as the Knowledge Network for Biocomplexity (KNB) repository (https://knb.ecoinformatics.org/) and the Long Term Ecological Research Network (https://lternet.edu/). The standard enables documentation of important information about who collected the research data, when, and how, describing the methodology down to specific details and providing detailed taxonomic information about the scientific specimens being studied (Figure 4).

Figure 4.

Ecological metadata language

Geographic and temporal EML metadata and the associated display on Knowledge Network for Biocomplexity (KNB) from Halpern et al.98
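
Geographic and temporal coverage of this kind can be read programmatically. The sketch below parses a simplified coverage fragment with the Python standard library; the element names follow EML's coverage module, but the fragment omits namespaces and most of a real record, and all values and the function `extract_coverage` are hypothetical.

```python
import xml.etree.ElementTree as ET

# Simplified, hypothetical fragment: namespaces and most of the record are omitted.
EML_FRAGMENT = """
<coverage>
  <geographicCoverage>
    <geographicDescription>Hypothetical coastal study area</geographicDescription>
    <boundingCoordinates>
      <westBoundingCoordinate>-125.0</westBoundingCoordinate>
      <eastBoundingCoordinate>-117.0</eastBoundingCoordinate>
      <northBoundingCoordinate>49.0</northBoundingCoordinate>
      <southBoundingCoordinate>32.0</southBoundingCoordinate>
    </boundingCoordinates>
  </geographicCoverage>
  <temporalCoverage>
    <rangeOfDates>
      <beginDate><calendarDate>2008-01-01</calendarDate></beginDate>
      <endDate><calendarDate>2013-12-31</calendarDate></endDate>
    </rangeOfDates>
  </temporalCoverage>
</coverage>
"""


def extract_coverage(xml_text: str) -> dict:
    """Pull the bounding box and date range out of a coverage element."""
    root = ET.fromstring(xml_text.strip())
    box = root.find("geographicCoverage/boundingCoordinates")
    dates = root.find("temporalCoverage/rangeOfDates")
    return {
        "description": root.findtext("geographicCoverage/geographicDescription"),
        "west": float(box.findtext("westBoundingCoordinate")),
        "east": float(box.findtext("eastBoundingCoordinate")),
        "north": float(box.findtext("northBoundingCoordinate")),
        "south": float(box.findtext("southBoundingCoordinate")),
        "begin": dates.findtext("beginDate/calendarDate"),
        "end": dates.findtext("endDate/calendarDate"),
    }


coverage = extract_coverage(EML_FRAGMENT)
print(coverage)
```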

MIAME: A submission-centric minimal standard. Minimum Information About a Microarray Experiment (MIAME)40 is a set of guidelines developed by the Microarray Gene Expression Data (MGED) Society that has been adopted by many journals to support an independent evaluation of results. Introduced in 2001, MIAME allows public access to crucial metadata supporting gene expression data, i.e., quantitative measures of RNA transcripts, via the Gene Expression Omnibus (GEO) database at the National Center for Biotechnology Information and European Bioinformatics Institute (EBI) ArrayExpress. The standard allows microarray experiments encoded in this format to be reanalyzed, supporting a fundamental goal of computational reproducibility: to support structured and computable experimental features.99

MIAME (Box 1) has been a boon to the practice of meta-analyses and harmonization of microarrays, offering essential array probeset, normalization, and sample metadata that make the over 2 million samples in GEO meaningful and reusable.100 However, it should be noted that among MIAME and other Investigation/Study/Assay (ISA) standards that have followed suit,101 none offer a controlled vocabulary for describing downstream computational workflows aside from slots to name the normalization procedure applied to what are essentially unitless intensity values.
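
Because records such as the one in Box 1 are plain XML, their sample metadata are directly computable. The following Python sketch parses a shortened fragment modeled on Box 1; the fragment has been cut down and closed so that it is well-formed for illustration, the namespace is written as it appears in Box 1, and the function `summarize` is hypothetical.

```python
import xml.etree.ElementTree as ET

# Namespace as written in Box 1.
NS = {"m": "https://www.ncbi.nlm.nih.gov/geo/info/MINiML"}

# Shortened fragment modeled on Box 1, closed here for illustration only.
MINIML_FRAGMENT = """<MINiML xmlns="https://www.ncbi.nlm.nih.gov/geo/info/MINiML" version="0.5.0">
  <Contributor iid="contrib1">
    <Person><First>Jun</First><Last>Shima</Last></Person>
  </Contributor>
  <Contributor iid="contrib2">
    <Person><First>Fumiko</First><Last>Tanaka</Last></Person>
  </Contributor>
  <Platform iid="GPL90">
    <Accession database="GEO">GPL90</Accession>
  </Platform>
  <Sample iid="Sample1">
    <Title>before fermentation</Title>
    <Channel position="1">
      <Source>mRNA T128</Source>
      <Organism>Saccharomyces cerevisiae</Organism>
    </Channel>
  </Sample>
</MINiML>"""


def summarize(xml_text: str) -> dict:
    """Extract contributors, platform accession, and sample descriptors."""
    root = ET.fromstring(xml_text)
    contributors = [
        f"{p.findtext('m:First', namespaces=NS)} {p.findtext('m:Last', namespaces=NS)}"
        for p in root.findall("m:Contributor/m:Person", NS)
    ]
    samples = [
        {
            "id": s.get("iid"),
            "title": s.findtext("m:Title", namespaces=NS).strip(),
            "organism": s.findtext("m:Channel/m:Organism", namespaces=NS),
        }
        for s in root.findall("m:Sample", NS)
    ]
    platform_id = root.findtext("m:Platform/m:Accession", namespaces=NS)
    return {"contributors": contributors, "platform": platform_id, "samples": samples}


summary = summarize(MINIML_FRAGMENT)
print(summary)
```

The summary covers who, what platform, and which organism, but, as noted above, nothing in the record describes the downstream computational workflow in a controlled vocabulary.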

Box 1. An example of MIAME in MINiML format (https://www.ncbi.nlm.nih.gov/geo/info/MINiML_Affy_example.txt).

<?xml version="1.0" encoding="UTF-8" standalone="no"?>
<MINiML
  xmlns="https://www.ncbi.nlm.nih.gov/geo/info/MINiML"
  xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
  xsi:schemaLocation="https://www.ncbi.nlm.nih.gov/geo/info/MINiML https://www.ncbi.nlm.nih.gov/geo/info/MINiML.xsd"
  version="0.5.0" >
  <Contributor iid="contrib1">
    <Person><First>Jun</First><Last>Shima</Last></Person>
  </Contributor>
  <Contributor iid="contrib2">
    <Person><First>Fumiko</First><Last>Tanaka</Last></Person>
  </Contributor>
  <Contributor iid="contrib3">
    <Person><First>Akira</First><Last>Ando</Last></Person>
  </Contributor>
  <Contributor iid="contrib4">
    <Person><First>Toshihide</First><Last>Nakamura</Last></Person>
  </Contributor>
  <Contributor iid="contrib5">
    <Person><First>Hiroshi</First><Last>Takagi</Last></Person>
  </Contributor>
  <Database iid="GEO">
    <Name>Gene Expression Omnibus (GEO)</Name>
    <Public-ID>GEO</Public-ID>
    <Organization>NCBI NLM NIH</Organization>
    <Web-Link>https://www.ncbi.nlm.nih.gov/geo</Web-Link>
    <Email>geo@ncbi.nlm.nih.gov</Email>
  </Database>
  <Platform iid="GPL90">
    <Accession database="GEO">GPL90</Accession>
  </Platform>
  <Sample iid="Sample1">
    <Title>
      before fermentation
    </Title>
    <Channel-Count>1</Channel-Count>
    <Channel position="1">
      <Source>mRNA T128</Source>
      <Organism>Saccharomyces cerevisiae</Organism>
      <Characteristics>

Future directions: Encoding, findability, granularity, dimensionality

Metadata for input are developing along descriptive, administrative, and structural axes. Scientific computing has continuously and selectively adopted technologies and standards developed for the larger technology sector. Perhaps most salient from a development standpoint is the shift from Extensible Markup Language (XML) to the more succinct JavaScript Object Notation (JSON) and Yet Another Markup Language (YAML) as preferred formats, along with requisite validation schema standards.102

The term "semantic web" describes an early vision of the Internet based on machine-readable contextual markup and semantically linked data using Uniform Resource Identifiers (URIs).103 Schema.org, a consortium of e-commerce companies developing tags for markup and discovery, such as those recognized by Google Dataset Search,104 has coalesced a stable set of tags that is expanding into scientific domains, demonstrating the potential for findability. Schema.org can be used to identify and distinguish inputs and outputs of analyses in a disambiguated and machine-readable fashion. DATS,56 a Schema.org-compatible tag suite, describes fundamental metadata for datasets akin to that used for journal articles, especially to enable access to sensitive data. Combined with solutions for securely accessing analysis tools,105,106 DATS can solve an often invoked impediment to reproducibility: that of unshareable data. The Open Research Knowledge Graph (ORKG)107 aims to bring meaningfulness to scholarly documents in the same way as the semantic web does for online documents. ORKG's structured semantic metadata on research contributions could not only improve findability and make scientific knowledge machine readable, but also mitigate reproducibility challenges.
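To make the Schema.org markup described above concrete, the following is a minimal sketch of a dataset description serialized as JSON-LD. The property names (`name`, `description`, `license`, `creator`, `distribution`, `encodingFormat`, `contentUrl`) are Schema.org terms; the dataset itself, its identifier, and the `example.org` URLs are placeholders invented for illustration, and the `missing_properties` helper is a hypothetical check rather than part of any standard.

```python
import json

# Hypothetical dataset record: the title, identifier, and URLs are placeholders.
dataset = {
    "@context": "https://schema.org/",
    "@type": "Dataset",
    "name": "Example yeast fermentation expression profiles",
    "description": "Illustrative record only; not a real deposit.",
    "identifier": "https://example.org/datasets/0001",
    "license": "https://creativecommons.org/licenses/by/4.0/",
    "creator": [{"@type": "Person", "name": "A. Researcher"}],
    "distribution": [
        {
            "@type": "DataDownload",
            "encodingFormat": "text/csv",
            "contentUrl": "https://example.org/datasets/0001/counts.csv",
        }
    ],
}


def missing_properties(record, required=("name", "description", "license")):
    """Return the required properties that are absent or empty in a record."""
    return [key for key in required if not record.get(key)]


# Serialize to JSON-LD text that could be embedded in a dataset landing page.
jsonld = json.dumps(dataset, indent=2)
print(jsonld)
print("missing:", missing_properties(dataset))
```

Which properties to treat as required is a local policy choice in this sketch; an indexing service may expect a different set.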

Of increasing interest to the life sciences is the representation of phenotypic data to accompany various omics studies, as primary variables for genotype-by-environment studies, to control for possible confounds and random effects, and as labels for machine learning efforts toward genotype-to-phenotype prediction. Phenotypic metadata for human studies, ranging from basic demographics (e.g., sex, age) to complex attributes, such as disease, are often crucial to interpreting and reusing omics data. However, a study of 29 transcriptomics-based sepsis studies revealed that 35% of the phenotypic information was lost in public repositories relative to their respective publications.108 Efforts to standardize phenotypic information for plants, such as Minimum Information About a Plant Phenotyping Experiment (MIAPPE), are challenged by a highly heterogeneous landscape of species, data types, and experimental designs.109 This has required the development of the Plant Phenotyping Experiment Ontology (PPEO) data model with elements unique to botany.

Finally, the growing scope for input metadata describing and defining unambiguous lab operations and protocols is important for reproducibility. One example of such an input metadata framework is the Allotrope Data Format, an HDF5 data structure, and accompanying ontology for chemistry protocols used in the pharmaceutical industry.110 Allotrope uses the W3C Shapes Constraint Language (SHACL) to describe which RDF relationships are valid to describe lab operations.

Tools

Tool metadata refers to administrative metadata associated with computing environments, compiled executable software, and source code. In scientific workflows, executable and script-based tools are typically used to transform raw data into intermediates that can be analyzed by statistical packages and visualized as, e.g., plots or maps. Scientific software is written for a variety of platforms and operating systems; although Unix/Linux-based software is especially common, it is by no means a homogeneous landscape. In terms of reproducing and replicating studies, the specification of tools, tool versions, and parameters is paramount. In terms of tests of robustness (same data/different tools) and generalizations (new data/different tools), communicating the function and intent of a tool choice is also important and presents opportunities for metadata. Scientific software is scattered across many repositories in both source and compiled forms. Consistently specifying the location of software using URLs is neither trivial nor sustainable. To this end, a Software Discovery Index was proposed as part of the NIH Big Data to Knowledge (BD2K) initiative.1 Subsequent work in the area cited the need for unique identifiers, supported by journals, and backed by extensive metadata.111

Examples

The landscape of metadata standards in tools is best organized into efforts to describe tools, dependencies, and containers.

CRAN, EDAM, and CodeMeta: Tool description and citation. Source code spans both tools and literate statistical reports, although for convenience we classify code as a subcategory of tools. Metadata standards do not exist for loose code, but packaging manifests with excellent metadata standards exist for several languages, such as R's Comprehensive R Archive Network (CRAN) DESCRIPTION files (Box 2).

Box 2. R description. An R package DESCRIPTION file from DESeq2.112

Package: DESeq2
Type: Package
Title: Differential gene expression analysis based on the negative
    binomial distribution
Version: 1.33.1
Authors@R: c(
    person("Michael", "Love", email="michaelisaiahlove@gmail.com", role =
    c("aut","cre")),
    person("Constantin", "Ahlmann-Eltze", role = c("ctb")),
    person("Kwame", "Forbes", role = c("ctb")),
    person("Simon", "Anders", role = c("aut","ctb")),
    person("Wolfgang", "Huber", role = c("aut","ctb")),
    person("RADIANT EU FP7", role="fnd"),
    person("NIH NHGRI", role="fnd"),
    person("CZI", role="fnd"))
Maintainer: Michael Love <michaelisaiahlove@gmail.com>
Description: Estimate variance-mean dependence in count data from
    high-throughput sequencing assays and test for differential
    expression based on a model using the negative binomial
    distribution.
License: LGPL (>= 3)
VignetteBuilder: knitr, rmarkdown
Imports: BiocGenerics (>= 0.7.5), Biobase, BiocParallel, genefilter,
    methods, stats4, locfit, geneplotter, ggplot2, Rcpp (>= 0.11.0)
Depends: S4Vectors (>= 0.23.18), IRanges, GenomicRanges,
    SummarizedExperiment (>= 1.1.6)
Suggests: testthat, knitr, rmarkdown, vsn, pheatmap, RColorBrewer,
    apeglm, ashr, tximport, tximeta, tximportData, readr, pbapply,
    airway, pasilla (>= 0.2.10), glmGamPoi, BiocManager
LinkingTo: Rcpp, RcppArmadillo
URL: https://github.com/mikelove/DESeq2
biocViews: Sequencing, RNASeq, ChIPSeq, GeneExpression, Transcription,
    Normalization, DifferentialExpression, Bayesian, Regression,
    PrincipalComponent, Clustering, ImmunoOncology
RoxygenNote: 7.1.1
Encoding: UTF-8
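Because a DESCRIPTION file is a simple "Field: value" format with indented continuation lines, the dependency metadata in Box 2 can be read by machine with very little code. The sketch below is an illustration only: it handles just the subset of the format seen in Box 2, the embedded text is an abridged copy of that box, and the function names `parse_dcf` and `parse_dependencies` are hypothetical rather than part of any R or Python package.

```python
import re

# Abridged copy of the DESeq2 DESCRIPTION file shown in Box 2.
DESCRIPTION = """\
Package: DESeq2
Version: 1.33.1
Imports: BiocGenerics (>= 0.7.5), Biobase, BiocParallel, genefilter,
    methods, stats4, locfit, geneplotter, ggplot2, Rcpp (>= 0.11.0)
Depends: S4Vectors (>= 0.23.18), IRanges, GenomicRanges,
    SummarizedExperiment (>= 1.1.6)
"""


def parse_dcf(text):
    """Parse 'Field: value' lines; indented lines continue the previous field."""
    fields, current = {}, None
    for line in text.splitlines():
        if line[:1].isspace() and current:
            fields[current] += " " + line.strip()
        elif ":" in line:
            current, value = line.split(":", 1)
            fields[current] = value.strip()
    return fields


def parse_dependencies(value):
    """Split 'pkg (>= 1.0), other' into (name, constraint-or-None) pairs."""
    deps = []
    for item in value.split(","):
        match = re.match(r"\s*([\w.]+)\s*(?:\((.*?)\))?", item)
        if match:
            deps.append((match.group(1), match.group(2)))
    return deps


fields = parse_dcf(DESCRIPTION)
imports = parse_dependencies(fields["Imports"])
depends = parse_dependencies(fields["Depends"])
for name, constraint in imports + depends:
    print(name, constraint or "(any version)")
```

Note that most entries carry no version constraint at all, which illustrates why a manifest alone does not pin an environment.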

Recent developments in tools metadata have focused on tool description, citation, dependency management, and containerization. The last two advances, exemplified by the Conda and Docker projects (described below), have largely made computational reproducibility possible, at least in the narrow sense of being able to reliably version and install software and related dependencies on other people's machines. Small changes in software and reference data can often have substantial effects on an analysis.113 Docker makes it tenable to capture the computing environment, and Conda makes it tenable to pin software versions; together they produce portable and stable environments for reproducible computational research.

The EMBRACE Data And Methods (EDAM) ontology provides high-level descriptions of tools, processes, and biological file formats.63 It has been used extensively in tool recommenders,114 tool registries,115 and within pipeline frameworks and workflow languages.116,117 In the context of workflows, certain tool combinations tend to be chained in predictable usage patterns driven by application; these patterns can be mined for tool recommender software used in workbenches.118

CodeMeta119 prescribes JSON-LD (JSON for Linked Data) standards for code metadata markup. While CodeMeta is not itself an ontology, it leverages Schema.org ontologies to provide language-agnostic means of describing software as well as "crosswalks" to translate manifests from various software repositories, registries, and archives into CodeMeta (Box 3).

Box 3. CodeMeta. A snippet of a CodeMeta JSON file from Price et al.120 using Schema.org contextual tags.

{
  "@context": [
    "https://doi.org/10.5063/schema/codemeta-2.0"
  ],
  "@type": "SoftwareSourceCode",
  "identifier": "baydem",
  "description": "Bayesian tools for reconstructing past and present\ndemography.",
  "name": "baydem: Bayesian Tools for Reconstructing Past and Present Demography",
  "license": "https://spdx.org/licenses/MIT",
  "version": "0.1.0",
  "programmingLanguage": {
    "@type": "ComputerLanguage",
    "name": "R",
    "url": "https://r-project.org"
  },
  "runtimePlatform": "R version 4.0.2 (2020-06-22)",
  "author": [
    {
      "@type": "Person",
      "givenName": ["Michael", "Holton"],
      "familyName": "Price",
      "email": "michaelholtonprice@sgmail.com"
    }
  ]
}
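The idea of a crosswalk, translating a language-specific manifest into CodeMeta terms, can be sketched as a simple field mapping. The pairings in `CROSSWALK` below are assumptions chosen for illustration and are not copied from the crosswalk tables maintained by the CodeMeta project, which should be consulted for the authoritative mapping; `to_codemeta` is a hypothetical helper name.

```python
import json

# Illustrative mapping only: assumed pairings between R DESCRIPTION fields
# and CodeMeta/Schema.org properties, not the official crosswalk table.
CROSSWALK = {
    "Package": "identifier",
    "Title": "name",
    "Description": "description",
    "Version": "version",
    "License": "license",
    "URL": "codeRepository",
}


def to_codemeta(description_fields):
    """Translate a dict of DESCRIPTION fields into a CodeMeta-style record."""
    record = {
        "@context": "https://doi.org/10.5063/schema/codemeta-2.0",
        "@type": "SoftwareSourceCode",
    }
    for source_key, codemeta_key in CROSSWALK.items():
        if source_key in description_fields:
            record[codemeta_key] = description_fields[source_key]
    return record


# Field values taken from the DESeq2 DESCRIPTION file in Box 2.
fields = {
    "Package": "DESeq2",
    "Version": "1.33.1",
    "License": "LGPL (>= 3)",
    "URL": "https://github.com/mikelove/DESeq2",
    "RoxygenNote": "7.1.1",  # no counterpart in the mapping, so it is dropped
}
codemeta = to_codemeta(fields)
print(json.dumps(codemeta, indent=2))
```

Fields without a counterpart are silently dropped in this sketch; a real crosswalk would need a policy for such losses.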

Considerable strides have been made in improving software citation standards,121 which should improve the provenance of methods sections that cite tools that do not already have accompanying manuscripts. Code attribution is implicitly fostered by large-scale data mining of code repositories, such as GitHub, for the generation of dependency networks,122 measures of impact,123 and reproducibility censuses.124

Dependency and package management metadata. Compiled software often depends on libraries that are shared by many programs on an operating system. Conflicts between versions of these libraries, and software that demands obscure or outdated versions of these libraries, are a common source of frustration for users who install scientific software and a major hurdle to distributing reproducible code. Until recently, installation woes and "dependency hell" were considered a primary stumbling block to reproducible research.125 Software written in high-level languages such as Python and R has traditionally relied on language-specific package management systems and repositories, e.g., pip and PyPI for Python, and the install.packages() function and CRAN for R. The complexity yet unavoidability of controlling dependencies led to competing and evolving tools, such as pip, Pipenv, and Poetry in the Python community, and even different conceptual approaches, such as the CRAN time machine. In recent years, a growing number of scientific software projects have used combinations of Python and compiled software. The Conda project (https://conda.io) was developed to provide a universal solution for compiled executables and script dependencies written in any language. The elegance of providing a single requirements file has contributed to Conda's rapid adoption for domain-specific library collections such as Bioconda,126 which are maintained in "channels" that users can subscribe to and prioritize.
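The value of pinning can be illustrated with a small check that compares a list of pinned requirements against what is actually installed. This is a sketch under stated assumptions: it understands only the `name==version` form used in pip-style requirements files, the package versions are invented, and `parse_pins` and `find_drift` are hypothetical helper names. The installed versions are passed in as a plain dictionary so the example runs anywhere; in practice they could be looked up with `importlib.metadata.version`.

```python
def parse_pins(lines):
    """Parse 'name==version' lines, skipping blank lines and comments."""
    pins = {}
    for raw in lines:
        line = raw.split("#", 1)[0].strip()
        if not line:
            continue
        name, sep, version = line.partition("==")
        if not sep:
            raise ValueError(f"unpinned requirement: {line!r}")
        pins[name.strip().lower()] = version.strip()
    return pins


def find_drift(pins, installed):
    """Return {name: (pinned, found)} for each mismatched or missing package."""
    return {
        name: (version, installed.get(name))
        for name, version in pins.items()
        if installed.get(name) != version
    }


# Hypothetical requirements file and environment, for illustration only.
REQUIREMENTS = ["numpy==1.19.2", "# analysis stack", "", "pandas==1.1.3  # pinned"]
INSTALLED = {"numpy": "1.19.2", "pandas": "1.2.0"}

pins = parse_pins(REQUIREMENTS)
drift = find_drift(pins, INSTALLED)
print(drift)
```

Rejecting unpinned entries outright is a deliberately strict design choice here; a looser policy would record them as unverifiable instead.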

Fledgling standards for containers. For software that requires a particular environment and dependencies that may conflict with an existing setup, a lightweight containerization layer provides a means of isolating processes from the underlying operating system, basically providing each program with its own miniature operating system. The ENCODE project127 provided a virtual machine for a reproducible analysis that produced many figures featured in the article and serves as one of the earliest examples of an embedded virtual environment. While originally designed for deploying and testing e-commerce web applications, the Docker containerization system has become useful for scientific environments where dependencies and permissions become unruly. Several papers have demonstrated the usefulness of Docker for reproducible workflows125,128 and as a central unit of tool distribution.129,130

Conda programs can be trivially Dockerized, and every Bioconda package gets a corresponding BioContainer131 image built for Docker and Singularity, a similar container solution designed for research environments. Because Dockerfiles are similar to shell scripts, Docker metadata are an underutilized resource and one that may need to be further leveraged for reproducibility. Docker does allow for arbitrary custom key-value metadata (labels) to be embedded in containers (Box 4). The Open Container Initiative's Image Format Specification (https://github.com/opencontainers/image-spec/) specifies pre-defined keys, e.g., for authorship, links, and licenses. In practice, the now deprecated Label Schema (http://label-schema.org/rc1/) labels are still pervasive, and users may add arbitrary labels with prepended namespaces. It should be noted that containerization is not a panacea: Dockerfiles can introduce irreproducibility and decay if the contained software is not sufficiently pinned (e.g., by using so-called lockfiles) and not installed from sources that will remain available in the future.

Box 4. Excerpt from a Dockerfile: LABEL instruction with image metadata. Source: https://github.com/nuest/ten-simple-rules-dockerfiles/blob/master/examples/text-analysis-wordclouds_R-Binder/Dockerfile.

LABEL maintainer="daniel.nuest@uni-muenster.de" \
  Name="Reproducible research at GIScience - computing environment" \
  org.opencontainers.image.created="2020-04" \
  org.opencontainers.image.authors="Daniel Nüst" \
  org.opencontainers.image.url="https://github.com/nuest/reproducible-research-at-giscience/blob/master/Dockerfile" \
  org.opencontainers.image.documentation="https://github.com/nuest/reproducible-research-at-giscience/" \
  org.opencontainers.image.licenses="Apache-2.0" \
  org.label-schema.description="Reproducible workflow image (license: Apache 2.0)"
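Labels such as those in Box 4 are only useful if they can be read back out. The following sketch parses a single LABEL instruction and groups its keys by namespace, which makes the mix of Open Container Initiative keys, deprecated Label Schema keys, and free-form keys visible. It is an assumption-laden illustration: it handles only the quoted `key="value"` form with backslash line continuations shown in Box 4 (abridged here), and `parse_labels` and `group_by_namespace` are hypothetical helpers, not Docker tooling.

```python
import shlex

# Abridged copy of the LABEL instruction from Box 4.
LABEL_INSTRUCTION = r'''
LABEL maintainer="daniel.nuest@uni-muenster.de" \
  org.opencontainers.image.created="2020-04" \
  org.opencontainers.image.licenses="Apache-2.0" \
  org.label-schema.description="Reproducible workflow image (license: Apache 2.0)"
'''


def parse_labels(instruction):
    """Turn one LABEL instruction into a dict of key-value pairs."""
    # Join backslash-continued lines into a single logical line.
    joined = instruction.replace("\\\n", " ").strip()
    keyword, _, rest = joined.partition(" ")
    if keyword.upper() != "LABEL":
        raise ValueError("expected a LABEL instruction")
    # shlex removes the quotes and keeps quoted values with spaces intact.
    return dict(token.split("=", 1) for token in shlex.split(rest))


def group_by_namespace(labels):
    """Sort labels into OCI, Label Schema, and other keys."""
    groups = {"oci": {}, "label-schema": {}, "other": {}}
    for key, value in labels.items():
        if key.startswith("org.opencontainers.image."):
            groups["oci"][key] = value
        elif key.startswith("org.label-schema."):
            groups["label-schema"][key] = value
        else:
            groups["other"][key] = value
    return groups


labels = parse_labels(LABEL_INSTRUCTION)
groups = group_by_namespace(labels)
for namespace, entries in groups.items():
    print(namespace, sorted(entries))
```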

Future directions

Automated repository metadata. Source code repositories such as GitHub and Bitbucket are designed for collaborative development, version control, and distribution and as such do not enforce any reproducible research standards that would be useful for evaluating scientific code submissions. As a corresponding example to the NLP above, there are now efforts to mine source code repositories for discovery and reuse.132

Data as a dependency. “Data libraries,” which pair data sources with common programmatic methods for querying them, are very popular in centralized open source repositories such as Bioconductor133 and scikit-learn,134 despite often being large downloads. Tierney and Ram provide a best practices guide to the organization and necessary metadata for data libraries and independent datasets.135 Ideally, users and data providers should be able to distribute data recipes in a decentralized fashion, for instance, by broadcasting data libraries in user channels. Most raw data are available in a limited number of formats, but ideally, data should be distributed in packages bound to a variety of tested formatters. One solution, Gogetdata,136 is a project that can be used to specify versioned data prerequisites to coexist with software within the Conda requirements specification file. A private company called Quilt is developing similar data-as-a-dependency solutions bound to a cloud computing model. A similar effort, Frictionless Data, focuses on JSON-encoded schemas for tabular data and data packages featuring a manifest to describe constitutive elements. From a Docker-centric perspective, the Open Container Initiative137 is working to standardize "filesystem bundles": the collection of files in a container and their metadata. In particular, container metadata are critical for relating the contents of a container to its source code and version, its relationship with other containers, and how to use the container. Ongoing research on container preservation138,139 can introduce new structural metadata on container usage so that archived container images do not become just a binary bitstream but instead remain understandable and even actionable.
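A manifest-plus-schema approach in the spirit of Frictionless Data can be sketched as follows. The descriptor uses key names (`resources`, `path`, `schema`, `fields`, `type`) and field types (`string`, `integer`, `number`) that follow the Frictionless conventions, but the package name, file name, and data are invented, and `read_resource` is a hypothetical stand-in for a real validating reader rather than the Frictionless library's API.

```python
import csv
import io

# Hypothetical data package descriptor for a single tabular resource.
descriptor = {
    "name": "example-expression-data",
    "resources": [
        {
            "name": "counts",
            "path": "counts.csv",
            "schema": {
                "fields": [
                    {"name": "gene", "type": "string"},
                    {"name": "count", "type": "integer"},
                ]
            },
        }
    ],
}

# Assumed mapping from declared field types to Python casts.
CASTS = {"string": str, "integer": int, "number": float}


def read_resource(csv_text, schema):
    """Read CSV text, check the header, and cast columns to declared types."""
    fields = schema["fields"]
    expected = [field["name"] for field in fields]
    reader = csv.DictReader(io.StringIO(csv_text))
    if reader.fieldnames != expected:
        raise ValueError(f"header {reader.fieldnames} does not match {expected}")
    return [
        {f["name"]: CASTS[f["type"]](row[f["name"]]) for f in fields}
        for row in reader
    ]


schema = descriptor["resources"][0]["schema"]
rows = read_resource("gene,count\nYAL001C,12\nYAL002W,7\n", schema)
print(rows)
```

A value that cannot be cast, such as a non-numeric string in the `count` column, raises a `ValueError`, so schema violations surface at load time rather than deep inside an analysis.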

Neither Conda nor Docker is explicitly designed to describe software with fixed metadata standards or controlled vocabularies. This suggests that a centralized database should serve as a primary metadata repository for tool information, rather than a source code repository, package manager, or container store. An example of such a database is the GA4GH Dockstore,140 a hub and associated website that allows for a standardized means of describing and invoking Dockerized tools as well as sharing workflows based on them.

Statistical reports and notebooks

Statistical reports and notebooks serve as an annotated session of an analysis. Though they typically use input data that have been processed by scripts and workflows (see below), they can be characterized as a step in the workflow rather than apart from it, and for some smaller analyses, all processing can be done within these notebooks. Statistical reports and notebooks enjoy an elevated reputation as exemplars of reproducible best practices, but they are not a reproducibility panacea and can introduce additional challenges, one reason being that the metadata supporting them are surprisingly sparse.

Statistical reports that use “literate programming,” combining statistical code with descriptive text, markup, and visualizations, have been a standard for statistical communication since the advent of Sweave.141 Sweave allowed R and LaTeX markup to be mixed in chunks, so that the contextual descriptions adjacent to statistical code serve as guideposts for anyone reading a Sweave report, typically rendered as PDF. An evolution of Sweave, knitr,142 extended the choices of both markup (allowing Markdown) and output (HTML) while enabling tighter integration with integrated development environments such as RStudio.143 A related project that started in the Python ecosystem but now supports several kernels, Jupyter,144 combined the concept of literate programming with a REPL (read-eval-print loop) in a web-based interactive session in which each block of code is kept stateful and can be reevaluated. These live documents are known as "notebooks." Notebooks allow users to analyze data programmatically using common scripting languages and to access more advanced data science environments such as Spark, without requiring data downloads or local tool installation if run on cloud infrastructure. Using preloaded libraries, cloud-based notebooks can alleviate time-consuming permissions recertification, downloading of data, and dependency resolution, while still allowing persistent analysis sessions. Dataset-specific Jupyter notebooks temporarily "spawned" for thousands of individuals have been enabled as companions for Nature articles145 and are commonly used in education. Cloud-based notebooks have not yet been extensively used in data portals, but they represent the analytical keystone to the decade-long goal of "bringing the tools to the data." Compared with siloed installations, notebooks offer ways to eliminate the data science bottlenecks common to data analyses: cloud-based analytic stacks, cookbooks, and shared notebooks.

Collaborative notebook sharing has been used to accelerate the analysis cycle by allowing users to leverage existing code. The predictive analytics platform Kaggle employs an open implementation of this strategy to host data exploration events. This approach is especially useful for sharing data cleaning tasks (removing missing values, correcting miscategorizations, and standardizing phenotypes), which can represent 80% of the effort in an analysis.146 Sharing capabilities in existing open-source notebook platforms are at a nascent stage, but this presents possibilities for reproducible research environments to flourish. One promising project in this area is Binder, which allows users to instantiate live Jupyter notebooks and associated Dockerfiles stored on GitHub within a Kubernetes-backed service.147,148

At face value, reports and notebooks resemble source code or scripts, but as the vast majority of statistical analysis and machine learning education and research is conducted in notebooks, they represent an important area for reproducibility.

Examples

R Markdown headers. As we mentioned, statistical reports and notebooks do not typically leverage structured metadata for reproducibility. R Markdown–based reports, such as those processed by knitr, do have a YAML-based header or front matter (Box 5). These are used for a wide variety of technical parameters for controlling display options, for providing structured metadata on authors, e.g., when used for scientific publications with the rticles package,149 or for parameterizing the included workflow (https://rmarkdown.rstudio.com/developer_parameterized_reports.html). However, no schema or standards exist for their validation beyond syntax, and different tools freely extend them for their own needs.

Box 5. A YAML-based R Markdown header for demonstration purposes. Full document shared in this paper's repository at https://github.com/leipzig/metadata-in-rcr/.

---
title: "A title for the analysis"
# author metadata, esp. used for scientific articles
author:
  - name: Jeremy Leipzig
    footnote: Corresponding author
    affiliation: "Metadata Research Center, Drexel University, College of Computing and Informatics,"
    orcid: "0000-0001-7224-9620"
  - name: Daniel Nüst
    affiliation: "Institute for Geoinformatics, University of Münster, Germany"
    orcid: "0000-0002-0024-5046"
    email: daniel.nuest@uni-muenster.de
# parameters to manipulate workflow; defaults can be changed when compiling the document
params:
  year: 2020
  region: "Europe"
  printcode: TRUE
  data: file.csv
  max_n: 42
# configuration and styling of different output document formats
output:
  html_document:
    theme: lumen
    toc: true
    toc_float:
      collapsed: false
    code_folding: show
    self_contained: true
  pdf_document:
    toc: yes
    fig_caption: yes
    df_print: kable
linkcolor: blue
# field values can be generated from code
date: "`r format(Sys.time(), '%d %B, %Y')`"
---

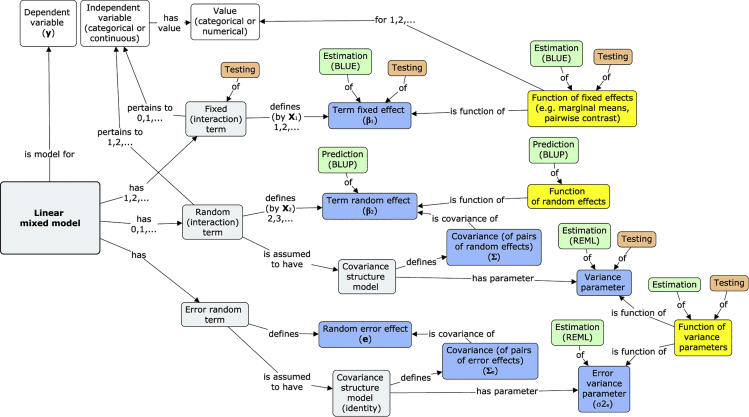

Statistical and machine learning metadata standards. The intense interest paired with the competitive nature of machine learning and deep learning conferences such as NeurIPS demands high reproducibility standards.150 Given the predominance of notebooks for disseminating machine learning workflows, we focused our attention on finding statistical and machine learning metadata standards that would apply to content found within notebooks. The opacity, rapid proliferation, and multifaceted nature of machine learning and data mining methods, especially as they appear to nonexperts, suggest that it is necessary to begin cataloging and describing them at a more refined level than crude categories (e.g., clustering, classification, regression, dimension reduction, feature selection). So far, the closest attempt to decompose statistics in this manner is the STATO statistical ontology (http://stato-ontology.org/), which can be used to semantically, rather than programmatically or mathematically, define all aspects of a statistical model and its results, including assumptions, variables, covariates, and parameters (Figure 5). While STATO is currently focused on univariate statistics, it represents one possible conception for enabling broader reproducibility than simply relying on specific programmatic implementations of statistical routines.

Figure 5. STATO. Concepts describing a linear mixed model used by STATO.151

MEX is designed as a vocabulary to describe the components of machine learning workflows. The MEX vocabulary builds on PROV-O to describe specific machine learning concepts such as hyperparameters and performance measures and includes a decorator class to work with Python.

Future directions: Parameter tracking

MLflow152 is designed specifically to handle hyperparameter tracking for machine learning iterations or "runs" performed in Apache Spark, but it also tracks arbitrary artifacts and metrics associated with these runs. The metadata format that MLflow uses exposes variables that are explored and tuned by end-users (Box 6).

Box 6. MLflow snippet showing exposed hyperparameters.

name: HyperparameterSearch
conda_env: conda.yaml
entry_points:
  # train Keras DL model
  train:
    parameters:
      training_data: {type: string, default: "./datasets/wine-quality.csv"}
      epochs: {type: int, default: 32}
      batch_size: {type: int, default: 16}
      learning_rate: {type: float, default: 1e-1}
      momentum: {type: float, default: .0}
      seed: {type: int, default: 97531}
    command: "python train.py {training_data}
      --batch-size {batch_size}
      --epochs {epochs}
      --learning-rate {learning_rate}
      --momentum {momentum}"
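The way such a declaration exposes hyperparameters can be illustrated by resolving typed defaults against user overrides and substituting them into the command template. This is a sketch of the general idea, not MLflow's implementation or API: the parameter table mirrors Box 6 (omitting `seed`, which the command template there does not use), while `resolve`, `CASTS`, and the override values are hypothetical.

```python
# Parameter declarations mirroring the entry point in Box 6.
PARAMETERS = {
    "training_data": {"type": "string", "default": "./datasets/wine-quality.csv"},
    "epochs": {"type": "int", "default": 32},
    "batch_size": {"type": "int", "default": 16},
    "learning_rate": {"type": "float", "default": 1e-1},
    "momentum": {"type": "float", "default": 0.0},
}

COMMAND = (
    "python train.py {training_data} --batch-size {batch_size} "
    "--epochs {epochs} --learning-rate {learning_rate} --momentum {momentum}"
)

# Assumed mapping from declared types to Python casts.
CASTS = {"string": str, "int": int, "float": float}


def resolve(parameters, overrides):
    """Merge overrides into declared defaults and cast to the declared types."""
    unknown = set(overrides) - set(parameters)
    if unknown:
        raise KeyError(f"undeclared parameters: {sorted(unknown)}")
    resolved = {}
    for name, spec in parameters.items():
        value = overrides.get(name, spec["default"])
        resolved[name] = CASTS[spec["type"]](value)
    return resolved


# One hypothetical run: two hyperparameters are changed, the rest use defaults.
run = resolve(PARAMETERS, {"epochs": "64", "learning_rate": 0.01})
command = COMMAND.format(**run)
print(run)      # the resolved values are the metadata worth recording per run
print(command)
```

Recording the fully resolved dictionary, rather than only the overrides, keeps each run interpretable even if the defaults in the project file later change.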

Pipelines

Most scientific analyses are conducted in the form of pipelines, in which a series of transformations is performed on raw data, followed by statistical tests and report generation. Pipelines are also referred to as "workflows," a term that sometimes also encompasses steps outside an automated computational process. Pipelines represent the computation component of many papers, in both basic research and tool papers. Pipeline frameworks or scientific workflow management systems (SWfMS) are platforms that enable the creation and deployment of reproducible pipelines in a variety of computational settings including cluster and cloud parallelization. The use of pipeline frameworks, as opposed to standalone scripts, has recently gained traction, largely due to the same factors (big data, big science) driving the interest in reproducible research. Although frameworks are not inherently more reproducible than shell scripts or other scripted ad hoc solutions, their use tends to encourage parameterization and configuration that promote reproducibility and metadata. Pipeline frameworks are also attractive for scientific workflows in that they provide tools for reentrancy (restarting a workflow where it left off) and implicit dependency resolution (allowing the framework engine to automatically chain together a series of transformation tasks, or "rules," to produce a user-supplied file target). Collecting and analyzing provenance, which refers to the record of all activities that go into producing a data object, is a key challenge for the design of pipelines and pipeline frameworks.
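Reentrancy and implicit dependency resolution can be sketched in a few lines. In the toy example below, each rule names the inputs needed to produce a target, and the planner walks backward from the requested target, skipping anything that already exists. The rule table, file names, and the `plan` function are all hypothetical; real frameworks additionally compare timestamps or checksums, detect cycles, and execute the tasks, none of which is attempted here.

```python
# Hypothetical rules: target -> (required inputs, description of the task).
RULES = {
    "aligned.bam": (["reads.fastq", "genome.fa"], "align reads"),
    "variants.vcf": (["aligned.bam", "genome.fa"], "call variants"),
    "report.html": (["variants.vcf"], "render report"),
}


def plan(target, rules, existing, order=None):
    """Return the targets that must be built, dependencies first."""
    order = [] if order is None else order
    if target in existing or target in order:
        return order  # already on disk or already scheduled
    if target not in rules:
        raise FileNotFoundError(f"no rule produces {target!r}")
    inputs, _description = rules[target]
    for item in inputs:
        plan(item, rules, existing, order)
    order.append(target)
    return order


# Fresh run: only the raw inputs exist, so every rule is scheduled.
fresh = plan("report.html", RULES, existing={"reads.fastq", "genome.fa"})
print(fresh)

# Restarted run: the alignment survived an earlier failure and is not redone.
resumed = plan(
    "report.html", RULES, existing={"reads.fastq", "genome.fa", "aligned.bam"}
)
print(resumed)
```

The user asks only for `report.html`; the chain of intermediate tasks is inferred from the rules, which is the sense in which the dependency resolution is implicit.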

The number and variety of pipeline frameworks have increased dramatically in recent years, and each framework is built with design philosophies that offer varying levels of convenience, user-friendliness, and performance. There are also tradeoffs in the dynamicity of a framework, i.e., its ability to behave flexibly based on input (e.g., skip certain tasks, re-use results from a cache). Such dynamic behavior affects the apparent reproducibility and the run-level metadata required to give an analyst confidence in inferring how a pipeline behaved in a particular situation. Leipzig153 reviewed and categorized these frameworks into three key dimensions: using an implicit or explicit syntax; using a configuration, convention, or class-based design paradigm; and offering a command line or workbench interface.

“Convention-based frameworks” are typically implemented in a domain-specific language, a meaningful symbol set to represent rule input, output, and parameters that augments existing scripting languages to provide the glue to create workflows. These can often mix shell-executable commands with internal script logic in a flexible manner. “Class-based pipeline frameworks” augment programming languages to offer fine-granularity means of efficient distribution of data for high-performance cluster computing frameworks such as Apache Spark. “Configuration-based frameworks” abstract pipelines into configuration files, typically XML or JSON, which contain little or no code. Workbenches such as Galaxy,154 Kepler,155 KNIME,156 Taverna,157 and commercial workbenches, such as Seven Bridges Genomics and DNAnexus, typically offer canvas-like graphical user interfaces by which tasks can be connected and always rely on configuration-based tool and workflow descriptors. Customized workbenches configured with a selection of pre-loaded tools and workflows and paired with a community web portal are often termed "science gateways."

Examples

CWL: A configuration-based framework for interoperability. The Common Workflow Language (CWL)71 is a specification for tools and workflows that can be shared across several pipeline frameworks and has been adopted by several workbenches. CWL manages the exacting specification of file inputs, outputs, and parameters, which are "operational metadata" used by the workflow machinery to communicate with the shell and executable software (Figure 6). While these metadata are primarily operational in nature and rarely accessed outside the context of a compatible runner such as Rabix158 or Toil,159 CWL also enables tool metadata in the form of versioning, citation, and vendor-specific fields that may differ between implementations.

Figure 6. Common Workflow Language. Snippets of a COVID-19 variant detection CWL workflow and the workflow as viewed through the cwl-viewer.160 Note the EDAM file definitions.

An important aspect of CWL is its use of these metadata to richly describe tool invocations for both reproducibility and documentation purposes, with tools referenced as retrievable Docker images or Conda packages and with identifiers pointing to EDAM,63 ELIXIR's bio.tools59 registry, and Research Resource Identifiers (RRIDs).161 This wrapping of command line tool interfaces is used by GA4GH Dockstore140 to provide a uniform executable interface to a large variety of computational tools even outside workflows.
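A tool description of this kind can be sketched as a plain data structure; because CWL documents are YAML and JSON is a subset of YAML, the JSON serialization below has the general shape of a CWL `CommandLineTool`. This is an illustrative assumption-laden example rather than a validated CWL document: the Docker image name is a placeholder, the wrapped command and glob pattern are chosen only for illustration, and `expand_formats` is a hypothetical helper that resolves prefixed EDAM identifiers to full IRIs.

```python
import json

# Sketch of a CWL-style tool description; the container image is a placeholder.
tool = {
    "cwlVersion": "v1.2",
    "class": "CommandLineTool",
    "$namespaces": {"edam": "http://edamontology.org/"},
    "baseCommand": ["fastqc"],
    "hints": {
        "DockerRequirement": {"dockerPull": "example.org/hypothetical/fastqc:0.0.0"}
    },
    "inputs": {
        "reads": {
            "type": "File",
            "format": "edam:format_1930",  # EDAM identifier for FASTQ
            "inputBinding": {"position": 1},
        }
    },
    "outputs": {
        "report": {"type": "File", "outputBinding": {"glob": "*_fastqc.html"}}
    },
}


def expand_formats(document):
    """Resolve prefixed input formats to full IRIs using $namespaces."""
    namespaces = document.get("$namespaces", {})
    expanded = {}
    for name, spec in document["inputs"].items():
        fmt = spec.get("format")
        if fmt and ":" in fmt:
            prefix, local = fmt.split(":", 1)
            expanded[name] = namespaces.get(prefix, prefix + ":") + local
    return expanded


serialized = json.dumps(tool, indent=2)
formats = expand_formats(tool)
print(serialized)
print(formats)
```

The point of the example is that the same document serves two audiences: a runner uses the bindings to build the command line, while a registry or search index can use the container reference and format IRIs as descriptive metadata.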

While there are many other configuration-based workflow languages, CWL is notable for the number of parsers that support its creation and interpretation, and an advanced linked data validation language, called Schema Salad. Together with supporting projects, such as Research Objects, the CWL appears amenable to being used as metadata.

Future directions

Interoperable script and workflow provenance. For future metadata to support pipeline reproducibility, it must accommodate a huge menagerie of solutions that coexist inside a number of computing environments. Large organizations have been encouraging the use of cloud-based data commons, but solutions that target the majority of published scientific analysis must address the fact that many if not most of them will not use a data commons or even a pipeline framework. Because truly reproducible research implies evaluation by third parties, portability is an ongoing concern.

Pimentel et al. reviewed and categorized 27 approaches to collecting provenance from scripts.162 A wide variety of relational databases and proprietary file formats are used to store, distribute, visualize, version, and query provenance from these tools. The authors found that while four approaches—RDataTracker,163 SPADE,164 StarFlow,165 and YesWorkflow166—natively adopt interoperable W3C PROV or OPM standards as export, most were designed for internal usage and did not enable sharing or comparisons of provenance. In part, these limitations are related to primary goals and scope of these provenance tracking tools.

For analyses that use workflows, a prerequisite for reproducible research is the ability to reliably share "workflow enactments," or runs that encompass all elements of the analytic stack. Unlike pipeline frameworks geared toward cloud-enabled scalability, compatibility with executable command-line arguments, and programmatic extensibility afforded by DSLs, VisTrails was designed explicitly to foster provenance tracking and querying, both prospective and retrospective.167 As part of the WINGS project, Garijo et al.168 use linked-data standards (OWL, PROV, and RDF) to create a framework-agnostic Open Provenance Model for Workflows (OPMW) that offers greater semantic possibilities for user needs in workflow discovery and publishing. The CWLProv75 project implements a CWL-centric and RO-based solution with the goal of defining a format for retrospective provenance.
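Retrospective provenance for a single workflow step can be sketched as a small record of what was used, what was generated, and when. The structure below is loosely modeled on the PROV-JSON serialization of W3C PROV (top-level `entity`, `activity`, `used`, and `wasGeneratedBy` sections), but it is not a complete or validated PROV document; the `ex:checksum` attribute, the identifiers, and the `record_run` helper are hypothetical, and file contents are passed as bytes so the example runs without touching disk.

```python
import hashlib
import json
from datetime import datetime, timezone


def checksum(data: bytes) -> str:
    """Content hash used to identify an exact version of a file."""
    return "sha256:" + hashlib.sha256(data).hexdigest()


def record_run(activity_id, inputs, outputs, started, ended):
    """Build a PROV-style record for one step; inputs/outputs map name -> bytes."""
    doc = {"entity": {}, "activity": {}, "used": {}, "wasGeneratedBy": {}}
    doc["activity"][activity_id] = {
        "prov:startTime": started.isoformat(),
        "prov:endTime": ended.isoformat(),
    }
    for name, data in {**inputs, **outputs}.items():
        doc["entity"][name] = {"ex:checksum": checksum(data)}
    for i, name in enumerate(inputs):
        doc["used"][f"_:u{i}"] = {"prov:activity": activity_id, "prov:entity": name}
    for i, name in enumerate(outputs):
        doc["wasGeneratedBy"][f"_:g{i}"] = {
            "prov:entity": name,
            "prov:activity": activity_id,
        }
    return doc


# Hypothetical step with in-memory stand-ins for the real files.
started = datetime(2020, 4, 1, 12, 0, tzinfo=timezone.utc)
ended = datetime(2020, 4, 1, 12, 5, tzinfo=timezone.utc)
doc = record_run(
    "ex:count-reads",
    inputs={"reads.fastq": b"@r1\nACGT\n+\n!!!!\n"},
    outputs={"counts.csv": b"gene,count\nYAL001C,12\n"},
    started=started,
    ended=ended,
)
print(json.dumps(doc, indent=2))
```

Because the record is plain JSON keyed by shared vocabulary terms, it can be exchanged or compared across tools, which is the interoperability that tool-internal provenance stores lack.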

Packaging and binding building blocks. While we have attempted to classify metadata across layers of the analytic stack, there are a number of efforts to explicitly tie or bind together all the metadata that define a research compendium. A research compendium (RC) is a container for the building blocks of a scientific workflow. Originally defined by Gentleman and Temple Lang as a means for distributing and managing documents, data, and computations using a programming language's packaging mechanism, the term is now used in different communities to provide code, data, and documentation (including scientific manuscripts) in a meaningful and usable way (https://research-compendium.science/). A best-practice compendium includes environment configuration files (see above), has files that are under version control, and uses accessible plain text formats. Instead of a formal workflow specification, inputs, outputs, control files, and the required commands are documented for human users in a README file. While an RC can take many forms, this flexibility is also a challenge for extracting metadata. The Executable Research Compendium (ERC) formalizes the RC concept with an R Markdown notebook for the workflow and a Docker container for the runtime environment.169 A YAML configuration file connects these parts (Box 7), configures the document to be displayed to a human user, and provides minimal metadata on licenses. The concept of bindings connects interactive parts of an ERC workflow with the underlying code and data.170

Box 7. erc.yml example file. See the specification at https://o2r.info/erc-spec/.

id: b9b0099e-9f8d-4a33-8acf-cb0c062efaec
spec_version: 1
licenses:
  code: Apache-2.0
  data: data-licenses.txt
  text: "Creative Commons Attribution 2.0 Generic (CC BY 2.0)"
  metadata: "see metadata license headers"

Instead of trying to establish a common standard and single point for metadata, the ERC intentionally skips formal metadata and exports the known information into multiple output files and formats, such as Zenodo metadata as JSON or DataCite as XML, accepting duplication for the chance to provide usable information in the long term.

Perhaps the most prominent realization of the RC concept is Research Objects171 and the subsequent RO-Crate (Carragáin, E.Ó., et al., 2019, BOSC, abstract) projects (Box 8), which strive to be comprehensive solutions for binding code, data, workflows, and publications into a metadata-defined package. RO-Crate is a lightweight JSON-LD (JavaScript Object Notation for Linked Data) format that uses Schema.org concepts to identify and describe all constituent files from the analytic stack, together with people, publication, and licensing metadata, as well as provenance both between workflows and files and across crate versions.

Box 8. RO-Crate metadata.

{ "@context": "https://w3id.org/ro/crate/1.0/context",
  "@graph": [
    {
      "@type": "CreativeWork",
      "@id": "ro-crate-metadata.jsonld",
      "conformsTo": {"@id": "https://w3id.org/ro/crate/1.0"},
      "about": {"@id": "./"}
    },
    {
      "@id": "./",
      "identifier": "https://doi.org/10.4225/59/59672c09f4a4b",
      "@type": "Dataset",
      "datePublished": "2020",
      "name": "Data files associated with the manuscript: The Role of Metadata in Reproducible Computational Research",
      "description": "Palliative care planning for nursing home residents with advanced dementia …",
      "license": {"@id": "https://creativecommons.org/licenses/by-nc-sa/3.0/au/"},
      "hasPart": [
        {
          "@id": "src/"
        },
        {
          "@id": "metadata_examples/"
        }
      ]
    },
    {
      "@id": "https://creativecommons.org/licenses/by-nc-sa/3.0/au/",
      "@type": "CreativeWork",
      "description": "Creative Commons Attribution 4.0 International Public License (Public License)",
      "identifier": "https://creativecommons.org/licenses/by-nc-sa/3.0/au/",
      "name": "Attribution-NonCommercial-ShareAlike 3.0 Australia (CC BY-NC-SA 3.0 AU)"
    }
  ]
}
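
As a rough illustration of how a consumer might use this structure, the sketch below loads an abbreviated RO-Crate-style `@graph`, finds the root dataset through the metadata descriptor's `about` link, and resolves the `hasPart` and `license` references by `@id`. It uses only the Python standard library. The abbreviated crate (written so that the license reference and the license entity share one `@id`) and the helper names `index_graph` and `root_dataset` are illustrative assumptions, not part of any RO-Crate tooling.

```python
import json

# Abbreviated crate modelled on Box 8; only the fields used below are kept.
CRATE = json.loads("""
{ "@context": "https://w3id.org/ro/crate/1.0/context",
  "@graph": [
    {"@type": "CreativeWork", "@id": "ro-crate-metadata.jsonld",
     "about": {"@id": "./"}},
    {"@id": "./", "@type": "Dataset",
     "license": {"@id": "https://creativecommons.org/licenses/by-nc-sa/3.0/au/"},
     "hasPart": [{"@id": "src/"}, {"@id": "metadata_examples/"}]},
    {"@id": "https://creativecommons.org/licenses/by-nc-sa/3.0/au/",
     "@type": "CreativeWork",
     "name": "Attribution-NonCommercial-ShareAlike 3.0 Australia (CC BY-NC-SA 3.0 AU)"}
  ]
}
""")


def index_graph(crate):
    """Map each entity's @id to the entity itself for quick lookup."""
    return {entity["@id"]: entity for entity in crate["@graph"]}


def root_dataset(crate, descriptor_id="ro-crate-metadata.jsonld"):
    """Follow the metadata descriptor's 'about' link to the root dataset."""
    by_id = index_graph(crate)
    return by_id[by_id[descriptor_id]["about"]["@id"]]


by_id = index_graph(CRATE)
root = root_dataset(CRATE)
parts = [part["@id"] for part in root.get("hasPart", [])]
# A reference resolves only if some entity in the graph carries the same @id.
license_entity = by_id.get(root["license"]["@id"])

print(parts)
print(license_entity["name"] if license_entity else "license entity not found")
```

The lookup by `@id` is why identifiers must match exactly: a reference whose target entity has a different `@id` silently dangles rather than raising an error.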

An alternative approach to binding is to leverage existing work in "application profiles,"172 a highly customizable means of combining namespaces from different metadata schemas. Application profiles follow the Singapore Framework (Figure 7) and guidelines supported by the Dublin Core Metadata Initiative (DCMI).

Figure 7. Singapore Framework application profile model

Publication

Our conception of the analytic stack points to the manuscript as the final product of an analysis. Due to the requirements of cataloging, publishing, attribution, and bibliographic management, journals employ a robust set of standards including MARC21 and ISO 2709 for citations, and Journal Article Tag Suite (JATS) for manuscripts. Library science has been an early adopter of many metadata standards and encoding formats (e.g., XML) later used throughout the analytic stack. Supplementing and extending these standards to accommodate reproducible analyses connected to or even embedded in publications is an open area for development.

For the purposes of reproducibility, we are most interested in finding publication metadata standards that attempt to support structured results as a "first-class citizen," essentially input metadata but for integration into the manuscript.

The methods section of a peer-reviewed article is the oldest and often the sole source of metadata related to an analysis. However, methods sections and other free-text supplementals are notoriously poor and unreliable examples of reproducible computational research, as evidenced by the Amgen findings and numerous reproduction studies. A number of text mining efforts have sought to extract details of the software used in analyses directly from methods sections for purposes of survey173,174 and recommendation175 using natural language processing (NLP). The ProvCaRe database and web application extend this to both computational and clinical findings by using a wide-ranging corpus of provenance terms and extending the existing PROV-O ontology.176 While these efforts are noble, they can never entirely bridge the gap between human-readable protocols and machine-readable metadata schemes.

Journals share an important responsibility to enforce and incentivize reproducible research,177 but most peer-reviewed publications have been derelict in this role. While many have raised standards for open data access, "open analysis" is still an alien concept to many journals, and code execution during peer review is a rare, understudied practice.178 Some journals, such as Nature Methods, do require authors to submit source code.179 Among the most prestigious life science journals (Nature, Science, Cell), the requirements vary considerably, and it is not clear how these guidelines are actually enforced.180 Few journals have clear reproduction policies.181 The CODECHECK initiative aims to establish a minimum workflow for independent code execution during peer review to be adopted by publishers.181 A YAML configuration file (https://codecheck.org.uk/spec/config/1.0/) in each code repository provides metadata for a check. The metadata connect the publication's and the reproduction certificate's DOIs, provide authorship information, list the output files that were reproduced in a manifest, and are published in a register listing all checks (https://codecheck.org.uk/register/).
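
A manifest of reproduced outputs lends itself to a simple automated check. The sketch below is a hedged illustration only: it assumes the configuration has already been parsed into a Python dictionary, and the key names (`paper`, `reference`, `manifest`, `file`), the placeholder DOI, and the helper `missing_outputs` are assumptions made for this example rather than a statement of the CODECHECK specification, which should be consulted for the actual fields.

```python
import tempfile
from pathlib import Path

# Hypothetical, already-parsed check configuration (all names are assumptions).
config = {
    "paper": {
        "title": "Example paper",
        "reference": "https://doi.org/10.0000/example",  # placeholder DOI
    },
    "manifest": [
        {"file": "figure1.png"},
        {"file": "table1.csv"},
    ],
}


def missing_outputs(config, repo_dir):
    """Return the manifest files that are absent from the repository."""
    repo = Path(repo_dir)
    return [
        item["file"]
        for item in config.get("manifest", [])
        if not (repo / item["file"]).is_file()
    ]


# Simulate a repository in which only one of the two outputs was reproduced.
with tempfile.TemporaryDirectory() as repo_dir:
    (Path(repo_dir) / "figure1.png").write_bytes(b"")
    missing = missing_outputs(config, repo_dir)

print(missing)
```

A check of this kind confirms only that the listed outputs exist after execution; judging whether they match the published figures and tables remains the task of the human checker.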

Container portals, package repositories, and workbenches do provide some additional inherent structure that would be useful for journals to require, but these often lack any binding with notebooks or elegant routes to report generation that would guarantee the scientific code matches the results contained within a manuscript. We should not underestimate the technical challenges of building and maintaining these advances. Computational provenance between all figures and tables in a manuscript and the underlying analysis is an open area of research that we discuss below.

Examples

Formalization of the results of biological discovery. In the scientific literature, authors must not only outline the formulation of their experiments, their execution, and their results, but also provide an interpretation of the results with respect to an overarching scientific goal. Due to the lack of specificity of prose and the needless jargon endemic to modern scientific discourse, both the goals and the interpretation of results are often obfuscated such that the reader must exert considerable effort to understand them. This burden is further exacerbated by the accelerating growth of the body of scientific literature. As a result, it has become overwhelming, if not impossible, for researchers to follow the relevant literature in their respective fields, even with the assistance of search tools like PubMed and Google.

The solution lies in the formalization of the interpretation presented in the scientific literature. In molecular biology, several formalisms (e.g., BEL,87 SBML,182 SBGN,183 BioPAX,184 GO-CAM185) have the facility to describe the interactions between biological entities that are often elucidated through laboratory or clinical experimentation. Further, there are several organizations186, 187, 188, 189, 190 whose purpose is to curate and formalize the scientific literature in these formats and distribute the results in one of several databases and repositories. Because curation is both difficult and time-consuming, several semi-automated NLP191,192 curation workflows based on relation extraction systems193, 194, 195 and assemblers196 have been proposed to assist.

The Biological Expression Language (BEL) captures causal, correlative, and associative relationships between biological entities along with the experimental/biological context in which they were observed as well as the provenance of the publication from which the relation was reported (https://biological-expression-languge.github.io). It uses a text-based custom domain-specific language (DSL) to enable biologists and curators alike to express the interpretations present in biomedical texts in a simple but structured form, as opposed to a complicated formalism built with low-level formats like XML, JSON, and RDF or mid-level formats like OWL and OBO. Like OWL and OBO, BEL relies on structured identifiers and controlled vocabularies in its statements to support the integration of multiple content sources in downstream applications. We focus on BEL because of its unique ability to represent findings across biological scales, including the genomic, transcriptomic, proteomic, pathway, phenotype, and organism levels.

Below is a representation of a portion of the MAPK signaling pathway in BEL (Box 9), which describes the process through which a series of kinases are phosphorylated, become active, and phosphorylate the next kinase in the pathway. It uses the FamPlex (fplx)197 namespace to describe the RAF, MEK, and ERK protein families.

Box 9. MAPK signaling pathway in Biological Expression Language.

act(p(fplx:RAF), ma(kin)) directlyIncreases p(fplx:MEK, pmod(Ph))
p(fplx:MEK, pmod(Ph)) directlyIncreases act(p(fplx:MEK), ma(kin))
act(p(fplx:MEK), ma(kin)) directlyIncreases p(fplx:ERK, pmod(Ph))
p(fplx:ERK, pmod(Ph)) directlyIncreases act(p(fplx:ERK))

While the additional provenance, context, and metadata associated with each statement have not been shown, this example demonstrates that several disparate information sources can be assembled in a graph-like structure due to the triple-like nature of BEL statements.
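
The graph-like assembly described here can be sketched in a few lines. The example below treats each statement as a (subject, relation, object) triple, builds an adjacency list, and walks the cascade from RAF activity downstream. It is a simplified illustration and not a BEL parser: it splits on the single relation `directlyIncreases` by plain string matching, the final statement is written with balanced parentheses, and the helper names `to_triple`, `build_graph`, and `downstream` are hypothetical.

```python
from collections import defaultdict

STATEMENTS = """
act(p(fplx:RAF), ma(kin)) directlyIncreases p(fplx:MEK, pmod(Ph))
p(fplx:MEK, pmod(Ph)) directlyIncreases act(p(fplx:MEK), ma(kin))
act(p(fplx:MEK), ma(kin)) directlyIncreases p(fplx:ERK, pmod(Ph))
p(fplx:ERK, pmod(Ph)) directlyIncreases act(p(fplx:ERK))
""".strip().splitlines()


def to_triple(statement, relation="directlyIncreases"):
    """Split one statement into (subject, relation, object) by string match."""
    subject, obj = statement.split(f" {relation} ")
    return subject.strip(), relation, obj.strip()


def build_graph(statements):
    """Assemble triples into an adjacency list keyed by subject term."""
    graph = defaultdict(list)
    for statement in statements:
        subject, relation, obj = to_triple(statement)
        graph[subject].append((relation, obj))
    return graph


def downstream(graph, start):
    """Return the terms reachable from start, in depth-first discovery order."""
    seen, stack, order = set(), [start], []
    while stack:
        node = stack.pop()
        if node in seen:
            continue
        seen.add(node)
        order.append(node)
        stack.extend(obj for _, obj in graph.get(node, []))
    return order


graph = build_graph(STATEMENTS)
path = downstream(graph, "act(p(fplx:RAF), ma(kin))")
print(" -> ".join(path))
```

Because the object of one statement is textually identical to the subject of the next, statements curated from different publications join into one connected structure without any additional mapping step, provided they use the same namespaces and identifiers.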

While BEL was designed to express the interpretation presented in the literature, related formats are more focused on mechanistically describing the underlying processes on either a qualitative (e.g., BioPAX, SBGN) or quantitative (e.g., SBML) basis. Ultimately, each of these formalisms has supported a new generation of analytical techniques that have begun to replace classical pathway analysis.

Future directions: Reproducible articles

Attempts have been made to integrate reproducible analyses into manuscripts. An article in eLife198 was published with an inline live R Markdown Binder analysis as part of a proof-of-concept of the publisher's Executable Research Article (ERA) (Aufreiter and Penfold, 2018, IEEE eScience, abstract).199, 200 Because of the technical metadata used for rendering and display, subtle changes are required to integrate containerized analyses with JATS, and the requirements for hosting workflows outside the narrow context of Binder will require further engineering and metadata standards.

Discussion

The wide range of metadata standards developed to aid researchers in their daily activities also supports them in sharing research outputs (data, code, publications, and other component parts of the research life cycle). If we promote metadata as the "glue" of reproducible research, what does that entail for the metadata and reproducible research communities? Clearly, no single metadata standard can support all aspects of the analytic stack. Metadata standards are driven by needs associated with function, discipline, and object format type; hence the categorization of descriptive, administrative, and structural metadata, as well as standards targeting a domain (e.g., biology) or object format (images, GIS materials).201 The fact that metadata standards continue to undergo formal community reviews demonstrates their value. Finally, as research communities and activities converge around the analytic stack and seek to automate pipelines supporting scientific services such as analytic cores, metadata not only has continuing value for reproducibility but is shown to be critical to this endeavor.

In our review, we have attempted to describe metadata as it addresses reproducibility across the analytic stack. Two principal components, (1) embeddedness versus connectedness and (2) methodology weight and standardization, appear to be recurring themes across all metadata facets.

Embeddedness versus connectedness