Abstract

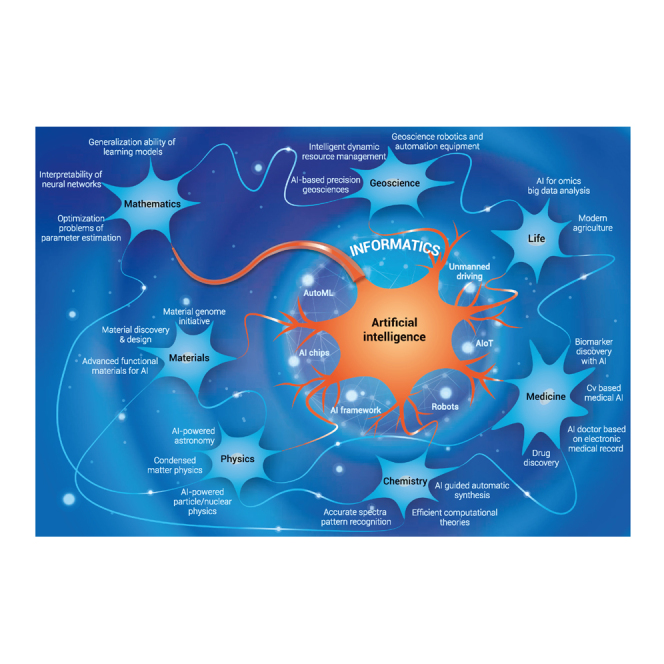

Artificial intelligence (AI) coupled with promising machine learning (ML) techniques well known from computer science is broadly affecting many aspects of various fields including science and technology, industry, and even our day-to-day life. The ML techniques have been developed to analyze high-throughput data with a view to obtaining useful insights, categorizing, predicting, and making evidence-based decisions in novel ways, which will promote the growth of novel applications and fuel the sustainable booming of AI. This paper undertakes a comprehensive survey on the development and application of AI in different aspects of fundamental sciences, including information science, mathematics, medical science, materials science, geoscience, life science, physics, and chemistry. The challenges that each discipline of science meets, and the potentials of AI techniques to handle these challenges, are discussed in detail. Moreover, we shed light on new research trends entailing the integration of AI into each scientific discipline. The aim of this paper is to provide a broad research guideline on fundamental sciences with potential infusion of AI, to help motivate researchers to deeply understand the state-of-the-art applications of AI-based fundamental sciences, and thereby to help promote the continuous development of these fundamental sciences.

Keywords: artificial intelligence, machine learning, deep learning, information science, mathematics, medical science, materials science, geoscience, life science, physics, chemistry

Graphical abstract

Public summary

• “Can machines think?” The goal of artificial intelligence (AI) is to enable machines to mimic human thoughts and behaviors, including learning, reasoning, predicting, and so on.

• “Can AI do fundamental research?” AI coupled with machine learning techniques is impacting a wide range of fundamental sciences, including mathematics, medical science, physics, etc.

• “How does AI accelerate fundamental research?” New research and applications are emerging rapidly with the support of AI infrastructure, including data storage, computing power, AI algorithms, and frameworks.

Introduction

“Can machines think?” Alan Turing posed this question in his famous paper “Computing Machinery and Intelligence.”1 He believed that to answer this question, we need to define what thinking is. However, it is difficult to define thinking clearly, because thinking is a subjective behavior. Turing then introduced an indirect method to verify whether a machine can think, the Turing test, which examines a machine's ability to show intelligence indistinguishable from that of human beings. A machine that succeeds in the test is qualified to be labeled as artificial intelligence (AI).

AI refers to the simulation of human intelligence by a system or a machine. The goal of AI is to develop a machine that can think like humans and mimic human behaviors, including perceiving, reasoning, learning, planning, predicting, and so on. Intelligence is one of the main characteristics that distinguishes human beings from animals. With successive industrial revolutions, an increasing variety of machines has continuously replaced human labor in all walks of life, and the imminent replacement of human resources by machine intelligence is the next big challenge to be overcome. Numerous scientists are focusing on the field of AI, which makes research in the field rich and diverse. AI research fields include search algorithms, knowledge graphs, natural language processing, expert systems, evolutionary algorithms, machine learning (ML), deep learning (DL), and so on.

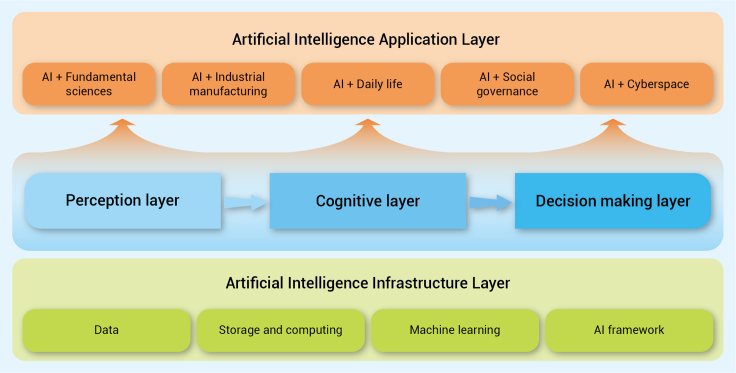

The general framework of AI is illustrated in Figure 1. The development process of AI includes perceptual intelligence, cognitive intelligence, and decision-making intelligence. Perceptual intelligence means that a machine has the basic abilities of vision, hearing, touch, etc., which are familiar to humans. Cognitive intelligence is a higher-level ability of induction, reasoning, and acquisition of knowledge. It is inspired by cognitive science, brain science, and brain-like intelligence to endow machines with thinking logic and cognitive ability similar to those of human beings. Once a machine has the abilities of perception and cognition, it is often expected to make optimal decisions as human beings do, to improve the lives of people, industrial manufacturing, etc. Decision-making intelligence requires the use of applied data science, social science, decision theory, and managerial science to expand data science, so as to make optimal decisions. To achieve the goals of perceptual intelligence, cognitive intelligence, and decision-making intelligence, the infrastructure layer of AI, supported by data, storage and computing power, ML algorithms, and AI frameworks, is required. By training models, the system is then able to learn the internal laws of data for supporting and realizing AI applications. The application layer of AI is becoming more and more extensive, and is deeply integrated with fundamental sciences, industrial manufacturing, human life, social governance, and cyberspace, which has a profound impact on our work and lifestyle.

Figure 1. The general framework of AI

History of AI

The beginning of modern AI research can be traced back to John McCarthy, who coined the term “artificial intelligence (AI)” at a conference at Dartmouth College in 1956. This symbolized the birth of the AI scientific field. Progress in the following years was astonishing. Many scientists and researchers focused on automated reasoning and applied AI to prove mathematical theorems and solve algebraic problems. One of the famous examples is Logic Theorist, a computer program written by Allen Newell, Herbert A. Simon, and Cliff Shaw, which proved 38 of the first 52 theorems in “Principia Mathematica” and provided more elegant proofs for some.2 These successes made many AI pioneers wildly optimistic, and underpinned the belief that fully intelligent machines would be built in the near future. However, they soon realized that there was still a long way to go before the end goal of human-equivalent intelligence in machines could come true. Many nontrivial problems could not be handled by the logic-based programs. Another challenge was the lack of computational resources to compute more and more complicated problems. As a result, organizations and funders stopped supporting these under-delivering AI projects.

AI came back to popularity in the 1980s, as several research institutions and universities invented a type of AI system that summarizes a series of basic rules from expert knowledge to help non-experts make specific decisions. These systems are called “expert systems.” Examples are XCON, designed by Carnegie Mellon University, and MYCIN, designed by Stanford University. The expert system derived logic rules from expert knowledge to solve problems in the real world for the first time. The core of AI research during this period was the knowledge that made machines “smarter.” However, the expert system gradually revealed several disadvantages, such as privacy technologies, lack of flexibility, poor versatility, expensive maintenance cost, and so on. At the same time, the Fifth Generation Computer Project, heavily funded by the Japanese government, failed to meet most of its original goals. Once again, the funding for AI research ceased, and AI reached the second low point of its life.

In 2006, Geoffrey Hinton and coworkers3,4 made a breakthrough in AI by proposing an approach for building deeper neural networks, as well as a way to avoid gradient vanishing during training. This reignited AI research, and DL algorithms have become one of the most active fields of AI research. DL is a subset of ML based on multiple layers of neural networks with representation learning,5 while ML is a part of AI that a computer or a program can use to learn and acquire intelligence without human intervention. Thus, “learn” is the keyword of this era of AI research. Big data technologies and the improvement of computing power have made deriving features and information from massive data samples more efficient. An increasing number of new neural network structures and training methods have been proposed to improve the representation learning ability of DL, and to further expand it into general applications. Current DL algorithms match and exceed human capabilities on specific datasets in the areas of computer vision (CV) and natural language processing (NLP). AI technologies have achieved remarkable successes in all walks of life, and continue to show their value as backbones in scientific research and real-world applications.

Within AI, ML is having a substantial and broad effect across many aspects of technology and science: from computer science to geoscience to materials science, from life science to medical science to chemistry to mathematics and to physics, from management science to economics to psychology, and other data-intensive empirical sciences, as ML methods have been developed to analyze high-throughput data to obtain useful insights, categorize, predict, and make evidence-based decisions in novel ways. Training a system by presenting it with examples of desired input-output behavior can be far easier than programming it manually by anticipating the desired response for all potential inputs. The following sections survey eight fundamental sciences, including information science (informatics), mathematics, medical science, materials science, geoscience, life science, physics, and chemistry, which develop or exploit AI techniques to promote the development of sciences and accelerate their applications to benefit human beings, society, and the world.

AI in information science

AI aims to provide the abilities of perception, cognition, and decision-making for machines. At present, new research and applications in information science are emerging at an unprecedented rate, which is inseparable from the support of the AI infrastructure. As shown in Figure 2, the AI infrastructure layer includes data, storage and computing power, ML algorithms, and the AI framework. The perception layer enables machines to have the basic abilities of vision, hearing, etc. For instance, CV enables machines to “see” and identify objects, while speech recognition and synthesis help machines to “hear” and recognize speech elements. The cognitive layer provides higher-level abilities of induction, reasoning, and acquiring knowledge with the help of NLP,6 knowledge graphs,7 and continual learning.8 In the decision-making layer, AI is capable of making optimal decisions, for example through automatic planning, expert systems, and decision-supporting systems. Numerous applications of AI have had a profound impact on fundamental sciences, industrial manufacturing, human life, social governance, and cyberspace. The following subsections provide an overview of the AI framework, automatic machine learning (AutoML) technology, and several state-of-the-art AI/ML applications in the information field.

Figure 2. The knowledge graph of the AI framework

The AI framework provides basic tools for AI algorithm implementation

In the past 10 years, applications based on AI algorithms have played a significant role in various fields and subjects, on the basis of which the prosperity of DL frameworks and platforms has been founded. AI frameworks and platforms lower the barrier to using AI technology by integrating the overall process of algorithm development, which enables researchers from different areas to apply it in their own fields and to focus on designing the structure of neural networks, thus providing better solutions to problems in their fields. At the beginning of the 21st century, only a few tools, such as MATLAB, OpenNN, and Torch, were capable of describing and developing neural networks. However, these tools were not originally designed for AI models, and thus faced problems such as complicated user APIs and a lack of GPU support. During this period, using these frameworks demanded professional computer science knowledge and tedious work on model construction. As a solution, early DL frameworks, such as Caffe, Chainer, and Theano, emerged, allowing users to conveniently construct complex deep neural networks (DNNs), such as convolutional neural networks (CNNs), recurrent neural networks (RNNs), and long short-term memory (LSTM) networks, and this significantly reduced the cost of applying AI models. Tech giants then joined the march in researching AI frameworks.9 Google developed the famous open-source framework, TensorFlow, while Facebook's AI research team released another popular platform, PyTorch, which is based on Torch; Microsoft Research published CNTK, and Amazon announced MXNet. Among them, TensorFlow, the most representative framework, followed Theano's declarative programming style, offering a larger space for graph-based optimization, while PyTorch inherited the imperative programming style of Torch, which is intuitive, user friendly, more flexible, and easier to trace. 
As modern AI frameworks and platforms are being widely applied, practitioners can now assemble models swiftly and conveniently by adopting various building block sets and languages specifically suitable for given fields. Polished over time, these platforms gradually developed a clearly defined user API, the ability for multi-GPU training and distributed training, as well as a variety of model zoos and tool kits for specific tasks.10 Looking forward, there are a few trends that may become the mainstream of next-generation framework development. (1) Capability of super-scale model training. With the emergence of models derived from Transformer, such as BERT and GPT-3, the ability to train large models has become a desirable feature of DL frameworks. It requires AI frameworks to train effectively at the scale of hundreds or even thousands of devices. (2) Unified API standard. The APIs of many frameworks are generally similar but slightly different at certain points. This leads to some difficulties and unnecessary learning effort when the user attempts to shift from one framework to another. The API of some frameworks, such as JAX, has already become compatible with the NumPy standard, which is familiar to most practitioners. Therefore, a unified API standard for AI frameworks may gradually come into being in the future. (3) Universal operator optimization. At present, kernels of DL operators are implemented either manually or based on third-party libraries. Most third-party libraries are developed to suit certain hardware platforms, causing large unnecessary costs when models are trained or deployed on different hardware platforms. The development speed of new DL algorithms is usually much faster than the update rate of libraries, which often puts new algorithms beyond the range of the libraries' support.11
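The difference between the declarative (graph-based) and imperative programming styles mentioned above can be illustrated with a toy sketch in plain Python. The names `Node`, `placeholder`, `run`, and `linear` are hypothetical and invented for this illustration; they are not the API of TensorFlow, Theano, Torch, or PyTorch, and real frameworks are far more elaborate.

```python
# Toy illustration only: `Node`, `placeholder`, `run`, and `linear` are
# hypothetical names, not part of any real framework.

class Node:
    """A node in a deferred computation graph (declarative style)."""

    def __init__(self, op, inputs=(), name=None):
        self.op = op          # "input", "add", or "mul"
        self.inputs = inputs  # upstream nodes
        self.name = name      # only used by input nodes

    def __add__(self, other):
        return Node("add", (self, other))

    def __mul__(self, other):
        return Node("mul", (self, other))


def placeholder(name):
    """Declare a symbolic input whose value is supplied later."""
    return Node("input", name=name)


def run(node, feed):
    """Evaluate the graph only once concrete values are fed in."""
    if node.op == "input":
        return feed[node.name]
    left, right = (run(n, feed) for n in node.inputs)
    return left + right if node.op == "add" else left * right


# Declarative style: build the whole graph first, execute it afterwards.
x, w, b = placeholder("x"), placeholder("w"), placeholder("b")
graph = x * w + b  # nothing is computed yet; `graph` is a data structure
declarative_result = run(graph, {"x": 3.0, "w": 2.0, "b": 1.0})


# Imperative style: every statement runs immediately on concrete values.
def linear(x, w, b):
    y = x * w  # the intermediate value exists right away and can be inspected
    return y + b


imperative_result = linear(3.0, 2.0, 1.0)
```

Because the declarative version holds the full graph before execution, a framework could in principle rewrite or fuse nodes before running them, whereas the imperative version is easier to step through and debug line by line.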

To improve the execution speed of AI algorithms, much research focuses on how to use hardware for acceleration. The DianNao family is one of the earliest research innovations on AI hardware accelerators.12 It includes DianNao, DaDianNao, ShiDianNao, and PuDianNao, which can be used to accelerate the inference speed of neural networks and other ML algorithms. Of these, a 64-chip DaDianNao system can achieve, at best, a speedup of 450.65× over a GPU and reduce energy consumption by 150.31×. Prof. Chen and his team in the Institute of Computing Technology also designed an Instruction Set Architecture for a broad range of neural network accelerators, called Cambricon, which developed into a series of DL accelerators. After Cambricon, many AI-related companies, such as Apple, Google, HUAWEI, etc., developed their own DL accelerators, and AI accelerators became an important research field of AI.

AI for AI—AutoML

AutoML aims to study how to use evolutionary computing, reinforcement learning (RL), and other AI algorithms to automatically generate specified AI algorithms. Research on the automatic generation of neural networks existed before the emergence of DL, e.g., neural evolution.13 The main purpose of neural evolution is to allow neural networks to evolve according to the principle of survival of the fittest in the biological world. Through selection, crossover, mutation, and other evolutionary operators, the individual quality in a population is continuously improved and, finally, the individual with the greatest fitness represents the best neural network. The biological inspiration in this field lies in the evolutionary process of human brain neurons. The highly developed learning and memory functions of the human brain depend on the complex neural network system in the brain. The whole neural network system of the human brain benefits from a long evolutionary process rather than gradient descent and back propagation. In the era of DL, the application of AI algorithms to automatically generate DNNs has attracted more attention and has gradually developed into an important direction of AutoML research: neural architecture search. The implementation methods of neural architecture search are usually divided into the RL-based method and the evolutionary algorithm-based method. In the RL-based method, an RNN is used as a controller to generate a neural network structure layer by layer; the network is then trained, and its accuracy on the validation set is used as the reward signal of the RNN to calculate the policy gradient. 
During the iteration, the controller assigns a higher probability to neural networks with higher accuracy, so as to ensure that the policy function can output the optimal network structure.14 The method of neural architecture search through evolution is similar to the neural evolution method, which is based on a population and iterates continuously according to the principle of survival of the fittest, so as to obtain a high-quality neural network.15 Through the application of neural architecture search technology, the design of neural networks has become more efficient and automated, and the accuracy of the resulting networks has gradually come to exceed that of networks designed by AI experts. For example, Google's state-of-the-art network EfficientNet was built on a baseline network obtained through neural architecture search.16
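The evolutionary loop described above (selection, crossover, mutation, survival of the fittest) can be sketched as follows. This is a minimal illustration under strong assumptions: an "architecture" is encoded as a list of hidden-layer widths, and `fitness` is a cheap stand-in for training a network and measuring its validation accuracy. The names `TARGET`, `CHOICES`, `fitness`, `crossover`, `mutate`, and `evolve` are hypothetical and are not taken from the cited works.

```python
import random

# Hypothetical setup: an "architecture" is a list of hidden-layer widths, and
# `fitness` stands in for "train the network and measure validation accuracy".
# A real search would train and evaluate each candidate instead.
TARGET = [64, 128, 64]
CHOICES = [16, 32, 64, 128, 256]


def fitness(arch):
    """Toy objective: higher is better, and 0 is the best possible score."""
    return -sum(abs(a - t) for a, t in zip(arch, TARGET))


def crossover(p1, p2, rng):
    """Single-point crossover of two parent encodings."""
    cut = rng.randrange(1, len(p1))
    return p1[:cut] + p2[cut:]


def mutate(arch, rng, rate=0.2):
    """Resample each layer width with probability `rate`."""
    return [rng.choice(CHOICES) if rng.random() < rate else a for a in arch]


def evolve(pop_size=20, generations=30, seed=0):
    rng = random.Random(seed)
    population = [[rng.choice(CHOICES) for _ in TARGET] for _ in range(pop_size)]
    history = []  # best fitness seen at the start of each generation
    for _ in range(generations):
        population.sort(key=fitness, reverse=True)
        history.append(fitness(population[0]))
        parents = population[: pop_size // 2]  # truncation selection
        children = []
        while len(parents) + len(children) < pop_size:
            p1, p2 = rng.sample(parents, 2)
            children.append(mutate(crossover(p1, p2, rng), rng))
        population = parents + children  # parents survive unchanged
    return max(population, key=fitness), history


best_arch, best_history = evolve()
```

Because the fitter half of each generation is carried over unchanged, the best fitness recorded in `best_history` never decreases. In a real neural architecture search, the dominant cost is the evaluation of each candidate, which this sketch deliberately replaces with a trivial function.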

AI enabling networking design adaptive to complex network conditions

The application of DL in the networking field has received strong interest. Network design often relies on initial network conditions and/or theoretical assumptions to characterize real network environments. However, traditional network modeling and design, regulated by mathematical models, are unlikely to cope with complex scenarios involving many imperfect and highly dynamic network environments. Integrating DL into network research allows for a better representation of complex network environments. Furthermore, DL can be combined with the Markov decision process and evolve into the deep reinforcement learning (DRL) model, which finds an optimal policy based on the reward function and the states of the system. Taken together, these techniques can be used to make better decisions to guide proper network design, thereby improving the network quality of service and quality of experience. Across the different layers of the network protocol stack, DL/DRL can be adopted for network feature extraction, decision-making, etc. In the physical layer, DL can be used for interference alignment. It can also be used to classify the modulation modes, design efficient network coding17 and error correction codes, etc. In the data link layer, DL can be used for resource (such as channel) allocation, medium access control, traffic prediction,18 link quality evaluation, and so on. In the network (routing) layer, DL can support routing establishment and routing optimization19 to help obtain an optimal routing path. In higher layers (such as the application layer), DL can be used for enhanced data compression and task allocation. Besides the above protocol stack, one critical area of using DL is network security. DL can be used to classify packets into benign/malicious types, and it can be integrated with other ML schemes, such as unsupervised clustering, to achieve better anomaly detection.
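The idea of learning a policy from rewards and system states in a Markov decision process can be sketched on a toy routing problem. The example below uses tabular Q-learning rather than a deep network, so it is only a simplified stand-in for DRL. The topology `LINKS`, the link costs, and the function names are hypothetical and chosen purely for illustration.

```python
import random

# Hypothetical 4-node network: LINKS[node][next_hop] = link cost (e.g., delay).
LINKS = {
    "A": {"B": 1.0, "C": 4.0},
    "B": {"C": 1.0, "D": 5.0},
    "C": {"D": 1.0},
}
DEST = "D"


def train(episodes=2000, alpha=0.5, gamma=1.0, epsilon=0.2, seed=0):
    """Tabular Q-learning: state = current node, action = next hop."""
    rng = random.Random(seed)
    q = {s: {a: 0.0 for a in hops} for s, hops in LINKS.items()}
    for _ in range(episodes):
        state = rng.choice(list(LINKS))
        while state != DEST:
            actions = list(q[state])
            if rng.random() < epsilon:
                action = rng.choice(actions)             # explore
            else:
                action = max(actions, key=q[state].get)  # exploit
            reward = -LINKS[state][action]               # cheaper links are better
            future = 0.0 if action == DEST else max(q[action].values())
            q[state][action] += alpha * (reward + gamma * future - q[state][action])
            state = action
    return q


def greedy_route(q, source):
    """Follow the highest-valued next hop until the destination is reached."""
    route = [source]
    while route[-1] != DEST:
        hops = q[route[-1]]
        route.append(max(hops, key=hops.get))
    return route


q_table = train()
route = greedy_route(q_table, "A")
```

In this toy topology the learned greedy route from `A` follows the cheapest path through `B` and `C`. A DRL approach would replace the table `q` with a neural network so that large or continuous state spaces, such as traffic measurements, can be handled.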

AI enabling more powerful and intelligent nanophotonics

Nanophotonic components have recently revolutionized the field of optics via metamaterials/metasurfaces by enabling the arbitrary manipulation of light-matter interactions with subwavelength meta-atoms or meta-molecules.20, 21, 22 The conventional design of such components generally involves forward modeling, i.e., solving Maxwell's equations based on empirical and intuitive nanostructures to find corresponding optical properties, as well as the inverse design of nanophotonic devices given an on-demand optical response. The trans-dimensional feature of macro-optical components consisting of complex nano-antennas makes the design process very time consuming, computationally expensive, and even numerically prohibitive as device size and complexity increase. DL is an efficient and automatic platform, enabling novel efficient approaches to designing nanophotonic devices with high performance and versatile functions. Here, we briefly present the recent progress of DL-based nanophotonics and its wide-ranging applications. DL was first exploited for forward modeling using a DNN.23 The transmission or reflection coefficients can be well predicted after training on huge datasets. To improve the prediction accuracy of DNNs in the case of small datasets, transfer learning was introduced to migrate knowledge between different physical scenarios, which greatly reduced the relative error. Furthermore, a CNN and an RNN were developed for the prediction of optical properties from arbitrary structures using images.24 The CNN-RNN combination successfully predicted the absorption spectra from the given input structural images. In the inverse design of nanophotonic devices, there are three different paradigms of DL methods, i.e., supervised learning, unsupervised learning, and RL.25 Supervised learning has been utilized to design structural parameters for pre-defined geometries, using, for example, tandem DNNs and bidirectional DNNs. 
Unsupervised learning methods learn by themselves without a specific target, and thus are better suited than supervised learning to discovering new and arbitrary patterns26 in completely new data. A generative adversarial network (GAN)-based approach, combining conditional GANs and Wasserstein GANs, was proposed to design freeform all-dielectric multifunctional metasurfaces. RL, especially double-deep Q-learning, powers the inverse design of high-performance nanophotonic devices.27 DL has endowed nanophotonic devices with better performance and more emerging applications.28,29 For instance, an intelligent microwave cloak driven by DL exhibits a millisecond, self-adaptive response to an ever-changing incident wave and background.28 Another example is a DL-augmented infrared nanoplasmonic metasurface developed for monitoring dynamics between four major classes of bio-molecules, which could impact the fields of biology, bioanalytics, and pharmacology, from fundamental research to disease diagnostics to drug development.29 The potential of DL in the wide arena of nanophotonics has been unfolding. Even end-users without an optics and photonics background could exploit DL as a black-box toolkit to design powerful optical devices. Nevertheless, how to interpret/mediate the intermediate DL process and determine the most dominant factors in the search for optimal solutions is worthy of being investigated in depth. We optimistically envisage that the advancements in DL algorithms and computation/optimization infrastructures will enable us to realize more efficient and reliable training approaches, more complex nanostructures with unprecedented shapes and sizes, and more intelligent and reconfigurable optic/optoelectronic systems.

AI in other fields of information science

We believe that AI has great potential in the following directions:

• AI-based risk control and management in utilities can prevent costly or hazardous equipment failures by using sensors that detect and send information regarding the machine's health to the manufacturer, predicting possible issues that could occur so as to ensure timely maintenance or automated shutdown.

• AI could be used to produce simulations of real-world objects, called digital twins. When applied to the field of engineering, digital twins allow engineers and technicians to analyze the performance of equipment virtually, thus avoiding safety and budget issues associated with traditional testing methods.

• Combined with AI, intelligent robots are playing an important role in industry and human life. Different from traditional robots working according to the procedures specified by humans, intelligent robots have the ability of perception, recognition, and even automatic planning and decision-making, based on changes in environmental conditions.

• AI of things (AIoT), or AI-empowered IoT applications,30 have become a promising development trend. AI can empower the connected IoT devices, embedded in various physical infrastructures, to perceive, recognize, learn, and act. For instance, smart cities constantly collect data regarding quality-of-life factors, such as the status of power supply, public transportation, air pollution, and water use, to manage and optimize systems in cities. Because these data, especially personal data, are collected from informed or uninformed participants, data security and privacy31 require protection.

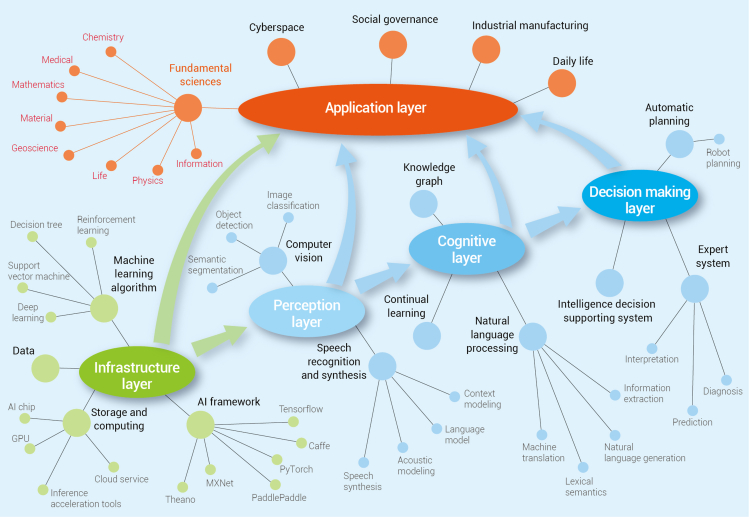

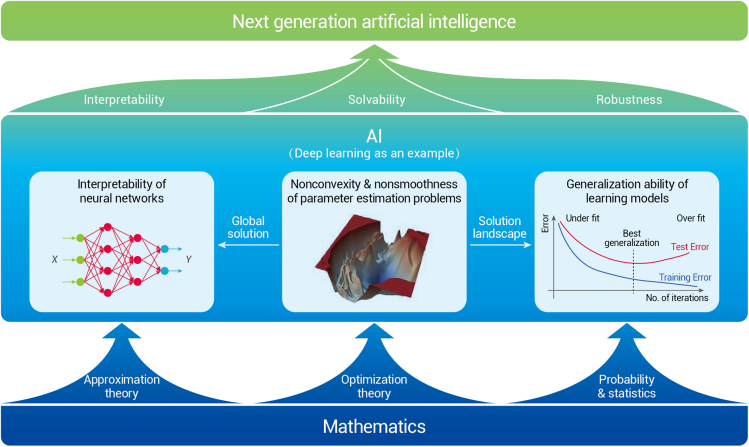

AI in mathematics

Mathematics has always played a crucial and indispensable role in AI. Decades ago, quite a few classical AI-related approaches, such as k-nearest neighbor,32 support vector machine,33 and AdaBoost,34 were proposed and developed after their rigorous mathematical formulations had been established. In recent years, with the rapid development of DL,35 AI has been gaining more and more attention in the mathematical community. Equipped with the Markov process, minimax optimization, and Bayesian statistics, RL,36 GANs,37 and Bayesian learning38 became the most favored tools in many AI applications. Nevertheless, there still exist plenty of open problems in mathematics for ML, including the interpretability of neural networks, the optimization problems of parameter estimation, and the generalization ability of learning models. In the rest of this section, we discuss these three questions in turn.

The interpretability of neural networks

From a mathematical perspective, ML usually constructs nonlinear models, with neural networks as a typical case, to approximate certain functions. The well-known Universal Approximation Theorem suggests that, under very mild conditions, any continuous function can be uniformly approximated on compact domains by neural networks,39 which serves a vital function in the interpretability of neural networks. However, in real applications, ML models seem to admit accurate approximations of many extremely complicated functions, sometimes even black boxes, which are far beyond the scope of continuous functions. To understand the effectiveness of ML models, many researchers have investigated the function spaces that can be well approximated by them, and the corresponding quantitative measures. This issue is closely related to the classical approximation theory, but the approximation scheme is distinct. For example, Bach40 finds that the random feature model is naturally associated with the corresponding reproducing kernel Hilbert space. In the same way, the Barron space is identified as the natural function space associated with two-layer neural networks, and the approximation error is measured using the Barron norm.41 The corresponding quantities of residual networks (ResNets) are defined for the flow-induced spaces. For multi-layer networks, the natural function spaces for the purposes of approximation theory are the tree-like function spaces introduced in Wojtowytsch.42 There are several works revealing the relationship between neural networks and numerical algorithms for solving partial differential equations. For example, He and Xu43 discovered that CNNs for image classification have a strong connection with multi-grid (MG) methods. In fact, the pooling operation and feature extraction in CNNs correspond directly to restriction operation and iterative smoothers in MG, respectively. Hence, various convolution and pooling operations used in CNNs can be better understood.
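For concreteness, one common form of the Universal Approximation Theorem can be written as follows. This is a sketch of a standard single-hidden-layer version, assuming a continuous, non-polynomial activation function $\sigma$, a compact set $K \subset \mathbb{R}^d$, and a continuous target $f : K \to \mathbb{R}$; the symbols $N$, $a_i$, $b_i$, and $w_i$ are notation introduced here, and the exact hypotheses differ between versions of the theorem in the literature.

```latex
\forall\, \varepsilon > 0 \;\; \exists\, N \in \mathbb{N},\; a_i, b_i \in \mathbb{R},\; w_i \in \mathbb{R}^d :
\qquad
\sup_{x \in K} \Bigl|\, f(x) - \sum_{i=1}^{N} a_i\, \sigma\!\left(w_i^{\top} x + b_i\right) \Bigr| < \varepsilon .
```

The statement guarantees only that a suitable width $N$ and suitable parameters exist. It says nothing about how large $N$ must be or how to find the parameters, which is why quantitative measures such as the Barron norm are of interest.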

The optimization problems of parameter estimation

In general, the optimization problem of estimating the parameters of DNNs is in practice highly nonconvex and often nonsmooth. Can the global minimizers be expected? What is the landscape of local minimizers? How does one handle the nonsmoothness? All these questions are nontrivial from an optimization perspective. Nevertheless, numerous works and experiments demonstrate that the optimization for parameter estimation in DL is itself a much nicer problem than once thought; see, e.g., Goodfellow et al.44 As a consequence, the study of the solution landscape (Figure 3), also known as the loss surface of neural networks, is no longer regarded as inaccessible and can even in turn provide guidance for global optimization. Interested readers can refer to the survey paper (Sun et al.45) for recent progress in this aspect.

Figure 3. AI in mathematics

Recent studies indicate that nonsmooth activation functions, e.g., rectified linear units, are better than smooth ones in finding sparse solutions. However, the chain rule does not work when the activation functions are nonsmooth, which makes the widely used stochastic gradient (SG)-based approaches infeasible in theory. As a remedy, approximated gradients are taken at nonsmooth iterates, which keeps SG-type methods in extensive use, although numerical evidence has also exposed their limitations. In addition, the penalty-based approaches proposed by Cui et al.46 and Liu et al.47 provide a new direction for solving nonsmooth optimization problems efficiently.
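The nonsmoothness of the rectified linear unit can be made explicit with a short worked expression. The notation $\partial\,\mathrm{ReLU}$ for the (Clarke) subdifferential is introduced here for illustration and is not taken from the cited works.

```latex
\mathrm{ReLU}(x) = \max(0, x), \qquad
\partial\,\mathrm{ReLU}(x) =
\begin{cases}
\{0\}, & x < 0,\\
[0, 1], & x = 0,\\
\{1\}, & x > 0.
\end{cases}
```

At $x = 0$ there is no single derivative, only a set of admissible slopes. An SG-type implementation therefore has to pick one element of $[0, 1]$ at such points (choosing $0$ is a common convention, assumed here rather than stated in the source), and this is one way of reading the approximated gradients mentioned above.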

The generalization ability of learning models

A small training error does not always lead to a small test error. This gap reflects the generalization ability of learning models. A key finding in statistical learning theory states that the generalization error is bounded by a quantity that grows with the increase of the model capacity, but shrinks as the number of training examples increases.48 A common conjecture relating generalization to the solution landscape is that flat and wide minima generalize better than sharp ones. Thus, regularization techniques, including the dropout approach,49 have emerged to force the algorithms to bypass the sharp minima. However, the mechanism behind this has not been fully explored. Recently, some researchers have focused on the ResNet-type architecture, with dropout being inserted after the last convolutional layer of each building block. They thus managed to explain the stochastic dropout training process and the ensuing dropout regularization effect from the perspective of optimal control.50
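One classical bound of this type, given here as an illustration rather than as the specific result of the cited reference, is the Vapnik-Chervonenkis bound for binary classification. The symbols are notation assumed for this sketch: $\mathcal{H}$ is a hypothesis class of VC dimension $d$, $n$ is the number of independent training examples, $R(h)$ is the expected (test) error, $\widehat{R}_n(h)$ is the training error, and $\delta \in (0, 1)$ is a confidence parameter.

```latex
R(h) \;\le\; \widehat{R}_n(h) + \sqrt{\frac{d\left(\ln\frac{2n}{d} + 1\right) + \ln\frac{4}{\delta}}{n}}
\qquad \text{for all } h \in \mathcal{H}, \text{ with probability at least } 1 - \delta .
```

The square-root term grows with the capacity $d$ and shrinks as $n$ increases, matching the qualitative statement above. For heavily over-parameterized DNNs such capacity-based bounds are often very loose, which is part of the motivation for landscape-based explanations such as the flat-minima conjecture.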

AI in medical science

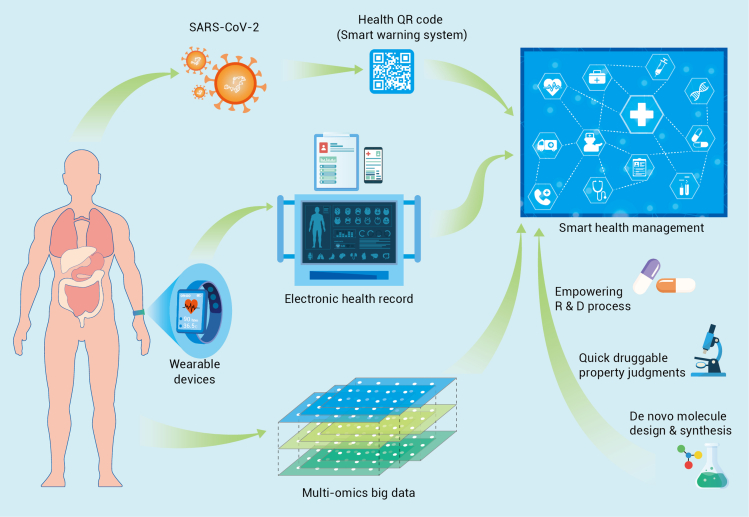

AI technology is becoming increasingly significant in daily operations, including in the medical field. With the growing healthcare needs of patients, hospitals are evolving from informatization and networking to the Internet Hospital and eventually to the Smart Hospital. At the same time, AI tools and hardware performance are improving rapidly. Eventually, common AI algorithms, such as CV, NLP, and data mining, will begin to be embedded in the medical equipment market (Figure 4).

Figure 4.

AI in medical science

AI doctor based on electronic medical records

Any discussion of medical history data must mention Doctor Watson, developed on the Watson platform of IBM, and Modernizing Medicine, which aims to address oncology, and is now adopted by CVS & Walgreens in the US and by various medical organizations in China as well. Doctor Watson takes advantage of the NLP performance of the IBM Watson platform, which has already collected vast amounts of medical history data, as well as prior knowledge from the literature for reference. After a patient's case is entered, Doctor Watson searches the medical history reserve and forms preliminary treatment proposals, which are then ranked using the prior knowledge reserve. Using its multiple stored models, Doctor Watson gives the final proposal together with its confidence in that proposal. However, such AI doctors still have problems:51 because they rely on prior experience from US hospitals, their proposals may not be suitable for other regions with different medical insurance policies. In addition, keeping the Watson platform current relies heavily on updating the knowledge reserve, which still requires manual work.

AI for public health: Outbreak detection and health QR code for COVID-19

AI can be used for public health purposes in many ways. One classical usage is to detect disease outbreaks using search engine query data or social media data, as Google did for prediction of influenza epidemics52 and the Chinese Academy of Sciences did for modeling the COVID-19 outbreak through multi-source information fusion.53 After the COVID-19 outbreak, a digital health Quick Response (QR) code system has been developed by China, first to detect potential contact with confirmed COVID-19 cases and, secondly, to indicate the person's health status using mobile big data.54 Different colors indicate different health status: green means healthy and is OK for daily life, orange means risky and requires quarantine, and red means confirmed COVID-19 patient. It is easy to use for the general public, and has been adopted by many other countries. The health QR code has made great contributions to the worldwide prevention and control of the COVID-19 pandemic.

Biomarker discovery with AI

High-dimensional data, including multi-omics data, patient characteristics, medical laboratory test data, etc., are often used for generating various predictive or prognostic models through DL or statistical modeling methods. For instance, the COVID-19 severity evaluation model was built through ML using proteomic and metabolomic profiling data of sera55; using integrated genetic, clinical, and demographic data, Taliaz et al. built an ML model to predict patient response to antidepressant medications56; prognostic models for multiple cancer types (such as liver cancer, lung cancer, breast cancer, gastric cancer, colorectal cancer, pancreatic cancer, prostate cancer, ovarian cancer, lymphoma, leukemia, sarcoma, melanoma, bladder cancer, renal cancer, thyroid cancer, head and neck cancer, etc.) were constructed through DL or statistical methods, such as least absolute shrinkage and selection operator (LASSO), combined with Cox proportional hazards regression model using genomic data.57
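To make the role of LASSO in such models more concrete, the sketch below shows how the L1 penalty drives the coefficient of an uninformative feature exactly to zero. It is a simplification under stated assumptions: it uses ordinary squared-error regression solved by coordinate descent rather than the Cox partial likelihood used in the cited prognostic models, and the function names, toy data, and penalty value `lam` are hypothetical.

```python
def soft_threshold(value, lam):
    """Shrink `value` toward zero by `lam`; values within [-lam, lam] become 0."""
    if value > lam:
        return value - lam
    if value < -lam:
        return value + lam
    return 0.0

def lasso_coordinate_descent(X, y, lam, n_iters=100):
    """Minimize (1 / (2n)) * ||y - Xw||^2 + lam * ||w||_1 by cyclic coordinate descent."""
    n, d = len(X), len(X[0])
    w = [0.0] * d
    for _ in range(n_iters):
        for j in range(d):
            rho = 0.0  # correlation of feature j with the partial residual
            z = 0.0    # squared norm of feature j
            for i in range(n):
                pred_without_j = sum(X[i][k] * w[k] for k in range(d) if k != j)
                rho += X[i][j] * (y[i] - pred_without_j)
                z += X[i][j] ** 2
            w[j] = soft_threshold(rho / n, lam) / (z / n) if z > 0 else 0.0
    return w

# Toy data: the outcome depends only on the first feature (y = 2 * x0).
X = [[1.0, 1.0], [2.0, -1.0], [3.0, 1.0], [4.0, -1.0]]
y = [2.0, 4.0, 6.0, 8.0]
w = lasso_coordinate_descent(X, y, lam=0.1)
```

The informative coefficient is shrunk slightly below its true value of 2, while the second coefficient is set to zero, which is the feature-selection behavior that makes LASSO attractive for high-dimensional omics data.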

Image-based medical AI

Medical image AI is one of the most mature areas, as there are numerous models for classification, detection, and segmentation tasks in CV. In clinical settings, CV algorithms can also be used for computer-aided diagnosis and treatment with ECG, CT, eye fundus imaging, etc. Because human doctors may become tired and prone to mistakes after viewing hundreds of images for diagnosis, AI doctors can outperform a human medical image viewer owing to their ability to perform repetitive work without fatigue. The first medical AI product approved by the FDA is IDx-DR, which uses an AI model to make predictions of diabetic retinopathy. The smartphone app SkinVision can accurately detect melanomas.58 It uses “fractal analysis” to identify moles and their surrounding skin, based on size, diameter, and many other parameters, and to detect abnormal growth trends. AI-ECG of LEPU Medical can automatically detect heart disease from ECG images. Lianying Medical takes advantage of its hardware equipment to produce real-time, high-definition, image-guided all-round radiotherapy technology, which successfully achieves precise treatment.

Wearable devices for surveillance and early warning

For wearable devices, AliveCor has developed an algorithm to automatically predict the presence of atrial fibrillation, which is an early warning sign of stroke and heart failure. The 23andMe company can also test saliva samples at a small cost and provide customers with information based on their genes, including who their ancestors were and which diseases they may be prone to later in life. It provides accurate health management solutions based on individual and family genetic data.

In the next 20–30 years, we believe there are several directions for further research: (1) Causal inference for real-time in-hospital risk prediction. Clinical doctors usually require reasonable explanations for medical decisions, but current AI models are usually black-box models. Causal inference will help doctors to explain certain AI decisions and even discover novel ground truths. (2) Devices, including wearable instruments, for multi-dimensional health monitoring. The multi-modality model is now a trend in AI research. With various devices to collect multi-modality data and a central processor to fuse all these data, the model can monitor the user's overall real-time health condition and give precautions more precisely. (3) Automatic discovery of clinical markers for diseases that are difficult to diagnose. Diseases such as ALS are still difficult for clinical doctors to diagnose because they lack any effective general marker. It may be possible for AI to discover common phenomena among these patients and find an effective marker for early diagnosis.

AI-aided drug discovery

Today we have entered the precision medicine era, and new targeted drugs are the cornerstones of precision therapy. However, over the past decades, it has taken an average of over one billion dollars and 10 years to bring a new drug to market. How to accelerate the drug discovery process and avoid late-stage failure are key concerns for all the big and fiercely competitive pharmaceutical companies. The emerging role of AI, including ML, DL, expert systems, and artificial neural networks (ANNs), has brought new insights and high efficiency to the drug discovery process. AI has been adopted in many aspects of drug discovery, including de novo molecule design, structure-based modeling for proteins and ligands, quantitative structure-activity relationship research, and druggable property judgments. DL-based AI applications demonstrate superior merits in addressing some challenging problems in drug discovery. Of course, prediction of chemical synthesis routes and chemical process optimization are also valuable in accelerating new drug discovery, as well as lowering production costs.

There has been notable progress in AI-aided drug discovery in recent years, for both new chemical entity discovery and the related business area. Based on DNNs, DeepMind built the AlphaFold platform to predict 3D protein structures, and it outperformed other algorithms. As an illustration of this achievement, AlphaFold accurately predicted 25 of the 43 protein structures in the panel from scratch, without using previously built protein models. Accordingly, AlphaFold won the CASP13 protein-folding competition in December 2018.59 Based on GANs and other ML methods, Insilico constructed a modular drug design platform, the GENTRL system. In September 2019, they reported the discovery of the first de novo active DDR1 kinase inhibitor developed by the GENTRL system. It took the team only 46 days from target selection to obtain an active drug candidate using in vivo data.60 Exscientia and Sumitomo Dainippon Pharma developed a new drug candidate, DSP-1181, for the treatment of obsessive-compulsive disorder on the Centaur Chemist AI platform. In January 2020, DSP-1181 started its phase I clinical trials, which means that, from program initiation to phase I study, the comprehensive exploration took less than 12 months. In contrast, comparable drug discovery using traditional methods usually needs 4–5 years.

How AI transforms medical practice: A case study of cervical cancer

As one of the most common malignant tumors in women, cervical cancer is a disease that has a clear cause and can be prevented, and even treated, if detected early. Conventionally, the screening strategy for cervical cancer mainly adopts the “three-step” model of “cervical cytology-colposcopy-histopathology.”61 However, limited by the level of testing methods, the efficiency of cervical cancer screening is not high. In addition, owing to the limited knowledge of doctors in some primary hospitals, patients cannot always be provided with the best diagnosis and treatment decisions. In recent years, with the advent of the era of computer science and big data, AI has gradually begun to extend and blend into various fields. In particular, AI has been widely used in a variety of cancers as a new tool for data mining. For cervical cancer, a clinical database with millions of medical records and pathological data has been built, and an AI medical tool set has been developed.62 Such an AI analysis algorithm gives doctors access to rapid, iterative AI model training. In addition, a prognostic prediction model established by ML and a web-based prognostic result calculator have been developed, which can accurately predict the risk of postoperative recurrence and death in cervical cancer patients, and thereby better guide decision-making in postoperative adjuvant treatment.63

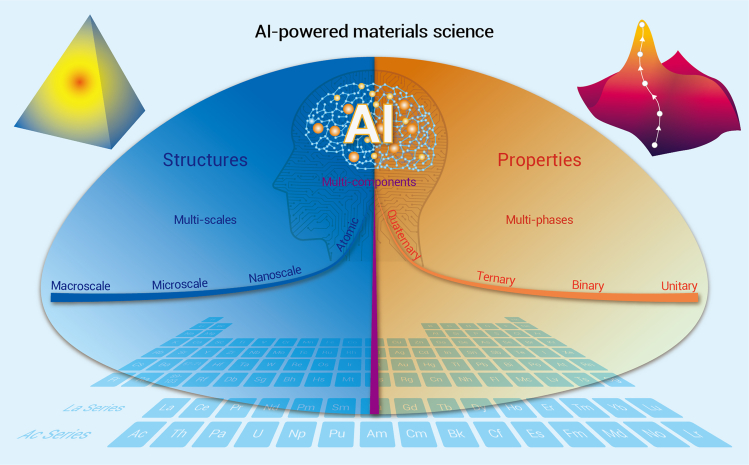

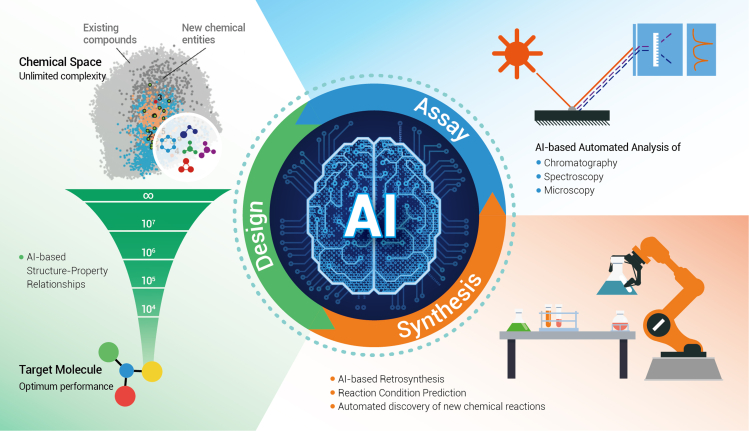

AI in materials science

As the cornerstone of modern industry, materials have played a crucial role in the design of revolutionary forms of matter, with targeted properties for broad applications in energy, information, biomedicine, construction, transportation, national security, spaceflight, and so forth. Traditional strategies rely on the empirical trial and error experimental approaches as well as the theoretical simulation methods, e.g., density functional theory, thermodynamics, or molecular dynamics, to discover novel materials.64 These methods often face the challenges of long research cycles, high costs, and low success rates, and thus cannot meet the increasingly growing demands of current materials science. Accelerating the speed of discovery and deployment of advanced materials will therefore be essential in the coming era.

With the rapid development of data processing and powerful algorithms, AI-based methods, such as ML and DL, are emerging with good potentials in the search for and design of new materials prior to actually manufacturing them.65,66 By integrating material property data, such as the constituent element, lattice symmetry, atomic radius, valence, binding energy, electronegativity, magnetism, polarization, energy band, structure-property relation, and functionalities, the machine can be trained to “think” about how to improve material design and even predict the properties of new materials in a cost-effective manner (Figure 5).

Figure 5.

AI is expected to power the development of materials science

AI in discovery and design of new materials

Recently, AI techniques have made significant advances in rational design and accelerated discovery of various materials, such as piezoelectric materials with large electrostrains,67 organic-inorganic perovskites for photovoltaics,68 molecular emitters for efficient light-emitting diodes,69 inorganic solid materials for thermoelectrics,70 and organic electronic materials for renewable-energy applications.66,71 The power of data-driven computing and algorithmic optimization can promote comprehensive applications of simulation and ML (e.g., high-throughput virtual screening, inverse molecular design, Bayesian optimization, and supervised learning) in material discovery and property prediction in various fields.72 For instance, using a DL Bayesian framework, attribute-driven inverse materials design has been demonstrated for efficient and accurate prediction of functional molecular materials, with desired semiconducting properties or redox stability for applications in organic thin-film transistors, organic solar cells, or lithium-ion batteries.73 It is worthwhile to adopt automation tools for quick experimental testing of potential materials and to utilize high-performance computing to calculate their bulk, interface, and defect-related properties.74 The effective convergence of automation, computing, and ML can greatly speed up the discovery of materials. In the future, with the aid of AI techniques, it will be possible to accomplish the design of superconductors, metallic glasses, solder alloys, high-entropy alloys, high-temperature superalloys, thermoelectric materials, two-dimensional materials, magnetocaloric materials, polymeric bio-inspired materials, sensitive composite materials, topological (electronic and phonon) materials, and so on.
In the past decade, topological materials have ignited the research enthusiasm of condensed matter physicists, materials scientists, and chemists, as they exhibit exotic physical properties with potential applications in electronics, thermoelectrics, optics, catalysis, and energy-related fields. According to the most recent predictions, more than a quarter of all inorganic materials in nature are topologically nontrivial. The establishment of topological electronic materials databases75, 76, 77 and topological phononic materials databases78 using high-throughput methods will help to accelerate the screening and experimental discovery of new topological materials for functional applications. It is recognized that large-scale, high-quality datasets are required to apply AI. Great efforts have also been expended in building high-quality materials science databases. As one of the top-ranking databases of its kind, the “atomly.net” materials data infrastructure79 has calculated the properties of more than 180,000 inorganic compounds, including their equilibrium structures, electron energy bands, dielectric properties, simulated diffraction patterns, elasticity tensors, etc. As such, the atomly.net database has set a solid foundation for extending AI into the area of materials science research. The X-ray diffraction (XRD)-matcher model of atomly.net uses ML to match and classify experimental XRD patterns against the simulated patterns. Very recently, by using the dataset from atomly.net, an accurate AI model was built to rapidly predict the formation energy of almost any given compound with fairly good predictive ability.80

AI-powered Materials Genome Initiative

The Materials Genome Initiative (MGI) is an ambitious plan for the rational realization of new materials and related functions, and it aims to discover, manufacture, and deploy advanced materials efficiently, cost-effectively, and intelligently. The initiative creates policy, resources, and infrastructure for accelerating materials development at a high level. It is a new paradigm for the discovery and design of next-generation materials that proceeds from fundamental building blocks toward general materials development, and it accelerates that development through efforts in theory, computation, and experiment in a highly integrated, high-throughput manner. MGI sets a very ambitious goal for the future of materials development and materials science. The spirit of MGI is to design novel materials by using data pools and powerful computation once the requirements or aspirations of functional usages appear. Theory, computation, and algorithms are the primary and substantial factors in the establishment and implementation of MGI. Advances in theories, computations, and experiments in materials science and engineering provide the foundation not only to accelerate the speed at which new materials are realized but also to shorten the time needed to push new products into the market. AI techniques bring great promise to the developing MGI. The applications of new technologies, such as ML and DL, directly accelerate materials research and the establishment of MGI. Model construction and application to science and engineering, as well as the data infrastructure, are of central importance. When AI-powered MGI approaches are coupled with the ongoing autonomy of manufacturing methods, the potential impact on society and the economy in the future is profound.
We are now beginning to see that the AI-aided MGI, among other things, integrates experiments, computation, and theory, facilitates access to materials data, equips the next generation of the materials workforce, and enables a paradigm shift in materials development. Furthermore, the AI-powered MGI could also design operational procedures and control the equipment to execute experiments, and thus further realize autonomous experimentation in future materials research.

Advanced functional materials for generation upgrade of AI

The realization and application of AI techniques depend on computational capability and computer hardware, so their physical basis is the performance of computers or supercomputers. In current technology, the charge carriers that drive electronic chips and devices are electrons with ordinary characteristics, such as heavy mass and low mobility. All chips and devices emit considerable heat, consuming too much energy and lowering the efficiency of information transmission. Benefiting from the rapid development of modern physics, a series of advanced materials with exotic functional effects have been discovered or designed, including superconductors, quantum anomalous Hall insulators, and topological fermions. In particular, the superconducting state or topologically nontrivial electrons will promote the next-generation AI techniques once the (near) room temperature applications of these states are realized and implemented in integrated circuits.81 In this case, the central processing units, signal circuits, and power channels will be driven by electronic carriers that show massless, energy-dissipationless, ultra-high-mobility, or chiral-protection characteristics. The ordinary electrons will be removed from the physical circuits of future-generation chips and devices, leaving superconducting and topological chiral electrons running in future AI chips and supercomputers. The efficiency of transmission for information and logic computing will be improved on a vast scale and at a very low cost.

AI for materials and materials for AI

The coming decade will continue to witness the development of advanced ML algorithms, newly emerging data-driven AI methodologies, and integrated technologies for facilitating structure design and property prediction, as well as for accelerating the discovery, design, development, and deployment of advanced materials into existing and emerging industrial sectors. At this moment, we are facing challenges in achieving accelerated materials research through the integration of experiment, computation, and theory. The MGI, proposed for high-level materials research, helps to promote this process, especially when it is assisted by AI techniques. Still, there is a long way to go before these advanced functional materials are used in future-generation electronic chips and devices. More materials and functional effects need to be discovered or improved by the developing AI techniques. Meanwhile, it is worth noting that materials are the core components of the devices and chips that are used to construct computers or machines for advanced AI systems. The rapid development of new materials, especially the emergence of flexible, sensitive, and smart materials, is of great importance for a broad range of attractive technologies, such as flexible circuits, stretchable tactile sensors, multifunctional actuators, transistor-based artificial synapses, integrated networks of semiconductor/quantum devices, intelligent robotics, human-machine interactions, simulated muscles, biomimetic prostheses, etc. These promising materials, devices, and integrated technologies will greatly promote the advancement of AI systems toward wide applications in human life. Once the physical circuits are upgraded by advanced functional or smart materials, AI techniques will largely promote the developments and applications of all disciplines.

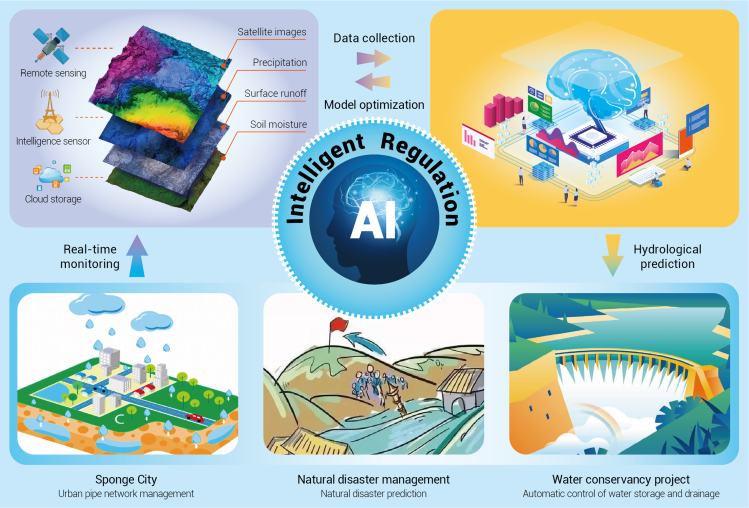

AI in geoscience

AI technologies involved in a large range of geoscience fields

Momentous challenges threatening current society require solutions to problems that belong to geoscience, such as evaluating the effects of climate change, assessing air quality, forecasting the effects of disaster incidences on infrastructure, calculating the future consumption and availability of food, water, and soil resources, and identifying factors that are indicators for potential volcanic eruptions, tsunamis, floods, and earthquakes.82,83 Addressing them has become possible with the emergence of advanced technology products (e.g., deep sea drilling vessels and remote sensing satellites), enhancements in computational infrastructure that allow for processing large-scale, wide-range simulations of multiple models in geoscience, and internet-based data analysis that facilitates collection, processing, and storage of data in distributed and crowd-sourced environments.84 The growing availability of massive geoscience data provides vast possibilities for AI, which has permeated all aspects of our daily life (e.g., entertainment, transportation, and commerce), to significantly contribute to geoscience problems of great societal relevance. As geoscience enters the era of massive data, AI, which has been extensively successful in different fields, offers immense opportunities for settling a series of problems in Earth systems.85,86 Accompanied by diversified data, AI-enabled technologies, such as smart sensors, image visualization, and intelligent inversion, are being actively examined in a large range of geoscience fields, such as marine geoscience, rock physics, geology, ecology, seismicity, environment, hydrology, remote sensing, ArcGIS, and planetary science.87

Multiple challenges in the development of geoscience

There are some traits of geoscience development that restrict the applicability of fundamental algorithms for knowledge discovery: (1) inherent challenges of geoscience processes, (2) limitation of geoscience data collection, and (3) uncertainty in samples and ground truth.88, 89, 90 Geoscience objects generally have amorphous boundaries in space and time and are not as well defined as objects in other fields. Geoscience phenomena are also significantly multivariate, obey nonlinear relationships, and exhibit spatiotemporal structure and non-stationary characteristics. Beyond the inherent challenges of geoscience observations, the massive data at multiple dimensions of time and space, with different levels of incompleteness, noise, and uncertainty, hamper geoscience processes. For supervised learning approaches, there are further difficulties owing to the lack of gold standard ground truth and the “small size” of samples (e.g., a small amount of historical data with sufficient observations) in geoscience applications.

Usage of AI technologies as efficient approaches to promote the geoscience processes

Geoscientists continually strive to develop better techniques for simulating the present status of the Earth system (e.g., how much greenhouse gas is released into the atmosphere) and the connections between and within its subsystems (e.g., how elevated temperature influences the ocean ecosystem). From the perspective of geoscience, newly emerging AI-aided approaches are well suited to the following tasks: (1) characterizing objects and events91; (2) estimating geoscience variables from observations92; (3) forecasting geoscience variables according to long-term observations85; (4) exploring geoscience data relationships93; and (5) causal discovery and causal attribution.94 Whereas traditional methods for characterizing geoscience objects and events are primarily rooted in hand-coded features, AI algorithms can detect them automatically from data and improve performance through pattern-mining techniques. However, because spatiotemporal targets have vague boundaries and related uncertainties, it may be necessary to advance pattern-mining methods that can account for the temporal and spatial characteristics of geoscience data when characterizing different events and objects. To address the non-stationarity of geoscience data, AI-aided algorithms have been extended to integrate the results of expert predictors and produce robust estimates of climate variables (e.g., humidity and temperature). Furthermore, forecasting long-term trends in the Earth system using AI-enabled technologies makes it possible to simulate future scenarios and formulate early resource planning and adaptation policies. Mining geoscience data relationships can help us capture vital signs of the Earth system and promote our understanding of geoscience developments.
Of great interest is the advancement of AI decision methodologies that handle uncertain prediction probabilities, which give rise to vague risks with poorly resolved tails representing the most extreme, transient, and rare events produced by model sets; such methodologies can improve accuracy and effectiveness in a variety of cases.

AI technologies for optimizing the resource management in geoscience

Currently, AI can perform better than humans in some well-defined tasks. For example, AI techniques have been used in urban water resource planning, mainly due to their remarkable capacity for modeling, flexibility, reasoning, and forecasting water demand and capacity. The design and application of an Adaptive Intelligent Dynamic Water Resource Planning system, a subset of AI for sustainable water resource management in urban regions, has largely promoted the optimization of water resource allocation and should ultimately minimize operation costs and improve the sustainability of environmental management95 (Figure 6). Also, meteorology requires collecting tremendous amounts of data on many different variables, such as humidity, altitude, and temperature; however, dealing with such a huge dataset is a big challenge.96 AI-based techniques are being utilized to analyze shallow-water reef images and recognize coral color in order to track the effects of climate change, and to collect humidity, temperature, and CO2 data in order to assess the health of our ecological environment.97 Beyond its capabilities for meteorology, AI can also play a critical role in decreasing greenhouse gas emissions originating from the electric-power sector. This sector, which comprises the production, transportation, allocation, and consumption of electricity, offers many opportunities for AI applications, including speeding up the development of new clean energy, enhancing system optimization and management, improving electricity-demand forecasts and distribution, and advancing system monitoring.98 With the aid of AI, new materials may even be found for batteries that store energy or for materials that absorb CO2 from the atmosphere.99 Although traditional fossil fuel operations have been widely used for thousands of years, AI techniques are now being used to help explore the development of more sustainable energy sources (e.g., fusion technology).100

Figure 6.

Applications of AI in hydraulic resource management

In addition to the adjustment of energy structures due to climate change (a core part of geoscience systems), a second, less-obvious step could also be taken to reduce greenhouse gas emission: using AI to target inefficiencies. A related statistical report by the Lawrence Livermore National Laboratory pointed out that around 68% of energy produced in the US could be better used for purposeful activities, such as electricity generation or transportation, but is instead contributing to environmental burdens.101 AI is primed to reduce these inefficiencies of current nuclear power plants and fossil fuel operations, as well as improve the efficiency of renewable grid resources.102 For example, AI can be instrumental in the operation and optimization of solar and wind farms to make these utility-scale renewable-energy systems far more efficient in the production of electricity.103 AI can also assist in reducing energy losses in electricity transportation and allocation.104 A distribution system operator in Europe used AI to analyze load, voltage, and network distribution data, to help “operators assess available capacity on the system and plan for future needs.”105 AI allowed the distribution system operator to employ existing and new resources to make the distribution of energy assets more readily available and flexible. The International Energy Agency has proposed that energy efficiency is core to the reform of energy systems and will play a key role in reducing the growth of global energy demand to one-third of the current level by 2040.

AI as a building block to promote development in geoscience

The Earth system is of significant scientific interest and affects all aspects of life.106 The challenges, problems, and promising directions presented here are certainly not exhaustive, but rather serve to illustrate that there is great potential for future AI research in this important field. The development and popularization of AI approaches in the geosciences are commonly driven by a posed scientific question, and the best way to succeed is for AI researchers to work closely with geoscientists at all stages of research. This is because geoscientists can better understand which scientific question is important and novel, which sample collection process can reasonably exhibit the inherent strengths, which datasets and parameters can be used to answer that question, and which pre-processing operations should be conducted, such as removing seasonal cycles or smoothing. Similarly, AI researchers are better suited to decide which data analysis approaches are appropriate and available for the data, what the advantages and disadvantages of these approaches are, and what the approaches actually deliver. Interpretability is also an important goal in geoscience because, if we can understand the basic reasoning behind the models, patterns, or relationships extracted from the data, they can be used as building blocks in scientific knowledge discovery. Hence, frequent communication between the researchers avoids long detours and ensures that analysis results are indeed beneficial to both geoscientists and AI researchers.

AI in the life sciences

The developments of AI and the life sciences are intertwined. The ultimate goal of AI is to achieve human-like intelligence, as the human brain is capable of multi-tasking, learning with minimal supervision, and generalizing learned skills, all accomplished with high efficiency and low energy cost.107

Mutual inspiration between AI and neuroscience

In the past decades, neuroscience concepts have been introduced into ML algorithms and played critical roles in triggering several important advances in AI. For example, the origins of DL methods lie directly in neuroscience,5 which further stimulated the emergence of the field of RL.108 The current state-of-the-art CNNs incorporate several hallmarks of neural computation, including nonlinear transduction, divisive normalization, and maximum-based pooling of inputs,109 which were directly inspired by the unique processing of visual input in the mammalian visual cortex.110 By introducing the brain's attentional mechanisms, a novel network has been shown to achieve higher accuracy and computational efficiency than conventional CNNs on difficult multi-object recognition tasks.111 Other neuroscience findings, including the mechanisms underlying working memory, episodic memory, and neural plasticity, have inspired the development of AI algorithms that address several challenges in deep networks.108 These algorithms can be directly implemented in the design and refinement of the brain-machine interface and neuroprostheses.
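The three hallmarks of neural computation named above can be sketched on a one-dimensional list of responses. This is only an illustrative toy, not the formulation of any cited model: the rectifier used as the nonlinearity, the particular divisive normalization formula with constant `sigma`, the pooling width, and all function names are assumptions.

```python
import math

def relu(xs):
    """Nonlinear transduction: pass positive responses, silence negative ones."""
    return [max(0.0, x) for x in xs]

def divisive_normalization(xs, sigma=1.0):
    """Divide each response by the pooled activity of the whole population."""
    norm = math.sqrt(sigma ** 2 + sum(x * x for x in xs))
    return [x / norm for x in xs]

def max_pool(xs, width=2):
    """Maximum-based pooling over non-overlapping windows of `width` inputs."""
    return [max(xs[i:i + width]) for i in range(0, len(xs), width)]

responses = [-1.0, 2.0, 0.5, -0.5, 3.0, 1.0]  # hypothetical filter outputs
out = max_pool(divisive_normalization(relu(responses)))
```

Each stage maps onto one hallmark: rectification, gain control by the activity of neighboring units, and invariance to small shifts within a pooling window.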

On the other hand, insights from AI research have the potential to offer new perspectives on the basics of intelligence in the brains of humans and other species. Unlike traditional neuroscientists, AI researchers can formalize the concepts of neural mechanisms in a quantitative language to extract their necessity and sufficiency for intelligent behavior. An important illustration of such exchange is the development of the temporal-difference (TD) methods in RL models and the resemblance of TD-form learning in the brain.112 Therefore, the China Brain Project covers both basic research on cognition and translational research for brain disease and brain-inspired intelligence technology.113
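
The TD idea mentioned above can be illustrated with a minimal tabular TD(0) sketch. The three-state deterministic chain, the reward of 1.0 on the final transition, and the values of `alpha` and `gamma` are all hypothetical choices made for illustration; they are not taken from the cited work.

```python
# Minimal TD(0) sketch on a hypothetical deterministic chain:
# s0 -> s1 -> s2 -> terminal, with reward 1.0 only on the final transition.

def td0_chain(n_states=3, alpha=0.1, gamma=0.9, episodes=2000):
    """Estimate state values with tabular TD(0) on a deterministic chain."""
    values = [0.0] * (n_states + 1)  # last entry is the terminal state, fixed at 0
    for _ in range(episodes):
        for s in range(n_states):
            s_next = s + 1
            reward = 1.0 if s_next == n_states else 0.0
            # TD error: bootstrapped target minus the current estimate
            td_error = reward + gamma * values[s_next] - values[s]
            values[s] += alpha * td_error
    return values[:n_states]


values = td0_chain()
print([round(v, 3) for v in values])
```

In this toy setting the estimates approach the discounted returns 0.81, 0.9, and 1.0 for s0, s1, and s2. The quantity `td_error` plays the role of the prediction-error signal that TD-form learning in the brain is compared with.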

AI for omics big data analysis

Currently, AI can perform better than humans in some well-defined tasks, such as omics data analysis and smart agriculture. In the big data era,114 there are many types of data (variety), the amount of data is large (volume), and data are generated quickly (velocity). This high variety, large volume, and fast velocity make the data highly valuable, but also make them difficult to analyze. Unlike traditional statistics-based methods, AI can readily handle big data and reveal hidden associations.

In genetics studies, there are many successful applications of AI.115 One of the key questions is to determine whether a single amino acid polymorphism is deleterious.116 Earlier tools include the sequence conservation-based SIFT117 and the network-based SySAP,118 but these methods have met bottlenecks that limit further improvement. Sundaram et al. developed PrimateAI, which uses a DNN to predict the clinical outcome of a mutation.119 Another problem is how to call copy-number variations, which play important roles in various cancers.120,121 Glessner et al. proposed a DL-based tool, DeepCNV, whose area under the receiver operating characteristic (ROC) curve was 0.909, much higher than that of other ML methods.122 In epigenetic studies, m6A modification is one of the most important mechanisms.123 Zhang et al. developed an ensemble DL predictor (EDLm6APred) for mRNA m6A site prediction.124 The area under the ROC curve of EDLm6APred was 86.6%, higher than that of existing m6A methylation site prediction models. There are many other DL-based omics tools, such as DeepCpG125 for methylation, DeepPep126 for proteomics, AtacWorks127 for assay for transposase-accessible chromatin with high-throughput sequencing, and deepTCR128 for T cell receptor sequencing.
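
Since several of the tools above are compared by the area under the ROC curve, a small sketch of how that number can be computed may be helpful. It uses the rank interpretation of the AUC (the probability that a randomly chosen positive example scores higher than a randomly chosen negative one); the labels and scores are made-up toy values, not data from any of the cited studies.

```python
def roc_auc(labels, scores):
    """AUC as the probability that a random positive outscores a random negative.

    Ties between a positive and a negative score count as 0.5.
    """
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative example")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


# Toy example: 1 = deleterious variant, 0 = benign (hypothetical labels and scores)
labels = [1, 1, 1, 0, 0, 0]
scores = [0.9, 0.8, 0.4, 0.5, 0.3, 0.2]
auc = roc_auc(labels, scores)
print(auc)  # 8 of the 9 positive-negative pairs are ranked correctly
```

An AUC of 0.5 corresponds to random ranking and 1.0 to perfect ranking. Values quoted as a fraction (0.909) and as a percentage (86.6%) are on the same scale.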

Another emerging application is DL for single-cell sequencing data. Unlike bulk data, in which the sample size is usually much smaller than the number of features, the number of cells in single-cell data can be large compared with the number of genes. That makes DL algorithms applicable to most single-cell data. Since single-cell data are sparse and have many unmeasured missing values, DeepImpute was developed to accurately impute these missing values in the big gene × cell matrix.129 During the quality control of single-cell data, it is important to remove doublets. Solo embeds cells using an autoencoder and then builds a feedforward neural network to identify the doublets.130 Potential energy underlying single-cell gradients uses generative modeling to learn the underlying differentiation landscape from time series single-cell RNA sequencing data.131

In protein structure prediction, the DL-based AlphaFold2 can accurately predict the 3D structures of 98.5% of human proteins, and will predict the structures of 130 million proteins of other organisms in the next few months.132 It is even considered to be the second-largest breakthrough in life sciences after the human genome project133 and will facilitate drug development among other things.

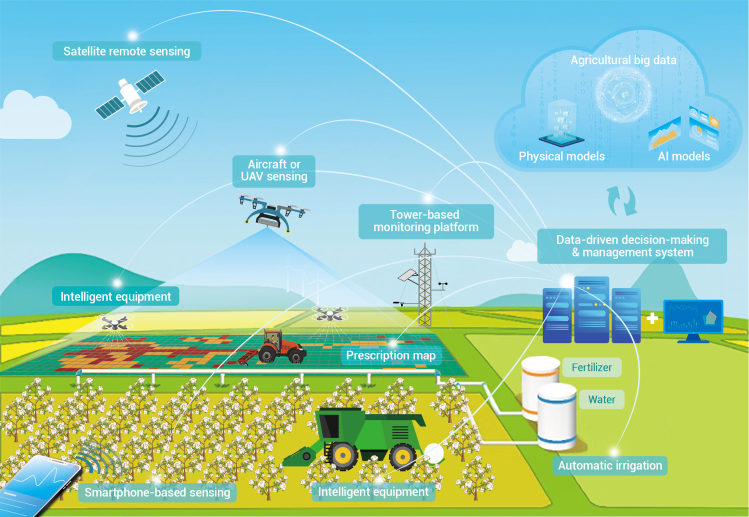

AI makes modern agriculture smart

Agriculture is entering a fourth revolution, termed agriculture 4.0 or smart agriculture, benefiting from the arrival of the big data era as well as the rapid progress of many advanced technologies, in particular ML and modern information and communication technologies.134,135 Applications of DL, information, and sensing technologies in agriculture cover all stages of agricultural production, including breeding, cultivation, and harvesting.

Traditional breeding usually exploits genetic variation by screening natural variants or by artificial mutagenesis. However, it is hard for either method to expose the whole mutation spectrum. Using DL models trained on the existing variants, predictions can be made on multiple unidentified gene loci.136 For example, an ML method, multi-criteria rice reproductive gene predictor, was developed and applied to predict coding and lincRNA genes associated with reproductive processes in rice.137 Moreover, models trained in species with well-studied genomic data (such as Arabidopsis and rice) can also be applied to other species with limited genome information (such as wild strawberry and soybean).138 In most cases, the links between genotypes and phenotypes are more complicated than expected. One gene usually influences multiple phenotypes, and one trait is generally the product of the synergy among multiple genes and multiple developmental processes. For this reason, multi-trait DL models were developed and have enabled genomic editing in plant breeding.139,140

It is well known that dynamic and accurate monitoring of crops during the whole growth period is vitally important to precision agriculture. In the new stage of agriculture, both remote sensing and DL play indispensable roles. Specifically, remote sensing (including proximal sensing) can produce agricultural big data from ground, air-borne, and space-borne platforms. These data offer an economical source of non-destructive, timely, objective, synoptic, long-term, and multi-scale information for crop monitoring and management, thereby greatly assisting in precision decisions regarding irrigation, nutrients, disease, pests, and yield.141,142 DL makes it possible to simply, efficiently, and accurately discover knowledge from massive and complicated data, especially remote sensing big data that are characterized by multiple spatial-temporal-spectral information, owing to its strong capability for feature representation and its superiority in capturing the essential relation between observation data and agronomy parameters or crop traits.135,143 The integration of DL and big data in agriculture has proved to be one of the most disruptive forces, comparable in impact to the green revolution.

As shown in Figure 7, in a possible application scenario of smart agriculture, multi-source satellite remote sensing data with various geo- and radio-metric information, as well as abundant spectral information from the UV, visible, and shortwave infrared to microwave regions, can be collected. In addition, advanced aircraft systems, such as unmanned aerial vehicles with multi/hyper-spectral cameras on board, and smartphone-based portable devices, will be used to obtain multi/hyper-spectral data in specific fields. All types of data can be integrated by DL-based fusion techniques for different purposes, and then shared with all users via cloud computing. On the cloud computing platform, different agricultural remote sensing models, developed by combining data-driven ML methods with physical models, will be deployed and applied to acquire a range of biophysical and biochemical parameters of crops. These parameters will be further analyzed by a decision-making and prediction system to assess the current water/nutrient stress and growth status, and to predict future development. As a result, an automatic or interactive user service platform can be made available to support correct decisions and appropriate actions through an integrated irrigation and fertilization system.

Figure 7.

Integration of AI and remote sensing in smart agriculture

Furthermore, DL presents unique advantages in specific agricultural applications, such as dense scenes, which increase the difficulty of manual planting and harvesting. It is reported that CNNs and autoencoder models, trained with image data, are being used increasingly for phenotyping and yield estimation,144 such as counting fruits in orchards, grain recognition and classification, disease diagnosis, etc.145,146,147 Consequently, this may greatly reduce the demand for manual labor.

The application of DL in agriculture is just beginning. There are still many problems and challenges for the future development of DL technology. We believe, with the continuous acquisition of massive data and the optimization of algorithms, DL will have a better prospect in agricultural production.

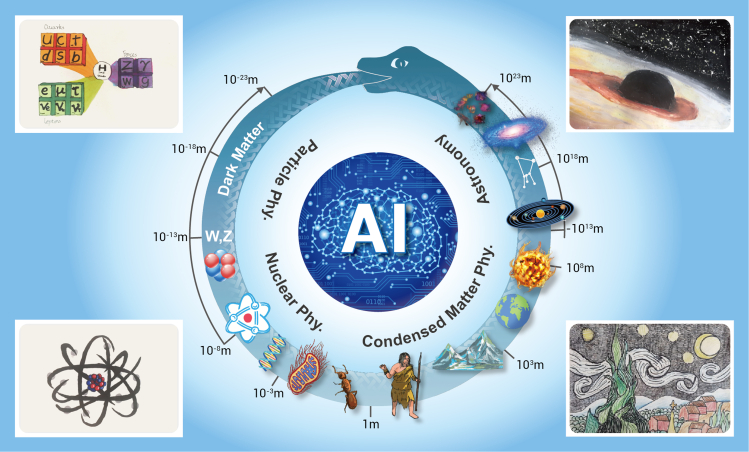

AI in physics

The scale of modern physics ranges from the size of a neutron to the size of the Universe (Figure 8). According to the scale, physics can be divided into four categories: particle physics on the scale of neutrons, nuclear physics on the scale of atoms, condensed matter physics on the scale of molecules, and cosmic physics on the scale of the Universe. AI, and ML in particular, plays an important role in physics at all of these scales, since AI algorithms are becoming the main trend in data analysis, such as the reconstruction and analysis of images.

Figure 8.

Scale of the physics

Speeding up simulations and identifications of particles with AI

There are many applications or explorations of applications of AI in particle physics. We cannot cover all of them here, but only use lattice quantum chromodynamics (LQCD) and the experiments on the Beijing spectrometer (BES) and the large hadron collider (LHC) to illustrate the power of ML in both theoretical and experimental particle physics.

LQCD studies the nonperturbative properties of QCD by using Monte Carlo simulations on supercomputers to help us understand the strong interaction that binds quarks together to form nucleons. Markov chain Monte Carlo simulations commonly used in LQCD suffer from topological freezing and critical slowing down as the simulations approach the real situation of the actual world. New algorithms with the help of DL are being proposed and tested to overcome those difficulties.148,149 Physical observables are extracted from LQCD data, whose signal-to-noise ratio deteriorates exponentially. For non-Abelian gauge theories, such as QCD, complicated contour deformations can be optimized by using ML to reduce the variance of LQCD data. Proof-of-principle applications in two dimensions have been studied.150 ML can also be used to reduce the time cost of generating LQCD data.151
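
To make the Markov chain Monte Carlo step concrete, the following is a minimal Metropolis sketch for a toy problem: a one-dimensional harmonic oscillator discretized on a periodic Euclidean time lattice. This is not LQCD and not the algorithm of any cited work; the action, the lattice spacing `a`, the proposal width `step`, and all other parameter values are assumptions chosen only to show the accept/reject structure that such simulations share.

```python
import math
import random


def metropolis_oscillator(n_sites=20, a=0.5, mass=1.0, omega=1.0,
                          step=1.0, n_sweeps=5000, n_thermal=500, seed=0):
    """Metropolis sampling of a lattice harmonic-oscillator path (toy model).

    Returns the estimate of <x^2> and the acceptance rate.
    """
    rng = random.Random(seed)
    x = [0.0] * n_sites  # cold start

    def local_action(i, xi):
        # Only the terms of the action that depend on site i (periodic lattice)
        left = x[(i - 1) % n_sites]
        right = x[(i + 1) % n_sites]
        kinetic = mass * ((right - xi) ** 2 + (xi - left) ** 2) / (2.0 * a)
        potential = 0.5 * a * mass * omega ** 2 * xi ** 2
        return kinetic + potential

    accepted = 0
    proposed = 0
    x2_sum = 0.0
    n_measure = 0
    for sweep in range(n_sweeps + n_thermal):
        for i in range(n_sites):
            new = x[i] + rng.uniform(-step, step)
            delta_s = local_action(i, new) - local_action(i, x[i])
            proposed += 1
            # Accept with probability min(1, exp(-delta_s))
            if delta_s <= 0.0 or rng.random() < math.exp(-delta_s):
                x[i] = new
                accepted += 1
        if sweep >= n_thermal:  # skip thermalization sweeps
            x2_sum += sum(v * v for v in x) / n_sites
            n_measure += 1
    return x2_sum / n_measure, accepted / proposed


x2, acc = metropolis_oscillator()
print(f"<x^2> ~ {x2:.3f}, acceptance rate ~ {acc:.2f}")
```

The estimate of `<x^2>` should land near the continuum value 1/(2mω) = 0.5, up to discretization and statistical errors. With local updates of this kind, successive configurations become increasingly correlated as the lattice spacing is reduced, which is the toy-model analog of the critical slowing down that DL-assisted algorithms aim to mitigate.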

On the experimental side, particle identification (PID) plays an important role. Recently, a few PID algorithms have been developed on BES-III, and the ANN152 is one of them. Extreme gradient boosting has also been used for multi-dimensional distribution reweighting, muon identification, and cluster reconstruction, and can improve the muon identification performance. U-Net is a convolutional network for pixel-level semantic segmentation, which is widely used in CV. It has been applied on BES-III to solve the problem of multi-turn curling track finding for the main drift chamber. The average efficiency and purity for the first turn's hits are about 91%, at the threshold of 0.85. Current (and future) particle physics experiments are producing a huge amount of data. Machine learning can be used to discriminate between signal and overwhelming background events. Examples of data analyses on LHC, using supervised ML, can be found in a 2018 collaboration.153 To take advantage of the potential of quantum computers, quantum ML methods are also being investigated; see, for example, Wu et al.,154 and references therein, for proof-of-concept studies.
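
The efficiency and purity quoted at a given threshold can be sketched as follows. The definitions used here are the common ones (efficiency: fraction of true signal hits that are selected; purity: fraction of selected hits that are true signal) and are assumed rather than taken from the BES-III study; the labels and scores are toy values.

```python
def efficiency_and_purity(labels, scores, threshold=0.85):
    """Efficiency and purity of a score cut (assumed standard definitions).

    labels: 1 for a true signal hit, 0 for background.
    scores: classifier output per hit, e.g. a per-pixel network score.
    """
    selected = [y for y, s in zip(labels, scores) if s >= threshold]
    n_true = sum(labels)
    n_selected = len(selected)
    n_true_selected = sum(selected)
    efficiency = n_true_selected / n_true if n_true else 0.0
    purity = n_true_selected / n_selected if n_selected else 0.0
    return efficiency, purity


# Hypothetical hits: four signal, four background
labels = [1, 1, 1, 1, 0, 0, 0, 0]
scores = [0.95, 0.90, 0.88, 0.60, 0.91, 0.40, 0.20, 0.10]
eff, pur = efficiency_and_purity(labels, scores)
print(eff, pur)  # three of four signal hits pass; three of four selected hits are signal
```

Raising the threshold typically trades efficiency for purity, which is why both numbers are quoted together with the threshold.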

AI makes nuclear physics powerful

Cosmic ray muon tomography (Muography)155 is an imaging technology that uses natural cosmic ray muon radiation rather than artificial radiation, which reduces radiation hazards. As an advantage, this technology can detect high-Z materials without destruction, as muons are sensitive to high-Z materials. The Classification Model Algorithm (CMA) is based on classification in supervised learning and gray system theory. It designs a binary classifier and a decision function that take the muon track as input and output whether the material exists at the location. AI thus helps users make more efficient use of the muon scanning time.

Also, for nuclear detection, the Cs2LiYCl6:Ce (CLYC) detector can react to both electrons and neutrons to create a pulse signal, and can therefore be applied to detect both neutrons and electrons,156 but the two particles must then be identified by analyzing the shapes of the waveforms, that is, n-γ ID. The traditional method has been the PSD (pulse shape discrimination) method, which separates the waveforms of the two particles by analyzing the distribution of pulse information (such as amplitude, width, rise time, and fall time); the two particles can be separated when the distribution shows two well-separated Gaussian peaks. The traditional PSD can only analyze single-pulse waveforms, rather than the multipulse waveforms produced when two particles react with CLYC in close succession. This problem can be solved by using an ANN method to classify pulses into six categories (n, γ, n + n, n + γ, γ + n, γ + γ). Also, there are several parameters that could be used by AI to improve the reconstruction algorithm with high efficiency and less error.
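
The pulse information used by PSD can be sketched with a small feature extractor. The definitions below (10% to 90% rise time, 90% to 10% fall time, full width at half maximum, zero baseline, linear interpolation between samples) are common conventions assumed for illustration, and the waveform is a made-up example; neither comes from the cited CLYC work.

```python
def crossing_time(samples, level, start, stop):
    """Linearly interpolated sample index where the waveform first crosses
    `level` between indices `start` and `stop`."""
    for i in range(start, stop):
        y0, y1 = samples[i], samples[i + 1]
        if y0 != y1 and (y0 - level) * (y1 - level) <= 0:
            return i + (level - y0) / (y1 - y0)
    raise ValueError("level not crossed in the given range")


def pulse_features(samples, dt=1.0):
    """Amplitude, rise time, fall time, and width of a single pulse.

    Assumes a zero baseline and a single peak; `dt` is the sampling interval.
    """
    amplitude = max(samples)
    peak = samples.index(amplitude)
    last = len(samples) - 1
    rise = crossing_time(samples, 0.9 * amplitude, 0, peak) - \
        crossing_time(samples, 0.1 * amplitude, 0, peak)
    fall = crossing_time(samples, 0.1 * amplitude, peak, last) - \
        crossing_time(samples, 0.9 * amplitude, peak, last)
    width = crossing_time(samples, 0.5 * amplitude, peak, last) - \
        crossing_time(samples, 0.5 * amplitude, 0, peak)
    return {"amplitude": amplitude, "rise": rise * dt,
            "fall": fall * dt, "width": width * dt}


# Hypothetical digitized pulse: fast rise, slower linear decay
pulse = [0.0, 5.0, 10.0, 8.0, 6.0, 4.0, 2.0, 0.0]
features = pulse_features(pulse)
print(features)
```

Histogramming such features (or ratios of them) over many pulses gives the distributions in which PSD looks for two separated peaks. An ANN classifier could take either these features or the raw samples as input.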

AI-aided condensed matter physics