Abstract

Background

Intraoperative data may improve models predicting postoperative events. We evaluated whether adding intraoperative variables to the existing preoperative model improves its predictive performance for coronary artery bypass graft (CABG) surgery.

Methods

We analyzed 378,572 isolated CABG cases performed across 1,083 centers between 2014 and 2016, using the national Society of Thoracic Surgeons Adult Cardiac Surgery Database. Outcomes were operative mortality, 5 postoperative complications, and a composite of all events. We fitted models by logistic regression (LR) or extreme gradient boosting (XGBoost). For each modeling approach, we used preoperative only, intraoperative only, or pre+intraoperative variables. We developed 84 models with unique combinations of the 3 variable sets, 2 variable selection methods, 2 modeling approaches, and 7 outcomes. Each model was tested in 20 iterations of 70:30 stratified random splitting into development/testing samples. Model performance was evaluated on the testing dataset using the c-statistic, the area under the precision-recall curve (AUPRC), and calibration metrics, including the Brier score.

Results

The mean patient age was 65.3 years, and 24.7% were women. Operative mortality, excluding intraoperative death, occurred in 1.9%. In all outcomes, models that considered pre+intraoperative variables demonstrated improved Brier score and AUPRC compared with models considering preoperative or intraoperative variables alone. XGBoost without external variable selection had the best c-statistic, Brier score, and AUPRC values in 4 of the 7 outcomes (mortality, renal failure, prolonged ventilation, and composite) compared with LR models with or without variable selection. Based on the calibration plots, risk re-stratification for mortality showed that the LR model underestimated the risk in 11,114 patients (9.8%) and overestimated it in 12,005 patients (10.6%). In contrast, the XGBoost model underestimated the risk in 7,218 patients (6.4%) and overestimated it in 0 patients (0%).

Conclusions

In isolated CABG, adding intraoperative variables to preoperative variables resulted in improved predictions of all 7 outcomes. Risk models based on XGBoost may provide a better prediction of adverse events to guide clinical care.

Keywords: XGBoost, intraoperative variable, prediction, risk model

INTRODUCTION

The Society of Thoracic Surgeons (STS) Adult Cardiac Surgery Database (ACSD) risk models1, 2 are based on logistic regression and only incorporate information available before the operation to inform preoperative decision making and counseling2. Intraoperative events may influence the risk of postoperative outcomes3. Existing risk models in clinical use, such as the STS models and EuroSCORE, do not consider intraoperative information, although such data could improve postoperative prediction and patient care. A possible benefit of such dynamic update of predicted risk includes quantitatively recalibrating patient and provider expectations based on intraoperative events, which may modify decision thresholds to pursue diagnostic tests such as head scans for questionable neurologic deficit or early preparation of dialysis catheter access for those with renal failure risk that increased because of intraoperative events.

Several studies have demonstrated the value of risk models that update risk estimates as more data are generated4–6. In a digital era, this updating could be automated and made available to support decisions at the bedside. However, whether adding intraoperative variables improves prediction, which variables are most important, and which analytic methods yield the most accurate predictions remain unknown. Accordingly, using the national STS ACSD dataset for coronary artery bypass graft (CABG) surgery, we sought to determine whether adding intraoperative variables to the existing STS preoperative model would improve its predictive performance and, if so, which variables contribute most.

To address this aim, we used the STS database, which includes approximately 100 intraoperative variables related to CABG. We also employed machine learning approaches in addition to logistic regression. Machine learning techniques based on tree-based models, such as gradient boosting, are suited to identifying complex relationships in high-dimensional data. Although researchers have tested machine learning approaches to estimate risk for patients undergoing percutaneous coronary interventions7, 8, such methods have not been evaluated extensively in cardiac surgical risk modeling.

METHODS

Restricted by our Data Use Agreement with the STS, the data used for this study cannot be made publicly available to other researchers for purposes of reproducing the results or replicating the procedure. However, the data are available from the STS Database and Research Center via a specified procedure (https://www.sts.org/industry/sts-national-database-data-requests).

Cohort definition and data source

We included adult patients who underwent isolated CABG from July 2014 to December 2016 in U.S. centers participating in STS reporting. We used the STS ACSD data definition version 2.81. We excluded concomitant cases, defined as those undergoing any concomitant cardiac operation except for pacemaker implantation, arrhythmia correction surgery, or left atrial appendage ligation or occlusion. These criteria yielded 378,834 operations. We excluded 102 cases missing gender and 160 with intraoperative death. These exclusions yielded 378,572 operations performed by 2,730 surgeons in 1,083 centers.

The STS ACSD includes >90% of the cardiac surgery centers in the United States9. Clinical sites enter data using uniform STS definitions for patient characteristics and outcomes. The quality of the data has been rigorously validated by comparison with independent national and local datasets10. The database is de-identified, and the Participant User File (PUF) Research Program Committee of the STS Workforce and the Yale University Human Investigation Committee approved this study.

Outcomes

We studied 7 postoperative outcomes using standard STS ACSD definitions: operative mortality, defined as postoperative death from any cause either in-hospital or in discharged patients, within 30 days of the index operation (with the exclusion of intraoperative deaths); prolonged ventilation, defined as a mechanical ventilation requirement >24 hours postoperatively; pneumonia; permanent stroke, defined as a neurologic deficit of abrupt onset caused by a disturbance in blood supply to the brain that did not resolve within 24 hours; reoperation for any reason during the index hospitalization; deep sternal wound infection (DSWI); and renal failure, defined as a new dialysis requirement, an increase in serum creatinine three times greater than the baseline and an absolute rise of greater than 0.5 mg/dL, or a creatinine level >4 mg/dL. We fitted models predicting postoperative renal failure on the data excluding patients with preoperative renal failure, with or without dialysis need, as done in previous works1, 2. Further specifications of the STS ACSD data definitions are available online11. Missing data were rare (<2% for all variables, except for ejection fraction, which was missing in 3%). Missing data were handled as described in the STS ACSD risk model specifications1. Briefly, missing data on categorical predictor variables were imputed to the lowest risk value, and missing data on continuous covariates were imputed to the conditional mean. Missing ejection fraction values were set to the mean values conditioned on congestive heart failure status and sex, and body surface area was conditioned on sex.
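The conditional-mean imputation described above can be illustrated with a minimal sketch. This is not the STS or study code: the function name `impute_conditional_mean`, the field names, and the toy values are all hypothetical, and the sketch assumes every conditioning group has at least one observed value.

```python
from collections import defaultdict
from statistics import mean


def impute_conditional_mean(rows, target, group_keys):
    """Fill missing (None) values of `target` with the mean of the observed
    values among rows that share the same values of `group_keys`.

    Assumes each group has at least one observed value (otherwise KeyError).
    Returns new row dicts and leaves the input untouched.
    """
    observed = defaultdict(list)
    for row in rows:
        if row[target] is not None:
            observed[tuple(row[k] for k in group_keys)].append(row[target])
    group_means = {group: mean(values) for group, values in observed.items()}

    imputed = []
    for row in rows:
        new_row = dict(row)
        if new_row[target] is None:
            new_row[target] = group_means[tuple(row[k] for k in group_keys)]
        imputed.append(new_row)
    return imputed


# Toy records with hypothetical values (not STS data).
patients = [
    {"chf": 1, "sex": "F", "ef": 35.0},
    {"chf": 1, "sex": "F", "ef": 45.0},
    {"chf": 1, "sex": "F", "ef": None},
    {"chf": 0, "sex": "M", "ef": 60.0},
    {"chf": 0, "sex": "M", "ef": None},
]

# Ejection fraction conditioned on congestive heart failure status and sex.
filled = impute_conditional_mean(patients, "ef", ["chf", "sex"])
```

In this toy example, the missing ejection fraction of the woman with heart failure is replaced by the mean of the two observed values in her group.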

Candidate variables

We chose candidate preoperative variables based on the STS ACSD risk model for isolated CABG1. The variables were processed per the description for the STS ACSD model, which included splining of continuous variables such as age and creatinine, and combining categorical variables describing related disease states, such as congestive heart failure and New York Heart Association class variables1. Candidate intraoperative variables were all variables generated during the operation in the STS ACSD version 2.81. We did not employ any specific feature engineering for the intraoperative variables because, unlike for the preoperative variables, there are no established standards. General categories of variables are summarized in Table 1 and included operative approach, laboratory values, temperature measurements, transfusions, transesophageal echocardiogram results, case duration, cardioplegia strategy, and prophylactic antibiotic use. Cardiopulmonary bypass time and cross-clamp time were excluded because we included off-pump cases. Total operative time was available for all cases and was used instead as a measure of case length. Categorical variables that would be missing in off-pump cases, such as cardioplegia-related variables, were included, with missingness treated as a feature.
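Two of the preprocessing ideas above, linear splines for continuous variables and missingness treated as a feature, can be sketched as follows. The truncated-linear basis and the extra "missing" indicator are assumptions chosen for illustration; the exact parametrization used in the STS models is not specified here, and the function names are hypothetical.

```python
def linear_spline_basis(x, knots):
    """Linear spline terms for x: the value itself plus one hinge term
    max(x - knot, 0) per knot, so the slope can change at each knot."""
    return [x] + [max(x - knot, 0.0) for knot in knots]


def encode_with_missing(value, levels):
    """One-hot encode a categorical value, with a final indicator that is 1
    when the value is missing (None), so missingness is itself a feature."""
    encoded = [1 if value == level else 0 for level in levels]
    encoded.append(1 if value is None else 0)
    return encoded


# Age with knots at 50 and 60 years (the knots listed in Table 1).
AGE_KNOTS = (50.0, 60.0)
basis_45 = linear_spline_basis(45.0, AGE_KNOTS)
basis_65 = linear_spline_basis(65.0, AGE_KNOTS)

# Cardioplegia delivery is not recorded for an off-pump case.
CARDIOPLEGIA_LEVELS = ["Antegrade", "Retrograde", "Both"]
on_pump = encode_with_missing("Antegrade", CARDIOPLEGIA_LEVELS)
off_pump = encode_with_missing(None, CARDIOPLEGIA_LEVELS)
```

With this encoding, an off-pump case is represented by the trailing indicator alone rather than being dropped or imputed.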

Table 1:

Predictor variables

| Preoperative variables | Type or level |
|---|---|
| Age | Linear spline with knots at 50 and 60 years, interaction term with case status and incidence of operation |
| Race/ethnicity | Caucasian, Black, Asian, Hispanic |
| Sex | Female, Male |
| Body surface area | Continuous, conditioned on sex |
| Chronic lung disease | Mild, Moderate, Severe |
| Last preoperative creatinine level | Linear spline with knots at 1.0 and 1.5, conditioned on preoperative dialysis |
| Preoperative dialysis | Yes, No |
| Hypertension | Yes, No |
| Diabetes | No, Non-insulin dependent, insulin dependent |
| Congestive heart failure | Yes, No, conditioned on NYHA class |
| Cerebrovascular disease | Yes, No, conditioned on stroke/TIA |
| Peripheral vascular disease | Yes, No |
| Atrial fibrillation | Yes, No |
| Immunosuppressed status | Yes, No |
| Left main disease | Yes (≥ 50% stenosis), No |
| Myocardial infarction | No, within 6 hours, 24 hours, 21 days, ≥21 days |
| Number of diseased vessels | Discrete continuous |
| PCI < 6 hours | Yes, No |
| Shock | Yes, No |
| Inotrope or IABP use preoperatively | Yes, No |
| Prior cardiovascular surgery | None, once, ≥ twice |
| Ejection fraction | Continuous |
| Mitral insufficiency | Yes, No (Yes = moderate or severe) |
| Tricuspid insufficiency | Yes, No (Yes = moderate or severe) |
| Aortic stenosis | Yes, No (Yes = moderate or severe) |
| Case status | Elective, Urgent, Emergent, Salvage |

| Intraoperative variables | Type or level |
|---|---|
| Operative approach | Sternotomy, thoracotomy, partial sternotomy, port access |
| Conversion of planned approach | Yes, No |
| Robot used | Yes, No |
| Prophylactic antibiotics used | Yes, No |
| Antibiotics given within 1 hour of incision | Yes, No |
| Prophylactic antibiotics redosed | Yes, No |
| Lowest body temperature | Continuous |
| Lowest hemoglobin, hematocrit | Continuous |
| Cardiopulmonary bypass use | None, Combination, Full, reasons if combination |
| Aminocaproic acid use | Yes, No |
| Tranexamic acid use | Yes, No |
| Clotting factor use | Yes, No |
| Cardioplegia delivery | Antegrade, Retrograde, Both |
| Cardioplegia type | Blood, Crystalloid, Both, Other |
| Blood product use | Yes, No |
| Unit of red blood cell transfused | Continuous |
| Unit of platelet transfused | Continuous |
| Unit of fresh frozen plasma transfused | Continuous |
| Unit of cryoprecipitate transfused | Continuous |
| Intraoperative transesophageal echo | Ejection fraction, valvular insufficiency |
| Time from skin incision to closure | Continuous |

Model development and validation techniques

For each of the 7 outcomes, we developed 12 models with different combinations of starting variable sets, variable selection methods, and relationship modeling approaches, for a total of 84 models (Figure 1). We used three starting variable sets: 1) preoperative variables only (same as the existing STS models), 2) intraoperative variables only, and 3) pre + intraoperative variables. Preoperative variables comprised 47 fields that were preprocessed using the method used in the previous STS ACSD risk models. Intraoperative variables consisted of 96 fields without specific preprocessing. The variables in each category are summarized in Table 1. We used two relationship modeling approaches: 1) logistic regression and 2) gradient boosting using the XGBoost package12. XGBoost is a machine learning algorithm that makes a prediction based on a series of decision trees, using a highly efficient tree boosting algorithm that has shown improved performance over other tree-based approaches in various settings7, 12. Its additional appeal is the ability to rank predictive variables in order of importance to facilitate clinical interpretation of the model. We chose to use both XGBoost and logistic regression under the hypothesis that the XGBoost algorithm may yield better model performance given the increasingly large number of variables. Logistic regression is the approach that the STS ACSD risk models use. Therefore, logistic regression models were developed as reference models against which the performance of XGBoost models was evaluated. We used two variable selection methods: 1) no external variable selection and 2) external variable selection by support vector classifier. The XGBoost algorithm has an internal variable selection process, and we hereafter refer to models with ‘no variable selection’ as those without prior variable selection using the support vector classifier. 
Parameters of XGBoost models (number of trees, learning rate, and depth of trees) were tuned via internal cross-validation for each outcome to optimize c-statistics of each model, with final parameters outlined in Supplemental Table S1. For each model, we split the dataset randomly into 70% training and 30% testing dataset. This was iterated 20 times to yield 20 estimates for model performance metrics in predicting the outcomes for internal validation. We chose the number of iterations to be 20 after observing that increasing the iterations further did not change the mean or the confidence interval for operative mortality. The random sampling of the split was stratified to ensure adequate sampling of rare events. We reported means and 95% confidence intervals of 20 iterations for each metric.
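The repeated stratified 70:30 split and the summary over 20 iterations can be sketched in plain Python. This is an illustration only, not the study code: the helper names are hypothetical, the toy labels stand in for a rare outcome, the test-set event rate stands in for a real performance metric, and the normal-approximation interval is an assumption because the interval method is not specified above.

```python
import random
from statistics import mean, stdev


def stratified_split(labels, test_fraction=0.30, seed=0):
    """Split indices into train/test so that each class contributes the same
    fraction of its members to the test set (preserves the event rate)."""
    rng = random.Random(seed)
    train_idx, test_idx = [], []
    for cls in sorted(set(labels)):
        idx = [i for i, y in enumerate(labels) if y == cls]
        rng.shuffle(idx)
        n_test = round(len(idx) * test_fraction)
        test_idx.extend(idx[:n_test])
        train_idx.extend(idx[n_test:])
    return sorted(train_idx), sorted(test_idx)


def mean_and_ci95(values):
    """Mean and a normal-approximation 95% confidence interval of the mean
    (assumed method; the source does not state how intervals were formed)."""
    m = mean(values)
    half_width = 1.96 * stdev(values) / len(values) ** 0.5
    return m, (m - half_width, m + half_width)


# Toy outcome vector with a 2% event rate (hypothetical data).
labels = [1] * 20 + [0] * 980

# 20 iterations, each with a different random seed. A real analysis would fit
# a model on train_idx and score it on test_idx; here the test-set event rate
# is recorded as a stand-in metric.
test_event_rates = []
for iteration in range(20):
    train_idx, test_idx = stratified_split(labels, seed=iteration)
    test_event_rates.append(mean(labels[i] for i in test_idx))

rate_mean, rate_ci = mean_and_ci95(test_event_rates)
```

Because the split is stratified, every test set in this toy example contains the same number of events, which is the property that protects rare outcomes from being undersampled.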

Figure 1:

Analysis flow for development and evaluation of models. The figure summarizes the modeling approach and metrics used to evaluate the performance. Combinations of variable sets, variable selection approach, and modeling technique for 7 outcomes resulted in 84 different models. CABG = coronary artery bypass graft surgery; STS ACSD = Society of Thoracic Surgeons Adult Cardiac Surgery Database; AUPRC = area under the precision-recall curve
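The count of 84 models follows from crossing the design factors. A minimal sketch of that enumeration is shown here; the label strings are illustrative names rather than identifiers from the study code, and the outcome names follow Table 2.

```python
from itertools import product

OUTCOMES = [
    "operative mortality",
    "permanent stroke",
    "renal failure",
    "prolonged ventilation",
    "reoperation",
    "DSWI",
    "composite",
]
VARIABLE_SETS = ["preoperative", "intraoperative", "pre+intraoperative"]
SELECTION_METHODS = ["none", "support vector classifier"]
ALGORITHMS = ["logistic regression", "XGBoost"]

# Every combination of outcome, variable set, selection method, and algorithm.
model_grid = list(product(OUTCOMES, VARIABLE_SETS, SELECTION_METHODS, ALGORITHMS))

# Number of model configurations per outcome.
models_per_outcome = len(model_grid) // len(OUTCOMES)
```

Each tuple in `model_grid` identifies one model configuration, 12 per outcome.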

Performance metrics

We evaluated model performance on the testing dataset for each model using the c-statistic, the area under the precision-recall curve (AUPRC), Brier score, resolution, and reliability. The c-statistic (area under the receiver operating characteristic curve, AUROC) characterizes model discrimination and ranges from 0 to 1, with a higher value corresponding to better discrimination13. AUROC is the proportion of all possible pairs of patients with and without an event in which the patient with the event was assigned the higher predicted probability13. Because AUROC can provide a misleadingly optimistic view of model performance when classifying events of low incidence, we also evaluated AUPRC, which relates positive predictive value (also known as precision) and sensitivity (also known as recall) and is less susceptible to the unbalanced nature of datasets14. Therefore, AUPRC complements AUROC to characterize the discriminatory ability when the outcome of interest is rare, as it can uncover potentially faulty model performance in the precision-recall space by penalizing a high false-negative rate. The Brier score is the mean squared error (MSE) between the predicted probability of an event (ranging from 0 to 1) and the observed event (binary 0 or 1), with lower values corresponding to higher accuracy of the prediction15. Performance metrics were compared by their means to report whether one was numerically higher or lower, with the corresponding interpretation of better or worse. We did not evaluate the statistical significance of the difference because arbitrarily increasing the number of resampling iterations would drive the comparisons toward statistically significant differences.
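The three headline metrics can be written out directly from their definitions. The sketch below is a plain-Python illustration on four toy predictions, not the study implementation. The AUPRC is computed as average precision, which is one common estimator and an assumption here, and tied predictions in the AUROC are counted as half a concordant pair.

```python
def brier_score(y_true, y_prob):
    """Mean squared difference between predicted probability and outcome."""
    return sum((p - y) ** 2 for y, p in zip(y_true, y_prob)) / len(y_true)


def auroc(y_true, y_prob):
    """Proportion of (event, non-event) pairs in which the patient with the
    event received the higher predicted probability; ties count as 0.5."""
    events = [p for y, p in zip(y_true, y_prob) if y == 1]
    non_events = [p for y, p in zip(y_true, y_prob) if y == 0]
    concordant = sum(
        1.0 if p > q else 0.5 if p == q else 0.0
        for p in events
        for q in non_events
    )
    return concordant / (len(events) * len(non_events))


def average_precision(y_true, y_prob):
    """Average of the precision values obtained at the rank of each event,
    with patients ranked from highest to lowest predicted probability."""
    ranked = sorted(zip(y_prob, y_true), key=lambda pair: pair[0], reverse=True)
    events_seen = 0
    precision_sum = 0.0
    for rank, (_, y) in enumerate(ranked, start=1):
        if y == 1:
            events_seen += 1
            precision_sum += events_seen / rank
    return precision_sum / events_seen


# Toy example (hypothetical values): two events, two non-events.
y_true = [0, 0, 1, 1]
y_prob = [0.10, 0.40, 0.35, 0.80]

toy_auroc = auroc(y_true, y_prob)            # 3 of 4 pairs are concordant
toy_auprc = average_precision(y_true, y_prob)
toy_brier = brier_score(y_true, y_prob)
```

The pairwise AUROC is quadratic in the number of patients, so it is suitable only for small illustrations; rank-based implementations are preferable for datasets of the size analyzed here.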

We also assessed calibration using the reliability measure, defined as the sum of MSE between the predicted probability and observed rate at each decile, with lower values corresponding to better calibration16. Reliability is more sensitive in capturing deviations of the predicted risks from the true rates than the calibration slope is. Resolution is the MSE between the deciles of predicted risks and the event rate of the entire cohort. Therefore, higher values of resolution indicate prediction across greater distances from the observed event rate and indicate models with better performance17. We also generated a continuous calibration plot showing model calibration over a wide range of risks using cubic spline smoothers. In contrast to the commonly used calibration plots with decile-based risk stratification, a continuous calibration plot offers an estimation of calibration across a continuum of predicted risk18.
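Reliability and resolution can be sketched as the binned terms of the standard Brier score decomposition. The version below is an assumed formulation, not the study code: bins are equal-sized groups of patients ordered by predicted risk, each bin is weighted by its share of patients, and resolution compares each bin's observed event rate with the overall event rate.

```python
def reliability_resolution(y_true, y_prob, n_bins=10):
    """Return (reliability, resolution) over equal-sized risk bins.

    reliability: weighted squared gap between mean predicted risk and
                 observed event rate in each bin (lower is better).
    resolution:  weighted squared gap between each bin's observed event rate
                 and the overall event rate (higher is better).
    """
    n = len(y_true)
    order = sorted(range(n), key=lambda i: y_prob[i])
    overall_rate = sum(y_true) / n

    reliability = 0.0
    resolution = 0.0
    for b in range(n_bins):
        idx = order[b * n // n_bins:(b + 1) * n // n_bins]
        if not idx:
            continue  # fewer patients than bins
        mean_predicted = sum(y_prob[i] for i in idx) / len(idx)
        observed_rate = sum(y_true[i] for i in idx) / len(idx)
        weight = len(idx) / n
        reliability += weight * (mean_predicted - observed_rate) ** 2
        resolution += weight * (observed_rate - overall_rate) ** 2
    return reliability, resolution


# Toy example (hypothetical values) with two bins that are perfectly
# calibrated: predicted 25% with 1 of 4 events, predicted 75% with 3 of 4.
y_prob = [0.25] * 4 + [0.75] * 4
y_true = [0, 0, 0, 1, 1, 1, 1, 0]
toy_reliability, toy_resolution = reliability_resolution(y_true, y_prob, n_bins=2)
```

In the toy data the reliability term is zero because predicted and observed rates agree in both bins, while the resolution term is positive because the two bins are separated from the overall event rate of 50%.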

Clinical interpretability

To provide clinically interpretable information beyond model performance metrics, we evaluated how many cases were re-stratified according to the predicted risk generated by the base model (logistic regression with preoperative variables without variable selection) and the model with the best performance. This step was performed on a randomly selected split of the data without multiple iterations. Risk strata were defined a priori based on authors’ consensus as clinically relevant cutoffs. We applied the same threshold for outcomes with similar incidences (mortality, renal failure, and stroke as <1%, 1–3%, 3–5%, 5–10%, and >10%). We reported proportions of cases with underestimated or overestimated risks compared to the true event incidence for the base and best models. We also made this comparison between pre+intraoperative variable models fitted with logistic regression and XGBoost. We elected not to use the net reclassification index for its susceptibility to yield false-positive results when using large datasets19.
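A shift table of this kind can be sketched as a cross-tabulation of the risk strata assigned by two models. The code below is illustrative only. The cutoffs are those stated above for mortality, renal failure, and stroke, but the rule used to label a cell as underestimated or overestimated (the observed event rate of the cell falls in a higher or lower stratum than the one the model assigned) is an assumption, and all names and toy values are hypothetical.

```python
from bisect import bisect_right
from collections import defaultdict

# Stratum boundaries from the text: <1%, 1-3%, 3-5%, 5-10%, >10%.
CUTOFFS = [0.01, 0.03, 0.05, 0.10]
LABELS = ["<1%", "1-3%", "3-5%", "5-10%", ">10%"]


def stratum(risk):
    """Index of the risk stratum (0 = lowest) for a probability in [0, 1]."""
    return bisect_right(CUTOFFS, risk)


def shift_table(base_risks, new_risks, events):
    """Cross-tabulate patients by (base stratum, new stratum).

    Returns {(base, new): (n_patients, n_events)}.
    """
    cells = defaultdict(lambda: [0, 0])
    for base, new, event in zip(base_risks, new_risks, events):
        cell = cells[(stratum(base), stratum(new))]
        cell[0] += 1
        cell[1] += event
    return {key: tuple(value) for key, value in cells.items()}


def count_misestimated(table, model):
    """Return (underestimated, overestimated) patient counts for one model.

    model=0 scores the base model and model=1 the comparison model.
    Assumed rule: a cell is misestimated when its observed event rate falls
    in a different stratum than the one the model assigned.
    """
    under = over = 0
    for key, (n, n_events) in table.items():
        observed = stratum(n_events / n)
        if key[model] < observed:
            under += n
        elif key[model] > observed:
            over += n
    return under, over


# Toy data (hypothetical): the comparison model moves two patients who died
# from the 1-3% stratum to the >10% stratum.
base_risks = [0.02, 0.02, 0.02, 0.02]
new_risks = [0.20, 0.20, 0.02, 0.02]
events = [1, 1, 0, 0]

table = shift_table(base_risks, new_risks, events)
base_under, base_over = count_misestimated(table, model=0)
new_under, new_over = count_misestimated(table, model=1)
```

With so few patients the observed rates are extreme, so the toy counts only demonstrate the bookkeeping, not realistic proportions.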

To understand which intraoperative variables may be related to changes in patients’ predicted risk, we fitted logistic regression using both pre and intraoperative variables over the entire dataset to estimate the coefficient, odds ratio, and 95% confidence interval of each variable in its relationship to operative mortality. For the XGBoost model, we used the ‘feature_importances_’ attribute of the fitted model, which is built into the XGBoost package, to rank the input variables in order of importance in fitting the particular model. Additionally, we evaluated two patients whose predicted probabilities of mortality were discrepant between the logistic regression and XGBoost approaches to gain further insights into how different phenotypes are handled by each algorithm.
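The conversion from a logistic regression coefficient to an odds ratio with a confidence interval, and the ranking of variables by importance score, can be sketched as follows. The Wald-type interval is an assumption because the interval method is not stated, the coefficient and standard error are hypothetical, and the importance scores are the five highest intraoperative values reported in Table 4.

```python
import math


def odds_ratio_with_ci(beta, standard_error, z=1.96):
    """Odds ratio and Wald-type 95% confidence interval from a logistic
    regression coefficient (log-odds) and its standard error."""
    odds_ratio = math.exp(beta)
    lower = math.exp(beta - z * standard_error)
    upper = math.exp(beta + z * standard_error)
    return odds_ratio, lower, upper


# Hypothetical coefficient: log-odds of ln(2) with a standard error of 0.1.
example_or, example_lower, example_upper = odds_ratio_with_ci(
    beta=math.log(2.0), standard_error=0.1
)

# Importance scores for five intraoperative variables, taken from Table 4.
importance = {
    "Red cell transfusion": 122.8,
    "Plasma transfusion": 291.7,
    "Intraoperative IABP use": 181.9,
    "Any transfusion": 219.9,
    "Internal thoracic artery use": 50.0,
}

# Rank variables from most to least important.
ranked = sorted(importance.items(), key=lambda item: item[1], reverse=True)
ranked_names = [name for name, _ in ranked]
```

An interval that includes 1.0 corresponds to a coefficient whose interval includes zero on the log-odds scale.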

Data preprocessing and statistical analysis were implemented with Python (version 2.7) and the open-source packages available in Scikit-Learn20. Three authors (MM, TJD, and CH) had access to the data, did the coding, and take responsibility for the analyses. The final code was reviewed independently by one author (AC) for quality assurance.

RESULTS

Among the 378,572 hospitalizations for isolated CABG, the mean (standard deviation [SD]) patient age was 65.3 (10.2) years. Women comprised 93,425 (24.7%) of the cohort. Operative mortality, excluding intraoperative death, occurred in 1.9%. Permanent stroke occurred in 1.3%, renal failure in 2.1%, prolonged ventilation in 8.1%, reoperation for any reason in 3.5%, and DSWI in 0.3%. The composite event rate of the above adverse events was 12.1% (Table 2). Intraoperative variables are summarized in Supplemental Table III.

Table 2:

Incidences of Postoperative Events

| Events | N=378,572 |
|---|---|
| Operative mortality | 1.88% |
| Permanent stroke | 1.31% |
| Renal failure | 2.11% |
| Prolonged ventilation | 8.05% |
| Reoperation | 3.47% |
| DSWI | 0.30% |
| Composite morbidity and mortality | 12.08% |

DSWI = deep sternal wound infection

Preoperative, Intraoperative, Pre+Intraoperative variables

Comparing models that used only preoperative or only intraoperative variables, AUROC values were better with the preoperative variable set than with the intraoperative variable set for all outcomes except reoperation. In all outcomes, models using pre+intraoperative variables had better AUROC than the respective models using either the intraoperative or the preoperative variable set alone. This relationship also held for Brier score and AUPRC in all outcomes, except for DSWI, in which there were no substantial differences in Brier score between the 3 variable sets (Figure 2). Other performance metrics are summarized in Supplemental Table III.

Figure 2:

Model performances for mortality and renal failure. The figure summarizes model performances for mortality and renal failure evaluated by 3 metrics. Circled red triangles represent the baseline models, which are logistic regression models using preoperative variables only without further variable selection. * indicates the model with the best performance within the same variable set. For all metrics, the right end of the x-axis is better and the left end is worse. For example, for operative mortality, the XGBoost model using pre+intraoperative variables without variable selection had the best performance in c-statistic, Brier score, and the area under the precision-recall curve (AUPRC).

Logistic regression vs. XGBoost

Among the models using pre+intraoperative variables, XGBoost without prior variable selection had the best AUROC, Brier score, and AUPRC values in 4 of the 7 outcomes (mortality, renal failure, prolonged ventilation, and composite) compared with logistic regression models with or without variable selection. For DSWI, the logistic regression model with variable selection had the best performance in all 3 metrics. For reoperation and stroke, the model with the best performance varied across the metrics: discrimination (AUROC and AUPRC) was better in logistic regression models, while calibration (Brier score) was better in the XGBoost model (Figure 2 for mortality and renal failure, Supplemental Table IV for other outcomes). The Supplemental methods describe the phenotypes of two patients whose predicted risk of mortality differed substantially between the XGBoost and logistic regression models.

Calibration plot

The continuous calibration plots demonstrated that, for all outcomes, the logistic regression model tended to underestimate patient risk at extremely high risks compared with the observed event rate (Figure 3). Calibration plots including the confidence band and outcomes other than mortality and renal failure are summarized in Supplemental Figures I and II. Calibration was generally good in the risk range where the majority of patients resided. For all combinations of outcomes and variable sets (preoperative only or pre+intraoperative variables), XGBoost models showed better calibration across a broader range of risks compared with the logistic regression model.

Figure 3:

Continuous calibration plot for mortality and renal failure. The figure shows continuous calibration plots for mortality (left) and renal failure (right). Red lines are XGBoost and blue lines are logistic regression model calibrations. Dotted lines are models using preoperative variables only and solid lines are models using pre+intraoperative variables. The black line represents perfect calibration. The legend shows the percentage of the cohort that had a predicted event probability above the indicated threshold. For example, for operative mortality, 2.3% of the patients had a predicted probability of operative mortality >10%.

Risk re-stratification

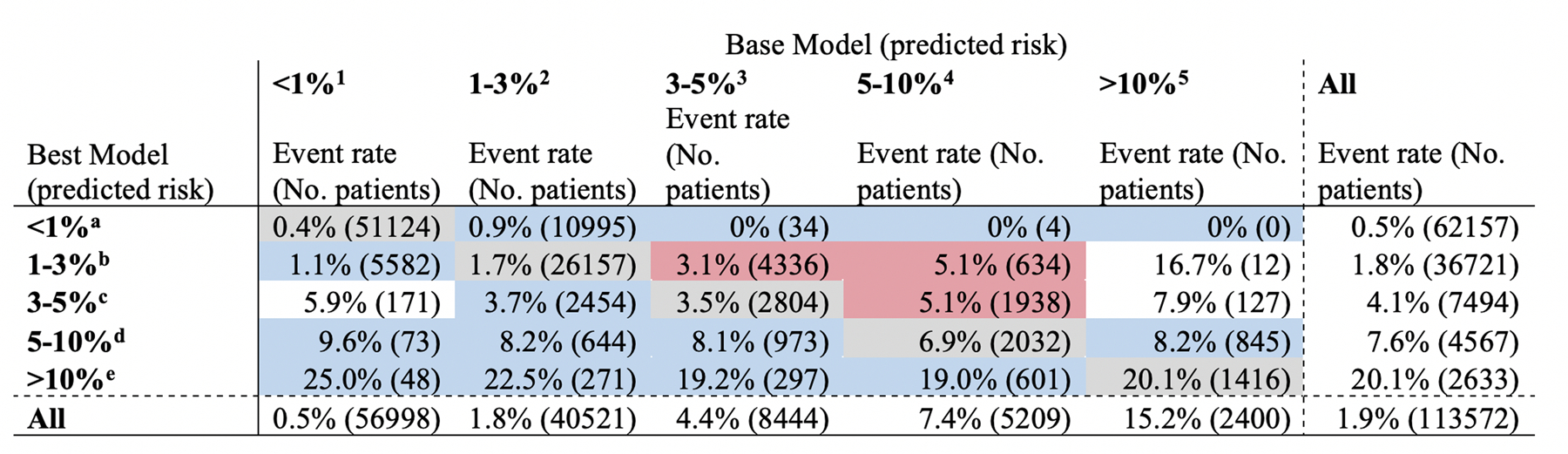

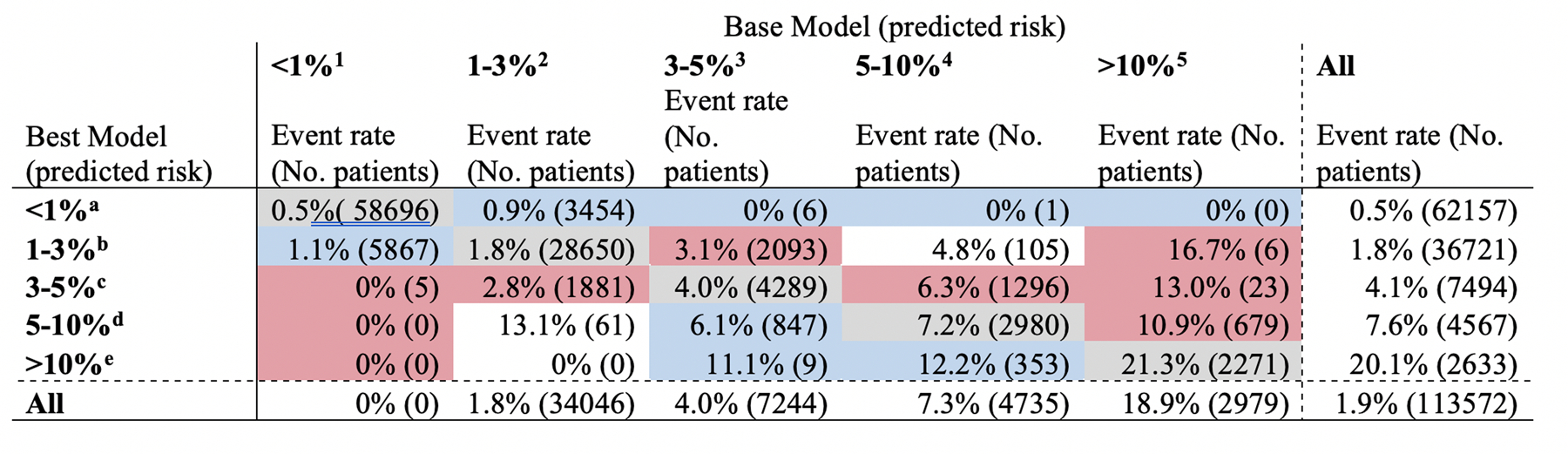

The shift table of risk strata was created to compare the mortality risk stratification by the baseline model (preoperative variable set with logistic regression without variable selection) and the model that performed optimally (preoperative and intraoperative variables with XGBoost without variable selection). For mortality, this showed that the baseline model underestimated the risk in 11,114 patients (9.8%) and overestimated it in 12,005 patients (10.6%). In contrast, the best model underestimated the risk in 7,218 patients (6.4%) and overestimated it in 0 patients (0%) (Figure 4). Comparing models fitted over pre+intraoperative variables, logistic regression without variable selection underestimated the risk in 7,137 patients (6.3%) and overestimated it in 3,566 patients (3.1%), while XGBoost without variable selection underestimated the risk in 4,263 patients (3.8%) and overestimated it in 1,886 patients (1.7%) (Figure 5). Therefore, using the same set of predictors for mortality, the XGBoost model yielded 43% fewer misclassifications in risk compared with the logistic regression model (6,149 vs. 10,703 patients). For renal failure, using pre+intraoperative predictors, the XGBoost model yielded 112% fewer misclassifications in risk than the logistic regression model (Supplemental Table II).
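The relative reduction in misclassified patients for mortality can be recomputed from the counts reported for the two pre+intraoperative models. The short check below uses only those four counts; the variable names are illustrative.

```python
# Misclassified patients reported for the pre+intraoperative mortality models.
lr_under, lr_over = 7137, 3566      # logistic regression
xgb_under, xgb_over = 4263, 1886    # XGBoost

lr_total = lr_under + lr_over
xgb_total = xgb_under + xgb_over

# Fraction of logistic regression misclassifications avoided by XGBoost.
relative_reduction = (lr_total - xgb_total) / lr_total
relative_reduction_pct = round(relative_reduction * 100, 1)
```

A relative reduction computed this way is bounded above by 100%, which is reached only when the comparison model misclassifies no patients.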

Figure 4:

Shift table of predicted risk for operative mortality: Logistic regression with preoperative variables vs. XGBoost with pre+intraoperative variables. The figure shows the predicted risk of operative mortality by the base model (logistic regression using preoperative variables without variable selection) and the best model (XGBoost using pre+intraoperative variables without variable selection). The actual observed mortality rate is indicated by the %, and numbers in parentheses indicate the number of all patients in each predicted risk stratum. Gray cells are those classified in the same stratum by both models. The base model underestimated 11,114 patients (9.8%) and overestimated 12,005 patients (10.6%). The best model underestimated 7,218 patients (6.4%) and overestimated 0 patients (0%).

*1–5 denotes cell location by the column and a-e denotes cell location by the row.

Figure 5:

Shift table of predicted risk for operative mortality: Logistic regression with pre+intraoperative variables vs. XGBoost with pre+intraoperative variables. The figure shows the predicted risk of operative mortality by logistic regression and by XGBoost, both using pre+intraoperative variables without variable selection. The actual observed mortality rate is indicated by the %, and numbers in parentheses indicate the number of all patients in each predicted risk stratum. Gray cells are those classified in the same stratum by both models. The logistic regression model underestimated 7,137 patients (6.3%) and overestimated 3,566 patients (3.1%). The XGBoost model underestimated 4,263 patients (3.8%) and overestimated 1,886 patients (1.7%).

*1–5 denotes cell location by the column and a-e denotes cell location by the row.

Examples of patients with large discrepancies between the logistic regression and XGBoost models follow. A 42-year-old man with minimal comorbidity who underwent emergent 3-vessel CABG received 23 units of red blood cells and 16 units of plasma; XGBoost and logistic regression predicted a 44% and 6% chance of death, respectively. A 70-year-old man underwent salvage 5-vessel CABG with intraoperative IABP placement; the XGBoost and logistic regression models predicted his risk of mortality to be 30% and 10%, respectively. In a case where logistic regression predicted a substantially higher mortality probability, a 52-year-old woman with minimal comorbidity undergoing elective 5-vessel CABG required an IABP during the operation and had a lowest body temperature of 27°C. This patient had a predicted mortality of 6% by XGBoost and 20% by logistic regression.

Intraoperative variables associated with the outcomes

By fitting a logistic regression model for operative mortality using the pre+intraoperative variable set, we identified intraoperative variables that improved the prediction of operative mortality. These included undergoing full sternotomy, intraoperative intraaortic balloon pump (IABP) use, lack of left internal mammary artery use, number of distal anastomoses performed, appropriate type and timing of antibiotic use, lowest body temperature, highest glucose and lowest hemoglobin level, cardioplegia route and type, blood product use, postoperative residual tricuspid regurgitation and ejection fraction, and total case time (Table 3). Intraoperative variables identified as important features in the XGBoost model were similar, including intraoperative IABP use, blood product use, timing and redosing of antibiotics, lowest body temperature, highest glucose and lowest hemoglobin level, cardioplegia route, postoperative valvular insufficiency, incision approach, and total case time. Among intraoperative variables, the relative importance score for the XGBoost model was the highest for plasma transfusion (score of 292), followed by any transfusion (220), intraoperative IABP use (182), red cell transfusion (123), and lack of internal mammary artery use (50) (Table 4).

Table 3:

Odds ratio estimate for variables selected by support vector machine in predicting mortality

| Variables | OR | 2.50% | 97.50% |

|---|---|---|---|

| Preoperative | |||

| Body surface area | 2.464 | 2.011 | 3.019 |

| Shock | 2.396 | 2.144 | 2.677 |

| Dialysis | 2.066 | 1.67 | 2.556 |

| Severe lung disease | 2.013 | 1.852 | 2.188 |

| Salvage status | 2.004 | 1.712 | 2.345 |

| IABP or inotrope dependent | 1.661 | 1.539 | 1.791 |

| Creatinine (splined at 1.0) | 1.512 | 1.194 | 1.914 |

| CHF with NYHA III/IV | 1.477 | 1.36 | 1.604 |

| MI (within 24 hours) | 1.469 | 1.303 | 1.657 |

| Tricuspid insufficiency | 1.463 | 1.335 | 1.604 |

| Peripheral vascular disease | 1.439 | 1.356 | 1.526 |

| Moderate lung disease | 1.331 | 1.207 | 1.467 |

| CHF with NYHA I/II | 1.324 | 1.245 | 1.408 |

| Immunosuppressed | 1.309 | 1.173 | 1.461 |

| CVD without stroke | 1.293 | 1.198 | 1.397 |

| MI (within 21 days) | 1.232 | 1.163 | 1.304 |

| Mitral insufficiency | 1.206 | 1.121 | 1.299 |

| Urgent status | 1.145 | 1.076 | 1.219 |

| Insulin-dependent diabetes | 1.142 | 1.074 | 1.214 |

| Number of diseased vessels | 1.135 | 1.074 | 1.199 |

| CVD with stroke | 1.127 | 1.054 | 1.206 |

| Age (splined at 50) | 1.116 | 1.095 | 1.138 |

| Left main disease | 1.079 | 1.025 | 1.136 |

| Age*Case urgency | 1.023 | 1.019 | 1.028 |

| Age*Reoperative status | 1.011 | 1.006 | 1.017 |

| Age (splined at 60) | 1.003 | 0.989 | 1.017 |

| Ejection fraction | 0.982 | 0.978 | 0.987 |

| Age | 0.933 | 0.924 | 0.943 |

| *Creatinine | 0.896 | 0.722 | 1.111 |

| Intraoperative | |||

| Intraoperative IABP | 4.419 | 4.054 | 4.816 |

| Any blood products used | 1.168 | 1.095 | 1.247 |

| pRBC (number of units used) | 1.122 | 1.103 | 1.142 |

| Intraoperative TEE performed | 1.093 | 1.027 | 1.163 |

| FFP (number of units used) | 1.066 | 1.04 | 1.092 |

| Highest intraoperative glucose level | 1.002 | 1.002 | 1.002 |

| Skin-to-skin time | 1.002 | 1.002 | 1.002 |

| Lowest body temperature | 0.98 | 0.97 | 0.989 |

| Blood cardioplegia use | 0.927 | 0.868 | 0.99 |

| Lowest intraoperative hemoglobin level | 0.924 | 0.909 | 0.94 |

| Number of distal anastomosis | 0.902 | 0.878 | 0.928 |

| Postop EF (increased) | 0.88 | 0.82 | 0.945 |

| LIMA used | 0.844 | 0.781 | 0.911 |

| *Appropriate antibiotics used | 0.844 | 0.696 | 1.022 |

| Antegrade cardioplegia | 0.822 | 0.764 | 0.884 |

| Postop tricuspid regurgitation (None) | 0.812 | 0.75 | 0.879 |

| Appropriate timing of antibiotics use | 0.793 | 0.65 | 0.967 |

| Antegrade and retrograde cardioplegia | 0.783 | 0.727 | 0.843 |

| Postop tricuspid regurgitation (trace/trivial) | 0.782 | 0.718 | 0.851 |

| Full sternotomy | 0.779 | 0.64 | 0.949 |

| Planned use of combination CPB | 0.585 | 0.474 | 0.721 |

*Indicates variables with a confidence interval crossing 1.0.
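As a reading aid for the odds ratios above: each odds ratio is the exponentiated logistic regression coefficient, so per-unit ratios compound multiplicatively across units. The following Python sketch illustrates that arithmetic with values taken from the table and the 1.9% operative mortality reported in this study. The function names are hypothetical, the 95% normal-approximation interval (z = 1.96) is an assumption, and applying an adjusted odds ratio to a cohort-wide baseline ignores every other covariate, so the outputs are illustrative only and are not model predictions.

```python
import math


def odds_ratio_for_units(per_unit_or: float, units: float) -> float:
    """Odds ratio implied by `units` increments of a continuous predictor."""
    return per_unit_or ** units


def apply_odds_ratio(baseline_risk: float, odds_ratio: float) -> float:
    """Convert a baseline probability to odds, scale by the OR, convert back."""
    odds = baseline_risk / (1.0 - baseline_risk)
    new_odds = odds * odds_ratio
    return new_odds / (1.0 + new_odds)


def coefficient_and_se(or_point: float, ci_low: float, ci_high: float,
                       z: float = 1.96) -> tuple:
    """Recover the log-odds coefficient and an approximate standard error.

    Assumes the reported interval is a symmetric normal-approximation
    interval on the log-odds scale (an assumption, not stated in the table).
    """
    beta = math.log(or_point)
    se = (math.log(ci_high) - math.log(ci_low)) / (2.0 * z)
    return beta, se


# pRBC: OR 1.122 per unit, so three units compound to 1.122 ** 3.
prbc_3_units = odds_ratio_for_units(1.122, 3)

# Intraoperative IABP (OR 4.419) applied to the cohort-wide 1.9% mortality.
risk_with_iabp = apply_odds_ratio(0.019, 4.419)

# Coefficient and standard error implied by the IABP row of the table.
beta_iabp, se_iabp = coefficient_and_se(4.419, 4.054, 4.816)

print(round(prbc_3_units, 3), round(risk_with_iabp, 3))
print(round(beta_iabp, 3), round(se_iabp, 3))
```

The same compounding applies to protective factors: an odds ratio below 1 shrinks the odds with each additional unit.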

Table 4:

Variables in order of importance in the XGBoost model for mortality

| Intraoperative variables | Importance score |

|---|---|

| Plasma transfusion | 291.7 |

| Any transfusion | 219.9 |

| Intraoperative IABP use | 181.9 |

| Red cell transfusion | 122.8 |

| Internal thoracic artery use | 50.0 |

| Lowest hemoglobin level | 40.2 |

| Postop ejection fraction | 39.3 |

| Highest glucose level | 34.7 |

| Surgery duration | 30.7 |

| Cardioplegia delivery approach | 27.2 |

| Postop tricuspid insufficiency | 15.4 |

| *Number of distal anastomoses | 15.0 |

| Postop mitral insufficiency | 14.1 |

| Unplanned use of combination CPB | 13.7 |

| Platelet transfusion | 13.6 |

| Tranexamic acid use | 11.3 |

| Postop aortic insufficiency | 10.1 |

| Operative approach (full/partial sternotomy, thoracotomy) | 9.1 |

| Cryoprecipitate transfusion | 8.5 |

| Lowest body temperature | 8.3 |

| Whether additional prophylactic antibiotic dose given | 7.9 |

| Timing of antibiotics dosing | 7.3 |

| Clotting factor administration | 5.9 |

| Preoperative variables | |

| Preop IABP or inotrope use | 193.2 |

| Shock | 166.0 |

| CHF*NYHA class | 165.9 |

| Peripheral vascular disease | 115.9 |

| Age*Status | 97.8 |

| Chronic lung disease | 92.3 |

| Tricuspid insufficiency | 89.7 |

| Mitral insufficiency | 85.8 |

| Ejection fraction | 85.2 |

| Age | 63.6 |

| Creatinine | 59.7 |

| Timing of myocardial infarction | 43.0 |

| Status (elective, urgent, emergent, salvage) | 40.2 |

| Insulin-dependent diabetes | 19.5 |

| Sex*body surface area | 18.9 |

| Age*Redo sternotomy | 17.3 |

| Number of diseased vessels | 16.8 |

| Body surface area | 14.1 |

| Stroke | 13.8 |

| PCI within 6 hours | 12.0 |

| Left main disease | 11.6 |

| Immunosuppressed status | 10.4 |

| Hypertension | 10.4 |

| Atrial fibrillation | 9.9 |

| Aortic stenosis | 9.9 |

| Transient ischemic attack | 8.6 |

| Race | 7.5 |

| Ethnicity | 6.0 |

IABP = intraaortic balloon pump; CPB = cardiopulmonary bypass; CHF = congestive heart failure; NYHA = New York Heart Association; PCI = percutaneous coronary intervention.

*Number of anastomoses was treated as a continuous variable.

DISCUSSION

Using the national STS ACSD for isolated CABG, we demonstrated that including intraoperative variables improved the prediction of postoperative events across all outcomes, although the gain was small for some outcomes. The findings were consistent across our two analytic approaches, though the XGBoost algorithm improved model performance slightly more than logistic regression did. This study highlights the potential value of including intraoperative variables and using machine learning approaches to predict the risk of adverse events after surgery.

Our study adds to the current literature in several ways. First, the existing STS ACSD models use only variables that are available before the operation, which are predominantly patient characteristics. While this is an appropriate approach for a tool intended to characterize surgeon and hospital performance and to support operative decision making, the potential value of intraoperative information had yet to be demonstrated conclusively. Our work showed a sizable, consistent gain in model performance from adding the intraoperative variables. If such a model were integrated with an electronic health record system and the pertinent variables were mapped, it may be possible to implement a dynamic risk model that updates the predicted risk for individual patients as the data become available. Our work can serve as a prototype for future endeavors.

Second, although machine learning models have been evaluated in large clinical registries, they have not been applied to the contemporary national STS ACSD with its extensive number of variables, and the potential advantage of a machine learning approach applied to this commonly used dataset had been unknown. Our work demonstrated that a performance gain occurred with the XGBoost approach compared with the logistic regression counterparts in 4 of the 7 evaluated outcomes. Even when gains occurred, those measured by AUROC, Brier score, and AUPRC were small. However, when evaluating risk re-stratification across the pre-specified risk strata, the XGBoost model showed more accurate stratification. For example, for operative mortality, the logistic regression model overestimated risk in 10.6% of the patients, while the XGBoost model overestimated risk in 0%. In examining several patients whose predicted probability of mortality differed between the XGBoost and logistic regression models, we noted that discrepancies may occur in patients with extreme observed values in variables that are key predictors of the event. This may be because the algorithms process extreme values differently and because larger predicted risks tend to have larger error margins.
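For readers less familiar with the three summary metrics named above, the following minimal Python sketch computes each one on a toy set of predictions. It is not the code used in this study: the function names and the toy data are hypothetical, the c-statistic is computed by direct pairwise comparison (adequate for small samples only), and average precision is used as one common estimator of AUPRC, which may differ from the estimator the authors applied.

```python
def brier_score(y_true, y_prob):
    """Mean squared difference between predicted risk and the 0/1 outcome."""
    return sum((p - y) ** 2 for y, p in zip(y_true, y_prob)) / len(y_true)


def c_statistic(y_true, y_prob):
    """Share of (event, non-event) pairs in which the event has the higher
    predicted risk; tied predictions count as half. Equivalent to AUROC."""
    events = [p for y, p in zip(y_true, y_prob) if y == 1]
    non_events = [p for y, p in zip(y_true, y_prob) if y == 0]
    if not events or not non_events:
        raise ValueError("need at least one event and one non-event")
    concordant = 0.0
    for p_event in events:
        for p_non in non_events:
            if p_event > p_non:
                concordant += 1.0
            elif p_event == p_non:
                concordant += 0.5
    return concordant / (len(events) * len(non_events))


def average_precision(y_true, y_prob):
    """Mean of the precision values at the rank of each observed event,
    ranking patients from highest to lowest predicted risk.
    Tied predictions keep their input order (no special tie handling)."""
    n_events = sum(y_true)
    if n_events == 0:
        raise ValueError("need at least one event")
    ranked = sorted(zip(y_prob, y_true), key=lambda pair: pair[0], reverse=True)
    hits = 0
    total = 0.0
    for rank, (_, y) in enumerate(ranked, start=1):
        if y == 1:
            hits += 1
            total += hits / rank
    return total / n_events


# Hypothetical toy data: six patients, two events.
y_true = [0, 0, 0, 1, 0, 1]
y_prob = [0.01, 0.02, 0.05, 0.10, 0.20, 0.40]

brier = brier_score(y_true, y_prob)
c_stat = c_statistic(y_true, y_prob)
ap = average_precision(y_true, y_prob)
print(brier, c_stat, ap)
```

Because the c-statistic depends only on the ranking of patients while the Brier score also penalizes miscalibrated magnitudes, two models can have nearly identical c-statistics yet differ in how patients fall across risk strata.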

The STS ACSD, despite being one of the most extensive clinical registries in cardiac surgery, constrains variables at the time of data collection, so that the majority of the variables are categorized and only a small fraction are retained as continuous variables. This likely limited the performance of the XGBoost algorithm, as a strength of the algorithm lies in better handling of extensive interactions between continuous variables in a high-dimensional space8. Therefore, the dataset likely did not allow the machine learning algorithm to realize its full potential in improving prediction, which emphasizes the importance of future work leveraging the rich health data that exist in electronic medical record systems21.

Additionally, a continuous calibration plot suggested that the XGBoost model may improve the prediction for those at extremely high risk in all outcomes. Although the confidence interval is wide in the high-risk ranges because most events have low incidence, the XGBoost model consistently followed the line of ideal calibration more closely than the logistic regression counterparts did. This may have implications for better estimating the risk of patients at extreme risk, whom surgeons encounter rarely and whose risk may not have been predicted as well by existing models.
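The comparison of predicted and observed risk described above can be approximated in discrete form by grouping patients into risk strata. The Python sketch below is a simplified stand-in for a continuous calibration plot, not the method used in this study: the function name, the cut points, and the toy data are all hypothetical, and the pre-specified strata used in the analysis are not reproduced here.

```python
from bisect import bisect_right


def calibration_by_stratum(y_true, y_prob, cut_points):
    """Summarize mean predicted risk and observed event rate per risk stratum.

    `cut_points` must be sorted in ascending order. A prediction equal to a
    cut point is assigned to the higher stratum, so each stratum covers
    [lower, upper). Empty strata are omitted from the result.
    """
    n_strata = len(cut_points) + 1
    counts = [0] * n_strata
    sum_predicted = [0.0] * n_strata
    events = [0] * n_strata
    for y, p in zip(y_true, y_prob):
        idx = bisect_right(cut_points, p)
        counts[idx] += 1
        sum_predicted[idx] += p
        events[idx] += y
    rows = []
    for idx in range(n_strata):
        if counts[idx] == 0:
            continue
        mean_predicted = sum_predicted[idx] / counts[idx]
        observed_rate = events[idx] / counts[idx]
        rows.append({
            "stratum": idx,
            "n": counts[idx],
            "mean_predicted": mean_predicted,
            "observed_rate": observed_rate,
            # Positive gap: the model overestimates risk in this stratum.
            "gap": mean_predicted - observed_rate,
        })
    return rows


# Hypothetical cut points and toy data, for illustration only.
cut_points = [0.05, 0.20]
y_true = [0, 0, 0, 1, 0, 1, 1, 0]
y_prob = [0.01, 0.02, 0.04, 0.10, 0.15, 0.30, 0.50, 0.60]

rows = calibration_by_stratum(y_true, y_prob, cut_points)
for row in rows:
    print(row)
```

With few events in the highest stratum, the observed rate is unstable, which mirrors the wide confidence intervals noted at high-risk ranges.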

This study has several implications. First, as predictions appear to improve when variables from multiple phases of care (preoperative and intraoperative) are incorporated, data acquisition, processing, and output platforms must evolve to shorten the time gap between the origination of the data and the output of the prediction if this improved prediction is to have clinical impact21, 22. Given the large number of variables used, potential implementation solutions likely require integration of the prediction algorithm into the electronic medical record system. With appropriate data mapping, the model may continue to update its prediction as variables become available in the electronic medical record system. Second, as XGBoost may yield better prediction, not only by the conventional performance metrics but also by risk re-stratification, especially for those at extremely high risk, widely used prediction models may benefit from adopting such a modeling approach.
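One way to picture a prediction that updates as variables become available is to keep one model per phase of care and score each patient with the richest model whose inputs are all present. The Python sketch below illustrates that idea under stated assumptions: the class and function names, the variable names, and every coefficient are hypothetical placeholders rather than values estimated in this study, and logistic models are used only because they are compact to write down.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class LogisticModel:
    """A logistic model defined by an intercept and log-odds coefficients."""
    name: str
    intercept: float
    coefficients: dict  # variable name -> log-odds coefficient

    def can_score(self, record: dict) -> bool:
        """True when every input the model needs is present in the record."""
        return all(record.get(var) is not None for var in self.coefficients)

    def predict(self, record: dict) -> float:
        z = self.intercept + sum(
            beta * record[var] for var, beta in self.coefficients.items()
        )
        return 1.0 / (1.0 + math.exp(-z))


def current_risk(models, record):
    """Score with the first model (ordered richest to simplest) whose
    inputs are all available; return the model name and predicted risk."""
    for model in models:
        if model.can_score(record):
            return model.name, model.predict(record)
    raise ValueError("no model can be scored with the available variables")


# Hypothetical coefficients, for illustration only.
pre_only = LogisticModel("preoperative", -6.0, {"age_decades": 0.3})
pre_plus_intra = LogisticModel(
    "pre+intraoperative", -6.2, {"age_decades": 0.3, "prbc_units": 0.115}
)
models = [pre_plus_intra, pre_only]  # richest model first

# Before surgery only the preoperative variable is mapped.
record = {"age_decades": 6.5, "prbc_units": None}
name_before, risk_before = current_risk(models, record)

# Once the transfusion count is recorded, the richer model takes over.
record["prbc_units"] = 2
name_after, risk_after = current_risk(models, record)

print(name_before, risk_before)
print(name_after, risk_after)
```

Keeping separate models per phase, rather than imputing missing intraoperative values, matches the way this study fitted preoperative-only and pre+intraoperative models, although the selection logic itself is an assumption of the sketch.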

Our analysis brought forth several intraoperative variables that were associated with adverse outcomes. For mortality, appropriate timing of antibiotic use and the use of antegrade cardioplegia, compared with retrograde cardioplegia alone, were associated with lower odds of operative mortality. As has been demonstrated, intraoperative body temperature and glucose levels were also predictive of mortality risk. As we did not evaluate such relationships in a rigorous causal inference framework, future work should determine whether they are markers or mediators of adverse outcomes. For example, the observation that transfusions are associated with increased risk of mortality may reflect the severe conditions that require transfusion, rather than an effect of the transfusion itself on short-term mortality. Similarly, the inability to use the left internal mammary artery may represent surgeon-specific or process-related risk rather than an immediate physiologic effect on mortality. Evaluating causal pathways for the intraoperative variables will likely improve the dynamic prediction of risk.

Finally, while not the main aim of this study, both XGBoost and logistic regression models yielded similar sets of variables that were deemed important or statistically significant for the prediction. These include preoperative hemodynamic acuity, transfusion-related variables, peak intraoperative glucose level, and intraoperative echocardiographic findings.

Limitations

Our study shares the limitations of the STS ACSD risk models in that the models were developed from a dataset of those who underwent the operation. Therefore, even the models using only the preoperative variables do not encompass the entire population of potential operative candidates, only some of whom will undergo the operation. Additionally, to evaluate intraoperative predictors, we excluded intraoperative deaths. Although this was an extremely small population, this difference in the definition of operative mortality and, consequently, the slight difference in event incidences compared with the STS ACSD risk model should be acknowledged. Tree-based models, including the XGBoost models used in this study, do not yield covariate coefficients as logistic regression models do. This limits the interpretation of the relationship between covariates and outcomes in the way with which the clinical community is familiar. To aid the interpretability of our results, we provided lists of variables that were deemed important by the XGBoost models. Finally, the dataset was studied retrospectively, and although the phase of care to which each variable set belonged was informed by the STS ACSD data definition, we did not know which variables were actually available to clinicians preoperatively and intraoperatively.

CONCLUSIONS

In predicting 7 outcomes commonly used to measure cardiac surgical outcomes, the addition of intraoperative variables to preoperative variables resulted in improved predictions of all outcomes. For most outcomes, the XGBoost model performed better than its logistic regression counterparts, although the gain associated with the modeling technique was small when measured by calibration and discrimination metrics. Calibration plots and risk reclassification further demonstrated the potential advantage of the XGBoost approach. In an environment where high-dimensional data can be processed, risk models based on XGBoost may provide a better prediction of adverse events to guide clinical care.

What is Known

Perioperative outcomes of coronary artery bypass graft are currently predicted from information available preoperatively.

Whether the addition of intraoperative variables improves the predictive performance of the models and the optimal ways of handling the increased number of predictive variables are unknown.

What this study adds

This study demonstrated that the addition of intraoperative variables consistently and substantially improved the model performance in predicting 7 perioperative mortality and morbidity outcomes.

The use of the machine learning approach showed a small gain in the model performance compared with the logistic regression approach.

Acknowledgement

The data for this research were provided by the Society of Thoracic Surgeons’ National Database Participant User File Research Program. Data analysis was performed at the investigators’ institution.

Source of Funding

This publication was made possible by the Yale Clinical and Translational Science Award, grant UL1TR001863, from the National Center for Advancing Translational Science, a component of the National Institutes of Health.

Footnotes

Disclosures

In the past three years, Harlan Krumholz received expenses and/or personal fees from UnitedHealth, IBM Watson Health, Element Science, Aetna, Facebook, the Siegfried and Jensen Law Firm, Arnold and Porter Law Firm, Martin/Baughman Law Firm, F-Prime, and the National Center for Cardiovascular Diseases in Beijing. He is an owner of Refactor Health and HugoHealth, and had grants and/or contracts from the Centers for Medicare & Medicaid Services, Medtronic, the U.S. Food and Drug Administration, Johnson & Johnson, and the Shenzhen Center for Health Information.

Wade Schulz was an investigator for a research agreement, through Yale University, from the Shenzhen Center for Health Information for work to advance intelligent disease prevention and health promotion; collaborates with the National Center for Cardiovascular Diseases in Beijing; is a technical consultant to HugoHealth, a personal health information platform, and cofounder of Refactor Health, an AI-augmented data management platform for healthcare; is a consultant for Interpace Diagnostics Group, a molecular diagnostics company.

REFERENCES

- 1. Shahian DM, O’Brien SM, Filardo G, Ferraris VA, Haan CK, Rich JB, Normand SLT, DeLong ER, Shewan CM, Dokholyan RS, et al. The Society of Thoracic Surgeons 2008 cardiac surgery risk models: part 1--coronary artery bypass grafting surgery. Ann Thorac Surg 2009;88:S2–22. doi: 10.1016/j.athoracsur.2009.05.053

- 2. Shahian DM, Jacobs JP, Badhwar V, Kurlansky PA, Furnary AP, Cleveland JC, Lobdell KW, Vassileva C, Wyler von Ballmoos MC, Thourani VH, et al. The Society of Thoracic Surgeons 2018 Adult Cardiac Surgery Risk Models: Part 1-Background, Design Considerations, and Model Development. Ann Thorac Surg 2018;105:1411–1418. doi: 10.1016/j.athoracsur.2018.03.002

- 3. Aronson S, Stafford-Smith M, Phillips-Bute B, Shaw A, Gaca J, Newman M, Endeavors CAR. Intraoperative systolic blood pressure variability predicts 30-day mortality in aortocoronary bypass surgery patients. Anesthesiology 2010;113:305–12. doi: 10.1097/ALN.0b013e3181e07ee9

- 4. Celi LA, Marshall JD, Lai Y, Stone DJ. Disrupting Electronic Health Records Systems: The Next Generation. JMIR Med Inform 2015;3:e34. doi: 10.2196/medinform.4192

- 5. Miotto R, Li L, Kidd BA, Dudley JT. Deep Patient: An Unsupervised Representation to Predict the Future of Patients from the Electronic Health Records. Sci Rep 2016;6:26094.

- 6. Rajkomar A, Oren E, Chen K, et al. Scalable and accurate deep learning with electronic health records. NPJ Digit Med 2018;1:18.

- 7. Huang C, Murugiah K, Mahajan S, Li SX, Dhruva SS, Haimovich JS, Wang Y, Schulz WL, Testani JM, Wilson FP, Mena CI, et al. Enhancing the prediction of acute kidney injury risk after percutaneous coronary intervention using machine learning techniques: A retrospective cohort study. PLoS Med 2018;15:e1002703. doi: 10.1371/journal.pmed.1002703

- 8. Mortazavi BJ, Bucholz EM, Desai NR, Huang C, Curtis JP, Masoudi FA, Shaw RE, Negahban SN, Krumholz HM. Comparison of Machine Learning Methods With National Cardiovascular Data Registry Models for Prediction of Risk of Bleeding After Percutaneous Coronary Intervention. JAMA Netw Open 2019;2:e196835. doi: 10.1001/jamanetworkopen.2019.6835

- 9. Jacobs JP, Shahian DM, He X, O’Brien SM, Badhwar V, Cleveland JC Jr., Furnary AP, Magee MJ, Kurlansky PA, Rankin JS, et al. Penetration, Completeness, and Representativeness of The Society of Thoracic Surgeons Adult Cardiac Surgery Database. Ann Thorac Surg 2016;101:33–41. doi: 10.1016/j.athoracsur.2015.08.055

- 10. Shahian DM, Jacobs JP, Edwards FH, Brennan JM, Dokholyan RS, Prager RL, Wright CD, Peterson ED, McDonald DE, Grover FL. The society of thoracic surgeons national database. Heart 2013;99:1494–501. doi: 10.1136/heartjnl-2012-303456

- 11. The Society of Thoracic Surgeons Adult Cardiac Surgery Database Data Collection. https://www.sts.org/registries/sts-national-database/adult-cardiac-surgery-database. Jan 1, 2018. Accessed Jan 10, 2020.

- 12. Chen T, Guestrin C. XGBoost: A Scalable Tree Boosting System. Presented at: Proceedings of the 22nd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining; 2016; San Francisco, California, USA.

- 13. Hanley JA, McNeil BJ. The meaning and use of the area under a receiver operating characteristic (ROC) curve. Radiology 1982;143:29–36. doi: 10.1148/radiology.143.1.7063747

- 14. Davis J, Goadrich M. The relationship between Precision-Recall and ROC curves. Presented at: Proceedings of the 23rd international conference on Machine learning; 2006; Pittsburgh, Pennsylvania, USA.

- 15. Brier GW. Verification of Forecasts Expressed in Terms of Probability. Monthly Weather Review 1950;78:1–3. doi: 10.1175/1520-0493(1950)

- 16. Murphy AH. A New Vector Partition of the Probability Score. Journal of Applied Meteorology 1973;12:595–600. doi: 10.1175/1520-0450(1973)

- 17. Toth Z, Talagrand O, Zhu Y. The attributes of forecast systems: a general framework for the evaluation and calibration of weather forecasts. Predictability of Weather and Climate 584 (2006): 595.

- 18. Finazzi S, Poole D, Luciani D, Cogo PE, Bertolini G. Calibration belt for quality-of-care assessment based on dichotomous outcomes. PLoS One 2011;6:e16110. doi: 10.1371/journal.pone.0016110

- 19. Pepe MS, Fan J, Feng Z, Gerds T, Hilden J. The Net Reclassification Index (NRI): a Misleading Measure of Prediction Improvement Even with Independent Test Data Sets. Stat Biosci 2015;7:282–295. doi: 10.1007/s12561-014-9118-0

- 20. Pedregosa F. Scikit-learn: Machine Learning in Python. J Mach Learn Res 2011;12:2825–2830.

- 21. Mori M, Schulz WL, Geirsson A, Krumholz HM. Tapping Into Underutilized Healthcare Data in Clinical Research. Ann Surg 2019;270:227–229.

- 22. Vahi K, Harvey I, Samak T, Gunter D, Evans K, Rogers D, Taylor I, Goode M, Silva F, Al-Shakarchi E, et al. A Case Study into Using Common Real-Time Workflow Monitoring Infrastructure for Scientific Workflows. J Grid Comput 2013;11:381–406. doi: 10.1007/s10723-013-9265-4

- 23. Galloway CD, Valys AV, Shreibati JB, Treiman DL, Petterson FL, Gundotra VP, Albert DE, Attia ZI, Carter RE, Asirvatham SJ, et al. Development and Validation of a Deep-Learning Model to Screen for Hyperkalemia From the Electrocardiogram. JAMA Cardiol 2019;4:428–436. doi: 10.1001/jamacardio.2019.0640
