Highlights

- Intentional assessment design is essential for advanced practitioner courses.
- Using programmatic assessment enables learner agency and individualised learning.
- Aligning assessment longitudinally facilitates transparent achievement of advanced practitioner capabilities.

Keywords: Assessment, Advanced practice, Programmatic assessment, Radiation therapy, Pedagogy

Abstract

Creating meaningful assessment for advanced radiation therapy practice training programs is a challenge. This is because it requires a balance of formative and summative assessments, which meet the academic and professional needs of the practitioner, as well as the requirements of local service delivery, educational and professional standards. This paper discusses educational strategies and models used to integrate assessment into theoretical and clinical curricula, allowing practitioners to demonstrate higher order cognitive knowledge, advanced level clinical performance and attitudes/values associated with advanced practice. The discussion draws upon concepts of constructive alignment and programmatic approaches to assessment, which use Bloom’s taxonomy, Benner’s beginner to competent model of skill development, and Miller’s pyramid of clinical competence. These models are analysed with respect to an advanced practice program in adaptive radiation therapy to provide context.

Introduction

Assessment is a multifaceted and essential component of health professional higher education curricula, and it has been defined as “a systematic process to measure or evaluate the characteristics or performance of individuals, for drawing inferences” [1]. Beyond an evaluation of tasks and skills, assessment is used to verify that learners are demonstrating the required knowledge, skills and behaviours to perform clinical activities accurately and effectively, for safe patient care. Assessment may be formative or summative [2], [3], [4], [5], [6], and when used in combination, these enable demonstration of breadth and depth of cognitive knowledge and clinical performance.

Assessment approaches within academic courses are often designed to validate discrete areas of knowledge or skills and are reflective of topics which stand alone from one another, rather than forming a continuum through the course. These examples of ‘assessment of learning’ confirm the student is able to practise safely in the profession upon graduation; however, they do not consistently assess integration of theory, performance and attitudinal behaviours with a whole of program perspective. A more holistic and meaningful approach to assessment across the course continuum is essential in health professional programs, to enable learners to consistently demonstrate the broader range of expected capabilities and integrate these into clinical practice.

Internationally, health professional education course curricula and associated assessments are guided by performance standards, determined by regulatory authorities or professional organisations as appropriate to the jurisdiction. In Australia, entry to practice radiation therapy curricula are informed by the Medical Radiation Practice Board of Australia Professional Capabilities, and courses are evaluated with respect to Accreditation Standards which align with the professional capabilities [7], [8]. However, from the perspective of radiation therapy advanced practice in Australia, there are no published capabilities or competencies to inform course curricula and assessment. Instead, curriculum content is guided by the needs of the profession and workplaces, and assessment is framed to be meaningful for advanced practitioner radiation therapist (APRT) learning and expected outcomes. Additionally, there are seven characteristics of the advanced practitioner promulgated by the Australian Society of Medical Imaging and Radiation Therapy (ASMIRT) [9], which can be used to guide assessment outcomes, namely Professionalism; Expert Communication; Collaboration; Scholarship and Teaching; Clinical Expertise; Evidence-based Judgement; and Clinical Leadership.

This paper describes an intentional approach used to meet the challenges of creating meaningful assessment strategies, to allow practitioners to demonstrate the higher order cognitive knowledge, professional capabilities and clinical skills necessary for advanced practice. Reflection on the experience of creating an adaptive radiation therapy (ART) advanced practice program provides context to the discussion. The ART pathway sits within a Master of Advanced Radiation Therapy Practice course at the authors’ University.

Assessment for advanced practice

Designing postgraduate programs for qualified health practitioners requires educators to re-conceptualise assessment approaches towards capability rather than competency [10]. This is because the context of learning is different when compared with entry to practice programs, in that health practitioners have a range of existing clinical practice experiences to draw on and synthesise with program content, and because of the dynamic, unpredictable nature of practice [10].

Practitioners are able to determine the relevance and clinical applicability of learning to their practice, so authentic assessment strategies should align with varied workplaces. However, regardless of where the assessment takes place, if a meaningful approach to assessment design is used, the outcome for all practitioners should be the same. Nevertheless, the literature suggests that with hospital-based assessment processes, particularly portfolios, outcomes are often based on the individual practitioner’s interpretations of expectations, which can vary considerably [11]. The challenge is to create standardised assessments for a range of practitioners, who are developing different advanced practice roles, across multiple clinical organisations. Therefore, flexibility and creativity are necessary in pedagogical design to account for these contrasting practitioner interpretations, clinical needs and experiences.

Designing and delivering a postgraduate curriculum to facilitate learning for advanced practice is a primary example of this. Advanced practice can be performed across a broad range of specialist clinical areas, and each area can be further nuanced within each discrete clinical context. Such variation calls for assessment strategies that are adaptable to individual workplaces and practitioner skill development, and that focus not only on assessment of learning, but also on ‘assessment as learning’, which additionally enables reflection on the learning experience itself. It is important to avoid the situation where assessment drives the learning; rather, there should be an emphasis on learning achieved through the assessment process [12].

Concurrently, the nature of advanced practice requires demonstration of higher order cognitive knowledge and applied decision-making [9]. Therefore, assessment must remain academically and clinically valid with respect to this expectation. Furthermore, practitioners may use assessment as evidence of meeting advanced practice expectations when seeking recognition of their advanced status from their workplace or professional body. A flexible curriculum framework is essential to enable authentic and meaningful assessment of a range of advanced practice activities informed by local clinical needs, practitioner knowledge and skill development, higher order academic requirements, and professional body expectations.

A programmatic approach to assessment

Programmatic assessment is a strategy whereby assessment is planned comprehensively across a course to enable learners to systematically demonstrate holistic capability expectations [13]. Assessment is not approached as a single task within a single unit, but as a building of knowledge, skills, and professional attributes along the course continuum, aligned with the overall goals of the program of study [13]. The programmatic approach to assessment is considered to better integrate the different purposes of assessment throughout the curriculum - assessment as learning and assessment for learning, as well as the more traditional use, assessment of learning. The vertical and horizontal integration and intentional interlinking of assessments throughout a program constitutes a more meaningful strategy to frame the whole of learner competence development in health professions education courses [14], [15], [16], [17].

The key features of programmatic assessment include [14], [15], [16], [17], [18], [19], [20], [21]:

- A whole of course approach to assessment, where assessment elements are scaffolded longitudinally with a focus on supporting learning, and multiple assessment elements inform learner competence decisions.
- No single assessment task defines learner outcomes; instead, both formative and summative assessment tasks are interrelated and mapped across the program.
- Assessment methods are purposively selected to be complementary and collectively reliable, and to enable a holistic judgement on learner performance.
- Assessment expectations for the whole of course are visible to the learners, and continuous feedback supports progressive learning.

Programmatic assessment was operationalised in the Master of Advanced Radiation Therapy Practice ART pathway by approaching assessment mapping activities with the course learning outcomes, unit learning outcomes, and ASMIRT advanced practitioner expectations [9] in mind. Assessment methods were considered that were complementary at a unit and course level, with both formative and summative approaches established to allow assessment for, of, and as learning, and scheduled to enable learner feedback, reflection and self-monitoring of performance.

Although the tenets of programmatic assessment were applied, the capacity to take a whole course approach towards high stakes assessment decisions was limited by the part-time nature of the program and university expectations: working professionals are often completing one unit of learning per semester, and this unit must be passed in order to progress to the subsequent unit. An example of how assessment methods were approached in a programmatic way to meet the expectations of advanced practice is presented in Table 1, aligned with the ASMIRT advanced practitioner characteristic of ‘Expert Communication’ [9]. The course learning outcomes and assessment within the four units of the adaptive radiation therapy pathway were aligned. Additionally, assessment activities were designed to be complementary and present a holistic view of ‘expert communication’ across the course curricula, and include both ‘of learning’ (summative) and ‘for learning’ (formative and summative) tasks.

Table 1.

Example of a programmatic approach to assessment for a single ASMIRT characteristic of advanced practice.

| ASMIRT characteristic [9] | Course learning outcomes | Unit of learning | Assessment as and for learning | Assessment of learning |
|---|---|---|---|---|
| Expert Communication | Apply critical thinking skills to the implementation of appropriate communication strategies both within the workplace and beyond that will influence and support advanced practice. Demonstrate effective and strategic research, problem-solving, organisational and teamwork skills that reflect advanced practice. | Advanced Imaging for Radiation Therapy | Peer review of a clinical action plan regarding the advanced use of imaging in practice | Oral case presentation to an audience critiquing hybrid imaging applications in practice |
| | | Principles of Adaptive Radiation Therapy | Peer review of a narrated infographic submitted by another student | Narrated infographic appraising the implementation of adaptive radiation therapy workflows |
| | | Essentials of Advanced Health Care Practice and Research | Discussion forum activities to explore current and future considerations of advanced practice | Develop a teaching and learning plan (for peers or patients) to introduce a new intervention or enhancement to practice |
| | | Practice of Adaptive Radiation Therapy | Clinical practice critical incident reflections and action plans | Oral viva voce on clinical decision making in adaptive radiation therapy |
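The mapping in Table 1 can also be held as structured data, so that gaps in coverage are easy to spot. The following is a minimal sketch in Python and is not part of the course's actual tooling; the class `AssessmentTask`, the purpose labels `as_for_learning` and `of_learning`, and the function `units_missing_purpose` are hypothetical names introduced for illustration. It records each Table 1 task against its unit and checks that every unit contributes both an 'as and for learning' task and an 'of learning' task.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AssessmentTask:
    """One assessment task mapped to a unit (hypothetical structure)."""
    unit: str
    purpose: str  # "as_for_learning" or "of_learning" (labels are assumptions)
    description: str


# Task descriptions abbreviated from Table 1 ('Expert Communication').
TASKS = [
    AssessmentTask("Advanced Imaging for Radiation Therapy", "as_for_learning",
                   "Peer review of a clinical action plan"),
    AssessmentTask("Advanced Imaging for Radiation Therapy", "of_learning",
                   "Oral case presentation"),
    AssessmentTask("Principles of Adaptive Radiation Therapy", "as_for_learning",
                   "Peer review of a narrated infographic"),
    AssessmentTask("Principles of Adaptive Radiation Therapy", "of_learning",
                   "Narrated infographic"),
    AssessmentTask("Essentials of Advanced Health Care Practice and Research",
                   "as_for_learning", "Discussion forum activities"),
    AssessmentTask("Essentials of Advanced Health Care Practice and Research",
                   "of_learning", "Teaching and learning plan"),
    AssessmentTask("Practice of Adaptive Radiation Therapy", "as_for_learning",
                   "Critical incident reflections and action plans"),
    AssessmentTask("Practice of Adaptive Radiation Therapy", "of_learning",
                   "Oral viva voce"),
]


def units_missing_purpose(tasks, required=("as_for_learning", "of_learning")):
    """Return {unit: [missing purposes]} for units lacking a required purpose."""
    seen = {}
    for task in tasks:
        seen.setdefault(task.unit, set()).add(task.purpose)
    gaps = {}
    for unit, purposes in seen.items():
        missing = sorted(set(required) - purposes)
        if missing:
            gaps[unit] = missing
    return gaps
```

Calling `units_missing_purpose(TASKS)` returns an empty dictionary when every unit carries both kinds of task. The same check could be repeated for each of the other ASMIRT characteristics if they were mapped in the same way.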

Meeting the challenge of creating meaningful assessment

In order to develop an effective, learner-centred assessment strategy which was not only an indicator of high-level knowledge and clinical performance, but carried meaning for practitioners, the principles of constructive alignment were key [22]. Clear learning outcomes were created to facilitate development and demonstration of higher order cognitive skills, such as analysis and synthesis, and advanced practice clinical performance, which were intrinsically linked to teaching activities, which in turn were aligned with assessment [23], [24]. The learning outcomes were aligned with both the clinical requirements at the level of the advanced practice role and national education standards at a postgraduate level [25].

Without clearly articulated, comprehensive and achievable learning outcomes related to knowledge and performance, students will not have a clear picture of what they need to achieve in their assessment. In developing the assessment strategy for the ART advanced practice curriculum, assessments were mapped by blueprinting of the assessment against the learning outcomes (Table 2). Assessment task instructions and marking rubrics were then developed to make visible to the learner and teacher the expectations of the activity, and to make explicit the alignment with the unit learning outcomes.

Table 2.

Example of constructive alignment between learning outcome and assessment.

| Proposed assessment | Alignment with unit learning outcome |
|---|---|
| Critical analysis of service delivery and patient outcomes related to adaptive radiation therapy (3000-word essay) | |

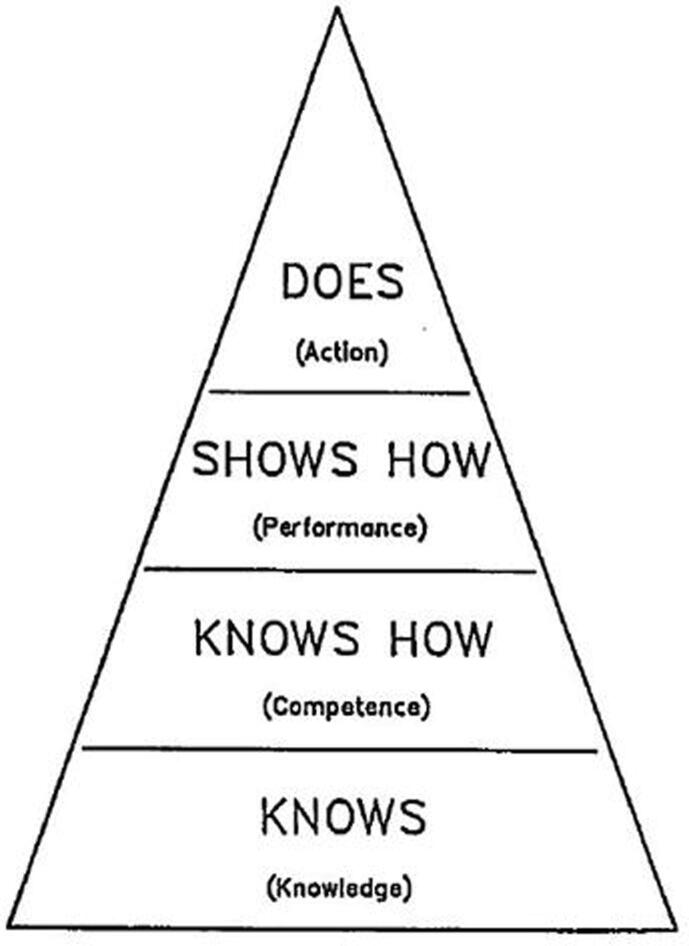

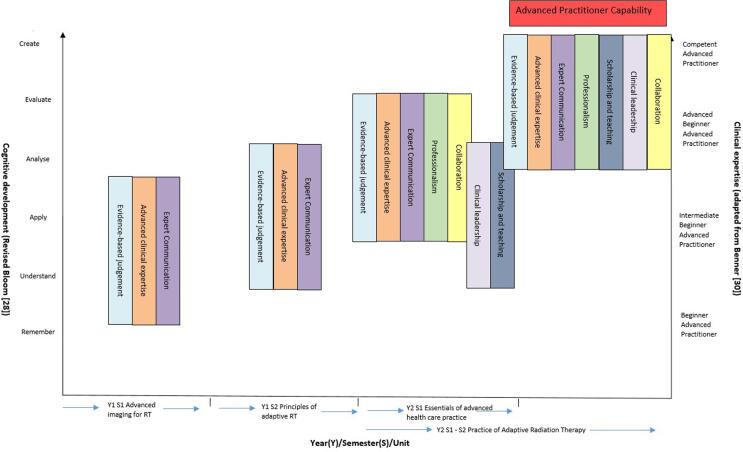

The development of the advanced practice assessment strategy was informed by three key educational models or classification systems: Bloom’s Taxonomy [26], [27], [28], Benner’s Novice to Expert model [29], [30] and Miller’s pyramid of competence [31], [32] (Fig. 1). Each model has evolved from its original version to meet the needs of contemporary education and clinical practice; Fig. 2 depicts how Bloom’s revised taxonomy (2001) and an adapted Benner’s model align with assessments in our program. The three educational frameworks allowed for the creation of assessments that evaluate advanced levels of knowledge, skill, attitude and behaviour, aligned with those expected of an APRT.

Fig. 1.

Miller’s Pyramid [31] (reproduced with permission).

Fig. 2.

Alignment of ASMIRT advanced practice characteristics [9] with assessment of knowledge and performance.

Use of Bloom’s Taxonomy [26], [27] and the revised Bloom’s Taxonomy [28] facilitated the design of complex assessments, which were warranted to reassure the profession and other members of the radiation oncology team that the APRTs were competent. By the end of the program, students are expected to demonstrate high levels of analysis, synthesis and evaluation, complex problem solving, clinical decision making and advanced clinical skills. Assessments were designed to test cognitive (knowledge-based), psychomotor (skills-based/practice) and affective (values, attitudes) expertise, all of which are integral aspects of the ASMIRT advanced practice recognition guidelines [9]. Additionally, given the absence of nationally defined APRT capabilities in Australia, the validity of assessment design in meeting the required learning outcomes was ratified by clinicians seeking APRT implementation within their service. An example of one such assessment, a narrated infographic on ART, and its mapping to the revised Bloom’s taxonomy is presented in Table 3.

Table 3.

Alignment of assessment task learning outcomes with the revised Bloom’s taxonomy (2001) [28].

| Bloom’s Taxonomy (2001) revised by Anderson et al. [28] | Assessment learning outcome for narrated infographic on ART |
|---|---|
| Create | Demonstrates synthesis of cancer site characteristics and role of ART. |
| Evaluate | Comprehensive appraisal of role and rationale of ART for selected cancer site. |
| Analyse | Comprehensive examination of treatment delivery considerations of ART for the selected cancer site. |
| Apply | Insight evident regarding association of treatment delivery considerations with patient outcome. |
| Understand | Discussion demonstrates thorough knowledge through in depth description of ART and its application to the cancer site. |
| Remember | Not applicable for this task or level of study. |

Benner’s Novice to Expert model of skill development for nursing [29], [30], which was based on a five-stage model of adult skill acquisition proffered by Dreyfus and Dreyfus [33], [34], provides a framework for clinical skill assessment through the developmental stages of ‘novice’ to ‘expert’. It is used primarily for assessing proficiency in entry to practice clinical skills; however, it can be adapted for use in postgraduate courses and aligned with the development of advanced practice skills. The novice to expert model has nonetheless been criticised for reflecting more traditional, authoritarian nursing models through the notion of ‘the expert’ [35]. As a result, the term ‘novice’ has been replaced by ‘beginner’ in our model, and ‘expert’ has been replaced by ‘competent advanced practitioner’, with two intermediary steps of ‘intermediate beginner’ and ‘advanced beginner’. This model has been applied within entry to practice courses at our University for two decades [36]. A recent adaptation of Benner’s model intended to account for advanced practice [37] includes two higher levels above competent: the ‘Advanced Expert’ at level six and the ‘International Influencer’ at level seven. Although level six of this model may be relevant for the APRT once practising beyond the course in Australia, the level of international influencer lies beyond that expected of the APRT and is arguably more aligned with the consultant practitioner in the United Kingdom [38].

The beginner to competent model is further supported by Miller’s pyramid of competence [31], [32] (Fig. 1), which depicts a series of steps through which the developing practitioner (advanced practitioner in the context of this paper) progresses. Using the example of developing the programmatic assessment strategy for the advanced practice ART program (Fig. 2), the initial theory and content of the first two online units of learning within the course (Advanced Imaging and Principles of Adaptive Radiation Therapy) allow the practitioner to develop content knowledge in anatomy, imaging and the principles of adaptive radiation therapy. This represents the bottom level of the pyramid, ‘Knows’, and it is where students draw on the evidence base at the inception of development of their clinical expertise, albeit in a theoretical way initially. At this level, assessments test Bloom’s lower order cognitive skills such as knowledge and comprehension, for example through a series of image recognition assessments and contouring tests. Essays are also used to allow the practitioner to demonstrate their ability to use evidence and combine this with reflections on current practice.

The next level of the pyramid is classified as ‘Knows how’, which relates to possession of the content knowledge, interpreting and applying it (Bloom’s Apply stage). At this level, clinical contouring software such as Elekta ProKnow® is used to test application of knowledge using clinical models, but within a theoretical context. A protocol development assessment is also required at this level to allow the practitioner to demonstrate application of knowledge to practice.

The third level ‘Shows how’ requires assessments which allow performance to be evaluated in the clinical context, and simulation is a useful mechanism for this. This occurs in year 2 of the adaptive radiation therapy program where clinically applicable advanced practice skills are demonstrated through assessments such as case studies and problem-based learning cases. These forms of assessment allow the practitioner to demonstrate not only content knowledge, but that they have advanced capacity in communication, professionalism and collaboration. The content and assessments at this level also allow the candidate to show higher levels of critical analysis, scholarship and leadership. As the units progress, assessment is scaffolded to build upon each of the ASMIRT advanced practitioner characteristics [9].

A clinical placement unit throughout year 2 of the course allows practitioners the opportunity to transition performance into action and consciously demonstrate the behaviours of the advanced practitioner; Miller terms this the ‘Does’ level. It is at this point that assessment is conducted by clinical professionals in parallel with the university, through direct observation and peer-to-peer discussion in the workplace. Assessment at this level should not only include evaluation of competence and skills, but should also integrate assessment of behaviour-related domains, such as communication, professionalism, respect for diversity (cultural, disability, age and gender-based) and collaboration. Development and assessment of these skills has been addressed through a collaboration with clinical centres of excellence with linear accelerator-based or MRI linear accelerator-based adaptive radiation therapy services. A clinical school model provides the opportunity for all practitioners studying the course, including those who may not have the technology for daily adaptive treatment in their own workplace, to learn and be assessed. During the clinical school period (3 weeks of mandatory placement), competence-based assessments are undertaken in contouring, daily adaptive planning, treatment and decision-making.

A portfolio of professional development is used to assess each characteristic of advanced practice in the context of specific learning outcomes for adaptive radiation therapy. This also allows the practitioner to demonstrate the attitudes, values and behaviours expected of one who has come to think, act and feel like a competent advanced practitioner [39]. This reflects an extension to the original Miller’s pyramid, in which Cruess et al. [39] identify the highest level as ‘Is’, relating to the professional identity that the practitioner possesses and demonstrates. This is particularly important given the difficulty radiation therapists experience in having a new professional identity recognised as legitimate when transitioning into APRT roles [40].
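The progression described above, from the base of Miller’s pyramid to the ‘Is’ level, can be summarised as an ordered mapping from level to assessment type. The following is an illustrative sketch only, assuming Python; the names `MillerLevel`, `ASSESSMENTS_BY_LEVEL`, `levels_without_assessment` and `level_of` are hypothetical, and the assessment labels are shortened from the examples given in the text rather than taken from course documentation.

```python
from enum import IntEnum


class MillerLevel(IntEnum):
    """Levels of Miller's pyramid, ordered from base to apex."""
    KNOWS = 1
    KNOWS_HOW = 2
    SHOWS_HOW = 3
    DOES = 4
    IS = 5  # extension proposed by Cruess et al. [39]


# Assessment examples named in the text, grouped by level (labels abbreviated).
ASSESSMENTS_BY_LEVEL = {
    MillerLevel.KNOWS: ["image recognition assessment", "contouring test", "essay"],
    MillerLevel.KNOWS_HOW: ["contouring software exercise", "protocol development"],
    MillerLevel.SHOWS_HOW: ["case study", "problem-based learning case"],
    MillerLevel.DOES: ["direct observation", "peer-to-peer discussion",
                       "clinical school competence assessment"],
    MillerLevel.IS: ["portfolio of professional development"],
}


def levels_without_assessment(mapping):
    """List any pyramid levels that have no assessment mapped to them."""
    return [level for level in MillerLevel if not mapping.get(level)]


def level_of(assessment, mapping=ASSESSMENTS_BY_LEVEL):
    """Return the pyramid level an assessment is mapped to, or None."""
    for level, names in mapping.items():
        if assessment in names:
            return level
    return None
```

An ordered enumeration is used so that levels can be compared directly, for example to confirm that a later unit assesses at a level no lower than an earlier one.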

Limitations

Although beyond the scope of this paper, it must be acknowledged that assessment strategies will not be fit for purpose without clearly designed assignment briefs and assessment rubrics, an evaluation of the utility of the assessment (including validity, reliability, feasibility, cost effectiveness, acceptance and educational impact) [41], and timely and detailed feedback. Their absence may affect translation of theory to practice, reflection and consolidation of skills, and the practitioner’s ability to use the assessment as evidence to support an application for APRT status. However, clear integration of each of these elements according to university policies, including academic governance procedures, moderation of assessment briefs and rubrics, and a two-week turnaround of feedback, is anticipated to minimise this risk.

Conclusion

Developing course curricula for advanced radiation therapy practice programs requires a considered approach towards assessment strategies to ensure assessment is meaningful for the learner practitioner, the clinical workplace, and the profession. Using a programmatic approach to assessment with a whole of course view is important, with additional consideration towards the principles of constructive alignment, and scaffolded higher level cognitive and practice skill development models. Assessment expectations should be made explicit to the learner, but allow flexibility in execution to enable the practitioner to reflect on individual past experiences, learning needs, and workplace expectations. A recommendation, which has emerged out of this paper, is that more research should be undertaken into curriculum development for advanced practice in radiation therapy, including exploration of practitioner experiences of the education and assessment process during advanced practice training.

The Master of Advanced Radiation Therapy Practice is novel within Australia because it combines theoretical and clinical curricula. It has been developed in the absence of a national capability framework. There is the potential for the course to be used to inform the academic and clinical curricula required within a national framework, should this opportunity arise. Such a capability framework would enable greater consistency for practitioners in demonstrating advanced practice standards across multiple clinical organisations. The intentional whole of program design of the ART pathway provides an opportunity to evaluate outcomes and use this framework to model other advanced practice opportunities.

Declaration of Competing Interest

The authors declare that they have no known competing financial interests or personal relationships that could have appeared to influence the work reported in this paper.

References

- 1. American Educational Research Association, American Psychological Association & National Council on Measurement in Education. The Standards for Educational and Psychological Testing. AERA Publications; 2014.

- 2. Firmino M., Leite C. Assessment of and for learning in higher education: from the traditional summative assessment to the more emancipatory formative and educative assessment. Transnatl Curric Inquiry. 2014;11(1):13–29. doi: 10.14288/tci.v11i1.184316.

- 3. Duers L.E., Brown N. An exploration of student nurses’ experiences of formative assessment. Nurse Educ Today. 2009;29(6):654–659. doi: 10.1016/j.nedt.2009.02.007.

- 4. Hand H. Promoting effective teaching and learning in the clinical setting. Nurs Stand. 2006;20(39):55–65. doi: 10.7748/ns2006.06.20.39.55.c4173.

- 5. Helminen K., Coco K., Johnson M., Turunen H., Tossavainen K. Summative assessment of clinical practice of student nurses: A review of the literature. Int J Nurs Stud. 2016;53:308–319. doi: 10.1016/j.ijnurstu.2015.09.014.

- 6. Black P., Wiliam D. Developing the theory of formative assessment. Educ Assess Eval Account. 2009;21:5–31. doi: 10.1007/s11092-008-9068-5.

- 7. MRPBA. 2019.

- 8. Medical Radiation Practice Board of Australia. Accreditation standards: Medical radiation practice. MRPBA; 2019.

- 9. Australian Society of Medical Imaging and Radiation Therapy (ASMIRT), Advanced Practice Advisory Panel. Pathway to Advanced Practice - Advanced Practice for the Australian Medical Radiations Professions. ASMIRT; 2017.

- 10. O'Connell J., Gardner G., Coyer F. Beyond competencies: using a capability framework in developing practice standards for advanced practice nursing. J Adv Nurs. 2014;70(12):2728–2735. doi: 10.1111/jan.12475.

- 11. Wallis L., Locke R., Sutherland C., Harden B. Assessment of advanced clinical practitioners. J Interprof Care. 2022;12:1–5. doi: 10.1080/13561820.2021.1997950.

- 12. Newble D., Jolly B., Wakeford R., editors. The Certification and Recertification of Doctors: Issues in the Assessment of Clinical Competence. Cambridge University Press; Cambridge: 1993.

- 13. Dijkstra J., Van der Vleuten C.P.M., Schuwirth L.W.T. A new framework for designing programmes of assessment. Adv Health Sci Educ. 2010;15(3):379–393. doi: 10.1007/s10459-009-9205-z.

- 14. Schuwirth L.W.T., Van der Vleuten C.P.M. Programmatic assessment: From assessment of learning to assessment for learning. Med Teach. 2011;33(6):478–485. doi: 10.3109/0142159X.2011.565828.

- 15. Dart J., Twohig C., Anderson A., Bryce A., Collins J., Gibson S., et al. The Value of Programmatic Assessment in Supporting Educators and Students to Succeed: A Qualitative Evaluation. J Acad Nutrit Dietet. 2021;121(9):1732–1740. doi: 10.1016/j.jand.2021.01.013.

- 16. van der Vleuten C.P.M., Schuwirth L.W.T., Driessen E.W., Dijkstra J., Tigelaar D., Baartman L.K.J., et al. A model for programmatic assessment fit for purpose. Med Teach. 2012;34(3):205–214. doi: 10.3109/0142159X.2012.652239.

- 17. Swan Sein A., Rashid H., Meka J., Amiel J., Pluta W. Twelve tips for embedding assessment for and as learning practices in a programmatic assessment system. Med Teach. 2021;43(3):300–306. doi: 10.1080/0142159X.2020.1789081.

- 18. Wald N., Harland T. Measuring changes in higher-order cognition through the assessment of complex knowledge over time. Assess Eval Higher Educ. 2021;46(8):1285–1298. doi: 10.1080/02602938.2020.1871467.

- 19. Wilkinson T.J., Tweed M.J. Deconstructing programmatic assessment. Adv Med Educ Pract. 2018;9:191–197. doi: 10.2147/AMEP.S144449.

- 20. Heeneman S., de Jong L.H., Dawson L.J., Wilkinson T.J., Ryan A., Tait G.R., et al. Ottawa 2020 consensus statement for programmatic assessment – 1. Agreement on the principles. Med Teach. 2021;43(10):1139–1148. doi: 10.1080/0142159X.2021.1957088.

- 21. Ross S., Hauer K.E., Wycliffe-Jones K., Hall A.K., Molgaard L., Richardson D., et al. Key considerations in planning and designing programmatic assessment in competency-based medical education. Med Teach. 2021;43(7):758–764. doi: 10.1080/0142159X.2021.1925099.

- 22. Biggs J., Tang C. Teaching for quality learning at university. McGraw-Hill Education; UK: 2011.

- 23. Lawrence J.E. Designing a unit assessment using constructive alignment. Int J Teacher Educ Profess Develop. 2019;2(1):30–51. doi: 10.4018/IJTEPD.2019010103.

- 24. Shuell T.J. Cognitive conceptions of learning. Rev Educ Res. 1986;56(4):411–436. doi: 10.3102/00346543056004411.

- 25. Australian Qualifications Framework Council. Australian Qualifications Framework Second Edition; 2013.

- 26. Bloom B.S., Engelhart M.D., Furst E.J., Hill W.H., Krathwohl D.R. Taxonomy of educational objectives handbook 1: Cognitive domain. Longman Group Ltd; London: 1956.

- 27. Adams N.E. Bloom’s taxonomy of cognitive learning objectives. J Med Lib Assoc. 2015;103(3):152–153. doi: 10.3163/1536-5050.103.3.010.

- 28. Anderson L.W., Krathwohl D.R., Airasian P.W., Cruikshank K.A., Mayer R.E., Pintrich P.R., et al., editors. A taxonomy for learning, teaching, and assessing: A revision of Bloom’s taxonomy of educational objectives. Addison Wesley Longman; 2001.

- 29. Benner P. From novice to expert: Excellence and power in clinical nursing practice. Pearson; 1984.

- 30. Benner P., Tanner C., Chesla C. From beginner to expert: gaining a differentiated clinical world in critical care nursing. Adv Nurs Sci. 1992;14(3):13–28. doi: 10.1097/00012272-199203000-00005.

- 31. Miller G.E. The assessment of clinical skills/competence/performance. Acad Med. 1990;65:S63–S67. doi: 10.1097/00001888-199009000-00045.

- 32. Kare-Silver N., Mehay R. Assessment and Competence. In: Mehay R., editor. The essential handbook for GP training and education (eBook). Routledge; 2021. doi: 10.1201/9781846197918.

- 33. Dreyfus S.E. The five-stage model of adult skill acquisition. Bull Sci Technol Soc. 2004;24(3):177–181. doi: 10.1177/0270467604264992.

- 34. Dreyfus H.L., Dreyfus S.E. Mind over machine: The power of human intuition and expertise in the era of the computer. The Free Press; New York: 1986.

- 35. Cash K. Benner and expertise in nursing: a critique. Int J Nurs Stud. 1995;32(6):527–534. doi: 10.1016/0020-7489(95)00011-3.

- 36. McInerney J.M., Baird M. Developing critical practitioners: A review of teaching methods in the Bachelor of Radiography and Medical Imaging. Radiography. 2016;22(1):e40–e53. doi: 10.1016/j.radi.2015.07.001.

- 37. Mortimore G., Reynolds J., Forman D., Brannigan C., Mitchell K. From expert to advanced clinical practitioner and beyond. Br J Nurs. 2021;30(11):656–659. doi: 10.12968/bjon.2021.30.11.656.

- 38. Booth L., Henwood S., Miller P. Reflections on the role of consultant radiographers in the UK: What is a consultant radiographer? Radiography. 2015;22(1):38–43. doi: 10.1016/j.radi.2015.05.005.

- 39. Cruess R.L., Cruess S.R., Steinert Y. Amending Miller’s pyramid to include professional identity formation. Acad Med. 2016;91(2):180–185. doi: 10.1097/ACM.0000000000000913.

- 40. Implementing M.K. Monash University; 2021. Radiation Therapy Advanced Practice: A Grounded Theory Study.

- 41. van der Vleuten C.P. The assessment of professional competence: developments, research and practical implications. Adv Health Sci Educ Theory Prac. 1996;1(1):41–67. doi: 10.1007/BF00596229.