Abstract

Since 2019, the coronavirus outbreak has caused many catastrophic events all over the world. Mass vaccination is currently considered the most efficient way to fight the pandemic. This study aims to explain and model COVID-19 cases by considering the vaccination rate. We combined a neural network (NN) with two powerful optimization algorithms, the firefly algorithm and the artificial bee colony. To validate the models, we used COVID-19 data on vaccination rates and total confirmed cases for 51 states from the beginning of vaccination in the US. The numerical experiment indicated that including the vaccinated population increases the accuracy of the NN substantially compared with the same NN without the vaccinated population. In the next stage, the NN with vaccination input data was selected for optimization with the firefly and bee algorithms. With firefly optimization, 93.75% of the variation in COVID-19 cases can be explained across all states. With bee colony optimization, 92.3% of the variation in COVID-19 cases since the start of mass vaccination is explained. Overall, it can be concluded that mass vaccination is the key predictor of COVID-19 cases at a large scale.

Keywords: COVID-19, Vaccinated population, Forecasting, Neural networks, Modeling

1. Introduction

In December 2019, severe acute respiratory syndrome coronavirus 2 (SARS-CoV-2), the virus that causes coronavirus disease 2019 (hereafter COVID-19), was detected for the first time in Wuhan, China [1]. The COVID-19 outbreak has been described as the most devastating global public health incident since the 1918 influenza pandemic [2]. According to the WHO weekly report of November 16, 2021, the worldwide cumulative numbers of deaths and confirmed cases were 5,092,761 and 252,826,597, respectively [3]. With its exponential spread and thousands of frameshift mutations, the virus changes its genome randomly during viral replication [4]. Although most mutations do not diverge much from the original strain, a few variants can acquire novel characteristics, including higher transmissibility and reduced efficacy of existing medications and vaccines [5,6]. Current data confirm that vaccines prevent severe forms of the disease [7]. On the other hand, there is as yet no convincing evidence that vaccination reduces virus transmission, because reductions in COVID-19 cases can also be ascribed to other factors, particularly lockdowns, social distancing, and asymptomatic COVID-19 cases [8]. In general, the virus can infect hosts either directly or indirectly. Direct transmission occurs through respiratory droplets from an infected host, whereas indirect infection occurs through aerosols and fomites in the environment [9]. It has been argued that the COVID-19 pandemic will not end before worldwide vaccination is achieved [10,11]. Moreover, because of the virus's presence in animal hosts, inadequate vaccination coverage, and unpredictable degrees of immune response, eradication of the virus may not be feasible [12]. Consequently, global herd immunity is expected to take a long time to achieve.

Since the beginning of the pandemic, numerous investigators have studied the prediction of the COVID-19 trajectory, either nationally or worldwide, using different methods. Several studies have forecast infected cases using nonlinear autoregressive neural networks (NARANN) [13–15]. This model uses lagged values of the dependent variable as its inputs. Likewise, statistical models, including linear regression techniques [16–18] and autoregressive integrated moving average (ARIMA) models [19–21], are widely applied to predicting COVID-19 cases. In an ARIMA process, as in NARANN, lagged values of the dependent variable (i.e., the infected cases) are used as predictors. The main difference between these models is that ARIMA cannot capture nonlinear relations. In linear regression techniques, COVID-19 cases are modeled using various explanatory (independent) variables, including travel records, mortality, and recovered cases.
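The idea shared by NARANN and ARIMA, using lagged values of the dependent variable as inputs, can be illustrated with a minimal sketch. It assumes a purely linear AR(p) model fitted by ordinary least squares, i.e., only the autoregressive part of ARIMA, without differencing or moving-average terms; a NARANN would replace the linear map with a neural network. The function names and the synthetic series are hypothetical.

```python
def lag_matrix(series, p):
    """Build design rows [1, y_{t-1}, ..., y_{t-p}] and targets y_t."""
    X, y = [], []
    for t in range(p, len(series)):
        X.append([1.0] + [series[t - k] for k in range(1, p + 1)])
        y.append(series[t])
    return X, y


def solve(A, b):
    """Solve a small square linear system by Gaussian elimination with pivoting."""
    n = len(A)
    M = [row[:] + [b[i]] for i, row in enumerate(A)]
    for col in range(n):
        piv = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[piv] = M[piv], M[col]
        for r in range(col + 1, n):
            f = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= f * M[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (M[r][n] - sum(M[r][c] * x[c] for c in range(r + 1, n))) / M[r][r]
    return x


def fit_ar(series, p):
    """Least-squares AR(p) coefficients [intercept, phi_1, ..., phi_p]."""
    X, y = lag_matrix(series, p)
    k = p + 1
    xtx = [[sum(row[i] * row[j] for row in X) for j in range(k)] for i in range(k)]
    xty = [sum(row[i] * yt for row, yt in zip(X, y)) for i in range(k)]
    return solve(xtx, xty)


# Synthetic series generated exactly by y_t = 2 + 0.5 * y_{t-1}
series = [10.0]
for _ in range(20):
    series.append(2.0 + 0.5 * series[-1])
coef = fit_ar(series, 1)
```

Because the synthetic series follows an exact AR(1) rule, the fitted intercept and lag coefficient recover 2 and 0.5 up to rounding.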

Some epidemiological studies have used compartmental models, namely susceptible–infected–recovered (SIR) [22,23], susceptible–exposed–infectious–recovered (SEIR) [24,25], and susceptible–exposed–infected–recovered–deceased (SEIRD) [26] models. In these models, individuals move among compartments (e.g., from exposed to infected). The progression of the epidemic is typically simulated by solving a system of differential equations numerically.
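As an illustration of how compartmental models are simulated, the following sketch integrates the basic SIR equations with a forward-Euler scheme. It assumes a closed population expressed as fractions, constant rates, and hypothetical values of the transmission rate `beta` and recovery rate `gamma` (unrelated to the firefly parameters in Section 2.3); it is not taken from the cited studies.

```python
def simulate_sir(beta, gamma, s0, i0, r0=0.0, days=100, dt=0.1):
    """Forward-Euler integration of the SIR model on population fractions.

    dS/dt = -beta*S*I,  dI/dt = beta*S*I - gamma*I,  dR/dt = gamma*I
    Returns one (S, I, R) tuple per day, including day 0.
    """
    s, i, r = s0, i0, r0
    steps_per_day = round(1 / dt)
    history = [(s, i, r)]
    for _ in range(days):
        for _ in range(steps_per_day):
            new_infections = beta * s * i * dt  # flow S -> I
            new_recoveries = gamma * i * dt     # flow I -> R
            s = s - new_infections
            i = i + new_infections - new_recoveries
            r = r + new_recoveries
        history.append((s, i, r))
    return history


# Hypothetical parameters: basic reproduction number beta/gamma = 3
hist = simulate_sir(beta=0.3, gamma=0.1, s0=0.99, i0=0.01)
peak_infected = max(state[1] for state in hist)
```

The flows only move people between compartments, so S + I + R stays constant; a smaller `dt` trades speed for accuracy of the Euler scheme.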

A number of studies used the Prophet library to approximate the pandemic trend [24–29]. Prophet is an open-source forecasting library developed by Facebook. It fits an additive statistical model that decomposes a COVID-19 time series into components, i.e., the trend and the weekly, monthly, and other seasonal effects.

Deep learning models have also been used to forecast COVID-19 infected cases. In general, deep networks, with several processing layers and loops, decompose complex data so that the underlying patterns can be learned. Several studies have applied deep networks to image processing [30,31]; in particular, convolutional neural networks have been trained on clinical data to predict mortality and to diagnose COVID-19 from X-ray images. Deep networks are also used in time-series modeling. In an early study, a method based on a deep polynomial neural network was developed [32]; the model performed satisfactorily when the sample was limited (i.e., in the early stages of the pandemic). A Long Short-Term Memory (LSTM) model was used to predict cumulative COVID-19 cases [33]. The accuracy of several deep learning models, including LSTM-based models, Recurrent Neural Networks (RNN), Variational Autoencoders (VAE), and Gated Recurrent Units (GRU), was compared using time-series data from Spain, Italy, China, the USA, Australia, and France [34]; the VAE outperformed the other techniques. A comparison of LSTM-based models with ARIMA and the Prophet algorithm indicated that a stacked LSTM outperforms the other approaches [35]. In another study, a comparison of a deep learning method (RNN) with an ANN for COVID-19 prediction revealed the superiority of the ANN [36]. The authors used various datasets with similar GPU-accelerated training parameters. The accuracy of the ANN was 1–2% higher than that of the RNN, and the computing time of the RNN was about three times that of the ANN, because deep networks with loops and multiple hidden layers are more computationally expensive than a shallow ANN.
A comparison of ANN with ARIMA, LSTM, and CNN using the Johns Hopkins University Center for Systems Science and Engineering dataset also indicated that, when the models are fine-tuned, the predictions of the ANN are similar to those of the CNN and outperform those of the LSTM (based on the MAPE indicator) and ARIMA [37]. The ANN and CNN were also found to be more computationally efficient than the LSTM and ARIMA.

Several articles have also pursued hybrid modeling for COVID-19 prediction. In this approach, infected cases are usually modeled with a machine learning technique whose parameters are then optimized by a metaheuristic algorithm to reduce the forecasting error. A combination of LSTM with the grey wolf optimizer has been proposed for pandemic prediction [38]; compared with ARIMA, the hybrid approach showed a lower error rate. A combination of a neural network with the firefly and bee algorithms has been proposed for modeling daily COVID-19 cases [39], and both models proved to be robust forecasters of the pandemic in various countries. In another paper, a combination of a convolutional neural network and autoencoders was used to predict the survival chance of infected cases [40]; hybridization increased the accuracy of the approximation by up to 3.5%. An adaptive neuro-fuzzy inference system (ANFIS) was benchmarked against a combination of a multilayer perceptron and the imperialist competitive algorithm (ICA-MLP) [41]. In general, ICA-MLP outperformed ANFIS.

Finally, several reviews have surveyed the literature on COVID-19 modeling using machine learning methods [42–44], the optimization of COVID-19 forecasting and control [45], AI regression methods [46], and mathematical and epidemiological modeling [47,48]. A summary of the published literature is given in Table 1. As the vaccinated population grows worldwide, severe disease and hospitalization are expected to decline. However, as noted previously, there is not yet ample evidence that vaccination mitigates COVID-19 transmission. There is therefore a need to model COVID-19 infected cases while accounting for the mass vaccination effort, which helps determine whether the vaccinated population is the key explanatory variable of infected cases. In light of that, this study aims to forecast COVID-19 cases in 51 states since the launch of vaccination in the US. We used the US dataset because it is freely accessible and regularly updated for all states and cities. Other factors in the dataset selection were the national health policy on mass vaccination, the consistency of the vaccines administered across states, and the differing dynamics of the states. As noted previously, an ANN has been shown to provide higher (or similar) performance in predicting COVID-19 compared with deep networks [36]. To avoid high computing costs in training, we used an ANN and trained it with the firefly algorithm (ANN-FA) and the artificial bee colony algorithm (ANN-ABC). Gradient-based ANN training can become trapped in a local minimum; in such situations, FA and ABC search the solution space more broadly and are better able to approach the global minimum of the error function.
The advantages of ABC and FA over other common metaheuristic algorithms (e.g., the genetic algorithm and particle swarm optimization) in solving a wide range of problems have been highlighted by various studies [49–53]. Moreover, these algorithms have shown robust accuracy in predicting COVID-19 cases at the country level [39]. Therefore, the objective of this study is to develop ANN-FA and ANN-ABC models for predicting confirmed COVID-19 cases while considering the vaccinated population. To our knowledge, this is one of the first studies to predict the pandemic while considering the vaccinated population. This matters because decision-making bodies can use such models to gauge the effects of vaccination efforts on the COVID-19 pandemic. We expect the current paper to contribute to the COVID-19 forecasting literature in the following respects:

I. This study considers the vaccinated population in the modeling, which makes the models a better policy instrument.

II. The developed models are validated simultaneously with the COVID-19 datasets of 51 states. Because each state has a specific ID, the models can also be used for prediction at a smaller (state) scale. The proposed model is also flexible enough to be extended to other scenarios, such as investigating sensitivity to geographical coordinates and the role of spillover effects among states.

III. This study conducts a numerical experiment to determine whether the vaccinated population is the key explanatory variable of COVID-19 infected cases.

Table 1.

A summary of published literature regarding COVID-19 prediction/detection models.

| Reference | Goal | Model/Method | Category | Data level | Major results |
|---|---|---|---|---|---|
| [20] | Spatial modeling of COVID-19 | Seasonal ARIMA | Research article | Sample (India) | The model effectively captures the linear relations among districts |
| [14] | Approximating the COVID-19 trend | Autoregressive neural networks with LM training | Research article | Sample (Egypt) | The neural network outperformed ARIMA |
| [30] | Recognizing COVID-19 from X-rays and CT scans | Deep learning model (multilayer-spatial convolutional neural network) | Research article | Clinical data | With a detection accuracy of 93.63%, the proposed model is able to detect COVID-19 |
| [31] | Forecasting mortality risk using X-rays | Deep learning/image processing techniques | Research article | Clinical data | A convolutional neural network showed the best performance in detecting mortality risk |
| [32] | Developing an early prediction method for small datasets | Polynomial neural network with corrective feedback | Research article | Sample (China) | The model showed acceptable performance in simulating small time series |
| [43] | Machine learning applications for COVID-19 prediction | (Based on the PRISMA guideline) ARIMA, ANN, linear/polynomial regression, decision tree, random forest, other methods | Scoping review | Published materials | Regarding mean absolute percentage error, LSTM and ARIMA showed the greatest values |
| [46] | Contribution of AI regressions to predicting COVID-19 cases | PRISMA 2020 guideline | Systematic review | Data published in open-access datasets | In terms of R², the AI methods outperformed the statistical models; among the AI methods, feed-forward ANN, graph ANN, and swarm-based methods showed the best performance |
| [44] | Role of machine learning methods in various aspects of the COVID-19 pandemic (prediction, screening, identification of potentially infected people, vaccine development) | Research articles chosen on the basis of abstract, approach, and conclusion | Critical review | Published materials | AI algorithms can considerably improve the quality of treatment, prediction, and vaccine and drug development; however, AI models have not yet been deployed to demonstrate their performance in the real world |
| [54] | Forecasting the numbers of recovered cases, deaths, and cumulative confirmed COVID-19 cases | ARIMA, LSTM, stacked LSTM, Prophet | Research article | Sample (world, India, Chennai city of India) | The error rate of the stacked LSTM (SLSTM) was around 2% lower than that of the other models |
| [41] | Predicting new COVID-19 cases and deaths | Adaptive neuro-fuzzy inference system, multilayer perceptron-imperialist competitive algorithm | Research article | Sample (Hungary) | In most instances, the hybrid MLP-ICA outperforms the hybrid ANFIS |

The rest of this paper is organized as follows. First, the data processing procedure is described. Next, the theoretical background of ANN, FA, and ABC is explained. Finally, the ANN, ANN-FA, and ANN-ABC modeling processes are presented.

2. Material and methods

2.1. Data processing

In this work, we used two freely accessible datasets: the state-level COVID-19 cases (with geographical coordinates) published by the Center for Systems Science and Engineering at Johns Hopkins University (GitHub), and the vaccination data for each state prepared by Our World in Data (GitHub page). First, the city-level data were aggregated to the state level on a daily basis. Fig. 1SM (supplementary materials) depicts the processed COVID-19 data for the states. Differences in the ranges of the variables undermine the mapping capability of an ANN. To achieve appropriate convergence, to compute the network performance, and to avoid a high concentration of data in some nodes, the data should be scaled to the range [0, 1] [55,56]. Therefore, using Eq. (1), we normalized all data to the range between zero and one by subtracting the minimum from each value and dividing by the difference between the maximum and minimum:

$$X_{\mathrm{norm}} = \frac{X - X_{\min}}{X_{\max} - X_{\min}} \tag{1}$$

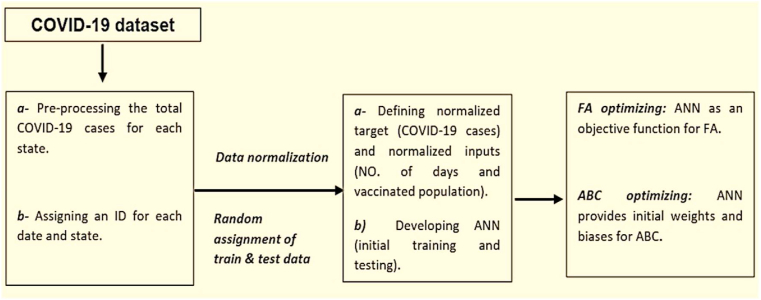

For the initial data processing, each date was assigned a specific number. These numbers are used as input data to identify each day in MATLAB. A similar technique was used for the states: each state was assigned an ID (e.g., 1 to 51, or its geographical coordinates) that can be used for further identification. Seventy-five percent of the dataset was randomly chosen for training, and the remaining 25% was used for testing. Fig. 1 summarizes the data processing procedure used in this study.
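The normalization of Eq. (1) and the random 75/25 split can be sketched as follows. This is a minimal illustration, not the authors' MATLAB code; the record layout (day number, state ID, vaccinated population, cases), the values, and the fixed seed are hypothetical.

```python
import random


def min_max_normalize(values):
    """Eq. (1): scale a column linearly into [0, 1]."""
    lo, hi = min(values), max(values)
    span = hi - lo
    if span == 0:
        return [0.0 for _ in values]  # constant column: nothing to scale
    return [(v - lo) / span for v in values]


def train_test_split(rows, train_frac=0.75, seed=42):
    """Randomly assign train_frac of the rows to training, the rest to testing."""
    shuffled = rows[:]
    random.Random(seed).shuffle(shuffled)
    cut = round(train_frac * len(shuffled))
    return shuffled[:cut], shuffled[cut:]


# Hypothetical records: (day number, state ID, vaccinated population, cases)
records = [
    (1, 1, 1000, 50), (2, 1, 1500, 40), (3, 1, 2500, 35), (4, 1, 4000, 30),
    (1, 2, 800, 70), (2, 2, 900, 65), (3, 2, 1700, 55), (4, 2, 3000, 45),
]
columns = list(zip(*records))                      # one tuple per variable
scaled_columns = [min_max_normalize(list(c)) for c in columns]
normalized = list(zip(*scaled_columns))            # back to one tuple per record
train, test = train_test_split(normalized)
```

As a design note, this sketch normalizes before splitting, as the text describes; taking the minimum and maximum from the training rows only would keep test information out of the scaling.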

Fig. 1.

The modeling flowchart used in this work.

2.2. Neural network

An artificial neural network (ANN) mirrors the neural structure of the human brain. An ANN includes many neurons connected to each other in a specified arrangement, and it can capture nonlinear patterns by mapping input data to output data. A typical ANN has three types of layers: input, hidden, and output [57]. Data from the input layer are passed to the hidden layer, where the processing neurons process the incoming signals from the previous layer; hence, determining the optimal number of hidden neurons is crucial in ANN modeling. Finally, the output layer presents the final outcome of the network. An ANN is generally trained with a suitable backpropagation algorithm. In the learning process, first, the input is processed in the hidden layer. Second, weighted outputs are generated for each processing unit. Third, the generated output is compared with the target output, and the error is computed at the output layer. Finally, the error is propagated back to the hidden layer as feedback, allowing the ANN to update its weights. The Levenberg–Marquardt (LM) algorithm is widely used for training. LM is one of the fastest backpropagation training algorithms and has shown high flexibility in solving various problems [58]. Its speed comes from the fact that it does not compute the Hessian matrix directly; it approximates it from the Jacobian of the network errors. Overall, the output of each neuron is obtained by applying a transfer function to the weighted sum of its inputs plus a bias term:

$$y_j = f\left(\sum_{i} W_{ij} X_i + B_j\right) \tag{2}$$

In Eq. (2), $y_j$ is the output of the jth neuron, $B_j$ and $W_{ij}$ are the bias and weights, respectively, $X_i$ represents the incoming signal from the ith neuron, and f is the sigmoid transfer function, i.e., $f(x) = 1/(1 + e^{-x})$.
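Eq. (2) and a one-hidden-layer forward pass can be sketched in plain Python. The sigmoid hidden layer follows the text; the linear output neuron is an assumption made for illustration, since the output transfer function is not stated. All function names are hypothetical.

```python
import math


def sigmoid(x):
    """Logistic sigmoid, written to avoid overflow for large |x|."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)


def neuron(inputs, weights, bias, f=sigmoid):
    """Eq. (2): y_j = f(sum_i W_ij * X_i + B_j)."""
    return f(sum(w * x for w, x in zip(weights, inputs)) + bias)


def forward(inputs, hidden_w, hidden_b, out_w, out_b):
    """One sigmoid hidden layer followed by a (hypothetical) linear output neuron."""
    hidden = [neuron(inputs, w, b) for w, b in zip(hidden_w, hidden_b)]
    return sum(w * h for w, h in zip(out_w, hidden)) + out_b
```

For example, `neuron([1.0, 2.0], [0.5, -0.25], 0.0)` has a net input of zero and therefore returns 0.5.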

In this study, we used the LM algorithm to train the ANN, with a single hidden layer. It has been shown that one hidden layer with enough neurons can approximate any continuous function to arbitrary accuracy [59,60]. Following standard practice, we used the sigmoid transfer function in the hidden nodes [61].
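The core of the LM update can be shown on a two-parameter curve fit instead of a full ANN, to keep it short. This is a sketch, not MATLAB's `trainlm`: each step solves (JᵀJ + μI)δ = Jᵀe, where J is the Jacobian of the model outputs and e the residuals, so JᵀJ stands in for the Hessian. The exponential model, the data, and the μ schedule (×0.1 on success, ×10 on failure) are assumptions for illustration; for an ANN, the parameters would be the network weights.

```python
import math


def lm_fit_exponential(xs, ys, a=1.0, b=0.0, mu=1e-2, iters=100):
    """Levenberg-Marquardt fit of y = a * exp(b * x)."""

    def sse(a, b):
        try:
            return sum((y - a * math.exp(b * x)) ** 2 for x, y in zip(xs, ys))
        except OverflowError:
            return math.inf  # a wild trial step; treat it as a bad step

    err = sse(a, b)
    for _ in range(iters):
        # Accumulate J^T J (2x2) and J^T e (2) over all data points
        jtj = [[0.0, 0.0], [0.0, 0.0]]
        jte = [0.0, 0.0]
        for x, y in zip(xs, ys):
            ebx = math.exp(b * x)
            grad = (ebx, a * x * ebx)  # d(model)/da, d(model)/db
            e = y - a * ebx
            for r in range(2):
                jte[r] += grad[r] * e
                for c in range(2):
                    jtj[r][c] += grad[r] * grad[c]
        # Damped normal equations, solved with Cramer's rule
        m00, m01 = jtj[0][0] + mu, jtj[0][1]
        m10, m11 = jtj[1][0], jtj[1][1] + mu
        det = m00 * m11 - m01 * m10
        da = (jte[0] * m11 - m01 * jte[1]) / det
        db = (m00 * jte[1] - m10 * jte[0]) / det
        new_err = sse(a + da, b + db)
        if new_err < err:
            a, b, err = a + da, b + db, new_err
            mu *= 0.1  # good step: move toward Gauss-Newton
        else:
            mu *= 10.0  # bad step: move toward small gradient-descent steps
            if mu > 1e12:
                break  # no further improvement is possible
    return a, b


xs = [0.5 * k for k in range(7)]
ys = [2.0 * math.exp(0.7 * x) for x in xs]
a, b = lm_fit_exponential(xs, ys)
```

The adaptive μ is the design choice that makes LM robust: large μ gives short, gradient-like steps far from the solution, while small μ gives fast Gauss-Newton steps near it.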

2.3. Firefly algorithm

The firefly algorithm (FA) is a metaheuristic algorithm that has been successfully applied in diverse fields [49]. FA is a swarm intelligence method inspired by the flashing patterns of tropical fireflies [62]. Fireflies emit light in characteristic patterns that help them find food and mates and communicate with each other. For mathematical modeling, three idealized assumptions are made [63,64]. First, all fireflies are unisex, so a firefly's attractiveness is not affected by gender. Second, attractiveness is proportional to brightness, and both decrease as the distance increases; accordingly, a less bright firefly moves toward a brighter one, and if there is no brighter firefly, it moves randomly. Third, the brightness of a firefly is determined by the value of the objective function. Overall, in FA the attractiveness of fireflies (β) is a function of the light absorption coefficient (γ) and the distance (r) between a less bright firefly and a brighter one:

$$\beta = \beta_0 \, e^{-\gamma r^2} \tag{3}$$

In Eq. (3), β0 is the attractiveness at zero distance (r = 0). After the initial fireflies and their positions have been created, the distance r between fireflies must be computed (Eq. (4)). The Cartesian (Euclidean) distance is commonly used:

$$r_{ij} = \sqrt{\sum_{n} \left(X_{in} - X_{jn}\right)^2} \tag{4}$$

where $X_{in}$ and $X_{jn}$ are the nth components of the position vectors of firefly i (the fainter one) and firefly j (the brighter one), respectively. With $r_{ij}$ defined, the movement of firefly i toward firefly j is given by:

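$$X_i^{t+1} = X_i^{t} + \beta_0 \, e^{-\gamma r_{ij}^2} \left(X_j^{t} - X_i^{t}\right) + \alpha \, \varepsilon_i^{t} \tag{5}$$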

In Eq. (5), α is the randomization coefficient (α ∈ [0, 1]), ε is a vector of random numbers drawn from a Gaussian distribution, and t is the iteration number. Because of this randomization term, firefly i can explore the search space widely. Values of β0 = 1, α ∈ [0, 1], and γ = 1 are suitable for most applications [63].

In summary, FA modeling starts by defining the objective function and generating the initial fireflies, i.e., the initial sets of coefficients. The attractiveness and error of each candidate are then computed. Next, new coefficients are generated and their error is compared with the previous error; if the error is lower (i.e., a better solution), the new coefficients replace the old ones. This iterative process continues until the maximum number of iterations is reached. Finally, the coefficients are ranked by a standard error indicator, and the best solutions are retained.
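The FA loop summarized above can be sketched as a minimizer in which a lower objective value means a brighter firefly. The default parameters mirror the values reported in Table 2 (β0 = 2, γ = 1, α = 0.2, α-damping 0.99); the bounds, clipping to the bounds, the swarm size, the seed, and the sphere test function are assumptions for illustration, not the authors' implementation.

```python
import math
import random


def firefly_minimize(objective, dim, n_fireflies=20, iters=200, beta0=2.0,
                     gamma=1.0, alpha=0.2, alpha_damp=0.99,
                     bounds=(-5.0, 5.0), seed=0):
    """Minimal firefly algorithm (Eqs. 3-5); lower cost = brighter firefly."""
    rng = random.Random(seed)
    lo, hi = bounds

    def clip(v):
        return min(hi, max(lo, v))

    pop = [[rng.uniform(lo, hi) for _ in range(dim)] for _ in range(n_fireflies)]
    cost = [objective(x) for x in pop]
    best_c = min(cost)
    best_x = pop[cost.index(best_c)][:]
    for _ in range(iters):
        for i in range(n_fireflies):
            moved = False
            for j in range(n_fireflies):
                if cost[j] < cost[i]:  # j is brighter, so i moves toward j
                    r2 = sum((p - q) ** 2 for p, q in zip(pop[i], pop[j]))  # Eq. (4), squared
                    beta = beta0 * math.exp(-gamma * r2)                      # Eq. (3)
                    pop[i] = [clip(xi + beta * (xj - xi) + alpha * rng.gauss(0.0, 1.0))
                              for xi, xj in zip(pop[i], pop[j])]              # Eq. (5)
                    cost[i] = objective(pop[i])
                    moved = True
            if not moved:  # no brighter firefly: random walk
                pop[i] = [clip(xi + alpha * rng.gauss(0.0, 1.0)) for xi in pop[i]]
                cost[i] = objective(pop[i])
            if cost[i] < best_c:
                best_c, best_x = cost[i], pop[i][:]
        alpha *= alpha_damp  # shrink the random step every iteration
    return best_x, best_c


def sphere(x):
    return sum(v * v for v in x)


best_x, best_c = firefly_minimize(sphere, dim=2)
```

The best solution seen so far is kept separately, because the brightest firefly's random walk can temporarily move it to a worse position.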

2.4. Artificial bee colony

ABC was originally developed based on the swarm intelligence of honeybees [65]. As a metaheuristic, ABC solves complex optimization problems by simulating the foraging behavior that bees have evolved to find nectar in their natural surroundings. Each colony contains employed, onlooker, and scout bees. Employed bees search for nectar sources in the neighborhood of a source they already know, and they share this information with the onlookers. Based on the information received about nectar quality (fitness), the onlookers decide which source to select. Finally, employed bees without an adequate nectar source become scouts, which search for new food sources [65]. The basic steps of ABC are as follows [65,66]:

Initialize.

REPEAT

- Send the employed bees to the food sources and determine their nectar amounts.
- Send the onlooker bees to the food sources and determine their nectar amounts.
- Send the scouts to search for new food sources.

UNTIL (the stopping condition is met).

In the first step of ABC, the number of employed bees equals the number of food sources; that is, each source has one employed bee, so the number of candidate solutions matches the number of sources. In the next stage, each employed bee generates a new candidate source as follows:

$$V_{ij} = X_{ij} + \phi \left(X_{ij} - X_{kj}\right) \tag{6}$$

where $V_{ij}$ is the jth parameter of the ith new solution, $X_{ij}$ is the jth parameter of the ith old solution, i and k are random indices between 1 and the number of solutions (with k ≠ i), j is a random index between 1 and the number of optimization parameters, and φ is a random number between −1 and 1. In general, Eq. (6) shows that new nectar sources are created stochastically around former sources. After a new solution is created, its fitness is compared with that of the old solution. If the new source is better, it replaces the old one; otherwise, the penalty counter of that source is incremented (negative feedback). In the third step, onlooker bees decide which source to select, using the shared information about source fitness:

$$P_i = \frac{f_i}{\sum_{n} f_n} \tag{7}$$

In Eq. (7), $P_i$ is the probability of selecting source i and f is the source fitness. As before, if the fitness of the new solution exceeds that of the previous one, the new solution is kept; otherwise, the penalty counter is again incremented. In the final stage, if the penalty counter exceeds the threshold (Lmax), the source is abandoned and its employed bee becomes a scout. These steps are repeated until the stopping criterion of the algorithm is satisfied.

In summary, ABC modeling first establishes initial coefficients and their fitness. New coefficients, and their fitness, are then generated around the former coefficients; in this step, the model output is compared with the target variable to compute the error. If the error is lower than before, the previous coefficients are discarded; if it is higher, the new coefficients are penalized. After the selection probabilities are calculated, new solutions are generated around the coefficients with high fitness, their errors are computed, and the better coefficients are retained. If a solution fails to improve and its penalty counter reaches the limit, it is discarded and new coefficients are generated randomly. This cycle of replacement and abandonment repeats until the stopping criterion is met.
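The ABC cycle described above (employed, onlooker, and scout phases with Eqs. (6) and (7)) can be sketched as a minimizer. The trial limit defaults to the rule in Table 2, L = round(0.5 × Var × Pop); the fitness transform 1/(1 + cost), the bounds, clipping, seed, and sphere test function are common choices assumed here for illustration, not details taken from the paper.

```python
import random


def abc_minimize(objective, dim, n_sources=15, iters=200, limit=None,
                 bounds=(-5.0, 5.0), seed=0):
    """Minimal artificial bee colony minimizer (one employed bee per source)."""
    rng = random.Random(seed)
    lo, hi = bounds
    if limit is None:
        limit = round(0.5 * dim * n_sources)  # trial-limit rule from Table 2

    def random_source():
        return [rng.uniform(lo, hi) for _ in range(dim)]

    def fitness(c):
        # Usual ABC fitness for minimization; larger is better
        return 1.0 / (1.0 + c) if c >= 0 else 1.0 + abs(c)

    foods = [random_source() for _ in range(n_sources)]
    costs = [objective(x) for x in foods]
    trials = [0] * n_sources  # penalty counters

    def try_neighbor(i):
        """Eq. (6): perturb one parameter j using a random partner k != i."""
        k = rng.choice([n for n in range(n_sources) if n != i])
        j = rng.randrange(dim)
        v = foods[i][:]
        phi = rng.uniform(-1.0, 1.0)
        v[j] = min(hi, max(lo, v[j] + phi * (v[j] - foods[k][j])))
        c = objective(v)
        if c < costs[i]:  # greedy selection
            foods[i], costs[i], trials[i] = v, c, 0
        else:
            trials[i] += 1

    best_c = min(costs)
    best_x = foods[costs.index(best_c)][:]
    for _ in range(iters):
        for i in range(n_sources):  # employed bee phase
            try_neighbor(i)
        weights = [fitness(c) for c in costs]  # Eq. (7), unnormalized
        for _ in range(n_sources):  # onlooker phase
            try_neighbor(rng.choices(range(n_sources), weights=weights)[0])
        i_best = min(range(n_sources), key=costs.__getitem__)
        if costs[i_best] < best_c:  # remember the best before any abandonment
            best_c, best_x = costs[i_best], foods[i_best][:]
        for i in range(n_sources):  # scout phase
            if trials[i] > limit:
                foods[i] = random_source()
                costs[i] = objective(foods[i])
                trials[i] = 0
    return best_x, best_c


def sphere(x):
    return sum(v * v for v in x)


best_x, best_c = abc_minimize(sphere, dim=2)
```

`random.Random.choices` normalizes the weights itself, so the fitness values can be passed directly as the selection probabilities of Eq. (7).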

2.5. Statistical indicators

To evaluate modeling accuracy, the root-mean-square error (RMSE) and the coefficient of determination (R²) are used, as defined in Eqs. (8) and (9), respectively.

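$$\mathrm{RMSE} = \sqrt{\frac{1}{m} \sum_{i=1}^{m} \left(P_i - A_i\right)^2} \tag{8}$$

$$R^2 = \left( \frac{\sum_{i=1}^{m} \left(P_i - \bar{P}\right)\left(A_i - \bar{A}\right)}{\sqrt{\sum_{i=1}^{m} \left(P_i - \bar{P}\right)^2 \sum_{i=1}^{m} \left(A_i - \bar{A}\right)^2}} \right)^2 \tag{9}$$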

where $P_i$, $A_i$, $\bar{P}$, $\bar{A}$, and m are the predicted value, actual value, mean of the predicted values, mean of the actual values, and number of data points, respectively. A higher R² and a lower RMSE indicate that a model explains the target variable more effectively from the input data.
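Eqs. (8) and (9) translate directly into code. The sketch below assumes R² is the squared Pearson correlation between predictions and observations, which is what the listed symbols (both means) imply; the function names and sample values are hypothetical.

```python
import math


def rmse(pred, actual):
    """Eq. (8): root-mean-square error."""
    m = len(actual)
    return math.sqrt(sum((p - a) ** 2 for p, a in zip(pred, actual)) / m)


def r_squared(pred, actual):
    """Eq. (9): squared Pearson correlation between predictions and data."""
    m = len(actual)
    p_bar = sum(pred) / m
    a_bar = sum(actual) / m
    cov = sum((p - p_bar) * (a - a_bar) for p, a in zip(pred, actual))
    var_p = sum((p - p_bar) ** 2 for p in pred)
    var_a = sum((a - a_bar) ** 2 for a in actual)
    return cov ** 2 / (var_p * var_a)


actual = [1.0, 2.0, 3.0, 4.0]
pred = [1.1, 1.9, 3.2, 3.8]
error = rmse(pred, actual)
fit = r_squared(pred, actual)
```

Note that this form of R² equals 1 for any exact linear relation, even a biased one (e.g., predictions equal to 2A + 1), which is why it is reported together with RMSE rather than alone.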

2.6. ANN training using ABC/FA

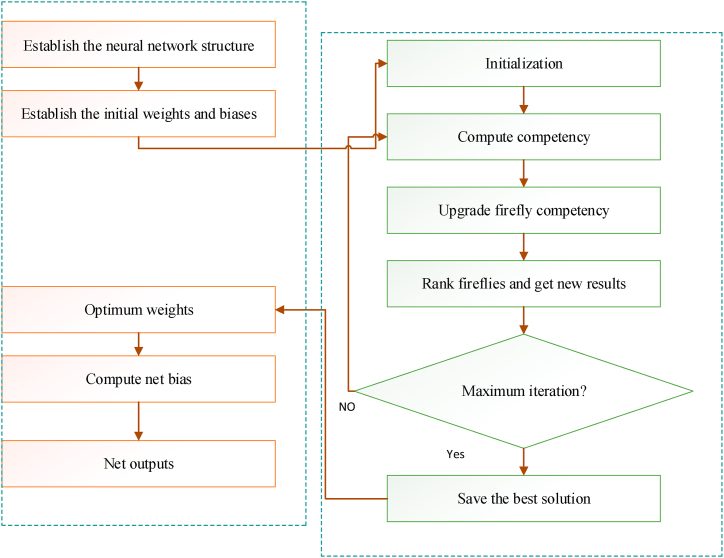

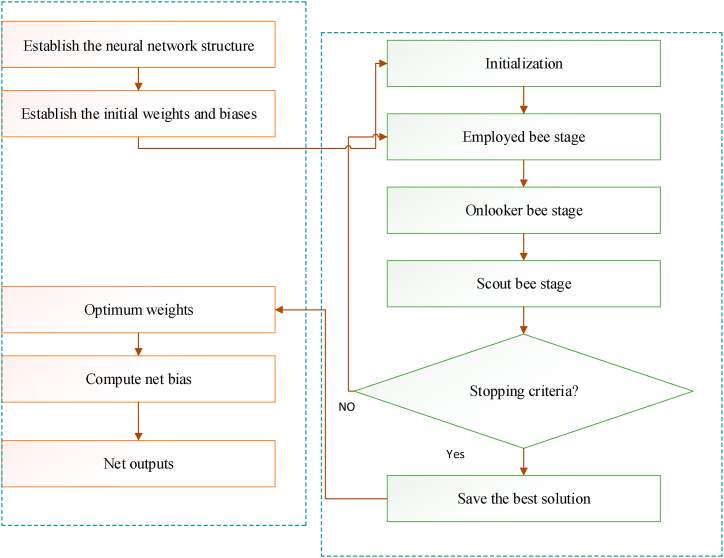

One drawback of backpropagation (BP) algorithms is that they can become trapped in local minima of the error function. Gradient descent minimizes the squared loss by stepping in the direction opposite to the gradient (slope). However, this can lead to a local minimum in which the algorithm becomes stuck. In such cases, metaheuristics such as FA and ABC can be applied effectively to train the ANN instead of a BP algorithm. The main incentive for hybridizing metaheuristics with ANN is their efficiency and resilience in function approximation [67] (as cited in Ref. [68]). These algorithms are also simple to implement and can solve complex approximation problems in a short time [67]. The efficiency of ANN training using ABC in solving different problems has been demonstrated previously [69], and ANN-FA has also proved efficient in time-series modeling and classification [70]. The modeling flowcharts of the training procedures using FA and ABC are shown in Figs. 2 and 3.
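To train an ANN with FA or ABC, all weights and biases are typically flattened into one vector, and the metaheuristic minimizes the training error of the network that vector encodes. The sketch below shows that encoding for one sigmoid hidden layer and one linear output; the layer layout, the linear output, the MSE objective, and the 3-input example (e.g., day number, state ID, vaccinated population) are assumptions for illustration.

```python
import math


def n_params(n_in, n_hidden, n_out=1):
    """Number of weights plus biases in a one-hidden-layer network."""
    return (n_in + 1) * n_hidden + (n_hidden + 1) * n_out


def decode(theta, n_in, n_hidden):
    """Split a flat parameter vector into layer weights and biases (one output)."""
    idx = 0
    hidden_w, hidden_b = [], []
    for _ in range(n_hidden):
        hidden_w.append(theta[idx:idx + n_in])
        idx += n_in
        hidden_b.append(theta[idx])
        idx += 1
    out_w = theta[idx:idx + n_hidden]
    idx += n_hidden
    out_b = theta[idx]
    return hidden_w, hidden_b, out_w, out_b


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x)) if x >= 0 else math.exp(x) / (1.0 + math.exp(x))


def predict(theta, x, n_in, n_hidden):
    hw, hb, ow, ob = decode(theta, n_in, n_hidden)
    h = [sigmoid(sum(w * xi for w, xi in zip(ws, x)) + b) for ws, b in zip(hw, hb)]
    return sum(w * hi for w, hi in zip(ow, h)) + ob


def make_objective(X, y, n_in, n_hidden):
    """Return theta -> training MSE; this is the function FA or ABC minimizes."""
    def objective(theta):
        errors = [(predict(theta, x, n_in, n_hidden) - t) ** 2 for x, t in zip(X, y)]
        return sum(errors) / len(errors)
    return objective


# Hypothetical normalized training data with three inputs per sample
X = [[0.0, 0.0, 0.0], [1.0, 1.0, 1.0]]
y = [0.5, 1.0]
objective = make_objective(X, y, n_in=3, n_hidden=17)
dim = n_params(3, 17)
```

With this wrapper, `objective` and `dim` can be passed to a minimizer such as a firefly or bee colony routine in place of a test function.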

Fig. 2.

The modeling flowchart of the ANN-FA.

Fig. 3.

The modeling flowchart of the ANN-ABC.

Hyperparameter calibration strongly affects the ability of ABC and FA to reach the best minimum of the loss function. These hyperparameters are reported in Table 2. For FA, a light absorption coefficient γ = 1, an attractiveness β0 = 1, and a mutation factor α between 0 and 1 are recommended for the vast majority of applications [63,71]. After fine-tuning, we set β0 = 2, γ = 1, and α = 0.2, with an α-damping factor of 0.99. For ABC, we assign one food source (FN) to each onlooker bee [72]. To decide when an employed bee becomes a scout, the maximum trial limit is set in proportion to the number of decision variables and the population size. Several values were tested during tuning, and this relation was found to be reliable for training the ANN. For both algorithms, the population size and the number of epochs affect the computing cost and the precision. To balance model accuracy against computational cost, we varied the population size (the number of bees or fireflies) from 5 to 50 with up to 500 iterations. The optimal population size was chosen considering both criteria.
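As a worked example of the settings in Table 2, the sketch below computes the ABC trial limit L = round(0.5 × Var × Pop) and the FA mutation factor after repeated damping. The network size (3 inputs, 17 hidden neurons, 1 output) used for Var is a hypothetical choice for illustration. The rounding mimics MATLAB's `round` (halves away from zero), because Python's built-in `round` rounds halves to even.

```python
import math


def trial_limit(n_vars, pop_size):
    """Table 2: L = round(0.5 * Var * Pop), rounding halves up as MATLAB does."""
    return math.floor(0.5 * n_vars * pop_size + 0.5)


def alpha_schedule(alpha0=0.2, damp=0.99, iters=500):
    """FA mutation factor per iteration: alpha_t = alpha0 * damp**t."""
    return [alpha0 * damp ** t for t in range(iters + 1)]


# Hypothetical 3-input, 17-hidden-neuron, 1-output ANN: weights + biases
n_vars = (3 + 1) * 17 + (17 + 1) * 1
limit_15_bees = trial_limit(n_vars, 15)
alphas = alpha_schedule()
```

For 86 decision variables and 15 bees, L = 0.5 × 86 × 15 = 645 trials, while α decays from 0.2 to about 0.0013 after 500 iterations, so late FA iterations make only small random moves.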

Table 2.

Control parameters of ABC and FA.

| Hyperparameter | FA | ABC |
|---|---|---|
| Swarm size | 5, 10, 15, 20, 25, 30, 35, 40, 45, 50, 55 | 5, 10, 15, 20, 25, 30, 35, 40, 45, 50, 55 |
| Mutation parameter (α) | 0.2 | – |
| Damping ratio (α-damping) | 0.99 | – |
| Attractiveness (β0) | 2 | – |
| Light absorption coefficient (γ) | 1 | – |
| Maximum iterations | 500 | 500 |
| Maximum trial limit (L) | – | Variable (L = round(0.5 × Var × Pop)) |
| Ratio of food sources to bees | – | 1:1 |

3. Results and discussion

3.1. Neural network modeling

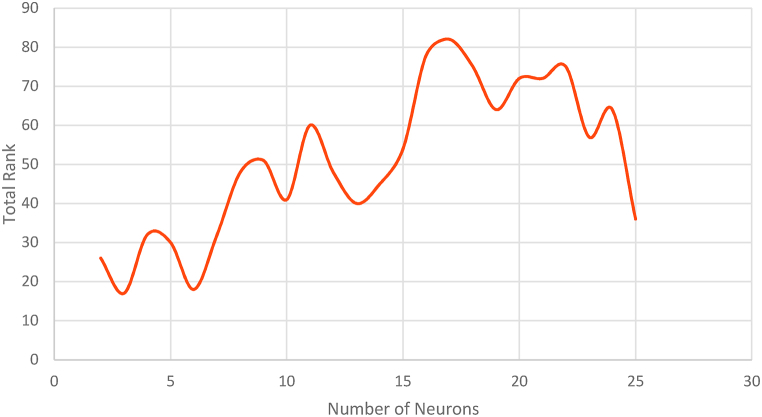

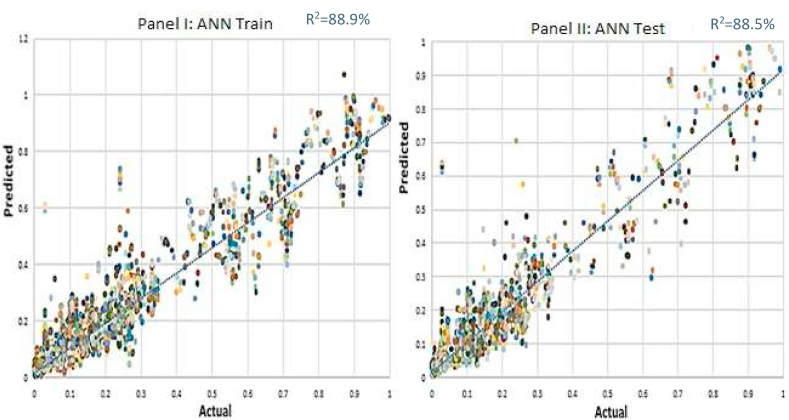

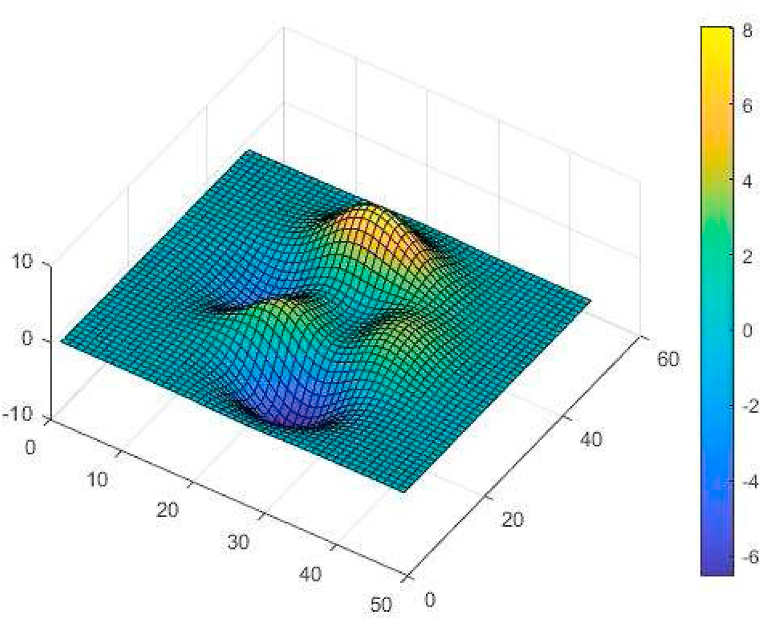

For ANN modeling, we used MATLAB 2018, and, as already noted, the LM algorithm was used for training. In developing an ANN, finding the optimal number of hidden neurons is the most important stage, and trial and error is generally used for this purpose. In this study, trial and error was combined with a scoring system [73]: structures with 2 to 25 hidden neurons were scored. The results are shown in Table 3, where the models are scored (right-hand columns) based on RMSE and R² at both the training and testing stages. For RMSE, lower values receive higher scores; for R², higher values receive higher scores. Summing the scores of the training and testing stages gives the total rank of each model. As Fig. 4 shows, the maximum total score occurs with 17 neurons. Therefore, the ANN with one hidden layer and 17 neurons was selected. Fig. 5 displays the simulated results of this model for the training and testing stages. Note that the results cover all 51 states, each identified by its own ID. The overall R² is 0.88, which shows that, in aggregate, the ANN explains around 88% of the variation in COVID-19 cases across all states. Compared with an ANN trained without the vaccinated population (Figs. 2SM and 3SM in the supplementary materials), these findings indicate that the vaccinated population is the key explanatory variable of COVID-19 cases at a large scale. The explanatory ability of the proposed ANN can be improved further by lowering its error. In many cases, a local minimum prevents the ANN from reaching the global minimum of the loss function; Fig. 6 depicts such a local-minimum trap in the ANN solution space.
In that situation, FA and ABC, as two powerful optimization algorithms, can be used to improve the predictive accuracy of the ANN. These algorithms explore the search space extensively in search of the global minimum of the loss function, helping the ANN escape local minima. Therefore, the proposed ANN with the mass vaccination data was selected for training with FA and ABC.

Table 3.

The ANN with various numbers of hidden neurons.

| Hidden neurons | R2 (train) | R2 (test) | RMSE (train) | RMSE (test) | R2 score (train) | R2 score (test) | RMSE score (train) | RMSE score (test) | Total rank |
|---|---|---|---|---|---|---|---|---|---|
| 2 | 0.781 | 0.778 | 0.0594 | 0.12 | 1 | 1 | 1 | 23 | 26 |
| 3 | 0.794 | 0.792 | 0.0547 | 0.372 | 2 | 2 | 2 | 10 | 16 |
| 4 | 0.799 | 0.794 | 0.0555 | 0.0492 | 3 | 3 | 1 | 23 | 30 |
| 5 | 0.854 | 0.815 | 0.0321 | 0.374 | 5 | 4 | 11 | 9 | 29 |
| 6 | 0.843 | 0.846 | 0.0423 | 0.501 | 4 | 6 | 3 | 4 | 17 |
| 7 | 0.861 | 0.848 | 0.0345 | 0.266 | 8 | 7 | 4 | 12 | 31 |
| 8 | 0.861 | 0.865 | 0.0282 | 0.406 | 8 | 8 | 23 | 8 | 47 |
| 9 | 0.874 | 0.872 | 0.0343 | 0.151 | 10 | 14 | 5 | 21 | 50 |
| 10 | 0.880 | 0.867 | 0.03336 | 0.421 | 17 | 10 | 6 | 7 | 40 |
| 11 | 0.880 | 0.870 | 0.0313 | 0.194 | 17 | 11 | 14 | 17 | 59 |
| 12 | 0.876 | 0.878 | 0.033 | 0.291 | 12 | 16 | 8 | 11 | 47 |
| 13 | 0.878 | 0.880 | 0.033 | 0.58 | 13 | 17 | 8 | 1 | 39 |
| 14 | 0.872 | 0.889 | 0.0332 | 0.451 | 9 | 23 | 7 | 5 | 44 |
| 15 | 0.882 | 0.872 | 0.0315 | 0.431 | 20 | 14 | 13 | 6 | 53 |
| 16 | 0.887 | 0.891 | 0.0302 | 0.258 | 23 | 24 | 17 | 13 | 77 |
| 17 | 0.889 | 0.885 | 0.0298 | 0.189 | 24 | 21 | 18 | 18 | 81 |
| 18 | 0.882 | 0.889 | 0.032 | 0.186 | 20 | 23 | 12 | 19 | 74 |
| 19 | 0.887 | 0.882 | 0.0298 | 0.546 | 23 | 19 | 18 | 3 | 63 |
| 20 | 0.884 | 0.882 | 0.0308 | 0.218 | 21 | 19 | 15 | 16 | 71 |
| 21 | 0.882 | 0.872 | 0.0308 | 0.143 | 20 | 14 | 15 | 22 | 71 |
| 22 | 0.880 | 0.876 | 0.029 | 0.154 | 17 | 15 | 22 | 20 | 74 |
| 23 | 0.876 | 0.884 | 0.0325 | 0.236 | 12 | 20 | 10 | 14 | 56 |
| 24 | 0.880 | 0.867 | 0.0294 | 0.221 | 17 | 10 | 21 | 15 | 63 |
| 25 | 0.861 | 0.832 | 0.0296 | 0.571 | 8 | 5 | 20 | 2 | 35 |
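The rank-sum scoring behind Table 3 can be sketched in a few lines of Python. The sketch below scores only four of the candidate networks (16 to 19 hidden neurons, values copied from Table 3), so its totals are smaller than the table's. It assumes that each metric is scored by how many candidates a model matches or beats, with tied values sharing the higher score; this tie rule is an assumption that agrees with the R2 score columns of Table 3 but is not guaranteed to reproduce every RMSE score. The names `rank_scores` and `total_scores` are hypothetical.

```python
from typing import Dict, List, Tuple

# Four rows of Table 3: hidden neurons -> (R2 train, R2 test, RMSE train, RMSE test)
CANDIDATES: Dict[int, Tuple[float, float, float, float]] = {
    16: (0.887, 0.891, 0.0302, 0.258),
    17: (0.889, 0.885, 0.0298, 0.189),
    18: (0.882, 0.889, 0.032, 0.186),
    19: (0.887, 0.882, 0.0298, 0.546),
}
# True: larger is better (R2); False: smaller is better (RMSE)
HIGHER_IS_BETTER = (True, True, False, False)


def rank_scores(values: List[float], higher_is_better: bool) -> List[int]:
    """Score = number of candidates this value matches or beats (ties share the higher score)."""
    if higher_is_better:
        return [sum(other <= v for other in values) for v in values]
    return [sum(other >= v for other in values) for v in values]


def total_scores(candidates: Dict[int, Tuple[float, ...]]) -> Dict[int, int]:
    """Sum the per-metric scores over the train and test R2 and RMSE columns."""
    neurons = list(candidates)
    totals = {n: 0 for n in neurons}
    for col, higher in enumerate(HIGHER_IS_BETTER):
        column = [candidates[n][col] for n in neurons]
        for n, score in zip(neurons, rank_scores(column, higher)):
            totals[n] += score
    return totals


totals = total_scores(CANDIDATES)
best = max(totals, key=totals.get)
print(totals, best)  # {16: 11, 17: 13, 18: 9, 19: 9} 17
```

Even on this subset, the 17-neuron network obtains the highest total, in line with the selection reported above.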

Fig. 4.

Total rank of the ANN for various numbers of hidden neurons.

Fig. 5.

ANN train (Panel I) and test (Panel II) results with the LM algorithm.

Fig. 6.

Local minimum pitfall in the ANN.

3.2. Hybrid modeling

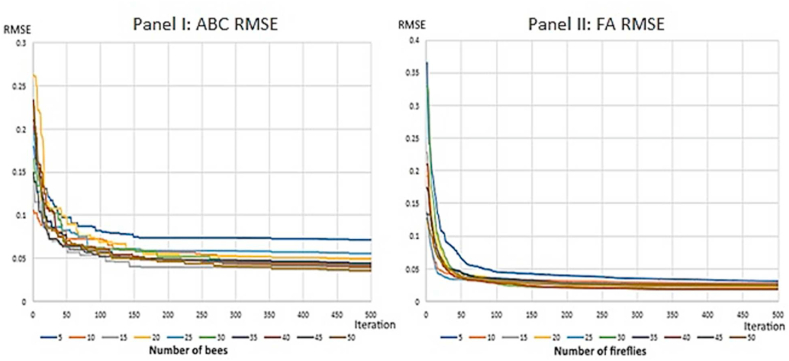

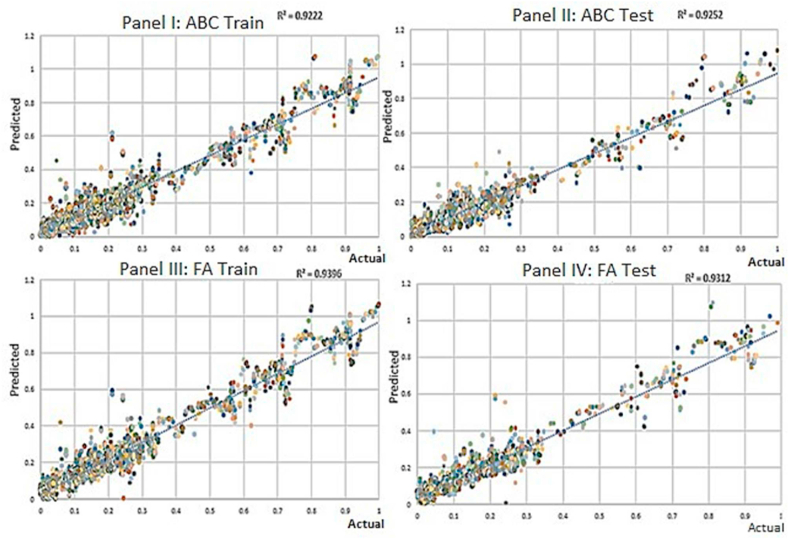

As stated earlier, the trained ANN provides the initial network for both FA and ABC. In general, the swarm size must be tuned for metaheuristic training, which requires a balance between swarm size and computing time. A larger population covers a larger area of the solution space, but the computing time increases with the population. For that reason, we ran the hybrid models with various population sizes. The results are shown in Fig. 7. In terms of RMSE, the best patterns are achieved with 50 fireflies and with either 15 or 50 bees. For FA, 10 fireflies achieve a close performance with less computing time (alternatively, 40 fireflies perform similarly to the best pattern in less time). For ABC, the model performs similarly with 50 and 15 bees (the difference is tiny); hence, with 15 bees the model can be trained at a lower computational cost. Moreover, according to the RMSE chart, 150 and 450 iterations can be used for FA and ABC, respectively (i.e., instead of 500 iterations). Fig. 8 depicts the correlations between the predicted and actual COVID-19 cases for all states at both the train and test levels. With an aggregate R2 above 93% for ANN-FA and 92% for ANN-ABC, the proposed models are able to forecast new COVID-19 cases from the number of vaccinated individuals with high accuracy. Overall, both models can predict the COVID-19 pandemic from the vaccination rates of each state. In the present study, ANN-FA showed the better performance and can be used for policy making.
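The hybrid training step can be sketched by packing the weights and biases of a one-hidden-layer network into a single vector and letting an artificial bee colony minimize the training RMSE. The sketch below follows the basic ABC cycle: employed bees, fitness-proportional onlooker bees, and a scout that abandons a food source after `limit` failed trials. Everything in it is an assumption for illustration: the toy data `XS` and `YS` are hypothetical and are not the study's data, the 3-neuron network is far smaller than the 17-neuron ANN selected above, and the names `ann_rmse` and `abc_minimize` and all parameter values are hypothetical. The number of food sources, `n_sources`, plays the role of the swarm size discussed above: increasing it widens the search but costs more evaluations per cycle.

```python
import math
import random
from typing import Callable, List, Sequence, Tuple

# Hypothetical toy data (scaled input -> scaled target); NOT the study's dataset.
XS = [i / 10 for i in range(11)]
YS = [1.0 - 0.8 * x * x for x in XS]

N_HIDDEN = 3
DIM = 3 * N_HIDDEN + 1  # input weights, hidden biases, output weights, output bias


def ann_rmse(w: Sequence[float]) -> float:
    """Training RMSE of a 1-input, tanh-hidden-layer, 1-output network packed in w."""
    w_in, b_h = w[:N_HIDDEN], w[N_HIDDEN:2 * N_HIDDEN]
    w_out, b_out = w[2 * N_HIDDEN:3 * N_HIDDEN], w[3 * N_HIDDEN]
    sq_err = 0.0
    for x, y in zip(XS, YS):
        hidden = [math.tanh(a * x + b) for a, b in zip(w_in, b_h)]
        pred = sum(v * h for v, h in zip(w_out, hidden)) + b_out
        sq_err += (pred - y) ** 2
    return math.sqrt(sq_err / len(XS))


def abc_minimize(cost: Callable[[List[float]], float], dim: int, n_sources: int = 15,
                 n_iter: int = 200, limit: int = 30, bounds: Tuple[float, float] = (-2.0, 2.0),
                 seed: int = 0) -> Tuple[List[float], float, float]:
    """Hypothetical minimal ABC; returns (best_x, best_cost, initial_best_cost)."""
    rng = random.Random(seed)
    lo, hi = bounds
    foods = [[rng.uniform(lo, hi) for _ in range(dim)] for _ in range(n_sources)]
    costs = [cost(f) for f in foods]
    trials = [0] * n_sources
    b = min(range(n_sources), key=costs.__getitem__)
    best_x, best_cost = foods[b][:], costs[b]
    initial_best = best_cost

    def update_best(x: List[float], c: float) -> None:
        nonlocal best_x, best_cost
        if c < best_cost:
            best_x, best_cost = x[:], c

    def try_neighbour(i: int) -> None:
        # Perturb one coordinate of source i relative to a random partner k (basic ABC move).
        k = rng.choice([m for m in range(n_sources) if m != i])
        j = rng.randrange(dim)
        cand = foods[i][:]
        cand[j] += rng.uniform(-1.0, 1.0) * (cand[j] - foods[k][j])
        cand[j] = min(hi, max(lo, cand[j]))
        c = cost(cand)
        if c < costs[i]:  # greedy selection keeps the better source
            foods[i], costs[i], trials[i] = cand, c, 0
            update_best(cand, c)
        else:
            trials[i] += 1

    for _ in range(n_iter):
        for i in range(n_sources):  # employed-bee phase
            try_neighbour(i)
        fits = [1.0 / (1.0 + c) for c in costs]  # fitness for non-negative costs such as RMSE
        for _ in range(n_sources):  # onlooker phase: sources chosen in proportion to fitness
            try_neighbour(rng.choices(range(n_sources), weights=fits)[0])
        s = max(range(n_sources), key=trials.__getitem__)
        if trials[s] > limit:  # scout phase: abandon the most exhausted source
            foods[s] = [rng.uniform(lo, hi) for _ in range(dim)]
            costs[s], trials[s] = cost(foods[s]), 0
            update_best(foods[s], costs[s])
    return best_x, best_cost, initial_best


weights, rmse, start_rmse = abc_minimize(ann_rmse, DIM)
print(f"best initial RMSE: {start_rmse:.4f}, RMSE after ABC: {rmse:.4f}")
```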

Fig. 7.

The RMSE indicator for ANN-ABC (Panel I) and ANN-FA (Panel II) with different swarm sizes over 500 epochs.

Fig. 8.

Train and test results for ANN-ABC (Panels I and II, respectively) and ANN-FA (Panels III and IV, respectively).

The performance of ANN-FA/ABC is also compared with several machine learning algorithms used for COVID-19 modeling, namely ANN with Bayesian regularization [13], ANN with scaled conjugate gradient [13], linear regression [74], stepwise regression [75], robust regression [76] and a regression-based neural network [77]. To provide a metaheuristic benchmark, we also combined the ANN with biogeography-based optimization (BBO) [78,79]. All regression-based models were implemented using the Regression Learner App in MATLAB. The control parameters of the models are reported in Table 1SM in the Supplementary materials. For the BBO algorithm, the hyper-parameters are the number of iterations, the population size, the maximum emigration and immigration rates, and the mutation probability. The number of iterations and the population size are set to 500 and 300, respectively, and the maximum emigration rate, maximum immigration rate and mutation probability are fixed at 1, 1 and 0.005, respectively [80]. The results are presented in Table 4. In general, they show that the vaccinated population is a key predictor of COVID-19. Among the BP-ANNs, Bayesian regularization performs close to the LM algorithm. The linear, stepwise and robust regressions yield R2 values between 61% and 70%, the lowest among the alternatives. The regression neural network performs similarly to ANN-ABC in terms of R2; however, according to RMSE, ANN-ABC performs better at both the train and test levels.
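To show where the BBO hyper-parameters listed above enter, the following is a minimal, hypothetical Python sketch of basic BBO with a linear migration model: habitats are ranked by cost, better habitats emigrate features more often, and worse habitats import features more often, with occasional random mutation. It assumes a sphere cost in place of the ANN loss and uses a much smaller population and fewer iterations than the 300 and 500 used here so that it runs quickly. The names `sphere` and `bbo_minimize` and the elitism step are assumptions, not a description of the implementation in [80].

```python
import itertools
import random
from typing import Callable, List, Tuple


def sphere(x: List[float]) -> float:
    """Simple convex test cost standing in for an ANN loss."""
    return sum(v * v for v in x)


def bbo_minimize(cost: Callable[[List[float]], float], dim: int, pop_size: int = 30,
                 n_iter: int = 100, bounds: Tuple[float, float] = (-5.0, 5.0),
                 i_max: float = 1.0, e_max: float = 1.0, p_mutation: float = 0.005,
                 seed: int = 0) -> Tuple[List[float], float, float]:
    """Hypothetical minimal BBO; returns (best_x, best_cost, initial_best_cost)."""
    rng = random.Random(seed)
    lo, hi = bounds
    n = pop_size
    pop = sorted(([rng.uniform(lo, hi) for _ in range(dim)] for _ in range(n)), key=cost)
    initial_best = cost(pop[0])
    # Linear migration model: the habitat of rank r (0 = best) holds n - r "species".
    species = [n - r for r in range(n)]
    mu = [e_max * k / n for k in species]          # emigration rate, highest for the best habitat
    lam = [i_max * (1 - k / n) for k in species]   # immigration rate, highest for the worst habitat
    cum_mu = list(itertools.accumulate(mu))
    for _ in range(n_iter):
        new_pop = [h[:] for h in pop]
        for i in range(n):
            for d in range(dim):
                if rng.random() < lam[i]:  # import this feature from an emigrating habitat
                    src = rng.choices(range(n), cum_weights=cum_mu)[0]
                    new_pop[i][d] = pop[src][d]
                if rng.random() < p_mutation:  # rare random mutation
                    new_pop[i][d] = rng.uniform(lo, hi)
        new_pop.sort(key=cost)
        new_pop[-1] = pop[0][:]  # elitism: the previous best replaces the new worst habitat
        pop = sorted(new_pop, key=cost)
    return pop[0], cost(pop[0]), initial_best


best_x, best_cost, start_cost = bbo_minimize(sphere, dim=3)
print(f"best initial cost: {start_cost:.4f}, cost after BBO: {best_cost:.4f}")
```

With i_max = e_max = 1, the best habitat never imports features (its immigration rate is 0), while the mutation probability of 0.005 only rarely injects new values.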

Table 4.

The performance of all models in COVID-19 prediction.

| Model | R2 (train) | RMSE (train) | R2 (test) | RMSE (test) |
|---|---|---|---|---|
| ANN-Bayesian regularization | 0.895389 | 0.04737932 | 0.889155 | 0.054544 |
| ANN-Scaled conjugate gradient | 0.774312 | 0.078166 | 0.770568 | 0.084499 |
| ANN-Levenberg–Marquardt | 0.889 | 0.0298 | 0.885 | 0.189 |
| Linear regression | 0.650 | 0.097653 | 0.660 | 0.095263 |
| Stepwise regression | 0.670 | 0.094497 | 0.70 | 0.090234 |
| Robust regression | 0.61 | 0.10268 | 0.61 | 0.10201 |
| Regression neural network | 0.920 | 0.047314 | 0.910 | 0.049788 |
| ANN-FA | 0.9396 | 0.023531055 | 0.9312 | 0.046932 |
| ANN-ABC | 0.92 | 0.035001 | 0.9252 | 0.046553 |
| ANN-BBO | 0.8854 | 0.004031538 | 0.891472 | 0.004292239 |
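The R2 and RMSE columns of Tables 3 and 4 can be computed from paired actual and predicted values as sketched below. This is a hedged illustration: it assumes R2 denotes the coefficient of determination, 1 - SS_res/SS_tot, and also shows the squared Pearson correlation, because regression plots (such as those produced by MATLAB) report a correlation coefficient R. The two coincide for an in-sample least-squares linear fit with an intercept but differ in general. The four data points are hypothetical.

```python
import math
from typing import Sequence


def rmse(actual: Sequence[float], predicted: Sequence[float]) -> float:
    """Root-mean-square error."""
    return math.sqrt(sum((a - p) ** 2 for a, p in zip(actual, predicted)) / len(actual))


def r2_determination(actual: Sequence[float], predicted: Sequence[float]) -> float:
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_a = sum(actual) / len(actual)
    ss_res = sum((a - p) ** 2 for a, p in zip(actual, predicted))
    ss_tot = sum((a - mean_a) ** 2 for a in actual)
    return 1.0 - ss_res / ss_tot


def r2_pearson(actual: Sequence[float], predicted: Sequence[float]) -> float:
    """Squared Pearson correlation between actual and predicted values."""
    n = len(actual)
    ma, mp = sum(actual) / n, sum(predicted) / n
    cov = sum((a - ma) * (p - mp) for a, p in zip(actual, predicted))
    var_a = sum((a - ma) ** 2 for a in actual)
    var_p = sum((p - mp) ** 2 for p in predicted)
    return cov * cov / (var_a * var_p)


actual = [1.0, 2.0, 3.0, 4.0]      # hypothetical scaled case counts
predicted = [1.1, 1.9, 3.2, 3.8]   # hypothetical model output
print(rmse(actual, predicted), r2_determination(actual, predicted), r2_pearson(actual, predicted))
```

Here the coefficient of determination is 0.98 while the squared correlation is about 0.982, so stating which definition is used matters when comparing R2 values across studies.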

4. Conclusion

As the vaccinated population around the world grows, there is a need to model the COVID-19 pandemic with the vaccination rate taken into account. In this study, we implemented a conventional neural network hybridized with two powerful metaheuristic algorithms, namely FA and ABC. We processed datasets of COVID-19 confirmed cases and vaccinated population for 51 states in the US. In general, the findings reveal that the vaccination effort is a strong explanatory variable of the pandemic. At the overall scale, ANN-FA, with a total accuracy (R2) of 93.7%, outperformed ANN-ABC.

It should be pointed out that the obtained results should be interpreted in light of some limitations. First, ANNs are black-box models. In our model, the mechanism by which the vaccinated population is related to transmission cannot be interpreted; hence, such ANNs cannot provide any biological basis for the results. Second, vaccination has been shown to prevent severe COVID-19. Hence, infected individuals with mild symptoms may not take diagnostic tests and are thus not recorded as infected cases. Therefore, the real number of infected cases may be higher than the official records. Third, the efficacy of COVID-19 vaccines varies. Since the types of vaccines used differ around the world, this may affect the generalizability of the results. Finally, other determinants, including the level of preventive measures, herd immunity and lockdowns, all have a significant impact on the reported infected cases. Therefore, the diminishing trend in the data could be attributed to those determinants instead of the increase in injected vaccines. However, defining the vaccine data as an explanatory input for predicting the pandemic has several advantages. First, it can be used directly for policy making, since massive vaccination is the most effective way to fight COVID-19. Second, as mentioned earlier, there is evidence of a reduction in the severe form of COVID-19 resulting from vaccination. Yet, the impact of massive vaccination on transmitted cases must still be specified. Overall, the study results support the conclusion that massive vaccination is the key predictor of the COVID-19 pandemic.

Note on contribution

Ebrahim Noroozi-Ghaleini: Conceived and designed the experiments; Performed the experiments; Analyzed and interpreted the data; Contributed reagents, materials, analysis tools or data. Mohammad Javad Shaibani: Conceived and designed the experiments; Performed the experiments; Analyzed and interpreted the data; Wrote the paper.

Funding

This study did not receive any grant from any funding institution.

Material and data availability

The daily COVID-19 confirmed cases dataset was obtained from the GitHub page of the Center for Systems Science and Engineering (Johns Hopkins University) (https://github.com/CSSEGISandData/COVID-19). The vaccinated population dataset was obtained from the GitHub page of Our World in Data (https://github.com/owid/covid-19-data/tree/master/public/data). Some of the algorithms implemented in this study are proprietary. The control parameters and flowcharts showing all stages of the core algorithms are presented in the main text.

Declaration of competing interest

We declare that we have no financial or non-financial interests to disclose.

Footnotes

Supplementary data related to this article can be found at https://doi.org/10.1016/j.heliyon.2023.e13672.

Contributor Information

Ebrahim Noroozi-Ghaleini, Email: Ebrahim.noroozi@modares.ac.ir.

Mohammad Javad Shaibani, Email: mjshaibani@razi.tums.ac.ir, mjsheibani1993@gmail.com.

Appendix A. Supplementary data


References

- 1. World Health Organization (WHO). 2021. WHO-Convened Global Study of Origins of SARS-CoV-2: China Part.

- 2. Cascella M., et al. 2022. Features, Evaluation, and Treatment of Coronavirus (COVID-19). StatPearls [Internet].

- 3. World Health Organization (WHO). November 2021. COVID-19 weekly epidemiological update. https://www.who.int/emergencies/diseases/novel-coronavirus-2019/situation-reports

- 4. Raskin S. Genetics of COVID-19. J. Pediatr. 2021;97(4):378–386. doi: 10.1016/j.jped.2020.09.002.

- 5. Chen A.T., et al. COVID-19 CG enables SARS-CoV-2 mutation and lineage tracking by locations and dates of interest. Elife. 2021;10. doi: 10.7554/eLife.63409.

- 6. Chen J., et al. Mutations strengthened SARS-CoV-2 infectivity. J. Mol. Biol. 2020;432(19):5212–5226. doi: 10.1016/j.jmb.2020.07.009.

- 7. Darby A., Hiscox J. Covid-19: variants and vaccination. BMJ. 2021;372:n771. doi: 10.1136/bmj.n771.

- 8. Mallapaty S. Can COVID vaccines stop transmission? Scientists race to find answers. Nature. 2021;10. doi: 10.1038/d41586-021-00450-z.

- 9. Galbadage T., Peterson B.M., Gunasekera R.S. Does COVID-19 spread through droplets alone? Front. Public Health. 2020;8:163. doi: 10.3389/fpubh.2020.00163.

- 10. Marco V. COVID-19 vaccines: the pandemic will not end overnight. Lancet Microbe. 2020;2:30226. doi: 10.1016/S2666-5247(20)30226-3.

- 11. Sallam M. COVID-19 vaccine hesitancy worldwide: A concise systematic review of vaccine acceptance rates. Vaccines (Basel). 2021;9(2):160. doi: 10.3390/vaccines9020160.

- 12. Skegg D., et al. Future scenarios for the COVID-19 pandemic. Lancet. 2021;397(10276):777–778. doi: 10.1016/S0140-6736(21)00424-4.

- 13. Namasudra S., Dhamodharavadhani S., Rathipriya R. Nonlinear neural network based forecasting model for predicting COVID-19 cases. Neural Process. Lett. 2021:1–21. doi: 10.1007/s11063-021-10495-w.

- 14. Saba A.I., Elsheikh A.H. Forecasting the prevalence of COVID-19 outbreak in Egypt using nonlinear autoregressive artificial neural networks. Process Saf. Environ. Protect. 2020;141:1–8. doi: 10.1016/j.psep.2020.05.029.

- 15. Wieczorek M., et al. Real-time neural network based predictor for cov19 virus spread. PLoS One. 2020;15(12):e0243189. doi: 10.1371/journal.pone.0243189.

- 16. Senapati A., et al. A novel framework for COVID-19 case prediction through piecewise regression in India. Int. J. Inf. Technol. 2021;13(1):41–48. doi: 10.1007/s41870-020-00552-3.

- 17. Rath S., Tripathy A., Tripathy A.R. Prediction of new active cases of coronavirus disease (COVID-19) pandemic using multiple linear regression model. Diabetes Metabol. Syndr.: Clin. Res. Rev. 2020;14(5):1467–1474. doi: 10.1016/j.dsx.2020.07.045.

- 18. Ogundokun R.O., et al. Predictive modelling of COVID-19 confirmed cases in Nigeria. Infectious Disease Modelling. 2020;5:543–548. doi: 10.1016/j.idm.2020.08.003.

- 19. Alabdulrazzaq H., et al. On the accuracy of ARIMA based prediction of COVID-19 spread. Results Phys. 2021;27. doi: 10.1016/j.rinp.2021.104509.

- 20. Roy S., Bhunia G.S., Shit P.K. Spatial prediction of COVID-19 epidemic using ARIMA techniques in India. Modeling Earth Systems and Environment. 2021;7(2):1385–1391. doi: 10.1007/s40808-020-00890-y.

- 21. Sahai A.K., et al. ARIMA modelling & forecasting of COVID-19 in top five affected countries. Diabetes Metabol. Syndr.: Clin. Res. Rev. 2020;14(5):1419–1427. doi: 10.1016/j.dsx.2020.07.042.

- 22. Bagal D.K., et al. Estimating the parameters of susceptible-infected-recovered model of COVID-19 cases in India during lockdown periods. Chaos, Solit. Fractals. 2020;140. doi: 10.1016/j.chaos.2020.110154.

- 23. Boccaletti S., et al. Modeling and forecasting of epidemic spreading: the case of Covid-19 and beyond. Chaos, Solit. Fractals. 2020;135. doi: 10.1016/j.chaos.2020.109794.

- 24. Dansana D., et al. Using susceptible-exposed-infectious-recovered model to forecast coronavirus outbreak. Cmc-Computers Materials & Continua. 2021:1595–1612.

- 25. Nawaz S.A., et al. A hybrid approach to forecast the COVID-19 epidemic trend. PLoS One. 2021;16(10):e0256971. doi: 10.1371/journal.pone.0256971.

- 26. Viguerie A., et al. Simulating the spread of COVID-19 via a spatially-resolved susceptible–exposed–infected–recovered–deceased (SEIRD) model with heterogeneous diffusion. Appl. Math. Lett. 2021;111. doi: 10.1016/j.aml.2020.106617.

- 27. Khayyat M., et al. Time series Facebook prophet model and Python for COVID-19 outbreak prediction. Comput. Mater. Continua (CMC). 2021:3781–3793.

- 28. Warushavithana M., et al. IEEE; 2021. A Transfer Learning Scheme for Time Series Forecasting Using Facebook Prophet. In 2021 IEEE International Conference on Cluster Computing (CLUSTER).

- 29. Tulshyan V., Sharma D., Mittal M. An eye on the future of COVID-19: prediction of likely positive cases and fatality in India over a 30-day horizon using the Prophet model. Disaster Med. Public Health Prep. 2020:1–7. doi: 10.1017/dmp.2020.444.

- 30. Khattak M.I., et al. Automated detection of COVID-19 using chest X-ray images and CT scans through multilayer- spatial convolutional neural networks. Int. J. Interact. Multim. Artif. Intell. 2021;6:15–24.

- 31. Alonso J.P., Gala Y., Bañón A.L.S. COVID-19 mortality risk prediction using X-ray images. Int. J. Interact. Multim. Artif. Intell. 2021;6:7–14.

- 32. Fong S.J., et al. Finding an accurate early forecasting model from small dataset: a case of 2019-nCoV novel coronavirus outbreak. Int. J. Interact. Multim. Artif. Intell. 2020;6:132–140.

- 33. Elsheikh A.H., et al. Deep learning-based forecasting model for COVID-19 outbreak in Saudi Arabia. Process Saf. Environ. Protect. 2021;149:223–233. doi: 10.1016/j.psep.2020.10.048.

- 34. Zeroual A., et al. Deep learning methods for forecasting COVID-19 time-Series data: a Comparative study. Chaos, Solit. Fractals. 2020;140. doi: 10.1016/j.chaos.2020.110121.

- 35. Devaraj J., et al. Forecasting of COVID-19 cases using deep learning models: is it reliable and practically significant? Results Phys. 2021;21. doi: 10.1016/j.rinp.2021.103817.

- 36. Wieczorek M., Siłka J., Woźniak M. Neural network powered COVID-19 spread forecasting model. Chaos, Solit. Fractals. 2020;140. doi: 10.1016/j.chaos.2020.110203.

- 37. Istaiteh O., et al. IEEE; 2020. Machine Learning Approaches for Covid-19 Forecasting. In 2020 International Conference on Intelligent Data Science Technologies and Applications (IDSTA).

- 38. Prasanth S., et al. Forecasting spread of COVID-19 using google trends: a hybrid GWO-deep learning approach. Chaos, Solit. Fractals. 2021;142. doi: 10.1016/j.chaos.2020.110336.

- 39. Shaibani M.J., Emamgholipour S., Moazeni S.S. Investigation of robustness of hybrid artificial neural network with artificial bee colony and firefly algorithm in predicting COVID-19 new cases: case study of Iran. Stoch. Environ. Res. Risk Assess. 2022;36(9):2461–2476. doi: 10.1007/s00477-021-02098-7.

- 40. Khozeimeh F., et al. Combining a convolutional neural network with autoencoders to predict the survival chance of COVID-19 patients. Sci. Rep. 2021;11(1):1–18. doi: 10.1038/s41598-021-93543-8.

- 41. Pinter G., et al. COVID-19 pandemic prediction for Hungary; a hybrid machine learning approach. Mathematics. 2020;8(6):890.

- 42. Rahimi I., Chen F., Gandomi A.H. A review on COVID-19 forecasting models. Neural Comput. Appl. 2021:1–11. doi: 10.1007/s00521-020-05626-8.

- 43. Ghafouri-Fard S., et al. Application of machine learning in the prediction of COVID-19 daily new cases: a scoping review. Heliyon. 2021;7(10). doi: 10.1016/j.heliyon.2021.e08143.

- 44. Lalmuanawma S., Hussain J., Chhakchhuak L. Applications of machine learning and artificial intelligence for Covid-19 (SARS-CoV-2) pandemic: a review. Chaos, Solit. Fractals. 2020;139. doi: 10.1016/j.chaos.2020.110059.

- 45. Jordan E., et al. IEEE Access; 2021. Optimization in the Context of COVID-19 Prediction and Control: A Literature Review.

- 46. Musulin J., et al. Application of artificial intelligence-based regression methods in the problem of covid-19 spread prediction: a systematic review. Int. J. Environ. Res. Publ. Health. 2021;18(8):4287. doi: 10.3390/ijerph18084287.

- 47. Harjule P., Tiwari V., Kumar A. Mathematical models to predict COVID-19 outbreak: an interim review. J. Interdiscipl. Math. 2021;24(2):259–284.

- 48. Guan J., et al. Modeling the transmission dynamics of COVID-19 epidemic: a systematic review. Journal of Biomedical Research. 2020;34(6):422. doi: 10.7555/JBR.34.20200119.

- 49. Kumar V., Kumar D. A systematic review on firefly algorithm: past, present, and future. Arch. Comput. Methods Eng. 2021;28(4):3269–3291.

- 50. Afshar-Nadjafi B., Niaki S.T.A. Seesaw scenarios of lockdown for COVID-19 pandemic: simulation and failure analysis. Sustain. Cities Soc. 2021;73. doi: 10.1016/j.scs.2021.103108.

- 51. Senthilnath J., Omkar S., Mani V. Clustering using firefly algorithm: performance study. Swarm Evol. Comput. 2011;1(3):164–171.

- 52. Karaboga D., Akay B. A comparative study of artificial bee colony algorithm. Appl. Math. Comput. 2009;214(1):108–132.

- 53. Krishnanand K., et al. IEEE; 2009. Comparative Study of Five Bio-Inspired Evolutionary Optimization Techniques. In 2009 World Congress on Nature & Biologically Inspired Computing (NaBIC).

- 54. Devaraj J., et al. Forecasting of COVID-19 cases using deep learning models: is it reliable and practically significant? Results Phys. 2021;21:103817. doi: 10.1016/j.rinp.2021.103817.

- 55. Yin L., et al. Ecosystem services assessment and sensitivity analysis based on ANN model and spatial data: a case study in Miaodao Archipelago. Ecol. Indicat. 2022;135.

- 56. Zheng W., et al. Simulation of phytoplankton biomass in Quanzhou Bay using a back propagation network model and sensitivity analysis for environmental variables. Chin. J. Oceanol. Limnol. 2012;30(5):843–851.

- 57. Abiodun O.I., Jantan A., Omolara A.E., Dada K.V., Mohamed N.A., Arshad H. State-of-the-art in artificial neural network applications: a survey. Heliyon. 2018;4(11). doi: 10.1016/j.heliyon.2018.e00938.

- 58. Elsheikh A.H., et al. Modeling of solar energy systems using artificial neural network: a comprehensive review. Sol. Energy. 2019;180:622–639.

- 59. Karsoliya S. Approximating number of hidden layer neurons in multiple hidden layer BPNN architecture. Int. J. Eng. Trends Technol. 2012;3(6):714–717.

- 60. Hornik K., Stinchcombe M., White H. Multilayer feedforward networks are universal approximators. Neural Netw. 1989;2(5):359–366.

- 61. Yi-Chun D., Stephanus A. Levenberg-Marquardt neural network algorithm for degree of arteriovenous fistula stenosis classification using a dual optical photoplethysmography sensor. Sensors. 2018;18(7):2322. doi: 10.3390/s18072322.

- 62. Yang X.-S., Papa J.P. 2016. Bio-inspired Computation and its Applications in Image Processing: an Overview. Bio-Inspired Computation and Applications in Image Processing; pp. 1–24.

- 63. Yang X.-S. Firefly algorithm, stochastic test functions and design optimisation. arXiv preprint arXiv:1003.1409. 2010.

- 64. Yang X.S., He X.S. Firefly algorithm: recent advances and applications. International Journal of Swarm Intelligence. 2013;1(1):36–50.

- 65. Karaboga D. An Idea Based on Honey Bee Swarm for Numerical Optimization. Technical Report TR06. Erciyes University, Engineering Faculty, Computer Engineering Department; 2005.

- 66. Kamphuis I., et al. 19th International Conference on Electricity Distribution. Vienna, Austria; 2007. Massive coordination of dispersed using powermatcher based software agents.

- 67. Chong H.Y., et al. Advances of metaheuristic algorithms in training neural networks for industrial applications. Soft Comput. 2021;25(16):11209–11233.

- 68. Talbi E.-G. John Wiley & Sons; 2009. Metaheuristics: from Design to Implementation.

- 69. Karaboga D., Akay B., Ozturk C. International Conference on Modeling Decisions for Artificial Intelligence. Springer; 2007. Artificial bee colony (ABC) optimization algorithm for training feed-forward neural networks.

- 70. Alweshah M. Firefly algorithm with artificial neural network for time series problems. Res. J. Appl. Sci. Eng. Technol. 2014;7(19):3978–3982.

- 71. Yang X.-S., He X. 2013. Firefly Algorithm: Recent Advances and Applications. arXiv preprint arXiv:1308.3898.

- 72. Karaboga D., Basturk B. On the performance of artificial bee colony (ABC) algorithm. Appl. Soft Comput. 2008;8(1):687–697.

- 73. Colesanti C., Wasowski J. Investigating landslides with space-borne synthetic aperture radar (SAR) interferometry. Eng. Geol. 2006;88(3–4):173–199.

- 74. Bhadana V., Jalal A.S., Pathak P. IEEE; 2020. A Comparative Study of Machine Learning Models for COVID-19 Prediction in India. In 2020 IEEE 4th Conference on Information & Communication Technology (CICT).

- 75. Ribeiro S.P., et al. Worldwide COVID-19 spreading explained: traveling numbers as a primary driver for the pandemic. An Acad. Bras Ciências. 2020:92. doi: 10.1590/0001-3765202020201139.

- 76. Mavragani A., Gkillas K. COVID-19 predictability in the United States using Google Trends time series. Sci. Rep. 2020;10(1):1–12. doi: 10.1038/s41598-020-77275-9.

- 77. Dhamodharavadhani S., Rathipriya R., Chatterjee J.M. COVID-19 mortality rate prediction for India using statistical neural network models. Front. Public Health. 2020;8:441. doi: 10.3389/fpubh.2020.00441.

- 78. Korouzhdeh T., Eskandari-Naddaf H., Kazemi R. Hybrid artificial neural network with biogeography-based optimization to assess the role of cement fineness on ecological footprint and mechanical properties of cement mortar expose to freezing/thawing. Construct. Build. Mater. 2021;304.

- 79. Kumaran J., Ravi G. Long-term sector-wise electrical energy forecasting using artificial neural network and biogeography-based optimization. Elec. Power Compon. Syst. 2015;43(11):1225–1235.

- 80. Li X., Yin M. Multi-operator based biogeography based optimization with mutation for global numerical optimization. Comput. Math. Appl. 2012;64(9):2833–2844.
