Abstract

Breast cancer is a major focus of medical image analysis because it poses a serious threat to women's health and contributes to many fatalities. An early and precise diagnosis of breast cancer through digital mammograms can significantly improve the accuracy of disease detection. Computer-aided diagnosis (CAD) systems must analyze the medical imagery and perform detection, segmentation, and classification to assist radiologists in accurately detecting breast lesions. However, detecting early-stage cancer in mammograms remains difficult. Deep convolutional neural networks have demonstrated exceptional results and are considered a highly effective tool in the field. This study proposes a computational framework for diagnosing breast cancer using a ResNet-50 convolutional neural network to classify mammogram images. To classify the INbreast dataset into benign or malignant categories, the framework applies transfer learning from a ResNet-50 CNN pretrained on ImageNet. The proposed framework achieved a classification accuracy of 93%, surpassing other models trained on the same dataset. This approach facilitates early diagnosis and classification of malignant and benign breast cancer, potentially saving lives and resources. These outcomes highlight that deep convolutional neural networks can be trained to achieve highly accurate results on mammograms and have the capacity to enhance medical tools by reducing the error rate in screening mammograms.

1. Introduction

Breast cancer is the predominant cause of cancer-related deaths among women globally [1, 2]. According to a report by the International Agency for Research on Cancer, the cancer research institute of the World Health Organization (WHO), 17.1 million breast cancer cases were reported globally in 2018. The number of cases is predicted to double by 2025 [3]. Breast cancer is a highly invasive tumor that primarily affects women [4]. The high death rate among women makes it the second deadliest malignancy after lung cancer [5, 6]. A study by the National Institute of Cancer in China found 1.67 million breast cancer cases and 522,000 deaths from 2008 to 2012 [7].

Despite extensive efforts from medical professionals and researchers, a definitive method for treating breast cancer has yet to be established, and reliable evidence for its prevention remains elusive [8–11]. Some components of breast cancer tissues are highly malignant and pose a severe danger to patients' lives as they can spread to other vital organs [12–15]. The growth of mammary cells can lead to tumors in women. Tumors are classified as benign or malignant based on the area, size, and location, using BI-RADS scores [16, 17]. Benign tumors are not life-threatening and can be treated through medication to prevent further growth [17, 18]. Malignant tumors, on the other hand, can spread to other parts of the body via the lymphatic system or blood, making them much more dangerous [19–22]. This uncontrolled cell proliferation in the breast leads to the formation of malignant tumors, which can only be treated through surgery or radiation therapy [23, 24].

Early detection of breast cancer is crucial for accurate diagnosis and analysis, and many researchers are turning to biomedical imaging to aid specialist radiologists. Various methods such as MRI, mammography, and ultrasound are used to identify breast carcinoma [25, 26]. However, the large volume of images makes it challenging for radiologists to identify potentially cancerous areas. Therefore, an efficient automated method is needed, and computer-aided diagnostic (CAD) systems are being used to help radiologists detect cancerous breast tumors [27, 28].

Increasingly, deep learning techniques are being applied to medical imaging to develop automated computer-aided diagnosis (CAD) systems [29–35]. Deep learning is considered the most effective method for detecting and classifying medical images [29, 30]. With these techniques, the significant low-level to high-level hierarchical features of a mammogram image can be extracted directly, making deep learning the most reliable medical imaging method [29]. Several CAD systems based on deep learning have been developed for breast lesion detection, and they outperform traditional systems [36]. Accurate detection of breast lesions is crucial for improving breast cancer diagnosis [29, 31]. However, detecting these lesions can be challenging due to their varying texture, shape, position, and size. Deep learning and image processing methods have been proposed to overcome the limitations of conventional technology, which cannot perform automated identification [29]. The final stage in the CAD model is the classification of breast lesions as benign or malignant, which is important in assessing the correctness of the diagnosis [30].

Currently employed methods for detecting breast cancer are slow, costly, and require extra effort to operate the radiology equipment. Automatically and accurately detecting breast cancer from an image processing perspective is not easy, yet early diagnosis and proper treatment are crucial. An efficient, automated screening system is therefore necessary for breast cancer detection for the following reasons [12]: incorrect diagnoses and predictions, tumors appearing in low-contrast areas, unreliable human diagnoses, overburdening of radiologists, human error in diagnosis, the need for large training data to avoid overfitting in deep learning algorithms, high computational complexity, and longer processing time for accurate tumor identification.

A novel breast cancer detection system is proposed with an improved architecture that applies a deep convolutional neural network (DCNN) to breast mammogram images to address the drawbacks mentioned above. The proposed system classifies breast tumors into benign and malignant categories. The system's performance is evaluated and compared with existing classification systems using a public mammographic image dataset named INbreast. The new system uses transfer learning to fine-tune the pretrained DCNN, and detailed results from experiments on the INbreast dataset are reported. The system's performance is evaluated using the following metrics: AUC, specificity, accuracy, sensitivity, and F1 score.

The rest of the paper is organized as follows. Section 2 presents the related work. Section 3 provides the proposed approach for breast cancer detection. Section 4 presents experimental analysis, results and comparison with existing work. Section 5 concludes this paper and presents future work.

2. Related Work

Breast cancer diagnosis in modern medical procedures often involves using mammography images [37]. A summary of recently developed systems for breast cancer diagnosis using mammogram images is presented in this section.

Structured support vector machine (SSVM) and conditional random field (CRF) are two structured prediction techniques proposed in [38] to classify mass mammograms. Both approaches used potential functions based on deep convolution and belief networks. The results demonstrated that the CRF method outperformed the SSVM method in training and inference time. Authors in [29] utilized four-fold cross-validation on X-ray mammograms from the INbreast dataset to estimate a full-resolution convolutional network (FrCN). It resulted in an F1 score of 99.24%, an accuracy of 95.96%, and a Matthews correlation coefficient (MCC) of 98.96%. In another study [39], the BDR-CNN-GCN approach was proposed by combining a graph-convolutional network (GCN) with a basic 8-layer CNN that includes batch normalization and dropout layers. The final BDR-CNN-GCN model was formed by integrating the two-layer GCN with the CNN. This method was tested using the MIAS dataset, and successful results were obtained with a 96.10% accuracy level.

Authors in [40] proposed modifying the YOLOv5 network for identifying and classifying breast cancers, with the algorithm run using specific parameter values. The modified YOLOv5 was compared with a faster RCNN and YOLOv3, achieving an accuracy of 96.50% and an MCC value of 93.50%. The diverse features (DFeBCD) method was proposed by [41], which classified mammograms into two categories: normal and abnormal. They used two classifiers, an emotion learning-inspired integrated classifier (ELiEC) and SVM, with the IRMA mammography dataset. The ELiEC classifier outperformed SVM, achieving an accuracy rate of 80.30%. In [30], a deep-CNN model that utilized transfer learning (TL) was introduced to prevent overfitting when working with small datasets. DDSM, MIAS, BCDR, and INbreast were used to assess its performance. The model achieved an accuracy of 95.5% on the INbreast dataset, 97.35% on the DDSM dataset, and 96.67% on the BCDR database.

Authors in [42] utilized the lifting wavelet transform (LWT) for extracting features from breast mammograms. The size of the feature vectors was reduced using linear discriminant analysis (LDA) and principal component analysis (PCA). The classification was performed using the moth flame optimization and extreme learning machine (ELM) approach with the MIAS and DDSM datasets, achieving accuracies of 95.80% and 98.76%, respectively. In addition, researchers have also trained the CNN Inception-v3 model on 316 images, resulting in a sensitivity of 0.88, specificity of 0.87, and an AUC of 0.946 [43]. Furthermore, in [44], a CNN and TL classification method was proposed to evaluate the performance of eight fine-tuned pretrained models. Authors in [45] presented a hybrid classification model using Mobilenet, ResNet50, and Alexnet with an accuracy level of 95.6%. In [46], four different CNN architectures (VGG19, InceptionV3, ResNet50, and VGG16) were utilized for model training using 5000 images, while prediction models were evaluated on 1007 images.

Authors in [47] utilized alpha, geostatistics, and diversity analyses forms in their proposed breast cancer detection method. They employed the SVM classifier on MIAS and DDSM databases, which resulted in a detection accuracy level of 96.30%. The SVM classifier and gray level co-occurrence matrix (GLCM) were employed by [48] for detecting breast cancer abnormalities in the MIAS data set. Their method achieved an accuracy of 93.88% and surpassed the performance of the k-nearest neighbour (kNN) algorithm. Authors in [49] used AlexNet and SVM to enhance classification accuracy with data augmentation techniques. The method achieved 71.01% accuracy, which increased to 87.2% with SVM and was evaluated on DDSM and CBIS-DDSM datasets.

A DenseNet deep learning framework extracted image features and classified cancerous and benign cells by feeding them into a fully connected (FC) layer. The effectiveness of this technique was evaluated by adjusting the hyperparameters [50]. An algorithm named DICNN was developed by Irfan et al. [51], which uses a dilated semantic segmentation network and morphological operation. Combining these feature vectors with SVM classification yielded an accuracy of 98.9%.

Although prior breast cancer detection and classification systems have improved information extraction, several issues still need attention, such as low contrast in tumor location, high memory complexity, long processing time, and the need for a large amount of training data for deep learning approaches. In response to these problems, we propose a new approach to breast cancer detection and classification, which will be discussed in detail in the following section.

3. Methodology

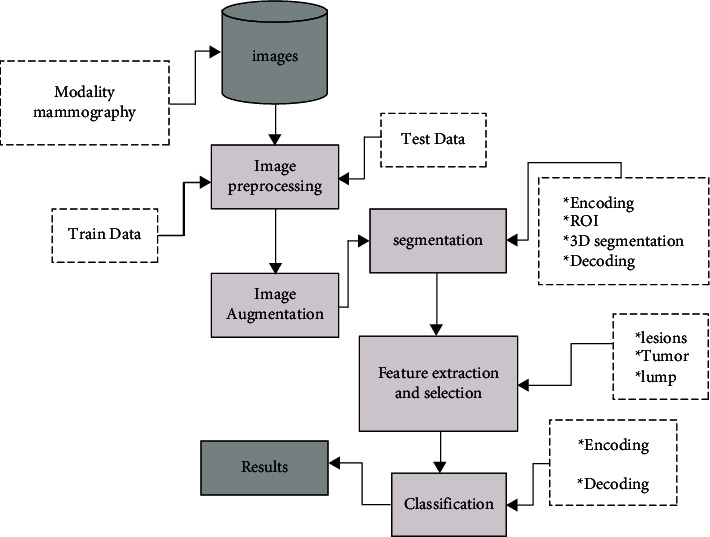

In this section, the processes used for implementing our proposed scheme are described in depth. The system consists of the following steps: (1) image enhancement, (2) image segmentation, (3) feature extraction and selection, and (4) feature classification. The proposed system is illustrated in Figure 1.

Figure 1.

Architecture of the proposed system.

3.1. Dataset

This study used a digital breast X-ray database named INbreast to implement the proposed CAD approach. The INbreast dataset is a public database that contains more recent full-field digital mammography (FFDM) images. It typically has an image size of 3328 × 4084 pixels. It contains 115 patient cases comprising 410 mammograms in both craniocaudal (CC) and mediolateral oblique (MLO) views. Of these 115 patients, 90 had mammograms taken of both breasts, totaling 360 images, while the other 25 had only two mammograms taken each. In total, 410 mammograms were produced from 115 patients, including cases of normal, benign, and malignant breasts. For evaluation purposes, 107 cases with breast lesions were used from the MLO and CC views.

3.2. Convolutional Neural Network

This subsection examines the fundamental structure common to convolutional neural network (CNN) architectures. CNNs are deep neural networks used for image recognition and classification. In recent years, CNNs have become a crucial tool in image analysis, especially for identifying faces, text, and medical imaging. First developed in 1989, CNNs have a long history of success in image classification and segmentation. CNNs replicate the human brain's visual information processing by incorporating layers of “neurons” that only respond to their local surroundings. These networks can understand the topological aspects of an image through a combination of convolutional, pooling, and fully connected (FC) layers. The architecture of a CNN is shown in Figure 2.

Figure 2.

Standard architecture of CNN.

3.2.1. Convolutional Layers

The units of a convolutional layer are organized into feature maps based on the principles of local connectivity and weight sharing. A filter bank, a group of weights, connects each neuron in a feature map to a corresponding local region in the preceding layer. Each feature map uses a different filter bank, and all the units in a map share the same filter bank. Weight sharing and local connectivity reduce the number of parameters by exploiting the close relationship between neighboring pixels and the location independence of image features. The weighted sum is then passed to an activation function, such as ReLU or sigmoid. This activation function applies a nonlinear transformation to the input data, which is necessary for the following processing stages.
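As a rough illustration of local connectivity, weight sharing, and the activation step, the following sketch slides one small filter over a toy image and applies ReLU. The image values and the 1 × 2 edge filter are hypothetical and are not taken from the proposed system; like most CNN libraries, the sketch computes a cross-correlation (the filter is not flipped).

```python
import numpy as np

def conv2d_valid(image, kernel):
    """Slide one shared kernel over the image (no padding, stride 1)."""
    kh, kw = kernel.shape
    out_h = image.shape[0] - kh + 1
    out_w = image.shape[1] - kw + 1
    out = np.zeros((out_h, out_w), dtype=float)
    for i in range(out_h):
        for j in range(out_w):
            # Local connection: each output unit sees only a kh x kw patch.
            # Weight sharing: the same kernel is reused at every position.
            out[i, j] = np.sum(image[i:i + kh, j:j + kw] * kernel)
    return out

def relu(x):
    """Set negative responses to zero and keep positive ones unchanged."""
    return np.maximum(x, 0.0)

# Hypothetical toy image with vertical intensity edges.
image = np.array([[0, 0, 5, 5],
                  [0, 0, 5, 5],
                  [5, 5, 0, 0]], dtype=float)
# Hypothetical 1 x 2 filter that responds to dark-to-bright transitions.
kernel = np.array([[-1.0, 1.0]])

feature_map = relu(conv2d_valid(image, kernel))
print(feature_map)
```

Only two weights are learned here regardless of image size, which is the parameter saving that weight sharing provides. The bright-to-dark edge in the last row produces a negative response that ReLU suppresses.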

3.2.2. Pooling Layer

As illustrated in Figure 2, the pooling layer follows the convolutional layer and uses subsampling to merge semantically similar features from the convolutional layer. This layer's primary objective is to decrease the size of the feature map by combining neighboring pixels into one value while preserving its features. Typical operations in this layer include max pooling and mean pooling.
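A minimal sketch of the two pooling operations is given below, assuming non-overlapping 2 × 2 windows. The window size and the feature-map values are illustrative assumptions.

```python
import numpy as np

def pool2d(feature_map, size=2, mode="max"):
    """Non-overlapping pooling over size x size windows."""
    h, w = feature_map.shape
    h, w = h - h % size, w - w % size  # drop any ragged border
    # Axes 1 and 3 index the rows and columns inside each window.
    blocks = feature_map[:h, :w].reshape(h // size, size, w // size, size)
    if mode == "max":
        return blocks.max(axis=(1, 3))
    if mode == "mean":
        return blocks.mean(axis=(1, 3))
    raise ValueError("mode must be 'max' or 'mean'")

# Hypothetical 4 x 4 feature map.
fm = np.array([[1, 3, 2, 0],
               [5, 7, 4, 2],
               [0, 2, 9, 1],
               [4, 6, 3, 3]], dtype=float)

max_pooled = pool2d(fm, mode="max")    # [[7, 4], [6, 9]]
mean_pooled = pool2d(fm, mode="mean")  # [[4, 2], [3, 4]]
```

Both variants halve each spatial dimension. Max pooling keeps the strongest response in a window, while mean pooling keeps the average response.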

3.2.3. Fully Connected Layer

The last layer in CNN is the dense classification layer, which is responsible for determining the category of input data based on extracted features from CNN. The number of units in the FC layer is the same as the number of different classifications (categories).

3.3. Proposed Workflow

This section provides the proposed workflow for breast cancer diagnosis using a deep convolutional neural network.

3.3.1. Image Enhancement

Image enhancement refers to increasing contrast and suppressing noise in mammogram images to assist radiologists in detecting breast abnormalities. Various image enhancement methods exist, including adaptive histogram equalization (AHE). AHE improves the local contrast and reveals more image details, making it a helpful technique for enhancing both natural and medical images [52]. However, it may also amplify noise considerably. In this paper, we used the contrast-limited adaptive histogram equalization (CLAHE) technique, a form of AHE, to enhance image contrast [52]. A drawback of AHE is that it can over-enhance the images due to the integration process [49]. To mitigate this issue, CLAHE limits the local histogram by setting a clip level, thus controlling contrast enhancement. Figure 3 illustrates an image enhanced by the CLAHE algorithm.

Figure 3.

Contrast enhancement for improved visibility.

Furthermore, CLAHE algorithm steps are given as follows [53]:

1. Split image into equal-sized contextual regions.
2. Apply histogram equalization to all contextual regions.
3. Limit the histogram to the level of the clip.
4. Reallocate the clipped values in the histogram.
5. Obtain enhanced pixel value through histogram integration.
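The steps above can be sketched as follows. This is a simplified illustration and not the implementation used in this study: the tile size and clip level are hypothetical, the clip level is expressed as an absolute pixel count per bin, and the bilinear blending between neighboring tiles that full CLAHE implementations perform is omitted.

```python
import numpy as np

def clipped_equalize_tile(tile, clip_limit, n_bins=256):
    """Equalize one contextual region with a clipped histogram."""
    hist = np.bincount(tile.ravel(), minlength=n_bins).astype(float)
    # Limit the histogram to the clip level and collect the excess.
    excess = np.sum(np.maximum(hist - clip_limit, 0.0))
    hist = np.minimum(hist, clip_limit)
    # Reallocate the clipped counts uniformly over all bins.
    hist += excess / n_bins
    # Histogram integration: the normalized cumulative sum is the mapping.
    cdf = np.cumsum(hist)
    cdf /= cdf[-1]
    lut = np.round(cdf * (n_bins - 1)).astype(np.uint8)
    return lut[tile]

def clahe_sketch(image, tile_size=8, clip_limit=4.0):
    """Apply clipped equalization independently to each tile (uint8 input)."""
    out = np.empty_like(image)
    for r in range(0, image.shape[0], tile_size):
        for c in range(0, image.shape[1], tile_size):
            tile = image[r:r + tile_size, c:c + tile_size]
            out[r:r + tile_size, c:c + tile_size] = clipped_equalize_tile(
                tile, clip_limit)
    return out

# Hypothetical low-contrast image: intensities only span 100..115.
image = np.tile(np.arange(100, 116, dtype=np.uint8), (16, 1))
enhanced = clahe_sketch(image, tile_size=8, clip_limit=4.0)
print(int(image.max()) - int(image.min()),
      int(enhanced.max()) - int(enhanced.min()))
```

Lowering the clip level flattens the mapping and limits how strongly noise in nearly uniform regions is amplified. Raising it moves the result toward plain AHE.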

3.4. Image Segmentation

Image segmentation involves dividing an image into regions with similar characteristics and features. Segmentation aims to simplify the image for easier analysis [54]. Popular image segmentation techniques include edge detection, partial differential equation (PDE), fuzzy theory, artificial neural network (ANN), region-based segmentation, and thresholding.

3.4.1. Thresholding Method

One of the simplest image segmentation methods is the thresholding method [55, 56]. The pixels of the image are split according to their intensity level. The global threshold is the most commonly used thresholding technique [57]. It is accomplished by setting a threshold value (T) constant throughout the image. The output image is derived from the original image based on the threshold value.
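Global thresholding reduces to a single comparison per pixel, as the following sketch shows. The toy image is hypothetical, and treating pixels strictly above T as foreground is a convention chosen here for illustration; the paper does not specify whether the comparison is strict.

```python
import numpy as np

def global_threshold(image, t):
    """Return a binary image: 1 where intensity exceeds t, else 0."""
    return (image > t).astype(np.uint8)

# Hypothetical 3 x 3 grayscale patch.
image = np.array([[12, 79, 80],
                  [81, 200, 45],
                  [255, 0, 130]], dtype=np.uint8)

binary = global_threshold(image, t=80)
print(binary)
```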

3.4.2. Region-Based Segmentation Methods

Compared to other methods, region-based segmentation is straightforward because it entails separating an image into different sections based on predetermined criteria [58]. There are two primary kinds of region-based segmentation: (1) region splitting and merging and (2) region growing. Region growing allows the extraction of a region from an image using defined criteria, such as intensity, and involves selecting a starting seed point. It is important to note that, unlike region growing, region splitting and merging work on the entire image [59].

In the present study, extracting the region of interest (ROI) involves using both thresholding and region-based techniques. The tumor in the INbreast dataset samples [71] is labeled by a white bounding box, as shown in Figure 4. To extract the ROI, the tumor region is first determined by setting a threshold value based on the white pixels in the image. After several attempts, the threshold was set to 80 for all images, independent of tumor size. After identifying the largest area within this threshold in the image, the tumor is automatically cropped. Figure 4 shows the ROI extracted using the threshold and region-based methods.

Figure 4.

Defining region of interest (ROI).

The method for extracting ROI can be summarized in four steps:

1. Threshold the grayscale mammogram image to create a binary image.
2. Label and count the objects in the binary image, then retain only the largest one, which is the tumor, as defined by the white bounding box.
3. Assign a value of “1” to the largest area within the threshold value and a value of “0” to the rest.
4. Multiply the binary image by the original mammogram image to obtain the final ROI without including other parts of the breast or artifacts.
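The four steps can be sketched as below. The threshold of 80 is the value reported in this section; the 4-connectivity used for labelling, the breadth-first labelling routine, and the toy image are assumptions made for illustration and may differ from the actual implementation.

```python
from collections import deque
import numpy as np

def largest_component_mask(binary):
    """Return a 0/1 mask of the largest 4-connected foreground object."""
    h, w = binary.shape
    visited = np.zeros((h, w), dtype=bool)
    best = []
    for r0 in range(h):
        for c0 in range(w):
            if not binary[r0, c0] or visited[r0, c0]:
                continue
            # Breadth-first search collects one connected object.
            component, queue = [], deque([(r0, c0)])
            visited[r0, c0] = True
            while queue:
                r, c = queue.popleft()
                component.append((r, c))
                for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                    nr, nc = r + dr, c + dc
                    if (0 <= nr < h and 0 <= nc < w
                            and binary[nr, nc] and not visited[nr, nc]):
                        visited[nr, nc] = True
                        queue.append((nr, nc))
            if len(component) > len(best):
                best = component
    mask = np.zeros((h, w), dtype=np.uint8)
    for r, c in best:
        mask[r, c] = 1  # largest object becomes 1, everything else stays 0
    return mask

def extract_roi(image, threshold=80):
    binary = image > threshold             # step 1: binarize
    mask = largest_component_mask(binary)  # steps 2 and 3: keep largest object
    return image * mask                    # step 4: mask the original image

# Hypothetical image: two isolated bright pixels and one 2 x 2 bright region.
image = np.array([[0, 200, 0, 0, 0],
                  [0, 0, 0, 150, 160],
                  [0, 0, 0, 170, 180],
                  [90, 0, 0, 0, 0]], dtype=np.uint8)

roi = extract_roi(image, threshold=80)
print(roi)
```

The isolated bright pixels exceed the threshold but are discarded because they do not belong to the largest object, which is how small artifacts are removed from the ROI.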

3.4.3. Feature Extraction and Selection

Numerous methods exist for feature extraction. Due to their exceptional performance, deep convolutional neural networks (DCNN) have garnered significant interest in recent years. Consequently, a DCNN is utilized in this paper.

3.4.4. Deep Convolutional Neural Network

The success of DCNN in image classification and analysis has been documented in various studies [60, 61]. Convolutional neural networks (CNNs) are composed of multiple trainable stages that culminate in a supervised classifier and feature maps [62]. Three primary types of layers are employed to build CNN structures: convolutional, pooling, and fully connected (FC) layers [63]. The ResNet50 CNN classification model categorizes breast cancer as benign or malignant in this work.

3.4.5. Feature Learning through Transfer Learning

Machine learning offers various feature learning (FL) methods, which allow a system to automatically identify the representations required for feature detection, prediction, or classification from a preprocessed dataset [64]. This implies that the machine can learn and use the features to perform tasks such as classification or prediction. In deep learning, FL can be accomplished either by constructing a complete CNN to train and test image datasets or by adjusting a pretrained CNN for classification or prediction on a new image dataset, which is referred to as transfer learning.

In deep learning, transfer learning (TL) is a widely-used technique that enables the utilization of a pretrained network for new prediction or classification tasks. This is achieved by adjusting the parameters of the pretrained network with randomly initialized weights for the new task. TL typically results in faster training than starting from scratch and is considered an optimization that saves time and improves performance, as stated in [65]. For this purpose, transfer learning is utilized to fine-tune ResNet50 CNN. This involves using pretrained weights from the ImageNet dataset [66] for retraining after preprocessing the collected dataset. The network parameters and hyperparameters are optimized during this process.

3.4.6. Classification

The features are taken from ResNet-50 and processed via a fully connected (FC) layer with a 40% dropout rate to avoid overfitting [67, 68]. This layer is then activated with the rectified linear unit (ReLU) function, which sets all negative values in the input matrix to zero while the others remain unchanged. The use of ReLU leads to faster and more reliable convergence than a sigmoid activation function when training deep networks [69]. The output layer comprises a sigmoid function (binary classifier) to provide class probabilities. The sigmoid function normalizes the input into two outcomes, i.e., malignant vs. benign [70].
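A forward pass through such a classification head can be sketched with NumPy as follows. Only the 40% dropout rate, the ReLU activation, and the sigmoid output come from the text; the hidden width of 128, the random weights, the placement of dropout after the activation, and the use of inverted dropout are assumptions. The input width of 2048 is the size of the globally pooled ResNet-50 feature vector.

```python
import numpy as np

def relu(x):
    """Zero out negative values; positive values pass through unchanged."""
    return np.maximum(x, 0.0)

def sigmoid(x):
    """Map a real-valued logit to a probability in (0, 1)."""
    return 1.0 / (1.0 + np.exp(-x))

def head_forward(features, params, drop_rate=0.4, training=False, rng=None):
    """FC layer -> ReLU -> dropout (training only) -> sigmoid output."""
    hidden = relu(features @ params["w1"] + params["b1"])
    if training:
        rng = rng if rng is not None else np.random.default_rng()
        keep = rng.random(hidden.shape) >= drop_rate
        # Inverted dropout: rescale so the expected activation is unchanged.
        hidden = hidden * keep / (1.0 - drop_rate)
    logits = hidden @ params["w2"] + params["b2"]
    return sigmoid(logits).ravel()  # probability of the positive class

rng = np.random.default_rng(0)
n_features, n_hidden = 2048, 128  # n_hidden is a hypothetical choice
params = {
    "w1": rng.normal(scale=0.01, size=(n_features, n_hidden)),
    "b1": np.zeros(n_hidden),
    "w2": rng.normal(scale=0.01, size=(n_hidden, 1)),
    "b2": np.zeros(1),
}
# Stand-in for ResNet-50 features of four mammogram ROIs.
features = rng.normal(size=(4, n_features))

probs = head_forward(features, params)  # inference: dropout disabled
labels = np.where(probs >= 0.5, "malignant", "benign")
```

Which class the sigmoid output treats as positive, and the 0.5 decision threshold, are also assumptions of this sketch.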

4. Evaluation and Results

The proposed deep convolutional neural network for mammogram imaging undergoes examination and validation in this section. Information about benchmark datasets, assessment metrics, and comparisons to other leading techniques are also covered.

4.1. Image Acquisition Process

The proposed system's performance is evaluated using digitized mammogram images from the INbreast dataset [71]. The database is used to demonstrate the efficiency and reliability of the proposed method for identifying breast cancer. The INbreast subset used here includes 336 mammogram images, 269 abnormal and 67 normal, of which 220 abnormal images are benign and 49 are malignant. Tables 1 and 2 show the distribution of mammography images.

Table 1.

Normal and abnormal INbreast dataset.

| Total images (normal) | Total images (abnormal) | Training (normal) | Training (abnormal) | Testing (normal) | Testing (abnormal) |
|---|---|---|---|---|---|
| 67 | 269 | 40 | 162 | 27 | 107 |

Table 2.

Benign and malignant INbreast dataset.

| Total images (benign) | Total images (malignant) | Training (benign) | Training (malignant) | Testing (benign) | Testing (malignant) |
|---|---|---|---|---|---|
| 220 | 49 | 132 | 29 | 88 | 20 |

4.2. Metrics of Performance

The purpose of cross-validation is to improve efficiency, validate performance, and assess the results from the dataset. To assess the classification efficiency of the proposed method, multiple metrics are utilized such as confusion matrix, accuracy, sensitivity, specificity, error rate, F1 score, and area under the curve (AUC). All these metrics act as benchmark values for comparing the proposed method against previous algorithms [72]. These measurements are defined as follows.

4.2.1. Confusion Matrix

Confusion matrix represents the performance of a classifier in the form of a table. In ML, it is also known as an error matrix. The image regions are labeled positive or negative based on the data type. The classifier's decision can be correct (true) or incorrect (false). This results in four outcomes: true positive (TP), true negative (TN), false positive (FP), and false negative (FN). Correct decisions are represented along the diagonal of the confusion matrix.

4.2.2. Accuracy

Accuracy is the proportion of correctly labeled images among the benign, normal, and malignant mammograms. It is computed as follows:

$$\text{Accuracy} = \frac{TP + TN}{TP + TN + FP + FN} \tag{1}$$

TP denotes positive examples correctly classified as positive; TN denotes negative examples correctly classified as negative; FP denotes negative examples incorrectly classified as positive; and FN denotes positive examples incorrectly classified as negative.

4.2.3. Specificity

Specificity is the probability that the test correctly identifies a patient who does not have the disease, as shown in the following equation:

$$\text{Specificity} = \frac{TN}{TN + FP} \tag{2}$$

4.2.4. Sensitivity

Sensitivity is the probability that the test correctly identifies a patient who has the disease, as shown in the following equation:

$$\text{Sensitivity} = \frac{TP}{TP + FN} \tag{3}$$

4.2.5. F1 Score

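The F1 score is the harmonic mean of precision and recall and is used for assessing the classifier's performance. It accounts for both false positives and false negatives, as shown in the following equation:

$$F_1 = \frac{2 \cdot \text{Precision} \cdot \text{Recall}}{\text{Precision} + \text{Recall}} = \frac{2\,TP}{2\,TP + FP + FN} \tag{4}$$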
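The metrics in Sections 4.2.2 to 4.2.5 can all be computed from the four confusion-matrix counts, as in the short sketch below. The counts in the example are hypothetical and are not the confusion matrix of the proposed system.

```python
def classification_metrics(tp, tn, fp, fn):
    """Compute accuracy, sensitivity, specificity, precision, and F1."""
    accuracy = (tp + tn) / (tp + tn + fp + fn)
    sensitivity = tp / (tp + fn)  # also called recall
    specificity = tn / (tn + fp)
    precision = tp / (tp + fp)
    f1 = 2 * precision * sensitivity / (precision + sensitivity)
    return {
        "accuracy": accuracy,
        "sensitivity": sensitivity,
        "specificity": specificity,
        "precision": precision,
        "f1": f1,
    }

# Hypothetical counts for illustration only.
metrics = classification_metrics(tp=45, tn=40, fp=10, fn=5)
for name, value in metrics.items():
    print(f"{name}: {value:.4f}")
```

With these counts, accuracy is 0.85, sensitivity 0.90, and specificity 0.80. The F1 score (about 0.857) sits between precision and sensitivity, as expected for a harmonic mean.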

4.2.6. Area Under the Curve (AUC)

AUC is the area under the receiver operating characteristic (ROC) curve and summarizes the classifier's ability to distinguish between benign, normal, and malignant mammograms.

5. Results and Discussion

For this study, a subset is taken from the INbreast dataset, and each sample is augmented to four images. During the experiment, 60% of the images were used for training, and the remaining 40% were used for testing. The samples were first subjected to enhancement and segmentation according to the procedures described in the “Methodology” section. Afterward, features were extracted from the samples using a CNN. Finally, all the samples were classified using ResNet-50.
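A 60/40 split of this kind can be sketched as follows. The paper does not state whether the split was stratified by class; the sketch assumes that it was, because splitting each class separately reproduces the benign and malignant counts listed in Table 2. The function name and the random seed are hypothetical.

```python
import random

def stratified_split(labels, train_fraction=0.6, seed=0):
    """Split sample indices per class so each class keeps the same ratio."""
    rng = random.Random(seed)
    by_class = {}
    for index, label in enumerate(labels):
        by_class.setdefault(label, []).append(index)
    train, test = [], []
    for indices in by_class.values():
        rng.shuffle(indices)
        cut = round(train_fraction * len(indices))
        train.extend(indices[:cut])
        test.extend(indices[cut:])
    return sorted(train), sorted(test)

# Class sizes from Table 2: 220 benign and 49 malignant images.
labels = ["benign"] * 220 + ["malignant"] * 49
train_idx, test_idx = stratified_split(labels)

train_counts = {c: sum(labels[i] == c for i in train_idx)
                for c in ("benign", "malignant")}
test_counts = {c: sum(labels[i] == c for i in test_idx)
               for c in ("benign", "malignant")}
print(train_counts, test_counts)
```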

The proposed DCNN method categorizes mammogram images of breast tumors into benign or malignant. A dataset named INbreast is used for experimentation. Table 3 displays the classification accuracy achieved by the proposed ResNet-50 method across the INbreast database. From the INbreast dataset, 132 benign and 29 malignant image samples were selected for training, and 20 malignant and 88 benign for testing. The resulting accuracy is 93%. The proposed ResNet-50 approach is also compared quantitatively with previously existing algorithms. The study's results revealed that the presented approach outperformed these algorithms with high accuracy, specificity, F1 score values, and sensitivity.

Table 3.

Summary of breast cancer detection on different mammography datasets.

| Authors | Dataset | Method | Accuracy (%) | Specificity (%) | Sensitivity (%) |
|---|---|---|---|---|---|
| Carneiro et al. [73] | INbreast | AlexNet | 86 | N.A. | N.A. |
| Dhungel et al. [16] | INbreast | AlexNet | 91.0 | N.A. | N.A. |
| Zhang et al. [74] | INbreast | AdaBoost | 87.93 | 97.73 | 57.20 |
| Pezeshki et al. [75] | MIAS | ANN | 61.0 | 77.0 | 95.03 |
| Zhang et al. [74] | DDSM | AdaBoost | 90.91 | 97.38 | 82.96 |
| Proposed approach | INbreast | ResNet-50 | 93.0 | 93.86 | 93.83 |

As shown in Table 3, the proposed approach achieved an accuracy of 93.0%, a specificity of 93.86%, and a sensitivity of 93.83% on the INbreast database, outperforming the other methods in terms of accuracy. The closest result, reported by [16], is 91.0%, which is still below the accuracy of the proposed approach. Compared to existing methods, the proposed approach enhances breast cancer detection and classification performance. It can potentially be used for real-time evaluation and to support radiologists by automating the analysis of mammogram images. However, performance may vary when the same method is applied to different datasets due to factors such as background noise, lighting conditions, occlusion, overfitting, and the nature of the method.

The performance of the presented approach is also evaluated using the confusion matrix and ROC curves. Figure 5 illustrates the confusion matrix on the INbreast dataset. AUC, a crucial statistical metric of the ROC curve, is computed on the INbreast dataset. ROC curves were constructed from the true positive rate (sensitivity) and the false positive rate (1 − specificity), controlled by the threshold applied to the obtained probability maps. Figure 6 shows the ROC curve graph.
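The construction of an ROC curve by sweeping the decision threshold, and the computation of AUC from it, can be sketched as follows. The scores and labels are hypothetical, and the trapezoidal rule is one common way to integrate the curve; the paper does not state which method was used.

```python
def roc_points(scores, labels):
    """Return (FPR, TPR) pairs obtained by sweeping the threshold downward."""
    positives = sum(labels)
    negatives = len(labels) - positives
    points = [(0.0, 0.0)]
    for threshold in sorted(set(scores), reverse=True):
        tp = sum(1 for s, y in zip(scores, labels) if s >= threshold and y == 1)
        fp = sum(1 for s, y in zip(scores, labels) if s >= threshold and y == 0)
        points.append((fp / negatives, tp / positives))
    return points

def auc_trapezoid(points):
    """Integrate TPR over FPR with the trapezoidal rule."""
    area = 0.0
    for (x0, y0), (x1, y1) in zip(points, points[1:]):
        area += (x1 - x0) * (y0 + y1) / 2.0
    return area

# Hypothetical predicted probabilities and ground truth (1 = positive class).
scores = [0.9, 0.8, 0.7, 0.6, 0.4, 0.2]
labels = [1, 1, 0, 1, 0, 0]

points = roc_points(scores, labels)
auc = auc_trapezoid(points)
print(points)
print(round(auc, 4))
```

In this toy example, 8 of the 9 positive-negative pairs are ranked correctly, so the AUC is 8/9. This matches the interpretation of AUC as the probability that a randomly chosen positive case receives a higher score than a randomly chosen negative one.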

Figure 5.

Confusion matrix of the proposed approach for classification on INbreast database.

Figure 6.

ROC plot on INbreast dataset.

Table 4 presents our proposed system's results of breast cancer detection. The proposed approach achieved an F1 score and AUC of 93.03% and 93.02%, respectively, on the INbreast database.

Table 4.

Results of our proposed methodology for breast cancer detection and classification.

| Performance metrics | Result obtained (%) |
|---|---|
| Accuracy | 93.0 |
| Sensitivity | 93.83 |
| Specificity | 93.86 |
| F1 score | 93.03 |
| AUC | 93.02 |

In recent years, breast cancer detection and classification applications have gained widespread use in the medical field, making the diagnostic process more accurate [76, 77]. The goal of the proposed method is to enhance clinical diagnosis by enhancing the detection of breast cancer. The opinions of two medical specialists were gathered based on the accuracy level generated by our proposed algorithm. These experts expressed their appreciation for the improved results of ResNet-50 compared to other approaches. To sum it up, the proposed approach enhances performance compared to other methods and can be utilized for real-time evaluations along with helping radiologists automate the evaluation of mammograms.

6. Conclusion

The proposed system aimed to detect malignant breast masses and classify benign and malignant tissues in mammograms. A novel computer-aided detection (CAD) system is proposed, which involves thresholding and region-based segmentation techniques. A region-based method with a threshold of 80 determines the largest area within this threshold. A deep convolutional neural network (DCNN) is utilized for feature extraction. Specifically, ResNet-50 is retrained, with its parameters modified, to classify breast mammograms into two classes (malignant or benign). The proposed approach is applied to the INbreast database to evaluate its performance. The proposed method achieved an accuracy of 93.0%, a specificity of 93.86%, an AUC of 93.02%, a sensitivity of 93.83%, and an F1 score of 93.03%, which are satisfying results. The proposed method surpasses other systems in the detection and classification of mammograms, delivering more precise results and improved visual outcomes. The proposed system efficiently detects and classifies malignant breast masses with reduced computation time and produces satisfactory results. Alternative networks, such as VGG and AlexNet, will be explored in future development. In the future, we intend to extend this work by collecting large datasets on breast cancer in different age intervals to detect cancer in its early stages.

Contributor Information

Hameedur Rahman, Email: hameed.rahman@mail.au.edu.pk.

Rozilawati Ahmad, Email: rozie.ahmad@ukm.edu.my.

Abdul Rehman Javed, Email: abdulrehman.cs@au.edu.pk.

Data Availability

The (Breast Cancer Diagnosis) data used to support the findings of this study are included within the article.

Conflicts of Interest

The authors declare that they have no conflicts of interest.

References

- 1. Xian M., Zhang Y., Cheng H.-Da, Xu F., Zhang B., Ding J. Automatic breast ultrasound image segmentation: a survey. Pattern Recognition. 2018;79:340–355. doi: 10.1016/j.patcog.2018.02.012.

- 2. Xu C., Gu Z., Liu J., et al. Adenosquamous carcinoma of the breast: a population-based study. Breast Cancer. 2021;28(4):848–858. doi: 10.1007/s12282-021-01222-3.

- 3. Gardezi S. J. S., Elazab A., Lei B., Wang T. Breast cancer detection and diagnosis using mammographic data: systematic review. Journal of Medical Internet Research. 2019;21(7):e14464. doi: 10.2196/14464.

- 4. Whang J. S., Baker S. R., Patel R., Luk L., Castro A. The causes of medical malpractice suits against radiologists in the United States. Radiology. 2013;266(2):548–554. doi: 10.1148/radiol.12111119.

- 5. Adam F. Breast cancer: symptoms, causes, and treatment. 2022. https://www.medicalnewstoday.com/articles/37136

- 6. El-Chami D., Al Haddad M., Abi-Habib R., El-Sibai M. Recombinant anthrax lethal toxin inhibits cell motility and invasion in breast cancer cells through the dysregulation of rho gtpases. Oncology Letters. 2021;21(2):p. 163. doi: 10.3892/ol.2020.12424.

- 7. Chen W., Zheng R., Zhang S., et al. Cancer incidence and mortality in China in 2013: an analysis based on urbanization level. Chinese Journal of Cancer Research. 2017;29(1):1–10. doi: 10.21147/j.issn.1000-9604.2017.01.01.

- 8. Al-Antari M. A., Han S.-M., Kim T.-S. Evaluation of deep learning detection and classification towards computer-aided diagnosis of breast lesions in digital x-ray mammograms. Computer Methods and Programs in Biomedicine. 2020;196:105584. doi: 10.1016/j.cmpb.2020.105584.

- 9. Nader R., Tannoury E., Rizk T., Ghanem H., Luvizotto Junior E. Atezolizumab-induced encephalitis in a patient with metastatic breast cancer: a case report and review of neurological adverse events associated with checkpoint inhibitors. Autopsy Case Reports. 2021;11:e2021261. doi: 10.4322/acr.2021.261.

- 10. Mansour N., Bodman-Smith K., Khnayzer R. S., Daher C. F. A photoactivatable ru (ii) complex bearing 2, 9-diphenyl-1, 10-phenanthroline: a potent chemotherapeutic drug inducing apoptosis in triple negative human breast adenocarcinoma cells. Chemico-Biological Interactions. 2021;336:109317. doi: 10.1016/j.cbi.2020.109317.

- 11. Younes M., Ammoury C., Haykal T., Nasr L., Sarkis R., Rizk S. The selective anti-proliferative and pro-apoptotic effect of a. cherimola on mda-mb-231 breast cancer cell line. BMC Complementary Medicine and Therapies. 2020;20(1):p. 343. doi: 10.1186/s12906-020-03120-1.

- 12. Punitha S., Al-Turjman F., Stephan T. An automated breast cancer diagnosis using feature selection and parameter optimization in ann. Computers & Electrical Engineering. 2021;90:106958. doi: 10.1016/j.compeleceng.2020.106958.

- 13. Mao Ye-J., Lim H.-J., Ni M., Yan W. H., Wong D. W. C., Cheung J. C. W. Breast tumour classification using ultrasound elastography with machine learning: a systematic scoping review. Cancers. Jan 2022;14(2):p. 367. doi: 10.3390/cancers14020367.

- 14. Nasser H. A., Assaf S., Aouad G., Mouawad Y., Elamely S. Breast manifestations of type i diabetes mellitus. Breast Journal. 2020;26(10):2079–2080. doi: 10.1111/tbj.13938.

- 15. Al Saud N., Jabbour S., Kechichian E., et al. A systematic review of zosteriform rash in breast cancer patients: an objective proof of flap reinnervation and a management algorithm. Annals of Plastic Surgery. 2018;81(4):456–461. doi: 10.1097/sap.0000000000001505.

- 16. Dhungel N., Carneiro G., Bradley A. P. A deep learning approach for the analysis of masses in mammograms with minimal user intervention. Medical Image Analysis. 2017;37:114–128. doi: 10.1016/j.media.2017.01.009.

- 17. Byra M. Breast mass classification with transfer learning based on scaling of deep representations. Biomedical Signal Processing and Control. 2021;69:102828. doi: 10.1016/j.bspc.2021.102828.

- 18. Hitti E., Hadid D., Melki J., Kaddoura R., Alameddine M. Mobile device use among emergency department healthcare professionals: prevalence, utilization and attitudes. Scientific Reports. 2021;11(1):p. 1917. doi: 10.1038/s41598-021-81278-5.

- 19. Shen Li, Margolies L. R., Rothstein J. H., Fluder E., McBride R., Sieh W. Deep learning to improve breast cancer detection on screening mammography. Scientific Reports. Aug 2019;9(1):12495. doi: 10.1038/s41598-019-48995-4.

- 20. Jansson D., Dieriks V. B., Rustenhoven J., et al. Cardiac glycosides target barrier inflammation of the vasculature, meninges and choroid plexus. Communications Biology. Feb 2021;4(1):p. 260. doi: 10.1038/s42003-021-01787-x.

- 21. El Zarif O., Haraty R. A. Innovation in Health Informatics. Amsterdam, Netherlands: Elsevier; 2020. Toward information preservation in healthcare systems; pp. 163–185.

- 22. Salloum G., Tekli J. Automated and personalized nutrition health assessment, recommendation, and progress evaluation using fuzzy reasoning. International Journal of Human-Computer Studies. 2021;151:102610. doi: 10.1016/j.ijhcs.2021.102610.

- 23. Song B.-Il. A machine learning-based radiomics model for the prediction of axillary lymph-node metastasis in breast cancer. Breast Cancer. 2021;28. doi: 10.1007/s12282-020-01202-z.

- 24. Mukand N. H., Ko N. Y., Nabulsi N. A. The association between physical health-related quality of life, physical functioning, and risk of contralateral breast cancer among older women. Breast Cancer. 2021;29. doi: 10.1007/s12282-021-01309-x.

- 25. Sadad T., Munir A., Saba T., Hussain A. Fuzzy c-means and region growing based classification of tumor from mammograms using hybrid texture feature. Journal of Computational Science. 2018;29:34–45. doi: 10.1016/j.jocs.2018.09.015.

- 26. Jubeen M., Rahman H., Rahman A. U., et al. An automatic breast cancer diagnostic system based on mammographic images using convolutional neural network classifier. Journal of Computing & Biomedical Informatics. 2022;4(1):77–86. doi: 10.56979/401/2022/93.

- 27. Qader Zeebaree D., Mohsin Abdulazeez A., Asaad Zebari D., Haron H., Nuzly Abdull Hamed H. Multi-level fusion in ultrasound for cancer detection based on uniform lbp features. Computers, Materials & Continua. 2021;66(3):3363–3382. doi: 10.32604/cmc.2021.013314.

- 28. Maqsood S., Damaševičius R., Shah F. M., Maskeliūnas R. Detection of macula and recognition of aged-related macular degeneration in retinal fundus images. Computing and Informatics. 2021;40(5):957–987. doi: 10.31577/cai_2021_5_957.

- 29. Al-Antari M. A., Al-Masni M. A., Choi M.-T., Han S.-M., Kim T.-S. A fully integrated computer-aided diagnosis system for digital x-ray mammograms via deep learning detection, segmentation, and classification. International Journal of Medical Informatics. 2018;117:44–54. doi: 10.1016/j.ijmedinf.2018.06.003.

- 30. Chougrad H., Zouaki H., Alheyane O. Deep convolutional neural networks for breast cancer screening. Computer Methods and Programs in Biomedicine. 2018;157:19–30. doi: 10.1016/j.cmpb.2018.01.011.

- 31. Kooi T., Litjens G., van Ginneken B., et al. Large scale deep learning for computer aided detection of mammographic lesions. Medical Image Analysis. 2017;35:303–312. doi: 10.1016/j.media.2016.07.007.

- 32. Maqsood S., Javed U. Multi-modal medical image fusion based on two-scale image decomposition and sparse representation. Biomedical Signal Processing and Control. 2020;57:101810. doi: 10.1016/j.bspc.2019.101810.

- 33. Muzammil S. R., Maqsood S., Haider S., Damaševičius R. Csid: a novel multimodal image fusion algorithm for enhanced clinical diagnosis. Diagnostics. 2020;10(11):p. 904. doi: 10.3390/diagnostics10110904.

- 34. Maqsood S., Javed U., Riaz M. M., Muzammil M., Muhammad F., Kim S. Multiscale image matting based multi-focus image fusion technique. Electronics. 2020;9(3):p. 472. doi: 10.3390/electronics9030472.

- 35. Jabeen K., Khan M. A., Alhaisoni M., et al. Breast cancer classification from ultrasound images using probability-based optimal deep learning feature fusion. Sensors. 2022;22(3):p. 807. doi: 10.3390/s22030807.

- 36. Yassin N. I. R., Omran S., El Houby E. M., Allam H., Allam H. Machine learning techniques for breast cancer computer aided diagnosis using different image modalities: a systematic review. Computer Methods and Programs in Biomedicine. 2018;156:25–45. doi: 10.1016/j.cmpb.2017.12.012.

- 37. Rezaei Z. A review on image-based approaches for breast cancer detection, segmentation, and classification. Expert Systems with Applications. 2021;182:115204. doi: 10.1016/j.eswa.2021.115204.

- 38. Dhungel N., Carneiro G., Bradley A. P. Deep learning and structured prediction for the segmentation of mass in mammograms. Proceedings of the MICCAI 2015: 18th International Conference Medical Image Computing and Computer-Assisted Intervention; October, 2015; Munich, Germany. Springer; pp. 605–612.

- 39. Zhang Yu-D., Satapathy S. C., Guttery D. S., Górriz J. M., Wang S. H. Improved breast cancer classification through combining graph convolutional network and convolutional neural network. Information Processing & Management. 2021;58(2):102439. doi: 10.1016/j.ipm.2020.102439.

- 40. Suh Y. J., Jung J., Cho B.-J. Automated breast cancer detection in digital mammograms of various densities via deep learning. Journal of Personalized Medicine. 2020;10(4):p. 211. doi: 10.3390/jpm10040211.

- 41. Chouhan N., Khan A., Shah J. Z., Hussnain M., Khan M. W. Deep convolutional neural network and emotional learning based breast cancer detection using digital mammography. Computers in Biology and Medicine. 2021;132:104318. doi: 10.1016/j.compbiomed.2021.104318.

- 42. Muduli D., Dash R., Majhi B. Automated breast cancer detection in digital mammograms: a moth flame optimization based elm approach. Biomedical Signal Processing and Control. 2020;59:101912. doi: 10.1016/j.bspc.2020.101912.

- 43. Wang Yi, Choi E. J., Choi Y., Zhang H., Jin G. Y., Ko S. B. Breast cancer classification in automated breast ultrasound using multiview convolutional neural network with transfer learning. Ultrasound in Medicine and Biology. 2020;46(5):1119–1132. doi: 10.1016/j.ultrasmedbio.2020.01.001.

- 44. Masud M., Eldin Rashed A. E., Hossain M. S. Convolutional neural network-based models for diagnosis of breast cancer. Neural Computing & Applications. 2020;34:11383–11394. doi: 10.1007/s00521-020-05394-5.

- 45. Eroğlu Y., Yildirim M., Çinar A. Convolutional neural networks based classification of breast ultrasonography images by hybrid method with respect to benign, malignant, and normal using mrmr. Computers in Biology and Medicine. 2021;133:104407. doi: 10.1016/j.compbiomed.2021.104407.

- 46. Zhang H., Han L., Chen Ke, Peng Y., Lin J. Diagnostic efficiency of the breast ultrasound computer-aided prediction model based on convolutional neural network in breast cancer. Journal of Digital Imaging. 2020;33(5):1218–1223. doi: 10.1007/s10278-020-00357-7.

- 47. Braz Junior G., da Rocha S. V., de Almeida J. D. S., de Paiva A. C., Silva A. C., Gattass M. Breast cancer detection in mammography using spatial diversity, geostatistics, and concave geometry. Multimedia Tools and Applications. 2019;78(10):13005–13031. doi: 10.1007/s11042-018-6259-z.

- 48. Harefa J., Alexander A., Pratiwi M. Comparison classifier: support vector machine (svm) and k-nearest neighbor (k-nn) in digital mammogram images. Jurnal Informatika dan Sistem Informasi. 2017;2(2):35–40.

- 49. Ragab D. A., Sharkas M., Marshall S., Ren J. Breast cancer detection using deep convolutional neural networks and support vector machines. PeerJ. 2019;7:p. e6201. doi: 10.7717/peerj.6201.

- 50. Kousalya K., Saranya T. Improved the detection and classification of breast cancer using hyper parameter tuning. Materials Today Proceedings. 2021. doi: 10.1016/j.matpr.2021.03.707.

- 51. Irfan R., Almazroi A. A., Rauf H. T., Damasevicius R., Nasr E. A., Abdelgawad A. E. Dilated semantic segmentation for breast ultrasonic lesion detection using parallel feature fusion. Diagnostics. 2021;11(7):p. 1212. doi: 10.3390/diagnostics11071212.

- 52. Pisano E. D., Zong S., Hemminger B. M., et al. Contrast limited adaptive histogram equalization image processing to improve the detection of simulated spiculations in dense mammograms. Journal of Digital Imaging. 1998;11(4):193–200. doi: 10.1007/bf03178082.

- 53. Sahakyan A., Sarukhanyan H. Segmentation of the breast region in digital mammograms and detection of masses. International Journal of Advanced Computer Science and Applications. 2012;3(2). doi: 10.14569/ijacsa.2012.030218.

- 54. Abid I., Almakdi S., Rahman H., et al. A convolutional neural network for skin lesion segmentation using double u-net architecture. Intelligent Automation & Soft Computing. 2022;33(3):1407–1421. doi: 10.32604/iasc.2022.023753.

- 55. Ahmad R., Khalid A., Rahman H. Brain tumor detection using image segmentation and classification. LC International Journal of STEM. 2020;1(3):59–65.

- 56. Abbas M., Arshad M., Rahman H. Detection of breast cancer using neural networks. LC International Journal of STEM. 2020;1(3):75–88.

- 57. Kaur D., Kaur Y. Various image segmentation techniques: a review. International Journal of Computer Science and Mobile Computing. 2014;3(5):809–814.

- 58. Khan W. Image segmentation techniques: a survey. Journal of Image and Graphics. 2014;1(4):166–170. doi: 10.12720/joig.1.4.166-170.

- 59. Kaur M., Goyal P. A review on region based segmentation. International Journal of Science and Research. 2015;4(4):3194–3197.

- 60. Han J., Zhang D., Hu X. Background prior-based salient object detection via deep reconstruction residual. IEEE Transactions on Circuits and Systems for Video Technology. 2014;25(8):1309–1321.

- 61. Zabalza J., Ren J., Zheng J., et al. Novel segmented stacked autoencoder for effective dimensionality reduction and feature extraction in hyperspectral imaging. Neurocomputing. 2016;185:1–10. doi: 10.1016/j.neucom.2015.11.044.

- 62. LeCun Y., Kavukcuoglu K., Farabet C. Convolutional networks and applications in vision. Proceedings of the 2010 IEEE International Symposium on Circuits and Systems; June, 2010; Paris, France. IEEE; pp. 253–256.

- 63. Alexandre Spanhol F., Oliveira L. S., Petitjean C., Laurent H. Breast cancer histopathological image classification using convolutional neural networks. Proceedings of the 2016 International Joint Conference on Neural Networks (IJCNN); July, 2016; Vancouver, BC, Canada. IEEE; pp. 2560–2567.

- 64. Bengio Y., Courville A., Vincent P. Representation learning: a review and new perspectives. IEEE Transactions on Pattern Analysis and Machine Intelligence. 2013;35(8):1798–1828. doi: 10.1109/tpami.2013.50.

- 65. Raúl de la F. L. Wild Data - Transfer Learning - Deep Learning - Blog. 2018. https://blog.stratio.com/wild-data-part-three-transfer-learning/

- 66. He K., Zhang X., Ren S., Sun J. Deep residual learning for image recognition. Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition; June, 2016; Las Vegas, NV, USA. pp. 770–778.

- 67. Srivastava N., Hinton G., Krizhevsky A., Sutskever I., Salakhutdinov R. Dropout: a simple way to prevent neural networks from overfitting. Journal of Machine Learning Research. 2014;15(1):1929–1958.

- 68. Kuppusamy P., Kumari N. M. J., Alghamdi W. Y., et al. Job scheduling problem in fog-cloud-based environment using reinforced social spider optimization. Journal of Cloud Computing. 2022;11(1):p. 99. doi: 10.1186/s13677-022-00380-9.

- 69. Brownlee J. A gentle introduction to the rectified linear unit (relu) for deep learning neural networks. Apr 2019. https://machinelearningmastery.com/rectified-linear-activation-function-for-deep-learning-neural-networks/

- 70. Al-Haija Q. A., Manasra G. F. Development of breast cancer detection model using transfer learning of residual neural network (resnet-50). American Journal of Science & Engineering. 2020;1(3):30–39. doi: 10.15864/ajse.1304.

- 71. Moreira I. C., Amaral I., Domingues I., Cardoso A., Cardoso M. J., Cardoso J. S. Inbreast: toward a full-field digital mammographic database. Academic Radiology. 2012;19(2):236–248. doi: 10.1016/j.acra.2011.09.014.

- 72. Dhasarathan C., Hasan M. K., Islam S., et al. Covid-19 health data analysis and personal data preserving: a homomorphic privacy enforcement approach. Computer Communications. 2023;199:87–97. doi: 10.1016/j.comcom.2022.12.004.

- 73. Carneiro G., Nascimento J., Bradley A. P. Automated analysis of unregistered multi-view mammograms with deep learning. IEEE Transactions on Medical Imaging. 2017;36(11):2355–2365. doi: 10.1109/tmi.2017.2751523.

- 74. Zhang H., Wu R., Yuan T., et al. De-ada∗: a novel model for breast mass classification using cross-modal pathological semantic mining and organic integration of multi-feature fusions. Information Sciences. 2020;539:461–486. doi: 10.1016/j.ins.2020.05.080.

- 75. Pezeshki H., Rastgarpour M., Sharifi A., Yazdani S. Extraction of spiculated parts of mammogram tumors to improve accuracy of classification. Multimedia Tools and Applications. 2019;78(14):19979–20003. doi: 10.1007/s11042-019-7185-4.

- 76. Aleem J., Qureshi P. A. A. A., Babar N., Sultan A., Rehman A. U. Metastatic choriocarcinoma of the breast: a rare entity. Cureus. 2022;14(2):e22417. doi: 10.7759/cureus.22417.

- 77. Shaban M., Raza S. E. A., Hassan M., et al. A digital score of tumour-associated stroma infiltrating lymphocytes predicts survival in head and neck squamous cell carcinoma. The Journal of Pathology. 2022;256(2):174–185. doi: 10.1002/path.5819.
