Abstract

High-content screening (HCS) provides an excellent tool to understand the mechanism of action of drugs on disease-relevant model systems. Careful selection of fluorescent labels (FLs) is crucial for successful HCS assay development. HCS assays typically comprise (a) FLs containing biological information of interest, and (b) additional structural FLs enabling instance segmentation for downstream analysis. However, the limited number of available fluorescence microscopy imaging channels restricts the degree to which these FLs can be experimentally multiplexed. In this article, we present a segmentation workflow that overcomes the dependency on structural FLs for image segmentation, typically freeing two fluorescence microscopy channels for biologically relevant FLs. It consists of extracting structural information encoded within readouts that are primarily biological, by fine-tuning pre-trained state-of-the-art generalist cell segmentation models for different combinations of individual FLs, and aggregating the respective segmentation results. Using annotated datasets that we provide, we confirm that our methodology offers improvements in performance and robustness across several segmentation aggregation strategies and image acquisition methods, over different cell lines and various FLs. It thus enables the biological information content of HCS assays to be maximized without compromising the robustness and accuracy of computational single-cell profiling.

Keywords: cell biology, cell segmentation, fluorescence microscopy, high-content screening, image-based cellular assays

Impact Statement

This methodological article describes a framework enabling cell segmentation for datasets without structural fluorescent labels to highlight cell organelles. Such capabilities favorably impact costs and possible discoveries in single-cell downstream analysis by improving our ability to incorporate more biological readouts into a single assay. The perspective of computational and experimental biologist coauthors ensures a multidisciplinary viewpoint and accessibility for a wide readership.

1. Introduction

Image-based cellular assays allow us to investigate cellular and population phenotypes and signaling and thus to understand biological phenomena with high precision.

To investigate specific biological processes and pathways, one can use tailored fluorescent labels (FLs) such as immunofluorescence staining or fluorescent proteins. Cellular and subcellular features can then be detected and quantified by measuring fluorescence signal intensity and localization or by using multi-parametric measurements in a machine-learning framework( 1 ). Cell instance segmentation is a key part of such bioimage analysis pipelines, as it allows us to study cells at the single-cell level rather than at the population level.

Each FL has a function in an assay design. If the primary function of an FL is to label a specific cellular compartment, we call it a structural FL; otherwise, it is a nonstructural FL. On top of its primary role (e.g., labeling a protein associated with a signaling pathway), a nonstructural FL can also highlight cellular structures useful for segmentation. We refer to nonstructural FLs that consistently label cellular compartments, even though it is not their primary function, as structurally strong; those that do not consistently do so are structurally weak. For example, due to the unique behavior of individual cells when exposed to chemical compounds, signaling pathways highlighted by nonstructural FLs can lead to translocation to different cellular compartments, increased or decreased expression, or altered distribution. In fact, we observe that nonstructural FLs lie on a continuum between both categories: the structural and morphological information they carry can vary significantly.

Deep learning models for cell instance segmentation have recently reached the quality of manual annotations( 2 – 4 ), especially thanks to the emergence of models like U-Net( 5 ). Cellpose( 4 ) is a notable U-Net-based approach, which uses a multi-modal training dataset spanning several cell types and cell lines imaged under a variety of imaging methods. It also benefits from a large community that incrementally increases the size and diversity of the dataset, in turn improving the performance of the model. Cellpose approaches the problem of multiple instance segmentation by predicting spatial gradient maps from the images, from which individual cell segmentations can be inferred. Other recent deep learning segmentation methods for cell biology include StarDist( 2 ) and NucleAIzer( 3 ), which also make use of the U-Net architecture but with different representations of their images, and Mask-RCNN( 6 ), which directly segments regions of interest (ROIs) in images. We select Cellpose as the foundation for our approach because it is a widely used and well-designed framework and, with its community-driven, ever-expanding training dataset, aims to be the most generalist.

However, a limitation of Cellpose is its heavy reliance on structural FLs for nuclear and cytoplasmic segmentation.

Cellpose was trained on several datasets, most of which relied on active staining of organelles’ structural proteins (e.g., cytoskeleton for cytoplasm)( 7 ). Indeed, only 15% of the Cellpose training dataset contains other FLs. While such structural FLs are generally integrated in assay designs, being able to segment cells without them makes it possible to maximize the number of nonstructural FLs. Since the total number of available microscopy channels is subject to numerous limitations, such as the bleed-through effect( 8 ), and is typically limited to 4, the two extra channels made available can lead to assays delivering richer information about cellular processes.

In this article, we demonstrate that nonstructural FLs may contain information about cell and nuclear morphology that can be leveraged, even though they are not optimized in this regard, in contrast with structural FLs. Additionally, we demonstrate that a collection of nonstructural FLs is a sufficient substitute for structural FLs with regard to segmentation.

We note that our approach follows recent trends toward the “expertization” of generalist models like Cellpose 2.0( 9 ), encouraging the prediction of a wider range of cellular image types and styles, with very small additional training from humans in the loop. However, while those recent approaches still only apply to cell images with structural FLs, we hereby propose an extension to nonstructural FLs.

We thus propose a generic framework for nuclear and cytoplasmic segmentation without the need to include corresponding structural FLs in the assay design. Our framework requires few annotations to finetune a pre-trained generalist deep learning segmentation base model—here Cellpose—on each FL with a small set of annotated images. We show, on multiple datasets that we provide, that by combining the predictions from multiple nonstructural FLs with various structural characteristics, we are able to reach segmentation performance comparable to the state of the art without relying on structural FLs.

Our contribution has a significant impact on (a) cost, as we reduce the number of assays needed to extract the same biological information, by removing the dependency on one or two experimental structural FLs for accurate cell segmentation and (b) possible discoveries in downstream analysis, as we can monitor additional functionally relevant FLs for the exact same cells, thus obtaining a richer description of each cell’s phenotypic response to a perturbation.

2. Data

The context of our work is a very flexible live-cell imaging experimental framework that enables the analysis of a variety of cell lines and FLs reporting on a wide range of cellular processes over time. Furthermore, experimental parameters such as temporal and spatial resolution can be changed, as well as acquisition conditions. Those characteristics impact the microscope’s acquisition mode and thus the resulting noise and appearance of cells.

Accordingly, it is important to ensure our computational workflow is sufficiently flexible to accommodate changes in FLs, cell lines, and acquisition parameters.

2.1. Image data

We acquired data on five multi-color reporter cell lines derived from the commonly used U2OS( 10 ) and A375( 11 ) cancer cell lines, which we designated CL1, …, CL5. In this article, we work with live-cell imaging data where fluorescent labeling is done by tagging proteins of interest with fluorophores, the combination of which we refer to as fluorescent reporter proteins (FRPs). Each reporter cell line expresses three or four spectrally distinct FRPs. Unless otherwise stated, we acquired all images with a Nikon A1R confocal microscope as a live video microscopy imaging sequence. For each experimental condition, we acquired images on three xy positions every 3 hr for 72 hr, at various optical zooms.

The dataset was acquired in the context of perturbation screens, in which cells are treated with multiple compounds, in a high-content screening (HCS) setup. It aggregates images from five assays. A table listing applied compounds is available in the Supplementary Material.

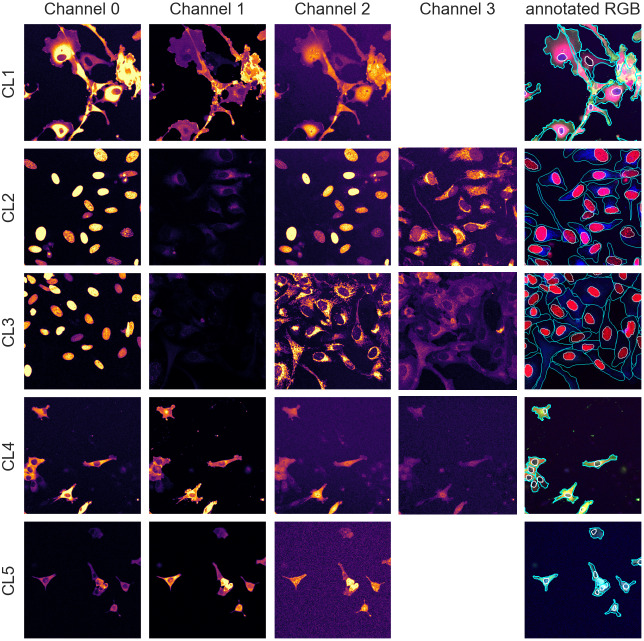

Table 1 summarizes the structural nature of the nonstructural FRPs used in the cell lines evaluated here. While some are structurally strong, consistently labeling a specific organelle (e.g., P1 of CL1), most are dynamic and can be considered weak. Cell line CL5, for example, only expresses structurally weak FRPs.

Table 1.

Description of cell line components used in the assays—parental cell line, cell line name, number of annotated images, size of pixels, fluorescent reporter protein (FRP), channel number, localization, and structural characterization.

| Parental cell line | Cell line | # Annotated samples | Pixel size (μm) | FRP | Channel # | Localization | Structural characterization |
|---|---|---|---|---|---|---|---|
| U2OS | CL1 | 50 | 1.24 | mCerulean-RAF | 0 | Constant (cytoplasm) | Cyto: Strong; Nuclei: Weak |
| | | | | Venus-RAS | 1 | Constant (nuclei and cytoplasm) | Cyto: Weak; Nuclei: Strong |
| | | | | mCherry-ERK | 2 | Dynamic (nuclei and cytoplasm) | Cyto: Weak; Nuclei: Weak |
| U2OS | CL2 | 28 | 0.43 | mCerulean-53BP1trunc | 0 | Constant (nuclei) | Cyto: Weak; Nuclei: Strong |
| | | | | Venus-ATF6 | 1 | Dynamic (endoplasmic reticulum) | Cyto: Weak; Nuclei: Strong |
| | | | | H2B-mCherry | 2 | Constant (nuclei) | Cyto: Weak; Nuclei: Strong |
| | | | | MTS-miRFP | 3 | Dynamic (mitochondria) | Cyto: Weak; Nuclei: Weak |
| U2OS | CL3 | 22 | 0.43 | H2B-TagBFP | 0 | Constant (nuclei) | Cyto: Weak; Nuclei: Strong |
| | | | | Venus-LC3 | 1 | Dynamic (cytoplasm) | Cyto: Weak; Nuclei: Weak |
| | | | | MTS-mCherry | 2 | Dynamic (mitochondria) | Cyto: Weak; Nuclei: Weak |
| | | | | palm-miRFP | 3 | Dynamic (membrane) | Cyto: Weak; Nuclei: Weak |
| A375 | CL4 | 50 | 1.24 | mCerulean-RAF | 0 | Constant (cytoplasm) | Cyto: Strong; Nuclei: Weak |
| | | | | Venus-RAS | 1 | Constant (nuclei and cytoplasm) | Cyto: Strong; Nuclei: Weak |
| | | | | mCherry-ERK | 2 | Dynamic (nuclei and cytoplasm) | Cyto: Strong; Nuclei: Weak |
| | | | | miRFP-MEK | 3 | Constant (cytoplasm) | Cyto: Strong; Nuclei: Weak |
| A375 | CL5 | 50 | 1.24 | AKT-KTR-mCerulean | 0 | Dynamic (nuclei and cytoplasm) | Cyto: Weak; Nuclei: Weak |
| | | | | ERK-KTR-Venus | 1 | Dynamic (nuclei and cytoplasm) | Cyto: Strong; Nuclei: Weak |
| | | | | miRFP-LC3 | 2 | Dynamic (cytoplasm) | Cyto: Strong; Nuclei: Weak |

Note. For RAS and RAF proteins, different isoforms and/or mutations were tagged.

Abbreviations: KTR, kinase translocation reporter; MTS, mitochondria targeting signal; palm, palmitoylation signal; 53BP1trunc, 53BP1trunc(1220-1711).

2.2. Annotated dataset

We manually annotated the nuclei and cell boundaries of 50 (resp. 28 and 22) images for each cell line/FL combination of cell lines CL1, CL4, and CL5 (resp. cell lines CL2 and CL3). We randomly selected these images from different experimental conditions, evenly distributed over time, in order to capture the dynamic localization of certain proteins (e.g., due to experimental treatments and levels of expression at different stages of the cell cycle) as well as possible variations in population size caused by cell division or cell death. The number of cells per image ranges from 20 to more than 100. For each set of annotated images, 80% were used for training, 10% for validation, and the remaining 10% for evaluation.

With an average of 50 cells per image, we have about 250 cells per validation/test set and approximately 2,000 individual cells in the training set, an appropriate number for training and evaluation. The annotations were carried out and validated by multiple biologists using the different channels available in combination. Example images and manual annotations are displayed in Figure 1.
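The 80/10/10 split described above can be sketched as follows. This is a minimal illustration, not the authors' code: the function name `split_indices`, the fixed seed, and the rounding rule are assumptions. For a 50-image set it yields 40/5/5 images, which at roughly 50 cells per image matches the approximate cell counts quoted above.

```python
import random


def split_indices(n_images, train_frac=0.8, val_frac=0.1, seed=0):
    """Shuffle image indices and split them into train/validation/test lists.

    The seed and the rounding rule are illustrative assumptions.
    """
    indices = list(range(n_images))
    random.Random(seed).shuffle(indices)
    n_train = round(n_images * train_frac)
    n_val = round(n_images * val_frac)
    train = indices[:n_train]
    val = indices[n_train:n_train + n_val]
    test = indices[n_train + n_val:]  # whatever remains is held out for evaluation
    return train, val, test


train, val, test = split_indices(50)
print(len(train), len(val), len(test))
```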

Figure 1.

Image samples from the different assays showing individual fluorescence channels as well as a color version with manual segmentation annotations overlay. The images are cropped for ease of visualization.

Furthermore, to test our method for robustness to changes in assay and acquisition parameters, we also annotated five additional images of the CL1 cell line acquired with a different microscope (widefield) and higher temporal resolution, resulting in noisier images. We used this dataset for evaluation purposes only as presented in Section 4.3.2.

All datasets are publicly available at doi.org/10.6084/m9.figshare.21702068.

3. Methods

3.1. Generalist segmentation model backbone

In this article, we use Cellpose( 4 ), a state-of-the-art cell segmentation model, as a generalist segmentation model backbone.

We note, however, that our approach is model-agnostic and could therefore use alternative backbones( 2 , 3 ).

Cellpose segments images using a three-part pipeline. First, it resizes the images so that the average cell diameter of the dataset conforms to the cell diameter of the model's original training set.

Second, for a given cell object, a Cellpose model maps the rescaled image from its intrinsic intensity space to a flow and probability space. The two flow maps are the derivatives (along the X and Y axes) of a spatial diffusion representation of individual cell pixels from the cell’s center of mass to its extremities.

Third, Cellpose combines the flow and probability maps to predict instance segmentations, using flow analysis and thresholding on all three maps combined. The two flow maps are first interpolated and consolidated where the pixel-wise probabilities are above a pre-set threshold. The instance masks are then generated by analyzing the histogram of the flows from its peaks. Cellpose's overall segmentation process is summarized in the following equation:

| (1) |
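As a small illustration of the first, resizing step of this pipeline, the sketch below computes the factor by which an image would be resized so that its mean cell diameter matches a model's training diameter. The function names and the default of 30 pixels are placeholders chosen for this example, not values taken from this article.

```python
def rescale_factor(dataset_mean_diameter_px, model_mean_diameter_px=30.0):
    """Resize factor that brings the dataset's mean cell diameter to the model's.

    The default model diameter is a placeholder assumption.
    """
    if dataset_mean_diameter_px <= 0:
        raise ValueError("diameter must be positive")
    return model_mean_diameter_px / dataset_mean_diameter_px


def rescaled_shape(shape, factor):
    """Image shape (height, width) after resizing by `factor`."""
    return tuple(max(1, round(side * factor)) for side in shape)


# Cells twice as large as the model expects: the image is shrunk by half.
factor = rescale_factor(dataset_mean_diameter_px=60.0)
new_shape = rescaled_shape((512, 512), factor)
print(factor, new_shape)
```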

3.2. Segmentation model finetuning approach

To segment images with nonstructural FLs, we leverage the generalization capabilities of Cellpose to build a model zoo of pre-trained models finetuned on each of our cell lines' FLs using annotated data. Like the out-of-the-box pre-trained generalist Cellpose model (which we call Vanilla Cellpose in the remainder of this article), our method rescales the images to the average diameter of the training set.

To finetune Vanilla Cellpose, we train, for each organelle and cell line combination (o, cl), with o the organelle to segment and cl one of the dataset cell lines, several Cellpose models on subsets of channels taken from the powerset of the set of channels of cl (excluding the empty set). For simplicity, we refer to this collection of nonempty subsets as the powerset. When a training subset contains more than one channel, each individual channel of the subset from the same image sample is input as an independent training sample. Each finetuned model is then used to predict segmentation flows by evaluating each channel of the chosen evaluation subset individually. Those per-channel predictions are then fused at inference to produce a single segmentation map. To segment an image for an (o, cl) combination, we ultimately use the model whose training channels achieve the highest score on our evaluation set, together with the evaluation channels on which that score is achieved.

| (2) |

When

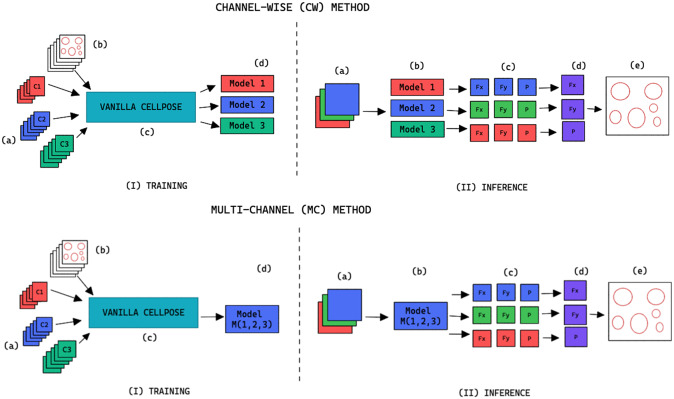

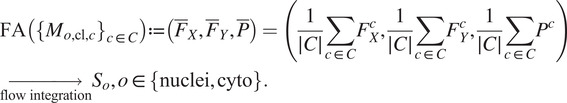

, we refer to the finetuning method as channel-wise (CW), such that a model is trained for each individual channel (see the upper part of Figure 2). The respective CW models can be used on their respective training channels or can be aggregated together to produce a channel-wise segmentation for several channels at once.

, we refer to the finetuning method as channel-wise (CW), such that a model is trained for each individual channel (see the upper part of Figure 2). The respective CW models can be used on their respective training channels or can be aggregated together to produce a channel-wise segmentation for several channels at once.

Figure 2.

Training and inference workflow for the segmentation of cell organelles without the use of structural FL using the channel-wise approach (top) and multi-channel approach (bottom). (I) Training: (a) Training set of multi-modal fluorescent images (three channels represented as red blue and green), (b) Training set annotations of the organelles segmentations, (c) Out-of-the-box pre-trained Cellpose model (Vanilla Cellpose), and (d) Finetuned model trained for each of the individual channels (channel-wise) or trained with a subset of the channels (multi-channel). (II) Inference: (a) Multi-modal fluorescent image (three channels), (b) Models selected from the model zoo corresponding to the image’s cell line and FL channel combination, (c) Spatial flows and probability maps output by the finetuned models for each of the channels, (d) Channel-wise averaging of the maps, and (e) Integration into the segmentation labels.

When the training subset contains several channels, we refer to the finetuning method as multi-channel (MC): a model is trained on several channels at once (see the bottom part of Figure 2). The MC segmentation models can be evaluated on any subset of the channel set they were trained with.
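The training subsets used in this section can be enumerated with a few lines of Python. This is only a sketch: the helper name `nonempty_subsets` is ours, and the split into channel-wise and multi-channel subsets simply follows the definitions above (one channel versus several).

```python
from itertools import chain, combinations


def nonempty_subsets(channels):
    """All nonempty subsets of `channels`, as tuples ordered by size."""
    channels = tuple(channels)
    return list(chain.from_iterable(
        combinations(channels, size) for size in range(1, len(channels) + 1)
    ))


# A three-channel cell line such as CL1 (channels 0, 1, 2).
subsets = nonempty_subsets([0, 1, 2])
channel_wise = [s for s in subsets if len(s) == 1]   # one model per channel (CW)
multi_channel = [s for s in subsets if len(s) > 1]   # models trained on several channels (MC)
print(len(subsets), channel_wise, multi_channel)
```

A cell line with n channels therefore gives 2^n - 1 candidate training subsets: 7 for three channels and 15 for four.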

3.3. Segmentation model finetuning parameters

To finetune Cellpose, we train from the available generalist pre-trained model on our dataset using the approach detailed in Section 3.2. We retain the model’s hyper-parameters from the original Vanilla Cellpose training with the following two exceptions: (a) as detailed in Section 3.4, we use nondeterministic augmentations on each of our training samples; (b) we stop the training using early stopping on the validation set( 12 ), with a patience of 50.

Both additions are efficient regularization methods limiting over-fitting and contributing to the overall robustness of the segmentation methods with respect to changes in the imaging setting.
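The early stopping rule can be sketched as below. We assume here that patience counts consecutive epochs without improvement and that the monitored quantity is a validation loss to be minimized; the article specifies only that early stopping is applied on the validation set with a patience of 50. The class name `EarlyStopping` is ours.

```python
class EarlyStopping:
    """Stop training once the validation loss has not improved for `patience` epochs."""

    def __init__(self, patience=50):
        self.patience = patience
        self.best_loss = float("inf")
        self.best_epoch = -1
        self.epochs_without_improvement = 0

    def step(self, epoch, val_loss):
        """Record one validation loss; return True when training should stop."""
        if val_loss < self.best_loss:
            self.best_loss = val_loss
            self.best_epoch = epoch
            self.epochs_without_improvement = 0
        else:
            self.epochs_without_improvement += 1
        return self.epochs_without_improvement >= self.patience


# Toy run with a short patience and made-up losses.
stopper = EarlyStopping(patience=3)
for epoch, loss in enumerate([1.0, 0.8, 0.9, 0.85, 0.81, 0.7]):
    if stopper.step(epoch, loss):
        break
print(epoch, stopper.best_epoch)
```

In practice the weights saved at `best_epoch` would be the ones kept for the model zoo.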

3.4. Data augmentation

Augmentation methods are used during the finetuning of the Vanilla Cellpose model both to virtually increase the size of our dataset and to offer better generalization. They are performed iteratively from scratch on each image batch. For methods involving random distributions, the parameters are uniformly sampled from a pre-defined parameter range.

Each augmentation has an application probability, adding more variability across epochs and samples. The augmentation methods are described in Table 2.

Table 2.

Augmentations applied to the training set during the finetuning of Cellpose models.

| Augmentation method | Parameter(s) | Parameter(s) distribution | Intent |
|---|---|---|---|
| Scaling | Scaling factor | | Expand dataset with invariance to cell size |
| Rotation | Rotation angle | | Expand dataset with invariance to cell orientation |
| Flipping | Flipping probabilities | | Expand dataset with invariance to cell orientation |
| Additive White Gaussian Noise (AWGN) | Mean and standard deviation | | Emulate variation in the expression of the lit-up fluorescent pixels and random background noise( 13 ) |
| Poisson Noise | — | — | Emulate the noise generated by the fluorescence microscope imaging( 14 , 15 ) |
| Salt and pepper | | | Emulate variation in the location and expression of the FLs by simulating activation or inhibitions of fluorescent proteins( 16 ) |
| Brightness | Shift | | Emulate the variance in microscope image acquisition and fluorescence intensity( 17 ) |
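A few of the augmentations in Table 2 can be sketched with NumPy as follows. This is a minimal sketch under stated assumptions: the function name `augment`, the application probability of 0.5, and every parameter range are illustrative placeholders, not the values used in this article. Scaling, rotation, and Poisson noise are left out for brevity. Geometric transforms are applied to the image and its label mask alike, so that annotations stay aligned.

```python
import numpy as np


def augment(image, labels, rng, p=0.5):
    """Randomly augment one single-channel image (values in [0, 1]) and its label mask.

    Each augmentation is applied independently with probability `p`.
    All parameter ranges below are illustrative placeholders.
    """
    image = image.astype(np.float32)
    if rng.random() < p:  # horizontal flip, applied to image and labels alike
        image, labels = image[:, ::-1], labels[:, ::-1]
    if rng.random() < p:  # vertical flip
        image, labels = image[::-1, :], labels[::-1, :]
    if rng.random() < p:  # additive white Gaussian noise
        sigma = rng.uniform(0.0, 0.05)
        image = image + rng.normal(0.0, sigma, size=image.shape)
    if rng.random() < p:  # salt and pepper: force a small fraction of pixels to 0 or 1
        fraction = rng.uniform(0.0, 0.01)
        draw = rng.random(image.shape)
        image = np.where(draw < fraction / 2, 0.0, image)
        image = np.where(draw > 1.0 - fraction / 2, 1.0, image)
    if rng.random() < p:  # global brightness shift
        image = image + rng.uniform(-0.1, 0.1)
    return np.clip(image, 0.0, 1.0).astype(np.float32), labels.copy()


rng = np.random.default_rng(0)
image = rng.random((32, 32))
labels = np.zeros((32, 32), dtype=int)
labels[4:10, 4:10] = 1
augmented_image, augmented_labels = augment(image, labels, rng)
```

Because the random draws are made anew on every call, the same training image yields a different augmented sample at each epoch.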

3.5. Segmentation fusion

Once the segmentations are generated for each image channel using fine-tuned models, a final image segmentation is generated by fusing the individual channel segmentation maps. We implement a method we name Flow Averaging (FA) to do so. We also consider several state-of-the-art methods. Fusion methods are described in Table 3.

Table 3.

Description of the segmentation fusion methods considered to generate aggregated segmentations from channel-wise segmentations.

| Method | Description | References |
|---|---|---|
| Flow Averaging (FA) (This study) | Fusion of flow maps followed by flow analysis | — |
| Selective and Iterative Method for Performance Level Estimation (SIMPLE) | Iterative majority voting over propagated segmentations, weighted by estimated performance | ( 18 ) |
| Simultaneous Truth and Performance Level Estimation (STAPLE) | Statistical fusion framework using hierarchical models of rater performance | ( 19 ) |
| Voting (V) | Pixel-wise voting | ( 20 ) |
| Majority Voting (MV) | Majority label voting in image patches | ( 21 ) |

The FA method uses Cellpose internal representations to aggregate the segmentation maps. FA averages the segmentation probability maps and flow maps obtained by running finetuned models on each channel individually, to obtain a final aggregated segmentation map.

Given a set of channels selected for an image, our approach yields one segmentation tuple per channel, each made of two flow maps and one probability map generated by the Cellpose U-Net(s). From these tuples, we average each individual map along the channel dimension, yielding a single averaged tuple of flow and probability maps. The averaged maps are then transformed into instance segmentation masks using Cellpose’s integration method.

| (3) |
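The averaging step of FA can be sketched in NumPy as below. This is an illustration under assumptions: the function name `flow_averaging` and the (flow_y, flow_x, probability) ordering of each tuple are ours, and the final conversion of the averaged maps into instance masks (Cellpose's integration step) is deliberately left out.

```python
import numpy as np


def flow_averaging(per_channel_maps):
    """Average (flow_y, flow_x, probability) tuples predicted on several channels.

    `per_channel_maps` holds one tuple of three (H, W) arrays per channel.
    Returns the three averaged (H, W) maps.
    """
    stacked = np.stack([np.stack(maps) for maps in per_channel_maps])  # (n, 3, H, W)
    flow_y, flow_x, probability = stacked.mean(axis=0)  # average over the n channels
    return flow_y, flow_x, probability


# Two toy channels with constant maps.
channel_a = (np.zeros((4, 4)), np.zeros((4, 4)), np.zeros((4, 4)))
channel_b = (np.full((4, 4), 2.0), np.full((4, 4), 4.0), np.ones((4, 4)))
mean_flow_y, mean_flow_x, mean_probability = flow_averaging([channel_a, channel_b])
```

Averaging before mask computation means that a channel with a weak or missing structural signal lowers the confidence of a region rather than vetoing it, which is one plausible reading of why this fusion behaves differently from voting on final masks.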

3.6. General workflow summary

Our workflow can be summarized as follows, for an organelle and cell line (o, cl) combination:

1. Annotate the organelles o on a set of images of the imaging assay of cell line cl.
2. Finetune a Vanilla Cellpose model using the FL channels of cl, for all (nonempty) subsets of the powerset of available channels in cl. For better readability, we consider the cell line cl and organelle o fixed in the following and drop them from the model notation.
3. Evaluate the finetuned models on a validation set, using the channel-wise and multi-channel strategies on individual channels or on fusions of channels.
4. Select the best-performing model and the channels upon which to evaluate it, using an evaluation metric (as defined in Section 4.1) on a validation set:

| (4) |

where the score is the average obtained on a validation set when using a model trained on the training channel(s) for inference on the evaluation channel(s), using segmentation fusion when there is more than one evaluation channel.

5. Use the selected model for inference with the selected evaluation channels, using segmentation fusion if there is more than one, on new images of the (o, cl) combination, while keeping the other models in the model zoo for potential future cell lines stemming from the same parental cell line and with some intersections in the respective sets of FLs.

A pseudo-code version of the proposed workflow is provided in the Supplementary Material.
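The model selection step of the workflow amounts to picking the highest-scoring pair of training and evaluation channels. The sketch below is not the Supplementary Material pseudo-code: the function name `select_best`, the dictionary layout, and all scores are hypothetical and serve only to show the mechanics.

```python
def select_best(scores):
    """Return the (training channels, evaluation channels, score) with the top score.

    `scores` maps (train_channels, eval_channels) to a mean validation score.
    """
    (train_channels, eval_channels), best_score = max(
        scores.items(), key=lambda item: item[1]
    )
    return train_channels, eval_channels, best_score


# Made-up validation scores, for illustration only (not results from this article).
scores = {
    ((0,), (0,)): 0.61,
    ((1,), (1,)): 0.58,
    ((0, 1), (0,)): 0.64,
    ((0, 1), (0, 1)): 0.72,
}
best_train, best_eval, best_score = select_best(scores)
print(best_train, best_eval, best_score)
```

When `best_eval` holds more than one channel, inference on new images would fuse the per-channel predictions before producing masks.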

4. Performance Evaluation Results

4.1. Evaluation metrics

For segmentation evaluation, we use precision, recall, and F1-score. A predicted segmentation is considered a true positive if the intersection-over-union (IoU) between this segmentation and a ground truth segmentation is above a threshold, here set at 0.5. Recall is the percentage of cells detected, precision is the probability that a detected cell is really a cell, and F1-score is the harmonic mean of the two. We use the F1-score as our principal metric for evaluating our models' performance, as it measures accuracy through both precision and recall. We also compute the Jaccard similarity( 22 ), the aggregated Jaccard index( 23 ), and the average precision( 2 ). The evaluation using these metrics is available in the Supplementary Material.
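The object-level metrics defined above can be computed from two label images as in the following sketch. It is a straightforward reading of the definitions, not the evaluation code used for this article; the function name `f1_at_iou` is ours. With a threshold of at least 0.5 and non-overlapping objects, each ground truth object can match at most one prediction, so no explicit assignment step is needed.

```python
import numpy as np


def f1_at_iou(gt, pred, threshold=0.5):
    """Precision, recall and F1 for two label images (0 = background)."""
    gt_ids = [i for i in np.unique(gt) if i != 0]
    pred_ids = [i for i in np.unique(pred) if i != 0]
    tp = 0
    for g in gt_ids:
        g_mask = gt == g
        # Only predictions overlapping this ground truth object can match it.
        for p in np.unique(pred[g_mask]):
            if p == 0:
                continue
            p_mask = pred == p
            iou = np.logical_and(g_mask, p_mask).sum() / np.logical_or(g_mask, p_mask).sum()
            if iou > threshold:
                tp += 1
                break  # IoU > 0.5 can hold for at most one prediction
    fp = len(pred_ids) - tp
    fn = len(gt_ids) - tp
    precision = tp / (tp + fp) if pred_ids else 0.0
    recall = tp / (tp + fn) if gt_ids else 0.0
    f1 = 2 * tp / (2 * tp + fp + fn) if (tp + fp + fn) else 0.0
    return precision, recall, f1


# Toy example: one cell found exactly, one missed, one spurious detection.
gt = np.zeros((6, 6), dtype=int)
gt[0:3, 0:3] = 1
gt[3:6, 3:6] = 2
pred = np.zeros((6, 6), dtype=int)
pred[0:3, 0:3] = 1
pred[0:2, 4:6] = 2
precision, recall, f1 = f1_at_iou(gt, pred)
```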

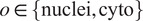

4.2. Segmentation fusion methods evaluation

Table 4 reports the performance of the different segmentation fusion methods tested in this work. The benchmark uses the aggregation of all channels for each image, on both nuclei and cytoplasm, using the channel-wise method. The FA method introduced in Section 3.5 is the best fusion method among those benchmarked here for every cell line and both organelles, in some cases by a large margin (e.g., 0.6871 versus at most 0.4398 for nuclei on CL2).

Table 4.

Comparison of the performance of the different channel fusion methods on the test set images, assessed with F1-score as a segmentation metric.

| Organelle | Fusion method | CL1 | CL2 | CL3 | CL4 | CL5 |
|---|---|---|---|---|---|---|
| | Channels (strategy) | 0, 1, 2 (CW) | 0, 1, 2, 3 (CW) | 0, 1, 2, 3 (CW) | 0, 1, 2, 3 (CW) | 0, 1, 2 (CW) |
| Cytoplasm | FA | 0.8135 | 0.5138 | 0.3264 | 0.7769 | 0.6873 |
| | SIMPLE | 0.729 | 0.4744 | 0.2967 | 0.6846 | 0.4218 |
| | STAPLE | 0.8043 | 0.4043 | 0.2876 | 0.6881 | 0.6635 |
| | Voting | 0.729 | 0.4571 | 0.3094 | 0.6916 | 0.4218 |
| | Majority Voting | 0.729 | 0.4344 | 0.3122 | 0.7035 | 0.4218 |
| Nuclei | FA | 0.904 | 0.6871 | 0.843 | 0.8758 | 0.7994 |
| | SIMPLE | 0.8016 | 0.3804 | 0.7634 | 0.7303 | 0.638 |
| | STAPLE | 0.8016 | 0.4398 | 0.7341 | 0.6822 | 0.6449 |
| | Voting | 0.8016 | 0.2968 | 0.7194 | 0.6784 | 0.6449 |
| | Majority Voting | 0.8016 | 0.3266 | 0.6941 | 0.6817 | 0.6449 |

Note. The comparison is drawn on the fusion of all channels for each cell line, evaluated using the channel-wise strategy, and not on the best-scoring strategy or set of training/evaluation channels. The relative performance of the fusion methods nevertheless holds for other variants of our overall method.

We believe that this result is due to the fact that the diffusion maps are optimized representations combining information about pixel-level probability and object-level shape properties. This makes them particularly useful for fusion and gives them an advantage over raw image fusion and fusion of segmentation masks. We therefore set FA as the default fusion method and present our workflow results using FA in the remainder of the article.

4.3. Segmentation evaluation

4.3.1. Results

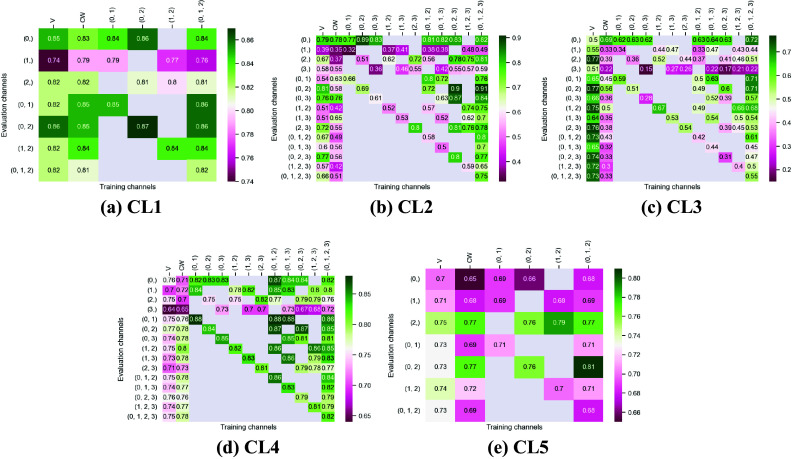

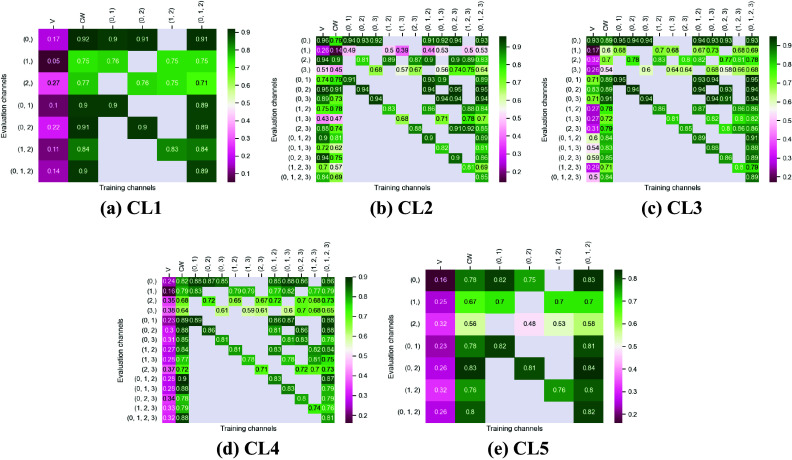

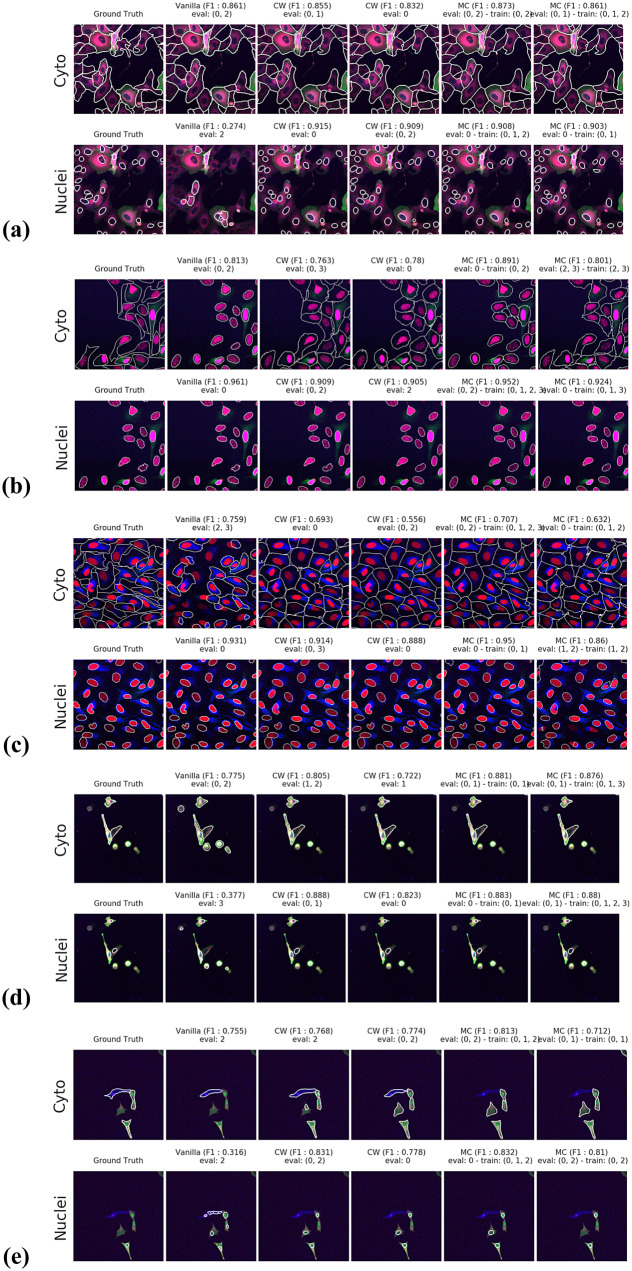

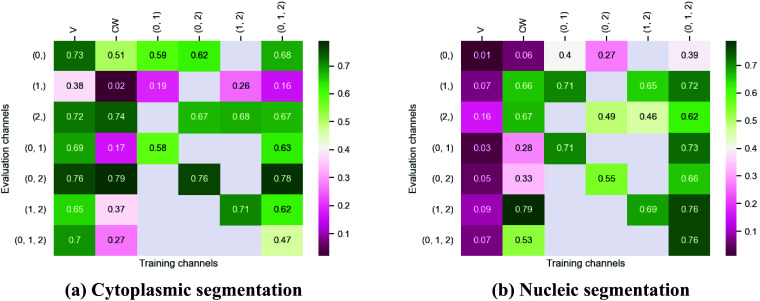

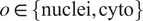

Figures 3 and 4 display the performance of our method using the F1-score metric. Those results were computed using fivefold cross-validation on annotated datasets. We evaluate the performance of Vanilla Cellpose against our method across every combination of training channels (channel-wise and multi-channel), on every combination of evaluation channels aggregated using the FA fusion method. The Vanilla Cellpose scores on several channels at once are obtained using this fusion method as well. Examples of our segmentation are displayed in Figure 5.

Figure 3.

Fivefold cross-validated F1-scores for cytoplasm segmentation on all five cell lines. These tables show the evaluation using Vanilla Cellpose (V), the channel-wise (CW) strategy, and the multi-channel (MC) strategy as columns, on the powerset of channels as rows, aggregated together using the Flow Averaging (FA) method.

Figure 4.

Fivefold cross-validated F1-scores for nuclei segmentation on all five cell lines. These tables show the evaluation using Vanilla Cellpose (V), the channel-wise (CW) strategy, and the multi-channel (MC) strategy as columns, on the powerset of channels as rows, aggregated together using the Flow Averaging (FA) method.

Figure 5.

Segmentation examples using the proposed method on the test set images for (a–e) CL1 to CL5. We compare ground truth, Vanilla Cellpose results for best evaluation channel combination, channel-wise (CW), and multi-channel (MC) fine-tuning strategies. The respective training channel combination and evaluation channel combination are detailed in the figures. The images are cropped for ease of visualization.

Our results indicate that fine-tuning is an essential step when dealing with datasets which do not contain cytoplasmic and nuclear structural FLs, as indicated in Table 1. With fine-tuning, we indeed obtain state-of-the-art level results on individual channels trained independently (CW), the fusion of channels evaluated through independently trained models (CW), and the fusion of channels trained together (MC).

Furthermore, we observe that combining the different segmentations together outperforms not only the Vanilla Cellpose results but also the results of those finetuned models on their respective channels in all cases (the only exception being CL3 on cytoplasm which we will discuss in the next section). That is particularly striking for structurally weak FLs which, when aggregated together using either the CW or MC approach, reach the segmentation quality of structurally strong FLs (e.g., static FLs in a single organelle). Additionally, it can be noted that when trained on a set of channels which include both strong and weak FLs, the model performs well upon evaluation on the subset of its training channels which excludes the structurally strong FLs.

4.3.2. Impact of acquisition method on model generalization

We test the generalization capabilities of our model to other imaging acquisition procedures by evaluating the performance drift of a model trained on images acquired with one set of parameters (confocal microscope, image size 512 × 512 pixels, and image scale of 1.24 μm per pixel) when applied to images acquired with another (widefield microscope, image size 2,044 × 2,044 pixels, and image scale of 0.32 μm per pixel). We use 50 annotated images of cell line CL1 acquired under the confocal conditions for training and testing a model, and 5 annotated images of the same cell line acquired under the widefield conditions to evaluate the aforementioned model performance drift.
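To make the difference between the two acquisition settings concrete, the following sketch computes the physical field of view of each image type from the sizes and scales quoted above. It also computes the resize factor that would bring a widefield image to the confocal pixel scale. Whether such a rescaling is applied is an assumption, since the text does not say, and the helper name `field_of_view_um` is hypothetical.

```python
def field_of_view_um(size_px, scale_um_per_px):
    """Physical side length of a square image, in micrometres."""
    return size_px * scale_um_per_px


confocal_fov = field_of_view_um(512, 1.24)    # training acquisition
widefield_fov = field_of_view_um(2044, 0.32)  # evaluation acquisition

# Factor by which a widefield image would be resized to match the
# confocal pixel scale (assumption: the source does not state whether
# such rescaling is applied).
resize_factor = 0.32 / 1.24
rescaled_size_px = round(2044 * resize_factor)

print(f"confocal FOV:  {confocal_fov:.2f} um")
print(f"widefield FOV: {widefield_fov:.2f} um")
print(f"resize factor: {resize_factor:.3f} -> {rescaled_size_px} px")
```

Both settings cover a similar physical area (roughly 635 μm versus 654 μm per side), but a cell spans about four times as many pixels in the widefield images, which is one reason a performance drift could be expected.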

The segmentation evaluation results are shown in Figure 6 and demonstrate the ability of our model to generalize to the widefield acquisition method by producing similar segmentation scores and outperforming Vanilla Cellpose. These results indicate that our approach does not merely finetune Cellpose to our dataset, but rather to a specific set of FLs of a cell line, successfully generalizing to other datasets with the same assay-related conditions.

Figure 6.

Evaluation of the F1 scores on the CL1 cell line imaged with the widefield acquisition method. The tables are organized the same way as in Figures 3 and 4.

5. Discussion

Our work provides a reliable, reusable, and scalable method for the segmentation of cell images without structural FLs, with manageable annotation effort. Our results show that the proposed workflow leads to models outperforming Vanilla Cellpose on datasets with only nonstructural FLs, while requiring few annotated examples by leveraging Cellpose extended pre-training.

Our results show that leveraging nonstructural (and even structurally weak) FLs in concert improves segmentation, even when the signal is very heterogeneous between cells, and some cells do not appear at all in some channels.

Indeed, each channel provides some useful and potentially complementary information on nucleus and cytoplasm, which can be combined by segmentation fusion. Aggregating channels thus allows complementary nonstructural FLs to jointly outline individual cell objects, as demonstrated by the results in Figures 3 and 4 and the qualitative examples in Figure 5. This observation is especially salient for cell lines which do not contain any structurally strong FLs (such as CL2 and CL3 for cytoplasm, and CL4 for nuclei) or for cell lines whose segmentation models were trained and evaluated without their structurally strong FLs (e.g., CL1 without channel 0 for both nuclei and cytoplasm, or CL2 and CL3 without channels 0 and 2 for nuclei). For example, while cell line CL5 only expresses structurally unreliable FLs, it can be seen in Figures 3 and 4 that it is able to leverage its different FLs together to produce cytoplasmic segmentation with an F1-score of […] when evaluated on the fusion of channels 0 and 2 using a model finetuned on all of its channels together. Excluding a structurally strong channel from the evaluation results in the same conclusion. For example, nucleic segmentation on CL2 scores an F1-score of […] when trained using channels 1 and 3 in MC, with FLs that highlight the endoplasmic reticulum and the mitochondria. Using Vanilla Cellpose on the same evaluation channels yields an F1-score of […]. Similar results can be observed for CL3 on the same channels.
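For readers less familiar with object-level scores, the sketch below shows one common way to compute an F1-score between a ground-truth and a predicted label image, where a predicted object counts as a true positive if its intersection over union (IoU) with a ground-truth object exceeds a threshold. The threshold of 0.5 and the matching rule are assumptions for illustration. The exact definition used for the scores reported in this article is the one given in its methods and may differ.

```python
import numpy as np


def instance_f1(gt, pred, iou_threshold=0.5):
    """Object-level F1 between two label images (0 = background)."""
    gt_ids = [i for i in np.unique(gt) if i != 0]
    pred_ids = [i for i in np.unique(pred) if i != 0]
    matched_pred = set()
    true_pos = 0
    for g in gt_ids:
        g_mask = gt == g
        for p in pred_ids:
            if p in matched_pred:
                continue
            p_mask = pred == p
            inter = np.logical_and(g_mask, p_mask).sum()
            union = np.logical_or(g_mask, p_mask).sum()
            # With a threshold of at least 0.5, each object can match at most once.
            if union > 0 and inter / union > iou_threshold:
                matched_pred.add(p)
                true_pos += 1
                break
    false_pos = len(pred_ids) - true_pos
    false_neg = len(gt_ids) - true_pos
    denom = 2 * true_pos + false_pos + false_neg
    return 2 * true_pos / denom if denom else 1.0


# Toy example: one object found exactly, one missed, one spurious detection.
gt = np.zeros((4, 6), dtype=int)
gt[0:2, 0:2] = 1
gt[2:4, 3:6] = 2
pred = np.zeros((4, 6), dtype=int)
pred[0:2, 0:2] = 1
pred[0:2, 4:6] = 2
score = instance_f1(gt, pred)
```

In the toy example there is one true positive, one false positive, and one false negative, so the score is 2 / (2 + 1 + 1) = 0.5.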

Furthermore, it is significant to note that training with a structurally strong FL influences the segmentation quality, even when that FL channel is not used at inference. For example, cell line CL3, which has two structurally strong FLs highlighting the nucleic structure (channels 0 and 2), nonetheless performs very well on segmenting nuclei using only the fusion of channels 1 and 3 when trained using all 4 channels ([…]). The same can be observed for CL4 on cytoplasmic segmentation: excluding channels 0 and 3 during the evaluation yields an F1-score of […] with a model finetuned on all 4 available channels. This is particularly interesting for the segmentation of cell lines containing subsets of the FLs trained for in our model zoo. Future cell lines could benefit from multi-channel models trained on some of their FLs as well as on stronger FLs, even if those are absent from the new cell line, which would improve the segmentation quality.
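The idea of reusing zoo models on cell lines that carry only a subset of the training FLs can be sketched as a simple lookup. The snippet below picks the model whose training FLs overlap most with the FLs available in a new cell line. The selection rule, the function name `select_model`, and all model and FL names are hypothetical placeholders, not part of the published workflow.

```python
def select_model(zoo, cell_line_fls):
    """Pick the zoo entry whose training FLs cover the most available FLs.

    zoo:           dict mapping a model name to the set of FLs it was trained on
    cell_line_fls: set of FLs present in the new cell line
    Returns (model name, FLs usable at inference), or (None, empty set).
    """
    best_name, best_shared = None, frozenset()
    for name, trained_fls in sorted(zoo.items()):
        shared = frozenset(trained_fls) & frozenset(cell_line_fls)
        if len(shared) > len(best_shared):
            best_name, best_shared = name, shared
    return best_name, set(best_shared)


# Hypothetical zoo: model names and FL names are placeholders.
zoo = {
    "model_a": {"nucleus_marker", "er_marker", "mito_marker"},
    "model_b": {"er_marker", "actin_marker"},
}
name, usable = select_model(zoo, {"er_marker", "mito_marker"})
```

Here `model_a` is selected even though its structurally strong `nucleus_marker` is absent from the new cell line, mirroring the case where a model trained with a strong FL is evaluated only on its weaker channels.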

However, one must select nonstructural FLs carefully when applying the proposed workflow. Indeed, some FLs, by nature or under the influence of a compound introduced in the assay regimen, may be too unreliable for the segmentation task. This is exemplified in our results with channel 1 of CL2 on cytoplasmic segmentation. That FL, which is dynamic in the endoplasmic reticulum, carries almost no information relevant to the cytoplasmic segmentation by itself, as can be seen in Figure 1. Although models finetuned using this channel benefit from its presence (reaching a […] F1-score on evaluation of the fusion of channels 0 and 2 when trained using all four channels), models evaluated on it, or trained with it over-represented, perform poorly. If a cell line were constituted only of FLs with similarly unreliable structural information, our workflow would not be able to segment cells. Like any segmentation method, it cannot segment organelles out of thin air, but it can leverage structurally unreliable FLs together (with structurally medium or strong FLs when they are available) to reach the quality of segmentation one would get by including functionally structural FLs in the cell lines.

For instance, CL3 is only constituted of structurally weak FLs with regard to cytoplasmic segmentation, which explains the low F1-score displayed in Figure 3c. However, as can be seen in Figure 5c, our method nevertheless outperforms Vanilla Cellpose in terms of recall and detection of each individual cell. In this specific instance, with highly dynamic and unreliable FLs, our method generates segmentation masks resembling a Voronoi diagram. While not optimal in its boundary detection, this translates to a better segmentation than Vanilla Cellpose, especially in the context of single-cell phenotypic analysis.
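To visualize what a Voronoi-like partition means here, the sketch below assigns every pixel to its nearest seed point, with seeds standing in for detected cell centers. This is only an illustration of the geometry being described. It is not how the proposed method produces its masks, and the function name `voronoi_labels` and the seed coordinates are hypothetical.

```python
import numpy as np


def voronoi_labels(shape, seeds):
    """Label each pixel with the 1-based index of its nearest seed."""
    rows, cols = np.indices(shape)
    seeds = np.asarray(seeds, dtype=float)  # (N, 2), given as (row, col)
    # Squared distance from every pixel to every seed: shape (N, H, W).
    d2 = (rows[None] - seeds[:, 0, None, None]) ** 2 \
        + (cols[None] - seeds[:, 1, None, None]) ** 2
    return np.argmin(d2, axis=0) + 1


# Two hypothetical cell centers on a small grid.
labels = voronoi_labels((4, 6), [(1, 1), (2, 4)])
```

Such a partition separates and counts every cell correctly even though its boundaries follow distance to the centers rather than the true cell outlines, which is consistent with good recall but a modest boundary-sensitive F1-score.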

Finally, our approach inherits the limitations of Cellpose. Although it works as an “expertization” of generalist models similarly to Ref. (9), it does not handle occluded or overlapping cells. It is also susceptible to merging or splitting of individual cell instances, which could only be corrected with a robust post-processing step. It is further limited in terms of building cell objects because, like Cellpose, it detects nuclei and cytoplasm independently, thus yielding standalone cytoplasm and nuclei. In theory, these limitations can be overcome by using a backbone other than Cellpose that handles such issues. It is indeed possible to apply our finetuning approach to different architectures, although changes to the fusion method would be required.

6. Conclusion

In this work, we have designed, built, and tested a workflow to train and infer segmentations of nuclei and cytoplasm on images without functionally structural FLs and for a variety of cell line/FL configurations. We demonstrated that our approach could be used for a range of assays while freeing up, for experiment-specific FLs, the two fluorescence channels that structural FLs would otherwise occupy. We have shown that satisfactory segmentation performance can be achieved and replicated on various assays by leveraging multiple nonstructural FLs. The advantage of our method is that the freed fluorescence channels can then be used to monitor additional functionally relevant FLs. We thus obtain a richer description of each cell’s response to the perturbation, while limiting the cost of the assays needed to obtain the same biological information.

Our method is easily adaptable to fit a generalist image processing pipeline and be applied on various assays by aggregating segmentation models trained on multiple cell lines and FLs into a model zoo. Such a zoo of fine-tuned models will greatly support microscopy-based cellular assays and HCS.

Acknowledgments

We sincerely thank Amritansh Sharma for his detailed and insightful feedback on the segmentation workflow. In addition, we thank Oliver Lai, Claire Repellin, and Amritansh Sharma for their help annotating images. Finally, we also thank Catherine Lacayo, Claire Repellin, Oliver Lai, and Laura Savy for developing the cell biology tools and conducting the assays that provide the data used in this work.

Authorship contribution

Conceptualization: D.Z., A.F., T.W.; Data curation and visualization: D.Z.; Formal analysis: D.Z., A.F., T.W.; Funding acquisition: A.F., S.A.R., T.W., M.J.C.L.; Methodology: D.Z., A.F.; Software: D.Z., A.F.; Supervision: A.F., T.W.; Writing—original draft and reviewing finalized version: All authors.

Competing interest

The authors declare the following competing interests. All authors are shareholders of Cairn Biosciences and A.F., S.A.R., and M.J.C.L. were full-time employees of Cairn Biosciences, Inc at the time of the study.

Funding statement

This work was supported by grants from Région Ile-de-France through the DIM ELICIT program. The data analysis was carried out using HPC resources from GENCI-IDRIS (Grant 2022—AD011011156R2).

Data availability statement

The data used in this work can be found at http://doi.org/10.6084/m9.figshare.21702068. The code used in this work is not publicly available as it is implemented as part of commercial research that is proprietary to Cairn Biosciences. To ease reproducibility, a pseudo-code version of this implementation is available in the Supplementary Material.

Supplementary material

For supplementary material accompanying this paper visit https://doi.org/10.1017/S2633903X23000168.

References

- 1. Chandrasekaran SN, Ceulemans H, Boyd JD & Carpenter AE (2020) Image-based profiling for drug discovery: due for a machine-learning upgrade? Nat Rev Drug Dis 20(2), 145–159. doi: 10.1038/s41573-020-00117-w.

- 2. Schmidt U, Weigert M, Broaddus C & Myers G (2018) Cell detection with star-convex polygons. In Medical Image Computing and Computer Assisted Intervention – MICCAI 2018, pp. 265–273. Cham: Springer International Publishing. doi: 10.1007/978-3-030-00934-2_30.

- 3. Hollandi R, Szkalisity A, Toth T, et al. (2020) nucleAIzer: a parameter-free deep learning framework for nucleus segmentation using image style transfer. Cell Syst 10(5), 453–458.e6. doi: 10.1016/j.cels.2020.04.003.

- 4. Stringer C, Wang T, Michaelos M & Pachitariu M (2020) Cellpose: a generalist algorithm for cellular segmentation. Nat Method 18(1), 100–106. doi: 10.1038/s41592-020-01018-x.

- 5. Ronneberger O, Fischer P & Brox T (2015) U-net: convolutional networks for biomedical image segmentation. In International Conference on Medical Image Computing and Computer-Assisted Intervention, pp. 234–241. Cham: Springer.

- 6. He K, Gkioxari G, Dollár P & Girshick R (2017) Mask R-CNN. In Proceedings of the IEEE International Conference on Computer Vision, pp. 2961–2969. Venice, Italy: IEEE.

- 7. Pachitariu M (2020) Cellpose training dataset [Online]. doi: 10.25378/JANELIA.13270466.V1. https://janelia.figshare.com/articles/dataset/Cellpose_training_dataset/13270466/1. (2023).

- 8. Pawley JB, editor (2006) Handbook of Biological Confocal Microscopy. New York: Springer US. doi: 10.1007/978-0-387-45524-2.

- 9. Stringer C & Pachitariu M (2022) Cellpose 2.0: How to train your own model. bioRxiv. doi: 10.1101/2022.04.01.486764.

- 10. U2OS osteosarcoma cell line (ATCC® HTB-96™). https://www.atcc.org/products/htb-96 [Online]. (2023).

- 11. Human malignant melanoma A375 cells (ATCC® CRL-1619™). https://www.atcc.org/products/crl-1619 [Online]. (2023).

- 12. Yao Y, Rosasco L & Caponnetto A (2007) On early stopping in gradient descent learning. Construct Approx 26(2), 289–315. doi: 10.1007/s00365-006-0663-2.

- 13. Boulanger J, Kervrann C, Bouthemy P, Elbau P, Sibarita J-B & Salamero J (2010) Patch-based nonlocal functional for denoising fluorescence microscopy image sequences. IEEE Trans Med Imag 29(2), 442–454. doi: 10.1109/tmi.2009.2033991.

- 14. Zhang Y, Zhu Y, Nichols E, et al. (2019) A Poisson–Gaussian denoising dataset with real fluorescence microscopy images. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pp. 11710–11718. Long Beach, CA: IEEE.

- 15. Laine RF, Jacquemet G & Krull A (2021) Imaging in focus: an introduction to denoising bioimages in the era of deep learning. Int J Biochem Cell Biol 140, 106077.

- 16. Tofighi M, Kose K & Cetin AE (2015) Denoising images corrupted by impulsive noise using projections onto the epigraph set of the total variation function (PES-TV). Signal Image Video Process 9(S1), 41–48. doi: 10.1007/s11760-015-0827-8.

- 17. Morelli R, Clissa L, Amici R, et al. (2021) Automating cell counting in fluorescent microscopy through deep learning with c-ResUnet. Sci Rep 11(1), 22920. doi: 10.1038/s41598-021-01929-5.

- 18. Langerak TR, van der Heide UA, Kotte NTJA, Viergever MA, van Vulpen M & Pluim JPW (2010) Label fusion in atlas-based segmentation using a selective and iterative method for performance level estimation (SIMPLE). IEEE Trans Med Imag 29(12), 2000–2008. doi: 10.1109/tmi.2010.2057442.

- 19. Asman AJ, Dagley AS & Landman BA (2014) Statistical label fusion with hierarchical performance models. In SPIE Proceedings [Ourselin S and Styner MA, editors]. Washington, DC: SPIE. doi: 10.1117/12.2043182.

- 20. Rohlfing T & Maurer CR (2005) Multi-classifier framework for atlas-based image segmentation. Pattern Recognit Lett 26(13), 2070–2079. doi: 10.1016/j.patrec.2005.03.017.

- 21. Huo J, Wang G, Wu QMJ & Thangarajah A (2015) Label fusion for multi-atlas segmentation based on majority voting. In Lecture Notes in Computer Science, pp. 100–106. Cham: Springer International Publishing. doi: 10.1007/978-3-319-20801-5_11.

- 22. Jaccard P (1912) The distribution of the flora in the alpine zone. New Phytol 11(2), 37–50. doi: 10.1111/j.1469-8137.1912.tb05611.x.

- 23. Kumar N, Verma R, Sharma S, Bhargava S, Vahadane A & Sethi A (2017) A dataset and a technique for generalized nuclear segmentation for computational pathology. IEEE Trans Med Imag 36(7), 1550–1560. doi: 10.1109/TMI.2017.2677499.
