Abstract

Purposeful sampling is widely used in qualitative research for the identification and selection of information-rich cases related to the phenomenon of interest. Although there are several different purposeful sampling strategies, criterion sampling appears to be used most commonly in implementation research. However, combining sampling strategies may be more appropriate to the aims of implementation research and more consistent with recent developments in quantitative methods. This paper reviews the principles and practice of purposeful sampling in implementation research, summarizes types and categories of purposeful sampling strategies and provides a set of recommendations for use of single strategy or multistage strategy designs, particularly for state implementation research.

Keywords: mental health services, children and adolescents, mixed methods, qualitative methods implementation, state systems

Recently there have been several calls for the use of mixed method designs in implementation research (Proctor et al., 2009; Landsverk et al., 2012; Palinkas et al., 2011; Aarons et al., 2012). This has been precipitated by the realization that the challenges of implementing evidence-based and other innovative practices, treatments, interventions and programs are sufficiently complex that a single methodological approach is often inadequate. This is particularly true of efforts to implement evidence-based practices (EBPs) in statewide systems where relationships among key stakeholders extend both vertically (from state to local organizations) and horizontally (between organizations located in different parts of a state). As in other areas of research, mixed method designs are viewed as preferable in implementation research because they provide a better understanding of research issues than either qualitative or quantitative approaches alone (Palinkas et al., 2011). In such designs, qualitative methods are used to explore and obtain depth of understanding as to the reasons for success or failure to implement evidence-based practice or to identify strategies for facilitating implementation while quantitative methods are used to test and confirm hypotheses based on an existing conceptual model and obtain breadth of understanding of predictors of successful implementation (Teddlie & Tashakkori, 2003).

Sampling strategies for quantitative methods used in mixed methods designs in implementation research are generally well-established and based on probability theory. In contrast, sampling strategies for qualitative methods in implementation studies are less explicit and often less evident. Although the samples for qualitative inquiry are generally assumed to be selected purposefully to yield cases that are “information rich” (Patton, 2001), there are no clear guidelines for conducting purposeful sampling in mixed methods implementation studies, particularly when studies have more than one specific objective. Moreover, it is not entirely clear what forms of purposeful sampling are most appropriate for the challenges of using both quantitative and qualitative methods in the mixed methods designs used in implementation research. Such a consideration requires a determination of the objectives of each methodology and the potential impact of selecting one strategy to achieve one objective on the selection of other strategies to achieve additional objectives.

In this paper, we present different approaches to the use of purposeful sampling strategies in implementation research. We begin with a review of the principles and practice of purposeful sampling in implementation research, a summary of the types and categories of purposeful sampling strategies, and a set of recommendations for matching the appropriate single strategy or multistage strategy to study aims and quantitative method designs.

Principles of Purposeful Sampling

Purposeful sampling is a technique widely used in qualitative research for the identification and selection of information-rich cases for the most effective use of limited resources (Patton, 2002). This involves identifying and selecting individuals or groups of individuals that are especially knowledgeable about or experienced with a phenomenon of interest (Creswell & Plano Clark, 2011). In addition to knowledge and experience, Bernard (2002) and Spradley (1979) note the importance of availability and willingness to participate, and the ability to communicate experiences and opinions in an articulate, expressive, and reflective manner. In contrast, probabilistic or random sampling is used to ensure the generalizability of findings by minimizing the potential for bias in selection and to control for the potential influence of known and unknown confounders.

As Morse and Niehaus (2009) observe, whether the methodology employed is quantitative or qualitative, sampling methods are intended to maximize efficiency and validity. Nevertheless, sampling must be consistent with the aims and assumptions inherent in the use of either method. Qualitative methods are, for the most part, intended to achieve depth of understanding while quantitative methods are intended to achieve breadth of understanding (Patton, 2002). Qualitative methods place primary emphasis on saturation (i.e., obtaining a comprehensive understanding by continuing to sample until no new substantive information is acquired) (Miles & Huberman, 1994). Quantitative methods place primary emphasis on generalizability (i.e., ensuring that the knowledge gained is representative of the population from which the sample was drawn). Each methodology, in turn, has different expectations and standards for determining the number of participants required to achieve its aims. Quantitative methods rely on established formulae for avoiding Type I and Type II errors, while qualitative methods often rely on precedents for determining number of participants based on type of analysis proposed (e.g., 3-6 participants interviewed multiple times in a phenomenological study versus 20-30 participants interviewed once or twice in a grounded theory study), level of detail required, and emphasis on homogeneity (requiring smaller samples) versus heterogeneity (requiring larger samples) (Guest, Bunce & Johnson, 2006; Morse & Niehaus, 2009; Padgett, 2008).

Types of purposeful sampling designs

There exist numerous purposeful sampling designs. Examples include the selection of extreme or deviant (outlier) cases for the purpose of learning from unusual manifestations of phenomena of interest; the selection of cases with maximum variation for the purpose of documenting unique or diverse variations that have emerged in adapting to different conditions, and to identify important common patterns that cut across variations; and the selection of homogeneous cases for the purpose of reducing variation, simplifying analysis, and facilitating group interviewing. A list of some of these strategies and examples of their use in implementation research is provided in Table 1.

Table 1.

Purposeful sampling strategies in implementation research

| Strategy | Objective | Example | Considerations |
|---|---|---|---|
| Emphasis on similarity | | | |
| Criterion-i | To identify and select all cases that meet some predetermined criterion of importance | Selection of consultant trainers and program leaders at study sites to identify facilitators and barriers to EBP implementation (Marshall et al., 2008). | Can be used to identify cases from standardized questionnaires for in-depth follow-up (Patton, 2002) |
| Criterion-e | To identify and select all cases that exceed or fall outside a specified criterion | Selection of directors of agencies that failed to move to the next stage of implementation within expected period of time. | |
| Typical case | To illustrate or highlight what is typical, normal or average | A child undergoing treatment for trauma (Hoagwood et al., 2007) | The purpose is to describe and illustrate what is typical to those unfamiliar with the setting, not to make generalized statements about the experiences of all participants (Patton, 2002). |
| Homogeneity | To describe a particular subgroup in depth, to reduce variation, simplify analysis and facilitate group interviewing | Selecting Latino/a directors of mental health services agencies to discuss challenges of implementing evidence-based treatments for mental health problems with Latino/a clients. | Often used for selecting focus group participants |
| Snowball | To identify cases of interest from sampling people who know people that generally have similar characteristics who, in turn know people, also with similar characteristics. | Asking recruited program managers to identify clinicians, administrative support staff, and consumers for project recruitment (Green & Aarons, 2011). | Begins by asking key informants or well-situated people “Who knows a lot about…” (Patton, 2001) |
| Extreme or deviant case | To illuminate both the unusual and the typical | Selecting clinicians from state agencies or mental health with best and worst performance records or implementation outcomes | Extreme successes or failures may be discredited as being too extreme or unusual to yield useful information, leading one to select cases that manifest sufficient intensity to illuminate the nature of success or failure, but not in the extreme. |
| Emphasis on variation | | | |
| Intensity | Same objective as extreme case sampling but with less emphasis on extremes | Clinicians providing usual care and clinicians who dropped out of a study prior to consent to contrast with clinicians who provided the intervention under investigation. (Kramer & Burns, 2008) | Requires the researcher to do some exploratory work to determine the nature of the variation of the situation under study, then sampling intense examples of the phenomenon of interest. |
| Maximum variation | Important shared patterns that cut across cases and derived their significance from having emerged out of heterogeneity. | Sampling mental health services programs in urban and rural areas in different parts of the state (north, central, south) to capture maximum variation in location (Bachman et al., 2009). | Can be used to document unique or diverse variations that have emerged in adapting to different conditions (Patton, 2002). |
| Critical case | To permit logical generalization and maximum application of information because if it is true in this one case, it’s likely to be true of all other cases | Investigation of a group of agencies that decided to stop using an evidence-based practice to identify reasons for lack of EBP sustainment. | Depends on recognition of key dimensions that make for a critical case. Particularly important when resources may limit the study of only one site (program, community, population) (Patton, 2002) |
| Theory-based | To find manifestations of a theoretical construct so as to elaborate and examine the construct and its variations | Sampling therapists based on academic training to understand the impact of CBT training versus psychodynamic training in graduate school of acceptance of EBPs | Sample on the basis of potential manifestation or representation of important theoretical constructs. Sampling on the basis of emerging concepts with the aim being to explore the dimensional range or varied conditions along which the properties of concepts vary. |
| Confirming and disconfirming case | To confirm the importance and meaning of possible patterns and checking out the viability of emergent findings with new data and additional cases. | Once trends are identified, deliberately seeking examples that are counter to the trend. | Usually employed in later phases of data collection. Confirmatory cases are additional examples that fit already emergent patterns to add richness, depth and credibility. Disconfirming cases are a source of rival interpretations as well as a means for placing boundaries around confirmed findings |
| Stratified purposeful | To capture major variations rather than to identify a common core, although the latter may emerge in the analysis | Combining typical case sampling with maximum variation sampling by taking a stratified purposeful sample of above average, average, and below average cases of health care expenditures for a particular problem. | This represents less than the full maximum variation sample, but more than simple typical case sampling. |
| Purposeful random | To increase the credibility of results | Selecting for interviews a random sample of providers to describe experiences with EBP implementation. | Not as representative of the population as a probability random sample. |
| Nonspecific emphasis | | | |
| Opportunistic or emergent | To take advantage of circumstances, events and opportunities for additional data collection as they arise. | Usually employed when it is impossible to identify sample or the population from which a sample should be drawn at the outset of a study. Used primarily in conducting ethnographic fieldwork | |
| Convenience | To collect information from participants who are easily accessible to the researcher | Recruiting providers attending a staff meeting for study participation. | Although commonly used, it is neither purposeful nor strategic |

Embedded in each strategy is the ability to compare and contrast, to identify similarities and differences in the phenomenon of interest. Nevertheless, some of these strategies (e.g., maximum variation sampling, extreme case sampling, intensity sampling, and purposeful random sampling) are used to identify and expand the range of variation or differences, similar to the use of quantitative measures to describe the variability or dispersion of values for a particular variable or variables, while other strategies (e.g., homogeneous sampling, typical case sampling, criterion sampling, and snowball sampling) are used to narrow the range of variation and focus on similarities. The latter are similar to the use of quantitative central tendency measures (e.g., mean, median, and mode). Moreover, certain strategies, like stratified purposeful sampling or opportunistic or emergent sampling, are designed to achieve both goals. As Patton (2002, p. 240) explains, “the purpose of a stratified purposeful sample is to capture major variations rather than to identify a common core, although the latter may also emerge in the analysis. Each of the strata would constitute a fairly homogeneous sample.”
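The contrast between strategies that narrow and strategies that expand the range of variation can be sketched in a few lines of Python. The example below is only an illustration under stated assumptions: the `Case` record, its fields, the five sample cases, and the function names are all hypothetical and do not come from any of the studies cited here. Criterion-i and typical case sampling are shown as narrowing strategies, and maximum variation sampling as an expanding one.

```python
from dataclasses import dataclass
from statistics import median


@dataclass(frozen=True)
class Case:
    """Hypothetical record for one potential participant."""
    case_id: str
    role: str      # e.g. "clinician" or "director"
    region: str    # e.g. "north", "central", "south"
    score: float   # assumed implementation-outcome score


def criterion_i(cases, role):
    """Narrowing: keep every case that meets a predetermined criterion (here, a role)."""
    return [c for c in cases if c.role == role]


def typical_cases(cases, k):
    """Narrowing: keep the k cases whose score lies closest to the median."""
    mid = median(c.score for c in cases)
    return sorted(cases, key=lambda c: abs(c.score - mid))[:k]


def maximum_variation(cases, key):
    """Expanding: keep the first case seen for each distinct value of `key`."""
    picked = {}
    for c in cases:
        picked.setdefault(key(c), c)
    return list(picked.values())


# Illustrative data only.
cases = [
    Case("A", "clinician", "north", 2.0),
    Case("B", "clinician", "central", 5.0),
    Case("C", "director", "south", 9.0),
    Case("D", "clinician", "south", 5.5),
    Case("E", "director", "north", 4.5),
]

directors = criterion_i(cases, "director")
typical = typical_cases(cases, 2)
by_region = maximum_variation(cases, key=lambda c: c.region)
```

In this sketch the criterion and typical case samples cluster around a shared role or a central score, much like a measure of central tendency, whereas the maximum variation sample deliberately spreads across every region, much like a measure of dispersion.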

Challenges to use of purposeful sampling

Despite its wide use, there are numerous challenges in identifying and applying the appropriate purposeful sampling strategy in any study. First, the range of variation in a sample from which a purposive sample is to be taken is often not really known at the outset of a study. To set as the goal the sampling of information-rich informants that cover the range of variation assumes one knows that range of variation. Consequently, an iterative approach of sampling and re-sampling to draw an appropriate sample is usually recommended to make certain that theoretical saturation occurs (Miles & Huberman, 1994). However, that saturation may be determined a priori on the basis of an existing theory or conceptual framework, or it may emerge from the data themselves, as in a grounded theory approach (Glaser & Strauss, 1967). Second, a not insignificant number of researchers in the qualitative methods field resist or refuse systematic sampling of any kind and reject the limiting nature of such realist, systematic, or positivist approaches. This includes critics of interventions and “bottom up” case studies and critiques. However, even those who equate purposeful sampling with systematic sampling must offer a rationale for selecting study participants that is linked with the aims of the investigation (i.e., why recruit these individuals for this particular study? What qualifies them to address the aims of the study?). While systematic sampling may be associated with a post-positivist tradition of qualitative data collection and analysis, such sampling is not inherently limited to such analyses and the need for such sampling is not inherently limited to post-positivist qualitative approaches (Patton, 2002).
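The iterative approach of sampling and re-sampling until saturation can be sketched as a simple stopping rule. The following Python example is a minimal illustration, not a procedure taken from the cited sources: the function name, the batch size, the `patience` parameter, and the participant data are all assumptions made for the sake of the example. Sampling proceeds in batches and stops once a batch yields no codes that have not already been observed.

```python
def sample_until_saturated(candidates, codes_for, batch_size=3, patience=1):
    """Sample in batches until `patience` consecutive batches add no new codes.

    candidates: ordered list of potential participants
    codes_for:  function returning the set of codes (themes) one participant yields
    Returns (participants sampled, set of codes observed).
    """
    seen, sampled, quiet = set(), [], 0
    for start in range(0, len(candidates), batch_size):
        batch = candidates[start:start + batch_size]
        # Codes in this batch that were not observed in any earlier batch.
        new_codes = set().union(*(codes_for(p) for p in batch)) - seen
        sampled.extend(batch)
        seen |= new_codes
        quiet = 0 if new_codes else quiet + 1
        if quiet >= patience:
            break
    return sampled, seen


# Illustrative data only: the themes each hypothetical participant would raise.
themes = {
    "p1": {"cost"},
    "p2": {"training"},
    "p3": {"cost", "leadership"},
    "p4": {"training"},
    "p5": {"cost"},
    "p6": {"leadership"},
    "p7": {"turnover"},
}

sampled, observed = sample_until_saturated(list(themes), themes.get)
```

With these assumed data the rule stops after six participants and never reaches the seventh, whose "turnover" theme goes unobserved. This mirrors the challenge described above: declaring saturation presumes that the range of variation has already been covered, which cannot be known with certainty at the outset.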

Purposeful Sampling in Implementation Research

Characteristics of Implementation Research

In implementation research, quantitative and qualitative methods often play important roles, either simultaneously or sequentially, for the purpose of answering the same question through convergence of results from different sources, answering related questions in a complementary fashion, using one set of methods to expand or explain the results obtained from use of the other set of methods, using one set of methods to develop questionnaires or conceptual models that inform the use of the other set, and using one set of methods to identify the sample for analysis using the other set of methods (Palinkas et al., 2011). A review of mixed method designs in implementation research conducted by Palinkas and colleagues (2011) revealed seven different sequential and simultaneous structural arrangements, five different functions of mixed methods, and three different ways of linking quantitative and qualitative data together. However, this review did not consider the sampling strategies involved in the types of quantitative and qualitative methods common to implementation research, nor did it consider the consequences of the sampling strategy selected for one method or set of methods on the choice of sampling strategy for the other method or set of methods. For instance, one of the most significant challenges to sampling in sequential mixed method designs lies in the limitations the initial method may place on sampling for the subsequent method. As Morse and Niehaus (2009) observe, when the initial method is qualitative, the sample selected may be too small and lack randomization necessary to fulfill the assumptions for a subsequent quantitative analysis. On the other hand, when the initial method is quantitative, the sample selected may be too large for each individual to be included in qualitative inquiry and lack purposeful selection to reduce the sample size to one more appropriate for qualitative research.
The fact that potential participants were recruited and selected at random does not necessarily make them information rich.

A re-examination of the 22 studies and an additional 6 studies published since 2009 revealed that only 5 studies (Aarons & Palinkas, 2007; Bachman et al., 2009; Palinkas et al., 2011; Palinkas et al., 2012; Slade et al., 2003) made a specific reference to purposeful sampling. An additional three studies (Henke et al., 2008; Proctor et al., 2007; Swain et al., 2010) did not make explicit reference to purposeful sampling but did provide a rationale for sample selection. The remaining 20 studies provided no description of the sampling strategy used to identify participants for qualitative data collection and analysis; however, a rationale could be inferred based on a description of who was recruited and selected for participation. Of the 28 studies, 3 used more than one sampling strategy. Twenty-one of the 28 studies (75%) used some form of criterion sampling. In most instances, the criterion used is related to the individual’s role, either in the research project (i.e., trainer, team leader), or the agency (program director, clinical supervisor, clinician); in other words, criterion of inclusion in a certain category (criterion-i), in contrast to cases that are external to a specific criterion (criterion-e). For instance, in a series of studies based on the National Implementing Evidence-Based Practices Project, data collection included semi-structured interviews with consultant trainers and program leaders at each study site (Brunette et al., 2008; Marshall et al., 2008; Marty et al., 2007; Rapp et al., 2010; Woltmann et al., 2008). Six studies used some form of maximum variation sampling to ensure representativeness and diversity of organizations and individual practitioners. Two studies used intensity sampling to make contrasts.
Aarons and Palinkas (2007), for example, purposefully selected 15 child welfare case managers representing those having the most positive and those having the most negative views of SafeCare, an evidence-based prevention intervention, based on results of a web-based quantitative survey asking about the perceived value and usefulness of SafeCare. Kramer and Burns (2008) recruited and interviewed clinicians providing usual care and clinicians who dropped out of a study prior to consent to contrast with clinicians who provided the intervention under investigation. One study (Hoagwood et al., 2007) used a typical case approach to identify participants for a qualitative assessment of the challenges faced in implementing a trauma-focused intervention for youth. One study (Green & Aarons, 2011) used a combined snowball sampling/criterion-i strategy by asking recruited program managers to identify clinicians, administrative support staff, and consumers for project recruitment. County mental health directors, agency directors, and program managers were recruited to represent the policy interests of implementation while clinicians, administrative support staff and consumers were recruited to represent the direct practice perspectives of EBP implementation.
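Selecting a qualitative sample from the two ends of a quantitative survey distribution, as in the intensity sampling example above, can be sketched as follows. This is a hypothetical illustration only: the respondent identifiers, the scores, the scoring direction, and the number drawn from each end are assumptions, and the sketch does not reproduce the procedure or data of any cited study.

```python
def select_contrasting_views(survey_scores, n_positive, n_negative):
    """Pick the respondents with the highest and lowest survey scores.

    survey_scores: dict mapping respondent id -> mean rating
                   (assumed here: higher means a more positive view)
    Returns a dict with the most negative and most positive respondents,
    each ordered from most to least extreme.
    """
    if n_positive + n_negative > len(survey_scores):
        raise ValueError("requested more respondents than were surveyed")
    ranked = sorted(survey_scores, key=survey_scores.get)  # ascending by score
    most_negative = ranked[:n_negative]
    most_positive = ranked[len(ranked) - n_positive:][::-1]
    return {"most_negative": most_negative, "most_positive": most_positive}


# Illustrative data only.
scores = {
    "cm01": 4.6,
    "cm02": 1.8,
    "cm03": 3.1,
    "cm04": 4.9,
    "cm05": 2.2,
    "cm06": 3.4,
}

selected = select_contrasting_views(scores, n_positive=2, n_negative=2)
```

The design choice here is that the quantitative results determine who is invited for interviews, so the qualitative sample is purposeful with respect to the survey findings rather than drawn at random from the respondents.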

Table 2 below provides a description of the use of different purposeful sampling strategies in mixed methods implementation studies. Criterion-i sampling was most frequently used in mixed methods implementation studies that employed a simultaneous design where the qualitative method was secondary to the quantitative method or studies that employed a simultaneous structure where the qualitative and quantitative methods were assigned equal priority. These mixed method designs were used to complement the depth of understanding afforded by the qualitative methods with the breadth of understanding afforded by the quantitative methods (n = 13), to explain or elaborate upon the findings of one set of methods (usually quantitative) with the findings from the other set of methods (n = 10), or to seek convergence through triangulation of results or quantifying qualitative data (n = 8). The process of mixing methods in the large majority (n = 18) of these studies involved embedding the qualitative study within the larger quantitative study. In one study (Goia & Dziadosz, 2008), criterion sampling was used in a simultaneous design where quantitative and qualitative data were merged together in a complementary fashion, and in two studies (Aarons et al., 2012; Zazelli et al., 2008), quantitative and qualitative data were connected together, one in sequential design for the purpose of developing a conceptual model (Zazelli et al., 2008), and one in a simultaneous design for the purpose of complementing one another (Aarons et al., 2012). Three of the six studies that used maximum variation sampling used a simultaneous structure with quantitative methods taking priority over qualitative methods and a process of embedding the qualitative methods in a larger quantitative study (Henke et al., 2008; Palinkas et al., 2010; Slade et al., 2008). 
Two of the six studies used maximum variation sampling in a sequential design (Aarons et al., 2009; Zazelli et al., 2008) and one in a simultaneous design (Henke et al., 2010) for the purpose of development, and three used it in a simultaneous design for complementarity (Bachman et al., 2009; Henke et al., 2008; Palinkas, Ell, Hansen, Cabassa, & Wells, 2011). The two studies relying upon intensity sampling used a simultaneous structure for the purpose of either convergence or expansion, and both studies involved a qualitative study embedded in a larger quantitative study (Aarons & Palinkas, 2007; Kramer & Burns, 2008). The single typical case study involved a simultaneous design where the qualitative study was embedded in a larger quantitative study for the purpose of complementarity (Hoagwood et al., 2007). The snowball/criterion study involved a sequential design where the qualitative study was merged into the quantitative data for the purpose of convergence and conceptual model development (Green & Aarons, 2011). Although not used in any of the 28 implementation studies examined here, another common sequential sampling strategy is using criterion sampling of the larger quantitative sample to produce a second-stage qualitative sample in a manner similar to maximum variation sampling, except that the former narrows the range of variation while the latter expands the range.

Table 2.

Purposeful sampling strategies and mixed method designs in implementation research

| Sampling strategy | Structure | Design | Function |
|---|---|---|---|
| Single stage sampling (n = 22) | | | |
| Criterion (n = 18) | Simultaneous (n = 17); Sequential (n = 6) | Merged (n = 9); Connected (n = 9); Embedded (n = 14) | Convergence (n = 6); Complementarity (n = 12); Expansion (n = 10); Development (n = 3); Sampling (n = 4) |
| Maximum variation (n = 4) | Simultaneous (n = 3); Sequential (n = 1) | Merged (n = 1); Connected (n = 1); Embedded (n = 2) | Convergence (n = 1); Complementarity (n = 2); Expansion (n = 1); Development (n = 2) |
| Intensity (n = 1) | Simultaneous; Sequential | Merged; Connected; Embedded | Convergence; Complementarity; Expansion; Development |
| Typical case study (n = 1) | Simultaneous | Embedded | Complementarity |
| Multistage sampling (n = 4) | | | |
| Criterion/maximum variation (n = 2) | Simultaneous; Sequential | Embedded; Connected | Complementarity; Development |
| Criterion/intensity (n = 1) | Simultaneous | Embedded | Convergence; Complementarity; Expansion |
| Criterion/snowball (n = 1) | Sequential | Connected | Convergence; Development |

Criterion-i sampling as a purposeful sampling strategy shares many characteristics with random probability sampling, despite having different aims and different procedures for identifying and selecting potential participants. In both instances, study participants are drawn from agencies, organizations or systems involved in the implementation process. Individuals are selected based on the assumption that they possess knowledge and experience with the phenomenon of interest (i.e., the implementation of an EBP) and thus will be able to provide information that is both detailed (depth) and generalizable (breadth). Participants for a qualitative study, usually service providers, consumers, agency directors, or state policy-makers, are drawn from the larger sample of participants in the quantitative study. They are selected from the larger sample because they meet the same criteria, in this case, playing a specific role in the organization and/or implementation process. To some extent, they are assumed to be “representative” of that role, although implementation studies rarely explain the rationale for selecting only some and not all of the available role representatives (i.e., recruiting 15 providers from an agency for semi-structured interviews out of an available sample of 25 providers). From the perspective of qualitative methodology, participants who meet or exceed a specific criterion or criteria possess intimate (or, at the very least, greater) knowledge of the phenomenon of interest by virtue of their experience, making them information-rich cases.

However, criterion sampling may not be the most appropriate strategy for implementation research because by attempting to capture both breadth and depth of understanding, it may actually be inadequate to the task of accomplishing either. Although qualitative methods are often contrasted with quantitative methods on the basis of depth versus breadth, they actually require elements of both in order to provide a comprehensive understanding of the phenomenon of interest. Ideally, the goal of achieving theoretical saturation by providing as much detail as possible involves selection of individuals or cases that can ensure all aspects of that phenomenon are included in the examination and that any one aspect is thoroughly examined. This goal, therefore, requires an approach that sequentially or simultaneously expands and narrows the field of view, respectively. By selecting only individuals who meet a specific criterion defined on the basis of their role in the implementation process or who have a specific experience (e.g., engaged only in an implementation defined as successful or only in one defined as unsuccessful), one may fail to capture the experiences or activities of other groups playing other roles in the process. For instance, a focus only on practitioners may fail to capture the insights, experiences, and activities of consumers, family members, agency directors, administrative staff, or state policy leaders in the implementation process, thus limiting the breadth of understanding of that process. On the other hand, selecting participants on the basis of whether they were a practitioner, consumer, director, staff, or any of the above, may fail to identify those with the greatest experience or most knowledgeable or most able to communicate what they know and/or have experienced, thus limiting the depth of understanding of the implementation process.

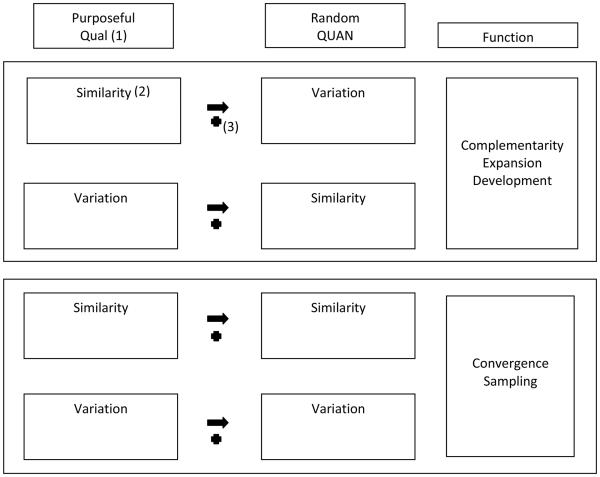

To address the potential limitations of criterion sampling, other purposeful sampling strategies should be considered and possibly adopted in implementation research (Figure 1). For instance, strategies placing greater emphasis on breadth and variation such as maximum variation, extreme case, and confirming and disconfirming case sampling are better suited for an examination of differences, while strategies placing greater emphasis on depth and similarity such as homogeneous, snowball, and typical case sampling are better suited for an examination of commonalities or similarities, even though both types of sampling strategies include a focus on both differences and similarities. Alternatives to criterion sampling may be more appropriate to the specific functions of mixed methods, however. For instance, using qualitative methods for the purpose of complementarity may require that a sampling strategy emphasize similarity if it is to achieve depth of understanding or explore and develop hypotheses that complement a quantitative probability sampling strategy achieving breadth of understanding and testing hypotheses (Kemper et al., 2003). Similarly, mixed methods that address related questions for the purpose of expanding or explaining results or developing new measures or conceptual models may require a purposeful sampling strategy aiming for similarity that complements probability sampling aiming for variation or dispersion. A narrowly focused purposeful sampling strategy for qualitative analysis that “complements” a broader focused probability sample for quantitative analysis may help to achieve a balance between increasing inference quality/trustworthiness (internal validity) and generalizability/transferability (external validity). A single method that focuses only on a broad view may increase external validity at the expense of internal validity (Kemper et al., 2003).
On the other hand, the aim of convergence (answering the same question with either method) may suggest use of a purposeful sampling strategy that aims for breadth that parallels the quantitative probability sampling strategy.

Figure 1.

Purposeful and Random Sampling Strategies for Mixed Method Implementation Studies

- (1) Priority and sequencing of Qualitative (QUAL) and Quantitative (QUAN) can be reversed.
- (2) Refers to emphasis of sampling strategy.
- (3) Refers to sequential structure; refers to simultaneous structure.

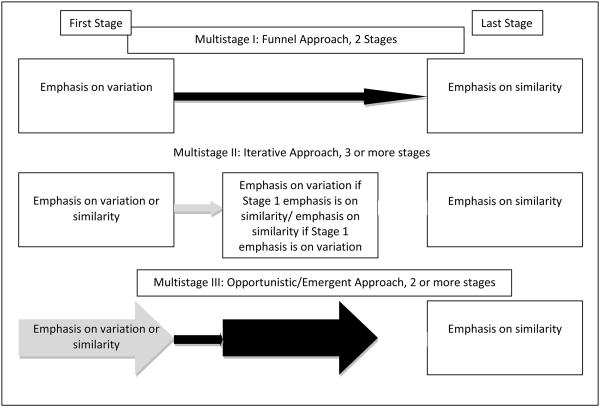

Furthermore, the specific nature of implementation research suggests that a multistage purposeful sampling strategy be used. Three different multistage sampling strategies are illustrated in Figure 1. Several qualitative methodologists recommend sampling for variation (breadth) before sampling for commonalities (depth) (Glaser, 1978; Bernard, 2002) (Multistage I). Also known as a “funnel approach”, this strategy is often recommended when conducting semi-structured interviews (Spradley, 1979) or focus groups (Morgan, 1997). This approach begins with a broad view of the topic and then proceeds to narrow down the conversation to very specific components of the topic. However, as noted earlier, the lack of a clear understanding of the nature of the range may require an iterative approach where each stage of data analysis helps to determine subsequent means of data collection and analysis (Denzin, 1978; Patton, 2001) (Multistage II). Similarly, multistage purposeful sampling designs like opportunistic or emergent sampling allow the option of adding to a sample to take advantage of unforeseen opportunities after data collection has been initiated (Patton, 2001, p. 240) (Multistage III). Multistage I models generally involve two stages, while a Multistage II model requires a minimum of 3 stages, alternating from sampling for variation to sampling for similarity. A Multistage III model begins with sampling for variation and ends with sampling for similarity, but may involve one or more intervening stages of sampling for variation or similarity as the need or opportunity arises.
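The two-stage funnel of a Multistage I model, sampling first for variation and then for similarity, can be sketched in Python. The example is a minimal illustration under stated assumptions: the function names, the `setting` and `years` fields, the threshold used to mark a case as information rich, and the choice of the rural stratum for the second stage are all hypothetical. In practice the focus of the second stage would follow from analysis of the first-stage data.

```python
from collections import defaultdict


def stage_one_variation(cases, stratum_of, per_stratum=1):
    """Stage 1 (breadth): take up to `per_stratum` cases from every stratum."""
    groups = defaultdict(list)
    for case in cases:
        groups[stratum_of(case)].append(case)
    return {s: members[:per_stratum] for s, members in groups.items()}


def stage_two_similarity(cases, stratum_of, focus_stratum, is_information_rich, k):
    """Stage 2 (depth): within one stratum, keep up to k information-rich cases."""
    matches = [
        c for c in cases
        if stratum_of(c) == focus_stratum and is_information_rich(c)
    ]
    return matches[:k]


# Illustrative data only.
providers = [
    {"id": "p1", "setting": "urban", "years": 1},
    {"id": "p2", "setting": "rural", "years": 8},
    {"id": "p3", "setting": "urban", "years": 6},
    {"id": "p4", "setting": "rural", "years": 2},
    {"id": "p5", "setting": "rural", "years": 10},
]


def setting(case):
    return case["setting"]


def experienced(case):
    # Assumed proxy for "information rich": at least five years of experience.
    return case["years"] >= 5


broad = stage_one_variation(providers, setting)
deep = stage_two_similarity(providers, setting, "rural", experienced, k=2)
```

A Multistage II or III model could be approximated by calling these two functions repeatedly, alternating between them as each round of analysis suggests where further variation or further depth is needed.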

Multistage purposeful sampling is also consistent with the use of hybrid designs to simultaneously examine intervention effectiveness and implementation. An extension of the concept of “practical clinical trials” (Tunis, Stryer & Clancy, 2003), effectiveness-implementation hybrid designs provide benefits such as more rapid translational gains in clinical intervention uptake, more effective implementation strategies, and more useful information for researchers and decision makers (Curran et al., 2012). Such designs may give equal priority to the testing of clinical treatments and implementation strategies (Hybrid Type 2) or give priority to the testing of treatment effectiveness (Hybrid Type 1) or implementation strategy (Hybrid Type 3). Curran and colleagues (2012) suggest that evaluation of the intervention’s effectiveness will require or involve use of quantitative measures while evaluation of the implementation process will require or involve use of mixed methods. When conducting a Hybrid Type 1 design (conducting a process evaluation of implementation in the context of a clinical effectiveness trial), the qualitative data could be used to inform the findings of the effectiveness trial. Thus, an effectiveness trial that finds substantial variation might purposefully select participants using a broader strategy like sampling for disconfirming cases to account for the variation. For instance, group randomized trials require knowledge of the contexts and circumstances that are similar and different across sites, both to account for inevitable site differences in interventions and to assist local implementations of an intervention (Bloom & Michalopoulos, 2013; Raudenbush & Liu, 2000). Alternatively, a narrow strategy may be used to account for the lack of variation. In either instance, the choice of a purposeful sampling strategy is determined by the outcomes of the quantitative analysis that is based on a probability sampling strategy.

In Hybrid Type 2 and Type 3 designs where the implementation process is given equal or greater priority than the effectiveness trial, the purposeful sampling strategy must be first and foremost consistent with the aims of the implementation study, which may be to understand variation, central tendencies, or both. In all three instances, the sampling strategy employed for the implementation study may vary based on the priority assigned to that study relative to the effectiveness trial. For instance, purposeful sampling for a Hybrid Type 1 design may give higher priority to variation and comparison to understand the parameters of implementation processes or context as a contribution to an understanding of effectiveness outcomes (i.e., using qualitative data to expand upon or explain the results of the effectiveness trial). In effect, these process measures could be seen as modifiers of innovation/EBP outcome. In contrast, purposeful sampling for a Hybrid Type 3 design may give higher priority to similarity and depth to understand the core features of successful outcomes only.

Finally, multistage sampling strategies may be more consistent with innovations in experimental designs representing alternatives to the classic randomized controlled trial in community-based settings that have greater feasibility, acceptability, and external validity. While RCT designs provide the highest level of evidence, “in many clinical and community settings, and especially in studies with underserved populations and low resource settings, randomization may not be feasible or acceptable” (Glasgow et al., 2005, p. 554). Randomized trials are also “relatively poor in assessing the benefit from complex public health or medical interventions that account for individual preferences for or against certain interventions, differential adherence or attrition, or varying dosage or tailoring of an intervention to individual needs” (Brown et al., 2009, p. 2). Several alternatives to the randomized design have been proposed, such as “interrupted time series,” “multiple baseline across settings” or “regression-discontinuity” designs. Optimal designs represent one such alternative to the classic RCT and are addressed in detail by Duan and colleagues (this issue). Like purposeful sampling, optimal designs are intended to capture information-rich cases, usually identified as individuals most likely to benefit from the experimental intervention. The goal here is not to identify the typical or average patient, but patients who represent one end of the variation, as in an extreme case, intensity sampling, or criterion sampling strategy. Hence, a sampling strategy that begins by sampling for variation at the first stage and then samples for homogeneity within a specific parameter of that variation (i.e., one end or the other of the distribution) at the second stage would seem the best approach for identifying an “optimal” sample for the clinical trial.
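The two-stage logic described above (variation first, then homogeneity at one end of the distribution) can be illustrated with a small sketch. Everything here is an assumption made for illustration: the function names, the use of a single numeric score per case (for example, a hypothetical expected-benefit rating), and the rule of spreading stage-one picks evenly across the ranked cases. In practice, purposeful selection rests on the researcher's judgment and on richer criteria than a single score.

```python
from typing import Dict, List


def sample_for_variation(scores: Dict[str, float], k: int) -> List[str]:
    """Stage 1: pick k cases spread evenly across the ranked range of scores."""
    ranked = sorted(scores, key=lambda case: scores[case])
    if k >= len(ranked):
        return ranked
    if k == 1:
        return [ranked[len(ranked) // 2]]
    # Evenly spaced positions from the lowest-ranked to the highest-ranked case.
    step = (len(ranked) - 1) / (k - 1)
    return [ranked[round(i * step)] for i in range(k)]


def sample_for_homogeneity(
    scores: Dict[str, float], candidates: List[str], k: int, end: str = "high"
) -> List[str]:
    """Stage 2: keep the k candidates nearest one end of the distribution."""
    if end not in ("high", "low"):
        raise ValueError("end must be 'high' or 'low'")
    ranked = sorted(candidates, key=lambda case: scores[case], reverse=(end == "high"))
    return ranked[:k]


if __name__ == "__main__":
    # Hypothetical scores for nine cases labelled A to I.
    scores = {name: float(i) for i, name in enumerate("ABCDEFGHI", start=1)}
    broad = sample_for_variation(scores, 5)                        # breadth across the range
    narrow = sample_for_homogeneity(scores, list(scores), 3, "high")  # depth at one end
    print(broad, narrow)
```

The first stage maps the range of variation; the second stage then concentrates on cases at the end of that range judged most relevant to the trial.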

Another alternative to the classic RCT is the family of adaptive designs proposed by Brown and colleagues (Brown et al., 2006; Brown et al., 2008; Brown et al., 2009). Adaptive designs are a sequence of trials that draw on the results of existing studies to determine the next stage of evaluation research. They use cumulative knowledge of current treatment successes or failures to change qualities of the ongoing trial. An adaptive intervention modifies what an individual subject (or community for a group-based trial) receives in response to his or her preferences or initial responses to an intervention. Consistent with multistage sampling in qualitative research, the design is somewhat iterative in nature in the sense that information gained from analysis of data collected at the first stage influences the nature of the data collected, and the way they are collected, at subsequent stages (Denzin, 1978). Furthermore, many of these adaptive designs may benefit from a multistage purposeful sampling strategy at early phases of the clinical trial to identify the range of variation and core characteristics of study participants. This information can then be used for the purposes of identifying optimal dose of treatment, limiting sample size, randomizing participants into different enrollment procedures, determining who should be eligible for random assignment (as in the optimal design) to maximize treatment adherence and minimize dropout, or identifying incentives and motives that may be used to encourage participation in the trial itself.

Alternatives to the classic RCT design may also be desirable in studies that adopt a community-based participatory research framework (Minkler & Wallerstein, 2003), considered to be an important tool for conducting implementation research (Palinkas & Soydan, 2012). Such frameworks suggest that identification and recruitment of potential study participants will place greater emphasis on the priorities and “local knowledge” of community partners than on the need to sample for variation or uniformity. In this instance, the first stage of sampling may approximate the strategy of sampling politically important cases (Patton, 2002), followed by other sampling strategies intended to maximize variations in stakeholder opinions or experience.

Summary

On the basis of this review, the following recommendations are offered for the use of purposeful sampling in mixed method implementation research. First, many mixed methods studies in health services research and implementation science do not clearly identify or provide a rationale for the sampling procedure for either quantitative or qualitative components of the study (Wisdom et al., 2011), so a primary recommendation is for researchers to clearly describe their sampling strategies and provide the rationale for the strategy.

Second, use of a single stage strategy for purposeful sampling for qualitative portions of a mixed methods implementation study should adhere to the same general principles that govern all forms of sampling, qualitative or quantitative. Kemper and colleagues (2003) identify seven such principles: 1) the sampling strategy should stem logically from the conceptual framework as well as the research questions being addressed by the study; 2) the sample should be able to generate a thorough database on the type of phenomenon under study; 3) the sample should at least allow the possibility of drawing clear inferences and credible explanations from the data; 4) the sampling strategy must be ethical; 5) the sampling plan should be feasible; 6) the sampling plan should allow the researcher to transfer/generalize the conclusions of the study to other settings or populations; and 7) the sampling scheme should be as efficient as practical.

Third, the field of implementation research is itself at a stage where qualitative methods are intended primarily to explore the barriers and facilitators of EBP implementation and to develop new conceptual models of implementation process and outcomes. This is especially important in state implementation research, where fiscal necessities are driving policy reforms for which knowledge about EBP implementation barriers and facilitators is urgently needed. Thus a multistage strategy for purposeful sampling should begin with a broader view with an emphasis on variation or dispersion and move to a narrow view with an emphasis on similarity or central tendencies. Such a strategy is necessary for the task of finding the optimal balance between internal and external validity.

Fourth, if we assume that probability sampling will be the preferred strategy for the quantitative components of most implementation research, the selection of a single or multistage purposeful sampling strategy should be based, in part, on how it relates to the probability sample, either for the purpose of answering the same question (in which case a strategy emphasizing variation and dispersion is preferred) or for answering related questions (in which case a strategy emphasizing similarity and central tendencies is preferred).

Fifth, it should be kept in mind that all sampling procedures, whether purposeful or probability, are designed to capture elements of both similarity and difference, of both centrality and dispersion, because both elements are essential to the task of generating new knowledge through the processes of comparison and contrast. Selecting a strategy that gives emphasis to one does not mean that it cannot be used for the other. Having said that, our analysis has assumed at least some degree of concordance between the breadth of understanding associated with quantitative probability sampling and purposeful sampling strategies that emphasize variation on the one hand, and between the depth of understanding and purposeful sampling strategies that emphasize similarity on the other hand. While there may be some merit to that assumption, depth of understanding requires an understanding of both variation and common elements.

Finally, it should also be kept in mind that quantitative data can be generated from a purposeful sampling strategy and qualitative data can be generated from a probability sampling strategy. Each set of data is suited to a specific objective and each must adhere to a specific set of assumptions and requirements. Nevertheless, the promise of mixed methods, like the promise of implementation science, lies in its ability to move beyond the confines of existing methodological approaches and develop innovative solutions to important and complex problems. For states engaged in EBP implementation, the need for these solutions is urgent.

Figure 2.

Multistage Purposeful Sampling Strategies

Acknowledgments

This study was funded through a grant from the National Institute of Mental Health (P30-MH090322: K. Hoagwood, PI).

REFERENCES

- 1. Aarons GA, Hurlburt M, Horwitz SM. Advancing a conceptual model of evidence-based practice implementation in child welfare. Administration and Policy in Mental Health and Mental Health Services Research. 2011;38:4–23. doi: 10.1007/s10488-010-0327-7.

- 2. Aarons GA, Palinkas LA. Implementation of evidence-based practice in child welfare: service provider perspectives. Administration and Policy in Mental Health and Mental Health Services Research. 2007;34:411–419. doi: 10.1007/s10488-007-0121-3.

- 3. Bachmann MO, O’Brien M, Husbands C, Shreeve A, Jones N, Watson J, Reading R, Thoburn J, Mugford M, the National Evaluation of Children’s Trusts Team. Integrating children’s services in England: national evaluation of children’s trusts. Child: Care, Health and Development. 2009;35:257–265. doi: 10.1111/j.1365-2214.2008.00928.x.

- 4. Bernard HR. Research methods in anthropology: Qualitative and quantitative approaches. 3rd ed. Alta Mira Press; Walnut Creek, CA: 2002.

- 5. Bloom HS, Michalopoulos C. When is the story in the subgroups? Strategies for interpreting and reporting intervention effects for subgroups. Prevention Science. 2013;14:179–188. doi: 10.1007/s11121-010-0198-x.

- 6. Brown CH, Wyman PA, Guo J, Peña J. Dynamic wait-listed designs for randomized trials: New designs for prevention of youth suicide. Clinical Trials. 2006;3:259–271. doi: 10.1191/1740774506cn152oa.

- 7. Brown CH, Wang W, Kellam SG, Muthén, Prevention Science and Methodology Group. Methods for testing theory and evaluating impact in randomized field trials: Intent-to-treat analyses for integrating the perspectives of person, place, and time. Drug and Alcohol Dependence. 2008;S95:S74–S104. doi: 10.1016/j.drugalcdep.2007.11.013. …

- 8. Brown C, Ten Have T, Jo B, Dagne G, Wyman P, Muthén B, et al. Adaptive designs for randomized trials in public health. Annual Review of Public Health. 2009;30:1–25. doi: 10.1146/annurev.publhealth.031308.100223.

- 9. Brunette MF, Asher D, Whitley R, Lutz WJ, Weider BL, Jones AM, McHugo GJ. Implementation of integrated dual disorders treatment: a qualitative analysis of facilitators and barriers. Psychiatric Services. 2008;59:989–995. doi: 10.1176/ps.2008.59.9.989.

- 10. Creswell JW, Plano Clark VL. Designing and conducting mixed method research. 2nd ed. Sage; Thousand Oaks, CA: 2011.

- 11. Curran GM, Bauer M, Mittman B, Pyne JM, Stetler C. Effectiveness-implementation hybrid designs: Combining elements of clinical effectiveness and implementation research to enhance public health impact. Medical Care. 2012;50:217–226. doi: 10.1097/MLR.0b013e3182408812.

- 12. Denzin NK. The research act: A theoretical introduction to sociological methods. 2nd ed. McGraw Hill; New York: 1978.

- 13. Duan N, Bhaumik DK, Palinkas LA, Hoagwood K. Purposeful sampling and optimal design. Administration and Policy in Mental Health and Mental Health Services Research. this issue.

- 14. Glaser BG. Theoretical sensitivity. Sociology Press; Mill Valley, CA: 1978.

- 15. Glaser BG, Strauss AL. The discovery of grounded theory: Strategies for qualitative research. Aldine de Gruyter; New York: 1967.

- 16. Glasgow R, Magid D, Beck A, Ritzwoller D, Estabrooks P. Practical clinical trials for translating research to practice: design and measurement recommendations. Medical Care. 2005;43(6):551. doi: 10.1097/01.mlr.0000163645.41407.09.

- 17. Green AE, Aarons GA. A comparison of policy and direct practice stakeholder perceptions of factors affecting evidence-based practice implementation using concept mapping. Implementation Science. 2011;6:104. doi: 10.1186/1748-5908-6-104.

- 18. Guest G, Bunce A, Johnson L. How many interviews are enough? An experiment with data saturation and variability. Field Methods. 2006;18(1):59–82.

- 19. Henke RM, Chou AF, Chanin JC, Zides AB, Scholle SH. Physician attitude toward depression care interventions: implications for implementation of quality improvement initiatives. Implementation Science. 2008;3:40. doi: 10.1186/1748-5908-3-40.

- 20. Hoagwood KE, Vogel JM, Levitt JM, D’Amico PJ, Paisner WI, Kaplan SJ. Implementing an evidence-based trauma treatment in a state system after September 11: the CATS Project. Journal of the American Academy of Child and Adolescent Psychiatry. 2007;46(6):773–779. doi: 10.1097/chi.0b013e3180413def.

- 21. Kemper EA, Stringfield S, Teddlie C. Mixed methods sampling strategies in social science research. In: Tashakkori A, Teddlie C, editors. Handbook of mixed methods in the social and behavioral sciences. Sage; Thousand Oaks, CA: 2003. pp. 273–296.

- 22. Kramer TF, Burns BJ. Implementing cognitive behavioral therapy in the real world: a case study of two mental health centers. Implementation Science. 2008;3:14. doi: 10.1186/1748-5908-3-14.

- 23. Landsverk J, Brown H, Chamberlain P, Palinkas LA, Horwitz SM. Design and analysis in dissemination and implementation research. In: Brownson RC, Colditz GA, Proctor EK, editors. Translating science to practice. Oxford University Press; New York: 2012. pp. 225–260.

- 24. Marshall T, Rapp CA, Becker DR, Bond GR. Key factors for implementing supported employment. Psychiatric Services. 2008;59:886–892. doi: 10.1176/ps.2008.59.8.886.

- 25. Marty D, Rapp C, McHugo G, Whitley R. Factors influencing consumer outcome monitoring in implementation of evidence-based practices: results from the National EBP Implementation Project. Administration and Policy in Mental Health. 2008;35:204–211. doi: 10.1007/s10488-007-0157-4.

- 26. Miles MB, Huberman AM. Qualitative data analysis: An expanded sourcebook. 2nd ed. Sage; Thousand Oaks, CA: 1994.

- 27. Minkler M, Wallerstein N, editors. Community-based participatory research for health. Jossey-Bass; San Francisco, CA: 2003.

- 28. Morgan DL. Focus groups as qualitative research. Sage; Newbury Park, CA: 1997.

- 29. Morse JM, Niehaus L. Mixed method design: Principles and procedures. Left Coast Press; Walnut Creek, CA: 2009.

- 30. Padgett DK. Qualitative methods in social work research. 2nd ed. Sage; Los Angeles: 2008.

- 31. Palinkas LA, Aarons GA, Horwitz SM, Chamberlain P, Hurlburt M, Landsverk J. Mixed method designs in implementation research. Administration and Policy in Mental Health and Mental Health Services Research. 2011;38:44–53. doi: 10.1007/s10488-010-0314-z.

- 32. Palinkas LA, Ell K, Hansen M, Cabassa LJ, Wells AA. Sustainability of collaborative care interventions in primary care settings. Journal of Social Work. 2011;11:99–117.

- 33. Palinkas LA, Fuentes D, Garcia AR, Finno M, Holloway IW, Chamberlain P. Inter-organizational collaboration in the implementation of evidence-based practices among agencies serving abused and neglected youth. Administration and Policy in Mental Health and Mental Health Services Research. Aug 12, 2012. Epub ahead of print. doi: 10.1007/s10488-012-0437-5.

- 34. Palinkas LA, Holloway IW, Rice E, Fuentes D, Wu Q, Chamberlain P. Social networks and implementation of evidence-based practices in public youth-serving systems: A mixed methods study. Implementation Science. 2011;6:113. doi: 10.1186/1748-5908-6-113.

- 35. Palinkas LA, Soydan H. Translation and implementation of evidence-based practice. Oxford University Press; New York: 2012.

- 36. Patton MQ. Qualitative research and evaluation methods. 3rd ed. Sage Publications; Thousand Oaks, CA: 2002.

- 37. Proctor EK, Knudsen KJ, Fedoravicius N, Hovmand P, Rosen A, Perron B. Implementation of evidence-based practice in community behavioral health: agency director perspectives. Administration and Policy in Mental Health. 2007;34:479–488. doi: 10.1007/s10488-007-0129-8.

- 38. Proctor EK, Landsverk J, Aarons G, Chambers D, Glisson C, Mittman B. Implementation research in mental health services: an emerging science with conceptual, methodological, and training challenges. Administration and Policy in Mental Health and Mental Health Services Research. 2009;36:24–34. doi: 10.1007/s10488-008-0197-4.

- 39. Rapp CA, Etzel-Wise D, Marty D, Coffman M, Carlson L, Asher D, Callaghan J, Holter M. Barriers to evidence-based practice implementation: results of a qualitative study. Community Mental Health Journal. 2010;46:112–118. doi: 10.1007/s10597-009-9238-z.

- 40. Raudenbush S, Liu X. Statistical power and optimal design for multisite randomized trials. Psychological Methods. 2000;5:199–213. doi: 10.1037/1082-989x.5.2.199.

- 41. Slade M, Gask L, Leese M, McCrone P, Montana C, Powell R, Stewart M, Chew-Graham Cl. Failure to improve appropriateness of referrals to adult community mental health services – lessons from a multi-site cluster randomized controlled trial. Family Practice. 2008;25:181–190. doi: 10.1093/fampra/cmn025.

- 42. Spradley JP. The ethnographic interview. Holt, Rinehart & Winston; New York: 1979.

- 43. Swain K, Whitley R, McHugo GJ, Drake RE. The sustainability of evidence-based practices in routine mental health agencies. Community Mental Health Journal. 2010;46:119–129. doi: 10.1007/s10597-009-9202-y.

- 44. Teddlie C, Tashakkori A. Major issues and controversies in the use of mixed methods in the social and behavioral sciences. In: Tashakkori A, Teddlie C, editors. Handbook of mixed methods in the social and behavioral sciences. Sage; Thousand Oaks, CA: 2003. pp. 3–50.

- 45. Tunis SR, Stryer DB, Clancy CM. Increasing the value of clinical research for decision making in clinical and health policy. Journal of the American Medical Association. 2003;290:1624–1632. doi: 10.1001/jama.290.12.1624.

- 46. Wisdom JP, Cavaleri MC, Onwuegbuzie AT, Green CA. Methodological reporting in qualitative, quantitative, and mixed methods health services research articles. Health Services Research. 2011;47:721–745. doi: 10.1111/j.1475-6773.2011.01344.x.

- 47. Woltmann EM, Whitley R, McHugo GJ, et al. The role of staff turnover in the implementation of evidence-based practices in health care. Psychiatric Services. 2008;59:732–737. doi: 10.1176/ps.2008.59.7.732.

- 48. Zazzali JL, Sherbourne C, Hoagwood KE, Greene D, Bigley MF, Sexton TL. The adoption and implementation of an evidence based practice in child and family mental health services organizations: a pilot study of functional family therapy in New York State. Administration and Policy in Mental Health. 2008;35:38–49. doi: 10.1007/s10488-007-0145-8.