SUMMARY

Ventral temporal cortex (VTC) is the latest stage of the ventral ‘what’ visual pathway, which is thought to code the identity of a stimulus regardless of its position or size [1, 2]. Surprisingly, recent studies show that position information can be decoded from VTC [3–5]. However, the computational mechanisms by which spatial information is encoded in VTC are unknown. Furthermore, how attention influences spatial representations in human VTC is also unknown because the effect of attention on spatial representations has only been examined in the dorsal ‘where’ visual pathway [6–10]. Here we fill these significant gaps in knowledge using an approach that combines functional magnetic resonance imaging and sophisticated computational methods. We first develop a population receptive field (pRF) model [11, 12] of spatial responses in human VTC. Consisting of spatial summation followed by a compressive nonlinearity, this model accurately predicts responses of individual voxels to stimuli at any position and size, explains how spatial information is encoded, and reveals a functional hierarchy in VTC. We then manipulate attention and use our model to decipher the effects of attention. We find that attention to the stimulus systematically and selectively modulates responses in VTC, but not early visual areas. Locally, attention increases eccentricity, size, and gain of individual pRFs, thereby increasing position tolerance. However, globally, these effects reduce uncertainty regarding stimulus location and actually increase position sensitivity of distributed responses across VTC. These results demonstrate that attention actively shapes and enhances spatial representations in the ventral visual pathway.

RESULTS

Does a population receptive field (pRF) model predict responses in VTC?

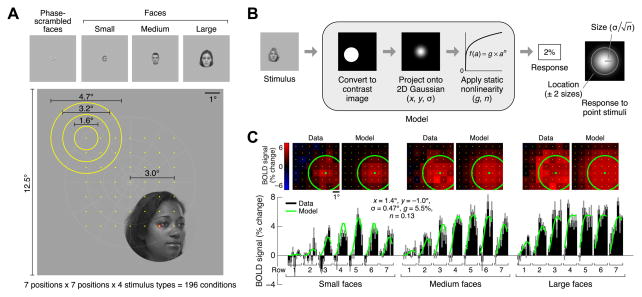

To develop a model of how spatial information is encoded in VTC, we measured fMRI responses (3T, 2-mm voxels) in a series of face-selective regions [13] while subjects fixated centrally and viewed images of faces that varied systematically in position and size (Figure 1A). We used face-selective regions as a model system as they are a highly studied subsystem of VTC [3, 14, 15] with a well-understood functional organization that is anatomically consistent across subjects [13, 16]. After estimating and denoising stimulus-evoked responses [17], we modeled responses in each voxel using the compressive spatial summation (CSS) model [12]. The CSS model characterizes the pRF [11] of a voxel and predicts the response to a face by first computing the spatial overlap between the face and an isotropic two-dimensional Gaussian and then applying a compressive nonlinearity (Figure 1B). Cross-validation analyses demonstrate that the CSS model accurately characterizes responses of individual voxels in face-selective regions IOG-, pFus-, and mFus-faces [13] and successfully predicts responses to faces at novel positions and sizes (Figure 1C, Figure S1A). To assess whether these results are specific to face stimuli, we also performed measurements using phase-scrambled faces. Although phase-scrambled faces evoke weaker responses and produce noisier pRF estimates, pRF properties are largely invariant to stimulus type (Figures S1B, S1C).

Figure 1. Compressive spatial summation accurately models responses in VTC.

(A) Stimuli. Subjects viewed faces while fixating centrally. Faces varied systematically in position (centers indicated by yellow dots) and size (sizes indicated by yellow circles). During each trial, face position and size were held constant while face identity and viewpoint were dynamically updated. (B) Compressive spatial summation (CSS) model. The response to a face is predicted by computing the spatial overlap between the face and a 2D Gaussian and then applying a compressive power-law nonlinearity. The model includes two parameters (x, y) for the position of the Gaussian, a parameter (σ) for the size of the Gaussian, a parameter (n) for the exponent of the nonlinearity, and a parameter (g) for the overall gain of the predicted responses. (C) Example voxel (left IOG, subject 1). Top row: Responses arranged spatially according to face position. Bottom row: Responses arranged serially for better visualization of measurement reliability and goodness-of-fit of CSS model. BOLD response magnitudes (black bars; median across trials ± 68% CI) are accurately fit by the model (green line). Note that a single set of model parameters accounts for the full range of the data. See also Figure S1.
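The two stages of the CSS model described in panel B can be illustrated with a minimal sketch. It assumes a disk-shaped binary stimulus, a discretized visual field, and a Gaussian normalized to unit sum over the grid. The function name `css_response` and its arguments are hypothetical and are not taken from the authors' code, which operates on the actual face stimuli.

```python
import math


def css_response(x0, y0, sigma, n, gain, stim_x, stim_y, stim_radius,
                 extent=10.0, step=0.25):
    """Predicted response of one voxel to a disk stimulus (hypothetical helper).

    (x0, y0), sigma: center and size of the isotropic 2D Gaussian (deg)
    n: exponent of the power-law nonlinearity (n < 1 is compressive)
    gain: overall gain g of the predicted response
    """
    overlap = 0.0  # Gaussian weight falling inside the stimulus
    total = 0.0    # Gaussian weight over the whole grid (normalization, assumed)
    steps = int(round(2 * extent / step)) + 1
    for i in range(steps):
        for j in range(steps):
            x = -extent + i * step
            y = -extent + j * step
            w = math.exp(-((x - x0) ** 2 + (y - y0) ** 2) / (2 * sigma ** 2))
            total += w
            if (x - stim_x) ** 2 + (y - stim_y) ** 2 <= stim_radius ** 2:
                overlap += w
    # Spatial summation followed by the power-law nonlinearity.
    return gain * (overlap / total) ** n


# With n < 1, enlarging the stimulus raises the response by a smaller factor
# than linear summation (n = 1) would.
small = css_response(0, 0, 2.0, 0.2, 1.0, 0, 0, 1.0)
large = css_response(0, 0, 2.0, 0.2, 1.0, 0, 0, 2.0)
print(round(large / small, 2))
```

In this sketch, a single parameter set (x, y, σ, n, g) yields predictions for a stimulus at any position and size, which is the property tested by cross-validation in panel C.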

What is the nature of pRFs in VTC?

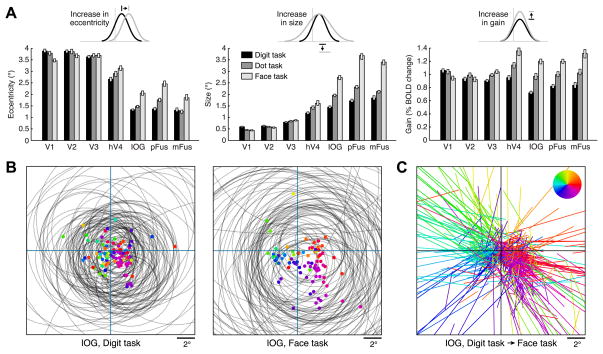

Similar to early and intermediate visual areas [11, 12], pRF size increases with eccentricity in face-selective regions within VTC (Figure 2A, Figure S2B), suggesting that size-eccentricity scaling is a pervasive organizing principle across the ventral visual pathway. However, different from earlier visual areas, pRFs in face-selective regions are quite large compared to their eccentricity. Consequently, these pRFs extend substantially into the ipsilateral visual field (Figure 2B). Also, unlike pRFs in earlier areas, pRFs in face-selective regions are consistently centered near the fovea, producing a representational scheme in which nearly all neural resources are dedicated to the central portion of the visual field (approximately the central 7°; see Figures 2B, S2A). This convergence of spatial coverage is consistent with the foveal bias of face-selective regions [14, 15]. Notably, this organization is different from the distributed tiling of visual space in earlier retinotopic visual regions [18], suggesting unique computational strategies in VTC. Interestingly, pRF properties vary hierarchically across face-selective regions: anterior regions in VTC generally have larger and more foveal pRFs than posterior regions (Figure 2A, Figure S2C), features also observed in monkey inferotemporal cortex (IT) [19–22].

Figure 2. Systematic organization of pRF properties across the ventral visual pathway.

(A) pRF size versus eccentricity. Each line represents a region (median across voxels ± 68% CI). Dotted lines indicate eccentricity ranges containing few voxels. The inset shows a schematic of pRF sizes at 1° eccentricity. IOG: inferior occipital gyrus; pFus: posterior fusiform; mFus: mid-fusiform. (B) pRF locations and visual field coverage in the left hemisphere. Top row: pRF centers (red dots) and pRF sizes for 100 randomly selected voxels from each region (gray circles). Bottom row: visual field coverage, computed as the proportion of pRFs covering each point in the visual field. Each image is normalized such that black corresponds to 0 and white corresponds to the maximum value. IOG, pFus, and mFus contain large pRFs centered near the fovea. See also Figure S2.
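The coverage computation in panel B can be sketched as follows. This is a minimal illustration that assumes a pRF "covers" a point when the point lies within one pRF size of the pRF center; that criterion, the grid spacing, and the name `coverage_map` are assumptions made for this example.

```python
import math


def coverage_map(prfs, extent=7.0, step=0.5):
    """Proportion of pRFs covering each grid point, normalized to its maximum.

    prfs: list of (x, y, size) tuples in degrees of visual angle.
    Returns (axis, grid), where grid[row][col] corresponds to (axis[col], axis[row]).
    """
    count = int(round(2 * extent / step)) + 1
    axis = [-extent + i * step for i in range(count)]
    grid = []
    for y in axis:
        row = []
        for x in axis:
            covered = sum(1 for (px, py, size) in prfs
                          if math.hypot(x - px, y - py) <= size)
            row.append(covered / len(prfs))
        grid.append(row)
    # Normalize so that 0 maps to black and the maximum maps to white.
    peak = max(max(row) for row in grid)
    if peak > 0:
        grid = [[value / peak for value in row] for row in grid]
    return axis, grid


# Three made-up pRFs centered near the fovea.
axis, grid = coverage_map([(0.5, 0.0, 2.0), (1.0, 0.5, 3.0), (0.0, 0.0, 1.0)])
print(grid[axis.index(0.0)][axis.index(0.0)])
```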

How are pRF properties affected by attention?

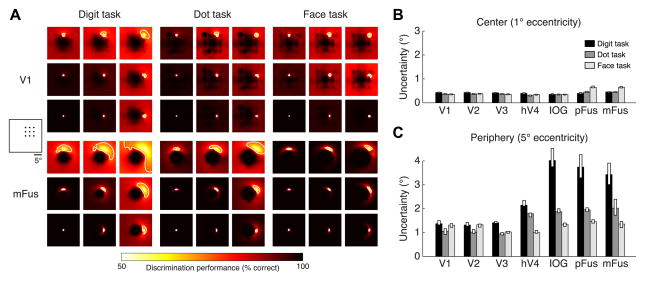

To understand the contribution of top-down attentional signals to the observed results, we measured pRFs under different attentional states. While maintaining central fixation, subjects performed one of three tasks: digit task (1-back task on rapid serial presentation of digits at fixation), dot task (detection of a red dot appearing on the faces; same as the first experiment), and face task (1-back task on the identity of the faces; see Supplemental Experimental Procedures and Figure S3A). In the digit task, attention is directed toward fixation, while in the dot and face tasks, attention is directed towards the faces.

Comparing pRF properties across tasks, we find no substantial changes in pRF properties in early visual areas V1–V3 (Figure 3A, Figure S3C). However, in hV4 and more substantially in face-selective regions, voxel responses are strongly modulated by the task (Figure S3B). In these regions, pRFs exhibit increased eccentricity, size, and gain when subjects attend to the faces (dot and face tasks) compared to when they attend to fixation (digit task) (Figures 3A–3C). These effects are consistent with the concept of response enhancement at the attended location [23], and the effects are large in size: for example, in mFus, comparing pRF properties across the digit and face task, respectively, the median pRF eccentricity increases from 1.3° to 1.9°, the median pRF size increases from 1.8° to 3.4°, and the median pRF gain increases from 0.83% to 1.32%.

Figure 3. Attention modulates pRF properties in VTC.

pRFs were measured under three tasks using the same stimulus. While maintaining fixation, subjects performed a one-back task on centrally presented digits (digit task), detected a small dot superimposed on the faces (dot task), or performed a one-back task on face identity (face task). (A) Summary of results. Each bar represents a region under a single task (median across voxels ± 68% CI). In hV4, and more so in IOG, pFus, and mFus, attending to the stimulus (dot task, face task) causes an increase in pRF eccentricity, size, and gain compared to attending to fixation (digit task). These effects are larger for the face task than for the dot task. (B) Visualization of pRFs for 100 randomly selected voxels from an example region, IOG (colored dots: pRF centers; gray circles: pRF sizes). In the digit task, pRFs are small and cluster near the fovea, whereas in the face task, pRFs are large and spread out into the periphery. (C) Visualization of pRF shifts for region IOG. For each voxel, a line is drawn that connects the pRF center under the digit task to the pRF center under the face task; color indicates the direction of the shift (see legend) and the same color is used for the corresponding dots in panel B. In general, pRFs shift away from the center. Although it appears as if there are many shifts to far eccentricities, the majority (81%) of pRF centers under the face task are actually located within 5° eccentricity. See also Figure S3.

Control experiments reveal that changes in pRF properties are observed even if tasks are interleaved on a trial-by-trial basis (Figure S3G), indicating that the changes cannot be attributed to variation in general subject arousal across tasks. Furthermore, performing the digit task on digits presented to the left of fixation produces leftward shifts of pRFs in hV4, IOG, and pFus compared to performing the digit task on central digits (Figure S3H). This indicates that even though attention is drawn away from faces during the digit task, pRF modulations occur in a manner consistent with response enhancement at the attended location irrespective of the content of the attended stimulus.

Interestingly, attentional effects in face-selective regions are stronger for the face task, which specifically requires perceptual processing of the faces, than for the dot task (p < 10⁻⁹, two-tailed sign test in each region for each pRF property). Increases in pRF size under the dot and face tasks relative to the digit task are particularly intriguing as they indicate that locally, at the voxel level, attention to the stimulus increases the position tolerance of the neural representation.
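For readers unfamiliar with the sign test, the following is a minimal sketch of a two-tailed version. It assumes that the comparison is paired by voxel (a pRF property under the face task minus the same property under the dot task) and that ties are discarded; both are assumptions made for this example, and the function name is hypothetical.

```python
from math import comb


def sign_test_two_tailed(differences):
    """Two-tailed sign test on paired differences (zeros are ignored)."""
    positives = sum(1 for d in differences if d > 0)
    negatives = sum(1 for d in differences if d < 0)
    n = positives + negatives
    if n == 0:
        return 1.0
    k = min(positives, negatives)
    # Probability of k or fewer of the rarer sign under a fair coin.
    tail = sum(comb(n, i) for i in range(k + 1)) / 2 ** n
    return min(1.0, 2 * tail)


# Made-up example: 9 of 10 voxels show a larger value under the face task.
print(sign_test_two_tailed([0.4, 0.2, 0.7, 0.1, 0.3, 0.5, 0.6, 0.2, 0.9, -0.1]))
```

Because the test uses only the sign of each difference, it makes no assumption about the distribution of effect magnitudes across voxels.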

What is the benefit of attentional modulation of pRFs?

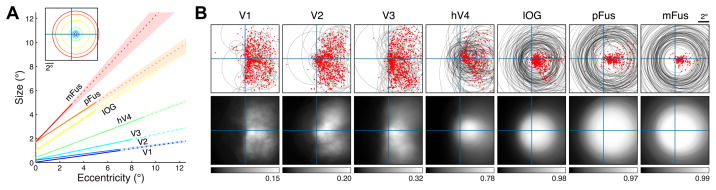

Although we have demonstrated local changes in pRF properties as a result of attention, an open question is whether these attention-induced changes are beneficial to the global, or distributed, representation of the stimulus. Specifically, we ask: does attention affect the ability of a collection of pRFs to discriminate the location of the stimulus? This question cannot be answered through simple summary statistics of pRF properties (such as the ones in Figure 3A) because discrimination performance depends not only on the properties of individual pRFs, but also on how the pRFs collectively tile the visual field. For example, large but overlapping pRFs might discriminate stimulus locations better than small, non-overlapping pRFs [24]. We therefore designed a model-based decoding analysis that quantifies the spatial discrimination performance of a collection of pRFs. In this analysis, we calculate spatial uncertainty, that is, the distance over which changes in stimulus position cannot be well discriminated based on the distributed responses across the pRFs (thus, low spatial uncertainty indicates good discrimination performance). We applied this analysis separately to each region, analyzing the pRFs observed under each task.

As expected from the stability of pRF properties in early visual areas, there is little change in spatial uncertainty in these areas across tasks (Figure 4A, top). In all tasks, spatial uncertainty in V1–V3 is less than 0.5° near the fovea (1° eccentricity) and less than 1.5° in the periphery (5° eccentricity) (Figures 4B, 4C). In contrast, there are large changes in spatial uncertainty in face-selective regions across tasks. In the periphery, spatial uncertainty is substantially reduced under the dot task (1.9-fold reduction, on average across face-selective regions) and the face task (2.7-fold reduction, on average across regions) compared to the digit task (Figure 4A, bottom; Figure 4C). For example, in mFus, uncertainty in the periphery is more than 3° under the digit task, but only about 1° under the face task (Figure 4C). Importantly, these improvements are not simply due to increased pRF gain: improvements are still observed if pRF gain is held constant and only the task-induced changes in pRF location and size are considered (Figure S4A). These results indicate that attending the stimulus either explicitly (face task) or implicitly (dot task) reduces uncertainty with respect to the location of the stimulus. As a complement to our model-based decoding analysis, we also performed direct decoding of the distributed response patterns evoked by faces with no intervening modeling step. Results are consistent with our model-based analysis: in face-selective regions, there is improved decoding of face position in the periphery under the face task compared to the digit and dot tasks (Figures S4B–S4C).

Figure 4. Attention reduces spatial uncertainty in VTC.

For each region and task, we assess the quality of the representation of spatial information using a model-based decoding analysis. This analysis quantifies how well a linear classifier can discriminate stimuli at different visual field positions from a stimulus at a reference position. (A) Example results for a 3 × 3 grid of reference positions in the upper-right visual field (left inset). Each image is a map of discrimination performance for one reference position (indicated by the relative position of the image). We define spatial uncertainty as the square root of the area of the 75%-correct contour (white line). (B) Uncertainty at 1° eccentricity. Each bar represents uncertainty in a region under a single task (median across angular positions ± 68% CI). All regions exhibit low uncertainty irrespective of the task. (C) Uncertainty at 5° eccentricity. Face-selective regions IOG, pFus, and mFus exhibit high uncertainty under the digit task. However, this uncertainty is dramatically reduced under the dot and face tasks. See also Figure S4.
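The uncertainty metric defined in panel A can be sketched as follows. This minimal example assumes that the area enclosed by the 75%-correct contour can be approximated by counting the grid positions whose discrimination performance falls below 75%. The performance map is synthetic rather than produced by a classifier, and both function names are hypothetical.

```python
import math


def spatial_uncertainty(performance, step, threshold=0.75):
    """Square root of the area (deg^2) where performance is below threshold.

    performance: 2D list of proportion correct, one value per grid position.
    step: spacing between grid positions in degrees.
    """
    below = sum(1 for row in performance for p in row if p < threshold)
    return math.sqrt(below * step ** 2)


def toy_performance_map(half_width, step, blur):
    """Synthetic map: chance (0.5) at the reference, approaching 1.0 far away."""
    n = int(round(2 * half_width / step)) + 1
    c = n // 2  # index of the reference position at the map center
    grid = []
    for i in range(n):
        row = []
        for j in range(n):
            d2 = ((i - c) * step) ** 2 + ((j - c) * step) ** 2
            row.append(1.0 - 0.5 * math.exp(-d2 / (2 * blur ** 2)))
        grid.append(row)
    return grid


# A broader confusion region around the reference yields higher uncertainty.
narrow = spatial_uncertainty(toy_performance_map(5.0, 0.1, 0.5), 0.1)
broad = spatial_uncertainty(toy_performance_map(5.0, 0.1, 1.0), 0.1)
print(round(narrow, 2), round(broad, 2))
```

Taking the square root converts the area into a linear distance in degrees, which allows direct comparison with eccentricity and pRF size.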

DISCUSSION

The experiments in the present study reveal that spatial representations are prevalent in the ventral ‘what’ visual pathway. First, we have shown that responses in VTC are modulated by changes in the position and size of the stimulus. These modulations are systematic and are accurately characterized by a pRF model utilizing spatial summation and a compressive nonlinearity. Second, spatial representations within VTC are actively shaped by top-down task demands. Specifically, attention modulates pRFs in high and intermediate levels of the ventral pathway, but not early visual regions. While prior research has shown that spatial attention shifts receptive fields in the dorsal ‘where’ visual pathway [6–9, 25], as well as intermediate visual areas in the ventral pathway [23, 26–28], we extend these results to high-level areas in the ventral pathway for the first time.

Attentional effects in the ventral visual pathway

The observed attentional modulations of pRFs are consistent with the theory that neural responses in visual cortex reflect the combination of bottom-up stimulus drive and a top-down attentional field that enhances responses to stimuli at the current locus of attention [23, 26, 29]. While both implicit (dot task) and explicit (face task) attention towards faces lead to response enhancement, we find that explicit attention towards faces produces larger modulations (see Figure 3A). This suggests that responses in the ventral visual pathway are modulated by both spatial and object-based attention, consistent with recent demonstrations of category-based attentional effects in the ventral pathway [30]. An interesting subject for future work is examining whether the attentional modulations observed here can be quantitatively described as an interaction between a global attentional field [7, 10, 23] and local classical receptive fields. Recent data suggest that the effect of a global attentional field on pRFs depends on pRF size, with larger effects obtained for larger pRFs [10]. Thus, these models predict larger attentional shifts of pRFs at higher stages of the visual processing hierarchy. We facilitate efforts to examine such questions and to further develop attentional models by making our data publicly available (http://kendrickkay.net/vtcdata/).

One question that stems from our findings is whether the demonstrated impact of attention on cortical responses has behavioral consequences. We hypothesize that attention-induced changes in the representation of spatial information in VTC may affect behavioral judgments of spatial position. Specifically, reduction of neural spatial uncertainty during the dot and face tasks compared to the digit task suggests that behavioral judgments of face position would be more accurate during the dot and face tasks. This hypothesis can be tested in future behavioral studies.

Another open question is exactly how the attentional modulations measured with fMRI manifest at the level of individual neurons. As prior electrophysiological studies have demonstrated that attention modulates neuronal firing rates in monkey IT [26, 28], we hypothesize that similar attentional modulations of RFs occur for individual neurons in the ventral visual pathway. Notably, our observation of task-dependent pRFs might explain the variability of previous reports of neuronal RF sizes in monkey IT: RFs were largest during passive viewing [31, 32] and anesthesia [19], whereas RFs were smallest during demanding discrimination tasks near the fovea [33].

Rethinking position tolerance in the ventral visual pathway

Position and size tolerance are considered key features of the ventral visual pathway, useful for object and face recognition. Tolerance indicates reduced sensitivity to incidental properties of a stimulus, such as the specific position or size at which it is viewed [34, 35]. Prevailing theories suggest that tolerance is achieved by systematic increase in receptive field sizes across processing stages in the ventral visual pathway [19, 20, 22, 36, 37]. Intuitively, a large receptive field implies that a wide range of stimulus positions and sizes drive the neural response [12].

While our pRF measurements are consistent with this account and reveal a hierarchy of pRF sizes within VTC, there are two aspects of our data that prompt a rethinking of position tolerance in VTC. First, we show that position tolerance at the level of individual voxels is partially the result of top-down attentional mechanisms and not simply due to static receptive field properties (see also [38]). Specifically, we find that when subjects attend to the stimulus, pRFs enlarge, thereby increasing position tolerance. Second, we show that the common intuition that larger pRFs degrade spatial information may be misleading. Despite the enlargement of pRFs when subjects attend the stimulus, the spatial precision with which the location of the stimulus is represented in VTC improves, rather than worsens.

At first glance, these observations seem inconsistent: how can attention increase spatial tolerance while also increasing spatial precision? The answer lies in the distinction between the local scale (i.e. information carried by a single voxel) and the global scale (i.e. information carried by distributed responses across voxels). At the local scale, each individual voxel shows reduced sensitivity to stimulus location due to increased pRF size. However, at the global scale, sensitivity to stimulus location improves, due to increased pRF coverage and scatter in the periphery (Figures 3B–3C) which together provide a better tiling of the visual field.
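The local versus global distinction can be illustrated with a toy one-dimensional simulation. This is only a sketch under strong assumptions: Gaussian pRFs with unit gain and no compressive nonlinearity, a point stimulus, made-up pRF centers, and sizes that loosely echo the mFus medians reported above (1.8° and 3.4°). The Euclidean distance between noiseless population responses serves as a stand-in for discriminability. It is not the decoding analysis used in this study.

```python
import math


def prf_response(stim, center, sigma):
    """Response of a 1D Gaussian pRF to a point stimulus (unit gain)."""
    return math.exp(-((stim - center) ** 2) / (2 * sigma ** 2))


def local_tolerance(sigma, offset=2.0):
    """Fraction of the peak response retained `offset` deg from the pRF center."""
    return prf_response(offset, 0.0, sigma)


def population_distance(centers, sigma, pos_a, pos_b):
    """Euclidean distance between population responses to two positions."""
    return math.sqrt(sum(
        (prf_response(pos_a, c, sigma) - prf_response(pos_b, c, sigma)) ** 2
        for c in centers))


# Hypothetical populations: small foveal pRFs versus larger, more scattered pRFs.
fixation = {"centers": [0.4, 0.9, 1.3, 1.7, 2.2], "sigma": 1.8}
stimulus = {"centers": [0.6, 1.3, 1.9, 2.5, 3.2], "sigma": 3.4}

for name, pop in (("attend fixation", fixation), ("attend stimulus", stimulus)):
    tolerance = local_tolerance(pop["sigma"])
    distance = population_distance(pop["centers"], pop["sigma"], 5.0, 6.0)
    print(f"{name}: local tolerance {tolerance:.2f}, "
          f"population distance at 5 vs 6 deg {distance:.2f}")
```

In this toy setting, each larger pRF retains more of its peak response when the stimulus moves (greater local tolerance), yet the population response distinguishes two peripheral positions more clearly, because the small foveal pRFs barely respond at 5° to 6°.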

Conclusion

We have used a model-based approach to understand how attention influences representation in visual cortex. Our approach consisted of measuring responses to a wide range of stimulus conditions [39, 40], developing an encoding model that describes how stimulus information is represented locally [11, 41, 42], and using decoding analyses to quantify the information present in distributed responses [43]. Importantly, while we implemented this approach with fMRI, the approach is general and can be applied to other experimental techniques, such as EEG, MEG, ECoG, and electrophysiology. Comparing results from different experimental techniques in a common model-based framework may help elucidate the neural signals measured by different techniques [44] and may help resolve discrepancies in the sizes of attentional effects found by different techniques [45]. Overall, our study reveals that spatial information is systematically represented in the ventral visual pathway and that attention modifies and enhances this spatial representation. These results provide important insights into how position coding is implemented in the ventral visual pathway.

HIGHLIGHTS

- Developed population receptive field (pRF) model for ventral temporal cortex (VTC)

- Model accurately predicts responses to novel stimulus positions and sizes

- Attention increases pRF gain, eccentricity, and size in high- but not low-level areas

- Attention improves the quality of spatial representations in VTC

Acknowledgments

We thank T. Naselaris, A. Rokem, J. Winawer, and E. Zohary for helpful discussions. We also thank A. Stigliani for assistance with behavioral experiments, J. Winawer for providing retinotopic mapping data, and N. Witthoft for assisting in the collection of face photographs. This work was supported by the McDonnell Center for Systems Neuroscience and Arts & Sciences at Washington University (K.K.), NEI grant 1R01EY02391501A1 (K.G.S.), and NEI grant RO1 EY03164 (to Brian Wandell). Computations were performed using the facilities of the Washington University Center for High Performance Computing, which were partially provided through grant NCRR 1S10RR022984-01A1.

Footnotes

Supplemental Information includes four figures and Supplemental Experimental Procedures, and can be found with this article online at doi:10.1016/j.cub.2014.12.050. Data from this study are provided at http://kendrickkay.net/vtcdata/.

AUTHOR CONTRIBUTIONS

K.K. conducted the experiment and analyzed the data. K.W. performed localizer experiments and assisted with data collection. K.K., K.W., and K.G.S. conceived and designed the experiments. K.K., K.W., and K.G.S. wrote the paper.

References

- 1. Ungerleider LG, Mishkin M. Two cortical visual systems. In: Ingle DJ, Goodale MA, Mansfield RJW, editors. Analysis of visual behavior. MIT Press; 1982. pp. 549–586.

- 2. Goodale MA, Milner AD, Jakobson LS, Carey DP. Object awareness. Nature. 1991;352:202. doi: 10.1038/352202b0.

- 3. Schwarzlose RF, Swisher JD, Dang S, Kanwisher N. The distribution of category and location information across object-selective regions in human visual cortex. Proceedings of the National Academy of Sciences of the United States of America. 2008;105:4447–4452. doi: 10.1073/pnas.0800431105.

- 4. Kravitz DJ, Kriegeskorte N, Baker CI. High-level visual object representations are constrained by position. Cereb Cortex. 2010;20:2916–2925. doi: 10.1093/cercor/bhq042.

- 5. Carlson T, Hogendoorn H, Fonteijn H, Verstraten FAJ. Spatial coding and invariance in object-selective cortex. Cortex. 2011;47:14–22. doi: 10.1016/j.cortex.2009.08.015.

- 6. Silver MA, Ress D, Heeger DJ. Topographic maps of visual spatial attention in human parietal cortex. Journal of Neurophysiology. 2005;94:1358–1371. doi: 10.1152/jn.01316.2004.

- 7. Sprague TC, Serences JT. Attention modulates spatial priority maps in the human occipital, parietal and frontal cortices. Nature Neuroscience. 2013;16:1879–1887. doi: 10.1038/nn.3574.

- 8. Szczepanski SM, Konen CS, Kastner S. Mechanisms of spatial attention control in frontal and parietal cortex. J Neurosci. 2010;30:148–160. doi: 10.1523/JNEUROSCI.3862-09.2010.

- 9. Saproo S, Serences JT. Spatial attention improves the quality of population codes in human visual cortex. Journal of Neurophysiology. 2010;104:885–895. doi: 10.1152/jn.00369.2010.

- 10. Klein BP, Harvey BM, Dumoulin SO. Attraction of position preference by spatial attention throughout human visual cortex. Neuron. 2014;84:227–237. doi: 10.1016/j.neuron.2014.08.047.

- 11. Dumoulin SO, Wandell B. Population receptive field estimates in human visual cortex. NeuroImage. 2008;39:647–660. doi: 10.1016/j.neuroimage.2007.09.034.

- 12. Kay KN, Winawer J, Mezer A, Wandell B. Compressive spatial summation in human visual cortex. Journal of Neurophysiology. 2013;110:481–494. doi: 10.1152/jn.00105.2013.

- 13. Weiner KS, Grill-Spector K. Sparsely-distributed organization of face and limb activations in human ventral temporal cortex. NeuroImage. 2010;52:1559–1573. doi: 10.1016/j.neuroimage.2010.04.262.

- 14. Levy I, Hasson U, Avidan G, Hendler T, Malach R. Center-periphery organization of human object areas. Nature Neuroscience. 2001;4:533–539. doi: 10.1038/87490.

- 15. Yue X, Cassidy BS, Devaney KJ, Holt DJ, Tootell RBH. Lower-level stimulus features strongly influence responses in the fusiform face area. Cereb Cortex. 2011;21:35–47. doi: 10.1093/cercor/bhq050.

- 16. Weiner KS, Golarai G, Caspers J, Chuapoco MR, Mohlberg H, Zilles K, Amunts K, Grill-Spector K. The mid-fusiform sulcus: a landmark identifying both cytoarchitectonic and functional divisions of human ventral temporal cortex. NeuroImage. 2014;84:453–465. doi: 10.1016/j.neuroimage.2013.08.068.

- 17. Kay KN, Rokem A, Winawer J, Dougherty RF, Wandell B. GLMdenoise: a fast, automated technique for denoising task-based fMRI data. Front Neurosci. 2013;7:247. doi: 10.3389/fnins.2013.00247.

- 18. Sereno MI, Dale AM, Reppas JB, Kwong KK, Belliveau JW, Brady TJ, Rosen BR, Tootell RB. Borders of multiple visual areas in humans revealed by functional magnetic resonance imaging. Science. 1995;268:889–893. doi: 10.1126/science.7754376.

- 19. Gross CG, Bender DB, Rocha-Miranda CE. Visual receptive fields of neurons in inferotemporal cortex of the monkey. Science. 1969;166:1303–1306. doi: 10.1126/science.166.3910.1303.

- 20. Boussaoud D, Desimone R, Ungerleider LG. Visual topography of area TEO in the macaque. The Journal of Comparative Neurology. 1991;306:554–575. doi: 10.1002/cne.903060403.

- 21. op de Beeck H, Vogels R. Spatial sensitivity of macaque inferior temporal neurons. The Journal of Comparative Neurology. 2000;426:505–518. doi: 10.1002/1096-9861(20001030)426:4<505::aid-cne1>3.0.co;2-m.

- 22. Issa EB, DiCarlo JJ. Precedence of the eye region in neural processing of faces. J Neurosci. 2012;32:16666–16682. doi: 10.1523/JNEUROSCI.2391-12.2012.

- 23. Reynolds JH, Heeger DJ. The normalization model of attention. Neuron. 2009;61:168–185. doi: 10.1016/j.neuron.2009.01.002.

- 24. Snippe HP, Koenderink JJ. Discrimination thresholds for channel-coded systems. Biological Cybernetics. 1992;66:543–551. doi: 10.1007/BF00201025.

- 25. Treue S, Maunsell JH. Attentional modulation of visual motion processing in cortical areas MT and MST. Nature. 1996;382:539–541. doi: 10.1038/382539a0.

- 26. Moran J, Desimone R. Selective attention gates visual processing in the extrastriate cortex. Science. 1985;229:782–784. doi: 10.1126/science.4023713.

- 27. Connor CE, Preddie DC, Gallant JL, Van Essen DC. Spatial attention effects in macaque area V4. J Neurosci. 1997;17:3201–3214. doi: 10.1523/JNEUROSCI.17-09-03201.1997.

- 28. Richmond BJ, Wurtz RH, Sato T. Visual responses of inferior temporal neurons in awake rhesus monkey. Journal of Neurophysiology. 1983;50:1415–1432. doi: 10.1152/jn.1983.50.6.1415.

- 29. Desimone R, Duncan J. Neural mechanisms of selective visual attention. Annual Review of Neuroscience. 1995;18:193–222. doi: 10.1146/annurev.ne.18.030195.001205.

- 30. Çukur T, Nishimoto S, Huth AG, Gallant JL. Attention during natural vision warps semantic representation across the human brain. Nature Neuroscience. 2013;16:763–770. doi: 10.1038/nn.3381.

- 31. Desimone R, Albright TD, Gross CG, Bruce C. Stimulus-selective properties of inferior temporal neurons in the macaque. J Neurosci. 1984;4:2051–2062. doi: 10.1523/JNEUROSCI.04-08-02051.1984.

- 32. Tovee MJ, Rolls ET, Azzopardi P. Translation invariance in the responses to faces of single neurons in the temporal visual cortical areas of the alert macaque. Journal of Neurophysiology. 1994;72:1049–1060. doi: 10.1152/jn.1994.72.3.1049.

- 33. DiCarlo JJ, Maunsell JH. Anterior inferotemporal neurons of monkeys engaged in object recognition can be highly sensitive to object retinal position. Journal of Neurophysiology. 2003;89:3264–3278. doi: 10.1152/jn.00358.2002.

- 34. DiCarlo JJ, Zoccolan D, Rust NC. How does the brain solve visual object recognition? Neuron. 2012;73:415–434. doi: 10.1016/j.neuron.2012.01.010.

- 35. Poggio T, Ullman S. Vision: are models of object recognition catching up with the brain? Ann N Y Acad Sci. 2013;1305:72–82. doi: 10.1111/nyas.12148.

- 36. Yamins DLK, Hong H, Cadieu CF, Solomon EA, Seibert D, DiCarlo JJ. Performance-optimized hierarchical models predict neural responses in higher visual cortex. Proceedings of the National Academy of Sciences of the United States of America. 2014;111:8619–8624. doi: 10.1073/pnas.1403112111.

- 37. Serre T, Wolf L, Bileschi S, Riesenhuber M, Poggio T. Robust object recognition with cortex-like mechanisms. IEEE Trans Pattern Anal Mach Intell. 2007;29:411–426. doi: 10.1109/TPAMI.2007.56.

- 38. Olshausen BA, Anderson CH, Van Essen DC. A neurobiological model of visual attention and invariant pattern recognition based on dynamic routing of information. J Neurosci. 1993;13:4700–4719. doi: 10.1523/JNEUROSCI.13-11-04700.1993.

- 39. Kay KN, Naselaris T, Prenger RJ, Gallant JL. Identifying natural images from human brain activity. Nature. 2008;452:352–355. doi: 10.1038/nature06713.

- 40. Kriegeskorte N, Mur M, Ruff DA, Kiani R, Bodurka J. Matching categorical object representations in inferior temporal cortex of man and monkey. Neuron. 2008. doi: 10.1016/j.neuron.2008.10.043.

- 41. Naselaris T, Kay KN, Nishimoto S, Gallant JL. Encoding and decoding in fMRI. NeuroImage. 2011;56:400–410. doi: 10.1016/j.neuroimage.2010.07.073.

- 42. Kay KN. Understanding visual representation by developing receptive-field models. In: Kriegeskorte N, Kreiman G, editors. Visual Population Codes: Towards a Common Multivariate Framework for Cell Recording and Functional Imaging. MIT Press; 2011.

- 43. Haxby JV, Gobbini MI, Furey ML, Ishai A, Schouten JL, Pietrini P. Distributed and overlapping representations of faces and objects in ventral temporal cortex. Science. 2001;293:2425–2430. doi: 10.1126/science.1063736.

- 44. Winawer J, Kay KN, Foster BL, Rauschecker AM, Parvizi J, Wandell B. Asynchronous broadband signals are the principal source of the BOLD response in human visual cortex. Curr Biol. 2013;23:1145–1153. doi: 10.1016/j.cub.2013.05.001.

- 45. Boynton GM. Spikes, BOLD, attention, and awareness: a comparison of electrophysiological and fMRI signals in V1. Journal of Vision. 2011;11:12. doi: 10.1167/11.5.12.
