Abstract

There is a conflicting literature on facial emotion processing in autism spectrum disorder (ASD): both typical and atypical performance have been reported, and inconsistencies in the literature may stem from different processes examined (emotion judgment, face perception, fixations) as well as differences in participant populations. Here we conducted a detailed investigation of the ability to discriminate graded emotions shown in morphs of fear-happy faces, in a well-characterized high-functioning sample of participants with ASD and matched controls. Signal detection approaches were used in the analyses, and concurrent high-resolution eye-tracking was collected. Although people with ASD had typical thresholds for categorical fear and confidence judgments, their psychometric specificity to detect emotions across the entire range of intensities was reduced. However, fixation patterns onto the stimuli were typical and could not account for the reduced specificity of emotion judgment. Together, our results argue for a subtle and specific deficit in emotion perception in ASD that, from a signal detection perspective, is best understood as a reduced specificity due to increased noise in central processing of the face stimuli.

Keywords: Autism spectrum disorder, Emotion, Eye Tracking, Face Morph, Ambiguity, Confidence

1. Introduction

People with autism spectrum disorder (ASD) demonstrate pervasive dysfunctions in social communication, but the specific underlying processing deficits have proven elusive. A number of impaired components of social communicative functioning have been reported, notably including impaired face processing and emotion recognition. Yet even this literature is discrepant. In particular, several studies find reliable, but weak, deficits in the ability to recognize emotions from facial expressions (Law Smith et al., 2010, Philip et al., 2010, Wallace et al., 2011, Kennedy and Adolphs, 2012), although others do not (Baron-Cohen et al., 1997, Adolphs et al., 2001, Neumann et al., 2006) (see (Harms et al., 2010) for a review). This discrepancy may be attributed to the known heterogeneity of ASD, the stimuli and tasks used in the various studies, as well as ceiling effects or compensatory strategies used by individuals with ASD. However, it has been argued that as long as the measures are sensitive enough, behaviorally- or biologically-based measures can almost invariably detect group differences in facial emotion recognition (Harms et al., 2010). Two major methodological approaches could enhance sensitivity to reveal group differences and avoid ceiling effects: one is to modify the task demand (e.g., using difficult or unfamiliar tasks), the other is to manipulate the stimuli, for example through face morphing (Law Smith et al., 2010, Wallace et al., 2011).

Impaired face perception or emotion recognition might also arise from atypical fixations onto faces, which have been reported in many studies, but again in a rather heterogeneous literature. For instance, it has been shown that adults with ASD have an increased tendency to saccade away from the eye region of faces when information is present in those regions (Spezio et al., 2007), but instead have an increased preference to fixate the location of the mouth (Neumann et al., 2006). While viewing naturalistic social videos, people with autism demonstrate abnormal patterns of social visual pursuit that are consistent with reduced saliency of eyes and increased saliency of mouths, bodies, and objects (Klin et al., 2002). When viewing static faces, people with autism view non-feature areas of the faces significantly more often but core feature areas of the faces (e.g., eyes and mouth) significantly less often than controls (Pelphrey et al., 2002) and they have piecemeal rather than configural strategies (Dawson et al., 2005). Similarly, some research suggests that people with ASD demonstrate active avoidance of fixating the eyes in faces, which in turn influences recognition performance of emotions (Kliemann et al., 2010), whereas other research suggests that children with ASD demonstrate gaze indifference and passive insensitivity to the social signals in others’ eyes at the time of initial diagnosis (Moriuchi et al., 2017). The atypical facial fixations are complemented by neuronal evidence of abnormal processing of information from the eye region of faces in blood-oxygen-level dependent (BOLD) fMRI (Kliemann et al., 2012) and single-neuron responses in the amygdala (Rutishauser et al., 2013). A recent study using comprehensive modeling with a large number of natural scene images showed that people with ASD not only have reduced saliency representation of faces, but show reduced saliency for many semantic-level attributes of visual stimuli (Wang et al., 2015).

On the other hand, many other studies have shown apparently normal social orienting and attention to facial features in people with ASD (see (Guillon et al., 2014) for a recent review). For example, infants who later develop autism show an equally strong face orienting response (Elsabbagh et al., 2013) and adults with ASD can have fixation dwell times onto faces that are largely typical (Kuhn et al., 2010, Nakano et al., 2010). In several studies, young children and adolescents with ASD show typical patterns of attention to eyes and mouth (de Wit et al., 2008, Falck-Ytter et al., 2010, McPartland et al., 2011). The last of these studies is particularly relevant, since it found typical fixation patterns despite impaired face recognition ability (McPartland et al., 2011). Taken together, these findings not only point to the need to resolve discrepancies in the literature, but also (and relatedly) to the need to distinguish between the various processes that contribute to facial emotion processing, so that we can better understand which specific components characterize ASD.

In this study, we used a two-alternative forced-choice task with a gradient of morphed faces along the fear-happy dimension to investigate the sensitivity and specificity with which people are able to distinguish emotions in facial expressions. Concurrent eye tracking provided important comparison data. Using morphed stimuli allowed us to parametrically control the intensity of the stimuli and to assess emotion discrimination at a fine-grained level. We not only examined eye movements with respect to stimulus levels (i.e., emotion intensity and ambiguity levels), but also with respect to behavioral judgment. We also quantified the fixation noise, and investigated whether such noise could predict the correctness of emotion judgment. Although we found remarkably similar eye movement patterns between participants with ASD and controls, as well as normal thresholds to report fear and normal confidence in judgments of emotions, participants with ASD showed reduced specificity to emotions.

2. Methods

2.1. Participants

Eighteen high-functioning participants with ASD (15 male) were recruited from our laboratory’s registry. All ASD participants met DSM-V/ICD-10 diagnostic criteria for autism spectrum disorder, and met the cutoff scores for ASD on the Autism Diagnostic Observation Schedule-2 (ADOS-2) revised scoring system for Module 4 (Hus and Lord, 2014), and the Autism Diagnostic Interview-Revised (ADI-R) (LeCouteur et al., 1989, Lord et al., 1994) or Social Communication Questionnaire (SCQ) (Rutter et al., 2003) when an informant was available. The ADOS-2 was scored according to the latest algorithm, and we also derived severity scores for exploratory correlation analyses (social affect (SA): 12.1±4.22 (mean±SD), restricted and repetitive behavior (RRB): 3.13±1.36, severity score for social affect (CSS SA): 8.00±1.71; severity score for restricted and repetitive behavior (CSS RRB): 7.13±1.36, severity score for social affect plus restricted and repetitive behavior (CSS All): 7.88±1.54). The ASD group had a full-scale IQ (FSIQ) of 105±13.3 (from the Wechsler Abbreviated Scale of Intelligence-2), a mean age of 30.8±7.40 years, a mean Autism Spectrum Quotient (AQ) of 29.3±8.28, a mean SRS-2 Adult Self Report (SRS-A-SR) of 84.6±21.5, and a mean Benton score of 46.1±3.89 (Benton scores 41–54 are in the normal range). ADOS item scores were not available for two participants, so we were unable to apply the revised scoring system to them; however, these individuals’ original ADOS algorithm scores all met the cutoff scores for ASD.

Fifteen neurologically and psychiatrically healthy participants with no family history of ASD (11 male) were recruited as controls. Controls had a comparable FSIQ of 107±8.69 (two-tailed t-test, P=0.74) and a comparable mean age of 35.1±11.4 years (P=0.20), but a lower AQ (17.7±4.29, P=4.62×10−5) and SRS-A-SR (51.0±30.3, P=0.0039) as expected. Controls were also matched on gender, race and education.

Participants gave written informed consent and the experiments were approved by the Caltech Institutional Review Board. All participants had normal or corrected-to-normal visual acuity. No enrolled participants were excluded for any reason, and all data are reported.

2.2. Stimuli and task

We asked participants to discriminate between two emotions, fear and happiness, because these emotions are distinguished by particular facial features (Smith et al., 2005). We selected faces of four individuals (2 female), each posing highly recognizable fear and happiness expressions, from the STOIC database (Roy et al., 2007). Selected faces served as anchors, and were unambiguous exemplars of fearful and happy emotions as evaluated with normative rating data provided by the creators. To generate the morphed expression continua for this experiment, we interpolated pixel value and location between fearful exemplar faces and happy exemplar faces using a piece-wise cubic-spline transformation over a Delaunay tessellation of manually selected control points. We created 5 levels of fear-happy morphs, ranging from 30% fear/70% happy to 70% fear/30% happy in steps of 10% (Fig. 1B); together with the two anchor faces, this yielded 7 stimulus levels per identity. Low-level image properties were equalized using the SHINE toolbox (Willenbockel et al., 2010), which provides functions for specifying the (rotational average of the) Fourier amplitude spectra, for normalizing and scaling mean luminance and contrast, and for exact histogram specification optimized for perceptual visual quality.

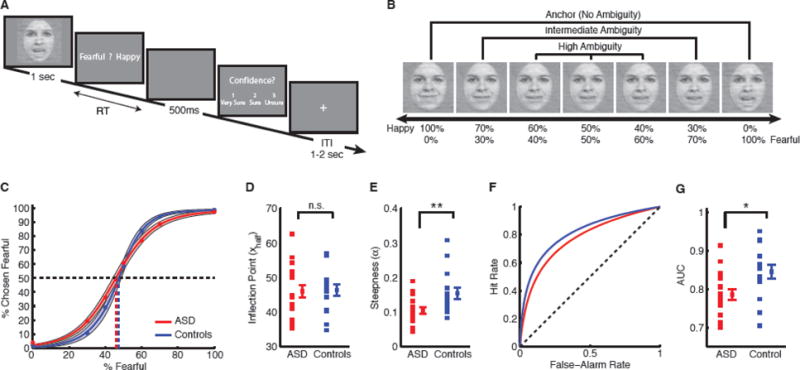

Fig. 1.

Task, sample stimuli, and behavioral results. (A) Task. A face was presented for 1 second followed by a question asking participants to identify the facial emotion (fearful or happy). After a blank screen of 500 ms, participants were then asked to indicate their confidence in their decision (‘1’ for ‘very sure’, ‘2’ for ‘sure’ or ‘3’ for ‘unsure’). Faces are not shown to scale. (B) Sample stimuli of one female identity ranging from 0% fear/100% happy to 100% fear/0% happy. (C) Group average of psychometric curves. The psychometric curves show the proportion of trials judged as fearful as a function of morph levels (ranging from 0% fearful (100% happy; on the left) to 100% fearful (0% happy; on the right)). Shaded area denotes ±SEM across participants. (D) Inflection point of the logistic function (xhalf). (E) Steepness of the psychometric curve (α). (F) ROC curves. (G) Area under ROC curves (AUC). Individual values are shown on the left and average values are shown on the right. Error bars denote one SEM across participants. Asterisks indicate significant difference using unpaired two-tailed t-test. *: P<0.05, and **: P<0.01. n.s.: not significant (P>0.05).

In each trial, a face was presented for 1 second followed by a question prompt asking participants to make the best guess of the facial emotion (Fig. 1A). After stimulus offset, participants had 2 seconds to respond, otherwise the trial was aborted and discarded. Participants were instructed to respond as quickly as possible, but only after stimulus offset. No feedback message was displayed and the order of faces was completely randomized for each participant. An inter-trial-interval (ITI) displaying a central fixation cross was jittered randomly with a uniform distribution between 1 and 2 seconds. Participants practiced 5 trials before the experiment to familiarize themselves with the task.
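The trial structure described above can be sketched in a few lines of Python. This is only an illustration, not the presentation code used in the study (which ran in MATLAB with Psychtoolbox). The identity labels are hypothetical, and the even split of the 36 trials per stimulus level across the four identities (9 repeats each) is an assumption; the total of 252 trials is the figure reported in the Results.

```python
import random

MORPH_LEVELS = [0, 30, 40, 50, 60, 70, 100]  # % fear: two anchors plus five morphs
IDENTITIES = ["F1", "F2", "M1", "M2"]        # hypothetical labels for the four faces
REPEATS = 9  # assumed: 36 trials per level split evenly over 4 identities


def build_trials(seed=None):
    """Return a shuffled list of trial dicts with per-trial timing."""
    rng = random.Random(seed)
    trials = [
        {"identity": identity, "pct_fear": level}
        for identity in IDENTITIES
        for level in MORPH_LEVELS
        for _ in range(REPEATS)
    ]
    rng.shuffle(trials)  # full randomization of face order
    for trial in trials:
        trial["face_s"] = 1.0             # face presentation time
        trial["response_window_s"] = 2.0  # later responses would be discarded
        trial["iti_s"] = rng.uniform(1.0, 2.0)  # jittered fixation-cross interval
    return trials


trials = build_trials(seed=0)
print(len(trials), "trials; first:", trials[0])
```

Passing a different seed per participant would give each participant an independent random order.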

A subset of the participants (11 participants with ASD and 11 controls) also performed confidence ratings (Fig. 1A): after the emotion judgment and a 500 ms blank screen, participants were asked to indicate their confidence by pushing the button ‘1’ for ‘very sure’, ‘2’ for ‘sure’ or ‘3’ for ‘unsure’. Participants again had 2 seconds to respond.

2.3. Behavioral analysis

We fitted a logistic function to obtain smooth psychometric curves (Fig. 1C):

$$P(x) = \frac{P_{\mathrm{inf}}}{1 + e^{-\alpha\,(x - x_{\mathrm{half}})}}$$

where P is the percentage of trials judging faces as fear, x is the morph level, Pinf is the value when x approaches infinity (the curve’s maximum value), xhalf is the symmetric inflection point (the curve’s midpoint), and α is the steepness of the curve. Pinf, xhalf, and α were fitted from the observed data (P and x). Flatter curves (smaller α) suggest that participants were less sensitive to the change in emotion intensity since they made similar judgments given different morph levels, and vice versa for steeper curves (larger α). We derived these parameters for each participant.
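As an illustration of how such a fit could be carried out, the sketch below assumes the standard three-parameter logistic P(x) = Pinf / (1 + exp(−α(x − xhalf))), which matches the parameter definitions above, and minimizes the sum of squared errors with a simple coarse-to-fine grid search. The search bounds, the optimizer, and the example proportions are all assumptions made for this sketch; the fitting routine actually used in the study is not specified here.

```python
import math


def logistic(x, p_inf, x_half, alpha):
    """Three-parameter logistic psychometric function."""
    return p_inf / (1.0 + math.exp(-alpha * (x - x_half)))


def sse(params, xs, ps):
    """Sum of squared errors between the model and observed proportions."""
    return sum((logistic(x, *params) - p) ** 2 for x, p in zip(xs, ps))


def fit_logistic(xs, ps, rounds=10, steps=11):
    """Least-squares fit by repeatedly refining a 3D parameter grid."""
    # Assumed search bounds for (p_inf, x_half, alpha).
    box = [(0.5, 1.0), (min(xs), max(xs)), (0.01, 1.0)]
    best = None
    for _ in range(rounds):
        grids = [
            [lo + (hi - lo) * i / (steps - 1) for i in range(steps)]
            for lo, hi in box
        ]
        best = min(
            ((a, b, c) for a in grids[0] for b in grids[1] for c in grids[2]),
            key=lambda params: sse(params, xs, ps),
        )
        # Halve the search box around the current best point.
        box = [
            (max(lo, v - (hi - lo) / 4.0), min(hi, v + (hi - lo) / 4.0))
            for v, (lo, hi) in zip(best, box)
        ]
    return best


# Hypothetical proportions of "fear" responses at the seven stimulus levels.
xs = [0, 30, 40, 50, 60, 70, 100]
ps = [0.01, 0.14, 0.32, 0.58, 0.78, 0.89, 0.95]
p_inf, x_half, alpha = fit_logistic(xs, ps)
print(f"Pinf={p_inf:.3f}, xhalf={x_half:.1f}, alpha={alpha:.3f}")
```

A gradient-based least-squares routine would normally be faster; the grid search is used here only because it needs no dependencies and no starting values.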

We further quantified the face judgment performance using signal detection theory (SDT) (Macmillan and Creelman, 2005). We considered all faces with %fearful > 50% as fear faces, and all faces with %fearful < 50% as happy faces (we excluded 50% fear/50% happy faces from this analysis). We defined “hits” as fear faces that were judged as fear, “false alarms” as happy faces that were judged as fear, “misses” as fear faces that were judged as happy, and “correct rejections” as happy faces that were judged as happy. By shifting the stimulus ambiguity level (i.e., the difference between %fearful and %happy; the greater the difference, the less ambiguous), we were able to construct receiver operating characteristic (ROC) curves from the smooth psychometric curves (Fig. 1C). Each point in the ROC (Fig. 1F) is a function of the proportion of correctly judged fear faces (hit rate, y-axis) and the proportion of falsely judged fear faces (false-alarm rate, x-axis) at a given stimulus ambiguity level. The point in the lower left corner (lowest false-alarm rate as well as hit rate) corresponds to the lowest stimulus ambiguity level (i.e., anchor faces). For the following analysis, we computed the area under the ROC curve (AUC; Fig. 1G). A smaller AUC results from a lower hit rate and/or a higher false-alarm rate, which in turn suggests reduced specificity.
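The signal detection definitions above translate directly into code. The sketch below classifies trials into hits, misses, false alarms, and correct rejections, and adds a generic trapezoidal AUC helper. It does not reproduce the specific procedure by which ROC points were swept across ambiguity levels from the fitted curves; the trial format, function names, and toy trials are assumptions made for illustration.

```python
def sdt_rates(trials):
    """Compute SDT counts and rates from (pct_fear, judged_fear) pairs."""
    counts = {"hit": 0, "miss": 0, "false_alarm": 0, "correct_rejection": 0}
    for pct_fear, judged_fear in trials:
        if pct_fear == 50:
            continue  # 50% fear/50% happy faces are excluded
        if pct_fear > 50:  # fear face
            counts["hit" if judged_fear else "miss"] += 1
        else:              # happy face
            counts["false_alarm" if judged_fear else "correct_rejection"] += 1
    n_fear = counts["hit"] + counts["miss"]
    n_happy = counts["false_alarm"] + counts["correct_rejection"]
    # Assumes at least one fear face and one happy face among the trials.
    return counts, counts["hit"] / n_fear, counts["false_alarm"] / n_happy


def auc_trapezoid(roc_points):
    """Trapezoidal area under (false_alarm_rate, hit_rate) points.

    The end points (0, 0) and (1, 1) are added automatically.
    """
    pts = sorted(set(roc_points) | {(0.0, 0.0), (1.0, 1.0)})
    return sum((x1 - x0) * (y0 + y1) / 2.0
               for (x0, y0), (x1, y1) in zip(pts, pts[1:]))


# Toy trials: (percent fear in the face, whether it was judged as fear).
toy = [(70, True), (60, True), (60, False), (100, True),
       (30, False), (40, True), (0, False), (50, True)]
counts, hit_rate, fa_rate = sdt_rates(toy)
print(counts, hit_rate, fa_rate, auc_trapezoid([(fa_rate, hit_rate)]))
```

With no ROC points supplied, the helper returns the chance-level area of 0.5, which is a convenient sanity check.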

2.4. Eye tracking and apparatus

Eye tracking was carried out using a non-invasive infra-red remote Tobii X300 system, which recorded binocular gaze at 300 Hz. The Tobii visualization software (Tobii Studio™ 2.2) was used to record eye movements and perform gaze analysis. Fixations were detected by the Tobii Fixation Filter implemented in Tobii Studio. The Tobii Fixation Filter is a classification algorithm proposed by Olsson (2007) that detects quick changes in the gaze point using a sliding-window averaging method. The velocity threshold was set to 35 pixels/sample and the distance threshold was set to 35 pixels in our study. Although this fixation filter did not control for the minimum fixation duration, we derived qualitatively the same results when excluding fixations that were shorter than 100 ms. Furthermore, although our procedure did not quantitatively verify gaze recording accuracy, participants with ASD had a similar valid recording duration per trial compared to controls (ASD: 947±27.8 ms, controls: 959±32.5 ms; t(31)=1.10, P=0.28), indicating a similar recording quality of eye movement.

To quantitatively compare the fixation densities within certain parts of the face, we defined three ROIs: eyes, mouth and center (Fig. 3A). Each ROI is a rectangle, and the eye and mouth ROIs have the same size. The fixation density was calculated for each participant and for each morph level during the entire 1 second stimulus period, and was normalized within each participant. Fixation locations were smoothed with a 40-pixel 2D Gaussian kernel with a standard deviation of 10 pixels. Statistical maps were computed after density maps were smoothed with a smaller Gaussian kernel (20-pixel in size, with a standard deviation of 5) to obtain more localized differences.
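A minimal sketch of the density computation might look as follows. It stamps a normalized 40-pixel Gaussian kernel (standard deviation 10 pixels) at each fixation, normalizes the resulting map, and sums the density inside each rectangular ROI. The ROI coordinates, the 500 × 500 pixel map size, and the example fixations are hypothetical placeholders and are not the values used in the study.

```python
import math


def gaussian_kernel(size=40, sd=10.0):
    """Square 2D Gaussian kernel, normalized to sum to 1."""
    c = (size - 1) / 2.0
    k = [[math.exp(-((i - c) ** 2 + (j - c) ** 2) / (2.0 * sd ** 2))
          for j in range(size)] for i in range(size)]
    total = sum(map(sum, k))
    return [[v / total for v in row] for row in k]


def density_map(fixations, width, height, kernel):
    """Stamp the kernel at each (x, y) fixation; normalize the map to sum to 1."""
    half = len(kernel) // 2
    dmap = [[0.0] * width for _ in range(height)]
    for fx, fy in fixations:
        for i, row in enumerate(kernel):
            y = int(fy) - half + i
            if not 0 <= y < height:
                continue
            for j, w in enumerate(row):
                x = int(fx) - half + j
                if 0 <= x < width:
                    dmap[y][x] += w
    total = sum(map(sum, dmap))  # assumes at least one fixation on the map
    return [[v / total for v in row] for row in dmap]


def roi_percent(dmap, roi):
    """Percentage of total fixation density inside a rectangle (x0, y0, x1, y1)."""
    x0, y0, x1, y1 = roi
    return 100.0 * sum(sum(row[x0:x1]) for row in dmap[y0:y1])


# Hypothetical ROI rectangles in stimulus pixels (eyes and mouth equal in size).
ROIS = {"eyes": (100, 150, 400, 250),
        "mouth": (100, 350, 400, 450),
        "center": (175, 250, 325, 350)}

kernel = gaussian_kernel()
fixations = [(250, 200), (250, 200), (250, 400)]  # toy fixation locations
dmap = density_map(fixations, 500, 500, kernel)
percent = {name: roi_percent(dmap, roi) for name, roi in ROIS.items()}
print(percent)
```

Stamping the kernel at each fixation is equivalent to convolving a map of fixation counts with that kernel, apart from edge handling.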

Fig. 2.

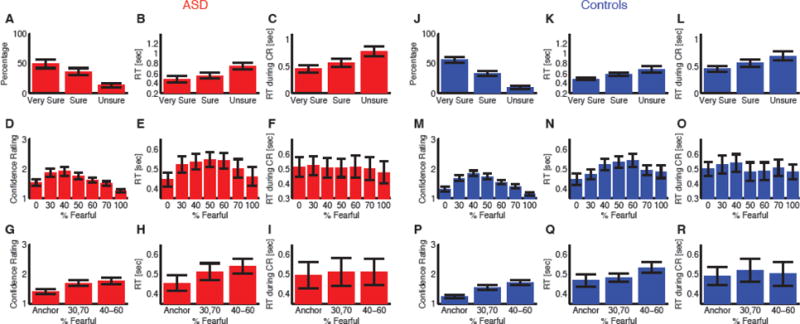

Confidence judgments. (A–I) Participants with ASD. (J–R) Controls. (A, J) Both participants with ASD and controls reported high confidence most often. (B, K) Participants judged facial emotions faster when they subsequently indicated higher confidence. (C, L) Participants tended to report confidence faster for higher confidence. (D, M) Explicit confidence ratings showed highest confidence for anchor faces and lowest for the most ambiguous (50% fear/50% happy) faces. (E, N) The reaction time (RT) for the fear/happy decision can be considered as an implicit measure of confidence because it showed a similar pattern as the explicit ratings. (F, O) RT of reporting confidence did not vary as a function of morph level. (G, P) Explicit confidence ratings varied systematically as a function of emotion ambiguity. (H, Q) Implicit confidence measures (RT) also varied systematically as a function of emotion ambiguity. (I, R) Participants did not show difference in RTs of reporting confidence for different ambiguity levels. Error bars denote one SEM across participants.

Participants sat approximately 65 cm from an LCD display with a 23-inch screen (screen resolution: 1920 × 1080). The refresh rate of the display was 60 Hz and the stimuli occupied the center of the display (11.9°×11.9° visual angle). Stimuli were presented using MATLAB with the Psychtoolbox 3 (Brainard, 1997) (http://psychtoolbox.org).
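For readers who want to relate the reported visual angle to on-screen size, the following sketch converts 11.9° at a 65 cm viewing distance into centimeters and pixels. It assumes square pixels, a flat screen viewed head-on, and a viewable diagonal equal to the nominal 23 inches; under those assumptions the stimulus side works out to roughly 13.5 cm, on the order of 510 pixels. The on-screen pixel size is not stated in the text, so this is an estimate only.

```python
import math


def stimulus_size(angle_deg, distance_cm, diag_inch, res_x, res_y):
    """Return (size_cm, size_px) for a stimulus subtending angle_deg."""
    # Size of an object centered on the line of sight.
    size_cm = 2.0 * distance_cm * math.tan(math.radians(angle_deg) / 2.0)
    # Pixel density from the screen diagonal (assumes square pixels).
    px_per_cm = math.hypot(res_x, res_y) / (diag_inch * 2.54)
    return size_cm, size_cm * px_per_cm


size_cm, size_px = stimulus_size(11.9, 65.0, 23.0, 1920, 1080)
print(f"{size_cm:.2f} cm, about {size_px:.0f} px per side")
```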

3. Results

3.1. Emotion judgments

We asked participants to judge emotional faces as fearful or happy (Fig. 1A). Faces were either unambiguously happy or unambiguously fearful (“anchors”), or graded ambiguous morphs between these two emotions (Fig. 1B). For each participant, we quantified behavior as the proportion of trials identified as fearful as a function of morph level (Fig. 1C). For both participants with ASD and controls, we found a monotonically increasing relationship between the likelihood of identifying a face as fearful and the proportion of fear shown in the morphed face (Fig. 1C), showing that participants with ASD could track the gradual change in emotions well. We quantified each psychometric curve using two metrics derived from the logistic function: i) xhalf, the midpoint of the curve (in units of %fearful) at which participants were equally likely to judge a face as fearful or happy, and ii) α, the steepness of the psychometric curve. Based on these two metrics, participants with ASD had an xhalf similar to that of controls (Fig. 1D; ASD: 46.0±7.38 (mean±SD), controls: 46.4±6.28; two-tailed t-test across participants: t(31)=0.13, P=0.90, effect size in Hedges’ g (standardized mean difference): g=0.044, permutation test with 1000 runs, P=0.96). However, they had a significantly smaller α (Fig. 1E; ASD: 0.11±0.037, controls: 0.16±0.062; t(31)=2.86, P=0.0075, g=0.98, permutation P=0.006), that is, flatter psychometric curves. Flatter curves (smaller α) suggest that participants with ASD were less specific in their emotion judgments, since they made similar judgments given different morph levels, consistent with a previous study showing that people with ASD have reduced specificity when judging multiple emotions in facial expressions (Kennedy and Adolphs, 2012).
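The effect size and t statistic for α can be approximately recovered from the reported summary statistics. The sketch below assumes a pooled-variance two-sample t-test and the common small-sample correction J = 1 − 3/(4·df − 1) for Hedges’ g; whether the analysis used exactly this correction is an assumption. Because the inputs are rounded means and SDs, the outputs match the reported values only to about two significant digits.

```python
import math


def hedges_g_and_t(m1, sd1, n1, m2, sd2, n2):
    """Hedges' g and pooled-variance t from group means, SDs, and sizes."""
    df = n1 + n2 - 2
    pooled_sd = math.sqrt(((n1 - 1) * sd1 ** 2 + (n2 - 1) * sd2 ** 2) / df)
    d = (m1 - m2) / pooled_sd        # Cohen's d
    j = 1.0 - 3.0 / (4.0 * df - 1.0)  # small-sample bias correction
    t = d / math.sqrt(1.0 / n1 + 1.0 / n2)
    return d * j, t, df


# Steepness (alpha): controls (n=15) versus ASD (n=18), values from the text.
g, t, df = hedges_g_and_t(0.16, 0.062, 15, 0.11, 0.037, 18)
print(f"g={g:.2f}, t({df})={t:.2f}")
```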

We further confirmed our results using signal detection theory (see Methods). We considered all faces with %fearful > 50% as fear faces, and all faces with %fearful < 50% as happy faces (we excluded 50% fear/50% happy faces from this analysis). We constructed ROC curves (Fig. 1F) from the smooth psychometric curves, with correctly judged fear faces as “hits” and incorrectly judged happy faces as “false alarms”. Participants with ASD had a shallower ROC curve (Fig. 1F) and thus a smaller AUC compared to controls (Fig. 1G; ASD: 0.79±0.060, controls: 0.85±0.069; t(31)=2.66, P=0.012, g=0.91, permutation P=0.012), confirming that the reduced specificity in participants with ASD was due to more incorrect judgments of emotions.
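The permutation P values reported alongside each t-test can be illustrated with a simple label-shuffling test. This sketch assumes the test statistic is the absolute difference in group means and uses 1000 shuffles, as stated for the permutation tests in this section; the actual statistic used and the example AUC values below are assumptions (the values are invented for illustration and are not study data).

```python
import random


def mean(values):
    return sum(values) / len(values)


def permutation_p(group_a, group_b, n_perm=1000, seed=0):
    """Two-sided permutation P value for a difference in group means."""
    rng = random.Random(seed)
    observed = abs(mean(group_a) - mean(group_b))
    pooled = list(group_a) + list(group_b)
    n_a = len(group_a)
    extreme = 0
    for _ in range(n_perm):
        rng.shuffle(pooled)  # randomly reassign group labels
        diff = abs(mean(pooled[:n_a]) - mean(pooled[n_a:]))
        if diff >= observed:
            extreme += 1
    return extreme / n_perm


# Invented per-participant AUC values, for illustration only.
auc_a = [0.74, 0.78, 0.80, 0.83, 0.76, 0.81]
auc_b = [0.84, 0.88, 0.82, 0.90, 0.86, 0.79]
print(permutation_p(auc_a, auc_b))
```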

Each participant completed 252 trials in 3 blocks (36 trials per morph level), and participants with ASD had a similar number of valid trials (i.e., trials that participants responded to with a button press) compared to controls (ASD: 246.7±4.91, controls: 248.4±4.58; t(31)=1.04, P=0.31, g=0.36, permutation P=0.31). Participants with ASD took a similar amount of time to complete the entire experiment (ASD: 22.5±5.72 minutes, controls: 20.1±3.73 minutes; t(31)=1.42, P=0.17, g=0.48, permutation P=0.14). We furthermore checked that the reduced specificity of emotion judgment in ASD was constant across time and not driven by differential adaptation, by repeating our analysis separately for the first and second half of the trials. Again, participants with ASD had a similar number of valid trials compared to controls for both the first half (ASD: 122.8±2.90, controls: 123.4±3.27; t(31)=0.53, P=0.60, g=0.18, permutation P=0.57) and second half (ASD: 123.8±3.00, controls: 125.0±1.93; t(31)=1.30, P=0.20, g=0.44, permutation P=0.19) of the experiment. Participants with ASD had a smaller AUC in both the first half (ASD: 0.78±0.058, controls: 0.83±0.068; t(31)=2.46, P=0.020, g=0.84, permutation P=0.016) and second half (ASD: 0.80±0.081, controls: 0.86±0.076; t(31)=2.49, P=0.019, g=0.85, permutation P=0.014) of the experiment, confirming that the reduced specificity of emotion judgment in ASD is not a function of time on task.

Together, our results show that although participants with ASD had a normal threshold for reporting emotions along the fear-happy dimension, their specificity was reduced.

3.2. Confidence judgments

For 11 of the participants with ASD and 11 controls, after reporting a face as fearful or happy, we also asked them to report their confidence in their decisions (Fig. 1A). Both participants with ASD and controls reported high confidence of ‘Very Sure’ more often than middle confidence of ‘Sure’ and low confidence of ‘Unsure’ (Fig. 2A, J; one-way repeated-measure ANOVA of confidence levels; ASD: F(2,20)=6.72, P=0.0059, η2=0.40; controls: F(2,20)=22.7, P=7.25×10−6, η2=0.69). As expected, they judged emotions significantly faster when they subsequently reported higher confidence (Fig. 2B, K; ASD: F(2,20)=23.1, P=6.42×10−6, η2=0.26; controls: F(2,19)=9.13, P=0.0013, η2=0.32) and reported confidence significantly faster when they reported higher confidence (Fig. 2C, L; ASD: F(2,20)=14.7, P=1.18×10−4, η2=0.22; controls: F(2,19)=8.55, P=0.0014, η2=0.22). Notably, there was no significant difference between participants with ASD and controls in this respect (two-way repeated-measure ANOVA of participant group X confidence level; main effect of participant group: all Ps>0.70; main effect of confidence level: all Ps<1.86×10−7) and there were no interactions between participant groups and confidence levels (all Ps>0.47).

Emotion ambiguity can be defined as the variability or consistency in categorizing a given morphed face as a particular emotion (that is, mapping the face stimulus onto the two alternative category labels provided in our task). The more variable the judgment, the more ambiguous the emotion shown in the face (Fig. 1B, C). When breaking this down by morph levels, both participant groups reported significantly higher levels of confidence for anchor faces (no ambiguity) compared to ambiguous faces (Fig. 2D, M; one-way repeated-measure ANOVA of morph levels; ASD: F(6,60)=17.2, P=1.81×10−11, η2=0.28; controls: F(6,60)=31.2, P=1.07×10−16, η2=0.44). The reaction time (RT) for the fear/happy decision can be considered an implicit measure of confidence because it showed a similar pattern to the explicit ratings, and is what one would expect theoretically (longer processing times would be required for more ambiguous stimuli). Indeed, we found that RT for the fearful/happy judgment was faster for anchor faces compared to ambiguous faces (Fig. 2E, N; ASD: F(6,102)=7.32, P=1.61×10−6, η2=0.045; controls: F(6,84)=5.02, P=1.93×10−4, η2=0.082; note that all 18 ASD participants and 15 controls were included for this RT analysis). However, the RT of reporting the confidence judgment itself was similar across morph levels (Fig. 2F, O; ASD: F(6,60)=0.50, P=0.80, η2=0.0038; controls: F(6,60)=1.64, P=0.15, η2=0.015), possibly because all participants first made an emotion discrimination prior to their confidence judgment, and would thus already have judged confidence by the time this response was requested. Again, we found no significant difference between participant groups (two-way repeated-measure ANOVA of participant group X morph level; main effect of participant group: all Ps>0.36; main effect of morph level for explicit confidence rating: P=3.09×10−28, RT of emotion judgment: P=1.15×10−10, and RT of confidence judgment: P=0.22) nor any interactions (all Ps>0.33).
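The degrees of freedom of these one-way repeated-measures ANOVAs follow from the design and can be cross-checked with a short calculation. The sketch assumes a balanced design with complete data for every participant and no sphericity correction; with 7 stimulus levels, 11 participants give (6, 60), while the RT analyses with 18 and 15 participants give (6, 102) and (6, 84).

```python
def rm_anova_df(n_levels, n_participants):
    """Effect and error degrees of freedom for a one-way repeated-measures ANOVA."""
    df_effect = n_levels - 1
    df_error = df_effect * (n_participants - 1)
    return df_effect, df_error


for label, n in [("confidence subset", 11), ("ASD, RT", 18), ("controls, RT", 15)]:
    print(label, rm_anova_df(7, n))
```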

For further analyses, we grouped all trials into three levels of ambiguity (Fig. 1B; anchor, intermediate (30%/70% morph), and high (40%–60% morph)), which showed the expected systematic relationships with explicit confidence ratings (Fig. 2G, P; one-way repeated-measure ANOVA of ambiguity levels; ASD: F(2,20)=30.1, P=9.34×10−7, η2=0.21; controls: F(2,20)=69.3, P=1.02×10−9, η2=0.41) and RT of emotion judgment (Fig. 2H, Q; ASD: F(2,34)=21.0, P=1.13×10−6, η2=0.046; controls: F(2,28)=8.83, P=0.0011, η2=0.075; note that all 18 ASD participants and 15 controls were included for this RT analysis), but not RT of confidence judgment (Fig. 2I, R; ASD: F(2,20)=0.45, P=0.64, η2=0.0014; controls: F(2,20)=1.04, P=0.37, η2=0.0045). Still, this relationship was similar in participant groups (two-way repeated-measure ANOVA of participant group X ambiguity level; main effect of participant group: all Ps>0.31; main effect of ambiguity level for explicit confidence rating: P=1.39×10−15, RT of emotion judgment: P=4.03×10−9, and RT of confidence judgment: P=0.28) and there were no interactions between participant groups and ambiguity levels (all Ps>0.13).
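The grouping of stimulus levels into three ambiguity levels can be expressed as a small lookup based on the distance from the 50% morph. The function name and the decision to raise an error for any other value are choices made for this sketch only.

```python
def ambiguity_level(pct_fear):
    """Map a stimulus level (% fear) to its ambiguity category."""
    distance = abs(pct_fear - 50)  # distance from the 50/50 morph
    if distance == 50:
        return "anchor"        # 0% or 100% fear
    if distance == 20:
        return "intermediate"  # 30% or 70% fear
    if distance <= 10:
        return "high"          # 40%, 50%, or 60% fear
    raise ValueError(f"unexpected stimulus level: {pct_fear}")


levels = [0, 30, 40, 50, 60, 70, 100]
print({level: ambiguity_level(level) for level in levels})
```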

Thus, we found very similar patterns of confidence judgments (both explicit confidence ratings and implicit measures using RT) between participants with ASD and controls, arguing that participants with ASD were not impaired in judging ambiguity and confidence.

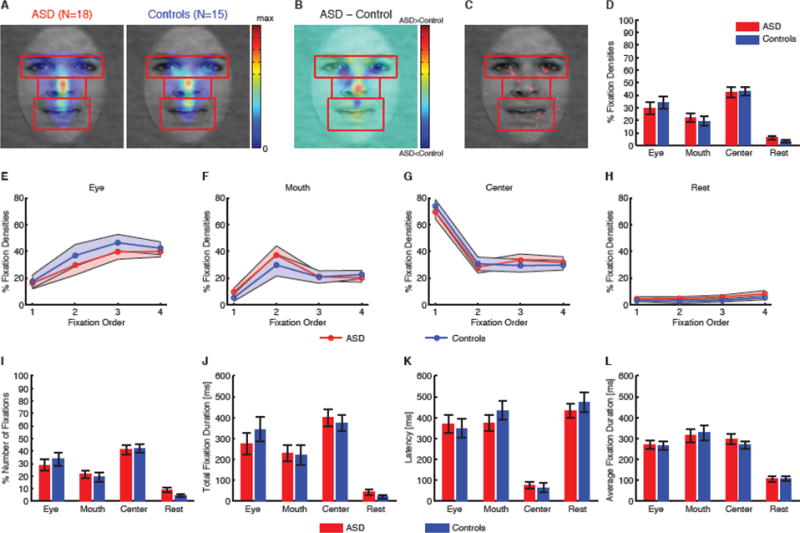

3.3. Fixation analysis did not reveal differences between participants with ASD and controls

Could the reduced specificity of emotion judgment in participants with ASD be attributed to fixating different parts of the face? To answer this question, we next analyzed fixation patterns. We first analyzed fixation densities. Fixation density maps were remarkably similar between participant groups (Fig. 3A), although participants with ASD fixated slightly more at the center and less around eyes (Fig. 3B). Direct comparisons with individual density maps (two-tailed t-test at each pixel, uncorrected with P<0.05; Fig. 3C) revealed small regions near the eyes that participants with ASD fixated less, but regions around the center that participants with ASD fixated more. These results may be consistent with previous findings that have suggested that individuals with ASD actively avoid eyes (Spezio et al., 2007, Kliemann et al., 2010) (but see (Moriuchi et al., 2017)) and have a stronger bias to fixate the center of the image (Wang et al., 2015). However, these differences tended to be small—when we analyzed fixation densities in each region of interest (ROI) (Fig. 3A, D), we found that the groups were statistically indistinguishable in terms of their propensity to fixate the eye (ASD: 29.7±20.3% (mean±SD), controls: 34.1±20.0%; two-tailed t-test: t(31)=0.63, P=0.53, g=0.22, permutation P=0.56), mouth (ASD: 22.1±14.9%, controls: 19.3±13.8%; t(31)=0.55, P=0.59, g=0.19, permutation P=0.59) and center (ASD: 42.3±16.4%, controls: 43.2±12.8%; t(31)=0.16, P=0.88, g=0.054, permutation P=0.88) ROI. The difference between the eye and mouth ROI was also similar between participant groups (ASD: 7.62±33.3%, controls: 14.8±32.3%; t(31)=0.63, P=0.53, g=0.21, permutation P=0.51).

Fig. 3.

Eye movement comparisons between participants with ASD and controls. (A) Fixation density maps to quantify eye movements for participants with ASD and controls. Each map shows the probability of fixating a given location within a 1s period after face onset. The scale bar (color bar) is common for both plots (arbitrary units). The ROIs (eye, mouth, center) used for analysis are shown in red (not shown to participants). (B) Group difference density map shows areas that participants with ASD fixated more than controls (red), and vice versa (blue), with green meaning there was no difference between groups. (C) Statistical map shows areas that had significant difference in density maps between groups (red; two-tailed t-test between individual density maps at each pixel, P<0.05 uncorrected). (D) Percentage of fixation density in each ROI. (E–H) Percentage of fixation density in each ROI over serial order of fixations. (E) Eye. (F) Mouth. (G) Center. (H) Area not in any of the ROI. Shaded area denotes ± SEM over the group of participants. (I) Percentage of the number of fixations in each ROI. (J) Total fixation duration in each ROI. (K) Latency of the first fixation onto each ROI. (L) Average fixation duration on each ROI. Error bars denote one SEM.

In the above analysis, we collapsed fixations from the entire trial. However, differences could appear at individual fixations because people with ASD may have atypical bottom-up attention (Wang et al., 2015). To investigate this possibility, we next performed fixation-by-fixation analysis on the first 4 fixations in fixation serial order and compared fixation densities in ROIs over serial order of fixations (Fig. 3E–H). Both participants with ASD and controls started from the center (Fig. 3G; note the preceding central fixation) and subsequently saccaded to the eye (Fig. 3E) and mouth (Fig. 3F). Fixations on eyes gradually increased over time whereas fixations on the mouth reached their peak at the second fixation. There was only a small proportion of fixations outside ROIs (Fig. 3H). However, at each fixation in serial order, participants with ASD had similar fixation densities as controls in each ROI (Fig. 3E–H; two-tailed t-test at each fixation: all Ps>0.05), suggesting that they had normal viewing patterns when judging facial emotions.

Furthermore, in each ROI, we found remarkably similar percentage of the number of fixations (Fig. 3I), total fixation duration (Fig. 3J), latency to first fixate onto an ROI (Fig. 3K), as well as mean fixation duration (Fig. 3L), between participant groups (all Ps>0.05; Supplemental Results).

In conclusion, the reduced specificity of emotion judgment in participants with ASD, at least in our sample, is difficult to attribute to gross differences in eye movements, and instead appears to arise from central cognitive processing differences.

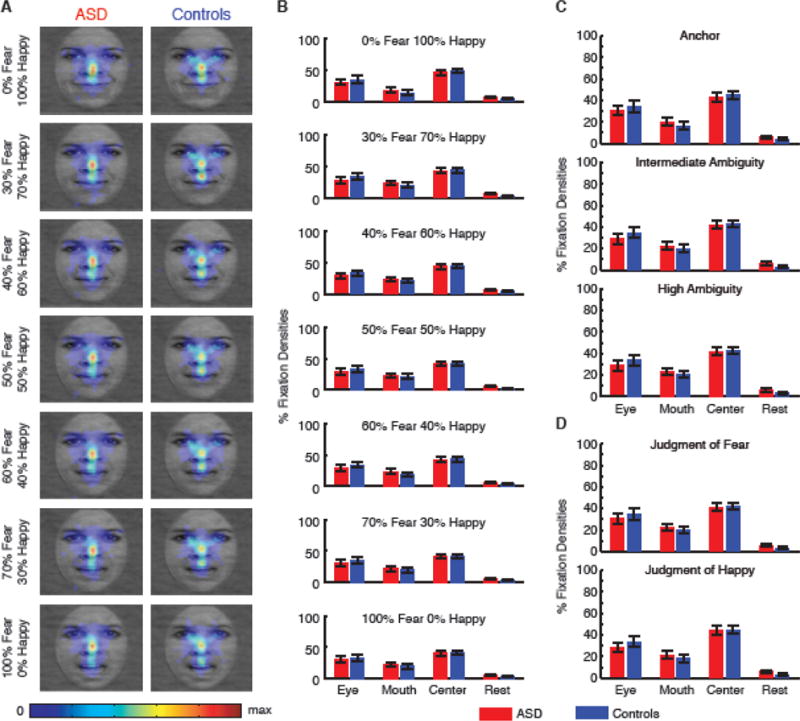

3.4. Fixation density was similar across morph levels, ambiguity levels, and emotion judgments

Does fixation density vary systematically as a function of stimulus and/or emotion judgment? To answer this question, we first separately analyzed fixation density at each morph level. For both participants with ASD and controls, fixation density maps were remarkably similar across morph levels (Fig. 4A). Direct comparisons between participants with ASD and controls in each ROI showed no significant difference at each morph level (Fig. 4B; all Ps>0.05). Furthermore, we also found remarkably similar fixation densities when we broke the results down by ambiguity levels (Fig. 4C). At each ambiguity level, participants with ASD did not differ in fixation density from controls in any of the ROIs or the difference between the eye and mouth ROI (all Ps>0.05). Together, our results suggest that participants adopted uniform strategies to view faces regardless of stimulus.

Fig. 4.

Fixation densities across stimulus levels and behavioral judgments. (A) Fixation density maps broken down by morph level. Each map shows the probability of fixating a given location within a 1s period after face onset. The scale bar (color bar) is common for all plots (arbitrary units). (B) Percentage of fixation density in each ROI, broken down by morph level. (C) Percentage of fixation density in each ROI, broken down by ambiguity level. (D) Percentage of fixation density in each ROI when behavioral judgments were all ‘fear’ (upper) or ‘happy’ (lower). Error bars denote one SEM.

We next analyzed fixation densities broken down by behavioral judgments (Fig. 4D). Within each participant group, fixation densities did not differ between behavioral judgments (two-tailed paired t-test, Ps>0.05 for all ROIs). Between participant groups, fixation densities did not differ in any ROI for either behavioral judgment (all Ps>0.05). Our results suggest that eye movements did not bias perceptual decisions.

In conclusion, our results suggest that eye movements during facial emotion judgment were independent of stimulus levels or behavioral judgments in our task.

3.5. RT but not fixation noise was associated with the correctness of emotion judgment

We next analyzed RT and eye movement as a function of the correctness of emotion judgment. We found that when judging happy faces (%fearful < 50%), RT was shorter for happy responses than fear responses, whereas when judging fear faces (%fearful > 50%), RT was shorter for fear responses than happy responses. This was the case for individual morph levels, as well as the average of all fear or happy faces, for both participants with ASD and controls (Fig. 5A). These results together showed that participants took longer to respond when they made an incorrect judgment, likely because they were less confident or decisive in those trials (the opposite of a standard speed-accuracy tradeoff). This result was further confirmed by a significant interaction between stimuli and response in both participants with ASD (two-way repeated-measure ANOVA of stimuli X response for all fear vs. happy faces: main effect of stimuli: F(1,34)=0.64, P=0.43, η2=0.013, main effect of response, F(1,34)=1.24, P=0.27, η2=0.0072, interaction: F(1,34)=15.5, P=3.82×10−4, η2=0.090) and controls (main effect of stimuli: F(1,28)=0.0084, P=0.93, η2=1.45×10−4, main effect of response, F(1,28)=1.50, P=0.23, η2=0.018, interaction: F(1,28)=13.8, P=9.13×10−4, η2=0.16). However, these differences between the correctly judged emotion and incorrectly judged emotion showed no significant differences between participants with ASD and controls, nor any significant correlation with xhalf, α, AQ, or SRS scores (all Ps>0.05).

Fig. 5.

RT and eye movement as a function of response. (A) RT. (B) The number of fixations per trial. (C) Mean fixation duration. The filled bars denote fear response and the open bars denote happy response. Red: ASD. Blue: controls. Error bars denote one SEM across participants. Asterisks indicate significant difference using paired two-tailed t-test. *: P<0.05, **: P<0.01, and ***: P<0.001.

We further examined the possibility that noise in eye movements might be associated with the correctness of emotion judgment. Both the number of fixations per trial and mean fixation duration could index fixation noise, i.e., the more fixations per trial and/or the shorter the mean fixation duration, the noisier the viewing. However, we found that both the number of fixations per trial (Fig. 5B) and mean fixation duration (Fig. 5C) were similar between correctly judged emotions and incorrectly judged emotions, suggesting that fixation noise was not associated with the correctness of emotion judgment.
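As a rough illustration of the two fixation noise indices, the sketch below computes the mean number of fixations per trial and the mean fixation duration for a set of trials. The function name and the example durations are hypothetical, and pooling durations across trials (rather than averaging per-trial means) is an assumption made here for simplicity.

```python
from statistics import mean


def fixation_noise_indices(trials):
    """Return (mean number of fixations per trial, mean fixation duration in ms).

    `trials` is a list of trials; each trial is a list of fixation durations (ms).
    Durations are pooled across trials before averaging (an assumption).
    """
    n_per_trial = [len(t) for t in trials]
    all_durations = [d for t in trials for d in t]
    return mean(n_per_trial), mean(all_durations)


# Hypothetical fixation durations (ms) for correctly and incorrectly judged trials.
correct_trials = [[300, 250, 280], [400, 350]]
incorrect_trials = [[200, 220, 180, 240], [310, 290, 300]]

n_correct, dur_correct = fixation_noise_indices(correct_trials)
n_incorrect, dur_incorrect = fixation_noise_indices(incorrect_trials)
```

Under the interpretation given above, more fixations per trial and shorter mean durations would indicate noisier viewing.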

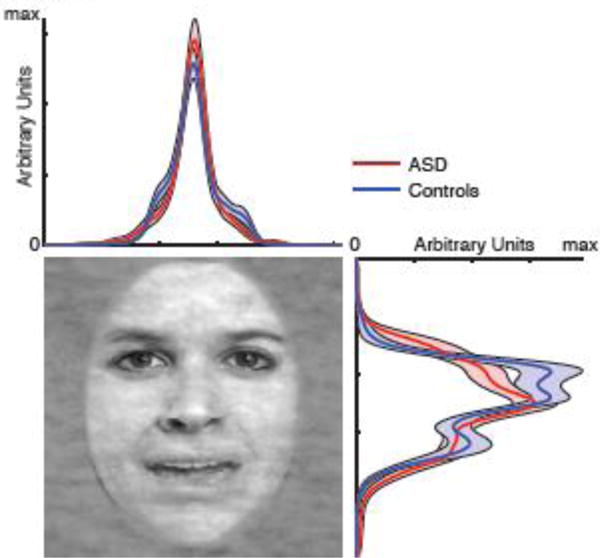

3.6. Neither spatial distribution of fixations nor fixation parameters could predict reduced specificity of emotion judgment in ASD

Could reduced specificity of emotion judgment in participants with ASD be due to different fixation parameters? We next examined spatial distribution of fixations. For each participant, we collapsed fixations from all trials during the 1s stimulus period. We found that participants with ASD had remarkably similar fixation distributions compared to controls (Fig. 6). Direct comparison at each pixel revealed no significant difference along either vertical or horizontal dimensions (two-tailed t-test, all Ps>0.05). Our results suggest that reduced specificity of emotion judgment in participants with ASD could not be attributed to different spatial distributions of fixations.

Fig. 6.

Spatial distribution of fixations along each dimension of the face. Each curve is the probability density function of fixating a given location within a 1s period after face onset. Shaded area denotes ±SEM across participants.

Participants with ASD had similar valid recording durations per trial compared to controls (ASD: 947±27.8 ms, controls: 959±32.5 ms; t(31)=1.10, P=0.28, g=0.38, permutation P=0.26). Furthermore, both groups had similar total number of fixations per trial (ASD: 3.14±2.18, controls: 2.84±0.48; t(31)=0.53, P=0.60, g=0.18, permutation P=0.91). These results ruled out the possibility that any eye movement difference was due to poorer recording of eye movements.
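For readers who want to reproduce statistics of this form, the following is a minimal sketch of an effect size and a permutation test for two independent groups. It assumes that g denotes Hedges' g and that the permutation test shuffles group labels and compares absolute differences in means; neither detail is specified in this section, and the function names and parameters are hypothetical.

```python
import math
import random
import statistics


def hedges_g(a, b):
    """Bias-corrected standardized mean difference between two independent samples."""
    n1, n2 = len(a), len(b)
    pooled_var = (
        (n1 - 1) * statistics.variance(a) + (n2 - 1) * statistics.variance(b)
    ) / (n1 + n2 - 2)
    d = (statistics.mean(a) - statistics.mean(b)) / math.sqrt(pooled_var)
    correction = 1 - 3 / (4 * (n1 + n2) - 9)  # small-sample bias correction
    return d * correction


def permutation_p(a, b, n_perm=2000, seed=0):
    """Two-sided permutation P value for the difference in group means."""
    rng = random.Random(seed)
    observed = abs(statistics.mean(a) - statistics.mean(b))
    pooled = list(a) + list(b)
    count = 0
    for _ in range(n_perm):
        rng.shuffle(pooled)  # reassign group labels at random
        diff = abs(
            statistics.mean(pooled[: len(a)]) - statistics.mean(pooled[len(a):])
        )
        if diff >= observed:
            count += 1
    # Add-one correction keeps the estimate strictly above zero.
    return (count + 1) / (n_perm + 1)
```

Each function takes two lists of per-participant values, for example valid recording durations in the ASD and control groups.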

Lastly, we examined in detail whether fixation parameters could predict incorrect judgment of emotions. We used the number of fixations per trial and mean fixation duration. Also, we considered all faces with %fearful > 50% as fear faces, and all faces with %fearful < 50% as happy faces (we excluded 50% fear/50% happy faces from this analysis), and partitioned all trials into correct trials (response matched stimulus) and incorrect trials (vice versa). We constructed a general linear mixed model (GLMM) to analyze the relationship between the correctness of emotion judgment and participant group, the number of fixations per trial, and mean fixation duration. This analysis used all single trials from all participants, thus having the highest resolution.
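The trial partition described above can be expressed compactly. The sketch below is illustrative only; the function name and the string labels for responses are hypothetical.

```python
def label_trial(percent_fearful, response):
    """Return True (correct), False (incorrect), or None (excluded 50% morph).

    `percent_fearful` is the morph level in % fearful;
    `response` is the participant's judgment, "fear" or "happy".
    """
    if percent_fearful == 50:
        return None  # 50% fear/50% happy faces are excluded from this analysis
    stimulus = "fear" if percent_fearful > 50 else "happy"
    return response == stimulus


# Hypothetical (morph level, response) pairs.
example = [(70, "fear"), (30, "fear"), (50, "happy"), (100, "happy")]
labels = [label_trial(level, resp) for level, resp in example]
```

The resulting per-trial labels form the binary outcome that the mixed model relates to participant group and the fixation parameters.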

We first used participant group and mean fixation duration as fixed effects and by-participant random intercept as well as by-participant random slopes as random effects. We found that participant group could predict the correctness of emotion judgment with a significant regression coefficient (β=0.51, 95% confidence interval (CI) = [0.26 0.76], F(1,6942)=16.3, P=5.51×10−5), confirming that participants with ASD had more incorrect emotion judgments and thus reduced specificity of emotion judgment (also see our ROC analysis above). However, we did not find a significant regression coefficient for mean fixation duration (β=0.00041, 95% CI = [−0.00048 0.0013], F(1,6942)=0.82, P=0.36), suggesting that mean fixation duration could not predict the correctness of emotion judgment. Similarly, using participant group and the number of fixations per trial as fixed effects and by-participant random intercept as well as by-participant random slopes as random effects, participant group showed a trend toward predicting the correctness of emotion judgment that did not reach significance (β=0.32, 95% CI = [−0.011 0.66], F(1,6942)=3.60, P=0.058), and the number of fixations per trial could not predict the correctness of emotion judgment either (β=−0.049, 95% CI = [−0.15 0.053], F(1,6942)=0.88, P=0.35). Lastly, a full model with the fixed effects of participant group, the number of fixations per trial, and mean fixation duration did not significantly outperform either the model shown above without the fixed effect of mean fixation duration (χ2(4)=3.24, P=0.52) or the model shown above without the fixed effect of the number of fixations per trial (χ2(4)=3.32, P=0.51), confirming that mean fixation duration and the number of fixations per trial, two metrics indexing fixation noise, could not explain the variance in emotion judgment.
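For concreteness, one way to write a model of this form is given below. This is a sketch under stated assumptions rather than the exact specification used: the link function g (identity or logit) is not stated in this section, and we assume that the by-participant random slope is on the fixation predictor. Here y_ij is the correctness of trial j for participant i, group_i is the participant group, and x_ij is the fixation metric (mean fixation duration or number of fixations).

```latex
\begin{align}
g\big(\mathbb{E}[y_{ij}]\big) &= \beta_0 + \beta_1\,\mathrm{group}_i + \beta_2\,x_{ij} + u_{0i} + u_{1i}\,x_{ij}, \\
(u_{0i}, u_{1i})^{\top} &\sim \mathcal{N}(\mathbf{0}, \Sigma), \\
\chi^2 &= 2\,\big(\ell_{\mathrm{full}} - \ell_{\mathrm{reduced}}\big).
\end{align}
```

The last line is the standard likelihood-ratio statistic for comparing nested models, where ℓ denotes the maximized log-likelihood and the degrees of freedom equal the number of additional parameters in the full model.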

Taken together, we found that reduced specificity of emotion judgment in participants with ASD could not be attributed to differences in the spatial distribution of fixations, or in basic fixation parameters.

4. Discussion

In this study, we used a quantitative two-alternative discrimination task with morphed levels of facial emotion stimuli in order to further probe the components of facial emotion processing that may be atypical in ASD. We further conducted concurrent eye tracking, in order to help determine whether abnormal fixation patterns might be sufficient to explain any findings. Participants with ASD had normal thresholds to report fear in the fear-happy morphs (50% inflection points were identical) and normal confidence judgments and reaction times, but they had reduced specificity of emotion judgment when analyzing the full range of morphed stimuli (that is, their psychometric curves were flatter and they had a smaller area under the ROC curves). However, such reduced specificity of emotion judgment could not be attributed to any aspects of eye movements, since participants with ASD had similar fixation density, latency and duration compared to controls. Furthermore, for both participants with ASD and controls, eye movements were similar across stimulus levels and behavioral judgments. Spatial distribution of fixations and fixation parameters could not predict the reduced specificity of emotion judgment in participants with ASD either. Together, our results have revealed a deficit in emotion judgment in participants with ASD that appears to be limited to the specificity with which subtle emotions can be discriminated, likely attributable to central perceptual or cognitive mechanisms.
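The distinction between an unchanged threshold and a flatter psychometric curve can be illustrated numerically. The sketch below assumes a two-parameter logistic function with inflection point x_half and slope alpha; the function name, the morph levels, and the parameter values are hypothetical and chosen only for illustration.

```python
import math


def p_fear(x, x_half, alpha):
    """Logistic probability of a 'fear' judgment at morph level x (% fearful)."""
    return 1.0 / (1.0 + math.exp(-alpha * (x - x_half)))


morph_levels = [0, 30, 40, 50, 60, 70, 100]  # hypothetical morph levels

# Same inflection point (50% fearful), different slopes.
steep = [p_fear(x, 50, 0.15) for x in morph_levels]  # steeper curve
flat = [p_fear(x, 50, 0.05) for x in morph_levels]   # flatter curve

for x, s, f in zip(morph_levels, steep, flat):
    print(f"{x:3d}% fearful: steep={s:.3f}, flat={f:.3f}")
```

Both curves cross 0.5 at the same morph level, but the flatter curve yields less consistent judgments away from the midpoint, including near the anchors, which is the pattern described above as reduced specificity.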

4.1 Novelty

While a sizable prior literature in ASD has investigated emotion perception and fixations to faces separately, far fewer studies have examined how eye movements can influence face perception, or have conducted quantitative psychometric analyses. This has generally resulted in findings of reduced accuracy in emotion perception in ASD, but without an explanation of the underlying mechanism. Typically, prior studies have left open multiple possibilities that could explain the findings, ranging from atypical fixations to impaired conceptual knowledge to increased sensory noise in perceptual processing (the mechanism we advance here). In the present study, we conducted detailed and comprehensive analyses of emotion perception from faces using a sensitive task with morphed stimuli, which allowed us to parametrically control the intensity of the stimuli and to assess emotion discrimination at a fine-grained level. With simultaneous eye tracking, we not only examined eye movements with respect to stimulus levels (i.e., emotion intensity and ambiguity levels) and behavioral judgment, but also quantified the fixation noise and investigated whether such noise could predict the correctness of emotion judgment. Most significantly, we used a signal-detection theoretic approach in our analysis. Together with the eye tracking data, this allowed us to distinguish between competing explanations for impaired emotion recognition and suggest a specific processing component of emotion judgment that may be atypical in ASD.

4.2 Possible caveats

It has been shown that people fixate more at the eye region for fearful faces whereas they fixate relatively more at the mouth region for happy faces (Scheller et al., 2012). Also, people may primarily use information revealed by eyes to judge fearful faces but use more mouth information to judge happy faces (Smith et al., 2005). However, in the present study, both participants with ASD and controls showed similar fixation patterns across a gradient of emotions. This is likely because our morphed stimuli generally show blends rather than strongly prototypical emotions. The lack of a difference between participants with ASD and controls in eye movements may be specific to our morphed stimuli, and partly explained by the nature of our task, which had neither an objective ground truth of the emotion nor feedback about the judgment. Since each trial was preceded by a central fixation cross and the duration of the stimuli was relatively short (1s), any fixation differences between groups might be reduced. Finally, autism spectrum disorders are well known to be highly heterogeneous at the biological and behavioral levels (Happe et al., 2006), and the lack of a group difference is likely partly attributable to the enormous heterogeneity of ASD. The inconsistent findings on face fixations in ASD may be accounted for by various demographic and experiment-related factors (Guillon et al., 2014). In our study, the small sample size may well have provided insufficient power to detect subtle differences in fixations that could theoretically translate into the behavioral impairment we found.

People with ASD often show noisier viewing behavior, as indexed by shorter valid recording duration and poorer alignment between fixations and object centers (Wang et al., 2015). However, we here found a similar fixation distribution between groups along both vertical and horizontal dimensions as well as a similar valid recording duration. Furthermore, metrics indexing fixation noise (the number of fixations per trial and mean fixation duration) could not predict the correctness of emotion judgment (and thus specificity of emotion judgment) either. This is likely because (1) the relatively short timing of the viewing and thus the smaller overall number of fixations might have constrained potential variability in viewing patterns, and (2) the preceding central fixation cross constrained the spatial distribution of fixations for both participant groups. However, participants with ASD showed a slightly higher center bias, consistent with our previous finding when participants view complex images freely (Wang et al., 2015).

In the present study, our conclusions rest on a single pair of facial expressions, fear and happy, and we chose these two emotions because they are highly distinguishable (Ekman and Friesen, 1975), drive the most prominent difference in eye movement on facial features (Scheller et al., 2012), and are commonly used and directly compared in prior autism studies (Neumann et al., 2006, Adolphs et al., 2008, Kliemann et al., 2010, Kliemann et al., 2012, Rutishauser et al., 2013). Using morphed faces of this pair of emotions not only allowed us to assess emotion discrimination at a fine-grained level, but also allowed us to parametrically investigate another dimension of emotion, namely emotion ambiguity. However, the increased number of stimuli required to represent morphing levels restricted us to testing only these two emotions, leaving open the question of whether our results generalize to other emotions. A larger set of emotions, as well as facial expressions without emotional meaning, would be important to examine in future studies.

4.3 Reduced specificity of emotion judgment in ASD

The reduced specificity of emotion judgment in ASD arose from more inconsistent judgments at morph levels that have a more dominant judgment towards a particular emotion, i.e., participants with ASD had more variable judgments for emotions near anchors, resulting in a flatter psychometric curve and a shallower ROC curve. This is consistent with noisier and more random viewing behavior in autism (Pelphrey et al., 2002, de Wit et al., 2008, Wang et al., 2015). For example, people with autism view non-feature areas of the faces significantly more often but core feature areas of the faces less often (Pelphrey et al., 2002), and this is the case for both positive and negative emotions (de Wit et al., 2008). In particular, similar to our finding, in an emotion recognition task, there is a significantly reduced selectivity of emotion in autism, arising from a combination of giving lower intensity ratings to the concordant emotion label for a face but higher intensity ratings to the discordant emotion label for a face, demonstrating a noisier behavior (Kennedy and Adolphs, 2012). Furthermore, this study also shows significantly decreased test-retest reliability in autism. Similarly using morphed stimuli, adolescents with ASD are found to be less accurate at processing the basic emotional expressions of disgust, anger and surprise compared to controls (Law Smith et al., 2010), and they require more intense facial expressions for accurate emotion identification, particularly to sad expressions (Wallace et al., 2011). All of these findings are consistent with the present finding of reduced specificity in our task.

Although the literature on emotion recognition in ASD is quite mixed (Harms et al., 2010), these discrepancies may be attributed to several factors and their interactions, including demographics (e.g., age, IQ, etc), the stimuli and experimental task demands, the dependent measures of interest, ceiling effects, and compensatory strategies, making it desirable to have more demanding tasks and stimuli in order to avoid ceiling effects, constrain strategies, and reveal subtle differences between groups. Indeed, in a previous study, we found that an impairment in social attention was strongly diminished under conditions of low cognitive load (Wang et al., 2014).

4.4 Reduced specificity of emotion judgment may result from reduced saliency for faces in general

People with ASD show reduced attention to faces but pay more attention to inanimate objects (Dawson et al., 2005, Sasson, 2006). Some characteristics, such as preference for inanimate objects and a lack of interest in faces, are often evident very early in infancy (Kanner, 1943, Osterling and Dawson, 1994). In contrast to their reduced interest in faces, people with ASD also show circumscribed interests in a narrow range of inanimate subjects and are fascinated with gadgets, devices, vehicles, electronics, Japanese animation, dinosaurs, etc. (Kanner, 1943, Lewis and Bodfish, 1998, South et al., 2005). These circumscribed interests are evident in children and adolescents (Sasson et al., 2008), as well as in 2–5 year-olds (Sasson et al., 2011). Furthermore, people with ASD orient less towards faces during visual search, a deficit that appeared independent of low-level visual properties of the stimuli (Wang et al., 2014). A recent study with a comprehensive analysis of multiple levels of saliency as well as a wide range of categories of objects has shown that people with ASD have reduced saliency for faces and for locations indicated by social gaze, yet a general increase in pixel-level saliency at the expense of semantic-level saliency, as well as a stronger image-center bias regardless of object distribution (Wang et al., 2015).

Although a sizable literature has shown that the relative saliency of facial features is also altered in autism (Pelphrey et al., 2002, Neumann et al., 2006, Spezio et al., 2007, Kliemann et al., 2010), which we did not observe in the present study, the reduced specificity of emotion judgment may be due to the fact that faces are in general less salient to people with ASD. Specifically, this is likely because (1) early in development, people with ASD pay less attention to faces, so the mechanisms for detecting and discriminating emotions do not develop as well as in controls, which in turn makes the face and emotion processing noisier; and (2) when paying less attention, the signal-to-noise ratio (SNR) for the online discrimination of faces and emotions reduces (as attention boosts SNR), making face and emotion processing noisier. Together, reduced attention to faces and other people may lead to reduced specificity to facial emotions as we observed in the present study.

Highlights.

People with ASD show reduced specificity in emotion judgment.

A quantitative task dissects the specific components underlying this deficit.

This deficit can be explained by increased noise from a signal detection perspective.

This deficit cannot be attributed to gross differences in eye movements.

Acknowledgments

We thank Tim Armstrong and Sai Sun for help with running the experiment, and Lynn Paul for psychological assessments. This research was supported by grants and fellowships from the Autism Science Foundation, the Simons Foundation, and an NIMH Conte Center. The funders had no role in study design, data collection and analysis, decision to publish, or preparation of the manuscript.

Footnotes

Publisher's Disclaimer: This is a PDF file of an unedited manuscript that has been accepted for publication. As a service to our customers we are providing this early version of the manuscript. The manuscript will undergo copyediting, typesetting, and review of the resulting proof before it is published in its final citable form. Please note that during the production process errors may be discovered which could affect the content, and all legal disclaimers that apply to the journal pertain.

Author Contributions

S.W. and R.A. designed experiments and wrote the paper. S.W. performed research and analyzed data.

The authors declare no conflict of interest.

References

- Adolphs R, Sears L, Piven J. Abnormal Processing of Social Information from Faces in Autism. Journal of Cognitive Neuroscience. 2001;13:232–240. doi: 10.1162/089892901564289.

- Adolphs R, Spezio ML, Parlier M, Piven J. Distinct Face-Processing Strategies in Parents of Autistic Children. Current Biology. 2008;18:1090–1093. doi: 10.1016/j.cub.2008.06.073.

- Baron-Cohen S, Jolliffe T, Mortimore C, Robertson M. Another Advanced Test of Theory of Mind: Evidence from Very High Functioning Adults with Autism or Asperger Syndrome. Journal of Child Psychology and Psychiatry. 1997;38:813–822. doi: 10.1111/j.1469-7610.1997.tb01599.x.

- Brainard DH. The Psychophysics Toolbox. Spat Vis. 1997;10:433–436.

- Dawson G, Webb SJ, McPartland J. Understanding the Nature of Face Processing Impairment in Autism: Insights From Behavioral and Electrophysiological Studies. Developmental Neuropsychology. 2005;27:403–424. doi: 10.1207/s15326942dn2703_6.

- de Wit TCJ, Falck-Ytter T, von Hofsten C. Young children with Autism Spectrum Disorder look differently at positive versus negative emotional faces. Research in Autism Spectrum Disorders. 2008;2:651–659.

- Ekman P, Friesen WV. Unmasking the Face: A Guide to Recognizing Emotions from Facial Clues. Englewood Cliffs, NJ: Prentice Hall; 1975.

- Elsabbagh M, Gliga T, Pickles A, Hudry K, Charman T, Johnson MH. The development of face orienting mechanisms in infants at-risk for autism. Behavioural Brain Research. 2013;251:147–154. doi: 10.1016/j.bbr.2012.07.030.

- Falck-Ytter T, Fernell E, Gillberg C, Von Hofsten C. Face scanning distinguishes social from communication impairments in autism. Developmental Science. 2010;13:864–875. doi: 10.1111/j.1467-7687.2009.00942.x.

- Guillon Q, Hadjikhani N, Baduel S, Rogé B. Visual social attention in autism spectrum disorder: Insights from eye tracking studies. Neuroscience & Biobehavioral Reviews. 2014;42:279–297. doi: 10.1016/j.neubiorev.2014.03.013.

- Happe F, Ronald A, Plomin R. Time to give up on a single explanation for autism. Nat Neurosci. 2006;9:1218–1220. doi: 10.1038/nn1770.

- Harms M, Martin A, Wallace G. Facial Emotion Recognition in Autism Spectrum Disorders: A Review of Behavioral and Neuroimaging Studies. Neuropsychol Rev. 2010;20:290–322. doi: 10.1007/s11065-010-9138-6.

- Hus V, Lord C. The Autism Diagnostic Observation Schedule, Module 4: Revised Algorithm and Standardized Severity Scores. J Autism Dev Disord. 2014;44:1996–2012. doi: 10.1007/s10803-014-2080-3.

- Kanner L. Autistic disturbances of affective contact. The Nervous Child. 1943;2:217–250.

- Kennedy DP, Adolphs R. Perception of emotions from facial expressions in high-functioning adults with autism. Neuropsychologia. 2012;50:3313–3319. doi: 10.1016/j.neuropsychologia.2012.09.038.

- Kliemann D, Dziobek I, Hatri A, Baudewig J, Heekeren HR. The Role of the Amygdala in Atypical Gaze on Emotional Faces in Autism Spectrum Disorders. The Journal of Neuroscience. 2012;32:9469–9476. doi: 10.1523/JNEUROSCI.5294-11.2012.

- Kliemann D, Dziobek I, Hatri A, Steimke R, Heekeren HR. Atypical Reflexive Gaze Patterns on Emotional Faces in Autism Spectrum Disorders. The Journal of Neuroscience. 2010;30:12281–12287. doi: 10.1523/JNEUROSCI.0688-10.2010.

- Klin A, Jones W, Schultz R, Volkmar F, Cohen D. Visual fixation patterns during viewing of naturalistic social situations as predictors of social competence in individuals with autism. Arch Gen Psychiatry. 2002;59:809–816. doi: 10.1001/archpsyc.59.9.809.

- Kuhn G, Kourkoulou A, Leekam SR. How Magic Changes Our Expectations About Autism. Psychological Science. 2010;21:1487–1493. doi: 10.1177/0956797610383435.

- Law Smith MJ, Montagne B, Perrett DI, Gill M, Gallagher L. Detecting subtle facial emotion recognition deficits in high-functioning Autism using dynamic stimuli of varying intensities. Neuropsychologia. 2010;48:2777–2781. doi: 10.1016/j.neuropsychologia.2010.03.008.

- LeCouteur A, Rutter M, Lord C. Autism diagnostic interview: A standardized investigator-based instrument. J Autism Dev Disord. 1989;19:363–387. doi: 10.1007/BF02212936.

- Lewis MH, Bodfish JW. Repetitive behavior disorders in autism. Mental Retardation and Developmental Disabilities Research Reviews. 1998;4:80–89.

- Lord C, Rutter M, Couteur A. Autism Diagnostic Interview-Revised: A revised version of a diagnostic interview for caregivers of individuals with possible pervasive developmental disorders. J Autism Dev Disord. 1994;24:659–685. doi: 10.1007/BF02172145.

- Macmillan NA, Creelman CD. Detection theory: a user’s guide. Mahwah, New Jersey: Lawrence Erlbaum Associates; 2005.

- McPartland J, Webb S, Keehn B, Dawson G. Patterns of Visual Attention to Faces and Objects in Autism Spectrum Disorder. J Autism Dev Disord. 2011;41:148–157. doi: 10.1007/s10803-010-1033-8.

- Moriuchi JM, Klin A, Jones W. Mechanisms of Diminished Attention to Eyes in Autism. American Journal of Psychiatry. 2017;174:26–35. doi: 10.1176/appi.ajp.2016.15091222.

- Nakano T, Tanaka K, Endo Y, Yamane Y, Yamamoto T, Nakano Y, Ohta H, Kato N, Kitazawa S. Atypical gaze patterns in children and adults with autism spectrum disorders dissociated from developmental changes in gaze behaviour. Proceedings of the Royal Society B: Biological Sciences. 2010;277:2935–2943. doi: 10.1098/rspb.2010.0587.

- Neumann D, Spezio ML, Piven J, Adolphs R. Looking you in the mouth: abnormal gaze in autism resulting from impaired top-down modulation of visual attention. Social Cognitive and Affective Neuroscience. 2006;1:194–202. doi: 10.1093/scan/nsl030.

- Olsson P. Real-time and offline filters for eye tracking. Msc: KTH Royal Institute of Technology; 2007.

- Osterling J, Dawson G. Early recognition of children with autism: A study of first birthday home videotapes. J Autism Dev Disord. 1994;24:247–257. doi: 10.1007/BF02172225.

- Pelphrey K, Sasson N, Reznick JS, Paul G, Goldman B, Piven J. Visual Scanning of Faces in Autism. J Autism Dev Disord. 2002;32:249–261. doi: 10.1023/a:1016374617369.

- Philip RCM, Whalley HC, Stanfield AC, Sprengelmeyer R, Santos IM, Young AW, Atkinson AP, Calder AJ, Johnstone EC, Lawrie SM, Hall J. Deficits in facial, body movement and vocal emotional processing in autism spectrum disorders. Psychological Medicine. 2010;40:1919–1929. doi: 10.1017/S0033291709992364.

- Roy S, Roy C, Fortin I, Ethier-Majcher C, Belin P, Gosselin F. A dynamic facial expression database. Journal of Vision. 2007;7:944–944.

- Rutishauser U, Tudusciuc O, Wang S, Mamelak AN, Ross IB, Adolphs R. Single-Neuron Correlates of Atypical Face Processing in Autism. Neuron. 2013;80:887–899. doi: 10.1016/j.neuron.2013.08.029.

- Rutter M, Bailey A, Berument S, Lord C, Pickles A. The social communication questionnaire. Los Angeles, CA: Western Psychological Services; 2003.

- Sasson N. The Development of Face Processing in Autism. J Autism Dev Disord. 2006;36:381–394. doi: 10.1007/s10803-006-0076-3.

- Sasson NJ, Elison JT, Turner-Brown LM, Dichter GS, Bodfish JW. Brief Report: Circumscribed Attention in Young Children with Autism. J Autism Dev Disord. 2011;41:242–247. doi: 10.1007/s10803-010-1038-3.

- Sasson NJ, Turner-Brown LM, Holtzclaw TN, Lam KSL, Bodfish JW. Children with autism demonstrate circumscribed attention during passive viewing of complex social and nonsocial picture arrays. Autism Research. 2008;1:31–42. doi: 10.1002/aur.4.

- Scheller E, Buchel C, Gamer M. Diagnostic Features of Emotional Expressions Are Processed Preferentially. PLoS ONE. 2012;7:e41792. doi: 10.1371/journal.pone.0041792.

- Smith ML, Cottrell GW, Gosselin F, Schyns PG. Transmitting and Decoding Facial Expressions. Psychological Science. 2005;16:184–189. doi: 10.1111/j.0956-7976.2005.00801.x.

- South M, Ozonoff S, McMahon W. Repetitive Behavior Profiles in Asperger Syndrome and High-Functioning Autism. J Autism Dev Disord. 2005;35:145–158. doi: 10.1007/s10803-004-1992-8.

- Spezio ML, Adolphs R, Hurley RSE, Piven J. Analysis of face gaze in autism using “Bubbles”. Neuropsychologia. 2007;45:144–151. doi: 10.1016/j.neuropsychologia.2006.04.027.

- Wallace GL, Case LK, Harms MB, Silvers JA, Kenworthy L, Martin A. Diminished Sensitivity to Sad Facial Expressions in High Functioning Autism Spectrum Disorders is Associated with Symptomatology and Adaptive Functioning. J Autism Dev Disord. 2011;41:1475–1486. doi: 10.1007/s10803-010-1170-0.

- Wang S, Jiang M, Duchesne Xavier M, Laugeson Elizabeth A, Kennedy Daniel P, Adolphs R, Zhao Q. Atypical Visual Saliency in Autism Spectrum Disorder Quantified through Model-Based Eye Tracking. Neuron. 2015;88:604–616. doi: 10.1016/j.neuron.2015.09.042.

- Wang S, Xu J, Jiang M, Zhao Q, Hurlemann R, Adolphs R. Autism spectrum disorder, but not amygdala lesions, impairs social attention in visual search. Neuropsychologia. 2014;63:259–274. doi: 10.1016/j.neuropsychologia.2014.09.002.

- Willenbockel V, Sadr J, Fiset D, Horne G, Gosselin F, Tanaka J. Controlling low-level image properties: The SHINE toolbox. Behavior Research Methods. 2010;42:671–684. doi: 10.3758/BRM.42.3.671.
