Abstract

Empirical observations of how labs conduct research indicate that the adoption rate of open practices for transparent, reproducible, and collaborative science remains in its infancy. This is at odds with the overwhelming evidence for the necessity of these practices and their benefits for individual researchers, scientific progress, and society in general. To date, information required for implementing open science practices throughout the different steps of a research project is scattered among many different sources. Even experienced researchers in the topic find it hard to navigate the ecosystem of tools and to make sustainable choices. Here, we provide an integrated overview of community-developed resources that can support collaborative, open, reproducible, replicable, robust and generalizable neuroimaging throughout the entire research cycle from inception to publication and across different neuroimaging modalities. We review tools and practices supporting study inception and planning, data acquisition, research data management, data processing and analysis, and research dissemination. An online version of this resource can be found at https://oreoni.github.io. We believe it will prove helpful for researchers and institutions to make a successful and sustainable move towards open and reproducible science and to eventually take an active role in its future development.

Keywords: Open science, Reproducibility, MRI, PET, MEG, EEG

1. Introduction

Science is an incremental progress towards creating and organizing knowledge through theories and testable predictions. Reproducibility is a core part of science: being able to repeat or recreate scientific results is essential for the complex process of knowledge accumulation. Due to its relevance, different terms have been introduced to describe specific aspects of the process, including “reproducibility” when the same data and methods are used, “replicability” when new data but same methods are used, “robustness” when the same data but different methods are used, and “generalizability” when new data and methods are used (Whitaker, 2019). Here, we use “reproducibility” as an umbrella term, encompassing all aspects of recreating scientific results (Poldrack et al., 2020). Open science tools and practices have been developed to advance reproducibility, as well as accessibility and transparency at all stages of the research cycle and across all levels of society. Together, they remove barriers to sharing and facilitate collaboration, with the goal of improving reproducibility and, ultimately, accelerating scientific discoveries. Importantly, such practices facilitate, but do not guarantee, higher quality.

Empirical observations of how labs conduct research indicate that the adoption rate of open practices and tools for reproducible and collaborative science, unfortunately, remains in its infancy. Even when members of a specific scientific community have taken a central role in open science advocacy and tool development, like in the neuroimaging community, the impact on the rest of the very same community is limited. A recent survey (Paret et al., 2022) including researchers who are senior and likely to hold a positive attitude towards open science, indicated that 42% have never pre-registered a neuroimaging study and 34% have never shared their raw neuroimaging data. Many of those who indicated that they pre-registered or shared their data at least once likely did not do so in all their studies, and thus, the actual rate of pre-registration and data sharing in neuroimaging is likely much lower.

The limited adoption of open science practices is at odds with the overwhelming evidence that a lack of open practices in general can hinder reproducibility with costs for scientific progress and for society. Indeed, reproducibility issues have been undermining the foundation of scientific research in several fields, such as psychology (Open Science Collaboration, 2015; Klein et al., 2018), social sciences (Camerer et al., 2016, 2018), neuroimaging (Munafò et al., 2017; Botvinik-Nezer et al., 2020; Li et al., 2021), preclinical cancer biology research (Errington et al., 2021; Errington et al., 2021), and more (Hutson, 2018; Nissen et al., 2016; Serra-Garcia and Gneezy, 2021). As a response, there has been a rise in the development of tools and approaches to facilitate reproducibility and open science, in the spirit of Findability, Accessibility, Interoperability, and Reusability principles (FAIR) (Wilkinson et al., 2016; Gorgolewski and Poldrack, 2016; Nosek et al., 2018; Nosek et al., 2012; Nosek and Lakens, 2014; Poldrack et al., 2017; Poldrack et al., 2020; Poldrack et al., 2019; Clayson et al., 2022). Beyond their potential to mitigate transparency and reproducibility issues, these practices provide important benefits for individual researchers by increasing exposure, reputation, chances of publication, number of citations, media attention, potential collaborations, and position and funding opportunities (Allen and Mehler, 2019; McKiernan et al., 2016; Nosek et al., 2022; Markowetz, 2015; Hunt, 2019). Hence, one could have expected a higher uptake for such beneficial practices and tools.

Recently, a parallel top-down change of policies started to further support the adoption of open science practices and tools. For example, funding agencies are now enforcing the implementation of certain open data practices for publicly funded research (e.g., the NIH in the U.S. and the ERC in Europe; de San Román 2021; de Jonge et al., 2021), and some require a plan for research data storage and sharing, openly accessible publication formats and dissemination plans beyond the classical journal publication. Additionally, they provide funding for the development of necessary software, hardware, and collaborative infrastructure to support the transition to open and reproducible neuroscience (e.g., the NIH BRAIN Initiative, NIH ReproNim project (Kennedy et al., 2019), NSF CRCNS, EU Human Brain Project, German NFDI). These efforts by funding agencies are complemented by stakeholder institutions like the OHBM, the International Neuroinformatics Coordinating Facility (INCF), the Chinese Open Science Network (COSN), and the Open Science Framework (OSF), who provide platforms for the development of standards and best practices of open and FAIR neuroscience research, assemble training material, and promote open science practices. More-over, journals have started changing their policies with regard to open access options and data sharing. Together, these institutional measures aim at fostering the benefits of open science practices, and the adoption of open and reproducible science standards will be increasingly required for labs and individual researchers.

Nevertheless, multiple barriers of entry to open science practices are driving the modest rate of adoption in the general research community. Among them are lack of knowledge or training and lack of skills or resources. A survey by Borghi and Van Gulick (2018) found that 65% of researchers reported openness and reproducibility as motivation for implementing research data management in MRI, but between 40-50% pointed to the lack of best practices/tools and knowledge/training as main obstacles for embracing these practices. Likewise, a more recent survey indicated that similar percentages of researchers in neuroimaging have never learned how to pre-register or share their data online and that they know too little about pre-registration platforms and suitable data repositories (Paret et al., 2022). These later challenges could be alleviated by a simplified overview of the open resources available. However, information required for implementing open science practices over the full research cycle is currently scattered among many different sources. Even experienced researchers in the topic often find it hard to navigate the ecosystem of community-developed tools and to make sustainable choices.

This manuscript provides an integrated overview of community-developed resources critical to support open and reproducible neuroimaging throughout the entire research cycle and across different neuroimaging modalities (particularly MRI, MEG, EEG, and PET). Instead of detailing, as others before, why one should adopt open and reproducible practices (Munafò et al., 2017; Nosek et al., 2012; Poldrack et al., 2017; McKiernan et al., 2016), we focus on providing a resource overview. Our goal is to make it easier for scientists to select the most valuable instruments for their practice at every step of the research workflow, and consequently accelerate the broader adoption of open science tools and practices, increasing scientific reproducibility and openness. We provide justification on why each implements good practices, as well as how to integrate them into the research workflow.

In this review we do not aim to recommend particular tools over others, as the ideal ones may depend on many factors that vary between researchers. However, we highlight some points to consider at the time of selection. Typically it is advisable to choose tools that integrate with other tools and practices already established in the lab, have a relatively fast learning curve, and a long-term benefit. In order to increase sustainability, tools should be relatively mature, well maintained, and supported by an active community. Another indicator is whether the tools and practices are integrated in already established toolboxes or supported by large open science organizations. If still multiple tools meet these criteria, then it might be advantageous to choose one that is used by peers and collaborating partners. When we recommend practices, we state the problems they are supposed to address. We also encourage the readers to join the development teams and leadership of those tools, becoming an active part of the open neuroimaging community. Contributions from individuals who are experiencing barriers to the uptake of specific practices are particularly encouraged, since they can help mitigate these barriers for the benefit of everyone.

The manuscript is organized following the different steps of the research cycle: study inception and planning, data acquisition, research data management, data processing and analysis, and research dissemination. For each step we provide a figure with subgoals (subsections in the text) in the headings, some recommendations on how to achieve them in a bullet list, and supporting tools indicated by icons (see Figs. 1–5). To further guide the readers, the manuscript is accompanied by a detailed table containing links and pointers to the resources featured in the text of each section (see Table S1). In addition, the content is available online as a Jupyter Book at https://oreoni.github.io (https://doi.org/10.5281/zenodo.7083031).

Fig. 1.

Study inception and planning

For each step, the figure contains the main goals (headings), specific recommendations (bullet list), and useful tools (icons).

Sources: Icons from the Noun Project: Registration by WEBTECHOPS LLP; Share by arjuazka; Computer warranty by Thuy Nguyen; Logos: used with permission by the respective copyright holders.

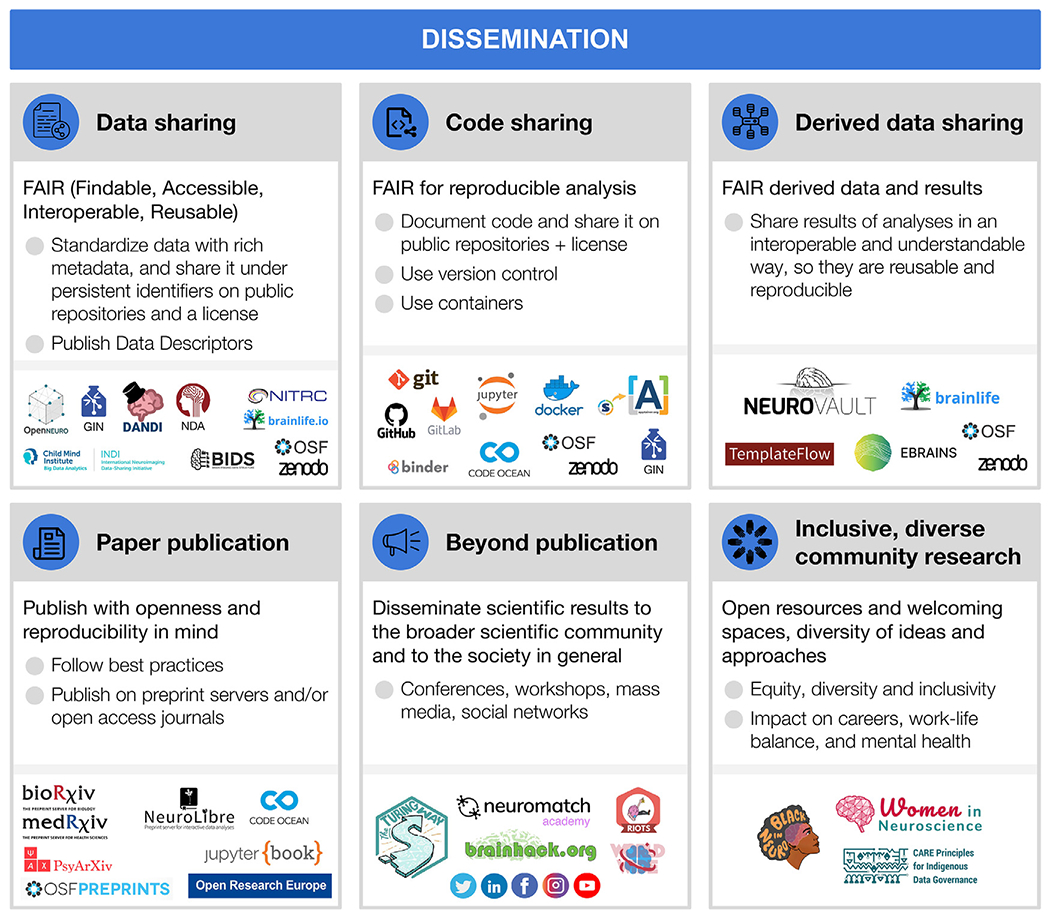

Fig. 5.

Dissemination

For each step, the figure contains the main goals (headings), specific recommendations (bullet list), and useful tools (icons).

Sources: Icons from the Noun Project: Data Sharing by Design Circle; Share Code by Danil Polshin; Data by Nirbhay; Publication by Adrien Coquet; Broadcast by Amy Chiang; Logos: used with permission by the copyright holders.

2. Study inception, planning, and ethics

Each individual decision from the beginning of the study will contribute to facilitate or hamper reproducibility. In the current section, we will describe practices and tools for preparation, piloting, preregistration, obtaining participants’ consent and ongoing quality control and assessment (see Fig. 1).

2.1. Study preparation and piloting

Research projects usually begin with descriptions of general, theoretical questions in documents such as grants or thesis proposals. Such foundations are essential but necessarily broad. When the project moves from proposal to implementation, these descriptions are translated into concrete protocols and stimuli, a process that can be streamlined by the incorporation of open procedures and comprehensive piloting. The promise is that the more preparation and piloting is conducted prior to data collection, the more likely it is that the project will be successful: that analyses of its data can contribute to answering its motivating ideas and questions (Strand, 2021).

Standard Operating Procedures (SOPs) can take different forms, and are powerful tools for planning, conducting, recording, and sharing projects. Ideally, SOPs describe the entire data collection procedure (e.g., recruitment, performing the experiment, data storage, preprocessing, quality control analyses), in sufficient detail for a reader to conduct the experiment themselves with minimal supervision, thereby contributing to reproducibility. SOPs may begin as an outline with vague descriptions, preferably during the pilot stage, and then become more detailed over time. For example, if a session is lost due to a button box signal failure, an image of its correct settings could be added to the SOPs. At the end of the project, its SOPs should be released along with its publications and datasets, to provide a source of answers for the detailed procedural information that may be needed for experiment reproducibility or dataset reuse, but are not included in typical publications.

Many resources can assist with experiment planning and SOP creation. Documents and experiences from similar studies conducted locally are valuable, but should not be the only source of information during planning, since, for example, a procedure may be considered standard in one institution but not in another. Public SOPs can serve as examples, as can protocols published on specialized sites (e.g., Protocol Exchange, protocols.io, Nature Protocols; see Table S1). Best practices guides are now available for many imaging modalities (MRI: Nichols et al. 2017; MEG/EEG: Pernet et al. 2020; fNIRS: Yucel et al. 2021; PET: Knudsen et al. 2020). Open resources for stimulus presentations and behavioral data acquisition are also recommended to increase reproducibility (see Section 3.2).

Piloting should be considered an integral part of the planning process. By “piloting” we mean the acquisition and evaluation of data prior to the collection of the actual experimental data, verifying the feasibility of the whole research workflow. While it is not a necessary prerequisite for reproducibility, it is a good scientific practice to produce higher quality research, and facilitates reproducibility via better documentation and SOPs. A piloting stage before starting data collection is important, not only for ensuring that the protocol will go smoothly when the first participant arrives, but also that the SOPs are complete and, critically, that the planned analyses can be carried out with the actual experimental data recorded. For example, pilot tests may be set up to confirm that the task produces the expected behavioral patterns (e.g., faster reaction time in condition A than B), that the log files are complete, and that image acquisition can be synchronized with stimulus presentation. Piloting should also include testing the data storage and retrieval strategies, which may include storing consent documents (Section 2.3), questionnaire responses, imaging data, and quality control reports. SOPs also prescribe how data will be organized, preferably according to a schema (e.g., the Brain Imaging Data Structure, Section 4.1). Data organization largely determines the efficient implementation of analysis pipelines and improved reproducibility, reusability, and shareability of the data and results.

Analyses of the pilot data are very important and can take several forms. One is to test for the effects of interest: establishing that the desired analyses can be performed and that the data quality is sufficient to produce valid and reproducible results (Sections 2.2. and 2.4; for power estimation tools see Table S1). A second type of pilot analysis is to establish tests for effects not of direct interest, but suitable for controls. As discussed further in Section 2.4, positive control analyses involve strong, well-understood effects that must be present in a valid dataset. It is worth mentioning that well structured and documented openly available datasets (see Section 4) could also serve for analysis piloting though they would lack the test for potential site specific technical issues. Simulations could also be used to ensure that the planned analysis is doable and valid.

2.2. Pre-registration

Pre-registration is the specification of the research plan in advance, prior to data collection or at least prior to data analysis (Nosek and Stephen Lindsay, 2018). Pre-registration usually includes the study design, the hypotheses and the analysis plan. It is submitted to a registry, resulting in a frozen time-stamped version of the research plan. Its main aim is to distinguish between hypothesis-testing (confirmatory) research and hypothesis-generating (exploratory) research. While both are necessary for scientific progress, they require different tests and the conclusions that can be inferred based on them are different (Nosek et al., 2018)

Registered reports is a relatively novel publishing format that can be seen as advanced pre-registration. This format is becoming very common, with a growing number of hundreds of participating journals (Hardwicke and Ioannidis, 2018; Chambers, 2019). In a registered report, a detailed pre-registration is submitted to a specific journal, including introduction, planned methods and potentially preliminary data. Then, it goes through peer review prior to data collection (or prior to data analysis in certain cases, for example for studies that rely on large-scale publicly shared data). If the proposed plan is approved following peer review, it receives an “in-principle acceptance”, indicating that if the researchers follow their accepted plan, and their conclusions fit their findings, their paper will be published. An in-principle accepted registered report is sometimes required to be additionally pre-registered. Recently, a platform for peer review of registered reports preprints was launched, named “Peer Community in registered reports” (see Table S1).

There are many benefits to pre-registration, from the field to the individual level. Transparency with regard to the research plan, and whether an analysis is confirmatory or exploratory, increases the credibility of scientific findings. It helps to mitigate some of the effects of human biases on the scientific process, and reduces analytical flexibility, p-hacking (Simmons et al., 2011) and hypothesizing after the results are known (Nosek et al., 2019; Munafò et al., 2017; Nature, 2015; Kerr, 1998). There is initial evidence that the quality of pre-registered research is judged higher than in conventional publications (Soderberg et al., 2021). Nonetheless, it should be noted that pre-registration and registered reports are not sufficient to fully protect against questionable research practices (Paul et al., 2021; Devezer et al., 2021; Rubin, 2020) and their general impact will depend on the extent journals will implement them. Registered reports also mitigate publication bias by accepting papers based on hypothesis and methods, independently of the findings. Indeed, it has been shown that pre-registered studies and registered reports include more null findings (Allen and Mehler, 2019; Kaplan and Irvin, 2015; Scheel, 2020) and report lower effect sizes (Schäfer and Schwarz, 2019) compared to other studies. For the individual researcher, registered reports with a two-stage review are an excellent example in which authors benefit from feedback on their methods before even starting data collection. They can help improve the research plan and spot mistakes in time, and provide assurance that the study will be published (Wagenmakers and Dutilh, 2016; Kidwell et al., 2016). It should be noted, though, that registered reports can require a significant time commitment, that is likely to pay off in the long-term but is not easily accommodated in many traditional project funding models.

While pre-registration is not the common practice yet, it is becoming more common over time (Nosek and Stephen Lindsay, 2018) and requirements by journals and funding agencies are already changing. There are many available templates and forms for pre-registration, organized by discipline or study types, for example for fMRI and EEG (see Table S1), and published guidelines for pre-registration in EEG are also available (Govaart and Schettino, 2022; Paul et al., 2021). There are different approaches as to what should be pre-registered. For instance, some believe it should be an exhaustive description of the study, including the background and justification for the research plan, while others believe it should be a short and concise document, including only the necessary details to reduce the likelihood of p-hacking and allowing reviewers to review it properly during the peer review process (Simmons et al., 2021). Pre-registration can also be flexible and adaptive by pre-registering contingency plans or complex decision trees (Benning et al., 2019).

Once researchers develop an idea and design a study, they can write and pre-register their research plan (Nosek and Stephen Lindsay, 2018; Paul et al., 2021). Pre-registration could be performed following the piloting stage (Section 2.1), but studies can be pre-registered irrespective of whether they include a piloting stage or not. There are many online registries where researchers can pre-register their study. The three most frequently used platforms are: (1) OSF, a platform that can also be used to share additional information about the study/project (such as data and code), with multiple templates and forms for different types of pre-registration, in addition to extensive resources about pre-registration and other open science practices; (2) aspredicted.org, a simplified form for pre-registration (Simmons et al., 2021); and (3) clinicaltrials.gov, which is used for registration of clinical trials in the U.S. (see Table S1).

Once the pre-registration is submitted, it can remain private or become public immediately, depending on the platform and the re-searcher’s preferences. Then, the researcher collects the data and executes the research plan. When writing the manuscript to report the study, the researcher is advised to include a link to the pre-registration, clearly and transparently describe and justify any deviation from the pre-registered plan and also report all registered analyses. Additional analyses to deepen some results or looking into unexpected effects are encouraged, and are part of the routine scientific investigation. The added benefit of pre-registration is that such analyses do not need to reach pre-specified levels of significance because they are reported as exploratory.

2.3. Ethical review and data sharing plan

The optimism of the scientific community about improving science by making all research assets open and transparent has to take into account privacy, ethics and the associated legal and regulatory needs for each institution and country. Whereas on the one hand sharing data (most often collected with public funds) is critical to advance science, on the other hand, sharing data can in some situations become infeasible to safeguard privacy. Data governance concerns the regulatory and ethical aspects of data management and sharing of data files, metadata and data-processing software (see Sections 6.1–3). When data sharing crosses national borders, data governance is called International Data Governance (IDG). IDG depends on ethical, cultural and international laws.

Data sharing is beneficial for both reproducibility and the exploration and formulation of new hypotheses. Therefore, it is important to ensure, prior to data collection, that the collected data could be later shared. Open and reproducible neuroimaging thus starts by (1) planning which data would be collected; (2) planning how these data would later be shared; (3) having ethical and legal clearance to share data; but also (4) the infrastructural means for this sharing (for more information about data sharing and available platforms, see Section 6; for data governance see Section 4).

Since 2014, the Open Brain Consent project (see Table S1), which was founded under the ReproNim project umbrella, provides examples and templates translated to multiple languages to help researchers prepare consent forms for data sharing, including the recent development of an EU GDPR-compliant template (The Open Brain Consent working group, 2021). Such consent should include a statement about how the data will be shared, with whom, potential risks, and that the consent to share can be withdrawn (which is separate from consent to participate and withdraw from the study). Data sharing forms should also make explicit how these factors determine to what extent a later withdrawal or editing of the data on the repository is possible. Given the international nature of the majority of neuroscience projects, IDG has become a priority (Eiss, 2020). Further work will be needed to implement an IDG approach that can facilitate research while protecting privacy and ethics. Specific recommendations on how to implement IDG have been proposed (Eke et al., 2022).

It should be noted that ethical review comprises more than data sharing procedures. Its goals are safety, self determination, and the protection of rights of study participants. A central element is informed consent to participate in the study, which requires that technical and scientific aspects of the study as well as regulations for participation in the study are transparently communicated to the participants. Clinical research may require adherence to additional, country-specific regulations. Finally, when planning the recruitment procedures, it is important to aim for equity, diversity and inclusivity (Henrich et al., 2010; Forbes et al., 2021), avoiding obtaining results that may not generalize to larger populations and improving the quality of research (e.g., Baggio et al. 2013).

2.4. Looking at the data early and often: monitoring quality

Inevitably, unexpected events and errors will occur during every experiment and in every part of the research workflow. These can take many forms, including dozing participants, hardware malfunction, data transfer errors, and mislabeled results files. As data progresses through the workflow, issues are likely to cascade and amplify, perhaps masking or mediating experimental effects, thereby damaging the reliability of the results. The impact of such surprises can range from the trivial and easily corrected to the catastrophic, rendering the collected data unusable or conclusions drawn from it invalid. Identifying issues and errors as early as possible is important to enable adding corrective measures to the protocol, but also because some issues are much easier to detect when the data are in a less-processed form. For example, a number of typical artifacts in anatomical MRI are known to be easier to identify in the background of the image and regions of no-interest (Mortamet et al., 2009), and can easily remain undetected if the first quality control check is set up after, e.g., brain extraction, which masks out non brain tissue. Thus, it is fundamental to pre-establish within the SOPs (Section 2.1) the mechanisms set in place to ensure the quality of the study. There are several mechanisms available that help to ensure that all required data are being recorded with sufficient quality and in a way that makes them analyzable.

Quality control checkpoints.

Establishing quality control (QC) checkpoints (Strother, 2006) is necessary for every project: which data are usable for analyses, and which are not? At these key points in the pre-processing or analysis workflow the data’s quality is checked, and if insufficient, it does not move on to the next stage. Results from low quality data are much less likely to be reproducible with new data or methods. Critically, the exclusion criteria of each checkpoint must be defined in advance (preferably stated in the SOPs and the pre-registration document, see Sections 2.1 and 2.2) to preempt unintentional cherry-picking (i.e., excluding data points to reinforce the results), which is a major contributor to irreproducibility via undisclosed flexibility. Some criteria are widely accepted and applicable, for example, that all neuroimaging data should be screened to eliminate clear artifacts, such as data corrupted by incidental electromagnetic interference or participants movements. A similarly well-established checkpoint of the workflow is visualizing and inspecting the outputs of surface reconstruction methods in MRI, checking time activity curves in high binding regions for PET or power spectral content in MEG and EEG; these fundamental QC checkpoints and their implementation are heavily dependent on the immediately previous processing step. Such QC may be conducted manually by experts using software aids, like visual summary reports or visualization software such as MRIQC (Esteban et al., 2017). More objective, automatic exclusion criteria, are currently an open and active line of work in neuroimaging (e.g., Ding et al. 2019; Kollada et al. 2021; Esteban, Blair, et al. 2019). Some QC checkpoints, such as for acceptable task performance or participant movement, are often defined for individual tasks, experiments and hypotheses.

Quality assurance (QA).

Tracking QC decisions will also enable identifying structured failures and artifacts that require not just excluding affected datasets, but rather taking corrective actions to preempt propagation to additional datasets. When a mishap occurs, the experimenters should investigate its cause, and if possible, change the SOPs (Section 2.1) and related materials to reduce the chance of it happening again. For example, if many participants report confusion about task instructions, the training procedure and experimenter script could be altered. Automated checks and reports can be very effective, such as real-time monitoring of participant motion during data collection (Heunis et al., 2020), or validating that image parameters are as expected before storage (e.g., with XNAT Marcus et al. 2007 or ReproNim tools Kennedy et al. 2019).

Positive control analyses.

A final aspect of quality assurance is the incorporation of positive control analyses: analyses included not because they are of interest for the scientific questions, but because they provide evidence that the dataset is of sufficient quality to conduct the analyses of interest, and that the analysis is valid. Ideally, positive control analyses focus on strong, well-established effects that must be present if the dataset is valid. For example, with task fMRI designs, button pressing, which should be associated with contralateral motor activation, is often a convenient target for positive control analysis. In MEG and EEG, participants can be asked to blink their eyes, open their mouths, or clench their jaws, and the recordings checked for the associated artifacts. Positive control analyses should also be carried out during piloting, when changes to the protocol are still possible (see Section 2.1). For example, if button presses are not clearly detectable during piloting, the acquisition sequence may not have sufficient SNR for the planned analyses and thus should be modified. Positive controls can further serve for analysis pipeline optimization prior to conducting the optimized analysis on the outcome of interest, thus preventing legitimate optimization from turning into p-hacking.

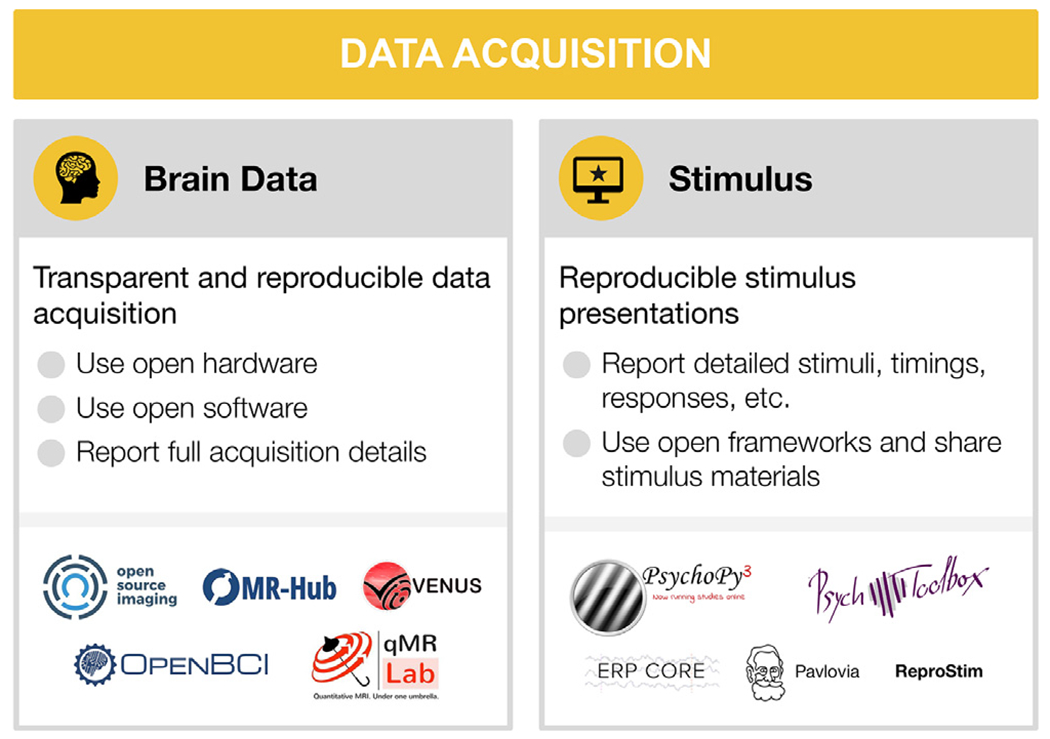

3. Data acquisition

Data acquisition is largely carried out with vendored systems. Manufacturers typically keep their software and hardware closed or semi-open at most. As a result, researchers often receive highly processed (e.g., reconstructed) data as ‘raw’ data from the devices. The lack of transparency in the acquisition details and downstream proprietary processing prevents end-to-end reproducible neuroimaging workflows. Reproducibility is endangered, for instance, by heterogeneity in data formats, definition of critical experimental parameters, and technological differences that are translated into the data as spurious, non-biological differences between acquisition devices.

A central issue is the proprietary nature of acquisition protocols. Many imaging device manufacturers require developers to use building blocks from vendor-exclusive toolboxes. This closes the door on open-source development and hampers multi-center consensus for modern imaging methods, especially in research. These shortcomings of mostly closed solutions have triggered a growing interest in open-source acquisition hardware and software (Winter et al., 2016), Here, we provide a brief review of these developments and accompanying solutions aimed at fostering open and collaborative acquisition method development across imaging modalities (see Fig. 2).

Fig. 2.

Data acquisition

For each step, the figure contains the main goals (headings), specific recommendations (bullet list), and useful tools (icons).

Sources: Icons from the Noun Project: Brain by parkjisun; Computer Screen by Icon Solid (adapted with a star); Logos: used with permission by the copyright holders.

3.1. Brain data acquisition

A common approach advocated by MRI researchers is establishing consensus protocols to standardize data acquisition. One of the flagship applications of this strategy is the Human Connectome Project (HCP) protocol, which achieved this within the confines of a single vendor (Smith et al., 2013). The HCP acquisition sequences and reconstruction software are compiled for different MRI scanner versions of a single vendor, openly distributed and maintained for fMRI applications (Uğurbil et al., 2013). However, it is generally difficult to achieve good inter vendor agreement using off the shelf software even for widely used protocols, such as apparent diffusion coefficient and longitudinal relaxation time (Sasaki et al., 2008; Lee et al., 2019). In addition, not all software options are available from all vendors (for example, compressed sensing (Lustig et al., 2008) and frequency-domain based parallel imaging methods (Breuer et al., 2005; Griswold et al., 2002). More over, even seemingly simple image enhancement protocols, such as image inhomogeneity corrections, are often scarcely documented and validated but can affect inferences drawn from an experiment (Schmitt and Rieger, 2021; Jellús and Kannengiesser 2014). Users typically have access to key parameters of pulse sequences, which are at the center of data acquisition. The exact pulse sequence descriptions are vendor-specific and may even change between software upgrades of a single vendor. This makes it difficult to evaluate multi-center replicability of new acquisition methods or to acquire longitudinal data with confidence.

Fortunately, in the last decade, several vendor-neutral data acquisition pulse sequences and reconstruction frameworks have been developed to mitigate this problem: Pulseq (Layton et al., 2017), PyPulseq (Ravi et al., 2019), GammaStar (Cordes et al., 2020), TOPPE (Nielsen and Noll, 2018), ODIN (Jochimsen and von Mengershausen, 2004), and SequenceTree (Magland et al., 2016) (see Table S1). Although these tools vary in vendor compatibility and the flexibility of their acquisition runtime, they enable vendor-neutral deployment of pulse sequences with transparent access to all the details needed. Nevertheless, vendor-neutral raw data (k-space, i.e. the 2D or 3D Fourier space representation of the image) collection is half the battle.

To complete the puzzle of MRI acquisition, interoperable and open-source reconstruction frameworks are essential. Thanks to ISMRM-RD (Inati et al., 2017), a k-space data standard, community-developed reconstruction tools can have a unified way to run advanced reconstruction algorithms against undersampled raw data (Maier et al., 2021). Some of these tools include Gadgetron (Hansen and Sørensen, 2013), BART (Uecker et al., 2015), MRIReco.Jl (Knopp and Grosser, 2021) (see Table S1 for further tools and details). By streamlining these acquisition and reconstruction tools using data standards at multiple levels (Karakuzu et al., 2021; Inati et al., 2017) on a data-driven and container mediated workflow engine (Di Tommaso et al., 2017), end-to-end reproducible MRI workflows can be developed. A recent study has shown that this approach can significantly reduce inter-vendor variability of quantitative MRI measurements (Karakuzu et al., 2020; Karakuzu et al., 2022). Given the growing open-source MRI acquisition ecosystem, a variety of end-to-end workflows are possible. Therefore, community-driven validation frameworks have a key importance for interoperable solutions (Tong et al., 2021). Facilitated by these standards, effective and open communication methods development sets the future direction for reproducible MRI research (Stikov et al., 2019).

In PET, the variety between different scanners is even larger than in MRI. An overview over different scanner types based on their usage for a specific radiotracer targeting the serotonin transporter, namely [11 C]DASB, is given in (Nørgaard et al., 2019). Different PET scanners export images in slightly different data formats with little overlap in the Digital Imaging and Communications in Medicine (DICOM) PET specific tags. As with MRI, reconstruction is vendor/machine specific but open source solutions to image reconstruction are being developed, for instance the OMEGA toolbox (Wettenhovi et al., 2021). Data acquisition for PET is further complicated by the use of different PET tracers, injection methods, scan duration and scan framing or injected radioactivity dose.

In MEG and EEG, the problem of standardized data acquisition starts even earlier: unlike the common DICOM data format used across vendors in MRI or PET, MEG and EEG manufacturers do not use a common data format, and format specifications are rarely made public. More importantly, equipment implementation significantly differs between vendors, for example with respect to MEG sensor types, software noise suppression techniques, and EEG amplifiers and electrodes. There have been some efforts on developing open versions of some proprietary tools, for example, the Maxwell filtering for signal space separation by the MNE-python team (Gramfort et al., 2014). Additionally, initiatives, such as the OpenBCI, offer open EEG hardware and tools for biosensing and brain computer interfacing through continuous community driven development. As we have mentioned, very little is known on how the variability of data acquisition parameters affect downstream comparability of results. The EEGManyLabs project (Pavlov et al., 2021) will provide a comprehensive dataset in this regard, as many labs with different equipment try to replicate the same studies.

Given the large variations across different vendors for all neuroimaging modalities, which often cannot be overcome, it is crucial to report all data acquisition parameters in a comprehensive and standardized manner to make potential differences in data acquisition across studies and sites transparent (for a discussion of reporting guidelines see Section 6.4).

3.2. Stimulus presentation and behavior

Several actively maintained programs for stimulus presentation and response logging are available. Open source software include PsychoPy (Peirce et al., 2019) in Python and Psychtoolbox (Brainard, 1997; Pelli, 1997; Kleiner et al., 2007) in MATLAB. Both have many users, making it possible to get assistance and perhaps find an already-implemented task protocol (e.g., on Pavlovia for Psychopy). Modality specific resources also exist, for instance the ERP CORE (Compendium of Open Resources and Experiments; Kappenman et al. 2021) openly provides optimized paradigms for several widely used ERP components, along with scripts, data processing pipelines, and sample data.

Using open stimuli and presentation software generally increases the likelihood a dataset will be useful to others, and its results reproducible (Strand and Brown, n.d.2022). Although desirable, it is not always possible to use fully open stimuli, particularly in the case of commercial movies, audio plays, and image databases. Stimuli, presentation scripts, behavioral tests and related material should be shared whenever possible (see DuPre et al. 2019 for a list of datasets sharing naturalistic stimuli and Section 6). Researchers should always check the licenses on the stimulus materials they plan to use or share. To facilitate stimuli feature analysis and exact reproducibility of the experimental paradigms, such projects as ReproNim’s ReproStim (Connolly and Halchenko, 2022) could automate recording and archival of audio-visual stimuli. When specific stimuli or material can not be released, they should be described as unambiguously as possible and, if possible, providing the source, such as identification number (e.g., a GTIN), and scripts to (re)produce used stimuli from the commercial media.

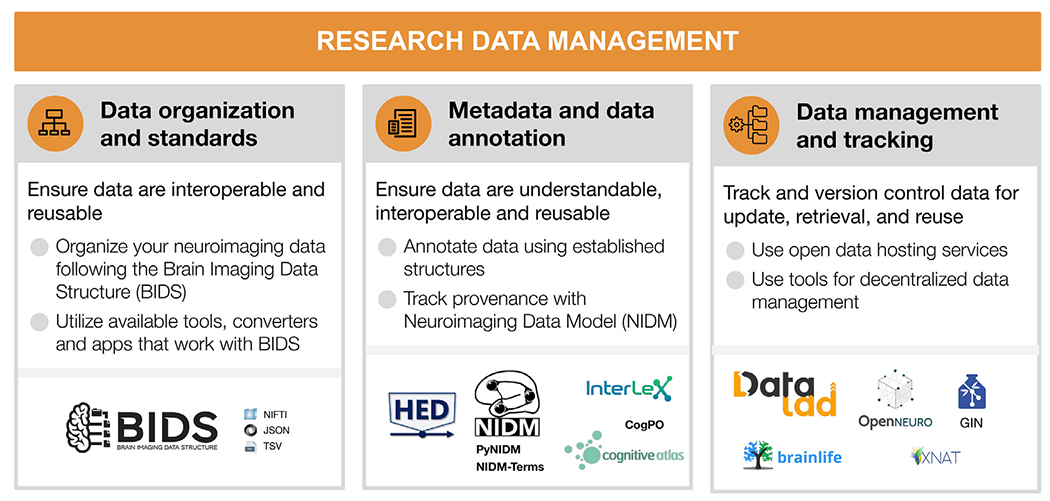

4. Research data management

Good research data management (RDM), i.e. how data are organized, maintained, annotated, tracked, stored, and accessed throughout a research project, forms the basic foundations of result reproducibility, data reusability, and research efficiency (Wilkinson et al., 2016; Gorgolewski and Poldrack, 2016; Nosek et al., 2018; Nosek et al., 2012; Nosek and Lakens, 2014; Poldrack et al., 2017; Poldrack et al., 2020; Poldrack et al., 2019; Borghi and Van Gulick, 2021a; Poline et al., 2022). Consequently, Data Management Plans (DMPs) are widely required by funders even at the application phase (e.g., NIH and NSF in the U.S., ERC in Europe), increasingly expected by scientific peers, and holds considerable benefits for individual researchers. It is good practice to develop, review and execute DMPs for every experiment, whether or not it is required by the funding agency. While specific RDM requirements vary across subdisciplines, this section highlights RDM standards and tools applicable across neuroimaging, ranging from data organization to annotation and publication (see Fig. 3).

Fig. 3.

Research data management

For each step, the figure contains the main goals (headings), specific recommendations (bullet list), and useful tools (icons).

Sources: Icons from the Noun Project: Structure by Adam Baihaqi from NounProject.com; Metadata by M. Oki Orlando; Data Management by ProSymbols; Logos: used with permission by the copyright holders.

4.1. Data organization and standards

Neuroimaging experiments result in complicated data that can be arranged in many different ways. Historically, data were organized differently between institutions and within labs. This lack of consensus (or a standard) could lead to misunderstandings and suboptimal usage of various resources: human (e.g., time wasted on rearranging data or rewriting scripts expecting certain structure), infrastructure (e.g., data storage space, duplicates), and financial (e.g., disorganized data have limited longevity and value after first publication, because it is hard or even impossible for other researchers to understand and use them). Finally, and most importantly, it produces poor reproducibility of results, even within the lab where data were collected, because it is more likely to include errors and less likely to be accessible to future lab members (or even to the original researcher who obtained the dataset, months or years after they worked on it). Therefore, the need for a data standard in the neuroimaging community became essential.

The Brain Imaging Data Structure (BIDS) is a community-led standard for organizing, describing, and sharing neuroimaging data [RRID:SCR_016124]. BIDS is an evolving standard, which supports multiple neuroimaging modalities including MRI (Gorgolewski et al., 2016), quantitative MRI (Karakuzu et al., 2021), MEG (Niso et al., 2018), EEG (Pernet et al., 2019), intracranial EEG (Holdgraf et al., 2019), PET (Norgaard et al., 2022), Microscopy (Bourget et al., 2022), and imaging genetics (Moreau et al., 2020). Many more extensions are under active development, for example, fNIRS, motion capture, and animal neurophysiology. The BIDS specification documents how to organize the data, generally based on simple file formats (such as NIfTI for tomographic data (Cox et al., 2004), and JSON for metadata) and folder structures. This specification can be extended through community-driven processes to incorporate new neuroimaging modalities or sets of data types.

Multiple applications and tools have been released to make it easy for researchers to incorporate BIDS into their current workflows, maximizing reproducibility, enabling effective data sharing, and supporting good data management practices. For example, BIDS converters make it easier to convert data into BIDS format (e.g., MNE-BIDS (Appelhoff et al., 2019) for MEG and EEG, dcm2bids, ReproNim’s HeuDiConv (Halchenko et al., 2021) and ReproIn (Visconti di Oleggio Castello et al., 2020) for MRI and PET2BIDS for PET; see many more on Table S1). The BIDS validator can help researchers make sure their dataset is BIDS-valid following conversion.

Once data are in BIDS, tools are available to ease interaction with the data. Two commonly used software packages are PyBIDS (Yarkoni et al., 2019), and BIDS-Matlab (Gau et al., 2022). These tools facilitate useful dataset queries—such as how many participants are part of a dataset or what tasks were performed— as well as programmatically retrieving specific files—such as all functional runs for a specific subject. Finally, BIDS apps are containerized analysis pipelines that use full BIDS datasets as their input and produce derivative data (Gorgolewski et al., 2017). Examples of BIDS apps include MRIQC (Esteban et al., 2017) for MRI quality control, fMRIPrep (Esteban et al., 2019) for fMRI preprocessing, and PyMVPA (Hanke et al., 2009) for statistical learning analyses of large datasets (see more at Table S1).

BIDS is a community-led standard and strives to be open and inclusive. The BIDS specification is the result of the ongoing collaboration, shared knowledge, discussion, and consensus through the email discussion list, shared Google docs, and GitHub. Questions are also answered on the Neurostars forum and the Brainhack Mattermost channel. BIDS has a well-specified governance structure where everybody is welcome to participate (see BIDS Code of Conduct, Table S1), and the BIDS Starter Kit is a growing resource intended to simplify the learning process for newcomers.

4.2. Metadata and data annotation

Metadata and data annotation induces consistency and facilitates data replication and reuse. It improves the clarity of the dataset, the ability for collaborators to understand the conditions in which the data were collected, and the ability to effectively share and reuse them. Commonly, metadata files are data dictionaries that map key terms from an agreed-upon vocabulary to data values that contain detailed and standardized information about the key terms. For example, a key called “SampleFrequency” might map to a numerical value, or a key “TaskDescription” might map to a free-form text that describes the task used in a specific experiment. The BIDS standard has proposed a consistent metadata structure in its specification along with a set of specification terms and tags.

Data annotation is also crucial for most data analyses in neuroimaging. For example, when analyzing task-based data, an experiment’s reproducibility is largely determined by the extent to which events are clearly documented. Beyond reproducing previous findings, exhaustively annotated events can allow researchers to re-use the data for means that were originally not thought of during data collection (Bigdely-Shamlo et al., 2020). However, even if each study is fully annotated, without a standard to consistently describe facets of events, all annotations will remain cumbersome and error-prone to work with, and achieving a state of machine readability will require effortful labor.

To address this problem, the Hierarchical Event Descriptor (HED) standard has been continuously developed over the past years (Robbins et al., 2021; Robbins et al., 2021). Drawing on a set of hierarchical vocabulary structures (the HED base schema) and application rules, the HED standard allows for both human- and machine readability, validation, and search of annotations across studies. HED is also fully integrated with the BIDS standard (see Section 4.1), and can be extended by researcher supplied schemas.

Additionally, the Neuroimaging Data Model (NIDM; Maumet et al., 2016; Keator et al., 2013) effort aims to build a core structure for neuroscience datasets to improve searching across publicly-available datasets. The initiative also provides tools to create and use NIDM documents from BIDS datasets (Appelhoff et al., 2019). To effectively describe neuroscience data, well-developed community-driven vocabularies are needed. NIDM is built using semantic web techniques and builds off the PROV (provenance) vocabulary (Moreau et al., 2015). Moreover, the NIDM-Terms effort has begun to collect and extend sets of community-developed controlled vocabularies and techniques for associating concepts with selected study variables of publicly-available neuroimaging datasets (e.g., OpenNeuro, ABIDE, ADHD200, and CoRR). This keeps a registry of the domain-relevant vocabularies and concepts used to annotate datasets, further facilitating concept reuse, and improved inter-dataset search. The NIDM team has developed a JavaScript web application, as well as Python-based command line annotation tools, that allow researchers to annotate their BIDS structured datasets and single tabular files (e.g., csv and tsv spreadsheets), and export BIDS JSON-formatted data dictionaries, NIDM JSON-LD data dictionaries, and NIDM semantic web documents, into sidecar files that accompany the data files. Currently, the NIDM-Terms annotation tools allow researchers to associate their study variables with concepts available in the Cognitive Atlas (Poldrack et al., 2011), the InterLex information resource, and those in the canonical NIDM terminology/ontology as well as encourage them to add descriptive information to improve the clarity of their variables. Such an effort harmonizes and improves the consistency of neuroimaging data and thus makes querying across neuroimaging datasets more efficient.

4.3. Data management and tracking

Raw data and derivatives (outputs from processed data) form the basis for scientific analyses and insights. Being able to efficiently store, retrieve, and update data, derivatives, and metadata across a variety of available storage options is crucial to enable further research (Borghi and Van Gulick, 2021b). As files change and evolve over the course of a project, there is a need to identify which data have been used in the generation of a result, and, in case the data were subject to change or updates, which exact version of the data has been used. The ability to manage data and metadata and track the data-analysis process provides a basis for rigor and reproducibility.

DataLad (Halchenko et al., 2021) is an open-source, community-developed, general purpose tool for managing and version controlling digital files in a decentralized manner. It tracks data of any type or size in a scalable, Git-repository-based overlay structure, called the dataset (practically, a structure of folders and files). DataLad allows tracking data and metadata files stored on local devices as well as remote or cloud infrastructure. DataLad can retrieve public data from major providers such as OpenNeuro, the Canadian Open Neuroscience Platform, the International Neuroimaging Data-sharing Initiative, the Healthy Brain Network Serial Scanning Initiative, Data sharing for Collaborative Research in Computational Neuroscience, the Human Connectome Project’s open access dataset (Van Essen et al., 2013), and many more. Beyond public data, with appropriate permissions or authentication, it can retrieve data from web-based storage providers including major cloud storage services, and local and remote paths (Halchenko et al., 2021; Hanke et al., 2021). DataLad implements this decentralized data management functionality in order to ensure streamlined access to tracked data regardless of hosting service, and to expose datasets for easy access on repository hosting structure. It separates management of file content from lean metadata management by tracking pointers to the services that host managed files (i.e., local infrastructure, remote hosting services, or multiple storage solutions at once). Using these pointers, it enables streamlined on-demand file retrieval in uniquely identified versions from the registered source. Importantly, data retrieval works via streamlined commands regardless of where the data are hosted. Information about DataLad can be found in the DataLad Handbook (Wagner et al. 2021, see Table S1). Entire computing environments could be efficiently managed in DataLad using datalad-container extension (Meyer et al., 2021) developed in collaboration between DataLad and ReproNim projects.

Brainlife is another open science project that allows data management. Brainlife is a free and open community-oriented, non-commercial cloud platform that provides web services to support reproducible data management and analysis. Brainlife tracks data provenance automatically for the users. As data are analyzed using the Graphical User Interfaces (GUI) and the platform’s data processing applications, provenance metadata information is automatically generated and associated with the data derivatives. The users do not have to manually save data versions, the platform does that automatically and it allows visualizing data provenance graphs.

DataLad and Brainlife are synergistic but not overlapping projects that address different user bases and needs. Indeed, DataLad and Brain-life interact nicely with one another and all published datasets retrieved by DataLad are readily accessible at brainlife.io/datasets.

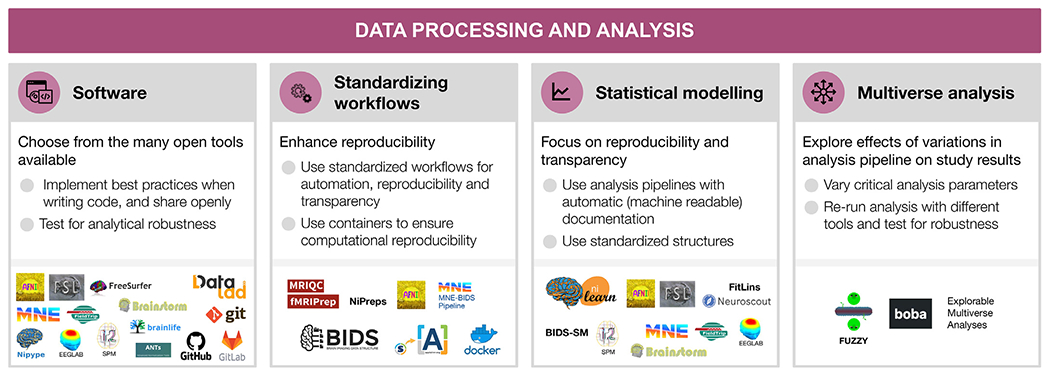

5. Data processing and analysis

Researchers typically execute a set of signal pre-processing steps prior to advanced data analysis, to, for instance, identify and remove noise, align data spatially and temporally, segment spatio-temporal regions of interest, identify patterns and latent signal structures (e.g., clustering), integrate the information from several modalities, introduce prior knowledge about the device or the physiology of the specimen, etc. The combination of the operations that take the unprocessed data as the input, prepare the data for analysis, and finally, perform advanced analysis, comprise a full analysis pipeline or workflow. In implementing such analysis workflows, software has emerged as a critical research instrument greatly relevant to ensure the reproducibility of studies (see Fig. 4).

Fig. 4.

Data processing and analysis

For each step, the figure contains the main goals (headings), specific recommendations (bullet list), and useful tools (icons).

Sources: Icons from the Noun Project: Software by Adrien Coquet; Workflow by D. Sahua; Statistics by Creative Stall; Chaos Sigil by Avana Vana; Logos: used with permission by the copyright holders.

5.1. Software as a research instrument

The digital nature of neuroimaging data along with the large, and constantly increasing, net amounts of daily acquired data, place software as a central instrument of the neuroimaging research workflow. As a result, many toolboxes containing utilities ranging from early steps of preprocessing to statistical analysis and visualization of results have emerged, and some have largely shaped the software development in the field, e.g., AFNI (Cox, 1996; Cox and Hyde, 1997), FSL (Jenkinson et al., 2012), SPM (Penny et al., 2011; Litvak et al., 2011; Flandin and Friston, 2008), FreeSurfer (Dale et al., 1999; Dale and Sereno, 1993), Brainstorm (Tadel et al., 2011, 2019), EEGLAB (Delorme and Makeig, 2004; Delorme et al., 2021), MNE-Python (Gramfort et al., 2013, 2014), Field-Trip (Oostenveld et al., 2011) (see Table S1). More recently, some software packages have been developed to cover additional aspects of the neuroimaging workflow. For instance, nibabel (Brett et al., 2020) to read and write images in many formats, the Advanced Normalization Tools (ANTs) for image registration and segmentation, or Nilearn (Abraham et al., 2014) for statistical analysis and visualization. Workflow engines conveniently connect between the building blocks and determine how the steps are executed in the computational environment. Solutions range from general-purpose scripting (e.g., Bash or Python) to neuroimaging-specific libraries (e.g., NiPype; Gorgolewski et al. 2011). Researchers have all these tools (and others) at their disposal to “mix-and-match” in their workflow. Therefore, ensuring the proper development and operation of the software engine is critical to ensure the reproducibility of results (Tustison et al., 2013).

Relatedly, the variety of software implementations is an additional motive of concern. As remarked by Carp (2012a, 2012b) based on the analysis of thousands of fMRI pipelines, analytical flexibility in combination with incomplete reporting precludes the reproducibility of the results. A recent comprehensive investigation, the Neuroimaging Analysis Replication and Prediction Study (NARPS; Botvinik-Nezer et al. 2020), found that when 70 different teams were asked to analyze the same fMRI data to test the same hypotheses, each team chose a distinct pipeline and results were highly variable. Other studies suggest similar problems in EEG (Šoškić et al., 2021; Clayson et al., 2021), PET (Nørgaard et al., 2020)and diffusion MRI (Schilling et al., 2021).

There are two crucial aspects of the high analytical variability and its effect on results in neuroimaging. First, when high analytical variability (that potentially affects results) is combined with partial reporting or with incentives to find significant effects, it can alarmingly undermine the reliability and reproducibility of results. Second, even in the apparently ideal scenario in which the researcher performs a single pre-registered valid analysis and reports it fully and transparently, it is still likely that the results are not robust to arbitrary analytical choices. Therefore, new tools are needed to allow researchers to perform a “multiverse analysis” (Section 5.4), where multiple data workflows are used on the same dataset and all the results are reported and their agreement or convergence discussed. Community-led efforts to develop high-quality “gold standard” workflows may also reduce researchers’ degrees of freedom as well as accelerate data analysis, although different pipelines may be optimal for different research questions and data.

Nevertheless, neuroimaging researchers frequently encounter gaps that readily available toolboxes do not cover. These gaps, amongst a number of other reasons (e.g., deploying a data workflow on a high-performance computer), pushes researchers into creating their own software implementations. However, most neuroimaging researchers are not formally trained in related fields of computer science, data science, or software engineering, and formal software development practices are often not included in undergraduate or graduate level neuroimaging training. This mismatch often results in undocumented, hard to maintain, and disorganized code; largely as a consequence of unawareness of software development practices. It also increases the likelihood of undetected errors that may remain even after running tests on the code.

The first and foremost strategy available to maximize the transparency of research methods is openly sharing the code with the minimal restrictions possible (see Section 6.2; Barnes, 2010; Gorgolewski and Poldrack, 2016). Complementarily, version control systems, such as Git (Blischak et al., 2016, see Table S1), are the most basic and effective tool to track how software is developed, and to collaboratively produce code. Beyond making the code available to others, software tools can implement further transparency strategies by thoroughly documenting their tools and by supporting implementations with scientific publications (Barnes, 2010; Gorgolewski and Poldrack, 2016).

5.2. Standardizing preprocessing and workflows

Although the diversity in methodological alternatives has been key to extracting scientific insights from neuroimaging data, appropriately combining heterogeneous tools into complete workflows requires substantial expertise. Traditionally, researchers used default workflows distributed along with individual software packages, or alternatively, individual laboratories have developed in-house analysis workflows that resulted in highly specialized pipelines. Such pipelines are often not thoroughly validated and difficult to reuse due to lack of documentation or accessibility to outside labs. In response, several community-led efforts have spearheaded the development of robust, standardized workflows.

An early effort towards workflow standardization was the Configurable Pipeline for the Analysis of Connectomes (C-PAC; Craddock et al. 2013), which is a “nose-to-tail” preprocessing and analysis pipeline for resting state fMRI. C-PAC offers a comprehensive configuration file, editable directly with a text editor or through C-PAC’s graphical user-interface, prescribing all the tools and parameters to be executed, and thereby making strides towards keeping methodological decisions closely traced. Similarly, large-scale acquisition initiatives released workflows tailored for their official imaging protocols (e.g., the HCP Pipelines Glasser et al. 2013 and the UK Biobank Alfaro-Almagro et al. 2016).

Conversely, fMRIPrep (Esteban et al., 2019) proposed the alternative approach of restricting the pipeline goals to the preprocessing step, while accepting the maximum diversity possible of the input data (i.e., not tailored to a particular experimental design or analysis-agnosticity). This approach has recently been proposed for additional modalities (e.g., dMRI, ASL, PET) and population/species of interest (e.g., fMRIPrep-rodents, fMRIPrep-infants) under a common framework called NiPreps (Neuroimaging PREProcessing toolS). NiPreps is a community-led endeavor with the goal of ensuring the generalization of the building blocks of preprocessing across modalities (e.g., the alignment of fMRI and dMRI with the same participant / animal’s anatomical image) and specimens (e.g., using the same brain extraction from anatomical data using the same algorithm and implementation on both human adults and rodents). Similar standardization efforts are starting to be adopted for EEG (Desjardins et al., 2021) and MEG (e.g., MNE-BIDS pipeline; Jas et al., 2018). Further examples of standardized workflows are found in Table S1.

An additional and relevant premise of standardized workflows is transparency — tools must be transparent not only in their implementation, but also in their reporting. For example, fMRIPrep produces visual reports with the double goal of assessing the quality of results, and also providing the researcher with a resource to comprehensively understand every step of the workflow. In addition, the report includes a text description which comprehensively describes each major step in the pipeline, including the exact software version and principle citation. This text, referred to as the “citation boilerplate”, is released under a public domain license, and therefore can be included verbatim in researcher’s manuscripts, facilitating accurate reporting and proper referencing of academic software. A final relevant aspect towards transparency is the comprehensive documentation of pipelines.

In most cases, standardized workflows preprocess datasets in a fully automated manner, taking a BIDS dataset as input and outputting data that is ready for subsequent analysis with little manual intervention. Importantly, such workflows are typically designed to be as robust as possible to diverse input data (e.g., with varying parameters or sampling distant populations), a challenge that is facilitated by data standardization (i.e., BIDS). Additionally, workflows must be portable, enabling users to execute them in a wide variety of environments. A key technology in this endeavor is containers—such as Docker and Apptainer/Singularity—which facilitate packaging specific versions of heterogeneous dependencies while ensuring cross-platform compatibility (e.g., high-performance computing clusters, desktop, or cloud services). The BIDS apps framework (Section 4.1) leverages containers by standardizing input parameters to make it trivially easy to execute a wide variety of standardized workflows on BIDS datasets. An example of a higher-level combination of workflows is found in Esteban et al. (2020), which describes an MRI research protocol using MRIQC and fMRIPrep. Finally, recent efforts to standardize the outputs of workflows (BIDS Derivatives), further enhances the interoperability of workflows, by ensuring their outputs are compatible with subsequent analysis.

5.3. Statistical modeling and advanced analysis

Analysis of neuroimaging data is particularly heterogeneous and prone to excessive analytical flexibility and underspecified reporting (Carp 2012a,2012b). Whereas preprocessing is ideally performed once per dataset, there is often a large number of types of analyses that may be used with the preprocessed data. In MRI and fNIRS, for example, analyses range from multi-stage general linear models (GLMs), multivariable decoding analyses, to anatomical and functional connectivity, and more. In PET, analyses consist of region-wise averaging, although voxel-wise approaches are gaining popularity, followed by kinetic modeling and subsequent statistical analyses, which can be GLM or more advanced, such as latent variable models. In MEG and EEG, the broad variety includes analyses such as evoked response potentials, power spectral density, source reconstructions, time-frequency, connectivity, advanced statistics and more. Each type of analysis also has a wide variety of subtypes, parameters, and statistical models that can be specified, and the form of that specification varies across the dozens of analysis packages that implement each type of analysis.

Data analysis reporting may be made more transparent by sharing code that relies on open-source software. A prime example is SPM (Flandin and Friston, 2008), which has been open source since its inception in 1991. Additional widely used open-source tools for data analysis are FSL and AFNI for MRI, and some examples of reproducible pipelines for MEG and EEG developed based on each of the following software: EEGLAB (Pernet et al. 2020) Fieldtrip (Andersen, 2018b; Meyer et al., 2021; Popov et al., 2018), Brainstorm (Niso et al., 2019; Tadel et al., 2019), SPM (Henson et al., 2019) and MNE-Python (Andersen 2018a; van Vliet et al., 2018; Jas et al., 2018) (see Niso et al. 2022 for a detailed review on main EEG and MEG open toolboxes and reproducible pipelines). Reproducibility is also improved when relying on modular and well-documented software such as Nilearn, which offers versatile methods to perform advanced analyses of fMRI data, from GLM to connectomic and machine learning (Abraham et al., 2014). Ideally, a single analysis script is created, from signal extraction, data analysis, and reproducing all figures.

An additional challenge for the reproducibility of analysis workflows is the representation of statistical models across distinct implementations of analysis software. For example, GLM approaches to analyze fMRI time series are prevalent and supported by all of the major statistical packages (e.g., AFNI, SPM, FSL, Nilearn). However, specifying equivalent models across packages is non-trivial and requires time consuming package specific model specification (Pernet, 2014), which obfuscates details of the statistical model, exacerbates variability across pipelines, and makes it difficult to perform multiverse analyses (see Section 5.4). The BIDS Stats Model (BIDS-SM, see Table S1) specification has been proposed as a implementation-independent representation of fMRI GLM models. BIDS-SM describes the inputs, steps, and specification details of GLM-type analyses, and encodes them in a machine readable JSON format. The PyBIDS library provides tooling to facilitate reading BIDS-SM, and FitLins (Markiewicz et al., 2021) is a reference workflow that fits BIDS-SM using AFNI or Nilearn. The transformative potential of BIDS-SM is showcased by Neuroscout (de la Vega et al., 2022), a turnkey platform for fast and flexible neuroimaging analysis. Neuroscout provides a user-friendly web application for creating BIDS-SM on a curated set of public neuroimaging datasets, and leverages FitLins to fit statistical models in a fully reproducible and portable workflow. By standardizing the entire process of statistical modeling, users can formally specify a hypothesis and produce statistical results in a matter of minutes, while simultaneously ensuring a fully reproducible and transparent analysis that can be readily disseminated to the scientific community.

5.4. Multiverse analysis

The variety of data workflows reflects the enormous interest and the need for novel software instruments, but it also poses an important risk to reproducibility. The multitude of possible combinations of methods and parameters in each of the analysis steps creates an extremely large number of combinations to select from. This problem is often referred to as “researcher degrees of freedom” or “the garden of forking paths” (Gelman and Loken, 2013). Importantly, analytical choices affect results. This has been shown for preprocessing of fMRI data (Strother et al. 2004; Churchill et al., 2012; Churchill et al., 2012). While this work focused mainly on the aspect of tailoring preprocessing to e.g. maximize predictive models, recent efforts in fMRI (task fMRI: Botvinik-Nezer et al., 2020; Carp, 2012a; preprocessing of resting-state fMRI: Li et al. 2021) and PET (specifically for preprocessing: Nørgaard et al. 2020) focused more on the variability of outcomes in general when analysis pipelines were varied. In addition, recent studies showed high variability in diffusion-based tractography dissection (Schilling et al., 2021) and event-related potentials in EEG preprocessing (Šoškić et al., 2021; Clayson et al., 2021). Another large-scale attempt to estimate the analytical variability for EEG, EEGManyPipelines (see Table S1), is currently ongoing.

The converging findings of these studies across modalities suggest that it is crucial to test the robustness of reported results to specific analytical choices. One proposed solution to tackle the analytical variability, where many different analytical approaches are compared, is multiverse analysis (Hall et al., 2022). There are two broad types of multiverse tools. In a “numerical instabilities” approach, different setups and numerical errors or uncertainties in computational tools are evaluated, analyses are rerun several times, and variability, robustness, and “mean answer” are estimated (Kiar et al., 2020). One tool of this type that is being developed is “Fuzzy” (Kiar et al., 2021). Alternatively, in a “classic multiverse analysis”, multiple pipelines are used with the same data and the results are compared across pipelines. Such an analysis could be conducted by a single or by multiple researchers (Aczel et al. 2021). Although multiverse analysis was suggested before in other fields (Simonsohn et al., 2020; Steegen et al., 2016; Simonsohn et al., 2015; Patel et al., 2015), there are not yet mature “classic multiverse analysis” tools for high-dimensional data like in neuroimaging. Explorable Multiverse Analyses is an R-tool that allows the readers to explore different statistical approaches in a paper (Dragicevic et al., 2019). Other tools, such as the Python-based Boba (Liu et al., 2021), aim to facilitate multiverse analyses by allowing users to specify the shared and the varying parts of the code only once and by providing useful visualizations of the pipelines and results. However, these tools currently fit simpler analyses and datasets compared to the ones common in neuroimaging.

In neuroimaging, recent progress has been made in creating infrastructure for multiverse analysis in fMRI, based on the C-PAC tool (see Section 5.2; Li et al. 2021). Ongoing efforts to formalize machine-readable standards for statistical models (BIDS-SM) and pipelines to estimate them (e.g., FitLins; Markiewicz et al., 2021), and their integration with datasets using platforms such as Brainlife (Avesani et al., 2019), could facilitate the development of multiverse tools. In order to make sense of a multiverse analysis, one needs methods to test for convergence across results of diverse analysis pipelines with the same data. Such a method for fMRI image-based meta-analysis was recently used in NARPS (Botvinik-Nezer et al., 2020) as well as in subsequent projects (Bowring et al., 2021). Another simple statistical approach to a multiverse analysis was presented with PET data (Nørgaard et al., 2019), although it lacks statistical power, due to the use of a very conservative statistic. A different approach is to use active learning to approximate the whole multiverse space (Dafflon et al., 2020). Moreover, Boos et al. (2021) provided an online application to explore the effects of the choice of parameters on the results (data-driven auditory encoding, see Table S1). Progress is still needed until such tools are mature enough to allow scalable multiverse analysis in neuroimaging.

6. Research dissemination

Through the whole research cycle a range of outputs far beyond publications are produced, and each of them can have different levels of reproducibility and openness (see Fig. 5). For shared resources to be useful, they need to follow the FAIR principles (Wilkinson et al., 2016), to ensure they are: Findable (e.g., using persistent identifiers, such as Digital Object Identifiers (DOI) or Research Resource Identifiers (RRIDs), and described with rich metadata indexed in a searchable resource), Accessible (e.g., shared in public repositories, under open access or controlled access depending on regulations, so they can be retrievable by their identifier using standardized communication protocols), Interoperable (e.g., following a common standard for organization and vocabulary), and Reusable (e.g., richly described, with detailed provenance and an appropriate license). Indeed, without a license, materials (data, code, etc.) become unusable by the community due to the lack of permission and conditions for reuse, copy, modification, or distribution. Therefore, consenting through a license is essential for any material to be publicly shared.

A useful generalpurpose resource, beyond neuroimaging, including practical guidelines on reproducible research, project design, communication, collaboration, and ethics is The Turing Way (TTW, The Turing Way Community et al. 2019, see Table S1). TTW is an open collaborative community-driven project, aiming to make data science accessible and comprehensible to ensure more reproducible and reusable projects.

6.1. Data sharing

Making data available to the community is important for reproducibility, allows more scientific knowledge to be obtained from the same number of participants (animal or human), and also enables scientists to learn and teach others to reuse data, develop new analysis techniques, advance scientific hypotheses, and combine data in mega- or meta-analyses (Poldrack and Gorgolewski, 2014; Laird, 2021; Madan, 2021). Moreover, the willingness to share has been shown to be positively related to the quality of the study (Wicherts et al., 2011). Because of the many advantages data sharing brings to the scientific community (Milham et al. 2018), more and more journals and funding agencies are requiring scientists to make their data public (curated and archived with a public record, but controlled access) or open (public data with uncontrolled access) upon the completion of the study, as long as it does not compromise participants’ privacy, legal regulations, or the ethical agreement between the researcher and participants (see Sections 2.3 and 7).

For data to be interoperable and reusable, it should be organized following an accepted standard, such as BIDS (Section 4.1) and with at least a minimal set of metadata. Free data-sharing platforms are available for publicly sharing neuroimaging data, such as OpenNeuro (Markiewicz et al., 2021), Brainlife (Avesani et al., 2019), GIN (G-Node Infrastructure), Ebrains, Distributed Archives for Neurophysiology Data Integration (DANDI), International Neuroimaging Data-Sharing Initiative (INDI), Neuroimaging Tools & Resources Collaborator (NITRC), etc. (see Table S1). Data could also be shared on institutional and funder archives such as the National Institute of Mental Health Data Archive (NDA); on dedicated repositories, such as the the Cambridge Centre for Ageing and Neuroscience, Cam-CAN (Shafto et al., 2014; Taylor et al., 2017) or The Open MEG Archive, OMEGA (Niso et al., 2016); or on generic archives that are not neuroscience or neuroimaging specific, such as figshare, GitHub, the Open Science Framework, and Zenodo. If allowed by the law and participants’ consent (see Section 2.3), data sharing can be made open, or at least public.

Once curated and archived, data can further benefit the individual researcher, for example by adding them to the scientific literature in the form of data descriptors. Such an article type is not intended to communicate findings or interpretations but rather to provide detailed information about how the dataset was collected, what it includes and how it could be used, along with the shared data. In addition, an “open science badge” for data sharing is available in an increasing number of scientific journals (Kidwell et al., 2016) and some prizes are also available as a recognition for such efforts (e.g., OHBM’s Data Sharing prize).