Abstract

To achieve automated counting of drill pipes during gas extraction in coal mines, this study proposes an intelligent counting method based on an improved YOLOv11 model and Savitzky-Golay (SG) smoothing. First, images of underground gas extraction drilling operations were captured, and a dataset was constructed. Subsequently, improvement strategies for the YOLOv11 object detection model were developed to meet the requirements for rapid drill pipe counting. Ablation experiments demonstrate that the proposed improvements enhance YOLOv11’s detection accuracy. The improved YOLOv11 achieves precision, recall, mAP50, and mAP50-95 scores of 94.7%, 95.7%, 96.6%, and 65.8%, respectively, surpassing the performance of YOLOv8, YOLOX, Faster R-CNN, and CenterNet. The trained YOLOv11 model was then applied to detect drill pipes in test videos, and SG smoothing was applied to the bounding box area curves. Finally, the smoothed area curves were used to count the drill pipes, with the test video confirming a total of nine drill pipes, consistent with the actual count. The results demonstrate that the proposed method effectively mitigates the impact of complex underground environments and achieves intelligent and accurate drill pipe counting, providing technical support for standardized gas extraction in coal mines.

Keywords: Drill pipe counting, Object detection, Savitzky-Golay smoothing, YOLOv11

Subject terms: Engineering, Electrical and electronic engineering

Introduction

In 2020, China officially proposed the strategic goal of “achieving carbon peaking by 2030 and carbon neutrality by 2060.” The proportion of coal in energy consumption is therefore expected to decline gradually by 2060. However, even after achieving carbon neutrality, China will still require 300 to 400 million kilowatts of coal-fired generating capacity, with an annual coal consumption of 390 to 640 million tons. China will thus continue to mine significant amounts of coal each year, particularly before reaching the carbon peak. Safety remains the most critical issue in coal mining and one of the most pressing challenges in China. In February 2019, China’s Coal Mine Safety Administration reported that 224 coal mine accidents resulting in 333 fatalities occurred in 2018, with gas disasters being the leading cause of coal mine safety incidents. Underground space technology and geotechnical engineering analysis form one research direction for underground safety1–3, while gas extraction is the most effective measure to mitigate gas accidents in coal mines4. Before gas extraction, holes must be drilled into the coal seams or gas-rich areas, which makes drill pipe counting a critical step in completing coal seam gas extraction tasks. The drill pipe count accurately reflects the depth of the borehole, enabling staff to determine whether the borehole has penetrated the gas-rich coal seam as required by the design. This ensures the effectiveness and coverage of the gas drainage boreholes, allowing the gas to be fully extracted.

Traditionally, drill pipes are counted manually, which is a subjective process. With the development of computer vision, drill pipe counting methods based on image recognition have been proposed. Gao Rui et al.5 proposed an improved ResNet network for gas extraction drill pipe counting in 2020. They filtered the video classification confidence using an integration method and counted the number of falling edges of the confidence curve to achieve drill pipe counting. Fang J. et al.6 proposed an ECO-HC-based drill pipe counting method, which establishes a counting model by analyzing the waveform of the drill pipe object trajectory. Dong Zhang et al.7 improved the MobileNetV2 neural network and used it for drill pipe counting. The features of the rig in multiple working states were extracted first. Confidence data were then generated by identifying the four rig working states (loading drill pipe, drilling, unloading drill pipe, stopping) during the whole drilling process to count the number of drill pipes. Current drill pipe counting methods are mainly based on image recognition, and their precision and efficiency still need to be improved.

The purpose of object detection is to identify and locate local patterns with semantic definitions in an image8. Advances in computer hardware have driven the rapid development of deep learning9,10, and research on object detection in images has made great progress11–13. In 2014, Ross Girshick et al.14 proposed a deep-learning-based object detection model, RCNN, which improved precision by more than 30% over the best previous models based on hand-crafted features. To improve the performance of RCNN further, the improved versions Fast RCNN15 and Faster RCNN16 were proposed in succession. RCNN is a typical two-stage object detection model, and its speed is limited by this mechanism. Joseph Redmon et al. recast object detection as a regression problem and proposed YOLO17, an architecture that substantially improves detection speed. To improve precision and speed further, a series of YOLO extensions18 followed: YOLO v219, YOLO v320, YOLO v421, YOLO v522–24, YOLO v6, YOLO v7, YOLO v8, and YOLOv11. YOLOv11 is a well-performing detection framework that has been applied successfully in many fields.

Object detection has developed rapidly, making significant progress in both accuracy and speed, and is widely used in coal mines. Kefei Zhang et al.25 proposed a new data augmentation model for coal gangue detection, a dual attention deep convolutional generative adversarial network, which promotes the development of more efficient, environmentally friendly, and cleaner coal gangue separation technologies. Tun Yang et al.26 proposed the YOLO Region model to solve the problem of inaccurate obstacle detection for unmanned electric rail locomotives in underground coal mines. The underground environment of coal mines is complex and dangerous, and the entry of unauthorized personnel may lead to serious accidents. Huawei Jin proposed KD-YOLO, a model based on an enhanced YOLOv8 framework, for detecting personnel in hazardous coal mine scenes, addressing issues such as dim lighting, uneven illumination, complex backgrounds, and dust interference27. Huaxing Mu proposed Slim-YOLO-PR_KD, an improved detection method for underground objects with changing poses, to address the low detection accuracy caused by limited computing resources and variable object poses in coal mines28. Compared with other advanced detection models, Slim-YOLO-PR_KD has the fastest detection speed while maintaining high detection accuracy for objects with changing poses.

In this study, we propose a drill pipe counting method based on object detection. Real-time video of the gas extraction drilling process is captured using downhole monitoring equipment, and the drill pipes in each video frame are detected using deep learning techniques. The coordinates of the drill pipe frames are calculated based on the detection results and recorded in real time. However, due to the complex underground environment, the lighting conditions in the well are poor and uneven, resulting in low-quality video imaging. This can lead to irregular area curves for the detected drill pipe coordinates, making direct counting challenging.

The Savitzky-Golay (SG)29 filtering technique is a widely used smoothing method capable of removing noise from various signals. Compared with the classical wavelet transform and Kalman filter, SG smoothing has fewer parameters, runs faster, and preserves the height and shape of the signal30. This paper therefore uses SG filtering to smooth the real-time area curve of the drill pipe bounding box (the relative size of the drill pipe), thus achieving automatic counting of drill pipes.

Counting schemes and related theories

Counting schemes

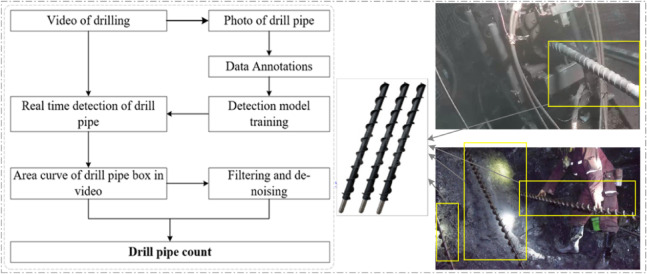

Since the coal seam is rich in methane gas, an explosion may occur when the gas volume fraction reaches 5–15%. If the volume fraction exceeds 15%, gas combustion accidents may happen. In gas extraction, the borehole depth can reach hundreds of meters. To achieve the design goal of the borehole and fully extract gas from the coal seam at the coal face, it is crucial to control the actual depth of the borehole precisely. Completing hundreds of meters of drilling requires hundreds of drill pipes. The underground environment of coal mines is complex, and workers are subject to intense physical labor. Relying solely on underground workers to count the drill pipes makes it difficult to meet the design goal, leading to potential safety hazards. To enable intelligent drill pipe counting in coal mine gas extraction and monitor the borehole depth in real time, this paper proposes a drill pipe counting system based on computer vision for gas extraction, as shown in Fig. 1.

Fig. 1.

Drill pipe counting scheme.

The drill pipe counting scheme for gas extraction, as shown in Fig. 1, is based on object detection. In this method, the drill pipe in each frame of the image is detected in real-time to determine the coordinate area of the drill pipe, i.e., its relative size. A real-time curve representing the relative size of the drill pipe is generated, and the drill pipe count can be determined in real-time after applying a smoothing process. Consequently, the intelligent counting method for drill pipes in gas extraction drilling within coal mines consists of two main components: object detection and signal smoothing.

YOLO theory

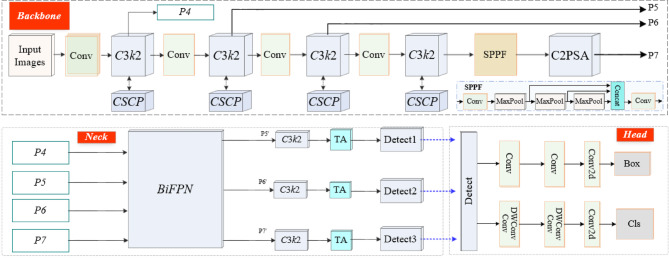

Improved YOLOv11

YOLOv11, a state-of-the-art object detection algorithm, was released by Ultralytics in September 2024. Compared to previous models in the YOLO series, YOLOv11 achieves significant improvements in both accuracy and speed. The improved YOLOv11 network architecture is illustrated in Fig. 2.

Fig. 2.

YOLO11 network architecture.

The YOLOv11 architecture consists of a backbone network, a neck network, and detection heads. It employs an improved version of CSPDarknet53 as the backbone network, which performs five downsampling operations. Additionally, the backbone integrates a Spatial Pyramid Pooling Fast (SPPF) module to enhance the diversity of multi-scale feature representations. In the backbone network, the CBS (Convolution, Batch Normalization, and SiLU activation function) module sequentially performs convolution, batch normalization, and nonlinear mapping, with the SiLU activation function used for the nonlinear mapping.

YOLOv11 replaces the C2f module with the C3k2 module, as shown in Fig. 3. Furthermore, it enhances feature extraction capabilities using the C2PSA module, which incorporates a pyramid slicing attention (PSA) mechanism. The PSA mechanism employs a multi-level design, improving upon the SE attention mechanism to better handle multi-level features. The neck network adopts a PAN-FPN structure, which enhances the fusion of shallow positional information and deep semantic information through a bottom-up path.

Fig. 3.

C3k2 module.

Improvement and design of backbone network

In YOLOv11, although the C3k2 module enhances feature representation capability and receptive field, it performs poorly in small object detection and complex background scenarios. Additionally, it suffers from computational redundancy, information loss, and training difficulties. The underground coal mine environment is highly complex and subject to numerous interferences. To achieve more accurate detection of drill pipes, we replace the C3k2 module in YOLOv11 with the Cross-Stage Partial Connections (CSPC) network.

CSPC directly passes a portion of the feature map to subsequent stages, maintaining the integrity of information flow, reducing gradient vanishing issues, and facilitating the reuse of information between different feature levels. The structure of the CSPC module is illustrated in Fig. 4.

Fig. 4.

CSPC module.

The designed CSPC module operates by dividing the input feature map into two parts: one large and one small. The larger portion of the feature map is forwarded across stages to preserve the original information, while the smaller portion undergoes multiple convolutional operations to learn deep features. Subsequently, these two parts are merged, achieving feature fusion and multi-scale feature extraction, thereby enhancing the model’s performance.

Improvement of neck network

The attention mechanism is a method that helps network models differentiate the importance of input data by assigning different weights, and it has been widely applied in the field of computer vision, particularly in object detection tasks. In this paper, we introduce a triple attention mechanism and a weighted bidirectional feature pyramid network (BiFPN) to improve the neck network of YOLOv11, as shown in Fig. 5.

Fig. 5.

Triple attention.

Figure 5 illustrates the network architecture of the triple attention mechanism, where ‘BN’ denotes batch normalization and ‘Conv’ denotes a convolution operation. This structure leverages various rotation and permutation operations to integrate information across different dimensions, effectively capturing the intrinsic features of the data. It enhances the network’s ability to understand and process complex data structures. The improved neck network uses a modified BiFPN in which the P3-level features are not utilized, as shown in Fig. 6.

Fig. 6.

The employed BiFPN Network.

The BiFPN network employs a bidirectional feature propagation mechanism, enabling both top-down and bottom-up feature flows to facilitate more comprehensive and enriched information exchange across different levels. Additionally, BiFPN integrates feature refinement and selection operations, optimizing the fusion results by adjusting feature weights to achieve better representation of critical features. It dynamically selects the most useful features based on their importance and confidence, further enhancing the accuracy and performance of object detection. Furthermore, in terms of network topology, BiFPN demonstrates greater flexibility by leveraging neural architecture search to determine the optimal irregular feature network topology. This design effectively balances accuracy and efficiency, adapting to various tasks and resource constraints.

$$P_i^{td} = \mathrm{Conv}\left(\frac{w_1 \cdot P_i^{in} + w_2 \cdot \mathrm{Resize}\left(P_{i+1}^{in}\right)}{w_1 + w_2 + \epsilon}\right) \tag{1}$$

$$P_i^{out} = \mathrm{Conv}\left(\frac{w_1' \cdot P_i^{in} + w_2' \cdot P_i^{td} + w_3' \cdot \mathrm{Resize}\left(P_{i-1}^{out}\right)}{w_1' + w_2' + w_3' + \epsilon}\right) \tag{2}$$

where Resize is an upsampling or downsampling operation, Conv is a convolution operation, and the subscript $i$ denotes the feature level. $P^{in}$, $P^{td}$, and $P^{out}$ are the feature maps in Fig. 6. $w_1$, $w_2$, $w_1'$, $w_2'$, and $w_3'$ are learnable parameters. $\epsilon$ is a small constant. The BiFPN enables adaptive connections between multi-level feature maps, allowing them to simultaneously capture both global and local information. This enhances the accuracy and efficiency of object detection.
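The weighted fusion step can be illustrated with a minimal NumPy sketch. It assumes the fast normalized fusion form of the original BiFPN design: each input map is multiplied by a non-negative learnable weight, and the sum is divided by the total weight plus a small constant. The function names, the nearest-neighbour `resize_nearest` stand-in for Resize, and the example values are illustrative assumptions, and the convolution applied after fusion is omitted.

```python
import numpy as np

def fuse(features, weights, eps=1e-4):
    """Weighted fusion: sum_i(w_i * F_i) / (sum_i(w_i) + eps)."""
    # Clamp weights at zero (ReLU) so the normalization stays well defined.
    w = np.maximum(np.asarray(weights, dtype=float), 0.0)
    stacked = np.stack(features)                  # shape: (n_inputs, H, W)
    return np.tensordot(w, stacked, axes=1) / (w.sum() + eps)

def resize_nearest(x, out_hw):
    """Nearest-neighbour resize of a 2D map (hypothetical stand-in for Resize)."""
    h, w = x.shape
    rows = np.arange(out_hw[0]) * h // out_hw[0]
    cols = np.arange(out_hw[1]) * w // out_hw[1]
    return x[np.ix_(rows, cols)]

# Toy example: fuse a 4x4 map with an upsampled 2x2 map from a coarser level.
p_in = np.ones((4, 4))
p_coarse = np.full((2, 2), 3.0)
p_td = fuse([p_in, resize_nearest(p_coarse, p_in.shape)], weights=[1.0, 1.0])
```

With equal weights the fused map is close to the plain average of the two inputs; during training the weights would be learned so that more informative levels contribute more.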

Loss function

In the improved YOLOv11, the Slide Loss function is introduced. To simplify hyperparameter configuration, the average IoU value of all bounding boxes is used as the threshold µ. Samples with IoU values below µ are classified as negative, while those above µ are considered positive. However, samples near the classification boundary (i.e., samples with IoU values close to µ) do not fully belong to either the positive or the negative category and therefore produce relatively large loss values during training. This issue negatively impacts the model’s learning process.

To better handle boundary samples and utilize them more effectively during network training, a higher weight is assigned to these difficult samples. Specifically, positive and negative samples are first identified based on the threshold, and a weighting function, Slide, is introduced to emphasize samples near the boundary, as illustrated in Fig. 7.

Fig. 7.

Weighted Function Slide.

The Slide weighting function, as shown in formula (3), is designed to enhance the importance of hard samples, ensuring that the model learns these critical but less frequent samples more effectively, thereby improving the overall training performance.

[Equation (3): Slide weighting function]

where µ is the threshold for IoU. Negative and positive samples that are far from the boundary receive a fixed weight, while samples within the boundary region are weighted by a monotonically increasing or decreasing function. This function is employed to ensure smooth transitions and to emphasize boundary samples.
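As an illustration of this kind of weighting, the sketch below uses the piecewise form published with the original Slide Loss: a weight of 1 for samples well below the threshold, a constant boosted weight in a narrow band just below it, and an exponentially decaying weight above it. This specific form, the band width of 0.1, and the example IoU values are assumptions; the variant used in this paper may differ.

```python
import math

def slide_weight(iou, mu, band=0.1):
    """Assumed Slide weighting: emphasize samples whose IoU is near mu."""
    if iou <= mu - band:
        return 1.0                      # easy negatives far below the threshold
    if iou < mu:
        return math.exp(1.0 - mu)       # boundary band just below the threshold
    return math.exp(1.0 - iou)          # positives: weight decays as IoU grows

# The threshold mu is taken as the mean IoU of all boxes, as described above.
ious = [0.20, 0.48, 0.55, 0.90]
mu = sum(ious) / len(ious)
weights = [slide_weight(x, mu) for x in ious]
```

Samples with IoU close to the threshold receive the largest weights, so they contribute more to the loss than clear negatives or confident positives.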

Evaluation of detection models

Object detection combines the recognition and localization of objects in images, so the performance of a detection model cannot be evaluated using precision alone. Precision, recall, and average precision (AP) are the most commonly used metrics. In this paper, drill pipe is the only detection category, so precision, recall, and AP are chosen to evaluate the detection model. Before calculating them, some related concepts need to be defined.

A positive prediction that is consistent with the actual value is a true positive (TP); a negative prediction that is consistent with the actual value is a true negative (TN); a positive prediction that is inconsistent with the actual value is a false positive (FP); and a negative prediction that is inconsistent with the actual value is a false negative (FN). The recall and precision are defined as Eqs. (4) and (5), respectively:

$$R = \frac{TP}{TP + FN} \tag{4}$$

$$P = \frac{TP}{TP + FP} \tag{5}$$

where $R$ and $P$ represent the recall and precision, respectively. After obtaining the precision and recall, the average precision of the detection object can be calculated, as shown in Eq. (6):

$$AP = \int_0^1 P(R)\,\mathrm{d}R \tag{6}$$
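The following sketch shows how these metrics could be computed for a single class. It assumes that each detection has already been marked as a true or false positive at a fixed IoU threshold, and it approximates the area under the precision-recall curve with the common all-point interpolation. The function names and the toy detections are illustrative, not the evaluation code used in this study.

```python
import numpy as np

def precision_recall(tp, fp, fn):
    """Precision = TP / (TP + FP); recall = TP / (TP + FN)."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall

def average_precision(scores, is_tp, n_gt):
    """Area under the precision-recall curve for one class."""
    order = np.argsort(-np.asarray(scores, dtype=float))   # highest score first
    hits = np.asarray(is_tp, dtype=float)[order]
    tp = np.cumsum(hits)
    fp = np.cumsum(1.0 - hits)
    recall = np.concatenate(([0.0], tp / n_gt))
    precision = np.concatenate(([1.0], tp / (tp + fp)))
    # Make precision non-increasing in recall (all-point interpolation).
    precision = np.maximum.accumulate(precision[::-1])[::-1]
    return float(np.sum(np.diff(recall) * precision[1:]))

# Toy example: four detections, three correct, four ground-truth drill pipes.
p, r = precision_recall(tp=3, fp=1, fn=1)
ap = average_precision([0.9, 0.8, 0.7, 0.6], [1, 0, 1, 1], n_gt=4)
```

Metrics such as mAP50-95 are typically obtained by repeating the AP computation at several IoU thresholds and averaging the results.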

Savitzky-Golay smoothing theory

While smoothing the data using Savitzky-Golay (SG), the original signal is fitted by a local least squares polynomial approximation, which removes noise from the signal. In the filtered data, noise is removed while the original signal is maintained. SG smoothing does not require converting the data from the time domain to the frequency domain, and the data can be processed directly in the time domain, which can preserve the characteristics of the original data very well.

SG smoothing includes two parameters: polynomial order and window length. The polynomial order sets the degree of the polynomial fitted within each window. The 2m + 1 discrete data points in a given window are fitted by the least squares method; denote these data as $x(i)$, $i = -m, \ldots, 0, \ldots, m$. The n-order fitting polynomial is shown in formula (7).

$$g(i) = \sum_{k=0}^{n} a_k i^k \tag{7}$$

where $a_k$ denotes the coefficients of the polynomial, $i$ denotes the position of a sample within the window, $k$ denotes the power of each term, and $g$ denotes the fitting polynomial of order n. The sum of squared errors between the original data and the fitted polynomial is $E = \sum_{i=-m}^{m} \left(g(i) - x(i)\right)^2$. At the minimum, the derivative of $E$ with respect to each coefficient is zero, which yields

$$\frac{\partial E}{\partial a_r} = 2\sum_{i=-m}^{m}\left(\sum_{k=0}^{n} a_k i^k - x(i)\right) i^r = 0, \quad r = 0, 1, \ldots, n \tag{8}$$

Solving this system gives the coefficients of the polynomial, and thus the fitted nth-order polynomial $g$.
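The least-squares fit over a window of 2m + 1 points can be turned into a fixed set of convolution coefficients, because the smoothed value at the window centre is the constant term of the fitted polynomial. The NumPy sketch below illustrates this under stated assumptions: the function names are illustrative, and the signal ends are handled by repeating the edge values, which is one of several possible choices.

```python
import numpy as np

def sg_coefficients(window_length, polyorder):
    """Smoothing weights for the centre point of an odd-length window."""
    m = window_length // 2
    i = np.arange(-m, m + 1, dtype=float)
    # Design matrix with columns i^0, i^1, ..., i^n.
    A = np.vander(i, polyorder + 1, increasing=True)
    # Row 0 of the pseudo-inverse maps the window samples to a_0 = g(0).
    return np.linalg.pinv(A)[0]

def sg_smooth(x, window_length, polyorder):
    """Apply SG smoothing; edges are padded by repeating the end values."""
    x = np.asarray(x, dtype=float)
    m = window_length // 2
    padded = np.pad(x, m, mode="edge")
    c = sg_coefficients(window_length, polyorder)
    return np.convolve(padded, c[::-1], mode="valid")

coeffs = sg_coefficients(5, 2)      # equals [-3, 12, 17, 12, -3] / 35
t = np.arange(20, dtype=float)
smoothed = sg_smooth(t ** 2, 5, 2)  # a quadratic is reproduced away from the edges
```

Because the fit reproduces any polynomial up to the chosen order, peaks and slopes of the underlying signal are preserved better than with a plain moving average of the same window length.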

Experiments and simulations

Data set

In deep learning, the data are commonly divided into training and test sets at a ratio of about 4:1. For the YOLOv11-based drill pipe detection method proposed in this paper, a total of 678 drill pipe images were collected, from which 561 were randomly selected for training, and the remaining 117 were used for testing the trained model. The experimental data come from a coal mine in Huainan, China, and the images were acquired from the underground monitoring video of the mine. The collected images are shown in Fig. 8. The underground environment of coal mines is complex, characterized by low and uneven illumination, as well as the presence of dust. These factors significantly increase the difficulty of drill pipe detection.

Fig. 8.

Display of collected data.

During model training, the training data are further divided into a validation dataset and a training dataset. When training the model, the maximum number of iterations and the training batch size were set to 500 and 8, respectively.

Ablation experiment

The loss curve in deep learning training serves as a crucial tool for monitoring the training process and evaluating model performance. Typically, the loss curve illustrates the variation in loss values over iterations during training. The loss curves of the model before and after improvement are shown in Fig. 9.

Fig. 9.

Loss and recall curve.

In Fig. 9, “YOLOv11” denotes the loss curve of the original model, while “improved” denotes the loss curve of the improved YOLOv11 model. The figure shows the box loss and the total loss, where the total loss is the sum of the box loss, the classification loss, and the other loss terms. A comparison of the curves indicates that the improved model converges faster and achieves better performance than the original model. To evaluate the contribution of different module combinations to the model’s performance and verify the effectiveness of the designed modules for drill pipe detection in underground coal mines, YOLOv11 was used as the baseline algorithm for the ablation study. The precision, recall, mAP50, and mAP50-95 of the bounding boxes were compared. The results of the ablation study are presented in Table 1.

Table 1.

Results of ablation experiments.

| YOLOv11 | CSPC | TA | BiFPN | Slide | P/% | R/% | mAP50/% | mAP50-95/% |
|---|---|---|---|---|---|---|---|---|
| √ | | | | | 92.3 | 93.8 | 95.4 | 62.7 |
| √ | √ | | | | 93.1 | 94.0 | 96.2 | 64.2 |
| √ | | √ | | | 93.3 | 94.5 | 96.0 | 63.8 |
| √ | | | √ | | 93.6 | 93.3 | 96.2 | 63.9 |
| √ | | | | √ | 94.1 | 93.7 | 96.6 | 63.4 |
| √ | √ | √ | | | 93.8 | 95.2 | 97.1 | 64.6 |
| √ | √ | √ | √ | | 94.3 | 94.8 | 97.3 | 65.6 |
| √ | √ | √ | √ | √ | 94.7 | 95.7 | 97.6 | 65.8 |

In the table, “√” indicates that the corresponding module is utilized. As shown in the table, each module on its own improves the detection of drill pipes in underground coal mines. Specifically, the CSPC module, by introducing a cross-stage partial network structure, enhances the network’s ability to focus on and process critical information in the target images. The TA and BiFPN modules perform well in focusing on, extracting, and fusing information across different feature scales, improving the precision by 1.0% and 1.3%, respectively, compared to the baseline model. Moreover, the introduction of the Slide loss enhances the model’s recognition performance.

After integrating the CSPC module into the baseline model, all evaluation metrics improved. Specifically, precision increased by 0.8 percentage points, mAP50 by 0.8, and mAP50-95 by 1.5, enhancing the model’s ability to extract domain-specific information for drill pipe targets. The inclusion of the TA module further enhanced the model’s capacity for multi-feature extraction and recognition of small drill pipe targets while reducing computational complexity. This optimization provided room for speed improvement and resulted in increases of 1.5 percentage points in precision, 1.7 in mAP50, and 1.9 in mAP50-95 compared to the baseline model. The addition of the BiFPN module led to a 2.0-point increase in precision, a 1.9-point improvement in mAP50, and a 2.9-point improvement in mAP50-95 over the baseline. Finally, incorporating the Slide loss improved mAP50 by 2.2 points and mAP50-95 by 3.1 points over the baseline. Compared to the original YOLOv11, the improved model demonstrates clear enhancements in both accuracy and regression performance, meeting the requirements for drill pipe detection in underground coal mines.

Comparison with other models

To further evaluate the improvement achieved by the proposed model, comparative experiments were conducted against classical models such as YOLOX, Faster R-CNN, CenterNet, and YOLOv8. Four metrics were used to assess the performance of each model: precision (P), recall (R), mAP50, and mAP50-95. The comparative results of these metrics for each model are presented in Table 2.

Table 2.

Comparison results of other models.

| Algorithm | Variant/backbone | P/% | R/% | mAP50/% | mAP50-95/% |
|---|---|---|---|---|---|
| YOLOX | tiny | 92.1 | 92.5 | 93.6 | 57.8 |
| YOLOX | s | 93.2 | 93.8 | 94.6 | 60.0 |
| YOLOX | m | 93.7 | 94.1 | 94.8 | 61.3 |
| YOLOX | n | 92.4 | 93.2 | 94.7 | 59.2 |
| YOLOv8 | n | 92.3 | 93.0 | 95.1 | 62.0 |
| YOLOv8 | s | 93.6 | 94.1 | 95.8 | 63.5 |
| YOLOv11 | n | 93.8 | 94.3 | 95.0 | 64.1 |
| Improved YOLOv11 | n | 94.7 | 95.7 | 96.6 | 65.8 |
| Faster RCNN | VGG | 89.3 | 90.4 | 92.4 | 60.3 |
| Faster RCNN | ResNet50 | 92.6 | 93.3 | 95.6 | 56.7 |
| CenterNet | | 83.7 | 84.2 | 89.9 | 54.0 |

In Table 2, “VGG” represents the backbone network of Faster R-CNN, which uses VggNet. In the comparative experiments, the improved YOLOv11 model demonstrated outstanding performance across evaluation metrics. The precision reached 94.7%, an improvement of 0.9% compared to the original YOLOv11. The recall increased to 95.7%, showing a gain of 1.4%. The mAP@50 reached 96.6%, reflecting an enhancement of 1.6% over the original YOLOv11. Furthermore, the mAP@50–95 of the improved model reached 65.8%, representing a 1.7% increase. These results indicate that the improved model not only maintains high localization accuracy under conditions of high recall but also exhibits enhanced robustness across different IoU thresholds.

Compared with YOLOX and YOLOv8, the improved YOLOv11 demonstrates outstanding performance across all evaluation metrics. Notably, in terms of the comprehensive performance metric mAP50-95, the improved YOLOv11 surpasses YOLOv8-s and YOLOX-tiny by 2.3 and 8.0 percentage points, respectively.

When compared with Faster R-CNN (based on VGG and ResNet50) and CenterNet, the improved YOLOv11 similarly exhibits superior performance. Specifically, its mAP50 is 4.2 percentage points higher than that of Faster R-CNN (VGG) (96.6% versus 92.4% in Table 2), and it shows clear improvements in precision (P/%) and recall (R/%).

In summary, the improved YOLOv11 model demonstrates exceptional performance in object detection tasks, particularly in balancing precision and recall, as well as maintaining stability across various IoU thresholds. The test results of the improved YOLOv11 are demonstrated in Fig. 10.

Fig. 10.

Display of detection results.

We present the test results of two sets of images in Fig. 10, where the second set is affected by uneven lighting interference. The results shown in the figure indicate that our improved YOLOv11 demonstrates superior robustness. The detection results are summarized in Table 3, with the input images having a resolution of 600 × 960 pixels.

Table 3.

Box coordinates.

| Image | Improved YOLOv11 | | | | | | YOLOv11 | | | | | |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| | L | R | T | B | Area | Score | L | R | T | B | Area | Score |
| 1 | 220 | 542 | 467 | 948 | 154,882 | 0.93 | 222 | 534 | 487 | 960 | 147,576 | 0.80 |
| 2 | 201 | 509 | 431 | 882 | 138,908 | 0.90 | 217 | 531 | 438 | 901 | 145,382 | 0.81 |
| 3 | 190 | 482 | 370 | 799 | 125,268 | 0.91 | 201 | 491 | 373 | 805 | 125,280 | 0.79 |
| 4 | 187 | 475 | 364 | 775 | 118,368 | 0.88 | 200 | 495 | 347 | 724 | 111,215 | 0.66 |
| 5 | 234 | 564 | 362 | 772 | 135,300 | 0.87 | 236 | 578 | 354 | 756 | 137,484 | 0.76 |
| 6 | 239 | 574 | 362 | 765 | 135,005 | 0.88 | 241 | 580 | 357 | 761 | 136,956 | 0.79 |
| 7 | 225 | 558 | 344 | 730 | 128,538 | 0.86 | 230 | 560 | 348 | 734 | 127,380 | 0.75 |
| 8 | 184 | 474 | 270 | 604 | 96,860 | 0.83 | 185 | 483 | 276 | 611 | 99,830 | 0.75 |
| 9 | 237 | 573 | 485 | 960 | 159,600 | 0.90 | 239 | 584 | 485 | 960 | 163,875 | 0.84 |
| 10 | 201 | 494 | 391 | 822 | 126,283 | 0.93 | 211 | 524 | 393 | 826 | 135,529 | 0.87 |
| 11 | 182 | 472 | 350 | 760 | 118,900 | 0.89 | 193 | 477 | 358 | 774 | 118,144 | 0.82 |
| 12 | 226 | 557 | 345 | 739 | 130,414 | 0.84 | 227 | 546 | 346 | 747 | 127,919 | 0.78 |
| 13 | 216 | 539 | 349 | 744 | 127,585 | 0.88 | 216 | 523 | 343 | 751 | 125,256 | 0.78 |
| 14 | 234 | 577 | 352 | 741 | 133,427 | 0.87 | 237 | 570 | 346 | 740 | 131,202 | 0.81 |
| 15 | 177 | 473 | 268 | 589 | 95,016 | 0.81 | 182 | 473 | 270 | 594 | 94,284 | 0.75 |
| 16 | 0 | 0 | 0 | 0 | 0 | 0.00 | 0 | 0 | 0 | 0 | 0 | 0.00 |

In Table 3, (L, T) and (R, B) represent the top-left and bottom-right coordinates of the detected objects in Fig. 10, respectively. “Area” denotes the area of the bounding box, (R − L) × (B − T), while “Score” refers to the confidence score. From the data in the table, it can be observed that the improved YOLOv11 achieves relatively higher detection scores. Additionally, the shorter the visible drill pipe, the smaller the corresponding bounding box area. This characteristic allows us to count drill pipes by analyzing changes in the bounding box area.
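As a small worked check, the area of a bounding box follows directly from its edges. The sketch below reads the four coordinate values of image 1 (improved YOLOv11) as left, right, top, and bottom, which is the ordering that reproduces the tabulated area; the function name is illustrative.

```python
def box_area(left, right, top, bottom):
    """Area in pixels of an axis-aligned box; empty boxes give 0."""
    return max(0, right - left) * max(0, bottom - top)

# Image 1, improved YOLOv11: width 542 - 220 = 322, height 948 - 467 = 481.
area_image_1 = box_area(left=220, right=542, top=467, bottom=948)

# Image 16 has no detection, so every coordinate and the area are zero.
area_image_16 = box_area(0, 0, 0, 0)
```

Recording this value for every frame yields the area curve that is smoothed and counted in the next section.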

Drill pipe counting based on SG smoothing

During the drilling process, the visible length of the drill pipe decreases, resulting in a reduction in the area of the detection bounding box. Based on this observation, the location of the bounding box for the drill pipe detected in the video was recorded in real time. The test video was collected at Liu Zhuang Mine during the period from 16:36 to 17:08 on May 10, 2022. YOLOv11 was utilized for drill pipe detection in the video, and the area of the drill pipe bounding box was continuously recorded in real time. We set the confidence threshold to 0.1, 0.4, and 0.7, and the results are shown in Fig. 11.

Fig. 11.

The area curve of the box. (a) Confidence=0.1; (b) Confidence=0.4; (c) Confidence=0.7

The results in Fig. 11a were obtained with the confidence threshold set to 0.1, while Fig. 11b and c correspond to confidence thresholds of 0.4 and 0.7, respectively. The left side of the figure shows the detection results of the original YOLOv11, while the right side shows those of the proposed improved method. The comparison shows that setting the confidence threshold to 0.7 significantly reduces redundant bounding boxes, and that the improved YOLOv11 detection algorithm yields relatively good results.

The underground environment is highly complex and subject to various disturbances. As a result, it is challenging to count the number of drill pipes directly from the drill pipe coordinate frame area curve. To address this, SG smoothing was applied to process the area curve of the drill pipe coordinate frame. The results of SG smoothing with different parameters are illustrated in Fig. 12.

Fig. 12.

SG smoothing of area curve. (a) sg = 11; (b) binarization, sg = 11; (c) sg = 51; (d) binarization, sg = 51; (e) sg = 101; (f) binarization, sg = 101; (g) sg = 201; (h) binarization, sg = 201; (i) sg = 301; (j) binarization, sg = 301.

Figure 12a, c, e, g, and i show the SG smoothing results with different window sizes, while Fig. 12b, d, f, h, and j display the corresponding binarized curves. The polynomial order used in the SG smoothing is 3. As the smoothing window size increases, the area curve of the drill pipe bounding box becomes progressively smoother. Furthermore, the counting results for different window sizes m and polynomial orders n are shown in Table 4.

Table 4.

Counting results.

| n \ m | 11 | 21 | 31 | 41 | 51 | 61 | 71 | 81 | 91 | 101 | 111 | 121 | 131 | 141 | 151 | 161 | 171 | 181 | 191 | 201 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 2 | 46 | 27 | 15 | 17 | 14 | 12 | 10 | 10 | 9 | 9 | 9 | 9 | 9 | 9 | 9 | 9 | 9 | 9 | 9 | 9 |
| 3 | 46 | 27 | 15 | 17 | 14 | 12 | 10 | 10 | 9 | 9 | 9 | 9 | 9 | 9 | 9 | 9 | 9 | 9 | 9 | 9 |
| 4 | 67 | 46 | 27 | 19 | 17 | 17 | 12 | 12 | 10 | 9 | 9 | 9 | 9 | 9 | 9 | 9 | 9 | 9 | 9 | 9 |

In Table 4, the count generally decreases as the window size m grows. Once m reaches 101, the area curve of the drill pipe coordinate frame changes little with further increases in m, and the count remains 9 for every polynomial order tested. With m set to 101, the drill pipes can therefore be counted accurately. The total number of drill pipes is 9, which is consistent with the actual count.
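The final counting step can be illustrated with a short sketch that binarizes a smoothed area curve and counts the resulting pulses. The paper does not state the binarization threshold or the exact counting rule, so both are assumptions here: the threshold is taken as the midpoint of the curve's range, and each run of consecutive high values is counted as one drill pipe. The function names are hypothetical.

```python
from typing import List


def binarize(curve: List[float]) -> List[int]:
    """1 where the curve is above the midpoint of its range, else 0 (assumed threshold)."""
    threshold = (min(curve) + max(curve)) / 2.0
    return [1 if v > threshold else 0 for v in curve]


def count_pulses(binary: List[int]) -> int:
    """Count runs of consecutive 1s, i.e. 0 -> 1 transitions plus a leading 1."""
    count = 0
    previous = 0
    for b in binary:
        if b == 1 and previous == 0:
            count += 1
        previous = b
    return count


# Synthetic sawtooth: the area shrinks while a pipe is drilled in, then jumps
# back up when the next pipe is attached. Three cycles give three pulses.
demo_curve = [10.0, 8.0, 6.0, 4.0, 2.0] * 3
demo_count = count_pulses(binarize(demo_curve))
```

On an insufficiently smoothed curve, noise near the threshold produces extra short pulses, which would explain the inflated counts for small m in Table 4.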

Figure 13 presents the drill pipe curve recorded during the inspection. The trend of the curve clearly demonstrates that the method proposed in this paper not only enables an intelligent count of drill pipes used for gas extraction but also effectively records the drilling status. Moreover, it facilitates monitoring the working conditions of the downhole workers.

Fig. 13.

Relative size curve of the drill pipe.

Conclusion

The intelligent counting of gas extraction drill pipes is important for the safe production and intelligent development of coal mines. This study proposes a rapid drill pipe counting method based on an improved YOLOv11 model and Savitzky-Golay (SG) smoothing. Video footage of gas extraction drilling was collected to establish an experimental dataset, and an enhanced YOLOv11 approach was developed. The improved YOLOv11 model was used to detect drill pipes in the videos and to record the bounding box areas in real time. Parameters for SG smoothing were determined experimentally. The results indicate that the proposed method effectively detects drill pipes in videos using the improved YOLOv11 model. After SG smoothing is applied to the bounding box area curves, the method achieves rapid and accurate drill pipe counting.

Author contributions

Cheng Cheng: Conceptualization, Formal analysis, Investigation. Xiaoyu Cheng: Writing – original draft. Debo Li: Writing – review & editing. Jiawei Zhang: Visualization, Investigation.

Funding

This research was funded by the Anhui University of Science and Technology Introduction High-level Talents Scientific Research Fund, Grant No. 2021yjrc02, and the Open Research Grant of the Joint National-Local Engineering Research Center for Safe and Precise Coal Mining, Grant No. EC2021006.

Data availability

The datasets generated and/or analysed during the current study are not publicly available due to privacy concerns (some images contain coal miners) but are available from the corresponding author on reasonable request.

Declarations

Competing interests

The authors declare no competing interests.

Footnotes

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

References

- 1. Duan, S. et al. Multi-index fusion database and intelligent evaluation modelling for geostress classification. J. Tunn. Undergr. Space Technol. 149, 105802–105802 (2024).

- 2. Pei, Q., Wu, C., Ding, X. & Huang, S. A weight factor-based backward method for estimating ground stress distribution from the point measurements. J. Bull. Eng. Geol. Environ. 82, 365 (2023).

- 3. Wojtecki, L., Iwaszenko, S. & Apel, M. M. J. Use of machine learning algorithms to assess the state of rockburst hazard in underground coal mine openings. J. J. Rock. Mech. Geotech. Eng. 14, 703–713 (2022).

- 4. Leilei, S. et al. The influence of closed pores on the gas transport and its application in coal mine gas extraction. J. Fuel. 254, 115605–115605 (2019).

- 5. Rui, G., Le, H., Bao, L., Jingyi, W. & Yuhang, C. Research on underground drill pipe counting method based on improved ResNet network. J. J. Mine Autom. 46, 32–37 (2020).

- 6. Jie, F., Zhenbi, L. & Liang, X. Drill pipe counting method based on ECO-HC. J. Coal Technol. 40, 186–189 (2021).

- 7. Dong, Z. & Yuanyuan, J. Drill pipe counting method based on improved MobileNetV2. J. J. Mine Autom. 48, 69–75 (2022).

- 8. Ghasemi, Y. Deep learning-based object detection in augmented reality: A systematic review. J. Computers Ind. 139, 103661–103661 (2022).

- 9. Alzubaidi, L., Zhang, J., Humaidi, A. J., Al-Dujaili, A. & Farhan, L. Review of deep learning: concepts, CNN architectures, challenges, applications, future directions. J. J. Big Data. 8, 1–74 (2021).

- 10. Lai, W., Hu, F. & Kong, X. The study of coal gangue segmentation for location and shape predicts based on multispectral and improved mask r-cnn. J. Powder Technology: Int. J. Sci. Technol. Wet Dry. Part. Syst. 407, 117655–117655 (2022).

- 11. Zhao, Z. Q., Zheng, P. & Xu, S. T. Object detection with deep learning: a review. J. arXiv e-prints 30, 3212–3232 (2019).

- 12. Wenhao, L. et al. Fast location of coal gangue based on multispectral band selection. J. China Laser. 48, 190–200 (2021).

- 13. Cao, J. et al. A survey on deep learning based visual object detection. J. J. Image Graphics. 27, 1697–1722 (2022).

- 14. Girshick, R., Donahue, J., Darrell, T. & Malik, J. Rich feature hierarchies for accurate object detection and semantic segmentation. J. IEEE Comput. Soc. Abs/. 1311, 2524, 580–587 (2014).

- 15. Dickey, L. J. & Shaw, L. C. Proceedings of the ieee international conference on computer vision. J. Elsevier ence B.v.amsterdam 37, 1–387 (2014).

- 16. Ren, S., He, K., Girshick, R. & Sun, J. Faster r-cnn: towards real-time object detection with region proposal networks. J. IEEE Trans. Pattern Anal. Mach. Intell. 39, 1137–1149 (2016).

- 17. Redmon, J., Divvala, S., Girshick, R. & Farhadi, A. You only look once: unified, Real-Time object detection. J. Comput. Vis. Pattern Recognit. IEEE 779–788 (2016). abs/1506.02640.

- 18. Jiang, P., Ergu, D., Liu, F., Cai, Y. & Ma, B. A review of Yolo algorithm developments. J. Procedia Comput. Sci. 199, 1066–1073 (2022).

- 19. Redmon, J. & Farhadi, A. Yolo9000: better, faster, stronger. J. IEEE. 08242, 6517–6525 (2016). abs/1612.

- 20. Tian, Y. et al. Apple detection during different growth stages in orchards using the improved yolo-v3 model. J. Computers Electron. Agric. 157, 417–426 (2019).

- 21. Wenhao, L., Meng-Ran, Z., Feng, H., Kai, B. & Hongping, S. Coal gangue detection based on multi-spectral imaging and improved Yolo v4. J. Acta Optica Sinica. 40, 72–80 (2020).

- 22. Mathew, M. P. & Mahesh, T. Y. Leaf-based disease detection in bell pepper plant using Yolo v5. J. Signal. Image Video Process. 16, 841–847 (2022).

- 23. Duan, S. et al. Tunnel lining crack detection model based on improved yolov5. J. Tunn. Undergr. Space Technol. 147, 105713–105713 (2024).

- 24. Shuqian, D. et al. Tunnel lining crack detection model based on improved YOLOv5. J. Tunn. Undergr. Space Technol. Incorporating Trenchless Technol. Res. 147, 105713–105713 (2024).

- 25. Kefei, Z. et al. Enhancing coal-gangue object detection using GAN-based data augmentation strategy with dual attention mechanism. J. Energy. 287, 129654–129654 (2024).

- 26. Yang, T., Guo, Y., Li, D. & Wang, S. Vision-based obstacle detection in dangerous region of coal mine driverless rail electric locomotives. J. Meas. 239, 115514–115514 (2025).

- 27. Jin, H., Ren, S., Li, S. & Liu, W. Research on mine personnel target detection method based on improved yolov8. J. Meas. 245, 116624–116624 (2025).

- 28. Mu, H. et al. Slim-yolo-pr_kd: an efficient pose-varied object detection method for underground coal mine. J. J. Real-Time Image Process. 21, 160–160 (2024).

- 29. Çağatay, C. & Inan, H. A unified framework for derivation and implementation of savitzky–golay filters. J. Signal. Process. 104, 203–211 (2014).

- 30. Schafer, R. W. What is a savitzky-golay filter? J. Signal. Process. Magazine IEEE. 28, 111–117 (2011).
